Abstract

Part one of this mini-series on statistics in cerebrovascular research uses the simplest yet most common comparison in experimental research (two groups with a continuous outcome variable) to introduce the very basic concepts of statistical testing:

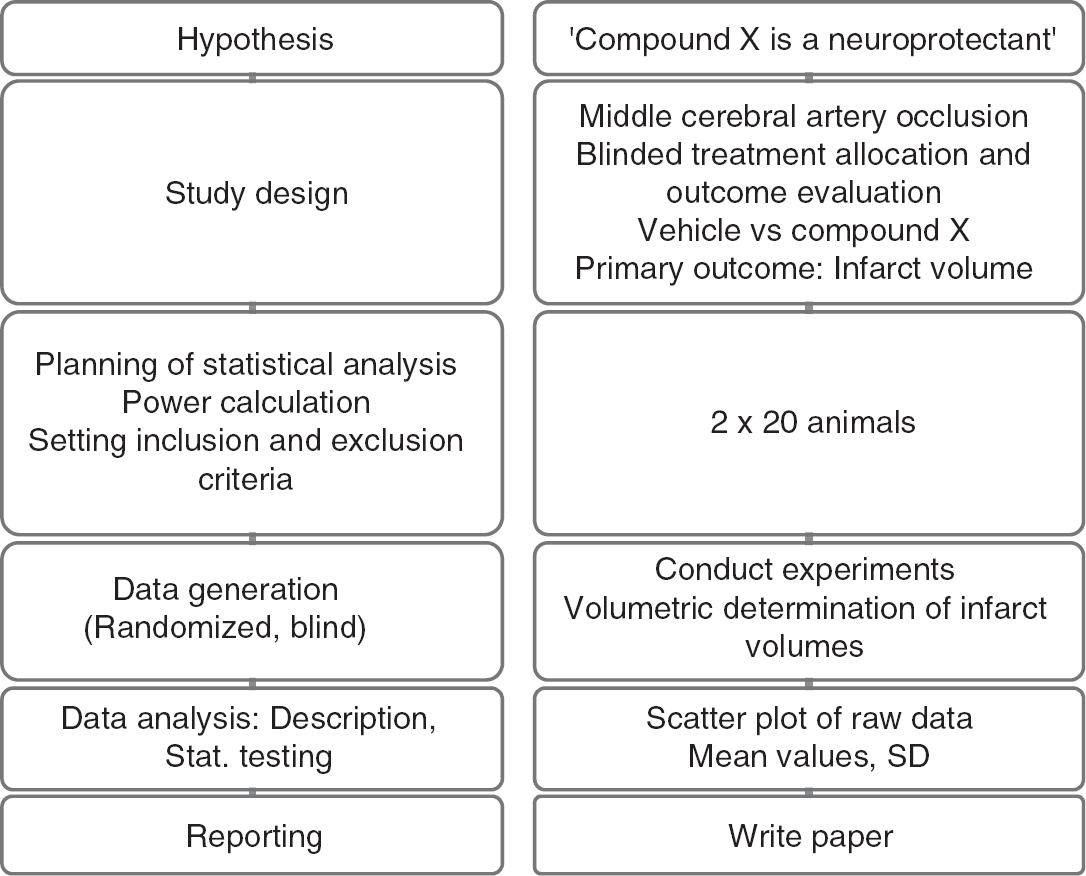

Experimental Design

For ethical and economic reasons, it is important to design animal experiments properly, to analyze the data correctly, and to use the minimum number of animals necessary to achieve the scientific objectives of the study (Festing and Altman, 2002). Recently, evidence has been presented that weaknesses in design, analysis, and reporting are prevalent in experimental stroke research (Dirnagl, 2006), and that these weaknesses can have quantifiable effects on the predictiveness and ultimately on the validity of preclinical research in the cerebrovascular field (Crossley et al, 2008; Macleod et al, 2008). A systematic analysis of all papers reporting original research and published in the

We would like to guide researchers of this journal with a mini-series on the design of experiments, as well as on the analysis, interpretation, and presentation of data. In this first article, we start with the comparison of two experimental conditions, which is one of the most frequent designs in stroke research. This simple design allows us to introduce the very basic concepts of null hypothesis statistical testing:

Wherever possible, to minimize bias in biomedical research, experiments require randomized allocation to experimental groups and outcome assessment without knowledge of the assignment to these groups. We start by formulating a hypothesis (e.g., ‘Compound X is a neuroprotectant and affects injury after focal cerebral ischemia’), and then choose an appropriate study design. After we have selected an adequate outcome measure, the sample size of the study needs to be determined

Study workflow (left panel: principle; right panel: corresponding example).

Errors and Sample Size Calculation

The sample size of the study depends on the error we are prepared to live with. This error is of two types. The

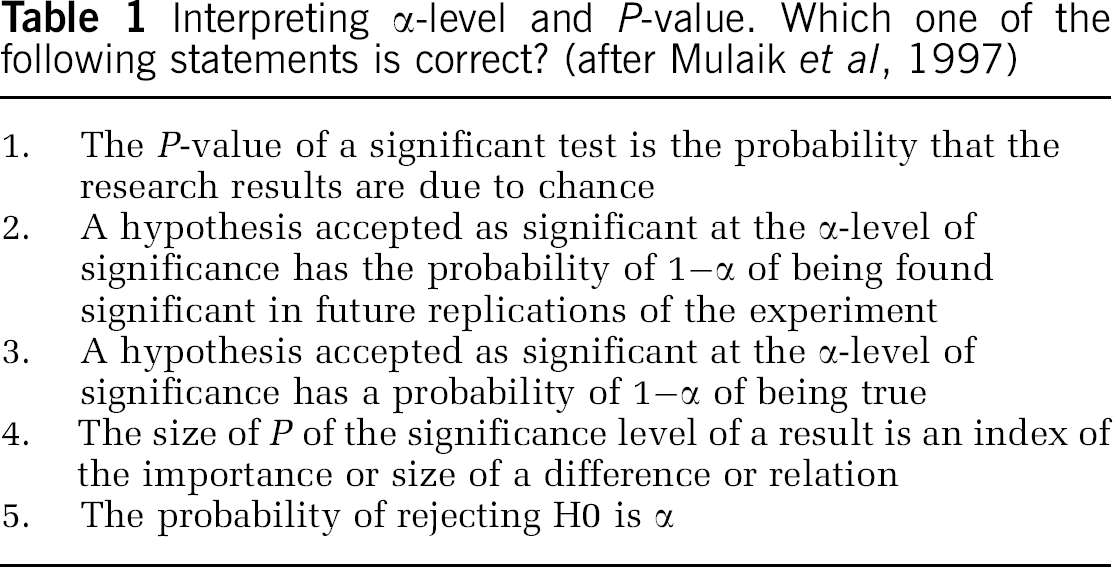

But what does it mean if we find that our results are significant on the 5% level? Please consult Table 1 and see which interpretations you agree with!

Interpreting α-level and

Some researchers will pick at least one of the choices of Table 1. However, none of those interpretations is correct! What an α-level of 5% really implies is that if we were to repeat the analysis many times,

H0 usually states the opposite of what we really want to find, namely that there is no difference between the two groups. Because the statistical test is performed under the assumption that H0 is true, we cannot at the same time make a probability statement about H0. We cannot assess what we assume to be true (Goodman, 1999)! Thus,

The

Let us fix α at 5% and β at 20%, values that are often chosen; researchers seem to be more afraid of a false-positive than of a false-negative result. To plan the study, we assume that our data follow a normal distribution with common s.d. σ and means μ1 and μ2. The difference of means δ = μ1 − μ2 is often called the effect size. The ratio of this difference to the common s.d.,

$$\Delta = \frac{\delta}{\sigma} = \frac{\mu_1 - \mu_2}{\sigma},$$

is called the standardized effect. For example, a reduction of infarct size by δ = 30 mm³ results in a standardized effect size Δ = 1 if σ is also 30 mm³. These definitions of effect size may be found in the book by Hulley et al (2007).

Sample size estimation for two independent samples requires several assumptions and specifications. A minimally relevant effect difference δ and the common s.d. σ need to be ‘guesstimated’. To ‘guesstimate’ σ, we need previous experience with the model; setting δ is a matter of pathophysiological reasoning. In addition, the significance level α and the desired power need to be set.

If we, for example, want to be able to detect a reduction in the infarct volume of at least δ = 15 mm³ (effect size), and we expect an s.d. of σ = 30 mm³, at 80% power and α = 0.05 (two-sided) we need a total sample size of 128 animals, that is, 64 per group based on the two-sample

A simple rule of thumb (van Belle, 2008) can also be used to estimate the sample size per group for a two-sided α = 0.05 and 80% power:

$$n = \frac{16}{\Delta^2}$$

If we set the standardized effect size Δ to 0.5, that is, we want to be able to detect a difference of half the s.d., then $16/0.5^2 = 64$ subjects per group are needed.
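The numbers above can be reproduced approximately with a short sketch. It assumes the textbook normal-approximation formula, n = 2(z₁₋α/₂ + z₁₋β)²/Δ² per group, rather than the exact calculation based on the t distribution, so its result may come out slightly smaller than the 64 per group quoted above. The function names are illustrative and not taken from the original text.

```python
import math
from statistics import NormalDist


def n_per_group_normal(delta, sigma, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided, two-sample comparison.

    Normal approximation (an assumption of this sketch, not the exact
    t-based calculation): n = 2 * (z_{1-alpha/2} + z_{power})^2 / Delta^2,
    where Delta = delta / sigma is the standardized effect.
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # quantile for the two-sided type I error
    z_power = z.inv_cdf(power)          # quantile for the desired power (1 - beta)
    standardized_effect = delta / sigma
    return math.ceil(2 * (z_alpha + z_power) ** 2 / standardized_effect ** 2)


def n_per_group_rule_of_thumb(delta, sigma):
    """Rule of thumb for two-sided alpha = 0.05 and 80% power: n = 16 / Delta^2."""
    standardized_effect = delta / sigma
    return math.ceil(16 / standardized_effect ** 2)


if __name__ == "__main__":
    # Example from the text: delta = 15 mm^3, sigma = 30 mm^3, i.e. Delta = 0.5
    print(n_per_group_normal(15, 30))
    print(n_per_group_rule_of_thumb(15, 30))
```

For δ = 15 mm³ and σ = 30 mm³, the normal approximation yields 63 animals per group and the rule of thumb yields 64. The constant 16 in the rule of thumb is simply 2(1.96 + 0.84)² ≈ 15.7 rounded up.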

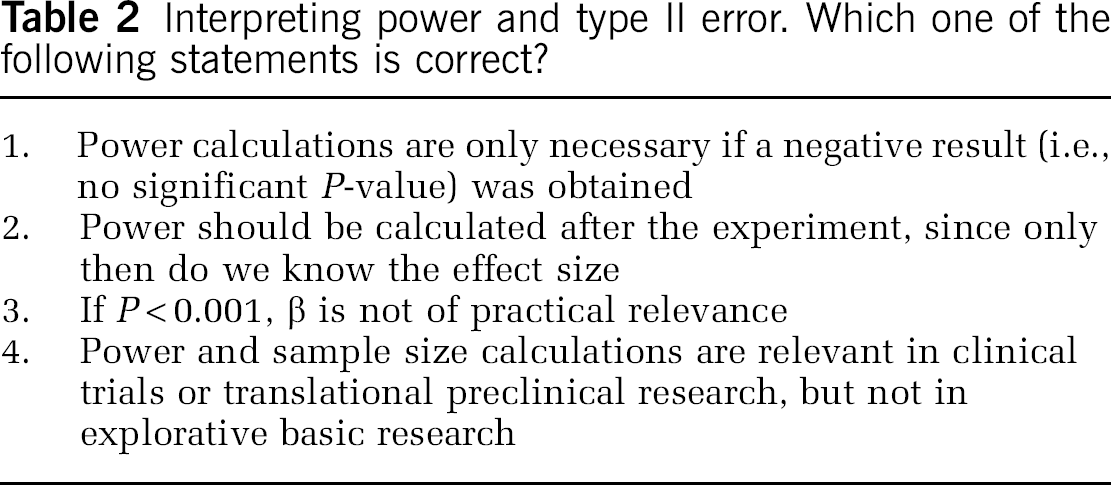

Similar to the type I error, the concept of type II error is often misunderstood, and consequently power and sample size calculation have no role in the overwhelming majority of papers in the cerebrovascular and experimental stroke field. Quiz yourself and try to evaluate the statements in Table 2.

Interpreting power and type II error. Which one of the following statements is correct?

As in Table 1, none of the statements in Table 2 is correct! The prototypical misunderstanding of the type II error is that if one has obtained a statistically significant

Descriptive Statistics

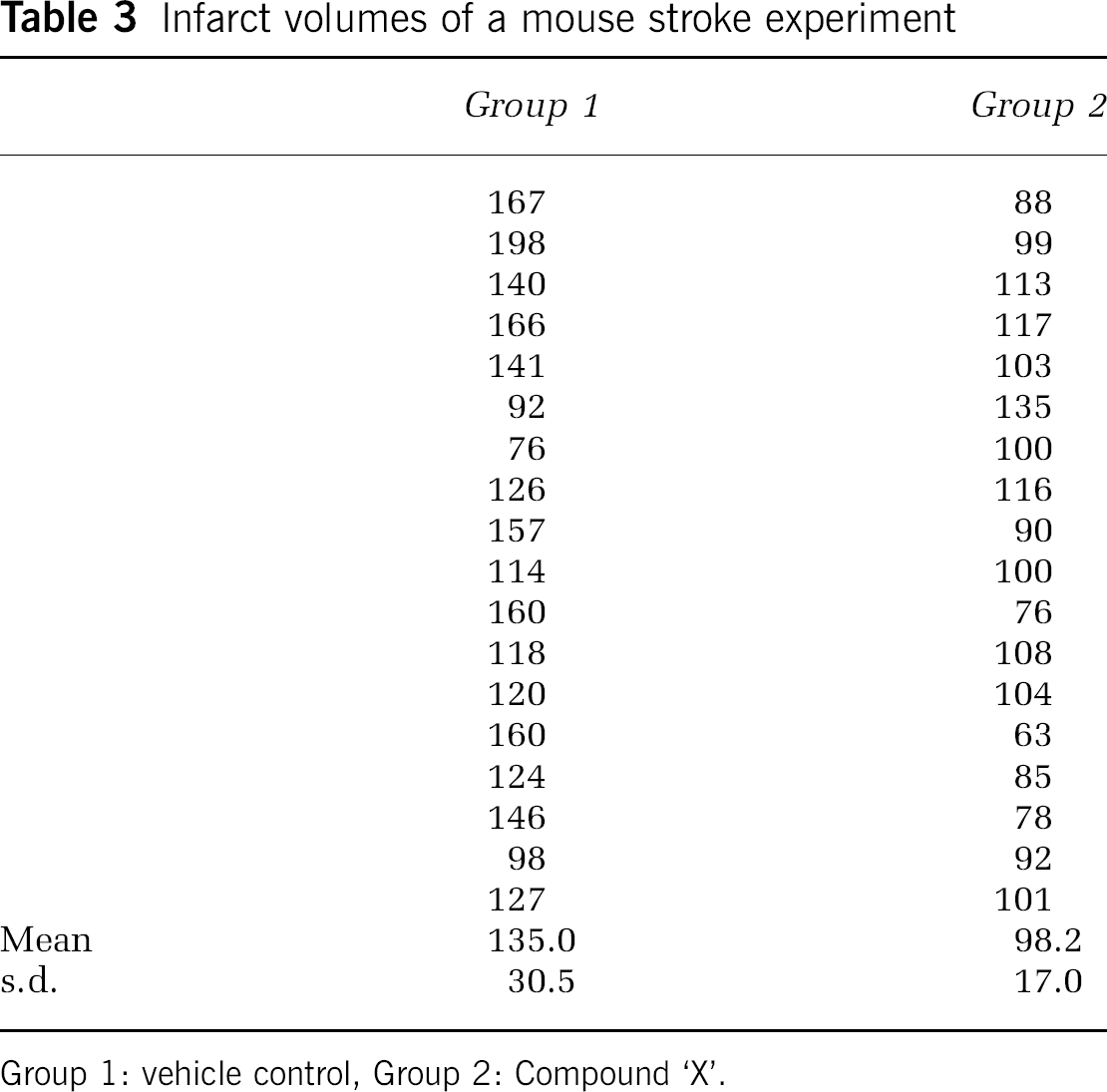

Categorical data such as behavioral scores or presence or absence of symptoms can be summarized as frequencies and percentages. Continuous data such as infarct volume may be summarized using the mean and s.d. Table 3 shows data from a typical experimental stroke experiment and corresponding summary statistics.

Infarct volumes of a mouse stroke experiment

Group 1: vehicle control, Group 2: Compound ‘X’.

For descriptive purposes, the s.d. of the data is the only acceptable measure of variability; the standard error of the mean (s.e.m.) is not. The s.e.m. quantifies the precision with which the mean is estimated and does not describe the spread of the sample (Altman and Bland, 2005).
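The distinction between the two measures can be illustrated with a minimal sketch. The infarct volumes below are hypothetical values chosen for illustration only; they are not the data of Table 3, and the helper name `summarize` is likewise an assumption of this example.

```python
import math
from statistics import mean, stdev

# Hypothetical infarct volumes in mm^3 (illustrative only, NOT the data of Table 3)
volumes = [120.0, 135.0, 150.0, 128.0, 142.0]


def summarize(values):
    """Return n, mean, sample s.d., and s.e.m. for a list of measurements."""
    n = len(values)
    sd = stdev(values)        # sample s.d. (n - 1 in the denominator)
    sem = sd / math.sqrt(n)   # s.e.m. = s.d. / sqrt(n)
    return {"n": n, "mean": mean(values), "sd": sd, "sem": sem}


if __name__ == "__main__":
    summary = summarize(volumes)
    print(f"n = {summary['n']}, mean = {summary['mean']:.1f}, "
          f"s.d. = {summary['sd']:.1f}, s.e.m. = {summary['sem']:.1f}")
```

Because the s.e.m. is the s.d. divided by the square root of n, it shrinks as the group size grows even when the scatter of the individual animals stays the same, which is why it is unsuitable for describing the sample.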

Graphical Display of the Data

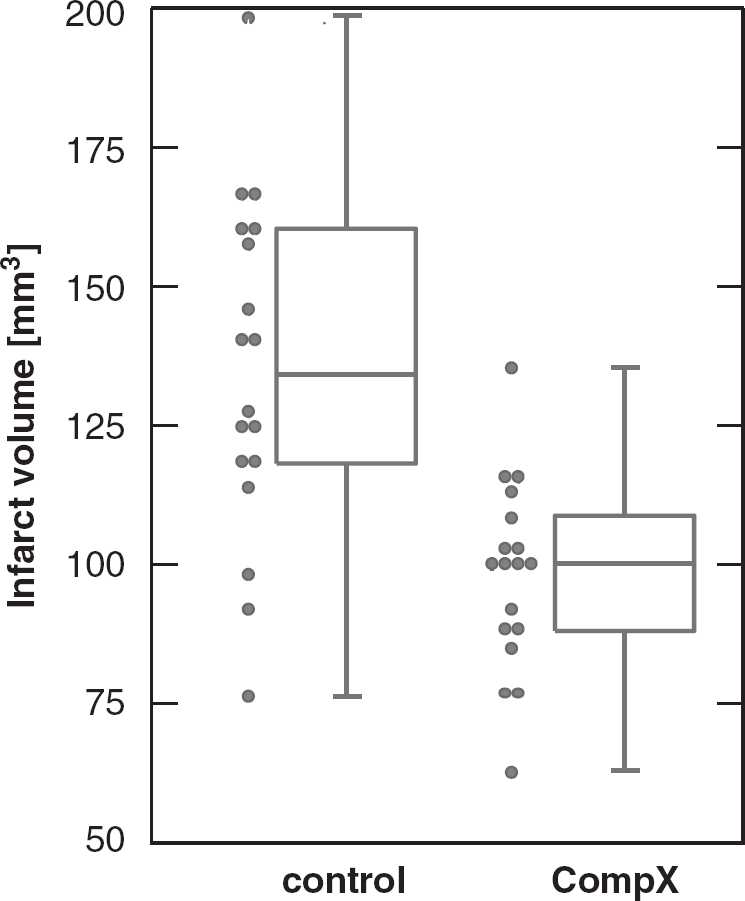

It is very common in cerebrovascular research, but unsatisfactory, to summarize the results of a two-group comparison of continuous variables with a bar graph and s.e.m.s. First, s.e.m.s should not be used for graphical data presentation (or for data presentation in the text, see above). Second, displaying only the mean is the least informative option available. A very useful alternative is the box-and-whisker plot, which helps in interpreting the distribution of the data (see Figure 2). It provides a graphical summary of a data set based on its quartiles, which split the data into four groups, each containing 25% of the measurements. Combining the box-and-whisker plot with a scatter plot of the individual data points yields a highly informative display.

Box-and-whisker plot (median, first and third quartiles, range) of the infarct volume data of Table 3, displayed with the scatter plot of raw data.
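The quantities drawn in such a plot can be computed directly, as the following sketch suggests. The data are hypothetical (not the values of Table 3), and the quartiles follow the default convention of Python's `statistics.quantiles`; plotting packages may use slightly different quartile definitions, so the box edges in a given figure can differ marginally.

```python
from statistics import quantiles

# Hypothetical infarct volumes in mm^3 (illustrative only, NOT the data of Table 3)
volumes = [98.0, 110.0, 121.0, 135.0, 142.0, 150.0, 167.0]


def five_number_summary(values):
    """Minimum, first quartile, median, third quartile, and maximum.

    These are the values shown by a box-and-whisker plot whose whiskers
    extend to the range of the data.
    """
    q1, median, q3 = quantiles(values, n=4)  # cut points splitting the data into four groups
    return {"min": min(values), "q1": q1, "median": median,
            "q3": q3, "max": max(values)}


if __name__ == "__main__":
    for name, value in five_number_summary(volumes).items():
        print(f"{name}: {value}")
```

The box spans the first to the third quartile, the line inside the box marks the median, and the whiskers in this variant reach to the minimum and maximum.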

Confirmatory Data Analysis

A properly designed experiment in which the hypotheses are stated and type I and II error considerations as well as the plan for statistical analysis are specified in advance—and ideally published later—allows a confirmatory analysis. In such experiments, the key hypothesis of interest follows directly from the experiment's primary objective, which is always predefined, and is the hypothesis that is subsequently tested when the experiment is complete.

For this purpose, statistical tests are used. Statistical tests are constructed on the basis of a null hypothesis, which states that no treatment difference exists. More formally, the null hypothesis (H0) states that there is no difference between the two groups, whereas the alternative hypothesis (H1) states that there is a difference, which is what we usually state in our biological hypothesis.

The two-sample

Here

For our data in Table 3, assuming unequal variances in each group, we obtain a

It is also desirable to have an estimate of effect size. This is given by δ = 135.0 − 98.2 = 36.8, the difference in mean infarct volume between the two treatments. In addition, a 95% confidence interval can be given for this estimate of effect, which leads to a 95% CI (20.1, 53.5) based on the

Conclusions

William Sealy Gosset (1876 to 1937), the eminent statistician who under the pseudonym ‘Student’ published the

Footnotes

Acknowledgements

The work of PS is supported by the Deutsche Forschungsgemeinschaft DFG (Schl 3-1). The work of UD is supported by the European Union's Seventh Framework Programme (FP7/2008–2013) under grant agreements n° 201024 and n° 202213 (European Stroke Network), and the German Ministry for Health and Education (BMBF).

The authors declare no conflict of interest.