Abstract

This paper addresses a local environment recognition system for obstacle avoidance. In vision systems, obstacles that are located beyond the Field of View (FOV) cannot be detected precisely. To deal with the FOV problem, we propose a 3D Panoramic Environment Map (PEM) using a Modified SURF algorithm (MSURF). Moreover, in order to decide the avoidance direction and motion automatically, we also propose a Complexity Measure (CM) and Fuzzy-Logic-based Avoidance Motion Selector (FL-AMS). The CM is utilized to decide an avoidance direction for obstacles. The avoidance motion is determined using FL-AMS, which considers environmental conditions such as the size of obstacles and available space. The proposed system is applied to a humanoid robot built by the authors. The results of the experiment show that the proposed method can be effectively applied to a practical environment.

Keywords

1. Introduction

The study of humanoid robotics has recently evolved into an active area of research and development. Studies have been published in many related areas of research, such as autonomous walking, obstacle avoidance, stepping over obstacles, and walking up and down slopes and stairs. Yagi and Lumelsky [1] presented a robot that adjusts the length of its steps until it reaches an obstacle, depending on the distance to the closest obstacle in the direction of motion. If the size of the obstacle is small, the robot steps over the obstacle; if it is too tall to step over, the robot starts sidestepping until it clears the obstacle. Obviously, the decision to sidestep left or right is a pre-programmed one. Kuffner et al. [2] presented a footstep planning algorithm based on game theory that takes into account the global positioning of obstacles in the environment. Chestnutt et al. [3] and Michel et al. [4] presented vision-guided foot planning to avoid obstacles using Asimo Honda. These systems get environment information from a top-down view installed above the humanoid robot. Stasse et al. [5] and Kanehiro et al. [6] presented a stereo-vision-based locomotion planning algorithm that can modify the robot's waist height and upper body posture according to the size of the available space. Ayaz et al. [7] suggested a footstep approach suited to cluttered environments using a footstep planning algorithm that depends on the obstacle conditions. Gutmann et al. [8] suggested a modular architecture for humanoid robot navigation consisting of perception, control, and planning layers. The existing methods mentioned above use a global path planner that knows all the information on the walking environment. Global path planners, which provide information regarding the walking path and obstacles, have been used to guide humanoid robots to pre-defined goal positions [3, 8]. However, this assumption that the path planner knows all everything about the walking environment in advance is not appropriate if a humanoid robot walks through an unknown environment. Recently, local environment recognition systems have been studied. Wong et al. have proposed path planning systems using IR-sensor-based fuzzy controllers [19] and vision-based fuzzy controllers [20]. However, these systems do not supply decisions for avoidance direction and the first system does not provide accurate environment information due to the error of the IR sensor. Moreover, the vision-based fuzzy controller has problems with obstacles beyond the FOV and multi-obstacle avoidance.

Therefore, in this study, we focus on a local environment system for obstacle avoidance using a 3D-vision system. In particular, we address the Field of View (FOV) problem. Because the FOV problem occurs whenever humanoid robots meet obstacles that are too large, humanoid robots cannot precisely estimate the obstacle size and decide the appropriate motion. Therefore, we propose a Panoramic Environment Map (PEM) using a Modified Speeded-Up Robust Feature (MSURF). The conventional SURF [9] has weaknesses with respect to rotation and viewpoint change [10]; therefore, we modified the descriptor of the SURF algorithm by replacing the gradient-based method with a Modified Discrete Gaussian-Hermite Moment (MDGHM) method [11].

In [20], the vision-based fuzzy controller contained a fixed rotation angle according to the avoidance direction, which is inefficient when obstacles are of varying sizes. In this case, the humanoid robot finds it hard to escape various obstacles using only rotation motions with a fixed rotation angle. Therefore, the united avoidance motions of the humanoid robot need to be divided into avoidance direction and avoidance motion in terms of efficiency and adaptability of obstacle avoidance in an unknown walking environment. To achieve this, we propose a Complexity Measure (CM) and Fuzzy-Logic-based Avoidance Motion Selector (FL-AMS). The CM calculates the complexity of avoidance direction so that a humanoid robot can decide the avoidance direction by itself. The CM values measure the threat to the walking humanoid robot. A high CM value means that the walking environment for the humanoid robot is complex and difficult. As a result, the robot avoids the obstacle by walking in the direction with a lower CM value in terms of efficiency of motion. In addition, we also propose the FL-AMS method to avoid obstacles using environment information. The fuzzy-logic-based control method does not require a mathematical model and has the ability to approximate nonlinear systems. In addition, it can be easily implemented and extended without additional computational cost. In order to specify various motions in the robot, we define the avoidance motion as a combination of two motions based on four basic motions: sidestep walking (S), forward walking (F), rotation walking (R), and turning (T). The FL-AMS determines the optimal avoidance motion according to the environment information extracted from the PEM.

The remainder of this paper is organized as follows. In Section 2, we introduce the local environment recognition system. Section 3 gives the results of experiments to verify the performance of the proposed system. Section 4 concludes the paper by presenting contributions and plans for future work.

2. Local Environment Recognition System Using Modified SURF-Based 3D Panoramic Environment Map

To overcome the FOV problem, we generated a 3D Panoramic Environment Map (PEM) using a MDGHM-based SURF algorithm. From the PEM, we can obtain information about obstacles that exist beyond the limited FOV. Then, environment information, such as the location or sizes of obstacles, is extracted. Finally, avoidance motion planning, including avoidance direction and avoidance motion selection, is performed. Detailed methods are presented in the following subsections.

2.1 Architecture of Overall System

The overall local environment recognition system architecture is illustrated in Figure 1. The system largely consists of two parts: (1) PEM generation and extraction of environment information, and (2) determination of avoidance direction and avoidance motion. In the first part, we use an MSURF algorithm to generate the PEM. The MSURF algorithm has a modified version of the descriptor stage in the SURF algorithm. In [9], SURF was shown to be sensitive to rotation and viewpoint change caused by the gradient method. Therefore, we modified the conventional SURF using a moment-based method. The MSURF-based PEM generation gives environment information more precisely. To avoid obstacles in the determination of avoidance direction and avoidance motion, the robot calculates the CM values for the obstacles extracted from the PEM and determines the avoidance direction. In addition, we propose FL-AMS to select the optimal avoidance motion in various walking environments.

Generation of Panoramic Environment Map

2.2 Modified DGHM-based SURF Algorithm

2.2.1 SURF Algorithm

With respect to processing speed, the SURF algorithm is a very efficient instrument to accomplish goals such as searching for point correspondence between distinct images. Its properties, e.g., repeatability, distinctiveness, and robustness, are stronger than those of previous, similar tools. The process of finding point correspondence between distinct images is composed of three main parts: first, interest point detection, the main component of the overall process; second, feature vector construction from existing interest points employing information about the local neighbourhood; and third, the matching of interest point pairs by relevant descriptors from each distinct image [9]. SURF strictly takes a role in only two parts: the detector and descriptor parts.

2.2.1.1 Detection

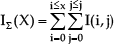

At the detection step, the major characteristic of SURF is a higher speed than previous algorithms. In order to obtain this, the key concept is an integral image adapted to the SURF algorithm. The integral image IΣ(X) is computed by the summation of all pixels building the input image I at position X = (x,y)T as

The processing time of the convolution calculation can be reduced sharply using box filtering. Figure 2 shows the integral image computation.

Integral Image Computation

Another key component in the detection stage of SURF is the Hessian matrix that decides the interest points, i.e., features, required. It is made up of a box filter approximation of a convolution result between the Gaussian second order partial derivatives (x-axis and y-axis) and the image I.

Within a given image, the determinant of the Hessian matrix also plays a critical role when choosing interest points at each iteration. This is performed through non-maximum suppression (NMS) [9]. In addition, to extract interest points from an image, a Gaussian approximation filter is adapted to each level of filter size included in a scale space.

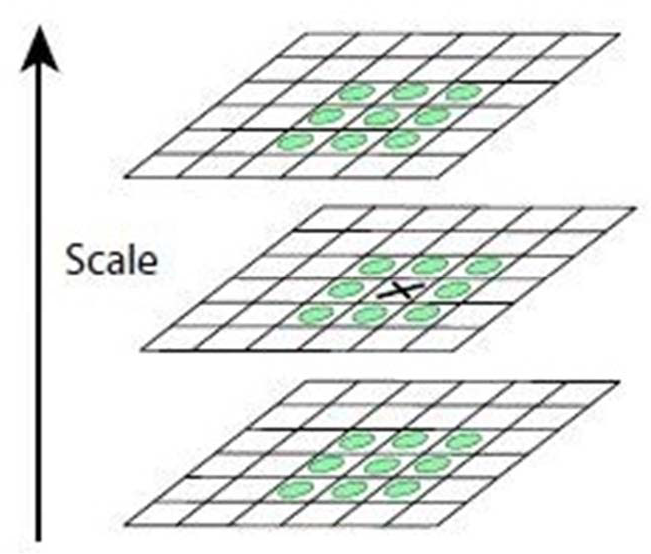

The scale space representation concept was also used in SIFT (Scale-Invariant Feature Transform); however, the scale space representation used in SURF is more computationally efficient and conserves more high-frequency components with no aliasing. Figure 3 shows the scale space representation of the SURF algorithm.

Scale space representation of the SURF algorithm

As shown in Figure 3, by enlarging the size of the box filter instead of scaling down images, SURF operates with better speed than other feature detectors using the more prevalent concept of scale space. The final stage of detecting interest points is to compute NSM (non-maximum suppression) over the neighbourhood at three scales. To ensure that one interest point in the scale is the chosen feature, 3 × 3 × 3 neighbourhood pixels are processed, then one point selected by NMS becomes the keypoint, as shown in Figure 4.

Feature extraction by NSM

2.2.1.2 Description

In order to assign invariability to the interest points, every interest point found by the detector is assigned a descriptor. When modifications happen to the scene, e.g., viewpoint angle change, scale change, blur increase, image rotation, image blur, compression, or illumination change, the descriptor of an interest point can be employed to identify correspondences between the original and transformed image. In SURF, this description is composed of two parts: orientation assignment and a Haar wavelet responses-based descriptor.

In orientation assignment, image orientation is specifically used to make an interest point invariable with respect to image rotation. Orientation is computed by detecting the dominant vector of the summation of the Gaussian-weighted Haar wavelet responses under a sliding window circle region spit into regions in increments of π/3 [9].

To calculate the descriptor of the interest point, the selected orientation and the square region centred around the interest point are needed. In the square region about the interest point, a 4 × 4 split square sub-region is assigned. This acts as the base stage for computing the horizontal Haar wavelet response, dx, and the vertical Haar wavelet response, dy. Then the dx and dy from each of the sub-regions are used to form the vector v=(Σdx, Σdy, Σ|dx|, Σ|dy|), called a descriptor. Some restrictions can be applied to the vector v to make extended versions of SURF, i.e., SURF-36 and SURF-128.

2.2.2 Modified DGHM (MDGHM)

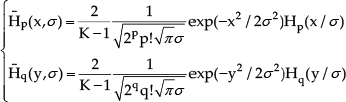

The DGHM, including the Hermite polynomial and the Gaussian-Hermite Moment (GHM), was introduced in [12]. The pth degree Hermite polynomial, one of the orthogonal polynomials, is given as follows:

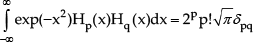

Hermite polynomials satisfy the following orthogonality condition with respect to the weight function exp(–x2) as

where δpq is the Kronecker delta.

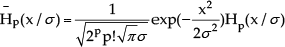

In order to obtain the orthonormal version, the normalized Hermite polynomial Hp(x) is calculated as

Gaussian-Hermite functions Hp(x/σ) can be calculated by replacing x with x / σ in (4).

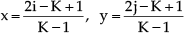

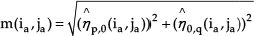

In order to compute the moments for a digital image I(i,j) whose size is K×K [0≤i, j≤K − 1], the coordinate transformation over the square [–1≤x, y≤1] is performed using

The Discrete Gaussian-Hermite functions can be calculated using equidistance sampling as a substitute for the following equation.

From (5) and (7), the DGHM ηp,q, which is a discrete version of the GHM, can be derived as

The DGHM is a global feature representation method for a square image in the discrete domain. Therefore, we need to modify the conventional DGHM to represent the local feature of a non-square image. The Modified DGHM (MDGHM), proposed by Kang in [11], is the DGHM of a mask with sampling intervals. Let I(i,j) be a digital 2D image and let t(u,v) be a mask whose size is M×N [0 ≤ u ≤ M − 1, 0 ≤ v ≤ N − 1]. The maximum number of samples, kM, kN, are calculated as follows:

where mM and mN are sampling intervals on the u – axis and v – axis respectively. The pixel values of the mask t(u, v)(i.j) located at an arbitrary point on the input image I(i, j) are obtained as

For the mask t(u, v)(i,j), the coordinates are transformed such that −1 ≤ x, y ≤ 1, as follows:

and the Discrete Gaussian-Hermite functions of the mask t(u, v)(i,j) can be written as follows:

From (10) and (12), the MDGHM with sampling intervals at arbitrary point on the input image I(i,j) can be written as follows:

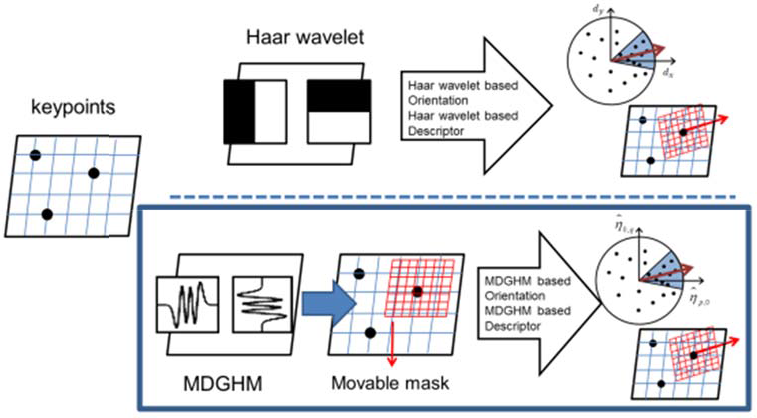

2.2.3 Modified SURF (MSURF) Algorithm

In [10], SURF was shown to be sensitive to conditions such as rotation and viewpoint changes, stemming from the gradient method used in the description part of SURF. To solve this problem and improve the matching accuracy of the SURF algorithm, we propose a Modified SURF (MSURF) algorithm. Figure 5 presents an overview of the proposed MSURF algorithm.

Overview of MSURF algorithm

As shown in Figure 5, we use MDGHM for orientation assignment and description in conventional SURF by calculating an MDGHM-based orientation and descriptor.

For orientation assignment, we replace the gradient-based, dx, dy filters based on the Haar wavelet response with MDGHM to represent the feature information more precisely. Figure 6 shows an example of the MDGHM-based box filter whose size is 2s × 2s.

Examples of MDGHM-based box filter

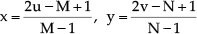

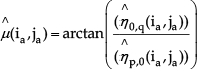

Let (ia, ja) be the locations of a keypoint in a digital image ID, where a is the index of the keypoints. From (13), the MDGHM-based box-filter response at (ia, ja) can therefore be calculated as follows:

To extract the dominant direction, we calculate the magnitude m(ia, ja) and angle μ(ia, ja) as follows:

The size of the MDGHM-based box filter is defined as follows:

where p and q are the orders of the MDGHM and σ denotes the standard deviation. All keypoints with their own MDGHM-based magnitude and orientation are used when structuring the dominant orientation in the description step of conventional SURF. The rest of the description step of the SURF descriptor is similar to conventional SURF, except that we replace the gradient magnitude and orientation of the descriptor with the MDGHM-based magnitude and orientation.

2.3 PEM generation based on the MSURF-SURF algorithm and extraction of Environment Information

2.3.1 PEM generation based on the MSURF-SURF algorithm

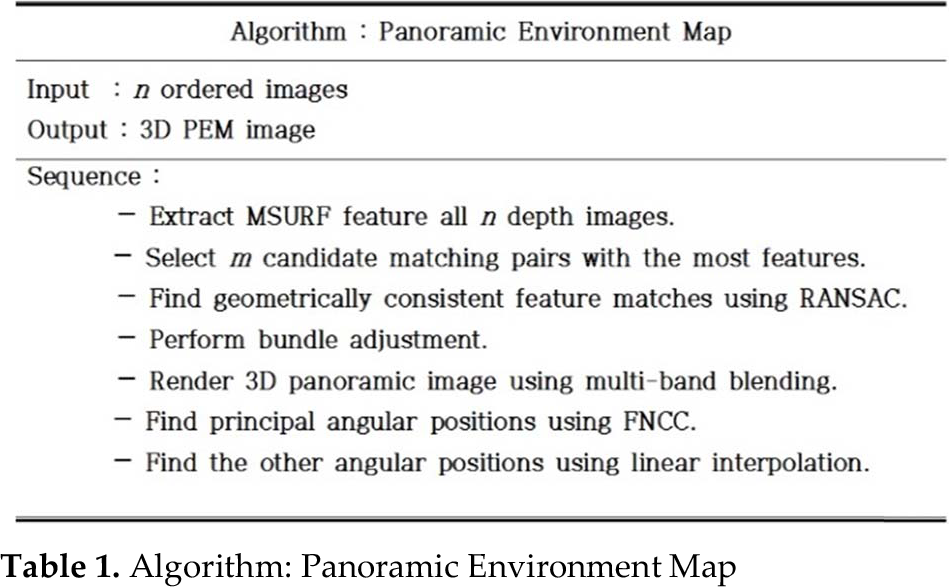

As mentioned above, because the FOV problem occurs whenever humanoid robots meet obstacles that are too large, humanoid robots cannot precisely estimate the obstacle's size and decide the appropriate reaction. To solve this problem, we propose the Panoramic Environment Map (PEM) using the MSURF algorithm. Table 1 shows the overall procedure of PEM generation. The procedure of PEM generation is similar to [13] until the fifth step in Table 1.

Algorithm: Panoramic Environment Map

The first step is to extract and match MSURF features between two images. Since MSURF features are more invariant to changes in scale, rotation, viewpoint, and illumination than those of traditional SURF, our method can handle images with varying orientation and zoom. To obtain a good solution for the image geometry at the second stage, it is only necessary to select a small number of matching pairs to stitch together a panoramic image using the invariant features of overlapping images. In the third stage, we only consider the n images that have the greatest number of feature matches to the current image for potential image matches (we use n = 9). In this stage, we use RANSAC to select a set of inliers that are compatible with a homography between the images. In the fourth stage, given a set of geometrically consistent matches between the images, we use bundle adjustment [13] to provide solutions for all of the camera parameters jointly. This is an essential step, since concatenation of pairwise homographies causes accumulated errors and disregards multiple constraints between images, e.g., that the ends of a panorama should join up. Images are added to the bundle adjuster one by one, with the best-matching image being added at each step. Then the parameters are updated using a least squares framework [13].

Ideally, each sample (pixel) along a ray would have the same intensity in every image that it intersects, but in reality this is not the case. Even after gain compensation, some image edges are still visible due to a number of un-modelled effects, such as vignetting, where intensity decreases towards the edge of the image, parallax effects due to unwanted motion of the optical centre, misregistration errors due to mismodelling of the camera, radial distortion, and so on. Because of this, a good blending strategy is important. In the final stage, we use the multi-band blending algorithm to solve the problem, which blends low frequencies over a large spatial range and high frequencies over a short range [13].

Figure 7 shows an example of the 3D panoramic images using nine 3D depth images. Figure 7(a) shows the input images gathered from the 3D TOF camera installed on the humanoid robot and Figure 7(b) image shows the resulting generated 3D panoramic image.

The 3D panoramic image generation: (a) nine input images obtained from 3D TOF camera installed on the Humanoid Robot, and (b) generated 3D panoramic image

From the 3D panoramic image, we can extract the obstacles and their information. Let θ and φ be angles on the xz and yz planes, respectively. The key angular position for the nine 3D TOF images in the 3D panoramic image can be estimated using Fast Normalized Cross Correlation (FNCC) [14] as follows:

where f is the 3D panoramic image, t is an input depth image from the TOF sensor, t̄ is the mean of t, and fu,v̄ is the mean of f(x,y) in the region under the depth image t. Each corresponded point is considered to have an angular position from −60 to 60 degrees with intervals of 30 degrees. The angular positions for θ and φ can be calculated from the PEM using linear interpolation as follows:

where

Environment information result from the PEM image

To calculate obstacle information such as size or location, we need to transform from spherical to Cartesian coordinates.

2.3.2 Extraction of Environment Information

In order to extract the obstacles, we need to detect blobs in the 3D PEM. Blob detection is preceded by a Connected Component Labelling (CCL) algorithm [21]. Before blob detection, preprocessing such as level-set division is carried out because CCL operates on binary images. Let I(xi,yi,zi), i = 1, …, N be an input 3D PEM with N pixels. The level-set is divided into m bins that affect the interval of each level-set. First, we define maximum available distance dmax = 2.5 m and m = 50. From the distance of each pixel zi, level-set is defined as follows:

where k = 0, …, m − 1, j = floor(floor(zi)/Δd), and Δd = dmax / m. From the level-set, obstacles are indexed by CCL as follows:

In (22), o1k is the l th obstacle in the kth level-set extracted by CCL. These indexed obstacles are used as the input data for decisions regarding avoidance direction and obstacle analysis. From (22), we can calculate the width, distance, and location of the obstacles. Figure 9 shows the parameters used to calculate the location and width for each extracted obstacle, including the angle, size, and distance between obstacles and the distance between the robot and the obstacle.

Environment information: location and width calculation

Firstly, the perpendicular distance dx1k is calculated as follows:

From (23), the maximum width of FOV wFOV at the perpendicular distance dx1k can be calculated as follows:

Using (24), the width of the obstacles w1k can be calculated by using the ratio between the number of pixels of the FOV and the number of pixels of the obstacle o1k as follows:

We can also calculate the angle θk1 between the centre of the robot and the centre of an obstacle.

2.4 Determination of Avoidance Direction and Avoidance Motion

2.4.1 Determination of Avoidance Direction Using the Complexity Measure (CM)

The environment information from obstacle extraction and size estimation is used as the input data to decide the best direction for avoiding obstacles. To decide the avoidance direction, we propose the complexity measure (CM) as follows:

As shown in (26), the CM is determined by three factors: the distance between the robot and the obstacle, the width and the height of the obstacle, and the angle of the obstacle from the robot's current direction. The CM has a large value in cases where the robot is close to the obstacle, where the angle from the centre of the PEM to the obstacle is small, and where the obstacle is large in size. A large CM value indicates that the environment is too complex for the robot to avoid the obstacle. Therefore, we get the final avoidance decision from the determination value D as follows:

In (27), the CMs for obstacles located on the left side of the robot have negative values, and the CMs for obstacles located on the right side of the robot have positive values. Therefore, the avoidance direction can be determined by the summation of all CMs over all obstacles. If D is positive, the robot turns to the left, and if D is negative, the robot turns to the right.

2.4.2 Decision of Avoidance Motion Using a Fuzzy-Logic-Based Avoidance Motion Selector (FL-AMS)

After choosing the direction to take to avoid the obstacle, a motion type must be selected based on the environment conditions. To achieve this purpose, we need an intelligent control approach. Fuzzy logic is one such approach that does not require mathematical modelling and has the ability to approximate nonlinear systems. In addition, it can be easily implemented and extended without additional computational cost. Therefore, we propose a fuzzy-logic-based avoidance motion selector (FL-AMS).

In order to perform fuzzification [15], the difference wi between the obstacle width and robot width and the space difference Si between the avoidance space width and the robot width are set up as the input variables. Figure 10 shows the input and output membership functions. As shown in Figure 10, triangular-type membership functions and fuzzy-singleton-type membership functions are used for the input and output variables, respectively. The term sets of the input and output variables are selected as follows:

Membership functions of fuzzy system: (a) Input variable wi, (b) input variable si, and (c) output variable fi

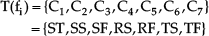

where fuzzy sets A1, A2, A3, A4, and A5 are respectively denoted as Negative Large (NL), Negative Small (NS), Zero (Z), Positive Small (PS), and Positive Large (PL) for the input variable wi. Fuzzy sets B1, B2, B3, B4, and B5 are also respectively denoted as Negative Large (NL), Negative Small (NS), Zero (Z), Positive Small (PS), and Positive Large (PL) for the input variable di. Fuzzy sets C1, C2, C3, C4, C5, C6, and C7 are respectively denoted as Stop (ST), Slip step + Slip step (SS), Slip step + Forward step (SF), Rotation step + Slip step (RS), Rotation step + Forward step (RF), Turning step + Slip step (TS), and Turning step + Forward step (TF). All of these are combinations of any two motions: Slip step (S), Forward step (F), Rotation step (R), Turning step (T), but excluding the Stop motion (ST) for the output variable fi.

To determine the final output of the FL-AMS, we use the weighted average method described by:

where v(Cf(j1,j2)) is the crisp value of the fuzzy set, Cf(j1,j2). The function u(j1, j2) is the fire strength of the rule R(j1, j2) and can be described by:

Based on the width difference wi between obstacle width and robot width and space difference si between avoidance space width and robot width, the seven evaluation values fi, i ∈ {1,2,3,4,5,6,7} for the seven avoidance motions can be determined by the FL-AMS.

Walking motion types consist of seven motions, SS, SF, RS, RF, TS, and TF, which are combinations of two of the motions sidestep walking (S), forward walking (F), rotation walking (R), and turning (T), excluding the stop motion (ST). Table 2 shows the fuzzy rule table for the walking motions.

Fuzzy rule table for FL-AMS

3. Experimental Results for Intelligent Obstacle Avoidance

We carried out experiments to investigate the effectiveness of local environment recognition using the proposed MSURF algorithm. Our experiment largely consists of accuracy testing for environment information and the success rate of obstacle avoidance for the designed humanoid robot. A detailed description of these is presented below.

3.1 Humanoid Robot Design

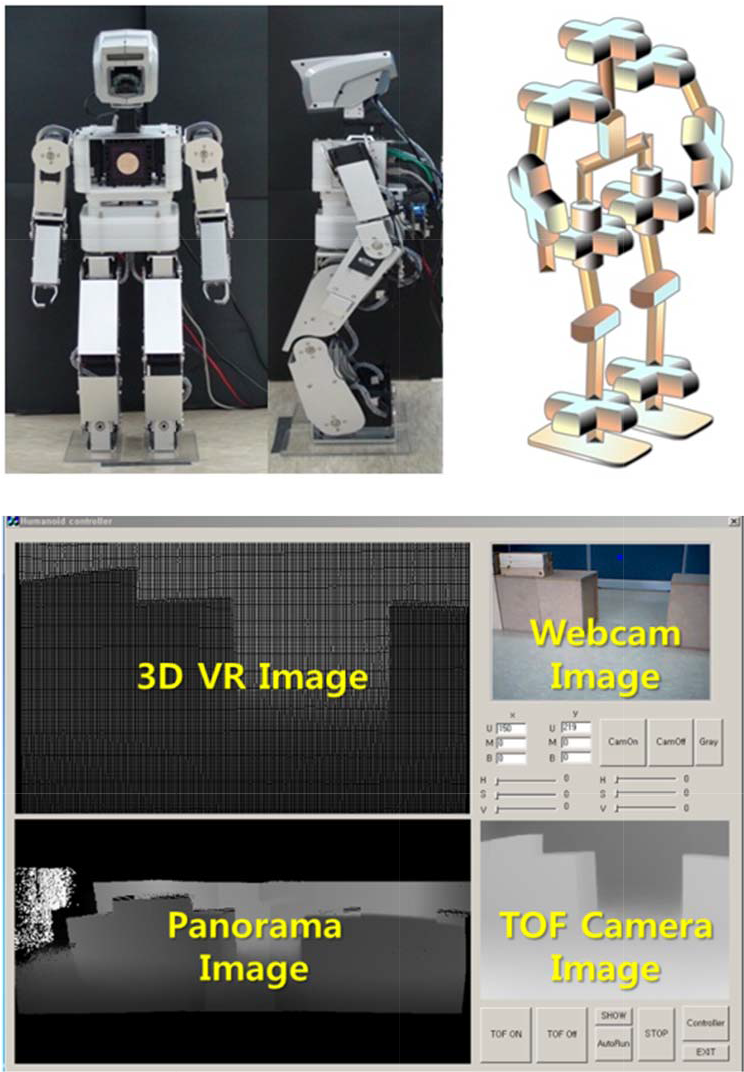

For this experiment, we designed and built a humanoid robot. The overall construction of our humanoid robot is shown in Figure 11.

Humanoid Robot Design and GUI

The height of the humanoid robot is about 600 mm. Each joint is driven by the RC servomotor, which consists of a DC motor, gear, and simple controller. Our humanoid robot also has 23 degrees of freedom (DOF) and two different vision systems, a 3D TOF camera and a webcam. As shown in Figure 11, the GUI largely consists of four parts: the 3D VR image, the webcam image, the TOF camera image, and the 3D PEM. The designed humanoid robot system has two vision systems: a webcam and a 3D TOF camera, which are shown on the webcam image part and TOF camera image part of the GUI, respectively. The 3D PEM image part shows the PEM generated by using the MSURF algorithm. Finally, the 3D VR image part, implemented using the OpenGL library [18], shows the analysed environmental information. Table 3 shows the specifications of our humanoid robot system.

Specification of the designed humanoid robot

3.2 Experimental Setup

We implemented our obstacle avoidance approach using the humanoid robot with a TOF camera. The vision system on the robot's head provides disparity images (176 × 144) at 30fps. For testing our perception method, we set up an obstacle environment containing obstacles and avoidance spaces of different sizes.

As shown in Figure 12, the experiment space was 2.5 m × 3 m and there were two types of artificial obstacles which had dimensions of 32 cm (W) × 32 cm (H) × 24 cm (D).

Experiment environment

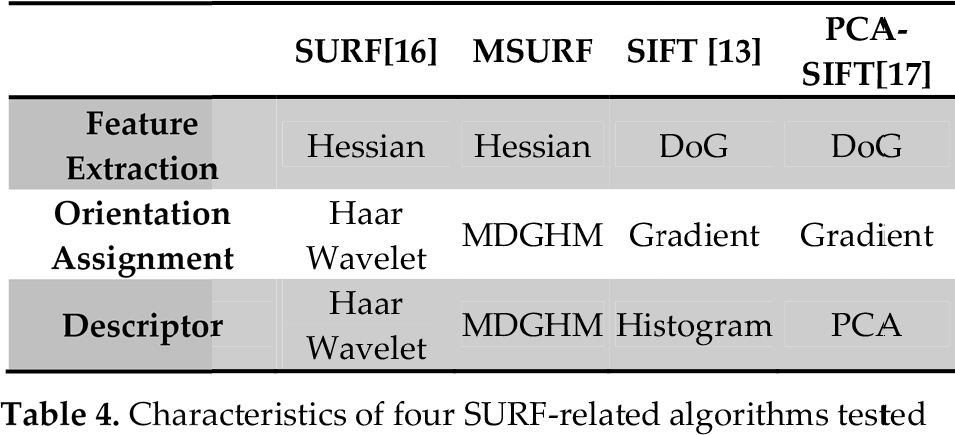

These were used in various combinations so that we could make obstacles of different widths and spacing. We evaluated four related algorithms: a SIFT-based method, a PCA-SIFT-based method, a conventional SURF-based method, and the proposed MSURF-based method, as tabulated in Table 4. We set the MSURF parameters to p = q = 3, σ = 0.3, mM = mN = 1 and used a window size of 5s×5s.

Characteristics of four SURF-related algorithms tested

3.3 Experiment Results for Environment Information

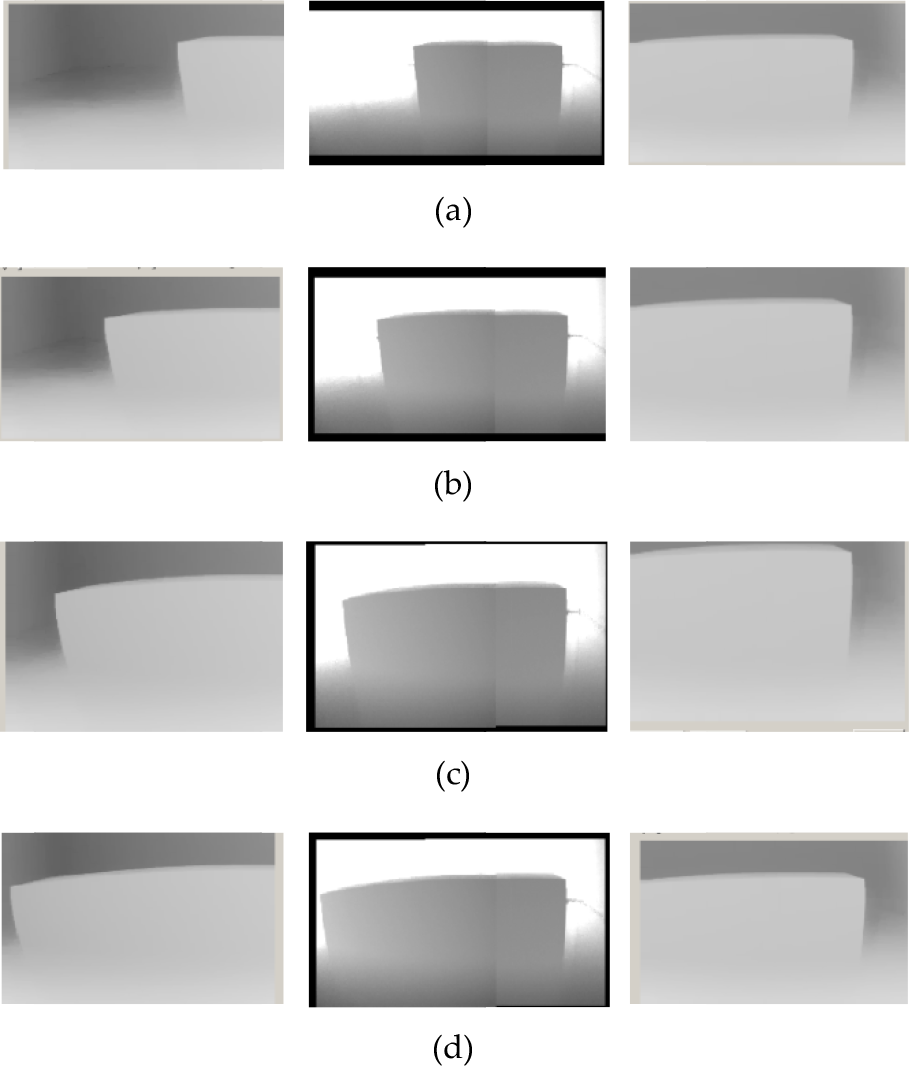

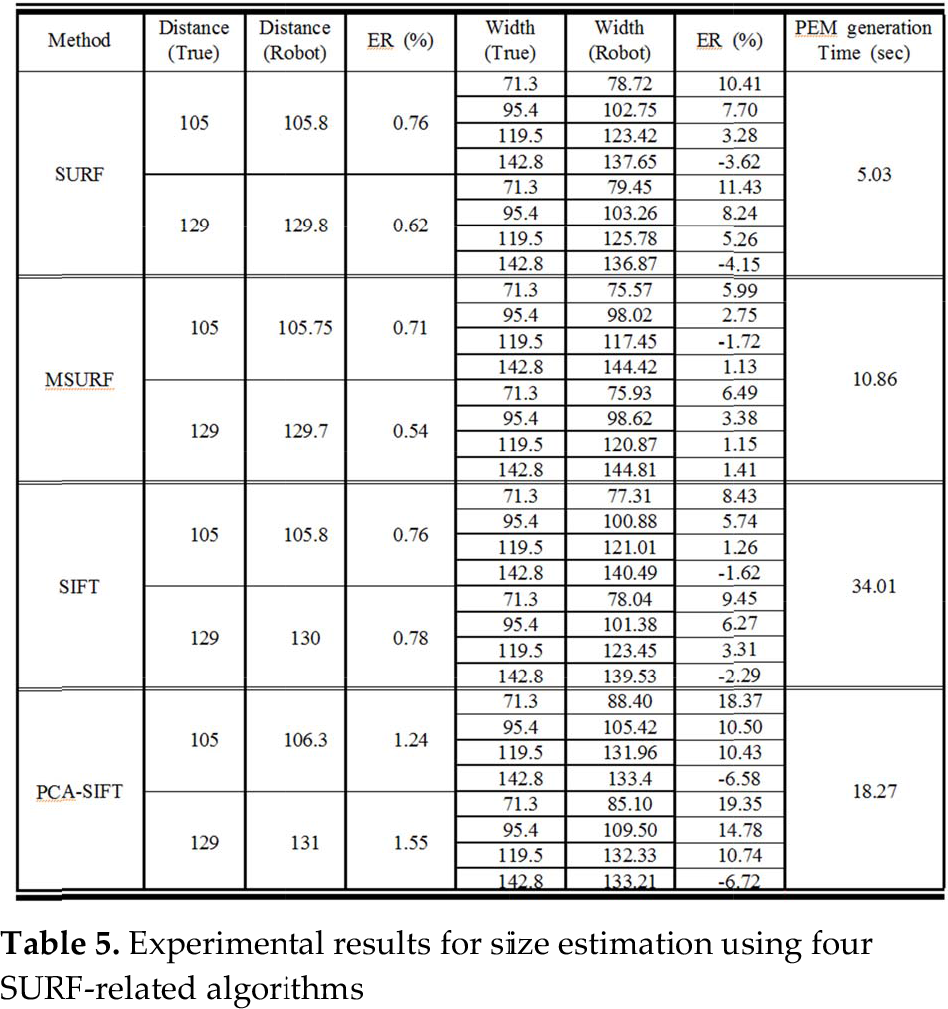

To estimate obstacle information, we proposed the PEM image using the MDGHM-SURF algorithm. In this section, we tested the accuracy of the obstacle size estimation of the proposed method and compared the results with those of the PEM image using the SIFT algorithm. In this experiment, we tested the performance of size estimation for four types of obstacles with widths of 71.3 cm, 95.4 cm, 119.5 cm, and 142.8 cm, respectively, 20 times for each width.

Table 5 shows the results for size estimation for various distances and sizes using the four SURF-related algorithms. In Table 5, the results for the estimated distance, the estimated width of obstacles, and PEM generation times were averaged over 20 trials. As shown in Table 5, although the generation time for the MSURF-based method is a little slower than the SURF-based method, the MSURF-based method shows better performance than the others with respect to the size estimation of the obstacles. Figure 13 shows some example PEM images using the MSURF algorithm.

Example PEM images made using MSURF for an obstacle at 105 cm distance: widths of obstacle are (a) 71.3 cm, (b) 95.4 cm, (c) 119.5 cm, and (d) 142.8 cm

Experimental results for size estimation using four SURF-related algorithms

3.4 Experiment Results for Decisions of Avoidance Direction and Avoidance Motion

We tested our proposed method under various environmental conditions. The humanoid robot was programmed to walk and stop when it met an obstacle within a fixed or defined distance. Then the humanoid robot determined the decision factors needed to avoid obstacles. We carried out the experiments regarding the decision and control performance under various obstacle conditions.

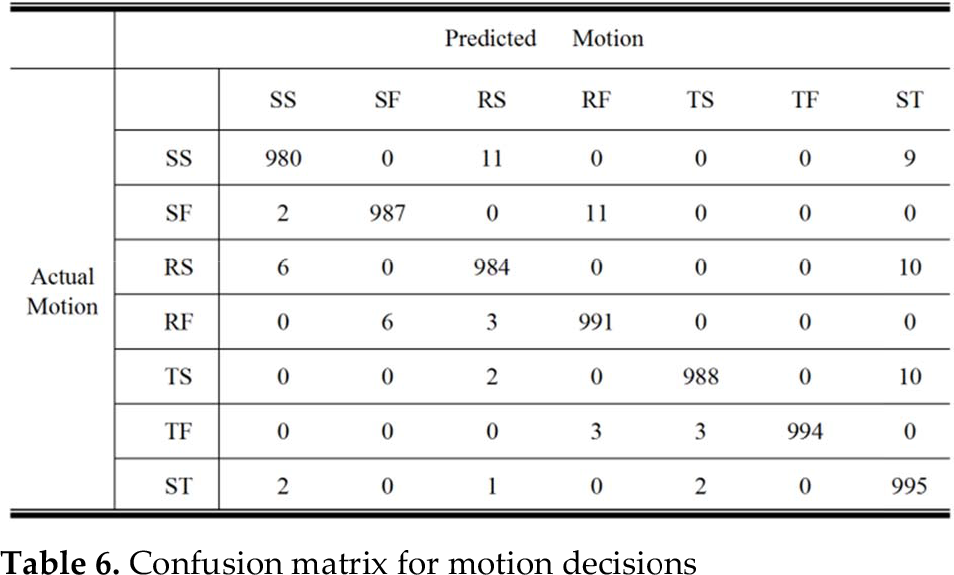

First, we verified the performance of the FL-AMS method proposed in this paper for walking obstacle avoidance using the humanoid robot. In Table 6, a confusion matrix shows that the proposed algorithm confidently predicts the correct motion decision. In Table 6, one thousand random values consisting of the obstacle size and the avoidance space were used as the input, and the outputs are tabulated for each situation.

Confusion matrix for motion decisions

As shown in Table 6, the FL-AMS shows good decision accuracies, 98% (980/1000) for SS motion, 98.7% (987/1000) for SF motion, 98.4% (984/1000) for RS motion, 99.1% (991/1000) for RF motion, 98.8% (988/1000) for TS motion, 99.4% (994/1000) for TF motion, and 99.5% (995/1000) for ST motion.

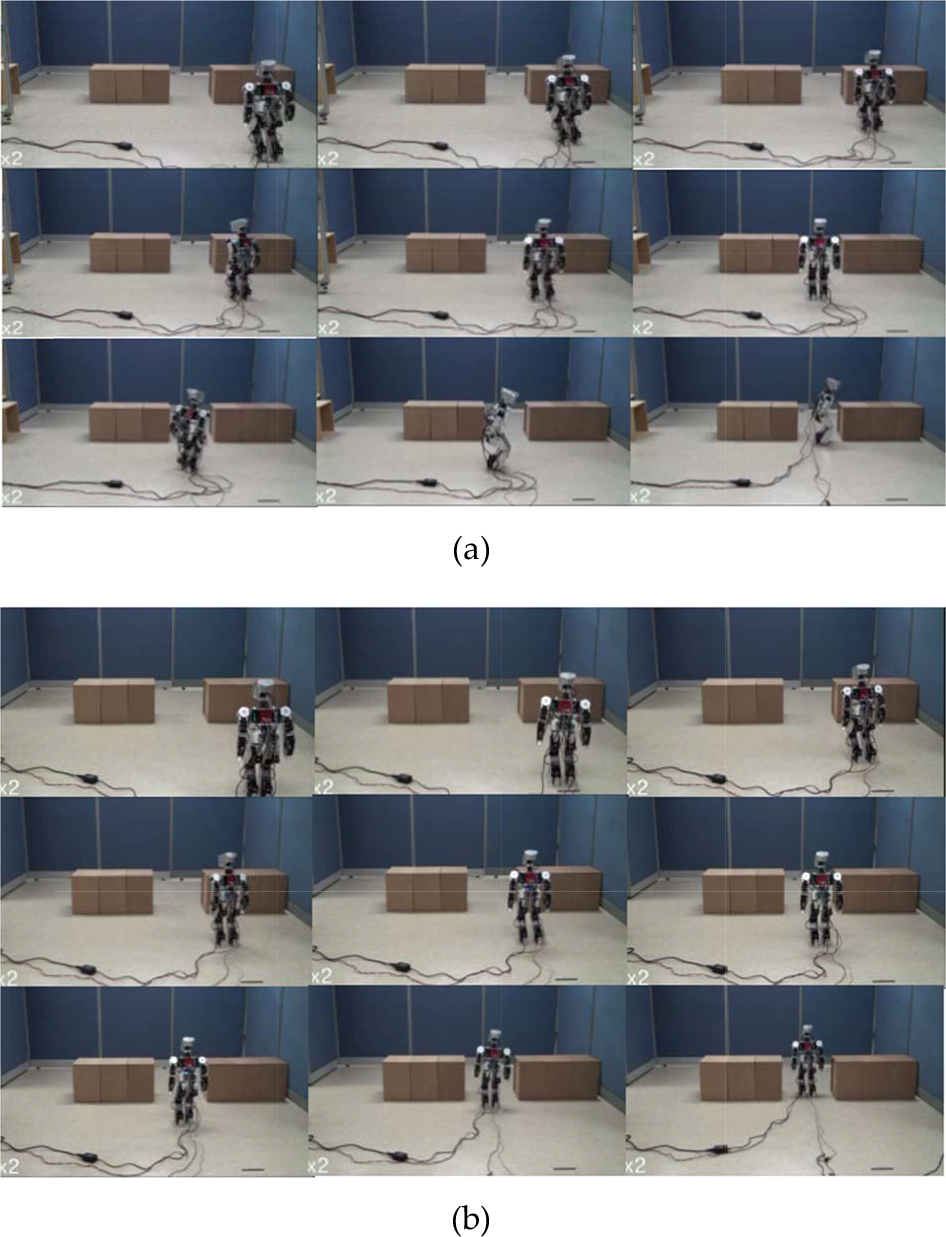

Secondly, we tested the decision and control performance under various obstacle conditions: obstacle width and avoidance space.

The experimental results are shown in Table 7, Figure 14, and Figure 15. We tested the obstacle avoidance walk of the humanoid robot under each condition 50 times. If the humanoid robot arrived at the destination we defined for it, the walking test succeeded. On the other hand, if the humanoid robot collided with an obstacle or got lost during walking, it failed. As shown in Table 7, the proposed method has a high success rate even without an optimal control method for walking. To improve the success rate of a humanoid robot system, a vision-based adaptive walking control system is needed for future work.

Experimental Results under various obstacle conditions: (a) SS motion type, (b) SF motion type

Experimental Results under various obstacle conditions: (a) RS motion type, (b) RF motion type

Experimental results for obstacle avoidance

Figure 14 illustrates a resulting image sequence when using SS and SF motion types, and Figure 15 presents an image sequence that resulted when using RS and RF motion types. In the cases shown in Figures 14 and 15, the results show good avoidance performance because the widths of the obstacles are reasonable for the humanoid robot.

4. Conclusion

In this paper, we introduced the local environment recognition system using an MSURF-based PEM for obstacle avoidance of a humanoid robot. The proposed method is based on a PEM image using an MSIFT algorithm, vision-based CM, and FL-AMS. The PEM image method can be a solution for overcoming the FOV problem, which may be a major cause of incorrect decisions in walking control and perception of environment.

The vision-based CM system decides the avoidance direction for a humanoid robot to avoid obstacles by considering environment information such as the distribution of obstacles, distance from the humanoid robot to the obstacles, and size of each obstacle.

Finally, the FL-AMS system automatically decides the best avoidance motion depending on the obstacle conditions using the environment information obtained by the PEM. These systems do not need a global path planner with all information regarding the obstacle location and path information in advance. From the experimental results, we believe that our proposed methods will be useful for humanoid robots in real environments.