Abstract

Sensory substitution is a research field of increasing interest with regard to technical, applied and theoretical issues. Among the latter, it is of central interest to understand the form in which humans perceive the environment. Ecological psychology, among other approaches, proposes that we can detect higher-order informational variables (in the sense that they are defined over substantial spatial and temporal intervals) that specify our interaction with the environment. When using a vibrotactile sensory substitution device, it is reasonable to ask if stimulation on the skin may be exploitable to detect higher-order variables. Motivated by this question, a portable vibrotactile sensory substitution device was built, using distance-based information as a source and driving a large number of vibrotactile actuators (72 in the reported version, 120 max). The portable device was designed to explore real environments, allowing natural unrestricted movement for the user while providing contingent real-time vibrotactile information. Two preliminary experiments were performed. In the first one, participants were asked to detect the time to contact of an approaching ball in a simulated (desktop) environment. Reasonable performance was observed in all experimental conditions, including the one with only tactile stimulation. In the second experiment, a portable version of the device was used in a real environment, where participants were asked to hit an approaching ball. Participants were able to coordinate their arm movements with vibrotactile stimulation in appropriate timing. We conclude that vibrotactile flow can be generated by distance-based activation of the actuators and that this stimulation on the skin allows users to perceive time-to-contact related environmental properties.

1. Introduction

The ecological theory of perception was proposed by J. J. Gibson [1] and has been developed for many years [2, 3]. The core of the theory differs from mainstream cognitive theory because it states that to understand perception one should focus on animal-environment systems rather than solely on the animal's brain processing capabilities. The informational arrays that are available in the environment play a crucial role in perception, without the need for symbolic or coding processes. In addition, the objects of perception are units relevant to behaviour (i.e., affordances specified by higher-order informational invariants), not minimal or atomic units that need to be transformed and integrated in a computer-like fashion (e.g., points of light). In coherence with the ecological approach to perception, other approaches such as the sensorimotor account [4] and the embodied cognition approach [5], have highlighted the crucial role of action in perception (something also proposed in [2]). From these viewpoints, our movements (locomotion, head movements, etc.) underlie our visual perception. Perception unfolds within a loop with motor acts that make information detectable. Hence, perception not only guides behaviour, but behaviour also determines perception.

A clear-cut example studied within ecological theory is the example of optical flow, defined as the apparent motion of objects, surfaces and edges in a visual scene, caused by the relative motion between an observer and the scene [6, 7]. Optical flow provides information about environmental properties such as time to contact (TTC), defined as the time remaining for an observer to be in contact with an object if the respective velocities stay constant [8]. TTC may be perceived directly through the detection of a unique variable (τ) that is defined as the ratio of the angular size of an object in the visual field and its rate of expansion [9, 10].
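As a worked illustration of why τ specifies TTC, consider a sketch under a small-angle approximation. The symbols θ (angular size), S (physical size of the object), Z (distance to the observer) and v (constant approach speed) are introduced here for illustration only and are not taken from the cited works.

```latex
\tau(t) = \frac{\theta(t)}{\dot{\theta}(t)}, \qquad
\theta(t) \approx \frac{S}{Z(t)}, \qquad
\dot{Z}(t) = -v
\;\Rightarrow\;
\dot{\theta}(t) \approx \frac{S\,v}{Z(t)^{2}}, \qquad
\tau(t) \approx \frac{S/Z(t)}{S\,v/Z(t)^{2}} = \frac{Z(t)}{v} = \mathrm{TTC}(t)
```

Under these assumptions the object's size S cancels out, so τ can be obtained from the optical pattern alone, without separate estimates of distance or speed.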

Basic research on TTC perception has proven to be useful for explaining applied problems such as risk perception in the decision to merge or not to merge when driving on a highway. The risk level depends on a higher-order property not unlike TTC, perceived by the driver. Moreover, drivers are not aware of explicit relative velocities and the distance between the vehicles. Instead, the decision seems to be made relying on a higher-order variable available from the driver's point of view [11]. Other examples of potentially-exploitable higher-order variables can be found when people estimate the force exerted by a person when pulling an object. In this case, the relationship between velocity, position of articulations and other variables, may be detected as a unique collective variable [12].

Sensory substitution devices are technical artefacts that provide some type of sensory information (distance-related in our case) through some type of stimulation (tactile in our device) different to the usual one. These devices have been used with the aim to help blind people, or to improve perception. Since the sixties, it has been a growing field of research that unites technical innovation and theoretical interest. Since the pioneering work of Bach-y-Rita and colleagues [13–17], many sensory substitution devices have been developed, some of them showing very reasonable results [18]. The classic devices are sight-tactile and sight-auditory [19, 20]. A highly interesting question concerning sensory substitution devices is to what extent they supply higher-order sensory information, that is, whether there is a similarity to optical flow, or whether it is possible to build a device that supplies such information.

Our device uses vibrotactile actuators on the skin. Various vibrotactile sensory-substitution devices have been developed. An example is the first TVSS by Bach-y-Rita et al. [15], which could drive a dense array of actuators attached to a chair. The TVSS coupled information from the environment (light intensity in 400 regions of a camera field) with the activation of the actuators of a 20×20 array of tactors, allowing the users joystick-based control of the camera. Participants identified objects such as chairs, cupboards, telephones, etc. However, the design and size of the early TVSS device did not allow extensive exploration of the environment. Portable TVSSs have later been developed, as well as devices making use of different sensory areas such as the tongue [16]. A difference with our device, however, is that the activity of tactors of the TVSS was a function of light-intensity detected with a camera, whereas our device uses distance-related information. In our device, a Kinect sensor measures distances to surfaces. Distance-related information may turn out to be more robust compared to typically quickly changing light conditions. It is important to highlight that the selection of distance-related stimulation raises the question of to what extent the device should be referred to as a sensory substitution device or as an amodal or multimodal spatial interface [14, 21].

Another example of a sensory substitution device is presented in [22]. This particular sensory substitution device uses a head-mounted Kinect camera and provides online information about different locations of stimulation. The device, however, does not provide vibrotactile flow as the distances are coded into largely arbitrary signals: short bursts for far obstacles and long bursts for nearby obstacles. This information has proven to be useful in way-finding, but it may not be as suitable for the avoidance of quickly approaching obstacles.

In previous work we have shown that users can detect vibrotactile flow with a sensory substitution device [23, 24]. Vibrotactile flow is analogous to optic flow, but within the haptic domain. It is defined as space-time variation of vibrotactile stimulation. Our hypothesis is that by presenting vibrotactile flow and allowing users to move/explore, higher-order informational variables become detectable. This may help users to improve their event perception (e.g., the perception of the TTC of an approaching ball). Actually, tactile visual substitution devices were used by Jansson [25] and Jansson and Brabyn [26] to study a task in which well-trained blind participants batted (via a keyboard) an approaching ball rolling off a table, which they could do with acceptable accuracy rates (cf. [16]). These positive results indicate that one may be able to design devices that present those variables to users that allow successful performance. Variables such as τ, the rate of expansion of vibrotactile stimulation, or changes in vibration frequency are candidates for specifying animal-environment properties. Making such variables detectable may allow: first, a faster use and learning with the device and second, a better perception of the surroundings.

2. Embodiment and sensory substitution

Before turning to a more precise description of our device, in this section we intend to make explicit why the notion of embodiment is relevant for sensory substitution and how higher-order informational variables can be made detectable with such a device. First, we address embodiment and the biological inspiration of the device, in the sense that the camera and hence the stimulation is controlled/modulated through the user's natural movements. Second, we focus on how to provide flow on the skin and on the possibility of adding higher-order variables, such as gradients, beyond the spatial expansion patterns related to τ.

A considerable advantage of using tactile sensory substitution is the under-utilization of tactile perception (with some important exceptions) in everyday locomotion, whereas the auditory modality is constantly demanded in our daily activities: if an auditory device is used, our capacity of perceiving through the auditory system could be diminished. Hence, freeing hands and ears is one goal of the present design, in order not to restrict basic abilities such as hearing and fine-grained hand usage. Attaching the actuators and the camera to the torso and putting the control stage into a backpack, allows a full repertoire of movements. This is an essential feature of the device because perception and action depend on active exploration and sampling. A deeper theoretical discussion of the role of action with a distance-based sensory substitution device may be found in [24], with specific regard to the sensorimotor hypothesis.

Insights from psychology and biology have been relevant when applied to the design of mobile robots [27, 28] and their sensors [29]. In the case of sensory substitution, the main challenge is to develop a system that provides sensory stimulation well suited to the properties of the human perceptual system. In this regard and related to the sensorimotor approach [4] we think that the contingency between movement and stimulation is crucial. Every change in the environment and/or in the user's actions will generate a change in the stimulation provided by the actuators. Regularities in the movement-stimulation relation may be used to perceive properties of the environment and to control action. Thus, a high refresh rate and a strong coupling between sensors and actuators are crucial to optimize a biological inspiration of sensory substitution.

A fundamental issue in addition to sensorimotor contingencies is that of the higher-order variables that the set of actuators makes available to the user. Among the often-studied higher-order informational variables in the ecological approach are gradients (acoustic, light intensity, etc.) and optic flow variables (changes in visual angles in the scene due to relative movement of objects and observer). One may provide useful sensory stimulation to users by coupling available environmental information or available environmental properties (such as distances measured by our Kinect sensor) to vibrotactile patterns that preserve some of the essential aspects of the environmental information or of the environmental properties. The main aspect focused on in our study concerns expansion, implemented by more and more tactors being turned on. In the same way that a pattern expands in our visual field as an object approaches, approaching objects occupy a bigger angular area in front of a Kinect camera.

It is worth noting that adding one dimension to the vibrotactile stimulation (e.g., variation of vibratory intensity instead of only on-off activation of the actuators) may be exploitable by perception and action, because it may add a distance-related gradient. An alternative version of our device [23, 24] actually used different driving voltages (and hence, different intensities) for different actuators, with driving voltage being a function of distance. In vision it has been shown that fine-grained texture is exploitable for TTC perception, in addition to the main role of the tau-component defined by the outline of the approaching object [30]. Although the device presented in this study is able to regulate the intensity of the actuators, we used this type of regulation only in one of our experiments: the more informal one with a ball-hitting task.

Generating flow patterns is a matter of making information available. Our hypothesis is that the denser the pattern of actuators, the more exploitable the vibrotactile flow. Even so, a limited number of actuators with good refreshment rates may also generate exploitable flows. Although the problem of the number of actuators is a controversial issue in sensory substitution, several authors have suggested that this issue should not be decided on the basis of the two-point thresholds at which pairs of tactors can be distinguished. An outstanding example can be found in [13] when arguing that pattern perception can be more accurate than sensory thresholds. As an example, they propose Vernier acuity as opposed to two-point thresholds in touch. Similarly, [17] stressed the strong differences between spatial acuity and our ability to detect differences in texture in the haptic modality, supporting the use of a high number of actuators to provide continuous patterns of stimulation.

To sum up, our intuition is that building a device that provides complex sensory patterns (as changes in location and intensity of stimulation) may result in a better adaptation to the device as well as an increased perceptual exploitability. Contrary to some substitution devices, environmental properties are not coded into arbitrary signals, but translated into lawfully-generated complex patterns whose dimensions (e.g., timing, location, or intensity) are exploitable by human perceptual capacities.

3. Device

The sensory substitution device developed here, which we call Tactile-Sight (TSIGHT), was designed to drive a large number of vibrotactile actuators in order to present flow through variations in the area of stimulation and in the intensity of vibration. The processing stage communicates wirelessly with the power and control stage. Two versions of the device are considered: first, a version that works with desktop computers running simulated environments; second, an embedded version with a Kinect sensor attached to the torso with a belt. This sensor provides the processing stage with real-time information about the distance to the nearest surface for every region in sight, thereby capturing the distance of the objects in the surroundings.

The power and control stage is modular. To accomplish this, an I2C network was used in which a master device receives the activation intensity for each actuator and forwards it to the corresponding slave module. The modules can be placed wherever convenient, which simplifies the distribution of the vibrotactile actuators. Each actuator needs two power drives, so each module, which drives six actuators, needs 12 of them. For this reason, the design foresees a drastic reduction in the length of the power wires in a future wearable device (no power wire will be longer than 2 cm). The modular design also allows the number of vibrotactile actuators to be expanded by adding new slave modules. However, delays on the I2C bus and in the wireless transmission limit the number of modules that can be added before the overall delay becomes significant.

Figure 1 shows the general architecture of TSIGHT. In the following subsections the hardware used and software developed are explained.

Figure 1. General architecture of TSIGHT.

3.1 Hardware

Different pieces of hardware were used in the sensory substitution device.

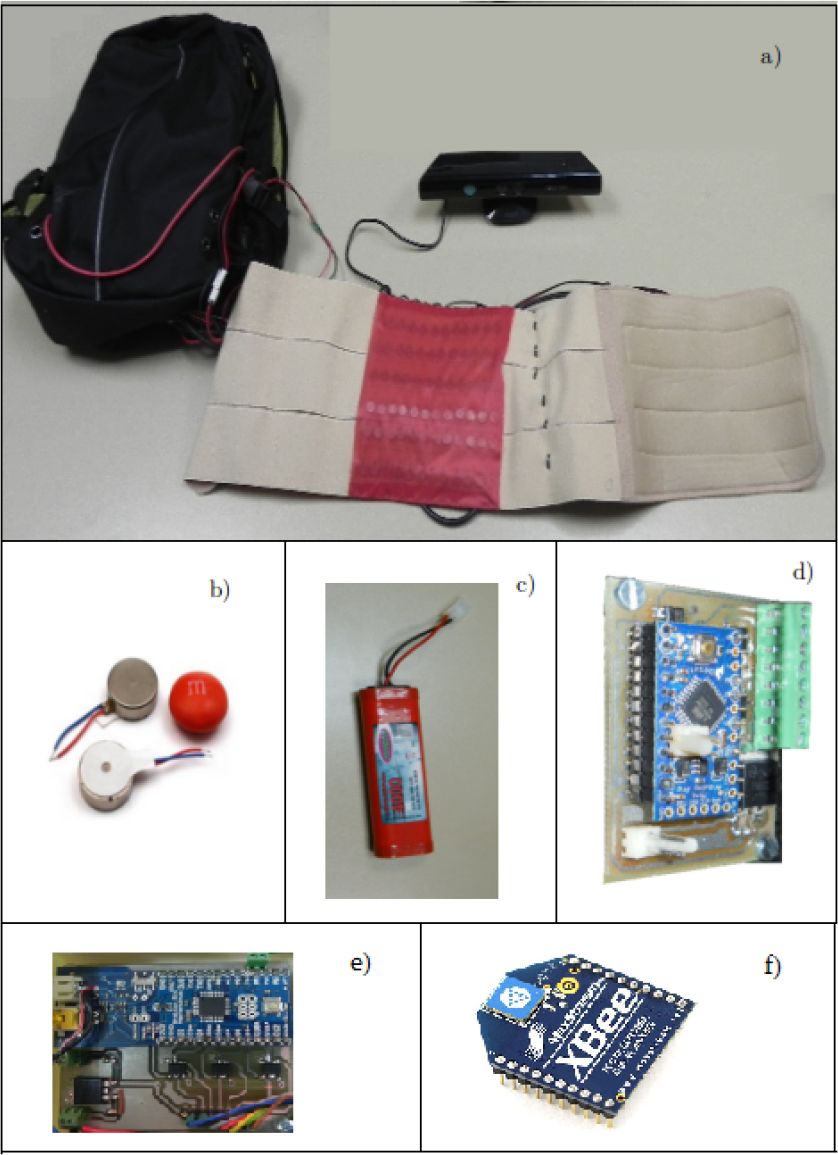

First, the vibrotactile actuators were coin-motor types [see Figure 2b]. These are small enough to fit densely on different parts of the skin such as chest, ankles and back. Having small actuators allows one to place them relatively close to each other so as to provide a more spatially continuous flow. The intensity of the vibration of each actuator was controlled with a Pulse Width Modulation (PWM).

Figure 2. Hardware of TSIGHT: a) Kinect and waistband, b) vibrotactile actuators, c) battery, d) slave I2C power module, e) master I2C module and f) XBee wireless device.

To characterize the actuators, a Qualisys multi-camera motion-capture system was used to track the displacement of a marker placed on top of an actuator. The Qualisys system precisely measures changes in the position of infrared-reflective markers from their relative positions in the images obtained by the cameras comprising the system. Five vibrotactile actuators were characterized by varying the duty cycle of the PWM signal applied to them and measuring the resulting oscillation frequency. The mean frequency as a function of the PWM duty cycle [see Figure 3] indicates that the vibration frequency can be controlled. This gives the vibrotactile flow an additional degree of freedom: not only the location of the stimulation can be varied, but also the frequency delivered. The TSIGHT can therefore increase the information received by the user.

Figure 3. Vibrotactile actuator characterization. Frequency of vibration (Hz) vs. duty cycle of the PWM signal.

To test whether people are able to detect changes in the frequency of the actuators, 10 participants were asked to wear the TSIGHT and to indicate whether they could feel changes in vibration frequency in an actuator placed on the abdominal area and driven with a PWM signal whose duty cycle varied in steps of 10%. All participants detected the step-to-step changes between 10 and 100% of the duty cycle, whereas between 0 and 10% participants did not feel any change. This is due to the dynamics of the actuators: in this lowest range the energy is not enough to start the movement.

These results indicate that different ways of delivering information through the TSIGHT are exploitable, both over the variation of frequency of the actuators and over the spatial location of the stimulation.

The system was designed to be portable. This allowed users in real environments to explore with their body, experiencing changes in stimulation caused by their movements through the environment. Wireless communication was used between the processing stage and the power and control stage. When the device is used in real environments, the distance information of the surrounding objects is obtained through a 3D camera [see Figure 2a]. The depth information is then processed and sent wirelessly through an XBee device, model S1 [see Figure 2f], which allows a distance of 100 m between the processing stage and the power and control stage. Such large distances, however, were never used in the current experiments: in the version of the device with the Kinect, the power and control units were carried in a backpack, and in the version with simulated environments participants were seated near these units.

In the power and control stage, a master microcontroller wirelessly receives the information and sends the corresponding activation level to each module through an I2C network that uses only two wires [see Figure 4]. Each module is in charge of delivering the necessary power to the actuators attached to it, so that locating the modules directly where the actuators are going to be placed will avoid unnecessary lengths of the power wires used to drive the coin actuators.

Figure 4. I2C network.

There are two different types of power modules. The first one is the master device of the I2C network. It comprises an Arduino Fio microcontroller with an XBee device attached to it in order to wirelessly receive the commands with the activation levels of the tactors. In addition to managing the communication with the slave nodes, this module is able to drive six vibrotactile actuators. The second type of module is the slave of the I2C network. These modules use an Arduino Pro Mini microcontroller and, like the master device, are capable of driving six vibrotactile actuators. The network can be expanded by adding extra slave modules, simply by connecting them to the I2C bus [see Figure 4].
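The routing of an activation level to the module that drives a given actuator can be sketched as follows. This is a minimal illustration, not the firmware of the device: the row-major numbering of the actuators, the convention that module 0 is the master, and the function name `locate_actuator` are all assumptions made here. Only the figure of six actuators per module comes from the text.

```python
ACTUATORS_PER_MODULE = 6  # stated in the text for both master and slave modules


def locate_actuator(row, col, cols=12):
    """Return (module, channel) for the actuator at (row, col).

    Hypothetical convention: actuators are numbered row by row, module 0 is
    the I2C master and modules 1..N are slaves.
    """
    index = row * cols + col
    return index // ACTUATORS_PER_MODULE, index % ACTUATORS_PER_MODULE


# With a 6 x 12 waistband, 72 actuators occupy 12 modules (1 master + 11 slaves).
modules_in_use = {locate_actuator(r, c)[0] for r in range(6) for c in range(12)}
print(len(modules_in_use))
```

With this numbering each module serves half a row of the waistband, which would keep the wires between a module and its actuators short; the actual wiring of the device may differ.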

For our experiments a waistband was used with 72 vibrotactile actuators arranged in six rows and 12 columns [see Figure 2a]. The waistband was placed on the abdominal area of the participant. Each actuator in the waistband vibrates as a function of the distance to the nearest object in the zone of the image, captured by the ToF camera, that the actuator represents.

The power and control stage currently has 20 modules able to drive 120 actuators, although, as mentioned, the number of modules could be expanded by attaching new slaves to the I2C bus. The system is powered by a 4000 mAh NiMH battery, capable of supplying energy for more than half an hour with the actuators working at the highest power level (which is unusual). Each module draws about 360 mA when working at its maximum. Adding more modules would require reconsidering the battery in order to preserve autonomy. The battery was chosen considering that it would be worn close to the people using TSIGHT, and that LiPo batteries, which could deliver more current, are dangerous in the case of a short circuit.
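The autonomy figure can be checked with a short back-of-the-envelope calculation. The sketch below uses only the numbers given above (4000 mAh, about 360 mA per module, 20 modules) and assumes an ideal battery, ignoring discharge losses and the consumption of the radio; the function name is chosen here for illustration.

```python
def autonomy_minutes(capacity_mah, module_current_ma, n_modules):
    """Ideal run time in minutes when every module draws its maximum current."""
    total_current_ma = module_current_ma * n_modules
    return 60.0 * capacity_mah / total_current_ma


all_modules = autonomy_minutes(4000, 360, 20)  # 120 actuators at full power
waistband = autonomy_minutes(4000, 360, 12)    # the 72-actuator waistband only
print(round(all_modules, 1), round(waistband, 1))
```

Under these idealized assumptions, the full set of 20 modules at maximum power gives roughly 33 minutes, in line with the "more than half an hour" reported, and the 12 modules needed for the 72-actuator waistband give roughly 56 minutes.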

3.2 Software

As mentioned above, two versions of the system were used, one with real environments and one with simulated environments. For real environments, the Robot Operating System (ROS) was used. This system includes nodes developed to obtain the distance and to send the corresponding activation level. The simulated environment was created with Matlab and a toolbox called Psychtoolbox. The software used in each case is explained in the following sections.

3.2.1 Software for real environments

ROS is an open-source operating system developed to work with robots. It integrates many drivers for different sorts of sensors, including, among other ToF cameras, the Microsoft Kinect camera used in our device. The ROS philosophy allows different modules, called nodes (which can be written in different languages such as C or Python), to update or read the information published in a topic. That is, it allows easy integration of functions developed by different users [see Figure 5].

Figure 5. ROS communication scheme: publication/subscription between nodes.

The information about depth is obtained from the camera and is published in a topic (Image_raw). A node subscribed to this topic (Image_process) reads the information about distance and maps it to a 12×6 matrix corresponding with the 72 actuators in the waistband. Each of the 72 actuators represents an area of the image [see Figure 6].
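The mapping from a depth image to the 12×6 actuator matrix can be sketched as follows. This is a minimal illustration in plain Python and not the ROS node itself: it assumes that the actuator areas tile the image evenly, that each area is summarized by the mean of its valid readings, and that non-positive values mark invalid pixels. The function name `depth_to_grid` is hypothetical.

```python
def depth_to_grid(depth, rows=6, cols=12):
    """Reduce a depth image (list of rows, in metres) to rows x cols cell means.

    Assumptions (not from the paper): cells tile the image evenly and
    non-positive readings are invalid and ignored. A cell with no valid
    reading yields None.
    """
    height, width = len(depth), len(depth[0])
    grid = []
    for r in range(rows):
        y0, y1 = r * height // rows, (r + 1) * height // rows
        row_means = []
        for c in range(cols):
            x0, x1 = c * width // cols, (c + 1) * width // cols
            values = [depth[y][x]
                      for y in range(y0, y1)
                      for x in range(x0, x1)
                      if depth[y][x] > 0]
            row_means.append(sum(values) / len(values) if values else None)
        grid.append(row_means)
    return grid


# Tiny synthetic image: a wall at 2 m with a nearer object in the top-left corner.
depth = [[2.0] * 24 for _ in range(12)]
for y in range(2):
    for x in range(2):
        depth[y][x] = 1.0
depth[11][23] = 0.0  # one invalid pixel, ignored when averaging
grid = depth_to_grid(depth)
print(grid[0][0], grid[5][11])
```

Each entry of `grid` then corresponds to one actuator of the waistband, so that a nearby object lowers the value of the cells it covers.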

Figure 6. Image captured by the ToF camera. Red rectangular areas are mapped to the corresponding actuators of the waistband.

In each area of the image the mean distance to the nearest object is calculated and an activation level is established for each vibrotactile actuator representing an area. Then the corresponding PWM signal is calculated (Frequency_sent). Priority is given to objects that are between 0 and 2m by assigning them a larger range of vibration frequencies [see Figure 7], allowing for better resolution when objects are close to the user. Then the value for the PWM is sent through the serial port where the XBee module is attached in order to wirelessly transmit the information to the power and control stage [see Figure 4 and Figure 5].
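The idea of giving nearby objects a larger share of the output range can be sketched with a piecewise-linear mapping. The breakpoints below are hypothetical values chosen for illustration and are not read from Figure 7; the assumption that closer objects produce a higher duty cycle, and the 10% floor (below which, as reported above, the actuators do not start moving), are likewise choices made for this sketch.

```python
# (distance in metres, duty cycle in percent); hypothetical breakpoints.
# The 0-2 m band spans 60 points of duty cycle, the 2-4 m band only 30.
BREAKPOINTS = [(0.0, 100.0), (2.0, 40.0), (4.0, 10.0)]


def duty_cycle(distance_m):
    """Map a cell's mean distance to a PWM duty cycle (percent)."""
    if distance_m is None or distance_m > BREAKPOINTS[-1][0]:
        return 0.0  # nothing detected, or too far away: actuator off
    distance_m = max(distance_m, 0.0)
    for (d0, p0), (d1, p1) in zip(BREAKPOINTS, BREAKPOINTS[1:]):
        if distance_m <= d1:
            fraction = (distance_m - d0) / (d1 - d0)
            return p0 + fraction * (p1 - p0)
    return 0.0


for d in (0.5, 1.0, 2.0, 3.0, 4.5):
    print(d, duty_cycle(d))
```

With these illustrative breakpoints, a change of 1 m in the near band shifts the duty cycle by 30 points, against 15 points in the far band, which is the sense in which close objects are given better resolution.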

Figure 7. Duty cycle vs. distance to objects.

3.2.2 Software for simulated environments

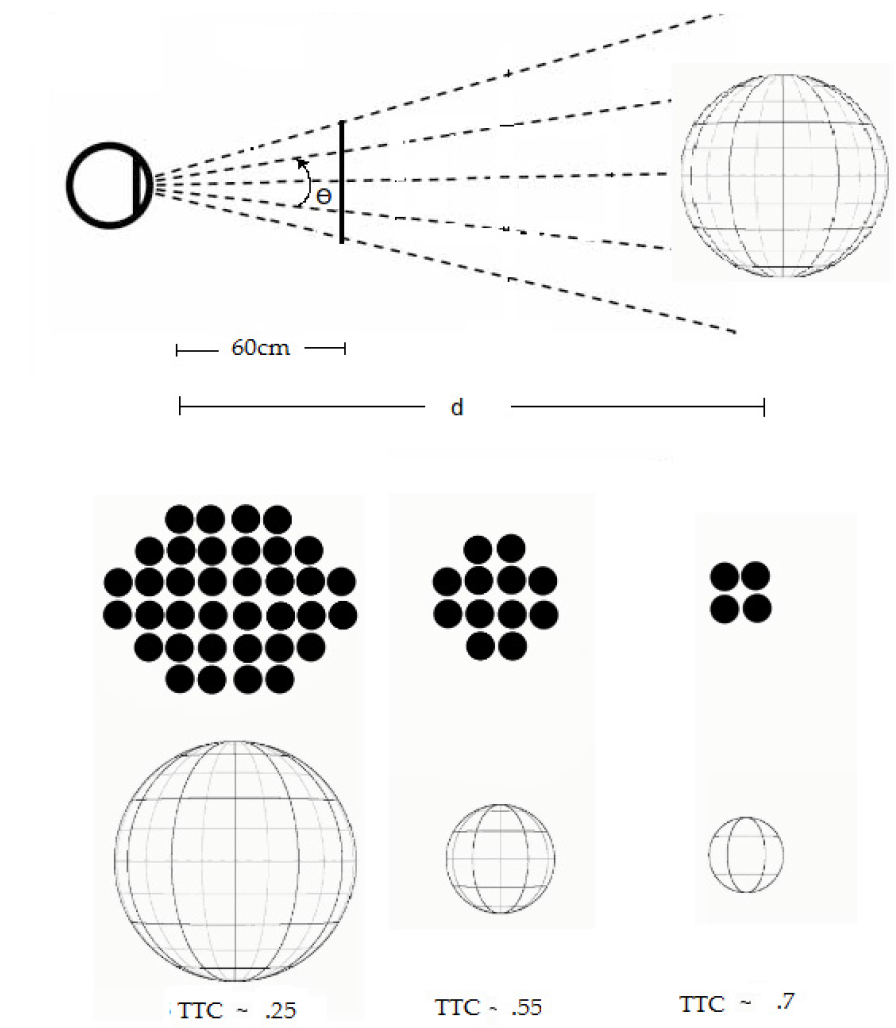

In order to have better control of the variables under study, a classic strategy is to run experiments in simulated environments. We implemented a classic perceptual experiment designed to study TTC (cf. [30]). In this procedure, a sphere approaching the participant at a constant speed was simulated and presented visually on a screen and/or as tactile stimulation through the waistband. At a certain moment the information about the sphere was occluded (i.e., not shown) and the participant estimated, with a button press, when the sphere would reach the point of observation if its speed remained constant. Different simulations were developed presenting different initial distances, velocities and TTCs. The software was developed using Matlab and the Psychtoolbox library (a free set of Matlab and GNU/Octave functions for vision research). As mentioned, two different types of stimulation were used, tactile and visual. A stimulation with both visual and tactile activation (referred to as crossmodal) was also tested. The activation of the vibrotactile actuators and the display on the PC screen depend on the angular size of the simulated sphere as seen from the perspective of the participant [see Figure 8 and Figure 9].

Figure 8. Flow chart of the simulation of the approaching ball.

Figure 9. Principle of the simulation of the approaching ball; actuators in black are the ones active in the waistband when tactile information is presented.

4. Empirical studies

The ecological theory states that human beings detect higher-order variables from the environmental energy arrays. For example, we may perceive the TTC with an object by detecting the ratio of the angular size of the object and the rate of expansion (i.e., the variable τ).
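The relation between τ and TTC can be checked numerically. The sketch below is illustrative only: it assumes a sphere approaching head-on at constant speed, takes the angular size to be that of the sphere's silhouette, 2·asin(R/Z), and estimates the rate of expansion with a finite difference. The numerical values and function names are chosen here and are not taken from the experiments.

```python
import math


def angular_size(radius, distance):
    """Visual angle (rad) of a sphere's silhouette; requires distance > radius."""
    return 2.0 * math.asin(radius / distance)


def tau_estimate(radius, distance, speed, dt=1e-3):
    """tau = angular size / rate of expansion, using a central finite difference."""
    theta_earlier = angular_size(radius, distance + speed * dt)  # dt before now
    theta_later = angular_size(radius, distance - speed * dt)    # dt after now
    expansion_rate = (theta_later - theta_earlier) / (2.0 * dt)
    return angular_size(radius, distance) / expansion_rate


radius, distance, speed = 0.2, 2.0, 0.5  # illustrative values (m, m, m/s)
tau = tau_estimate(radius, distance, speed)
true_ttc = distance / speed
print(tau, true_ttc)
```

For these values τ stays within about one percent of the true TTC of 4 s; the approximation degrades as the sphere gets very close, where the small-angle assumption no longer holds.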

When the sensory substitution device is used, it is interesting to determine if the variation of tactile stimulation can provide information about the TTC, as the ecological theory would suggest. In order to test this hypothesis, two experiments were performed. In the first one, a virtual environment simulating an approaching ball was used in order to measure if observers are able to estimate TTC from vibrotactile stimulation. In the second one, a ball was thrown to participants and it was observed if they were able to perceive the approaching ball using only vibrotactile information. The experiments are described in the following sections.

4.1 Simulated TTC

The purpose of this experiment is to measure the TTCs estimated by participants in a controlled environment and to compare them with the corresponding TTCs of the simulations.

Twelve participants, aged between 20 and 40 years, took part in the experiment. Participants were asked to estimate TTC under three different conditions: a visual condition, a tactile condition and a crossmodal condition in which both tactile and visual stimulation were provided. There were 25 different trials in each condition. Information about the sphere was presented to participants (tactile, visual, or crossmodal) in each trial. At a certain moment, the information was occluded and the participants were asked to estimate the moment of impact by pressing a keyboard key, based on the information they had before the occlusion (e.g., angular size, rate of expansion, tau). Each trial had a different occlusion time, velocity and initial distance and hence a different TTC at occlusion. The order in which participants performed the different conditions was counterbalanced in order to avoid possible effects due to training. These three conditions allow us to compare the TTC estimated with vision with the TTC estimated with our sensory substitution device.

An approaching sphere with a 20cm radius was simulated by calculating how it will be projected on a screen located at 60cm from the participant. Visual and tactile information was calculated and delivered depending upon the condition under test [see Figure 9]. The visual information presented on the screen was an image of the distribution of vibrotactile actuators on the waistband; when a portion of the region was occupied by the ball, the corresponding area representing an actuator turned black. At a certain moment the information was occluded and the participants estimated the moment at which the sphere would reach them. When tactile information was delivered, the actuators started to vibrate at their maximum when the area of the actuator was reached. Similar to the visual condition, at some point the information was occluded (vibrotactors were stopped) and the participant had to estimate the moment of impact. A crossmodal condition, with the same procedure and both types of stimulation presented simultaneously, was performed in order to measure whether the extra amount of information improved the estimation of TTC.
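The projection step can be sketched as follows. The sphere radius (0.2 m) and the screen distance (0.6 m) are taken from the text; everything else is an assumption made for illustration: the sphere is centred on the line of sight, its silhouette is projected as a disc, the 6×12 grid of actuator areas is centred on the screen with a hypothetical cell size of 3 cm, and a cell is switched on as soon as any part of it is covered.

```python
import math

ROWS, COLS = 6, 12
CELL_SIZE_M = 0.03  # hypothetical size of one actuator area on the screen plane


def projected_radius(distance, sphere_radius=0.2, screen_distance=0.6):
    """Radius (m) of the sphere's silhouette on a screen in front of the observer."""
    half_angle = math.asin(sphere_radius / distance)  # tangent-cone half angle
    return screen_distance * math.tan(half_angle)


def active_cells(distance):
    """(row, col) cells at least partly covered by the projected disc."""
    r = projected_radius(distance)
    half = CELL_SIZE_M / 2.0
    cells = []
    for row in range(ROWS):
        for col in range(COLS):
            x = (col - (COLS - 1) / 2.0) * CELL_SIZE_M  # cell centre, screen coords
            y = (row - (ROWS - 1) / 2.0) * CELL_SIZE_M
            # Distance from the disc centre to the nearest point of the cell.
            dx = max(abs(x) - half, 0.0)
            dy = max(abs(y) - half, 0.0)
            if math.hypot(dx, dy) <= r:
                cells.append((row, col))
    return cells


counts = [len(active_cells(z)) for z in (3.0, 2.0, 1.0, 0.5)]
print(counts)
```

As the simulated sphere approaches, the number of active cells grows from a small central cluster to the whole grid, which is the expansion pattern presented on the screen and on the waistband.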

The sphere approached participants with velocities between 0.37 and 0.55 m/s; the initial distances were between 2 and 3 m; and the TTC after the occlusion varied from 0.2 to 0.9 seconds. Participants were asked to press a key on the PC when they perceived that the sphere would hit them. The estimated TTC was compared with the real TTC for each condition, using the absolute error obtained for each condition and the correlation between the real and estimated TTC. A high correlation is a first indication that participants detect information that is closely related to TTC.
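The two measures can be computed as in the following sketch. It assumes that the absolute error is averaged over trials and that the correlation is Pearson's r; neither detail is specified in the text. The data are made-up values for illustration, not experimental results.

```python
import math


def mean_absolute_error(real, estimated):
    """Mean of |estimated - real| over trials."""
    return sum(abs(e - r) for r, e in zip(real, estimated)) / len(real)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical TTCs in seconds (illustrative only).
real_ttc = [0.2, 0.4, 0.6, 0.8]
estimated_ttc = [0.25, 0.45, 0.55, 0.9]
mae = mean_absolute_error(real_ttc, estimated_ttc)
r = pearson_r(real_ttc, estimated_ttc)
print(mae, r)
```

The two measures are complementary: the correlation shows whether estimates scale with the real TTC, whereas the absolute error also captures constant over- or underestimation, which a correlation alone would miss.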

4.2 Real environment

The aim of this experiment is to provide an initial indication that people are able to perceive an approaching object and to determine the TTC of the object when our sensory substitution device is used in a real environment.

Twelve participants, aged between 20 and 50 years, took part in the experiment. None of them had participated in the previous experiment in a simulated environment. A large and light-weight ball was attached to a wire with a length of 1.5 m, which was fixed to the ceiling so that the ball moved as a pendulum. The ball was thrown from a distance of about 2 m from the participants. They were asked to hit the ball with their hand whenever they perceived it as being close enough. Participants were trained for a period of ten minutes, in which the ball was repeatedly thrown to them. First, they could use their sense of sight for four minutes. Afterwards, they were blindfolded and the ball was thrown to them while they were warned at the moment of impact. This procedure was repeated ten times. Finally, the ball was thrown ten times and the participants were asked to hit the ball without any warning [see Figure 10]. We counted the number of correct hits of the ball, delayed attempts and anticipated attempts.

Figure 10. Sequence of reaction of a participant in a real environment.

5. Results

In the experiment in a simulated environment, the TTC estimated by the participants was compared with the actual TTC of the simulations. This was done for the three conditions: visual, tactile and crossmodal [see Figure 11]. To calculate the mean estimated TTC we discarded trials with estimated TTCs that deviated from the mean by more than three standard deviations. This procedure corrects for failures in pressing the keyboard key during an experimental trial.
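The exclusion rule can be sketched as follows. The sketch assumes a single pass over one set of estimates and the sample standard deviation; how the trials were grouped before applying the rule is not specified in the text. The data are made-up values for illustration.

```python
import statistics


def discard_outliers(values, n_sd=3.0):
    """Keep values within n_sd sample standard deviations of the mean."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [v for v in values if abs(v - mean) <= n_sd * sd]


# Hypothetical estimates in seconds: 20 plausible key presses and one missed one.
estimates = [0.5] * 10 + [0.6] * 10 + [5.0]
kept = discard_outliers(estimates)
print(len(estimates), len(kept))
```

Note that with very few trials no value can lie more than three sample standard deviations from the mean, so the rule only has an effect when it is applied to a reasonably large set of estimates.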

Figure 11. Perceived vs. simulated TTC. Results are shown for each condition: a) tactile, b) visual, c) crossmodal. Blue lines indicate hypothetical perfect responses.

The absolute error and correlation between estimated and actual TTC were calculated for each condition. Results are shown in Table 1.

Table 1. Correlation between real and estimated TTC and absolute error for the different types of stimulation.

As can be seen, there are high correlations between estimated and real TTCs in all conditions and there are small absolute errors. Participants therefore perceived the moment at which the simulated spheres would reach them. The differences between the experimental conditions were non-significant.

In the experiment performed in a real environment, it was observed that participants were able to hit the ball at the correct time in most of the trials. Table 2 shows the mean number of trials in which participants hit the ball (and hence correctly perceived the moment of impact), failed because of a delayed attempt to hit the ball and failed because of a premature reaction.

Table 2. Mean number of trials per participant with hits, failures due to a delayed reaction, or failures due to an anticipated reaction (10 max).

6. Conclusions

The high correlation obtained between estimated TTCs and actual ones in the virtual environment, combined with the low absolute error, indicates that the use of vibrotactile flow is a promising tool for sensory substitution devices. These preliminary experiments also indicate that vibrotactile flow may allow people to detect higher-order variables such as τ. However, more experiments are needed to test the effect of reducing the correlations between τ and other available variables, such as angular size, angular speed and the number of active actuators. In the present experiment, successful performance could have been achieved using any one of these variables.
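To make the relation between τ, angular size and angular speed concrete, the sketch below uses the standard optical definition of τ as the ratio of an object's angular size to its rate of expansion, which approximates TTC for an object approaching at constant speed. This is an assumption about the definition rather than a description of how the TSIGHT computes its stimulation, and the sphere radius, initial distance and speed are hypothetical values.

```python
from math import atan


def angular_size(radius, distance):
    """Visual angle (radians) subtended by a sphere of given radius at a distance."""
    return 2.0 * atan(radius / distance)


def tau(radius, d0, speed, t, dt=1e-3):
    """Angular size divided by its rate of change, for a constant-speed approach.

    The rate of change is estimated with a central finite difference, so the
    function only uses quantities available at the point of observation.
    """
    theta_now = angular_size(radius, d0 - speed * t)
    theta_prev = angular_size(radius, d0 - speed * (t - dt))
    theta_next = angular_size(radius, d0 - speed * (t + dt))
    theta_dot = (theta_next - theta_prev) / (2.0 * dt)
    return theta_now / theta_dot


# Hypothetical approach: a sphere of radius 0.1 m starting 10 m away at 2 m/s.
radius, d0, speed = 0.1, 10.0, 2.0
tau_estimate = tau(radius, d0, speed, t=0.0)
true_ttc = d0 / speed  # distance divided by speed
```

In this example τ stays within a hundredth of a second of the true TTC even though neither distance nor speed is used to compute it. Angular size and angular speed, taken individually, also change monotonically during the approach, which is why they are correlated with τ and need to be dissociated experimentally.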

Reasonably accurate performance was observed in the real environment: participants detected the moment at which the approaching ball was about to hit them. This means that the vibrotactile flow provides information that allows behaviour to be well-adjusted to TTC. In this respect, it may be interesting to mention that in an improvised condition, where frontal and lateral balls thrown to participants were randomly alternated, participants were able to distinguish the direction of the approaching ball, although no systematic data were recorded. This suggests that other environmental properties may also be perceived with the TSIGHT.

We claim that sensory substitution devices can be improved if they allow exploration by the user and if they supply a continuous and contingent flow of sensory information, allowing the detection of higher-order informational patterns. This is consistent with the theoretical background provided by ecological and embodied approaches [1–5]. It is also consistent with the empirical work of Bach-y-Rita and colleagues [13–17], who highlighted the role of active control. However, a relevant difference between our work and that of Bach-y-Rita and colleagues is that the TSIGHT bases stimulation on distance rather than on light intensity. Moreover, early ball-batting experiments were carried out with a stationary version of the TVSS, where the participant controlled only the camera. Our research takes advantage of the availability of the Kinect sensor, allowing free movement, so that the whole body acts as an information-detecting device.

Experiment 1 did not reveal significant differences between the visual and tactile conditions. Nevertheless, the sensory substitution device clearly does not provide as much information as the sense of sight, which has much better acuity. Even so, as claimed above, the use of vibrotactile flows and gradient-based information may improve the performance with sensory substitution devices. Furthermore, the broader the repertoire of movements allowed to users, the more likely it is that higher-order variables become available. This applies most particularly to rapidly changing environments and other situations in which codes are not as informative as the temporal variation of the vibrotactile stimulation. We suggest that arbitrary codes require explicit learning and limit the range of information that can be provided or learned. When coupling an agent-environment property such as distance with a form of stimulation, one creates an array of information that people may learn to exploit with implicit procedures.

7. Future work

In order to improve the substitution device it is important to determine the best way of displaying vibrotactile flow. One may consider, for example, different algorithms, fuzzy logic and other methods to establish the frequency to be delivered, depending on the distance to the objects and the sensitivity of the user. It may also be useful to allow users to adjust the TSIGHT themselves, as one does when using field glasses.

It is also important to train the users in the use of the vibrotactile sensory substitution device and to adapt the signals to the sensitivity of different users. Although learning to use sensory substitution devices has been addressed, a more systematic training program introducing variability of practice and self-controlled feedback is expected to be beneficial [31].

The way humans perceive properties of the environment leads us to believe that new algorithms and sensors could be developed and integrated in robots so that they would be able to detect informational variables such as τ directly. From our point of view, these algorithms will allow new, faster and more efficient forms of interaction between the robot and the environment.

Finally, improvements in matters of efficiency and size are needed. These will help us to have a higher number of vibrotactile actuators, allowing a more useful vibrotactile flow. A device should be constructed and tested in which the actuators are densely distributed over larger parts of the body, which may be more useful than a “retina-like” matrix of actuators. The development of such a wearable device is one of the major aims at this stage of the TSIGHT. In the present version we have used a modular design that will allow the distribution of the power modules, decreasing the number and length of the wires needed.

8. Acknowledgements

This work has been partially funded by the project ROBOCITY 2030 sponsored by the Autonomous Region of Madrid (S-0505/DPI/000235), by the project “ROTOS: Multi-Robot system for outdoor infrastructures protection” of the Spanish Ministry of Education and Science (DPI2010-17998), as well as by projects FFI2009-13416-C02-02 and FFI2011-28835 of the Spanish ministry. We also thank all participants who collaborated in the experiments, especially Miguel Ángel Frutos and Juan David Hernandez.