Abstract

This study investigated the potential for the development of novel perceptual experiences through sustained training with a sensory augmentation device. We developed (1) a new geomagnetic sensory augmentation device, the NaviEar, and (2) a battery of tests for automaticity in the use of the device. The NaviEar translates head direction toward north into continuous sound according to a “wind coding” principle. To facilitate automatization of use, its design is informed by considerations of the embodiment of spatial orientation and multi-sensory integration. Its sensory coding scheme is derived from a technique for perceiving wind direction by ear that is common in sailing because it is easy to understand and use. The test battery assesses different effects of automaticity (interference, rigidity of responses, and dynamic integration), assuming that automaticity is a necessary criterion to show the emergence of perceptual feel, that is, an augmented experience with perceptual phenomenal quality. We measured performance in simple training tasks, administered the tests for automaticity, and assessed subjective reports through a questionnaire. Results suggest that the NaviEar is easy and comfortable to use and has potential for applications in real-world situations. Despite high usability, however, a 5-day training with the NaviEar did not reach levels of automaticity that are indicative of perceptual feel. We propose that the test battery for automaticity may be used as a benchmark test for iterative research on perceptual experiences in sensory augmentation and sensory substitution.


1. Introduction

Is it possible to extend perceptual experience, or “feel,” through an artificial device that provides novel sensory information that is not naturally available to the senses? A positive answer would both pave the way for the compensation of perceptual deficiencies and inspire the design of extensions to our existing sensory apparatus.

The potential for experiential expansion of the sensory apparatus is a fundamental prediction of the sensorimotor theory of perceptual consciousness (O'Regan, 2011; O'Regan & Noë, 2001). Sensorimotor theory seeks to explain the distinctive phenomenal character of how perception is subjectively experienced, termed its perceptual feel. Perceptual feel denotes the “what it is like” for an observer when she experiences a percept, for example, as visual or auditory, or as green or red, or loud or soft. The perceptual feel of an experience is distinct from conscious cognitive access or evaluation of the experience but refers to the phenomenal aspect of how the experience distinctively feels (O'Regan, 2011). For example, reading a direction from a compass typically involves an effortful cognitive evaluation of one’s orientation (i.e., an interpretation of the symbolic meaning of the compass needle or digits). In contrast, hearing a sound involves an automatic (spatial) perceptual feel for the direction of the source emitting the sound.

Sensorimotor theory explains perceptual feel as constituted in a perceiver’s implicit mastery of sensorimotor contingencies, the lawful ways in which sensory signals, such as the stimulations of the photoreceptors, change when the observer interacts with their environment. Similar to ecological theories of perception, such mastery of sensorimotor contingencies is based on the active use of sensorimotor invariants. However, unlike Gibsonian affordances (Gibson, 1977; for review, see Greeno, 1994), sensorimotor theory does not utilize sensorimotor invariants as sources of information for behavioral action (e.g., Favela et al., 2018; Lobo et al., 2014; Travieso et al., 2015), but as constitutive for the phenomenal character with which perceptual content is subjectively experienced (O'Regan and Noë, 2001, p. 1019).

The assumption that mastery of sensorimotor contingencies can be acquired via the observer’s continuous interaction with the environment has been a basis for sensory rehabilitation and for empirical tests of the theory itself (e.g., Auvray, et al., 2007; Auvray & Myin, 2009; Auvray, Philipona, et al., 2007; Bermejo et al., 2015; Blackmore, 2001; Bompas & O'Regan, 2006a; 2006b; Froese et al., 2012; Kärcher et al., 2012; König et al., 2016; Lenay & Steiner, 2010; Lenay & Stewart, 2012; Nagel et al., 2005; O'Regan & Noë, 2001, p. 1020). Exposure to new sensorimotor contingencies should lead the perceiver to experience a novel perceptual feel, provided that the perceiver masters the novel sensorimotor contingencies, either spontaneously or through learning (Hurley & Noë, 2003; Kaspar et al., 2014; König et al., 2016; Myin & Degenaar, 2014; Nagel et al., 2005; Noë, 2004; O'Regan, 2011). Thus, a central prediction of sensorimotor theory is the potential to experientially expand the sensory apparatus.

This prediction appears to be supported by a large body of research on sensory substitution that suggests that it is possible to re-create perception and perceptual feel of one modality, say vision, by using technology that translates the sensory signals of that modality into another modality, for example, touch (Bach-y-Rita et al., 1969) or audition (Meijer, 1992). Seminal studies in the field have argued that we can learn to “see” with the ears (Meijer, 2015), tongue (Bach-y-Rita et al., 1998; Kupers & Ptito, 2004), or skin (Bach-y-Rita et al., 1969; White et al., 1970) using such artificial sensors. Several studies observed learning effects on how observers use such devices following several weeks of training (Bach-y-Rita et al., 1969; Bermejo et al., 2015), and in some cases, even after only a few hours (Bach-y-Rita et al., 1998; Durette et al., 2008; Favela et al., 2018; Lobo et al., 2014). These observations have led researchers in sensory substitution to claim that sufficient training with a well-functioning sensory substitution device can provide a kind of perception that is comparable to the perceptual feel of the source modality (Auvray, et al., 2007; Bach-y-Rita & Kercel, 2003; Capelle et al., 1998; Haigh et al., 2013; Poirier, De Volder, Tranduy, et al., 2007; Proulx, 2010; Segond et al., 2013; Ward & Meijer, 2010; Ward & Wright, 2014).

Similarly, beyond substitution, sensory augmentation research suggests that a perceiver may also develop novel kinds of perceptual feel from artificial sensorimotor contingencies provided by technical sensors that convey information not available to the natural apparatus, such as the direction of geomagnetic north obtained by a magnetic compass (e.g., Kaspar et al., 2014; König et al., 2016; Nagel et al., 2005; Schumann & O'Regan, 2017).

1.1. Automaticity as a prerequisite of perceptual feel

It is undeniable that sensory substitution and sensory augmentation devices equip their users with new skills (see, e.g., Auvray & Myin, 2009). However, the acquisition of skilled use of a device does not necessarily imply the development of a genuine perceptual feel (Arnold et al., 2017; Auvray & Harris, 2014; Block, 2003; Brown et al., 2011; Deroy & Auvray, 2012). Evidence for the effects of sensory substitution or augmentation consists mainly of performance in very specific discrimination tasks with small sets of stimuli (e.g., Auvray et al., 2007; Bach-y-Rita et al., 1969; Buchs et al., 2021; Chebat et al., 2015; Díaz et al., 2012; Favela et al., 2018; Goeke et al., 2016; Haigh et al., 2013; Lobo et al., 2014; Proulx et al., 2015; Proulx et al., 2008; Travieso et al., 2015). Yet observed performance in this kind of discrimination may be explained by explicit cognitive interpretation rather than by the development of a perceptual feel (Deroy & Auvray, 2012; see also Goeke et al., 2016; Schumann & O'Regan, 2017). Observers may learn by heart that properties specific to the substitution signal are associated with the distinctive features of a small number of stimuli in the substituted modality, for example, that a certain vibrotactile pattern corresponds to a certain visual stimulus shape. Then, observers may explicitly retrieve this associative knowledge to identify the stimulus based on “reading” the substitution signal, similar to reading and interpreting the characters of written text (Deroy & Auvray, 2012). Neither such stimulus knowledge nor retrieval from symbolic memory necessarily reflects the experience of a perceptual feel of the substituted modality, such as the visual feel when seeing the stimulus’ shape (for a recent discussion, see Cohen, 2018; Corns, 2018; Dokic, 2018; Macpherson, 2018; Noordhof, 2018; Renier, 2018; Smith, 2018; Spence, 2018).

Other kinds of evidence for the development of perceptual feel from substitution have been obtained in subjective reports (Guarniero, 1974; Segond et al., 2005; Vazquez-Alvarez et al., 2011; Ward & Meijer, 2010). However, such reports are difficult to assess objectively because of their idiosyncratic and subjective nature (Marcel, 2003; Spence, 2018). This indeed reflects the intrinsic challenge of research on perceptual feel: Its essential aspects are subjective and thus seem to evade objective assessment.

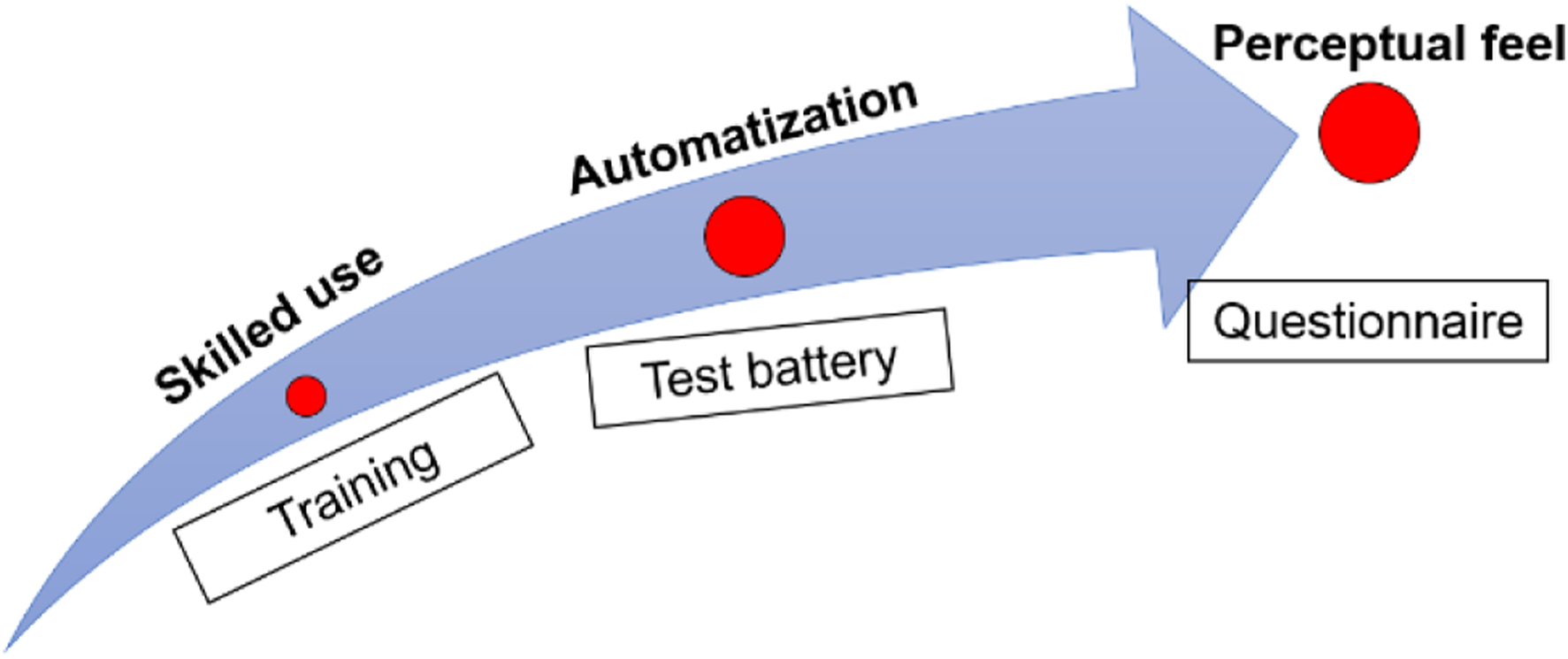

Here we argue that while a direct objective assessment of the content of perceptual feel is methodologically challenging, perceptual feel has a core feature that can be assessed operationally: Automaticity. Take for instance vision. The perceptual interpretation of cues that produce, for example, the perceptual feel of motion, texture, depth, color, or gestalt forms occurs automatically (e.g., Goldstein & Brockmole, 2017; Snowden et al., 2006). Automaticity can also occur with non-perceptual cognitive processes, such as in semantic priming (Hutchison et al., 2013) and reading (e.g., Roembke et al., 2021). So, automaticity is not sufficient to demonstrate perceptual feel. However, under the assumption that genuine perceptual feel is highly automatic, evidence for automaticity is a necessary condition to show the potential existence of perceptual feel. If observers acquire a perceptual feel from training with a sensory substitution device, their response to the substitution signal of the device must be highly automatic. Conversely, if users do not show automaticity, then their interpretation of the substitution signal was not a perceptual feel. In this way, automaticity allows us to distinguish candidate cases of perceptual feel from explicit cognitive evaluation that involves cognitive effort. Figure 1 illustrates the logic of our approach.

Figure 1. Rationale behind our approach to perceptual feel. The learning process must start with the acquisition of skills to master the device as a tool. We assess participants’ mastery of the NaviEar through data from the training tasks. A stronger learning success is automatization, in which the participants have completely internalized the functioning of the device and react directly to the device without any cognitive effort. Automaticity implies a high level of learning and tool mastery. We test automatization through our test battery. Finally, a perceptual feel develops, that is, a distinctive subjective qualitative character of not merely using the augmentation device but perceiving with it. We used a questionnaire to get a (limited) idea of participants’ subjective impressions. While perceptual feel is difficult to measure objectively due to its subjective nature, it necessarily implies that users react directly and automatically. Thus, we propose that tests of automaticity can single out cases where a genuine perceptual feel may have developed and rule out cases where it has not.

Tests of automaticity can make some headway toward a more operational study of perceptual feel by excluding cases where perceptual feel was absent. The criteria that characterize automaticity of responding (Ashby & Crossley, 2012; Moors, 2015; Palmeri, 2003) can be applied as an objective criterion to investigate the development of perceptual feel. Automatic responses, first, have the property that the match between stimulation and response is rigid and cannot be changed by a simple decision (rigidity and absence of cognitive control). The rigidity of automatic responses has originally been shown by the seminal experiments of Shiffrin and Schneider (Shiffrin & Schneider, 1977). The resistance of perceptual feel to cognitive control is vividly demonstrated by mandatory fusion (Hillis et al., 2002) and experiments with inverting glasses (e.g., Degenaar, 2014; Lillicrap et al., 2013). Second, automaticity requires little attentional capacity (criterion of efficiency) and is unconscious. While perceptual feel may involve attention and consciousness, the interpretation of the sensory signal itself is unconscious. Examples are the unconscious inferences involved in the color perception of #theDress (for review, see Witzel & Gegenfurtner, 2018) and the independence of visual-haptic cue combination from modality-specific attention (Helbig & Ernst, 2008). Third, automaticity is fast compared to cognitive inferences. The high automaticity of perceptual processes is illustrated by the extreme speed of scene recognition, which may occur in less than 20 ms (Potter et al., 2014). Fourth, automatic performance involves integrating single elements into a coherent whole, such as in chunking (Chase & Simon, 1973) and the automatic perception of musical sequences (Richler et al., 2011). Finally, automatized performance leads to automatic interference, such as in the Stroop effect (Augustinova & Ferrand, 2014; Stroop, 1935). 
An example of automatic interference specifically in perception is the memory color effect (for review, see Witzel & Gegenfurtner, 2018).

The present study investigated whether the perceptual interpretation of an augmented signal can reach levels of automaticity comparable to natural (i.e., non-augmented) perceptual modalities, such as vision or audition. This approach extends the demonstrations of subcognitive integration of augmentation signals (König et al., 2016; Nagel et al., 2005) to test for automaticity as a precondition for demonstrating the emergence of perceptual feel. For this purpose, we introduced a new sensory augmentation device, called NaviEar, and developed a battery with different tests of automaticity.

1.2. The NaviEar

Geomagnetic augmentation signals the direction of geomagnetic north. This approach has been introduced to investigate learning effects in sensory augmentation. The feelSpace belt translates allocentric direction of the torso toward north into vibrations delivered on the waist (Goeke et al., 2016; Kärcher et al., 2012; Kaspar et al., 2014; König et al., 2016; Nagel et al., 2005). Following sensorimotor theory, the rationale is that mastery of sensorimotor contingencies is constitutive of perceptual feel, and hence learning to master these new sensorimotor contingencies about geomagnetic allocentric orientation should ultimately lead to a “feel of north” (and east, south, west, etc.), that is, an immediate impression of being globally oriented when engaging with these artificial sensorimotor contingencies. Results, however, have been mixed (Kaspar et al., 2014; König et al., 2016; Nagel et al., 2005).

To further develop the paradigm of geomagnetic augmentation, here we pick up the suggestion that effective augmentation devices may be informed by the science(s) of sensory integration (Cuturi et al., 2016; Gori et al., 2016). From the perspective of embodied theories of perception, such as sensorimotor theory, signaling orientation to north contingent to self-movement should lead to a perceptual feel of north not available by the natural modalities. However, considering the physiology of human embodiment, signaling the direction of north contingent to the orientation of the torso might not be the most effective way of designing an artificially augmented embodiment to endow users with a feel for their global allocentric orientation. Signaling the direction of north contingent to the orientation of the head may provide a closer link to human orientation. Head orientation aligns a magnetic directional signal with the reference frame of our natural external senses—vision, audition, and smell. From a sensory processing perspective, such implicit alignment may reduce the need for challenging reference frame transformations between these natural senses and the artificial north signal. Further, movements of the head occur much more frequently and are more fine-grained than movements of the body as a whole (Einhauser et al., 2007; Schumann et al., 2008). From a learning perspective, the head thus provides a much richer and more frequent movement source for enacting novel sensorimotor contingencies, which should aid their active exploration and learning. In addition, previous studies have shown that head movements play a key role in navigation by ear (Heller et al., 2009; Vazquez-Alvarez et al., 2011). From an ecological psychology perspective, these observations may highlight an important role of head movements in probing directional information that may further aid active exploration and learning of magnetic-directional sensorimotor contingencies. 
Hence, displaying magnetic allocentric information contingent to the orientation of the head rather than of the body might improve both the integration of the novel allocentric signal into the existing modalities, as well as observers’ exploration and training behavior. Indeed, in rodents, it has been shown that blind rats can use head-centered cues from an implanted geomagnetic compass for navigation (Norimoto & Ikegaya, 2015). And in humans, a self-report (Barry, 2010) described signs of automatized interpretation and perceptual experience of cardinal directions with a “compass hat.”

Also, the tactile modality, while frequently used, may pose limits to the integration of an artificial directional signal. The tactile modality has been advocated for practical reasons (e.g., Lenay et al., 2003). However, the tactile system naturally receives signals that have a proximal origin and are experienced as such. Hence, there may be a tactile proximity bias that may work toward an egocentric and against an allocentric perceptual interpretation of tactile signals. Further, the large onset asynchronies of affordable eccentric tactile actuators, such as those used in most sensory substitution studies (e.g., Cassinelli et al., 2006; Froese et al., 2012; Kärcher et al., 2012), severely limit the design of refined haptic stimulation profiles suitable to the tactile system. These onset asynchronies also impose a substantial lag between motor action and resulting sensory stimulation, typically much larger than the signal update rate in Virtual Reality applications, and orders of magnitude beyond the lag within the natural exteroceptive receptors. With high lag, a tactile directional augmentation signal may arrive only outside the temporal integration windows of co-occurrence based multisensory integration processes (Parise & Ernst, 2016), challenging the multi-modal integration of artificial information presented via (slow) tactile signals.

For these reasons, a head-centered, auditory sensory augmentation device seemed preferable to promote automatic sensorimotor integration. This idea is supported by our previous laboratory study using head-centered auditory augmentation (Schumann & O'Regan, 2017). There, we investigated whether training with an auditory signal conveying head-centered cardinal directions leads to automatic interference with perceived orientation under highly controlled conditions. Participants were seated in a dark room on a motorized rotation chair to precisely control their orientation and prevent interference from visual orientation cues. Results provided evidence that training with a head-centered auditory signal leads to automatic interference with perceived self-rotation. These observations contrast with those obtained with a waist-based tactile system (Goeke et al., 2016).

A drawback of such a highly controlled approach is that sensorimotor contingencies are very much limited, for example, to the rotation of the chair. In contrast, in everyday life people move freely and engage in meaningful activities, such as commuting to work or going for a walk. Following sensorimotor theory, we considered that embedding the augmented signal into meaningful activities may be key to sensorimotor learning because such activities provide a richer experience of sensorimotor contingencies due to freer, more complex movements. They may also improve learning through intrinsic motivation to navigate and self-orient in real life. In addition, we were inspired by the striking effects of acquired automaticity in a field setting demonstrated by riding a bike after reversing its handlebars (Sandlin, 2015). While those effects concerned sensorimotor control, here we were aiming at such effects of acquired automatization on the perceptual interpretation of an augmented signal.

Hence, we developed the NaviEar as a novel geomagnetic sensory augmentation device that can be worn during everyday life activities. The auditory directional signal provided by the NaviEar has been designed analogously to the use of the ears to determine wind direction, a simple and intuitive technique commonly used in sailing (e.g., Isler & Isler, 2006). This novel magnetic-auditory augmentation signal should thus be aligned with the reference frame of the natural exteroceptive senses, presented to a modality that naturally deals with distant sources, updated within the temporal integration windows of multisensory integration, and easy and intuitive to understand. Thus, we expect users to quickly learn the artificial relationship between head orientation to north and its auditory representation, improving the conditions for automatization and development of a perceptual feel of north when using the device during everyday-life activities outside the lab.

1.3. Tests for automaticity

Figure 1 illustrates the three parts of our experimental approach to test for automaticity: Training to use the NaviEar, tests of automaticity, and a post-experimental questionnaire.

To train participants with the NaviEar, we developed a 5-day-training program to be completed as part of everyday life activities. For users to efficiently achieve mastery of a device, it is important to engage them in relevant tasks (Bertram & Stafford, 2016; Maidenbaum, Abboud, et al., 2014). Allocentric orientation is less important in an urban than in a natural environment, as people can rely on street names and particular buildings to orient themselves. For this reason, we developed a set of training tasks specifically tailored to involve information accessible only through the NaviEar. Changes in performance on these tasks across the training period also allow us to assess general effects of training—which corresponds to evidence for “weak integration” of an augmentation signal according to Nagel et al. (2005).

To test for automaticity, we created a test battery to measure achievements of automaticity after training in the field. The battery included four test tasks that participants completed before (pre-test) and after (post-test) training. Those tests assessed involuntary automatic interference effects, cognitive flexibility, and the integration of the NaviEar signal in movement and orientation.

First, we tested whether responses to the NaviEar become robust to cognitive control in a way that indicates automaticity. To this end, we assessed participants’ ability to adapt to changes in the coding function of the NaviEar, that is, the relationship between the orientation of the head and the sound signal (rotated-signal test).

Second, an automatic interpretation of the signal should involve recognizing complex patterns defined by the sensorimotor contingencies in dynamic sequences (chunking, pattern recognition). To test whether participants can recognize complex patterns in sequences of the NaviEar signal, we developed a distortion-detection test. Here, participants walked over different paths that did or did not contain a section where the orientation-to-sound relationship was distorted and had to indicate whether they noticed an abnormality in the behavior of the NaviEar signal.

Third, in the absence of a stable external visual reference, human observers are unable to walk in a perfectly straight line but instead deviate to either side. This phenomenon is called veering (Guth & LaDuke, 1994). Veering itself happens automatically, as suggested by the fact that observers are unaware of their veering and cannot prevent themselves from veering to accomplish a straight walk. If users interpret the NaviEar signal automatically, the unbiased veridical auditory-directional information should be integrated with vestibular-proprioceptive sensory signals. This should increase the precision of orientation and reduce veering (cf. Kärcher et al., 2012). For this reason, we tested whether veering is reduced after training with the NaviEar (veering test).

Finally, if using the NaviEar automatically triggers a feeling of orientation, providing an incorrect NaviEar signal should interfere with task completion. We tested whether modulations of the NaviEar signal involuntarily affect participants’ orientations when walking a series of paths (interference test).

The post-experimental questionnaire had two purposes. One set of questions solicited participants’ first-person evaluation of the comfort and usability of the NaviEar, similar to previous approaches (Auvray et al., 2005; Guarniero, 1974; Nagel et al., 2005; Segond et al., 2005; Vazquez-Alvarez et al., 2011; Ward & Meijer, 2010). Another set of questions targeted effects of NaviEar usage on subjective experience. These questions complemented the tests of automaticity: If the necessary condition of automaticity is met, indications of subjective experience may help to disambiguate whether the observed case of automaticity is related to perceptual feel or is a non-perceptual kind of automatic cognition.

2. Method

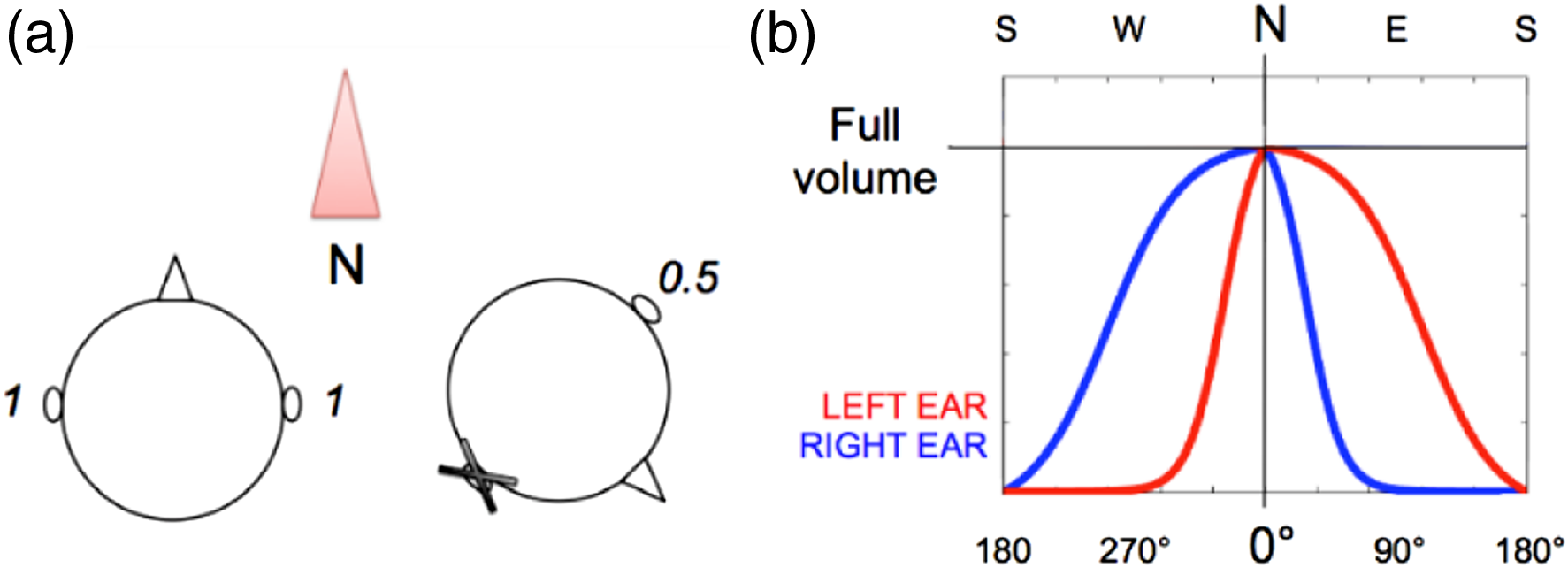

2.1. Participants

Participants. The first initial of the participant ID (PP) indicates the gender. Participant cw is the first author (male). “Sess” and days refer to sessions of training and the number of days those sessions involved.

ᵃ This participant (f3) was trained with a 90-deg-rotated signal after the first rotated-signal test (see Results).

2.2. Apparatus and materials

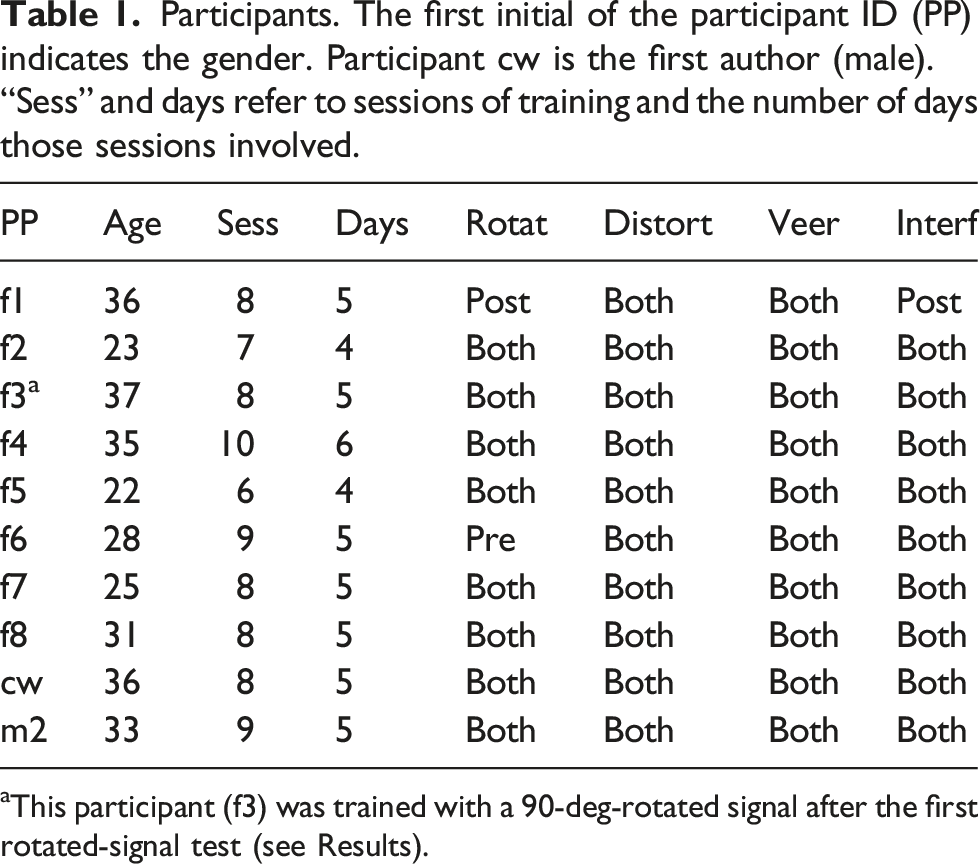

The NaviEar consists of three parts (see Figure 2): a head-mountable orientation sensor (YEI technology, 3-space Bluetooth sensor (YOST Labs, 1998-2015)), bone-conduction headphones (Aftershokz Sportz M3 AS450), and an Android phone with low latency in orientation to sound mapping (LG G3 16GB). The orientation sensor consisted of three types of sensors: a gyroscope, an accelerometer, and a compass. The raw output of these sensors was processed through a Kalman filter to provide the final orientation reading. The sensor’s recording rate was 100 Hz. Nominal orientation accuracy was on average 1 deg and orientation resolution <0.08 deg (YOST Labs, 1998-2015). Before each use, the sensor was zeroed in on north by comparison with a manual geomagnetic compass. We implemented the NaviEar orientation-to-sound coding as well as the training tasks in a custom-made Android App (for source code, see https://github.com/christophWit/NaviEar). Measured all-round latency of the final system, including the external orientation sensor, was 80–120 ms.

Figure 2. NaviEar hardware. (a) Individual components of the NaviEar: Android phone, bone-conduction headphones, and orientation sensor with head-mount. (b) One of the authors (AL) wearing the NaviEar for illustrative purposes.

2.3. Signal

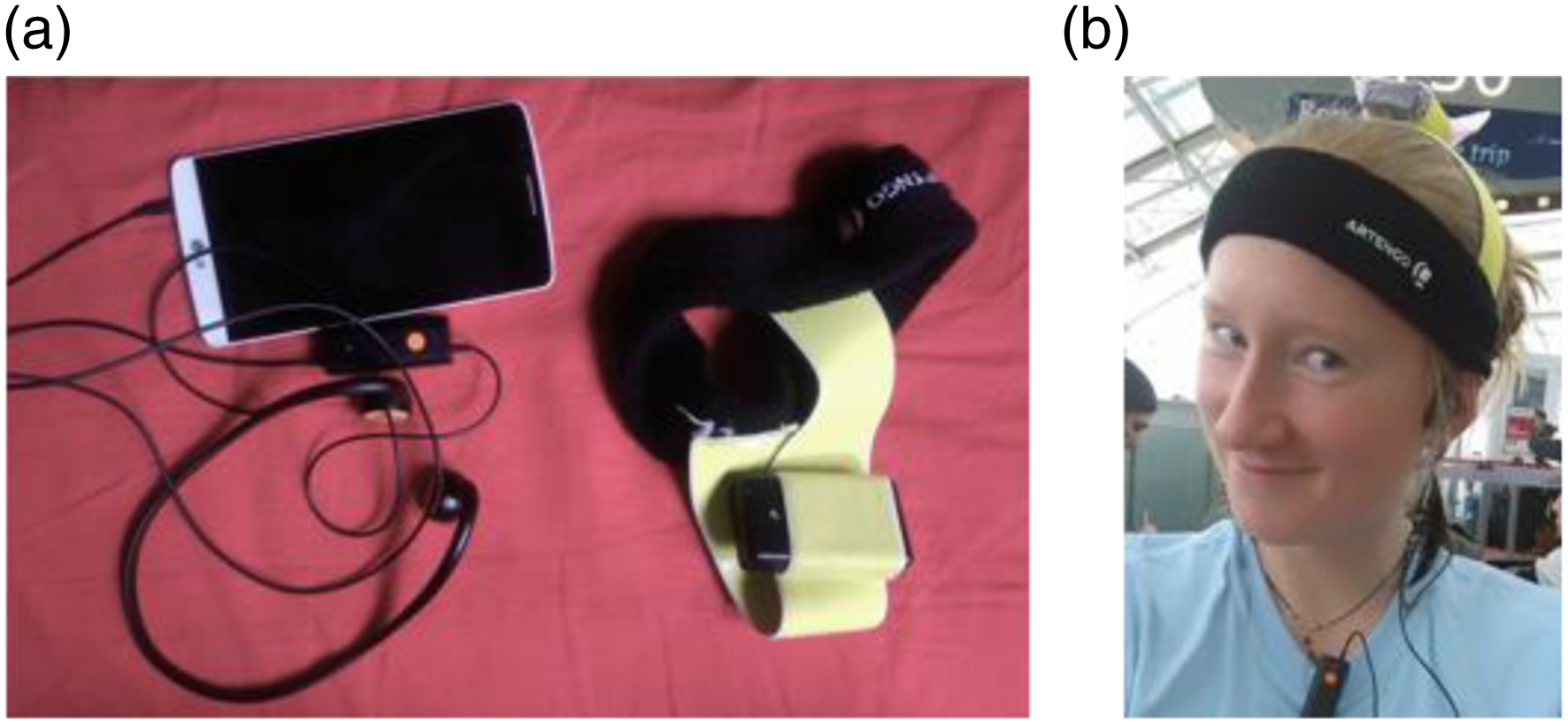

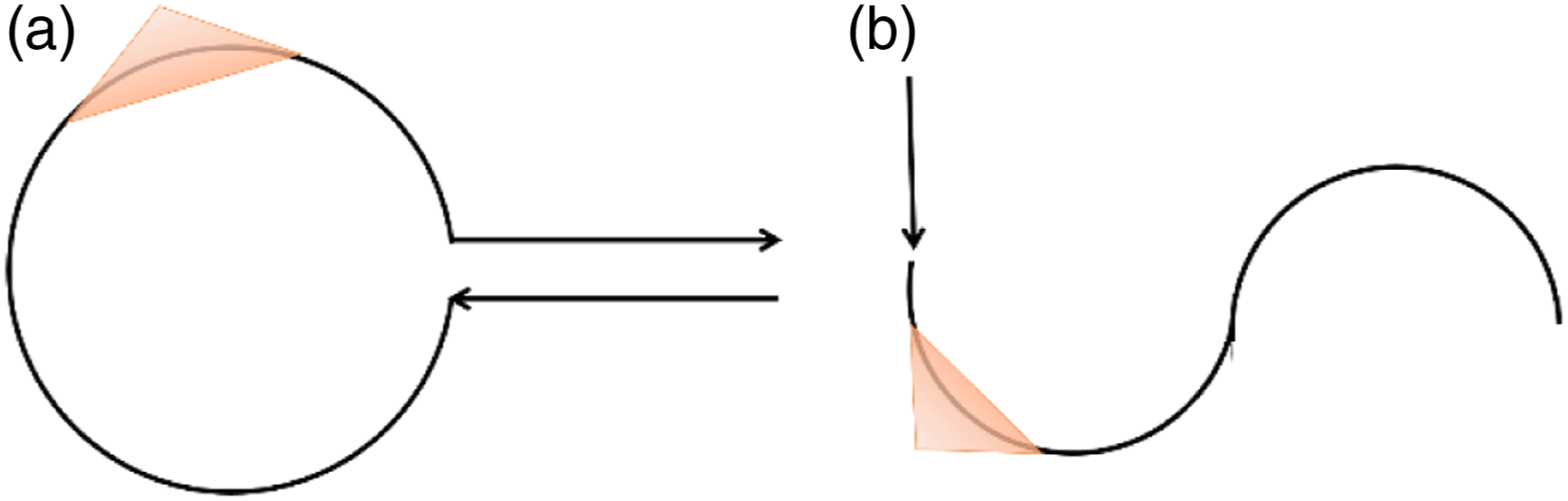

The sound signal of the NaviEar is designed in analogy to the way wind produces sound in the ears (cf. Figure 3(a)): When oriented toward the wind, the wind produces sound in both ears. In the NaviEar, the direction of the “wind” is determined to be north: When the head is oriented toward north, the NaviEar signal is played to both sides at full volume. When the head is turned clockwise away from north toward east, the signal’s volume on the right side decreases to arrive at complete silence when facing east. At the same time, the left ear continues to face north; the sound on the left is therefore still played at full volume. Turning the head further until south is reached decreases the volume on the left side, with the signal on the right remaining switched off, to end in complete silence on both sides when facing south. The same principle applies when turning counterclockwise from north toward west, with the change of the signal on the two sides now being reversed compared to the eastward turn.

Figure 3. NaviEar coding. (a) The “wind coding” principle: The more the ear is turned toward the wind, the louder the sound played to that side; when in positions where the wind blows directly into the ear, the volume in the respective ear is at maximum (see text for details). (b) The coding function that translates cardinal directions into sound. The x-axis corresponds to allocentric orientation in degrees, the y-axis to the (relative) volume of the bubble sound in the left (red curve) and right (blue curve) ear. Note that the functions are symmetrical, implying that the same change in the signal occurs in either direction away from north (east vs. west), just inverted with respect to the left and right ear.

The sound simulated the sound of “bubbles,” which was pleasant to hear and stood out against typical noise in an everyday environment. Users adjusted the volume of the phone to a level that was comfortable while enabling them to complete the training tasks in the respective environments. As a result, the minimum intensity was silence, and maximum intensity of the bubble sound depended on user preferences and noise level in the environment. To improve the detection of volume differences between ears, the bubble sound on the left side had a slightly higher pitch than the one played on the right (the sounds are available in the GitHub repository).

The modulation of the volume was related by sigmoid functions to allocentric orientation (cf. Figure 3(b)). This was done to account for the non-linear perception of sound intensity (e.g., Stevens, 1957).
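The wind coding described above can be illustrated with a minimal Python sketch. It is not the code of the Android app: the function names, the piecewise ramp, the rescaled logistic curve, and the steepness parameter `k` are all assumptions made for illustration, since the text specifies only that the orientation-to-volume mapping is sigmoidal and which volumes occur at the cardinal directions.

```python
import math


def _wrap_signed(deg: float) -> float:
    """Wrap an angle in degrees to the interval [-180, 180)."""
    return (deg + 180.0) % 360.0 - 180.0


def _ramp_right(theta: float) -> float:
    """Linear ramp for the right ear; theta is signed and clockwise-positive."""
    if theta >= 90.0:        # east to south: right ear is silent
        return 0.0
    if theta >= 0.0:         # north to east: right ear fades out
        return 1.0 - theta / 90.0
    if theta >= -90.0:       # west to north: right ear faces the "wind"
        return 1.0
    return (theta + 180.0) / 90.0  # south to west: right ear fades in


def _sigmoid_shape(x: float, k: float = 8.0) -> float:
    """Logistic curve rescaled so that 0 maps to 0 and 1 maps to 1.

    The steepness k is a hypothetical value, not taken from the study.
    """
    lo = 1.0 / (1.0 + math.exp(k / 2.0))
    hi = 1.0 / (1.0 + math.exp(-k / 2.0))
    y = 1.0 / (1.0 + math.exp(-k * (x - 0.5)))
    return (y - lo) / (hi - lo)


def wind_coding(heading_deg: float, k: float = 8.0) -> tuple[float, float]:
    """Return (left, right) relative volumes in [0, 1].

    heading_deg is the head direction in degrees clockwise from north.
    The left-ear curve is the mirror image of the right-ear curve.
    """
    theta = _wrap_signed(heading_deg)
    right = _sigmoid_shape(_ramp_right(theta), k)
    left = _sigmoid_shape(_ramp_right(_wrap_signed(-theta)), k)
    return left, right
```

Under these assumptions, `wind_coding(0)` yields full volume on both sides, `wind_coding(90)` silences only the right side, and `wind_coding(180)` silences both, matching the verbal description of the coding.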

2.4. Procedure

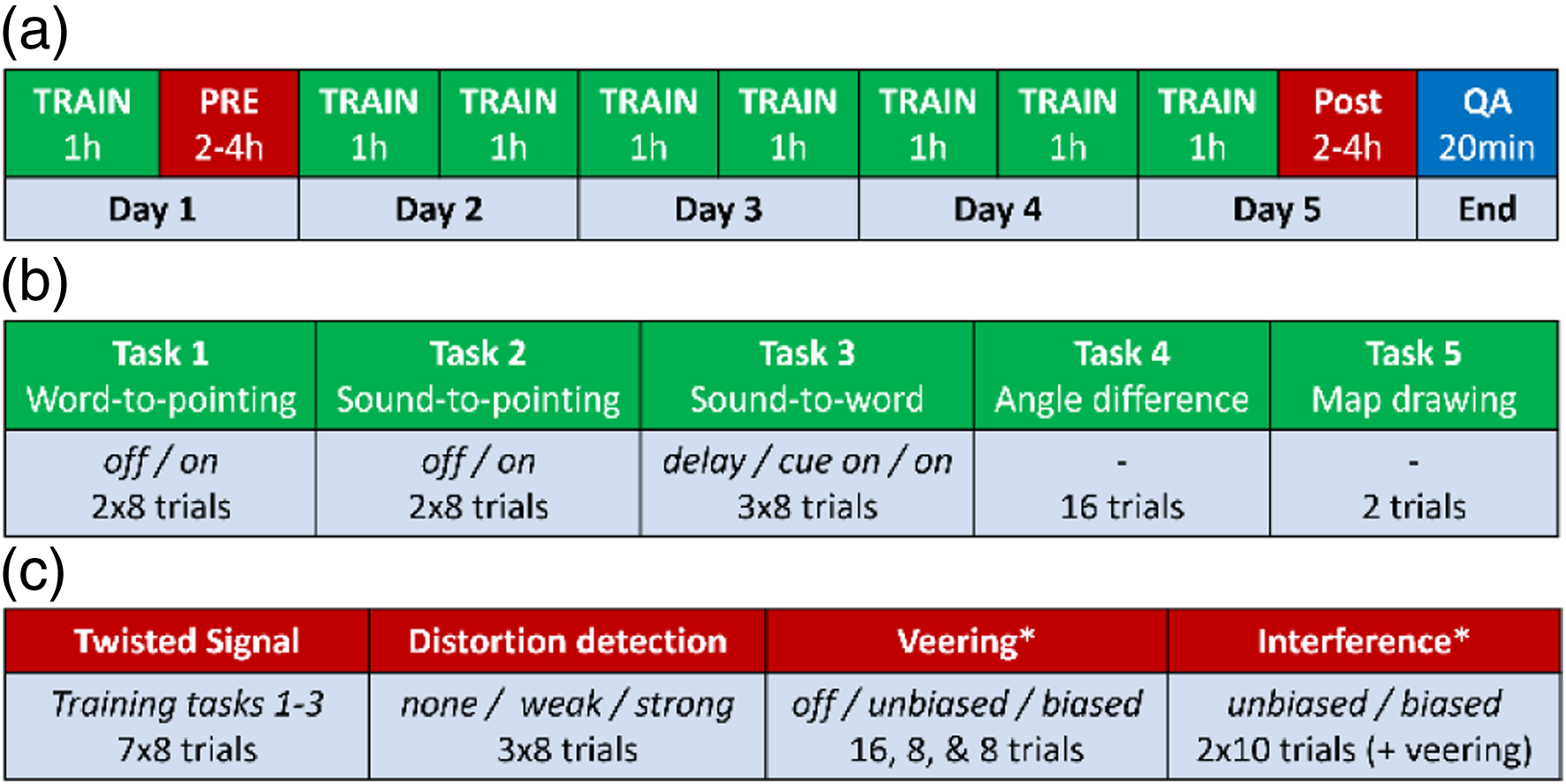

Figure 4(a) illustrates the overall time course of the study. During this time, participants engaged with the training tasks, completed the tests of automaticity before (pre) and after the training (post), and answered a questionnaire in the form of an interview at the end of the study. Experimental sessions were run by author AL.

Figure 4. Procedure. (a) Overall procedure. TRAIN = training session, PRE/POST = pre- and post-training test session, QA = questionnaire. Approximate duration is given. (b) Training tasks. Off/on = without and with NaviEar signal; delay = delay without signal, cue-on = auditory cue until response. (c) Tests for automaticity: “none/weak/strong” = without any distortion, intact NaviEar signal/weak distortion/strong distortion; “off/unbiased/biased” = no NaviEar signal, unbiased NaviEar signal/biased NaviEar signal during test completion; half of the biased trials were biased to the left and half to the right (i.e., 4 each in veering and 5 each in interference). * blindfolded, ears plugged, step-size controlled.

2.4.1. Training procedure

Participants completed training tasks while wearing the NaviEar during everyday life navigation activities. These navigation activities mainly involved walking through Paris, for example, on their way to work or during a walk.

Figure S1 in the supplementary material illustrates how the different tasks appeared on the smartphone. We developed five different training tasks to encourage participants to learn the NaviEar signal (Figure 4(b)). Tasks 1 to 3 involved signal mapping between the following cue-to-response combinations: Word-to-Pointing (task 1), Sound-to-Pointing (task 2), and Sound-to-Word (task 3). In task 1 (Word-to-Pointing), participants were instructed to point with their head in the direction indicated to them as a verbal label, such as “NE” (north-east). In tasks 2 (Sound-to-Pointing) and 3 (Sound-to-Word), they were instead presented with a probe NaviEar sound representing a certain direction and asked to orient toward it with their head (task 2) or press the button that corresponds to that direction (task 3).

Tasks 1 (Word-to-Pointing) and 2 (Sound-to-Pointing) involved two sub-versions. In the first sub-version, the NaviEar signal was turned off when participants started the trial, and they had to point in the cued direction without NaviEar (off version). In the second sub-version, the NaviEar signal stayed on (on version) while participants pointed in the cued direction so that participants could use the NaviEar signal for their pointing. The sub-versions allow for examining whether improvement in performance in those training tasks is independent of wearing the NaviEar or specific to the use of the NaviEar. A trial with the second sub-version always followed a trial with the first sub-version, implying that tasks 1 and 2 were always presented as double trials.

Task 3 (Sound-to-Word) involved three sub-versions. The first sub-version required memorizing the auditory cue: The auditory cue was presented, followed by a silence of 2.5 s before participants could give their response (delay version). The second sub-version aimed to measure performance without memory. For this reason, a response could be given directly after cue presentation, and the auditory cue stayed on until response (cue-on version). In the third sub-version, the NaviEar signal went on after cue presentation (on version). Task 3 was always presented in triple trials with the three sub-versions presented one after the other (delay, cue-on, and on versions).

In the Word-to-Pointing (1) and Sound-to-Word (3) tasks, 8 directions were cued, the 4 cardinal (north, east, south, and west) and the 4 intermediate directions (NE, SE, SW, and NW). The order of presentation of these directions was randomized and counterbalanced across sub-versions and trials. In the Sound-to-Pointing task (2), the cued direction was sampled randomly from the continuum of 360°. In all sub-versions of all three tasks, response times (time from cue presentation) and errors (the angular difference from the cue) were automatically recorded by the app. After each trial of these training tasks, feedback about the error was given by displaying the cued direction and responded direction on the mobile phone screen.
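The error measure described above (the angular difference between response and cue) can be sketched as follows. This is a minimal illustration, not the app's actual code: the function names, the wrapping of differences into the range [-180°, 180°), and the 45° spacing of the eight direction labels are assumptions made for the example.

```python
# Hypothetical mapping of the 8 cued directions to compass angles in degrees
# (0 = north, clockwise); the 45-degree spacing is an assumption.
DIRECTIONS = {"N": 0, "NE": 45, "E": 90, "SE": 135,
              "S": 180, "SW": 225, "W": 270, "NW": 315}


def angular_error(response_deg: float, target_deg: float) -> float:
    """Signed smallest difference (response - target) in degrees, in [-180, 180)."""
    return (response_deg - target_deg + 180.0) % 360.0 - 180.0


def absolute_error(response_deg: float, target_label: str) -> float:
    """Unsigned angular error of a response relative to a labelled direction."""
    return abs(angular_error(response_deg, DIRECTIONS[target_label]))


if __name__ == "__main__":
    # A response of 350 degrees to a cue at 10 degrees is off by 20 degrees, not 340.
    print(angular_error(350.0, 10.0))
    print(absolute_error(350.0, "NE"))
```

Wrapping the difference avoids inflated errors for responses close to north, where raw subtraction would otherwise yield values near 360°.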

In addition, participants completed post-trial ratings after each trial of those tasks. Due to the situated setting, we could not control participants’ familiarity with and the noise level in the environment. The post-trial ratings were meant to examine these factors in our analyses and also to get an ongoing self-report of orientation throughout the training. Participants were asked to indicate on a scale from one to five (1) their familiarity with the local environment (“how often you have been here”), (2) their orientation (“how confidently you orient (N, E, S, W)”), and (3) the loudness of noise in the environment (“how loud it is around you”). At the end of each trial, the ratings set in the previous trial were displayed and participants were asked to adjust the ratings if the situation had changed (cf. Figure S1).

In a fourth task, participants were asked to estimate differences between angles corresponding to presented sounds (Angle-Difference). This task was more difficult than the others and required greater mental effort and engagement, which we considered to be beneficial to learning (e.g., Dinsmore & Alexander, 2012; Lee et al., 1994; Royet et al., 2004). Participants would hear two NaviEar probe sounds in quick succession and indicate the angular distance between them using a slider whose displacement was visualized on a circle. The two auditory probes were determined randomly so that the angles they represented had a minimum difference of 20°. This minimum difference made sure that participants could hear the onset of the second probe sound even in a noisy street environment. We recorded accuracy and response times as in the signal mapping tasks, but we did not provide feedback in this task.

In a fifth task (map drawing), participants had to sketch the next intersection of streets they would come across with a pencil on a sheet of paper. To draw a map, participants needed to check precisely the orientation of the streets while wearing the NaviEar. Our hope was that the need for precision would naturally lead participants to integrate the NaviEar signal in their estimation of orientation. There was no guarantee that this would work; but we thought it would be good to have a diverse set of tasks to increase the likelihood that users internalize the NaviEar signal. This task was only used for training, and no data was recorded.

Trials of these tasks were presented in random order within one session. The only exception was the Angle-Difference task. It involved more complicated instructions and required more concentration than the other tasks. We therefore presented it as one block at the end of each session. Each session involved all 5 types of tasks, with a total of 74 trials that took between 45 and 60 min to complete.

We aimed at a sufficient amount of training to reach ceiling effects in performance. Eight sessions of 45 min to 1 hour was more training than in previous studies that involved learning an orientation signal (Faugloire & Lejeune, 2014; Kärcher et al., 2012; Schumann & O'Regan, 2017). So, participants were told to complete at least 8 training sessions across 5 days (green slots in Figure 4(a)). On days without test sessions, participants were asked to complete at least two training sessions per day; on days with test sessions (first and last day), they completed at least one training session before the test session (Figure 4(a)). However, we did not have full control over the participants’ completion of the training tasks because the experiment was conducted in the field as part of their everyday life activities. As a result, the number of training sessions varied across participants, spread over a period of four to six days (see Table 1 for details, and Results for additional explanations).

2.4.2. Tests for automaticity

We used four different tests to measure the degree of automaticity participants achieved after training with the NaviEar (Figure 4(c)). Each test session (an entire pre- or post-test for an individual participant) took between two and four hours to complete. All testing took place in a park with a large open space in the north-east of Paris.

2.4.2.1. Rotated signal

In this test, we asked participants to complete a training session with a 90° or 270° shift in the coding, implying that the sound “comes from” west or east, not north. Training tasks 2 and 3 (signal mapping) should become more difficult as the association between NaviEar sound and corresponding cardinal direction becomes increasingly automatic.

2.4.2.2. Distortion detection

We developed the distortion-detection task to assess participants’ familiarity with dynamic patterns in the NaviEar signal. Participants walked freely over eight different paths (four paths in both directions, see Figure 5 for an example). The signal either behaved normally (control) or included a “distortion window” placed at one of two possible locations along the path (reddish triangles in Figure 5). Within a distortion window, the signal would either first decelerate (i.e., changing more slowly than normal) and then accelerate (distortion 1) or vice versa (distortion 2). Acceleration and deceleration compensated each other so that the contingency of NaviEar signal with orientation was kept undistorted outside the distortion area. High and low distortion intensities made the distortion easier or harder to detect. Participants judged whether the signal was distorted at any point during the path (“yes/no”). If so, participants indicated where along the trajectory the distortion occurred. Participants completed 24 trials that included 8 control (no distortion), 8 high (easy), and 8 low (difficult) distortion trials (except for f2, who did only 4 easy and 4 difficult distortions). Paths, distortion types (distortion 1 or 2), and windows were selected at random. We varied the orientation of the paths across subjects, so that, within a participant, the path (and its mirror) would stay identical, but it would be laid out in a different cardinal direction for other participants.

Figure 5. Examples of distortion-detection paths. Panels a and b show two of four different kinds of paths used in the distortion-detection task. Mirrored versions of each path were also used. The reddish triangles illustrate “distortion windows,” that is, the parts of the path in which the NaviEar signal could be biased. For the other paths, see Figure S3 of the Supplementary Material.
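The composition of a distortion-detection session can be sketched as a simple trial generator. This is only an illustration of the design described above (8 control, 8 high, and 8 low distortion trials, with paths, distortion types, and windows drawn at random). The function name, the dictionary layout, sampling with replacement, and the shuffling of trial order are assumptions that the text does not specify.

```python
import random


def make_distortion_trials(seed=None):
    """Build one hypothetical session of 24 distortion-detection trials."""
    rng = random.Random(seed)
    # Four paths, each also used in a mirrored version (8 paths in total).
    paths = [(path_id, mirrored) for path_id in range(1, 5)
             for mirrored in (False, True)]
    trials = []
    for intensity in ("none", "high", "low"):
        for _ in range(8):
            distorted = intensity != "none"
            trials.append({
                "path": rng.choice(paths),          # sampled with replacement (assumption)
                "intensity": intensity,             # none = control, high = easy, low = difficult
                "distortion_type": rng.choice((1, 2)) if distorted else None,
                "window": rng.choice((1, 2)) if distorted else None,
            })
    rng.shuffle(trials)  # random trial order is an assumption
    return trials


if __name__ == "__main__":
    for trial in make_distortion_trials(seed=1)[:3]:
        print(trial)
```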

2.4.2.3. Veering

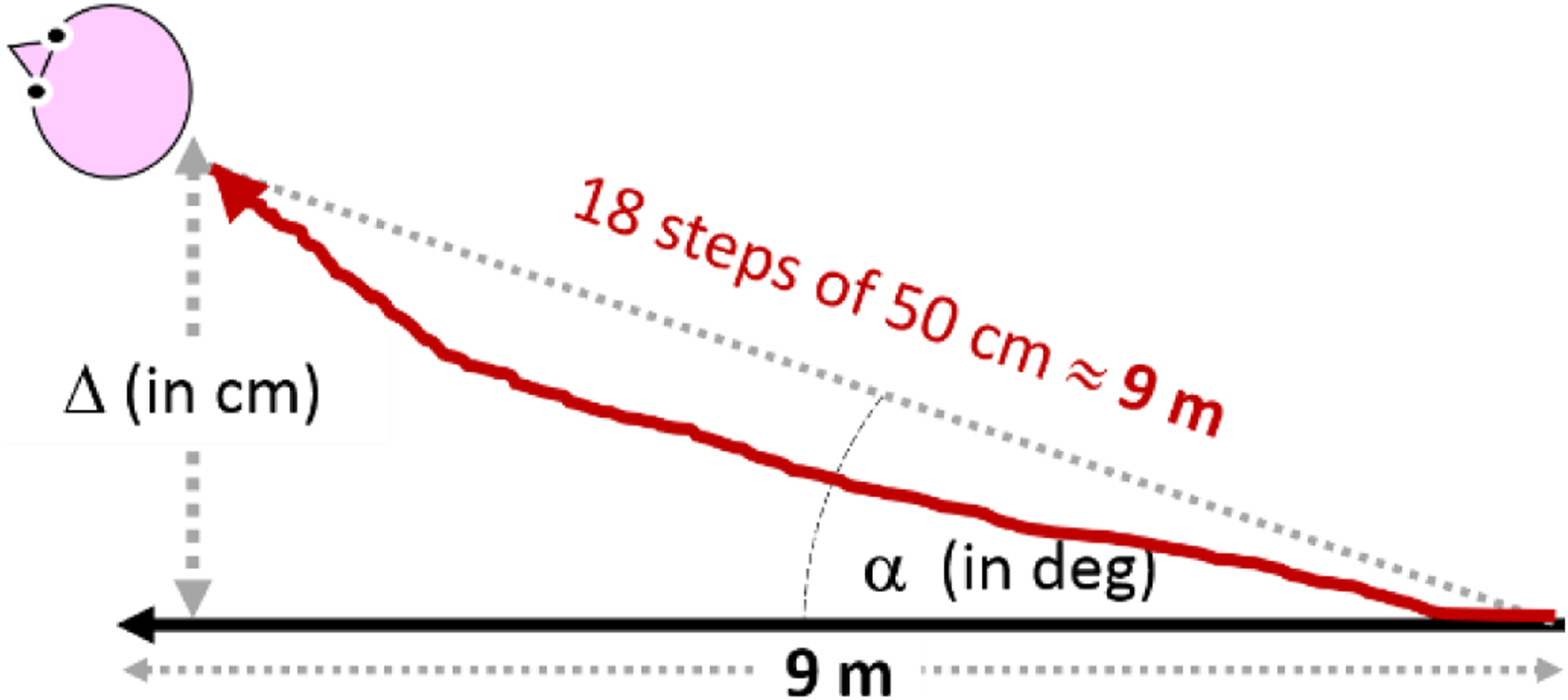

In the veering task, participants were instructed to walk 18 steps (approximately 9 m) straight ahead toward a visual target (cf. Figure 6). To quantify veering, we measured both the distance from the straight line and the angular deviation from its orientation.

Figure 6. Example of veering path. The solid black line represents the straight reference line. The red line illustrates a veering path. It is approximately 9 m long because participants made 18 steps of 50 cm. Gray lines highlight distances. We measured: Δ = distance between target and straight path in cm;

In one session, participants completed the veering task eight times without (off version) and eight times with the NaviEar signal (on version). Half of the on-trials with NaviEar were biased to test for interference, as further explained below (see Interference). Under each condition, participants completed the task once toward each inter-cardinal direction (NE, SE, SW, and NW), resulting in overall 16 trials per session in random order. Participants were blindfolded, their ears were plugged and covered, and step-size was controlled at 50 cm by a sling when completing a trial of the task (by making steps that stretched the sling, participants knew that they were making 50 cm steps, as required for the task).

2.4.2.4. Interference

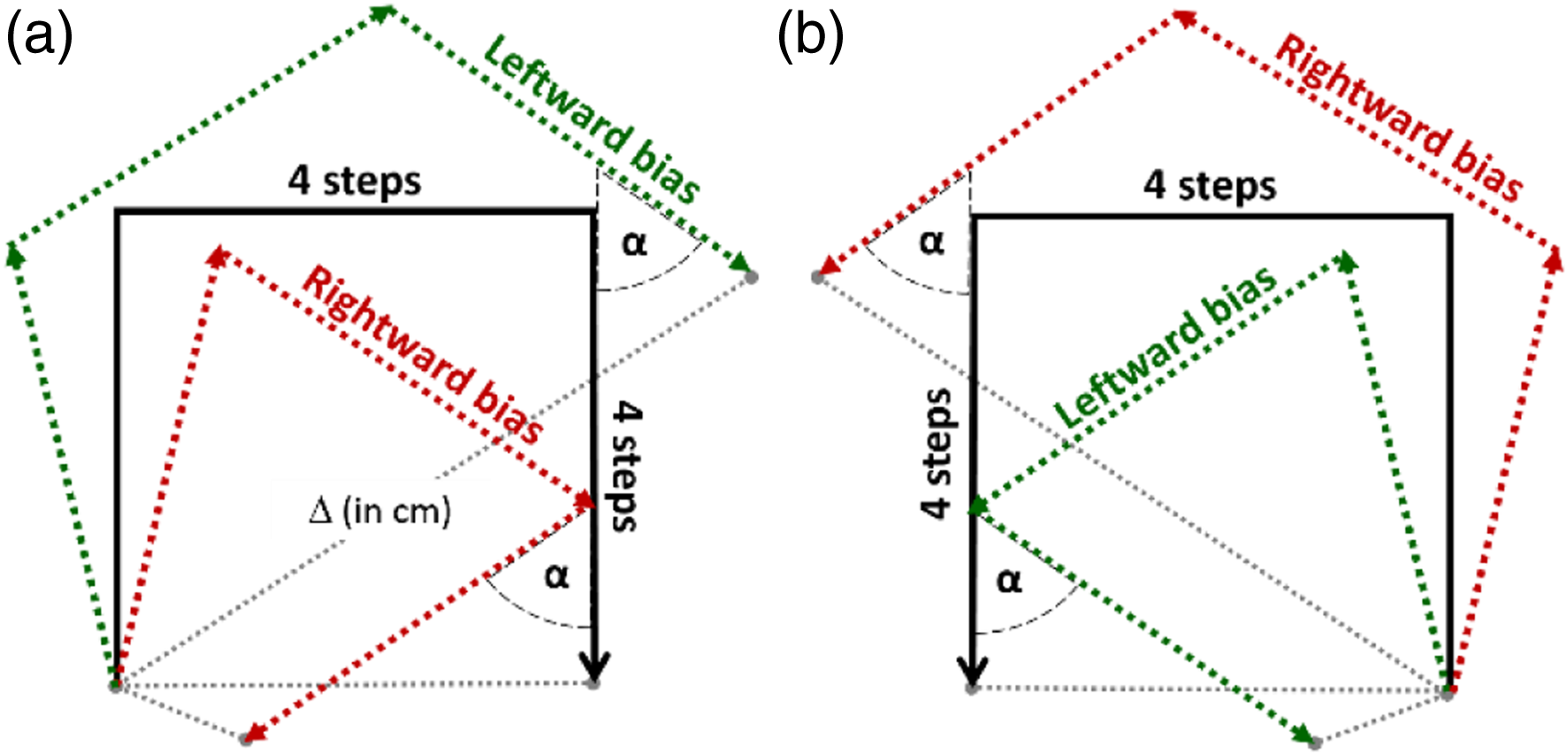

If the NaviEar signal becomes an integral part of the user’s sense of orientation, it should automatically be used in situations requiring users to orient. To test this aspect of automaticity, we implemented an “interference test,” during which we manipulated the NaviEar signal and observed whether users’ orientation showed a respective bias. Just as for the veering test, participants were blindfolded, ear-plugged, ear-covered, and their step-size was controlled. We furthermore told participants to ignore the NaviEar signal throughout the test, as it might misinform them about their orientation.

Participants’ task was to walk a path shown to them on a piece of paper before each task. For example, they were asked to walk four steps forward, take a 90° right turn, walk another four steps forward, take another right turn and again walk four steps forward (see Figure 7 for an illustration). The NaviEar signal was always on, from the beginning to the end of each trial. There were control conditions in which the NaviEar signal was unbiased (no bias) and the same as during training, and there were interference conditions, in which the NaviEar signal was biased. In interference conditions, the NaviEar signal could either be biased to the left or to the right, implying that the signal changed more slowly (as a function of angle) as participants turned left, and faster as they turned right (leftward bias), or vice versa (rightward bias).

Figure 7. Example of interference path. In this example, participants had to walk 4 steps forward, turn and walk another 4 steps, and turn a second time and walk another 4 steps. Panels a and b illustrate the path with turns toward the right and toward the left, respectively. The green and red lines illustrate the path that corresponds to a leftward biased and a rightward biased NaviEar signal, respectively. Gray lines = distance Δ between target and actual end position in cm;

There were overall 11 paths. One of these paths was the same as the veering path, that is, a straight line, but with biased signal. The other ten paths were five paths and their mirror versions. Each path involved different numbers of steps and sequences of 90° turns in the same direction. For each of these five paths, there was one version that involved turns to the left, and a mirror version with turns to the right (Figure 7). Table S1 in the supplementary material describes all 11 paths. The NaviEar App recorded the end orientation, and we measured participants’ distance from the starting point using a tape measure.

There were 12 kinds of trials in the interference condition: Apart from the straight line, each of the 5 paths was once presented with a leftward and once with a rightward bias. There was an unbiased control condition for each of those 10 biased trials. The starting direction of a path was one of the 4 inter-cardinal directions. Turning direction (left or right), bias direction (leftward, rightward, or no bias), and start direction were randomly determined and counterbalanced across paths. The other two kinds of interference trials were left and right biased veering paths (straight lines). They were measured as part of the veering task. There were four unbiased and four biased trials (see above). Two of the biased trials involved a leftward and two a rightward bias.

2.4.3. Post-experimental questionnaire

After the last test session, participants came to the laboratory to be paid and complete a questionnaire of 20 items in total (see Table S2 of the supplementary material). This was done the day of or the day after the experiment. These questions solicited participants' subjective appraisal of how useful and comfortable they found the NaviEar, how they experienced the NaviEar, how well they could orient, and how much they paid attention to cardinal directions when orienting in everyday life. Fourteen of the 20 questions included a quantitative response (numerical item) consisting of a rating on a 1 to 5 scale.

3. Results

Data is available at Zenodo (Witzel, Lübbert, O’Regan, Hanneton, & Schumann, 2022). As shown in Table 1, two participants completed fewer than 8 training sessions (f5 and f2), and two participants missed one of the test sessions (f1 and f6). Participant f2 skipped one training session for unknown reasons. Participant f6 completed unrotated instead of rotated-signal tasks in the second test session by accident (hence 9 training sessions for f6). The rotated-signal and interference data of participant f1 is missing for unknown reasons. The two missing training sessions of f5 had to be removed due to an error in handling the app. For the same reason, participant f3 was exposed to and trained with the 90-deg-rotated signal after the first rotated-signal test. Conditions were recoded accordingly (i.e., the 90-deg-rotated signal was considered as training after the pre-test). The supplementary Figure S4 illustrates the distribution of performance in the training tasks. Despite those differences in training procedure, f3 and f5 yielded performance similar to the other participants, and no participant seemed to be an outlier. We will further test for systematic effects of the different numbers of training sessions in each main analysis of training and test tasks below.

First, we analyzed the development of performance in the training tasks across days to assess the success of learning and the mastery of the device. Then, we tested effects of automaticity in each test task. In several of the analyses below, we applied similar tests to two measures (e.g., response times and errors). This requires correction for multiple testing. To account for this, we assess our predictions with two-tailed instead of one-tailed statistics even though our hypotheses are clearly directed. This implies that the p-value is twice as high as for one-tailed tests, which effectively corresponds to a Bonferroni correction for 2 tests. This approach is taken because it provides additional information for the Discussion. Cohen’s d is reported as a measure of effect size in the t-tests following below.
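The equivalence invoked here can be illustrated with a short sketch. It assumes a symmetric test statistic (such as t) whose observed value lies in the predicted direction, and a nominal alpha of .05; the function names are hypothetical.

```python
def two_tailed_from_one_tailed(p_one_tailed: float) -> float:
    """Two-tailed p for a symmetric statistic in the predicted direction (capped at 1)."""
    return min(1.0, 2.0 * p_one_tailed)


def bonferroni_alpha(alpha: float, n_tests: int) -> float:
    """Per-test significance threshold under a Bonferroni correction."""
    return alpha / n_tests


def same_decision(p_one_tailed: float, alpha: float = 0.05) -> bool:
    """Check that both routes lead to the same significance decision."""
    two_tailed_significant = two_tailed_from_one_tailed(p_one_tailed) < alpha
    bonferroni_significant = p_one_tailed < bonferroni_alpha(alpha, 2)
    return two_tailed_significant == bonferroni_significant


if __name__ == "__main__":
    for p in (0.001, 0.02, 0.03, 0.2):
        print(p, two_tailed_from_one_tailed(p), same_decision(p))
```

Doubling the p-value and halving the threshold are two views of the same comparison, which is why reporting two-tailed p-values for directed hypotheses covers two tests.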

3.1. Training tasks

If participants learned successfully to use the NaviEar and to complete the tasks, performance should increase with training; that is, response times and errors should decrease across training sessions. For each participant, response times were aggregated by median across trials. Errors were calculated as the difference between the target direction and the response by the participant. In the Angle-Difference task, an error is defined as the deviation (absolute difference) of a user’s estimate from the real difference between the angles of the test sounds according to the NaviEar coding function. There was no interesting difference between the results for the different sub-versions. For this reason and to avoid clutter, we averaged response times and errors across subversions. For details on each subversion of the training tasks, consider supplementary Table S3-4 and Figure S5.

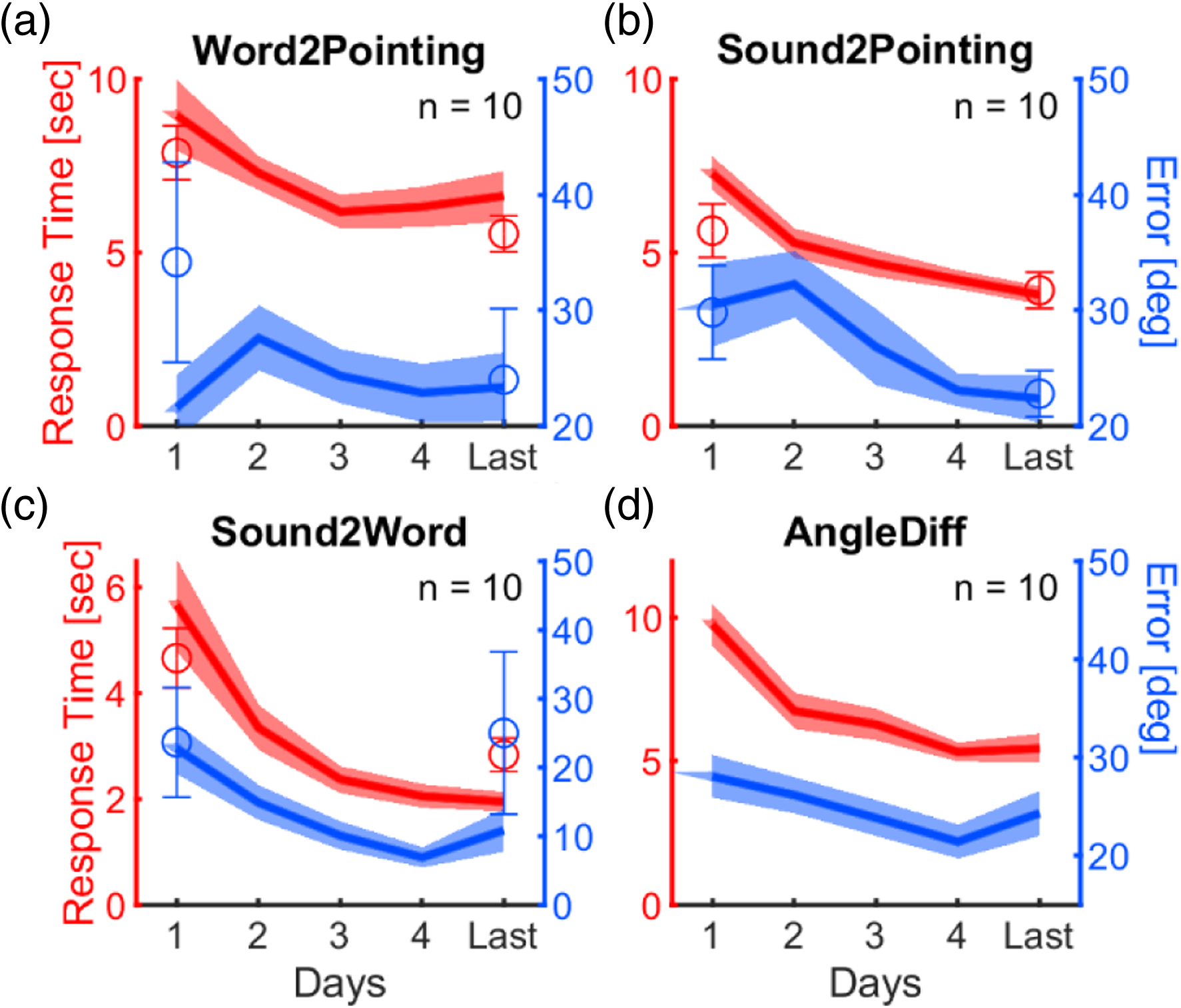

Figure 8 illustrates the development of response times (red curves) and errors (blue curves) over training days. For each participant, we calculated correlations between the number of training sessions and response times and errors, respectively, to determine whether they have a negative (decreasing) trend over the days of training. For this, we used Spearman correlations to account for nonlinearities. We determined the regression slopes for the rank-ordered data (i.e., the basis of Spearman correlations) and tested whether slopes were below zero with a t-test. According to these tests, response times and errors in all four training tasks decreased with sessions of training, min. d = −0.87, t (9) = −2.7, all p < .03. The only exception was the errors in the Word-to-Pointing task (blue curve in Figure 8(a)), the slopes of which did not differ from zero, d = −0.29, t (9) = −0.9, p = .38. Analyses with nonparametric sign tests instead of t-tests and with t-tests for Fisher-transformed Pearson coefficients provide very similar results (see Table S4). These results show that participants became faster with training in all tasks and more precise in three of four tasks.

Figure 8. Performance in training tasks over days. Red colors indicate response times; blue indicates average errors. Curves show results from the training tasks; symbols indicate results from the rotated-signal test (averaged across task subversions and participants). The shaded area and the error bars indicate standard errors of mean. In all panels, the x-axis refers to the number of training days. “Last” is the last session before the post-training rotated-signal test on the same day as that test. The red y-axis on the left represents response time in seconds, and the blue y-axis on the right is the difference between response and target angle in degree (error). Table S3 provides numerical details on averages and standard errors.
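The slope analysis can be sketched as follows, using only the Python standard library. This is a minimal illustration under stated assumptions rather than the original analysis script: the helper names are hypothetical, ties receive average ranks, and Cohen's d for the one-sample test is taken as the mean slope divided by its standard deviation.

```python
import math
from statistics import mean, stdev


def ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            out[order[k]] = average_rank
        i = j + 1
    return out


def rank_slope(sessions, performance):
    """Least-squares slope of ranked performance on ranked session number."""
    rx, ry = ranks(sessions), ranks(performance)
    mx, my = mean(rx), mean(ry)
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    return sxy / sxx


def one_sample_t(slopes):
    """Cohen's d, t statistic, and degrees of freedom for slopes tested against zero."""
    n = len(slopes)
    d = mean(slopes) / stdev(slopes)
    return d, d * math.sqrt(n), n - 1


if __name__ == "__main__":
    # Made-up response times (s) of one participant over four sessions.
    print(rank_slope([1, 2, 3, 4], [9.0, 7.5, 6.0, 2.0]))
```

Without ties, the rank slope equals Spearman's rho. Under the assumed definition of d, the reported values are mutually consistent: with 10 participants, t = d·√10, so d = −0.87 corresponds to t ≈ −2.75.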

We examined whether the different numbers of training sessions across participants (cf. Table 1) were related to the learning effects, that is, the improvement in terms of decreasing response times and errors. For this purpose, we calculated correlations across the 10 participants between the number of training sessions and the response time and error slopes in each training task. The more negative the Spearman slope, the stronger the improvement across sessions. So, we expected a negative correlation between the amount of training and the slopes. Table S5 in the supplementary material provides detailed statistics. Errors in the Sound-to-Word tasks yielded a positive correlation coefficient close to significance, r (8) = .60, p = .06. This tendency contradicts the prediction. None of the other seven correlations was significant (ps > .15). So, there was no evidence that more training sessions led to higher levels of improvement.

Post-trial ratings were intended to control for external factors that might affect the performance in the training tasks. Familiarity with the environment and orientation might increase the performance in the three training tasks involving self-orientation (Word-to-Pointing, Sound-to-Pointing, and Sound-to-Word) because participants might know cardinal directions even without the NaviEar. In contrast, loud noise would decrease performance if it prevented participants from hearing the NaviEar signal. For each participant, we calculated slopes of Spearman correlations between subjective ratings and performance across trials. As above, we tested across participants whether slopes differed from zero.

Ratings of orientation were negatively correlated with errors in the Word-to-Pointing, d = −1.3, t (9) = −4.0, p = .003, and the Sound-to-Word task, d = −0.92, t (9) = −2.9, p = .02. The negative Spearman slopes suggest that participants felt more oriented when their responses were more accurate (and vice versa). There were no other significant Spearman slopes between subjective ratings and performance. There were also no significant slopes for correlations between subjective orientation and familiarity across trials (all p > .11), which would relate the effects of orientation to the familiarity with the environment.

Loudness ratings were positively correlated with errors in the Sound-to-Pointing, d = 1.1, t (9) = 3.6, p = .006 and the Sound-to-Word tasks, d = 1.6, t (9) = 5.1, p < .001. The positive correlations indicate that participants made more errors when they reported louder environmental noise in the tasks that require listening to the NaviEar signal. All three types of post-trial ratings were rather flat over training sessions (cf. Figure S7), and there were no significant Spearman slopes between sessions and post-trial ratings for the three training tasks (all p > .15).

3.2. Rotated-signal test

With the rotated-signal test, we wanted to test if the flexibility of participants in interpreting the NaviEar signal decreases across training due to automatization. If this is the case, rotating the spatial orientation relative to the auditory signal should strongly reduce the performance (increase response times and errors) after training compared to the performance in the respective training tasks with the original coding of the training sessions. The change of the code should not affect their performance as strongly before as after the training because participants would not need to counteract any automatic response in the first session before any training.

In order to test this idea, we contrasted the performance (response times and errors) in the rotated-signal tests (circles in Figure 8) to the performance in the corresponding training sessions (curves in Figure 8). For this, we calculated a “rotation contrast,” which is the difference between the performance in the rotated-signal task and the performance in the training tasks of the same day of training (day 1 for the pre-training test, last day for the post-test). A positive rotation contrast indicates that performance was higher (response times and errors lower) in the training than in the rotated-signal task, and vice versa for negative rotation contrasts.

If performance in the rotated-signal test was not affected by the change of the signal, the performance should follow the overall trend of performance in the training tasks (curves in Figure 8). In this case, performance should be slightly better in the rotated-signal test than in the training task because the rotated-signal test is measured after the corresponding training task. As a result, the rotation contrast should tend to be slightly below zero. However, in the case of automatization during training, performance in the rotated-signal test should become relatively bad after training. Hence, the rotation contrast should be positive after training (post).
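The rotation contrast defined above can be written as a short sketch. The function names and the participant labels are hypothetical, and the numbers in the usage example are made up for illustration; they are not data from the study.

```python
from statistics import mean


def rotation_contrast(rotated: float, training: float) -> float:
    """Rotated-signal performance minus same-day training performance.

    Both values are response times (s) or errors (deg), so a positive contrast
    means worse performance with the rotated signal than in training.
    """
    return rotated - training


def mean_contrast(rotated_by_participant: dict, training_by_participant: dict) -> float:
    """Average contrast over participants that have data in both conditions."""
    shared = sorted(set(rotated_by_participant) & set(training_by_participant))
    return mean(rotation_contrast(rotated_by_participant[p], training_by_participant[p])
                for p in shared)


if __name__ == "__main__":
    rotated = {"p1": 3.0, "p2": 2.0}               # made-up response times in seconds
    training = {"p1": 2.0, "p2": 2.5, "p3": 1.0}   # p3 has no rotated-signal data
    print(mean_contrast(rotated, training))
```

Restricting the average to participants with data in both conditions mirrors the handling of missing test sessions in the analyses that follow.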

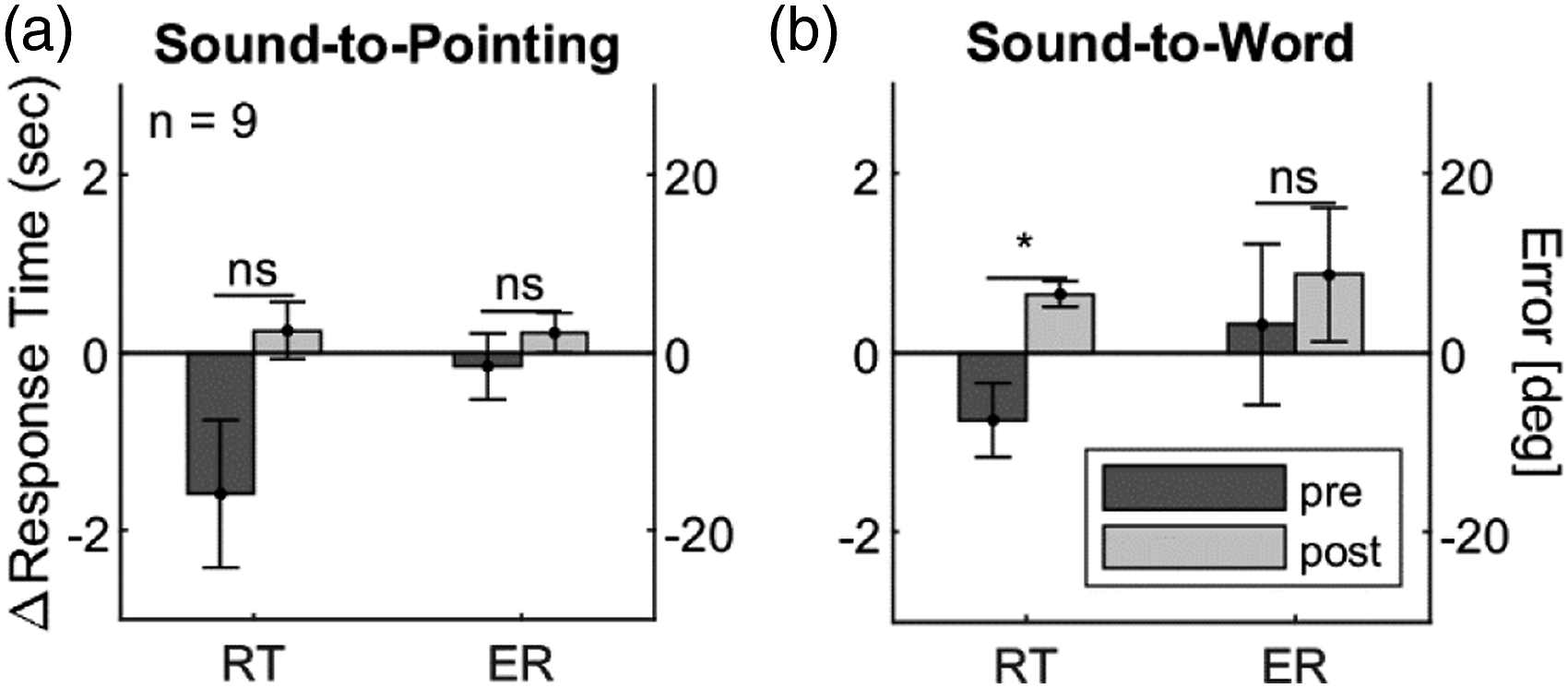

Figure 9 illustrates the rotation contrast for the two tasks that involved a response to the auditory NaviEar signal, namely, Sound-to-Pointing and Sound-to-Word. Again, we pooled the different sub-versions of these tasks to avoid clutter (for detailed results on each subversion, see supplementary Table S6). To test whether performance in the rotated-signal test differed from performance in the training task, we tested whether rotation contrasts differed from zero through a paired two-tailed t-test across participants. One participant (f1) missed the pre-training, and another participant (f6) the post-training rotated-signal test, reducing the number to nine participants per test.

Figure 9. Rotated-signal test. The y-axis shows the difference between performance in the rotated-signal test and performance in the corresponding training task. The bars and the y-axis on the left side correspond to contrasts of response times (RT) in seconds and those on the right to contrasts of errors (ER) in degrees. Error bars here and in subsequent figures correspond to standard errors of mean. * p < 0.05.

In the pre-training tests (dark bars in Figure 9), none of the rotation contrasts differed from zero, all p > .11 (top part of Table S6). In the post-training tests (light bars in Figure 9), both tasks yielded average rotation contrasts above zero, especially in the Sound-to-Word task (light bars in Figure 9(b)). Higher response times and errors reflect the difficulty of changing from the original to the rotated NaviEar signal. However, only rotation contrasts of response times in the Sound-to-Word task were significantly above zero, d = 0.94, t (8) = 2.8, p = .02. Neither those for response times in the Sound-to-Pointing task, d = 0.25, t (8) = 0.7, p = .48, nor those for errors in either task, both p > .32, differed significantly from zero.

If sustained training led to automatization, rotation contrasts should be larger in the post- than in the pre-test. In all tasks, average rotation contrasts for response times and errors were higher in post- than in pre-training tests. We applied a paired two-tailed t-test across participants to compare rotation contrasts in pre- and post-training tests. These comparisons could only include the eight participants with data in both pre- and post-training tests. Only the difference between pre- and post-training rotation contrasts of response times in the Sound-to-Word task was significant (d = 1.12, t (7) = 3.2, p = .02). Neither the difference for response times in the Sound-to-Pointing task, d = 0.58, t (7) = 1.6, p = .14, nor those for errors in either task (Sound-to-Pointing: d = 0.14, t (7) = 0.4, p = .70; Sound-to-Word: d = 0.21, t (7) = 0.6, p = .57) were significant. After applying a Bonferroni correction for testing similar predictions in two tasks (alpha = .025), the results for response times in the Sound-to-Word task remain just significant. Hence, these results provide weak evidence for automatization.

The extent of automatization depends on the success of the training sessions, and different participants might have engaged to different degrees with the training. Figure S8 in the Supplementary Material illustrates the pre-/post-comparisons for single participants. In the Sound-to-Pointing task, six of the eight participants produced higher rotation contrasts of response times in post- than in pre-training tests (cf. Figure S8(a)). This was the case for 7 of 8 in the Sound-to-Word task (cf. Figure S8(c)). To test for a relationship between training and rotated-signal tests, we calculated correlations between the Spearman slopes in the (non-rotated) training tasks (Figure 8) and the rotation contrasts in the post-training tests (light bars in Figure 9(b)). If stronger training effects led to higher automatization, post-training contrasts should be higher when slopes are more negative, implying a negative correlation. However, only the correlation for errors in the Sound-to-Word task was significant, r (7) = −.70, p = .04 (Table S7 (left side) provides detailed statistics). So, there is some evidence for a relationship between training effects and rotated-signal effects.

We also calculated correlations between rotated-signal effects and the number of training sessions across participants. See Table S7 (right side) for detailed statistics. The number of training sessions was not significantly correlated with rotated-signal effects on response times or errors in either the rotated Sound-to-Pointing or the rotated Sound-to-Word task, ps > .38.

3.3. Distortion-detection test

The distortion-detection test assessed the degree to which participants integrated and coordinated the NaviEar signal with their orientation. This integration and coordination should allow them to detect the distortions in the NaviEar signal in the respective trials. Most importantly, if participants internalized the coupling between orientation and NaviEar signal through training, they should better detect distorted signals after than before training.

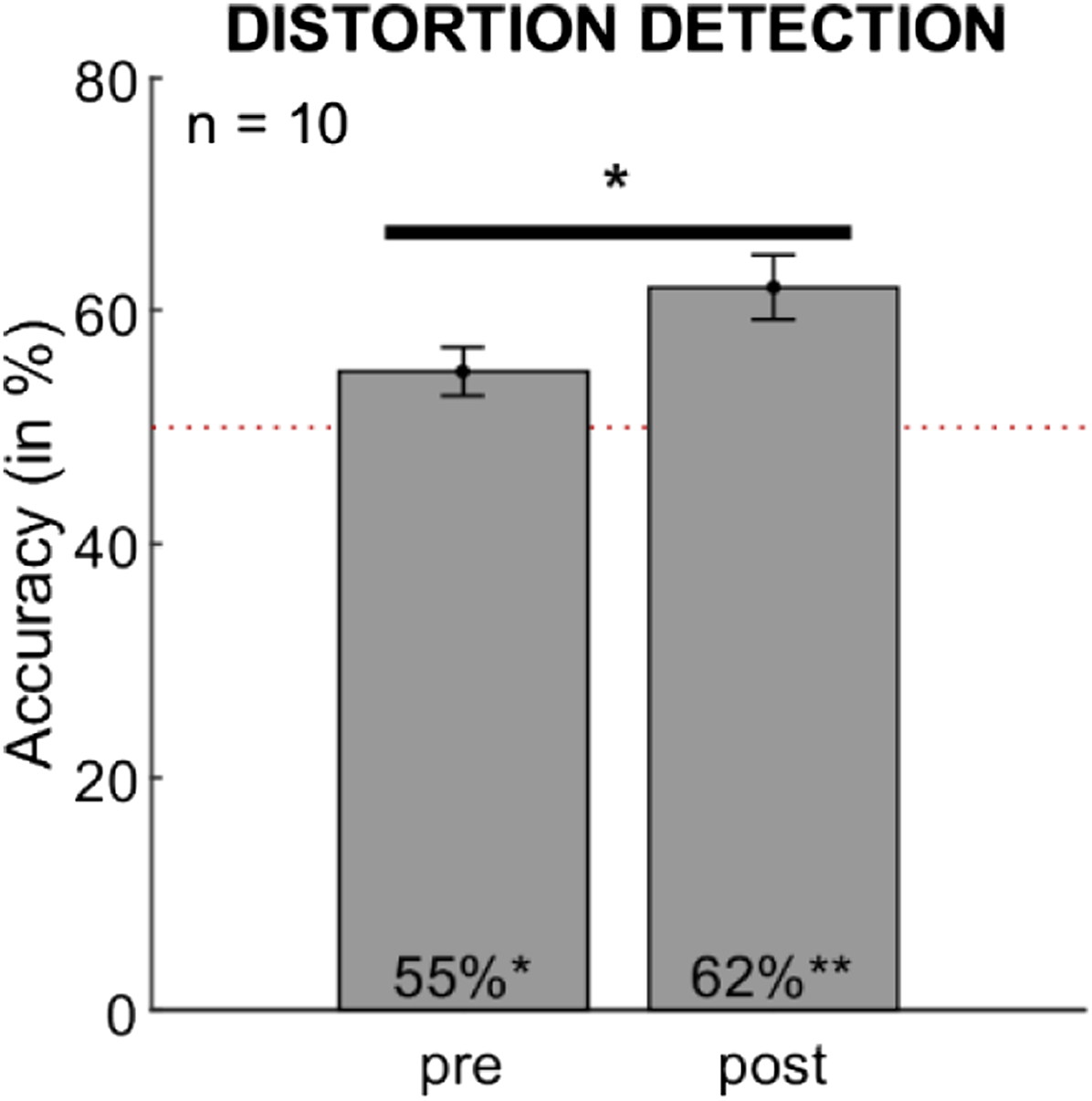

Figure 10 shows the proportions of correct answers before (pre) and after (post) the 5-day training. We used one-tailed t-tests to test whether participants’ accuracy was significantly higher than the chance level of 50% (red dotted line) and whether accuracy differed significantly between pre-training and post-training tests. Accuracy was significantly higher than chance level before, d = 0.73, t (9) = 2.3, p = .02, and after, d = 1.37, t (9) = 4.3, p < .001, training sessions. Accuracy was 17.9% higher for easy (strong distortion) than for difficult (weak distortion) trials, d = −0.93, t (9) = −2.9, p = .02. However, overall performance before (55%) and after training (62%) was not very high. These results show that it was very difficult for participants to detect smooth distortions in the signal. Accuracy after training was significantly higher than before training, d = 0.63, t (9) = 2.0, p = .04. This result implies that participants were slightly better at detecting distortions after than before training. This suggests that participants might have learned the coupling between sound and orientation across training. However, the effect of training seems to be rather weak.

Figure 10. Distortion-detection test. The height of the bars indicates the frequency of correctly identifying whether the NaviEar signal was distorted or not. The dotted red line indicates chance level. *p < .05, **p < .01.

The effects in the distortion-detection task were significantly correlated neither with the Spearman slopes for response times and errors in the training tasks, ps > .25, nor with the number of training sessions, r (8) = −0.16, p = .67. Hence, the effects in the distortion-detection task did not depend on the amount and success of training (for details, see Table S8).

3.4. Veering test

If participants integrate the NaviEar in their orientation behavior, the NaviEar should reduce veering. Moreover, veering should further reduce with training if training produces the internalization and automatization of the NaviEar signal. Veering might reduce in both conditions (with and without signal) across sessions due to general learning of how to accomplish the task. However, if there is specific learning of the NaviEar signal, the reduction of veering should be stronger with than without the NaviEar signal.

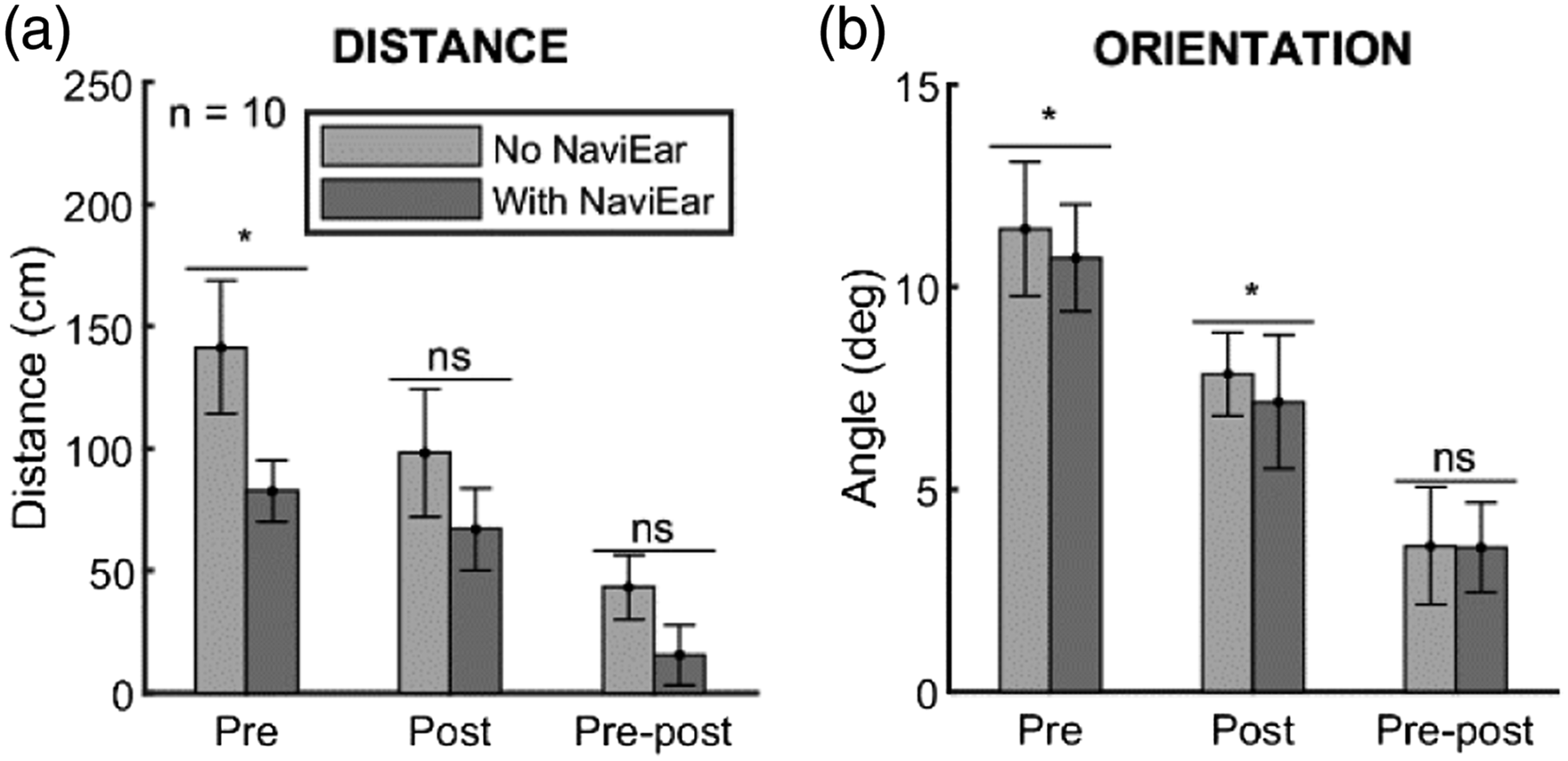

Figure 11 illustrates the amount of veering, measured as distances in cm from the straight line (panel a) and angular deviations in degree from the orientation of the straight line (panel b). We calculated a repeated measures analysis of variance with the factors condition (no NaviEar and with NaviEar) and session (pre and post) for distances and orientations, separately. For both distances and orientations trials with NaviEar (dark bars) yielded significantly less veering than without NaviEar (light bars), F (1,9) = 9.66, p = .013, $\eta_p^2$ = 0.518, and F (1,9) = 12.80, p = .006, $\eta_p^2$ = 0.587. These results are in line with the predictions: They support the idea that the NaviEar generally reduces veering and improves orientation.

Figure 11. Veering test. The height of the bars indicates the deviation from a straight line in the veering test in terms of distance in centimeters (a) and in terms of angular differences (b). Pre-Post = Difference between pre- and post-test. *p < .05.

Concerning the effect of training, veering in post-training sessions (center groups of bars) did not significantly differ from veering in pre-training sessions (left groups of bars) (distances in panel a: F (1,9) = 4.13, p = .07, $\eta_p^2$ = 0.315; orientation in panel b: F (1,9) = 0.19, p = .67, $\eta_p^2$ = 0.021). Moreover, there was no interaction in the predicted direction, according to which the effects of learning would be higher with than without NaviEar, F (1,9) = 2.13, p = .18, $\eta_p^2$ = 0.191 and F (1,9) = 0.0004, p = .99, $\eta_p^2$ = 0. Instead, average deviations went in the opposite direction, indicating more learning without than with NaviEar (cf. rightmost group of bars).
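The partial eta squared values reported for the veering test can be recovered from the F statistics and their degrees of freedom via the standard identity η_p² = (F × df_effect) / (F × df_effect + df_error). The short Python sketch below applies this identity to the F values reported above; the function name and the dictionary labels are ours.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared computed from an F statistic and its dfs."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

# F values reported for the veering test, all with df = (1, 9).
reported_f = {
    "condition, distance": 9.66,
    "condition, orientation": 12.80,
    "session, distance": 4.13,
    "session, orientation": 0.19,
    "interaction, distance": 2.13,
    "interaction, orientation": 0.0004,
}

for label, f_value in reported_f.items():
    print(f"{label}: {partial_eta_squared(f_value, 1, 9):.3f}")
```

With df = (1, 9), the identity reduces to F / (F + 9), so F = 9.66 gives 9.66 / 18.66 ≈ 0.518.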

Table S9 in the supplementary material provides details on paired, two-tailed t-tests. They showed that veering was significantly lower with than without the NaviEar in both the pre- (d = 0.74, t (9) = 2.3, p = .04) and the post-training session, d = 0.96, t (9) = 3.1, p = .01, when measured in terms of orientation (left and center groups of bars in panel b). When veering was measured in terms of distances, however, this was only the case for the pre- (d = 0.98, t (9) = 3.1, p = .01), but not for the post-training session, d = 0.38, t (9) = 1.2, p = .26 (left and center groups of bars in panel a). These results support the idea that participants showed less veering with than without NaviEar. However, contrary to our prediction, the benefit of using the NaviEar did not increase with training.

We examined the effect of training on veering through correlations between training slopes (of each training task) and veering deviation and orientation with or without the NaviEar. We expected that stronger learning in training tasks (more negative slopes) should be correlated with higher improvement in veering after training (more negative post-pre difference), resulting in positive correlations. Table S10 provides all correlations. When measured in terms of orientation, there was a positive correlation between slopes for errors in the Sound-to-Pointing task and veering effects with the NaviEar on, r (8) = .64, p = .045. Slopes for errors in the Sound-to-Word task yielded a similar tendency that just missed significance, r (8) = .63, p = .0502. There were also significant positive correlations between the numbers of training sessions per participant (cf. Table 1) and their veering effects in the condition with NaviEar for both measures, distance (r (8) = .78, p = .007) and orientation (r (8) = .69, p = .03). There were similar tendencies in the veering condition without NaviEar, but they just missed significance (r (8) = .62, p = .06; r (8) = .59, p = .08). These correlations suggest that participants with higher levels of training and learning in those training tasks tended to improve their veering after training more than did participants with less training and learning. However, it is important to note the inconsistency of those correlations across tasks and measures.
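As a rough check on correlations of this kind, the p value for a coefficient r with df = n − 2 can be obtained from t = r × √(df / (1 − r²)). The Python sketch below assumes this standard t approximation, which may differ from the exact procedure used in the study. It relies only on the standard library and integrates the t density with Simpson's rule; all function names are ours. Because the reported r values are rounded to two decimals, recomputed p values can only match the reported ones approximately.

```python
import math

def t_from_r(r, df):
    """t statistic corresponding to a correlation r with df = n - 2."""
    return r * math.sqrt(df / (1.0 - r * r))

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    c = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return c * (1 + x * x / df) ** (-(df + 1) / 2)

def two_tailed_p(t, df, steps=2000):
    """Two-tailed p value: 1 - 2 * integral of the density over [0, |t|].
    The integral uses Simpson's rule; steps must be even."""
    upper = abs(t)
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 1.0 - 2.0 * (total * h / 3)

# Reported correlations with df = 8 (values as rounded in the text).
for r in (0.64, 0.63, 0.78, 0.69):
    t = t_from_r(r, 8)
    print(f"r = {r:.2f}, t(8) = {t:.2f}, p = {two_tailed_p(t, 8):.3f}")
```

The same conversion makes clear why, with only 10 participants, a correlation needs to exceed roughly .63 to reach significance at the .05 level.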

3.5. Interference test

If the interpretation of the NaviEar signal becomes strongly automatized with training it should interfere with the user’s orientation and bias the user to orient in the direction in which the signal is biased (see Figure 7 for illustration). Such interference should occur after training (post-test) when automatization is high, but not or much less before training (pre-test) when automatization is low.

The straight lines and 90-deg turns of each of the 11 paths define a target location and orientation of the participant (arrows in Figure 7). The location has a specific distance from the starting point (horizontal dotted line in Figure 7). A bias would result in a deviation from the target orientation (

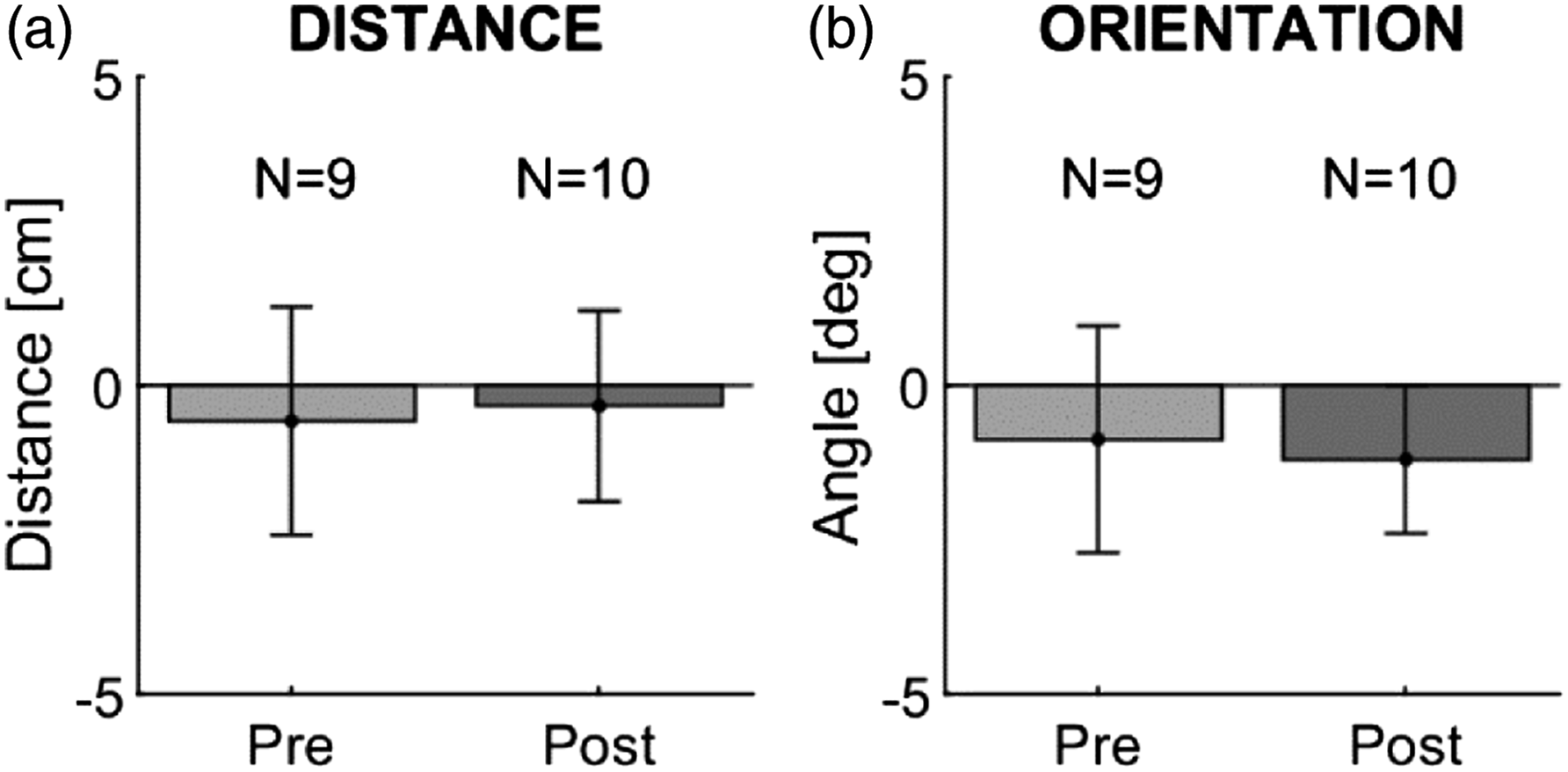

Figure 12 illustrates the differences between biased and unbiased trials averaged across participants. We calculated two-tailed paired t-tests to test whether deviations in biased trials differed from zero and whether deviations differed between pre- and post-training sessions. For details, see lower part of Table S9. Neither distances nor orientations differed significantly from zero in either the pre- or the post-training session, min. d = −0.32, t (9) = −1.0, p = .34, and there was no significant difference between pre- and post-training sessions, both p > .79. These results do not provide any support for our predictions and hence for the idea that the NaviEar signal automatically interferes with navigation.

Figure 12. Interference test. Bars indicate the deviation from the paths in the interference test in terms of distance in centimeters (a) and in terms of angles (b). Positive values along the y-axis represent deviation from the paths in the same direction as the bias in the signal, and negative values indicate deviation in the direction opposite to the bias in the signal.

If interference effects were related to training, we expected stronger interference effects (difference between bias after and before training) when training slopes were more negative (negative correlation). See Table S11 for details. There were no significant correlations. However, slopes for errors in the Sound-to-Pointing (r (7) = −.59, p = .096) and Sound-to-Word (r (7) = −.61, p = .08) tasks yielded marginal, but non-significant, negative correlations with interference in orientation. There was no correlation between the number of training sessions and interference effects measured as distance (r (7) = .16, p = .68) or orientation (r (7) = −.36, p = .34).

3.6. Post-experimental questionnaire

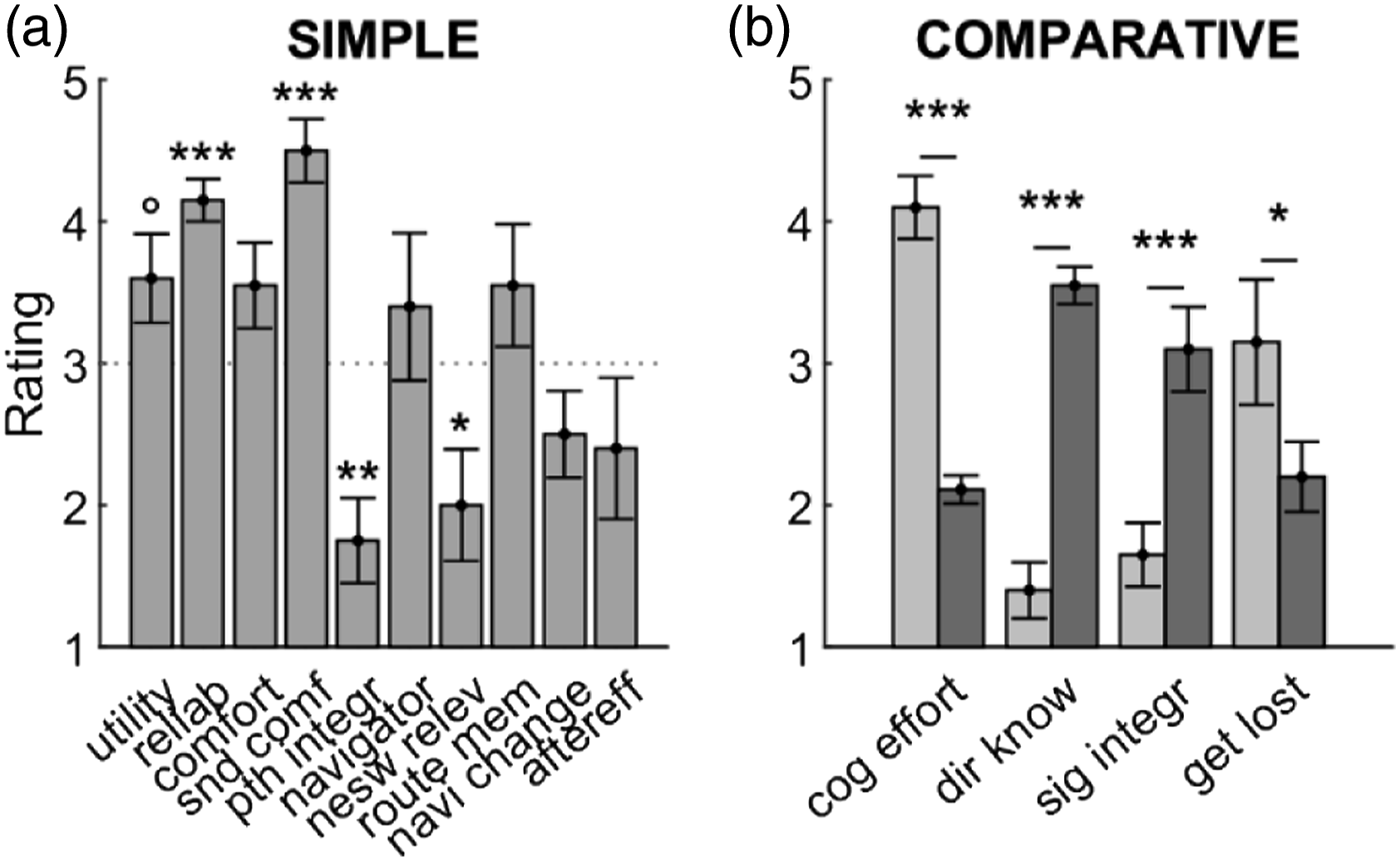

Figure 13 illustrates the ratings (numerical items) of the post-experimental questionnaire. Supplementary Table S12 reports the qualitative answers and comments.

To evaluate ratings, we tested with a two-tailed t-test whether ratings differed from 3, the center rating between 1 and 5. Users rated the overall utility of the NaviEar at 3.6 on average, which missed significance (utility in Figure 13, d = 0.60, t (9) = 1.9, p = .09). Participants felt that the NaviEar signal provided reliable information about cardinal directions, as indicated by a rating of 4.2 of 5 (reliab in Figure 13, d = 2.42, t (9) = 7.7, p < .001). The comfort rating for the NaviEar in general was 3.6, but the difference from 3 did not reach significance (comfort, d = 0.58, t (9) = 1.8, p = .10). Almost all participants responded that the bubble sound of the NaviEar was very comfortable, as shown by the average rating of 4.5 of 5 (snd comf, d = 2.12, t (9) = 6.7, p < .001). As the main drawback in the utility and comfort of the NaviEar, users indicated the effort of charging, mounting, and connecting the three separate items of the NaviEar (mobile phone, headset, and sensor).

Figure 13. Results of post-experimental questionnaire. Panel a illustrates average rating responses to simple questions. Panel b shows responses to comparative questions. The questions about knowing directions (dir know) and getting lost (get lost) ask participants to compare the situation with and without NaviEar. The questions about cognitive effort (cog effort) and signal integration (sig integr) ask participants to compare the situation before and after training. *** p < .001; **p < .01; * p < .05 (two-tailed).

We also asked users to rate (1) whether they always knew the direction they were heading when wearing and when not wearing the NaviEar (dir know) and (2) how easily they got lost with and without the NaviEar (get lost). Paired two-tailed t-tests showed that users felt they knew better where they were heading (average rating of 3.5 with vs. 1.4 without NaviEar; d = 3.03, t (9) = 9.6, p < .001) and that they got lost less often when using the NaviEar (M = 2.2 vs. M = 3.2; d = −0.94, t (9) = −3.0, p = .02). These results support the idea that the NaviEar is useful for orientation according to subjective evaluation. However, participants also responded that they usually do not think in terms of cardinal directions when they navigate through the city (nesw rel, d = −0.80, t (9) = −2.5, p = .03). They also did not feel that training with the NaviEar made them better at remembering their path (pth integr, d = −1.32, t (9) = −4.2, p = .002).

Concerning the efficiency criterion of automaticity, we also asked participants to rate (1) how much effort or thinking was required to use the NaviEar and to do the training tasks (cog effort), and (2) how integrated the NaviEar signal was into normal hearing without requiring focusing on either the NaviEar signal or usual environmental sound cues (sig integr). They had to give these ratings by comparing retrospectively between their impressions before and after they completed the training tasks. Users felt they needed significantly less cognitive effort to interpret the NaviEar after than before the training (d = −3.51, t (8) = −10.5, p < .001; one answer missing). According to their ratings, the NaviEar signal was almost twice as well integrated after (M = 3.4 of 5) as before (M = 1.7) the training, d = 2.12, t (9) = 6.7, p < .001. In their comments, some participants anticipated that they would become even more automatic in interpreting the signal when given more time for training.

Concerning changes of perceptual feel due to training, several participants reported sudden insights about their orientation (“aha-effects”). For example, they realized that streets and places familiar to them were actually oriented in rather different ways than they had thought. Most interestingly, half of the participants (i.e., 5 out of 10) experienced “aftereffects.” Some of them felt as if they still heard the NaviEar signal (i.e., the bubble sound changing as a function of head orientation); others reported an immediate and accurate awareness of cardinal directions during the minutes after taking off the device.

4. Discussion

In sum, results showed a clear increase in performance (lower response times and errors) across sessions in all four training tasks (Figure 8), except for errors in Word-to-Pointing (blue curve in Figure 8(a)). In contrast, evidence for automaticity was weak and contradictory: Results in the rotated-signal (Figure 9) and distortion-detection tasks (Figure 10) went in the predicted direction, but were very small, missed significance (Figure 9), or only just reached significance (response times in Figure 9(b); Figure 10). Moreover, results from veering (Figure 11) and interference tasks (Figure 12) did not provide any evidence of automatic effects of the NaviEar on orientation during navigation. According to the post-experimental questionnaire, participants found the NaviEar usable and comfortable (Figure 13(a)), and they had the impression their use of the NaviEar improved across training (Figure 13(b)).

4.1. Usability and usefulness of the NaviEar

The increase in performance across training sessions (Figure 8) indicates that participants successfully learned how to handle and interpret the NaviEar. The only exception was the errors in the Word-to-Pointing task (blue curve in Figure 8(a)). When comparing the on- and off-condition of the Word-to-Pointing task (Figure S5(b)), the presence of the NaviEar signal increased accuracy, but no learning took place across sessions. This observation indicates that the NaviEar signal could be cognitively interpreted from the start, and the cognitive interpretation did not seem to benefit from additional learning.

The second day of training seemed to produce a local increase of errors in the Word-to-Pointing, Sound-to-Pointing, and maybe the Angle-Difference task (blue curve at day 2 in Figure 8(a), (b) and (d)). This dip in performance coincides with a decrease of subjective orientation in the post-trial ratings (Figure S7(b)). It may be explained by participants’ confusion when the NaviEar signal was changed back after the rotated-signal test. In all four tasks, response times decreased most steeply after the first training session (cf. day 1 and day 2 in Figure 8). This fast improvement suggests that little training was necessary to reach a high level of behavioral mastery of the NaviEar.

The negative correlations between errors and subjective orientation in the post-trial ratings indicate that participants’ feeling of orientation improved thanks to the use of the NaviEar. Familiarity, as measured through subjective ratings, did not explain those correlations with orientation. However, an increased subjective impression of orientation might also result from the feedback from the tasks, rather than being the result of mastering the augmented signal.

In addition to the results from the training tasks, participants showed less veering with than without NaviEar already in the pre-training session (Figure 11). Performance in the distortion-detection test was already above chance in the pre-training session, even though only slightly (Figure 10). These results further support the idea that participants were able to understand the NaviEar signal and to use it for orientation without sustained training.

Figure S6 in the supplementary material allows for comparing the average results in our training task with those in previous, related studies. These comparisons only give a rough reference for the evaluation of the NaviEar because all those studies used different tasks and devices. Average orientation errors in our training tasks in the first session were generally equal to or lower than those in our previous study (10.7 to 47.8° in Schumann & O'Regan, 2017). They were also equal to or lower than the landmark-pointing performance by a blind person (∼22 to 48° in Kärcher et al., 2012) and thresholds for discriminating self-orientation in a rotation chair (∼32 to 40° in Goeke et al., 2016). In contrast, the orientation errors (8.9–14.6°) in Faugloire and Lejeune (2014) were smaller than errors in our first session, and they were close to errors in the Sound-to-Word and smaller than errors in Word-to-Point and Sound-to-Point tasks in the last session. These observations are rather surprising because the studies of Schumann and O'Regan (2017), Goeke et al. (2016), and Faugloire and Lejeune (2014) were conducted at a fixed location (no movement apart from rotation) in the laboratory. The higher control of interfering factors in the laboratory was expected to produce more accurate and precise measurements. That our measurements under field conditions produced performance better than or close to measurements under those controlled conditions highlights the precision of our measurements and an apt use of the NaviEar.

Together, these observations suggest that the NaviEar is easy to interpret and use for orientation. This conclusion is further supported by the positive subjective ratings in the post-experimental questionnaire: Apart from practical issues about mounting and connecting the gear, the ratings indicate that the NaviEar is generally easy and comfortable to use.