Abstract

This paper proposes a control system for a gait rehabilitation training robot based on a Brain-Computer Interface (BCI) and virtual reality technology, which makes the patients' rehabilitation training more engaging. First, a technique for measuring human mental states from normal brain signals, together with its associated applications, is examined and evaluated. Second, the virtual game is started by information from the BCI and then runs as a thread, with the singleton design pattern as the main data access mode. Third, through cooperation with the main software, the virtual game gains quick and effective access to the patients' blood oxygen, heart rate and other physiological information. At the same time, the hardware control system allows the start-up of the gait rehabilitation training robot to be controlled accurately and effectively. As a result, the plantar pressure and velocity information, together with the patients' physiological information, is reflected in the game, which in turn largely reflects the physical condition of the patients taking part in rehabilitation training.

1. Introduction

Rehabilitation robots are a new application of robot technology in medicine with wide market prospects. A gait rehabilitation training robotic system based on virtual reality technology not only allows patients to receive rehabilitation training while the robot records data and the patients' rehabilitation condition is analysed and ascertained[1], it also encourages patients to participate actively in rehabilitation training through virtual environments provided by the training software, so that the effects of rehabilitation training can be further improved[2]. Many patients with motor dysfunction stay at home for a variety of reasons, which not only hinders recovery from the disease and the patients' self-care ability, but also makes it hard for them to focus their attention. Many lower-limb rehabilitation instruments on the market train only the patients' lower limbs through simple, repetitive exercises; they do not cultivate the patients' interest in training, nor do they consider training the patients' attention[3-7]. By acquiring the patients' psychological, physiological and motion information, this paper proposes a rehabilitation system that gives the patient a feeling of participation, in which virtual games combined with the movement of the robot are the main training modes.

Brain waves are the bioelectrical signals produced when humans think, and this brain activity is essential to understanding human mental states. Although the brain is the most complex organ in the body, advances in neurology have produced theories on the relationships between brainwave characteristics and mental states[8]. Techniques such as fMRI (functional magnetic resonance imaging) and infrared spectroscopy can accurately locate nerve activity in the brain, but they require massive instrumentation and place several constraints on the subject. The future of electroencephalogram (EEG) research lies in mobile recording and real-time feedback of emotional and cognitive states. In the short term, widespread use of EEG technology by the general public is most likely to rely on inexpensive, easily usable (mobile, gel-free), single-channel acquisition[9]. The challenge is to obtain accurate measurements of subtle signals using simple instrumentation and then to interpret the data as meaningful signatures. Conventional multi-point EEG requires a special headset and an electrolyte gel that is unpleasant, may cause discomfort and may result in erroneous data if applied during normal daily activity. A lightweight, dry, single-point EEG contact is therefore needed to solve this problem.

In this paper, based on BCI (brain-computer interface) technologies, a usability evaluation of NeuroSky's “MindSet” (MS) device is presented. Using non-invasive EEG with a dry electrode at the forehead, brain states can be measured and analysed without complex medical procedures. One aspect of interest is whether MS readings can be combined with user-generated data. Combining psychological and user-generated data would allow more sophisticated user models to be programmed[10]. An experimental setting is set up to analyse MS usability in an assessment exercise.

The patients wore the MS brainwave-sensing headset made by NeuroSky Inc., and their EEG signals were collected and analysed in real-time. The attention level (in other words, the focus level), which is a parameter of the patient's thinking activity, is used as an operating instruction once it satisfies a certain threshold: the game software driven by the patient's thoughts starts to run and the gait rehabilitation training robot gradually starts to move. When the patient lacks concentration, a voice prompt is given. This thought-based control trains patients to improve their attention levels. Once the patient is in motion, the velocity of the patient's movement with the rehabilitation robot is matched to the game interface, which can stimulate the patient's interest and initiative to take part actively in gait rehabilitation training. In this way the rehabilitation training is not boring for the patient. It not only relieves the lack of motivation during training and makes the whole rehabilitation process fun, it also trains the patient's attention span and helps them regain the confidence to overcome the disease, while remaining convenient for both doctors and patients.

2. Control system structure

The whole control system consists of three parts: a control machine, a rehabilitation robot and the signal acquisition and transmission module.

The control machine sends instructions that make the robot perform the corresponding mode of rehabilitation training; at the same time, it receives and displays the captured plantar pressure, velocity, heart rate, pulse, blood pressure, respiration and video information.

The rehabilitation robot helps patients perform recovery training exercises. It should not only reduce the force borne by the patients' legs, but also guide them through rehabilitation training.

The signal acquisition and transmission module is the connection between the control machine and the rehabilitation robot. It acquires the plantar pressure, velocity, heart rate, pulse, blood pressure, respiration and video information, and feeds all of this information back to the control machine in real-time, so that the control machine can adjust the control mode in time.

The control system hardware consists of pressure sensors, a hardware circuit, a servo drive and a motor. The overall control system framework is shown in Figure 1, where PC is the control machine, SCC is the signal conditioning circuit, ADC is the analogue-to-digital converter, MCU is the micro control unit, WM is the wireless module, GRTR is the gait rehabilitation training robot[11].

Overall control system framework.

3. System software design

The software function part is mainly divided into three modules: video collection, data acquisition and motion control.

Video collection: during the patient's training, moving images are captured and displayed on the PC display.

Data acquisition: the data to be captured include the rehabilitation training robot's condition and alarm information, the training information from the rehabilitation equipment (speed, plantar pressure) and the patients' physiological information (oxygen concentration, heart rate, respiratory rate and muscle tension).

Motion control: two control modes, active and passive, are integrated; the equipment operation is adjusted as needed and the patients are trained accordingly. In the active mode, used when the patient wants to train independently, the patient's physical condition is analysed and rehabilitation training is then carried out with a matched motor load. In the passive mode, used when the patient cannot train independently, the rehabilitation equipment moves mechanically and draws the patient's lower limbs through repetitive training.

The specific function module flow is shown in Figure 2. Throughout the working process of the system, the signal acquisition and transmission module feeds the acquired data back to the control machine, where the data are used as parameters to adjust the next training mode.

Software function module flow chart.

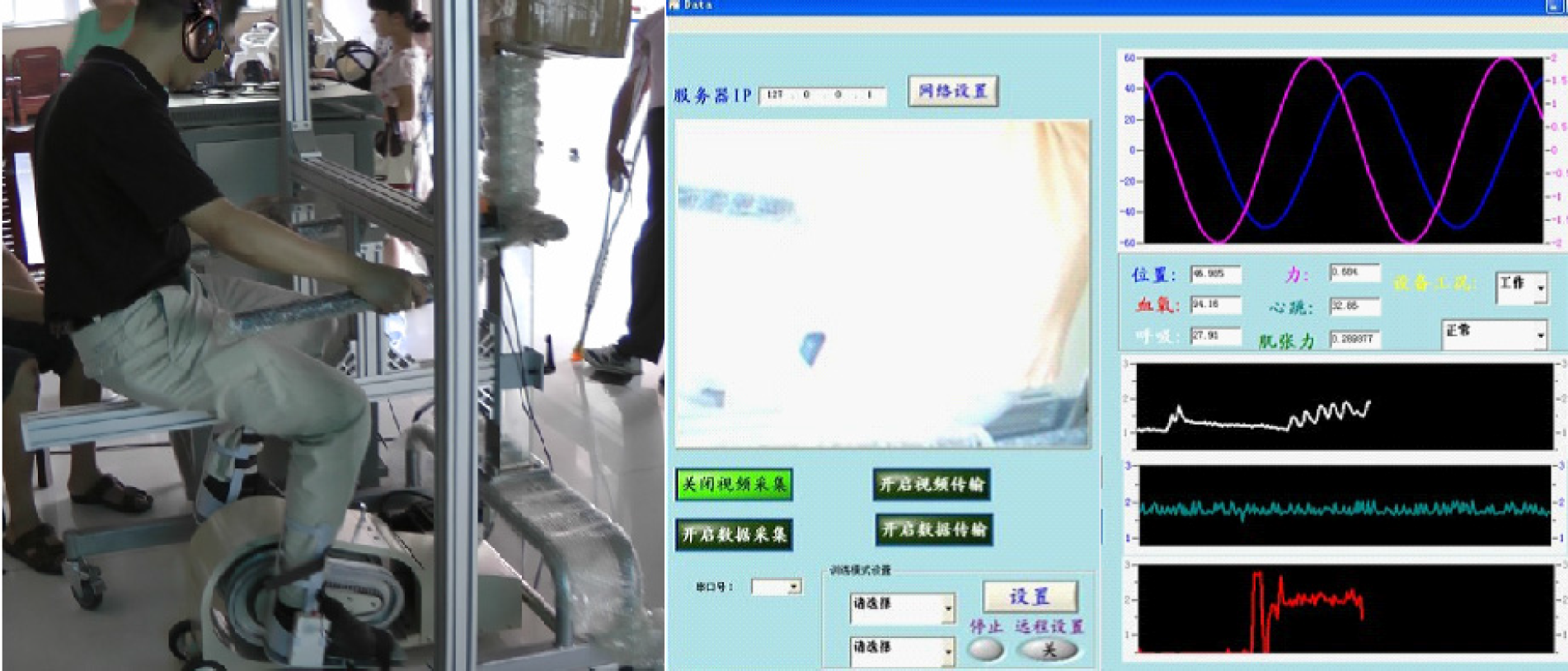

The acquired data, video and other specific information displayed by the software are shown in Figure 3. The figure clearly shows the plantar pressure, velocity, heart rate, pulse, blood pressure, respiration and video information. Because the various data are plotted as curves, the doctor can observe the patient's movement condition more intuitively.

Experiments with patients and information display.

4. Immersion experiments and analysis

4.1 Virtual reality and the implementation details

We carried out experiments and established a corresponding patient database. A photograph of the experiments is shown in Figure 3. The left of Figure 3 shows a patient with a lower limb walking disorder wearing the MS and sitting on a chair with an LCD computer screen ahead on his left; the right of Figure 3 shows the control software interface, with its three modules of video collection, data acquisition and motion control. When the game begins, the screen switches to the game interface, and the LCD screen is started by the MS through a Bluetooth serial connection.

So-called “virtual reality” means letting users interact with an environment in a natural way (both perceiving the environment and intervening in it), so that users feel as if they were present in the corresponding real environment. In this system, a virtual game was introduced that takes the patients' focus level and their moving speed with the rehabilitation machine as the ‘game parameters’, so that the patients feel they are personally taking part in the game.

With the single MS sensor usually positioned two centimetres above the eyebrow, the patients put on and adjusted the headset themselves. The MS has noise filters to ensure that noise (head movements, muscle artefacts etc.) is filtered out of the raw EEG. After the MS has completed EEG signal acquisition, filtering and amplification (using the ThinkGear™ chip technology), the patented NeuroSky eSense™ algorithm is used for data analysis, so that the user's current mental state can be read in real-time[12]. eSense™ outputs two digital parameters: “attention” and “meditation”. In this paper only “attention” (in other words, “focus”), which indicates how strongly the user is concentrating on something, is used. “Meditation” (in other words, relaxation), which indicates the user's current degree of mental relaxation, is not measured in this paper.

The resulting thinking activity parameter, which characterizes the wearer's concentration level, is a value from 1 to 100 representing the user's current level of attention. The greater the value, the higher the wearer's current degree of focus, and vice versa. The computer uses received thinking activity parameters that satisfy certain thresholds as control instructions to start games and other software and to put the control machine into service (e.g., if the attention value reaches 80, the game software is started and the control machine is put into service). In this way, mental states are used to control the virtual games and the operation of the robot. Figure 4 shows the game interface for attention detection: when the focus level reaches the threshold, sparks appear, the barrel starts to burn and the game starts.

The game interface of attention detection.
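The threshold rule described above can be illustrated with a short sketch. It is not the authors' code: the type `StartGate`, its member names and the choice to latch the started state once the threshold has been reached are assumptions made for illustration; only the 1 to 100 range and the example threshold of 80 come from the text.

```cpp
#include <cassert>

// Range of the attention value as stated in the text.
constexpr int kAttentionMin = 1;
constexpr int kAttentionMax = 100;

// Hypothetical helper: decides when the game and control machine should start.
struct StartGate {
    int threshold;         // e.g. 80, as in the example in the text
    bool started = false;  // assumed behaviour: stays true once the threshold is reached

    explicit StartGate(int t) : threshold(t) {
        assert(t >= kAttentionMin && t <= kAttentionMax);
    }

    // Feed one attention reading; returns true once the game should be running.
    bool update(int attention) {
        if (attention < kAttentionMin || attention > kAttentionMax) {
            return started;  // ignore out-of-range readings
        }
        if (attention >= threshold) {
            started = true;
        }
        return started;
    }
};
```

With `StartGate gate(80);`, readings below 80 leave the gate closed, and the first reading of 80 or more opens it for the rest of the session.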

Figure 5 shows the interface after the game begins. In the game, two female characters represent the system and one male character represents the patient. After a large amount of test data was integrated, two representative human motion velocities were selected as the speed values of the two female characters (one for a person who is good at sports, the other for a person who is not good at walking). The movement speed of the patient undertaking the rehabilitation exercises is then extracted in real-time as the speed value of the male character.

The interface after the game begins.

After the game starts, the winner is determined by comparing the movement speeds of the three characters.
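As a rough illustration of this comparison, the sketch below picks the fastest of the three characters. The enum `Runner`, the function `fastest` and the tie-breaking rule (the earlier entry wins) are hypothetical; the text only states that the winner is decided by movement speed.

```cpp
#include <array>
#include <cstddef>

// Hypothetical labels for the two reference characters and the patient.
enum class Runner { FastReference = 0, SlowReference = 1, Patient = 2 };

// Returns the character with the highest speed.
// Assumption: on a tie, the character listed first is reported.
Runner fastest(const std::array<double, 3>& speeds) {
    std::size_t best = 0;
    for (std::size_t i = 1; i < speeds.size(); ++i) {
        if (speeds[i] > speeds[best]) {
            best = i;
        }
    }
    return static_cast<Runner>(best);
}
```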

4.2 Game preparation

The whole game was written in the VS programming environment using the cocos2d game engine. Throughout development, the biggest problem was data communication between the game and the main software program; the socket mode and the address mapping mode were both considered. With the socket mode, the two processes often failed to access the same data in real-time, and if the process timers were not set accurately, data lag was very likely. With the address mapping mode, the data stored for inter-process communication could be modified or destroyed by another program, and data lag was also very likely. Drawing on these two methods, as well as on a study of previous inter-process communication programs, this paper introduces a new way of solving the problem of data communication between the game and the main software program.

A specific class was defined to store the data shared in the communication between the main software and the game. To prevent this shared resource from being modified or damaged by other procedures, the singleton design pattern was used to design the class when the game was prepared. The code is as follows:

After the class was defined as datanalyse, a variable named m_instance of type datanalyse, as well as the accessor function getInstance, whose return type was datanalyse, were defined in a .cpp file. As a result, the shared variables could no longer be misread or mistakenly overwritten when they were accessed.
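A minimal sketch of what such a singleton class could look like is given here. Only the names `datanalyse`, `m_instance` and `getInstance` come from the text; the stored field `speed`, the reference return type and the deleted copy operations are assumptions made for illustration and should not be read as the authors' listing.

```cpp
// Sketch of a singleton that holds data shared by the main software and the game.
class datanalyse {
public:
    // Returns the one shared instance.
    static datanalyse& getInstance() { return m_instance; }

    // Copying is disabled so that no second instance can be created.
    datanalyse(const datanalyse&) = delete;
    datanalyse& operator=(const datanalyse&) = delete;

    double speed = 0.0;  // assumed example field (patient movement speed)

private:
    datanalyse() = default;        // private: only the class itself can construct
    static datanalyse m_instance;  // the single instance, declared static
};

// Definition of the static instance, as it would appear in the .cpp file.
datanalyse datanalyse::m_instance;
```

Because every caller goes through `getInstance`, a value written by one part of the program is the same value seen by every other part.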

To make data access faster and more timely, the method in this paper runs the game program as a thread rather than as a separate process, so that the game can share the data in the datanalyse class with the main software. This saves data access time and also reduces CPU and memory overhead. The code for opening the thread was as follows:

In the above code, the game program was run as a thread named Cocos2dMain.
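The idea of running the game as a thread that shares memory with the main software can be sketched as follows. The threading API used in the original is not stated, so standard C++ `std::thread` is substituted here. The name `Cocos2dMain` comes from the text, but its body is only a stand-in for the real game entry point; `g_sharedAttention`, `g_lastSeenByGame` and `runGameThreadOnce` are hypothetical.

```cpp
#include <atomic>
#include <thread>

// Stand-ins for data that would live in the shared datanalyse object (assumed).
std::atomic<int> g_sharedAttention{0};
std::atomic<int> g_lastSeenByGame{-1};

// Stand-in for the game entry point; the real function would start cocos2d.
void Cocos2dMain() {
    // The game thread reads the value directly from shared memory.
    g_lastSeenByGame = g_sharedAttention.load();
}

// Writes a value from the main software, then runs the game as a thread
// of the same process and returns what the game thread observed.
int runGameThreadOnce(int attention) {
    g_sharedAttention = attention;  // written by the main software
    std::thread game(Cocos2dMain);  // game started as a thread, not a process
    game.join();                    // wait for the stand-in game to finish
    return g_lastSeenByGame.load();
}
```

Since both sides live in one address space, no socket or mapped file is needed; the trade-off is that shared values must be accessed in a thread-safe way, which is why atomics are used in this sketch.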

Because the singleton design pattern was used, the data access method differed from normal data access. The specific code for storing data was as follows:

Reading the data in the game program also differed from a normal read operation. The specific code was as follows:

In summary, the data access flow between the main software and the game is shown in Figure 6.
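One way the store and read operations might look is sketched here. The member functions `store` and `read`, the fields `m_attention` and `m_speed` and the use of a mutex are assumptions added for illustration; the text only states that all access goes through the `datanalyse` singleton.

```cpp
#include <mutex>

class datanalyse {
public:
    static datanalyse& getInstance() { return m_instance; }

    // Called by the main software after each acquisition cycle (assumed API).
    void store(int attention, double speed) {
        std::lock_guard<std::mutex> lock(m_mutex);
        m_attention = attention;
        m_speed = speed;
    }

    // Called by the game thread; both values are copied under one lock
    // so that they always belong to the same acquisition cycle.
    void read(int& attention, double& speed) {
        std::lock_guard<std::mutex> lock(m_mutex);
        attention = m_attention;
        speed = m_speed;
    }

private:
    datanalyse() = default;
    static datanalyse m_instance;

    std::mutex m_mutex;   // guards the two fields below
    int m_attention = 0;  // assumed field
    double m_speed = 0.0; // assumed field
};

datanalyse datanalyse::m_instance;
```

The main software would call `datanalyse::getInstance().store(...)` and the game would call `datanalyse::getInstance().read(...)`, so neither side holds its own copy of the shared state.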

The data access flow diagram.

As shown in the flow chart, the datanalyse class is the core of the whole data access process. Variables in this class, such as m_instance, can be defined as static variables, which act as global variables for other procedures in the process; in this way the variables' lifetime extends over the whole run time of the process.

4.3 Analysis of “attention training” experiments

One of the characteristics of the MS reader is that it can be worn by different users, producing different outputs. The eSense algorithm is a trade secret and cannot be described here. However, it is known to have a dynamic self-learning ability based on a so-called “slow adaptive” algorithm, which dynamically compensates for fluctuation trends and individual differences within the normal range of different users' brain waves. Owing to this adaptive technology, ThinkGear can be applied to different populations and different surroundings with very good accuracy and reliability. Furthermore, this adaptation is fast and seamless, without the need to train the device for a new user.

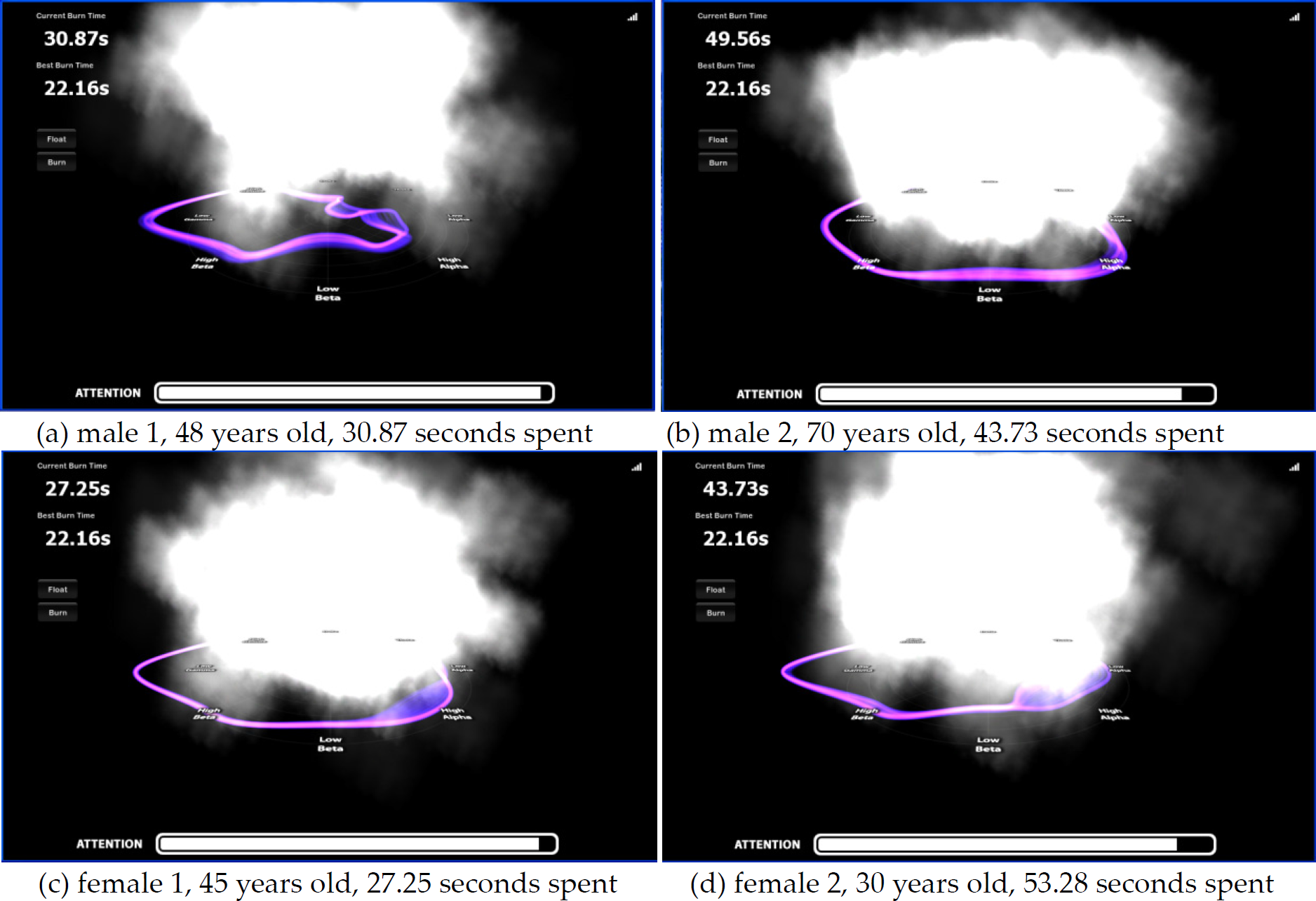

Four subjects with motor dysfunction who stay at home all day (two males and two females) volunteered for the study and took part in the attention training experiments. We set the focus threshold to 80, meaning that as soon as the patient's attention reaches or exceeds 80, sparks appear on the screen and the barrel starts to burn. The author undertook the experiment first. A photograph of the author wearing the MS during the attention test is shown in Figure 7, and the corresponding attention-time curve is shown in Figure 8. The author needed only 22.16 seconds to make the barrel burn; she concentrated on the test for the first minute and then relaxed for the second minute.

The site photo of attention test for the author wearing the MS.

The attention-time curve of the author wearing the MS.

The time taken by each subject to reach the threshold, in other words to make the barrel burn, was recorded, as shown in Figure 9.

The initial time for four subjects to reach the threshold before training.
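The time-to-threshold measure used in these experiments can be expressed as a small routine over a sampled attention-time curve. This is a sketch under assumptions: the `Sample` type, the function name `timeToThreshold` and the convention of returning -1.0 when the threshold is never reached are hypothetical, and the sampling format of the original software is not described in the text.

```cpp
#include <vector>

// One point of an attention-time curve (hypothetical representation).
struct Sample {
    double timeSec;  // time since the start of the test, in seconds
    int attention;   // attention value, 1 to 100
};

// Returns the time of the first sample whose attention reaches or exceeds
// the threshold, or -1.0 if the threshold is never reached.
double timeToThreshold(const std::vector<Sample>& curve, int threshold) {
    for (const Sample& s : curve) {
        if (s.attention >= threshold) {
            return s.timeSec;
        }
    }
    return -1.0;
}
```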

Just as exercising an underdeveloped muscle takes prolonged training, subjects who want to control the eSense parameters skilfully through their own mental activity also need to spend a certain amount of time training. Typically, they can control their concentration by keeping a close watch on an object, concentrating on a problem, or in other ways. They can gaze at a point on the screen or imagine that they are trying to accomplish an action. For example, they can watch the “attention strip” on the screen and try to imagine the pointer on the strip moving towards the high end of the scale, thereby pushing up the “attention” value on the screen. After 30 minutes of this attention-strip training (occasionally relaxing for one to two minutes), the time taken by each subject to make the barrel burn was recorded, as shown in Figure 10.

The time for four subjects to reach the threshold after 30 minutes of training.

Furthermore, to show how a subject's attention-time curve changed with the attention training, we took the fourth subject, a 30-year-old man, as an example; his attention-time curves before and after the training are shown in Figure 11 and Figure 12, respectively.

The attention-time curve of the fourth subject before training.

The attention-time curve of the fourth subject after training.

From the comparison between Figure 9 and Figure 10, we can see that after a period of training all four subjects needed less time to reach the attention threshold, which is encouraging. Of course, a better training effect requires longer training, and we look forward to the results of long-term training.

The experimental results showed that the game could help users concentrate on the tests faster and, by letting them personally take part in the game, give them the feeling of being in so-called “virtual reality”. The system thus basically achieved the stated functionality and performed stably.

5. Conclusion

This paper presented the singleton design pattern as the main way to access data, with the thread as the basic unit of execution, so that timely communication between the main control software and the game program was realized and data were not misread or mistakenly overwritten during access. After data acquisition, the main software passes the focus, speed and other information to the game program in real-time, and the speed information is displayed in real-time by characters running at the corresponding speed in the game. Because the patients could focus much better and be immersed in virtual reality during rehabilitation training, the recovery process became more interesting. At the same time, the man-machine interaction trained patients to focus better, aroused their enthusiasm and was more conducive to their rehabilitation.

6. Acknowledgments

The authors gratefully acknowledge the financial support of the Natural Science Foundation of Anhui Province (grant #1208085QF121) and the Postdoctoral Scientific Research Plan of Jiangsu Province (grant #1102038C). We are very grateful to the anonymous referees for their valuable remarks.