Abstract

This paper presents a survey on multilingual Knowledge Graph Question Answering (mKGQA). We employ a systematic review methodology to collect and analyze the research results in the field of mKGQA by defining scientific literature sources, selecting relevant publications, extracting objective information (e.g., problem, approach, evaluation values, used metrics, etc.), thoroughly analyzing the information, searching for novel insights, and methodically organizing them. Our insights are derived from 46 publications: 26 papers specifically focused on mKGQA systems, 14 papers concerning benchmarks and datasets, and 7 systematic survey articles. Starting its search from 2011, this work presents a comprehensive overview of the research field, encompassing the most recent findings pertaining to mKGQA and Large Language Models. We categorize the acquired information into a well-defined taxonomy, which classifies the methods employed in the development of mKGQA systems. Moreover, we formally define three pivotal characteristics of these methods, namely resource efficiency, multilinguality, and portability. These formal definitions serve as crucial reference points for selecting an appropriate method for mKGQA in a given use case. Lastly, we delve into the challenges of mKGQA, offer a broad outlook on the investigated research field, and outline important directions for future research. Accompanying this paper, we provide all the collected data, scripts, and documentation in an online appendix.

Introduction

The most popular search engines on the Web process tens of billions of queries per day.

While speaking about the Web, the extraction of direct answers based on structured data is enabled by the introduction of

It is well known that the Web, which in this context is frequently confused with the Internet, is the major information source for people all over the world [35,76,124] in different domains of life. Despite that, the majority of the information on the Web (53.6%)3

The scope of this paper is limited to

Our analysis of the related work published over the past decade suggests (see Section 3) that

In this work, we employ a systematic review methodology posited in [12,78,91,103] (see Section 2) to collect and analyze the research results in the field of mKGQA by defining scientific literature sources, selecting relevant publications, extracting objective information (e.g., evaluation values, used metrics, etc.), analyzing the information, searching for new insights as well as generalizing them in a structured manner (i.e., in the form of a taxonomy). Finally, we summarize our observations and present a general outlook on the investigated research direction. Our insights are mainly derived from 46 publications, which were selected from more than a thousand publications that were retrieved during the initial selection phase. After the manual verification of the formal selection criteria, we selected 26 papers about mKGQA systems, 14 papers about benchmarks and datasets, and 7 systematic survey articles. To ensure the transparency and reproducibility of this work, we provide all the collected data, scripts, and documentation as an online appendix.

This article is structured as follows. In Section 2, we describe the methodology of the systematic survey. Section 3 contains the overview of the related systematic surveys about KGQA. In Section 4, we review the mKGQA systems and propose the taxonomy of the methods. The benchmarks for the mKGQA are reviewed in Section 5. We analyze and discuss the results of the work in Section 6. The article is concluded in Section 7.

To ensure transparency and reproducibility, we followed a strict systematic review methodology, which is based on prior literature [12,78,91,103]. In this section, we describe the methodology explicitly within the context of the actual review execution process.

Selection of sources

For the sources, we used

English: German: Literally translated as: Russian: Literally translated as:

Thereafter, the search results were processed according to the following

To search for publications, we used digital research databases (sources) that were selected during the previous phase. With the advanced search functionality and the corresponding complex search queries,

System aspect – Question Answering systems;

Data aspect – RDF-based Knowledge Graphs;

Language aspect – Multilinguality and cross-linguality.

This work started in 2021; the original intention was to cover publications of the past decade, which is why we started searching from 2011.
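The combination of the three aspects into one search string can be sketched as follows. This is a minimal illustration only: the keyword lists and the choice of `OR` within an aspect and `AND` between aspects are assumptions made for the example, not the queries actually used in this survey.

```python
# Hypothetical keyword lists per aspect; the survey's real search terms are not reproduced here.
ASPECTS = {
    "system": ["question answering", "QA"],
    "data": ["knowledge graph", "RDF", "linked data"],
    "language": ["multilingual", "cross-lingual"],
}


def build_query(aspects, inner="OR", outer="AND"):
    """Join the terms of each aspect with `inner`, then join the aspects with `outer`."""
    groups = []
    for terms in aspects.values():
        quoted = [f'"{term}"' for term in terms]
        groups.append("(" + f" {inner} ".join(quoted) + ")")
    return f" {outer} ".join(groups)


query = build_query(ASPECTS)
print(query)
```

Keeping the operators as parameters makes it easy to adapt the string to the syntax of a particular digital library.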

We considered the

The conceptual representation of the query and its corresponding parts. The parts are concatenated with the

For each of the sources,

Statistics on the selected and accepted publications grouped by its sources

Considering our research background with mKGQA, we identified that

After the initial publications selection phase, all the

Paper type – describing a system, a dataset, or a systematic survey;

Problem – textual description of the problem;

Approach – proposal of authors on how to resolve the problem in general terms;

Solution – actual results of the authors towards solving the problem;

Languages – a set of languages that were used regarding the multilingual aspect;

Knowledge graphs – set of knowledge graphs that were directly used in the work;

Datasets – set of datasets that were mentioned or directly used in a publication;

Metrics – a set of metrics used for the evaluation in a publication;

Technologies – a set of technologies that were mentioned explicitly in a paper or seen in a repository;

Source code & demo URLs – the links to the source code and/or demo application;

Comment – an optional brief comment or remarks on the publication.

The first author performed the manual extraction; the other authors checked the resulting information.
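The extracted fields can be thought of as one record per publication. The sketch below models such a record as a Python dataclass; the class name, the attribute names, and the example values are hypothetical and only mirror the fields listed above, not the actual format of the online appendix.

```python
from dataclasses import dataclass, field
from typing import List, Optional

ALLOWED_TYPES = {"system", "dataset", "survey"}


@dataclass
class PublicationRecord:
    """One extracted publication; attribute names are illustrative assumptions."""
    paper_type: str                      # "system", "dataset", or "survey"
    problem: str                         # textual description of the problem
    approach: str                        # how the authors propose to resolve it
    solution: str                        # actual results towards solving it
    languages: List[str] = field(default_factory=list)
    knowledge_graphs: List[str] = field(default_factory=list)
    datasets: List[str] = field(default_factory=list)
    metrics: List[str] = field(default_factory=list)
    technologies: List[str] = field(default_factory=list)
    urls: List[str] = field(default_factory=list)   # source code and/or demo links
    comment: Optional[str] = None

    def __post_init__(self):
        # Reject paper types outside the three categories used in the review.
        if self.paper_type not in ALLOWED_TYPES:
            raise ValueError(f"unknown paper type: {self.paper_type!r}")


# Placeholder values, not taken from any reviewed publication.
record = PublicationRecord(
    paper_type="system",
    problem="placeholder problem statement",
    approach="placeholder approach",
    solution="placeholder solution",
    languages=["en", "de"],
    knowledge_graphs=["Wikidata"],
)
print(record.languages)
```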

Thereafter, we cross-checked19

In this section, we review the survey articles that are related to the considered research field and were selected according to the methodology described in Section 2. Table 3 presents an overview of the publications described below.

Overview of the surveys

The overview of the survey papers that include the aspect of multilinguality

In 2017, Höffner et al. [63] dedicated their survey to the following problem: instead of a shared effort,

The search query:

The survey of Diefenbach et al. [40], which was published in 2017, targets Question Answering over Linked Data.

In 2020, da Silva et al. [33] published the survey on end-to-end “simple QA systems”. The authors claim that in The search query:

The survey article of Dimitrakis et al. [45] was published in 2020. The authors claim that

In 2021, Zhang et al. [152] published their paper on deep learning in KBQA. The authors claim that the recent advances in deep learning are entering the KBQA field to improve the corresponding systems. The survey reviews

The survey on KBQA by Pereira et al. [103] was published in 2022. The authors tackle the problem of the KB data accessibility as

In 2022, Antoniou et al. [4] published a survey on SQA systems. The authors claim that, before this survey, no categories of SQA systems (i.e., no typology or taxonomy) had been identified and that, at the date of publication, no surveys on the categorization of SQA systems existed. The survey therefore distinguishes categories of SQA systems based on a set of criteria in order to lay the groundwork for a collection of common practices. The authors believe that the categorization and systematization can help developers, or anyone interested, to directly find out the technique or steps used by each system or to benchmark their own system against existing ones. The classification created in the survey is based on the following properties: domain, data source, types of questions, types of analysis done on questions, types of representations used for questions, characteristics of the KB, techniques used for retrieving answers, user interaction, and answers. The methodology of the survey is not described.

From the survey articles considered above, we can clearly see that none of them target the multilingual aspect of KGQA specifically. However, multilinguality is mentioned in all of the publications as an important challenge.

Multilingual question answering systems over knowledge graphs

In this section, we review the 21 mKGQA systems that were discovered in the 26 publications (some systems are described in multiple publications, as these present minor improvements).

In this section, we group the reviewed systems by the methods that were used for their implementation. These method groups are described in detail in Section 4.2. Although some systems may belong to multiple groups, we assign each system to one group according to the dominant method group within that system.

Systems predominantly using methods based on rules and templates (G1)

The authors of

The

The authors of

The CLRN system [131] proposes a new approach to the challenges of Cross-lingual KGQA (CLKGQA). Traditional methods typically merge multiple CLKGs into one consolidated KG. The authors challenge this approach, pointing to the shortcomings of existing Entity Alignment (EA) models in accurately aligning entity pairs in CLKGs. They identify two challenges to address: the dependency of a QA model on a unified KG, and the enhancement of an EA model’s performance. To tackle these issues, they propose the Cross-lingual Reasoning Network (CLRN), a multi-hop QA model that can switch between knowledge graphs at any point in the multi-hop reasoning process. Further, they establish an iterative framework that couples the CLRN and EA models to extract potential alignment triple pairs from the CLKGs during the QA procedure, thus enhancing the performance of the EA model. Their experimental results show that CLRN outperforms the baselines. The experiments were conducted on the MLPQ [130] benchmark that incorporates language-specific DBpedia KGs in

The

In Table 4 we summarize the reviewed systems ordered by publication date with their characteristics such as:

The overview on the multilingual KGQA systems published between 2011 and 2023

In the next subsection, we present the taxonomy of the methods that are used to develop mKGQA systems.

While answering

Overview

The taxonomy is based on our review and materials from the previous survey articles [33,40,63,103]. Note that not all of the methods presented below work in an end-to-end manner, i.e., not all of them directly produce an answer or a SPARQL query. Some of the methods require the use of other methods to form a complete mKGQA system.

In a nutshell, there are two system development paradigms.

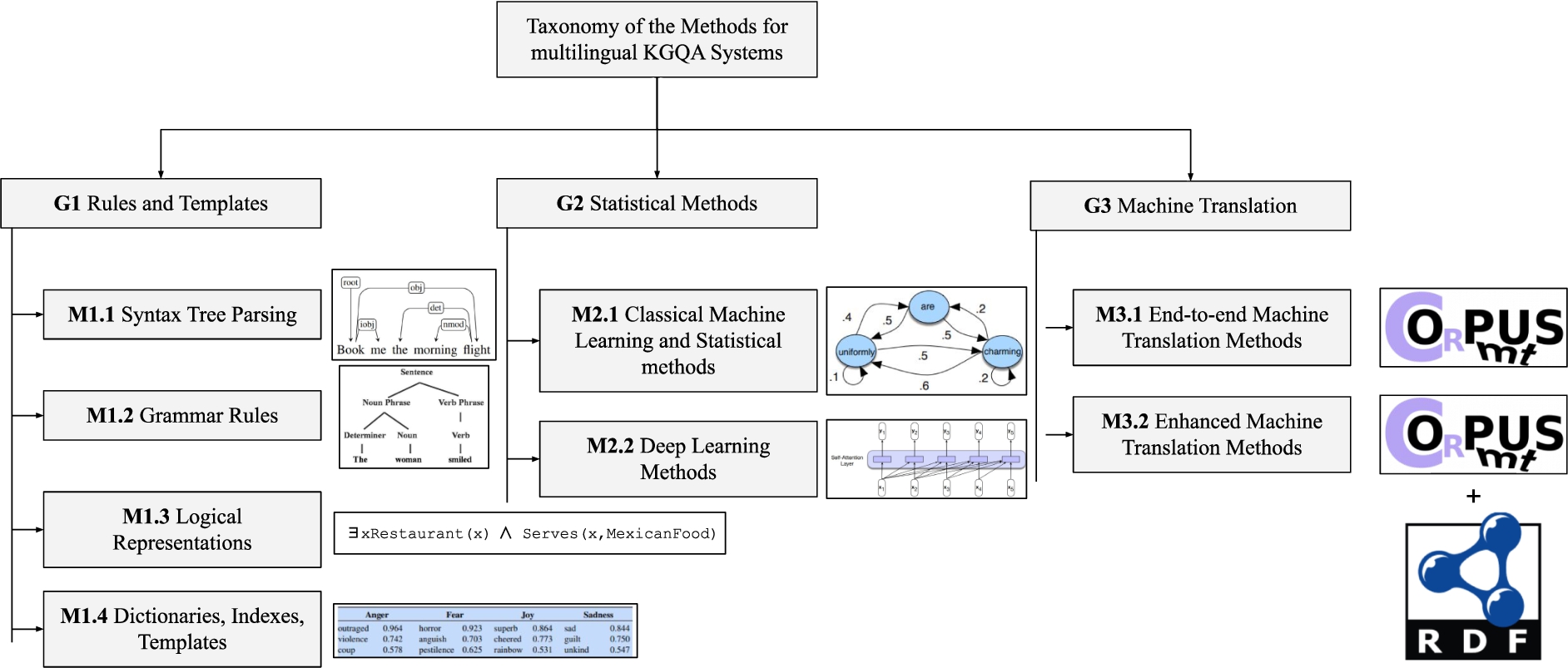

We organize the methods so that the high-level general groups (denoted as “G”) contain the low-level concrete methods (denoted as “M”):

The taxonomy is shown in Fig. 1. It is worth mentioning that the methods are not mutually exclusive within one system. Thus, several of them can be used within one system; for example, M1.4 and M2.2 are used within the QAnswer system. The methods used by the corresponding systems are listed in Table 4.
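The relation between methods and groups can be sketched as a small lookup. The snippet assumes that a method identifier of the form "Mx.y" belongs to the group "Gx"; the function name and the dictionary layout are illustrative, and only the QAnswer entry is taken from the text above.

```python
def group_of(method_id):
    """Map a method identifier such as "M2.2" to its group identifier "G2"."""
    if not method_id.startswith("M") or "." not in method_id:
        raise ValueError(f"unexpected method identifier: {method_id!r}")
    return "G" + method_id[1:].split(".")[0]


# System name -> set of methods it uses (methods are not mutually exclusive).
systems = {"QAnswer": {"M1.4", "M2.2"}}

# Derive the set of groups touched by each system.
groups = {
    name: sorted({group_of(method) for method in methods})
    for name, methods in systems.items()
}
print(groups)
```

A system that touches several groups would still be assigned to one group in the review, based on which method group dominates; that judgment is not encoded here.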

Let us review the methods while specifically focusing on their multilingual capability. It is worth underlining that the methods of groups G1 and G2 are widely used in monolingual KGQA, i.e., they are not multilingual by definition; however, they may provide this functionality to some extent. For example, the method M2.2 (deep learning) covers multilingual language models as well as monolingual ones. The methods of group G3 are mostly used in the multilingual context. Technically, the methods of G3 could be placed under the groups G1 or G2; however, in the context of mKGQA, G3 represents a fundamentally different approach at the conceptual level.

Most of the

The method based on

The methods using

The methods based on

The methods based on

The methods based on

The

The

To completely cover

Therefore, a system

Visual representation of the multi-objective quality function for mKGQA systems. The gold-colored results represent the Pareto front (optimal solutions). The systems are associated with the quality quadrants that help to easily interpret the values.

We encourage researchers to use this procedure and our findings for comprehensive quality measurement of mKGQA systems.
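The idea of a Pareto front over several quality objectives can be illustrated with a short sketch. The system names and score tuples below are hypothetical, and the code assumes that every objective is normalized so that higher values are better; it does not reproduce the quality function defined in this paper.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(scores):
    """Return the names of all systems that are not dominated by any other system."""
    return {
        name
        for name, own in scores.items()
        if not any(
            dominates(other, own)
            for other_name, other in scores.items()
            if other_name != name
        )
    }


# Hypothetical objective scores per system (higher is better on each axis).
scores = {
    "system_a": (0.9, 0.2, 0.5),
    "system_b": (0.4, 0.8, 0.6),
    "system_c": (0.3, 0.1, 0.4),
    "system_d": (0.9, 0.2, 0.4),
}
front = pareto_front(scores)
print(sorted(front))
```

In this toy data, `system_c` and `system_d` are dominated by `system_a`, while `system_a` and `system_b` trade off against each other and therefore both remain on the front.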

While working on

We analyzed the data on reviewed mKGQA systems (see Table 4) regarding the languages and language families that are covered by them to answer

The visual representation of language and language family coverage among the mKGQA systems.

To the best of our knowledge, during the past decade, there were only 21 mKGQA systems developed within the research context. We observed that in most of the cases, namely 95%, the mKGQA systems

We highlighted

Based on our observations, we foresee the following research challenges and research directions for the mKGQA. Developing

originate from different language groups (e.g., Finno-Ugric and Balto-Slavic);

have different alphabets/scripts (e.g., Cyrillic and Latin);

use different writing systems (e.g., Alphabetical and Abjad).

How well a system performs w.r.t. different languages (e.g., does it have a high-quality variance among different languages)?

What is a criterion of high-quality w.r.t. mKGQA?

incorporate diverse languages (see the above paragraph about diverse languages)

gold-standard answers on multiple KGs for wider applicability (e.g., at least Wikidata, DBpedia).

Using Retrieval-augmented Generation (RAG): providing LLMs with relevant triples from KGs by verbalizing the triples and using them as a part of a prompt.

Fine-tuning an LLM on verbalized triples from a KG for learning the facts.
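The RAG direction mentioned above, i.e., verbalizing KG triples and placing them into a prompt, can be sketched as follows. The example assumes that the triples have already been retrieved and carry human-readable labels; the function names, the prompt wording, and the sample triples are illustrative, and no LLM is called.

```python
def verbalize(triple):
    """Turn a (subject, predicate, object) triple of labels into a plain sentence."""
    subject, predicate, obj = triple
    return f"{subject} {predicate} {obj}."


def build_prompt(question, triples):
    """Place the verbalized triples in front of the question as supporting facts."""
    facts = "\n".join(f"- {verbalize(triple)}" for triple in triples)
    return (
        "Answer the question using only the facts below.\n"
        f"Facts:\n{facts}\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical retrieved triples with labels already resolved.
triples = [
    ("Berlin", "is the capital of", "Germany"),
    ("Germany", "has the official language", "German"),
]
prompt = build_prompt("Was ist die Hauptstadt von Deutschland?", triples)
print(prompt)
```

In a multilingual setting, the labels used for verbalization could be chosen in the language of the question where the KG provides them, which is one reason label coverage across languages matters.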

Addressing

Designing and developing

Exploring

Benchmarks for multilingual question answering over knowledge graphs

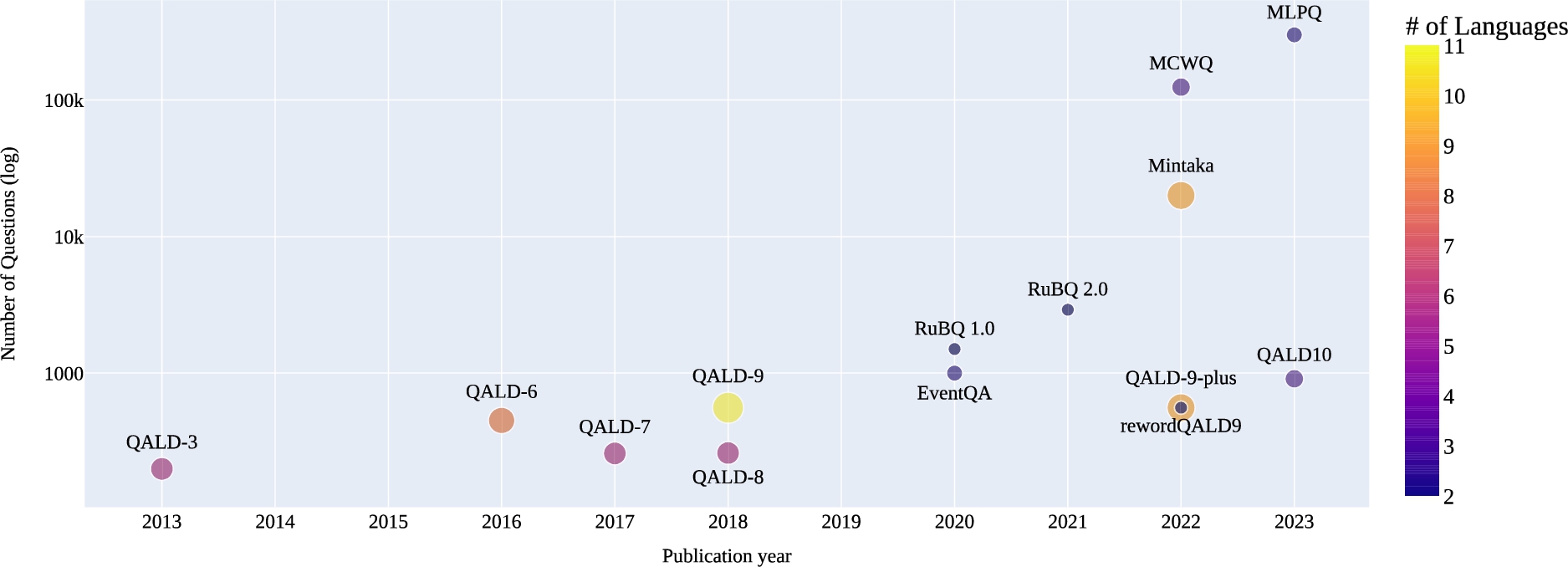

This section describes the existing benchmarks that have been developed for mKGQA. The overview of the benchmarks will showcase their unique characteristics and contributions to the field, shedding light on the respective progress and challenges of mKGQA.

Overview

The research in the field of KGQA is strongly dependent on data, nevertheless, the particular Some of the benchmarks (e.g., RuBQ 1–2, or QALD 1–10) have multiple versions. Therefore, we refer to them in general as

Overview on the mKGQA benchmarks

QALD is a well-established benchmark series for mKGQA. It has several multilingual versions, namely QALD-{3,6,7,8,9,9-plus,10}. QALD-3 includes 199 questions and ground truth SPARQL queries over DBpedia and MusicBrainz.

EventQA is a benchmark for answering event-centric questions (e.g., “In which tournament, known as major, did Jason Dufner win?”). The benchmark contains 1000 questions in three languages: English, German, and Portuguese. The SPARQL queries target EventKG [54], an event-centric KG that incorporates 690,247 events. The benchmark is represented using a newly developed JSON structure.

The RuBQ KGQA benchmark series includes two versions. The latest one, RuBQ 2.0, contains 2910 questions and is based on its previous version, RuBQ 1.0. Similarly to the latest QALD versions, the SPARQL queries within RuBQ are written for Wikidata. The benchmark was created in a semi-automatic way: the automatically collected question-answer pairs in textual format were annotated using an entity linking tool; thereafter, the linked entities were checked by crowd-workers; finally, based on the linked question and answer entities, the SPARQL queries were generated and manually validated. The questions are provided in native Russian and in machine-translated English. Additionally, the benchmark contains a list of entities, relations, answer entities, SPARQL queries, answer-bearing paragraphs, and query-type tags. The benchmark uses a newly developed JSON structure.

MCWQ is a KGQA benchmarking dataset over Wikidata (similarly to QALD and RuBQ) that is based on the previously created CFQ dataset [77]. MCWQ contains questions in the Hebrew, Kannada, Chinese, and English languages. All the non-English questions were obtained using machine translation with several rule-based adjustments. It has a detailed structure including questions with highlighted entities, the original SPARQL query over Freebase [13] (from CFQ), the SPARQL query over Wikidata (introduced in MCWQ), textual representations of a question in the four aforementioned languages, and additional fields. The benchmark uses a newly developed JSON structure.

Mintaka is a recent KGQA benchmark that provides 20,000 questions in the following languages: English, Arabic, French, German, Hindi, Italian, Japanese, Portuguese, and Spanish. Similarly to QALD, RuBQ, and MCWQ, Mintaka is grounded in Wikidata. The structure of Mintaka includes annotated named entities, gold standard answers, and internal fields such as question category (e.g., geography) and complexity (e.g., ordinal). The benchmark does not contain gold standard SPARQL queries; instead, Wikidata entity IDs and the corresponding language-specific labels are provided as answers. The questions, annotated named entities, and gold standard answers were created and annotated by crowd-workers. Mintaka follows a newly created JSON structure.

MLPQ is a large-scale KGQA benchmark that contains 300,000 questions in English, Chinese, and French. The MLPQ SPARQL queries are written over DBpedia, similarly to the early QALD versions. MLPQ has its own RDF structure that contains a question’s text with a language tag, ground truth answer URIs, and a SPARQL query. As the benchmark was generated automatically, the authors provided the query templates that were used for the generation process. In addition, MLPQ is accompanied by the DBpedia triples covering all the used questions.

Given the number and characteristics of the reviewed benchmarks, it is clear that there is little attention paid to the mKGQA research field. To the best of our knowledge, only 14 benchmarks tackle the multilingual aspect (some of them have different versions, which are the subsets of the newest ones). The total number of benchmarks (mono- and multilingual) according to the KGQA leaderboard is 48 [107]. Hence, the

The bubble chart represents the chronological order of the benchmarking datasets, their number of questions, and languages.

We also discovered some flaws in the existing benchmarks:

The maximum order of magnitude for the number of questions among the manually and semi-automatically created benchmarks is

There is no standardized format used across the research groups for the KGQA benchmarks (except QALD JSON);

The majority of the benchmarks stick to only one KG, namely Wikidata (e.g., RuBQ, Mintaka);

The benchmarks created with machine translation tools or automatically have unclear question quality (RuBQ, MCWQ, MLPQ).

The aforementioned flaws may become objectives for future research in this field. In particular, it is worthwhile to develop a standardized format for the benchmarks and to enlarge them regarding the number of questions, languages, and knowledge graphs using a crowd-sourcing setting.
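To illustrate what a shared benchmark format could look like, the sketch below converts a generic multilingual record into a nested structure loosely modeled on the QALD JSON layout. The input layout, the function name, and the exact output field names are assumptions for this example and should be checked against the actual QALD files before reuse.

```python
import json


def to_qald_like(records):
    """Convert records with per-language question texts into a QALD-like dictionary."""
    questions = []
    for record in records:
        questions.append({
            "id": str(record["id"]),
            # One entry per language, sorted by language code for stable output.
            "question": [
                {"language": lang, "string": text}
                for lang, text in sorted(record["texts"].items())
            ],
            "query": {"sparql": record["sparql"]},
        })
    return {"questions": questions}


# Hypothetical input record with one question in two languages.
records = [{
    "id": 1,
    "texts": {
        "en": "What is the capital of Germany?",
        "de": "Was ist die Hauptstadt von Deutschland?",
    },
    "sparql": "SELECT ?capital WHERE { wd:Q183 wdt:P36 ?capital }",
}]
document = to_qald_like(records)
print(json.dumps(document, ensure_ascii=False, indent=2))
```

Keeping all translations of a question under one identifier makes it straightforward to compare a system's answers across languages for the same information need.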

This section discusses the analyzed work on mKGQA. The first subsection focuses on the challenges encountered in this field, highlighting the obstacles and limitations that researchers face when developing and evaluating KGQA systems in multiple languages. The second subsection explores potential future research directions in mKGQA, highlighting areas that require further exploration and innovation. By examining both the challenges and future research directions, this section aims to provide valuable insights and guide future advancements in mKGQA.

Challenges

While reviewing the related survey papers, mKGQA systems, and corresponding datasets, we identified several challenges that currently exist in this research field. In the following paragraphs, we discuss the most remarkable challenges in the mKGQA field.

Noisy human natural language input

One of the challenges in mKGQA is

Grammatical and orthographic errors further contribute to the noisy input in mKGQA. Since users may not be fluent in all the languages they interact with, they are prone to making mistakes in constructing sentences, selecting appropriate words, or adhering to correct grammar rules.

Another aspect of noisy input in mKGQA is the wrong spelling of named entities. Named entities are essential components for understanding the context and semantics of a question [88]. However, users may misspell or incorrectly transliterate named entities from one language to another. For example, a French-speaking user asking a question in English about the “Eiffel Tower” may mistakenly spell it as “Eiffle Tower”. This presents a challenge in

A user question and a knowledge graph are expressed in different languages

One of the critical challenges highlighted in the existing literature is the specific issue of handling mKGQA process, particularly

To address the cross-linguality challenge, the conventional approach involves

Another approach for dealing with cross-linguality is centered around

The use of cultural traits and country-specific aspects

In addition to the previously mentioned challenges, another significant aspect in mKGQA is the

When users search for information or ask questions, their

Addressing this challenge requires a nuanced understanding of cultural differences and the ability to provide contextually relevant answers to users from different backgrounds. The approaches to deal with this challenge should

Quality discrepancies among different languages

One significant challenge evident from evaluation values in the field of mKGQA is that the

The existing disparities in QA quality arise due to various factors. Firstly, the availability of high-quality training data and resources for languages other than English may be limited, resulting in inadequate training for multilingual models. Moreover, the linguistic complexities, divergent language structures, and semantic nuances present in different languages further contribute to the lower performance in non-English languages.

To address this challenge, substantial efforts are needed to develop techniques that align multilingual KGs, curate larger and more diverse benchmarking datasets, and improve the training methodologies for multilingual QA models. In particular, a promising strategy is to leverage zero- or few-shot inference while working with LLMs. These approaches aim to reduce biases toward English and provide a more equitable distribution of performance across different languages.

The lack of language diversity

The landscape of mKGQA reveals certain

In practice, the availability of mKGQA systems catering to low-resource and endangered languages is severely limited, with very few systems specifically designed for these language varieties (e.g., Bashkir language in [105]). This dearth of attention towards such languages is concerning, as it hinders the inclusivity and accessibility of KG-based information retrieval for diverse linguistic communities.

A common approach adopted in the development of mKGQA is the

Size and translation quality of benchmarking datasets

It is important to acknowledge that the existing

The limited size of these benchmarks poses challenges in accurately evaluating and benchmarking the performance of such systems. The smaller scale restricts the diversity of queries and contexts that can be covered. This, in turn, limits the generalizability of the performance results and may hinder the development of robust mKGQA systems.

Additionally, the quality of translations within these benchmarks is often a concern. In some cases, the questions are machine-translated (e.g., RuBQ 2.0 [119]), leading to questionable translation quality. Poor translation quality (e.g., QALD-9 [139]) introduces noise and inaccuracies into the benchmark data, potentially affecting the reliability of evaluations and comparisons between different multilingual question answering systems.

Effective usage of large language models

In recent times, there has been a surge of attention and interest in leveraging LLMs for mKGQA as well as other NLP tasks. However, despite the initial excitement and optimism surrounding these powerful models,

In particular, cross-lingual semantic parsing has faced challenges in achieving the desired performance with LLMs. Zhang et al. [153] demonstrated that the results obtained for cross-lingual semantic parsing did not meet the anticipated levels of accuracy and quality. Similarly, even in monolingual settings, Klager et al. [79] reported underwhelming outcomes with LLMs for semantic parsing tasks.

One promising approach, which addresses these shortcomings, involves integrating structured data directly into the mKGQA process, building upon the practices established in the domain of monolingual KGQA [7,72].

General challenges

The field of mKGQA encounters several challenges that require attention and resolution. In contrast to the aforementioned challenges, these extend beyond the multilingual aspect and also encompass the broader field of KGQA:

The majority of KGQA systems predominantly target a single domain, often limited to the general domain. This domain-specificity restricts the applicability and scope of these systems, hindering their potential to address diverse domains effectively.

The lack of advanced metrics for evaluating the performance of KGQA systems poses a significant challenge. Without such evaluation metrics, it becomes difficult to comprehensively measure, compare, and benchmark the effectiveness of different multilingual KGQA approaches.

Ensuring the reproducibility of KGQA systems is crucial for building upon existing research. However, we have observed low reproducibility rates in some studies within the field.

The lack of a standardized format for KGQA benchmarks poses another significant challenge. Without a common benchmark format (e.g., QALD-JSON), it becomes technically arduous to compare the performance of different systems or share and replicate research findings.

It is prevalent for KGQA benchmarks to focus solely on a single KG (e.g., DBpedia or Wikidata). This narrow focus limits the generalizability and applicability of the developed systems, as they may struggle when applied to different KGs.

Future research directions

Future research directions in the field of mKGQA hold substantial potential for advancements. Analyzing the challenges, the following research directions have been identified for further exploration:

Study on how people ask questions in different languages: understanding how individuals formulate questions in various languages, including their native language (L1), second language (L2), etc. is crucial. This research direction entails analyzing the syntactic structures employed, common errors made, and prevalent misspellings encountered in multilingual question posing on the Web.

mKGQA with unequal data distribution across languages: KGs often exhibit disparities in data availability across different languages. Investigating efficient mKGQA techniques that tackle this unequal distribution of language-specific data within KGs is an important research direction.

mKGQA with cultural context: consideration of a user’s cultural background can significantly influence their information needs and preferences. Therefore, incorporating and adapting the ranking of answer candidates in accordance with a user’s cultural context represents an essential research direction for enhancing the effectiveness of mKGQA.

Improving mKGQA quality in languages other than English: while much progress has been made in English KGQA, there is a need to extend the focus to other languages. A crucial objective in this research direction is to minimize the standard deviation of QA quality among different languages, ensuring comparable performance across all supported languages.

Generalization of methods to handle diverse languages: enhancing the applicability of mKGQA techniques to languages from different language groups, with different alphabets and writing systems, as well as to low-resource and endangered languages, is a vital research direction. It involves developing approaches that can effectively address the unique challenges posed by each language type.

Extending benchmarks for mKGQA: expanding the existing benchmarks used for evaluating mKGQA systems is essential. This research direction involves augmenting the number of questions and translations available in different languages and verifying the quality of these resources to enable comprehensive evaluation and comparison of mKGQA approaches.

Encouraging publication of negative results: as a majority of publications tend to report positive results, advocating for the publication of negative results is vital. This research direction promotes transparency and prevents duplication of efforts by enabling researchers to learn from unsuccessful attempts and focus on more promising avenues.

Ensuring high-level reproducibility and comparability: to establish a strong foundation in the field of mKGQA, it is imperative to ensure the reproducibility and comparability of research results. This research direction emphasizes the adoption of standardized evaluation metrics, the release of open-source code, and the sharing of datasets to facilitate fair comparison and validation of proposed approaches.

We suggest that the research community consider these research directions, as further progress can be made in the field of mKGQA, leading to improved systems and comprehensive solutions for addressing multilingual information needs.
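The objective of minimizing the standard deviation of QA quality among languages, named in the directions above, can be made concrete with a small calculation. The per-language scores below are hypothetical, and the use of the population standard deviation over an F1-like score is an assumption made for this sketch.

```python
from statistics import mean, pstdev


def language_spread(scores_by_language):
    """Return the mean quality and its population standard deviation across languages."""
    values = list(scores_by_language.values())
    return mean(values), pstdev(values)


# Hypothetical per-language quality scores of one system (e.g., an F1-like score).
scores_by_language = {"en": 0.60, "de": 0.50, "ru": 0.40}
average, spread = language_spread(scores_by_language)
print(f"mean={average:.3f} std={spread:.3f}")
```

Reporting the spread next to the mean makes quality discrepancies visible: two systems with the same mean score can differ considerably in how evenly they serve their supported languages.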

Conclusion

In conclusion, this systematic survey provides a comprehensive overview of the current state of research in mKGQA. Our work is primarily motivated by the lack of surveys that focus specifically on mKGQA (see Section 1). We followed a strict review methodology (see Section 2) to ensure the reproducibility and transparency of our results. In the first step, 1875 publications were retrieved according to the defined criteria. The publication search was conducted in three languages: English, German, and Russian. Finally, 46 publications were accepted for the review: 26 of them are related to the systems, 14 to the benchmarks, and 7 to the related survey papers.

We systematically reviewed all the aforementioned publications and proposed the taxonomy of the methods for mKGQA systems. We formally defined three characteristics of the methods for mKGQA systems, namely: resource efficiency, multilinguality, and portability. We prepared the online repository37

To answer

While answering

Finally, to answer

It is worth mentioning that our review methodology favors precision over recall when selecting the publications. Therefore, some relevant papers (e.g., [49,151]) have been left out due to a mismatch with our selection criteria. The most common reason for a mismatch is the absence of a peer review process (e.g., preprint only, see Section 2.2) or the lack of citations for preprints (our threshold is at least five, see Section 2.2). Also, some papers use multilingual benchmarks but focus only on the English language [51].

In the future, we will regularly update the survey results through the online KGQA leaderboard to keep track of the state-of-the-art in the mKGQA field. We believe that our review methodology enables other researchers to conduct similar work more efficiently. We also encourage researchers to deal with the challenges and research directions mentioned in Section 6.

Acknowledgements

This work has been partially supported by grants for the ITZBund
