Abstract

Question Answering (QA) over Knowledge Graphs (KGs) aims to build systems capable of answering users’ questions using information from one or more KGs, such as DBpedia or Wikidata. A QA system must translate the user’s question, written in natural language, into a query expressed in a data query language supported by the underlying KG. This translation is already non-trivial for simple questions that involve a single triple pattern, and it becomes even harder for questions that require modifiers in the final query, e.g., aggregate functions or query forms. Attention to this last aspect is growing, but it has never been thoroughly addressed in the existing literature. Building on the latest advances in the field, we take a further step in this direction: this work provides a publicly available dataset designed to evaluate how well a QA system translates articulated questions into a specific data query language. We also use this dataset to evaluate three state-of-the-art QA systems.

Introduction

Question Answering (QA) is a challenging task that allows users to acquire information from a data source through questions written in natural language. The user remains unaware of the underlying structure of the data source, which could range from a collection of textual documents to a database. For this reason, QA systems have long been a goal for researchers and industry alike, since they enable the creation of Natural Language Interfaces (NLIs) [16,18]. NLIs can make large amounts of data accessible, especially to non-expert users, that would otherwise remain out of reach.

Several criteria can be used to categorize QA systems, such as the application domain (closed or open) or the kind of question the system can handle (factoid, causal, and so on) [17]. Nevertheless, one of the most distinguishing aspects is the structure of the data source used to retrieve the answers, which deeply affects both the architecture of the QA system and the methods applied to retrieve the correct answer.

The first QA systems were built as an attempt to create NLIs for databases [1]; thus, QA over structured data has been investigated since the late sixties. However, this research area remains challenging, and recent advances in the Semantic Web have brought more attention to it. QA systems can exploit the information contained in Knowledge Graphs (e.g., DBpedia [2] or Wikidata [29]) to answer questions on many topics, and they can also ease non-expert users’ access to this large body of data.

RDF1

However, translating natural language, which is inherently ambiguous, into a formal query in a data query language like SPARQL is not easy. The main problem that makes this process particularly difficult is recognized in the literature as

The first one is Entity Linking, i.e., finding a match between portions of the question and entities in the KG. This process can be straightforward if a portion of the question exactly matches the label of a KG resource: in the question
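A minimal sketch of the easy case just described, where a span of the question exactly matches the label of a KG resource, is shown below in Python. It is only an illustration under our own assumptions: the label index is hypothetical toy data (the URIs merely follow the DBpedia naming pattern), and real entity linkers add fuzzy matching and disambiguation on top of such a lookup.

```python
from typing import Dict, List, Tuple

# Hypothetical toy label index: lowercase label -> KG resource URI.
LABEL_INDEX: Dict[str, str] = {
    "rome": "http://dbpedia.org/resource/Rome",
    "italy": "http://dbpedia.org/resource/Italy",
    "new york city": "http://dbpedia.org/resource/New_York_City",
}


def link_entities(question: str, index: Dict[str, str], max_n: int = 4) -> List[Tuple[str, str]]:
    """Return (span, uri) pairs for question n-grams that exactly match a label.

    Longer spans are tried first, so "new york city" is preferred over "york".
    """
    tokens = [t.strip("?,.!").lower() for t in question.split()]
    used = [False] * len(tokens)
    links: List[Tuple[str, str]] = []
    for n in range(min(max_n, len(tokens)), 0, -1):
        for i in range(len(tokens) - n + 1):
            if any(used[i:i + n]):
                continue  # overlaps a longer span that is already linked
            span = " ".join(tokens[i:i + n])
            if span in index:
                links.append((span, index[span]))
                for j in range(i, i + n):
                    used[j] = True
    return links


print(link_entities("How many people live in New York City?", LABEL_INDEX))
```

The longest-match-first loop is a design choice of this sketch: it avoids linking sub-spans of a multi-word label to unrelated resources.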

Another important issue is related to the ambiguity of natural language. In the question

Similar issues arise in Relation Linking, which requires mapping segments of the question to relations in the KG. Here the lexical gap can be even wider, since many different expressions can refer to the same relation. Questions like

These two issues can be combined in several ways. An interesting example is represented by requests like

Intertwined, these two problems are a struggle for any QA system, and several techniques have been applied in the literature to overcome them. However, there is another kind of lexical gap that must be considered. For example, to find an answer for questions like

SPARQL query for

The SPARQL query language makes available several modifiers: query forms (like

The construction of queries containing modifiers is still an open issue that only a few works have explicitly explored in the literature [6,9,12,13,19,20], owing to the complexity of the task and the lack of resources that can help researchers develop and evaluate novel solutions. In this paper, we take a step forward in this direction and propose MQALD: a dataset created to allow KGQA systems to take on this challenge and overcome the issues specific to this research area.

The paper is organized as follows: Section 2 describes the state-of-the-art datasets and evaluation metrics used in this research area; Section 3 introduces our MQALD dataset, explaining how it was created and examining the modifiers it contains; Section 4 introduces the QA systems chosen for the evaluation and describes how the evaluation was performed; Section 5 analyzes the results obtained by the chosen systems; finally, Section 6 draws conclusions and proposes some directions for future research.

The development of a unified dataset for evaluating KGQA systems started in 2011 with the first edition of the Question Answering over Linked Data (QALD)

Besides the QALD dataset, other datasets have been proposed to benchmark QA systems over KGs.

WebQuestions and SimpleQuestions were both built on Freebase [4]. WebQuestions [3] contains 5,810 questions obtained by exploiting the Google Suggest API and Amazon Mechanical Turk. Because of this generation process, questions in this dataset usually follow similar templates, and each of them revolves around a single KG entity. Every question is associated with the Freebase entity mentioned in it and with the correct answer.

SimpleQuestions [5], by contrast, is the dataset with the largest number of questions, and it was created to provide a richer resource. It consists of 108,442 questions created in two steps: first, a list of facts (triples) was extracted directly from Freebase; then, these triples were sent to human annotators, who translated them into natural language questions. As in the QALD dataset, each question is associated with its SPARQL version. The dataset takes its name from the fact that every question can be answered using a single relation of the KG.

LC-QuAD [22] is based on DBpedia and was built from templates that generate questions whose SPARQL translations involve up to 2-hop relations. The generated questions were then reviewed by human annotators, who verified their correctness. The final dataset comprises 5,000 questions, together with the corresponding SPARQL translations and the templates used to generate them. LC-QuAD 2.0 [11] further extends the dataset, which now contains 30,000 questions.

TempQuestions [15] is a dataset based on Freebase, obtained by collecting questions containing temporal expressions from existing datasets. ComplexWebQuestions [21] is also based on Freebase and requires reasoning over multiple web snippets. Finally, Event-QA [7] is a novel dataset based on a custom KG called EventKG.

As mentioned above, QALD is both a dataset and a challenge that provides a benchmark for QA systems. Factoid QA inherited its evaluation metrics from classical evaluation frameworks, measuring performance in terms of Precision, Recall, and F-measure. Since the seventh edition of the challenge, the GERBIL benchmark platform [26,27] has been used to evaluate the participating systems. GERBIL, which is publicly available online,

In this section, we introduce MQALD, a dataset composed exclusively of questions that require modifiers. MQALD consists of two parts: the first contains novel questions, and the second is obtained by extracting the questions that require modifiers from the QALD dataset. In the following, we describe each part in more detail.

Generating novel questions

MQALD comprises 100 novel questions manually created by human annotators based on DBpedia 2016-10. To create them, we employed two annotators, both familiar with SPARQL and SQL-like data query languages. Since the dataset aims to evaluate QA systems’ ability to translate complex information needs into queries with modifiers, we did not impose any constraint on the question creation process: annotators were free to choose question topics and modifiers. In this way, the results obtained by a system are more likely to depend on its ability to create queries with modifiers.

Each annotator was in charge of writing 50 questions and then reviewing the 50 questions created by the other annotator.

Each question is provided in four languages: English, Italian, French, and Spanish. Both annotators are native Italian speakers with a good knowledge of English. Each question was first written in English and then translated into Italian. For the other two languages, translations of the original English question were obtained through machine translation (Google Translate).

The two annotators discussed any remaining discrepancy until they reached an agreement. During the annotation process, the first annotator edited 12% of the second annotator’s questions, while 18% of the first annotator’s questions were revised.

mandatory: the use of the modifier is indispensable to retrieve the right answer;

KG independent: modifiers are not used to adapt the query to a particular structure of the underlying KG. An example of KG dependency is represented by the use of the

These two properties are particularly important because they guarantee that each modifier is tied to specific semantics of the natural language question. Table 1 shows the distribution of modifiers among the 100 questions created by the annotators.

Frequencies of each modifier within the novel questions available in MQALD

JSON structure of a question within the dataset

The MQALD dataset is in JSON format and compliant with the QALD dataset structure: each question is serialized as a JSON object, and all questions are stored in a JSON array, as shown in Listing 2. The only difference in the structure of each question is the presence of an additional JSON array, named
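To make the structure concrete, the following Python sketch parses a question serialized in a QALD-style layout and extracts its text in a given language together with its SPARQL query. The field names (`questions`, `question`, `language`, `string`, `query`, `sparql`) are assumptions modeled on the QALD JSON files, and the record is a toy example rather than an actual MQALD entry; the additional MQALD-specific array is deliberately not modeled.

```python
import json
from typing import Dict, List

# Toy record in an assumed QALD-style layout (not an actual MQALD question).
SAMPLE = """
{"questions": [
  {"id": "1",
   "question": [
     {"language": "en", "string": "Is Rome the capital of Italy?"},
     {"language": "it", "string": "Roma è la capitale d'Italia?"}
   ],
   "query": {"sparql": "ASK WHERE { <http://dbpedia.org/resource/Italy> <http://dbpedia.org/ontology/capital> <http://dbpedia.org/resource/Rome> }"}}
]}
"""


def questions_in(raw: str, lang: str = "en") -> List[Dict[str, str]]:
    """Return id, question text in `lang`, and SPARQL for each question available in `lang`."""
    data = json.loads(raw)
    rows: List[Dict[str, str]] = []
    for q in data["questions"]:
        # Each question carries one entry per language; pick the requested one.
        text = next((v["string"] for v in q["question"] if v["language"] == lang), None)
        if text is None:
            continue  # question not available in this language
        rows.append({"id": q["id"], "question": text, "sparql": q["query"]["sparql"]})
    return rows


for row in questions_in(SAMPLE, "it"):
    print(row["id"], row["question"])
```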

To further extend our dataset, we also collected the questions with modifiers available in the QALD dataset. As stated in Section 2, the dataset of each QALD edition is obtained as an extension of the previous edition’s dataset, although we noticed that the overlap is not complete. For this reason, we decided to merge the questions contained in the last three editions of the QALD challenge over DBpedia: QALD-7 [25], QALD-8 [24], and QALD-9 [23]. We decided not to include queries with modifiers from other datasets, since they are either not based on DBpedia or based on a non-standard version of DBpedia. This is the case of LC-QuAD, which uses a version of DBpedia, called DBpedia 2018, that merges DBpedia with information coming from Wikidata.

Since each QALD dataset is split into training and test sets, the three editions were merged separately for each split. This produced two lists of questions: one containing all the training questions from QALD-9, 8, and 7, and the other containing all the test questions from QALD-9, 8, and 7. Since training data could have been used to develop the systems, we excluded from the test list any question that also appeared in the training data of any of the three editions: such questions were removed from the test list and added to the training list. We obtained the following files:
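The merging step can be sketched as follows. This is a hypothetical reconstruction of the procedure, not the original script: in particular, it assumes that two questions are duplicates when their case- and whitespace-normalized English text coincides, and it represents each question as a plain dictionary with an `en` field.

```python
from typing import Dict, List, Tuple

Question = Dict[str, str]


def _key(q: Question) -> str:
    # Assumption: duplicates are detected via case/whitespace-normalized English text.
    return " ".join(q["en"].lower().split())


def merge_qald(train_editions: List[List[Question]],
               test_editions: List[List[Question]]) -> Tuple[List[Question], List[Question]]:
    """Merge per-edition splits, keeping test questions that never occur in any training split."""
    train: Dict[str, Question] = {}
    for edition in train_editions:        # e.g. QALD-9, QALD-8, QALD-7 training splits
        for q in edition:
            train.setdefault(_key(q), q)  # keep the first occurrence only
    test: Dict[str, Question] = {}
    for edition in test_editions:
        for q in edition:
            k = _key(q)
            if k in train:
                continue  # seen in training: it stays on the training side only
            test.setdefault(k, q)
    return list(train.values()), list(test.values())


train_list, test_list = merge_qald(
    [[{"en": "Who is A?"}], [{"en": "who is  a?"}, {"en": "Where is B?"}]],
    [[{"en": "Where is B?"}, {"en": "When was C born?"}]],
)
print(len(train_list), len(test_list))
```

Because the merged training list already holds every question the filter detects, moving such a question there amounts to keeping a single copy on the training side.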

Number of questions included in each file

Table 2 shows the number of questions included in each file. The format of the JSON files remains the same as the one shown in Listing 2, with the addition of the attribute

Frequencies of each modifier obtained after merging the last three editions of the QALD dataset

In this section, we describe in detail the modifiers contained in the dataset, using real examples extracted from MQALD. Modifiers are listed in order of frequency.

FILTER

The

There are

We also have

SPARQL query for

Finally, there is also a single

The second most common modifier is

LIMIT

The

Another combination is composed of the previous one (

SPARQL query for

The

For the novel questions introduced in MQALD we employed the

SPARQL query for

Among all the possible SPARQL query forms besides

SPARQL query for

Besides being used in combination with

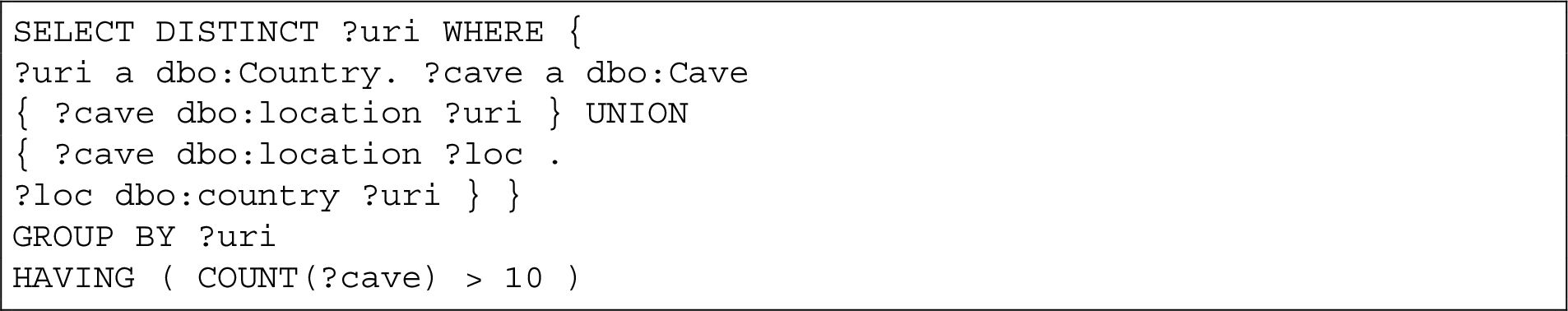

UNION

The

Despite being correct, the use of the

For this reason, in the questions we introduced in MQALD, with the help of human annotators, we employed the

SPARQL query for

SPARQL query for

The

In the questions extracted from QALD, the queries most often include an

The other existing case of non-zero

In our dataset, we always employ the

SPARQL query for

The

SPARQL query for

If the query needs to filter the result of an aggregation, the condition must be introduced with the modifier
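The effect of filtering on an aggregate can be illustrated in plain Python. The sketch below emulates, over hypothetical (author, book) bindings, what a query combining GROUP BY, COUNT, and HAVING returns: the condition is evaluated on each group’s count, not on individual bindings as a FILTER inside the WHERE clause would be. The bindings and the `dbo:author` pattern in the comments are illustrative assumptions.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Hypothetical (author, book) bindings for the pattern { ?book dbo:author ?author }.
BINDINGS: List[Tuple[str, str]] = [
    ("Author_A", "Book_1"), ("Author_A", "Book_2"), ("Author_A", "Book_3"),
    ("Author_B", "Book_4"),
    ("Author_C", "Book_5"), ("Author_C", "Book_6"),
]


def prolific_authors(bindings: List[Tuple[str, str]], min_books: int) -> Dict[str, int]:
    """Roughly emulate:
        SELECT ?author (COUNT(?book) AS ?n)
        WHERE { ?book dbo:author ?author }
        GROUP BY ?author
        HAVING (COUNT(?book) >= min_books)
    """
    counts = Counter(author for author, _ in bindings)  # GROUP BY ?author + COUNT(?book)
    # HAVING: the condition is checked on each group's aggregate value.
    return {author: n for author, n in counts.items() if n >= min_books}


print(prolific_authors(BINDINGS, 2))
```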

SPARQL makes available several functions for dates and times, even though

SPARQL query for

The dataset can be downloaded from Zenodo.

Statistics computed on 1st April 2021.

We compare the performance of three state-of-the-art QA systems for KGs on the different datasets we constructed. Our evaluation examines how QA systems perform on questions that require one or more modifiers in the final SPARQL query, and how this affects the overall performance of each system. The QA systems chosen for the evaluation are the following:

We implemented an evaluation tool following the guidelines reported in the QALD-9 report [23]. In particular, the evaluation script computes for each query

Moreover, the following additional rules apply:

If the golden answer-set is empty and the system retrieves an empty answer, precision, recall and F-measure are equal to 1.

If the golden answer-set is empty but the system responds with any answer-set, precision, recall, and F-measure are equal to 0.

If there is a golden answer but the QA system responds with an empty answer-set, it is assumed that the system could not respond. Then the precision, recall, and F-measure are equal to 0.

In any other case, the standard precision, recall, and F-measure are computed.
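The four rules above translate directly into a per-question scoring function. The Python sketch below is our reading of those rules, assuming answers are compared as sets of strings (for instance URIs, literals, or `"true"`/`"false"` for ASK questions).

```python
from typing import Set, Tuple


def score_question(gold: Set[str], system: Set[str]) -> Tuple[float, float, float]:
    """Per-question (precision, recall, F-measure) following the rules listed above."""
    if not gold and not system:
        return 1.0, 1.0, 1.0  # both empty: perfect answer
    if not gold:
        return 0.0, 0.0, 0.0  # gold empty, but the system returned something
    if not system:
        return 0.0, 0.0, 0.0  # gold non-empty, system empty: treated as no answer
    correct = len(gold & system)
    precision = correct / len(system)
    recall = correct / len(gold)
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


print(score_question({"a", "b"}, {"a", "c"}))
```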

As in the QALD challenge, we use macro measures: precision, recall, and F-measure are computed per question and averaged at the end. As in QALD, the Macro F1 QALD metric is used for the final evaluation. This metric applies the rules listed above, with the following exception:

If the golden answer-set is not empty but the QA system responds with an empty answer-set, it is assumed that the system determined that it cannot answer the question. Then the precision is set to 1 and the recall and F-measure to 0.
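The macro averages and the Macro F1 QALD variant can be sketched as follows. The function is self-contained and re-implements the per-question rules with a `qald` switch for the exception above. One assumption deserves emphasis: we compute Macro F1 QALD as the harmonic mean of the macro precision and macro recall obtained under the QALD rules, which is our understanding of how GERBIL reports it; the plain macro F-measure is instead the average of the per-question F-measures.

```python
from statistics import mean
from typing import Dict, List, Set, Tuple


def _score(gold: Set[str], system: Set[str], qald: bool) -> Tuple[float, float, float]:
    if not gold and not system:
        return 1.0, 1.0, 1.0
    if not gold:
        return 0.0, 0.0, 0.0
    if not system:
        # QALD exception: an empty answer to a non-empty gold set gets precision 1.
        return (1.0 if qald else 0.0), 0.0, 0.0
    correct = len(gold & system)
    p, r = correct / len(system), correct / len(gold)
    return p, r, (2 * p * r / (p + r) if p + r > 0 else 0.0)


def macro_scores(pairs: List[Tuple[Set[str], Set[str]]]) -> Dict[str, float]:
    """pairs: one (gold answers, system answers) tuple per question."""
    std = [_score(g, s, qald=False) for g, s in pairs]
    qld = [_score(g, s, qald=True) for g, s in pairs]
    qp, qr = mean(p for p, _, _ in qld), mean(r for _, r, _ in qld)
    return {
        "P": mean(p for p, _, _ in std),
        "R": mean(r for _, r, _ in std),
        "F": mean(f for _, _, f in std),
        # Assumption: harmonic mean of macro P and macro R under the QALD rules.
        "F1-QALD": 2 * qp * qr / (qp + qr) if qp + qr > 0 else 0.0,
    }


print(macro_scores([({"a"}, {"a"}), ({"b"}, set())]))
```

In this toy example the second question is left unanswered, so the plain macro scores are all 0.5, while the QALD variant rewards abstention on precision and yields a higher F1-QALD.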

The results of the evaluation of the three QA systems, i.e., gAnswer, QAnswer, and TeBaQA, are reported in Table 4.

Results of the three systems over the ten datasets. The metrics used are Precision (P), Recall (R), F-Measure (F), and F1-QALD measure (F1-Q)


Overall, the F1-QALD scores of the systems on the datasets without modifiers (no_mods) and on those containing all the questions (with and without modifiers) confirm the results reported by the QALD-9 challenge: gAnswer is the best-performing state-of-the-art system for this task, followed by QAnswer and TeBaQA.

However, this ranking changes when considering only the questions that require modifiers: in this case, QAnswer outperforms gAnswer. To gain more insight into this aspect, we checked which test-set questions requiring modifiers are answered by each system; the results are shown in Table 5 for the questions from the QALD test set and in Table 6 for the novel questions added in MQALD. By analyzing the Precision, Recall, and F-measure computed for each question and the set of answers returned by each system, we can gain insight into how each system handles modifiers.

List of the questions answered by each system over the qald_test_MODS dataset

List of the questions answered by each system over the new queries added in MQALD

Out of the 41 questions requiring modifiers extracted from the QALD datasets, the number of questions with a non-zero F-score is 3 for gAnswer, 7 for QAnswer, and 3 for TeBaQA. Most of the questions answered by the three systems involve the

The same holds for all the questions with an F-score lower than 1. For example, QAnswer answers the question

Also, for the question

There is only one question that requires an

An exception is represented by the question

Regarding the novel questions included in MQALD (new queries), gAnswer provided 4 answers, QAnswer 11, and TeBaQA 8. Only QAnswer and TeBaQA manage to return a complete answer, with Precision, Recall, and F-measure equal to 1. As stated in Section 3, MQALD questions are formulated so that modifiers are necessary to retrieve the correct set of answers; thus, by analyzing these scores, it is easy to identify which modifiers each system covers. QAnswer is able to manage the

Overall, the results show that, with a few exceptions, none of the systems under analysis handles modifiers well. Nevertheless, QAnswer’s ability to handle ASK and COUNT modifiers, and to better bridge the lexical gap by at least returning partial answers, earned it the best score among the three selected systems.

In this paper, we have introduced MQALD, a dataset of questions whose queries require SPARQL modifiers. The dataset is composed of 100 new manually created queries plus 41 queries with modifiers from the last three editions of QALD. We analyzed these modifiers to better understand how they work in terms of SPARQL syntax and how they reflect specific semantics of the natural language question. The evaluation of three state-of-the-art systems showed that much work remains to be done to create a QA system capable of handling this kind of question, not only from an architectural point of view but also in creating and updating existing resources so that these systems can be fairly compared.

This work aimed to give more insight into this specific issue of Question Answering over Knowledge Graphs. This research area is growing rapidly, and the availability of novel resources can significantly help the development of novel solutions.

As future work, we plan to further encourage the research and development of new solutions that improve QA systems’ performance over KGs on questions involving modifiers, which are very common in natural language. The first step in this direction would be to properly revise the available resources: fix the datasets so that question/query pairs are compliant with the latest version of DBpedia, and make sure that using a specific modifier is the only way to retrieve the right answers. Next, it would benefit the whole community to further expand this dataset so that a sufficient number of questions properly represents all the modifiers available in SPARQL.


Acknowledgement

This work was supported by the PRIN 2009 project: “Modelli per la personalizzazione di percorsi formativi in un sistema di gestione dell’apprendimento”.