Abstract

The diffusion of Human-Robot Collaborative cells is hindered by several barriers. Classical control approaches seem not yet fully suitable for facing the variability conveyed by the presence of human operators beside robots. The capabilities of representing heterogeneous knowledge and performing abstract reasoning are crucial to enhance the flexibility of control solutions. To this aim, the ontology SOHO (Sharework Ontology for Human-Robot Collaboration) has been specifically designed for representing Human-Robot Collaboration scenarios, following a context-based approach. This work brings several contributions. First, it proposes an extension of SOHO to better characterize behavioral constraints of collaborative tasks. Furthermore, it presents a knowledge extraction procedure designed to automate the synthesis of Artificial Intelligence plan-based controllers for realizing flexible coordination of human and robot behaviors in collaborative tasks. The generality of the ontological model and the developed representation capabilities as well as the validity of the synthesized planning domains are evaluated on a number of realistic industrial scenarios where collaborative robots are actually deployed.

Introduction

Nowadays, robots are successfully deployed in a large spectrum of real-world applications. Nevertheless, robots require an increased level of autonomy and additional features to operate in “open environments” while guaranteeing reliable and safe interactions. These constitute major scientific challenges and many research activities are ongoing to address them. In manufacturing, open research challenges concern in particular the design of control systems capable of robustly anticipating changes in production requirements and goals [98]. Higher levels of flexibility and adaptability of industrial robots are crucial to face the challenges of Industry 4.0 [17,34]. Industry 4.0 [37] is indeed pushing manufacturing systems towards customer-oriented and personalized production while trying to guarantee the advantages of mass production systems in terms of both productivity and costs [61]. Future manufacturing systems should in other words evolve towards flexible production embracing changes to the needs, requirements, and objectives of the factory [85]. Research in Human-Robot Collaboration (HRC) pursues these challenging objectives by investigating the tight and symbiotic collaboration between human workers and autonomous (or semi-autonomous) robots. The result is novel production paradigms that see humans and robots working side-by-side as interchangeable resources, thus combining the precision and tirelessness of robots with the problem-solving skills of humans [32,97].

Classical control approaches usually rely on static models of robot skills and a static (or hard-coded) description of production requirements and objectives. Such technology is not fully able to support the level of (flexible) autonomy that future production environments need. This is especially true in HRC where robots and humans share the working space and physically interact to achieve common objectives. Human actors introduce a significant source of uncertainty that robot controllers should properly take into account in order to safely cooperate with them while supporting production [54]. It is crucial to investigate novel control approaches that implement advanced cognitive capabilities and allow robots to achieve a higher level of awareness about themselves, their “peer companions” as well as the production context and related dynamics, e.g., production procedures, task requirements, and the needed skills and capabilities of actors taking part in production processes.

Research in Artificial Intelligence (AI) designs and develops technologies that are suitable to realize the desired cognitive capabilities. The integration of AI and Robotics in particular [48,73] would allow (collaborative) robots to: (i) perceive the environment by correctly interpreting events and situations; (ii) build and maintain knowledge about the production context; (iii) reason about their own capabilities/skills and dynamically contextualize possible actions to the perceived state of a production scenario; (iv) autonomously decide how to act/interact with the environment and other “actors” (i.e., human operators but also other robots if necessary) to support production; and (v) adapt behaviors over time according to the “learned experience” and evolving production needs.

Our long-term research objective is to enrich robot controllers with an AI-based “perceive-reason-act” paradigm implementing advanced cognitive features. The envisaged cognitive control approach would allow a collaborative robot to be aware of the production context and autonomously decide which tasks are needed and how to execute them in order to collaborate with human workers and support production in the best way possible. For example, the way a procedure is executed may depend on several factors. The tool a worker needs for implementing the tasks might be damaged or not available. The needed resources (e.g., bolts or other pieces) might be unavailable or insufficient. The worker joining production today might not know the procedure well because of limited experience. In all these cases (and others), a robot with enhanced awareness of the production context would autonomously adapt its behavior and the execution of collaborative processes to different situations. Suppose, for example, that the worker cannot execute some tasks because the needed screwdriver is not available. The robot, knowing this fact and knowing that it can perform the same tasks using its own screwdriver (i.e., the robot knows its capabilities), would autonomously synthesize a collaborative plan by assigning the tasks requiring the screwdriver to itself. This level of autonomy and flexibility could not be achieved by classic control approaches without interrupting production and manually fixing the control procedure of the robot.
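The screwdriver example can be made concrete with a minimal sketch. It assumes a deliberately simplified representation in which each task names the single tool it requires and each agent lists the tools it currently has available. The names `Agent`, `Task`, and `allocate` are hypothetical and introduced only for illustration; the approach pursued in this paper relies on ontological reasoning and automated planning rather than on a lookup of this kind.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    # Hypothetical agent model: a name and the tools currently usable.
    name: str
    available_tools: set = field(default_factory=set)


@dataclass
class Task:
    # Hypothetical task model: one required tool and a preferred performer.
    name: str
    required_tool: str
    preferred_agent: str


def allocate(tasks, agents):
    """Assign each task to its preferred agent when that agent has the
    required tool, otherwise to another agent that has it.
    Tasks that nobody can perform are mapped to None."""
    by_name = {a.name: a for a in agents}
    plan = {}
    for task in tasks:
        preferred = by_name.get(task.preferred_agent)
        if preferred is not None and task.required_tool in preferred.available_tools:
            plan[task.name] = preferred.name
            continue
        # Fall back to the first other agent that owns the required tool.
        plan[task.name] = next(
            (a.name for a in agents
             if a.name != task.preferred_agent
             and task.required_tool in a.available_tools),
            None,
        )
    return plan


# The worker's screwdriver is unavailable today; the robot has its own.
worker = Agent("worker", {"wrench"})
robot = Agent("robot", {"screwdriver", "gripper"})
tasks = [
    Task("fasten_cover", "screwdriver", preferred_agent="worker"),
    Task("tighten_bolt", "wrench", preferred_agent="worker"),
]
plan = allocate(tasks, [worker, robot])
print(plan)
```

In this sketch the task requiring the screwdriver moves to the robot while the worker keeps the task it can still perform, which mirrors the adaptation described above without any manual change to the control procedure.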

To pursue this level of awareness and cognitive control, we investigate the integration of AI-based Knowledge Representation & Reasoning with Automated Planning and Execution. The combination of these AI technologies with Robotics has shown promising results in heterogeneous scenarios ranging from service and assistive robotics [4,22,53,90] to Reconfigurable Manufacturing Systems [7,13,30,77], improving flexibility of robot behaviors. Semantic technologies are crucial to endow robot controllers with the theoretical context necessary to represent (heterogeneous) information coming from different sources (e.g., deployed sensing devices or domain expert knowledge about production processes) and reason about the resulting knowledge in order to understand the state of a production environment and make contextualized decisions.

This paper advances a recent work by refining an ontological model for Human-Robot Collaboration in manufacturing [91] and investigating the integration between Knowledge Representation & Reasoning and Automated Planning in order to enhance awareness, adaptability and flexibility of collaborative robots. In particular, the work better explains the human factor ontological context and the use of ontology design patterns [40] as a means to facilitate the description of collaborative dynamics. The human factor context describes the concepts and properties that characterize skills and qualities of human workers. This knowledge is useful to adapt collaborative processes to the different features of human workers. Ontology patterns are defined according to consolidated schemes of interaction between humans and robots in HRC [44,59]. Similarly to software design patterns, ontology design patterns are used to narrow knowledge design choices and define sufficiently general and reusable concepts characterizing behavior constraints between a human and a robot when performing collaborative tasks [44,59]. Robot awareness is achieved through designed knowledge extraction procedures that automatically synthesize (and online adapt) plan-based control models. Here ontological patterns are translated into sets of causal and temporal constraints that comply with the desired “shape” of the collaboration between the human and the robot.
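To illustrate what translating a pattern into temporal constraints can look like, the following minimal sketch maps two collaboration patterns to interval relations between a human task and a robot task. The pattern names `sequential` and `simultaneous`, the `Interval` type, and the helper functions are assumptions made for this example only; they are not the patterns or the constraint language defined by SOHO or by the planning formalism used in this paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    # Execution interval of a task, in arbitrary time units.
    start: int
    end: int


def before(a, b):
    """True when a finishes no later than b starts."""
    return a.end <= b.start


def overlaps(a, b):
    """True when a and b share some portion of time."""
    return a.start < b.end and b.start < a.end


# Hypothetical pattern names, each mapped to the temporal constraint it
# imposes on the (human, robot) task intervals.
PATTERN_CONSTRAINTS = {
    "sequential": lambda human, robot: before(human, robot) or before(robot, human),
    "simultaneous": overlaps,
}


def complies(pattern, human, robot):
    """Check whether a pair of task intervals respects the given pattern."""
    return PATTERN_CONSTRAINTS[pattern](human, robot)


print(complies("sequential", Interval(0, 5), Interval(5, 9)))
print(complies("simultaneous", Interval(0, 5), Interval(3, 9)))
```

A planner working with such constraints would only accept schedules whose human and robot intervals satisfy the relation attached to the selected pattern, which is the sense in which a pattern fixes the “shape” of the collaboration.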

The conjunction of AI-based planning and semantic technologies realizes cognitive skills suitable to enhance autonomy and context awareness of industrial (collaborative) robots as well as robustly deal with evolving production needs (e.g., changing production requirements, changing capabilities of a robotic platform or changing skills of human operators, etc.). The validity and generality of the proposed approach are evaluated on a number of realistic HRC scenarios, pilots of the EU H2020 research project Sharework.

The paper is structured as follows. Section 2 discusses related works concerning the integration of knowledge reasoning and automated planning with robotics. It highlights how other researchers are investigating the integration of the mentioned technologies to enhance the flexibility, adaptability, and (social) context awareness of robot behaviors. Section 3 discusses the Sharework Ontology for Human-Robot Collaboration (SOHO) initially introduced in [91]. SOHO defines the representational space of the proposed approach and this section provides a complete and refined definition of its concepts and properties. Section 4 briefly introduces the timeline-based planning formalism and then describes the developed knowledge extraction procedure. This procedure is the central point linking knowledge to task planning. It supports the automatic update and adaptation of the plan-based control model to the contextualized knowledge of a (collaborative) production scenario. Section 5 evaluates the proposed approach on a number of realistic scenarios taken from the pilot cases of the EU H2020 research project Sharework. On the one hand, the evaluation shows the capability of capturing all relevant aspects of collaborative scenarios. On the other hand, it shows the feasibility and correctness of the knowledge extraction procedure. Finally, Section 6 summarizes the contribution of the paper pointing out possible directions for future developments.

Robotics and Artificial Intelligence (AI) are two research areas that historically addressed the challenge (among others) of building embedded intelligent systems capable of acting in a real-world environment [73]. Recent advancements in Robotics and AI are pushing the design and deployment of autonomous robots in increasingly complex/unstructured environments. On the one hand, technological advancements concerning the increased reliability and efficiency of sensing devices, manipulation and navigation skills of robots as well as solving and predictive capabilities of AI technologies open new opportunities for the effective deployment of Robotics and AI solutions. On the other hand, the increased complexity of application scenarios raises new technical/methodological challenges.

A tight integration of Robotics and AI is crucial to enhance the autonomy and control capabilities of robots and allow them to safely and reliably act in the real world [41,48]. In particular, robots acting in the real world should take into account a number of “non-functional” qualities that are crucial to realize behaviors that are safe and acceptable with respect to humans [15,28,75]. Robot controllers should therefore evolve towards an advanced “Perception, Reason, Act” paradigm implementing the cognitive capabilities needed to synthesize and execute flexible behaviors that are valid from both a technical and social point of view. To implement the envisaged cognitive control paradigm, we found the integration of ontology-based reasoning with AI-based planning and robot control particularly promising. The integration of semantic technologies with robot controllers has been widely studied in the literature [56].

Ontologies have been recognized as true enablers of adaptable and flexible systems compared to classic approaches [21,87]. Robot-integrated ontology-based reasoning has in particular shown effective results in the enhancement of robot flexibility and awareness [7,13,53]. This section discusses some relevant works in the literature concerning the integration of ontology-based knowledge reasoning, planning, and robotics. It shows the enhanced flexibility and awareness of robots acting in different domains, thanks to the integration of the mentioned technologies.

Ontology in robotics and human-robot interaction scenarios

KnowRob [6,84] is a well-known framework supporting advanced Perception, Reasoning, and Control. The framework provides robots with a logical representation of a number of entities ranging from robotic parts and objects (with their composition and functionalities) to tasks, actions, and behaviors. This framework in particular focuses on manipulation tasks and allows robots to perceive objects of the environment, reason about their functionalities (e.g., formal description of affordances of objects [8]), and decide how to use them within planning actions [7,31]. Although general, KnowRob is mainly suitable to deal with scenarios where a single robot manipulates objects and interacts with an environment. The dyadic nature of HRC scenarios requires reasoning on simultaneous executions of actions and the synergetic combination of robotic and human actors.

An ontological model characterizing object manipulation tasks of robots has been also considered within the PMK framework [30]. Similar to KnowRob [6], PMK supports a standardized representation of the environment defining a common language to exchange information between a human and a robot. It characterizes causal information and constraints about manipulation tasks well and defines knowledge that is useful at both task and motion planning levels. The work [82] exploits a robot knowledge framework (OMRKF) consisting of a series of ontology layers, including a robot-centered and human-centered ontology. The system in particular relies on an object layer, a context layer, and an activity layer to abstract gathered sensor data. However, this framework lacks a foundational background, which limits the reliability of inferred knowledge. Furthermore, it does not distinguish between activity and functionality, resulting in a rigid characterization of robot capabilities and behaviors. For example, “avoid obstacle” is not a behavior but a function that can be implemented in different ways, e.g., by moving away or turning around.

An interesting framework concerning the ontological description and integration of robotic skills is SkiROS [76]. Less general than KnowRob, this framework is designed on ROS with the objective of proposing an ontological model of robot skills. On top of this knowledge, action-based planning supports a dynamic combination of skills to realize complex behaviors. Similar to KnowRob [84], SkiROS focuses on the description/control of a single robot acting in the environment. Concerning HRC scenarios, this framework does not support an explicit representation of the skills of multiple agents, concurrency, time, and controllability issues.

The ORO framework [52] develops a knowledge reasoning framework endowing robots with common sense reasoning capabilities to autonomously operate in semantically-rich human environments. With respect to KnowRob, the ORO framework addresses the control problem from a cognitive perspective and realizes a general cognitive architecture deployed on different robotic platforms and assessed on different cognitive scenarios [52]. This architecture has been specifically developed to support advanced cognitive skills (e.g., theory of mind capabilities) and thus support increasingly flexible and adaptive human-robot interactions [53]. Considering an HRC perspective, ORO pursues a turn-based interaction approach where the human and the robot are supposed to perform one action at a time with the robot reacting to the observed behavior and inferred state of the human. This interaction mechanism is not fully effective in production scenarios that require cooperation and simultaneous action execution of the human and a robot to achieve shared objectives.

Concerning human-robot social interactions, knowledge representation and reasoning have been used to realize socially compliant behaviors. Non-functional requirements like those regarding social norms are crucial to realize acceptable behaviors [75]. For example, the work [4] uses knowledge reasoning to represent social norms and allow a social robot to implement socially acceptable behaviors for social tasks. More specifically, the work proposes a formal description of the functional affordances of objects to reason about their possible use and thus infer those that are suitable to accomplish the requested social task (i.e., serving coffee to guests using the right object). The work [15] proposes the use of knowledge reasoning to adapt human-robot interactions to the cultural knowledge of different contexts and people. This is another example of how ontology-based reasoning can enhance context awareness of robots. In this case, reasoning capabilities evaluate non-functional qualities of human-robot interactions and synthesize socially compliant behaviors. Another interesting work is [22]. Similar to other works, e.g., KnowRob [84], it proposes an ontological model characterizing users, the interacting environment, capabilities (not necessarily correlated to the robot only), and tasks. On top of this model, the work instantiates a cognitive architecture realizing a perceive, reason, act control loop. The resulting “cognitive agent” incrementally decides which social task to perform according to the perceived state of the interaction context. An added value of [22] is the explicit representation of interacting users through user profiles that support personalized services.

Ontology-based reasoning has been used also in medical scenarios. The work [42] proposes the use of ontology in orthopedic surgery. The ontological model OROSU integrates domain knowledge and presents it uniformly to different types of users (e.g., surgeons, nurses, or technicians) during surgery. It relies on KnowRob [84] to describe surgical procedures concerning hip surgery. Finally, ontology-based reasoning has been used also to formally represent normative standards and evaluate compliance with them. An example is the work [74] where normative standards for indoor environmental qualities have been encoded into an ontological model. A social robot has been endowed with cognitive capabilities to ground detected quality conditions of an environment and automatically evaluate the compliance of a perceived environment to the normative standards.

Ontology in manufacturing

In manufacturing, ontologies have mainly focused on the manufacturing system as a whole or rather on specific production aspects e.g., [78,86,94]. Modeling procedures, capabilities of working entities, and possible interactions connected to production objectives are challenging. The description of so-called Cyber-Physical Systems (CPSs) (e.g., HRC Systems) requires modeling the dynamics of the involved agents from both a local perspective (i.e., the point of view of a specific agent) and global perspective (i.e., the point of view of the production) [11]. In this context, ontologies have been mainly applied with the aim of increasing flexibility in modeling and planning of, e.g., mechatronic devices [5], resources in collaborative environments, and the whole enterprise [80], collaborative robots [46] and navigation robots [16].

The work [5] uses an ontology to collect static and dynamic information relative to robots. The basic actions of a robot are hard-coded but the ontological system adds some flexibility like the possibility to learn articulated actions and to act with partial information, e.g., information about the location of the object to move. Besides the lack of functionality/activity distinction, the robot has very limited knowledge of the environment. The work [7] uses a well-structured ontological model to characterize product assembly tasks. Specifically, the work extends the KnowRob framework [84] by integrating inference rules necessary to reason about incomplete assemblies of different products. Based on the outcome of the implemented perception and reasoning processes they automatically plan the next action to be executed and incrementally assemble the desired products. The work [51] uses ontology to represent kit-building parts for assembly operations. Kit building parts are presented by means of XML descriptions whose schema (XSDL) is mapped to an ontological model providing a uniform logic-based representational space. The ontological model and the automatic generation of OWL descriptions from XML schema are then used within an agility framework to evaluate the agility performance of robotic systems.

Concerning collaborative manufacturing, the work [66] proposes an ontological model called OCRA which takes into account uncertainty and safety constraints. Interestingly, it characterizes reliable human-robot collaborations providing robots with a well-structured formalization suitable to reason on the execution perspective of their plans. The work [77] realizes an ontology-based multi-agent system integrated with a Business Rule Management System to define a language for the coordination of human and robotic agents. The ontological model does not rely on a structured theoretical background and the knowledge about tasks and agents’ capabilities is hard-coded. Furthermore, it proposes a limited and schematic description of a collaborative environment. For example, workers and cobots are represented with a simple schema describing just their location within the environment; no additional information about their capabilities, composition, or behavioral features is provided.

Works within the ROSETTA project [68] have also investigated the use of ontology-based reasoning to simplify the programming of industrial robots and interactions with humans. The work [81] uses and extends the SIARAS ontology to characterize knowledge about robot skills focusing in particular on manipulation skills and devices that may compose a manufacturing environment (e.g., gripper, fixture, the robot itself). The framework aggregates several ontological models that are relevant also from a human-robot collaboration perspective e.g., the injury.owl which characterizes the expected risk level of injury when a robot cooperates with a human or shares the same environment.

An ontology for Human-Robot Collaboration

Considering the discussed literature, an ontological model capable of capturing the capabilities of different types of resources, actors with different interacting features, and production requirements is still missing. Enriching manufacturing systems with such a semantically rich model would: (i) increase their level of awareness about the state and needs of a production environment; (ii) allow them to autonomously interpret production events and properly coordinate acting resources, e.g., collaborative robots and workers; and (iii) allow them to dynamically adapt production processes to the skills and working and/or health conditions of collaborating workers. The work [91] made a first step toward the definition of an ontological model specifically designed for Human-Robot Collaboration (HRC). SOHO (Sharework Ontology for Human-Robot Collaboration) is a domain ontology [43] aiming at characterizing HRC scenarios from different but synergetic levels of abstraction (contexts). The objective is to define a well-structured model of production environments, human, machine, and robot structures, capabilities, and functional operations.

SOHO pursues a flexible interpretation of these concepts in order to interpret production states/situations according to the specific needs of processes and features of the environment. For example, operational capabilities (or simply capabilities) of robots and workers intrinsically depend respectively on their structures (e.g., actuators and end-effector that are part of the robotic device – embodiment) and their skills or abilities (e.g., a worker can perform specific welding operations). These capabilities, combined with the specific needs and requirements of a production environment, would enable the execution of different (instances of) functions [12]. This section provides a complete and refined description of SOHO,2

The ontology is publicly available at the following GitHub repository –

Foundational ontologies aim at describing reality from a high-level perspective in order to define concepts that are general enough to be valid across many domains. Their use is generally recommended and represents a good design choice in order to base new (more specific) ontological models on well-structured semantics. These ontological models constitute a stable theoretical background of more specific ontologies and thus foster a clear structuring and disambiguation of domain concepts [49,58]. Several foundational ontologies have been introduced in the literature, e.g., BFO [67], DOLCE [14] or SUMO [65]. Among these, SOHO relies on DOLCE [14] in order to support a flexible interpretation of temporally evolving entities and, also, to rely on a recognized standard representation framework (ISO 21838-3). DOLCE, therefore, represents a flexible model, well suited to support the interpretation of domain entities whose state depends on the context and may change/evolve over time.

In addition to DOLCE, SOHO is built on top of two other ontologies: (i) the CORA ontology [71]; and (ii) the SSN ontology [27]. CORA is an IEEE standard ontology for robotics and automation aiming at promoting a common language in the robotics and automation domain. It characterizes knowledge about robots and robot parts, robot positions and configurations, and groups of robots. This standard relies on SUMO [65] as a theoretical foundation and integrates the framework ALFUS [47] to define possible autonomy levels and related operative modes of a robot. SSN is a W3C standard ontology for IoT devices and sensor networks. It defines basic concepts and properties characterizing the capabilities of sensing devices, their deployment into a physical environment, and the outcome of sensing processes. SSN relies on DOLCE and defines a sufficiently general model to represent the physical properties of an environment and physical entities that can be observed or monitored over time.

Both CORA and SSN define concepts and properties relevant to HRC but they are not sufficient to describe production procedures and possible collaborations needed between human and robot agents. The scope of SSN is limited to the characterization of a physical environment in terms of properties that can be observed and sensing devices that carry out sensing processes. This ontology is quite “self-contained” and can be easily integrated with CORA to represent also robot interfaces and sensing parts. CORA instead has a broader scope. It focuses on robot parts, robot configurations, and levels of autonomy. However, CORA does not support the contextualization and interpretation of behaviors of robots and other autonomous agents (e.g., human operators) with respect to global production objectives and processes.

Qualities, norms, and events

Human-Robot Collaboration scenarios are a combination of technical, physical, and social contexts since the acting entities should physically interact while complying with a number of rules that guarantee correct and safe execution of production processes. Indeed, an HRC scenario is composed of a number of physical entities, each characterized by different features and qualities, that cooperate to achieve common (production) objectives. SOHO, therefore, interprets HRC scenarios as social contexts where the behavior of each acting entity affects the behavior of others, and coordination is necessary to correctly and safely carry out production processes. As such, any HRC scenario is subject to social structures known as norms [9], i.e., implicit or explicit rules that constrain the behavior of involved actors. To model concepts and properties that suitably capture such dynamics SOHO relies on the DOLCE+DnS Ultralite ontology (DUL) 4

The concept

According to the documentation, a

Another relevant concept used by SOHO is

As mentioned at the beginning of the section, SOHO interprets an HRC scenario as a social context where two or more agents should cooperate to achieve a common objective (

Finally, the concept

Ontologies should be adequate to their domains, and domains come along on different granularity levels [49]. An ontology should account for all perspectives and levels of abstraction that are relevant to a domain. SOHO follows a context-based approach and organizes knowledge in a number of synergetic contexts, each describing the domain from a particular perspective. Contexts support a modular and multi-perspective representation of domain knowledge. Figure 1 shows the general structure of SOHO: (i) the Environment Context; (ii) the Behavior Context; and (iii) the Production Context. The safety and human factor contexts do not represent actual ontological contexts. Rather they define two “meta-perspectives” that must be uniformly considered at different levels of abstraction by all ontological contexts.
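The relation between the three contexts and the two meta-perspectives can be pictured with a small data-structure sketch. It assumes that every concept belongs to exactly one context and may additionally be tagged with any number of meta-perspectives; the class `Concept`, the helper functions, and all concept names used below are hypothetical and are not taken from SOHO.

```python
from dataclasses import dataclass, field

CONTEXTS = ("environment", "behavior", "production")
META_PERSPECTIVES = ("safety", "human_factor")


@dataclass
class Concept:
    # Hypothetical record: one owning context, optional cross-cutting tags.
    name: str
    context: str
    perspectives: frozenset = field(default_factory=frozenset)

    def __post_init__(self):
        if self.context not in CONTEXTS:
            raise ValueError(f"unknown context: {self.context}")
        unknown = set(self.perspectives) - set(META_PERSPECTIVES)
        if unknown:
            raise ValueError(f"unknown meta-perspectives: {sorted(unknown)}")
        self.perspectives = frozenset(self.perspectives)


def by_context(concepts, context):
    """Names of the concepts defined in one ontological context."""
    return [c.name for c in concepts if c.context == context]


def by_perspective(concepts, perspective):
    """Names of the concepts, from any context, tagged with a meta-perspective."""
    return [c.name for c in concepts if perspective in c.perspectives]


# Illustrative concept names only.
concepts = [
    Concept("Workpiece", "environment"),
    Concept("SharedWorkspace", "environment", {"safety"}),
    Concept("WorkerSkill", "behavior", {"human_factor"}),
    Concept("AssemblyGoal", "production"),
    Concept("HandoverTask", "production", {"safety", "human_factor"}),
]

print(by_context(concepts, "environment"))
print(by_perspective(concepts, "safety"))
```

The point of the sketch is that a query by meta-perspective cuts across contexts, whereas a query by context stays within one module of the model.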

Overview of SOHO: (a) general structure and defined contexts; (b) excerpt of concepts and properties.

Environment context The environment context defines physical elements and general properties of an environment that can be observed. This context strongly relies on SSN which is crucial to characterize the sensing capabilities of available devices and the physical properties of domain entities they can observe. First, SOHO defines a concept to model objects that are part of a production environment by extending the concept

Some properties of the objects that constitute a production environment can change over time and it could be necessary to observe/monitor them in order to correctly carry out tasks. Examples are the position in space of objects necessary to perform a task, the position and occupancy state of an area where to place objects, or the state of a bolt, etc. SOHO relies on SSN to model sensing devices and the information they can gather through (implemented) sensing processes. SSN defines the concept

Behavior context The behavior context characterizes the behaviors of the acting entities of a production environment. The central concept is

The role

A particular type of

Another particular type of

Similar to

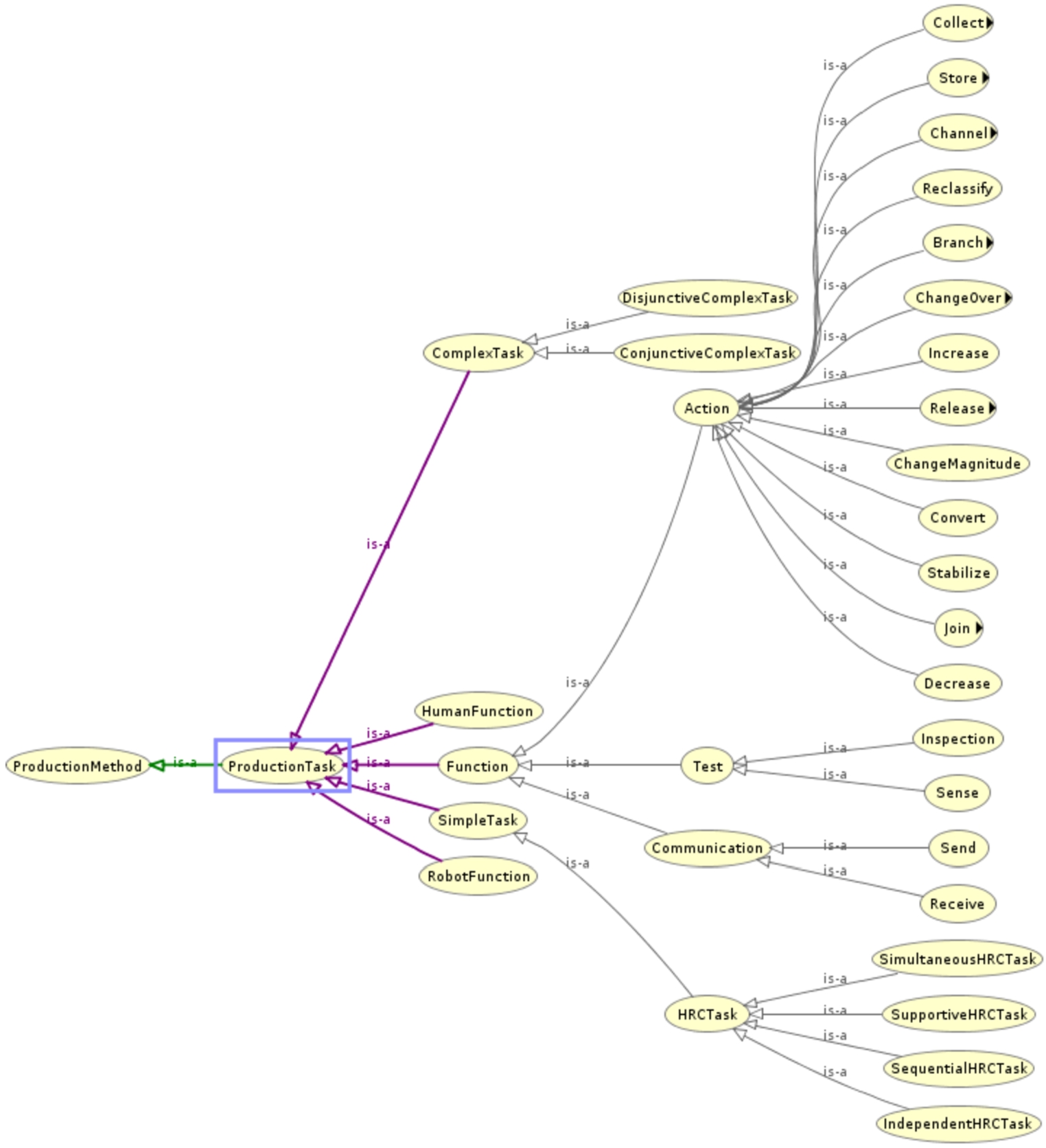

Behavioral knowledge is determined according to the specific features and internal composition (i.e., the embodiment) of the specific agents. The set of operations that can be actually implemented in a particular scenario (and “who” can implement them) can be dynamically inferred according to the known capabilities of an agent. To support this reasoning SOHO should generally characterize manufacturing operations and correlate them to the types of capabilities necessary for their execution. To this aim, SOHO integrates the Taxonomy of Functions defined in [12]. Low-level manufacturing operations are defined as

Production context Considering the production perspective, SOHO defines concepts and properties that characterize production procedures in terms of objectives and operations necessary to successfully achieve them. A proper representation of this knowledge is crucial to establish human and robot commitment to production goals [1,18] and a level of agreement about the way the human and the robot together achieve these goals [25,79]. Furthermore, it is necessary to characterize events that may occur in a production environment and that are relevant with respect to the execution of production procedures. This perspective relies on the foundational concepts

SOHO defines the concept

Goals are achieved through plans each composed of a number of actions implementing a particular production method. SOHO defines the concept

A

A

The temporal and physical occurrence of production processes entails the execution of

SOHO defines the concept

The concept

It is worth noticing that our definition of

A

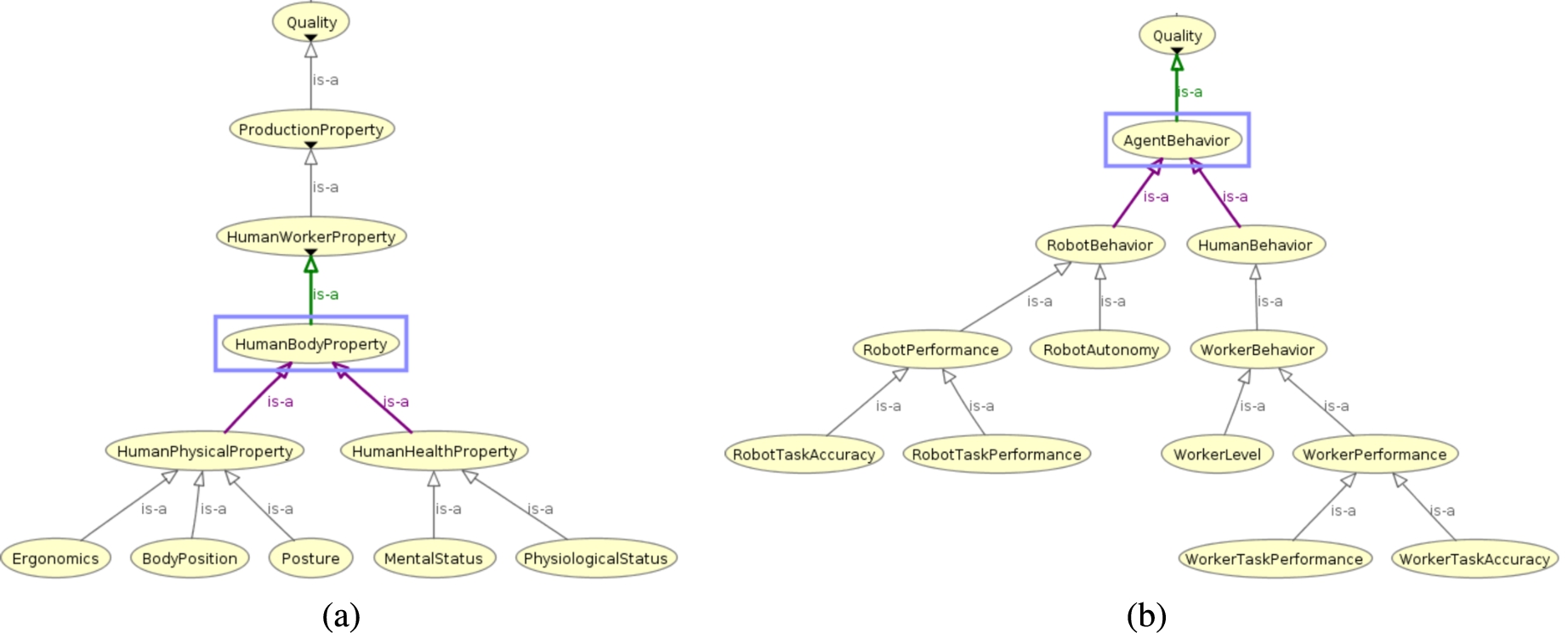

A novel aspect of SOHO is the support to the explicit representation of the human factor. SOHO interprets the human factor as the set of physical or abstract features that characterize the expected behavior and skills of a worker and that can directly (or indirectly) affect the interactions with a robot and the whole production. The human factor is defined through a set of

Excerpt of SOHO concerning physical and behavioral qualities of human workers.

Concepts like

Concepts like

The definition of a

Following this layering of a procedure, SOHO defines three types of

Taxonomical structure of production tasks with the integrated Taxonomy of Function [12]. Please note that the concept

A

A

The concepts

Although the “boundaries” of the representation space are well delimited within a domain ontology [43], there is a multitude of behaviors that can be described with a production scenario and a multitude of design choices to take into account. The correct definition of all necessary information and constraints is not always straightforward. Ontology design patterns [40] can play a role in supporting knowledge definition. Patterns can indeed specialize an ontological model without losing generality but defining useful structures that guide knowledge definition.

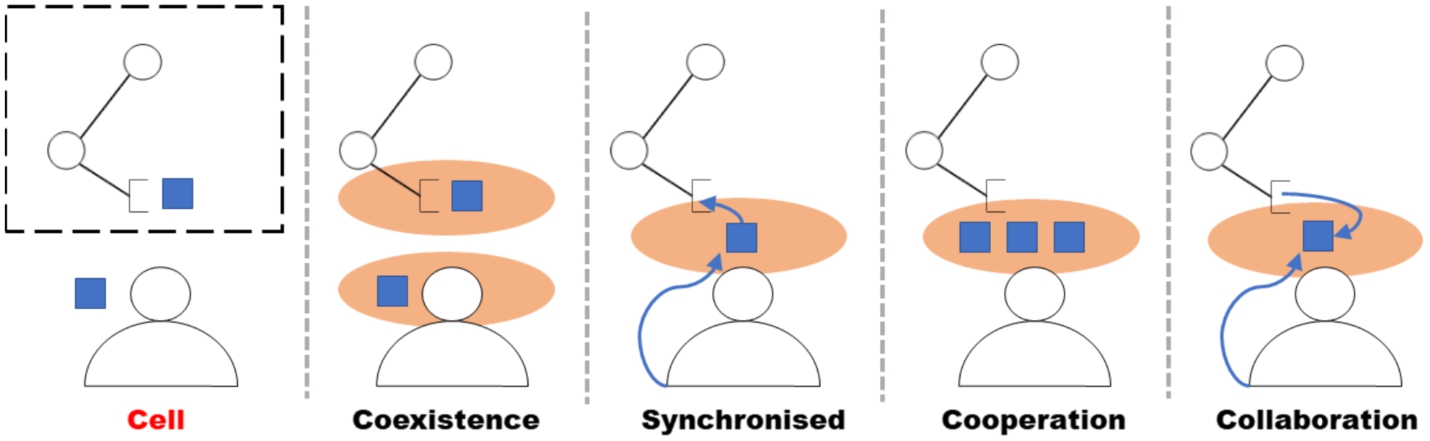

Ontological patterns in this case characterize typical and/or recurrent associations between tasks and functions. Namely, they define structures describing typical collaborative behaviors of human workers and robots in production scenarios. SOHO introduces HRC ontological patterns by taking into account interaction schema known in the literature [44]. First, SOHO defines the concept

Each

General structure of an HRC cell and types of collaboration modalities as defined in [59]: (i) Coexistence/Independent; (ii) Synchronised/Sequential; (iii) Cooperation/Simultaneous; (iv) Collaboration/Supportive.

SOHO thus defines four types of

An interaction modality of type

An interaction modality of type

An interaction modality of type

According to these four interaction modalities, SOHO defines four types of

A SOHO-compliant knowledge base (ABox) characterizes an HRC scenario from different perspectives. The use of semantic technologies based on RDFS [29], OWL [3] and SPARQL [70] supports accessibility and interoperability between knowledge and production-related processes. We are especially interested in showing how the proposed semantics and related knowledge bases contribute to enhancing the awareness, flexibility, and autonomy of collaborative robots. This section shows in detail how knowledge is used to automate the synthesis of task planning models and coordinate human and robot operations through deliberative plan-based control [41,48]. A knowledge extraction procedure bridges the gap between knowledge representation and task planning for robot control, supporting the realization of a cognitive “perceive, reason, act” loop. It is worth noticing that SOHO is planning agnostic. The defined knowledge bases therefore describe HRC scenarios in general terms, without considering the particular task planning formalism used for the actual coordination. This is a key point to “standardize” production knowledge and thus realize general services that can be used and combined with different control technologies (at different levels of abstraction).

Task planning generally relies on AI Planning and Scheduling technologies [33,36,39,60] allowing robots (or, more in general, artificial agents) to autonomously synthesize and execute plans that achieve some desired goal. Such plans are generally seen as sequences of actions to be executed starting from an initial state. Several planning formalisms exist in the literature, each supporting different reasoning capabilities, e.g., causal reasoning [36,45,60], numeric and temporal reasoning [26,39] or hierarchical reasoning [10,64]. HRC requires reasoning on the simultaneous execution of human and robot actions, taking into account different qualities of the resulting collaborative processes, e.g., cycle time, safety, and idle time of the robot. Furthermore, the human introduces a significant source of uncertainty from a control perspective. A plan-based controller should properly deal with this level of uncertainty in order to synthesize plans that are sufficiently robust at execution time [62,96].
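To make two of the plan qualities mentioned above concrete, the following minimal sketch computes cycle time and agent idle time for a small plan with concurrent human and robot actions. The `Action` type, the action names, and the timings are hypothetical and serve only as an illustration; they are not taken from the pilot scenarios.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    agent: str   # illustrative labels: "human" or "robot"
    name: str    # hypothetical action name
    start: int   # seconds from plan origin
    end: int


def cycle_time(plan):
    """Makespan of the plan: latest end minus earliest start."""
    return max(a.end for a in plan) - min(a.start for a in plan)


def idle_time(plan, agent):
    """Time within the cycle in which `agent` executes no action.

    Assumes that the actions of a single agent never overlap.
    """
    busy = sum(a.end - a.start for a in plan if a.agent == agent)
    return cycle_time(plan) - busy


plan = [
    Action("robot", "pick_door", 0, 10),
    Action("robot", "hold_door", 10, 30),
    Action("human", "fix_door", 12, 30),   # runs while the robot holds the door
    Action("robot", "release", 32, 36),
]

print(cycle_time(plan), idle_time(plan, "robot"), idle_time(plan, "human"))
```

A planner optimizing a collaborative process would trade off such measures against each other; the sketch only shows how they can be read from a plan.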

For these reasons, this work specifically considers the timeline-based planning formalism and proposes a knowledge extraction procedure mapping production knowledge to timeline-based specifications. The formalism has been successfully applied in many real-world scenarios e.g., [20,50,63,72] and is quite expressive supporting concurrency, durative actions, (flexible) time as well as numeric and temporal constraints. The work [24] formalizes timeline-based planning by introducing the notions of temporal uncertainty and controllability issues [23]. Timelines have been applied to HRC thanks to the capabilities of dealing with human behavioral uncertainty [35,69]. Before entering into the details of the developed knowledge extraction procedure, the next sub-section briefly introduces the main concepts regarding timelines, as introduced in [24].

Plan-based control through timeline-based planning and execution

A timeline-based planning specification describes valid temporal behaviors of a number of domain features to be controlled. The planning process consists in synthesizing valid flexible behaviors (i.e., timelines) describing how these features should evolve over time to achieve some given objectives (i.e., which states or actions each feature assumes or executes, and when). According to [24], state variables model domain features by specifying valid temporal behaviors in terms of allowed timed sequences of states/actions (generally denoted as state variable values).

A State Variable is a tuple V is a set of values

Controllability properties characterize the execution of SVs’ values with respect to the dynamics of the environment.

A value Controllable ( Partially-controllable ( Uncontrollable (

Information about controllability and temporal flexibility is crucial to solve planning problems while dealing with temporal uncertainty to support robust plan execution [19,62,95].
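As an illustration of the notions introduced so far, a state variable can be sketched as a set of values with allowed transitions, duration bounds, and a controllability tag per value. This is a simplified reading under stated assumptions, not the formal definition of [24]: the class and field names are hypothetical, and tagging the human value `Screw` as uncontrollable is an assumption made only for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Controllability(Enum):
    CONTROLLABLE = "c"
    PARTIALLY_CONTROLLABLE = "pc"
    UNCONTROLLABLE = "u"


@dataclass
class StateVariable:
    name: str
    transitions: dict      # value -> set of allowed successor values
    durations: dict        # value -> (min_duration, max_duration)
    controllability: dict  # value -> Controllability

    def accepts(self, timed_sequence):
        """Check a sequence of (value, duration) pairs against the constraints."""
        for i, (value, duration) in enumerate(timed_sequence):
            if value not in self.durations:
                return False
            lower, upper = self.durations[value]
            if not lower <= duration <= upper:
                return False
            if i + 1 < len(timed_sequence):
                successor = timed_sequence[i + 1][0]
                if successor not in self.transitions.get(value, set()):
                    return False
        return True


# Hypothetical human state variable with two values.
human = StateVariable(
    name="Human",
    transitions={"Idle": {"Screw"}, "Screw": {"Idle"}},
    durations={"Idle": (1, 100), "Screw": (5, 20)},
    controllability={
        "Idle": Controllability.CONTROLLABLE,
        "Screw": Controllability.UNCONTROLLABLE,  # assumption for illustration
    },
)

print(human.accepts([("Idle", 3), ("Screw", 10), ("Idle", 2)]))
```

The controllability tags are not used by `accepts`; they are the information a planner or executive would consult to decide which durations it can choose and which it can only observe.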

A flexible timeline for a state variable

If

A token

A timeline

Synchronization rules specify additional constraints that are necessary to synthesize timelines that achieve desired objectives (e.g., planning goals).

A synchronization rule has the form

Synchronization rules with the same trigger are treated as disjunctions and represent alternative constraints that should hold between different sets of token variables.
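The disjunctive reading of rules sharing a trigger can be sketched as follows. The representation is hypothetical: a rule is reduced to a trigger (a variable/value pair) and a body given as a predicate over the plan, and the only temporal relation shown is interval containment. Token and value names are illustrative.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Token:
    variable: str
    value: str
    start: int
    end: int


@dataclass(frozen=True)
class Rule:
    trigger: tuple   # (variable, value) activating the rule
    body: Callable   # (trigger_token, plan) -> bool


def contained(variable, value):
    """Body: some token of (variable, value) lies within the trigger interval."""
    def check(trigger, plan):
        return any(
            t.variable == variable and t.value == value
            and trigger.start <= t.start and t.end <= trigger.end
            for t in plan
        )
    return check


def satisfied(plan, rules):
    """Each trigger token must satisfy at least one rule sharing its trigger."""
    for token in plan:
        alternatives = [r for r in rules if r.trigger == (token.variable, token.value)]
        if alternatives and not any(r.body(token, plan) for r in alternatives):
            return False
    return True


# Two alternative rules for the same trigger: either agent may implement the task.
rules = [
    Rule(("Task", "PickPlace"), contained("Robot", "pick_place")),
    Rule(("Task", "PickPlace"), contained("Human", "pick_place")),
]
plan_robot = [Token("Task", "PickPlace", 0, 20), Token("Robot", "pick_place", 5, 15)]
plan_human = [Token("Task", "PickPlace", 0, 20), Token("Human", "pick_place", 2, 18)]
plan_unassigned = [Token("Task", "PickPlace", 0, 20)]

print(satisfied(plan_robot, rules), satisfied(plan_human, rules), satisfied(plan_unassigned, rules))
```

Both assignments are accepted because the two rules are alternatives, while a plan that leaves the task without any implementing token violates the trigger.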

A planning problem then consists of a set of partially instantiated timelines that specify known facts about the initial state of domain features (i.e., tokens specifying the values assumed by each state variable at plan origin) and goals constraining their temporal evolution (i.e., tokens specifying the desired values that one or more state variables should assume during certain temporal intervals). A planning process should synthesize valid and complete temporal behaviors of all (controllable) state variables (i.e., timelines) such that all duration constraints, value transition constraints and temporal constraints of applied synchronization rules are satisfied.

The objective of the knowledge extraction procedure is to synthesize a valid timeline-based specification to coordinate human and robot agents through task planning. The procedure relies on SOHO to extract information from the knowledge base and instantiate timeline-based structures. Two types of structures compose a timeline-based model: (i) state variables; and (ii) synchronization rules. State variables describe the possible behaviors of each modeled domain feature individually (local perspective). Synchronization rules constrain such behaviors to coordinate state variables and carry out complex tasks.

Given these structures, it is necessary to reason about how to organize the task planning model in order to coordinate domain entities correctly (e.g., the human and the robot in HRC scenarios). A number of modeling decisions are necessary concerning the number of state variables (i.e., which and how many domain features must be modeled), and the number of decomposition and temporal constraints (i.e., which and how many synchronization rules must be modeled to effectively coordinate state variables).

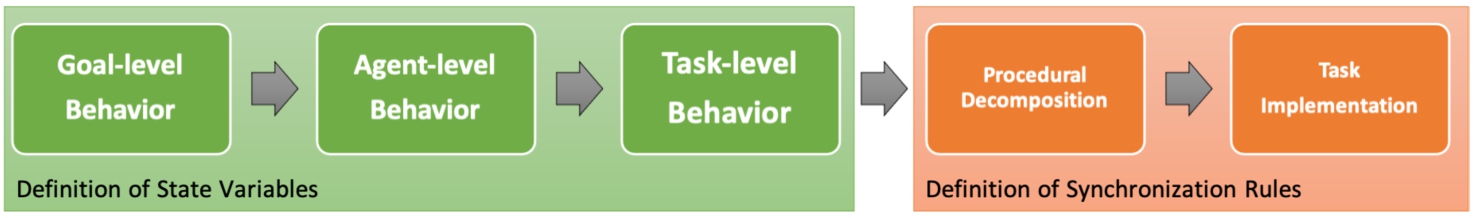

The modeling of an effective task-planning domain is not trivial and should follow a number of well-defined steps. Broadly speaking we define a timeline-based model of an HRC scenario following a hierarchical decomposition methodology [35,69]. We define a number of logical state variables modeling production goals and production tasks that could be performed over time. A number of state variables model the low-level operations that agents could perform over time. A number of synchronization rules describe how production goals are decomposed into increasingly simpler tasks and how simple (or primitive) tasks are correlated with the low-level operations that modeled agents can perform over time. This methodology has been implemented in a knowledge extraction procedure capable of generating timeline-based models from SOHO-compliant production knowledge. Figure 5 shows the general structure of the developed procedure.

Knowledge extraction pipeline for the automatic generation of timeline-based planning models.

The procedure takes as input production knowledge defined according to SOHO and generates as output a complete timeline-based model for task planning. It consists of a number of knowledge extraction steps grouped into two macro-steps. The first macro-step comprises the steps needed to generate the state variables of the timeline-based model. The second macro-step comprises the steps needed to generate the synchronization rules.

State variables model at different levels of abstraction the tasks or low-level operations that could be executed in an HRC scenario to support production. The step Goal-level Behavior extracts knowledge useful to define the goal-level state variable

The step Agent-level Behavior extracts knowledge useful to generate the state variable defining the low-level operations that agents can execute. The procedure would generate a state variable for each individual of

It is worth noticing that values of the human state variable

The step Task-level Behavior then extracts knowledge useful to generate the state variables defining the tasks modeling the production procedures. This step first analyzes the knowledge by “navigating” the described production procedures through the property

When all the state variables have been generated, the procedure generates the synchronization rules that constrain their values. The step Procedural Decomposition analyzes the property

The (last) step Task Implementation extracts information about collaborative patterns in order to generate synchronization rules correlating values

This set of synchronization rules constrains possible task allocations and possible interactions between the worker and the robot. It is at this level that ontological patterns about known collaboration modalities (i.e., Equation (16), Equation (17), Equation (18) and Equation (19)) are used to define temporal constraints determining specific human-robot collaborative behaviors.
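The ordering of the five steps and the two macro-steps can be sketched as a small pipeline. This is only a structural illustration under strong assumptions: the knowledge base is a plain dictionary rather than an ontology, all keys, task names and rule encodings are hypothetical, and the task-level step is flattened into a single state variable.

```python
def goal_level_behavior(kb):
    """Goal-level state variable: one value per production goal."""
    return {"Goal": list(kb["goals"])}


def agent_level_behavior(kb):
    """One state variable per agent, listing its low-level operations."""
    return {agent: list(ops) for agent, ops in kb["agents"].items()}


def task_level_behavior(kb):
    """Task state variable (flattened here to one level for brevity)."""
    tasks = sorted({t for children in kb["decomposition"].values() for t in children})
    return {"Task": tasks}


def procedural_decomposition(kb):
    """Rules relating a goal or task to the tasks it decomposes into."""
    return [("decomposes", parent, child)
            for parent, children in kb["decomposition"].items()
            for child in children]


def task_implementation(kb):
    """Rules relating a primitive task to an agent operation implementing it."""
    return [("implements", task, agent, op)
            for task, options in kb["implementation"].items()
            for agent, op in options]


def generate_model(kb):
    # Macro-step 1: state variables.
    state_variables = {}
    for step in (goal_level_behavior, agent_level_behavior, task_level_behavior):
        state_variables.update(step(kb))
    # Macro-step 2: synchronization rules.
    rules = procedural_decomposition(kb) + task_implementation(kb)
    return {"state_variables": state_variables, "synchronization_rules": rules}


# Hypothetical toy knowledge base.
kb = {
    "goals": ["AssembleDoor"],
    "agents": {"Human": ["fix"], "Robot": ["pick_place"]},
    "decomposition": {"AssembleDoor": ["PlaceDoor", "FixDoor"]},
    "implementation": {
        "PlaceDoor": [("Robot", "pick_place")],
        "FixDoor": [("Human", "fix")],
    },
}
model = generate_model(kb)
print(model["state_variables"])
print(model["synchronization_rules"])
```

Keeping state variable generation strictly before rule generation mirrors the procedure: rules can only refer to values that already exist in the model.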

Generation of timeline-based structures in detail

This section describes in detail the implementation of the knowledge extraction steps of the methodology encoded with the pipeline of Fig. 5. Following the procedure, we show the auxiliary data structures created to support the methodology with the pieces of the generated timeline-based model. The knowledge base and the extraction procedure have been developed in Java using the open-source library Apache Jena.

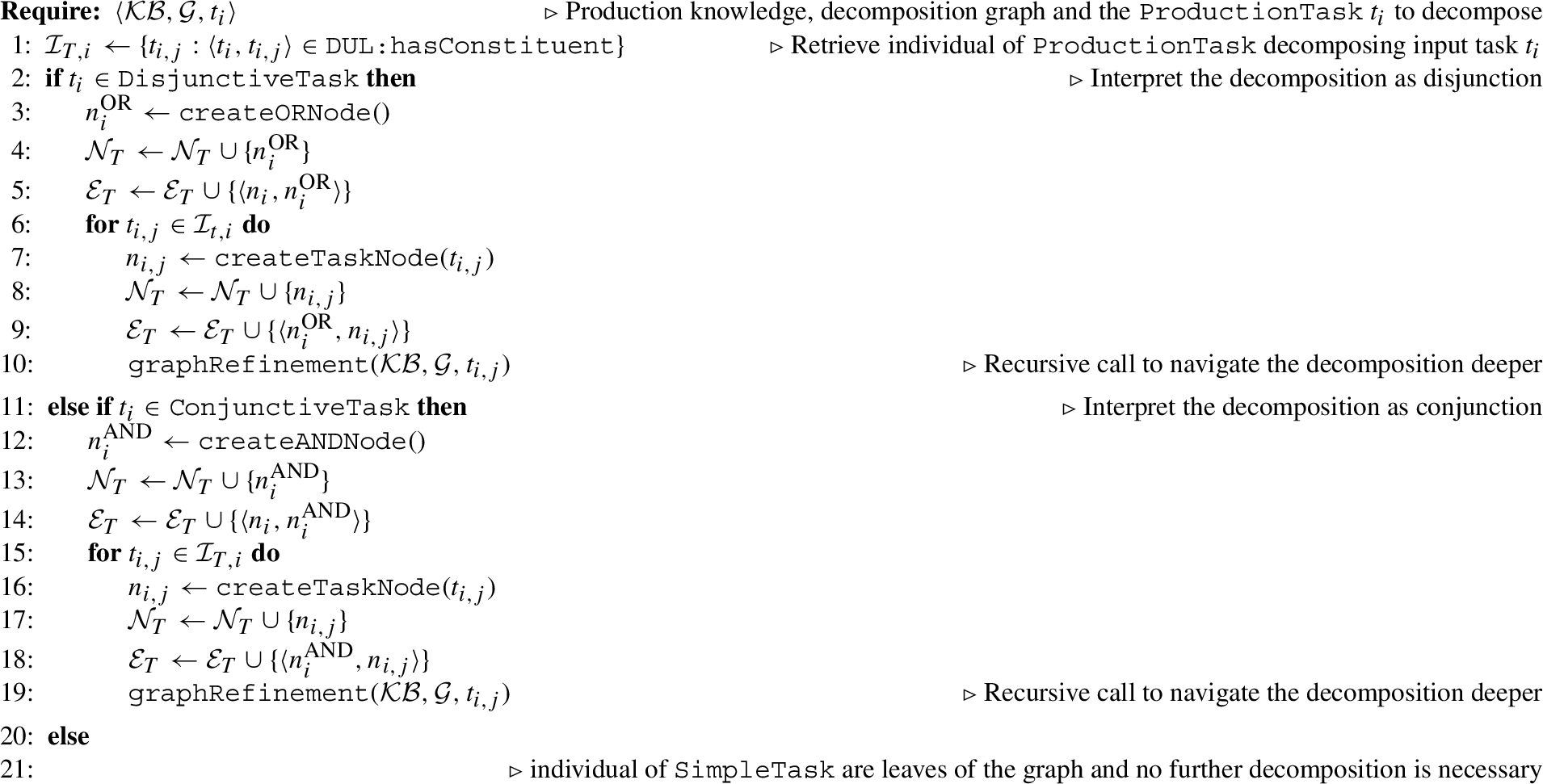

Algorithm 1 shows the implementation of the knowledge extraction pipeline of Fig. 5. Specifically, it takes as input a knowledge base

Procedure for the generation of a timeline-based model

Define state variables The procedure starts by generating the state variables modeling the temporal behaviors of domain features. The first state variable generated is the goal state variable

There is a default state

Going back to Algorithm 1, the procedure continues with the generation of the state variables of the agents of the scenario (row 3). In the specific case of HRC, two state variables are generated. One state variable describes the low-level operations the human can perform over time

Specifically, the set of values

Define task-level state variables The last set of state variables created by Algorithm 1 concerns the set of

The graph

Algorithm 4 shows how the graph

For each individual

In the first case, the procedure creates an OR node

In the second case, only one method

Algorithm 5 recursively refines the graph

If

If

If the task

Going back to Algorithm 1, the graph

The hierarchical layering of

Define synchronization rules The definition of the synchronization rules starts with defining the rules that constrain the decomposition of goals (i.e., values of

Algorithm 6 shows the procedure generating synchronization rules for the decomposition of a

In the case of a

In the case of a

Algorithm 7 generally describes the procedure that creates the synchronization rules constraining the implementation of

A task

A task

A task

A task

We assess SOHO and the developed timeline-based model generation procedure on a number of real HRC scenarios from the pilot use cases of the EU H2020 Sharework project.

The knowledge bases can be found on the GitHub repository of SOHO under the folder “instances”.

The reasoning mechanisms and the knowledge extraction procedure of Algorithm 1 have been developed in Java using Apache Jena.

Distribution ROS Melodic –

The automotive scenario

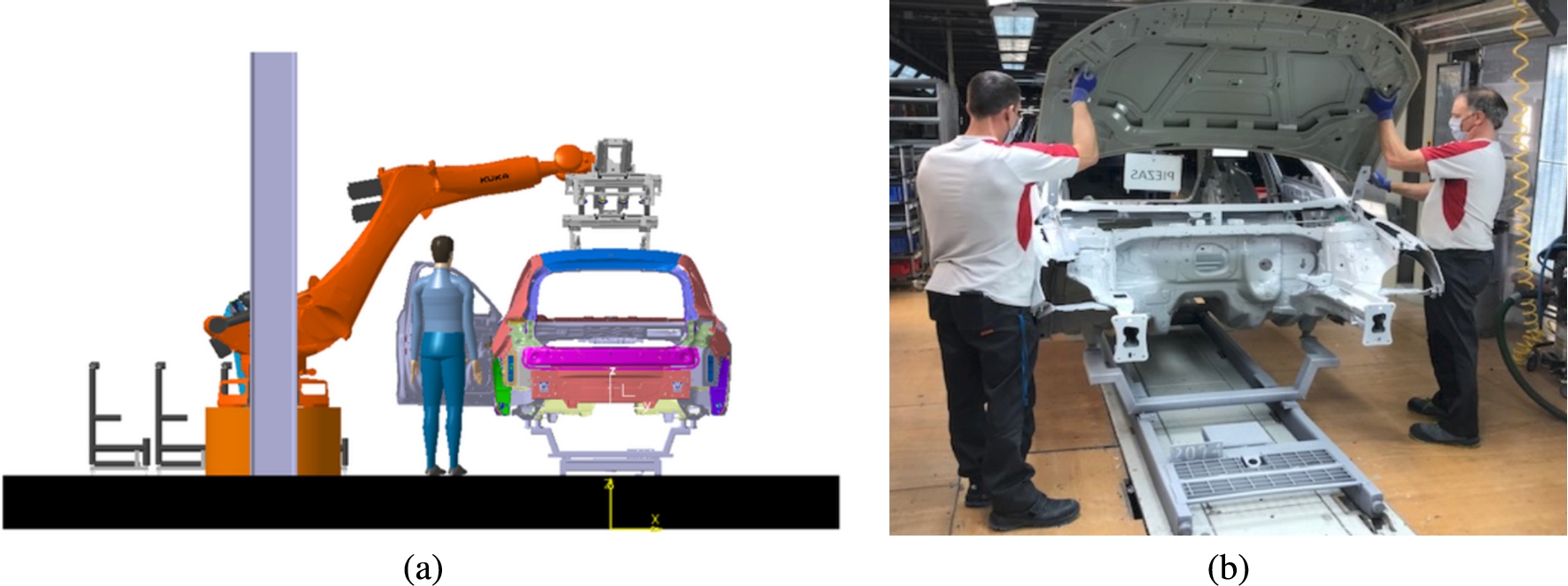

This scenario concerns a specific station of an assembly line of vehicles. The collaborative process specifically focuses on a door assembly task of chassis. The collaborative robot is in charge of moving and holding the heavy parts of the vehicle (i.e., pick-and-place of front and rear doors to be assembled on the chassis) while the human carries out assembly tasks in the same working area of the robot (i.e., fix the doors to the body of the vehicle). Figure 6 shows some pictures of the layout of the working area with the mount point of the front door on the chassis.

Design of the collaborative cell for the

This scenario is characterized by a flat production process where the human and the robot play different roles and carry out tasks autonomously but following a strict order. Door assembly is correctly performed only if the robot and the human execute their task at the correct time (e.g., the human cannot start her task if the robot does not place the door in the expected position). Roles are not interchangeable therefore it would not be possible to carry out a collaborative process without the correct coordination of the two actors. More specifically, the robot (a robotic arm) can perform only

All

The production process of this scenario is simple from a control perspective since the possible behaviors of the human and the robot are fixed and there is no need for optimization. It just requires the unfolding of a single

This scenario concerns the logistic station of a manufacturing system for electrical connectors. The workshop for the assembly of pallets and fixtures in load/unload stations is divided into two main areas: (i) a transporter panel buffer, where pallets are stored and moved; and (ii) some CNC (Computerized Numerical Control) machines, where the pallets are moved to perform the machining operations. In this scenario, operators are generally responsible for transporting pallets and components to be mounted in a tombstone that goes inside the Flexible Manufacturing System where each part is machined. The scenario is characterized by high variability of parts to be produced. Operators should therefore be highly trained in order to correctly perform the suitable assembly procedure for each product as well as the quality inspection on the pallets before/after machining. The collaborative robot is in charge of assisting operators when moving across the station, understanding the operators' behavior, and anticipating tasks in order to facilitate their work and speed up production, i.e., increase the throughput.

Structure of the shop-floor of the

Figure 7 shows some pictures of the layout of the logistic station. The working area is characterized by a central/shared conveyor where different types of products are loaded and processed in order to be machined. The worker and the robot (a UR10 robotic arm) are placed on two distinct sides of the conveyor and work simultaneously on the products. Products are placed and moved on the central conveyor which represents the shared working area where operations take place and where the human and the robot physically interact. The production process is characterized by different types of operations depending on the specific types of workpieces entering the collaborative cell. From a task planning perspective, the structure of the assembly/disassembly process is similar in all the cases, since the human and the robot can have the same capabilities (e.g., screw, unscrew, pick, place, etc.). Unlike the

The process consists in picking and placing workpieces transported by a pallet running over a conveyor. Each workpiece is placed over a pallet entering the conveyor from an initial position (

The replacement of a workpiece on a pallet is realized through pick & place operations that can be performed by both the human and the robot. The human or the robot

The integration of developed representation and planning capabilities contributes to the optimization and safe coordination of human and robot behaviors. The designed cognitive control approach would automatically optimize human and robot behaviors through the generated (timeline-based) task planning model. Similar to

This scenario takes into account the shop floor of a company offering differential and global solutions in power transmission and spraying components. This scenario considers a servo rotary table that is assembled in seven fixed assembly stations. In each station, there is an operator performing a specific task in the assembly process. All tasks are carried out manually, just using cranes and lifters to transport the heavy components from one station to another. The collaboration between the human and the robot concerns three out of seven tasks of the rotary table assembly process. In the current assessment, we specifically consider the bolt tightening and torque measuring task. Figure 8 shows the designed physical environment with the rotary table equipped with the collaborative robot (a UR10 robotic arm). The operator applies adhesives on the bolts of the rotary table to allow the robot to simultaneously determine their position and dimension through perception modules developed within the project. Information about detected bolts is then used by the robot to automatically screw them.

Working area of the

The developed cognitive approach supports a reactive behavior where the robot relies on perception capabilities [55] to autonomously recognize human tasks (i.e., “bolt placing” tasks) and act accordingly. The developed knowledge representation capabilities indeed allow the robot to recognize new events concerning the placement of bolts by the human and interpret these events as signals triggering the execution of robot tasks (i.e.,

The described reactive behavior represents the actual way the developed knowledge representation and reasoning modules have been deployed into this pilot case [88]. To evaluate the representation capabilities of the ontological model and the task planning model, we here model the whole production process assuming a deliberative approach similar to other use cases. Namely, we do not consider perception outcomes and assume the planner should synthesize screwing tasks of all bolts of the workpiece. The process thus consists of screwing a number

A high-level production goal

This scenario takes into account the shop floor of a railway transportation company supplying rolling stock, services, and system infrastructure. The workshop is composed of six main stations, each one dedicated to a specific set of operations concerning the assembly of trains. The project specifically focuses on the pre-assembly process of tramway windows and door frames. Among the tasks involved in the considered processes, riveting represents a repetitive and demanding task for human workers. It consists of the insertion of rivets in drilled holes along the metal pieces of window frames. This task is especially critical from a safety perspective since it may cause significant injuries after prolonged use of the riveting tool, which weighs up to 5 kg.

Design of the collaborative cell of the

Figure 9 shows the physical layout of the shop floor and the structure of the window frames that are the target of the considered production process. The introduction of collaborative robots into the production line is designed to relieve human workers from physically demanding tasks such as the riveting task, in order to improve their working conditions and reduce the risk of injuries. A collaborative robot is thus supposed to work close to the window frame of Fig. 9(b) and tightly collaborate with the human worker to carry out the pre-assembly tasks. The collaboration takes place within the riveting task. The human operator is in charge of spreading the silicone over the corners of the frame structure; then, the robot inserts the rivets using a riveting tool. It can be observed that the riveting task entails a synchronous behavior of the two actors since the robot can insert the rivets only after the worker has correctly applied the silicone. Similar to the screwing tasks of

Also in this case there are no disjunctive tasks to be considered in the decomposition since the roles and responsibilities of the worker and the robot are quite fixed. The key aspect is the use of

This scenario considers a general collaborative assembly of a compound workpiece. The layout of the work cell is characterized by a shared central space where the workpiece is placed and where the human and the robot simultaneously carry out assembly operations. Figure 10 shows the layout of the designed collaborative environment and the layout of the mosaic. The mosaic is modeled as a

Configuration of the

The collaborative process consists of the execution of a set of pick & place operations. Each pick & place is performed by a single agent autonomously, but their execution (and assignment) should satisfy some physical constraints. Pick & place operations target colored objects placed in different areas: operations on objects in the blue area (i.e., blue objects for short) can be performed by both the human and the robot; operations on objects in the orange area (i.e., orange objects for short) can be performed by the robot only; operations on objects in the white area (i.e., white objects for short) can be performed by the human only. Although this scenario does not correspond to a concrete production process of Sharework, it describes a highly flexible collaborative process, representing alternative behaviors of the robot and the human in the knowledge and the task planning model [35].
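The color-based constraints can be read as a table of admissible agents per object color. The following minimal sketch checks a candidate task assignment against that table; the cell identifiers and function names are hypothetical, while the color rules follow the description above.

```python
# Agents allowed to handle an object, by color.
ALLOWED = {
    "blue": {"human", "robot"},
    "orange": {"robot"},
    "white": {"human"},
}


def candidate_agents(cells):
    """Map each cell to the sorted list of agents that may handle it."""
    return {cell: sorted(ALLOWED[color]) for cell, color in cells.items()}


def valid_assignment(cells, assignment):
    """True if every cell is assigned to an agent allowed for its color."""
    return all(assignment.get(cell) in ALLOWED[color] for cell, color in cells.items())


# Hypothetical mosaic fragment: cell identifier -> color.
cells = {"A1": "blue", "A2": "orange", "B1": "white"}

print(candidate_agents(cells))
print(valid_assignment(cells, {"A1": "robot", "A2": "robot", "B1": "human"}))
print(valid_assignment(cells, {"A1": "human", "A2": "human", "B1": "human"}))
```

Only the blue objects leave the planner a real choice, which is where alternative (disjunctive) decompositions arise in the planning model.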

The human and the robot play the same role and carry out tasks autonomously without a specific order. The process can be seen as the problem of moving some

Given the knowledge bases of the different scenarios, this section assesses the technical feasibility of the implemented representation and reasoning processes. In particular, it evaluates the validity of the semantic models and the resulting timeline-based specifications. For each scenario, it reports the time needed for the synthesis of the task planning models in order to measure the introduced production latency. Table 1 summarizes and compares data about the defined knowledge bases, the characteristics of the synthesized planning models, and the reasoning time of Algorithm 1. As a general comment, the obtained knowledge bases have the same number of defined classes and properties since they share the same ontological framework. However, they differ in the number of individuals and in the number of synchronization rules, temporal constraints, and state variable values of the resulting timeline-based planning models.

Data about the knowledge quality, size of knowledge bases and planning models, and performance of Algorithm 1. Coherence and consistency of the knowledge bases have been evaluated using the Protégé debugging tool OntoDebug (https://protegewiki.stanford.edu/wiki/OntoDebug).

The structure and the size of the obtained planning models indeed differ significantly from each other (see “Planning Model” columns in Table 1) leading to different model generation times. For example, the planning model generated for

The results have been obtained by configuring the Apache Jena framework with an ontological model compliant with

More specifically, we have defined a basic ontological model with

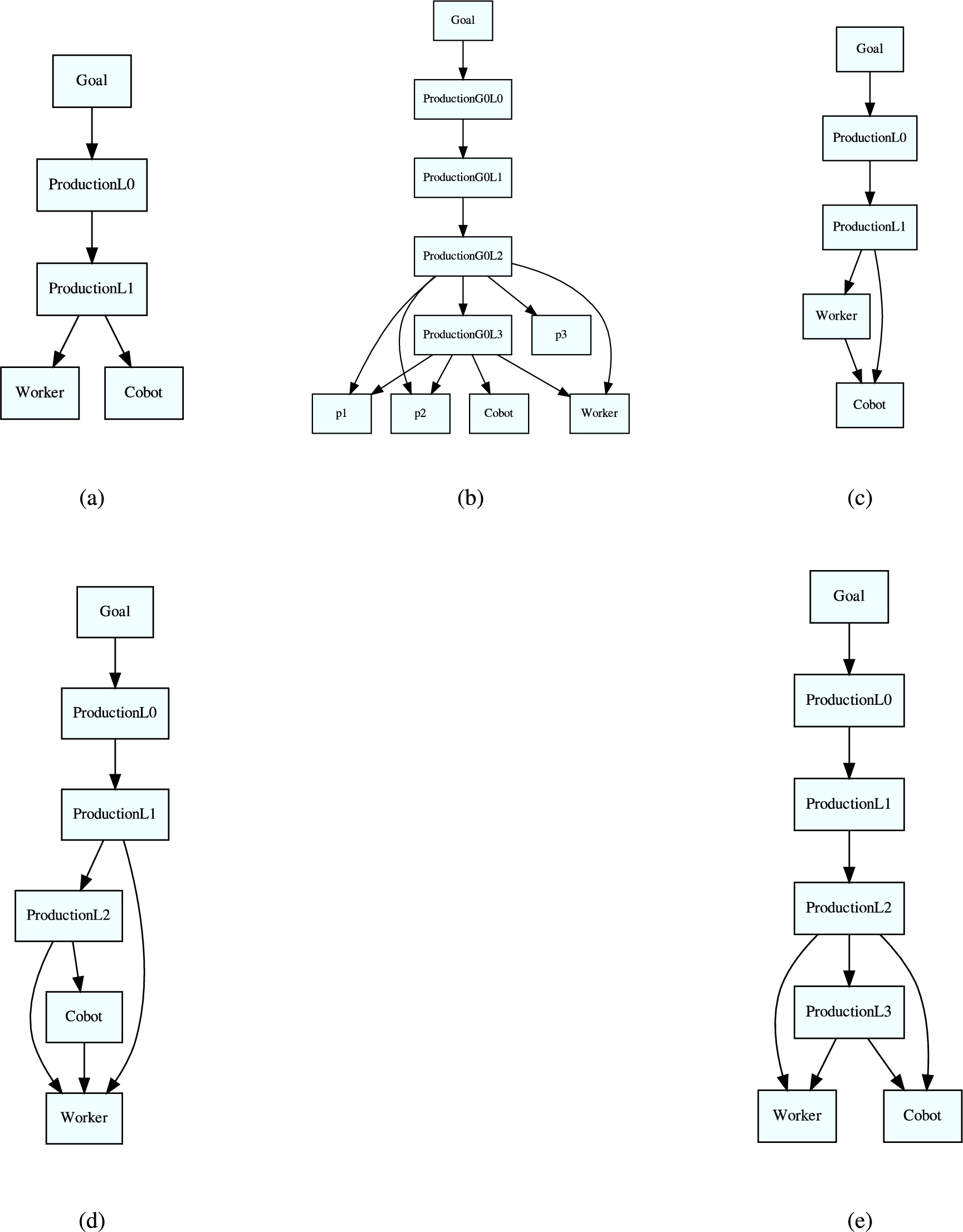

Hierarchical structure of the generated planning models. The two graphs show the inferred hierarchical relationships between the state variables generated for: (a)

Each level represents a specific abstraction level of the defined hierarchical production procedures. The highest level of the hierarchy characterizes the high-level production goals that are incrementally decomposed into lower-level tasks (i.e., production operations). The lowest level of the hierarchy characterizes the tasks the worker and the robot can perform to support the associated production procedures. Following this hierarchy, as shown in Section 4.2.2, each hierarchy level is associated with a dedicated state variable. Values of such state variables describe tasks that should be performed at the associated abstraction level of the production procedure. State variables of the last hierarchical layer describe the concrete operations (i.e., functions) the worker and the robot can perform over time and thus define their possible behaviors within a specific production scenario.
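The association between hierarchy levels and state variables can be sketched by computing the depth of each task in the decomposition and grouping tasks by depth. This is an assumption-laden illustration: it uses a breadth-first traversal that assigns each task its shallowest depth, which may differ from the layering computed by the procedure of the paper, and all task and variable names are hypothetical.

```python
from collections import deque


def levels(decomposition, root):
    """Breadth-first depth of each task reachable from `root`."""
    depth = {root: 0}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for child in decomposition.get(node, []):
            if child not in depth:  # keep the shallowest depth found
                depth[child] = depth[node] + 1
                queue.append(child)
    return depth


def state_variables_by_level(decomposition, root):
    """One state variable per hierarchy level; its values are the tasks of that level."""
    grouped = {}
    for task, d in levels(decomposition, root).items():
        grouped.setdefault(d, []).append(task)
    return {f"Level{d}": sorted(tasks) for d, tasks in sorted(grouped.items())}


# Hypothetical decomposition of a production goal.
decomposition = {
    "AssembleTable": ["TightenBolts"],
    "TightenBolts": ["ScrewBolt1", "ScrewBolt2"],
}

print(state_variables_by_level(decomposition, "AssembleTable"))
```

In this toy case the goal sits alone at level 0, and the two screwing tasks share the state variable of level 2.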

Given a timeline-based model, plans specify for each state variable of the model sequences of tokens determining the production tasks performed and the low-level production operations carried out by the worker and the robot. Such sequences of tokens (i.e., timelines), as shown in other works [35,69], describe the planned decomposition of modeled production procedures and planned temporal behaviors of collaborating actors (i.e., the worker and the robot). Following [23,24], each token instantiates a value of a state variable to a flexible execution interval (the intervals associated with each token represent, respectively, the end-time interval and the duration interval of the execution). Timelines, therefore, are said to encapsulate envelopes of temporal behaviors. One interesting aspect to point out is how the defined ontological patterns, which formally characterize the representation structure of collaborative tasks, entail a clear and well-defined structure of the synchronization constraints that implement the corresponding collaborative behavior.
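The idea of a timeline as an envelope of temporal behaviors can be illustrated with tokens carrying an end-time interval and a duration interval. The sketch below checks whether a concrete schedule (one end time per token) falls inside the envelope. It assumes, for simplicity, that the timeline starts at the plan origin 0 and that tokens follow each other without gaps; the names and bounds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlexibleToken:
    value: str
    end: tuple       # (earliest_end, latest_end)
    duration: tuple  # (min_duration, max_duration)


def admits(timeline, end_times):
    """True if the concrete end times lie within the envelope of the timeline."""
    if len(end_times) != len(timeline):
        return False
    previous_end = 0  # plan origin (assumption)
    for token, end in zip(timeline, end_times):
        duration = end - previous_end
        if not token.end[0] <= end <= token.end[1]:
            return False
        if not token.duration[0] <= duration <= token.duration[1]:
            return False
        previous_end = end
    return True


# Hypothetical flexible timeline of a robot.
timeline = [
    FlexibleToken("Idle", end=(5, 10), duration=(5, 10)),
    FlexibleToken("Screw", end=(12, 25), duration=(7, 15)),
]

print(admits(timeline, [8, 18]))   # both tokens within bounds
print(admits(timeline, [8, 12]))   # Screw would last only 4 time units
```

Many different schedules are admitted by the same timeline, which is what allows an executive to absorb delays of the human without replanning.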

The developed representation and reasoning capabilities impact different aspects concerning the design and deployment of collaborative robots. The proposed approach would facilitate modeling, maintenance, and adaptation of control dynamics to the different (evolving) requirements of industrial scenarios. Table 2 lists the aspects concerning HRC production systems that are supported by the proposed approach. The table in particular shows the relevance of each aspect taking into account the production and interaction features of each pilot. In this way, it points out the flexibility of the approach according to the requirements of different scenarios.

Aspects concerning the design and deployment of collaborative robots that are supported by the proposed ontology-based approach, and their impact on the considered HRC scenarios


H = high relevance, M = medium relevance, L = low relevance.

The ontology proposes a kind of standardization of production knowledge and describes collaborative scenarios based on a clear and well-structured formalism. Defined concepts and properties characterize production requirements, interacting features, and skills of robotic and human actors according to clear semantics. The assessment shows that SOHO is suitable to describe both scenarios requiring simple and strict interactions between e.g.,

The integrated representation of the human factor allows SOHO and the developed ontology-based control approach to contextualize production dynamics to the known features and skills of human workers. This knowledge combined with task planning supports the synthesis of personalized collaborative plans adapted to the expected performance (e.g., expected average time of task execution) and expertise (e.g., worker experience) [92]. This knowledge and related reasoning capabilities are especially important in scenarios characterized by a high variety of workpieces and operations e.g.,

Another important aspect is the enhanced awareness of robot controllers. Developed knowledge representation and reasoning capabilities allow robot controllers to build and maintain an updated description of the production dynamics and observed state of the production environment. The semantics of SOHO in particular supports the abstraction and contextualization of sensing data that would be useful to recognize relevant production situations and proactively trigger robot actions. Considering for example the scenario

More in general the developed approach supports a modular description of robot capabilities and production requirements that can be easily extended and refined over time. For example, the knowledge base can be enriched with additional robot capabilities, human skills, production goals, and procedures. This new knowledge would automatically be contextualized with respect to production requirements and thus integrated into the reasoning and task-planning processes. From the robot perspective, the ontology-based approach supports modular programming allowing roboticists to focus on the definition of new capabilities/skills (i.e.,

SOHO (Sharework Ontology for Human-Robot Collaboration) is a novel domain ontology for Human-Robot Collaboration defined within the Sharework H2020 research project. It formally characterizes HRC manufacturing scenarios by considering different perspectives. Indeed, its main original feature relies on the use of a context-based approach to ontology design, supporting the flexible representation of collaborative production processes.

This paper proposes an extension of SOHO by defining ontology design patterns that formally characterize collaboration dynamics that are typical in many Human-Robot Collaboration manufacturing scenarios. Furthermore, we have defined a general knowledge extraction procedure that relies on the semantics proposed by SOHO to analyze production knowledge and automatically synthesize timeline-based plan-based controllers that are suitable to effectively coordinate human and robot behaviors [35,69]. An experimental evaluation of the developed representation and reasoning technology shows the efficacy of properly capturing the complexity of real industrial collaborative scenarios and the capability of automatically “compiling” such knowledge into suitable planning domains.

Future research directions will focus on further extensions of SOHO to better characterize the human factors and support the representation of preferences, expertise levels, and physical and cognitive conditions of human workers, which are crucial to supporting advanced personalization and finer adaptation features in collaborative processes. In addition, we plan to integrate the developed ontology-based representation and reasoning into knowledge engineering tools to support domain experts in the design of collaborative processes as well as in the deployment of AI-based task planning technologies for the coordination of collaborative cells [38].

Footnotes

Acknowledgements

Authors are partially supported by the European Commission within the Sharework project (H2020 Factories of the Future GA No. 820807). Authors are grateful to EURECAT, STIIMA-CNR, STAM, MCM, CEMBRE, Goizper and ALSTOM, partners in Sharework, for sharing their knowledge and experience about the productive scenarios that inspired our work. Authors are also partially supported by the EU project TAILOR “Foundations of Trustworthy AI - Integrating Learning, Optimisation and Reasoning” G.A. 952215.