Abstract

Advocates for Neuro-Symbolic Artificial Intelligence (NeSy) assert that combining deep learning with symbolic reasoning will lead to stronger AI than either paradigm on its own. As successful as deep learning has been, it is generally accepted that even our best deep learning systems are not very good at abstract reasoning. And since reasoning is inextricably linked to language, it makes intuitive sense that Natural Language Processing (NLP) would be a particularly well-suited candidate for NeSy. We conduct a structured review of studies implementing NeSy for NLP, with the aim of answering the question of whether NeSy is indeed meeting its promises: reasoning, out-of-distribution generalization, interpretability, learning and reasoning from small data, and transferability to new domains. We examine the impact of knowledge representation, such as rules and semantic networks, language structure and relational structure, and whether implicit or explicit reasoning contributes to higher promise scores. We find that systems where logic is compiled into the neural network lead to the most NeSy goals being satisfied, while other factors, such as knowledge representation or type of neural architecture, do not exhibit a clear correlation with goals being met. We find many discrepancies in how reasoning is defined, specifically in relation to human-level reasoning, which impact decisions about model architectures and drive conclusions which are not always consistent across studies. Hence we advocate for a more methodical approach to the application of theories of human reasoning as well as the development of appropriate benchmarks, which we hope can lead to a better understanding of progress in the field. We make our data and code available on GitHub for further analysis.

Keywords

Introduction

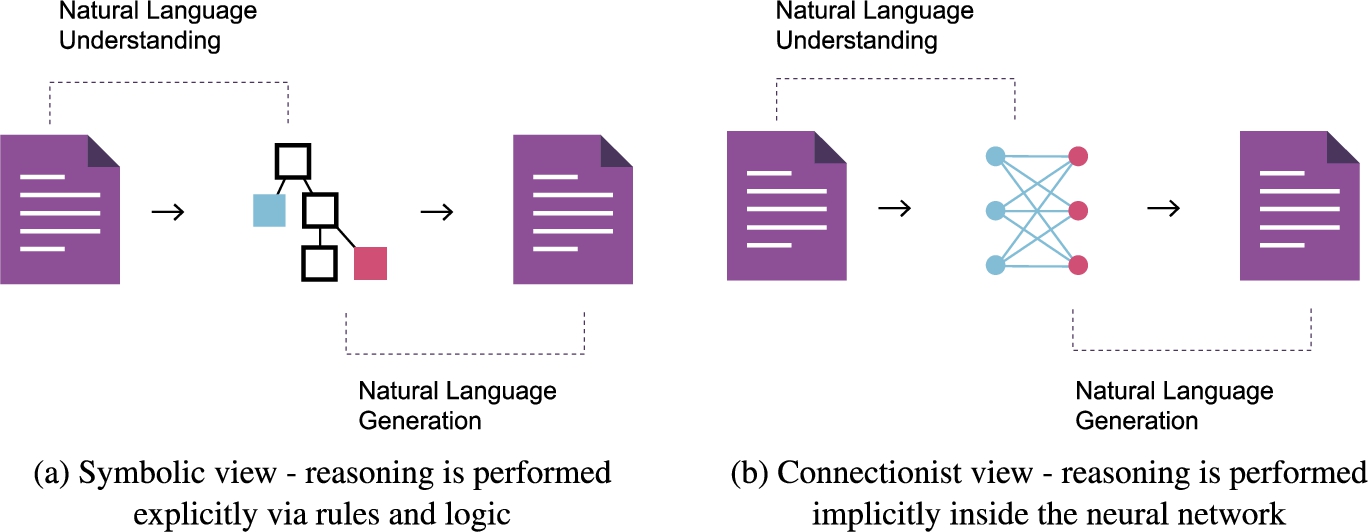

At its core, Neuro-Symbolic AI (NeSy) is “the combination of deep learning and symbolic reasoning” [51]. The goal of NeSy is to address the weaknesses of each of symbolic and sub-symbolic (neural, connectionist) approaches while preserving their strengths (see Fig. 1). Thus NeSy promises to deliver a best-of-both-worlds approach which embodies the “two most fundamental aspects of intelligent cognitive behavior: the ability to learn from experience, and the ability to reason from what has been learned” [51,145].

Remarkable progress has been made on the learning side, especially in the area of Natural Language Processing (NLP) and in particular with deep learning architectures such as the Transformer [37,147]. However, these systems display certain intrinsic weaknesses which some researchers [104,113] argue cannot be addressed by deep learning alone; they contend that even the most basic reasoning requires rich representations which enable precise, human-interpretable inference via mathematical logic (see also Besold et al. [12], pp. 17–18, for additional context).

Symbolic vs sub-symbolic strengths and weaknesses. Based on the work of Garcez et al. [50].

Recently, a discussion between Gary Marcus and Yoshua Bengio at the 2019 Montreal AI Debate prompted some passionate exchanges in AI circles, with Marcus arguing that “expecting a monolithic architecture to handle abstraction and reasoning is unrealistic”, while Bengio defended the stance that “sequential reasoning can be performed while staying in a deep learning framework” [11]. Spurred by this discussion, and almost ironically, by the success of deep learning (and ergo, the clarity into its limitations), research into hybrid solutions has seen a dramatic increase – Fig. 2. At the same time, discussion in the AI community has culminated in “violent agreement” [80] that the next phase of AI research will be about “combining neural and symbolic approaches in the sense of NeSy AI [which] is at least a path forward to much stronger AI systems” [123]. Much of this discussion centers around the ability (or inability) of deep learning to reason, and in particular, to reason outside of the training distribution. Indeed, at IJCAI 2021, Yoshua Bengio affirms that “we need a new learning theory to deal with Out-of-Distribution generalization” [9]. Bengio’s talk is titled “System 2 Deep Learning: Higher-Level Cognition, Agency, Out-of-Distribution Generalization and Causality.” Here, System 2 refers to the System 1/System 2 dual process theory of human reasoning explicated by psychologist and Nobel laureate Daniel Kahneman in his 2011 book “Thinking, Fast and Slow” [77]. AI researchers [6,51,87,96,104,152,164] have drawn many parallels between the characteristics of sub-symbolic and symbolic AI systems and human reasoning with System 1/System 2. Broadly speaking, sub-symbolic (neural, deep-learning) architectures are said to be akin to the fast, intuitive, often biased and/or logically flawed System 1. And the more deliberative, slow, sequential System 2 can be thought of as symbolic or logical. 
But this is not the only theory of human reasoning, as we will discuss later in this paper. It should also be noted that Kahneman himself cautioned against overreliance on the System 1/System 2 analogy in a follow-up discussion at the Montreal AI Debate 2 the following year, stating, “I think that this idea of two systems may have been adopted more than it should have been.”

Number of neuro-symbolic articles published since 2010, normalized by the total number of computer science articles published each year. The figure represents the unfiltered results from Scopus given the search keywords described in Section 5.2.
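The normalization described in this caption can be sketched as follows. This is a minimal illustration only: the function name `normalized_share` and all counts are placeholders introduced here, not the Scopus results behind the figure.

```python
def normalized_share(nesy_counts, total_counts):
    """Return, per year, NeSy articles as a fraction of all CS articles."""
    shares = {}
    for year, nesy in nesy_counts.items():
        total = total_counts[year]
        if total <= 0:
            raise ValueError(f"no total article count for {year}")
        shares[year] = nesy / total
    return shares


# Placeholder counts for illustration only (NOT the Scopus data).
nesy_counts = {2010: 50, 2015: 120, 2020: 400}
total_counts = {2010: 100_000, 2015: 150_000, 2020: 200_000}

shares = normalized_share(nesy_counts, total_counts)
for year in sorted(shares):
    print(year, f"{shares[year]:.4%}")
```

Dividing by the yearly total separates growth in neuro-symbolic work from the overall growth in computer science publications.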

“Language understanding in the broadest sense of the term, including question answering that requires commonsense reasoning, offers probably the most complete application area of neurosymbolic AI” [51]. This makes a lot of intuitive sense from a linguistic perspective. If we accept that language is compositional, with rules and structure, then it should be possible to obtain its meaning via logical reasoning. Compositionality in language was formalized by Richard Montague in the 1970s, in what is now referred to as

On the one hand, distributed representations are desirable because they can be efficiently processed by gradient descent (the backbone of deep learning). The downside is that the meaning embedded in a distributed representation is difficult if not impossible to decompose. So while a Large Language Model (LLM), a deep learning language model based on the principle of distributional semantics, may be very good at making certain types of predictions, it cannot be queried for answers not present in the training data by way of analogy or logic. We have also seen that even as these models get infeasibly large – the larger the model, the better the predictions [131] – they still fail on tasks requiring basic commonsense. The example in Fig. 3, given by Marcus and Davis in [105] is a case in point. In their now seminal paper

Third generation generative pre-trained transformer (GPT3) [20] text completion example. The prompt is rendered in regular font, while the GPT3 response is shown in bold. It is clear that GPT3 is incapable of commonsense.

On the other hand, traditional symbolic approaches have also failed to capture the essence of human reasoning. While we may not yet understand exactly how people reason, it is generally accepted that human reasoning is nothing like the rigorous mathematical logic where the goal is validity. Though not for lack of ambition – Socrates got himself killed trying to get people to reason with logic [46]. In the

The remainder of this manuscript is structured as follows. Section 2 offers a brief history of NLP in the context of reasoning. Several recent surveys and their contributions to NeSy are discussed in Section 3, and are intended as an introduction to the field. Our contribution is given in Section 4, which also details the goals of NeSy selected for this survey. Section 5 describes the research methods employed for searching and analysing relevant studies. In Section 6 we analyze the results of the data extraction, how the studies reviewed fit into Henry Kautz’s NeSy taxonomy [80], and we propose a simplified nomenclature for describing Kautz’s NeSy categories. Section 7 discusses the limitations and challenges of the reviewed implementations. Section 8 presents limitations of this work and future directions for NeSy in NLP, followed by the conclusions in Section 9.

The study of language and reasoning goes back thousands of years, but it was not until the 1960s that the first computational models were realized. The Association for Computational Linguistics (ACL)

Common natural language processing tasks [97].

One of the first NLP projects was a chatbot named ELIZA [155], written by Joseph Weizenbaum around 1965. Given a small hand-crafted set of rules, ELIZA was able to hold a conversation, albeit a superficial one, and gained tremendous popularity. Curiously, despite the program’s simplicity, those who interacted with it attributed human-like emotions to it. These early systems were based on pattern matching and small rule sets, and were very limited for obvious reasons. In the 1970s and 80s linguistically rich, logic-driven, grounded systems, largely influenced by Noam Chomsky’s

Areas of NLP which are said to benefit from this approach are ones which require some form of reasoning or logic. In particular, Natural Language Understanding (NLU), Natural Language Inference (NLI), and Natural Language Generation (NLG).

NLU takes as input unstructured text and produces output which can be reasoned over. NLG takes as input structured data and outputs a response in natural language.

Several recent surveys [6,12,50,51,87,123,152,163,164] cover neuro-symbolic architectures in detail. Our aim is not to produce another NeSy survey, but rather to examine whether the promises of NeSy in NLP are materializing. However, for completeness, and by way of introduction to the subject, we briefly summarize each of these surveys and provide references for the architectures under review.

In response to recent discussions in the AI community and the resurgence of interest in NeSy AI, Garcez et al. [51] synthesize the last 20 years of research in the field in the context of the aforementioned debate. The authors highlight the need for trustworthiness, interpretability, and accountability in AI systems, to which NeSy is ostensibly best suited, in particular when it comes to natural language understanding. The authors also emphasize the distinction between commonsense knowledge and expert knowledge, and suggest that these two goals may ultimately lead to two distinct research directions: “those who seek to understand and model the brain, and those who seek to achieve or improve AI.” Garcez et al. conclude that “Neurosymbolic AI is in need of standard benchmarks and associated comprehensibility tests which could in a principled way offer a fair comparative evaluation with other approaches” with a focus on the following goals: learning from fewer data, reasoning about extrapolation, reducing computational complexity, and reducing energy consumption. Energy consumption is particularly significant when training Large Language Models, which can cost thousands if not millions of dollars in electricity [131].

Neuro-symbolic artificial intelligence promise areas [51].

Sarker et al. [123] survey recent work in the proceedings of leading AI conferences. The authors review a total of 43 papers and classify them according to Henry Kautz’s categories. Kautz introduced a taxonomy of NeSy types at the Third AAAI Conference on AI [80]. We rely on this taxonomy to classify the studies under review, and discuss each type in detail in Section 6.2.3.

Neuro-symbolic artificial intelligence promise areas [123].

The authors conclude that “more emphasis is needed, in the immediate future, on deepening the logical aspects in NeSy research even further, and to work towards a systematic understanding and toolbox for utilizing complex logics in this context.” Based on the studies in our review, we come to a similar conclusion.

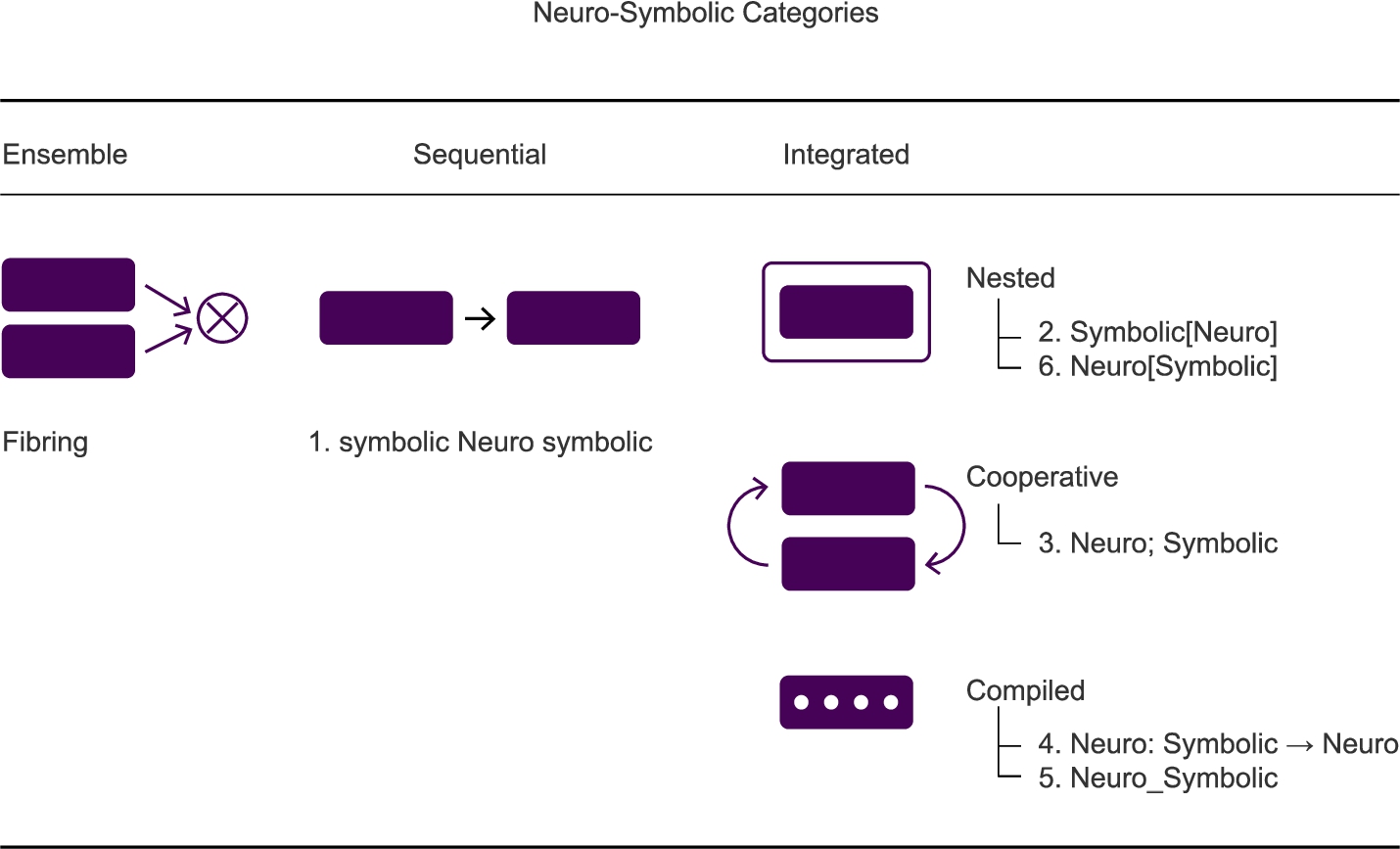

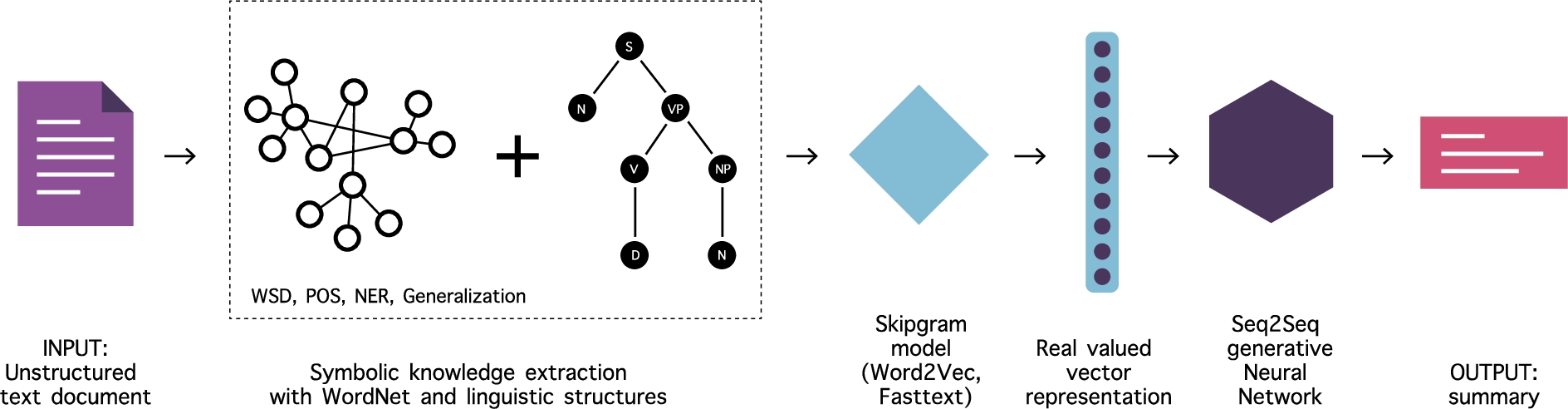

Garcez et al. [50] survey recent accomplishments for integrated machine learning and reasoning motivated by the need for interpretability and accountability in AI systems. According to [50], there are three main important features of a NeSy system: representation, extraction, and reasoning & learning. Symbolic knowledge can also be categorized into three groups: rule-based, formula-based, and embedding-based. The authors categorize and describe the following neuro-symbolic architectures.

Knowledge representation of

Logic tensor network (LTN) for

In

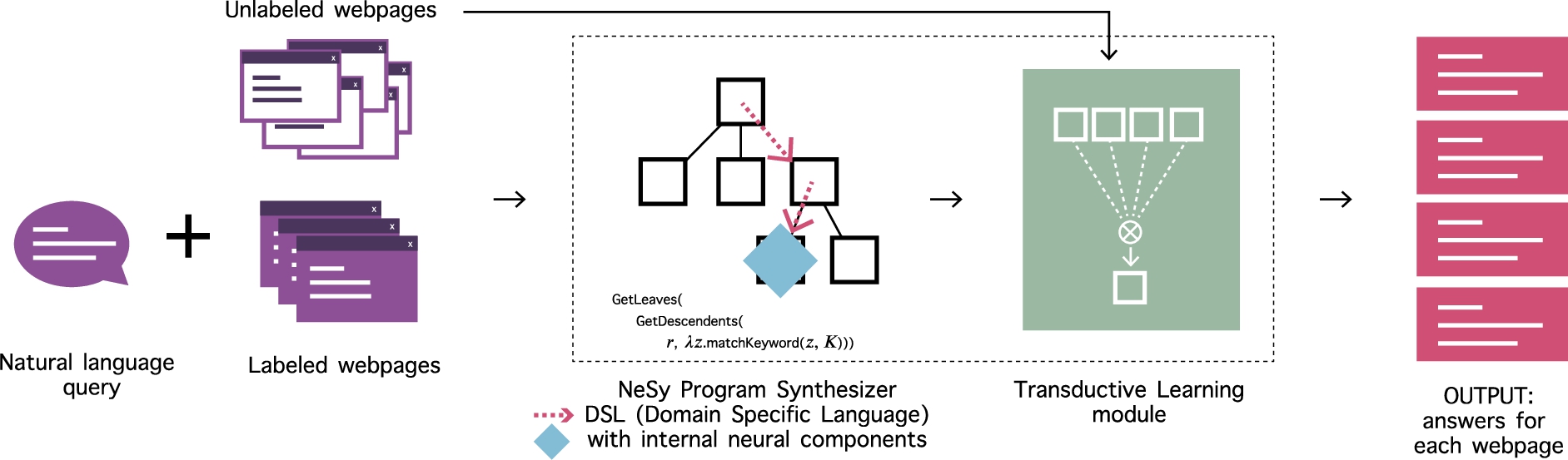

Yu et al. [163] divide neuro-symbolic systems into two types: heavy-reasoning light-learning and heavy-learning light-reasoning – Fig. 10. These are similar to the neural-symbolic reasoning and neural-symbolic learning categorization in [50] above. Heavy-reasoning light-learning mainly adopts the methods of the symbolic system to solve the problem in machine reasoning, and introduces neural networks to assist in solving those problems, while heavy-learning light-reasoning mainly applies methods of the neural system to solve the problem in machine learning, and introduces symbolic knowledge in the training process.

Two types of neuro-symbolic systems: heavy reasoning light learning, and heavy learning light reasoning [163].

Heavy-reasoning light-learning (based on Statistical Relational Learning (SRL) [84])

Heavy-learning light-reasoning

Besold et al. [12] examine neuro-symbolic learning and reasoning through the lens of cognitive science, cognitive neuroscience, and human-level artificial intelligence. This is a much more theoretical approach. The authors first describe some early systems such as CILP [53] and fibring, introduced by Garcez & Gabbay [54]. Fibred networks work on the principle of recursion, where multiple neural networks are connected together, such that a fibring function in a network

According to Noam Chomsky’s theory of language, language is compositional, in the sense that a sentence is composed of phrases, which are in turn composed of sub-phrases, and so on, in a recursive manner. This idea enables the construction of infinite possibilities from finite means. This seems particularly well suited to a symbolic system which, given a finite set of rules should be capable of constructing/deconstructing, i.e., reasoning over, all possibilities. In contrast, a sub-symbolic, or distributional, system can never see the infinite amount of the data in the universe to learn from. For learning in infinite domains, see also [6].
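The idea of "infinite possibilities from finite means" can be illustrated with a toy recursive grammar. The sketch below is an assumption-laden illustration: the three rules in `RULES` and the helper `expand` are hypothetical and are not taken from any of the surveyed systems.

```python
# Hypothetical toy grammar: three rules, one of which is recursive.
RULES = {
    "S": [["NP", "VP"]],
    "NP": [["Mary"]],
    "VP": [["sleeps"], ["thinks", "that", "S"]],  # second option re-enters S
}


def expand(symbol, depth):
    """Expand `symbol` into words, using the recursive VP rule `depth` times."""
    if symbol not in RULES:
        return [symbol]  # terminal word
    options = RULES[symbol]
    if symbol == "VP" and depth > 0:
        option, depth = options[1], depth - 1  # take the recursive rule
    else:
        option = options[0]
    words = []
    for child in option:
        words.extend(expand(child, depth))
    return words


for d in range(3):
    print(" ".join(expand("S", d)))
```

Each increment of `depth` yields a new, longer sentence from the same finite rule set, which is the property that makes symbolic rules attractive for reasoning over unbounded domains.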

Von Rueden et al. [152] propose a taxonomy for integrating prior knowledge into learning systems. This is an extensive work covering types of knowledge and knowledge representations, neuro-symbolic integration approaches, motivations for each approach, challenges and future directions. The authors categorize knowledge into three types: scientific knowledge, world knowledge, and expert knowledge. Furthermore, knowledge representations are classified into eight types – Fig. 11.

Zhang et al. [164] survey the area of neuro-symbolic reasoning on Knowledge Graphs (KGs). The authors contribute a unified reasoning framework for Knowledge Graph Completion (KGC) and Knowledge Graph Question Answering (KGQA). Among future directions, the authors advocate for taking inspiration from human cognition for neural-symbolic reasoning in KGs, alluding to the dual model of human reasoning (System 1/System 2). Additional future directions include:

Lamb et al. [87] review the state of the art on the use of Graph Neural Networks (GNNs) in NeSy – Fig. 12. Tutorial slides associated with [62]:

Pointer Networks [151]. Pointer networks are based on the encoder/decoder with attention (i.e. transformer) architecture, with the modification that the input length can vary. This architecture lends itself to combinatorial optimization problems such as the Traveling Salesperson Problem (TSP).

Graph Convolutional Networks (GCNs) [81] can be thought of as a generalization of Convolutional Neural Networks (CNNs) for non-grid topologies.

Graph Neural Network Model [125] – early GNN architecture similar to GCN.

Message-passing Neural Networks – similar to GNN with a slightly modified update function [87].

Graph Attention Networks (GATs) [148] – implement an attention mechanism enabling vertices to weigh neighbor representations during their aggregation. GATs are known to outperform typical GCN architectures for graph classification tasks.

The authors do not provide references to relevant works. While a detailed review of GNNs in NLP is beyond the scope of this work, we point the interested reader to an online resource dedicated to this topic:

According to the authors, GNNs endowed with attention mechanisms “are a promising direction of research towards the provision of rich reasoning and learning in [Kautz’s] type 6 neuralsymbolic systems.” In NLP, GATs have enabled substantial improvements in several tasks through transfer learning over pretrained transformer language models,

Graph neural network (GNN) intuition: generate node embeddings based on local neighborhoods, where nodes aggregate information from their neighbors using neural networks (a). The network neighborhood defines a computation graph such that every node corresponds to a unique computation graph (b). The key distinctions are in how different approaches aggregate information across the layers [62].
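The neighborhood aggregation described in this caption can be sketched in a few lines. This is a deliberately simplified illustration, assuming mean aggregation and no learned weights or non-linearity; a real GNN layer would apply trainable transformations at the combination step. The function name `aggregate_step` and the toy graph are hypothetical.

```python
def aggregate_step(features, neighbors):
    """One round of message passing with mean aggregation.

    features:  dict mapping node -> list of floats (current embedding)
    neighbors: dict mapping node -> list of neighboring nodes
    """
    updated = {}
    for node, own in features.items():
        msgs = [features[n] for n in neighbors.get(node, [])]
        if msgs:
            # Element-wise mean over the neighbors' embeddings.
            mean = [sum(vals) / len(msgs) for vals in zip(*msgs)]
        else:
            mean = [0.0] * len(own)
        # Combine the node's own embedding with its neighborhood summary.
        # (Assumption: a plain average stands in for a learned update.)
        updated[node] = [(a + b) / 2 for a, b in zip(own, mean)]
    return updated


# Toy graph: node "a" is connected to "b" and "c".
graph = {"a": ["b", "c"], "b": ["a"], "c": ["a"]}
h0 = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.0, 3.0]}

h1 = aggregate_step(h0, graph)
print(h1)
```

Stacking several such steps lets information travel further across the graph, and the choice of aggregation function is where the approaches cited above (GCN, message passing, attention) differ.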

Extrapolation of a learned classification of graphs as Hamiltonian, to graphs of arbitrary size.

Reasoning about a learned graph structure to generalise beyond the distribution of the training data.

Reasoning about the

Using an adequate self-attention mechanism to make combinatorial reasoning computationally efficient.

Belle [6] aims to disabuse the reader of the “common misconception that logic is for discrete properties, whereas probability theory and machine learning, more generally, is for continuous properties.” The author advocates for tackling problems that symbolic logic and machine learning might struggle to address individually such as time, space, abstraction, causality, quantified generalizations, relational abstractions, unknown domains, and unforeseen examples.

Harmelen & Teije [146] present a conceptual framework to categorize the techniques for combining learning and reasoning via a set of design patterns. “Broadly recognized advantages of such design patterns are they distill previous experience in a reusable form for future design activities, they encourage re-use of code, they allow composition of such patterns into more complex systems, and they provide a common language in a community.” A graphical notation is introduced where boxes with labels represent symbolic, and sub-symbolic modules, connected with arrows. Harmelen & Teije’s boxology representation of AlphaGo is given in Fig. 13.

Schematic diagram using the boxology graphical notation of the AlphaGo system. Ovals denote algorithmic components (i.e. objects that perform some computation), and boxes denote their input and output (i.e. data structures) [146].

Earlier surveys [5,13,49,52,63] tend to focus more on logic and logic programming, and less on learning, which is not surprising given that the groundbreaking successes in deep learning are relatively recent. Several themes run through the works listed above, namely, the inherent strengths and weaknesses of symbolic and sub-symbolic techniques when taken in isolation, the types of problems which NeSy promises to solve, and the development of approaches over time.

Two future directions of particular interest to our work emerge: building systems which take inspiration from human cognition and reasoning, and the integration of unstructured data. To our knowledge there is no survey specifically covering the application of NeSy for Natural Language Processing (NLP) where the input data is both unstructured and replete with the ambiguities and inconsistencies of human reasoning.

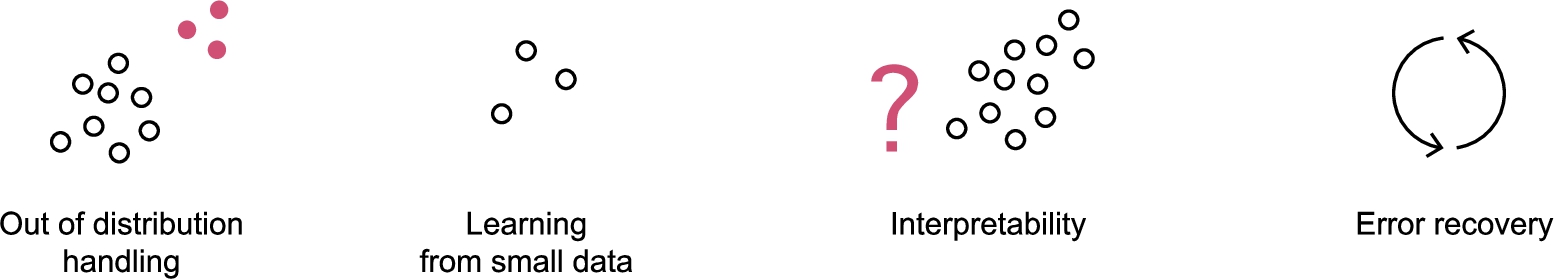

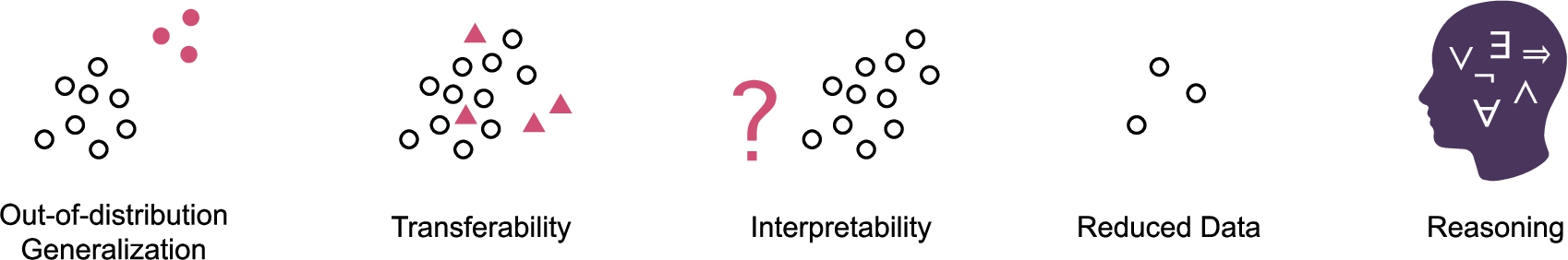

Our aim is to analyze recent work implementing NeSy in the language domain, to verify if the goals of NeSy are being realized, and to identify the challenges and future directions. We briefly describe each of the goals illustrated in Fig. 14, which we have identified based on our synthesis of the related work outlined above.

Neuro-symbolic artificial intelligence goals.

OOD generalization [132] refers to the ability of a model to extrapolate to phenomena not previously seen in the training data. The lack of OOD generalization in LLMs is often demonstrated by their inability to perform commonsense reasoning, as in the example in Fig. 3.

Interpretability

As Machine Learning (ML) and AI become increasingly embedded in daily life, the need to hold ML/AI accountable is also growing. This is particularly true in sensitive domains such as healthcare, legal, and some business applications such as lending, where bias mitigation and fairness are critical. “An interpretable model is constrained, following a domain-specific set of constraints that make reasoning processes understandable” [121].

Reduced size of training data

State-of-the-art (SOTA) language models utilize massive amounts of data for training. This can cost thousands or even millions of dollars [131], take a very long time, and is neither environmentally friendly nor accessible to most researchers or businesses. The ability to learn from less data brings obvious benefits. But apart from the practical implications, there is something innately disappointing in LLMs’ ‘bigger hammer’ approach. Science rewards parsimony and elegance, and NeSy promises to deliver results without the need for such massive scale. While this issue can be partially solved by fine-tuning a pre-trained LLM using only a small amount of labeled data, these techniques come with their own limitations. For example, Jiang et al. [74] discuss issues such as over-fitting the data of downstream tasks and forgetting the knowledge of the pre-trained model.

Transferability

Transferability is the ability of a model trained on one domain to perform similarly well in a different domain. This can be particularly valuable when the new domain has very few examples available for training. In such cases we might rely on knowledge transfer, similar to the way a person might rely on abstract reasoning when faced with an unfamiliar situation [168].

Reasoning

According to Encyclopedia Britannica, “To reason is to draw inferences appropriate to the situation” [117]. Reasoning is not only a goal in its own right, but also the means by which the other above mentioned goals can be achieved. Not only is it one of the most difficult problems in AI,21 As expressed by Luis Lamb at

Our review methodology is guided by the principles described in [82,111,112]. The data, queries, code, and additional details can be found in our GitHub repository.

Is neuro-symbolic AI meeting its promises in NLP?

What are the existing studies on neuro-symbolic AI (NeSy) in natural language processing (NLP)?

What are the current applications of NeSy in NLP?

How are symbolic and sub-symbolic techniques integrated and what are the advantages/disadvantages?

Search process

We chose Scopus to perform our initial search, as Scopus indexes most of the top journals and conferences we were interested in. In addition to Scopus, we searched the ACL Anthology database and the proceedings from conferences specific to Neuro-symbolic AI. It is possible we missed some relevant studies, but as our aim is to shed light on the field generally, our assumption is that these journals and proceedings are a good representation of the area as a whole. The included sources are listed in Appendix C. Since we were looking for studies which combine neural and symbolic approaches, our query consists of combinations of neural and symbolic terms as well as variations thereof, listed in Table 1. The keywords are deliberately broad, as it would be impossible to come up with a complete list of all possible keywords relevant to NeSy in NLP. More importantly, the focus of the work is not on specific subfields, each of which may warrant a review of its own, but rather on the explicit use of neuro-symbolic approaches regardless of subfield. Strictly speaking the only keywords that would cover this would be neuro-symbolic and its syntactic variants, but we relaxed this slightly on the basis that works which explore both symbolic reasoning and deep learning in combination (as per the definition in Section 1) may not necessarily have used the term neuro-symbolic.
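The way such a query combines the two families of terms can be sketched as a Boolean expression of the form (neural terms) AND (symbolic terms). This is an illustrative assumption about the query shape: the helper `build_query` and the term lists below are placeholders, and the actual keywords are those listed in Table 1.

```python
def build_query(neural_terms, symbolic_terms):
    """Combine two keyword lists as (n1 OR n2 ...) AND (s1 OR s2 ...)."""

    def group(terms):
        # Quote each term so multi-word phrases are matched as a unit.
        return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"

    return group(neural_terms) + " AND " + group(symbolic_terms)


# Placeholder terms for illustration; see Table 1 for the real keywords.
neural = ["deep learning", "neural network"]
symbolic = ["symbolic", "reasoning"]

query = build_query(neural, symbolic)
print(query)
```

Requiring at least one term from each group is what lets the search capture studies that combine both approaches without using the label neuro-symbolic.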

Search keywords


The initial query was restricted to peer-reviewed English language journal articles and conference papers from the last 3 years, which produced a total of 21,462 results.

We further limit the Scopus articles to those published by the top 20 publishers as ranked by Scopus’s CiteScore, which is based on the number of citations normalized by the document count over a 4-year window,

Inclusion/exclusion criteria

Selection process diagram.

The inclusion criteria at this stage were intentionally broad, as the process itself was meant to be exploratory and to inform the researchers of relevant topics within NeSy. As per best practices, this first round was also designed to understand and address inter-annotator disagreement. Unsurprisingly, this led to some disagreement between researchers on inclusion, especially since studies need not have been explicitly labeled as neuro-symbolic to be classified as such. Agreement between researchers can be measured using Cohen’s kappa statistic, which takes values in the range [−1, 1], where 0 represents the expected kappa score had the labels been assigned randomly, −1 indicates complete disagreement, and 1 indicates perfect agreement. Our score at this stage came to a modest 0.33. We observed that it was not always clear from the abstract alone whether the sub-symbolic and symbolic methods were integrated in a way that met the inclusion criteria.
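For readers unfamiliar with the statistic, the following is a minimal sketch of Cohen’s kappa for two annotators, computed as (observed agreement − chance agreement) / (1 − chance agreement). The function name `cohen_kappa` and the include/exclude labels are illustrative assumptions and do not reproduce the review’s actual annotations.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical screening decisions for four abstracts.
annotator_1 = ["include", "include", "exclude", "exclude"]
annotator_2 = ["include", "exclude", "include", "exclude"]

print(cohen_kappa(annotator_1, annotator_2))
```

In this toy case the annotators agree on half of the items, which is exactly what their label frequencies predict by chance, so the statistic lands at the chance level rather than at the raw agreement rate.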

To attain inter-annotator agreement and facilitate the next round of review, we kept a shared glossary of symbolic and sub-symbolic concepts as they presented themselves in the literature. We each reviewed all of the 1,902 studies, this time by way of a shallow reading of the full text of each study. Any disagreement at this stage was discussed in person with respect to the shared glossary. This process led to 75 journal articles and 106 conference papers marked for the final round of inclusion/exclusion.

During the final round of inclusion/exclusion, the quality of each study was determined through the use of a nine-item questionnaire. Each of the following questions was answered with a binary value, and the study’s quality was determined by calculating the ratio of positive answers. Fewer than a handful of studies were excluded due to a quality score of less than 50%.

Q1. Is there a clear and measurable research question?

Q2. Is the study put into context of other studies and research, and design decisions justified accordingly (number of references in the literature review/introduction)?

Q3. Is it clearly stated in the study which other algorithms the study’s algorithm(s) have been compared with?

Q4. Are the performance metrics used in the study explained and justified?

Q5. Is the analysis of the results relevant to the research question?

Q6. Does the test evidence support the findings presented?

Q7. Is the study algorithm sufficiently documented to be reproducible (independent researchers arriving at the same results using their own data and methods)?

Q8. Is code provided?

Q9. Are performance metrics provided (hardware, training time, inference time)?
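The scoring rule described above (ratio of positive answers, with exclusion below 50%) can be sketched as follows. The function name `quality_score` and the example answers are hypothetical and do not correspond to any particular study in the review.

```python
NUM_QUESTIONS = 9  # the questionnaire has nine binary items


def quality_score(answers):
    """Ratio of positive answers to the nine yes/no quality questions."""
    if len(answers) != NUM_QUESTIONS:
        raise ValueError(f"expected {NUM_QUESTIONS} answers, got {len(answers)}")
    return sum(bool(a) for a in answers) / NUM_QUESTIONS


# Hypothetical study: satisfies the first six items but not the last three.
answers = [True] * 6 + [False] * 3

score = quality_score(answers)
keep = score >= 0.5  # studies scoring below 50% were excluded
print(f"{score:.1%}", keep)
```

Because every item carries equal weight, a study can lack code and hardware details and still pass the threshold if its research design is sound.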

More than 85% of the studies satisfy the requirements listed from Q1 to Q6. However, over 80% of the studies fail to provide source code or details related to the computing environment, which makes the system difficult to reproduce. This leads to an overall reduction of the average quality score to 76.5% – Fig. 16.

Study quality.

Finally, a deep reading of each of the eligible studies led to 59 studies selected for inclusion. Data extraction was performed for each of the features outlined in Table 3. For acceptable values of individual features see Appendix B. The lists of neural and symbolic terms referenced in the table constitute the glossary items learned from conducting the selection process. Figure 17(a) shows the breakdown of conference papers vs journal articles, while Fig. 17(b) shows the number of studies published each year.

Publications selected for inclusion.

Data extraction features

We perform quantitative data analysis based on the extracted features in Table 3. Each study was labeled with terms from the aforementioned glossary, and each term in the glossary was classified as either symbolic or neural. A by-product of this process is a pair of taxonomies, built bottom-up, of concepts relevant to the set of studies under review. The two taxonomies are a reflection of the definition of NeSy provided earlier: “the combination of deep learning and symbolic reasoning.” To make this definition more precise, we limit the type of combination that qualifies as neuro-symbolic. Specifically, the sub-symbolic and symbolic components must be integrated in such a way that one informs the other. By way of counterexample, a system made up of two independent symbolic and sub-symbolic components would not be considered NeSy if there is no interaction between them. For example, while a system in which one component processes one type of data and the other processes another type of data may be an effective software pipeline design, we do not consider this type of solution neuro-symbolic, as the two components do not interact in any way. Thus the definition becomes “the

On the learning side, we have neural architectures (described in Section 6.2.1), and on the symbolic reasoning side we have knowledge representation (described in Section 6.2.2). These results are rendered in Table 4, with the addition of color representing a simple metric, or

Exploratory data analysis

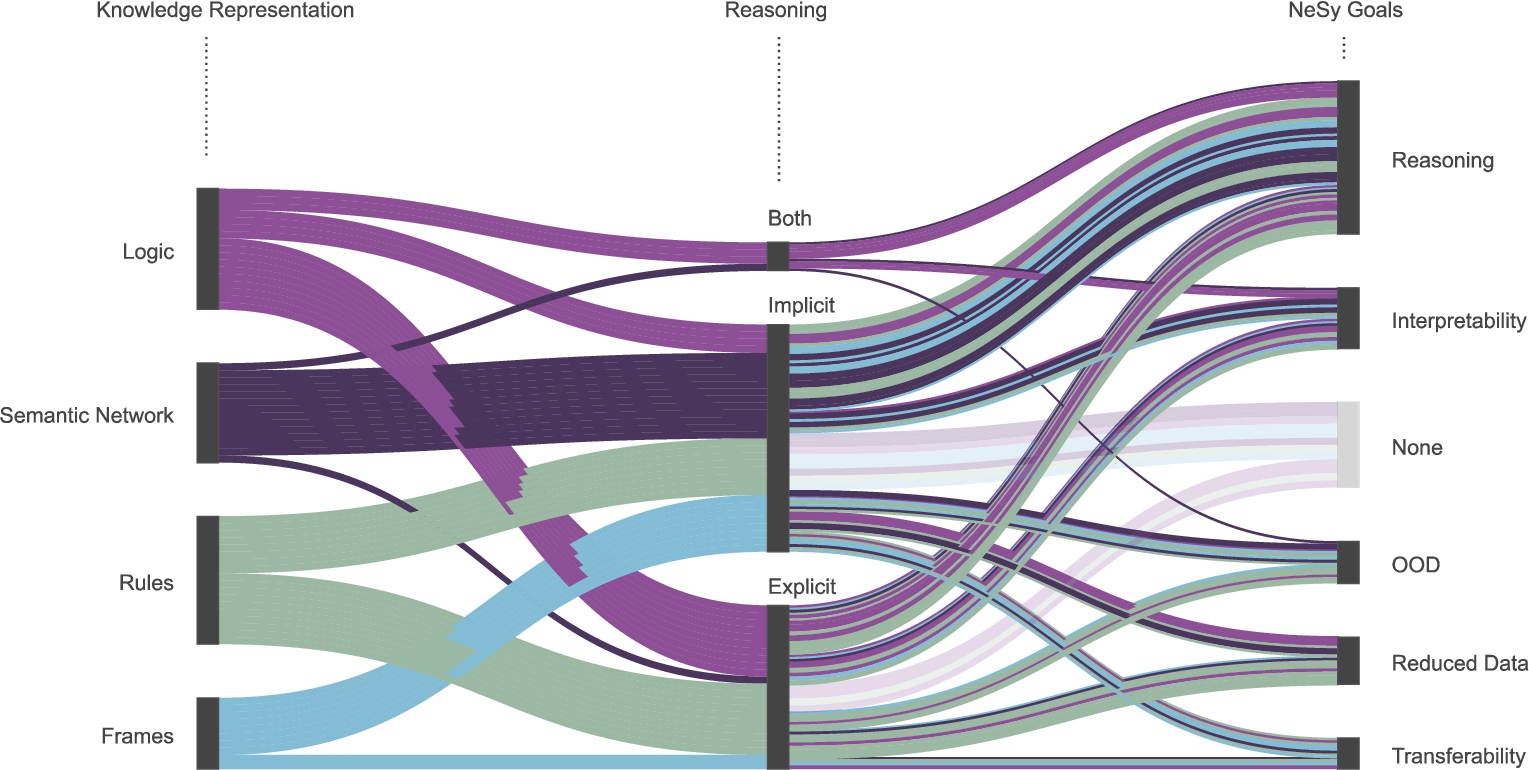

We plot the relationships between the features extracted from the studies, and the goals from Section 4 in an effort to identify any correlations between them, and ultimately to identify patterns leading to higher promise scores.

Business and technical applications

The

Relationship between business applications and NeSy goals. Question answering is the most frequently occurring task, and is associated mainly with reasoning, reduced data, and to a lesser degree, interpretability.

The business application largely determines the type of model output, or what we term

For completeness, the number of studies representing the technical applications and most frequently occurring business application is given in Fig. 19, while Fig. 20 illustrates the relationship between business applications, technical applications, and goals.

Number of studies in each application category.

Relationship between business applications, technical applications, and NeSy goals.

Machine learning algorithms are classified as supervised, unsupervised, semi-supervised, curriculum or reinforcement learning, depending on the amount and type of supervision required during training [10,16,79]. Figure 21 demonstrates that the supervised method outnumbers all other approaches.

Relationship between learning type, technical application, and NeSy goals. It is clear that supervised approaches dominate the field, are applied across a variety of technical applications, and there is no clear winner when it comes to goals.

Tasks belonging to Natural Language Understanding (NLU) and Natural Language Generation (NLG) are often regarded as more difficult, and are presumed to require reasoning. Given that reasoning was one of the keywords used for search, it is not surprising that many studies report reasoning as a characteristic of their model(s).

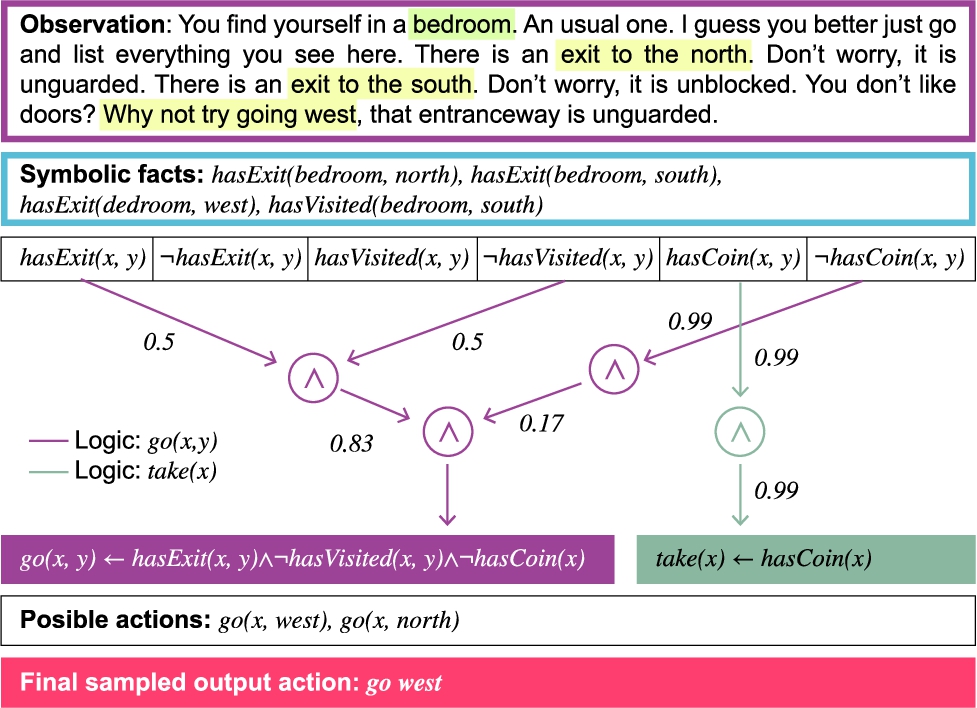

How reasoning is performed often depends on the underlying representation and what it facilitates. Sometimes the representations are obtained via explicit rules or logic, but are subsequently transformed into non-decomposable embeddings for learning. In such cases, any reasoning during the learning process is done implicitly. Studies utilizing Graph Neural Networks (GNNs) [26,59,70,91,124,165,167] are likewise considered to be reasoning implicitly. The majority of the studies doing implicit reasoning leverage linguistic and/or relational structure to generate those internal representations. These studies meet 53 out of a possible 180 NeSy goals (29.4%), where 180 = #goals × #studies. For reasoning to be considered explicit, rules or logic must be applied during or after training. Studies which implement explicit reasoning perform slightly better, meeting 51 out of 135 goals (37.8%), and generally require less training data. Additionally, 4 studies implement both implicit and explicit reasoning, at a NeSy promise rate of 40%. Of particular interest in this grouping is the implementation by Bianchi et al. [14] of Logic Tensor Networks (LTNs), originally proposed by Serafini and Garcez [130]. “LTNs can be used to do after-training reasoning over combinations of axioms which it was not trained on. Since LTNs are based on Neural Networks, they reach similar results while also achieving high explainability due to the fact that they ground first-order logic” [14]. Also in this grouping, Jiang et al. [75] propose a model where embeddings are learned by following the logic expressions encoded in Huffman trees to represent deep first-order logic knowledge. Each node of the tree is a logic expression, thus hidden layers are interpretable.
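The promise rates quoted above follow from a simple ratio: goals met divided by (number of goals × number of studies). The sketch below reproduces the two reported percentages. The function name `promise_rate` is ours, and the study counts (36 and 27) are not stated directly in the text; they are back-computed from the reported denominators (180 / 5 and 135 / 5), so treat them as an assumption.

```python
NUM_GOALS = 5  # reasoning, OOD generalization, interpretability, reduced data, transferability


def promise_rate(goals_met: int, num_studies: int, num_goals: int = NUM_GOALS) -> float:
    """Fraction of all possible (study, goal) pairs reported as met."""
    possible = num_goals * num_studies
    return goals_met / possible


# Study counts are inferred from the denominators in the text (assumption).
implicit_rate = promise_rate(goals_met=53, num_studies=36)  # 53 / 180
explicit_rate = promise_rate(goals_met=51, num_studies=27)  # 51 / 135

print(f"implicit: {implicit_rate:.1%}")  # implicit: 29.4%
print(f"explicit: {explicit_rate:.1%}")  # explicit: 37.8%
```

Because the denominator scales with group size, the rate is comparable across groups containing different numbers of studies.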

Figure 22 shows the relationship between implicit & explicit reasoning and goals, while the relationship between knowledge representation, type of reasoning, and goals is shown in Fig. 23.

Type of reasoning and goals. Around half (48%) of the studies where reasoning is performed explicitly mention interpretability as a feature, while nearly a third of the studies performing reasoning implicitly do not meet any of the NeSy promises identified for this review.

Knowledge representation, type of reasoning, and goals. Notably, when semantic networks are utilized, reasoning is almost always done implicitly. The two exceptions are [14] and [165]. However, [14] utilizes FOL for explicit reasoning rather than its network component, whereas [165] generates a novel interpretable reasoning graph as the output of the model.

In the previous section we described how linguistic and relational structures can be leveraged to generate internal representations for the purpose of implicit reasoning. Here we plot the relationships between these structures and other extracted features and their interactions – Fig. 24.

Perhaps the most telling chart is the mapping between structures and goals, where many of the studies leveraging linguistic structure do not meet any of the goals. This runs counter to the intuition that language is a natural fit for NeSy.

Relationships between leveraged structures and extracted features. As can be seen in a), e), and f), studies leveraging linguistic structures often do not meet any NeSy goals, which runs counter to our original hypothesis. Further investigation into this phenomenon may be warranted.

Each study in our survey is based on a unique dataset, and a variety of metrics. Given that there are nearly as many business applications, or tasks, as there are studies, this is not surprising. As such it is not possible to compare the performance of the models reviewed. However, this brings up an interesting question, and that is how one might design a benchmark for NeSy in the first place. A discussion about benchmarks at the IBM Neuro-Symbolic AI Workshop 2022

Neural

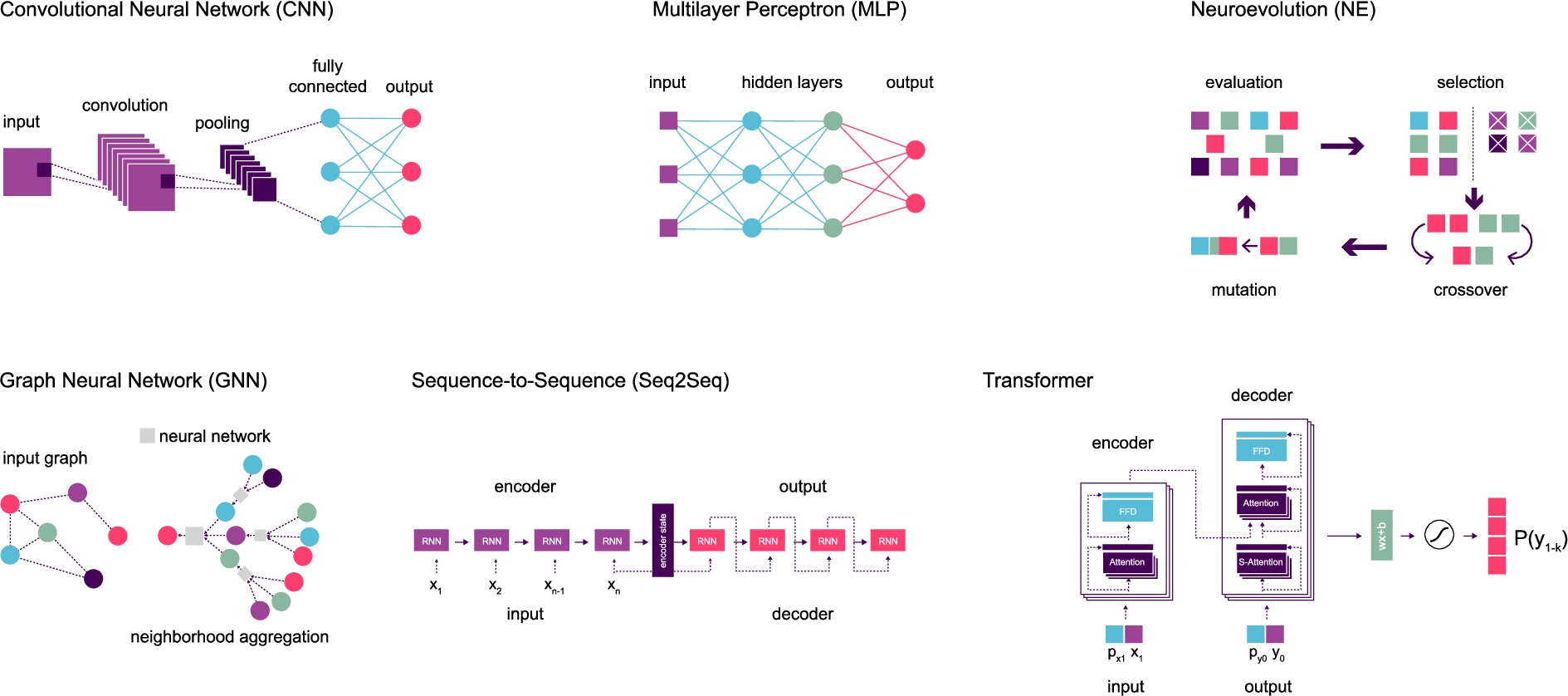

In the main, the extracted neural terms refer to the neural architecture implemented in a given study. We group these into higher level categories such as linear models, early generation (which includes CNNs), graphical models, sequence-to-sequence, and transformers. We include one study [134] which does not implement gradient descent, but rather Neuroevolution (NE). Neuroevolution involves genetic algorithms for learning neural network weights, topologies, or ensembles of networks by taking inspiration from biological nervous systems [90,108]. Neuroevolution is often employed in the service of Reinforcement Learning (RL). Studies which do not specify a particular architecture are categorised as Multilayer Perceptron (MLP) – Fig. 25.

We also include here neuro-symbolic architectures such as Logic Tensor Networks (LTN) [130], Recursive Neural Knowledge Networks (RNKN) [75], Tensor Product Representations (TPRs) [135], and Logical Neural Networks (LNN) [119] because they are amenable to optimization via gradient descent – Fig. 26.

Neural architectures represented in Table 4.

Neuro-symbolic architectures represented in Table 4.

The definition we adopted states that NeSy is

The sentence “Not all birds can fly” in First Order Logic (FOL) looks like:

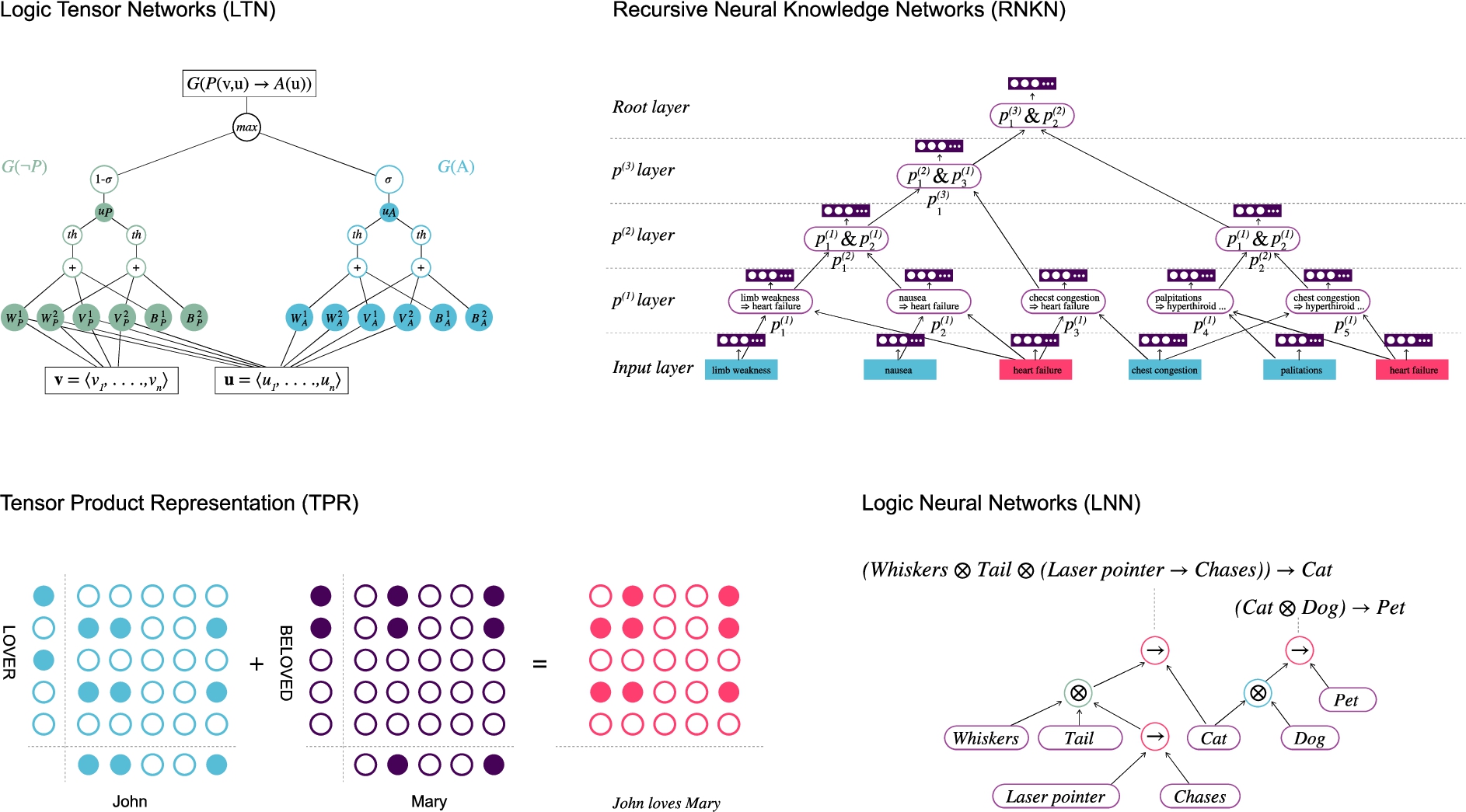
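Since the formula is only available as an image, a standard rendering of the sentence is sketched here. The predicate names `Bird` and `Fly` are our own choice and may differ from those in the original figure.

```latex
\neg \forall x \, \bigl( \mathit{Bird}(x) \rightarrow \mathit{Fly}(x) \bigr)
\;\equiv\;
\exists x \, \bigl( \mathit{Bird}(x) \land \neg \mathit{Fly}(x) \bigr)
```

The two forms are logically equivalent: denying that every bird flies is the same as asserting that at least one bird does not.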

The frame and slot names are atomic symbols; the fillers are either atomic values (like numbers or strings) or the names of other individual frames [18]. This is similar to Object Oriented Programming (OOP), where the frame is analogous to the object, and slots and fillers are properties and values respectively.
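To make the OOP analogy concrete, here is a minimal sketch of a frame store in Python. The class `Frame`, the helper `lookup`, the `is_a` slot, and the example frames are all hypothetical illustrations; in particular, inheritance through `is_a` is a common frame-system convention that we assume here and is not described in the text above.

```python
from dataclasses import dataclass, field
from typing import Dict, Union

# A filler is an atomic value (number or string) or the name of another frame.
Filler = Union[int, float, str]


@dataclass
class Frame:
    name: str                                              # frame name (atomic symbol)
    slots: Dict[str, Filler] = field(default_factory=dict)  # slot name -> filler


frames = {
    "Bird": Frame("Bird", {"covering": "feathers", "legs": 2}),
    "Tweety": Frame("Tweety", {"is_a": "Bird", "colour": "yellow"}),
}


def lookup(store: Dict[str, Frame], frame_name: str, slot: str) -> Filler:
    """Return a slot's filler, following `is_a` links when the slot is missing."""
    frame = store[frame_name]
    if slot in frame.slots:
        return frame.slots[slot]
    parent = frame.slots.get("is_a")
    if parent is None:
        raise KeyError(f"{frame_name} has no slot {slot!r}")
    return lookup(store, str(parent), slot)


print(lookup(frames, "Tweety", "colour"))  # yellow
print(lookup(frames, "Tweety", "legs"))    # 2 (found on the Bird frame)
```

Here the filler `"Bird"` in Tweety's `is_a` slot is the name of another frame, while `2` and `"yellow"` are atomic values.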

This term was popularized after Google introduced contextual information to search results from their semantic network under the brand name Knowledge Graph.

English WordNet subgraph [107].

Table 4 shows which studies combine which of the above neural (6.2.1) and symbolic (6.2.2) categories as well as the number of NeSy goals satisfied.

Neural & symbolic combinations. Color scale (1 to 5): number of NeSy goals satisfied out of the 5 described in Section 4.

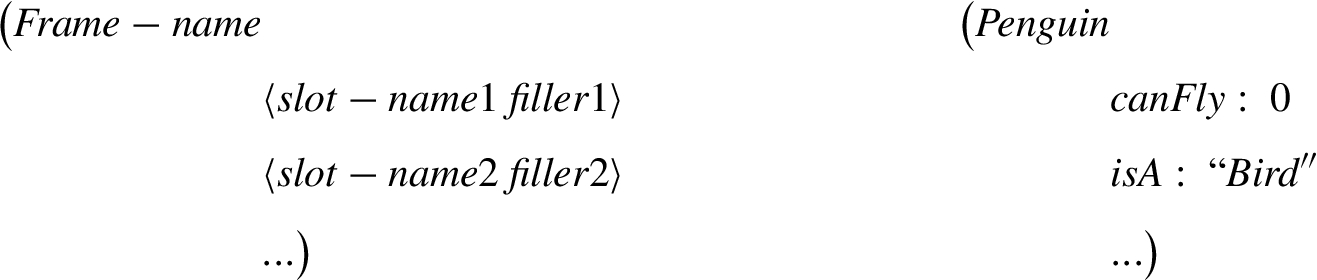

NeSy systems can be categorized according to the nature of the combination of neural and symbolic techniques. At AAAI-20, Henry Kautz presented a taxonomy of 6 types of Neuro-Symbolic architectures with a brief example of each [80]. While Kautz has not provided any additional information beyond his talk at AAAI-20, several researchers have formed their own interpretations [51,87,123]. We have categorized all the reviewed studies according to Kautz’s taxonomy as well as our proposed nomenclature – Fig. 28. Table 7 in Appendix A lists all the studies by category.

Proposed neuro-symbolic artificial intelligence categories. Adapted from Henry Kautz.

Type 1

Type 2

Type 3

Types 4 and 5,

Deep Learning For Mathematics [88] is the canonical example of Type 4, where the input and output to the model are mathematical expressions. The model performs symbolic differentiation or integration, for example, given

It should be noted that the authors of the LNN classify their architecture as Type 7, which is explicitly outside of Kautz’s 6 types. See Day 2, Session 2 of

Type 4

Type 5 comprises Tensor Product Representations (TPRs) [135], Logic Tensor Networks (LTNs) [129], Neural Tensor Networks (NTN) [136] and more broadly is referred to as tensorization, where logic acts as a constraint.

Type 5

Type 6

Figure 35 shows the number of studies per category, and Fig. 36 illustrates the relationship between categories and goals. Table 5 shows the number of studies in each category per goal.

Number of studies per category.

NeSy categories to NeSy goals. There is no obvious pattern with respect to what types of goals are met within each of the NeSy categories.

Number of studies meeting each goal. The

All studies report performance either on par with or above benchmarks, but we cannot compare studies based on performance, as nearly every study uses a different dataset and benchmark, as discussed in Section 6.1.5. Our focus is instead on whether the goals of NeSy are being met. Our

Proportion of studies which have met one or more of the 5 goals.

NeSy promises reported as having been met (y = yes, n = no)

In Section 4.5 we put forward the hypothesis that reasoning is the means by which the other goals can be achieved. This is not evidenced in the studies we reviewed. Some possible explanations for this finding are: 1) The kind of reasoning required to fulfill the other goals is not the kind being implemented; 2) The approaches are theoretically promising, but the technical solutions need further development. Next we look at each of these possibilities.

Thirty-four of the fifty-nine studies mention reasoning as a characteristic of their solution, but there is a lot of variation in how reasoning is described and implemented. To understand language, and given the overwhelming evidence of the fallibility of human reasoning, AI researchers have sought guidance from disciplines such as psychology, cognitive linguistics, neuroscience, and philosophy. The challenge is that there are multiple competing theories of human reasoning and logic both across and within these disciplines. What we have discovered in our review is a blurring of the lines between various types of logic, human reasoning, and mathematical reasoning, as well as counterproductive assumptions about which theory to adopt. For example, drawing inspiration from “how people think”, accepting that how people think is flawed, and subsequently attempting to build a model with a logical component, which by definition is rooted in validity, seems counterproductive to us, although this does depend somewhat on the business application. For problems like MWP (

[14] describe human reasoning in terms of a dual process of “subsymbolic commonsense” (strongly correlated with associative learning), and “axiomatic” knowledge (predicates and logic formulas) for structured inference.

In [71] humans reason by way of analogy, and commonsense knowledge is represented in ConceptNet, a graphical representation of common concepts and their relationships.

For [162] human reasoning can be modeled by Image Schemas (IS). Schemas are made up of logical rules on (Entity1, Relation, Entity2) tuples, such as transitivity, or inversion.
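As an illustration of what logical rules over (Entity1, Relation, Entity2) tuples look like in practice, the sketch below applies transitivity and inversion to a small set of triples. It is a generic forward-chaining example, not the implementation used in [162]; the relation names (`inside`, `contains`) and the facts are hypothetical.

```python
from typing import Dict, Set, Tuple

Triple = Tuple[str, str, str]  # (entity1, relation, entity2)


def apply_transitivity(triples: Set[Triple], transitive: Set[str]) -> Set[Triple]:
    """Close the triple set under (a r b) and (b r c) => (a r c) for transitive r."""
    closed = set(triples)
    changed = True
    while changed:  # repeat until no new triple can be derived
        changed = False
        for a, r1, b in list(closed):
            if r1 not in transitive:
                continue
            for c, r2, d in list(closed):
                if r2 == r1 and c == b and (a, r1, d) not in closed:
                    closed.add((a, r1, d))
                    changed = True
    return closed


def apply_inversion(triples: Set[Triple], inverse_of: Dict[str, str]) -> Set[Triple]:
    """Derive (b r_inv a) from (a r b) for every relation with a known inverse."""
    return {(o, inverse_of[r], s) for s, r, o in triples if r in inverse_of}


facts = {("cup", "inside", "box"), ("box", "inside", "room")}
closure = apply_transitivity(facts, transitive={"inside"})
inverted = apply_inversion(closure, inverse_of={"inside": "contains"})

print(("cup", "inside", "room") in closure)     # True
print(("room", "contains", "cup") in inverted)  # True
```

Rules of this kind are hand-written, which is the limitation noted later for approaches that rely on manually designed rules.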

[44] explain their choice of fuzzy logic for “its resemblance to human reasoning and natural language.” This is a probabilistic approach which attempts to deal with uncertainty.

[4] propose that human thought constructs can be modelled as cause-effect pairs. Commonsense is often described as the ability to draw causal conclusions from basic knowledge, for example:

And [25] state that “when people perform explicit reasoning, they can typically describe the way to the conclusion step by step via relational descriptions.”

But the most plausible hypothesis in our view is that of Schon et al. [127]: in order to emulate human reasoning, systems need to be flexible, be able to deal with contradicting evidence, evolving evidence, have access to enormous amounts of background knowledge, and include a combination of different techniques and logics. Most notably, no particular theory of reasoning is given. The argument put forward by Leslie Kaelbling at IBM Neuro-Symbolic AI Workshop 2022

There is strong agreement that a successful NeSy system will be characterized by compositionality [6,12,15,22,28,50,51,144]. Compositionality allows for the construction of new meaning from learned building blocks, thus enabling extrapolation beyond the training data distribution. To paraphrase Garcez et al., one should be able to query the trained network using a rich description language at an adequate level of abstraction [51]. The challenge is to come up with dense, compact, differentiable representations while preserving the ability to decompose, or unbind, the learned representations for downstream reasoning tasks.
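The bind/unbind idea can be sketched with a toy example in the spirit of Tensor Product Representations [135]: each filler vector is bound to a role vector by an outer product, the bindings are summed into one tensor, and a filler is recovered by multiplying the tensor with its role vector. This is a minimal pure-Python sketch under the assumption that the role vectors are orthonormal (which makes unbinding exact); the vectors, role names, and helper functions are hypothetical and not taken from any reviewed study.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def outer(filler: Vector, role: Vector) -> Matrix:
    """Outer product filler ⊗ role."""
    return [[f * r for r in role] for f in filler]


def bind(fillers: List[Vector], roles: List[Vector]) -> Matrix:
    """Superimpose all filler/role bindings into a single tensor (here a matrix)."""
    tensor = [[0.0] * len(roles[0]) for _ in range(len(fillers[0]))]
    for filler, role in zip(fillers, roles):
        for i, row in enumerate(outer(filler, role)):
            for j, value in enumerate(row):
                tensor[i][j] += value
    return tensor


def unbind(tensor: Matrix, role: Vector) -> Vector:
    """Recover the filler bound to `role`; exact only for orthonormal roles."""
    return [sum(t * r for t, r in zip(row, role)) for row in tensor]


# Hypothetical filler embeddings and orthonormal role vectors.
cat, mat = [1.0, 0.0, 2.0], [0.0, 3.0, 1.0]
subject_role, object_role = [0.6, 0.8], [-0.8, 0.6]

sentence = bind([cat, mat], [subject_role, object_role])
recovered_subject = unbind(sentence, subject_role)
recovered_object = unbind(sentence, object_role)
print([round(x, 6) for x in recovered_subject])
print([round(x, 6) for x in recovered_object])
```

Every operation here is a sum of products, so the representation stays differentiable while the individual constituents remain recoverable, which is the property the paragraph above asks for.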

One such system, proposed by Bianchi et al. [14] is the

Other studies [103,162] introduce logical inference within their solutions, but all require manually designed rules, and are limited by the domain expertise of the designer. Learning rules from data, or structure learning [42], is an ongoing research topic, as pointed out by [152]. In [23] Chaturvedi et al. use fuzzy logic for emotion classification where explicit membership functions are learned. However, as stated by the authors, the classifier becomes very slow as the number of functions grows.

In summary, formulating logic, or more broadly reasoning, in a differentiable fashion remains challenging.
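One common way to make logical connectives differentiable is to relax truth values from {0, 1} to the interval [0, 1] and replace the connectives with smooth arithmetic. The sketch below uses product-based fuzzy operators and evaluates the sentence “Not all birds can fly” used earlier as an FOL example. It is a generic illustration rather than the formulation of any specific reviewed study; the function names, the choice of product operators, and the truth degrees are all assumptions.

```python
from math import prod
from typing import Dict, Iterable, Tuple


def f_not(a: float) -> float:
    return 1.0 - a


def f_and(a: float, b: float) -> float:
    return a * b  # product t-norm


def f_or(a: float, b: float) -> float:
    return a + b - a * b  # probabilistic sum, dual of the product t-norm


def f_implies(a: float, b: float) -> float:
    return f_or(f_not(a), b)  # material implication: not a, or b


def f_forall(values: Iterable[float]) -> float:
    return prod(values)  # product aggregation over all individuals


# Hypothetical truth degrees: name -> (Bird(x), Fly(x)).
individuals: Dict[str, Tuple[float, float]] = {
    "sparrow": (1.0, 1.0),
    "penguin": (1.0, 0.0),
}

all_birds_fly = f_forall(f_implies(bird, fly) for bird, fly in individuals.values())
not_all_birds_fly = f_not(all_birds_fly)
print(not_all_birds_fly)  # 1.0
```

With crisp inputs the operators reduce to classical logic, and with intermediate values they are polynomial in their arguments, so gradients can flow through them. Which relaxation is appropriate for a given kind of reasoning is part of what remains open.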

Limitations & future work

We organized our analysis according to the characteristics extracted from the studies to test whether there were any patterns leading to NeSy goals. Another approach would be to reverse this perspective, and look at each goal separately to understand the characteristics leading to its fulfillment. However, each goal is really an entire field of study in and of itself, and we do not think we could have done justice to any of them by taking this approach. We spent a lot of time looking for signal in a very noisy environment where the studies we reviewed had very little in common. More can be said about what we did not find, than what we did. Another approach might be to narrow the criteria for the type of NLP task, while expanding the technical domain. In particular, a subset of tasks from the NLU domain could be a good starting point, as these tasks are often said to require reasoning.

We tried to be comprehensive with respect to the selected studies, which led to the trade-off of less space dedicated to technical details or additional context from the neuro-symbolic discussion. There are a lot of ideas and concepts which we did not cover, such as, and in no particular order, Relational Statistical Learning (RSL), Inductive Logic Programming (ILP), DeepProbLog [101], Connectionist Modal Logics (CML), Extreme Learning Machines (ELM), Genetic Programming, grounding and propositionalization, Case Based Reasoning (CBR), Abstract Meaning Representation (AMR), to name but a few, some of which are covered in detail in other surveys [12,50].

Furthermore, we argued that we need differentiable forms of different types of logic, but we did not discuss how they might be implemented. A comprehensive point of reference on such implementations would be a very valuable contribution to the NeSy community, especially if the implementations were anchored in cognitive science and linguistics as discussed in Section 7.1.

Finally, the need for common datasets and benchmarks cannot be overstated.

Conclusion

We analyzed recent studies implementing NeSy for NLP in order to test whether the promises of NeSy are materializing in NLP. We attempted to find a pattern in a small and widely variable set of studies, and ultimately we do not believe there are enough results to draw definitive conclusions. Only 59 studies met the criteria for our review, and many of them (in the

Our view is that the lack of consensus around theories of reasoning and appropriate benchmarks is hindering our ability to evaluate progress. Hence we advocate for the development of robust reasoning theories and formal logics as well as the development of challenging benchmarks which not only measure the performance of specific implementations, but have the potential to address real world problems. Systems capable of capturing the nuances of natural language (i.e., ones that “understand” human reasoning) while returning sound conclusions (i.e., perform logical reasoning) could help combat some of the most consequential issues of our times such as mis- and dis-information, corporate propaganda such as climate change denialism, divisive political speech, and other harmful rhetoric in the social discourse.

Footnotes

Acknowledgements

This publication has emanated from research supported in part by a grant from Science Foundation Ireland under Grant number 18/CRT/6183. For the purpose of Open Access, the author has applied a CC BY public copyright licence to any Author Accepted Manuscript version arising from this submission.

NeSy and Kautz categories

| NeSy (ours) | Kautz | Refs. |
|---|---|---|
| Sequential | 1. symbolic Neuro symbolic | [2,4,17,19,26,30,31,35,40,44,47,56,59,67,68,85,89,94,100,114,127,140,141,158,167] |
| Nested | 2. Symbolic[Neuro] | [23,27,29,110] |
| Cooperative | 3. Neuro; Symbolic | [32,91,103,116,133,134,153,157,162] |
| Compiled | 4. Neuro: Symbolic → Neuro | [24,36,60,70,73,75,83,95,124,128,149,159,165,166] |
| Compiled | 5. Neuro_Symbolic | [1,14,25,58,69,71,93] |

Allowed values

| Feature | Allowed values |
|---|---|
| Business application | Annotation, Argumentation mining, Causal Reasoning, Decision support, Dialog system, Emotion recognition, Entity Linking, Entity Resolution, Image captioning, Information extraction, KG Completion / link prediction, Language modeling, N2F, Opinion extraction, Question answering, Reading comprehension, Relation extraction, Sentiment analysis, Text classification, Text games, Text summarization |
| Technical application | Clustering, Generative, Inference, Classification, Information extraction, Similarity |
| Type of learning | Supervised, Unsupervised, Semi-supervised, Reinforcement, Curriculum |
| Type of reasoning | Implicit, Explicit, Both |
| Language structure | Yes, No |
| Relational structure | Yes, No |
| NeSy goals | Reasoning, OOD Generalization, Interpretability, Reduced data, Transferability |
| Kautz category | 1. symbolic Neuro symbolic, 2. Symbolic[Neuro], 3. Neuro; Symbolic, 4. Neuro: Symbolic → Neuro, 5. Neuro_Symbolic, 6. Neuro[Symbolic] |
| NeSy category | Sequential, Nested, Cooperative, Compiled |

Publishers

Publishers included in the search

| American Association for the Advancement of Science |

| American Chemical Society |

| American Institute of Physics |

| American Society for Microbiology |

| Association for Computing Machinery (ACM) |

| Association for Computational Linguistics (ACL) |

| Cairo University |

| Chongqing University of Posts and Telecommunications |

| Elsevier |

| Emerald |

| IEEE |

| IOS Press |

| Institute for Operations Research and the Management Sciences |

| King Saud University |

| MIT Press |

| Mary Ann Liebert |

| Morgan & Claypool Publishers |

| Now Publishers Inc |

| Optical Society of America |

| Oxford University Press |

| Public Library of Science |

| SAGE |

| Society for Industrial and Applied Mathematics |

| Springer Nature |

| Taylor & Francis |

| University of California Press |

| University of Minnesota |

| Wiley-Blackwell |

Acronyms

| DLs | Description Logics |

| GAT | Graph Attention Network |

| GCN | Graph Convolutional Network |

| GNN | Graph Neural Network |

| GPT3 | Third generation Generative Pre-trained Transformer |

| IJCAI | International Joint Conference on Artificial Intelligence |

| ILP | Inductive Logic Programming |

| KG | Knowledge Graphs |

| KGC | Knowledge Graph Completion |

| KGQA | Knowledge Graph Question Answering |

| KR | Knowledge Representation |

| KRR | Knowledge Representation & Reasoning |

| LNN | Logical Neural Networks |

| LLM | Large Language Models |

| LSTM | Long Short Term Memory |

| LTN | Logic Tensor Network |

| ML | Machine Learning |

| MLN | Markov Logic Network |

| MLP | Multilayer Perceptron |

| MWP | Math Word Problem |

| NE | Neuroevolution |

| NeSy | Neuro-Symbolic AI |

| NL | Natural Logic |

| NLI | Natural Language Inference |

| NLG | Natural Language Generation |

| NLM | Neural Logic Machine |

| NLP | Natural Language Processing |

| NLU | Natural Language Understanding |

| NN | Neural Network |

| NS-CL | Neuro-Symbolic Concept Learner |

| NTN | Neural Tensor Network |

| NTP | Neural Theorem Prover |

| OOD | Out-of-distribution |

| OOP | Object-oriented programming (paradigm) |

| OWL | Web Ontology Language |

| ProbLog | Probabilistic Logic Programming |

| RcNN | Recursive Neural Network |

| RL | Reinforcement Learning |

| RNKN | Recursive Neural Knowledge Network |

| RNN | Recurrent Neural Network |

| SOTA | State of the Art |

| SVM | Support Vector Machine |

| TPR | Tensor Product Representation |

| TSP | Traveling Salesperson Problem |

| ∂ILP | Differentiable Inductive Logic Programming |