Abstract

Recent years have witnessed a shift from the potential utility of digitisation to a crucial need to enjoy activities virtually. Before 2019, data curators recognised the utility of data digitisation; during the lockdowns caused by COVID-19, investing in virtual and remote activities became crucial for culture to survive, as no one could enjoy Cultural Heritage in person. The Cultural Heritage community heavily invested in digitisation campaigns, mainly modelling data as Knowledge Graphs, and became one of the most successful application domains of Semantic Web technologies.

Despite the vast investment in Cultural Heritage Knowledge Graphs, the syntactic complexity of RDF query languages, e.g., SPARQL, hampers data exploitation and risks leaving this enormous potential untapped. Thus, we aim to support the Cultural Heritage community (and everyone interested in Cultural Heritage) in querying Knowledge Graphs without requiring technical competencies in Semantic Web technologies.

We propose an engaging exploitation tool accessible to all without losing sight of developers’ technological challenges. Engagement is achieved by letting the Cultural Heritage community leave the passive position of the visitor and actively create their Virtual Assistant extensions to exploit proprietary or public Knowledge Graphs in question-answering. By accessible to all, we mean that the proposed software framework is freely available on GitHub and Zenodo with an open-source license. We do not lose sight of developers’ technical challenges, which are carefully considered in the design and evaluation phases.

This article first analyses the effort invested in publishing Cultural Heritage Knowledge Graphs to quantify the data that developers can rely on when designing and implementing data exploitation tools in this domain. Moreover, we point out challenges developers may face in exploiting them in automatic approaches. Second, it presents a domain-agnostic Knowledge Graph exploitation approach based on virtual assistants, as they naturally enable question-answering features where users formulate questions in natural language directly from their smartphones. Then, we discuss the design and implementation of this approach within an automatic community-shared software framework (a.k.a. generator) of virtual assistant extensions and its evaluation in terms of performance and perceived utility according to end-users. Finally, according to a taxonomy of the Cultural Heritage field, we present a use case for each category to show the applicability of the proposed approach in the Cultural Heritage domain. In overviewing our analysis and the proposed approach, we point out challenges that a developer may face in designing virtual assistant extensions to query Knowledge Graphs, and we show the effect of these challenges in practice.

Introduction

In the last decade, public institutions and private organisations have invested in massive digitisation campaigns to create (and co-create [18]) vast digital collections, repositories, and portals that allow online and direct access to billions of resources [29]. Digitisation has caused an extraordinary acceleration in digital transformation processes [1] that has affected every field, from education to business models [38], from health care [24] to Cultural Heritage (CH) [1]. Focusing on the CH field, public and private organisations have invested in digitising any form of data to ensure its long-term preservation and support the knowledge economy [29].

The United Nations Educational, Scientific and Cultural Organisation (UNESCO) defines CH as “the legacy of physical artifacts and intangible attributes of a group or society inherited from past generations, maintained in the present and bestowed for the benefit of future generations” [41]. CH includes tangible culture (such as buildings, monuments, landscapes, books, works of art, and artifacts); intangible culture (such as folklore, traditions, language, and knowledge), and natural heritage (including culturally significant landscapes, and biodiversity) [41].

Nowadays, CH has become one of the most successful application domains of the Semantic Web technologies [10]. Both public institutions (e.g., galleries, libraries, archives, and museums, a.k.a. GLAM institutions) and private providers modelled and published CH as Knowledge Graphs (KGs), i.e., a combination of ontologies to model the domain of interest and data published in the linked open data (LOD) format [34], both as independent datasets or by enriching aggregators (such as Europeana [22]) [10].

The availability of CH data in digital machine-processable form has enabled a new research paradigm called Digital Humanities [10], which aims to help researchers, practitioners, and generic users consume cultural objects [21]. CH as LOD improves data re-usability and allows easier integration with other data sources [10]. It is a promising approach to face CH challenges, such as syntactic and semantic heterogeneity, multilingualism, semantic richness, and interlinking nature [21].

However, KG exploitation is mainly affected by i) required technical competencies in generic query languages, such as SPARQL, and in understanding the semantics of the supported operators [47], which is too challenging for lay users [4,9,17,32,47], and ii) conceptualisation issues to understand how data are modelled [4,47].

Natural Language (NL) interfaces mitigate these issues, enabling more intuitive data access and unlocking the potential of KGs for the majority of end-users [23]. NL interfaces provide lay users with question answering (QA) functionalities where users can adopt their own terminology and receive a concise answer. Researchers argue that multi-modal communication with virtual characters using NL is a promising direction in accessing KGs [6]. Consequently, virtual assistants (VAs) have witnessed an extraordinary and increasing interest as they naturally behave as QA systems. Many companies and researchers have combined (CH) KGs and VAs [3,7,29], but no one has provided end-users with a generic methodology to automatically generate extensions for querying KGs.

To fill this gap, our goal is the definition of a general-purpose approach that makes KGs accessible to all by requiring minimal or no technical knowledge in Semantic Web technologies. VAs usually give the possibility to extend their capabilities by programming new features, also referred to as VA extensions. This implies that (potentially) everyone can implement custom extensions and personalise the VA behaviour. However, playing the VA extension creator’s role requires programming competencies to design and implement the application logic. Moreover, users must be aware that VA extensions are provider-dependent, meaning that an extension implemented for Alexa will not be directly reusable for other providers.

We aim to empower lay users by letting them leave the passive position of VA users and play the role of VA extension creators, requiring little or no technical competencies. We reformulate the goal of this work as i) enabling QA over KGs (KGQA) by VAs and ii) allowing (lay) users to automatically create ready-to-use VA extensions to query KGs by popular VAs, e.g., Amazon Alexa and Google Assistant. Thus, we propose a community-shared software framework (a.k.a. generator) that enables lay users to create custom extensions for performing KGQA for any cloud provider, unlocking the potential of the Semantic Web technologies by bringing KGs into everyone’s “pocket”, accessible from smartphones or smart speakers.

To determine the quantity of CH data modelled as KGs on which developers can rely in designing data exploitation tools in this domain, we overview the CH community effort to create, publish, and maintain KGs belonging to any category determined by the CH taxonomy. During the analysis, we point out which KG aspects and challenges developers may face in designing an automatic approach to exploit CH KGs. This analysis serves as a starting point to design the proposed domain-agnostic approach to query (CH) KGs via VAs. We materialise this approach in an automatic generator of VA extensions provided with KGQA functionalities. We summarise the configuration of the generator and the process of creating a VA extension in Fig. 1. The generator architecture in Fig. 1 represents the community-shared software framework that will be detailed in Fig. 6. The process starts with a user-defined URL pointing to a working SPARQL endpoint of interest. The returned VA extension is ready to use, and it can be used to perform QA, as simulated in Fig. 1; the VA extension behaviour is fully detailed in Fig. 5. We overview VA extensions in the CH field as use cases. In particular, we present a VA extension for each CH data category to demonstrate the generator in action and show that the proposed approach is general enough to work with any CH data. To assess the quality of the produced VA extensions and draw out differences in generator configuration options, we design VA extensions for well-known general-purpose KGs, i.e., DBpedia and Wikidata, and we evaluate them on a standard evaluation benchmark for KGQA systems, i.e., QALD. Finally, i) we perform a preliminary user experience study to estimate the usability, according to CH experts, of an auto-generated VA extension for the UNESCO Thesaurus, and ii) we collect the perceived impact and utility of the proposed approach according to end-users and data curators.

The major contributions of this paper follow.

A design methodology to enable lay-users without technical competencies in programming and query languages to author VA extensions (Section 4).

An approach to make KGs compliant with VAs for the KGQA task (Section 4).

A software tool architecture to automatically generate personalised, configurable, and ready-to-use VA extensions where ready-to-use means that they can be uploaded on VA service providers as manually generated ones (Section 5).

The open-source release of the software framework v1.0 that supports Amazon Alexa, publicly available on the project GitHub repository.1

1 mapellegrino/virtual_assistant_generator. Permanent URI:

A detailed review and analysis of the CH community effort in publishing KGs and registering them in standard dataset repositories (Section 3).

The open-source release of a pool of Alexa skills resulting from the generator exploitation to query CH KGs (Section 6 and GitHub repository1). We present a use case for each CH category. In particular, for the tangible category, we propose the Mapping Manuscript Migrations (MMM) use case for the movable sub-category and the Hungarian museum use case for the immovable one; DBTune for the intangible category; and NaturalFeatures for the natural heritage category. During the analysis, we noticed a particular interest in curating CH terminology and modelling approaches through thesauri and models. Therefore, we also present the UNESCO thesaurus use case for the Terminology category.

The rest of this article is structured as follows: Section 2 overviews related work in (CH) KGQA by traditional approaches and by VAs; Section 3 quantifies the CH community effort in publishing KGs by analysing the status of the provided services and the amount of published data. This analysis aims to justify the advantages of investing in designing and developing technological solutions to engage lay users interested in CH and exploit the vast amount of available data in this domain. Section 4 details the proposed domain-agnostic approach to query KGs by VAs by pointing out technological challenges in interfacing KGs and VAs, providing design principles and the implementation methodology, and discussing its strengths and limitations. Section 5 overviews the VA extension generator that embeds the proposed general-purpose approach in querying KGs, while Section 6 presents a pool of VA extensions to query CH KGs by showing the general approach in a domain-specific application and by focusing on the impact of the design challenges in the CH context. Section 7 first assesses the performance of the generated VA extensions by evaluating their accuracy in general-purpose use cases (DBpedia and Wikidata) by using standard evaluation benchmarks, the QALD dataset. Second, it reports the user experience of the HETOR group in using the UNESCO VA extension to simulate the support in class in clarifying terminology and term hierarchies concerning CH, and, finally, discusses the impact and the potentialities of the proposed approach according to end-users and CH experts. Finally, the article concludes with some final remarks and future directions.

Regarding the integration of CH KGs and chatbots, we can cite the chatbot proposed by Lombardi et al. [27] to support users during archaeological park visits in Pompeii by simulating the interaction between visitors and a real guide to improve the touristic experience by exploiting NL processing techniques. In the same direction, Pilato et al. [35] proposed a community of chatbots (with specialised or generic competencies) developed by combining the Latent Semantic Analysis methodology and the ALICE technology.

These works are evidence of the interest in developing KGQA via VAs by promoting interesting applications to make CH KGs interoperable with VAs to accomplish the QA task, but they do not empower end-users by providing them with the opportunity to create their own VA extensions. The main difference between our proposal and the ones reported so far is that the literature proposes ready-to-use VA extensions, while we propose a generator of VA extensions bound to neither a specific KG nor a specific VA provider. To the best of our knowledge, the proposed community-shared software framework is the first attempt to let users without technical competencies in the Semantic Web technologies create KGQA systems via VAs. This represents the main novelty of our proposal.

Cultural heritage knowledge graph analysis

This section analyses the CH community effort in publishing CH data as KGs, making them accessible by either SPARQL endpoints or APIs, maintaining working SPARQL endpoints in most cases, and attaching human-readable labels to resources to make them accessible by NL interfaces. The analysis aims to make evident the potential of proposing exploitation tools in this application domain, given the vast amount of available data. In particular, this survey quantifies the available CH KGs that can serve as sources for the proposed generator, and it estimates some of the aspects that are crucial for making data accessible by any data exploitation tool, such as accessibility through a working SPARQL endpoint, and by NL interfaces, such as VA providers, which require labels attached to resources.

First, it overviews the sources used to retrieve the analysed KGs; second, it provides KG details and a quantitative analysis of available data; finally, it points out aspects to consider when proposing an exploitation tool for (CH) KGs.

Selection approach It is worth clarifying that we do not aim to provide a complete overview of all published KGs in the CH context, but the described selection process seeks to point out the absence of bias in the selected KGs and, consequently, the impartiality of the considerations reported in the performed analysis.

We perform the KG selection as a non-technical user by looking at available aggregators of published KGs and querying their user interfaces. We exploit the LOD cloud [30] (updated in May 2020), as it is one of the biggest aggregators of published KGs, and a combination of dataset and article search engines. In particular, we explore dataset aggregators not specifically related to the Semantic Web, such as DataHub [33]. Finally, we consider recent publications available in Scopus to also identify recently published KGs. The variety of queried sources aims to demonstrate the lack of bias in the performed analysis. We collect more than

We exploit the LOD cloud [30] search interface to retrieve KGs containing museum, library, archive, cultur*, heritage, bibliotec*, natural, biodiversity, geodiversity as keywords that might be used in KG titles. It is worth noting that the search engine requires that the dataset title includes English terms, but it does not pose any constraint on the provider country.

We retrieve datasets registered in the DataHub with format equals to

We inspect articles indexed by Scopus and matching the article title, abstract, and keyword filter ("cultural heritage" and ("semantic web" OR "linked data" OR "knowledge graph")) from 2018 to 2020 (i.e., the last two years). It results in

KG details According to the taxonomy of the CH term, we classify CH KGs according to their content by distinguishing tangible (further classified as movable and immovable) (see Table 1), intangible (see Table 2) and natural heritage (see Table 3). Moreover, we notice a notable number of KGs dedicated to clarifying and modelling CH terminology, reflecting the effort invested in defining thesauri and data models. Therefore, we also consider the terminology class, as reported in Table 4. If a KG contains elements belonging to multiple classes, we list it under each of them. For each KG, we report the original name, the country of the provider, the service that enables data exploitation (SPARQL endpoint or API), and the SPARQL endpoint status (working or unavailable). The latter represents the assessment of data accessibility that is required by any data exploitation tool. For each KG, we also generate a short name (mainly combining country and some name keywords clarifying KG content) to refer to it quickly in the following analysis. Main observations follow.

Colour versions of these images are available on GitHub at CH-KG-distribution-Europe.png and CH-KG-distribution-worldwide.png.

Geographical distribution of CH KGs. The bubble size represents the number of available CH KGs.

CH KG SPARQL endpoints status. While blue represents working SPARQL endpoints, red represents unavailable ones.

Overview of KGs related to tangible CH. It contains the sub-category (movable or immovable), a short name of the KG to shorten later references, the complete name, the country of the provider, the service that enables the LOD exploitation (SPARQL endpoint or API), and the SPARQL endpoint status (✓ means that it works, an empty cell means that it does not, and a hyphen means not applicable)

Overview of KGs related to intangible CH. It contains a short name of the KG to shorten later references, the complete name, the country of the provider, the service that enables the LOD exploitation (SPARQL endpoint or API), and the SPARQL endpoint status (✓ means that it works, an empty cell means that it does not, and a hyphen means not applicable)

Overview of KGs related to natural heritage. It contains a short name of the KG to shorten later references, the complete name, the country of the provider, the service that enables the LOD exploitation (SPARQL endpoint or API), and the SPARQL endpoint status (✓ means that it works, an empty cell means that it does not, and a hyphen means not applicable)

Overview of KGs related to terminology. It contains the sub-category (thesaurus or model), a short name of the KG to shorten later references, the complete name, the country of the provider, the service that enables the LOD exploitation (SPARQL endpoint or API), and the SPARQL endpoint status (✓ means that it works, an empty cell means that it does not, and a hyphen means not applicable)

The colour version of the same image is available on GitHub at SPARQL-endpoint-status.png.

Quantitative overview of available data Concerning data quantity, we consider the number of collected datasets and the number of classes, predicates and triples accessible by a working SPARQL endpoint. We quantify CH KG data to gauge the sources that can be exploited by automatic data exploitation tools behaving as SPARQL query builders. From a quality point of view, we report the percentage of classes and predicates provided with a human-readable label, which is a crucial aspect for NL interfaces, such as VA extensions. For each working SPARQL endpoint listed in Tables 1–4, we retrieve:

classes, both used classes returned by the

properties, both used properties returned by the

triples returned by the
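The exact queries behind these counts are not reproduced in the text above. As a hedged illustration only, the sketch below shows generic SPARQL counting queries that could collect comparable statistics. The query strings, the function names, and the choice of rdfs:label as the label predicate are assumptions, not the queries used in the analysis.

```python
import json
import urllib.parse
import urllib.request

# Assumed, generic counting queries (not taken from the article).
USED_CLASSES = "SELECT (COUNT(DISTINCT ?c) AS ?n) WHERE { ?s a ?c }"
USED_PROPERTIES = "SELECT (COUNT(DISTINCT ?p) AS ?n) WHERE { ?s ?p ?o }"
TRIPLES = "SELECT (COUNT(*) AS ?n) WHERE { ?s ?p ?o }"


def declared_query(type_iri: str) -> str:
    """Count resources explicitly declared with the given type, e.g. owl:Class."""
    return f"SELECT (COUNT(DISTINCT ?x) AS ?n) WHERE {{ ?x a <{type_iri}> }}"


def labelled_classes_query() -> str:
    """Count used classes that carry an rdfs:label in any language."""
    return (
        "PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#> "
        "SELECT (COUNT(DISTINCT ?c) AS ?n) WHERE { ?s a ?c . ?c rdfs:label ?l }"
    )


def label_coverage(labelled: int, total: int) -> float:
    """Percentage of classes (or properties) provided with a label."""
    return 0.0 if total == 0 else round(100.0 * labelled / total, 2)


def run_count(endpoint: str, query: str, timeout: float = 60.0) -> int:
    """Send a counting query over HTTP GET and read the ?n binding.

    Assumes the endpoint URL has no query string of its own and that it
    returns SPARQL JSON results.
    """
    url = endpoint + "?" + urllib.parse.urlencode({"query": query})
    request = urllib.request.Request(
        url, headers={"Accept": "application/sparql-results+json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        bindings = json.load(response)["results"]["bindings"]
    return int(bindings[0]["n"]["value"])
```

Counting queries of this kind can time out on large endpoints, which would be one plausible reason for an endpoint to fail a query in this kind of analysis.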

Overview of classes in CH KGs (by only considering SPARQL endpoints). It contains the used classes and the classes declared as skos:Concept, rdfs:Class and owl:Class. Moreover, it contains the percentage of classes provided with a label (regardless of its language). Grey lines are endpoints which fail at least one SPARQL query

Overview of properties in CH KGs and triples (by only considering SPARQL endpoints). It contains the used properties and the properties declared as owl:ObjectProperty, owl:DatatypeProperty and rdf:Property. Moreover, it contains the percentage of properties provided with a label (regardless of its language). Grey lines are endpoints which fail at least one SPARQL query

Main observations follow, and they should guide developers in designing automatic data exploitation tools by considering technical constraints posed by available data access points and data properties.

This section introduces the design methodology to make KGs compliant with VAs to address the KGQA task. We focus on Amazon Alexa and its terminology without losing generality, as the same considerations can also be adapted for other customizable providers. Alexa VA extensions are named

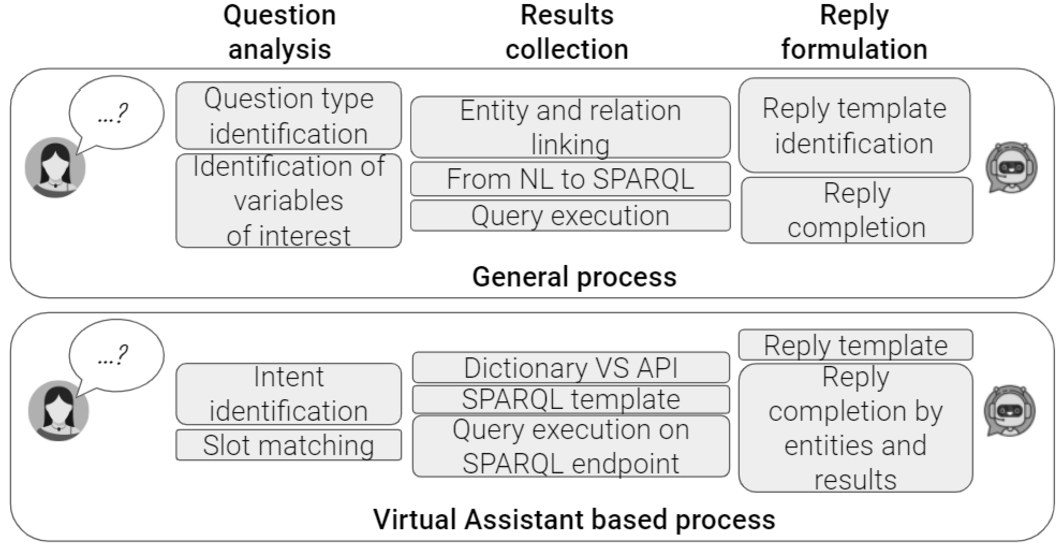

The KGQA task can be defined as follows: given an NL question Q and a KG K, the QA system produces the answer A, which is either a subset of entities in K or the result of a computation performed on this subset, such as counting or assertion replies [46]. We draw a parallel between a general process for KGQA and a VA-based process (see Fig. 4).

Parallel of a general and a VA-based KGQA process.

A general KGQA workflow is composed of the question analysis phase, followed by the query construction to retrieve results [12]. We extend this workflow by adding a final step to formulate an NL reply to verbalise the retrieved results and return it to the user. Consequently, the high-level KGQA workflow is an adaptation of the methodological approach proposed in the literature by Diefenbach et al. [12]. How this general approach has been narrowed down to a VA-based process is an original contribution of this paper. The general process reports a high-level approach detailing terminology commonly used in the context of KGQA. By contrast, the VA-based process narrows it down to terms related to VA extensions (such as intents and slots) and reports low-level details considered in implementing KGQA via VAs. For instance, while the general phase to retrieve the entity or predicate URI attached to an NL label is usually named linking, it might be implemented by using dictionaries or calling APIs in the VA-based process. While the general process focuses on the high-level role of each component, the VA-based process considers VA peculiarities and low-level implementation alternatives.
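The three phases described above (question analysis, query construction, reply formulation) can be sketched end to end on a toy example. This is a minimal illustration under stated assumptions: the in-memory triple list stands in for a KG behind a SPARQL endpoint, and the single regular-expression template and all function names are hypothetical.

```python
import re

# Toy KG as (subject, predicate, object) label triples; purely illustrative.
KG = [
    ("Mona Lisa", "painter", "Leonardo da Vinci"),
    ("Mona Lisa", "museum", "Louvre"),
]

# One hypothetical question template: "who/what is the <predicate> of <entity>?"
TEMPLATE = re.compile(
    r"^(?:who|what) is the (?P<predicate>.+?) of (?P<entity>.+?)\??$", re.I
)


def analyse(question):
    """Question analysis: identify the question type and extract the mentions."""
    match = TEMPLATE.match(question.strip())
    if match is None:
        return None
    return {"type": "property_of_entity", **match.groupdict()}


def construct_and_run(analysis):
    """Query construction: here a triple-pattern match over the toy KG."""
    return [
        o
        for s, p, o in KG
        if s.lower() == analysis["entity"].lower()
        and p.lower() == analysis["predicate"].lower()
    ]


def formulate_reply(analysis, results):
    """Reply formulation: verbalise the raw results as a sentence."""
    if not results:
        return "Sorry, I could not find an answer."
    return f"The {analysis['predicate']} of {analysis['entity']} is {', '.join(results)}."


def answer(question):
    """Run the three phases in sequence."""
    analysis = analyse(question)
    if analysis is None:
        return "Sorry, I did not understand the question."
    return formulate_reply(analysis, construct_and_run(analysis))
```

In a VA-based process, the `analyse` step would be delegated to the VA's own NL processing component, which returns the matched intent and its slot values instead of a regular-expression match.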

The question analysis step performs the question type identification and the linking phase. The query construction phase formulates the SPARQL query corresponding to the NL question and runs it on a SPARQL endpoint to retrieve raw results. During the reply formulation step, retrieved results are organised as an NL reply. In a VA-based process, users pose a question in NL by pronouncing or typing it via a VA app or dedicated device (e.g., Alexa app/device). During the question analysis phase, VAs interpret the request and identify the intent that matches the user query by an NL processing component. During the intent identification, VAs also solve intent slots. For instance, suppose that we implement a VA extension representing a thesaurus to recognise questions related to term definition. It might expect requests matching the template

Based on the analysis described in Section 3 and the overviewed KG aspects and issues, we identified the following challenges that must be faced in designing VA extensions to enable KGQA.

Principles and methodology

This section describes the proposed approach to design and implement a VA extension to enable KGQA by focusing on Amazon Alexa as a VA provider. It details the introduced concepts related to Alexa skills and the proposed implementation of a KGQA VA extension. This choice does not cause a loss of generality, since the approach can be easily adapted to any other VA that enables the definition of custom VA extensions, such as Google Assistant, or to bots implemented with Microsoft Azure Bot Service or Googlebot. We opt for Alexa over plausible alternatives as Amazon Alexa holds the record for the greatest number of devices sold among providers. Moreover, the architecture of the generator eases the integration of novel VA providers, such as Google Assistant, which is currently under integration.

Amazon Alexa skills Functionalities in Alexa are called skills. Among the supported types of Alexa skills, we are interested in custom Alexa skills, where we can define the requests the Alexa skill can handle (intents) and the words users say to invoke those requests (utterances) [11]. An Alexa skill developer has to define a set of intents that represent actions that users can do with the resulting VA extension; a collection of sample utterances that specify the words and phrases users can use to invoke the supported intents; an invocation name that identifies and wakes up the resulting Alexa skill; and a cloud-based service that accepts and fulfils these intents. Mapping utterances to intents defines the Alexa skill interaction model. Utterances can contain slots, i.e., variables bound by users when formulating their requests, which can be validated by attaching to each slot a list of valid options during the interaction model definition. The back-end code can be either an AWS Lambda function or a web service. AWS Lambda (an Amazon Web Services offering) is a service that lets developers run code in the cloud without managing servers. When the user poses a question, Alexa recognises the activated intent and communicates both the recognised intent and the slot value(s) to the back-end code. Then, the back-end can perform any necessary action to collect results and elaborate a reply [11].
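To make the relation between invocation name, intents, utterances, and slots concrete, the following sketch assembles an interaction model as a Python dictionary and serialises it to JSON. The nesting is intended to mirror the layout of an Alexa custom-skill interaction model as far as we are aware. The helper name, the intent name `getDefinition`, the slot type `TERM`, and the sample values are hypothetical and are not taken from the generator.

```python
import json


def build_interaction_model(invocation_name, intents, slot_types):
    """Assemble a custom-skill interaction model as a JSON-serialisable dict."""
    return {
        "interactionModel": {
            "languageModel": {
                "invocationName": invocation_name,
                "intents": [
                    {
                        "name": name,
                        # Each slot is a variable bound by the user's request.
                        "slots": [
                            {"name": slot, "type": slot_type}
                            for slot, slot_type in spec["slots"].items()
                        ],
                        # Sample utterances that activate this intent.
                        "samples": spec["utterances"],
                    }
                    for name, spec in intents.items()
                ],
                # Valid options attached to each custom slot type.
                "types": [
                    {
                        "name": type_name,
                        "values": [{"name": {"value": value}} for value in values],
                    }
                    for type_name, values in slot_types.items()
                ],
            }
        }
    }


model = build_interaction_model(
    invocation_name="unesco thesaurus",
    intents={
        "getDefinition": {
            "slots": {"term": "TERM"},
            "utterances": ["what is the definition of {term}", "define {term}"],
        }
    },
    slot_types={"TERM": ["cultural heritage", "folklore"]},
)
print(json.dumps(model, indent=2))
```

Keeping the utterances and slot values as plain data, separate from the assembling function, is what makes it possible to regenerate the model for a different KG or language without touching the code.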

Virtual assistants for question-answering We model each supported SPARQL query template as an intent. The implemented intents (listed in Table 7) are tailored towards SPARQL constructs, and they mainly cover questions related to a single triple enhanced by the refinement of the subject or object class. More in detail, we cover

List of implemented intents by detailing an example that activates the intent, the intent name, an exemplary utterance where slots are represented among braces, and the SPARQL triple used in the SPARQL query formulation step

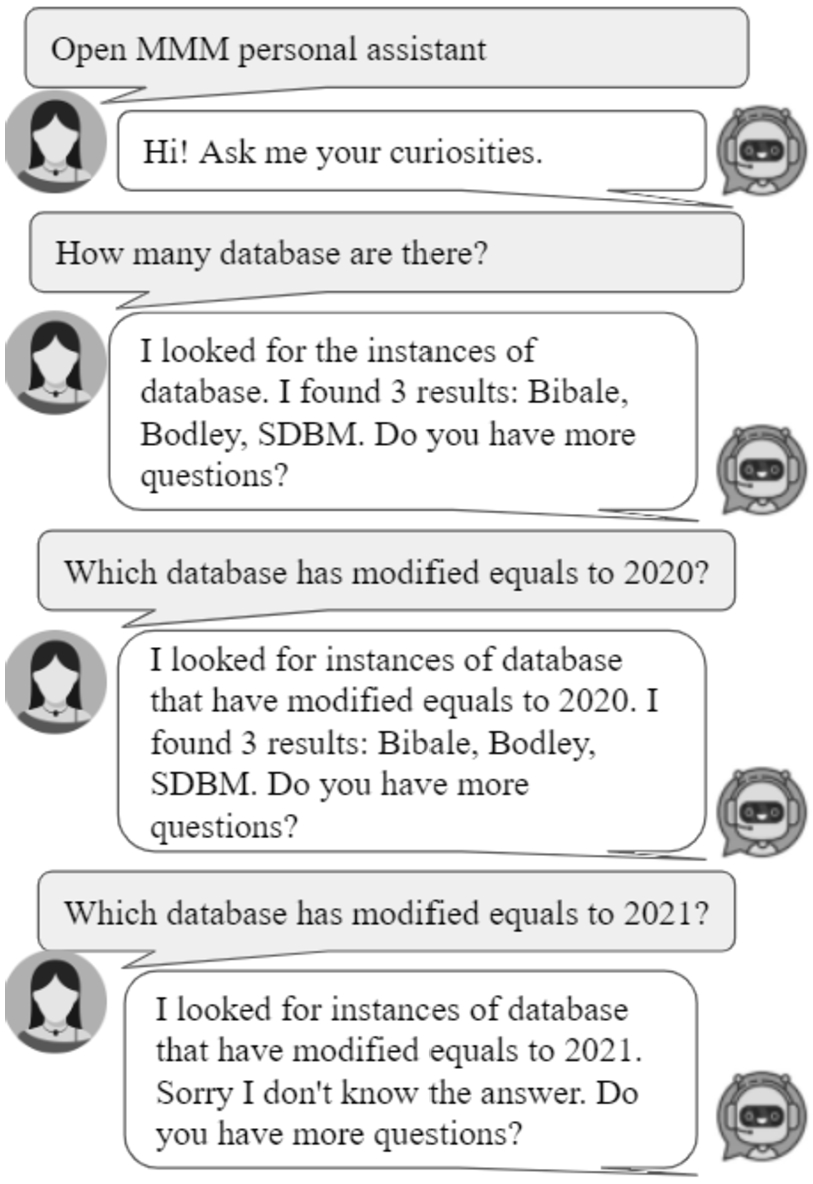

When the end-user poses a question, Alexa identifies the activated intent and notifies the back-end by communicating both the activated intent and the slot(s) values. For instance, in the CH use case reported in Fig. 5, users ask for Mona Lisa’s painter. The VA recognises that it corresponds to the

Graphical representation of the virtual assistant extension components, where the yellow components are knowledge graph dependent, and of the VA extension in action in a cultural heritage use case querying DBpedia.

Consequently, the entity and relation linking phase must be performed. It is worth noting that the performed task is a simplified version of the more general entity and relation linking problem. Entity linking generally refers to identifying entities in a text snippet and matching them to the corresponding KG entities. For instance, in the question Who is the wife of the mayor of Rome? the textual evidence of Rome has to be isolated first, and then it can be mapped to the corresponding KG entity. In our case, named entity textual evidence is already detected by VAs, and we only have to map it to a KG node (like Rome to the node in the graph representing the city of Rome). One way to perform this (simplified) linking phase is a dictionary lookup. In such a case, we store the label-to-URI mapping in a dictionary by querying the URIs of KG classes, predicates, and resources and the corresponding labels. The VA extension back-end exploits the dictionary to retrieve the URI(s) corresponding to NL labels. Resolved entities and predicates are used to complete the SPARQL template. We attach to each intent a different SPARQL query template. Consequently, any NL query posed by end-users is matched to the corresponding intent (according to the VA interaction model), and each intent corresponds to a SPARQL query template (according to our approach). Readers can reconstruct the complete SPARQL query corresponding to each intent by proceeding as follows: introducing the SPARQL triple(s) reported in Table 7 with the SELECT operator and appending the optional request of the label attached to the variable of interest. For instance, the triple

The proposed approach queries KGs in real-time by exploiting up-to-date data and it is entirely KG-independent. Figure 5 makes evident which components must be reconfigured based on the KG of interest and which components can be left unchanged. It is also a general-purpose approach and it can be easily adapted to domain-specific applications (see Section 6). However, the performance of the implemented approach highly depends on the queried KG. In more detail, the quality of the replies depends on the label coverage; the execution time depends on the endpoint settings; the completeness of the reply depends on the endpoint result limit (if any); and label ambiguity limits control over which URIs are accessed.
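The dictionary-based linking and the completion of a SPARQL template described above can be sketched as follows. This is an illustrative sketch in Python rather than the generator's actual Node.js back-end. The dictionaries, the example.org URIs, and the function names are hypothetical, and the query shape (SELECT, triple pattern, OPTIONAL label) follows the reconstruction recipe given in the text.

```python
RDFS = "http://www.w3.org/2000/01/rdf-schema#"

# Hypothetical label -> URI dictionaries, as would be precomputed from the KG.
ENTITIES = {"mona lisa": ["http://example.org/resource/Mona_Lisa"]}
PREDICATES = {"painter": ["http://example.org/ontology/painter"]}


def link(label, dictionary):
    """Simplified linking: case-insensitive dictionary lookup of an NL label."""
    return dictionary.get(label.strip().lower(), [])


def build_query(triple, variable="?x"):
    """SELECT + triple pattern + OPTIONAL label of the variable of interest."""
    label_var = variable + "Label"
    return (
        f"SELECT DISTINCT {variable} {label_var} WHERE {{ "
        f"{triple} . "
        f"OPTIONAL {{ {variable} <{RDFS}label> {label_var} }} }}"
    )


def property_of_entity_query(entity_label, predicate_label):
    """Resolve both slot values and fill a single-triple template."""
    entities = link(entity_label, ENTITIES)
    predicates = link(predicate_label, PREDICATES)
    if not entities or not predicates:
        return None  # missing label coverage: the question cannot be answered
    # Ambiguous labels may map to several URIs; this sketch takes the first.
    return build_query(f"<{entities[0]}> <{predicates[0]}> ?x")
```

The `None` branch and the first-URI choice correspond to two of the dependencies noted above: reply quality is bounded by label coverage, and label ambiguity limits control over which URI is queried.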

As a general process, utterances make no assumption on question interpretation and the application context. The covered SPARQL patterns contain at most three triples. We aim to extend the supported SPARQL patterns by implementing more complex queries. In particular, we are working on conversation-based iterative queries built through consecutive query refinements, which would enable end-users to iteratively refine their questions, for instance, by applying filters consecutively.

The proposed approach is general enough to be exploited both in querying a single KG and multiple KGs by aggregating query results in the reply formulation step, which means improving the back-end implementation without modifying the general approach. At the moment, the generator can be configured to query a single KG at a time. However, we aim to investigate further how to query multiple KGs.

Automatic virtual assistant extensions generator

This section overviews the architecture and implementation of the proposed software framework to automatically generate VA extensions implementing KGQA by requiring little or no technical competencies in programming and query languages. The proposed community-shared software framework is implemented in Python with modularity and extensibility in mind. Our framework allows users to customise VA extension capabilities and generate ready-to-use VA extensions. Each phase is kept separate to satisfy the modularity requirement, and it is implemented as an abstract module. The proposed generator architecture is represented in Fig. 6.

Architecture of the proposed generator of question answering over knowledge graphs by virtual assistants.

The generator takes as input a configuration file containing the VA extension customisation options, as detailed in the following. The configuration file is parsed to verify its syntactic correctness and semantic validity. If both checks pass, the generator returns the interaction model and the back-end implementation. The syntactic check verifies that the configuration file is valid JSON, but it can be replaced according to the configuration file format. The semantic validation is in charge of spotting any configuration conflict and verifying consistency. Once these validations pass, the interaction model is created: for each intent required by the configuration file, the corresponding set of utterances in the language configured by the end-user is extracted from a separate mapping file (stored in the back-end implementation as a JSON file). This design makes it easy to add new supported languages, revise the utterances of each intent, and model new intents. The back-end is implemented in Node.js and maps each intent to the corresponding behaviour. It is configured according to the user language and the SPARQL endpoint of interest. The back-end is returned as a ZIP file containing the Node.js webhook and the implementation of the linking approach. Further details follow.
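The two validation steps can be illustrated with a minimal Python sketch. It is not the generator's actual code: the configuration keys (`invocation_name`, `lang`, `endpoint`, `intents`), the language codes, and the set of known intents are hypothetical and only serve to show how a syntactic check and a semantic check can be kept separate.

```python
import json
from urllib.parse import urlparse

# Hypothetical values: the real generator may use different keys and names.
SUPPORTED_LANGS = {"en", "it"}          # English and Italian, as stated in the text
KNOWN_INTENTS = {"getPropertyObject"}   # one intent named in the article; others omitted
REQUIRED_KEYS = ("invocation_name", "lang", "endpoint", "intents")


def check_syntax(raw: str) -> dict:
    """Syntactic check: the configuration must be a valid JSON object."""
    try:
        config = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"invalid JSON: {exc}") from exc
    if not isinstance(config, dict):
        raise ValueError("configuration must be a JSON object")
    return config


def check_semantics(config: dict) -> list[str]:
    """Semantic check: return the list of detected conflicts (empty if valid)."""
    errors = [f"missing key: {k}" for k in REQUIRED_KEYS if k not in config]
    if errors:
        return errors
    if config["lang"] not in SUPPORTED_LANGS:
        errors.append(f"unsupported language: {config['lang']}")
    parsed = urlparse(str(config["endpoint"]))
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        errors.append("endpoint must be an HTTP(S) URL")
    unknown = sorted(set(config["intents"]) - KNOWN_INTENTS)
    if unknown:
        errors.append(f"unknown intents: {unknown}")
    return errors
```

Keeping the syntactic check in its own function mirrors the remark that it can be replaced when the configuration format changes, while the semantic check works on the parsed dictionary regardless of the original format.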

VA generator input: The configuration file The

Users can manually create the configuration file. Otherwise, they can exploit the

Workflow & output Once provided the VA Generator module with the configuration file, it can start the generation workflow, i.e., i) it checks the syntactical correctness of the configuration file by the

Links for Alexa skill deployment: developer.amazon.com and aws.amazon.com.

Extension points The generator version presented in this article (v1.0) supports the Amazon Alexa provider. Once the configuration file is validated, the Alexa skill components (the JSON interaction model and the ZIP file implementing the VA extension back-end, which can be uploaded to Amazon AWS) are created. Thanks to the architecture modularity, it is easy to develop new VA providers’ support by focusing on the

Concerning the linking phase, it is performed in a dedicated function (as reported in the documentation) so that end-users with competencies in programming and KG querying can customise it, e.g., by calling APIs (as we point out in Section 6). By default, the back-end exploits the (partial) dictionary to perform the linking step. If the dictionary lookup resolves the slot value to a list of URIs, the back-end uses them in the SPARQL query formulation. Otherwise, the user value is used as-is in the SPARQL query formulation by comparing it with resource labels.
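The default linking behaviour can be sketched as follows. This is an illustrative Python sketch, not the Node.js back-end itself: the function names are hypothetical, and the use of `rdfs:label` with case-insensitive matching in the fallback branch is an assumption, since the text only states that the user value is compared with resource labels.

```python
def escape_literal(value: str) -> str:
    """Escape backslashes and double quotes for a SPARQL string literal."""
    return value.replace("\\", "\\\\").replace('"', '\\"')


def entity_pattern(slot_value: str, dictionary: dict[str, list[str]], var: str = "e") -> str:
    """Return the SPARQL fragment that binds ?var to the entity named by the user."""
    uris = dictionary.get(slot_value.strip().lower(), [])
    if uris:
        # Dictionary hit: restrict the variable to the known URIs.
        values = " ".join(f"<{u}>" for u in uris)
        return f"VALUES ?{var} {{ {values} }}"
    # Dictionary miss: compare the raw user value with resource labels
    # (label property and lower-casing are assumptions of this sketch).
    label = escape_literal(slot_value.strip().lower())
    return (
        f"?{var} <http://www.w3.org/2000/01/rdf-schema#label> ?{var}Label . "
        f'FILTER(LCASE(STR(?{var}Label)) = "{label}")'
    )
```

With a dictionary such as `{"salt lake city": ["http://dbpedia.org/resource/Salt_Lake_City"]}`, the slot value "Salt Lake City" produces a `VALUES` clause, whereas an unknown value falls back to the label filter, which is slower and exposed to label ambiguity.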

Moreover, developers may add new supported languages by translating utterances into the target language and extending the reply formulation mechanism to return replies in the desired language. At the moment, English and Italian are supported.

To add a new pattern, developers have to model the new intent as a set of utterances (by solving any arising conflict) and extend the back-end logic to formulate the related SPARQL query and the reply.

This section overviews the benefits and challenges of querying KGs by VAs by presenting a pool of Alexa skills for CH KGs. It proposes use cases of the generator to demonstrate how a data curator might configure and use it to obtain a ready-to-use Alexa skill. Thus, we overview the generator configuration options, and we show the VA extensions in action to simulate all the supported patterns in practice. The VA extensions’ back-end and interaction model are freely available on GitHub 1. Moreover, the reported use cases underline the impact of data sources on the generated VA extensions, for example, the consequences of missing labels attached to resources. While this section provides data curators with guided examples to use the proposed generator, Section 7 reports scenarios foreseen by CH experts and lovers in adopting VA extensions in CH tasks.

We propose a use case for each category of the CH taxonomy. In particular, for the tangible category, we propose the MMM use case for the movable sub-category, and the Hungarian museum use case for the immovable one; DBTune for the intangible category; NaturalFeatures for the natural heritage category; the UNESCO thesaurus for the terminology category.

Tangible movable category: MMM

MMM [39] is a semantic portal for finding and studying pre-modern manuscripts and their movements, based on linked collections of the Schoenberg Institute for Manuscript Studies, the Bodleian Libraries, and the Institute for Research and History of Texts. In particular, it models physical manuscript objects, the intellectual content of manuscripts, events, places, and people and institutions (referred to as actors) related to manuscripts.

MMM use case for the tangible category related to the movable sub-category.

The Hungarian Museum [31] provides access to the Museum of Fine Arts Budapest data.

Hungarian museum use case for the tangible category related to the immovable sub-category.

The VA extension usually refers to resources by their labels. In this case, it returns the creation URL instead (see the reply in Fig. 8). This shows the consequences of missing resource labels and the difficulty of exploiting such resources in VA-based applications.

DBTune classical [36] describes concepts and individuals related to the Western Classical Music canon. It includes information about composers, compositions, performers, and influence relationships.

DBTune classical use case for the intangible category.

Natural Features is part of Scotland’s official statistics [37] that gives access to statistical and geographic data about Scotland from various organisations. In particular, we are interested in aspects concerning geodiversity, ecology, and biodiversity.

Natural feature use case for the natural heritage category.

The UNESCO Thesaurus [42] is a controlled and structured list of terms used in subject analysis and retrieval of documents and publications in education, culture, natural sciences, social and human sciences, communication, and information. Continuously enriched and updated, its multidisciplinary terminology reflects the evolution of UNESCO programs and activities. Like a thesaurus, it mainly provides access to synonyms and related concepts. It also partially behaves like a dictionary by providing term definitions.

UNESCO use case for the thesaurus category.

We demonstrate most of the intents listed in Table 7 by the overviewed use cases. We verify that the proposed approach is general enough to query data concerning different categories of CH, from museums to manuscripts, from music to term definition. Moreover, we also experienced some issues related to aspects pointed out in the CH KG analysis (Section 3) and challenges described in Section 4. In the following, we summarise KG properties that affect VA-based KG exploitation.

Evaluation

This section assesses the quality of the generated VA extensions and tests to what extent configuration options affect the returned VA extensions. It also tests the user experience of a group of CH experts in using an auto-generated Alexa skill and collects the CH community’s opinions on the impact and utility of making CH KGs interoperable with VAs. All the presented VA extensions and the discussed results are available online in the project GitHub repository 1.

Performance of the proposed mechanism

It is relevant to assess the performance of the auto-generated VA extensions as a special case of KGQA over VA compared with systems categorised as traditional KGQA. This evaluation tests the accuracy and precision of the auto-generated VA extensions and verifies to what extent the configuration affects the results. It demonstrates that generating a VA extension in a single click already yields VA extensions as accurate as systems proposed in the literature and evaluated on the same benchmark. Moreover, it also demonstrates that by tuning the generator configuration, end-users can significantly improve the accuracy and precision of the auto-generated VA extension.

Evaluation design

Methodology The following questions (Qs) guide our evaluation process:

Q1: Are the results achieved by the auto-generated VA extensions comparable with other KGQA systems in terms of precision, recall and F-score?

Q2: To what extent does the manual configuration refinement affect the results?

Q3: Which linking approach, dictionary lookup or API-based, achieves the best results?

While Q1 compares the proposed approach with alternative KGQA approaches, Q2 and Q3 have been evaluated to overcome any scepticism by end-users regarding the impact the generator configuration may have on the generated VA extensions’ performance. Thus, they analyse to what extent the linking approach and the lookup mechanism affect the performance of auto-generated VA extensions.

Dataset & baselines We rely on a standard benchmark for KGQA systems, QALD,6

QALD challenge:

Settings We generate the DBpedia and Wikidata Alexa skills by the proposed software framework. The generated VA extensions are different in configuration options (manual VS auto) and linking approach (dictionary VS APIs). Further details follow.

Wikibase-sdk:

Procedure We perform the evaluation by retracing the following steps. Given the QALD (QALD-7 for Wikidata and QALD-9 for DBpedia) question set,

for each question, we manually check whether the posed question matches one of the supported intents (according to Table 7) or whether we can transform it into a chain of supported intents. For instance, the question “What is the time zone of Salt Lake City?” in QALD-9 on DBpedia matches the getPropertyObject intent (“What is the p of e?”) where <time zone> plays the role of p and <Salt Lake City> plays the role of e. The question “What is the name of the university where Obama’s wife studied?” in QALD-9 on DBpedia can be transformed into a chain of supported intents where users first ask “Who is the wife of Obama?” (corresponding to the getPropertyObject intent where wife is the predicate and Obama is the entity) and then “What is the school of Michelle Obama?” (corresponding to the getPropertyObject intent where school is the predicate and Michelle Obama is the entity). If neither applies, we skip the question. Otherwise, we continue the procedure.

we check the activated intent, and we formulate the query according to one of the supported utterances.

we query the VA extension by the adapted question;

all the replies returned by our VA extension (including empty results) are stored in a JSON file.
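The manual matching step of the procedure above can be illustrated in Python for the getPropertyObject pattern (“What is the p of e?”). This is only a sketch: in a deployed skill the VA provider performs intent recognition, and both the regular expression and the function name are hypothetical stand-ins used to make the slot roles explicit.

```python
import re

# Simplified stand-in for the utterance "What is the p of e?" of getPropertyObject.
# In a deployed skill the VA provider performs this matching; the regex is illustrative.
PROPERTY_OBJECT = re.compile(
    r"^(?:what|who) is the (?P<p>.+?) of (?P<e>.+?)\??$", re.IGNORECASE
)


def match_property_object(question: str):
    """Return (intent, predicate, entity) or None if the pattern does not apply."""
    m = PROPERTY_OBJECT.match(question.strip())
    if m is None:
        return None
    return ("getPropertyObject", m.group("p"), m.group("e"))


# The two-step chain used for the question about Obama's wife.
chain = [
    match_property_object("Who is the wife of Obama?"),
    match_property_object("What is the school of Michelle Obama?"),
]
```

Each element of `chain` carries the predicate and the entity of one getPropertyObject request; the entity of the second step is the answer a user would obtain from the first one.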

We exploit the official system used to evaluate the QA systems joining the QALD challenge, GERBIL [45], to perform the result assessment.

For Wikidata and QALD-7, the previous procedure requires updating replies in the testing set to comply with the current Wikidata version (July 2020). We use the updated version of the QALD-7 training dataset,8

QALD-7 training set updated to July 2020 Wikidata status qald-7-train-en-wikidata-July2020Version.json .

Metrics We follow the standard evaluation metrics for the end-to-end KGQA task, i.e., we report precision (P), recall (R), and F-measure (F1) at both the micro and macro levels.
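The difference between the micro and macro levels can be made concrete with a short Python sketch. It follows the textbook definitions and is not GERBIL's implementation, whose conventions for corner cases (such as empty answer sets) may differ; here the macro F1 is taken as the average of the per-question F1 values, which is one of the conventions in use.

```python
def prf(tp: int, n_system: int, n_gold: int) -> tuple[float, float, float]:
    """Precision, recall and F1 from counts; 0.0 when a denominator is zero."""
    p = tp / n_system if n_system else 0.0
    r = tp / n_gold if n_gold else 0.0
    f1 = 2 * p * r / (p + r) if p + r else 0.0
    return p, r, f1


def evaluate(pairs: list[tuple[set, set]]) -> dict[str, tuple[float, float, float]]:
    """pairs holds one (system_answers, gold_answers) pair per question."""
    per_question = [prf(len(s & g), len(s), len(g)) for s, g in pairs]
    n = len(per_question)
    # Macro: average the per-question scores.
    if n:
        macro = tuple(sum(q[i] for q in per_question) / n for i in range(3))
    else:
        macro = (0.0, 0.0, 0.0)
    # Micro: pool the counts over all questions, then compute the scores once.
    micro = prf(
        sum(len(s & g) for s, g in pairs),
        sum(len(s) for s, _ in pairs),
        sum(len(g) for _, g in pairs),
    )
    return {"macro": macro, "micro": micro}
```

Macro scores weigh every question equally, whereas micro scores are dominated by questions with many answers, which is why the two levels are reported side by side.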

Configuration options, the DBpedia case We compare results achieved by (manual and auto-configured) DBpedia Alexa skills.9

Manual configured and auto-configured DBpedia Alexa skill results, respectively:

Manual VS auto-configured DBpedia Alexa skills and systems that joined the QALD-9 challenge. Best results are highlighted in bold

Linking approach, the Wikidata case The QALD-7 training set contains 100 questions, but 4 questions can no longer be answered. We reply to 76/96 questions, while the remaining 20 questions correspond to unsupported patterns. Table 9 reports results of the auto-generated WikiSkills over QALD-7.

Not surprisingly, the dictionary-based linking approach is more precise than the API-driven approach, as a dictionary makes it possible to tune and customise the order and the priority in the list of URIs attached to the same entity or predicate (Q3). For instance, the term Paris might be attached to the French capital and to celebrities named Paris, such as Paris Hilton. If the VA extension is designed to be used as a virtual guide in a museum, the dictionary-based configuration can attach a higher priority to Paris as a city than to other interpretations. This mechanism cannot be performed in the API-based configuration. Even if the dictionary represents a static snapshot of the KG content, it can be exploited in both the entity and the relation linking tasks. By contrast, for APIs it must be verified whether they offer both linking mechanisms. The dictionary-based linking approach is also KG-agnostic, i.e., it is independent of any external service. It only requires configuration time and extra storage in the back-end, but it guarantees direct and immediate access to URIs (without execution time). Moreover, the dictionary-based solution is general enough to enable the VA extension back-end configuration with any KG without any constraint.
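The priority tuning described for the Paris example can be sketched in Python as a reordering of the candidate list. The dictionary layout (label mapped to an ordered list of URIs), the helper name, and the DBpedia URIs are assumptions used for illustration; the actual dictionary format of the back-end may differ.

```python
# Hypothetical dictionary: each label maps to candidate URIs, most preferred first.
dictionary = {
    "paris": [
        "http://dbpedia.org/resource/Paris_Hilton",
        "http://dbpedia.org/resource/Paris",
    ]
}


def prioritise(dictionary: dict, label: str, preferred_uri: str) -> dict:
    """Return a copy of the dictionary where preferred_uri leads the list for label."""
    key = label.lower()
    candidates = dictionary.get(key, [])
    reordered = [preferred_uri] + [u for u in candidates if u != preferred_uri]
    return {**dictionary, key: reordered}


# A museum guide would rank the city first.
museum_dictionary = prioritise(dictionary, "Paris", "http://dbpedia.org/resource/Paris")
```

Because the function returns a new dictionary, the same source dictionary can be specialised differently for each application context.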

Dictionary based VS API-driven WikiSkills (WSs) on QALD-7. Best results are highlighted in bold

This section assesses the usability of an auto-generated Alexa skill according to HETOR10

Participants and setting Five delegates of the HETOR project joined the usability evaluation of the UNESCO Alexa skill generated by the proposed generator, corresponding to the one described in Section 6. The evaluation took place remotely due to COVID-19. As the VA extension has not yet been published on the Alexa store, we deployed the VA extension on the Alexa developer console and asked participants to interact with the textual interface. We acted as moderators, asking participants to formulate questions and collecting their thoughts and reactions.

Protocol The performed protocol follows:

an introductory overview of the objective of the user experience evaluation, the queried source by looking at the UNESCO Thesaurus browsing interface,11

the assignment of a collection of tasks to each participant posing questions such as The definition of digitisation, The definition of CH, The specialisations of digital heritage, The generalisation of digital heritage, The terms related to CH. Participants are encouraged to identify the pattern to pose the related questions and collect replies returned by the Alexa skill for each task.

Data collection At the end of the evaluation, the moderator asked participants to fill in a final questionnaire to evaluate i) users’ satisfaction based on the System Usability Scale (SUS [26]) and ii) their interest in using and proposing the tool by a Behavioural Intentions (BI) survey. The statements of the BI survey are i) “I will use this approach in the future” and ii) “I will recommend others to use the proposed approach”, and users reply on a 5-point scale. Moreover, the moderator annotated all the comments and observations raised during the evaluation.

Results The proposed approach achieved a SUS score of 80, close to the highest rating band, which indicates strong appreciation of the proposed tool and a propensity to recommend it to others. The latter is confirmed by the BI survey, which achieved a mean score of 4.6 on the 5-point scale.
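For readers unfamiliar with how a SUS score is obtained, the standard scoring rule can be sketched in Python: ten items are answered on a 1-5 scale, odd items contribute the answer minus 1, even items contribute 5 minus the answer, and the sum is multiplied by 2.5. The participants' individual answers are not reported in this article, so any input passed to the function is hypothetical.

```python
def sus_score(responses: list[int]) -> float:
    """Score one questionnaire of ten answers on a 1-5 scale (standard SUS scoring)."""
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("SUS expects ten answers between 1 and 5")
    total = 0
    for position, answer in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (answer - 1) if position % 2 else (5 - answer)
    return total * 2.5
```

The score of a study is the mean of the per-participant scores, and it ranges from 0 to 100.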

Besides the tasks explicitly assigned by the moderator, participants started posing queries on their own, asking for the generalisation of mosques and synagogues, the definition of amphitheatre, the generalisation of Catalan or Gothic, generalisation of painting and specialisation of fine arts.

Furthermore, the proposed mechanism seems particularly useful in guided tours to guarantee personalised interactions driven by visitors’ curiosity, avoiding boring prepackaged presentations of artworks and points of interest. VA extensions as virtual guides can overcome the lack of interest in the entire exhibition, overly detailed descriptions, and the lack of customisation in terms of tour duration, interests, and curiosities. They also bridge the linguistic gap between visitors and personnel, and they let visitors take tours at the desired speed, with the chance to repeat unclear passages without bothering other visitors. While it may be an exciting alternative to audio guides already available in museums, it might be revolutionary for city tours to explore monuments or points of interest spread across a city or in minor realities, such as small villages.

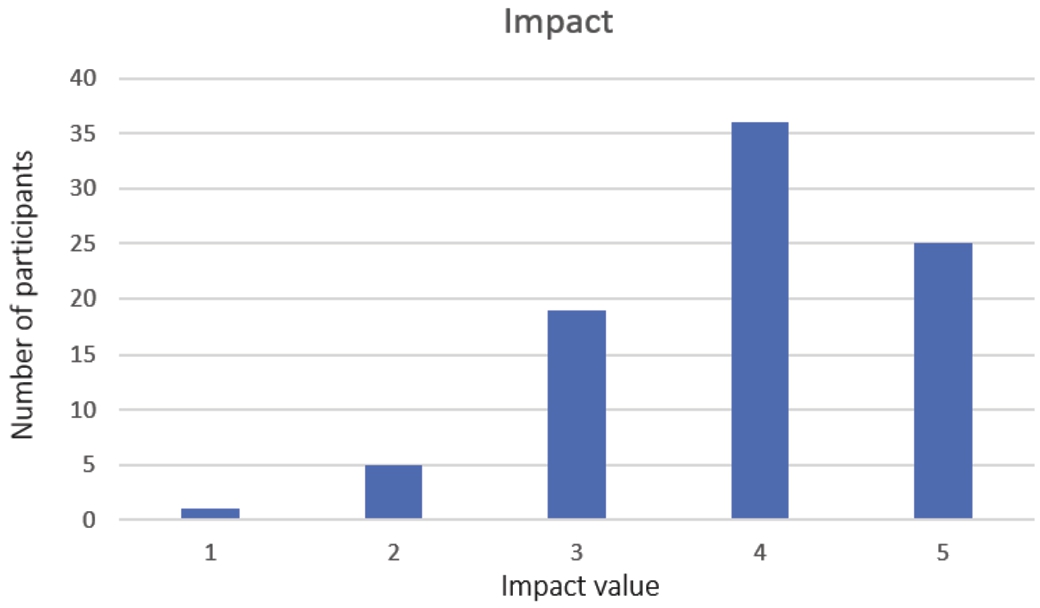

This section discusses the perceived impact and utility from the end-user perspective. We proposed an online survey to collect opinions and suggestions. We do not limit this survey to experts in the CH field but also involve CH lovers: since we are assessing the perceived impact and utility from the end-users’ side, we need to collect the opinions of potential users of the resulting VA extensions.

Participants and setting 86 people joined the online survey, administered for one week, from September 15th to September 22nd, 2021. All the participants spontaneously joined the survey in an anonymous form. 73 people are (very) interested in CH, rating their interest at least 4 out of 5. 24 of them are experts in CH, rating their expertise in CH at least 4 out of 5. Participants who consider themselves CH experts report limited expertise in Computer Science, stressing that it is crucial to provide the CH community with tools that do not take competencies in programming, query languages, and computer science for granted.

Data gathering and survey outline The survey has been administered in English and in Italian, and its content is described in Table 10 that reports questions, reply format, and the rationale behind each posed question. The survey is structured in three main sections: i) general information about participants’ expertise and interest in CH, the spread of VAs within the CH community interpreted as people that are either experts or interested in CH, alternative means used to query and explore CH; ii) the perceived utility in terms of application contexts, feeling in adopting the proposed approach instead of traditional CH exploitation means, the perceived impact achieved by spreading CH data by VAs, queries users are interested in to evaluate the intent coverage and to collect ways users naturally pose questions; iii) finally, general suggestions and comments as a free text.

Impact and utility survey outline


Results This paragraph reports the most common replies and interesting considerations concerning the proposed approach in the field of CH.

Diffusion of VAs in the CH community

The diffusion of VAs among CH lovers and experts is also confirmed by the results reported in Table 11, which summarises the most used VA providers and the frequency of their usage. Most of the participants have their favourite VA and usually stick to it without trying multiple providers. If a single provider is chosen, Google Assistant appears to be the preferred one. VA usage is still limited to a few days a week, meaning that there are still barriers to the wide exploitation of VAs in this community. Overcoming this scepticism might require leveraging the curiosity connected to novelty or demonstrating the utility and potential of VAs to prospective users.

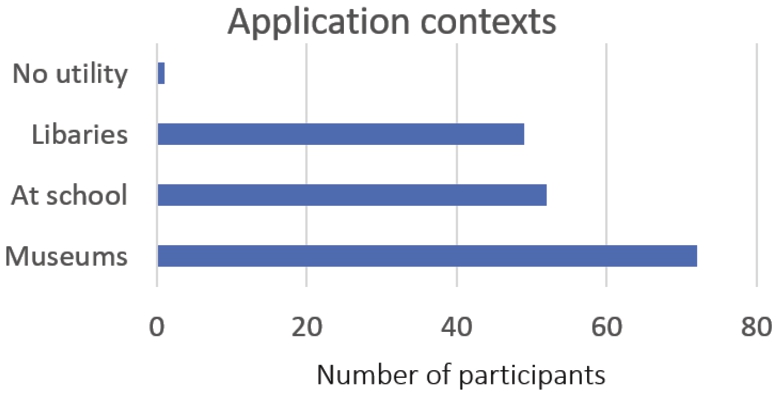

If compared with traditional text-based interfaces, by asking for activities that can be performed

Application contexts rated by survey participants.

Furthermore, we also asked users to think about any other application context that can take advantage of VAs. In 39 out of 67 cases, users see the potential to adopt the proposed approach as a virtual guide not only in a museum but also to guide visitors while wandering in an unknown city, above all while visiting small villages, unconventional destinations, or cities with low population density and high cultural impact, rich in archaeological parks or churches. More than one participant observed that the proposed approach is particularly useful when there is no possibility to type requests, for instance, while driving. Moreover, a user also suggested exploiting VAs in a virtual museum by simulating a real tour, including the tour guide. In 14 out of 67 cases, users state that it might be a promising individual learning tool: at university to learn about terminology and clarify doubts while preparing for exams or scientific contributions, at school to disambiguate terms, at home to deepen knowledge and awareness about CH, and for young learners to overcome limits posed by textual interfaces and to leverage their curiosity. Further considerations concern the inclusiveness of the proposed approach, which can overcome barriers related to the limited usability of textual interfaces or to blindness. Users also proposed VAs as support in superintendence offices, in archives to guide the lookup phase, as support in offices, and (surprisingly!) in hospitals.

Perceived impact of the capability of VAs to spread the culture of CH.

Analysing topics users are generally interested in addressing by the posed questions, they inquire about point of interest’s curiosities and details, such as “Which is the history of monument

Participants expected to use the proposed approach to plan a trip. Thus, besides collecting cultural information, users are also interested in collecting practical information about points of interest, such as the accessibility posing questions like “Which is the ticket for visiting

Some participants simulated a conversation with a thesaurus by clarifying terms and terminology. Moreover, they were interested in looking for details about the queried sources to assess their reliability or retrieving the list of sources containing information about a given topic. As an alternative, according to the school level and subject, learners might be interested in specific information, such as “Who is restoring

Many participants treated the proposed mechanism as an approach to fulfil general curiosities, such as “Is there any legend behind

The most common questions concern tangible CH, both movable, such as artworks, coins, and documents, and immovable, such as churches, monuments, and castles. However, users are also interested in curiosities related to intangible CH, such as the folklore of local traditions, such as “What are the traditional dances of

While participants posed questions in most of the cases, some of them used their imaginary VA extension to explore available data. For instance, “Give me all the titles/authors belonging to the topic/category

Besides textual replies, users are also interested in visualising photos and videos, such as “Show me

Looking at the way questions have been formulated, the proposed intents successfully reply to most of them. In most cases, participants formulated complete questions, while they rarely posed commands to collect information. The proposed approach misses the fulfilment of complex queries, which are quite rare in this questionnaire. For instance, currently, it cannot deal with questions like “How long did it take the building of

Participants mainly used the comment question to compliment the project, assessing that the project has enormous potential, it guides digital transitions to our country, and it is extremely versatile as it might be applied to any application context. We are in a modern world, and everything is going to be connected with technology. CH should not remain out of this.

This section discusses the perceived impact and utility from the CH data curators’ perspective. We proposed an online survey to collect opinions and suggestions, and we administered it to two different groups of CH experts who are either modelling or planning to model their data as KGs. This survey collects opinions and comments of potential users of the generator who might decide to propose the resulting VA extensions as a data exploitation means.

Participants and setting Five people belonging to two different groups of CH experts joined the online survey. While 3 of them are delegates of the HETOR project, the other 2 are researchers in a Medieval Philosophy research group at the University of Salerno. The HETOR group mainly models tangible CH concerning local and national CH as tabular data, releasing them according to the Open Data directive. They are planning to expose their data as KGs in the future. The research group of philosophers, in turn, is designing an ontology to model their collection of medieval manuscripts by representing the co-occurrence of terms, philosophical concept interpretation, and philosophical movements, both concerning Greek and Latin culture. Both groups spontaneously joined the survey, and their members are both interested in CH and experts in it.

Data gathering and survey outline The survey has been administered in English and in Italian, and its content is described in Table 12 that reports questions, reply format, and the rationale behind each posed question. The survey is structured in three main sections: i) general information about participants’ expertise and interest in CH, the spread of VAs within the CH community interpreted as people that are either experts or interested in CH, alternative means used to query and explore CH; ii) the interest in making their data accessible by VAs; iii) general suggestions and comments as a free text.

Interest in making CH data accessible by VAs survey outline


Results This paragraph reports the most common replies and considerations related to the exploitation of the proposed approach in the field of CH.

The group of Medieval Philosophy is modelling history of philosophy data and related metadata by ontologies and plans to materialise the related KGs in the near future and to make them accessible to all. Even if the planned exploitation tools are still under investigation, they hypothesise exploiting data in data visualisation approaches to guide users in interpreting data. They see potential in making their data accessible by VAs, reacting with curiosity and enthusiasm but mainly focusing on actual data to improve their accessibility. They are a bit sceptical about making metadata accessible by VAs, as they cannot yet foresee an application context of interest: in their opinion, only experts are usually interested in metadata.

In both groups, participants stated that their working groups have no competencies in query languages, such as SPARQL. Thus, providing this community with CH data exploitation tools not requiring technical competencies is crucial.

We propose a general-purpose approach to perform KGQA by VAs, and we embed it into a community-shared software framework to generate VA extensions requiring minimal or no programming and query language competencies. Our proposal may have a significant impact as it may unlock the potential of Semantic Web technologies by bringing KGs into everyone’s “pocket”. This play on words underlines that the proposed system generates VA extensions that can also be accessed by smartphones. Furthermore, “everyone’s pocket” is a metaphorical alternative to “everyone’s means”, and it stresses that the proposed mechanism lets almost everyone query KGs without requiring any technical competence.

Besides its general-purpose nature, we considered it particularly suitable for the CH community for different reasons. First, the CH community heavily invested in publishing data as KGs, as demonstrated by the survey detailed in Section 3. Consequently, we believe it is useful to provide them with tools and approaches to exploit the vast amount of available data easily. Second, CH lovers are usually supplied with tools and interfaces to explore the results of data exploitation means, such as virtual exhibitions, data visualisation tools, and question answering applications. However, they are rarely moved to the position of active curators of available data. In contrast, the proposed generator puts the CH community in the position of generating their own QA tools, able to query any data source of interest provided with a working SPARQL endpoint. Thus, librarians can query their book archives; musicians can pose queries on music collections; and art gallery curators can provide visitors with a virtual guide able to reply to questions instead of reproducing standard tracks narrating artifacts’ details. To the best of our knowledge, it is the first attempt in the literature to empower lay users to create personalised and ready-to-use VA extensions.

We propose a reusable prototype of a VA extensions generator to query any KG. In its current open-source release (v1.0), we allow the building of Alexa extensions, and we aim to provide support for the Google Assistant. It is important to note that we followed established best practices in software design (e.g., abstraction and modularity) to guarantee technical quality and make the generator fully extensible.

The proposed community-shared software framework is available on GitHub 1 with an open-source license. The ISISLab research lab of our Department will maintain the code and drive its evolution. We aim to extend the supported patterns by formulating iterative queries with consecutive refinements. Moreover, we plan to evaluate further our software framework’s usability and user perception in real settings.