Abstract

Many research centers and medical institutions have been accumulating a vast amount of various biological and chemical data over the past decade and this trend continues. Based on Linked Data vision, many semantic applications for distributed access to these heterogeneous RDF (Resource Description Framework) data sources have been developed. Their improvements have brought about a decrease of intermediate results and optimizing query execution plans. But still many requests are unsuccessful and they time out without producing any answer. Also, the applications which operate over repositories taking into consideration their specificities and inter-connections are not available. In this paper, the SpecINT is proposed as a comprehensive hybrid framework for data integration and federation in semantic data query processing over repositories. The SpecINT framework represents a trade-off solution between automatic and user-guided approaches, since it can create queries which return relevant results, while not being dependent on human work. The innovativeness of the approach lays in the fact that the coordinates of graph eigenvectors are used for the automatic sub-queries joining over the most relevant data sources within repositories. In this way searching can be effected without a common ontology between resources. In experiments, we demonstrate the potential of our framework on a set of heterogeneous and distributed cheminformatics and bioinformatics data sources.

Introduction

New data about chemical compounds, the influence they have on cancer cell-lines, genes and proteins, genetic variations and cell pathways have been emerging at a staggeringly rapid pace in recent chemical and biological experiments. Research centers and laboratories work independently storing data in different data formats with different vocabularies. The very abundance of heterogenic data sources prevents the life science community reaching its maximum. In this information vortex scientists need to put effort into finding and pairing relevant information over heterogeneous data within different data sources and consolidating repositories. For the successful performance of biomedical research, data integration grows into an important precondition for overcoming the existing gaps in resources and for introducing time savings. In [48] the authors indicated the importance of data integration in cheminformatics and bioinformatics.

For efficient query processing in semantic-oriented environments, sophisticated query generators and benchmarking systems for their performance evaluation have been developed. Drawbacks of benchmarking systems arise from the fact that they rely on a set of predefined static queries over particular data sources [3,25,42]. However, the automatic query generators are still faced with many problems. Firstly, the process of setting parameters for an algorithm and thresholds can be difficult without prior knowledge of the data. This leads to the collecting of different statistics which are changeable over time. Secondly, in such piles of generated queries, many are without answer, and many of them return unnecessary data. At the same time, the processes of seeking the most promising queries, their execution and evaluation are time-consuming. Even then, in most cases the results are not satisfactory for the research community which expects correct results in real-time. Thirdly, these approaches cannot explore more repositories with many data sources following their specific integration and connections. Very often repositories integration is not possible because there are no mapping schemes between them. Additional aggravating circumstances are the completely different structure and connections between data sources within different repositories.

Automatic query generation is less tedious and can produce many queries which are used only for query execution evaluation, not for end-users and their demands. Meaningful and real queries can only be generated manually (user-guided) or semi-automatically requiring a lot of effort since the content of the data sources needs to be analyzed in advance. However, the problem of integrating data from multiple data sources and repositories is still a challenge. Hand-crafted queries require a lot of effort and knowledge about data sources, whilst automatic query generation can produce many queries which should be manually tested and chosen for further distribution.

Our solution is based on a hybrid technique involving a human role in creating hand-crafted sub-queries (patterns, templates) as a very important guide for satisfactory results. The paticular query patterns are connected into queries automatically, seeking the most relevant data sources (from different repositories) which belong to and which potentially consist of the triples of interest. For the solution to this task we used the eigenvectors of a graph which enable us to follow the paths (edges) between these data sources. These edges suggest an aggregation of the most relevant data and that is why only the connected data sources are considered. All this is performed over different repositories and on-the-fly. The project contributors found that these paths lead to the best decision-making, rather than exploring every single triple in the repositories. In contrast to the state-of-the-art Federated SPARQL query engines which are dependent on the common ontology and triple statistics, our solution connects data sources within repositories by using the graph eigenvectors [46] and vertices ranking [2,9], without a common ontology between resources.

The SpecINT1

CPCTAS-LCMB, Serbia,

Advancement: A SPARQL query framework based on the concept of a mathematical graph is developed – the graph eigenvectors are used for the relevant data sources selection and their patterns joining.

Scalability: A straightforward model for linking data from repositories on-the-fly is proposed.

Federation: Generated Federated SPARQL queries gather novel and complementary data about substances in real time. Constant statistical calculations and update monitoring are avoided.

Availability: Our data are made available to the entire research community. All the code to reproduce this study have been published online.3

The lack of information about the endpoints availability and limits, makes any query not completely applicable in the context of federations of endpoints. Because of this the results could sometimes be incomplete. The current version of the framework is specialized for the life sciences, but under certain conditions it is extendable to other areas. Also, this approach is semantic-based and we are not able to collect data from other non-RDF data sources.

The rest of the paper is organized as follows: The second section gives an overview of the existing literature of significance for the study area. The third section is devoted to novel data source integration reflecting the framework’s scalability. We give, as a motivation example, two use cases which can be performed by using the framework in the fourth section. The fifth section describes the architecture and functioning principles of the proposed system for integration and query federation. The sixth and seventh sections discuss the results, benefits and limitations of the framework. The paper concludes with a summary of key points and directions for further work.

In this section, we provide an overview of both types of existing query generators, automatic and user-guided and highlight the main differences of the SpecINT framework in respect to existing generators. Basically, the SpecINT framework can be treated as a trade-off solution between these two approaches, since it can create queries which return relevant results, while not being dependent on human work and personalized experience. More precisely, it is not a completely automatic query generator able to create queries from scratch, but it picks up the existing pattern queries automatically and fits them into the final SPARQL query. Also, the framework requires less human interventions, since the simple mathematical apparatus provides satisfactory accuracy of the queries.

First, we provide a brief overview of the existing query generators developed for grained evaluation of Federated SPARQL query engines. These federation systems are basically developed for optimizing the query runtime thus their generators cannot be used for satisfactory user experience. Although some query generators can operate over distributed data sources, they cannot select data sources on-the-fly, which have the largest probability to consist of the relevant triples, neither can they connect repositories without global mapping. Some generators of this type are mentioned below. FedX [42] has been developed for comparing the general purpose of SPARQL query federation systems. It focuses on strategies which can decrease the number of query transmissions and reduce the size of intermediate results, but their drawbacks arise from the fact that they rely on a set of predefined static queries over particular data sources. The FedBench [41] is the only benchmark proposed for Federated query which evaluates the Federated query infrastructure performance including loading time and querying time. However, the FedBench has a static data source and query set, too. DAW [39] provides a set of static queries based on the characteristics of BSBM (Berlin SPARQL Benchmark) queries [8] from four public data sources. However, all the queries are statically generated thus cannot be used for specialized federation systems. Furthermore, these queries are simple in complexity (maximum of 4 triple patterns per query). To address this problem, some federation systems generate a random query set for a specified data source. A study by Umbrich et al. [45] extended query semantics for conjunctive Linked Data queries (LidaQ). LidaQ produces queries based on three main shapes (entity, star and path shapes) for Federated queries benchmark. This query generator produces sets of similar queries by doing random walks of certain breadth or depth. The query set generation of SPLODGE [26] is based on the data source characteristic that is obtained from its predicate statistic. Due to the random query generation process in SPLODGE using cardinality estimates, it is not uncommon that different queries with the same characteristics basically yield different result sizes. DARQ [36] and SPLENDID [25] make use of statistical information (using hand-crafted data source descriptions or VOID) rather than the content itself. Some data sources are continually expanding, so an application has to frequently update from RDF repositories. However, maintaining comprehensive and up-to-date cached data is an impossible task. New improvement came with ANAPSID [1] reflected in updating the data catalogue and execution plan at runtime. For a more comprehensive survey of the listed federation systems see [37]. FEASIBLE [38] is an automatic approach for the generation of benchmarks out of the query history of applications, i.e., query logs. The generation is achieved by selecting prototypical queries of a user-defined size from the input set of queries. In the paper [40] SQCFramework is proposed, a SPARQL query containment benchmark generation framework which is able to generate customized SPARQL queries from real SPARQL query logs. By using different clustering algorithms, the framework can generate benchmarks of varying sizes, with different significant (important) SPARQL features.

Beside the earlier listed shortcomings of the automatic query generators, these generators operate over the data sources given in advance, and have no ability to include other data sources without statistical calculations or global mapping. Also, they cannot handle the same data source over repositories simultaneously, where it has different predicates and connections. The only solution which explicitly deals with the integrated querying of distributed RDF repositories is described in [44]. Stuckenschmidt et. al theoretically described how to extend the Sesame RDF [10] repository to support distributed SeRQL queries over multiple Sesame RDF repositories. They use a special index structure to determine the relevant sources for a query. However, this approach is of a purely theoretical nature.

On the other hand, many existing applications provide a user-friendly interface for exploring bioinformatics data sources and allow users to intuitively create and perform Federated SPARQL queries, since SPARQL has a complex syntax. These applications can create useful queries which follows from the fact that the user follows the imposed steps through the interface, selects the relevant data sources (endpoints), predicates and subjects/objects, thus making room for decisions on how to connect these single pieces into a query by using the expert knowledge. Examples of such applications are: GoWeb [19], SPARQLGraph [43], Smart [17], BioQueries [24], BioSearch [28] etc. These applications were designed for the visual creation, editing and execution of biological SPARQL queries. PIBAS FedSPARQL [20] is an application that also runs Federated SPARQL queries for several bioinformatics topics. In this application the user has to navigate through the system and select query parts. As an advanced feature, PIBAS FedSPARQL provides the possibility of detecting similar data using results of predefined queries as an input.

However, all these applications are based on personal experience and affinities, while the drawbacks of some applications are also reflected in the impossibility of adding new datasets and in the supporting of a small number of specific endpoints. The SpecINT framework requires less human interventions, since the relevant data sources are selected by using the graph eigenvectors which show envious accurate results. Our approach gives more general answers to researchers who are not familiar with the SPARQL syntax and repositories organization.

Automatic query generators suffer from many disadvantages described in the previous section. The SpecINT framework represents a trade-off solution between automatic and user-guided query generators which is created in order to extract knowledge from the life science repositories. Today, there are several semantic based repositories (initiatives) for biological and chemical data sources integration: Bio2RDF [5], LODD [29], Chem2Bio2RDF [13], EMBL-EBI [30], Open PHACTS [49], ChemSpider [34] etc. Most current RDF infrastructures store information locally as a single knowledge repository according to certain design decisions. It means that the RDF models are replicated locally from remote sources and are merged into a single model regardless of the distributed nature of the Semantic Web. In many cases, we are forced to access external data sources from an RDF infrastructure without being able to create a local storage of the information we want to query. For example, we do not have permission to copy the data, data sources are too large to create a single model containing all the information, a data source is not available in RDF, but can be wrapped to produce query results in RDF format and so on [44]. On the other side, the Open PHACTS Discovery Platform [27] takes a local copy for performance reasons, but the data remain in their original form. It provides integrated access to 11 Linked Datasets covering information about chemistry, pathways, and proteins. Queries then extract relevant parts of each dataset based on contextualized instance equivalences retrieved from the Identity Mapping Service. However, no repository can cover all datasets, which only confirms the need to deal with repositories that are distributed across different locations enabling data freshness and scalability (an easy integration of novel data).

New data integration

This section is devoted to the publishing of new data sources. In order to make data widely available, data should be linked to other data sources by entity matching. According to LOD cloud statistics4

Aiming to meet the principles of Linked Data5

Part of PIBAS map

Our framework enables a variety of use cases, of which two are explained below. Note that this is a proof-of-concept project and the data is not updated.

New candidates for anti-cancer drugs

Data from the SpecINT could be of high value for chemists and biologists since these scientists have insights into the antitumor properties of complexes, which could reveal a possible strategy in the designing of new metal-based drugs. They could, for example, use our framework to link both, biological data (e.g., proteins’ structure and their pathway) and chemicals (particularly drugs, interacting with proteins) together. Also, they can find out the influence the substances have on cancer cell-lines (e.g.,

It was shown, in the CPCTAS laboratory, that Pt(IV), Pd(II), and Rh(III) complexes induced oxidative stress and cytotoxicity in the HCT-116 colon cancer cell line [11]. Also, Živanović et al. [50] investigated the biological effects of bicyclic seleno-hydantoin

New integrated data

The SpecINT framework is not only a query generator over existing repositories. In Section 3 we described the procedure for new data source integration. This step makes all our data available to the research community and also demonstrates how others can publish their data and be connected to large initiatives such as KEGG, DrugBank, PubChem etc. These data arise from the CPCTAS investigation of the influence of bioactive substances on human cancer cell lines. Standardized tests cover monitoring of cytotoxicity, the type of cell death, the mechanisms of apoptosis, migration and angiogenesis and prooxidant-antioxidant mechanisms which are important for regulation of these processes. Experiments are based on protocols such as the MTT cytotoxicity test, AO/EtBr staining of cells for examination of the type of cell death, the Western blot technique for examining proteins, Multiplex and qRT-PCR, Transwell migration assays, Real Time Cell Analyses, and others.

It is well-known that cancer is the second leading cause of death after cardiovascular diseases, and finding the appropriate therapy is of key medical and scientific interest, with a potentially substantial economic impact. All types of cancer display a characteristic uncontrolled cell division followed by the ability of these cells to invade healthy tissues. This clearly shows the need for virtual integration of RDF data sources, since conducting all experiments which include a large number of complexes and all known cancers is a very expensive process. Beside the financial aspect, the framework enables the evidence that the researchers find for some substances to be compared with evidence included in other initiatives.

SpecINT architecture.

The constant expansion of new data sources brings about problems in analysis of the disconnected and heterogeneous data which are crucial for future successful and purposeful surveys. Thanks to Semantic Web standards and online data exploration through open endpoints, it is possible to search these data sources in a single SPARQL query. The integration and extraction process of novel knowledge from these data is imminently problematic.

Retrieval of information about molecular structures from databases and RDF data sources is best done with unique identifiers. The IUPAC International Chemical Identifier (InChI) has recently acquired a prominent role as a unique identifier, and is increasingly used to make resources and literature machine readable [14]. Compared to the InChI, the Simplified Molecular Input Line Entry System (SMILES) is often not unique, causing relevant data to be lost in the search. In this paper, we use the InChIKey as the framework input – a hashed version of the full standard InChI, designed to allow for easy web searches of chemical compounds. Bearing in mind how the new and existing data sources are connected within repositories, let us explain the functioning of the framework and what happens in the background, from the forwarded input to the obtained SPARQL query as a result.

The architecture of the framework is shown in Fig. 1 and is explained in the following subsections. The whole procedure of constructing the Federated SPARQL queries is presented part by part through the example. In Section 5.4 all these pieces are put together in a logical way, in order to arrive at the correct queries which encompass as many relevant results as is possible.

Sub-query patterns

Data source patterns within repositories

Data source patterns within repositories

The resulting large volume of data makes manual exploration very tedious and complicated. Moreover, the velocity at which these data change and the variety of formats in which bio-medical data are published makes it difficult to access them in an integrated form. In the case of semantically based data sources, the researchers have to explore each data source separately, its triples and mappings. Very often a data source consists of hundreds of thousands, even millions, of RDF triples. Further, the SPARQL queries have to be written and executed, the obtained data should be arranged in meaningful and useful knowledge, thus it can be used to support bio-medical experts during their work. In real-life applications the results should be filtered and well organized in the short term, which is almost impossible in these circumstances.

In Section 2 we provided an overview of the existing query generators, but this is not what we need for real-life tasks. Also, we listed several reasons why we cannot use these queries as the patterns (sub-queries) for our SPARQL queries. The lack of an integrated vocabulary makes querying this data more difficult, especially in situations when the URIs over repositories are not the same. Even when all generated sub-queries are valid, it is almost impossible to fit them all into one complex query which operates over repositories. Here, we use the pattern queries that were partially handpicked from initiative examples and partially handcrafted, since the correct results are important for our framework. Some examples of the used patterns are shown in Table 1. The bolded terms are unknown subjects and objects which are determined on-the-fly and changed with corresponding instances (URIs), while the predicates are bounded. Later, we will explore the “same as” kind of relationships within repositories which are used for the connection of these query patterns, without a common ontology between repositories.

In this subsection we describe the process of selecting data sources which looks at the most prominent resources for data of interest. In later steps, these results could be additionally filtered. The query generator should carefully determine the data sources for the query, since a wrong choice either leads to expensive communication with many intermediate results being memorized or the system failing to contribute any results.

Graph coalescence between Chem2Bio2RDF and Bio2RDF repositories.

The most practical way to connect two data sources is to use the values of the main notions which the data source is created around. Consider, for example, all drug information in KEGG can be connected with drugs in DrugBank by the owl:sameAs relation; which is an identity link that joins two entities having the same identity. To gather all information about a specific substance, the chemical structure of the substance is transformed into the corresponding InChIKey identifier. Then, we use the UniChem [12] search API from the European Bioinformatics Institute (EBI) to obtain a list of substance synonyms, but without their corresponding URIs. UniChem as a free available service allows mappings of small molecules based on adopted and stable standards, InChIs and InChIKeys. To be more precise, the synonyms represent the labels of a substance belonging to different data sources. For

Taking into account that SPARQL query originates from the directed graph, we construct a graph from the obtained synonyms as the vertices labels following the relationships within each repository. This step will determine how the repositories can be connected, and additional filtering performed. Following the certain paths in the graph, the order of patterns is determined thus searching can be effected without a common ontology between resources. If these paths are wrong, the query will not be able to connect successive patterns and automatically the query will not be valid. This procedure involves two basic steps.

A. Undirected graph construction. This step reveals our hidden intention to save the information about vertices affiliation, connecting vertex between repositories, since the following graph perturbations and edge removal would mix up known affiliations. The repositories affiliations (URIs) are very important, because the same data source could have different predicates and interlink orientation. These URIs can be obtained easily from the URI pattern belonging to the relevant repository, but they are omitted for figure clarity.

All labels, found in the previous step, form the complete graphs

For the better explanation of our example, besides the dataset label, all vertices also have number label, starting from 0 to

B. Directed graph construction. For the valid results’ retrieval, the process of creating the most suitable sequence of the data source labels is performed. Prior to query generation, the framework has to check the existence and orientation of the edges. It is possible to find interlinks between data sources by searching the specific keywords being a substring of a property string in a tie between two compounds. With edges (source, target) obtained from the triples (?source ?property ?target) we can convert the graph

It is known that the importance of each vertex is proportional to the sum of the importance of all the vertices that link to it. Simple calculation says that this is an eigenvalue and eigenvector problem (more details can be found in [9]). Now, for oriented graph

Graph construction procedure

However, this procedure does not guarantee that all data sources we want are covered. In the case when a novel source is integrated (low rank value) or the specific answers are preferred (located in specific data sources) it is necessary to provide a way to force the selection of these data sources. For example, if drug targets are in focus, specific vertices will be favored for better results. For this purpose we developed a simple ontology which consists of information about data sources. Also, we developed several heuristics which are capable of influencing the vector r and covering such vertices according to the ontology content. The tested heuristics and all results will be presented in the evaluation section.

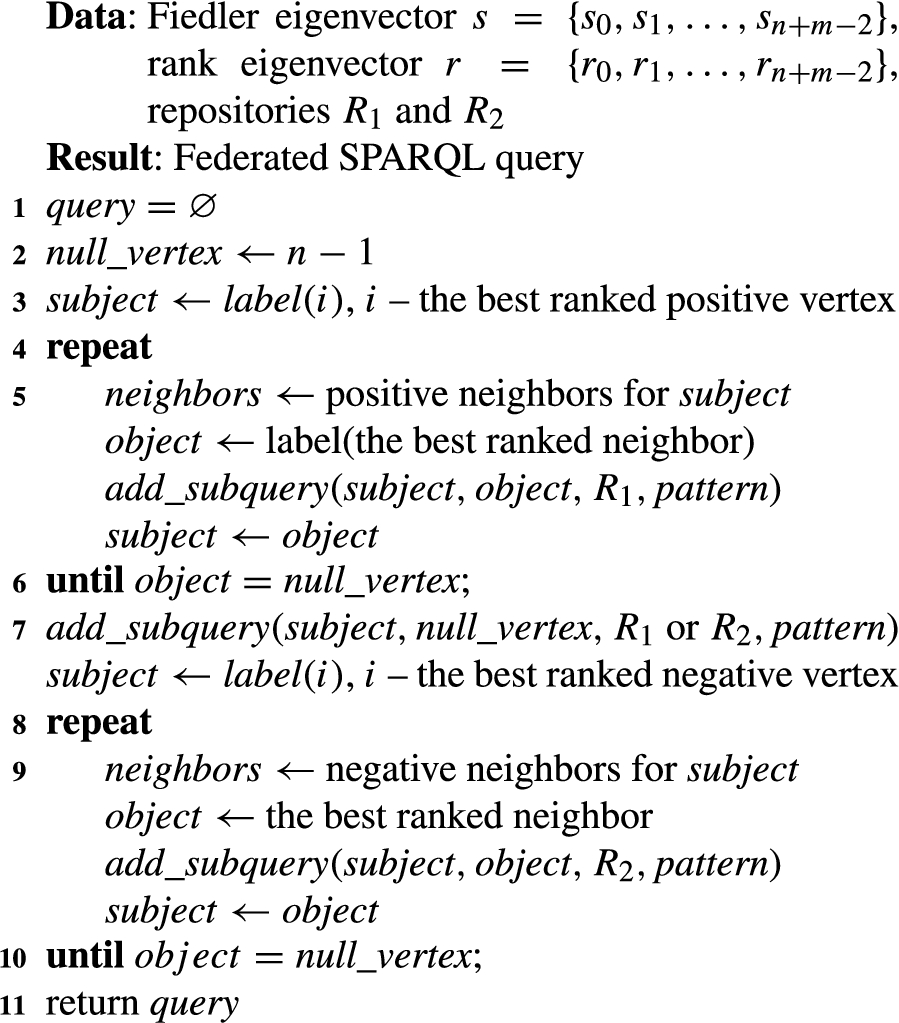

This subsection is dedicated to the algorithm for the SPARQL queries construction from two vectors s and r and the hand-crafted patterns. Here, we will explain how to follow vertex affiliations and align the most relevant data sources in a query in order to ensure results over repositories. Also, in this phase prefixes related to the data sources of vertices and their corresponding query patterns are determined. This vertex serves as a neutral source (bridge) of the RDF triple, either the subject or object in the patterns. Finally, when the idea is exposed, the highest ranked vertices are used in order to find the best path to the central vertex from both sides, positive and negative.

The input for this phase are two eigenvectors, s and r. The first eigenvector s splits the graph

For simplicity’s sake, let us suppose that the first connected component contains vertices with positions

Let us see the algorithm in action. For the two graphs in Fig. 2 two eigenvectors are calculated: Fiedler eigenvector s and rank eigenvector r. Their coordinates are

Federated SPARQL queries generator

Final SPARQL query

In this section we give an evaluation of the framework’s ability to select the most relevant data sources over repositories, taking into account their specificities. We checked the correctness of the generated queries too. Our methodology is not based on the common ontology which connects repositories, but on the detection of the “same as” relationships between data sources. The framework builds the graphs of these relationships within each repository, then uses them to build appropriate SPARQL queries which operate over repositories. The goals of the evaluation are (1) to measure the performance of the SpecINT engine in terms of relevant data sources selection, and (2) to check the validity of the created SPARQL queries. Evaluation in this context basically means checking if the generated queries meet our primary goals, i. e. whether they can actually retrieve relevant results from different data sources (and repositories) and whether the framework responses could show the actual trends in research communities related to the anti-cancer drugs. In the following, we explain our experimental setup and the evaluation results.

Experimental setup

The SpecINT framework provides information about physical and chemical properties of a substance, substance interaction with various protein targets, substance cytotoxicity on various cell-lines and so on. In order to evaluate the framework’s ability to collect specific data, the researchers started the framework for 50 substances/compounds, randomly selected from the data sources used in the experiments. For the methodology testing, only substances which belong to both repositories are selected. Table 2 lists one part of the used InChIKeys with their molecular formulas and short names.

One part of the tested InChIKeys with primary information

One part of the tested InChIKeys with primary information

Moreover, for the experiments we use substances from the CPCTAS laboratory, originally synthesized by chemists for new experiments. CPCTAS possesses a certain number of various healthy and cancer cell-lines, and at the beginning of every investigation it is of crucial importance to know whether the substance of interest has already been analyzed. The researchers could get information about the synthesis of similar substances, and substance properties, getting evidence and comparing their findings with the findings for similar substances.

In the last decade, several large cheminformatics and bioinformatics repositories were founded. Every initiative is special in some manner and all of them have made a comprehensive shift towards presenting data to a wide research community. Some of the most popular solutions based on Semantic Web technologies are: Bio2RDF [5], LODD [29], Chem2Bio2RDF [13] and Open PHACTS [49].

In our experiments we focus on two repositories Chem2Bio2RDF and Bio2RDF. Chem2Bio2RDF is one of the most popular repositories based on Semantic Web technologies which store more than 80 million triples. It covers around 25 different data sources relating to chemical/biological needs which aggregate genes, compounds, drugs, pathways, side effects, diseases, and MEDLINE/PubMed documents (last update in 2009). Bio2RDF manages to integrate public bioinformatics databases and convert them into 11 billion triples across 35 datasets. Its last release (third) dates from July 2014. Also, in our experiments we have worked with the underlying ChEMBL database from the EBI7

The task for the framework evaluation was assigned to the chemists and biologists employed at the Centre for Preclinical Testing of Active Substances (CPCTAS), Faculty of Science, University of Kragujevac. First, the members of CPCTAS, with our help, explored Chem2Bio2RDF and Bio2RDF repositories taking into account that these repositories have different predicates. This is an important step for creating a general picture of all data sources, their content and how data are connected. For the evaluation, 3 biologists and 3 chemists reviewed each recognized link between data sources manually. They checked whether the edges (predicates) between substances are real, i.e. whether there exists a triple which contains the entities of the substances. The entities position in a triple (subject or object) is an important part for the final results, since the edge orientation determines the path thus influencing the patterns order in a final query. For the specific task (targets,

Heuristics for the path navigation

Although we provide some theoretical evidence for the eigenvector coordinates signs (see the Appendix), we could not be sure that the vertex ranking will lead us to the best possible results. More precisely, the main task is to find a way of connecting two paths in the cut-vertex, positive and negative, but this selection should include the most relevant data sources from both sides. The cut-vertex serves as a mediator (bridge) for the crossing from repository to repository. One thing that is immediately apparent is that it would be impractical to explore all data sources and all their paths. The state-of-the-art algorithms for path finding such as Prim’s and Kruskal’s algorithms are not applicable in the case when it is necessary to favor particular vertices. One of the ideas could be increasing the edge weight, but it cannot be performed when the existing edges differ from substance to substance. Now, the question is what is the best approach for path selection which can include as many relevant data sources as is possible thus the loss in information is minor. We therefore tested the following three easily implementable heuristic methods that use only the vertices degrees of

The concepts of the first two heuristics are clear. The third heuristic, Favored PageRank, is introduced since the forced vertex selection is a necessary precondition if we want to favor specific data sources depending on the question. For this purpose a new fictitious vertex with a large rank is added to the graph. The edges from this high-rank vertex to the low-rank vertices can increase their rank without violating the existing graph structure. If we want to include high-rank vertex in the path (e.g. PIBAS vertex), new edges which point to it are created. For clarity’s sake the fictitious vertex is removed from the figure. Similarly, for the vertices of interest that might be encountered on the path, the vertex self-loops can be used in accordance with the present knowledge about data sources. This additional knowledge is stored in a simple ontology which includes data sources categorization and affiliation.

Number of relevant sources on the path per heuristic

Number of relevant sources on the path per heuristic

Obtained results for previously listed substances. For every substance are presented the numbers of items for targets, cell-lines and

values, found in data sources within different repositories

Obtained results for previously listed substances. For every substance are presented the numbers of items for targets, cell-lines and

In this subsection we present the obtained results for 50 substances and for each heuristic. Here, only the results related to the drug targets are shown, since the results for the cancer cell-lines and

The most effective selecting method turned out to be Favored PageRank. Involving domain knowledge in the algorithm improves the final results. This means that one could upgrade the algorithm by using novel expert knowledge which is especially important in the case when the unknown data sources are integrated. In very rare cases a Degree approach can join the paths over repositories. Even when it succeeds to construct a full-path, this path contains a small number of relevant sources. Also, the Degree approach has not proved as a good solution in practise because of the query branching. It considers execution and evaluation of several queries resulting in the framework slowing down. The PageRank approach gives much better results in most cases including a higher percentage of success in paths joining than the Degree does. These good results could be explained by the fact that the best ranked vertices are connected with many data sources and it is easer to perform merging. The main drawback of this approach is that low-rank vertices (new data sources) could not be covered in the paths. For example, a substance from CPCTAS is connected with only one substance and as such is worthless compared to the “strong” data sources. Also, this approach has not convinced us that the paths include all data sources of interest.

After the manual inspection, the results of the evaluators showed that by using SpecINT (Favour PageRank), we achieve a precision of 86% in covering relevant data sources for the drug targets. The precision of 71% and 75% is achieved for the cancer cell-lines and

Beside the relevant data sources selection, the query validity is also tested. The large number of covered data sources does not guarantee that the paths are connected in the connection vertex. Although the used graph construction enables repositories visiting, sometimes there is no edge which connects the vertex before the last in the path with the cut-vertex. Even when the necessary edges exist, it does not mean that the edge orientations are appropriate. There are about 13% of such cases, which automatically means that the queries cannot be created. For example, the substance GSDSWSVVBLHKDQ-UHFFFAOYSA-N cannot return the results.

As an addition to the use case from Section 4.1, Favour PageRank heuristic for the tested substances is applied. For every substance

In this section we provide a comparison of our framework with large Open PHACTS project, which is now used in pharmaceutical companies. Two systems can only be compared if they produce results for a given substance/compound. The sources which contribute to evaluation process are shown in Section 6.2 (including their versions). Although the SpecINT framework is proof-of-concept project, designed to operate over repositories without a common ontology between resources, we are able to return fresh data and integrate novel datasets. The goal of this comparison is to show that the Federated SPARQL queries, generated on the SpecINT, are able to achieve some complementary result compared to the results obtained by using Open PHACTS Discovery Platform [27]. For the evaluation task, the query results designed for three different tasks are tested. These tasks include discovering of 1) targets, 2) cell-lines, and 3) the corresponding

Number of returned results. One part of the tested InChIKeys for drug targets

For each InChIKey used as an input in the SpecINT, corresponding compound URI (seed URI) was used for the Open PHACTS platform. The experts from CPCTAS checked the results and made comparison between outputs from both platforms. One part of the tested substances (InChIKeys) and returned results for the targets are presented in Table 5. As far as the drug target task is concerned, we can notice that overlapping results come from ChEMBL dataset. In this case, the SpecINT produced some complementary results obtained from DrugBank dataset (from Bio2RDF and Chem2Bio2RDF repositories) and PIBAS ontology. Open PHACTS API does not return these additional results, although it contains DrugBank dataset. An overlapping between outputs of two platforms is also large in the case of cell-lines and

From obtained results, we conclude that both approaches offer a great starting point for discovering data. Although a difference between compared results is small, both frameworks can offer complementary data to the research community. New experimental results from CPCTAS laboratory are publicly available through the PIBAS ontology (see Section 4.1). On the other side, Open PHACTS, as well as the SpecINT, offers a possibility for novel data integration thus providing a possibility for additional data. For example, for the substance with

Result of usefulness evaluation by using our custom questionnaire.

The user interface on the top of the framework is developed too. It presents an easily understandable view of the information obtained in the back-end. After the methodology was developed, we had to ensure that the user interface is useful enough to be potentially used for real life cases. To assess the potential usability of our system, we used the seven-item Likert scale-based System Usability (SUS) questionnaire [33]. The survey was completed by CPCTAS staff. In order to numerically analyze the survey results, we translated the Likert scale responses to numbers using the following five point scale:

The responses to question 1 (I felt very confident using the system) suggest that our system is very well adopted by users (average score to question

In this section we cover some of the known limitations of our framework.

Conclusion and future work

In this paper, we have presented a new approach for information retrieval set in the background of the SpecINT framework. The most important contribution of this work is Federated SPARQL queries construction in a scalable manner according to the existing paths in the graph. The “same as” kind of relationships within repositories are detected for the graph construction thus the searching process can be effected without a common ontology between resources. The framework appears as a trade-off solution between automatic and user-guided generators for the Federated SPARQL queries. This framework requires less human interventions avoiding personal experience and affinities, since it uses the coordinates of the graph eigenvectors for the most relevant data sources selection and automatic joining of their sub-queries. Moreover, the achieved improvement is also reflected in the fact that the framework is able to operate over repositories taking into consideration their specific data representation and inter-connections. This methodology is not dependent on constant update monitoring, but everything is done on-the-fly, therefore expensive statistical calculations are avoided.

The SpecINT enables scientists to find information of interest on the web (under certain circumstances, e.g. up-to-date data), and it also encourages other laboratories to publish data thus extending the general idea. New members can publish their experimental results easily and become an integral part of the new virtual space dedicated to chemistry and biology. The framework arises from an optimistic idea to potentially save time and resources needed for chemical and biological investigations.

For future work, it would be interesting to study the weighted graphs obtained from RDF data, the effects of changing weighted functions of the edges and vertices for path generation and eigenvectors coordinate changes. The eigenvectors represent an excellent mathematical apparatus for future framework improvements when more than two repositories are considered.

Footnotes

Acknowledgements

The authors are grateful to the Ministry of Education, Science and Technological Development of the Republic of Serbia for financial support (Grant Nos. III41010 and 174033).

Coalescence with complete graphs

Fielder’s papers [21,22] initiated a new era in which we can use the sign of the eigenvectors’ coordinates for cut finding. In [22] it was proved that the second smallest eigenvector of the Laplacian matrix can be used for determining positive and negative vertices in a graph thus providing room for distinguishing the connected components of a graph after vertex removal. Let