Abstract

In recent years, machine learning has made great advancements that have been at the root of many breakthroughs in different application domains. However, how to make machine learning models applicable to high-stakes or safety-critical application domains is still an open issue, as they can often be brittle and unreliable. In this paper, we argue that requirements definition and satisfaction can go a long way to make machine learning models even more fitting to the real world, especially in critical domains. To this end, we present two problems in which (i) requirements arise naturally, (ii) machine learning models are or can be fruitfully deployed, and (iii) neglecting the requirements can have dramatic consequences. Our proposed pyramid development process integrates requirements specification into every stage of the machine learning pipeline, ensuring mutual influence between requirements and subsequent phases. Additionally, we explore the pivotal role of Neuro-symbolic AI in facilitating this integration, paving the way for more reliable and robust machine learning applications in critical domains. Through this approach, we aim to bridge the gap between theoretical advancements and practical implementations, ensuring machine learning’s safe and effective deployment in sensitive areas.

Introduction

In recent years, machine learning has made great advancements that have been at the root of many breakthroughs in different application domains. For example, AlphaFold [63] is a deep learning model that solved the “protein folding problem”, a grand challenge in the field of biology for more than half a century, while Halicin [71] is the first antibiotic discovered using machine learning, which could help in the battle against bacterial resistance. Results like those above, though ground-breaking, overshadow the dangers that come with the careless use of machine learning models in critical applications. Indeed, even though such models report astonishingly high performance in terms of accuracy (or an alternatively chosen metric), they give no guarantee that the model will not exhibit unintended behaviour when used in practice. As pointed out by Rudin in [59], there have been several notable instances of machine learning failures. For example, individuals have been wrongly denied parole [81], deep learning models have inaccurately reported that highly polluted air was safe to breathe [47], and there has been generally inefficient use of limited resources across fields such as medicine, criminal justice, and finance [76]. Such problems in the behavior of the model are often rooted in the quality of the dataset used for training the model. This is, for example, what happened in the early stages of the Covid-19 pandemic, where (as reported in [58]) a common chest scan dataset from [35], made of pediatric scans, was used as a control group against Covid-19 positive scans: as a result, instead of Covid-19 detectors, adult/child classifiers were built. Unintended outcomes like the ones described above (i) are particularly dangerous in safety-critical applications, where even a single unforeseen mistake can lead to dramatic consequences, and (ii) undermine human confidence in the models themselves, thus slowing down their adoption.

In this paper, we argue that requirements specification and verification can go a long way to make machine learning models even more fitting to the real world, by reducing the risk of getting potentially dangerous unintended behaviors. We start from the simple observation that unintended behaviors correspond to the model violating some requirements which may be known even before the data collection and model development start. To support this claim, we consider two examples, one in healthcare, and the other one in autonomous driving, in which (i) some requirements are known in advance, (ii) machine learning models have very high performance in terms of accuracy (or another selected metric), and (iii) despite the positive performance, the outcome of standardly developed machine learning models often violates the known requirements with possible dramatic consequences given the criticality of the application domains. Although we consider just two examples, we believe that analogous considerations apply to most application domains given (i) the body of knowledge developed over the years in many application domains, which can be translated into corresponding requirements (see, e.g., [22,33,50,70]), (ii) the impressive results in performance obtained by machine learning models, which will continue to push for their adoption despite the possible unintended behaviors (see, e.g., [5,24]), and (iii) the fact that it is not surprising that the resulting model will violate the requirements if they are not taken into account during data collection and model development. Refining the last observation, it is not surprising that a machine learning model has an unintended behavior if, before its deployment, the data and the model itself are not somehow verified against the requirements capturing the intended behavior. We therefore claim that the requirements’ definition should precede and involve the entire machine learning model development cycle, including the dataset construction.
In particular, we propose a novel

Our proposal represents a significant shift from the traditional

Given the above, our proposal shares the spirit of works such as [19,49,61], which advocate for a more structured approach to machine learning model development. In particular, Gebru et al. in [19] propose documentation guidelines for new datasets, Mitchell et al. in [49] advocate for standardized reporting of models, including training data, performance measures, and limitations, while Seedat et al. [61] propose an actionable checklist-style framework to elicit data-centric considerations at different stages of the development pipeline.

The remainder of the paper is structured as follows. First, in Section 2, we consider examples from the healthcare and autonomous driving application domains in order to show that in these domains (i) requirements arise naturally, (ii) machine learning models are or can be fruitfully deployed, and (iii) neglecting the requirements can have dramatic consequences. Secondly, in Section 3, we show how the requirements definition can be fruitfully integrated into the standard machine learning development pipeline, impacting all the phases in the pipeline. Finally, in Section 5, we present our conclusions and possible plans for the road ahead.

The need for requirements

The standard machine learning pipeline naturally tends to have a sequential nature. The main steps that compose it are:

A visual representation of the standard sequential pipeline is given in Figure 1. Even though we do not show them in the figure, in practice loop-backs might be necessary in order to modify the dataset or, more often, the model. Still, such changes happen mostly between the model creation step and the model training step, as they are usually driven by the desire to get a better performance, while the dataset is often considered a static element of the pipeline. As we can see from the depicted pipeline, requirements do not appear anywhere. This is even more disconcerting when we compare such development pipelines with the standard software engineering pipelines, where requirements play a central role at every step of the process, no matter how small the project is. The most surprising fact, though, is that the development pipelines that have been proposed to deal with big projects in big companies either do not consider requirements at all (see, e.g., [6,12]) or, when they do (see, e.g., [46,72]), only mention data-centric requirements (e.g., the training dataset should have at least a certain number of data points or the model should achieve a certain accuracy) or requirements that cannot be formally specified (e.g., the model should be robust and/or interpretable). If formal requirements are considered at all, then they are normally considered just at the very end of the pipeline, during the model testing phase, where the model gets verified and/or tested against a set of properties [54,55]. Acting only at the very end of the pipeline can be very costly, as one might have to restart a project if the requirements are not satisfied. For this reason, our development pipeline considers the requirements from the very beginning, and they affect every step of the process.

Visualization of the standard machine learning pipeline.

We now focus on two application domains, healthcare and autonomous driving, and we show how requirements arise naturally in both application domains, how applying machine learning techniques has already brought and can bring tremendous advantages to the fields, and how deploying machine learning models in these fields without explicitly taking into account the requirements can lead to unexpected behaviors corresponding to violations of the requirements. Though we consider just two application domains, we believe that analogous considerations and results hold in virtually any application domain, given (i) the body of knowledge developed over the years in any application domain, which can be translated into corresponding requirements, (ii) the impressive results in performance obtained by machine learning models, which will continue to push for their adoption despite the possible unintended behaviors, and (iii) the impossibility of certifying the absence of undesired behaviors without explicitly spelling out the corresponding requirements and testing/verifying the model against them.

In recent years, the developments in machine learning, and in particular in computer vision, have fuelled the hopes of autonomous vehicles being finally in reach. However, every so often, such dreams are shattered by car crashes that, in some cases, have injured or even killed people. For example, in March 2018, an autonomous vehicle developed and tested on public roads by Uber’s Advanced Technologies Group fatally injured a pedestrian who was pushing their bicycle across the street outside of a designated crossing area [51]. The most striking characteristic of this accident is the fact that the car did not make any attempt to brake and/or to avoid the pedestrian [40]. Unfortunately, this is not an isolated incident, as nearly 400 car crashes involving autonomous vehicles have been reported in the United States over a period of only ten months in 2022 [9]. As reported in [9], these vehicles rely for their decisions on, among other components, computer vision models, which, if trained to simply maximise their performance (following the standard machine learning development pipeline), might fail to abide by even the simplest requirements.

(a) and (b) show the predictions made by the I3D model (with

To exemplify the problem described above, we consider the ROAD-R dataset [22], which consists of (i) 22 relatively long (∼8 minutes each) videos annotated with road events, i.e., a sequence of frame-wise bounding boxes linked in time, each labelled with the agent in the bounding box, together with its action(s) and location(s), and (ii) 243 requirements expressed in propositional logic stating what is an admissible road event. There are 41 possible labels for each bounding box, and the requirements state which combinations of labels a model can output. Hence, for instance, a requirement states that a traffic light cannot be red and green at the same time, while another states that a traffic light cannot move. Given ROAD-R, six state-of-the-art temporal feature learning architectures (I3D [10], C2D [79], RCGRU [29], RCLSTM [29], RCN [66] and SlowFast [16]) have been trained as part of a 3D-RetinaNet model [65] (with a 2D-ConvNet backbone made of Resnet50 [26]) for event detection. These models take as input a set of consecutive frames, and for each frame they output (i) a set of bounding boxes, and (ii) a set of labels for each bounding box. Such labels are decided in the standard way: for each of the 41 labels the model outputs a number

For our second example, we discuss healthcare. There is great hope that developments in machine learning will revolutionize medicine and transform clinical practice [75]. The range of applications in medicine is vast, from computer vision systems analyzing medical images in radiology and longitudinal monitoring of patient trajectories throughout a hospital stay, to genomic screening of future disease risk and much more. However, as with many other high-stakes and safety-critical applications of machine learning, there are a number of requirements for machine learning systems in healthcare. These include ethical considerations, such as fairness and bias; practical considerations, such as controlling false positive rate to prevent alarm fatigue [62] and ensuring appropriate resource allocation; and logical considerations, such as the example discussed below, among others.

As a concrete example, we consider clinical risk scores. Clinical risk scores estimate the likelihood of a specific outcome occurring after a certain period of time, such as a patient developing a particular disease or condition in the next ten years or experiencing an adverse event following a medical procedure. By definition, the chance of an outcome occurring within some time horizon

To illustrate the consequence of not incorporating the simple requirement described above into the machine learning systems, we consider the problem of predicting the risk of developing diabetes. We construct a cohort of patients from UK Biobank [73], a large-scale observational study with around 500,000 participants from 22 assessment centers across England, Wales, and Scotland enrolled between 2006 and 2010. We extracted a cohort of participants who were 40 years of age or older at enrollment with no diagnosis or history of diabetes at baseline. We considered the 18 features employed by QDiabetes [27]. We performed data imputation using HyperImpute [31] and trained classification algorithms using AutoPrognosis [30] to predict

The pyramid model

In the previous section, we have shown the standard performance-driven machine learning development pipeline, in which the requirements are traditionally neglected, and, by means of two examples, we have highlighted how such negligence can have dire consequences. This calls for including the requirements, which are at the core of any development pipeline proposed in the field of software engineering, in the development process. We thus propose to adapt the general methodologies used in software engineering for generic system development, where it is well known that the later the requirements are taken into account, the higher the cost of repairing the system. In order to adapt such methodologies, we need to take into account that, differently from standard software, machine learning models are learnt from data, and thus we have less control over the behaviour of the model. This entails not only that at design time it is impossible to predict the future behaviour of the model, but also that, at testing time, if a bug is found, the steps necessary to fix it are entirely different. A development pipeline for a machine learning model thus needs to take into account that:

(i) data are central to the process, as the quality of the model heavily depends on the quality of the collected data, (ii) the model is learnt from data, and thus it is not possible to fully know a priori its behaviour, and (iii) if the model does not behave as expected, it is advisable to not only check the model but also check the data.
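
As a minimal sketch of the last point, assuming requirements of the propositional kind found in ROAD-R (e.g., a traffic light cannot be red and green at the same time), the check below scans annotated label sets for violations before any training takes place. The label names and the `find_violations` helper are hypothetical and chosen for illustration only; they are not part of ROAD-R or of any library.

```python
from typing import Callable, Dict, List, Set, Tuple

# A requirement maps a set of labels to True when the set is admissible.
Requirement = Callable[[Set[str]], bool]

# Hypothetical label names and requirement descriptions, for illustration only.
REQUIREMENTS: Dict[str, Requirement] = {
    "traffic light not both red and green":
        lambda labels: not ({"red", "green"} <= labels),
    "traffic light does not move":
        lambda labels: not ({"traffic_light", "moving"} <= labels),
}


def find_violations(annotations: List[Set[str]]) -> List[Tuple[int, str]]:
    """Return (annotation index, requirement name) for every violation."""
    violations = []
    for index, labels in enumerate(annotations):
        for name, holds in REQUIREMENTS.items():
            if not holds(labels):
                violations.append((index, name))
    return violations


# Toy annotations: one label set per bounding box.
annotations = [
    {"traffic_light", "red"},
    {"traffic_light", "red", "green"},  # violates the first requirement
    {"pedestrian", "moving"},
]
violations = find_violations(annotations)
```

The same function could be applied unchanged to thresholded model predictions, so that a single check covers both the data and the model.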

Further, it is unrealistic to expect that all requirements are always known a priori and that they remain immutable throughout the development process. This is already known in the general software engineering field, and it has also been observed specifically in our field. Indeed, as highlighted by the survey conducted in [41], some of the biggest problems that machine learning practitioners face on a daily basis include the accessibility of data (which might not be known in the requirements definition phase) and the unrealistic expectations of the stakeholders of the system (which might reveal themselves during the testing phase of the model). Thus, we also need to take into account that the requirements might change at each stage of the development process.

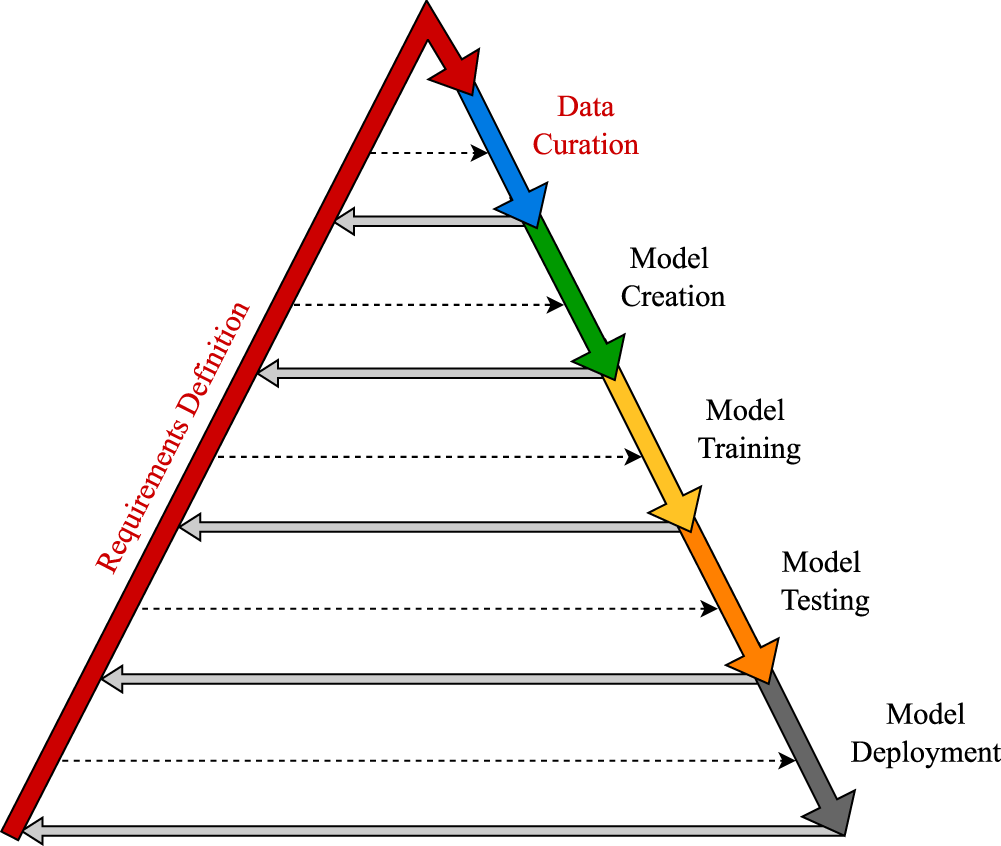

Visualization of the pyramid model. The full arrows stand for the standard procedural processes, while the dotted arrows show that the requirements impact every stage of the process.

Given the above, we propose the

As already stated in the introduction, researchers in the Neuro-symbolic AI field find themselves in the unique position of being able to not only understand the deep impact that the violation of the requirements can have on the trust the public places in AI models, but also leverage the techniques developed in the traditional AI field to fill the gaps existing in the current state-of-the-art machine learning models.

As a testament to this fact, they have already been at the forefront of this line of research, with researchers developing methods which are guaranteed to be compliant with a set of requirements over the output space expressed as a logic program [42,43], in propositional logic [4,23], as linear inequalities [18,70] and even as quantifier-free linear arithmetic formulas over the rationals [28]. This was already an incredible leap forward, as up to that point specialised methods were being developed for different types of requirements. As an example, we can think of the field of

We expect that in the future the expressivity of the requirements studied will increase and will thus encompass more scenarios. For example, requirements expressing the relations existing over multiple data points might be expressed in first order logic (FOL) or requirements over the behaviour of an auto-regressive model might be expressed in linear temporal logic over finite traces (

Finally, Neuro-symbolic AI has already shown very promising results in dealing with even more complex cases than the standard fully-supervised ones. For example, many works have been developed to be able to exploit (and sometimes impose) the requirements in semi-supervised settings (see, e.g., [8]), in partial label settings (see, e.g., [17]) and sometimes even in fully unsupervised settings (see, e.g., [69]). Naturally, these scenarios pose numerous challenges, including the exploitation of reasoning shortcuts by Neuro-symbolic AI models [45]; however, there is already research underway to mitigate this issue [44,78].

Summary and outlook

In this paper, we have shown that even though applying machine learning to a given problem can bring great benefits, applying it without specifying and taking into account the requirements of the problem can lead to unexpected behaviors and, possibly, dramatic consequences. We have thus proposed a new machine learning development pipeline in which the requirements definition phase is explicitly incorporated at the beginning of the process, causing a deep connection between the requirements definition and all the other phases in the development process, in which (as standard in software engineering) the former may affect the latter and vice versa. From this perspective, this paper can be seen as a call to adapt the general methodologies used in software engineering for generic system development to the specific field of machine learning, highlighting the risks of not doing so. We believe that in many cases the benefits of explicitly defining the requirements and taking them into account in the other phases outweigh their cost. It is also clear that the requirement definition and exploitation is unavoidable when machine learning models are used as stand-alone systems in domains with strict requirements to satisfy (e.g., in safety-critical domains) or when used as part of larger systems with a given set of stringent requirements (e.g., memory usage; see, e.g., [57]). This view is supported by the recent trends in machine learning in which performance is just one of the requirements to satisfy. In particular, work in the fields of interpretability (see, e.g., [11,15],Imrie2023multiple), fairness (see, e.g., [48,82]) and robustness (see, e.g., [34,80]) all privilege certain characteristics of the model over performance, and all these works root their motivation in the necessity to take into account requirements emerging from the respective application domain.
However, how to define and fully exploit the domain knowledge expressed by requirements in the development process is still largely an open question, as many technical challenges need to be overcome. To this end, the Neuro-symbolic AI field can be seen as a bridge between standard machine learning and software engineering, as researchers in the field have already started developing techniques to create models that are shaped not only by the data but also by other types of knowledge explicitly given as input, such as formally stated requirements, which are used either to design the model (see, e.g., [21,28]) or to incorporate the requirements in the loss function (see, e.g., [8,14]).
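
The loss-based route mentioned above can be sketched as follows, assuming a single requirement stating that two labels are mutually exclusive (e.g., a traffic light cannot be red and green at the same time). The violation penalty is the product of the two predicted probabilities, a common fuzzy-logic relaxation of conjunction; this is an illustrative choice rather than the formulation used in [8,14], and the names `requirement_penalty`, `total_loss` and `weight` are hypothetical.

```python
import math
from typing import Sequence


def binary_cross_entropy(probs: Sequence[float], targets: Sequence[int]) -> float:
    """Mean binary cross-entropy over independent labels."""
    eps = 1e-12  # avoids log(0)
    terms = [
        -(t * math.log(p + eps) + (1 - t) * math.log(1 - p + eps))
        for p, t in zip(probs, targets)
    ]
    return sum(terms) / len(terms)


def requirement_penalty(probs: Sequence[float], i: int, j: int) -> float:
    """Soft violation of 'not (label i and label j)': product of the two probabilities."""
    return probs[i] * probs[j]


def total_loss(probs: Sequence[float], targets: Sequence[int],
               i: int, j: int, weight: float = 1.0) -> float:
    """Supervised loss plus a weighted requirement-violation penalty."""
    return binary_cross_entropy(probs, targets) + weight * requirement_penalty(probs, i, j)


# Hypothetical label ordering: index 0 = red, index 1 = green.
targets = [1, 0]
compliant = [0.9, 0.1]   # confident in red only
violating = [0.9, 0.9]   # confident in both red and green

loss_compliant = total_loss(compliant, targets, 0, 1)
loss_violating = total_loss(violating, targets, 0, 1)
```

A penalty of this kind only discourages violations during training; unlike approaches that build the requirements into the model itself, it gives no guarantee that the trained model's outputs satisfy them.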

Despite the huge amount of work that can be categorized under the umbrella of “requirement-driven” machine learning, this is the first paper which explicitly advocates for a general model development methodology in which the requirement definition is a first-class citizen like the other phases in the pipeline. We believe that this corresponds to a more structured approach to machine learning model development, which, as a general principle, has already been advocated in [19,49,61].