Abstract

To keep the pressure and energy consumption of multiple air compressors within an acceptable range, this paper studies an intelligent pressure switching control method for air compressor group control based on multi-agent reinforcement learning (RL). Sensors in the air compressor field control cabinet collect data such as header pressure, air storage tank pressure, and air storage tank temperature and send them to the edge data collector for integration; the main control cabinet then forwards the integrated data to the upper computer. Using the data collected on site, a multi-agent-based air compressor group control model is designed that treats the multiple air compressors in the group control problem as multiple agents, which facilitates unified switching control of the air compressor group. An intelligent pressure switching control method based on deep Q-learning, driven by a neural network controller, then adjusts the frequency of the frequency converter so that the outlet pressure of the air compressor terminal header stays within the set value range, completing the intelligent pressure switching control. Tests show that the method performs well in both pressure control and energy saving when applied to intelligent pressure switching control of an air compressor group.

Keywords

Introduction

After water, electricity, and coal, compressed air has become the “fourth energy source” [1, 2]. Compressed air systems have been used in industrial automation since the 1970s, and their range of application continues to expand [3, 4]. Compared with electricity, compressed air does not generate sparks, tolerates overload, carries no risk of electric shock, and places few limits on the working environment: it still works in environments with high humidity, high temperatures, or excessive dust [5]. Compressed air is used in every factory, from small machining workshops to large chemical enterprises, and each has a corresponding compressed air system [6].

Air compressors are an important power source in industrial production. Compressed air systems account for about 20% to 30% of an enterprise’s total power consumption, most of it consumed by the air compressors themselves [7]. Reducing the electrical energy consumption of air compressors is therefore the key to energy saving in compressed air systems. For a long time, most coal mines in China have used reciprocating piston air compressors, which are bulky, heavy, noisy, maintenance-intensive, complex to operate, and costly to run [8, 9]. The outlet pressure of an air compressor is kept within a settable range through loading and unloading. Because this scheme considers only the exhaust pressure of a single air compressor, it cannot reflect changes in the total exhaust pressure of the air compressor unit, which easily leads to frequent loading and unloading of individual air compressors and poor system reliability [10, 11]. Demand for compressed air is consistently high, but the capacity of a single air compressor cannot be made arbitrarily large, so multiple air compressors must operate simultaneously to meet demand [12–14]. How to save energy when multiple air compressors are running is therefore extremely important [15].

Many studies have examined the control of a single air compressor. In reference [16], the authors proposed a decoupling-free closed-loop algorithm for air pressure and airflow based on PID and fuzzy control. The algorithm was tested on an air supply subsystem bench and on a proton exchange membrane fuel cell system. The results show that the errors in air pressure and airflow during steady-state operation of the fuel cell system are within 1 kPa and 1 g/s, respectively; the accuracy meets practical requirements, and air compressor surge is avoided. In reference [17], researchers proposed a variable frequency retrofit scheme for some power frequency air compressors, designed an adaptive fuzzy PID control strategy for the variable frequency air compressors, and ran simulation experiments under typical operating conditions. The simulation results show that the strategy achieves a good energy-saving effect under time constraints while ensuring the safe operation of the platform. Reference [18] applied multimodal PID and traditional PID control to the air delivery system of a 140 kW fuel cell, controlling it in real time by tracking the air mass flow at the air compressor outlet. Under this control, the air delivery system shows a fast dynamic response, small overshoot, and small steady-state fluctuations. However, such methods cannot control multiple air compressors operating simultaneously. Beyond improving the control performance of a single air compressor, resource allocation techniques are also needed to improve the energy efficiency of the air compressor system. Reference [19] proposes a dynamic optimization model that maximizes the total uplink/downlink (UL/DL) energy efficiency (EE) while meeting the necessary quality-of-service (QoS) constraints.
Because the EE maximization problem is non-convex, the mathematical model is divided into two independent subproblems: computational carrier scheduling and resource allocation. The sub-gradient method is used to allocate computing resources, and the successive convex approximation and dual decomposition methods are used to solve the max-min fairness problem. This method can significantly improve the total throughput of mobile computing services. Reference [20] proposes a new adaptive backhaul topology that can adapt to different business models. An adaptive system based on graph theory allows dynamic changes to the hybrid mmWave backhaul architecture and enables efficient channel allocation for each backhaul link to meet capacity and QoS requirements. In addition, given the importance of green networking in integrated access and backhaul networks, a dynamic optimization model is proposed that provides the necessary coverage and capacity while minimizing the overall energy consumption of UL/DL decoupled NOMA heterogeneous networks. The model optimizes user association and power utilization and provides an efficient, modular, and scalable framework for analytical modeling with integrated multi-hop backhaul. Reference [21] proposes a dynamic optimization model that minimizes the overall energy consumption of fifth-generation (5G) heterogeneous networks while providing the necessary coverage and capacity. The model decides when to turn small cells on or off by optimizing carrier distribution and power utilization, meeting users’ quality-of-service constraints while achieving the highest level of energy efficiency. To make effective use of the existing small cell network infrastructure for double-hop transmission, a multi-hop backhaul strategy is also proposed.
Numerical results show that the proposed method significantly improves energy efficiency and system data rate while meeting throughput requirements under both uniform and hotspot distributions of user devices.

Combining resource allocation with the control of individual air compressors, and drawing on the advantages of agents, resources can be allocated according to real-time data and energy demand, and the parameter settings and operating strategy of the air compressors can be optimized to maximize energy utilization efficiency and system performance. On this basis, this paper studies an intelligent pressure switching control method for air compressor group control based on multi-agent reinforcement learning (RL). In RL, an agent interacts with the environment to obtain data and feedback signals and, through continued interaction and feedback, eventually achieves its control objective; when the RL system contains multiple agents, it is called multi-agent RL [22–24]. The method collects and collates the end pressure values of the compressed air main network through an edge server and uploads them to the neural network controller. The multi-agent RL algorithm then adjusts the frequency of the frequency converter according to changes in end gas consumption demand to realize intelligent pressure switching control.

Intelligent pressure switching control method for air compressor group control

Description of the air compressor group control process

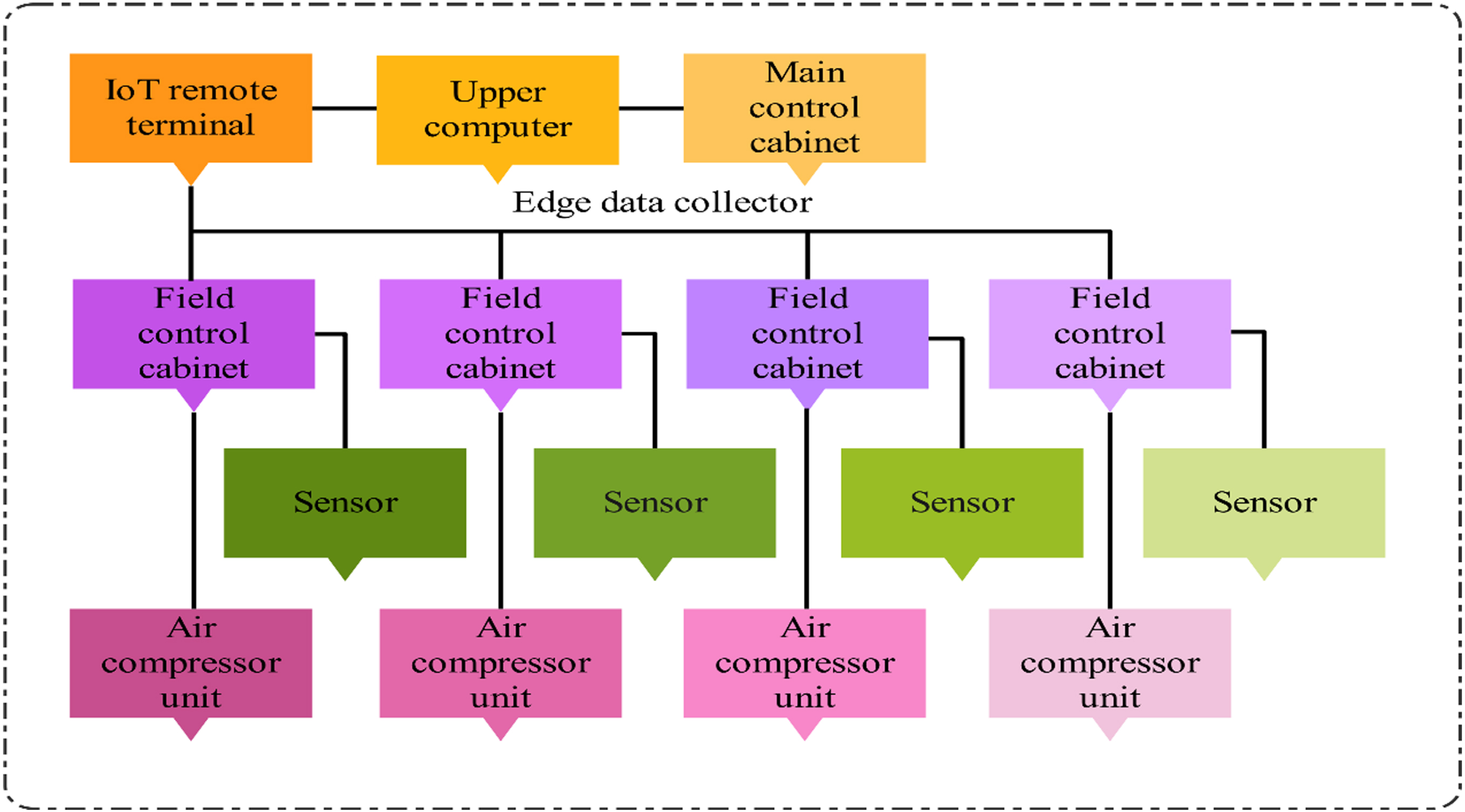

The air compressor group control system uses a domestic T910 program controller as the control core, as shown in Fig. 1.

Air compressor group control process technology.

As shown in Fig. 1, during the air compressor group control process, the sensors in the field control cabinet collect the key parameters that affect normal operation of the air compressors, such as header pressure, gas storage tank pressure, and gas storage tank temperature. The collected data are sent to the edge data collector, which integrates them and forwards them through the main control cabinet to the host computer and the remote Internet of Things terminal. The host computer is a computer that can directly issue control commands. Using a multi-agent-based air compressor group control model designed from the on-site data, it treats the multiple air compressors in the group control problem as multiple agents, which facilitates unified switching control of the air compressor group. Then, using an intelligent pressure switching control method based on deep Q-learning and driven by a neural network controller, it adjusts the frequency v1 (t) of the frequency converter so that the outlet pressure Q (t) of the air compressor terminal header stays within the set value range, completing the intelligent pressure switching control.

The control objectives of the air compressor group control process are:

By adjusting the frequency v1 (t) of the frequency converter, the outlet pressure Q (t) of the air compressor header is kept within the set value range, satisfying Equation (1). At the same time, the number of air compressors that are loaded and unloaded during startup and operation is chosen reasonably, achieving the goal of energy conservation.

In Equation (1), Qmax and Qmin are the upper and lower limits of the outlet pressure of the air compressor header, respectively; Q sp is the set value of the outlet pressure of the air compressor header; and Δv1 (t) and h are the current frequency of the frequency converter and the number of air compressors, respectively.
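The band requirement on the header outlet pressure can be illustrated with a minimal Python sketch. The function names and the numeric limits in the usage lines are hypothetical and chosen only for illustration; the sketch checks the bounds Qmin and Qmax and does not reproduce the full form of Equation (1).

```python
def pressure_in_band(q: float, q_min: float, q_max: float) -> bool:
    """Return True when the header outlet pressure q lies within [q_min, q_max]."""
    return q_min <= q <= q_max


def band_violation(q: float, q_min: float, q_max: float) -> float:
    """Signed distance of q outside the band: negative below, positive above, 0.0 inside."""
    if q < q_min:
        return q - q_min
    if q > q_max:
        return q - q_max
    return 0.0


# Illustrative limits in MPa (assumed values, not taken from Equation (1)).
print(pressure_in_band(0.62, q_min=0.61, q_max=0.675))
print(band_violation(0.60, q_min=0.61, q_max=0.675))
```

A signed violation of this kind is one convenient way to tell a controller whether the frequency should be raised or lowered.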

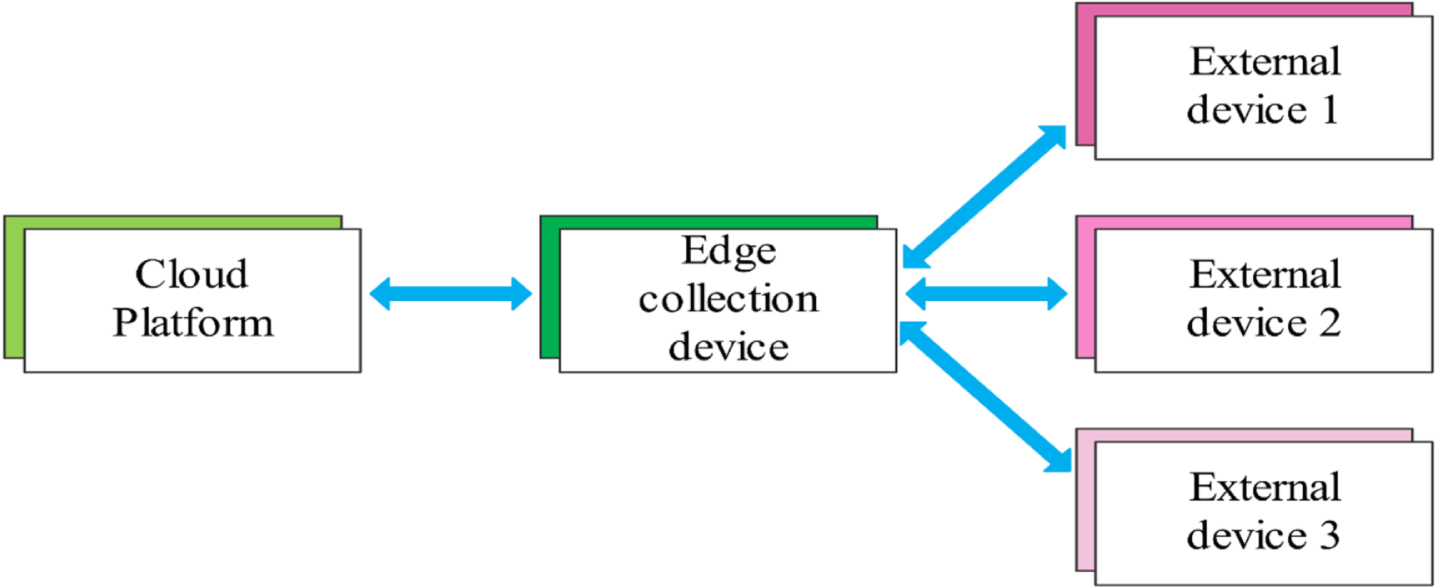

Monitoring points are distributed across the entire air pressure system, from the gas production end through the gas storage and gas supply ends to the gas consumption end. The key requirement is real-time transmission of monitoring point information from remote locations to the edge data collectors. Once the edge collection data points are configured, the edge data collector polls and reads registers in external devices over various communication links, such as 4G or Ethernet, and transmits the readings to the upper computer over 4G or Ethernet. Figure 2 is a schematic diagram of the operation mode of the edge data collector.

Schematic diagram of the operation mode of the edge data collector.
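The polling behaviour of the edge data collector can be sketched as follows. This is only an illustration under stated assumptions: the point names, register addresses, and the `fake_read` stand-in are hypothetical, and a real collector would replace `fake_read` with a driver for whatever protocol the field devices actually speak.

```python
from typing import Callable, Dict


def poll_once(points: Dict[str, int], read_register: Callable[[int], float]) -> Dict[str, float]:
    """Read every configured data point once and return a name -> value snapshot."""
    return {name: read_register(address) for name, address in points.items()}


# Hypothetical point table: point name -> register address.
POINTS = {"header_pressure": 0, "tank_pressure": 1, "tank_temperature": 2}

# Canned register contents used only for this demonstration.
FAKE_REGISTERS = {0: 0.612, 1: 0.640, 2: 35.0}


def fake_read(address: int) -> float:
    """Stand-in for a real protocol driver."""
    return FAKE_REGISTERS[address]


snapshot = poll_once(POINTS, fake_read)
print(snapshot)
```

Passing the register reader in as a function keeps the polling logic independent of the transport, which matches the description of a collector that works over several communication links.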

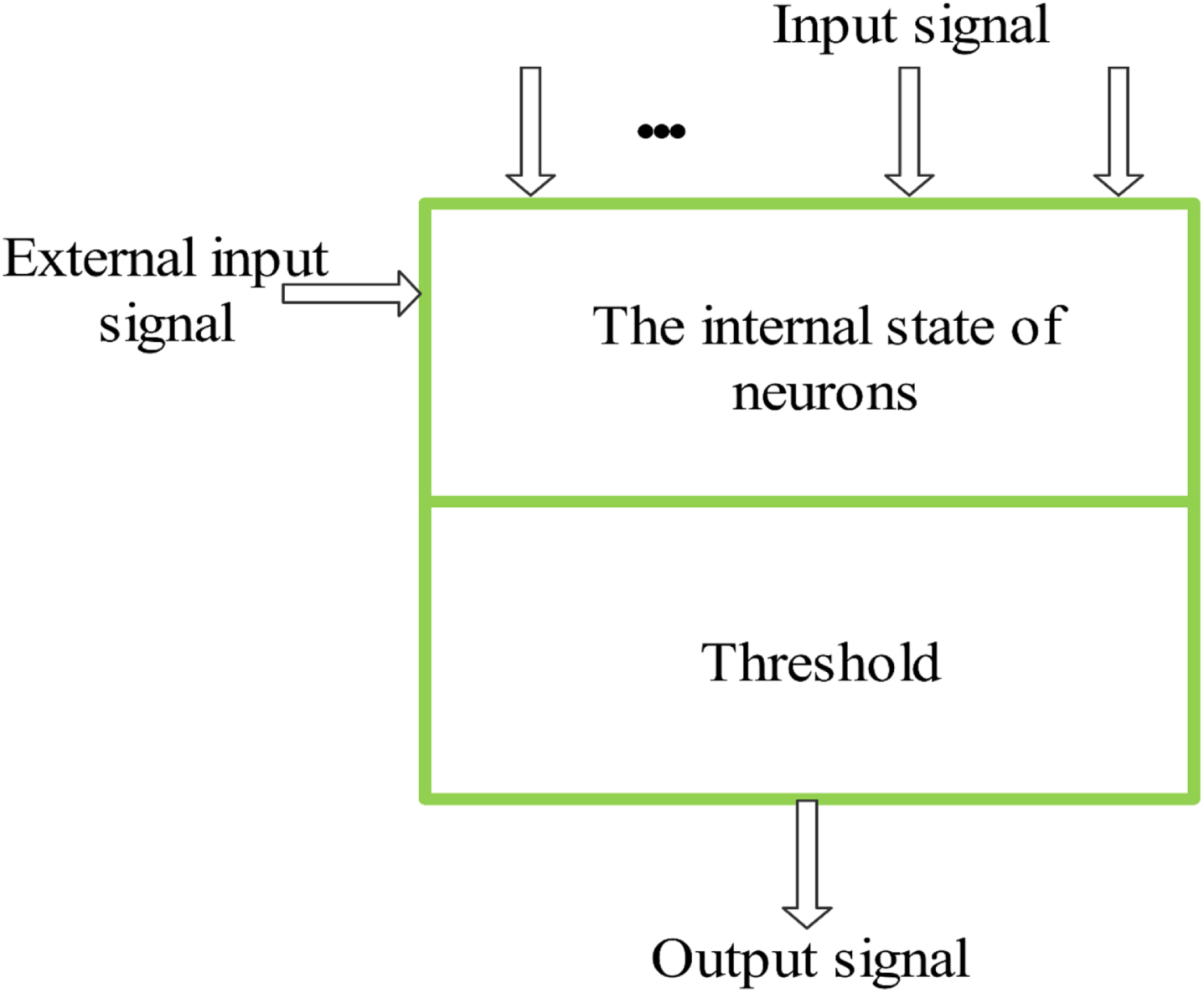

During intelligent pressure switching control of the air compressor group, a suitable controller is needed to adjust the air compressor frequency converter. Neural network control includes decision-making, planning, and learning functions [25]. In a control system, a neural network can serve as a model of the controlled object or as the controller itself [26]. Neural network predictive control is characterized by prediction, rolling optimization, and feedback correction, and predictive control has been shown to provide the desired stability for nonlinear systems [27]. By abstracting and simulating the human brain, neural networks offer strong learning ability and high steady-state accuracy, opening a new way to solve control problems for complex, nonlinear, and uncertain systems. Figure 3 shows the structure of the neural network controller used in the intelligent pressure switching control of the air compressor group.

Neural network controller.

The internal state of neuron j in the neural network controller is denoted Γ_j, and its threshold value and the input intelligent switching control signal of the air compressor group control pressure are ζ_j and Δv1 (t), respectively; the connection weight coefficient between neurons is φ; and the external interference signal is Ψ. The control principle of the controller in the intelligent switching control of air compressor group control pressure is as follows:

Before the neural network controller is formally put into use, it must be trained to ensure stable performance.

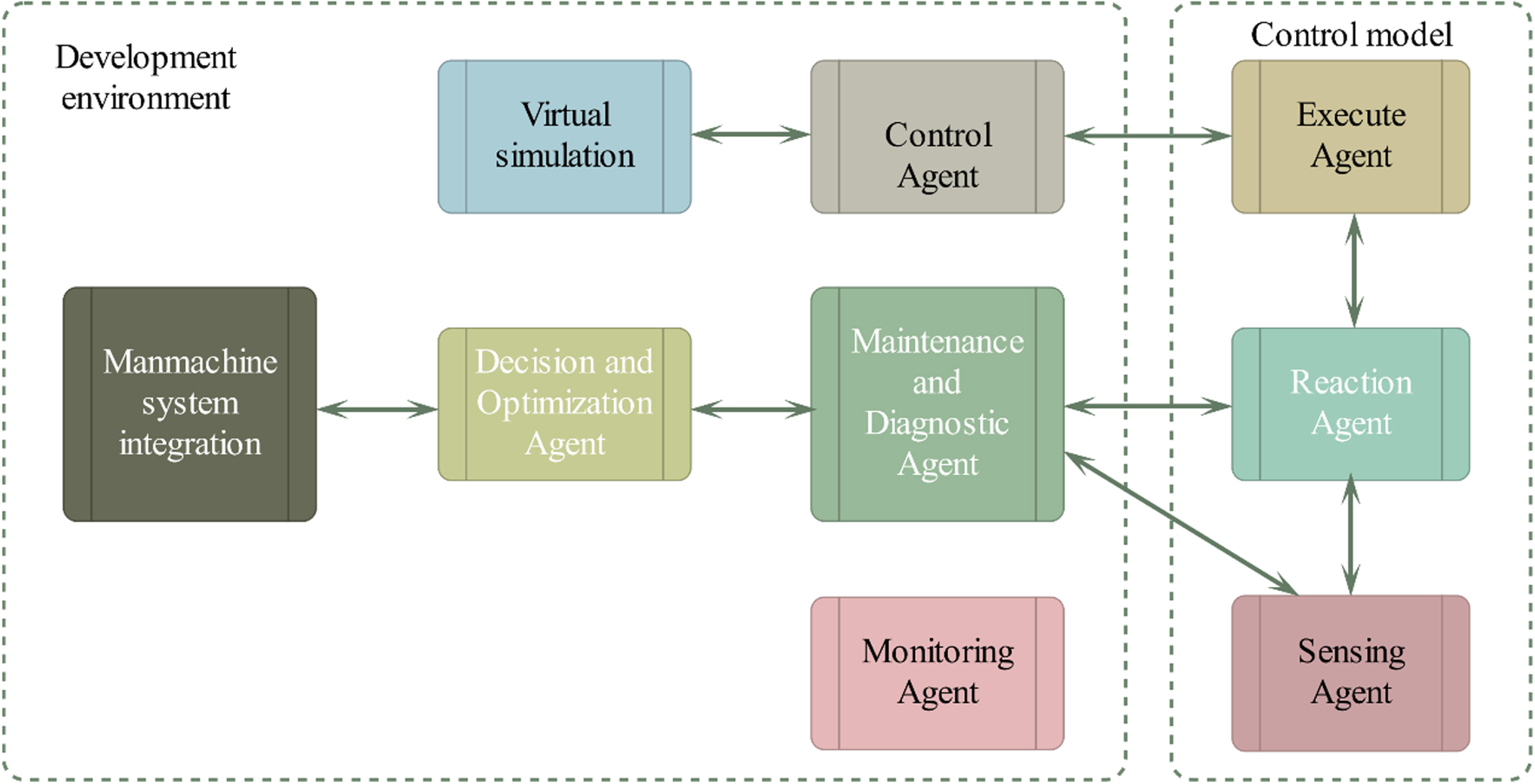

An Agent is a computer program unit, together with its related physical entities, that performs a certain function; its goal is to maximize the benefit of the functional entity it represents. A multi-agent system (MAS) is an organized, orderly group of Agents that work together in a specific environment and express the system’s structure, function, and behavioural characteristics through communication, cooperation, coordination, scheduling, management, and control among Agents. As a result, a MAS offers strong robustness, reliability, and high problem-solving efficiency [28]. This paper therefore introduces Agent technology into the air compressor group control problem. Under the control of the constructed neural network controller, a multi-agent-based air compressor group control model is designed to drive the controller to complete intelligent pressure switching control. Figure 4 is a structural diagram of this model.

Air compressor group control model based on multi-agent design.

Each air compressor in the group control problem is treated as an agent, and the agents coordinate and cooperate to complete overall optimization and diagnosis [29]. In each multi-agent control problem, agent control is divided into a control unit, a human-machine interface unit, and a simulation optimization unit. The human-machine interface unit provides an effective mechanism for combining human intelligence with machines. The simulation optimization unit provides simulation information for control decision-making and fault diagnosis, and the control unit completes system monitoring, diagnosis, and control tasks. The entire process emphasizes the real-time behaviour of the Agents, which respond periodically to environmental changes. Each Agent has an execution deadline and a cycle, enabling it to work in a hard real-time environment.

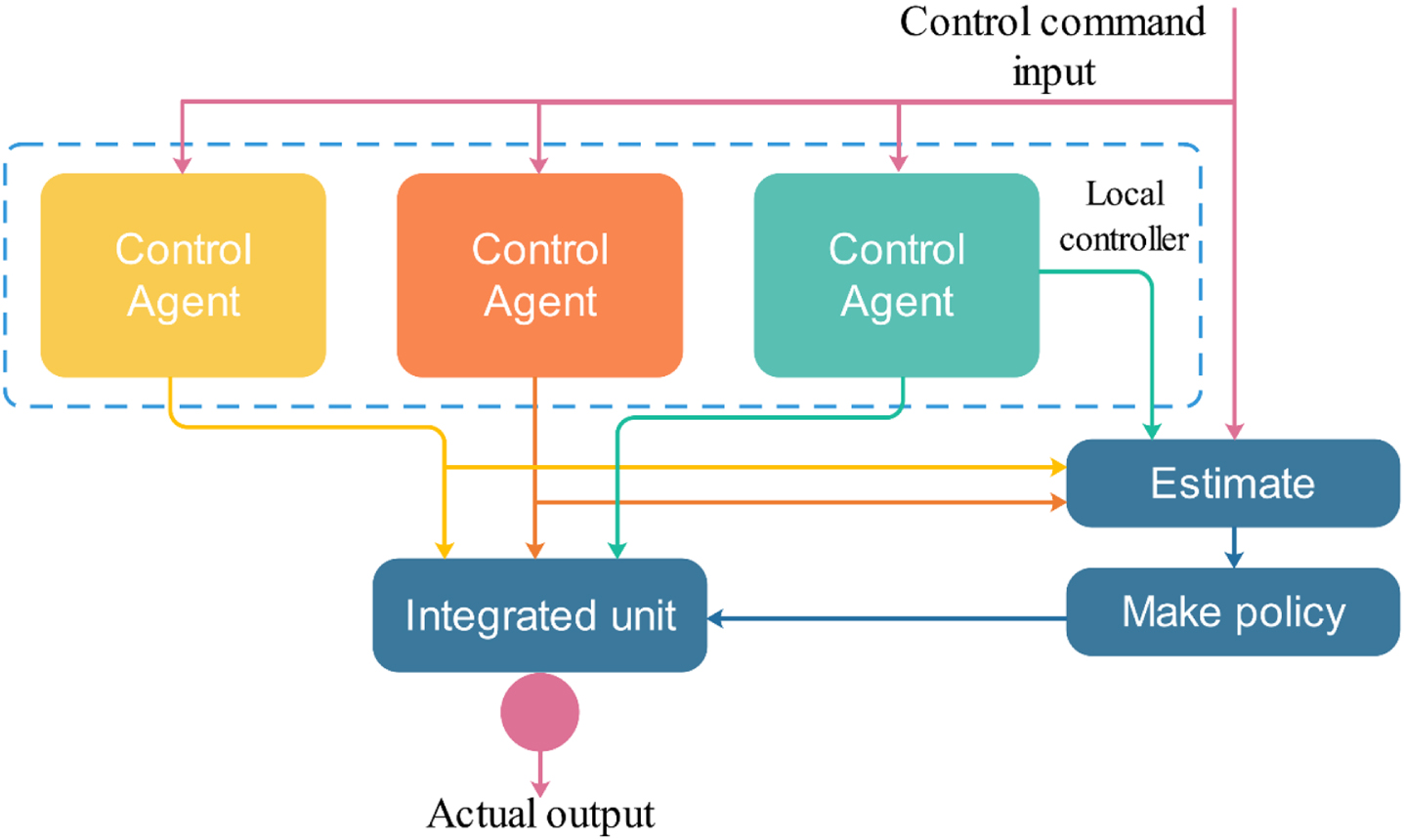

In this study, air compressor group control is referred to as Agent group control. In this control mode, the control task is generally completed by a Control Agent group [30]. The Control Agent group consists of multiple control Agents and a local collaboration mechanism, and externally it presents the interface and behavioural characteristics of a control Agent. Other control Agents can therefore call it, forming a hierarchical structure. The internal structure of the Control Agent group is shown in Fig. 5.

Internal structure of control agent group.

As shown in Fig. 5, the Control Agent group includes a set of local controllers, an evaluation coordination unit, and a synthesis unit. Each local controller, referred to as a local control Agent, encapsulates control algorithms, state transition mechanisms, and initialization and finalization processing. The evaluation coordination unit resolves associations and requests between control Agents, and its decision-making unit calculates the confirmation signal for each control Agent request. The synthesis unit fuses the control outputs of the multiple control Agents into the output of the Control Agent group according to different requirements.

The evaluation unit evaluates the performance of each control Agent based on external inputs and on the output and status of the control Agent, and sends the results to the decision-making unit for state transition confirmation and fusion mode decisions. Based on the evaluation results and state transition requests, the decision-making unit determines when and how to switch between control Agents or change the weight of each Agent’s output in the total output, mainly resolving issues such as conflicts, deadlocks, bumpless switching, and oscillations.
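The two fusion modes mentioned here, switching between control Agents and re-weighting their outputs, can both be expressed as a weighted combination. The sketch below assumes a normalized weighted average; the paper does not state the fusion formula, so `fuse_outputs` and its weights are hypothetical.

```python
from typing import Sequence


def fuse_outputs(outputs: Sequence[float], weights: Sequence[float]) -> float:
    """Fuse control Agent outputs into one group output by a normalized weighted average."""
    if len(outputs) != len(weights):
        raise ValueError("outputs and weights must have the same length")
    total = sum(weights)
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    return sum(o * w for o, w in zip(outputs, weights)) / total


# Equal weights blend the two Agents; a zero weight switches one Agent out entirely.
print(fuse_outputs([40.0, 50.0], [1.0, 1.0]))
print(fuse_outputs([40.0, 50.0], [1.0, 0.0]))
```

Changing the weights gradually rather than abruptly is one common way to make a switch between controllers bumpless.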

The cooperation between agents can be divided into serial, parallel, and supervisory structures. A typical example of a serial structure is master-slave (cascade) control. The output of the master controller is given to the slave controller, and both tasks must be activated simultaneously to complete the corresponding control. The status of the entire Control Agent group depends on the status of the master task control Agent, while the status of the master task depends on the completion of the slave task control Agent [31].

In this paper, the intelligent pressure switching control problem for air compressor group control is modelled as a fully cooperative multi-agent task. Because the pressure switching control task involves decision-making under incomplete information during the air compressor group control process, the control problem is described as Ω = 〈R, C, W, L, B, s, M, α〉, where Ω is the current state of the air compressor agent, R is the global environmental state space, C is the action space for intelligent pressure switching control of the air compressor group, W is the air compressor state transition probability, L is the local observation space, B is the observation function, s is the reward function, M is the number of air compressor agents, and α is the discount factor.
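To make the roles of the tuple elements concrete, the sketch below groups a few of them in a small Python structure and shows how the discount factor α weights future rewards. The class name, field names, and callable signatures are hypothetical; in particular, passing an agent index to the observation function is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class GroupControlTask:
    """A few elements of the task tuple (names are illustrative)."""
    num_agents: int                                               # M
    discount: float                                               # alpha
    reward_fn: Callable[[Sequence[float], Sequence[int]], float]  # s(global state, joint action)
    observe_fn: Callable[[Sequence[float], int], Sequence[float]] # B: global state -> local view


def discounted_return(rewards: Sequence[float], discount: float) -> float:
    """Sum of rewards with the k-th future reward weighted by discount**k."""
    g = 0.0
    for r in reversed(rewards):
        g = r + discount * g
    return g


task = GroupControlTask(
    num_agents=5,
    discount=0.5,
    reward_fn=lambda state, joint_action: 1.0,   # placeholder shared reward
    observe_fn=lambda state, i: [state[i]],      # each agent sees its own entry
)
print(discounted_return([1.0, 1.0, 1.0], task.discount))
```

Because the task is fully cooperative, a single `reward_fn` shared by all agents is enough in this sketch.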

In each period t, each air compressor agent obtains an observation l ∈ L of the local environment through the observation function B (r) : R → L, and each Agent i ∈ N_R (in this paper, an air compressor) selects its operation action.

Here, S (R_t, c_t) is the expected discounted reward, and the strategy that maximizes it is the optimal strategy. For the multi-agent cooperative task studied in this paper, the elements of the pressure switching control task of air compressor group control are defined as follows:

(1) Action

The action of each air compressor device is expressed as

(2) Global state and local observation

The local observation of each air compressor includes the pressure control scheme of the equipment at the previous moment and the corresponding control effect. The observation set of all air compressor equipment is defined as a global state, expressed as:

(3) Rewards

The reward function guides the direction of the algorithm’s optimization: by tying the design of the reward function to the actual optimization goal, the algorithm’s performance improves under the incentive. The reward function defined in this paper includes a reward for complete control of the outlet pressure of the air compressor header and a reward for optimizing the frequency v1 (t) of the frequency converter.

The reward for complete control of the outlet pressure of the air compressor header is:

Here, h1 is a negative constant and h2 is a positive proportional constant; H_i is the frequency adjustment coefficient of the frequency converter; and ϖ_j is the importance index of the air compressor.

The term ϖ_j · [sign (H_i)] means that revenue is obtained only after pressure control of the air compressor is achieved, and that the revenue is proportional to the importance index (energy efficiency index) ϖ_j of the air compressor. The fixed negative income h1 is added so that the total control income is penalized when the frequency of the frequency converter is unreasonable.

The optimal reward for the frequency v1 (t) of the frequency converter is:

Here, h3 is a positive proportional constant, and v_j (t) is the total frequency adjustment value of the frequency converter. The term ϖ_j · [sign (H_i)/v_j (t)] indicates that, once pressure switching control of an air compressor is achieved, the revenue obtained is inversely proportional to the total frequency adjustment of the frequency converter and proportional to the importance index. Equation (8) shows that, provided the task is satisfied, a smaller frequency adjustment of the frequency converter brings greater control benefits.

Therefore, the total reward function is:

In a multi-agent collaborative task, all agents share a reward value and use the common reward value to drive a deep Q-learning algorithm, achieving a balance between the overall complete control effect and optimal frequency resource utilization and completing pressure intelligent switching control.
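The structure of the shared reward can be sketched from the terms described above. The exact equations are not reproduced here, so the forms below are an assumption built only from the stated ingredients: a fixed negative term h1, a pressure term proportional to ϖ · sign(H), and a frequency term proportional to ϖ · sign(H)/v. Summing over compressors with a single index and adding the two parts are also assumptions.

```python
from typing import Sequence


def sign(x: float) -> int:
    """Return 1, 0, or -1 according to the sign of x."""
    return (x > 0) - (x < 0)


def pressure_reward(h1: float, h2: float,
                    importance: Sequence[float], H: Sequence[float]) -> float:
    """Assumed form: h1 + h2 * sum_j(importance_j * sign(H_j)), with h1 < 0 < h2."""
    return h1 + h2 * sum(w * sign(h) for w, h in zip(importance, H))


def frequency_reward(h3: float, importance: Sequence[float],
                     H: Sequence[float], v: Sequence[float]) -> float:
    """Assumed form: h3 * sum_j(importance_j * sign(H_j) / v_j), with h3 > 0."""
    total = 0.0
    for w, h, vj in zip(importance, H, v):
        s = sign(h)
        if s != 0:
            if vj <= 0:
                raise ValueError("total frequency adjustment must be positive")
            total += w * s / vj
    return h3 * total


def total_reward(h1: float, h2: float, h3: float, importance: Sequence[float],
                 H: Sequence[float], v: Sequence[float]) -> float:
    """Shared reward: pressure-control part plus frequency-optimization part."""
    return pressure_reward(h1, h2, importance, H) + frequency_reward(h3, importance, H, v)


# Two compressors; only the first has achieved pressure control (illustrative numbers).
print(total_reward(-1.0, 1.0, 0.5, importance=[1.0, 2.0], H=[1.0, 0.0], v=[2.0, 4.0]))
```

With these illustrative numbers, the second compressor contributes nothing because its sign term is zero, which mirrors the statement that revenue is earned only after pressure control is achieved.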

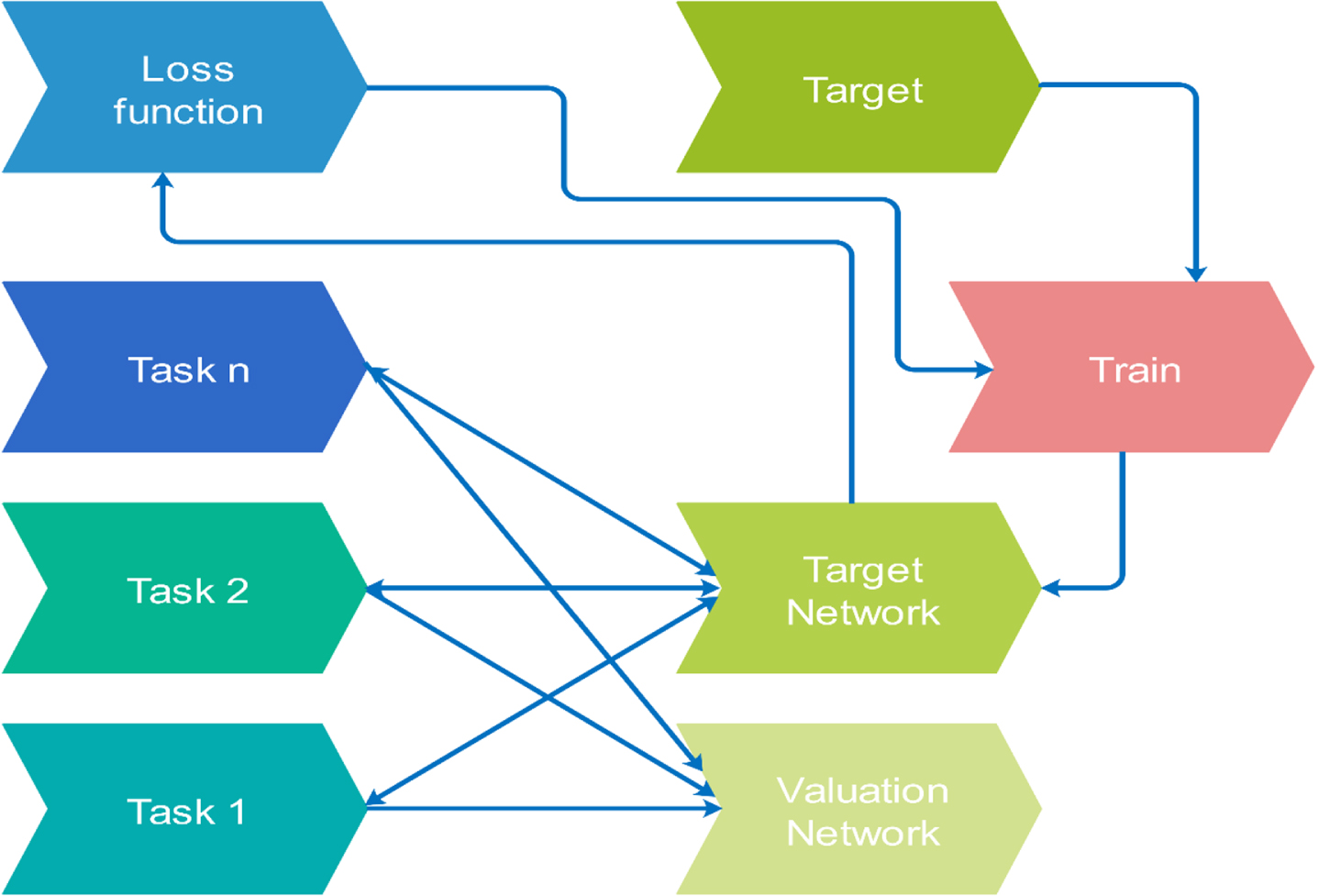

Based on the constructed model, the Q-learning approach is optimized using neural networks, giving deep Q-learning. The current state Ω of the air compressor agent and the behaviour C to be taken are input into the neural network controller, which outputs the state-behaviour value. The state-behaviour value function is expressed as S (R_t, c_t; γ), where γ denotes the parameters of the neural network controller. The application details of deep Q-learning are shown in Fig. 6.

Application details of deep Q-learning.

Because the problem involves many discrete action choices, this study adopts a value-function-based RL method, deep Q-learning. First, two neural networks with the same structure are established: the value function network (target network) and the network used to approximate the value function (estimation network). The deviation between the two networks is computed, and the current behaviour value function is updated through the temporal difference. The loss function for each iteration in deep Q-learning is therefore:

Where

The temporal difference update uses experience replay to improve the performance of the neural network controller. The air compressor agent gathers experience over many rounds and stores it in the experience pool E, which acts as a cache; samples for network training are then drawn at random from this pool.
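A minimal sketch of the experience pool and of a temporal difference loss computed with separate estimation and target networks is given below. It assumes the standard deep Q-learning target, reward plus the discounted maximum target-network value of the next state; the class and function names are hypothetical, and the two networks are represented by plain callables rather than real neural networks.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity experience pool; the oldest transitions are discarded first."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def push(self, state, action, reward, next_state):
        self.buffer.append((state, action, reward, next_state))

    def sample(self, batch_size, rng=random):
        """Draw batch_size transitions uniformly at random without replacement."""
        return rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def td_loss(batch, q_est, q_target, actions, discount):
    """Mean squared TD error; q_est and q_target map (state, action) to a value."""
    total = 0.0
    for state, action, reward, next_state in batch:
        target = reward + discount * max(q_target(next_state, a) for a in actions)
        total += (target - q_est(state, action)) ** 2
    return total / len(batch)


pool = ReplayBuffer(capacity=2)
for step in range(3):
    pool.push(state=step, action=0, reward=1.0, next_state=step + 1)

batch = pool.sample(2, rng=random.Random(0))
loss = td_loss(batch, q_est=lambda s, a: 0.0, q_target=lambda s, a: 0.0,
               actions=[0, 1], discount=0.9)
print(len(pool), loss)
```

Sampling at random from the pool, rather than training on transitions in the order they occurred, is what decorrelates consecutive training samples.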

Experience replay breaks the correlation between consecutive samples, which helps the neural network controller converge and stabilize. When rewards are fed back to the decision-making stage, the air compressor agent must decide whether to follow the path with the current best value or to explore for a better one. To this end, a deep Q-learning algorithm is used to switch the air compressor unit control actions. Deep Q-learning is a value-based RL algorithm: it records the expected reward of each behaviour at a given time step, which can be represented as a Q-table. The algorithm relies on explicit criteria for rewards and punishments, with desirable behaviours earning positive rewards and undesirable behaviours being penalized, and it makes its decisions on that basis. As shown in Table 1, taking the two control behaviours c1 and c2 as examples, the current and expected states of the outlet pressure of the air compressor header are Q1 (t) and Q2 (t), respectively. In state Q1 (t), c1 yields a higher reward value than c2, so c1 is chosen to reach state Q2 (t). The deep Q-learning algorithm applies this criterion to choose the behaviour at each time step and act on the environment. When the state reaches the desired state, the model is updated. This update procedure ensures that intelligent switching control of the air compressor group control pressure is achieved.

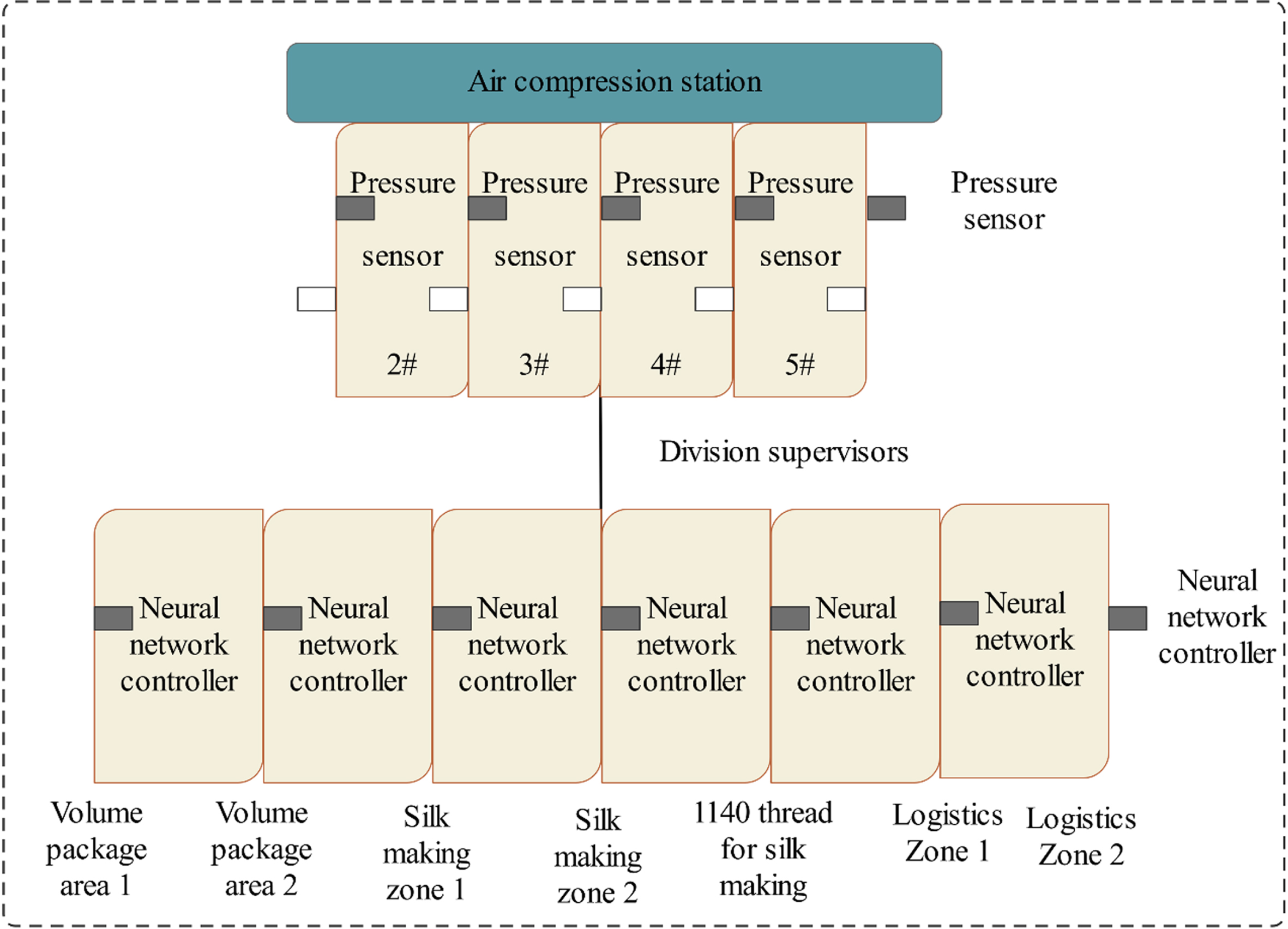

Example of Q-table
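The choice between c1 and c2 in state Q1 (t), and the trade-off between following the current best value and exploring, can be sketched with an ε-greedy rule over a Q-table. The ε-greedy rule is an assumption, since the text raises the exploration question without naming a rule, and the table values below are illustrative rather than taken from Table 1.

```python
import random


def select_action(q_table, state, epsilon, rng):
    """With probability epsilon explore at random; otherwise take the highest-valued action."""
    actions = list(q_table[state])
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: q_table[state][a])


# Illustrative Q-table: in state "Q1", behaviour c1 has the higher value.
q_table = {"Q1": {"c1": 1.0, "c2": 0.5}}

greedy_choice = select_action(q_table, "Q1", epsilon=0.0, rng=random.Random(0))
print(greedy_choice)
```

Setting `epsilon` to zero gives the purely greedy behaviour described in the example; a small positive value lets the agent occasionally try the other behaviour.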

The air compression station has five pieces of equipment, as shown in Fig. 5, with equipment codes 1 #, 2 #, 3 #, 4 #, and 5 #. The air compressors generate compressed air and supply it to the workshop, which includes seven areas: Wrapping Area 1, Wrapping Area 2, Silk Making Area 1, Silk Making Area 2, Silk Making Line 1140, Logistics Area 1, and Logistics Area 2. The control system of the air compression station has not been upgraded since it was put into operation in 2003; its group control mode is relatively simple and outdated, its monitoring points are inadequate, and it cannot effectively evaluate the operational effectiveness of the entire air compression system. It is therefore necessary to retrofit the air compressor system in the power workshop with intelligent control and energy-saving optimization to tap the energy-saving potential of the air compressor unit.

In the method in this paper, a gas production pressure setpoint is given for the air compression station together with upper and lower limits, so that the gas production pressure is confined to a small fluctuation range. This avoids the energy waste caused by large fluctuations in gas production pressure, and it improves gas production quality and yields a stable output of compressed air. The project targets in the experiment are a pressure fluctuation range of no more than 0.015 MPa in the terminal pipe network and a power consumption of no more than 1.07 kW·h per 10000 cigarettes.

After the method in this paper is applied, the gas production task is allocated to each air compressor according to the terminal gas consumption, air pressure, and other requirements, combined with the gas production performance of each air compressor. In particular, once the frequency converter is linked to the air compressor system, its output power rises and falls with system demand. On the one hand, this avoids overloading and prolonged operation of the equipment, protecting its mechanical performance and extending its service life. On the other hand, it avoids large fluctuations in gas consumption and pressure, which would otherwise cause frequent loading and unloading of the air compressors and reduce gas production efficiency. Figure 7 shows the details of the experimental environment.

Experimental environment details.

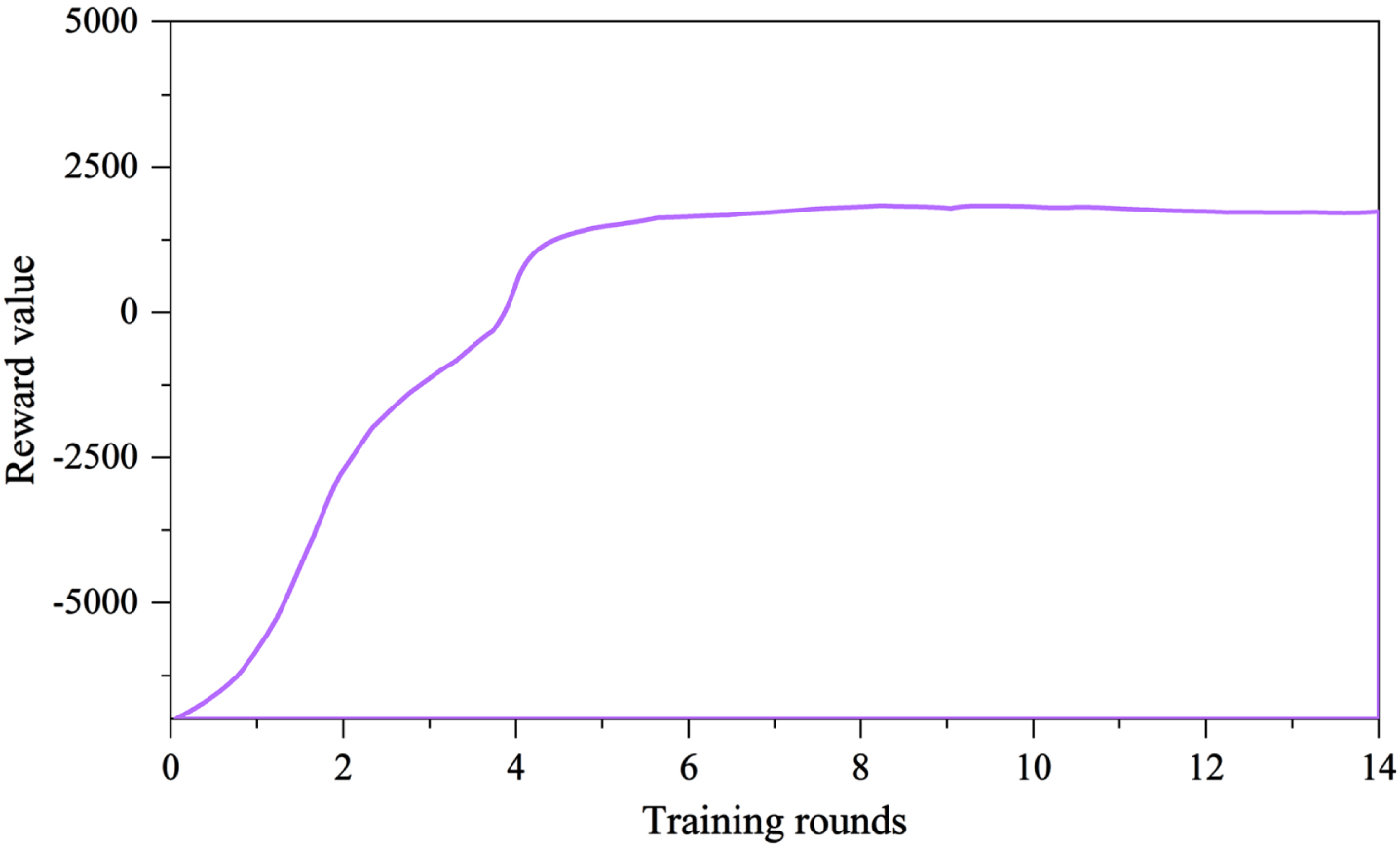

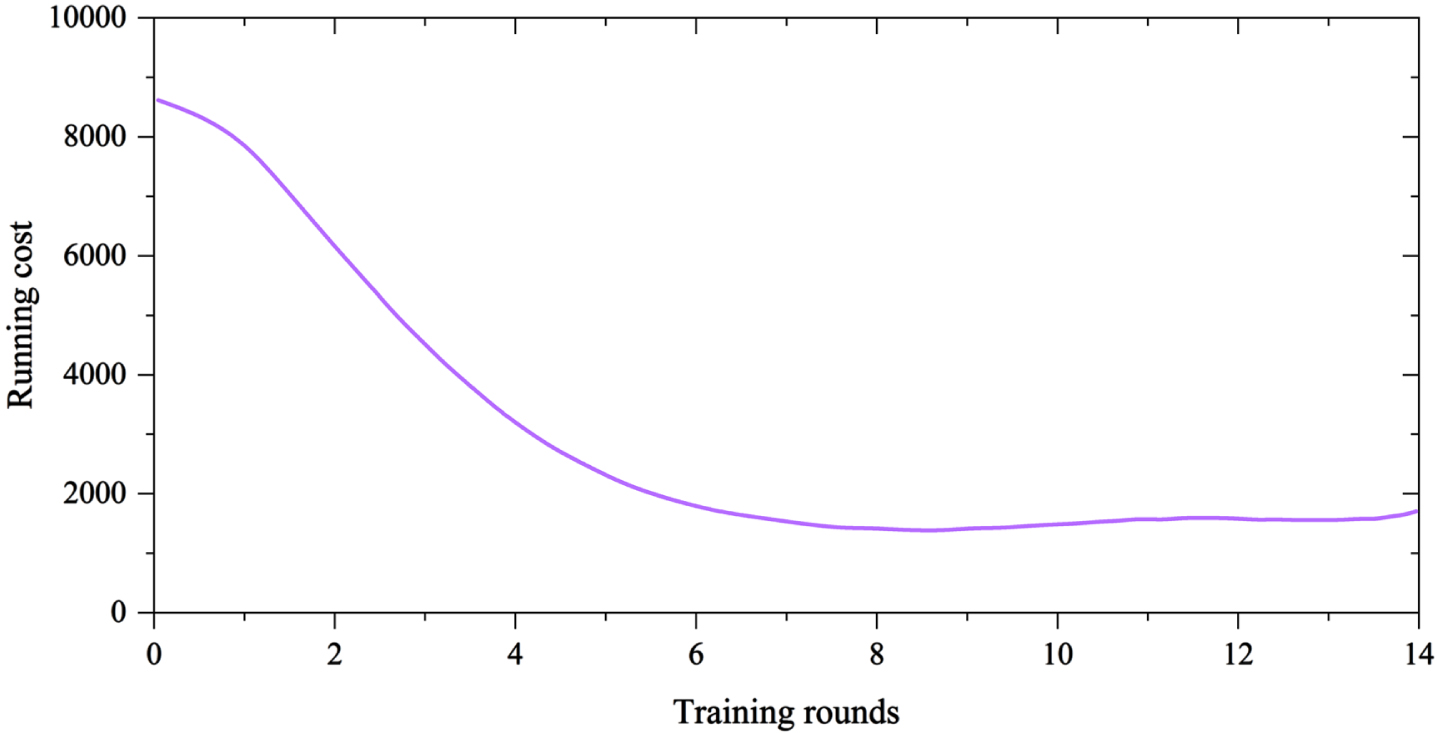

Before the method in this paper is used, the neural network controller must first be trained as follows: the training samples are input into the neural network controller in turn, and the average of the total rewards of all regional air compressor agents over the scheduling cycle is calculated. The resulting reward curve is shown in Fig. 8. At the beginning of training, the global reward obtained by the air compressor agents oscillates because the agents are still exploring actions. As training progresses, the global reward converges and the performance of the neural network controller steadily improves. Figure 9 shows how the operating cost of the air compressors changes during training; the cost also converges as training proceeds.

Total reward changes of the air compressor agent.

Changes in operating costs of air compressors.

After the neural network controller is trained, the energy efficiency priorities of the five air compressors are used to configure the cluster combination. The priority order is: 1 # variable frequency air compressor = 4 # variable frequency air compressor = 2 # variable frequency air compressor > 3 # power frequency air compressor > 5 # air storage tank air compressor.

This paper uses a dynamic planning and scheduling method to switch and control the operation of the air compressors. Using virtual flow and rated air volume, it identifies the operating status of each air compressor under different conditions and provides an optimal intelligent start-stop strategy that minimizes the energy consumption of the air compression system. The specific strategy is as follows. When the group control pressure stays below 0.61 MPa for 10 seconds, a start command is issued to the 2 # air compressor; if the 2 # unit is already running, the start command goes to the highest-priority air compressor among those not yet started. When the pressure stays below 0.61 MPa for more than 80 seconds, a second air compressor that is not running is given a start command; after more than 120 seconds, a third unit is started; and after more than 150 seconds, a fourth unit is started. When the group control pressure exceeds 0.675 MPa, one power frequency air compressor is unloaded, and the working load of the frequency converter is automatically increased to compensate for the lost gas production. The compensation interval is the minimum time required between two compensations. When the pressure drops to 0.614 MPa, the unloaded air compressor executes the loading command; if the pressure does not fall to 0.614 MPa and remains within the normal fluctuation range, the air compressor executes the shutdown command 3 minutes after unloading began.
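The staged start rule and the unload threshold can be summarized in a short sketch. The thresholds and delays are those stated in the strategy; the function names, the treatment of the exact boundary instants, and the tie-breaking order among the equal-priority units 1 #, 4 #, and 2 # are assumptions made for illustration.

```python
from typing import Iterable, Optional, Sequence

HIGH_PRESSURE_MPA = 0.675            # above this, one power frequency unit is unloaded
START_DELAYS_S = (10, 80, 120, 150)  # time below 0.61 MPa before each successive start


def units_commanded_to_start(low_pressure_seconds: float) -> int:
    """Number of start commands issued once pressure has been low for the given time."""
    return sum(1 for delay in START_DELAYS_S if low_pressure_seconds >= delay)


def next_to_start(running: Iterable[str], start_order: Sequence[str]) -> Optional[str]:
    """First unit in start_order that is not yet running, or None if all are running."""
    running_set = set(running)
    for unit in start_order:
        if unit not in running_set:
            return unit
    return None


def should_unload(pressure_mpa: float) -> bool:
    """True when the group control pressure exceeds the unload threshold."""
    return pressure_mpa > HIGH_PRESSURE_MPA


# 2 # is tried first per the strategy; the order of the remaining units is assumed.
START_ORDER = ["2#", "1#", "4#", "3#", "5#"]
print(units_commanded_to_start(100), next_to_start({"2#"}, START_ORDER), should_unload(0.68))
```

Keeping the delays in a single tuple makes it easy to see that each additional unit is started only after the low-pressure condition has persisted longer.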

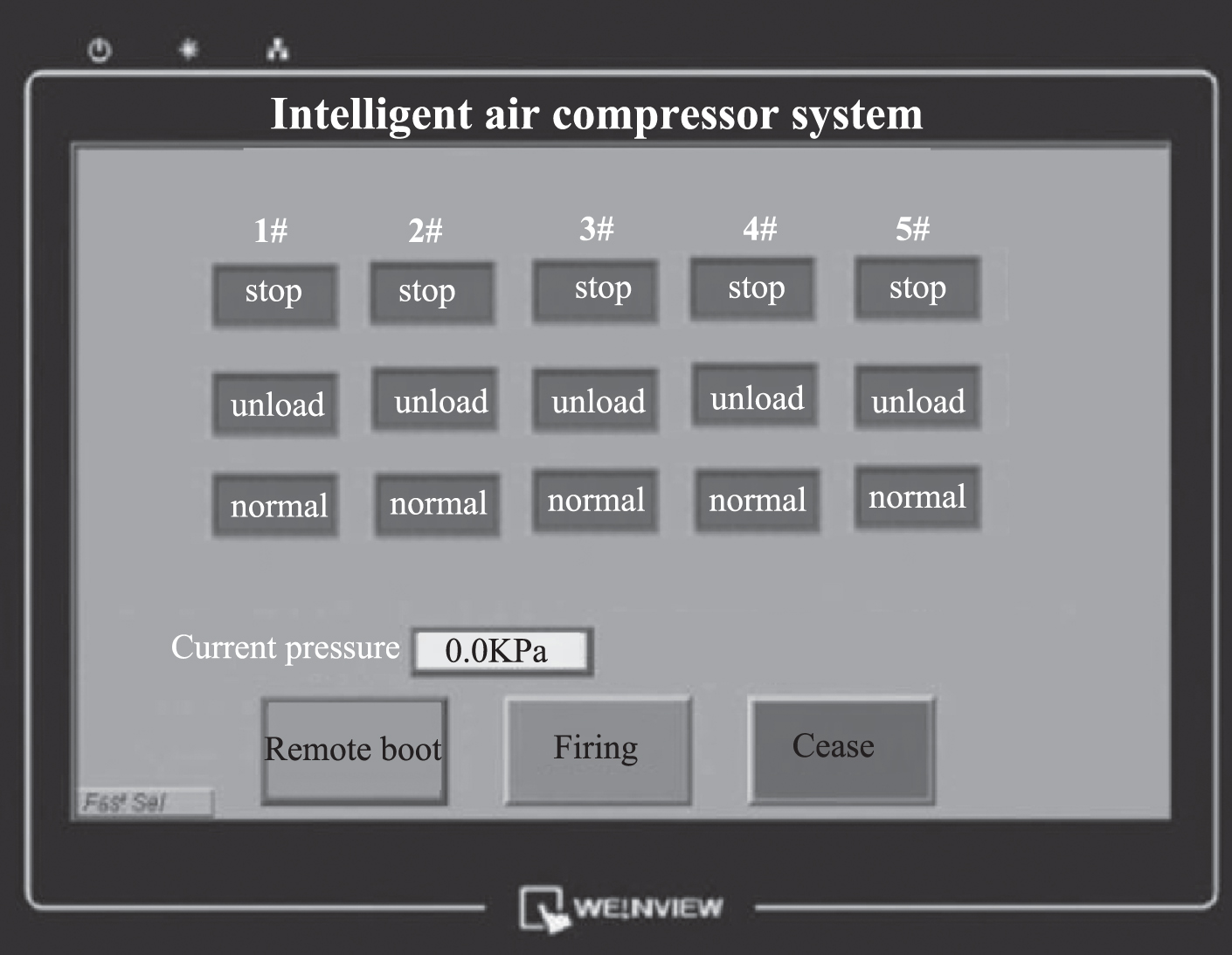

Under the above control, Fig. 10 shows the workshop’s air compressor monitoring interface for the production process.

Air compressor monitoring interface for the workshop production process.

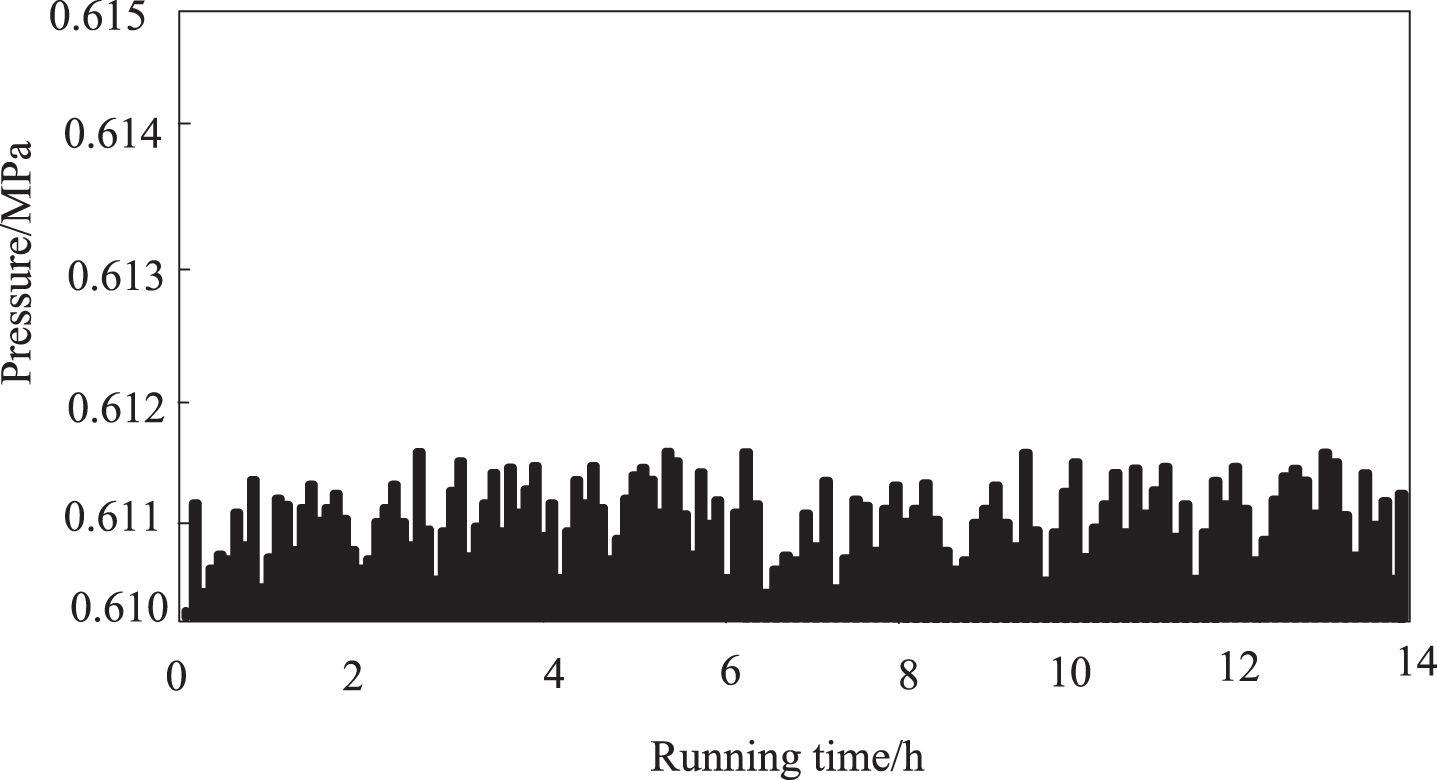

As shown in Fig. 10, the method in this paper can be used in group control production tasks of the air compressor. It can intelligently switch and control the outlet pressure of the header in combination with current operating conditions. After using the method in this paper, the pressure change at the outlet of the main pipe of the air compressor is shown in Fig. 11.

Pressure change at the outlet of the air compressor header.

As can be seen from Fig. 11, the pressure fluctuation value of the air compressor is stable between 0.610 and 0.612 MPa, with a fluctuation amplitude of 0.015 MPa. The gas supply pressure thus fluctuates within a very narrow range, indicating that the method in this paper improves the stability of the compressed air supply and indirectly helps ensure product yield.

Under the same working conditions in the gas workshop, the changes in electrical energy consumption in the workshop before and after using the method in this paper are analyzed. The analysis results are shown in Table 2.

Changes in electric energy consumption in the workshop before and after the use of this method

Electrical energy consumption in each period is significantly reduced, reflecting the energy-saving effect of the method in this paper on the pressure stabilization problem and demonstrating its advantages.

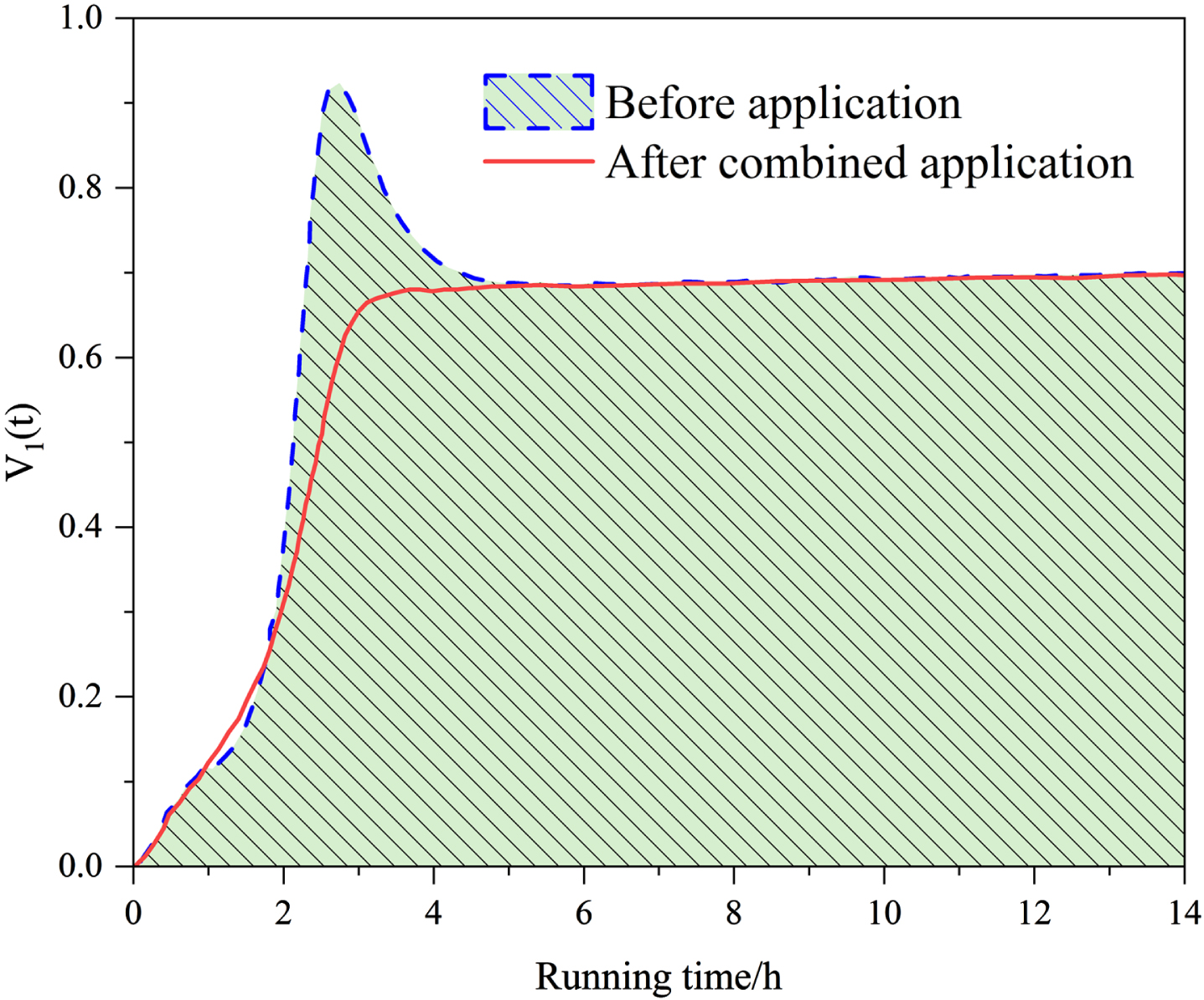

The unit step response of the neural network controller was tested before and after it was combined with multi-agent RL in the method of this paper; the results are shown in Fig. 12.

Unit step response changes of neural network controllers.

Fig. 12 shows that the method in this paper, which combines multi-agent RL with a neural network controller, reduces overshoot while keeping the response time essentially unchanged, and significantly shortens the adjustment time, giving a better control effect.
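The two quantities compared here, overshoot and adjustment time (often called settling time), can be computed from a sampled step response. The following is a minimal sketch under stated assumptions: the function names, the 2% settling band, and the sample data are hypothetical and are not taken from the paper.

```python
def overshoot_percent(response, setpoint=1.0):
    """Peak overshoot as a percentage of the setpoint (0 if none)."""
    peak = max(response)
    return max(0.0, (peak - setpoint) / setpoint * 100.0)


def settling_time(times, response, setpoint=1.0, band=0.02):
    """First time after which the response stays inside the band.

    Assumption: a +/-2% band around the setpoint. Returns None if the
    response never settles within the sampled window.
    """
    tolerance = band * abs(setpoint)
    settled_at = None
    for t, y in zip(times, response):
        if abs(y - setpoint) <= tolerance:
            if settled_at is None:
                settled_at = t   # entered the band
        else:
            settled_at = None    # left the band again, reset
    return settled_at


# Hypothetical sampled unit step response, for illustration only.
times = [0, 1, 2, 3, 4, 5]
response = [0.0, 0.8, 1.2, 1.05, 1.01, 1.0]
overshoot = overshoot_percent(response)
settle = settling_time(times, response)
```

A controller with a better step response in the sense of Fig. 12 would yield a smaller `overshoot` and a smaller `settle` value on the same time grid.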

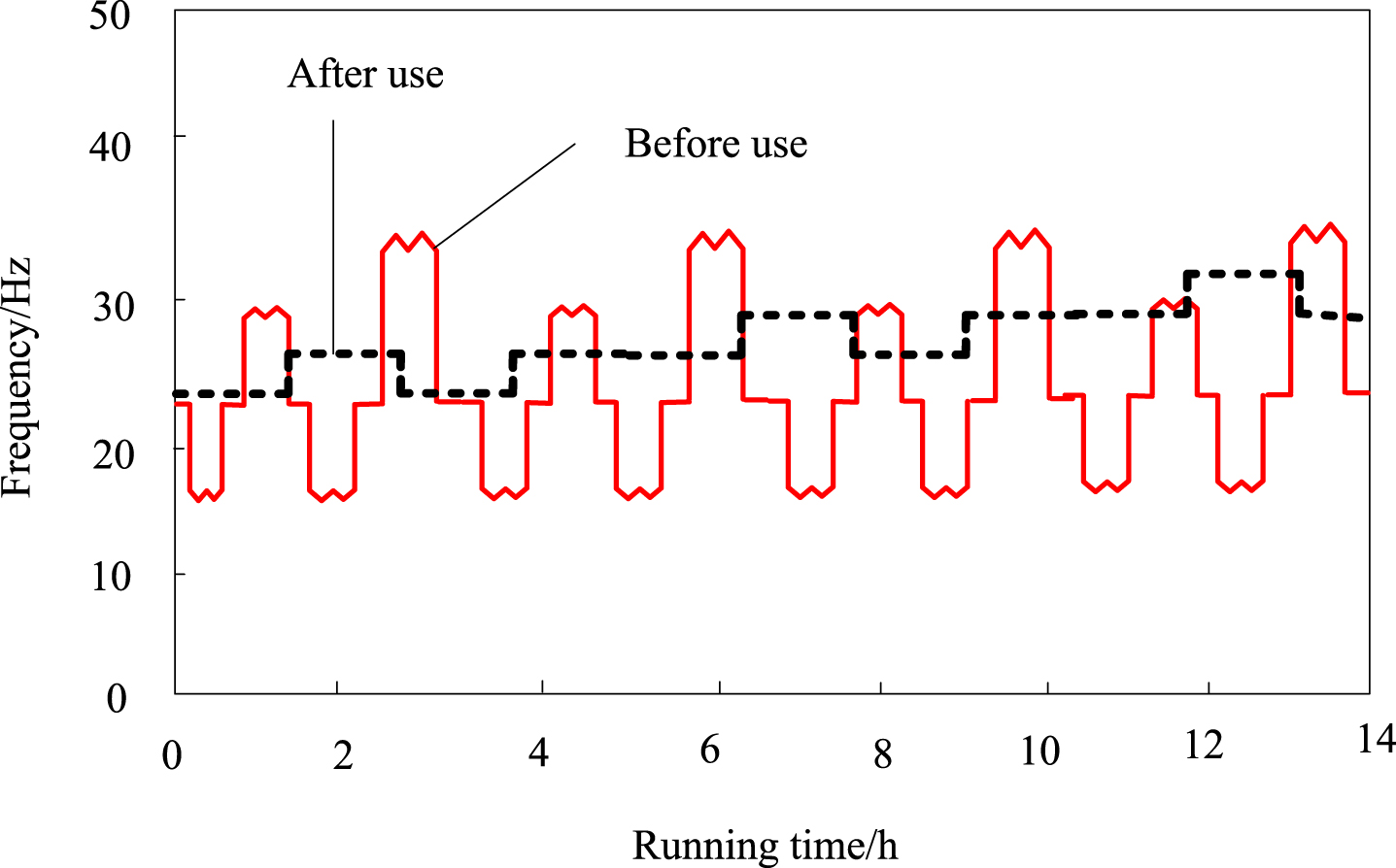

Before and after using the method in this paper, the working frequency of the variable frequency air compressor is shown in Fig. 13.

Working frequency of the variable frequency air compressor before and after using this method.

Fig. 13 shows that after the method is applied, the output frequency of the frequency converter becomes markedly smoother, without sudden large changes, which significantly reduces the difficulty of variable frequency control.

According to the historical data of this workshop, from November 2020 to October 2021 the cumulative power consumption of the air compression system was 5922685.98 kW·h, with a production of 47258.19 million cigarettes, giving a power consumption of approximately 1.252 kW·h per 10000 cigarettes. Details are shown in Table 3.

Details of accumulated power consumption and output of workshop air compression system

The method in this paper was officially put into use on November 1, 2021. The project team selected the power consumption and output of the air compressor group from November 2021 to August 2022 for comparison. The results are shown in Table 4.

Air compressor unit power consumption and production from November 2021 to August 2022

Since the method in this paper was put into use in November 2021, the maximum compressed air power consumption per 10000 cigarettes has been about 1.069 kW·h, in July 2022, and the minimum about 1.036 kW·h, in January 2022. From November 2021 to August 2022, the power consumption of the air compressor unit was 4347149 kW·h, with a total output of 41093.85 million cigarettes. The power consumption per 10000 cigarettes is therefore approximately 1.058 kW·h, which meets the expected target of 1.07 kW·h per 10000 cigarettes.

After implementing the method in this paper, the power consumption of compressed air per 10000 cigarettes decreased from about 1.252 kW·h to about 1.058 kW·h, a reduction of about 15.50%.
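The arithmetic behind these figures can be reproduced with a short calculation. This is an illustrative sketch with hypothetical function names. It uses the totals and rounded values quoted in the text; values recomputed from the unrounded totals may differ slightly from the rounded figures.

```python
def kwh_per_10k(energy_kwh, output_million):
    """Power consumption per 10000 cigarettes.

    output_million is the production in millions of cigarettes,
    so it is converted to units of 10000 cigarettes first.
    """
    units_of_10k = output_million * 1_000_000 / 10_000
    return energy_kwh / units_of_10k


def percent_reduction(before, after):
    """Relative decrease from before to after, in percent."""
    return (before - after) / before * 100.0


# Totals quoted in the text for the two periods.
before = kwh_per_10k(5922685.98, 47258.19)   # Nov 2020 to Oct 2021
after = kwh_per_10k(4347149, 41093.85)       # Nov 2021 to Aug 2022

# Reduction based on the rounded per-10000 values quoted in the text.
quoted_reduction = percent_reduction(1.252, 1.058)
```

Here `before` and `after` come out close to the quoted 1.252 and 1.058 kW·h per 10000 cigarettes, and `quoted_reduction` rounds to the 15.50% stated above.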

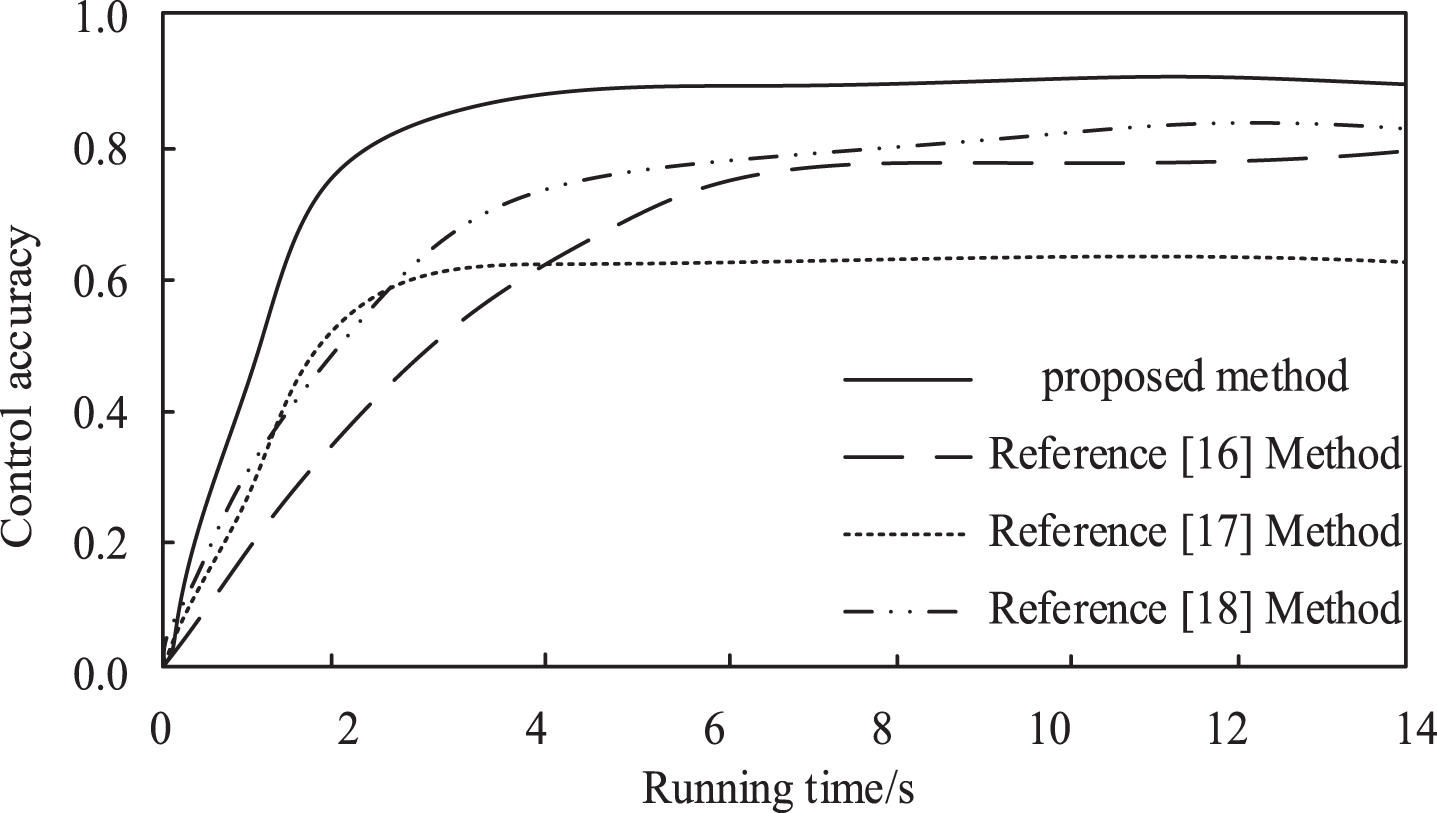

In order to further validate the effectiveness of the method proposed in this paper, the methods of reference [16], reference [17], and reference [18] were used as comparison methods for control performance. The comparison of control performance results is shown in Fig. 14.

Comparison of control performance results.

Fig. 14 shows that the method proposed in this paper reaches a relatively stable control accuracy within a running time of about 2 seconds, and the accuracy remains above 0.8. Compared with the methods in references [16–18], the proposed method achieves higher control accuracy and reaches stable control in a shorter running time. Therefore, the method proposed in this paper has high practical value.

Compressed air is one of the main power sources in cigarette production, but it is also among the most energy-intensive energy carriers in production enterprises. Compressed air supplies all pneumatic components in the factory, including various pneumatic valves, and also provides power for equipment purging in various industries. Common problems in current air compression systems include unstable air pressure, large power loss, uneven operating conditions with high maintenance costs, and potential safety hazards in manual attendance.

Therefore, the problem of air compressor group control plays an important role in ensuring the stable and economic operation of the air compressor system. This paper analyzes and studies an intelligent pressure switching control method for air compressor group control based on multi-agent RL in response to this problem. This method can convert the group control problem of the air compressor into a multi-agent coordinated control problem, reducing the operating energy consumption of the air compressor group as much as possible. In experiments, the method presented in this paper has been proven to be applicable to the intelligent pressure switching control problem of air compressor group control, with relatively good control effects. However, this research only focuses on the analysis and study of air compressor group control problems, which may not be applicable to other types of industrial systems. Further research can explore the application of this method in other industries and different types of energy carriers. Additionally, the use of multi-agent reinforcement learning-based control methods in large-scale systems may face challenges in terms of computational complexity and implementation difficulty. Future research can investigate more efficient and scalable control methods to adapt to a wider range of application scenarios.