Abstract

The FAIR Data Principles propose that all scholarly output should be Findable, Accessible, Interoperable, and Reusable. As a set of guiding principles, expressing only the kinds of behaviours that researchers should expect from contemporary data resources, how the FAIR principles should manifest in reality was largely open to interpretation. As support for the Principles has spread, so has the breadth of these interpretations. In observing this creeping spread of interpretation, several of the original authors felt it was now appropriate to revisit the Principles, to clarify both what FAIRness is, and is not.

Growing awareness of FAIRness

Open Science is a growing movement. The European Council adopted Open Science and the reusability of research data as a priority, as did the G7 at their summit in Japan [9]. This provided fertile ground for the rapid uptake of the FAIR Data Principles [25] since their recent publication [3]. The DG RTD (the Directorate General for Research and Innovation) of the European Commission took the lead [6], but in close collaboration with other directorates and the USA-based Big Data to Knowledge (BD2K) of the NIH (National Institutes of Health) [15]. Science Europe has adopted FAIR principles as the basis for sharing administrative data on funding [7]. The G20 went further in the 2016 Hangzhou summit by endorsing the FAIR Principles by name [8]. The Principles have also resonated in many discussions beyond their original scope of research data sharing, in domains as diverse as archaeology [22] and environmental monitors for “smart cities” [12]. This wide embrace of the FAIR Principles by governments, governing bodies, and funding bodies has led to a growing number of data resources attempting to demonstrate their FAIRness; for an example, see ‘Being FAIR at UniProt’ [10]. The UniProt example is spot-on, but there are also emerging indications that the original meanings of findable, accessible, interoperable, and reusable may sometimes be stretched, in some cases even in order to avoid change or improvement. In other cases, the proposed implementation of these principles, with the goal of an Internet of FAIR Data and Services, is beginning to raise concern and confusion. Therefore, with the broader community now forming independent, thoughtful opinions about the meaning and consequences of the FAIR Principles, it seems worthwhile to clarify their original intent and interpretation.

Becoming cloudy

Achieving the transition from the current closed and silo-based approaches to research towards more open and networked scholarship requires important changes in the reward system of science and in methodological practice. It also requires an expanded support infrastructure for FAIR data publishing, analytics, computational capacity, virtual machines and workflow systems.

These infrastructure needs have been – and are being – addressed intensively at the European Commission level, especially in the context of the 2016 Dutch EC Presidency [16] and the European Open Science Cloud (EOSC) [5], the e-IRG roadmap [16] and in the US through the NIH Data Commons projects. In Australia, ANDS [2] and AARnet [1] follow a very similar approach and recently, the East African Community has adopted the Dakar declaration on Open Science in Africa [23]. In South Africa, the African Data Intensive Research Cloud [21] is part of the roadmap for research infrastructures as well. Common to all these is the idea of building infrastructure based on rich metadata for the resources in the research environment, that support their optimal re-use. Provision of all such resources and services will necessarily involve a mix of players, including commercial and public ones. A group of early-adopter EU member states is preparing the GO FAIR initiative [13], which is a proposal for the fast-track implementation of the EOSC.

Ensuring that all resources provided in such globally dispersed infrastructures are findable, accessible, interoperable and reusable, and that both the qualities of a service (i.e. what it does, and how) and the quality of a service (i.e. the degree of excellence) are appropriate for researchers’ needs, requires widely shared and adopted standards and principles. In addition, there is a need for a set of community-acceptable ‘rules of engagement’ that define how the resources within that community will/should function and promulgate themselves. These rules of engagement may vary depending on the needs or constraints within any given community, but in each case, the FAIR guidelines assist the interaction between those who want to use community resources and those who provide them. The FAIR guiding principles provide a scaffold for building such rules of engagement within each community.

What FAIR is…

FAIR refers to a set of principles, focused on ensuring that research objects are reusable, and actually will be reused, and so become as valuable as is possible. They deliberately do not specify technical requirements, but are a set of guiding principles that provide for a continuum of increasing reusability, via many different implementations. They describe characteristics and aspirations for systems and services to support the creation of valuable research outputs that could then be rigorously evaluated and extensively reused, with appropriate credit, to the benefit of both creator and user.

…and what FAIR is not

Is FAIR fair?

The actual meaning of the term ‘fair’ in everyday life is in some ways also confusing. People have different perceptions and connotations associated with it. One major criticism (relating to the machine-actionability aspect of the principles) is the perception that non-machine-readable data would be considered in some way ‘unfair’. We must point out, again, that we explicitly describe FAIR as a spectrum, and a continuum; that there is no such thing as ‘unfair’ being associated with the FAIR principles, except maybe the specific case of data that are not even findable. As we noted above, not all data can, or should, be machine-actionable. There are numerous circumstances where making data machine-actionable would reduce its utility (e.g. due to the lack of tools capable of efficiently processing the machine-actionable format). We emphasise that as long as such data are clearly associated with FAIR metadata, we would consider them fully participating in the FAIR ecosystem.

A very positive connotation of FAIR is that the acronym carries the ring of general ‘fairness’. On the one hand the ‘A’ allows fair shielding or protection of data that cannot be open for good reasons of various kinds, so that citizens and medical researchers, but also for instance industry, are assured of proper data protection. On the other hand, from the basic principle that FAIRness is maximised when data are open, maximising ‘A’ implies maximising openness. This includes addressing, to the greatest extent reasonable, the machine-actionability aspect of FAIR. The ‘fair’ connotation should therefore not be underestimated either. Data that are not open will simply participate less in the Open-Science-driven Social Machines that will dominate science in the near future.

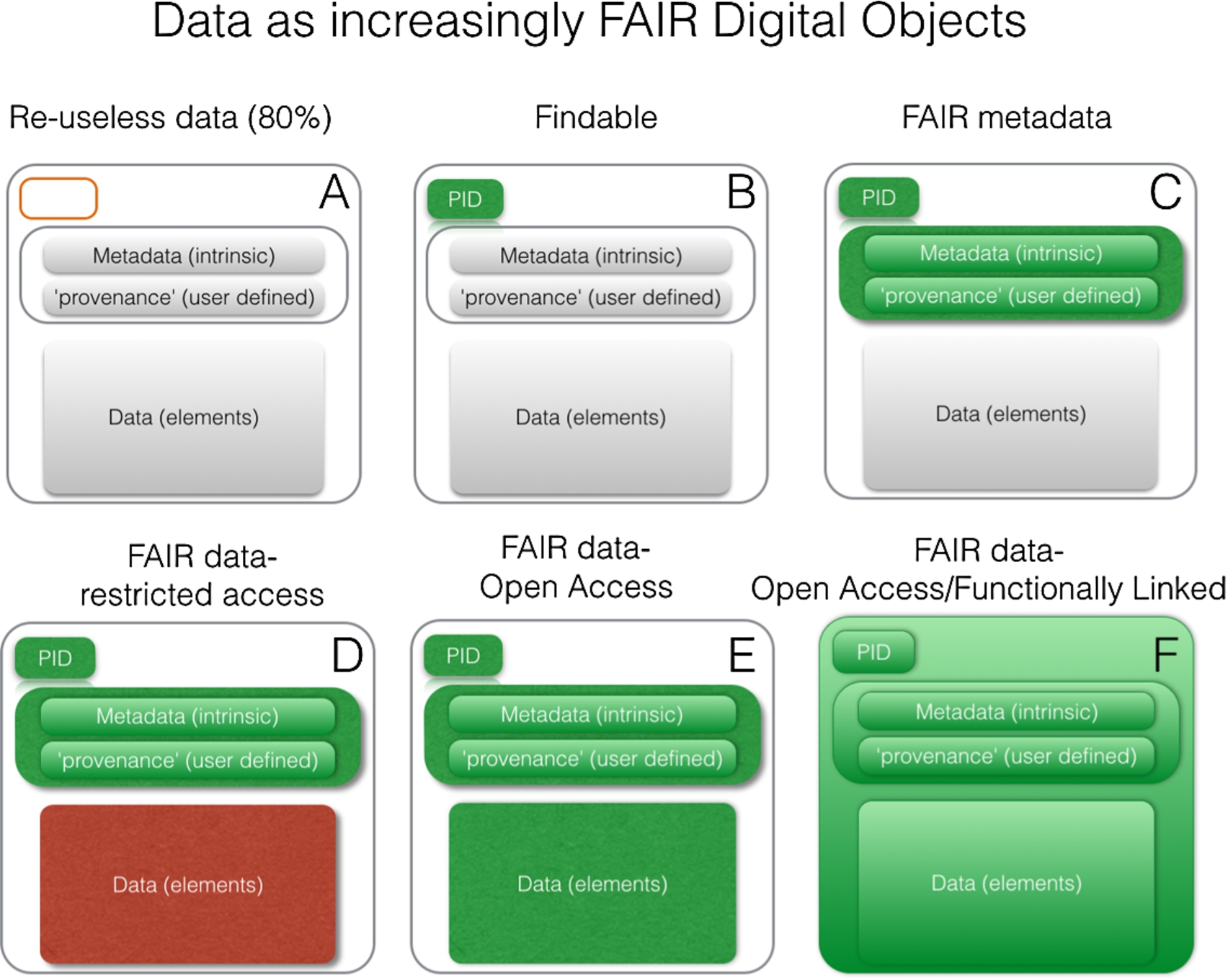

Varying degrees of FAIRness. As elements become coloured, they have become FAIR. For example, adding a persistent identifier (PID) increases the FAIRness of that component. Elements coloured green are FAIR and open; elements coloured red are FAIR and closed. In the final panel, the mechanism for expressing the relationship between the ID, the metadata, and the data is also FAIR (i.e. follows a widely accepted and machine-readable standard, such as DCAT or NanoPublications) and interlinked with other related FAIR data or analytical tools on the Internet of FAIR Data and Services.

Figure 1 shows how data can become increasingly FAIR digital objects: Panel A represents the (unfortunate) situation of more than 80% of the datasets in current practice effectively being unavailable for reuse. Almost as many are simply unusable [20], which is why we coin the term ‘reuseless’ for those data and services, rather than the term ‘unfair’. Reuseless data are, for instance, those published as obscure and unstable links to supplemental data in narrative articles, not even (as a set) having a proper, machine-resolvable, Persistent, Unique Identifier (PID) which renders both the data elements themselves, as well as their metadata, non-machine-readable.

A minimal step towards FAIRness is to provide the data set, as an entity in its own right, with a PID that is not only intrinsically persistent, but also persistently linked to the data set (research object) it identifies (panel B). However, without machine-readable metadata it will still be difficult to find the data, unless one knows the PID. So a PID is necessary, but not sufficient.

We distinguish ‘intrinsic’ metadata and ‘user-defined’ metadata. The former category (albeit with the boundaries sometimes blurred) comprises the metadata that should be constructed at data capture. In other words, these are the metadata that are often automatically added to the data by the machine or workflow that generated the data (e.g. DICOM data for biomedical images, file format, time and date stamps, and other features that are intrinsic to the data). Such metadata can be anticipated by the creator and added in order to be useful to Find, Access, Interoperate, and thus reuse, the research object.

As it is very burdensome to peer review the quality of data at the time they are first published, the ongoing and extended review and annotation of data sources during the period of their existence and reuse is a crucial process in Open Science, an approach addressed for instance in the CEDAR project [14]. We argue that both intrinsic and user-defined provenance (e.g. contextual) metadata should be added, and made FAIR whenever possible (panel C).
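The distinction between intrinsic and user-defined metadata can be made concrete with a small data structure. The following is a minimal sketch in Python, not a prescribed implementation: the class name `MetadataRecord`, its fields, the `annotate` method and the example identifier are all hypothetical and chosen only for illustration. It also assumes that user-defined metadata take the form of contextual annotations added during later review and reuse.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class MetadataRecord:
    """Hypothetical metadata record attached to one research object."""

    # Persistent identifier of the data set (the value used below is made up).
    pid: str
    # Intrinsic metadata: added by the machine or workflow at data capture.
    intrinsic: Dict[str, str] = field(default_factory=dict)
    # User-defined metadata: assumed here to be contextual annotations
    # that accumulate during review and reuse of the data.
    user_defined: List[Dict[str, str]] = field(default_factory=list)

    def annotate(self, author: str, note: str) -> None:
        """Append a contextual annotation; intrinsic metadata stay untouched."""
        self.user_defined.append({"author": author, "note": note})


record = MetadataRecord(
    pid="https://example.org/pid/dataset-001",  # illustrative identifier only
    intrinsic={"file_format": "DICOM", "captured": "2016-05-01T10:00:00Z"},
)
record.annotate("reviewer-1", "Scanner was recalibrated before this series.")
```

Keeping the two kinds of metadata in separate fields mirrors the idea that intrinsic metadata are fixed at capture, whereas user-defined metadata keep growing for as long as the data are reviewed and reused.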

Not all data lend themselves to be machine-actionable without human intervention (some raw data, but also images for example). However, many data that have a relational or an assertional character can be captured perfectly correctly in a machine-processable semantic syntax. Nevertheless, even if data are technically FAIR, it may be necessary to restrict access to them for reasons discussed above (panel D). That said, the default for maximal FAIRness should be that the data themselves are made available under well-defined conditions for reuse by others (panel E).

We argue here that even the step from A to B would already have a profound effect on the actual reuse of research objects, because at least they can be consistently located by those who know the identifier, and thus can be shared via that identifier. However, thereafter, the addition of rich, FAIR metadata is the first major step towards becoming maximally FAIR. When the data elements themselves can also be made FAIR and made open for reuse by anyone, we have reached the highest degree of FAIRness. When all of these are linked with other FAIR data, we will have achieved the Internet of (FAIR) Data. Once an increasing number of applications and services can link and process FAIR data we will finally achieve the Internet of FAIR Data and Services (panel F). However, when data are not FAIR (at least at level C) they simply cannot truly participate in this future scenario.
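The progression through panels A to F can be sketched as a simple classification. This is a deliberately simplified illustration in Python: the text describes FAIRness as a continuum, so treating the panels as a strict ladder is an assumption made here for clarity, and the names `ResearchObject` and `fairness_panel`, as well as the boolean fields, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ResearchObject:
    """Hypothetical description of one data set and its FAIR-related features."""

    has_pid: bool = False                   # persistent, persistently linked identifier
    has_fair_metadata: bool = False         # rich, machine-readable metadata
    data_is_fair: bool = False              # data elements themselves are FAIR
    data_is_open: bool = False              # available under well-defined reuse conditions
    linked_to_fair_resources: bool = False  # interlinked with other FAIR data and services


def fairness_panel(obj: ResearchObject) -> str:
    """Return the panel (A to F) that best matches the object.

    Assumption: each panel requires everything the previous one required.
    """
    if not obj.has_pid:
        return "A"  # 'reuseless': not even a proper PID
    if not obj.has_fair_metadata:
        return "B"  # PID only: necessary, but not sufficient
    if not obj.data_is_fair:
        return "C"  # PID plus FAIR metadata
    if not obj.data_is_open:
        return "D"  # FAIR data, access restricted
    if not obj.linked_to_fair_resources:
        return "E"  # FAIR and open data
    return "F"      # part of the Internet of FAIR Data and Services


supplement_only = ResearchObject()
described_dataset = ResearchObject(has_pid=True, has_fair_metadata=True)
print(fairness_panel(supplement_only), fairness_panel(described_dataset))
```

Under these assumptions, a data set with only a PID and FAIR metadata lands at panel C, which is the minimum level the text names for participating in the Internet of FAIR Data and Services.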

FAIR and closed could support FAIR and open

In a recent press interview article, Barend Mons proposed a new business model to allow ‘closed’ to pay for ‘open’ [24]. The basis of this proposed business model is that cloud services be free at the point-of-use in the situation when, and if, the user (not just the originator or creator of the data) contributes fully to Open Science. In other words, all user queries, annotations, analytical results and subsequent publications would be fully Open Access and therefore contribute to the public good of open data and services. Those users, however, who wish to keep any of these actions and results private or secret would need to pay. This is perceived as just (fair) by both academics and colleagues in the private sector. Researchers in hospitals, companies, national security agencies and other secrecy-prone players use Open Public Good data as much as all others, so it seems only fair that they contribute to the sustainability of the open data when they use these services for their private or proprietary goals. The fair use of FAIR data is a critical asset in the toolbox of further (and hopefully realised) sustainable development goals.

Early adoption

A Skunkworks-like group of coders spontaneously formed at the original Lorentz workshop and, after attracting additional experts from various fields, this group has recently published an exemplar implementation for Web-based discovery and interoperability that is fully compliant with the FAIR principles [26]. This exemplar is not intended to be prescriptive, or even a recommendation; its sole purpose is to describe a novel interoperability infrastructure that naturally leads to adherence to every aspect of FAIR – thus answering the question “what does FAIR look like?”

Many other implementations will no doubt be necessary, and will be welcomed, to solve a broader range of problems currently precluding effective sharing and reuse of data and services. Obviously, the FAIR Principles are not magic, nor are they a panacea, but they guide the development of infrastructure and tooling to make all research objects optimally reusable for machines and people alike, which is a crucial step forward. It is very important that the community continues to discuss, challenge and refine its own implementation choices, within the ‘behavioural’ guidelines established by the principles.

In conclusion

The FAIR Principles have further propelled the global debate about better data stewardship in data-driven and open science, and they have triggered funding bodies to discuss their requirements for implementation of the FAIR principles. Some of these requirements are very embryonic, while others have matured to actual guidelines [7], and there are already attempts to implement supporting prototypes [18]. We strongly believe that FAIR data and services are a key substrate for evidence-based decisions, that they allow the exposure of research and intellectual property malpractices of multiple kinds, and that they enable the full participation of citizens and citizen-scientists (i.e. not only professional scientists) from developed and developing countries alike. While intentionally demanding certain qualities and properties from data resources, the FAIR principles nevertheless allow a great deal of freedom with respect to implementation. We hope that this revisiting of the FAIR principles will serve to remove some misperceptions. Nevertheless, we welcome feedback about any concerns that may emerge from the community in the future.