Abstract

Available corpora for Argument Mining differ along several axes, and one of the key differences is the presence (or absence) of discourse markers to signal argumentative content. Exploring effective ways to use discourse markers has received wide attention in various discourse parsing tasks, from which it is well-known that discourse markers are strong indicators of discourse relations. To improve the robustness of Argument Mining systems across different genres, we propose to automatically augment a given text with discourse markers such that all relations are explicitly signaled. Our analysis unveils that popular language models taken out-of-the-box fail on this task; however, when fine-tuned on a new heterogeneous dataset that we construct (including synthetic and real examples), they perform considerably better. We demonstrate the impact of our approach on an Argument Mining downstream task, evaluated on different corpora, showing that language models can be trained to automatically fill in discourse markers across different corpora, improving the performance of a downstream model in some, but not all, cases. Our proposed approach can further be employed as an assistant tool for better discourse understanding.

Introduction

Argument Mining (ArgMining) is a discourse parsing task that aims to automatically extract structured arguments from text. In general, an argument in NLP and machine learning is a graph-based structure, where nodes correspond to Argumentative Discourse Units (ADUs), which are connected via argumentative relations (e.g., support or attack) [36]. Available corpora for ArgMining differ along several axes, such as language, genre, domain, and annotation schema [33,36]. One key aspect that differs across different corpora (and even across different articles in the same corpus) is the presence (or absence) of discourse markers (DMs) [18,57]. These DMs are lexical clues that often precede ADUs [2,30,45,46,56,62,63]. DMs have been studied for several years under many different names [21], including discourse connectives, discourse particles, pragmatic markers, and cue phrases. We adopt the definition of discourse markers proposed by Fraser [20]: DMs are lexical expressions (“and”, “but”, “because”, “however”, “as a conclusion”, etc.) used to relate discourse units. With certain exceptions, they signal a relationship between the interpretation of the discourse unit they introduce (S2) and the prior discourse unit (S1). DMs might belong to different syntactic classes, drawn primarily from the syntactic classes of conjunctions, adverbials, and prepositional phrases.

Exploring effective ways to use DMs has received wide attention in various NLP tasks [47,61], including ArgMining related tasks [30,46]. In discourse parsing [38,51], DMs are known to be strong cues for the identification of discourse relations [7,39]. Similarly, for ArgMining, the presence of DMs are strong indicators for the identification of ADUs [18] and for the overall argument structure [30,63] (e.g., some DMs are clear indicators of the ADU role, such as premise, conclusion, or major claim).

The absence of DMs makes the task more challenging, requiring the system to more deeply capture semantic relations between ADUs [40].

To close the gap between these two scenarios (i.e., relations explicitly signaled with DMs vs. implicit relations), we ask whether recent large language models (LLMs) such as BART [35], T5 [54] and ChatGPT,1 can be used to automatically augment a given text with DMs such that all relations are explicitly signaled. Due to the impressive language understanding and generation abilities of recent LLMs, we speculate that such capabilities could be leveraged to automatically augment a given text with DMs. However, our analysis unveils that such language models (LMs), when employed in a zero-shot setting, underperform in this task. To overcome this challenge, we hypothesize that a sequence-to-sequence (Seq2Seq) model fine-tuned to augment DMs in an end-to-end setting (from an original to a DM-augmented text) should be able to add coherent and grammatically correct DMs, thus adding crucial signals for ArgMining systems.

Our second hypothesis is that downstream ArgMining models can profit from automatically added DMs because these contain highly relevant signals for solving ArgMining tasks, such as ADU identification and classification. Moreover, given that the Seq2Seq model is fine-tuned on heterogeneous data, we expect it to perform well across different genres. To this end, we demonstrate the impact of our approach on an ArgMining downstream task, evaluated on different corpora.

Our experiments indicate that the proposed Seq2Seq models can augment a given text with relevant DMs; however, the lack of consistency and variability of augmented DMs impacts the capability of downstream task models to systematically improve the scores across different corpora. Overall, we show that the DM augmentation approach improves the performance of ArgMining systems in some corpora and that it provides a viable means to inject explicit indicators when the argumentative reasoning steps are implicit. Besides improving the performance of ArgMining systems, especially across different genres, we believe that this approach can be useful as an assistant tool for discourse understanding to improve the readability of the text and the transparency of argument exposition, e.g., in education contexts.

In summary, our main contributions are: (i) we propose a synthetic template-based test suite, accompanied by automatic evaluation metrics and an annotation study, to assess the capabilities of state-of-the-art LMs to predict DMs; (ii) we analyze the capabilities of LMs to augment text with DMs, finding that they underperform in this task; (iii) we compile a heterogeneous collection of DM-related datasets on which we fine-tune LMs, showing that we can substantially improve their ability for DM augmentation, and (iv) we evaluate the impact of end-to-end DM augmentation in a downstream task and find that it improves the performance of ArgMining systems in some, but not all, cases. The code for replicating our experiments is publicly available.2

Discourse marker augmentation approach – high-level description

This section provides a high-level description of the proposed DM augmentation approach.

We start our analysis by assessing whether recent LMs can predict grammatically and coherent DMs (Section 3). To this end, we set up a “fill-in-the-mask” DM prediction task, based on a challenging synthetic testbed proposed in this work (Section 3.1), that allows us to evaluate whether language models are sensitive to specific semantically-critical edits in the text. Furthermore, in Section 3.2, we also propose automatic evaluation metrics (validated with an annotation study, Section 3.6) that can be employed to evaluate the quality of the DM predictions provided by the models.

However, the ultimate goal of this work is to automatically augment a text with DMs in an end-to-end setting (i.e., from raw text to a DM-augmented text). This task is framed as a Seq2Seq problem (Section 5), where end-to-end DM augmentation models must automatically (a) identify where DMs should be added and (b) determine which DMs should be added based on the context. To assess the impact of the proposed DM augmentation approach in a downstream task, we study the ArgMining task of ADU identification and classification (Section 6). Section 4 introduces the corpora used in the end-to-end DM augmentation (Section 5) and downstream task (Section 6) experiments.

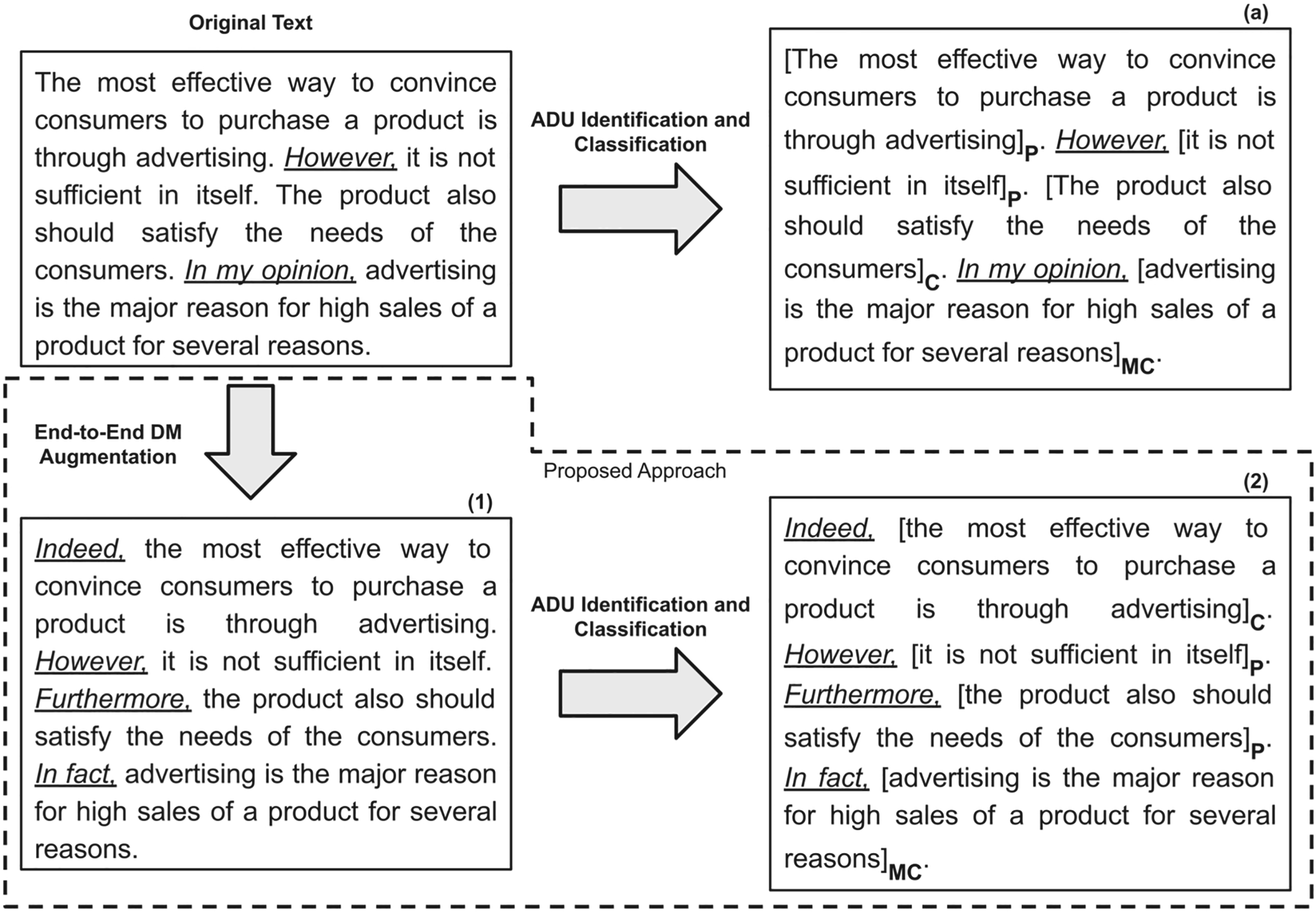

Illustration of proposed DM augmentation approach. We include annotations for ADU boundaries (square brackets) and labels (acronyms in subscript, see Section 4 for details regarding ADU labels). The underlined text highlights the presence of DMs.

Figure 1 illustrates how the proposed DM augmentation approach differs from conventional approaches to address the downstream task studied in this paper (ADU identification and classification).

At the top of Fig. 1, we illustrate the conventional approach to address the ArgMining downstream task. “Original Text” was extracted from the Persuasive Essays corpus (PEC) [63] and contains explicit DMs provided by the essay’s author (highlighted in underlined text). The downstream task model receives as input the “Original Text”, and the goal is to identify and classify the ADUs (according to a corpus-specific set of ADU labels, such as Premise (P), Claim (C), Major Claim (MC), etc.). This downstream task is formulated as a token-level sequence tagging problem. We illustrate the output of a downstream task model in the box (a) from Fig. 1 as follows: ADU boundaries (square brackets) and labels (acronyms in subscript, see Section 4 for details regarding ADU labels). The gold annotation for this example is:

In the approach proposed in this paper, in the first step, we employ DM augmentation models to augment the text with DMs in an end-to-end setting (as detailed in Section 5). As illustrated in box (1) from Fig. 1, the output from this initial step is a text augmented with DMs, containing useful indicators to unveil the argumentative content and improve the readability of the text. Then, in a second step, to assess the impact of the proposed end-to-end DM augmentation approach in a downstream task, we evaluate whether downstream task models can take profit from the DMs automatically added to the text (Section 6), as illustrated in box (2) from Fig. 1. Comparing the prediction in box (b) with the gold annotation, we can observe that the downstream task model performs both tasks (i.e., ADU identification and classification) correctly, taking advantage of the useful indicators provided by the DM augmentation model.

We now assess whether current state-of-the-art language models can predict coherent and grammatically correct DMs. To this end, we create the

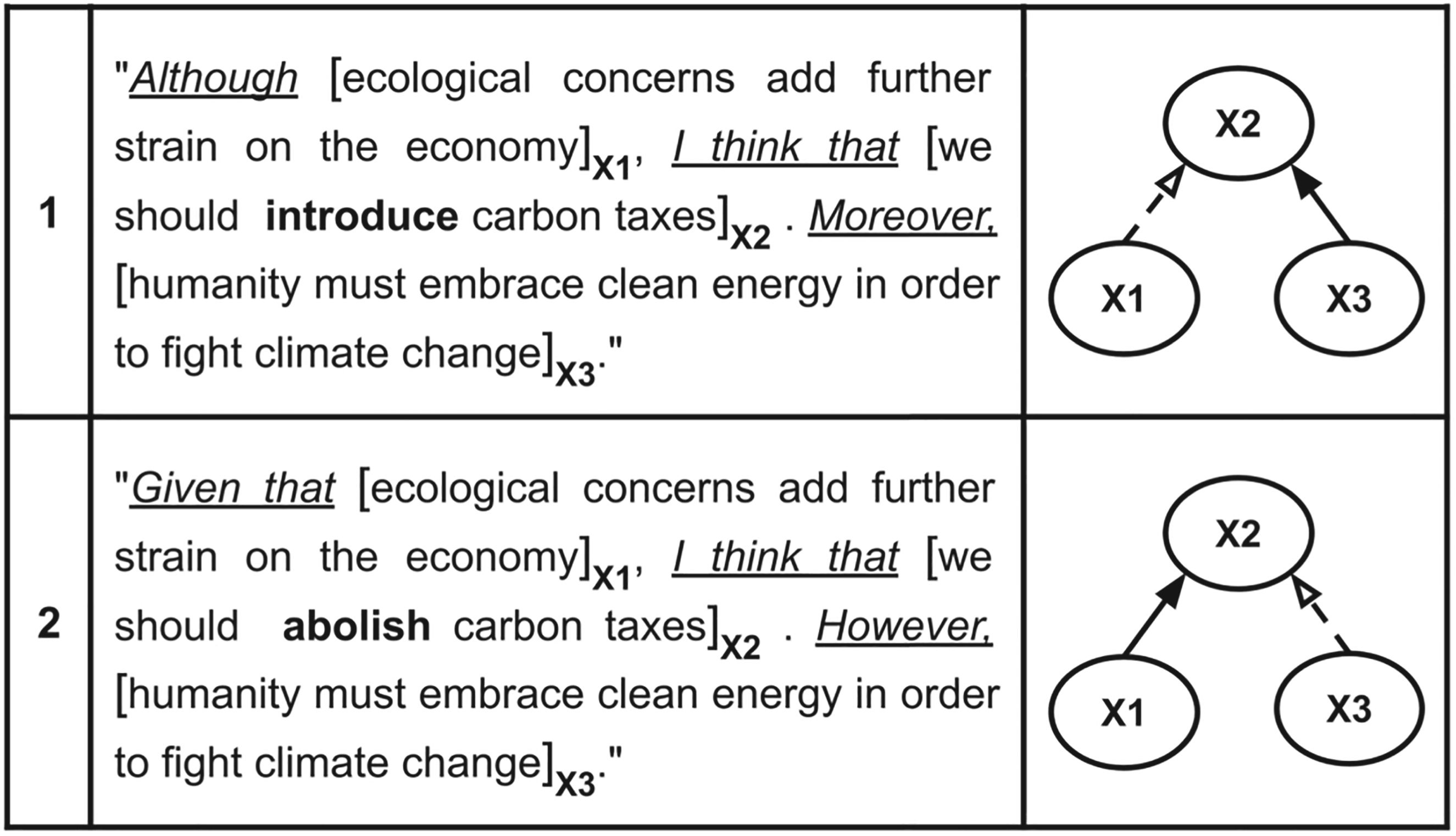

To illustrate the crucial role of DMs and stance for argument perception, consider Examples 1 and 2 in Fig. 2. In bold, we highlight the stance-revealing word. We show that it is possible to obtain different text sequences with opposite stances, illustrating the impact that a single word (the stance in this case) can have on the structure of the argument. Indeed, the role of the premises changes according to the stance (e.g., “X1” is an attacking premise in Example 1 but a supportive premise in Example 2), reinforcing that the semantically-critical edits in the stance impact the argument structure. In underlined text, we highlight the DMs. We remark that by changing the stance, adjustments of the DMs that precede each ADU are required to obtain a coherent text sequence. Furthermore, these examples also show that the presence of explicit DMs improves the readability and makes the argument structure clearer (reducing the cognitive interpretation demands required to unlock the argument structure). In some cases, the presence of explicit DMs is essential (e.g., DMs preceding the attacking premise, such as “Although” and “However” in Examples 1 and 2, respectively) to obtain a sound and comprehensible text. Overall, Fig. 2 illustrates the key property that motivated our TADM dataset: subtle edits in content (i.e., stance and DMs) might have a relevant impact on the argument structure. On the other hand, some edits do not entail any difference in the argument structure (e.g., the position of ADUs, such as whether the claim occurs before/after the premises).

To assess the sensitivity of language models to capture these targeted edits, we use a “fill-in-the-mask” setup, where some of the DMs are masked, and the models aim to predict the masked content. To assess the robustness of language models, we propose a challenging testbed by providing text sequences that share similar ADUs but might entail different argument structures based on the semantically-critical edits in the text previously mentioned (Section 3.1). To master this task, models are not only required to capture the semantics of the sentence (claim’s stance and role of each ADU) but also to take into account the DMs that precede the ADUs (as explicit indicators of other ADUs’ roles and positioning).

TADM dataset example. Columns correspond to example id, text sequence, and argument structure (respectively). In the text sequences, we highlight the DMs (underlined) and the stance revealing word (in bold). In the argument structure, nodes correspond to ADUs and arrows to argumentative relations (a solid arrow indicates a “support” relation, a dashed arrow an “attack” relation).

Each sample comprises a claim and one (or two) premise(s) such that we can map each sample to a simple argument structure. Every ADU is preceded by one DM that signals its role. Claims have a clear stance towards a given position (e.g., “we should introduce carbon taxes”). This stance is identifiable by a keyword in the sentence (e.g., “introduce”). To make the dataset challenging, we also provide samples with opposite stances (e.g., “introduce” vs. “abolish”). We have one premise in support of a given

Based on these core elements, we follow a template-based procedure to generate different samples. The templates are based on a set of configurable parameters: number of ADUs

DMs are added based on the role of the ADU that they precede, using a fixed set of DMs (details provided in Appendix B). Even though using a fixed set of DMs comes with a lack of diversity in our examples, the main goal is to add gold DMs consistent with the ADU role they precede. We expect that the language models will naturally add some variability in the set of DMs predicted, which we compare with our gold DMs set (using either lexical and semantic-level metrics, as introduced in Section 3.2).

Examples 1 and 2 in Fig. 2 illustrate two samples from the dataset. In these examples, all possible masked tokens are revealed (i.e., the underlined DMs). The parameter “prediction type” will dictate which of these tokens will be masked for a given sample.

To instantiate the “claim”, “original stance”, “premise support” and “premise attack”, we employ the Class of Principled Arguments (CoPAs) set provided by Bilu et al. [4]. CoPAs are sets of propositions that are often used when debating a recurring theme. Associated with a recurring theme, Bilu et al. [4] provide a set of motions that are relevant to the recurring theme. In the context of a debate, a motion is a proposal that is to be deliberated by two sides. For example, for the theme “Clean energy”, we can find the motion phrasing “we should introduce carbon taxes”. We use these motions as claims in our TADM dataset. For each CoPA, Bilu et al. [4] provide two propositions that people tend to agree as supporting different points of view for a given theme. We use these propositions as supportive and attacking premises towards a given claim. Further details regarding this procedure can be found in Appendix C.

The test set contains 15 instantiations of the core elements (all from different CoPAs), resulting in a total of 600 samples (40 samples per instantiation × 15 instantiations). For the train and dev set, we use only the original stance role (i.e., stance role =

Automatic evaluation metrics

To evaluate the quality of the predictions provided by the models (compared to the gold DMs), we explore the following metrics. (1)

For all metrics, scores range from 0 to 1, and obtaining higher scores means performing better (on the corresponding metric).

Models

We employ the following LMs: BERT (“bert-base-cased”) [15], XLM-RoBERTa (XLM-R) (“xlm-roberta-base”) [11], and BART (“facebook/bart-base”) [35]. As a first step in our analysis, we frame the DM augmentation problem as a single mask token prediction task.

For BART, we also report results following a Seq2Seq setting (

Experiments

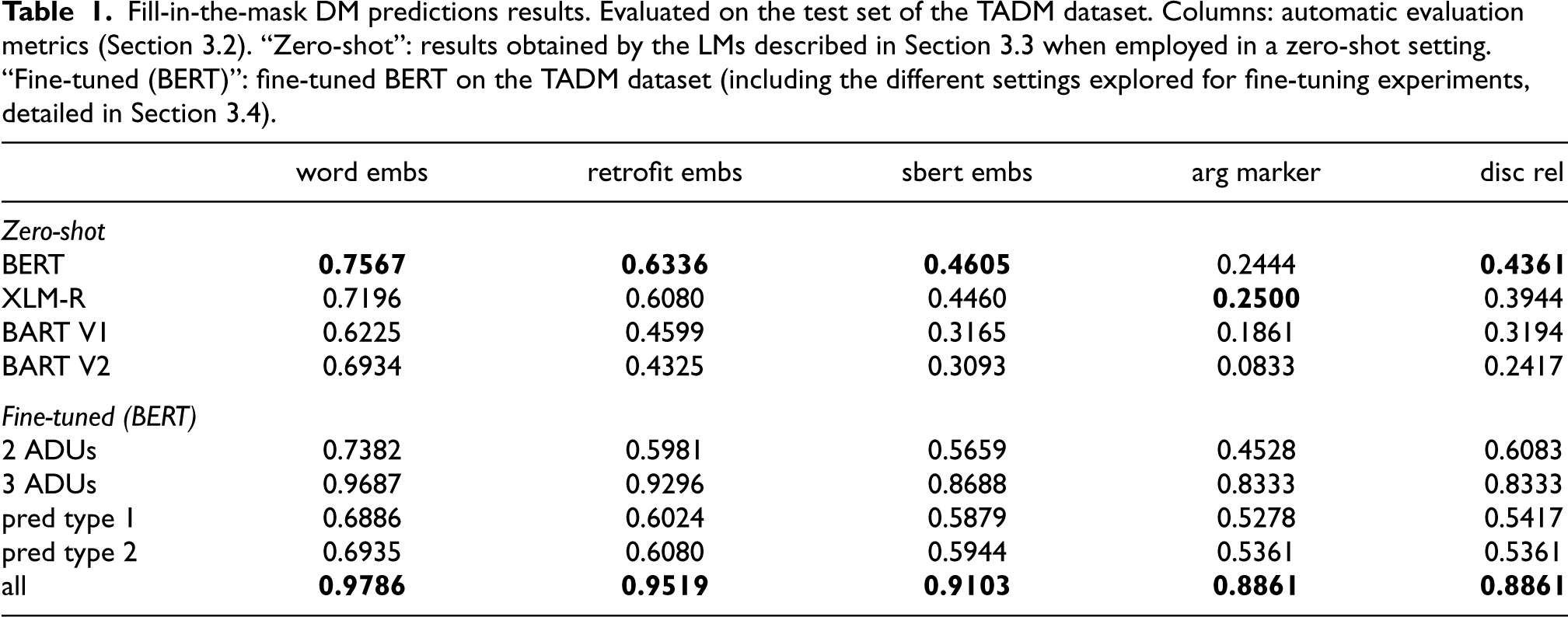

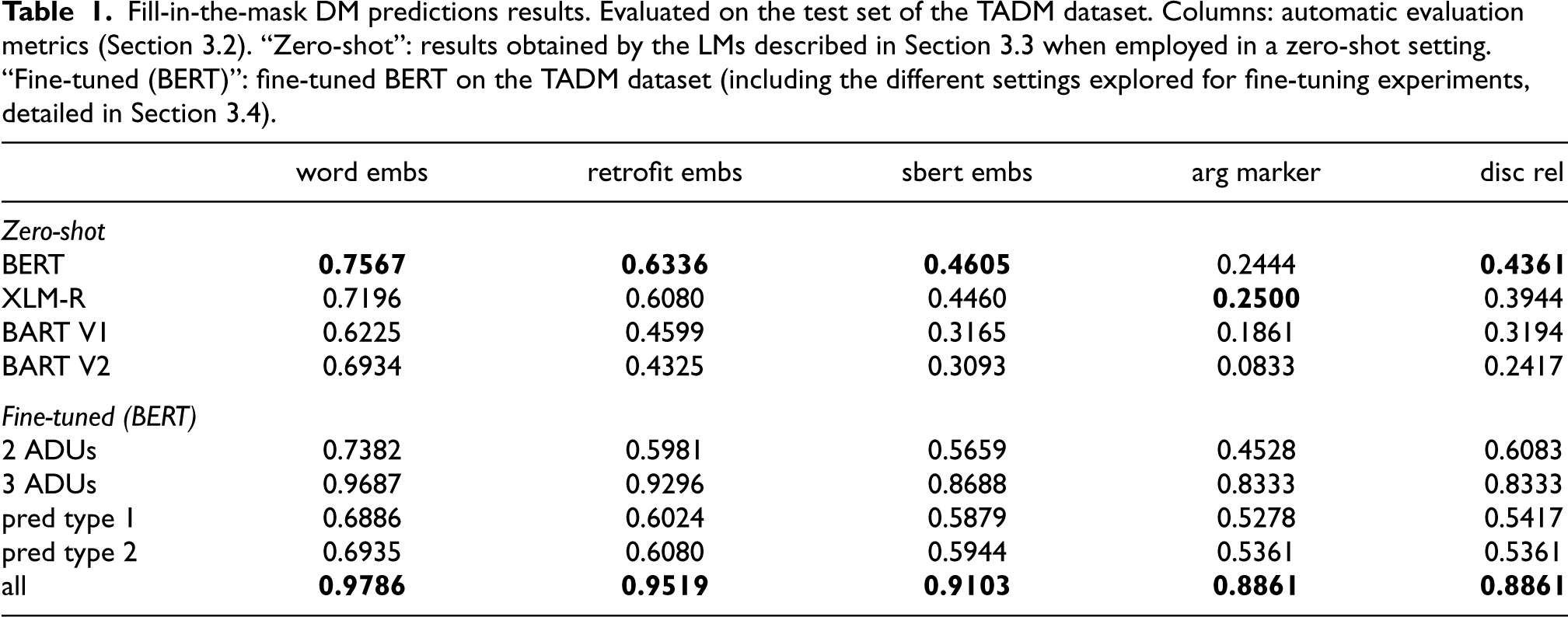

Table 1 shows the results obtained on the test set of the TADM dataset for the fill-in-the-mask DM prediction task.

Fill-in-the-mask DM predictions results. Evaluated on the test set of the TADM dataset. Columns: automatic evaluation metrics (Section 3.2). “Zero-shot”: results obtained by the LMs described in Section 3.3 when employed in a zero-shot setting. “Fine-tuned (BERT)”: fine-tuned BERT on the TADM dataset (including the different settings explored for fine-tuning experiments, detailed in Section 3.4).

Fill-in-the-mask DM predictions results. Evaluated on the test set of the TADM dataset. Columns: automatic evaluation metrics (Section 3.2). “Zero-shot”: results obtained by the LMs described in Section 3.3 when employed in a zero-shot setting. “Fine-tuned (BERT)”: fine-tuned BERT on the TADM dataset (including the different settings explored for fine-tuning experiments, detailed in Section 3.4).

In a zero-shot setting,

We explore fine-tuning the models on the TADM dataset (using the train and dev set partitions). We use BERT for these fine-tuning experiments. Comparing fine-tuned

Due to the limitations in terms of diversity of the TADM dataset, we expected some overfitting after fine-tuning the models on this data. To analyze the extent to which models overfit the fine-tuning data, we selected samples from the training set by constraining some of the parameters used to generate the TADM dataset. The test set remains the same as in previous experiments. In

Comparing the different settings explored for fine-tuning experiments, we observe that constraining the training data to single-sentence samples (“2 ADUs”) or to a specific “pred type” negatively impacts the scores (performing even below the zero-shot baseline in some metrics). These findings indicate that the models fail to generalize when the test set contains samples following templates not provided in the training data. This exposes some limitations of recent LMs, i.e., even though LMs were fine-tuned for fill-in-the-mask DM prediction, they struggle to extrapolate to new templates requiring similar skills.

We focus our error analysis on the discourse-level senses: “arg marker” and “disc rel”. The goal is to understand if the predicted DMs are aligned (regarding positioning) with the gold DMs.

Regarding zero-shot models, we make the following observations.

As the automatic evaluation metrics indicate, fine-tuned

Some examples from the test set of the TADM dataset are shown in Table 8 (Appendix G). We also show the predictions made by the zero-shot models and fine-tuned

Human evaluation

We conduct a human evaluation experiment to assess the quality of the predictions provided by the models in a zero-shot setting. Furthermore, we also aim to determine if the automatic evaluation metrics correlate with human assessments.

We selected 20 samples from the TADM dataset, covering different templates. For each sample, we get the predictions provided by each model analyzed in a zero-shot setting (i.e., Grammaticality: Coherence:

Table 10 (Appendix H) shows some of the samples presented to the annotators and corresponding ratings.

We report inter-annotator agreement (IAA) using Cohen’s

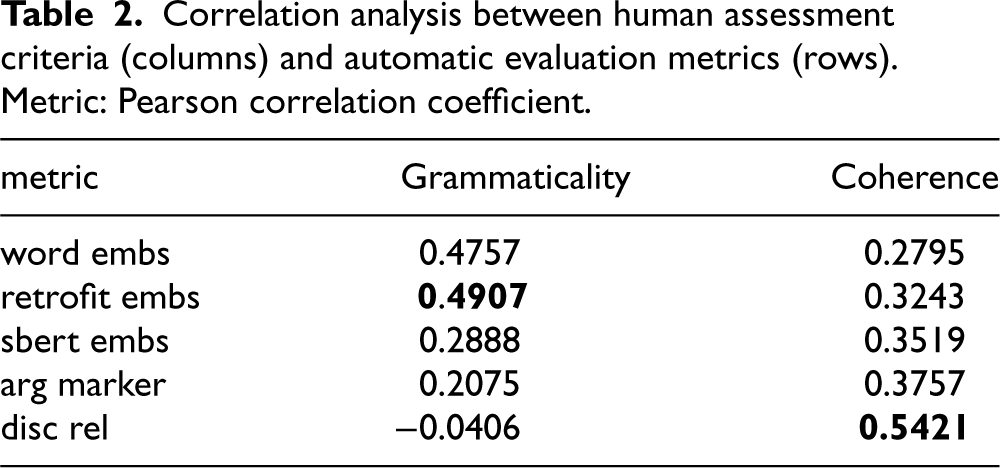

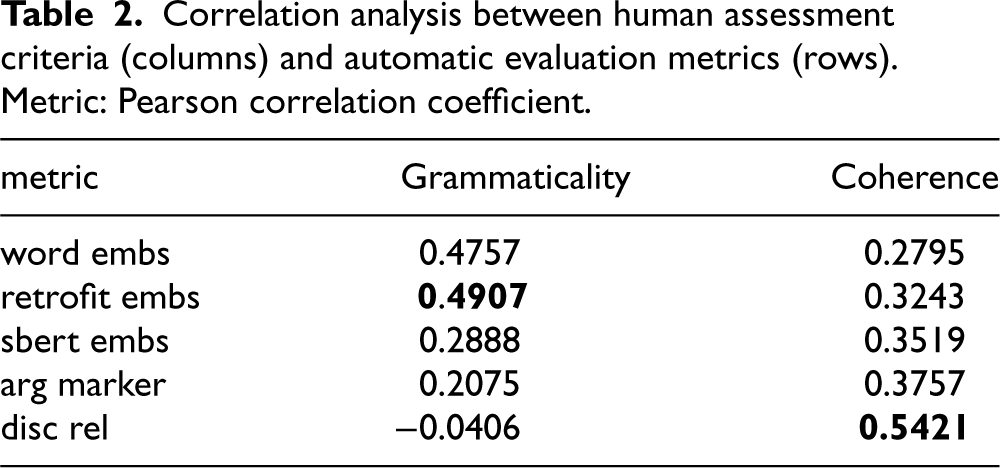

To determine whether automatic evaluation metrics (Section 3.2) are aligned with human assessments, we perform a correlation analysis. To obtain a gold standard rating for each text sequence used in the human evaluation, we average the ratings provided by the three annotators. The results for the correlation analysis are presented in Table 2 (using the Pearson correlation coefficient). For the “Grammaticality” criterion, “retrofit embs” is the metric most correlated with human assessments, followed closely by “word embs”. Regarding the “Coherence” criterion, “disc rel” is the most correlated metric. Intuitively, metrics based on discourse-level senses are more correlated with the “Coherence” criterion because they capture the discourse-level role that these words have in the sentence. On the other hand, these metrics are less correlated with the “Grammaticality” criterion. We attribute this to the fact that a DM prediction can be correct in terms of “Grammaticality” but incorrect in terms of “Coherence” (e.g., examples where “Gram.” = (+1) and “Coh.” = (−1) in Table 10). In this situation, metrics based on discourse-level senses will indicate the DM prediction is incorrect, which is not appropriate through the lens of the “Grammaticality” criterion. This observation indicates that the proposed metrics are complementary: metrics based on discourse-level senses should be employed to evaluate the “Coherence” criterion, but embeddings-based metrics are more suitable for the “Grammaticality” criterion.

Correlation analysis between human assessment criteria (columns) and automatic evaluation metrics (rows). Metric: Pearson correlation coefficient.

Correlation analysis between human assessment criteria (columns) and automatic evaluation metrics (rows). Metric: Pearson correlation coefficient.

In Section 3, based on experiments in a challenging synthetic testbed proposed in this work (the TADM dataset), we have shown that DMs play a crucial role in argument perception and that recent LMs struggle with DM prediction. We now move to more real-world experiments. The goal is to automatically augment a text with DMs (as performed in Section 5 in an end-to-end approach instead of the simplified “fill-in-the-mask” setup performed in Section 3) and assess the impact of the proposed approach in a downstream task (Section 6). In this section, we introduce the corpora used in the end-to-end DM augmentation (Section 5) and downstream task (Section 6) experiments. In Section 4.1, we describe three corpora containing annotations of argumentative content that are used in our experiments only for evaluation purposes. Then, in Section 4.2, we describe the corpora containing annotations of DMs. These corpora are used in our experiments to train Seq2Seq models to perform end-to-end DM augmentation.

Argument mining data

Data with DM annotations

End-to-end discourse marker augmentation

As detailed in Section 1, our goal is to automatically augment a text with DMs such that downstream task models can take advantage of explicit signals automatically added. To this end, we need to conceive models that can automatically (a) identify where DMs should be added and (b) determine which DMs should be added based on the context. We frame this task as a Seq2Seq problem. As input, the models receive a (grammatically sound) text sequence that might (or not) contain DMs. The output is the text sequence populated with DMs. For instance, for ArgMining tasks, we expect that the DMs should be added preceding ADUs.

Evaluation

As previously indicated, explicit DMs are known to be strong indicators of argumentative content, but whether to include explicit DMs inside argument boundaries is an annotation guideline detail that differs across ArgMining corpora. Furthermore, in the original ArgMining corpora (e.g., the corpora explored in this work, Section 4.1), DMs are not directly annotated. We identify the gold DMs based on a heuristic approach proposed by Kuribayashi et al. [30]. For PEC, we consider as gold DM the span of text that precedes the corresponding ADU; more concretely, the span to the left of the ADU until the beginning of the sentence or the end of a preceding ADU is reached. For MTX, DMs are included inside ADU boundaries; in this case, if an ADU begins with a span of text specified in a DM list,7 we consider that span of text as the gold DM and the following tokens as the ADU. For Hotel, not explored by Kuribayashi et al. [30], we proceed similarly to MTX. We would like to emphasize that following this heuristic-based approach to decouple DMs from ADUs (in the case of MTX and Hotel datasets) keeps sound and valid the assumption that DMs often precede the ADUs; this is already considered and studied in prior work [2,30], only requiring some additional pre-processing steps to be performed in this stage to normalize the ArgMining corpora in this axis.

To detokenize the token sequences provided by the ArgMining corpora, we use sacremoses.8 As Seq2Seq models output a text sequence, we need to determine the DMs that were augmented in the text based on the corresponding output sequence. Similar to the approach detailed in Section 3.3, we use a diff-tool implementation, but in this case, we might have multiple mask tokens (one for each ADU). Based on this procedure, we obtain the list of DMs predicted by the model, which we can compare to the list of gold DMs extracted from the original input sequence.

To illustrate this procedure, consider the example in Fig. 1. The gold DMs for this input sequence are [“”, “However”, “”, “In my opinion”]. An empty string means that we have an implicit DM (i.e., no DM preceding the ADU in the original input sequence); for the remaining, an explicit DM was identified in the original input sequence. The predicted DMs are [“Indeed”, “However”, “Furthermore”, “In fact”], as illustrated in the underlined text in boxes (1) and (2) from Fig. 1.

In terms of evaluation protocol, we follow two procedures: (a)

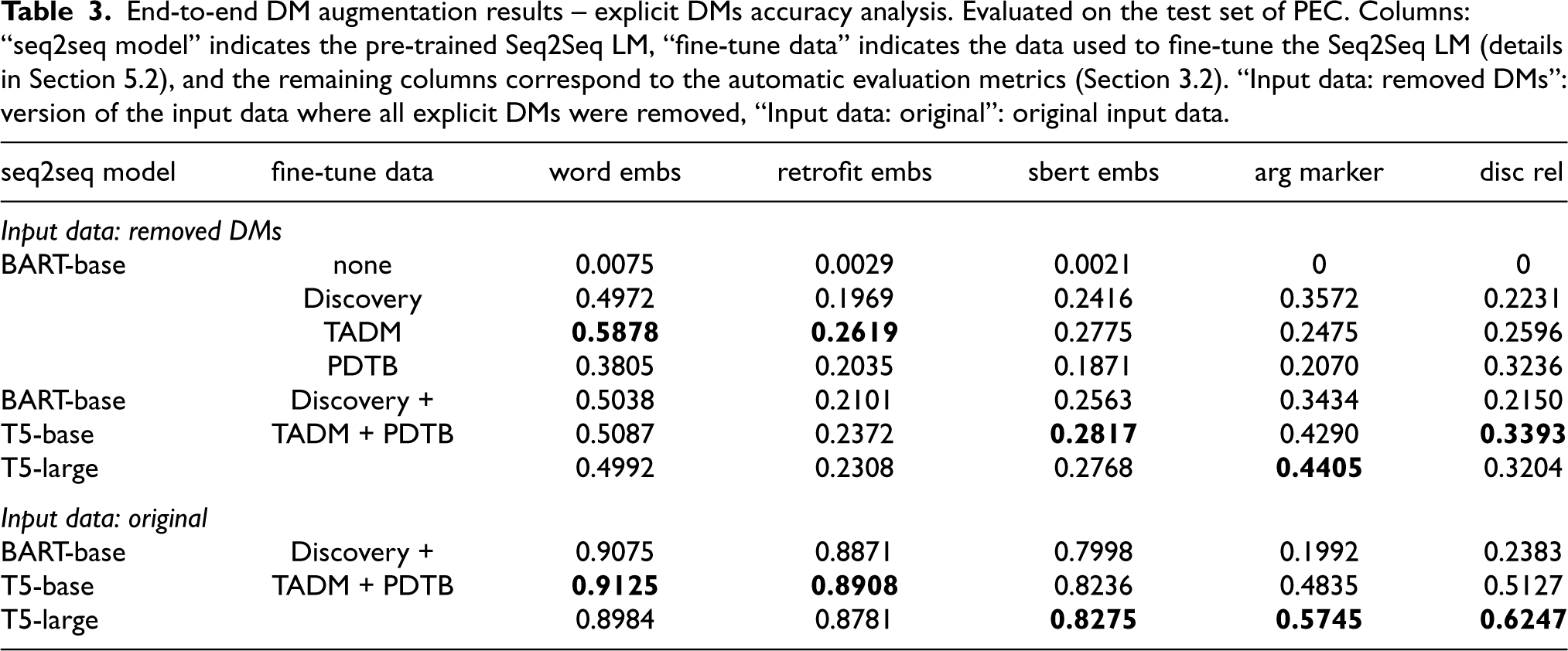

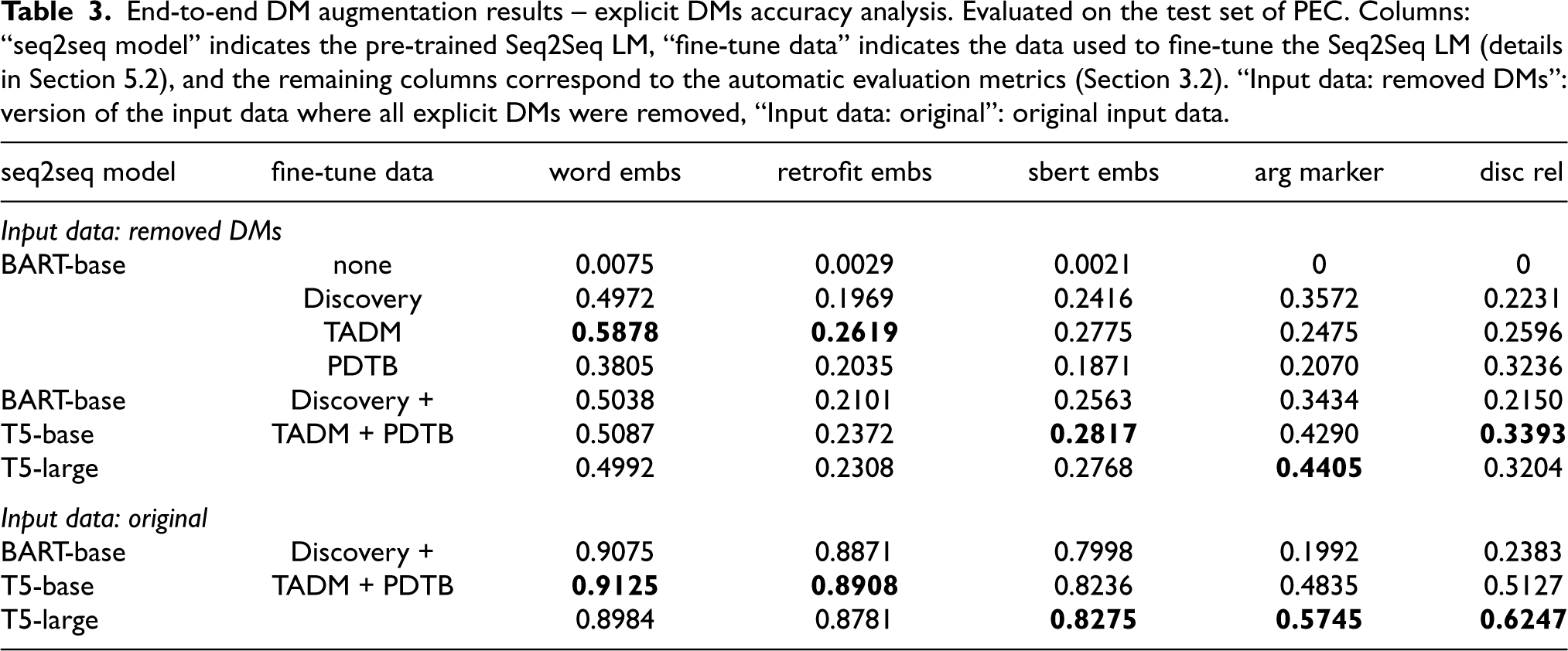

For an input sequence, there may be multiple DMs to add; our metrics average over all occurrences of DMs. Then, we average over all input sequences to obtain the final scores, as reported in Table 3 (for explicit DMs accuracy analysis) and Table 4 (for coverage analysis).

End-to-end DM augmentation results – explicit DMs accuracy analysis. Evaluated on the test set of PEC. Columns: “seq2seq model” indicates the pre-trained Seq2Seq LM, “fine-tune data” indicates the data used to fine-tune the Seq2Seq LM (details in Section 5.2), and the remaining columns correspond to the automatic evaluation metrics (Section 3.2). “Input data: removed DMs”: version of the input data where all explicit DMs were removed, “Input data: original”: original input data.

End-to-end DM augmentation results – explicit DMs accuracy analysis. Evaluated on the test set of PEC. Columns: “seq2seq model” indicates the pre-trained Seq2Seq LM, “fine-tune data” indicates the data used to fine-tune the Seq2Seq LM (details in Section 5.2), and the remaining columns correspond to the automatic evaluation metrics (Section 3.2). “Input data: removed DMs”: version of the input data where all explicit DMs were removed, “Input data: original”: original input data.

Importantly, further changes to the input sequence might be performed by the Seq2Seq model (i.e., besides adding the DMs, the model might also commit/fix grammatical errors, capitalization, etc.), but we ignore these differences for the end-to-end DM augmentation assessment.10

In the following, we describe the data preparation for each corpus (we use the corpora mentioned in Section 4.2) to obtain the input and output sequences (gold data) for training the Seq2Seq models in the end-to-end DM augmentation task.

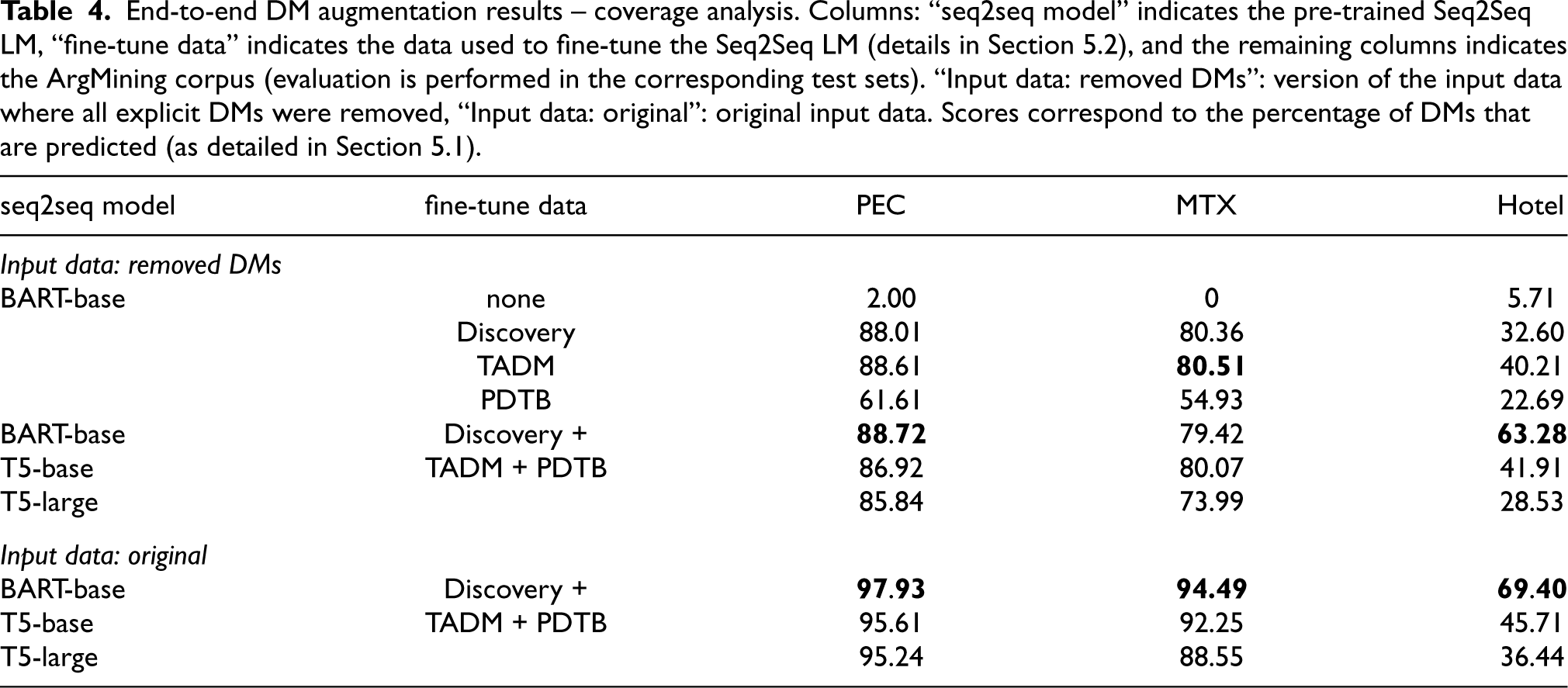

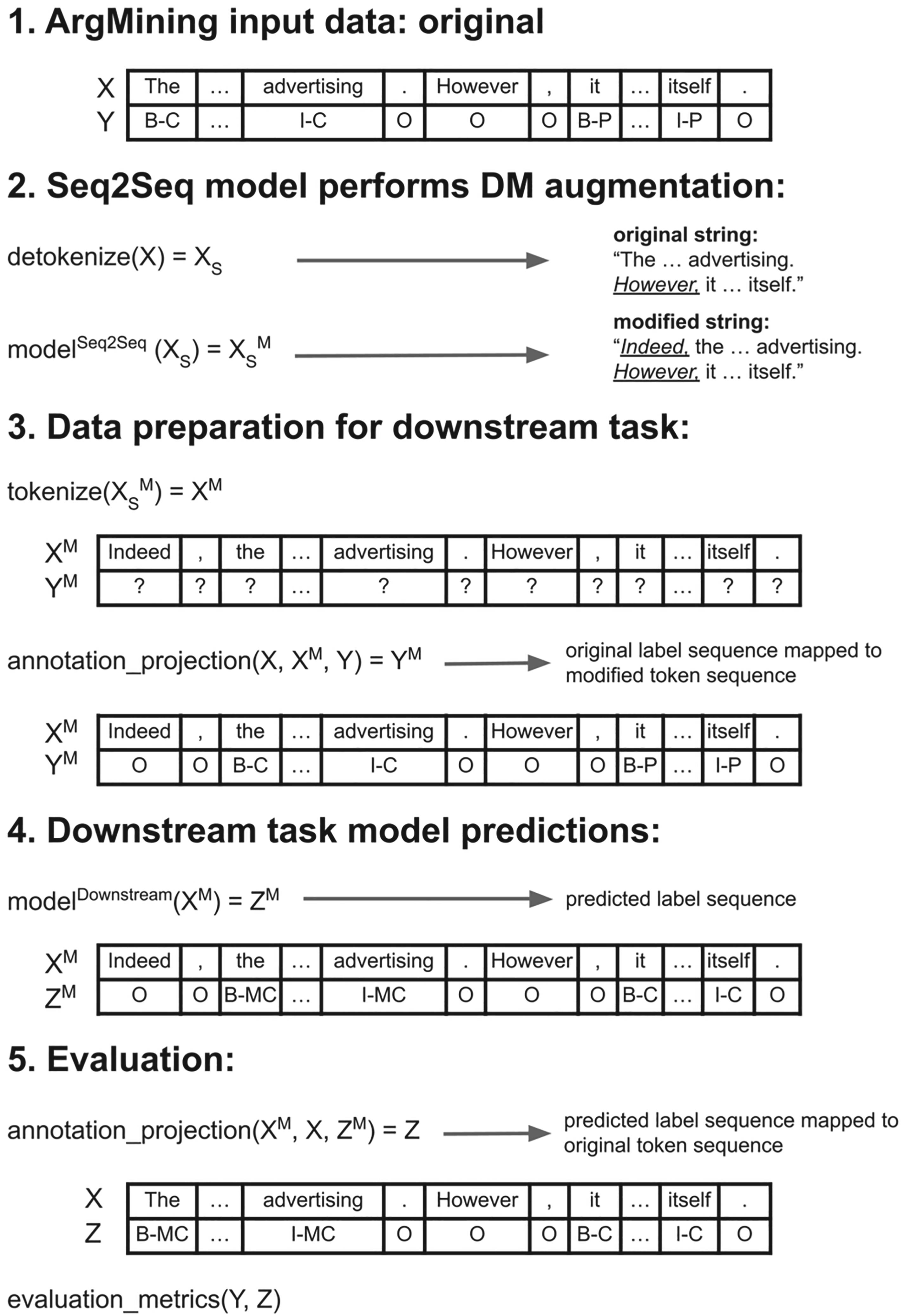

End-to-end DM augmentation results – coverage analysis. Columns: “seq2seq model” indicates the pre-trained Seq2Seq LM, “fine-tune data” indicates the data used to fine-tune the Seq2Seq LM (details in Section 5.2), and the remaining columns indicates the ArgMining corpus (evaluation is performed in the corresponding test sets). “Input data: removed DMs”: version of the input data where all explicit DMs were removed, “Input data: original”: original input data. Scores correspond to the percentage of DMs that are predicted (as detailed in Section 5.1).

End-to-end DM augmentation results – coverage analysis. Columns: “seq2seq model” indicates the pre-trained Seq2Seq LM, “fine-tune data” indicates the data used to fine-tune the Seq2Seq LM (details in Section 5.2), and the remaining columns indicates the ArgMining corpus (evaluation is performed in the corresponding test sets). “Input data: removed DMs”: version of the input data where all explicit DMs were removed, “Input data: original”: original input data. Scores correspond to the percentage of DMs that are predicted (as detailed in Section 5.1).

We start our analysis with the “Input data: removed DMs” setting. First, we observe that the pre-trained

Regarding the “Input data: original” setting, we analyze the results obtained after fine-tuning on the combination of the three corpora. As expected, we obtain higher scores across all metrics compared to “Input data: removed DMs”, as the Seq2Seq model can explore explicit DMs (given that we frame it as a Seq2Seq problem, the model might keep, edit, or remove explicit DMs) to capture the semantics of text and use this information to improve on implicit DM augmentation.

Analyzing the results obtained with the “Input data: removed DMs” setting, we observe that:

For the “Input data: original” setting, we again obtain higher scores. These improvements are smaller for Hotel because the original text is mostly deprived of explicit DMs. Finally, we observe that in this setting, we can obtain very high coverage scores across all corpora:

Comparison with ChatGPT

ChatGPT11 [34] is a popular large language model built on top of the GPT-3.5 series [8] and optimized to interact in a conversational way. Even though ChatGPT is publicly available, interacting with it can only be done with limited access. Consequently, we are unable to conduct large-scale experiments and fine-tune the model on specific tasks. To compare the zero-shot performance of ChatGPT in our task, we run a small-scale experiment and compare the results obtained in the “Input data: removed DMs” setting with the models presented in Section 5.3. We sampled 11 essays from the test set of the PEC (totaling 60 paragraphs) for this small-scale experiment, following the same evaluation protocol described in Section 5.1. ChatGPT is trained to follow an instruction expressed in a prompt, targeting a detailed response that answers the prompt. To this end, we provide the prompt “add all relevant discourse markers to the following text: {}” for each paragraph. Then, we collect the answer provided by ChatGPT as the predicted output sequence. Detailed results can be found in Appendix I. Even though our fine-tuned models surpass ChatGPT in most of the metrics (except for “disc rel”), especially in terms of coverage, it is remarkable that ChatGPT, operating in a zero-shot setting, is competitive. With some fine-tuning, better prompt-tuning or in-context learning, we believe that ChatGPT (and similar LLMs) might excel in the proposed DM augmentation task.

Downstream task evaluation

We assess the impact of the end-to-end DM augmentation approach detailed in Section 5 on an ArgMining downstream task, namely: ADU identification and classification. We operate on the token level with the label set:

Experimental setup

We assess the impact of the proposed DM augmentation approach in the downstream task when the Seq2Seq models (described in Section 5) are asked to augment a text based on two versions (similar to Section 5.3) of the input data: (a) the original input sequence (“Input data: original”); (b) the input sequence obtained after the removal of all explicit DMs (“Input data: removed DMs”).

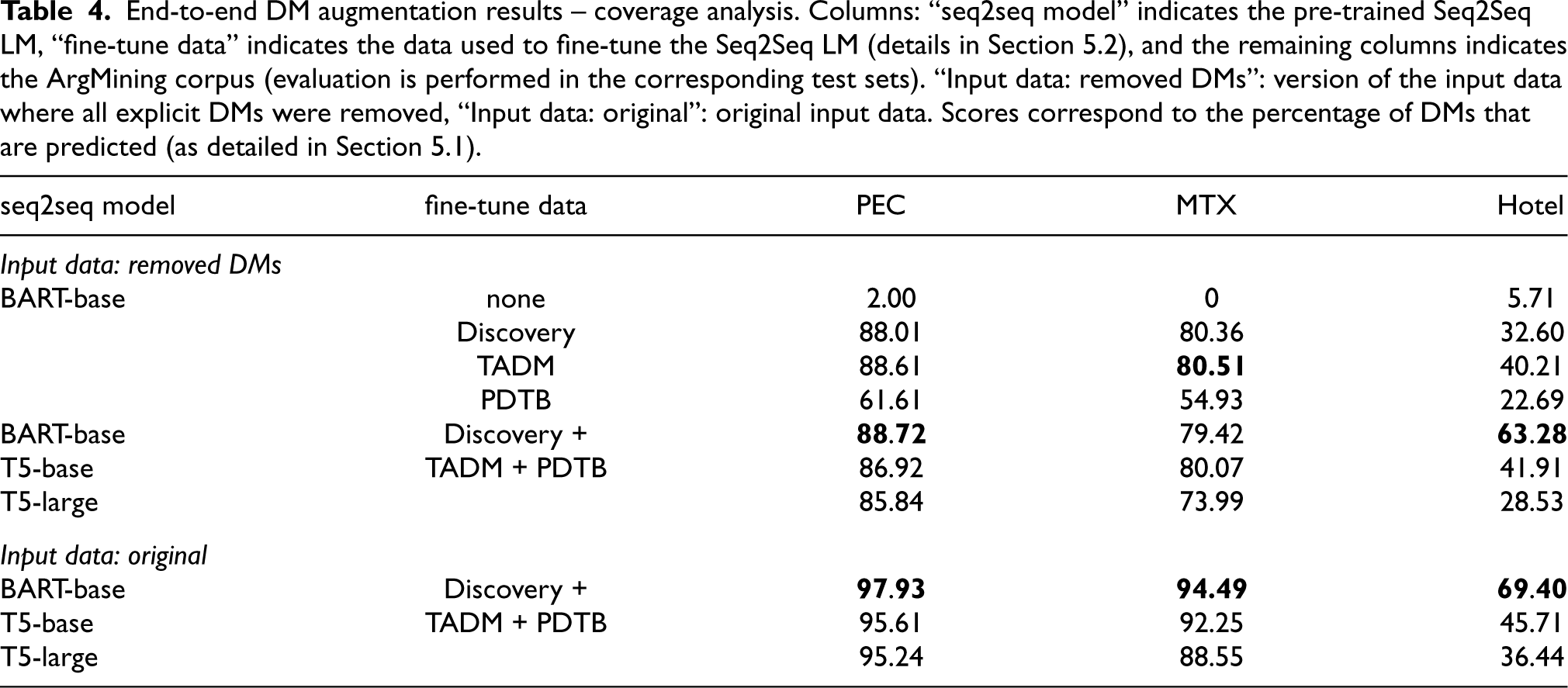

Downstream task experimental setup process (details provided in Section 6.1).

In Fig. 3, we illustrate the experimental setup process using “Input data: original” (step 1), where

In step 2 (Fig. 3), a Seq2Seq model performs DM augmentation. Since Seq2Seq models work with strings and the input data is provided as token sequences, we need to detokenize the original token sequence (resulting in

Given that the output provided by the Seq2Seq model is different from the original token sequence

For a fair comparison between different approaches, in step 5 (Fig. 3), we map back the predicted label sequence (

For the downstream task, we employ a BERT model (“bert-base-cased”) following a typical sequence labeling approach. We use default “AutoModelForTokenClassification” and “TrainingArguments” parameters provided by the HuggingFace library, except for the following: learning rate =

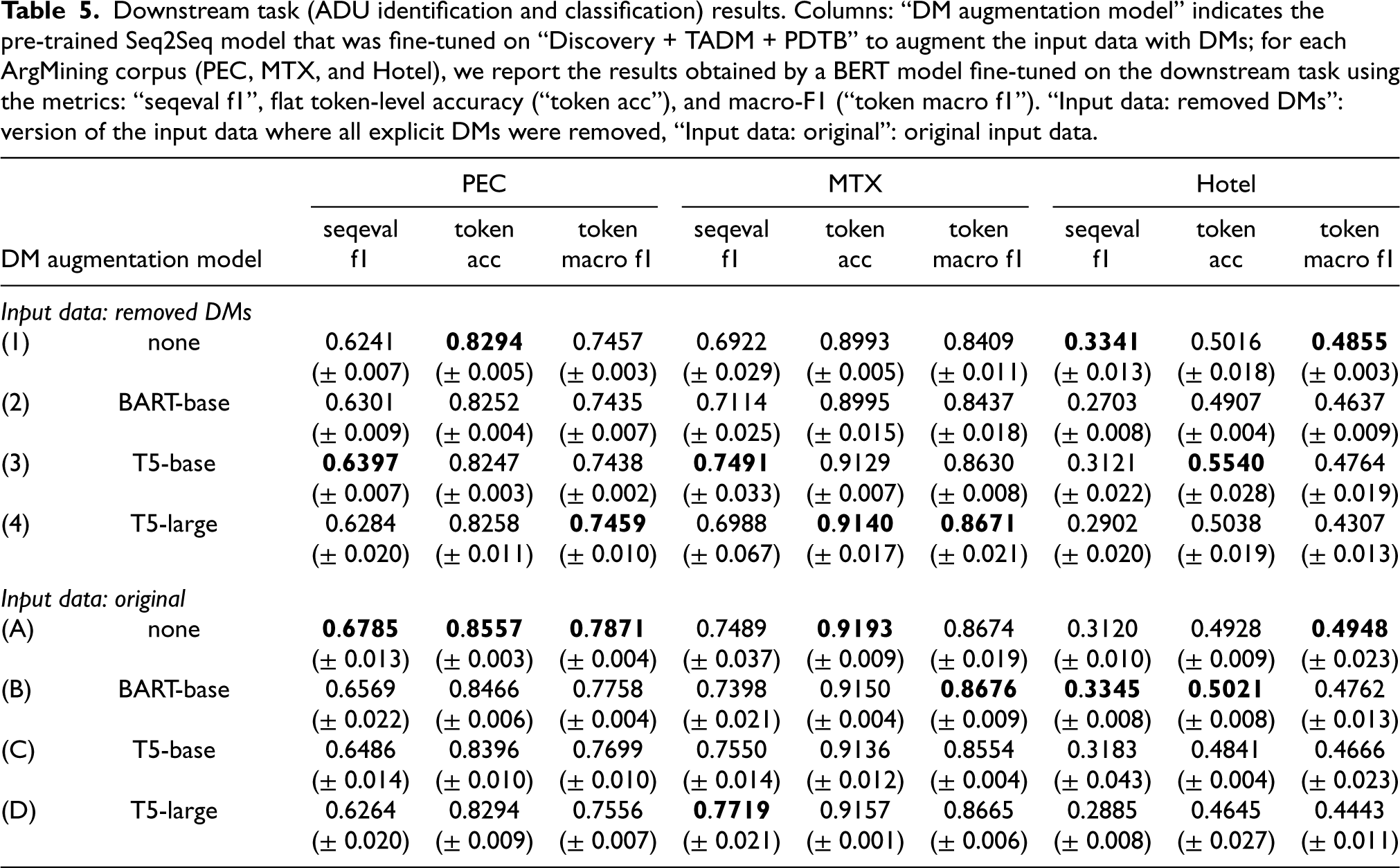

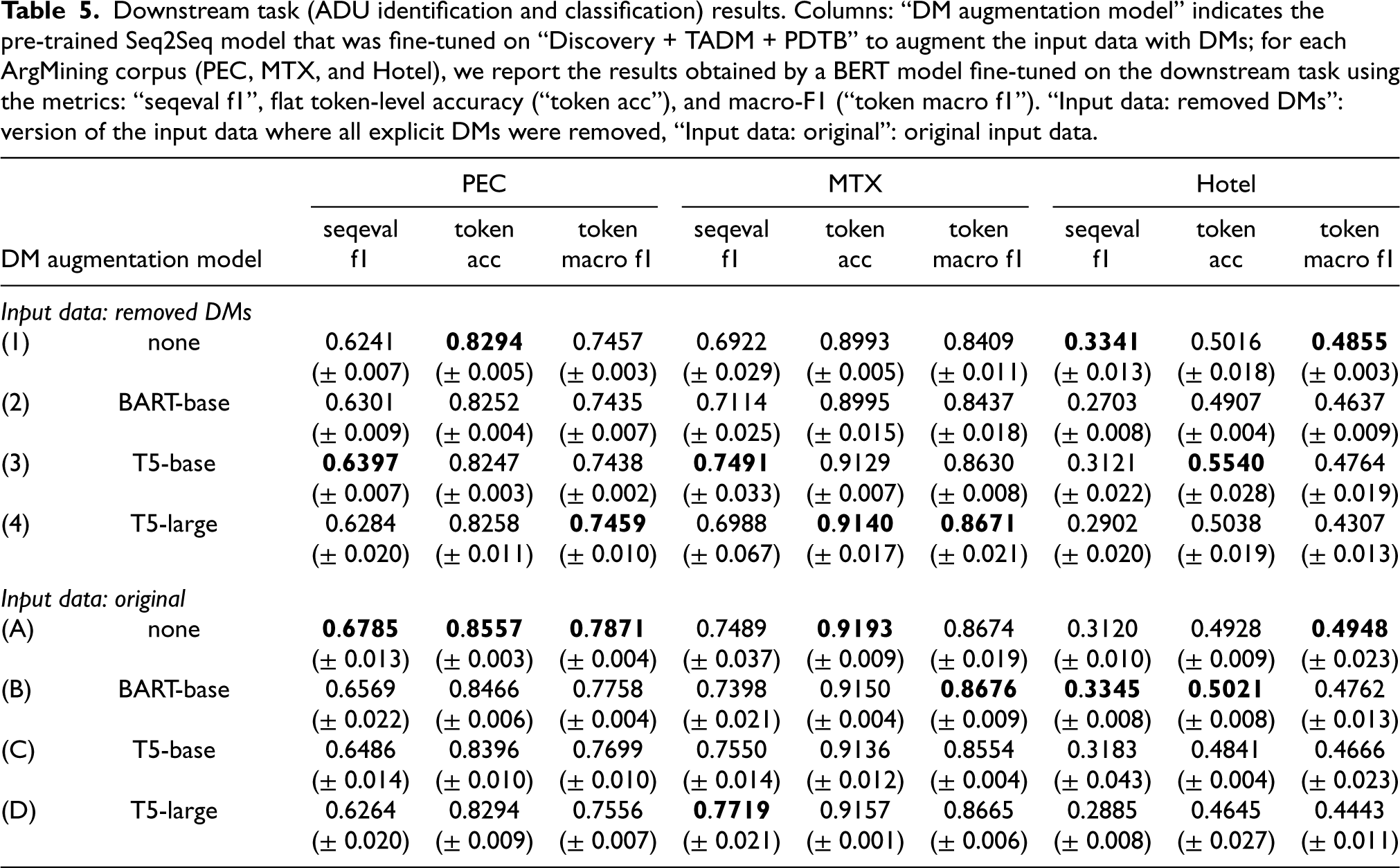

Table 5 shows the results obtained for the downstream task. The line identified with a “none” in the column “DM augmentation model” refers to the scores obtained by a baseline model in the corresponding input data setting, in which the downstream model receives as input the data without the intervention of any DM augmentation Seq2Seq model; for the remaining lines, the input sequence provided to the downstream model was previously augmented using a pre-trained Seq2Seq model (

Downstream task (ADU identification and classification) results. Columns: “DM augmentation model” indicates the pre-trained Seq2Seq model that was fine-tuned on “Discovery + TADM + PDTB” to augment the input data with DMs; for each ArgMining corpus (PEC, MTX, and Hotel), we report the results obtained by a BERT model fine-tuned on the downstream task using the metrics: “seqeval f1”, flat token-level accuracy (“token acc”), and macro-F1 (“token macro f1”). “Input data: removed DMs”: version of the input data where all explicit DMs were removed, “Input data: original”: original input data.

Downstream task (ADU identification and classification) results. Columns: “DM augmentation model” indicates the pre-trained Seq2Seq model that was fine-tuned on “Discovery + TADM + PDTB” to augment the input data with DMs; for each ArgMining corpus (PEC, MTX, and Hotel), we report the results obtained by a BERT model fine-tuned on the downstream task using the metrics: “seqeval f1”, flat token-level accuracy (“token acc”), and macro-F1 (“token macro f1”). “Input data: removed DMs”: version of the input data where all explicit DMs were removed, “Input data: original”: original input data.

We start our analysis by comparing the baseline models, i.e., rows

Most of the results obtained from DM augmentation models that receive as input the original data (“Input data: original”; rows

Comparing row

Comparing row

To summarize, we observe that in a downstream task, augmenting DMs automatically with recent LMs can be beneficial in some, but not all, cases. We believe that with the advent of larger LMs (such as the recent ChatGPT), the capability of these models to perform DM augmentation can be decisively improved in the near future, with a potential impact on downstream tasks (such as ArgMining tasks). Overall, our findings not only show the impact of a DM augmentation approach for a downstream ArgMining task but also demonstrate how the proposed approach can be employed to improve the readability and transparency of argument exposition (i.e., introducing explicit signals that clearly unveil the presence and positioning of the ADUs conveyed in the text).

Finally, we would like to reinforce that the DM augmentation models were fine-tuned on datasets (i.e., “Discovery + TADM + PDTB”) that (a) were not manually annotated for ArgMining tasks and (b) are different from the downstream task evaluation datasets (i.e., PEC, MTX, and Hotel). Consequently, our results indicate that DM augmentation models can be trained on external data to automatically add useful DMs (in some cases, as previously detailed) for downstream task models, despite the differences in the DM augmentation training data and the downstream evaluation data (e.g., domain shift).

We manually sampled some data instances from each ArgMining corpora (Section 4.1) and analyzed the predictions (token-level, for the ADU identification and classification task) made by the downstream task models. Furthermore, we also analyzed the DMs automatically added by the Seq2Seq models, assessing whether it is possible to find associations between the DMs that precede the ADUs and the corresponding ADU labels.

In Appendix K, we provide a detailed analysis for each corpus and show some examples. Overall, we observe that DM augmentation models performed well in terms of coverage, augmenting the text with DMs at appropriate locations (i.e., preceding the DMs). This observation is in line with the conclusions taken from the “Coverage analysis” in Section 5.3. However, we observed that the presence of some DMs that are commonly associated as indicators of specific ADU labels (e.g., “because” and “moreover” typically associated to P) are not consistently used by the downstream model to predict the corresponding ADU label accordingly (i.e., the predicted ADU label varies in the presence of these DMs). We attribute this to the lack of consistency (we observed, for all corpora, that some DMs are associated to different ADU labels) and variability (e.g., on PEC, in the presence of augmented DMs, the label MC does not contain clear indicators; in the original text, these indicators are available and explored by the downstream model) of augmented DMs. We conclude that these limitations in the quality of the predictions provided by the DM augmentation models conditioned the association of DMs and ADU labels that we expected to be performed by the downstream model.

Based on this analysis, our assessment is that erroneous predictions of DMs might interfere with the interpretation of the arguments exposed in the text (and, in some cases, might even mislead the downstream model). This is an expected drawback from a pipeline architecture (i.e., end-to-end DM augmentation followed by the downstream task). However, on the other hand, the potential of DM augmentation approaches is evident, as the presence of coherent and grammatically correct DMs can clearly improve the readability of the text and of argument exposition in particular (as illustrated in the detailed analysis provided in Appendix K).

Related work

Some prior work also studies ArgMining across different corpora. Given the variability of annotation schemas, dealing with different conceptualizations (such as tree vs. graph-based structures, ADU and relation labels, ADU boundaries, among others) is a common challenge [2,10,22]. Besides the variability of annotated resources, ArgMining corpora tend to be small [41]. To overcome these challenges, some approaches explored transfer learning: (a) across different ArgMining corpora [41,52,59]; (b) from auxiliary tasks, such as discourse parsing [1] and fine-tuning pre-trained LMs on large amounts of unlabeled discussion threads from Reddit [16]; and (c) from ArgMining corpora in different languages [18,19,57,66]. Exploring additional training data is pointed out as beneficial across different subtasks, especially under low-resource settings; however, domain-shift and differences in annotation schemas are typically referred to as the main challenges. Our approach differs by proposing DM augmentation to improve the ability of ArgMining models across different genres, without requiring to devise transfer learning approaches to deal with different annotation schemas: given that the DM augmentation follows a text-to-text approach, we can employ corpus-specific models to address the task for each corpus.

Aligned with our proposal, Opitz [45] frames ARC as a plausibility ranking prediction task. The notion of plausibility comes from adding DMs (from a handcrafted set of 4 possible DM pairs) of different categories (support and attack) between two ADUs and determining which of them is more plausible. They report promising results for this subtask, demonstrating that explicitation of DMs can be a feasible approach to tackle some ArgMining subtasks. We aim to go one step further by: (a) employing language models to predict plausible DMs (instead of using a handcrafted set of DMs) and (b) proposing a more realistic DM augmentation scenario, where we receive as input raw text and we do not assume that the ADU boundaries are known.

However, relying on these DMs also has downsides. In a different line of work, Opitz and Frank [46] show that the models they employ to address the task of ARC tend to focus on DMs instead of the actual ADU content. They argue that such a system can be easily fooled in cross-document settings (i.e., ADUs belonging to a given argument can be retrieved from different documents), proposing a context-agnostic model that is constrained to encode only the actual ADU content as an alternative. We believe that our approach addresses these concerns as follows: (a) for the ArgMining tasks addressed in this work, arguments are constrained to document boundaries (cross-document settings are out of scope); (b) given that the DM augmentation models are automatically employed for each document, we hypothesize that the models will take into account the surrounding context and adapt the DMs predictions accordingly (consequently, the downstream model can rely on them).

To close the gap between explicit and implicit DMs, our approach follows the line of work on explicitation. However, we work in a more challenging scenario, where the DM augmentation is performed at the paragraph level following an end-to-end approach (i.e., from raw text to a DM-augmented text). Consequently, our approach differs from prior work in multiple ways: (a) we aim to explicitate all discourse relations at the paragraph level, while prior work focuses on one relation at a time [28,29,60,64] (our models can explore a wider context window and take into account the interdependencies between different discourse relations), and (b) we do not require any additional information, such as prior knowledge regarding discourse units boundaries (e.g., clauses or sentences) [28,29,60,64] or the target discourse relations [64].

Conclusions

In this paper, we propose to automatically augment a text with DMs to improve the robustness of ArgMining systems across different genres.

First, we describe a synthetic template-based test suite created to assess the capabilities of recent LMs to predict DMs and whether LMs are sensitive to specific semantically-critical edits in the text. We show that LMs underperform this task in a zero-shot setting, but the performance can be improved after some fine-tuning.

Then, we assess whether LMs can be employed to automatically augment a text with coherent and grammatically correct DMs in an end-to-end setting. We collect a heterogeneous collection of DM-related datasets and show that fine-tuning LMs in this collection improves the ability of LMs in this task.

Finally, we evaluate the impact of augmented DMs performed by the proposed end-to-end DM augmentation models on the performance of a downstream model (across different ArgMining corpora). We obtained mixed results across different corpora. Our analysis indicates that DM augmentation models performed well in terms of coverage; however, the lack of consistency and variability of the augmented DMs conditioned the association of DMs and ADU labels that we expected to be performed by the downstream model.

In future work, we would like to assess how recent LLMs perform in these tasks. Additionally, we would like to increase and improve the variability and quality of the heterogeneous collection of data instances used to fine-tune the end-to-end DM augmentation models (possibly including data related to ArgMining tasks that might inform the models about DMs that are more predominant in ArgMining domains), as improving in this axis might have a direct impact in the downstream task performance.

We believe that our findings are evidence of the potential of DM augmentation approaches. DM augmentation models can be deployed to improve the readability and transparency of arguments exposed in written text, such as embedding this approach in writing assistant tools.

Limitations

One of the anchors of this work is evidence from prior work that DMs can play an important role to identify and classify ADUs; prior work is mostly based on DMs preceding the ADUs. Consequently, we focus on DMs preceding the ADUs. We note that DMs following ADUs might also occur in natural language and might be indicative of ADU roles. However, this phenomenon is less frequent in natural language and also less studied in related work [18,30,61].

The TADM dataset proposed in Section 3.1 follows a template-based approach, instantiated with examples extracted from the CoPAs provided by Bilu et al. [4]. While some control over linguistic phenomena occurring in the dataset was important to investigate our hypothesis, the downside is a lack of diversity. Nonetheless, we believe that the dataset contains enough diversity for the purposes studied in this work (e.g., different topics, several parameters that result in different sentence structures, etc.). Future work might include extending the dataset with a wider spectrum of DMs, data instances, templates, and argument structures (e.g., including linked and serial argument structures besides the convergent structures already included).

Our proposed approach follows a pipeline architecture: end-to-end DM augmentation followed by the downstream task. Consequently, erroneous predictions made by the DM augmentation model might mislead the downstream task model. Furthermore, the end-to-end DM augmentation employs a Seq2Seq model. Even though these models were trained to add DMs without changing further content, it might happen in some cases that the original ADU content is changed by the model. We foresee that, in extreme cases, these edits might lead to a different argument content being expressed (e.g., changing the stance, adding/removing negation expressions, etc.); however, we note that we did not observe this in our experiments. In a few cases, we observed minor edits being performed to the content of the ADUs, mostly related to grammatical corrections.

We point out that despite the limited effectiveness of the proposed DM augmentation approach in improving the downstream task scores in some settings, our proposal is grounded on a well-motivated and promising research hypothesis, solid experimental setup, and detailed error analysis that we hope can guide future research. Similar to recent trends in the community (Insights NLP workshop [65], ICBINB Neurips workshop and initiative,13 etc.), we believe that well-motivated and well-executed research can also contribute to the progress of science, going beyond the current emphasis on state-of-the-art results.

Footnotes

Acknowledgements

Gil Rocha was supported by a PhD grant (SFRH/BD/140125/2018) from Fundação para a Ciência e a Tecnologia (FCT). This work was supported by Base Funding (UIDB/00027/2020) and Programmatic Funding (UIDP/00027/2020) of the Artificial Intelligence and Computer Science Laboratory (LIACC) funded by national funds through FCT/MCTES (PIDDAC). The NLLG group is supported by the BMBF grant “Metrics4NLG” and the DFG Heisenberg Grant EG 375/5–1. We would like to thank the anonymous reviewers for their valuable and thorough feedback that greatly helped improve the current version of the paper.

TADM dataset – templates

The templates are based on a set of configurable parameters, namely:

number of ADUs stance role claim position premise role supportive premise position prediction type

TADM dataset – DMs set

DMs are added based on the role of the ADU that they precede, using the following fixed set of DMs. If preceding the claim, then we add one the following: “I think that”, “in my opinion”, or “I believe that”. For the supportive premise, if in position

TADM dataset – instantiation procedure based on CoPAs

CoPAs are sets of propositions that are often used when debating a recurring theme (e.g., the premises mentioned in Section 3.1 and used in Fig. 2 are related to the theme “Clean energy”). For each CoPA, Bilu et al. [4] provide two propositions that people tend to agree as supporting different points of view for a given theme. We use these propositions as supportive and attacking premises towards a given claim. Each CoPA is also associated with a set of motions to which the corresponding theme is relevant. A motion is defined as a pair

Automatic evaluation metrics

The evaluation metrics employed to assess the quality of the predicted DMs are the following:

word embeddings text similarity (“word embs”): Cosine similarity using an average of word vectors. Based on pre-trained embeddings “en_core_web_lg” from Spacy library;15 retrofitted word embeddings text similarity (“retrofit embs”): Cosine similarity using an average of word vectors. Based on pre-trained embeddings from LEAR;16 sentence embeddings text similarity (“sbert embs”): we use the pre-trained sentence embeddings “all-mpnet-base-v2” from SBERT library [55], indicated as the model with the highest average performance on encoding sentences over 14 diverse tasks from different domains. To compare gold and predicted DMs representations, we use cosine similarity; argument marker sense (“arg marker”): list of 115 DMs from Stab and Gurevych [63]. Senses are divided in the categories: “forward”, “backward”, “thesis”, and “rebuttal”. Each gold and predicted DM is mapped to one of the senses based on a strict lexical match with the list of DMs available for each sense. If the DM is not matched, then we assign the label “none”. If the gold DM is “none”, we do not consider this instance in the evaluation (the DM is out of the scope for the list of DMs available in the senses list, so we cannot make a concrete comparison with the predicted DM); discourse relation sense (“disc rel”): we use a lexicon of 149 English DMs called “DiMLex-Eng” [12]. These DMs were extracted from PDTB 2.0 [51], RST-SC [13], and Relational Indicator List [5]. Each DM maps to a set of possible PDTB senses. For a given DM, we choose the sense that the DM occurs more frequently. PDTB senses are organized hierarchically in 3 levels (e.g., the DM “consequently” is mapped to the sense “Contingency.Cause.Result”). In this work, we consider only the first level of the senses (i.e., “Comparison”, “Contingency”, “Expansion”, and “Temporal”) as a simplification and to avoid the propagation of errors between levels (i.e., an error in level 1 entails an error in level 2, and so on). Each prediction is mapped to one of the senses based on a strict lexical match. If the word is not matched, then we assign the label “none”;

Fill-in-the-mask discourse marker prediction – BART V2 additional edits analysis

As detailed in Section 3.3, for the fill-in-the-mask discourse marker prediction task, we report the results of BART following a Seq2Seq setting (dubbed as

TADM dataset – distribution of samples

Table 7 shows the distribution of samples in the train, dev, and test set of TADM dataset according to the discourse-level senses “arg marker” and “disc rel” for the gold DM that should replace the corresponding “<mask>” token. We report the distribution of samples for each of the settings explored in fine-tuning experiments (i.e., “2 ADUs”, “3 ADUs”, “pred type 1”, and “pred type 2”, as detailed in Section 3.4). The total number of samples in TADM dataset (without performing any selection of samples) is reported under the “all” column.

Regarding the discourse-level senses “arg marker” and “disc rel”, we first observe that the distribution of samples is unbalanced. The selection of gold DMs is based on the DM’s role in the templates explored in the TADM dataset. Consequently, these distributions reflect the roles that the DMs play in the TADM dataset. Second, we note the presence of “none” in “disc rel”. All these cases correspond to a DM preceding a claim (i.e., “I think that”, “in my opinion”, or “I believe that”, as indicated in Appendix B), which are mapped to the senses “thesis” (“arg marker” sense) and “none” (“disc rel” sense). We recall that for evaluation purposes if the gold DM is mapped to “none”, we do not consider the corresponding instance in the evaluation (the DM is out of the scope for the list of DMs available in the senses list, so we cannot make a concrete comparison with the predicted DM).

Fill-in-the-mask discourse marker prediction – error analysis

Table 8 shows some samples and the corresponding gold DMs (accompanied by the discourse-level senses “arg marker” and “disc rel” in parenthesis) from the test set of the TADM dataset. In this table, we highlight some of the challenging samples that can be found in the TADM dataset. More concretely, consecutive pairs of samples in Table 8 contain samples extracted from the TADM dataset that belong to the same instantiation of the core elements but follow different templates, resulting in samples that share some content but also contain targeted edits that require the models to adjust the prediction of DMs accordingly. For instance, samples with id 1 and 2 differ in the parameter “premise role”, which changes the premise that is generated and requires the model to predict different DMs in these samples (despite sharing the same claim); samples 3 and 4 differ in two parameters, “stance role” that dictates the stance of the claim and “supportive premise position” that dictates the position of the supportive premise, requiring the model to predict the same DM in both samples; samples 5 and 6 differ in the parameter “stance role”, requiring the model to predict different DMs; and samples 7 and 8 also differ in a single parameter, the “stance role”, requiring the model to predict different DMs.

Predictions made by the zero-shot models and fine-tuned

Human evaluation – additional details

Table 10 shows some of the samples extracted from the TADM dataset that were analyzed in human evaluation experiments. For each sample, we provided to the annotators the “Masked sentence” received as input by the zero-shot models explored in the fill-in-the-mask discourse marker prediction task (Section 3) and the corresponding “Prediction” made by the models. As detailed in Section 3.6, each annotator rated the samples based on the criteria “Grammaticality” and “Coherence”. The ratings presented in Table 10 correspond to the majority voting obtained from the ratings provided by the three annotators.

Tables 11 and 12 show the confusion matrices between each pair of annotators (“A1”, “A2”, and “A3”) for the ratings provided in the “Grammaticality” and “Coherence’ criteria, respectively.

Regarding the “Grammaticality” criterion, most samples (approximately

Regarding “Coherence”,

End-to-end DM augmentation results – comparison with ChatGPT

Table 13 shows the results obtained for the small-scale end-to-end DM augmentation experiment with ChatGPT, including both the explicit DMs accuracy and coverage analysis.

Annotation projection

To map the label sequence from the original sequence to the modified sequence, we implement the Needleman-Wunsch algorithm [42], a well-known sequence alignment algorithm. As input, it receives the original and modified token sequences. The output is an alignment of the token sequences, token by token, where the goal is to optimize a global score. This algorithm might include a special token (the “gap” token) in the output sequences. Gap tokens are inserted to optimize the alignment of identical tokens in successive sequences. The global score attributed to a given alignment is based on a scoring system. We use default values: match score (tokens are identical) = 1, mismatch score (tokens are different but aligned to optimize alignment sequence) =

Table 14 illustrates some examples of the output obtained when employing the annotation projection procedure described in this section to the three ArgMining corpora explored in this work. “Original text” corresponds to the original text from which gold annotations for ADU identification and classification are provided in the ArgMining corpora. “Text augmented with DMs + Annotation Projection” corresponds to the text obtained after performing DM augmentation (using the pre-trained Seq2Seq model

Downstream task evaluation – error analysis

We show some examples of the gold data and predictions made by the downstream task models for the ArgMining corpora explored in this work.

For each example, we provide: (a) “Gold”: the gold data, including the ADU boundaries (square brackets) and the ADU labels (acronyms in subscript); and (b) “Input data: X (Y)”: where “X” indicates the version of the input data provided to the DM augmentation model, and “Y” indicates whether we perform DM augmentation or not (“none” indicates that we do not perform DM augmentation and

Tables 15 and 16 show two examples from PEC. Table 15 shows a paragraph containing a C and MC in the “Gold” data annotations. We observe that in the “Input data: original (none)” setup, the model predicts MC frequently in the presence of a DM that can be mapped to the “arg marker” sense “thesis” (e.g., “in conclusion”, “in my opinion”, “as far as I am concerned”, “I believe that”, etc.). Similar patterns can be observed in the “Gold” data annotations. We were not able to find similar associations in the “Input data: removed DMs (

Table 17 shows an example from MTX. Regarding the setups containing the original data (i.e., “Gold” annotations and the predictions made for “Input data: original (none)”), besides a single occurrence of “therefore” and “nevertheless”, all the remaining C do not contain a DM preceding them (this analysis is constrained to the test set). Some of the ADUs labeled as P are preceded with DMs (most common DMs are: “and” (6), “but” (10), “yet” (4), and “besides” (3)), even though most of them (44) are not preceded by a DM (numbers in parentheses correspond to the number of occurrences in the test set for the “Gold” annotations, similar numbers are obtained for “Input data: original (none)”). DM augmentation approaches performed well in terms of coverage, with most of the ADUs being preceded by DMs. We can observe in Table 17 that some ADU labels become more evident after the DM augmentation performed by the models proposed in this work (“Input data: removed DMs (

Finally, Table 18 shows an example from Hotel. Similar to the observations made for MTX, in the setups containing the original data (i.e., “Gold” annotations and the predictions made for “Input data: original (none)”), most ADUs are not preceded by DMs. The only exception is the DM “and” that occurs with some frequency preceding C (10 out of 199 ADUs labeled as C) and P (4 out of 41). For instance, in Table 18, 9 ADUs were annotated and none of them is preceded by a DM; making the annotation of ADUs (arguably) very challenging. Despite the lack of explicit clues, downstream models perform relatively well in this example, only missing the two gold IPs (not identified as an ADU in one of the cases and predicted as C in the other case) and erroneously labeling as C the only sentence in the gold data that is not annotated as an ADU. Also similar to MTX, DM augmentation approaches performed well in terms of coverage, with most ADUs being preceded by DMs. However, as observed in Table 18, the impact on the downstream model predictions is small (the predictions for all the setups are similar, the only exception is the extra split on “so was the bathroom” performed in “Input data: removed DMs (