Abstract

The Better Access to Psychiatrists, Psychologists and General Practitioners through the Medicare Benefits Schedule (Better Access) initiative was introduced in November 2006 in response to low treatment rates for common mental disorders. Its ultimate aim is to improve outcomes for people with such disorders by encouraging a multi-disciplinary approach to their care. Its key feature is a series of item numbers which were added to the Medicare Benefits Schedule (MBS) to provide a rebate for selected services provided by GPs, psychiatrists, psychologists, social workers and occupational therapists.

In 2009, the Commonwealth Department of Health and Ageing commissioned an evaluation of the Better Access initiative, and appointed a Project Steering Committee to provide advice to the evaluation. We were contracted to undertake several of the components of the evaluation, including a study profiling patients who had received Better Access services from clinical psychologists, registered psychologists and GPs, and examining the outcomes of their care. The current paper presents the findings from this study; further detail can be found in the full report [1].

Method

Recruitment of providers and patients

The Medical Benefits Division of the Department of Health and Ageing acted as an intermediary in the recruitment of psychologists and GPs, identifying the potential pool of providers through their use of the relevant Medicare item numbers. Random samples (stratified by urbanicity/rurality) of clinical psychologists (n = 509), registered psychologists (n = 640) and GPs (n = 1280) were selected from listings of those who billed for at least 100 occasions of service under the Better Access item numbers in 2008. The Medical Benefits Division provided us with contact details for these providers, and we sent them letters of invitation, plain language statements and consent forms. We subsequently conducted a second mail-out, in order to maximize our response rate. Providers who agreed to participate returned signed consent forms to the study team, and were enrolled in the project.
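The selection step described above can be sketched in a few lines. This is only an illustration under stated assumptions: the record keys (`id`, `stratum`, `services`), the per-stratum quotas and the example listing are all hypothetical, and the actual allocation across urban and rural strata is not reported here.

```python
import random
from collections import defaultdict

def stratified_sample(providers, quotas, min_services=100, seed=0):
    """Draw a random sample of eligible providers within each stratum.

    providers: list of dicts with hypothetical keys 'id', 'stratum', 'services'.
    quotas: dict mapping stratum name -> number of providers to draw (assumed).
    """
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    by_stratum = defaultdict(list)
    for p in providers:
        # Eligibility rule from the text: at least 100 occasions of service.
        if p["services"] >= min_services:
            by_stratum[p["stratum"]].append(p)
    sample = []
    for stratum, quota in quotas.items():
        pool = by_stratum.get(stratum, [])
        # Never request more providers than the stratum contains.
        sample.extend(rng.sample(pool, min(quota, len(pool))))
    return sample

# Hypothetical listing: 50 urban and 30 rural providers with made-up billing counts.
listing = (
    [{"id": f"U{i}", "stratum": "urban", "services": 60 + 5 * i} for i in range(50)]
    + [{"id": f"R{i}", "stratum": "rural", "services": 80 + 5 * i} for i in range(30)]
)
drawn = stratified_sample(listing, {"urban": 10, "rural": 10})
```

Setting equal quotas for strata of unequal size is one way to over-sample the smaller stratum.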

Participating providers acted as intermediaries and were asked to recruit their next 5–10 English-speaking patients when they first presented for services partially or fully funded through the MBS item numbers. On the assumption that some patients would decline, providers were asked to approach up to 20 consecutive ‘new’ patients. Patients who agreed to be part of the project were asked to sign a consent form and return it to the recruiting provider. Providers then forwarded these consent forms to our study team. This method ensured that only the names and contact details of patients who agreed to participate were made known to us.

Data collection

The data collection period ran from 1 October 2009 to 31 October 2010. Four main types of data were collected from patients and providers via a password protected, secure, web-based minimum dataset:

Provider-level data (demographic, professional): These data were collected from providers when they enrolled in the project and included demographic details and professional qualification(s).

Patient-level data (socio-demographic, clinical): The majority of these data were collected from patients when they began treatment (i.e. at their first session) and included demographic details, socio-economic indicators (e.g. postcode, health care card status) and clinical information (e.g. previous psychiatric service use). Additional clinical information (e.g. diagnosis) relied on providers’ judgements.

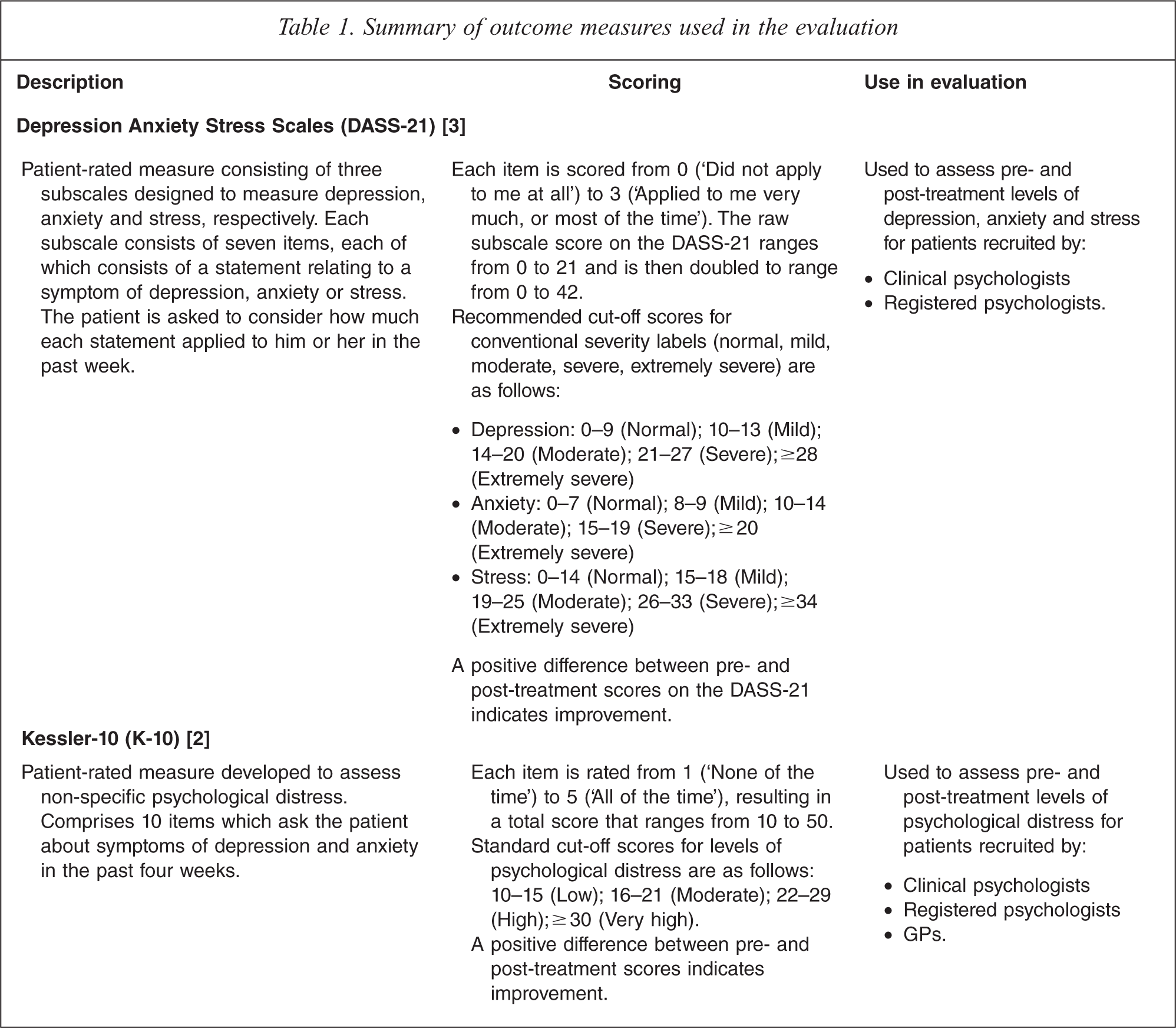

Patient-level data (outcomes): These data involved the use of two standardized outcome measures, namely the Kessler-10 (K-10) [2] (used with patients recruited by all providers) and the Depression Anxiety Stress Scales (DASS-21) [3] (used with patients recruited by clinical and registered psychologists). Table 1 summarizes the key characteristics of these outcome measures.

Session-level data: These data were collected at each session and included detail on the duration of the session, the assessment(s) and intervention(s) that were provided during its course, the item number billed, and whether the session attracted a co-payment.

Table 1. Summary of outcome measures used in the evaluation

Logistically, data entry into the minimum dataset worked in the following way. The minimum dataset contained linked provider, patient and session modules, each of which took the form of a screen that showed the relevant questions and provided check boxes which could be automatically ticked as appropriate. Once they were recruited into the study, consenting providers were asked to complete a form containing the provider-level data and return it to the study team. We entered this information into the minimum dataset on the providers’ behalf. Providers were then issued with a user name and password which gave them access to the minimum dataset via the Internet, so that they could enter data into it from their own computers.

Providers were then asked to begin recruiting their 5–10 new English-speaking patients. Once the patients were recruited, providers collected the relevant patient-level data for them at the required points in time. In most cases this involved asking patients to complete a paper-based version of the particular instrument. Providers then took the completed paper-based forms from patients, and entered the relevant data into the minimum dataset. For example, in the case of the patient-level outcome data, patients were given paper-based versions of the instruments and asked to complete them before they left. Once they returned these, providers entered the data into the web-based minimum dataset. Providers differed in how they chose to do this; some encouraged patients to complete the instruments during the session, whereas others asked them to complete them in the waiting room once the session was over.

The patient-level clinical data on diagnosis were not elicited from patients, but relied on judgements made by providers. Similarly, the session-level data did not require input from patients, and were generated by providers. These data were entered by providers into the minimum dataset in the same way as data elicited directly from patients.

Additional data on patients’ experiences were sought via interviews and surveys. Further detail about this component of the data collection is available elsewhere [1].

Data analysis

We used paired t-tests to examine the difference between mean pre- and post-treatment scores on the K-10 and the DASS-21, excluding patients who did not have a ‘matched pair’ of pre- and post-treatment scores. We then conducted linear regression analyses using scores on the K-10 as the outcome of interest, and a range of socio-demographic, clinical and treatment variables as covariates. We selected the K-10 for this analysis because it was available for patients from all provider groups, whereas the DASS-21 was only available for patients who had been recruited by clinical and registered psychologists. Because outcomes for patients recruited by the same provider were likely to be correlated, variance was calculated using cluster-robust standard errors. Pre-treatment scores were included as a covariate. The effect of categorical predictors was assessed using the joint Wald test.
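To illustrate why clustering matters, the following is a minimal sketch of an ordinary least squares fit with cluster-robust (sandwich) standard errors, written with the standard library only. It is deliberately simplified and is not the analysis code used in the evaluation: it includes a single covariate (the pre-treatment score) rather than the full covariate set, it assumes the outcome is improvement (pre-treatment minus post-treatment score), it applies no finite-sample adjustment (statistical packages commonly do), and the data and names are hypothetical.

```python
from collections import defaultdict

def ols_cluster_robust(x, y, clusters):
    """Fit y = a + b*x by OLS; return (a, b, se_a, se_b) with cluster-robust SEs.

    Uses the basic sandwich estimator with no small-sample correction.
    """
    n = len(x)
    # X'X for the design matrix with columns [1, x].
    sx = sum(x)
    sxx = sum(v * v for v in x)
    det = n * sxx - sx * sx
    # Inverse of the 2x2 matrix [[n, sx], [sx, sxx]].
    inv = [[sxx / det, -sx / det], [-sx / det, n / det]]
    sy = sum(y)
    sxy = sum(xi * yi for xi, yi in zip(x, y))
    a = inv[0][0] * sy + inv[0][1] * sxy
    b = inv[1][0] * sy + inv[1][1] * sxy
    # Sum the score vectors (x_i * residual_i) within each cluster (provider).
    scores = defaultdict(lambda: [0.0, 0.0])
    for xi, yi, g in zip(x, y, clusters):
        e = yi - (a + b * xi)
        scores[g][0] += e
        scores[g][1] += e * xi
    # "Meat" of the sandwich: sum over clusters of the outer product of scores.
    meat = [[0.0, 0.0], [0.0, 0.0]]
    for s in scores.values():
        for i in range(2):
            for j in range(2):
                meat[i][j] += s[i] * s[j]
    # Variance matrix = inv * meat * inv.
    tmp = [[sum(inv[i][k] * meat[k][j] for k in range(2)) for j in range(2)]
           for i in range(2)]
    var = [[sum(tmp[i][k] * inv[k][j] for k in range(2)) for j in range(2)]
           for i in range(2)]
    # Guard against tiny negative values caused by floating-point rounding.
    return a, b, max(var[0][0], 0.0) ** 0.5, max(var[1][1], 0.0) ** 0.5

# Hypothetical data: six patients recruited by three providers.
pre = [20, 25, 30, 35, 40, 28]
improvement = [4, 7, 9, 13, 14, 8]
provider = ["A", "A", "B", "B", "C", "C"]
intercept, slope, se_intercept, se_slope = ols_cluster_robust(pre, improvement, provider)
```

Residuals are summed within each provider before they are squared, so correlated errors among a provider's patients feed into the standard errors instead of being treated as independent.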

Presentation and interpretation of findings

In general, the findings are presented separately for patients recruited by clinical psychologists, registered psychologists and GPs. The study design meant that it was not appropriate to aggregate data across these three groups, or to explore whether statistically significant differences existed between the groups.

Results

Socio-demographic and professional profiles of participating providers

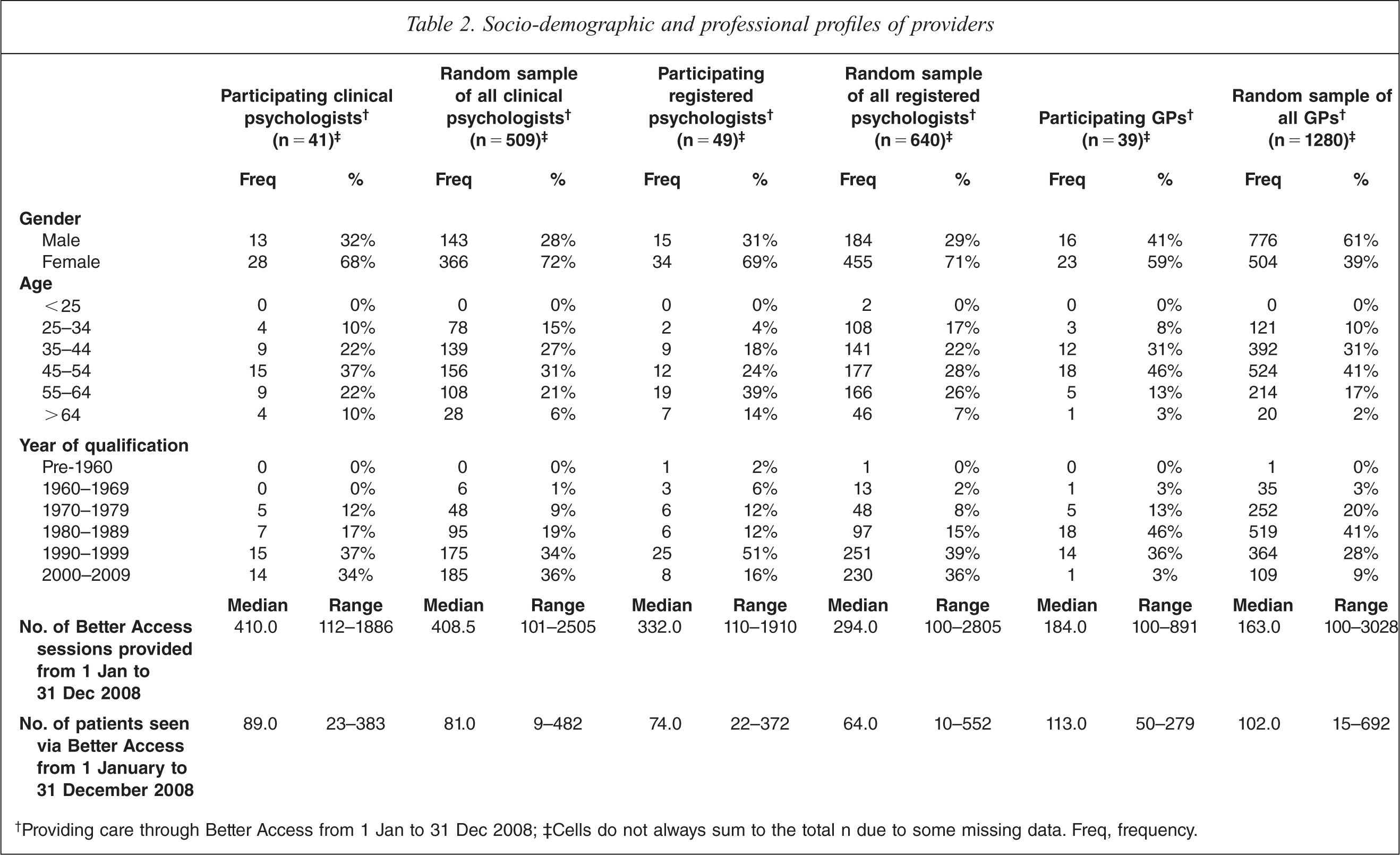

In total, 129 providers participated: 41 clinical psychologists, 49 registered psychologists and 39 GPs (response rates of 8%, 8% and 3%, respectively). Table 2 compares participating providers and the overall samples of providers from which they were recruited in terms of their demographic and professional details, and their delivery of care through Better Access.
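The response rates follow directly from the sample sizes given in the Method section. As a quick check, the short sketch below recomputes them; the function and variable names are illustrative only.

```python
# Counts reported in the text: providers invited (random samples) and enrolled.
invited = {"clinical psychologists": 509, "registered psychologists": 640, "GPs": 1280}
enrolled = {"clinical psychologists": 41, "registered psychologists": 49, "GPs": 39}

def response_rates(invited, enrolled):
    """Return the response rate (%) per provider group, rounded to a whole percent."""
    return {group: round(100 * enrolled[group] / invited[group]) for group in invited}

rates = response_rates(invited, enrolled)
total_enrolled = sum(enrolled.values())
```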

Table 2. Socio-demographic and professional profiles of providers

†Providing care through Better Access from 1 Jan to 31 Dec 2008; ‡Cells do not always sum to the total n due to some missing data. Freq, frequency.

The participating clinical psychologists and registered psychologists were similar to the groups from which they were drawn in terms of gender, with two thirds being female. Nearly two thirds of the participating GPs were also female, but only about one third of the random sample of GPs were. Around 80% of all participating clinical psychologists, registered psychologists and GPs were accounted for by those aged between 35 and 64; the same was true for the random samples from which these groups came. The majority (around 80%) of both groups of participating psychologists had qualified after 1990, and a similar proportion of participating GPs had done so after 1980. These figures corresponded with the overall random samples.

Participating clinical psychologists shared a similar activity profile with the broader group from which they came; on average, they had provided a similar number of Better Access sessions and seen a similar number of patients in 2008. Participating registered psychologists and participating GPs had typically provided a slightly higher number of sessions and seen a slightly higher number of patients than the groups from which they came.

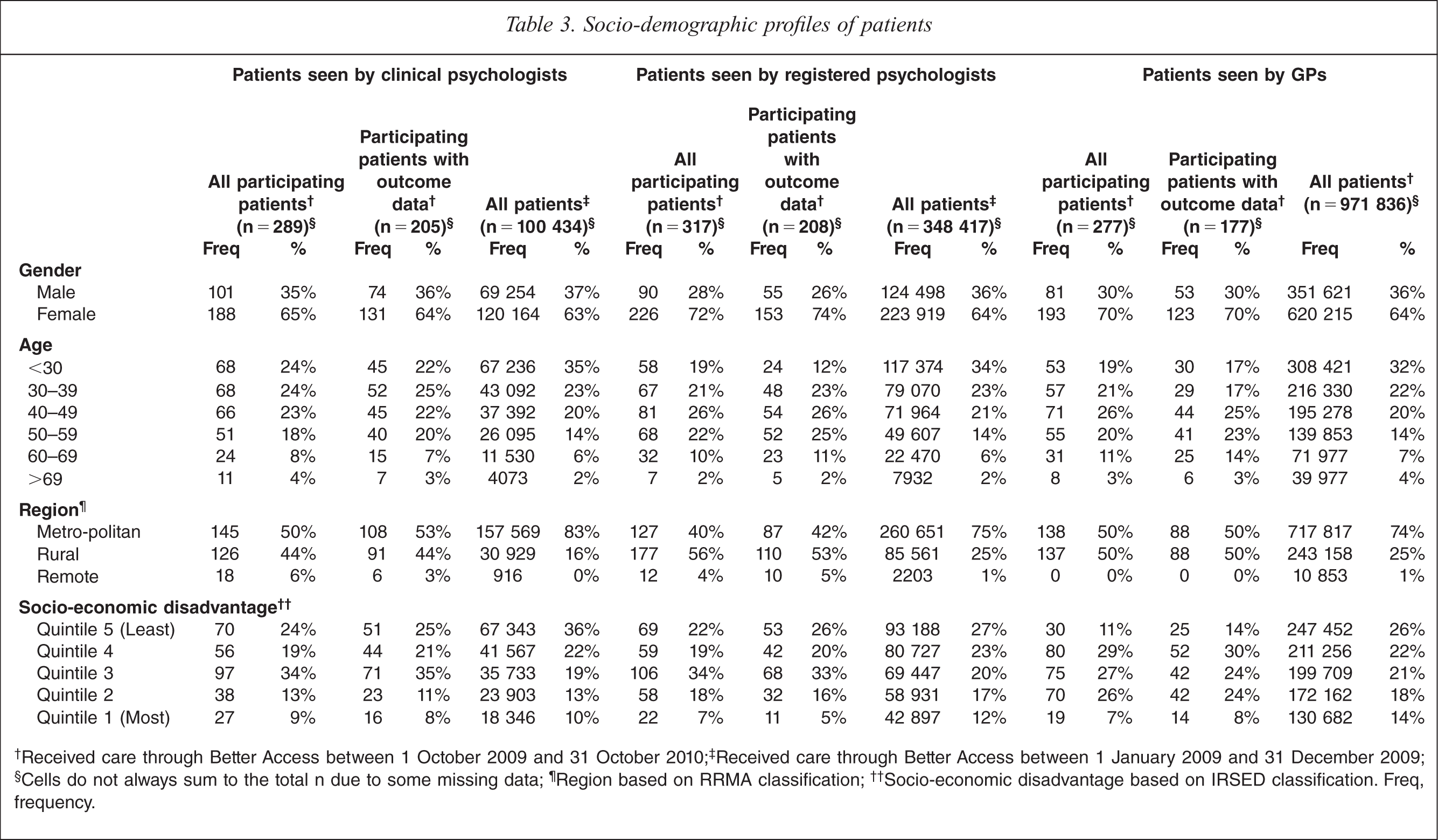

Socio-demographic profiles of participating patients

A total of 883 patients were recruited into the study (289 by clinical psychologists, 317 by registered psychologists and 277 by GPs). Pre- and post-treatment pairs of outcome data were available for 590 patients (205 recruited by clinical psychologists, 208 recruited by registered psychologists, and 177 recruited by GPs).

Table 3 provides a breakdown of the key socio-demographic characteristics of all participating patients and patients for whom outcome data were available, and compares them with the overall group of Better Access patients seen by the relevant groups of providers from 1 January 2009 to 31 December 2009. It should be noted that this time frame differs from the period in which participating patients received care from Better Access (1 October 2009 to 31 October 2010), but was chosen as the closest full one year period for which Medicare data were readily available.

Table 3. Socio-demographic profiles of patients

†Received care through Better Access between 1 October 2009 and 31 October 2010; ‡Received care through Better Access between 1 January 2009 and 31 December 2009; §Cells do not always sum to the total n due to some missing data; ¶Region based on RRMA classification; ††Socio-economic disadvantage based on IRSED classification. Freq, frequency.

Participating patients who were recruited by clinical psychologists, registered psychologists and GPs, and the subsamples for whom outcome data were available, were broadly similar to all patients who received Better Access care from these providers in terms of their age and gender. In each case, about two thirds were female, and three quarters were accounted for by the youngest three age groupings.

Patients from rural and remote areas were somewhat over-represented among our participating patients (and the subsamples for whom outcome data were available) according to the Rural, Remote and Metropolitan Areas (RRMA) system; more than half of our patients fell into these groups, whereas around one quarter of all patients did so. Patients from relatively more socio-economically disadvantaged areas were also somewhat over-represented in our samples and subsamples; three fifths or more resided in areas deemed to be in the bottom three Index of Relative Socio-Economic Disadvantage (IRSED) quintiles, whereas less than half of the total patient populations did so. The over-representation of rural patients can be explained by our sampling strategy, which deliberately over-sampled rural providers (and, consequently, rural patients). This is likely to have also had some bearing on our over-representation of patients from socio-economically disadvantaged areas, although it would not completely explain it.

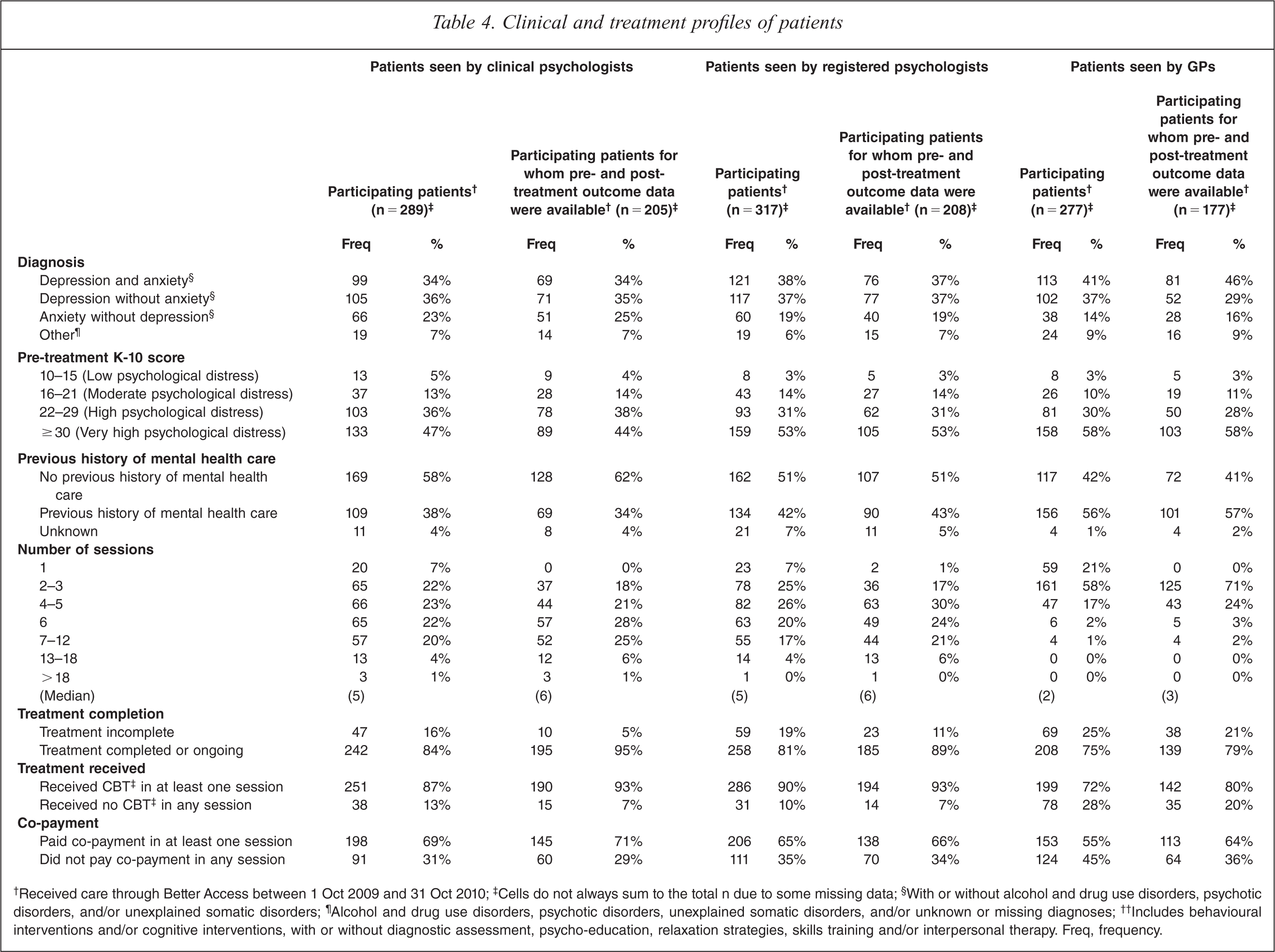

Clinical and treatment characteristics of participating patients

Until now, it has not been possible to accurately profile patients who use Better Access services in terms of their clinical characteristics and the nature of treatment they receive. Basic socio-demographic details (namely those described above) are routinely collected by Medicare Australia, as are details of the number of sessions of care provided. However, it is beyond the capacity of Medicare Australia's systems to collect patient level data on variables such as diagnosis, severity of symptoms, and specific treatment received. Our minimum dataset was purpose designed to collect key information about the clinical and treatment characteristics of Better Access patients (see Table 4).

Table 4. Clinical and treatment profiles of patients

†Received care through Better Access between 1 Oct 2009 and 31 Oct 2010; ‡Cells do not always sum to the total n due to some missing data; §With or without alcohol and drug use disorders, psychotic disorders, and/or unexplained somatic disorders; ¶Alcohol and drug use disorders, psychotic disorders, unexplained somatic disorders, and/or unknown or missing diagnoses; ††Includes behavioural interventions and/or cognitive interventions, with or without diagnostic assessment, psycho-education, relaxation strategies, skills training and/or interpersonal therapy. Freq, frequency.

Diagnosis was classified hierarchically (see Note 1), with greatest emphasis given to depression and anxiety on the grounds that these are the two disorders that are primarily targeted by Better Access. Around three quarters of the patients recruited by each type of provider had depression with or without anxiety (and with or without other diagnoses), and about another fifth had anxiety without depression (with or without other diagnoses). The subgroups for whom pre- and post-treatment outcome data were available shared these diagnostic profiles.

Four fifths of participating patients recruited by each type of provider were experiencing high or very high levels of psychological distress (as assessed by the K-10) when they presented for care. The subgroups for whom pre- and post-treatment outcome data were available also demonstrated this pattern.

Only two fifths of participating patients who were recruited by clinical and registered psychologists had previously received mental health care; slightly more (three fifths) of those who were recruited by GPs had done so. These patterns held for the respective subgroups of patients for whom outcome data were available.

Participating patients who were recruited by clinical psychologists and registered psychologists received a median of five sessions of care from their psychologist; those who were recruited by GPs received a median of two sessions from their GP. Around one fifth of participating patients recruited by each provider group had not completed treatment (with the remainder either having done so or still receiving care). The subsamples of patients for whom outcome data were available had slightly higher median numbers of sessions (six for those recruited by the two groups of psychologists and three for those recruited by GPs), and were more likely to have completed treatment. This was a function of the time frame of the study. Inevitably, some patients were recruited who did not complete their recommended number of sessions of care. Although we requested that post-treatment outcome data be collected for all patients at the completion of treatment or the end of the study period, whichever came first, providers were more likely to record post-treatment outcome data for those who had completed treatment. This introduced a bias whereby those for whom outcome data were collected were more likely to have had close to the recommended number of sessions.

The vast majority of participating patients received cognitive behavioural therapy (CBT) in at least one session, irrespective of the type of provider who recruited them. This was also true for the subgroups of patients for whom outcome data were available.

Around two thirds of participating patients paid a co-payment in at least one session. Again, this pattern held for the subgroups of patients for whom outcome data were available.

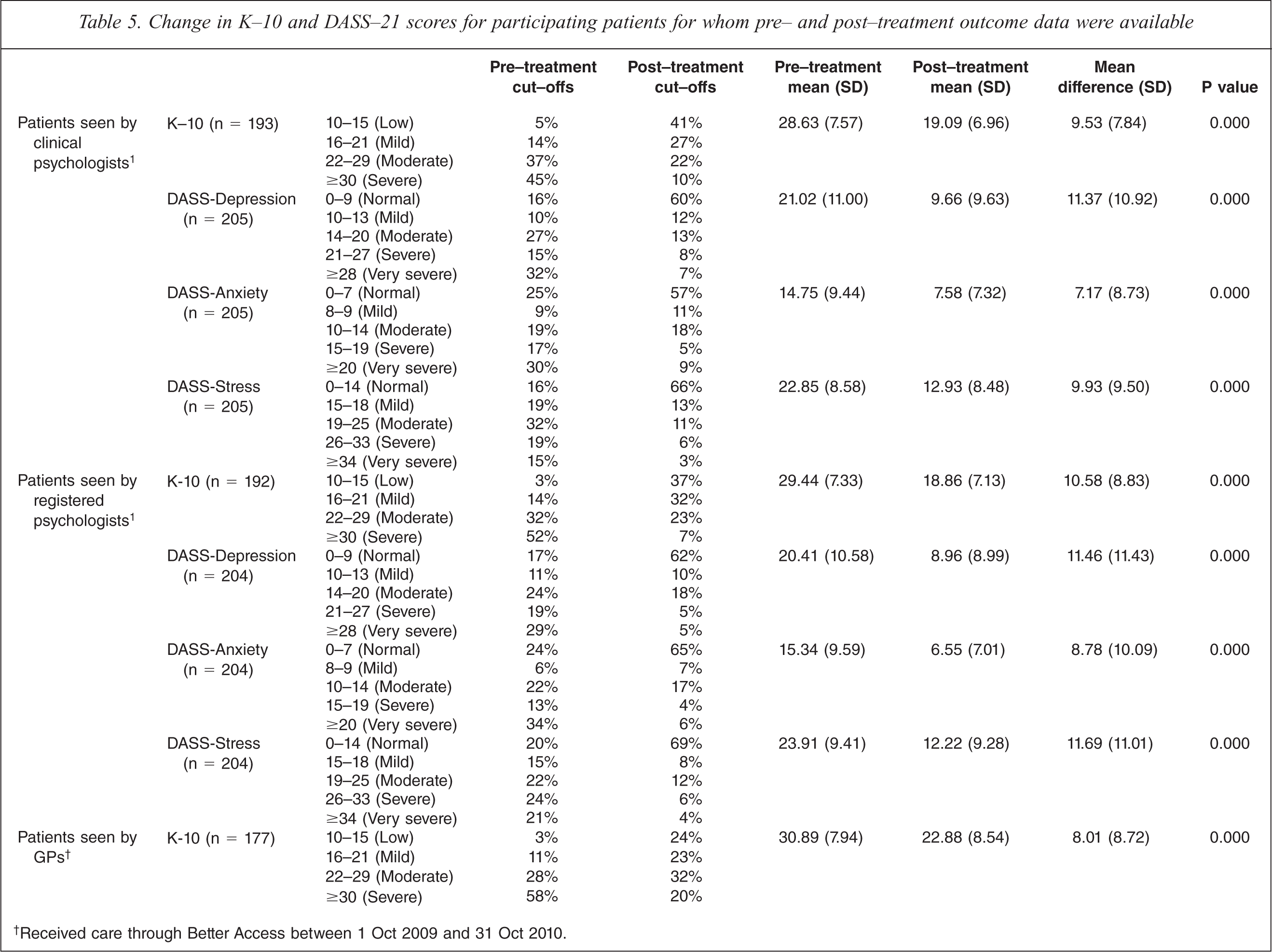

Changes in outcome measures from pre- to post-treatment

Table 5 presents outcome data for participating patients. We used paired t-tests to examine the difference between mean pre- and post-treatment scores on the range of outcome measures, excluding patients who did not have a ‘matched pair’ of pre- and post-treatment scores.
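For readers unfamiliar with the procedure, the sketch below shows a paired t statistic computed only on patients with a matched pair of scores. It is an illustration with hypothetical scores and names, not the analysis code used in the evaluation, and it stops at the t statistic and degrees of freedom without deriving a p-value.

```python
import math
from statistics import mean, stdev

def paired_t(pre, post):
    """Paired t statistic on matched pre/post scores.

    pre, post: dicts mapping a patient id to a score; patients missing
    either score are excluded, mirroring the 'matched pair' rule.
    Returns (mean_difference, t_statistic, degrees_of_freedom).
    """
    matched = sorted(set(pre) & set(post))
    diffs = [pre[p] - post[p] for p in matched]  # positive = score fell
    n = len(diffs)
    d_bar = mean(diffs)
    se = stdev(diffs) / math.sqrt(n)  # standard error of the mean difference
    return d_bar, d_bar / se, n - 1

# Hypothetical K-10 scores for five patients; "p5" has no post-treatment score.
pre_scores = {"p1": 30, "p2": 28, "p3": 35, "p4": 25, "p5": 32}
post_scores = {"p1": 22, "p2": 20, "p3": 25, "p4": 21}
mean_diff, t_stat, df = paired_t(pre_scores, post_scores)
```

Because patient "p5" lacks a post-treatment score, only four pairs enter the calculation.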

Table 5. Change in K-10 and DASS-21 scores for participating patients for whom pre- and post-treatment outcome data were available

†Received care through Better Access between 1 Oct 2009 and 31 Oct 2010.

Patients who were recruited by all three types of provider shifted from having high or very high levels of psychological distress to having much more moderate levels of psychological distress (as assessed by the K-10). Patients who were recruited by clinical psychologists and registered psychologists shifted from having moderate or severe levels of depression, anxiety and stress to having normal or mild levels of these conditions (as assessed by the DASS-21).

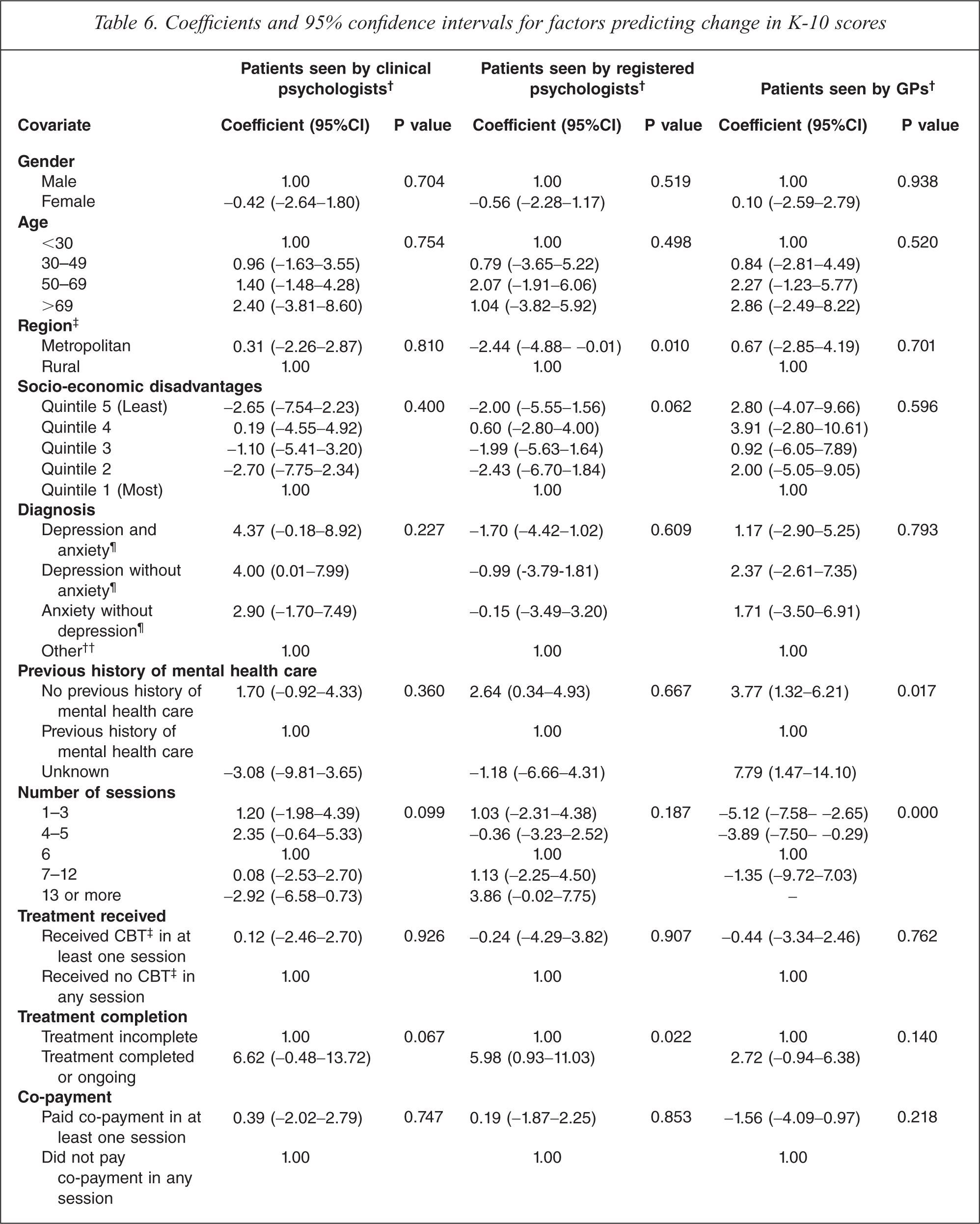

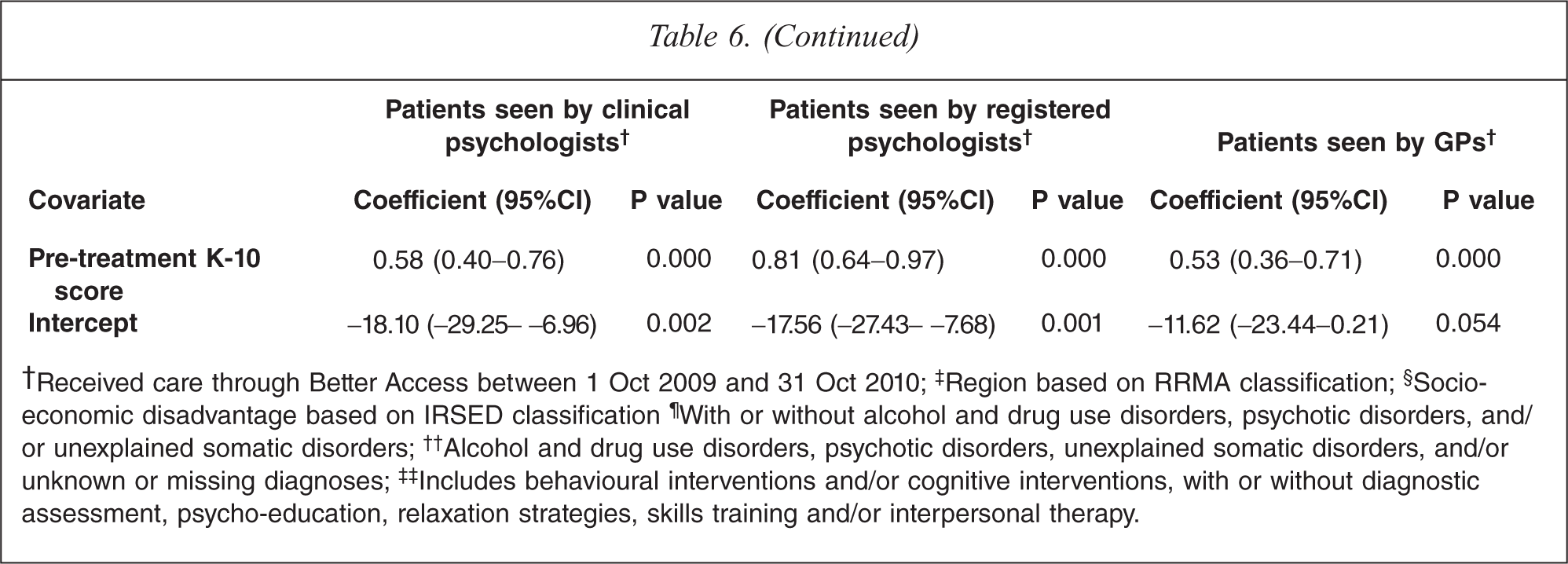

Predictors of improvement on outcome measures

We conducted three linear regression analyses (one for patients recruited by each of the three provider groups) using scores on the K-10 as the outcome of interest. The full range of socio-demographic, clinical and treatment variables described above were used as covariates; pre-treatment scores were also included as a covariate. Table 6 shows the results.

Table 6. Coefficients and 95% confidence intervals for factors predicting change in K-10 scores

†Received care through Better Access between 1 Oct 2009 and 31 Oct 2010; ‡Region based on RRMA classification; §Socio-economic disadvantage based on IRSED classification; ¶With or without alcohol and drug use disorders, psychotic disorders, and/or unexplained somatic disorders; ††Alcohol and drug use disorders, psychotic disorders, unexplained somatic disorders, and/or unknown or missing diagnoses; ‡‡Includes behavioural interventions and/or cognitive interventions, with or without diagnostic assessment, psycho-education, relaxation strategies, skills training and/or interpersonal therapy.

Those with comparatively higher pretreatment K-10 scores (i.e. worse baseline manifestations of psychological distress) demonstrated greater levels of improvement than those with lower pretreatment scores. For patients recruited by clinical psychologists, improvement rose by 0.58 points for each additional one-point increase in the pretreatment score. For patients recruited by registered psychologists and GPs, the equivalent figures were 0.81 and 0.53, respectively.
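These coefficients can be read as differences in expected improvement between two otherwise similar patients. The short sketch below works through that arithmetic; the 10-point baseline gap is a hypothetical example, and the calculation assumes all other covariates are held equal.

```python
# Slopes reported in the text: extra points of K-10 improvement per
# additional point of pre-treatment K-10 score, by recruiting provider group.
SLOPES = {"clinical psychologists": 0.58, "registered psychologists": 0.81, "GPs": 0.53}

def extra_improvement(group, baseline_gap):
    """Expected difference in improvement between two otherwise similar patients
    whose pre-treatment K-10 scores differ by `baseline_gap` points."""
    return SLOPES[group] * baseline_gap

# Hypothetical comparison: two patients whose baseline scores differ by 10 points.
gaps = {group: extra_improvement(group, 10) for group in SLOPES}
```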

For patients recruited by clinical psychologists, no other factors were predictive of levels of gain in K-10 scores.

For patients recruited by registered psychologists, two other variables were significant predictors of outcome. The first of these was treatment completion; those who had completed treatment or were still in treatment experienced improvements 5.98 points higher than those for whom treatment was incomplete (e.g. those who had dropped out of treatment). The second significant variable was region. Those who were in metropolitan areas showed less improvement than their rural counterparts, on average gaining 2.44 points less.

For patients who were recruited by GPs, two additional variables were associated with positive outcomes. The first was the number of sessions of care received. Six sessions were optimal; smaller improvements were achieved when the patient had fewer sessions, and equivalent improvements were achieved when they had more sessions. The second important factor for patients who were recruited by GPs was whether they had previously received mental health care. Those who had not received previous mental health care showed levels of improvement that were 3.77 points higher than those who had done so.

Discussion

Making a contribution to the policy debate

Better Access represents a major programmatic change to the MBS; it marks the first time that primary mental health care services have attracted substantial reimbursement through the MBS. Various observers have expressed concerns about the initiative, often drawing on anecdotal evidence. Our study provides the first clinical, treatment and outcome profiles of Better Access patients that are based on systematically collected data. This puts us in a position to address some of the criticisms that have been levelled at Better Access since its inception.

Better Access is reaching patients with high levels of clinical need

The first of these is the criticism that Better Access is typically providing care to the group that have been disparagingly called ‘the worried well’ [4]. Our data suggest that this is not the case. The vast majority (over 90%) of our participating patients had diagnoses of depression and/or anxiety (with or without comorbid conditions), and many (over 80%) had high or very high levels of psychological distress (as assessed by the K-10). Just over one tenth of the general population meet diagnostic criteria for depression and/or anxiety in any given year [6], and less than one tenth experience these extreme levels of psychological distress [7].

Better Access is reaching patients who have not received care in the past

The second, related criticism is that many of the recipients of care under the scheme were already in receipt of psychological care [5,8]. Our data refute this suggestion – around half of our patients had no previous history of mental health care.

Better Access is achieving positive outcomes for patients

The third criticism is that Better Access may not be achieving optimal outcomes for patients [4]. Again, our data suggest that this is not the case. Participating patients who received care from clinical psychologists, registered psychologists and GPs under Better Access shifted from having high or very high levels of psychological distress to having much more moderate levels of psychological distress (as assessed by the K-10). Patients who received care from clinical psychologists and registered psychologists also showed shifts from moderate or severe levels of depression, anxiety and stress to having normal or mild levels of these conditions (as assessed by the DASS-21). These outcomes are of a similar level of magnitude to those experienced by patients who receive care from psychologists through the Access to Allied Psychological Services component of the Better Outcomes in Mental Health Care programme [9], and to those experienced by patients who receive care through the virtual clinic operated by the Clinical Research Unit for Anxiety and Depression (CRUfAD) [Andrews G: personal communication]. They also correspond with the sorts of effects seen by major primary mental health care programmes overseas, such as the Improving Access to Psychological Therapies (IAPT) initiative in the UK [10].

Clinical factors have a greater impact on outcomes than socio-demographic factors

Finally, there is the criticism that there are inequities in the way Better Access is delivered [5,8,11]. Our study did not address the question of whether access to these services is inequitable, but it did consider the related question of whether Better Access achieves poorer outcomes for people from traditionally disadvantaged groups. Our data showed that, in the main, socio-demographic factors did not appear to have a major influence on outcomes; equivalent outcomes were achieved irrespective of whether the patient was male or female, young or old, wealthy or struggling financially. Instead, clinical and treatment variables were generally the ones that made a difference.

For patients recruited by all three types of providers, those with the worst baseline manifestations of psychological distress (i.e. higher pretreatment K-10 scores) made the greatest gains. Prytys et al. [12] reported a similar finding, observing that those with clinical depression benefited more from CBT workshops than those with subthreshold symptoms. By contrast, Hamilton and Dobson [13] found that people with more severe symptoms of depression were less responsive to CBT than those with milder symptoms. Our findings might be explained by the fact that those with higher original scores have greater scope for improvement before hitting a ‘floor’ score and/or, arguably, may have more ‘invested’ in treatment because they have more to gain from it.

For patients recruited by registered psychologists, completion of treatment and residential location were also significant predictors of outcome. Those who had completed treatment or were still in treatment experienced greater gains than those for whom treatment was incomplete, and those in metropolitan areas showed lesser improvement than their rural counterparts. The first finding might be explained by the fact that those who dropped out of treatment prematurely were likely to have done so because they felt it was not doing them any good or because they did not have a sufficiently good rapport with the therapist. The second finding is more difficult to interpret and requires further exploration, but may relate to the way registered psychologists in rural practice operate. They may be an important part of the community, and know its members well. This may make them particularly well placed to understand the context within which an individual is experiencing mental health problems, and assist them in shaping the treatment they offer. They are also often linked in with other local providers, which may lead to particularly productive collaborative care arrangements.

For patients recruited by GPs, those who had six sessions of care experienced optimal outcomes, and those who had no previous history of mental health care showed greater levels of improvement than those who had received mental health care in the past. The first finding is difficult to interpret because these patients may have seen the GP in isolation, or may have been referred to a psychologist or another allied health professional for additional sessions of psychological care. Therefore, the total number of sessions with the GP may not be representative of the total number of sessions of care they received. The second finding has a number of potential explanations. One might be that a considerable proportion of those who are new to the system may have had difficulties accessing services in the past, and these people may be particularly likely to be compliant with treatment now that they have been given the opportunity to access care. Another might be that these people have less chronic conditions, and may therefore have less entrenched symptoms. A third and related interpretation might be that intervention is occurring earlier for these people.

Study limitations

We believe that our evaluation was as methodologically sound as it could have been, given the circumstances under which it was conducted. It was certainly more rigorous than many evaluations of other health programmes, including those funded through Medicare, which often rely on uptake data and measures of patient satisfaction. Having said this, our evaluation had several limitations which must be acknowledged.

Our response rates for participating clinical psychologists, registered psychologists and GPs were 8%, 8% and 3%, respectively. These sorts of response rates are common for studies of this kind [14]. Higher response rates would obviously have been desirable, but the samples were broadly representative of the groups from which they were selected, which engenders confidence in the generalizability of the findings.

We could not calculate precise response rates for patients because we were not privy to how many patients each provider approached. We also had no way of ascertaining whether providers did approach up to 20 consecutive new patients, or whether they were more selective. However, what we can say is that, ultimately, our samples of participating patients were fairly representative of the groups from which they were drawn, although they tended to be less likely to live in capital cities and more likely to live in areas of socio-economic disadvantage than the overall pool of Better Access patients. They may therefore previously have had more limited access to mental health care.

Our evaluation relied heavily on outcome data collected from patients via standardized measures and entered into the minimum dataset by providers. We acknowledge that this created some potential for data distortion. Patients may have felt compelled to give socially desirable responses on the K-10 and DASS-21 because they knew that providers expected them to improve. Providers, too, may have been motivated to demonstrate the benefits of their treatment practices, although because the K-10 and DASS-21 are patient self-report measures, they would have had to actively enter different responses from those provided by patients into the minimum dataset, which we think is fairly unlikely. The only way to have countered these potential biases would have been for us to recruit, follow and assess patients ourselves, and this was not feasible from a practical or an ethical perspective. Our data collection was similar to other major routine data collection exercises (e.g. the collection of routine outcome data in public sector mental health services across Australia) [15]. We also had the opportunity to ‘triangulate’ our findings from the outcome data with our findings from the patient interviews/surveys reported elsewhere [1] and with the findings from two other smaller studies that considered outcomes for patients seen by psychologists and occupational therapists [Hitch D: personal communication,15,16]. All of these data sources pointed in a similar direction, giving us confidence in the data from our outcome measures.

Our evaluation also required providers to make some clinical judgements about patients, in particular with respect to diagnosis. Ideally, diagnosis would have been ascertained with the use of a structured instrument, but this would have placed an additional data collection burden on both providers and patients. We assumed that providers would be making diagnostic decisions based on their own routine assessment processes, and felt that it was reasonable that these diagnoses were the ones that were reflected in our minimum dataset, although we acknowledge that this may have introduced variability. This is consistent with the approach adopted in routine mental health data collections in the primary care sector and in the specialist public mental health sector.

The real-world nature of the evaluation precluded our drawing a control group. This, in turn, rendered it impossible for us to definitively determine whether treatment was responsible for the improvements in patient outcomes. Epidemiological studies that follow participants longitudinally will often show that those with high levels of symptomatology at baseline improve over time, partly because of regression to the mean effects and partly because of the natural history of disorders like depression and anxiety. This evaluation followed a group of help-seeking individuals from the beginning of their treatment, so it is arguably not surprising that they showed significant improvements. Without a control group it is not possible to say definitively that Better Access contributed to these improvements, but two further pieces of evidence add weight to the argument that this is a likely explanation. The first is the fact that the patients predominantly received CBT, which has been shown in randomized controlled trials to be beneficial. In a sense, the current evaluation could be regarded as an effectiveness study which has taken the findings from these efficacy studies to see whether they remain consistent in a less controlled context. The second is the fact that our interviews/surveys asked patients about the extent to which they would attribute any changes in their mental health status to the care they received from the particular provider. The vast majority indicated that the improvements they had experienced were partially or wholly due to the given provider's involvement [1].

Our examination of outcomes was restricted to those assessed by the K-10 and DASS-21, both of which relate to symptoms. Desirably, we would have also included measures of functioning, disability and/or quality of life, but we elected not to do so in an effort to minimize the data collection burden for providers and patients. Without such a measure, it was not possible to determine whether patients experienced improvements in these areas.

Conclusions

The findings suggest that Better Access is playing an important part in meeting the community's previously unmet need for mental health care. The initiative has enabled patients with clinically diagnosable disorders and considerable psychological distress to access care; many of these patients have not received mental health care in the past. These patients’ mental health status improves markedly during the course of their care; their symptoms reduce and their psychological distress diminishes. These achievements should not be under-estimated. Good mental health is important to the capacity of individuals to lead a fulfilling life (e.g. by studying, working, pursuing leisure interests, making housing choices, having meaningful relationships with family and friends, and participating in social and community activities). Other programmes (e.g. the Access to Allied Psychological Services component of the Better Outcomes in Mental Health Care programme and the Mental Health Services in Rural and Remote Areas programme) may also be necessary to complement Better Access because of their focus on hard-to-reach populations [18,19], but this major mental health reform seems to be achieving positive outcomes. For this reason, we were encouraged to see that the integrity of the programme was largely retained in the recent Federal Budget.

Footnotes

Note

1. The hierarchy worked in the following way. Patients with depression and anxiety were classified as having both disorders, irrespective of whether they had additional diagnoses (i.e. alcohol and drug use disorders, psychotic disorders, unexplained somatic disorders, and/or other disorders). Patients with depression but not anxiety were classified as having depression, irrespective of whether they had any of the previously mentioned additional diagnoses. Patients with anxiety but not depression were classified as having anxiety, irrespective of whether they had any of the additional diagnoses. Patients without depression or anxiety were classified as having other disorders, as were those with unknown or missing diagnoses.
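The hierarchy in Note 1 amounts to a short decision rule, sketched below. The lower-case diagnosis labels and the function name are hypothetical; the minimum dataset's actual coding is not described here.

```python
def classify_diagnosis(diagnoses):
    """Map a set of recorded diagnoses to one hierarchical category.

    diagnoses: iterable of lower-case labels (hypothetical coding), e.g.
    {"depression", "alcohol and drug use"}; empty or None means unknown/missing.
    """
    recorded = set(diagnoses or ())
    has_depression = "depression" in recorded
    has_anxiety = "anxiety" in recorded
    # Depression and anxiety take precedence over any additional diagnoses.
    if has_depression and has_anxiety:
        return "depression and anxiety"
    if has_depression:
        return "depression"
    if has_anxiety:
        return "anxiety"
    # Everything else, including unknown or missing diagnoses.
    return "other"
```

For example, a patient recorded with depression and a psychotic disorder is classified as "depression", while a patient with no recorded diagnosis falls into "other".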