Abstract

Past research has demonstrated that treatment effects frequently vary across sites (e.g., schools) and that such variation can be explained by site-level or individual-level variables (e.g., school size or gender). The purpose of this study is to develop a statistical framework and tools for the effective and efficient design of multisite randomized trials (MRTs) probing moderated treatment effects. The framework considers three core facets of such designs: (a) Level 1 and Level 2 moderators, (b) random and nonrandomly varying slopes (coefficients) of the treatment variable and its interaction terms with the moderators, and (c) binary and continuous moderators. We validate the formulas for calculating statistical power and the minimum detectable effect size difference with simulations, probe their sensitivity to model assumptions, implement the formulas in accessible software, demonstrate an application, and provide suggestions for designing MRTs probing moderated treatment effects.

Recent efforts by a broad range of societies and funding agencies have emphasized rigorous study design as an important lever for improving the quality of evidence produced by impact evaluations (e.g., U.S. Department of Education & National Science Foundation, 2013). According to the Common Guidelines (2013), a joint report released by the National Science Foundation and the Institute of Education Sciences, designs which randomly assign units to conditions are the most rigorous designs and have the potential to yield the highest quality of evidence. Random assignment may occur at the individual level, as is the case of a multisite randomized trial (MRT) in which individuals (students) are randomly assigned to condition within sites (schools). Random assignment may also occur at the cluster level, as is the case of a cluster randomized trial (CRT), in which clusters (schools) are randomly assigned to condition and students are nested within schools. Although both MRTs and CRTs are common in impact studies in education (Spybrook & Raudenbush, 2009; Spybrook, Shi, & Kelcey, 2016), the focus of this article is the design of MRTs.

Initially, the focus of MRTs in education was to address “what works” questions or questions about main effects. More recently, researchers and policymakers have broadened the focus to include questions regarding “for whom, and under what circumstances” programs work, or questions about moderated treatment effects. The impetus for broadening the scope of questions stems in part from empirical research that suggests treatment effects frequently vary across site or individual characteristics (Weiss et al., 2017). Understanding the context in which an intervention is likely to be effective is fundamental to understanding the extent to which results are applicable and scalable to a wide range of schools and students and also facilitates the development of more nuanced theories.

In this study, we consider the design of MRTs that seek to answer questions about moderated treatment effects. Recall that in an MRT, individuals (students) are randomly assigned to condition within sites (schools). Hence, students represent Level 1 and schools represent Level 2 with treatment varying across Level 1 units. Our analyses consider the intersections of three facets of multilevel moderation that are common in practice: (a) Level 1 and Level 2 moderator variables, (b) random and nonrandomly varying slopes (coefficients) of the treatment variable and the interaction term between the treatment and moderator variables, and (c) binary and continuous moderators. We consider moderators at the student level (e.g., gender) and at the school level (e.g., school size, Title 1 status). For both levels, we consider binary and continuous moderators.

In planning an MRT, a key design consideration is the sample size necessary to achieve adequate statistical power (the probability of detecting the main treatment effect and the moderated treatment effect). A strong literature base already exists for conducting power analyses for the main effects of MRTs (e.g., Borenstein & Hedges, 2012; Dong & Maynard, 2013; Konstantopoulos, 2008; Raudenbush et al., 2011) and for conducting power analyses for main effects and moderator effects in CRTs (e.g., Dong et al., 2018; Spybrook, Kelcey, & Dong, 2016). However, there is less work on power calculations for moderated treatment effects in MRTs. Raudenbush and Liu (2000) developed power formulas for the site-level (Level 2) binary moderator effect in MRTs, and Bloom and Spybrook (2017) developed formulas for the minimum detectable effect size difference (MDESD) for the site-level binary moderator in MRTs. The scope of such studies has thus largely been limited to binary site-level moderators in MRTs. Missing from this literature is a more comprehensive statistical framework for power analyses of moderated treatment effects in MRTs that incorporates the considerations noted above (e.g., continuous moderators, random slopes) and a careful analysis delineating the parameters that govern power and their proportional influence (e.g., how the intraclass correlation [ICC] coefficient or treatment effect variation/heterogeneity of coefficients affects power).

The purpose of this study is to develop a more comprehensive statistical framework and set of tools for the effective and efficient design of MRTs probing moderated treatment effects. As noted above, the framework we develop considers the intersections of three facets of multilevel moderation that are common in practice: (a) Level 1 and Level 2 moderator variables, (b) random and nonrandomly varying slopes (coefficients) of the treatment variable and the interaction term between the treatment and moderator variables, and (c) binary and continuous moderators. Our investigation of these facets yielded formulas that delineate statistical power, the MDESD, and their corresponding confidence intervals (CIs). We also created software to assist researchers conducting power analyses for various moderated treatment effects.

This article is organized as follows: First, we outline a working example to provide the context to our formulations, structure, and expressions. Second, we present the formulas for the standard error (

Working Example

We develop an illustrative example to frame our study. Our example focuses on a computer-assisted tutoring program intended to improve students’ reading achievement. For example, Chambers et al. (2008) used an MRT to test the effect of a computer-assisted tutoring program on reading achievement. The MRT included a total of 412 first graders randomly assigned to the computer-assisted tutoring or the traditional tutoring groups within each of 25 schools. The findings revealed no significant overall treatment effect. However, the study also suggested the potential for treatment effect heterogeneity. For instance, one site- or school-level moderator variable that is commonly considered in moderation analyses is the average pretest. The follow-up question is how to design an MRT to systematically probe the moderated treatment effect of the computer-assisted tutoring program.

In this illustrative example and our larger study, we consider three design facets that are common in this literature. As outlined above, the first facet considers the level of the moderator (e.g., student vs. school level). For instance, the effect of the computer-assisted tutoring program may vary by the student characteristics (e.g., pretest and gender) or the site characteristics (e.g., average pretest). The levels of the moderators examine for whom (Level 1 moderators) and under what condition (Level 2 moderators) the computer-assisted tutoring program works.

The second facet concerns the quantitative nature of the moderator—that is, whether the moderator is binary (e.g., gender and program implementation [high vs. low]) or continuous (pretest and school size). When the moderator is a binary variable (e.g., gender), the moderator effect indicates the treatment effect difference between two categorical groups or the gender achievement gap in treatment effectiveness. When the moderator is a continuous variable (e.g., pretest), the moderator effect describes the disparate impact of the treatment on the outcome for different increments of the pretest.

The final facet examines whether the design calls for a random or nonrandomly varying term for the treatment and moderated treatment effects. More specifically, when the moderator is a Level 1 variable, the moderated treatment effect may randomly vary across sites (schools) or be constant across schools. For instance, the treatment effect difference between males and females for the computer-assisted tutoring program may or may not be the same across schools. In addition, at the school level, the treatment effect may still randomly vary across schools after accounting for the school-level moderator effect or may be constant across schools. For example, if the average pretest of a school explains some of the heterogeneity in treatment effects across schools but not all of it, there may be other factors contributing to the treatment effect heterogeneity. However, if the treatment effect is constant across schools after accounting for the differences among schools in terms of the average pretest, then it may be the only factor causing the treatment effect heterogeneity. The choice of a random versus a nonrandomly varying slope depends on the program theory and evidence from prior studies.

Statistical Power and the MDESD in Two-Level MRTs

Below we describe how we develop the formulas of the statistical power and the MDESD for Level 1 and Level 2 moderators in two-level MRTs. Suppose there are

Random Slope Models

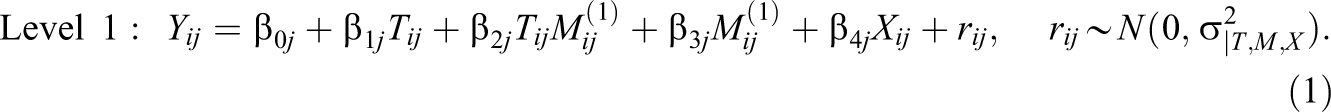

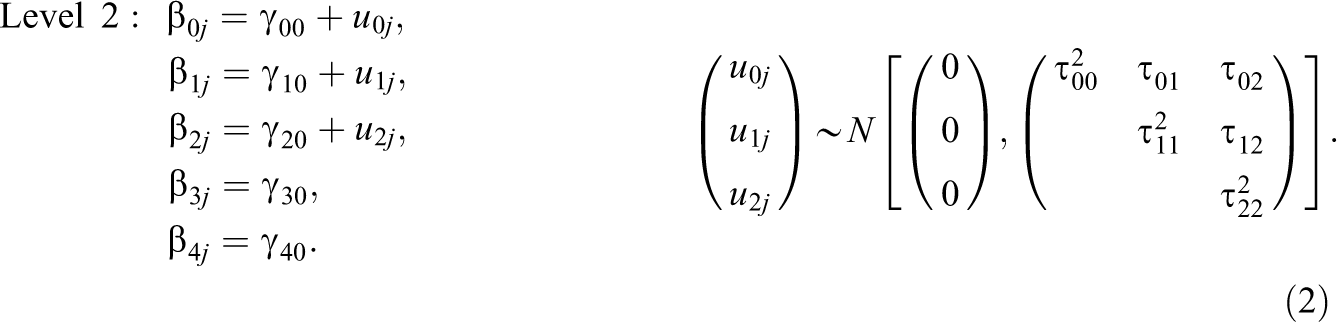

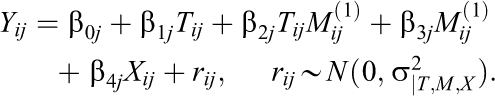

Random slope models allow us to test whether the treatment effect varies across moderator subgroups and whether the moderated treatment effects vary randomly across schools. To test for the Level 1 moderation, we use two-level random slope hierarchical linear modeling (HLM; Raudenbush & Bryk, 2002):

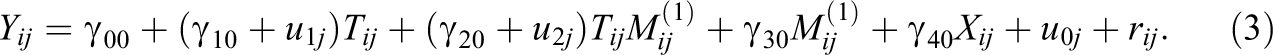

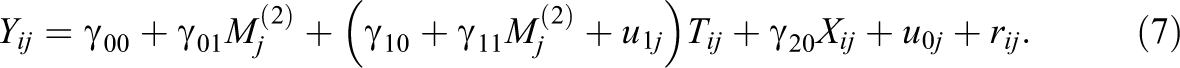

The combined model is:

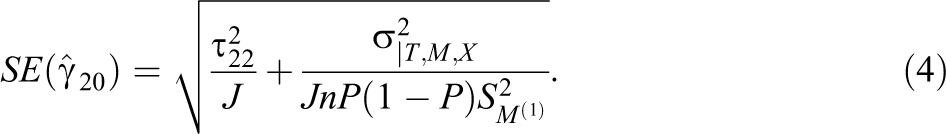

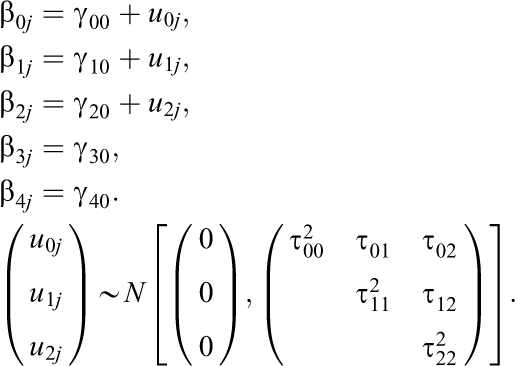

By extending Snijders’s (2001, 2005) work, the

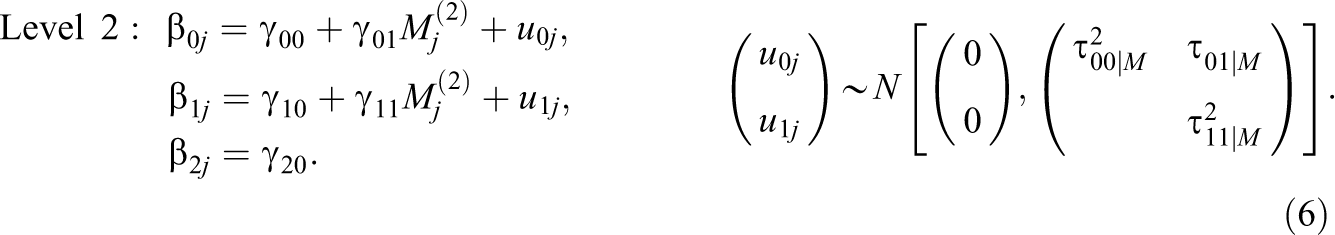

To test for the Level 2 moderation, we use two-level random slope HLM (Raudenbush & Bryk, 2002):

The combined model is:

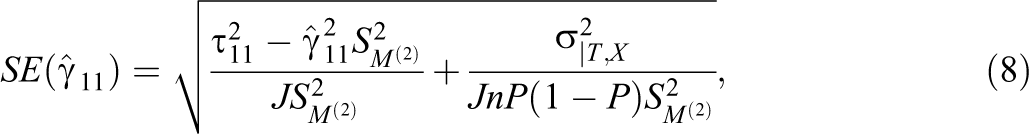

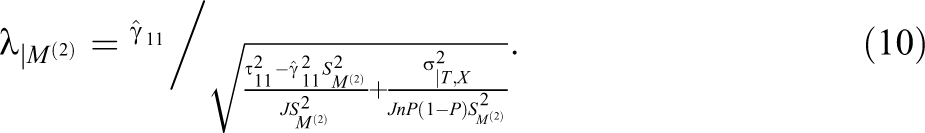

By extending Snijders’s (2001, 2005) work, the estimate of the

where

Power Formulas

We can test

and

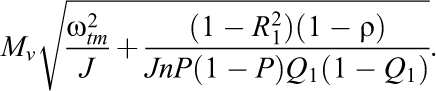

We standardize the moderation effect variability across sites such that

and

The degrees of freedom are

The statistical power for a two-sided test is

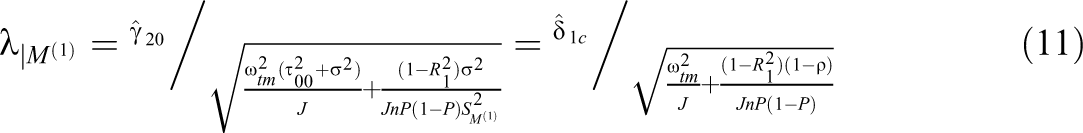

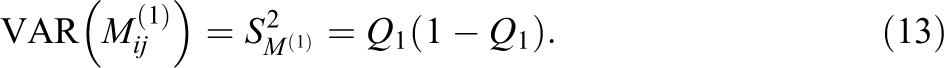
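As a rough illustration of how a noncentrality parameter translates into two-sided power, the sketch below uses a large-sample normal approximation in place of the noncentral t distribution on which the formulas in this article are based. The function name `approx_two_sided_power` and the example values of the noncentrality parameter are hypothetical and chosen only for illustration; with a small number of sites, the t-based calculation with the appropriate degrees of freedom should be used instead.

```python
from statistics import NormalDist


def approx_two_sided_power(ncp: float, alpha: float = 0.05) -> float:
    """Normal approximation to the power of a two-sided test.

    ncp is the noncentrality parameter, that is, the moderator effect
    divided by its standard error. This is a large-sample stand-in for
    the noncentral-t calculation, not the exact formula.
    """
    z = NormalDist()
    crit = z.inv_cdf(1 - alpha / 2)  # two-sided critical value
    # Probability that the test statistic lands in either rejection region.
    return (1 - z.cdf(crit - ncp)) + z.cdf(-crit - ncp)


if __name__ == "__main__":
    # Hypothetical noncentrality parameters, for illustration only.
    for ncp in (0.0, 1.0, 2.0, 2.8, 3.5):
        print(f"ncp = {ncp:.1f}  approximate power = {approx_two_sided_power(ncp):.3f}")
```

Under this approximation, a noncentrality parameter of zero returns the Type I error rate, and power rises monotonically as the noncentrality parameter grows.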

When the Level 1 moderator,

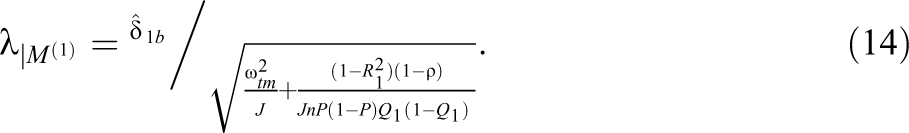

By inserting Equation 13 into Equation 9, we derived the standardized noncentrality parameters as

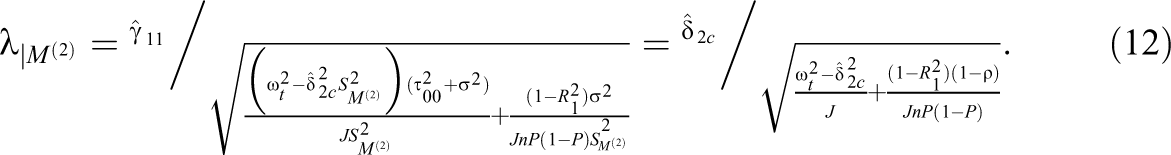

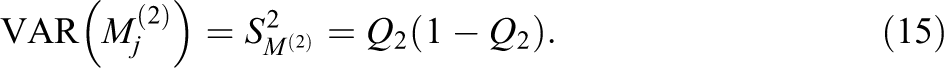

Similarly, when the Level 2 moderator,

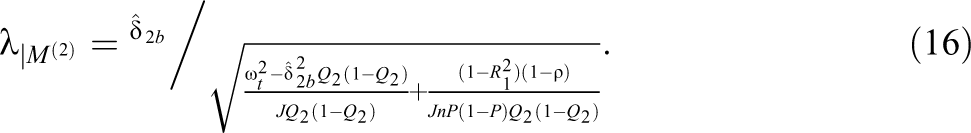

By inserting Equation 15 into Equation 10, we derived the standardized noncentrality parameters as

Note that Equation 16 above is consistent with Equation 26 in Raudenbush and Liu (2000) when

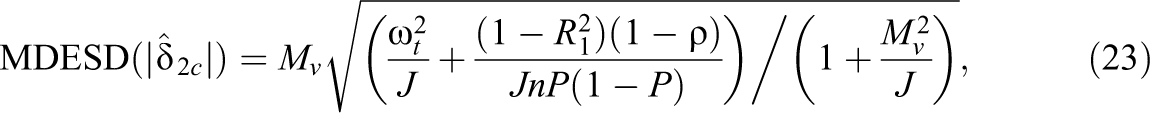

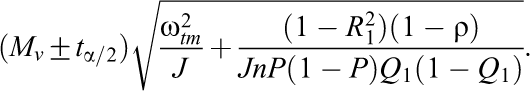

The MDESD With CI

In addition to knowing the statistical power for a study to detect a desired effect size, it is useful to know the MDESD that a moderation study can detect with sufficient power (e.g., 80%) given sample sizes. The MDESD can be expressed as (Bloom, 1995, 2005, 2006; Dong et al., 2018; Murray, 1998)

where

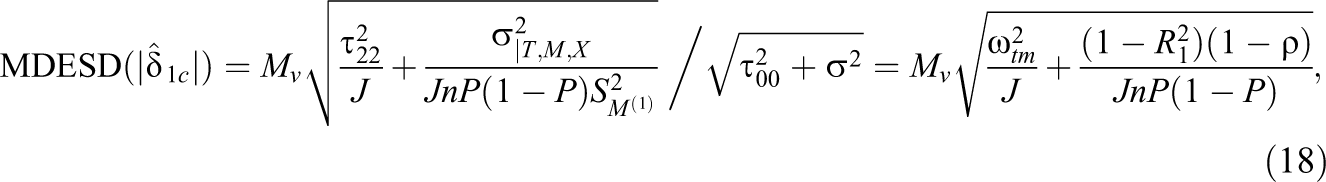
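The general logic of a minimum detectable effect, a multiplier times the standard error of the effect estimate, can be sketched in a few lines. The example below again substitutes normal quantiles for the t quantiles used in the formulas, so it is only a large-sample approximation; the function names and the standard error value are hypothetical.

```python
from statistics import NormalDist


def mdesd_multiplier(alpha: float = 0.05, power: float = 0.80,
                     two_sided: bool = True) -> float:
    """Approximate multiplier: critical value plus the quantile for the target power.

    Normal quantiles are used here as an assumption; the t-based multiplier
    is larger when the degrees of freedom are small.
    """
    z = NormalDist()
    tail = alpha / 2 if two_sided else alpha
    return z.inv_cdf(1 - tail) + z.inv_cdf(power)


def approx_mdesd(standard_error: float, alpha: float = 0.05,
                 power: float = 0.80, two_sided: bool = True) -> float:
    """Approximate MDESD for a given standard error of the moderator effect."""
    return mdesd_multiplier(alpha, power, two_sided) * standard_error


if __name__ == "__main__":
    hypothetical_se = 0.10  # illustrative value, not taken from the article
    print(f"multiplier   = {mdesd_multiplier():.3f}")
    print(f"approx MDESD = {approx_mdesd(hypothetical_se):.3f}")
```

For a two-sided test at α = .05 and power of 0.80, the approximate multiplier is about 2.8, so the smallest detectable effect size difference is roughly 2.8 standard errors.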

Hence, by inserting Equation 4 into Equation 17, we derived the MDESD for the standardized coefficient for a continuous Level 1 moderator as

where the standardized coefficient (

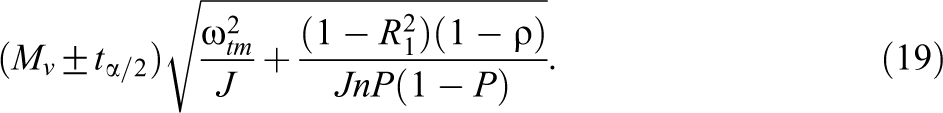

The 100 × (1−α)% CI for

The MDESD for the standardized mean difference for a binary Level 1 moderator is as follows:

where the proportion (

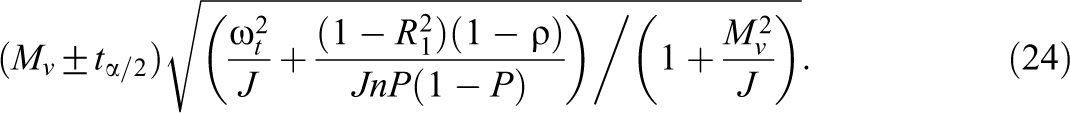

The 100 × (1−α)% CI for

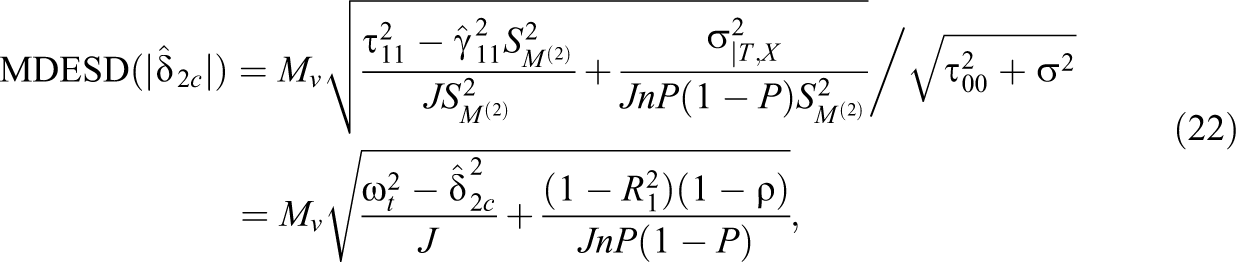

By inserting Equation 8 into Equation 17, we derived the MDESD for the standardized coefficient for a continuous Level 2 moderator as

where the standardized coefficient (

where the degrees of freedom are J − 2.

The 100 × (1−α)% CI for

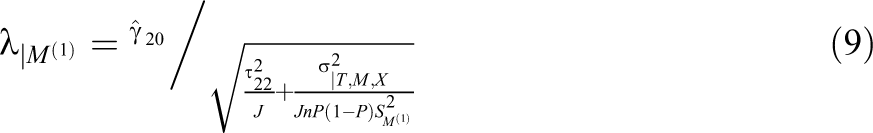

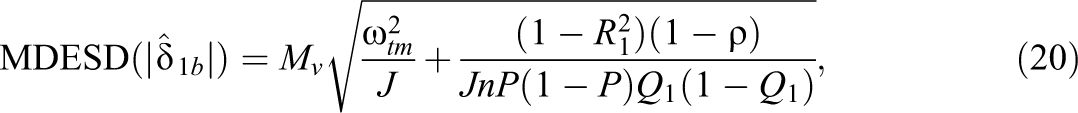

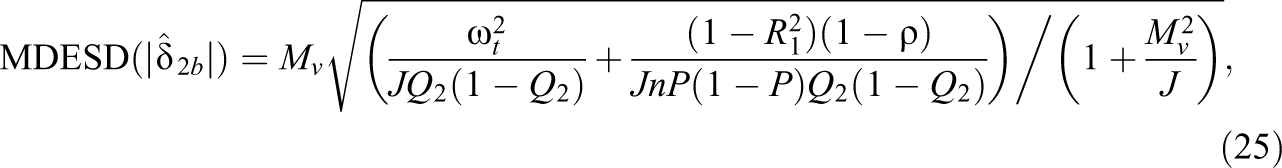

The MDESD for the standardized mean difference for a binary Level 2 moderator is as follows:

where the degrees of freedom of

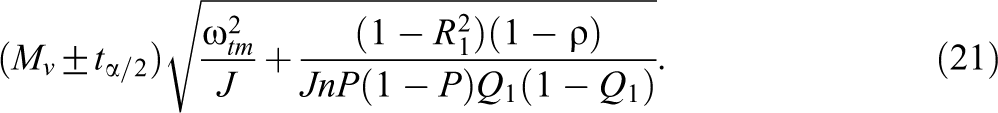

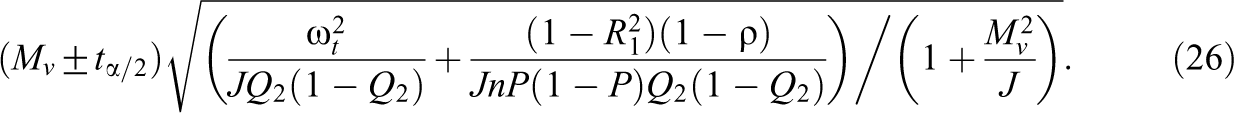

The 100 × (1−α)% CI for

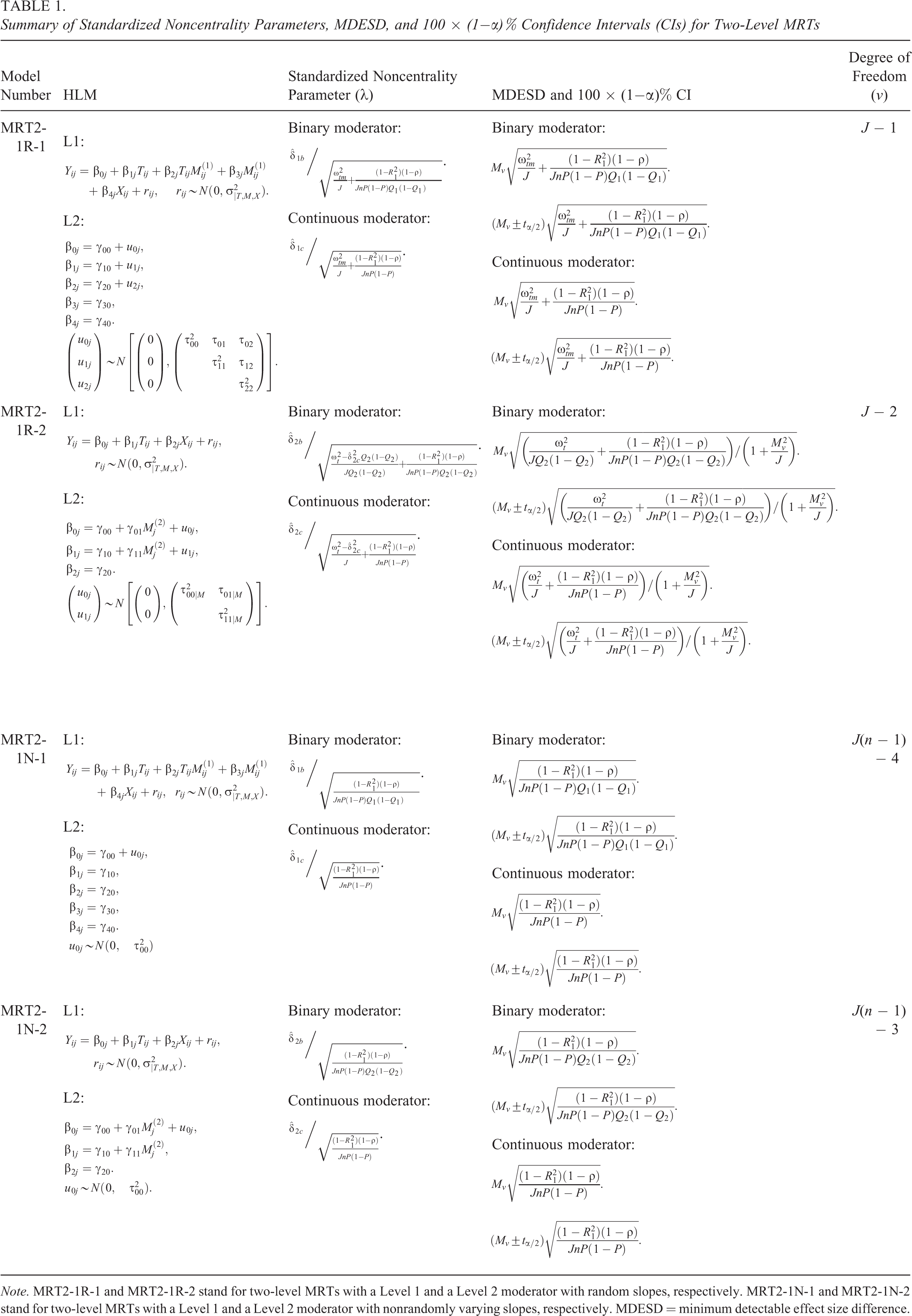

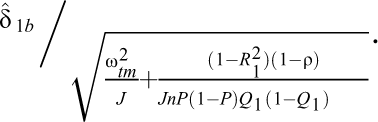

Table 1 presents the summary of standardized noncentrality parameters, MDESD and 100 × (1−α)% CIs, and degrees of freedom for the

Summary of Standardized Noncentrality Parameters, MDESD, and 100 × (1−

Nonrandomly Varying Slope Models

The hierarchical linear models with a nonrandomly varying slope assume that the treatment effect varies by the moderators but does not randomly vary across sites (Models MRT2-1N-1 and MRT2-1N-2 in Table 1 and below).

The models with a nonrandomly varying slope for a Level 1 moderator (MRT2-1N-1) are as follows:

The models with a nonrandomly varying slope for a Level 2 moderator (MRT2-1N-2) are as follows:

The nonrandomly varying slope model is a special case of the random slope model. Setting

Monte Carlo Simulations

To validate the formulas, we conducted Monte Carlo simulations in the following steps:

1. We generated data using the hierarchical linear models in Equations 1 and 2, and 5 and 6 for random slope models with Level 1 and Level 2 moderators, respectively, and Equations 27 and 28, and 29 and 30 for nonrandomly varying slope models with Level 1 and Level 2 moderators, respectively.
2. We used SAS PROC MIXED to analyze the data sets. We computed the
3. The moderator effect was standardized to the standardized mean difference for the binary moderators or the standardized coefficient for the continuous moderators; a
4. We replicated Steps 1 through 3 2,000 times and calculated the means of the moderator effect size,

Our Monte Carlo simulation considered several scenarios by changing the sample size, the moderator effect size, random slopes and nonrandomly varying slopes, and binary and continuous Level 1 and Level 2 moderators.
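The bookkeeping behind such a simulation (replicate, test, and tally rejections and CI coverage) can be illustrated with a deliberately simplified sketch. It does not generate two-level data or fit a hierarchical linear model as the study did with SAS PROC MIXED; instead, it assumes each replication yields a normally distributed moderator effect estimate with a known standard error. The function name, the seed, and the effect and standard error values are all hypothetical.

```python
import random
from statistics import NormalDist


def simulate_power_and_coverage(effect: float, se: float, reps: int = 2000,
                                alpha: float = 0.05, seed: int = 12345):
    """Return (empirical power, CI coverage) over `reps` replications.

    Simplifying assumption: the estimate in each replication is drawn
    directly from N(effect, se^2) rather than estimated from simulated
    multilevel data.
    """
    rng = random.Random(seed)
    crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    covered = 0
    for _ in range(reps):
        estimate = rng.gauss(effect, se)
        # Two-sided test of the null hypothesis of no moderator effect.
        if abs(estimate / se) > crit:
            rejections += 1
        # Does the confidence interval contain the true effect?
        if estimate - crit * se <= effect <= estimate + crit * se:
            covered += 1
    return rejections / reps, covered / reps


if __name__ == "__main__":
    # Hypothetical effect and standard error (effect / se = 2.8).
    power, coverage = simulate_power_and_coverage(effect=0.20, se=0.20 / 2.8)
    print(f"empirical power = {power:.3f}, 95% CI coverage = {coverage:.3f}")
```

When the true effect is set to zero, the same rejection tally estimates the Type I error rate rather than power.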

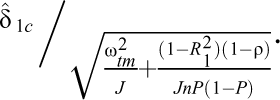

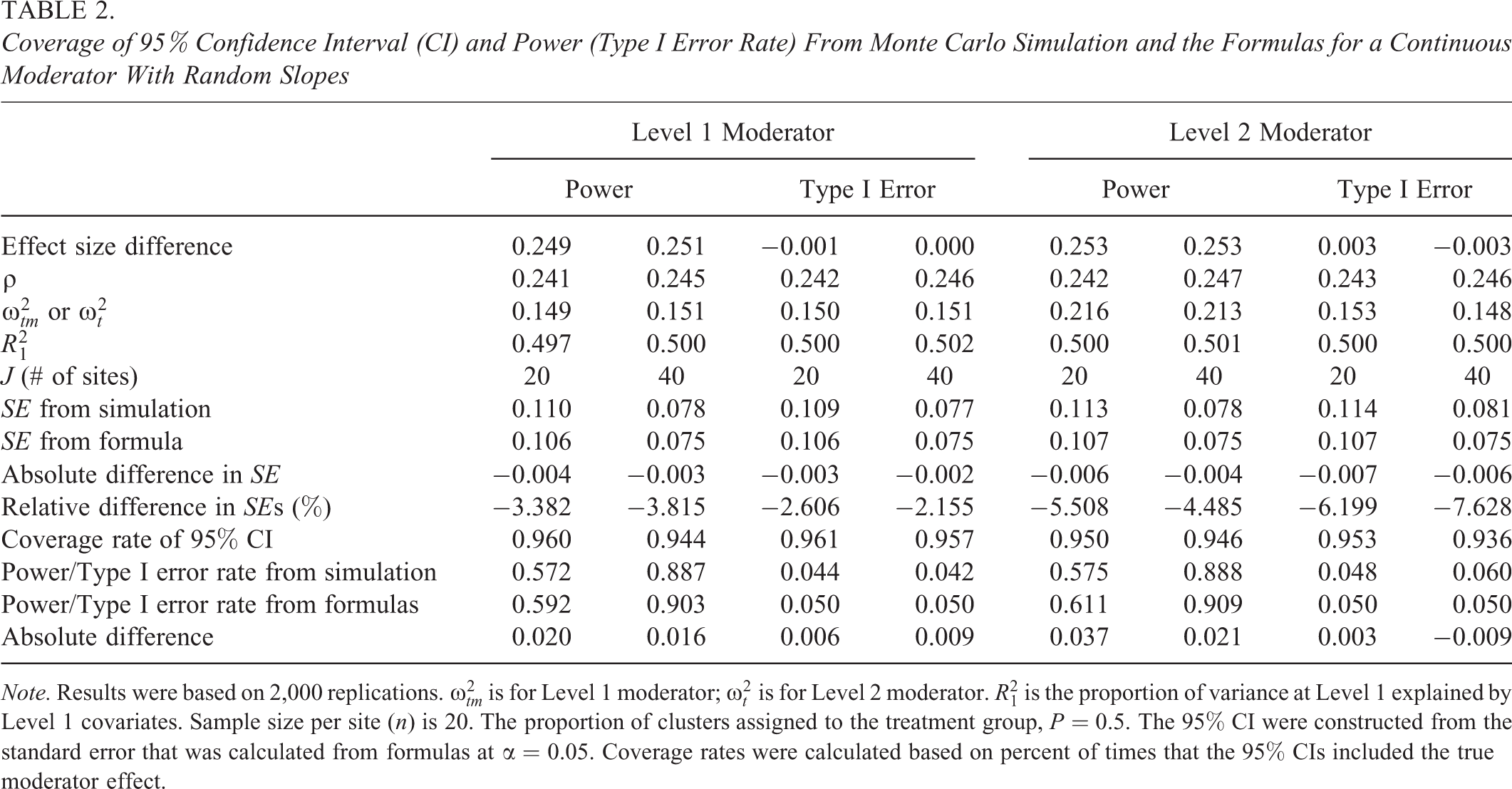

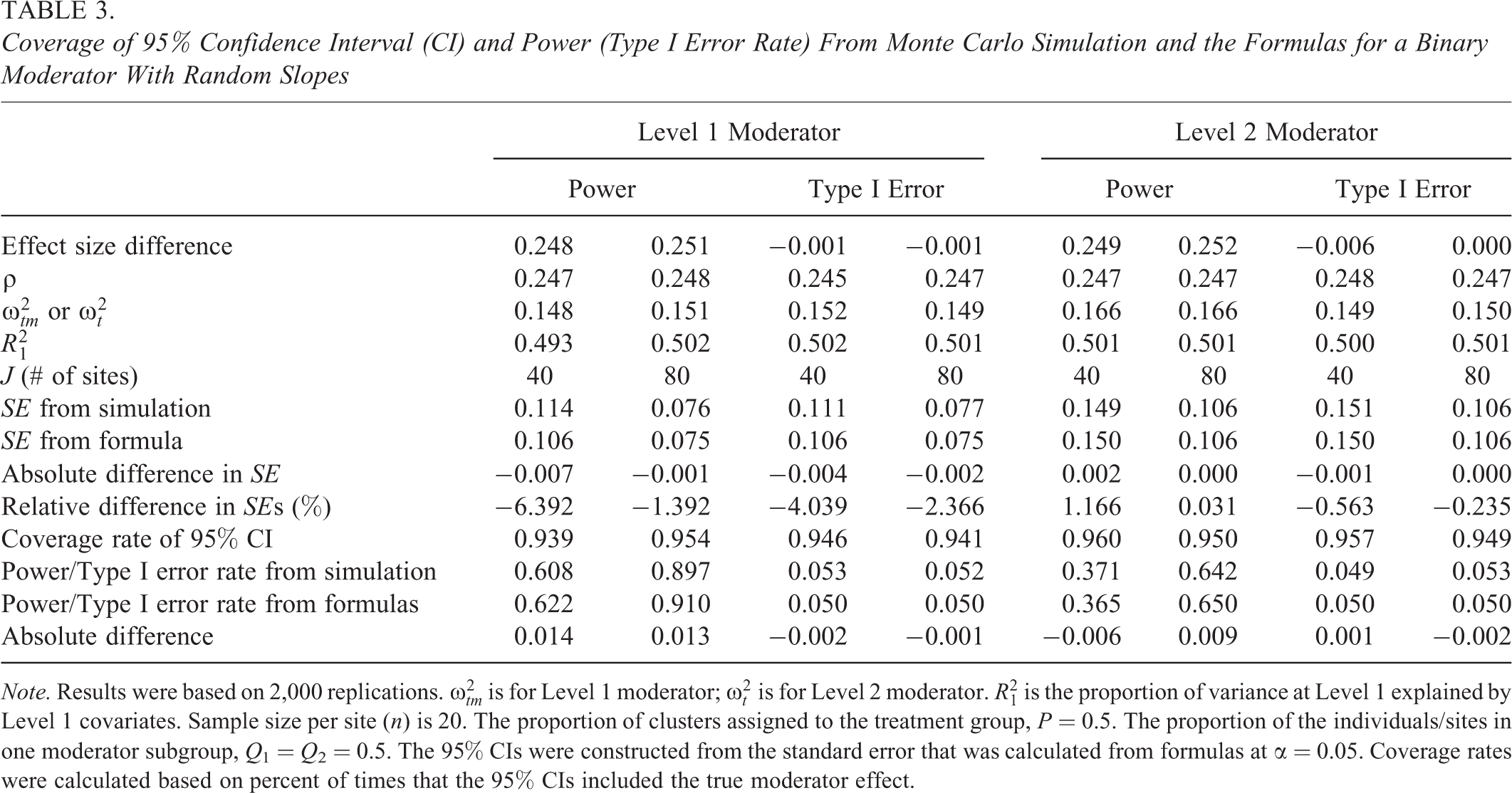

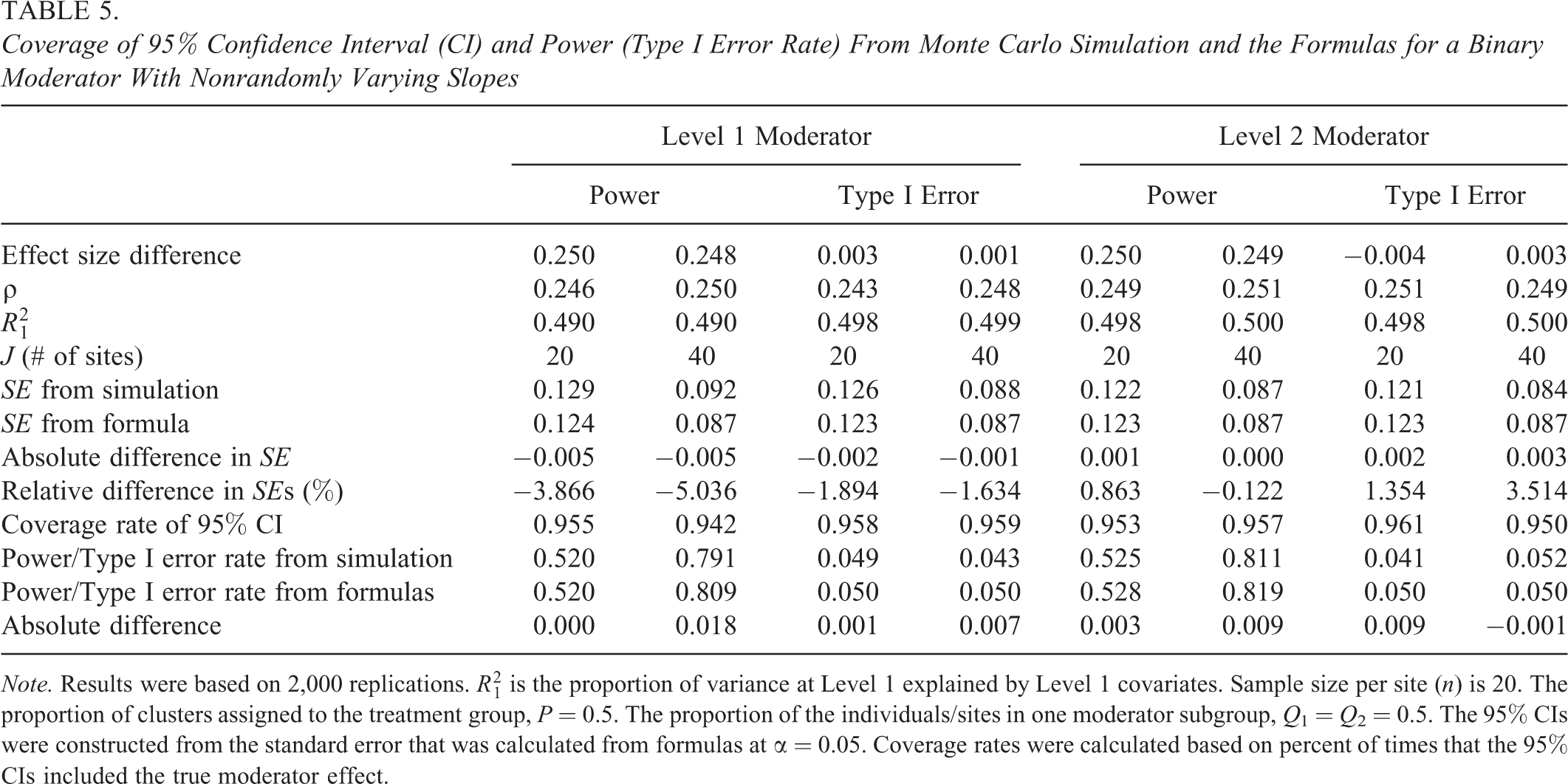

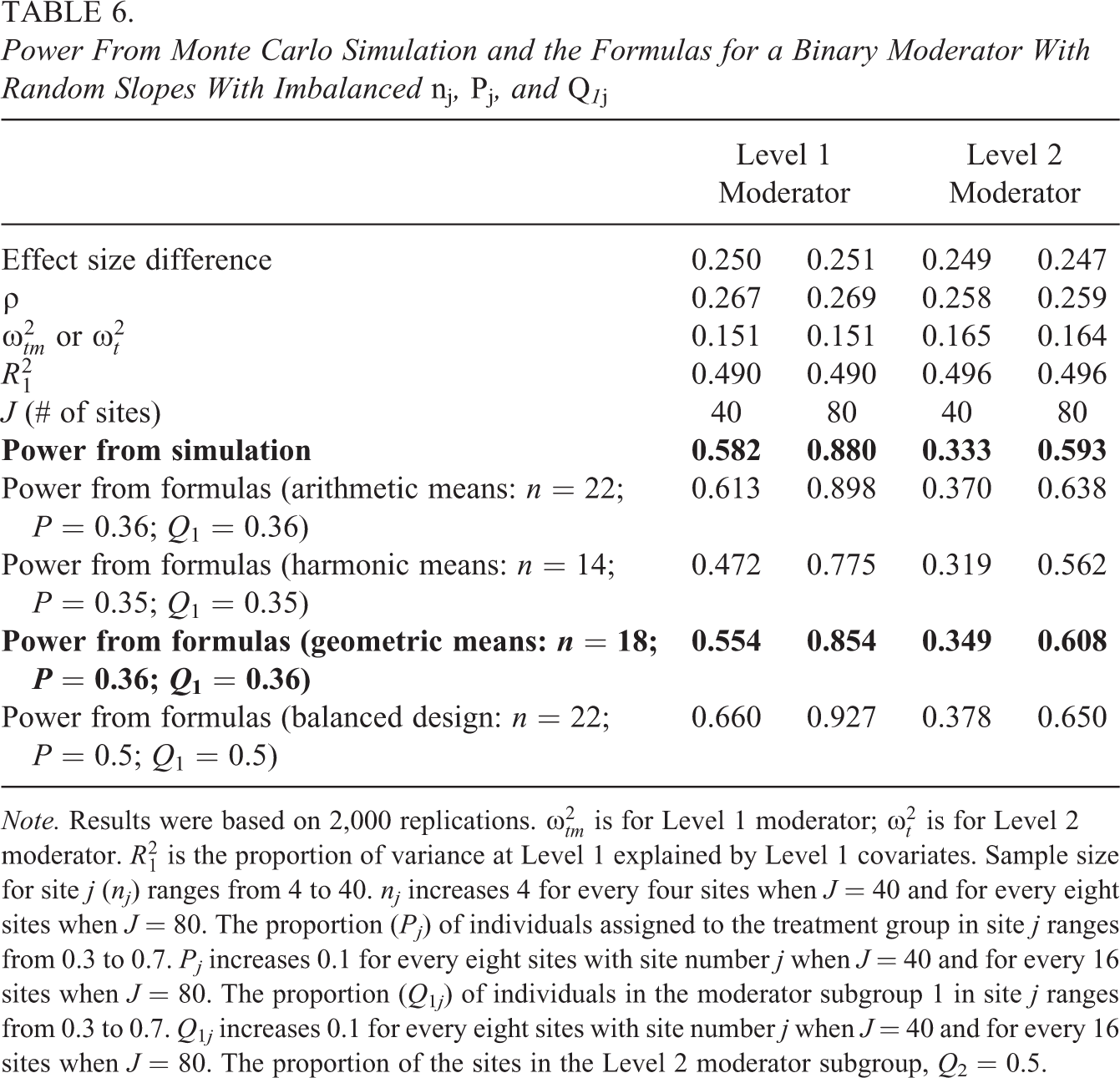

Tables 2 through 5 present the results of

Coverage of 95% Confidence Interval (CI) and Power (Type I Error Rate) From Monte Carlo Simulation and the Formulas for a Continuous Moderator With Random Slopes

Coverage of 95% Confidence Interval (CI) and Power (Type I Error Rate) From Monte Carlo Simulation and the Formulas for a Binary Moderator With Random Slopes

Coverage of 95% Confidence Interval (CI) and Power (Type I Error Rate) From Monte Carlo Simulation and the Formulas for a Continuous Moderator With Nonrandomly Varying Slopes

Coverage of 95% Confidence Interval (CI) and Power (Type I Error Rate) From Monte Carlo Simulation and the Formulas for a Binary Moderator With Nonrandomly Varying Slopes

In addition, our derived formulas are based on the balanced design, that is, equal site sizes

Power From Monte Carlo Simulation and the Formulas for a Binary Moderator With Random Slopes With Imbalanced

The results for the individual effects of imbalanced

Furthermore, we derived our formulas under the assumption that the continuous moderators are normally distributed. In practice, the continuous moderators may not be normally distributed (Micceri, 1989). Although the conventional normality assumption for linear models applies to the residuals, not the dependent variables or predictors, and linear models are robust to violations of the normality assumption when the sample size is large, we conducted a small Monte Carlo simulation to assess how the distributions of continuous moderators affect the estimated power. We used the SAS Macro RandFleishman (Wicklin, 2013), which implements Fleishman’s (1978) cubic transformation method, to generate the variables with specified skewness and Pearson’s kurtosis. We simulated moderators with combined skewness (ranging from 1.18 to 1.95) and Pearson’s kurtosis (ranging from 2.21 to 7.44). The absolute difference between the power calculated from the formulas and that estimated from simulation ranged from 0.013 to 0.058. For many scenarios, the simulation results are very close to those from the power formulas. However, the differences increase somewhat as the number of sites decreases; for example, the biggest difference (0.058) occurs for the smallest sample size of sites (

We also simulated moderators with a bimodal distribution. The moderator variables were generated from the mixture distribution with two mixture components: one normal distribution (

Discussion: Comparisons Among Moderated Treatment Effects and Main Effect in MRTs

In this section, we compare the statistical power and MDESD among the moderation designs and main effect designs in two-level MRTs both conceptually (e.g., examining the formulas) and practically (e.g., using examples).

Contrasting Moderated Treatment Effects

Just as in the main effect analysis, the power of the moderated treatment effect in two-level MRTs is associated with the noncentrality parameter (λ) and the critical

When the treatment effect varies by the moderator but does not vary across sites (i.e., nonrandomly varying effect; MRT2-1N-1 and MRT2-1N-2 in Table 1), the

In particular, power increases with the sample sizes, and the sample sizes for sites (

If the moderator is a binary variable, the power is also associated with the proportion (

If the treatment effect not only varies by the moderator but also varies across sites (i.e., random slope model; MRT2-1R-1 and MRT2-1R-2 in Table 1), the variance of the moderator effect estimate is a function of the variance of the parameter (i.e., true moderator effect) and the variance of the random error (Raudenbush & Bryk, 2002, pp. 44–45). As a result, the power is also associated with the effect heterogeneity across sites (

Comparing Moderated Treatment Effects With Main Effect

Based on the expression on page 48 in Dong and Maynard (2013), the minimum detectable effect size (MDES) for the main effect in a two-level MRT can be re-expressed as follows:

where the degrees of freedom (

The ratio of the MDESD for a Level 2 binary random moderator effect to the MDES of the main effect is as follows:

Equation 32 reveals that the MDESD is

Demonstration

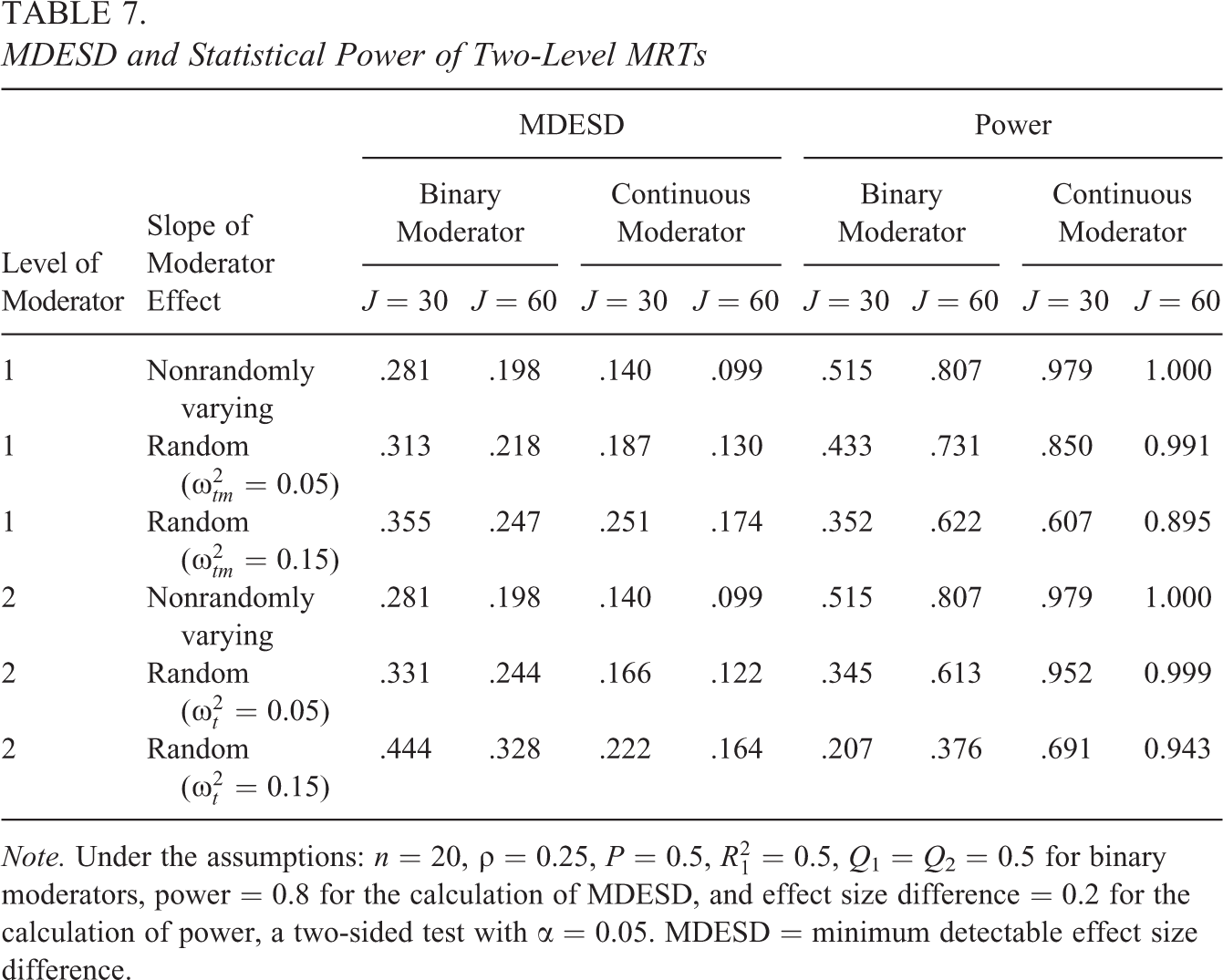

In this section, we compare the MDESD/MDES and power among four moderated treatment effects and the main effect in a two-level MRT using several examples. The MDESD and power for the moderated treatment effects are calculated using the software we developed, which is a Microsoft Excel–based software package implementing the formulas in Table 1. The MDES and power for the main effects are calculated using PowerUp! (Dong & Maynard, 2013). Suppose a team of researchers is designing a two-level MRT to test the efficacy of the computer-assisted tutoring intervention on mathematics achievement for eighth graders. They are interested in student-level moderator effects and school-level moderator effects. They approach the moderator power analyses from two perspectives: (1) What is the MDESD given power of 0.80? (2) What is the power for a meaningful moderation effect size?

Just as in a power analysis for the main effect, the researchers need to determine the meaningful effect size difference with practical significance that they would like to detect and make reasonable assumptions about other design parameter values in their power analysis of moderator effects in MRTs. To determine meaningful effect size differences, researchers may refer to the empirical benchmarks regarding normative expectations of annual gain, policy-relevant performance gaps, and moderation effect size results from similar studies (Bloom et al., 2008; Dong et al., 2016; Hill et al., 2008). For example, Hill et al. (2008) reported that students’ math achievement gaps in effect size units from the National Assessment of Educational Progress in Grade 8 are −1.04 for Blacks versus Whites, −0.82 for Hispanics versus Whites, and −0.80 for students eligible versus ineligible for free/reduced-price lunch. The researchers may consider an effect size difference of 0.20 for the computer-assisted tutoring intervention to be a meaningful moderation effect because it is equivalent to about a one-fifth reduction of the Black–White achievement gap and about a one-fourth reduction of the Hispanic–White and eligible–ineligible for free/reduced-price lunch gaps. They may also refer to moderation effect size results from similar studies; however, these results are very limited. For demonstration purposes, suppose they decide to use 0.20 as their desired effect size difference in their power analysis.
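The benchmark arithmetic above can be checked directly. The short sketch below divides the candidate effect size difference of 0.20 by the absolute value of each gap reported by Hill et al. (2008); the variable names are illustrative only.

```python
# Absolute Grade 8 math achievement gaps in effect size units (Hill et al., 2008).
gaps = {
    "Black-White": 1.04,
    "Hispanic-White": 0.82,
    "Eligible-ineligible for free/reduced-price lunch": 0.80,
}
target_difference = 0.20  # candidate meaningful effect size difference

# Share of each gap that an effect size difference of 0.20 would close.
shares = {name: target_difference / gap for name, gap in gaps.items()}

for name, share in shares.items():
    print(f"{name}: {share:.2f} of the gap")
```

The shares come out at roughly 0.19, 0.24, and 0.25, which is the basis for describing 0.20 as about one fifth of the first gap and about one fourth of the other two.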

For other design parameter values, the researchers need to justify their choice based on the literature or pilot studies. Recently, several studies have reported the ICC and the proportion of variance explained by the covariates for academic achievement outcome measures (e.g., Bloom et al., 2007, and Hedges & Hedberg, 2007, 2013, on mathematics and reading; Westine et al., 2013, and Spybrook, Westine, & Taylor, 2016, on science achievement), outcome measures for teacher professional development (Kelcey & Phelps, 2013), and social and behavioral outcomes (Dong et al., 2016). The researchers assume a

Very few studies report values of effect heterogeneity across sites. We identified only Weiss et al. (2017) as reporting treatment effect heterogeneity values (

They use a balanced design with equal assignment of students to the treatment and control groups (

MDESD and Statistical Power of Two-Level MRTs

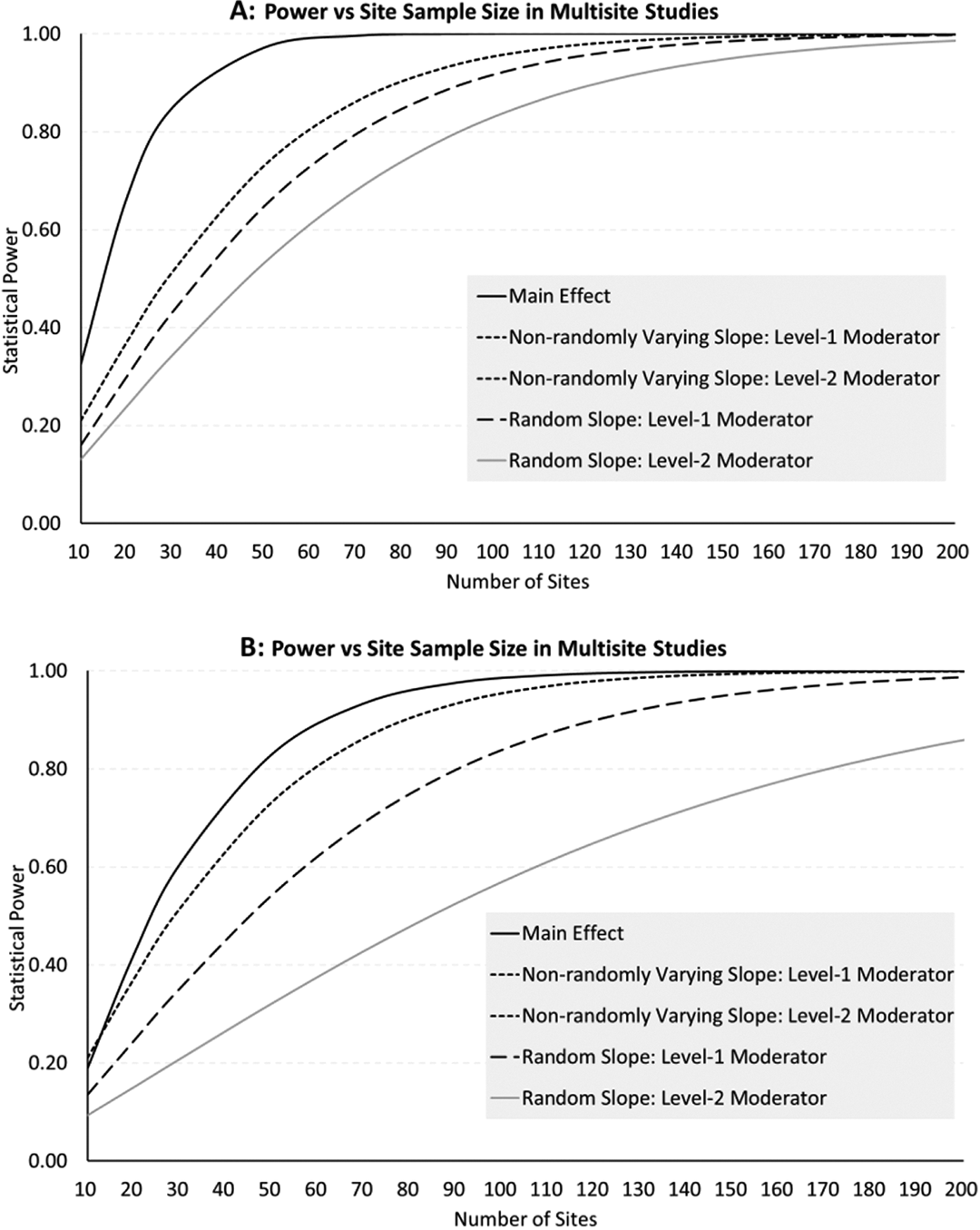

Furthermore, we demonstrate the relationship between power and the total sample size of sites by comparing the main treatment effect design with four moderation designs with binary moderators in Figures 1A and 1B. The power was calculated independently for main effects and moderated effects based on the same assumptions as in Table 7:

Power versus site sample size. Note. Under the assumptions:

The findings in Table 7 and Figures 1A and 1B, together with the conceptual comparisons, are discussed below. First, as in all power analyses, the power increases with the sample sizes (

Second, the proportion of the sample allocation to the treatment and control group (

Third, the power (MDESD) increases (decreases) when the ICC increases. This is because the sites explain more Level 2 variance, reduce Level 1 variance, and hence reduce the

Fourth, the power increases with the proportion of variance explained by the covariates (

Fifth, a design for detecting main effects always has larger power than a design for detecting moderation effects in a two-level MRT. This is different from CRTs, in which the power for detecting the effects of a Level 1 moderator with a nonrandomly varying slope can be larger than the power for the main treatment effect analysis (Dong et al., 2018).

Sixth, the MDESD is larger, or equivalently the power is smaller, for a random moderator effect than for a nonrandomly varying moderator effect. The differences in power and MDESD between the two models (random slope and nonrandomly varying slope models) decrease when the number of clusters (

Conclusion

As researchers and policy makers are increasingly interested in the moderated treatment effects to answer the “what works for whom, and under what circumstances” questions in MRTs, a power analysis is a critical step. This study fills the gap in the literature by developing a more comprehensive statistical framework and software for power analyses to detect a wide variety of moderated treatment effects in MRTs. We provide some suggestions below.

First, we need to consider three facets of multilevel moderation that are common in practice: (a) Level 1 and Level 2 moderator variables, (b) random and nonrandomly varying slopes (coefficients) of the treatment variable and the interaction term between the treatment and moderator variables, and (c) binary and continuous moderators. We consider binary moderators (e.g., gender) when we are interested in detecting the treatment effect difference between boys and girls or whether the intervention can reduce the boy–girl achievement gap; we consider continuous moderators (e.g., pretest) when we are interested in testing whether the association of pretest and posttest is different between the treatment and control groups or whether the treatment effect varies by the pretest. Sometimes we may dichotomize our continuous moderators to produce meaningful subgroups and facilitate the interpretation of moderated treatment effects. We consider Level 1 moderators (e.g., student characteristics) when we are interested in answering “for whom the program works,” and Level 2 moderators (e.g., school characteristics) when we are interested in answering “under what condition the program works.” Furthermore, we consider random (moderated) treatment effects when the theory or prior studies suggest that the (moderated) treatment effect may vary across sites and nonrandomly varying treatment effects otherwise. However, it would be beneficial to assume a random effect when no clear theory or prior study suggests a nonrandomly varying treatment effect.

Second, the power for all moderated treatment effects is smaller than that for the main effect in two-level MRTs. We need larger sample sizes to detect a moderated treatment effect of the same magnitude as the main effect. For improving power, the sample size at the site level is more important than that at the individual level for random (moderated) treatment effects, and the two are equally important for nonrandomly varying (moderated) treatment effects. Including Level 1 covariates that are correlated with the outcome, for example, the pretest, can improve power. In addition, the power is greater when the sample size is more balanced among the treatment-by-moderator groups and across sites; for example, the power is at its maximum when

This study focused on two-level MRTs. There are many important directions for further work. First, extending the work to three-level MRTs is necessary. For example, in three-level MRTs, the treatment variable could be at Level 1 or Level 2, the moderator could be at any of the three levels, and the (moderated) treatment effect can be either random or nonrandomly varying. Three-level MRTs thus provide more opportunities to probe moderated treatment effects. Second, accurate empirical estimates of the design parameters are critical for a power analysis. Hence, more empirical studies of design parameters (e.g., ICC, treatment effect heterogeneity, and meaningful sizes of moderator effects) are important as we move forward.

Supplemental Material

Supplemental Material, 3.Appendix_2020.8.14 for Design Considerations in Multisite Randomized Trials Probing Moderated Treatment Effects by Nianbo Dong, Benjamin Kelcey and Jessaca Spybrook in Journal of Educational and Behavioral Statistics

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project has been funded by the National Science Foundation (1913563, 1552535, and 1760884). The opinions expressed herein are those of the authors and not the funding agency.
