Abstract

Preservice teacher performance assessments, such as the edTPA, are one of the accountability policies from states and local authorities designed to ensure the quality of beginning teachers and standardize teacher education. We studied experiences of 65 preservice teachers regarding the effect of the edTPA on their learning in field-placement classrooms. These cases revealed that the edTPA created “protected teaching spaces” for participants to experiment with student-centered instructional practices supported in university courses and codified in edTPA rubrics. This was especially impactful for novices who previously had limited opportunities to try out equitable reform-oriented instruction in their placements. In these cases, the edTPA also helped mitigate inequities in learning to teach, an unintended outcome that is important for policymakers to consider when deciding on credentialing requirements.


The edTPA is also known to be challenging for TEPs to support and stressful for preservice teachers (PSTs) to complete (Cohen et al., 2020; Jones et al., 2021; Miller et al., 2015). In our own post-edTPA interviews with 65 science PSTs from three different TEPs, participants reported high stress, but the vast majority also described how teaching for the edTPA opened up novel opportunities to experiment with responsive and student-centered instructional practices and said those opportunities made them feel better prepared as teachers. Surprised by these responses, we analyzed more closely the descriptions the interviewees provided and asked the following research questions:

a. What conditions enable these novel opportunities?

b. How do the practices that PSTs implement during the edTPA differ qualitatively from what they report observing and/or trying in their mentors’ classrooms prior to the edTPA?

Our findings add new insights to the debate about the challenges and benefits of high-stakes performance assessments used for credentialing by describing how the PSTs’ clinical experiences are shaped by the assessments that are intended to evaluate their readiness to teach in their own classrooms. For many of this study’s PSTs, the edTPA opened protected teaching spaces that enabled experimentation with reform-oriented teaching practices promoted by the TEPs, even if these practices were counter to the routines in their mentors’ classrooms. This opportunity for experimentation in protected teaching spaces mitigated some of the unequal opportunities between PSTs who were in classrooms with limited opportunities to engage in important aspects of teaching and those in classrooms that provided ample support for experimentation with current reform-oriented practices (Windschitl, Lohwasser, & Tasker, 2021; Windschitl, Lohwasser, Tasker, Shim, & Long, 2021).

This study does not endorse a specific performance assessment for credentialing and is not commenting on the edTPA as a benchmark for PSTs’ readiness to teach. It does, however, highlight practical consequences that occur for future teachers when a licensure assessment is adopted or forsaken. More generally, the insights from this study have implications for TEPs in their quest to help PSTs enact equitable and responsive teaching in field placement classrooms, where PSTs are both guests and novices and where instructional practices may not yet reflect a vision of teaching that is supported by current education research.

Teacher Performance Assessments as a Policy Tool

For more than 150 years, the accreditation of teachers has been at the center of educational reform efforts, leading to a goal in the 1970s of developing standards-based teacher performance assessments that would ensure teachers’ readiness for their profession (E. G. Brown, 2011). As part of these efforts, the National Board for Professional Teaching Standards was founded in 1987 to advance and maintain standards for the quality of teaching. Amid growing research on teacher effectiveness and the development of teaching standards, several models of performance assessment were created and tested. By 2002, a consortium of Californian colleges and universities had answered the call of teacher educators who were dissatisfied with the state’s generic assessments by developing subject-specific performance assessments. The resulting Performance Assessment for California Teachers (PACT) was later used to inform the development of the edTPA. Guided by input from educators and subject matter experts, the Stanford Center for Assessment, Learning, and Equity (SCALE) designed the edTPA to advance and measure competencies that are foundational to efficacious teaching, and after several years of pilot testing, the edTPA was first implemented in 2013.

Features of the edTPA

In its rubrics, the edTPA outlines a subset of skills that beginning teachers should acquire and which can be assessed through the teaching of a short learning segment. The edTPA evaluates three dimensions of teaching: planning for instruction and assessment, instructing and engaging students in learning, and assessing student learning. For each dimension, the professional performance criteria are outlined in five rubrics, each of which describes a competency level from 1 (not ready to teach) to 5 (advanced practices of a highly accomplished beginner). The instruction rubric, for example, includes criteria for a safe and respectful learning environment, and Level 5 states, “The candidate demonstrates rapport with and respect for students [and] provides a challenging learning environment that provides opportunities to express varied perspectives and promotes mutual respect among students” (SCALE, 2018, p. 22). PSTs provide evidence for meeting these criteria in the form of written commentaries for the planning, teaching, and assessment of three to five consecutive lessons, as well as teaching artifacts, video recordings of the candidate’s interactions with the class, and samples of student work. However, much more is embodied in the work of teaching than what is outlined in the edTPA. Not explicitly assessed, for example, is the deep emotional support teachers provide their students, including methodologies for sustaining culture and advancing anti-racist pedagogies. As Tuck and Gorlewski (2016) noted, “the edTPA is silent on issues of race and equity” (p. 209).

As a policy tool aimed at professionalizing teaching, the edTPA is contested. On one side, supporters claim that the edTPA provides a valid, standardized framework to measure teacher candidates’ competencies (Bastian et al., 2016; SCALE, 2018; Whittaker et al., 2018). They argue that such a unifying framework can improve collaboration, data-driven decisions, and coherence within TEPs and thus promote better support of PSTs (De Voto et al., 2021; Miller et al., 2015; Pecheone & Whittaker, 2016; Peck et al., 2014). On the other side, opponents claim that it disregards institutional knowledge (Dover, 2018; Greenblatt & O’Hara, 2015), warn about the influence of private enterprises on public education by pointing to Pearson’s responsibilities for administering and scoring edTPA portfolios (De Voto et al., 2021; Jones et al., 2021), and question the tool’s validity, challenging whether the edTPA can accurately measure the quality of novice teachers (Gitomer et al., 2021; Lalley, 2017). Furthermore, while the scorers who are selected, employed, and trained by Pearson are experienced teachers, they might be subject to their own (unconscious) biases and thus unable to equitably evaluate unfamiliar contexts and approaches to teaching (Hébert, 2019; Kuranishi & Oyler, 2017; Tuck & Gorlewski, 2016).

Critics also point to the role of the edTPA as a gatekeeper to the profession through its high costs and argue that the edTPA reinforces normative, Whiteness-centered instructional norms (Petchauer et al., 2018; Tuck & Gorlewski, 2016). Meanwhile, others, such as Sato (2014) and Whittaker et al. (2018), contend that the edTPA is flexible enough for PSTs to include a variety of instructional frameworks, including those that emphasize and promote social justice.

This two-sided discussion emerged from edTPA studies that were mostly conducted during the implementation phase of the edTPA; however, it is also reflected by later findings, including those from Cohen et al. (2020) and De Voto et al. (2021). These later studies describe how the implementation and framing of accreditation policies by TEPs influenced whether these policies were seen as a “tool for inquiry or compliance” (De Voto et al., 2021, p. 42). De Voto et al. (2021) describe this dichotomy as a reflection of ongoing disagreements about how to define and measure effective teaching. They note that TEPs that perceived the edTPA as an inquiry tool were able to establish a support system for their candidates and to see it as beneficial for promoting communication and legitimacy across collaborators, enabling data-driven improvement, and aligning with professional standards. In contrast, if challenges with implementation on school, program, or instructor levels were pervasive or if philosophical inconsistencies with the normative values of the edTPA or its policy implications prevailed, the edTPA was widely rejected. These findings suggest that how the edTPA is implemented impacts how PSTs experience this performance assessment (Cohen et al., 2020).

Science-Specific Features of the edTPA

For each of the 27 subjects for which the edTPA is available, its framework requires PSTs to address the knowledge, thinking skills, and subject-specific practices that their students should learn. In the area of science, the implementation of the edTPA overlapped with another policy initiative: the implementation of the Next Generation Science Standards (NGSS), which are national K–12 performance-based science teaching standards (NGSS Lead States, 2013). NGSS-aligned standards have been adopted by 44 states and the District of Columbia. Under the NGSS, teachers engage their students in disciplinary ideas through science and engineering practices to support their integrated understanding of the nature of science and their ability to explain natural phenomena or develop solutions to relevant problems. Both the edTPA and NGSS frameworks (National Research Council, 2012) emphasize contextualization and the connectedness of lessons, students’ sense-making about science ideas, engagement with disciplinary work, and the use of diverse classroom assessment practices to improve learning, as recommended in the consensus document Taking Science to School, issued by the National Research Council in 2007. An example of a science-specific practice from the NGSS is found in the edTPA Rubric 7, Engaging Students in Learning. The rubric asks whether a “candidate supports students in constructing evidence-based explanations of or predictions about the [science] phenomenon AND Students use evidence and/or data and acceptable science concepts to support or refute alternative explanations or predictions” (Level 5). Because the science edTPA and the implementation of the NGSS communicate similar pedagogical commitments, they are expected to be mutually conducive for PSTs’ learning.

PSTs study the NGSS in their TEP methods courses, but they do not necessarily observe all the competencies the NGSS ask for in their host classrooms (Carpenter et al., 2015) because it takes “a considerable effort to embrace this new vision” (National Science Teaching Association, 2013, p. 2) and many schools are still in the process of implementing NGSS-aligned curricula. Consequently, if the NGSS are not yet realized in the field placement context, the science edTPA may evaluate candidates who are constrained by contextual factors when attempting to teach their edTPA lessons.

The edTPA and PSTs’ Learning—Overview of the Literature

As a policy tool designed to professionalize teaching through the standardization of teacher education, the edTPA can be viewed as a catalyst for change by teacher preparation programs, thus influencing what PSTs learn in their preparation courses. Although studies suggest that PSTs’ experiences in the field have an even bigger impact on the teaching of prospective teachers than their preparation courses, the impact of the edTPA on field placement settings is far less researched. In this section, we give a brief overview of some of the relevant literature concerning factors that impact how PSTs learn to teach.

The edTPA’s Influence on PST Learning Through Programmatic Factors

The edTPA was designed to assess the readiness of future teachers for effectively teaching in their own classrooms. However, it is also seen as a measure of how well TEPs prepare their candidates for their profession (Peck et al., 2014). From this perspective, the edTPA has the potential to initiate and sustain TEP improvement by highlighting areas where change may be warranted (Darling-Hammond et al., 2010; Lit & Lotan, 2013; Pecheone & Chung, 2006; Peck & McDonald, 2013; Whittaker & Nelson, 2013). Paugh et al. (2018) report, for example, how one university TEP was tasked to pilot the edTPA in 2010. Faculty took this opportunity to leverage the edTPA requirements and created a new seminar assignment that “encouraged candidates to learn more about their students’ cultural and linguistic backgrounds, individualized special needs, as well as their interests” (Paugh et al., 2018, p. 9). Field supervisors’ and PSTs’ appraisal of this assignment indicated that it opened important, previously unsupported learning opportunities within the program and prepared PSTs for the student-focused aspects of the edTPA. Other TEPs, too, have applied the edTPA as an instrument to support critical inquiry into teaching, learning, and program alignment, which has led to evidence-based improvements (Bastian et al., 2016; De Voto et al., 2021; Kissau et al., 2019; Peck et al., 2014). These programs have established a variety of mechanisms that support the implementation of the edTPA, such as professional development for faculty, PSTs, and mentor teachers, as well as edTPA advisory committees (Olson & Rao, 2017). In these ways, the edTPA can act as a lever for increasing the quality of teacher education. Other literature contradicts such experiences, however, claiming that candidates are not as well served when faculty members’ professionalism is infringed upon by outside directives (Liu & Milman, 2013; Whittaker & Nelson, 2013). This literature suggests that using edTPA results to impose directives on faculty could limit programs’ instructional vision (Lit & Lotan, 2013; Reagan et al., 2016), constrain the syllabi (Kornfeld et al., 2007), and prevent candidates from being prepared for a global society (Au, 2013; Greenblatt & O’Hara, 2015; Sato, 2014). Whittaker and colleagues (2018) respond by arguing that the edTPA encapsulates well-established education research and professional standards and does not promote specific instructional approaches. They also argue that the edTPA guidelines are flexible enough that candidates can design learning segments that fit their individual contexts and include practices that support the learning of their unique student populations.

Cohen et al. (2020) point to the differences between clinical and research faculty’s support of the edTPA. Their research indicates that faculty who directly support PSTs in their field placements are more inclined to see the edTPA framework as valuable for guiding clinical experiences compared with colleagues who are not involved in clinical work.

Learning to Teach in the Field Placement Context

Teacher education programs aim to prepare professionals who can effectively and equitably educate K–12 students. Therefore, in addition to having PSTs attend a variety of general education and subject-specific methods courses, most programs require PSTs to work in classrooms alongside experienced teachers who act as mentors and who can model competent and caring pedagogy, give feedback, and provide emotional support (A. Clarke et al., 2014; Roegman & Kolman, 2020). It is widely noted how strongly these clinical experiences influence PSTs’ learning (Anderson & Stillman, 2013; Grossman et al., 2009; National Academies of Sciences, Engineering, and Medicine, 2020). Darling-Hammond (2014) frames preservice teachers’ clinical experiences as one of the most pivotal components in the preparation of future teachers to plan and teach effectively. However, this part of a PST’s educational journey can also be characterized by lost opportunities when mentors fail to model effective teaching, give limited feedback, and provide minimal opportunity for PSTs to try what they learned in their teacher education courses (Anderson & Stillman, 2013; Valencia et al., 2009).

Coordination between university-level courses and these clinical settings poses an ongoing challenge (Zeichner, 2012) because TEPs have little influence over what kind of teaching PSTs will experience in their mentors’ classrooms. Therefore, the quality of PSTs’ experiences varies widely (Windschitl, Lohwasser, & Tasker, 2021; Windschitl, Lohwasser, Tasker, Shim, & Long, 2021; Zeichner & Bier, 2017). Windschitl, Lohwasser, and Tasker (2021) found, for example, that PSTs’ opportunities for substantive co-planning of lessons with their mentors varied in duration and quality. While some PSTs never saw their mentor teachers plan for upcoming lessons at all, others had the opportunity to gain deep knowledge of planning with guidance from their mentors and learned to design engaging lessons that were responsive to students’ interests, needs, and developing ideas. Such support from mentor teachers is seen as vital for the professional growth of PSTs (Pecheone & Chung, 2006).

Congruence between the instructional frameworks employed in the TEP and the prevailing practices and classroom cultures in field placements also plays an important role in preparing future teachers. Low congruence restricts the opportunities for candidates to engage in principled instructional experimentation and perpetuates delivery-heavy teaching and procedural activities for students (Anderson & Stillman, 2013), thus limiting the candidates’ opportunities to participate in and make sense of practices aligned with research-based teaching frameworks (Windschitl, Lohwasser, & Tasker, 2021). Even more concerning, PSTs in low-congruence classrooms reported that they became proficient in practices they considered inequitable (Windschitl, Lohwasser, Tasker, Shim, & Long, 2021). Other studies, however, provide evidence that candidates can still benefit from experimenting with effective practices even if these approaches differ from the ones their mentors employ (Braaten, 2018; Kang, 2020; Long et al., 2022).

Research also suggests that mentor familiarity with edTPA requirements benefits PSTs (Kissau et al., 2019). Less explored is the question of whether performance assessments can help candidates to shape contextual factors in their placements and consequently the opportunities to practice and learn where the teaching takes place. Bell and colleagues (2019) call the role that school contextual factors play in PSTs’ abilities to develop as educators an “underdeveloped dimension of current research” (p. 52).

The edTPA and Preservice Teachers’ Learning

In programs that require the edTPA as part of the credentialing process, PSTs conduct this classroom-based assessment later in their clinical experiences to demonstrate that they are meeting the instructional needs of their students. In these programs, the edTPA is placed at the intersection of what PSTs have learned in their preparation courses and their takeaways from the classrooms where they practice teaching. Consequently, tensions arise when the instructional visions of the performance assessment, the TEP, and the school context differ (Ahmed, 2019; Clayton, 2018; Meuwissen & Choppin, 2015). The detailed expectations of the edTPA can also feel unrealistic, which may constrain PSTs’ learning. Jones and colleagues (2021) note that PSTs experienced tensions during the edTPA when they tried to interpret what edTPA scorers might expect and when this interpretation led to inauthentic representation of their teaching. For elementary PSTs, the additional pressure of having the edTPA completed within a certain timeframe took away from their efforts to develop their teacher identities (Shin, 2021). Both studies also reference the lack of feedback that PSTs receive when teaching for the edTPA as a lost opportunity for learning.

Other studies, however, report that adhering to research-informed teaching and learning frameworks, such as the ones detailed in the edTPA rubrics, has a positive effect on PSTs’ understandings of their own instructional practices in relation to their students (Chung, 2008), which novices can carry into their future classrooms (Napolitano et al., 2022). A survey by Pecheone and Chung (2006) of more than 1,000 student teachers indicates that by completing such performance assessments, PSTs gained knowledge and skills that are commonly recognized as important for teaching. In another study with data derived from 133 elementary and secondary PSTs, Paugh and colleagues (2018) show more specifically that understanding the connections among planning, teaching, and assessment, as well as recognizing the importance of knowing their students, are crucial aspects of PSTs’ learning from the edTPA. Case studies (Chung, 2008; Huston, 2017; Lin, 2015) reveal how candidates’ concerns shifted from their own teaching toward student engagement by conducting a TPA and how they developed an increased awareness of the need for effective strategies to reach all students, including multilingual learners. In general, candidates’ learning through the edTPA is seen in this strand of literature as a result of the focused attention and guided reflection on effective teaching practices (Athanases, 1994; Chung, 2008; Darling-Hammond, 2014). Still, it is important to note that productive reflection depends on the kind of teaching experiences PSTs can reflect on, and the literature lacks analyses of how the edTPA enables or constrains these experiences.

Learning by Doing the edTPA—A Conceptual Framework

Conditions for Learning From Teaching During the edTPA

The aim of clinical experiences is to create opportunities for PSTs to take increasingly active teaching roles in a setting safeguarded by the mentor teacher so that they can develop the knowledge and skills they need to be successful in their own classrooms. This “coming to know” process can be understood through the lens of situated learning (Greeno & Engeström, 2014): PSTs’ learning in the field is rooted in activity with others (e.g., mentors, students in the classroom) and mediated by tools and culturally defined approaches that guide how these actors participate together in the work of teaching and learning. These activities, tools, and understandings are part of a broader system of social relations produced by and reproduced within communities (e.g., schools and TEPs). If learning is considered an evolving form of membership within such communities, where identity, knowing, and social membership codevelop (Holland et al., 2003; Lave & Wenger, 1991), then we need to recognize that PSTs depend on these communities for how they perform on the edTPA.

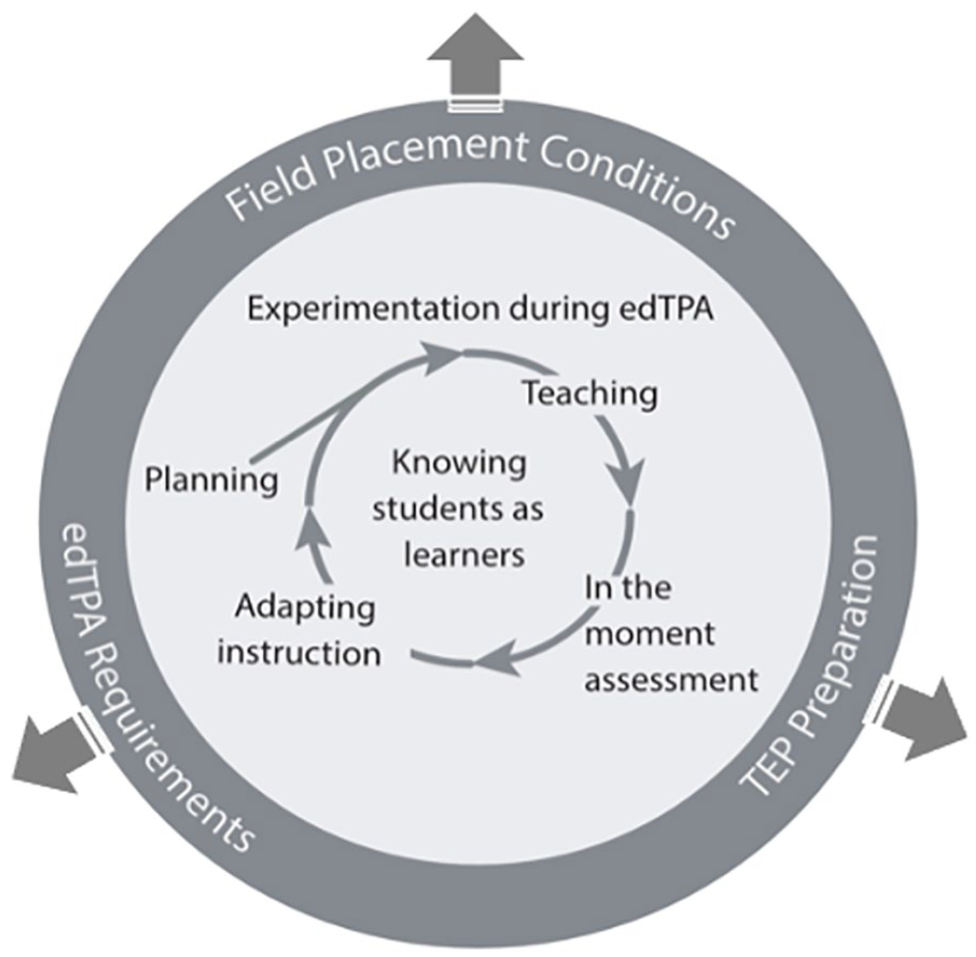

During the edTPA, PSTs are placed amid the expectations of three communities of practice. Figure 1 shows the “Spannungsfeld” (area of tension) that can result when the expectations of these communities, which exert considerable power over the PSTs’ instructional decisions, diverge (arrows pointing away from the outer ring of Figure 1). During their time in the field, candidates need to reconcile what they have learned in their TEP with what is possible in the host classrooms (Valencia et al., 2009). In addition to operating within their schools and TEPs, teacher candidates must also consider the expectations of the wider education community as reified through the edTPA and, as shown in Figure 1, consider what they have learned about their students (the teaching cycle revolves around knowing students as learners).

Figure 1. Conditions that influence teaching for and learning from the edTPA experience.

Experimentation as Foundation for Reflection and Learning

Guided reflection, as required by the edTPA, is widely acknowledged as an important factor in PSTs’ learning (see, for example, Chung, 2008; Lin, 2015). However, reflection on classroom experiences has long been an integral requirement in TEPs, and reflection alone cannot fully explain candidates’ novel opportunities to learn when conducting the edTPA. We therefore argue that it is the quality of the candidates’ teaching attempts that greatly impacts the quality of their reflection and learning. In other words, it is not the reflection itself but the kind of teaching that is possible in the field placement—which is the foundation of such reflection—that influences professional learning the most.

As a summative assessment, the edTPA aims for PSTs to show in their reflective commentaries that they can adapt instruction based on what they came to know about their students. Such reflection in action (Schön, 1983) requires that actors have the flexibility to experiment. This need for flexibility is in accordance with studies of teacher learning that underscore the importance of experimenting with one’s own vision and instructional understanding to learn from reflection and improve one’s practice (D. Clarke & Hollingsworth, 2002; Hammerness, 2006; Kang, 2020; Shulman & Shulman, 2009). Enacting somebody else’s approach to teaching, which is common when PSTs largely mirror the work of their mentor teachers, is less impactful. In contrast, provided that the mentor has created a supportive environment, PSTs’ productive experimentation with their own visions and understandings could result in novel opportunities to learn regardless of whether research-based practices were already the norm in the classroom where they learn to teach (Kang, 2020; Long et al., 2022). The question is: Can teaching for the edTPA provide the opportunity for such experimentation beyond what was previously possible?

The Study

In this qualitative multicase study, we analyzed how 65 secondary science PSTs from three university-based TEPs experienced a high-stakes performance assessment (the edTPA) during student teaching. We employed a subset of data from a larger study that explores the clinical experiences of these PSTs during their entire time in the field (7–9 months) in school years 2015/2016 and 2016/2017 (Windschitl, Lohwasser, & Tasker, 2021; Windschitl, Lohwasser, Tasker, Shim, & Long, 2021). Data from this overarching study informed our contextual understanding of the cases we present here.

Teacher Education Programs

The university-based teacher education programs we studied are located in the Northwest (U1), Southwest (U2), and Midwest (U3) regions of the United States. They were purposefully chosen due to their comparable features. All three graduate programs were early adopters of the edTPA with systems in place that support candidates with the logistics of taking the performance assessment—a support structure that De Voto et al. (2021) define as “high-capacities/high-will” (p. 45). Furthermore, all programs prepare their PSTs for teaching in diverse and high-needs communities and use in their science methods courses an instructional framework for science teaching that focuses on students’ experiences and evolving ideas as the basis for adapting instruction and ensuring equitable participation and deep learning in the classroom (Larkin, 2014; Stroupe et al., 2020; Windschitl et al., 2018). This framework is supportive of the complex and linguistically challenging requirements of the NGSS and promotes formative assessments, such as diagnostic conversations with students, scientific modeling, and the use of exit slips to improve instruction. These instructional practices are supported with tools and discourse routines and studied through classroom video examples. Such practices are often not present in the field placement schools (Braaten, 2018; Stroupe et al., 2020). Because the methods courses under these programs serve cohorts of only 10 to 15 science candidates per year, they can personalize support more than may be the case in larger programs (Cohen et al., 2020; De Voto et al., 2021).

University assignments for PSTs’ instructional work in their host classrooms were also similar. Early in the school year, PSTs were tasked to try short instructional practices, such as checking in with individual students, giving instructions for activities, or conducting exit slips for formative assessment. Later, PSTs were asked to implement a lesson they had prepared with the support of their instructors and peers. Finally, in preparation for the edTPA, PSTs would independently teach several consecutive lessons. The execution of these assignments could vary from using predesigned lessons from their mentor teachers to including their own instructional ideas, depending on the PSTs’ agency, mentor openness, and the congruency of the university and classroom instructional visions (Long et al., 2022).

TEPs can prepare their PSTs for the execution of the assignments but have no authority over the work and experiences that take place in the host classrooms. A lack of communication between teacher educators and mentor teachers in the field (A. Clarke et al., 2014) intensifies this divide. For the participating programs, the TEPs’ expectations and responsibilities for mentor teachers were mostly conveyed in a written document, and there was no training for mentors.

Participants

All science PSTs from two cohorts at the three TEPs (67 individuals) were solicited and offered a stipend that honored participants’ time commitment. All but one member of these cohorts agreed to participate, and another dropped out, leaving 65 participants from whom we collected data. Among the participants, four identified as Chinese American, four as Filipina/o, five as East Indian, four as Latina/o, five as more than one ethnicity, and 43 as White. The majority (48) of the participants identified as women. Seven were first-generation college students, and 14 were first-generation immigrants. All had earned a bachelor’s degree in science prior to entering the program. Potential gate-keeping functions of science undergraduate programs might have limited the application pool to the TEPs participating in this study.

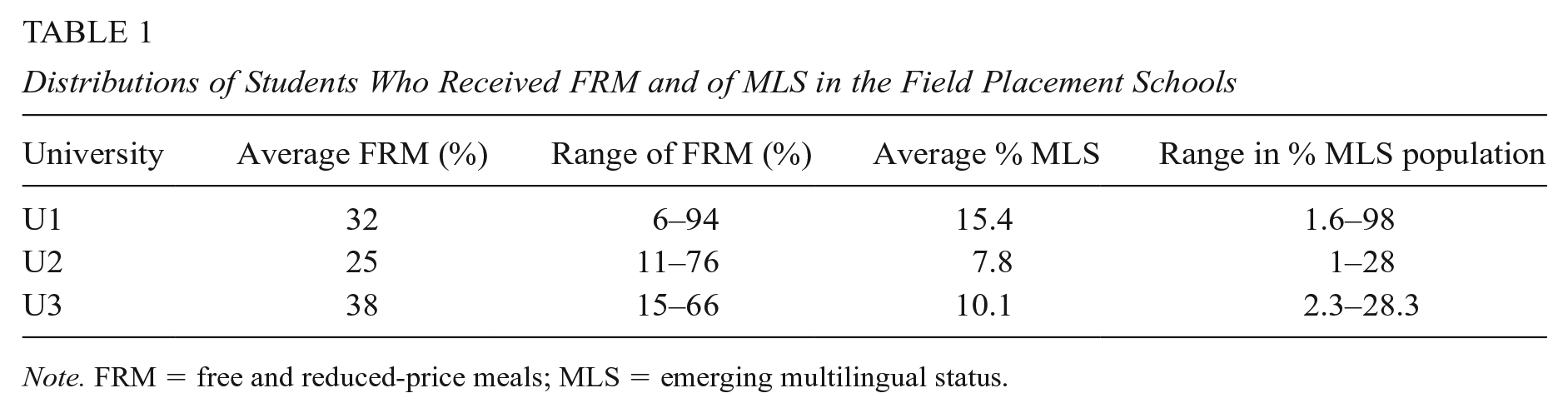

From the beginning of the school year in August until early April (U1) or June (U2 and U3), PSTs were assigned to urban and suburban middle and high schools for their clinical work. PSTs from U1 and U2 stayed with the same mentor teachers, whereas PSTs from U3 transitioned in October to another school for approximately 2 months and back to their first placement in January. A few of these U3 PSTs were placed with a third mentor teacher. The partnering schools served students with wide ranges of socioeconomic backgrounds (based on eligibility for free and reduced-price meals) and emerging multilingual status (MLS), as listed in Table 1. The student population in these communities had become considerably more diverse in recent years. At the time of the study, the NGSS had been implemented to varying degrees in the field placement schools, which created incongruencies between more traditional instructional approaches adopted in classrooms and the NGSS-aligned approaches adopted in the TEP science courses. Researchers were located at one of the participating sites. Our coauthor, Mark Windschitl, was also an instructor for one of the science methods courses. Three of the researchers had been teachers in public schools previously, and all had worked in TEPs in various capacities.

Table 1. Distributions of Students Who Received FRM and of MLS in the Field Placement Schools

Note. FRM = free and reduced-price meals; MLS = emerging multilingual status.

Data Sources

We used a subset of data from a larger study that mapped the clinical experiences of the PSTs over the entirety of their time in the field. The first two interviews enabled us to construct a unique profile of each candidate’s placement and experiences prior to the edTPA (for more detail, please see Windschitl, Lohwasser, & Tasker, 2021; Windschitl, Lohwasser, Tasker, Shim, & Long, 2021). For this study, we focus on Interviews 3 and 4, one of which was conducted immediately after candidates had taught their edTPA lessons. The other was conducted at the end of their clinical placements. Interviews 1 and 2 served as a reference when comparing the learning during the edTPA with prior opportunities. During the 1-hour semi-structured interviews, we asked PSTs to identify actors, events, and conditions that influenced their understanding of practices related to planning, teaching, assessment, and what they knew about their students. In Interview 3, while not asked specifically about the edTPA, PSTs shared extensively their recent experiences of conducting the edTPA. If necessary, we prompted PSTs to elaborate on specific incidents. For example, when a candidate mentioned that they gave feedback to students on their assessments, we probed for descriptions about what that feedback looked and sounded like. During the last interview, candidates were encouraged to reflect on the entirety of their field experiences and in particular on the edTPA and how it might influence their future work as teachers. To corroborate PSTs’ accounts, we interviewed field supervisors and documented their observations of the different school contexts and classroom cultures and their insights into teacher candidates’ opportunities to engage in the work of teaching. Candidates’ edTPA commentaries were also consulted to triangulate data. We were able to interview some, but not all, of the mentor teachers, who largely confirmed the accounts of their mentees.

Data Analysis

For this study, we used transcripts of Interviews 3 and 4 from all 65 PSTs to identify narratives related to the edTPA. These narratives were not always linear and to the point, and as a first step of data reduction and organization, we summarized and sorted PSTs’ answers into categories afforded by the interview questions (Coffey & Atkinson, 1996; Lincoln & Guba, 1985). The resulting interview short-forms included PSTs’ descriptions of and verbatim quotes about (a) what they had learned about their students and how this information influenced their thinking and their practice; (b) opportunities to observe planning, co-plan, plan independently, and receive feedback on their unit and lesson plans; (c) opportunities to observe, co-teach, teach, and debrief with mentors and coaches; (d) opportunities to codesign and design formative and summative assessments; and (e) unique events or changes in the field placement context. The research team then decided for each PST’s interview whether the participant’s comments illustrated new and/or important opportunities to experiment with and learn from teaching practices. Through this last step, we realized that we had numerous cases of PSTs who pronounced their experiences with the edTPA as their main opportunity to try instructional practices they had learned in their methods courses, such as including phenomena in their teaching that they felt were relevant for their students, eliciting and working responsively with their students’ ideas, and designing assessments that made them realize how students were or were not able to make sense of science concepts.

In a second iterative round of analysis for this study, we focused first on the cases from PSTs that provided the most detailed accounts of their edTPA experiences. We “listened” for descriptions about conditions created by the edTPA that allowed these PSTs to experience novel opportunities to practice and learn from teaching, as well as for other emerging themes (open or inductive coding, Merriam, 2009; Mihas & Odum Institute, 2019). In addition, we analyzed how PSTs compared the kinds of instructional practices they implemented for the edTPA with the ways they were involved in teaching prior to the edTPA. During the last interview, the interviewees were prompted to look back on their experiences so that we could also analyze whether the changes the edTPA had reportedly initiated, such as a shift in responsibility for their students’ learning, lasted to the end of their field placements or whether the changes had (partly) reverted to pre-edTPA conditions. Owing to our own experiences and what is described in the literature about the challenges PSTs face when doing the edTPA, we added “tensions” as a theme to actively look for (deductive coding, V. Brown & Clarke, 2006; Mihas & Odum Institute, 2019; Ryan & Bernard, 2003). The work with these more explicit cases primed us to recognize where these themes were expressed in more subtle ways in the remaining interviews and whether they remained relevant across all participants (Merriam, 2009).

We then (re)analyzed the experiences of all 65 participants several times, “searching for similarities and differences by making systematic comparisons across units of data” (Ryan & Bernard, 2003, p. 91), and in the process, we refined and merged codes related to the themes we had identified and defined which descriptions we would not count, such as inferences (e.g., “I think my mentor had never used this strategy”) or general statements that did not provide concrete examples (analytical coding, Merriam, 2009; Mihas & Odum Institute, 2019). This step resulted in the collaborative development of a code book that ensured interrater reliability. To gain a better sense of the prevalence of certain codes, we organized themes and related codes against all participants in a spreadsheet and marked which interviews showed certain themes and specific codes so that we could count the prevalence across our entire data set. However, the quantitative validity of these data is limited because not all candidates went into similar depth about their edTPA experiences, especially if other events were more important during the time of the interview, such as an instance in which the PST and her students were recently affected by a shooting in the community.

Throughout the process of analyzing our data, we constantly compared our analyses and had at-length discussions about each of the cases and about categorizations that were ambiguous or unclear. Although we asked for background and ethnicity data in the first interview, we could not identify any instances in which a PST connected their background or ethnicity with how they perceived their opportunities or limitations on what they could do during their edTPA experiences.

Findings

Looking back over the entirety of their clinical experiences, almost all PSTs recounted opportunities to practice and learn from teaching during the edTPA that were more intense and qualitatively different than their experiences prior to the edTPA. To answer our overarching research questions, we start with findings that we could quantify across the interviews. These findings show that the edTPA furthered novel opportunities for instructional experimentation for participants. We then share qualitative data that show how the edTPA provided opportunities to experiment with instruction. We were especially interested in how the conditions for experimentation shifted during the edTPA and whether PSTs’ instruction was qualitatively different than what they had experienced previously. Finally, we review the types of tensions that arose when PSTs conducted the edTPA.

Who Reports Opportunities to Learn From the edTPA?

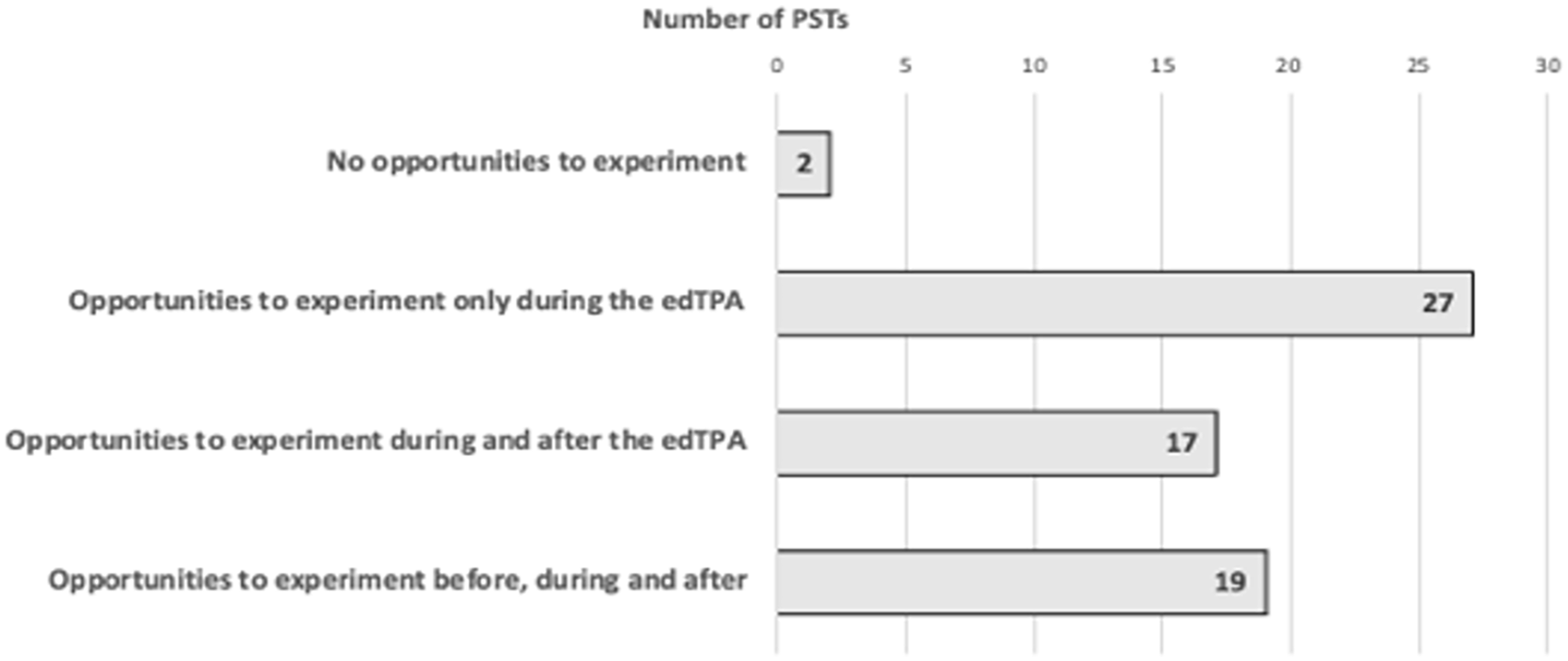

During their edTPA teaching, almost all PSTs were allowed to incorporate practices they had learned in their TEPs into the design of several lessons, teach these lessons consecutively, and assess their students’ understanding of the learning objectives. Only two candidates were required to strictly adhere to the curricula predesigned by their science departments (see Figure 2).

PSTs experiment with the interrelationships among planning, teaching, assessment, and knowledge of students.

In reviewing their clinical experiences, PSTs reported having multiple opportunities to try different parts of teaching prior to the edTPA. However, experiencing how their planning, teaching, assessing, and knowledge of students worked together to influence students’ learning outcomes was rare during this timeframe. Only 19 participants (29%) had already experienced a teaching cycle that allowed them to modify instruction based on assessments of students’ learning. For 17 PSTs (26%), the edTPA was the first opportunity to experience these interrelationships, and their opportunity to design interconnected lessons continued, at least to some degree, until the end of their placements. For 27 participants (42%), the edTPA unit was their only opportunity for such an experience. These data suggest that in more than two thirds of placements (44, or 68%), the edTPA served as an important marker indicating it was time for PSTs to take on more instructional responsibilities. A majority of PSTs (54, or 83%) expressed in their interviews that conducting the edTPA opened novel opportunities to learn. We will explore their opportunity profiles below, but first we examine why the remaining 11 PSTs (17%) claimed they had no new opportunities to learn during their work on the edTPA.

Interestingly, six of these 11 PSTs explained that early in their field placements, their mentors had already involved them in the design and enactment of teaching that showed many features of the student-centered teaching highlighted in their university courses. In addition, they had been able to successfully carry out university assignments that were aligned with the requirements of the edTPA. For example, Tracy (U2) mentioned that “one of the [TEP] assignments . . . was a 3-day unit plan, so it’s very similar to the edTPA.”

Yet even these teacher candidates who reported that they had already experienced opportunities to do learning segments similar to the ones required by the edTPA stated that the “extra effort” involved when conducting and writing up the edTPA helped them improve their practice. They recognized more details in student–teacher interactions; became more aware of the connections between planning, teaching, and assessment; and/or gained deeper insights into the effects of their teaching decisions on students’ learning. We report these findings in more detail in the qualitative section below. As another PST shared, “the only thing” he learned was about his “three focus students and really delving deeply into their thinking process [more] than I otherwise would have” (Joseph, U1). As educators, we consider this detailed examination of students’ thinking a major accomplishment for a novice teacher.

In contrast, the remaining five PSTs who could not recall new insights from conducting the edTPA reported that they felt overwhelmed by the requirements and attributed this feeling to a lack of prior opportunities to plan, teach, and assess in ways consistent with instructional reforms or the edTPA. Some of them, like Conor (U1), saw credentialing as the only merit in completing the edTPA: “I needed to get through the edTPA to graduate.”

These data suggest that the participants who described no new learning from the edTPA fell into two categories: either they were so well prepared that they felt the edTPA did not provide new or different experiences or they were too unprepared to take advantage of the learning opportunities offered by the edTPA. For most of the participants, however, the edTPA experience had major implications for their abilities to experiment with and learn from instructional practices that often diverged from the norms of their placement classrooms.

Novel Opportunities—Linking Instruction Through the Knowledge of and About Students

From the detailed accounts of 54 candidates who stated that the edTPA had provided them with considerable opportunities to learn, we identified what conditions allowed opportunities to emerge and how PSTs used these opportunities to experiment with teaching in ways that were new for them. We organize the findings along a timeline that parallels how the edTPA experiences unfolded for the candidates: preparing for edTPA teaching, experimenting with teaching for the edTPA, and assessing learning and reflecting on teaching. Finally, we exemplify the tensions that arose along the way. All these experiences appear to be linked to candidates’ knowledge about students, and references to this theme are woven throughout.

Preparing for edTPA Teaching

Candidates put significant effort into planning for their edTPA lessons, merging what they had learned in their courses and field placements with knowledge they had gained about their students. Independent and intensive planning was for many candidates (50/65) their first big shift in practice, especially for those whose mentors had neither co-planned with them nor made their planning routines explicit. As Paul (U1) acknowledged, “I pushed myself as hard as I could. Because I felt like everything I put in was worth it because . . . whatever came of it I knew was a result of what I did.” This intensive planning resulted in new and emerging instructional foci related to students’ engagement and learning that were guided by edTPA requirements and could be enacted by including instructional practices PSTs had learned in their university courses.

It was not uncommon for the PSTs to take several weeks to compose their edTPA units, as about half of the PSTs explicitly stated in their interviews (31/65). Robert (U3), for example, started in January to plan the edTPA unit he would teach at the end of March. Until then, Robert had only experienced how to design instructional sequences in the first of his three placements, and he was struggling in his current placement to learn from his “hands-off” mentor who did “more lecturing and worksheets.” We will return to Robert several times throughout this “Findings” section, as his case exemplifies a PST who had very few opportunities prior to the edTPA to learn to teach in a student-centered way, yet he tried very hard to put what he had learned in his TEP courses into practice despite his lack of experience. For his edTPA, Robert chose an instructional sequence on cell division and differentiation. He decided to link the lessons through the healing of wounds as an “anchoring phenomenon” and to start with students sharing stories about their own scars. Robert’s decision was motivated by the edTPA prompt that asked how candidates would use their knowledge of students’ experiences and ideas to inform instruction. He also knew from a survey he had previously administered to his students that many of them were interested in animals. Therefore, Robert used as a contrasting phenomenon the limb regeneration of the axolotl, a “particular species of salamander . . . which is endemic to Mexico, a fact I plan on bringing up” as a nod to his students’ interests and heritage.

Such intensive preparation enabled Robert and other PSTs not only to connect lessons to students’ interests but also to focus on the instructional purpose of each activity, which often remained obscured for the PSTs when they observed or used lessons that were predesigned by their mentors. Rachel (U2), for example, commented, “I have been . . . more deliberate in my planning since the edTPA, and everything I have my students do has a specific reason behind it that’s going to help them meet their learning goals that I set.” By creating a learning sequence herself, Rachel had recognized the importance of aligning learning goals, activities, and students’ abilities to construct new understandings. She was one of several participants who mentioned the importance of paying close attention to the purpose of each activity and its role within the larger context of instruction and students’ learning as a key takeaway from the edTPA.

Furthermore, when candidates were required to show evidence for the edTPA that they knew what accommodations their students needed, they started to realize the implications of this knowledge for their own teaching. Even when their programs had already asked them to explore their students’ backgrounds, plans for special education, and English proficiency status, this information had seldom been used to inform instructional decisions in their host classrooms. Based on their inquiry into students’ backgrounds and individualized education plans, PSTs started to translate—often for the first time—such knowledge into actionable plans for modifying their lessons to intentionally accommodate their students. Sylven (U1) made clear that the process of writing down his plans for the lessons encouraged him to be more specific about what instructional support certain students would need:

I would say the biggest thing was thinking about the accommodations and writing out the accommodations instead of just being like, “Oh, I’ll do this.” I’m going to actually create a handout that I pass out for these specific ELL students and these specific students that was like a support sheet. That, I think, is the biggest alteration I learned in planning.

In summary, planning independently and thus becoming responsible for their students’ learning was a valued experience for our participants at this point in their clinical experiences. Such intensive planning prepared them for experimenting in their edTPA lessons with the practices and strategies they had learned in their university courses and keeping their students’ ideas and experiences foregrounded while doing so.

Experimenting With Teaching

During the edTPA, PSTs could try out approaches and strategies not previously used in their classrooms, as Desiree (U2) stated: “[My mentor] gave me the freedom to do what I needed to do to meet the [edTPA] rubric.” The PSTs often took this opportunity to inquire into students’ thinking and act on students’ ideas. Although university assignments had already focused on different parts of the instructional cycle, being responsible for all aspects of teaching made many PSTs (31/65) realize the interconnectedness of planning, teaching, assessment, and their knowledge of students. “With this edTPA, it was the first time where everything was all combined together from beginning to end” (Desiree, U2). Furthermore, attaining sole responsibility for students’ learning made PSTs evaluate their own teaching skills more realistically. As Gabrielle (U2) described, “I think I interpreted them [students] as doing more reasoning than they actually did.” This new responsibility also helped the PSTs identify their own strengths and areas in need of growth.

Implementing New Approaches to Teaching

The desire among PSTs to incorporate new approaches into their teaching came with unexpected benefits. They found themselves energized to think and act as teachers. Luke (U2) mentioned,

Once I’d planned it all, I’m like, “Oh, I’m really excited to actually teach this because it’s my own thing; I’ve done it from start to finish. I want to see how it goes.” So, I think that was a difference, rather than just using something that my teacher’s used, and he kind of knows what’s going to happen. So, definitely, I was more invested in it because I’d sort of seen it through from start to finish.

Robert (U3), too, felt encouraged to try various strategies that neither he nor his students had experienced in his mentor’s classroom. For example, witnessing how student participation shifted during group work allowed Robert to identify which aspects of this instructional approach were successful:

It was an opportunity to teach in a more [scientific] model-based type of unit, which I hadn’t really done before. . . . So, there was one day that actually worked surprisingly well. We did like a jigsaw activity, and that’s definitely something that I want to incorporate more, because I noticed that I got 100% participation, which, especially for the fourth-hour class that I was filming in, is rare. I’ve got a lot of students who don’t have a whole lot of motivation to come and learn, but I notice that when I put some emphasis on the fact that you are helping out your group—you are the only person who’s going to be getting the information on this topic, and you’ll be the one to present it back, the emphasis was on: you’re responsible to your group and not responsible to me—there was a lot higher participation.

Occasionally, implementing new approaches to teaching required a change in the physical setup of the classroom (3/65). In Robert’s case, his mentor’s primary teaching mode was, in his words, “delivering of information.” This approach was reflected by the arrangement of desks in rows, with students working alone. For his unit, however, Robert (U3) rearranged the classroom so that “all of the tables were arranged in little clusters of four students” and students could work in small groups. Although at first glance such change may seem minor, rearranging the classroom requires a major shift in who is in charge of instruction. In Robert’s case, the edTPA allowed him to take on this charge, and it impacted what his students could accomplish.

Prior to the edTPA, participants were often frustrated by not having the time to teach in a way that they saw as necessary for students to deeply understand the content. In contrast, PSTs were generally allowed to finish their edTPA units at their own pace. Especially in departments where the curriculum was preplanned and meticulously paced, the edTPA was the only opportunity for candidates to vary from a predetermined timeline. As Gita (U2) explained, being free from the constraints of pacing was a highlight of her edTPA experience:

I think my struggle with planning is the time limit that you get for a unit. I feel like 2 weeks is not enough to cover ecosystems, especially in 49-minute classes. So, I loved that I got the freedom [through the edTPA] to plan whatever I wanted.

However, this more independent way of teaching also came with unexpected challenges, such as instances when the timeframe given by the edTPA (three to five lessons) was insufficient for attending to all aspects of the rubrics or when students engaged in unanticipated ways with unfamiliar forms of instruction. We will attend to these tensions in more detail below.

Adapting Teaching Based on Students’ Ideas

The investment in detailed planning allowed PSTs to elicit and explore students’ thinking throughout their lesson sequences. Consequently, 34 of the PSTs described explicitly how their knowledge about students informed their planning, teaching, and assessment and vice versa, leading to experimentation with adaptive teaching. Robert (U3) found that he needed to be responsive to what students were able or not able to understand and modify his instruction accordingly:

I did learn how difficult it could be if you are trying to build each day on the previous day. There was one day [that] didn’t go super great. So, then it was, “OK, well, I originally planned to do this tomorrow, but to really benefit the students, I’m going to have to switch it up.” So that was a good learning opportunity in terms of making sure that if I really want the students to get this by the end, I can’t just stick with my original plan. . . . Just that extra emphasis that it’s not about what I had planned. To me, originally, my plans seemed great. But it’s not about that the plans seemed great. But it’s about what the students are actually getting out of it.

This interrelationship between planning, teaching, and students’ understanding only became clear to Robert when he had ownership over all components of his instruction—prior university assignments mostly focused on only one (planning or assessment) or two (planning and teaching) aspects of instruction. For novices, basing instructional decisions on knowledge about students is an important skill to develop, and the edTPA gave some PSTs an initial opportunity to hone this skill during their field placements. Nicole (U1) said it this way:

And [students] informed my teaching in so many ways just like that. They will just take a lesson in a certain way, and I have learned not to resist that until I can harness that and just go with it. And I think that applies to outside of the edTPA and after the edTPA too.

Assessing and Reflecting to Inform Instruction

The edTPA required candidates to design assessments to inform subsequent teaching. Consequently, in place of short-answer test formats (“Before I did my edTPA, all of our tests were multiple-choice, completely!” Robert, U3), most PSTs (54/65) reported moving toward assessments that encouraged students to think more deeply about the content. Aki (U2) realized that this approach to assessment was an area where she needed more practice because of the additional layers of complexity when designing and analyzing such tests:

When [students] do things like guided notes or taking notes or worksheets . . . there’s really just a correcting process that if you got the right answer, good. If you didn’t, then correct it with this . . . With the edTPA, you can’t use an assessment that’s just correct or incorrect response. So, really, there has to be layers to it . . . It has to involve the skills-based things as well as content, and how those two interact. Whereas before it might have been more content-based, you could say . . . [It was] fun to me to pick out the patterns and see what students are or aren’t getting or what they need more help with there.

Aki referred to NGSS science practices as “skills-based things” in which students need to gain proficiency in addition to understanding the content of a subject. She noted that assessments need to “engage them,” contrasting this approach to the mere recapitulation of content.

Most PSTs had not previously been asked to create assessments that could provide feedback to students and help tailor instruction to students’ needs. In many instances (34/65), the assessment portion of the edTPA (e.g., Rubric 11: “How does the candidate analyze evidence of student learning related to conceptual understanding, the use of scientific practices during inquiry, and evidence-based explanations or reasonable predictions about a real world phenomenon?” [SCALE, 2018, p. 32]) helped candidates identify not only what students understood but also how this understanding was connected to their teaching:

Because when we reflected on things [test results] before in a [university] class, it was kind of like we would talk about, “These students got it, and we can tell because . . . and these students didn’t really get it, and we can tell because . . .” But with the edTPA . . . we had to actually analyze every student’s work and show not just if they got it or not, but what they did get and why and talk about what we did in our videos that helped that or didn’t help that. (Sophie, U2)

Sophie acknowledged that she had not previously considered why students performed in a particular way on assessments. Furthermore, she had initially thought that a sophisticated rubric would clearly communicate her expectations for students and support their performance. However, when her students did not meet these expectations, Sophie needed to analyze the results and determine what they revealed about her teaching and her students’ learning needs:

I just gave them that worksheet [as an assessment], but it was more critical thinking, where the worksheets they normally get are more multiple-choice-type things or fill-in-the-blank . . . And the one I gave them was more, “Why does this happen? What causes it? Explain this process” . . . But even the highest scoring one wasn’t good, in terms of my rubric. They’re not very good at finding evidence. I realized that.

Switching from short, known-answer prompts to open-ended questions revealed that Sophie’s students had not met the given standards. As Sophie described, both she and her students were unprepared for an assessment that required new ways of thinking, like using evidence to support an explanation (one of the NGSS science and engineering practices). Nevertheless, the assessment results gave Sophie the opportunity to learn about her students’ struggles and potentially adapt her future teaching.

Similarly, Helen (U1) took the time to develop a detailed rubric and give feedback to all students. This approach enabled her to attempt, and learn from, a best-case scenario:

I ended up spending a lot of time coming up with a grading scale rubric . . . At one point, my mentor teacher was like, “If this were mine, I would just say it were two points per box and sort of check it all off.” But I was like, yes, but– so I went home and made a rubric, and she said, “Wow, this is great. Teachers don’t normally have time to do this, but this is how we should grade it.” This is essentially the feedback she gave me, and it took me a long time to give every student feedback, but I also know that that is the way they are going to learn.

The quote above also alludes to the frictions we saw in many other cases. While mentor teachers may have acknowledged the value of the candidates’ work, candidates came to realize the gap between a “best-case scenario” for preparing assessments and the time pressure under which teachers typically operate.

Trying out new forms of (formative) assessment that included students’ reasoning and explanations not only gave PSTs more insights into their students’ thinking but also helped them see how assessment depends on both planning and teaching, as Vanessa (U3) explained:

I think it really helped me see what is important to think about in terms of planning everything else when you plan your assessment. I got myself in a situation where I was going through things and then forgetting, like, “Oh, shoot! I have to assess them– I want to assess them on this, so . . . how do I present all of my other stuff in a way that when they get to the assessment it’s not going to be like they don’t know how to do it?” Or there’s [sic] other barriers to them getting their ideas out that I should have helped scaffold days earlier. So, it helped me see assessment as a bigger picture thing . . . that feeds into all these other things, like planning and implementing and other practices.

As reflected by Vanessa’s comment, purposefully planning, implementing, and analyzing assessments helped candidates to make sense of instructional practices and support (Kang, 2017) and enabled them to complete the planning, teaching, and assessment loop the edTPA is designed to evaluate (Paugh et al., 2018).

Tensions When Teaching for the edTPA

Transitioning to more ownership over their teaching was not always a smooth process for the PSTs. Three reasons for tensions related to the edTPA became evident: (a) the power dynamic between mentors and mentees was challenged; (b) PSTs, as well as their mentors and students, struggled with unrehearsed implementation of new instructional approaches; and (c) PSTs saw their learning during the edTPA curtailed by efforts to comply with requirements of all 15 edTPA rubrics within an impossibly short learning segment. In addition, a PST who taught in a nonstandard context, an English immersion school, found it difficult to convey how she had fulfilled the edTPA requirements.

The edTPA obliges candidates to work independently; mentor teachers, together with department policies, are asked to step back. Instead of being “a guest” in the mentor’s classroom, novices become Teachers of Record. This was one of the main changes in the field placement condition stipulated under the edTPA that influenced what PSTs could do and learn. Such an imposed shift in responsibilities did not come without tensions, especially when this shift was sudden and stark. In the extreme, the edTPA changed the power dynamic in the classroom in a way that allowed the PSTs to implement instructional practices not sanctioned by their mentors, as became evident in Kala’s (U3) case:

I was doing my . . . edTPA unit. I would let her [the mentor] know what I was doing prior to the lesson as a courtesy, like, “This is your classroom, and I’m kind of a guest here, so this is what I’m doing.” And she would say things like, “I’m very frustrated that you’re doing this. I don’t agree with this strategy,” and she would let me know that she doesn’t necessarily agree with what I’m doing. So, she said that the only reason I was able to do it was for the edTPA, and that after the edTPA, she would be taking over planning again or co-planning with me, because she didn’t necessarily like what she saw.

Some tensions arose when participants tried instructional approaches with which they and their students had little experience. In these situations, mentors found it hard to give up control of the classroom. When candidates struggled to be effective in their teaching, some mentors tried to maintain influence over what was taught and how. Naomi (U1) described these tensions in the following way:

I feel like the reason she might still be having—like, she wants a lot of the control—is because some of the things I’ve done were not very successful. Which is very true, but it’s like, I have to be able to try it out and then reflect on it and then think about how I’m going to change it to make it better next time. So, if you never let me try something, then I can’t, you know, I can’t just magically pretend I know how it’s going to go.

Naomi realized her own limitations and the competing interests in the classroom: teaching students well and effectively via the mentor versus letting the candidate gain the experiences they need to teach their own classes in a proficient manner. The edTPA helped push the pendulum toward the latter. As Agnes (U3) explained,

I think it forces your cooperating teachers to lose control for a while. Like, the edTPA was like, “I have to do this; this is what the edTPA wants.” And through doing the edTPA, I think my teacher liked what she saw me do during the edTPA, and now I’m doing more of that now.

Students, too, needed to become familiar with new expectations, strategies, and tools. Abrupt shifts in instructional routines during the edTPA caused disruptions for students’ learning and well-being (Bjork & Epstein, 2016). A candidate (Diego, U1) who successfully completed his edTPA gave this advice for future PSTs:

I had actually done a summary table [a form of whole-class sense-making discussion] a couple months before. So, they [the students] already had solved that. So, if you talk to any edTPA people that need to do that, make sure they practice those things before. That is so important. Because, you know, it didn’t take me very long to explain it: “Hey, we are back at our summary table” . . . They knew how to do that quickly. This time around with the summary table, which wasn’t a part of my [mentor’s] instruction . . . That went a lot smoother, and they [students] were more involved and could contribute.

Unfamiliarity with instructional approaches became even more challenging for the students when it involved a shift in assessments. In Robert’s (U3) classroom, students had so far only experienced multiple-choice tests. He switched to short-answer questions, a seemingly small change that nevertheless bewildered his students:

Then they had a quiz at the end, as well. I got a lot of pushback from that because it was not the multiple-choice test they were used to. One student called me a “terrorist” for not giving him a multiple-choice quiz.

Tensions also stemmed from the edTPA requirements themselves. Implementing what the 15 edTPA rubrics lay out in detail within a learning segment that is supposed to take “3 to 5 hours of connected instruction” (SCALE, 2018, p. 1) is an almost impossible undertaking, especially in the sciences (Brownstein & Horvath, 2016). Many PSTs considered these expectations unreasonable:

I think one of the big takeaways is I tend to put in too much content and too many standards. But I was trying to get through the hoops of the edTPA, so I’m not sure I would do that if I didn’t have that extra burden. (Blaine, U1)

Besides planning and implementing teaching within such a short window of time, writing the edTPA commentaries added more pressure on PSTs. For candidates who were not skilled writers, this was a stressful exercise. Other candidates who had minimal time left in their classrooms after completing the edTPA (U1 field placements ended in April) were challenged to prioritize writing over teaching. The demands on time and energy imposed by the edTPA were severe for all candidates.

Further tensions arose for one of the candidates, Nicole (U1), who taught in a school exclusively for students who had recently immigrated to the United States. While her video footage for the edTPA showed the extraordinary gains these students had made in engaging in whole-class discussion, their performance did not compare well with that of students in more traditional classrooms. Nicole shared,

I think another challenge that we both [Nicole and another PST] were encountering with the edTPA and I think our scores ended up showing that is they—our students don’t look like regular students . . . They don’t speak as eloquently, but I know them, and she knew them, and so what we saw and could hear on the video was monumental, and I don’t think that conveys itself . . . You can put as much into your context for learning, but the reality is that one of those students is 20 years old and another one of them has interrupted educational experiences and has these crazy misconceptions about biological concepts, and you can’t understand that as an edTPA grader.

It is a challenge for PSTs who teach in alternative schools, where the demands of teaching and expectations for learning are different from regular public schools, to convey their instructional competence to edTPA scorers who are unfamiliar with such settings and may therefore not grasp the challenges that novices and students face. This illustrates the normative character of the edTPA, which becomes especially problematic when PSTs are placed in schools that serve marginalized communities where successful teachers are the most needed.

Discussion

Sixty-five PSTs from three teacher education programs recounted how they had planned for, implemented, adapted, written about, and struggled with their edTPA lessons and described how these experiences compared with those prior to the edTPA. Our data suggest that the edTPA has the potential to create teaching spaces for PSTs to engage in responsive and equitable practices that might not be the norm for their host classrooms. We found that such spaces allowed PSTs to aim for prioritizing student-centered teaching, designing appropriately challenging learning tasks, eliciting and using students’ ideas, and providing multiple ways for students to show what they know. This finding was especially important for PSTs in host classrooms where some of the TEP-promoted practices that they implemented were uncommon or even discouraged (see also Ahmed, 2019; Braaten, 2018; Napolitano et al., 2022). We suggest that protected teaching spaces not only allow novices to enact pedagogy that reflects research-informed practices before they encounter the complexities of their first year of professional service but also enable mentors to observe and perhaps learn from the approaches that their mentees are trying. What is less clear, however, is whether these rich opportunities to independently practice all aspects of teaching are best supported through state-level policy (as with the edTPA) or whether program-specific capstone teaching assessments could have the same effect. A third alternative, simply “taking over” a class for a period of time without any clear expectations, risks having novices reproduce status quo pedagogies that constrain opportunities for student sense-making and decouple curricula from students’ lives and ideas.

In the following sections, we address our research questions about the role of the edTPA in helping PSTs secure unique opportunities to practice and learn from teaching in their field placement classrooms, the conditions that enabled these opportunities, and the quality of teaching PSTs were aiming for in their edTPA lesson sequences. We also discuss the tensions PSTs experienced when implementing edTPA lessons in their field placement classrooms.

The edTPA Protects Teaching Spaces

The vast majority of PSTs reported that the edTPA opened new and qualitatively unique opportunities to learn from enacting research-based practices with students, despite the pressures that the performance assessment added to a schedule already filled with teaching and university coursework responsibilities. Such novel opportunities to practice were especially important for those who rarely had chances to co-plan lessons and assessments with their mentors prior to the edTPA or in situations where mentor routines in the classroom were inconsistent with practices supported by TEPs. Even for those who were given opportunities to practice prior to the edTPA, their enactments of the different facets of teaching were often piecemeal, meaning that the holistic nature of the instructional enterprise, from getting to know students to planning the assessment of learning and the adaptation of instruction, remained unexplored (Windschitl, Lohwasser, & Tasker, 2021; Windschitl, Lohwasser, Tasker, Shim, & Long, 2021).

What changed because of the edTPA? For one, it was understood by all actors that the novices were solely responsible for their teaching during the edTPA, and thus for the learning of their students. This shift in responsibility caused candidates to commit extraordinary efforts (“I pushed myself as hard as I could”) toward planning instructional units that embodied principles described in the edTPA rubrics. This level of effort was not always the case for performance tasks assigned through TEP courses, especially when other responsibilities competed for time and attention. We assume that this change in conditions was caused by PSTs’ determination to protect their own professional work and emerging identities so that they could both successfully support their students’ learning and pass the edTPA. This commitment matters because many PSTs realized in the wake of the edTPA how positively their preparation impacted their students’ engagement, especially in diverse classrooms. As Kang and Zinger (2019) highlight, PSTs’ increasingly skilled interactions with a wide range of students are an essential factor in developing equitable teaching practices.

Because the edTPA is meant to assess novices’ readiness to teach, some K–12 departmental policies in the host schools, such as strict curricula pacing across classrooms and common assessment regimes, were put on hold. This shift created the space for PSTs—sometimes quite literally when they were able to reorganize classroom seating—to reconcile resources and learnings that three communities of practice (the host school, their TEP colleagues, and the broader education community whose research-based practices are codified in the edTPA rubrics) had provided them. This opportunity was especially impactful for PSTs who were used to following, even mimicking, established mentor routines and well-rehearsed curricula in their classrooms. Even when these curricula were consistent with research on student engagement and effective practices, PSTs reported that trying this kind of teaching on their own added new skills and knowledge to the instructional repertoires they hoped to use in the future.

Finally, edTPA rubrics guided PSTs’ careful preparation and execution of their learning segments while allowing flexibility for their school contexts. In many cases, these rubrics encouraged and even emboldened candidates to try out new strategies they had learned in their TEPs but had never attempted before in their classrooms (similar results were reported by Ahmed, 2019). Even when the novices knew that their enactments of research-grounded practices were “clunky,” the requirements moved them out of their comfort zones so that they were able to learn from their students’ responses to these practices (e.g., asking students to represent and explain their thinking versus looking for the right answers, engaging learners in meta-cognitive reasoning, substituting assessments that pressed for authentic disciplinary thinking in place of multiple-choice tests). It is noteworthy that PSTs’ risk-taking was in almost all cases facilitated by a supportive and safe classroom environment that mentors had created, regardless of how congruent their vision of teaching was with that of the TEPs and the edTPA. We believe that a combination of sufficient preparation by the TEPs, supportive environments in their field placement classrooms (regardless of the mentors’ own practices), and the push for experimentation with instructional goals as outlined in the edTPA rubrics created the most productive conditions for PSTs’ opportunities to learn when working on their credentialing assessments.