Abstract

Trace-based measurements in self-regulated learning (SRL) require a careful balance between context and generalizability, while ensuring robust validity. This review of 58 theoretically grounded articles addresses three prevalent issues in SRL: contextualization, generalization, and validation. We seek to objectively define a context and examine how the design of software systems can facilitate generalizable trace capture and alignment of theoretical constructs. The learning environment, task type, and task duration are the key factors to consider while defining a context. We identify four categories of trace data useful for informing learning system design. Theoretical generalization is demonstrated by mapping trace data to constructs from two distinct SRL models—Zimmerman’s and Winne & Hadwin’s. We highlight that multichannel and multimodal data help capture enhanced trace data, while continuous measures, such as think-aloud protocols, are better suited than questionnaires to validate traces. We outline what validation in trace-based research should entail from the perspective of a modern validation framework and provide the best practices for balancing contextual depth with theoretical generalization in SRL research.

Introduction

Self-regulated learning (SRL) is a prominent construct of human learning that has been extensively researched in education (Panadero, 2017; Saint et al., 2022; Viberg et al., 2020; Zhang et al., 2021), and understandably so. Self-regulated learners are likely to succeed academically and are more hopeful about their future (Zimmerman, 2002). The importance of SRL has been highlighted in lifelong learning as well; children and younger people with higher SRL skills are more likely to succeed than those with lower levels of self-regulation (Dignath & Büttner, 2008). From a motivational perspective, good SRL skills correlate with higher academic motivation and effective learning ability (Johnson et al., 2023; Pintrich, 2003).

In modern digital learning systems, SRL is often studied using a form of digital interaction data commonly referred to as trace data. Schraw (2010), in his taxonomy of measurements, lists trace data as an unobtrusive method of measurement that can be collected during active episodes of learning without consciously alerting the learner. In general, traces are observable evidence of learners’ self-regulatory processes generated in real time as learners work on a learning task (Howard-Rose & Winne, 1993). The generation of trace data is approximately synchronous with the cognitive operations that the learner applies to the information in working memory (Winne, 2010). For these reasons, trace data has been put forward as a modern means to unlock inferences about learners’ SRL.

One fundamental challenge that accompanies the measurement of SRL is the scope of context. Self-regulation is highly contextual (Winne, 2010), and students’ use of self-regulatory processes differs based on their learning environments (Viberg et al., 2020). While current research has started to explore what to trace (Viberg et al., 2020) and the methods to analyze SRL, these exploratory studies need to translate to confirmatory studies for testing theoretical models (Dawson et al., 2019; Winne, 2014). We require robust findings that can be investigated and that can eventually be sustained in multiple learning setups (Winne & Baker, 2013). We need to look for commonalities that exist between learning environments and how we can capture equivalent learning traces across different environments. Striking the right balance between contextualization and generalization is a requirement of the current time (Du et al., 2023; Quick et al., 2023). Although a number of systematic literature reviews have been put forward in the last decade focusing on the measurement of SRL using trace data (Du et al., 2023; H. Min & M. Nasir, 2020; Saint et al., 2022; Viberg et al., 2020; Wong et al., 2019), we have yet to identify themes of generalization across learning contexts. The closest one addressing this question is the one published by Du et al. (2023), which attempts to answer how indicators of SRL have been operationalized by trace data–based studies and the associated challenges. Their review found generalization and validity as two of the three major challenges in the measurement of SRL using trace data. The review by Viberg et al. (2020) is another relevant one, which was directed toward finding out the current state of research in the field of learning analytics to measure and support SRL in online learning environments. They identified that fewer studies have examined the reflection phase of SRL as compared to the forethought or performance phases. 
While most research has been directed toward the measurement of SRL rather than supporting it (Viberg et al., 2020), we are still far from identifying themes of generalization across studies in trace-data-based SRL research. Indeed, some efforts toward distinguishing generic SRL processes that researchers measure in SRL have come up (Du et al., 2023), but a lack of theoretical grounding in the field presents a hurdle to such efforts (Matcha et al., 2020). To address these challenges, we have kept theoretical alignment as a primary requirement for studies to be included in our current review. The review by Saint et al. (2022), on the other hand, focused on temporally focused analytics in measuring SRL. They primarily focused on the theoretical underpinnings of these studies and the choice of methods for the temporal modelling of SRL. They found several studies that combined constructs from different theoretical models to model their traces, as opposed to choosing a single theoretical model. The validity of measurement protocols in different study contexts, the generalizability of the findings, and the provision for triangulation with other measurements once again emerged as some of the areas that require emphasis in future studies.

Although previous literature reviews have acknowledged the issues surrounding generalization and contextualization in SRL, none have attempted to address them. To address this challenge of generalizability, the current systematic review compiles operationalizations used by researchers to represent higher-level SRL processes, supplemented with information about the learning contexts in which they were collected, thereby emphasizing the relevance of the context in each study. We performed a coding of traces across two well-known theoretical models in SRL, demonstrating the possibility of theoretical generalization and how combining theoretical perspectives from multiple models can enrich traces of SRL. We categorized traces based on their usage in a context, which can help learning system designers operationalize SRL with more informed traces. We also reiterate the issue of validity in SRL measurements, compile the contemporary ways of triangulation and validation, and offer our recommendations for the same. Researchers who work on challenges related to the interpretation of traces and the SRL constructs they might represent while setting up digital learning systems to effectively measure SRL will find this literature review particularly useful.

Background

Self-Regulated Learning and the Need for Theoretical Grounding

Self-regulated learning is a theoretical umbrella that encompasses cognitive, metacognitive, behavioral, and affective aspects of learning (Panadero, 2017). Over the past decades, researchers have introduced several theoretical models to conceptualize and understand SRL (Boekaerts, 2011; Efklides, 2011; Pintrich, 2000; Winne & Hadwin, 1998; Zimmerman, 1986). Puustinen and Pulkkinen (2001) and Panadero (2017) identified that these SRL models comprise three fundamental phases: (a) a preparatory phase, where the learner analyzes the task and sets personal goals and plans; (b) a performance phase, where the learner performs the task and monitors their progress; and (c) an appraisal phase, where the learner assesses their attainment of set goals and refines their plans for further iterations of the task. These phases are loosely cyclical and recursive in nature, and each phase contains its own sub-constructs in its respective model (Bernacki, 2017; Panadero, 2017). The conceptual differences among these models arise primarily from the different aspects of self-regulation that their proponents emphasize. For example, motivation receives primary emphasis in Zimmerman’s model (Zimmerman & Moylan, 2009) and Pintrich’s (2000) model, while affect or emotion is of special focus in Boekaerts’s model (Boekaerts, 2011) of SRL (Panadero, 2017). When choosing a theoretical lens for empirical research, it is worth keeping in mind that each of these models provides an alternative view from which to observe and understand the same learning process. The choice of a theoretical model should thus be motivated by the points of emphasis of the particular model and how well suited it is to a particular research context or population of learners (Panadero, 2017).

A popular contemporary approach to capturing SRL from an event perspective (Winne, 2010; Winne & Perry, 2000) is trace data, where researchers attempt to represent SRL constructs using the actions of learners (Winne, 2010). This fundamental challenge of mapping logged digital actions to higher-level educational constructs is sometimes referred to as going from clicks to constructs (Buckingham Shum & Deakin Crick, 2016). Most trace-based studies in SRL involve capturing these low-level clicks and aggregating them into proxies for their approximately synchronous SRL processes (Greene & Azevedo, 2010; Winne, 2010), with the assumption that a complete coding of the traces in a study will be adequate to comprehensively encompass the underlying theoretical model(s) to which the learners’ self-regulatory behaviors conform (Greene & Azevedo, 2009). This top-down mapping of higher-level SRL constructs to lower-level clicks has been at the forefront of research into SRL in recent years, and several trace-based methodologies have emerged in a variety of contexts. Some of the most notable ones were pioneered by Winne et al. (Bernacki et al., 2012; Hadwin et al., 2007; Jamieson-Noel & Winne, 2003) in reading, Azevedo et al. (Greene & Azevedo, 2009, 2010; Taub & Azevedo, 2019) through think-aloud studies on learning about the human circulatory system, Aleven et al. (2006) in a math tutoring environment, Siadaty et al. (2016a, 2016b) in the workplace, Biswas et al. (Paquette et al., 2020; Roscoe et al., 2013) in an intelligent tutoring system, Saint, Gašević, et al. (2020a) and Saint, Whitelock-Wainwright, et al. (2020b) in an online course, and Fan and colleagues (Fan, Lim, et al., 2022a; Fan, van der Graaf, et al., 2022b; Fan et al., 2023) in reading-writing tasks.

It has been repeatedly emphasized that for trace-based research to progress, it is essential to have sound theoretical grounding (Dawson et al., 2019; Rovers et al., 2019; Winne & Baker, 2013). Measures in SRL should be closely aligned with the underlying models, which was often not the case in the review reported by Rovers et al. (2019). This may have contributed to the apparent lack of robust findings that empirically demonstrate benefits in promoting learning in all students, as noted by Winne and Baker (2013). While there has been considerable progress, the lack of refinement of and feedback into theories remains noticeable (Dawson et al., 2019). Dawson et al. (2019) posited that research in learning analytics is supposed to move through a series of maturation phases. In its most mature phases, learning analytics research is supposed to find its way into large-scale projects along with theory building and refinement. However, although there has been a surge in studies focusing on analyses, there has not been a proportionate contribution in terms of application in practice and theory building (Dawson et al., 2019). To overcome this gap, there is a need for a purposeful shift from strictly exploratory models to a more holistic approach to test out theoretical models (Dawson et al., 2019; Winne, 2017). In an effort to make such a purposeful shift, this review kept theoretical grounding as a primary criterion for the selection and review of articles.

Measuring SRL—Trace Data and Associated Challenges

Trace data allows measurement of SRL at a much finer granularity compared to other measures (Rovers et al., 2019). There are several other benefits of trace data as well: (a) Trace data can be captured reliably (Winne, 2020); (b) trace data collection is unobtrusive and does not interrupt a learner’s natural flow of activity; (c) trace data can capture finer temporal changes in learners’ self-regulation, unlike questionnaires (Winne, 2010); (d) trace data can be captured from ecologically valid, real-world learning contexts; and (e) trace data collection is scalable to large, real-world educational settings. A typical trace-based measurement protocol involves abstracting the raw computerized behavioral trace data and aggregating it to represent higher-level SRL processes. This aggregation of raw data can be done either by finding a summary measure of actions over time or by combining sequences of actions (B. Chen et al., 2018). For instance, the proportion of course units accessed before deadline has been interpreted to represent time management SRL process (Q. Li et al., 2020), and the learner reading the general task instructions/scoring rubric of the task, then navigating to read a page relevant to the goal for the first time, was interpreted to represent orientation (Srivastava et al., 2022). Researchers typically hypothesize a list of learner actions or a list of sequences of learner actions that are representative of SRL processes in their respective research contexts. In this review, we refer to such coding approaches as an

Issues surrounding contextualization and generalization in SRL

Since low-level measurements to trace SRL in online environments are so dependent on the learning context, researchers often struggle to align these measurements with higher dimensions of SRL theories—theories that are generic and are not proposed with any particular learning context in view. The traces that are applicable while measuring SRL during a school classroom assignment in a computer-based learning environment may not be valid in a workplace setting, which involves tracing SRL over several months, despite both studies using the same underlying theoretical model. To reflect this impact of context, modern frameworks of SRL have placed enhanced emphasis on the interplay of context with individual learner characteristics that influence SRL (Ben-Eliyahu & Bernacki, 2015; Efklides, 2011; Winne, 2010). In such a scenario, identifying what a context in trace-based SRL entails becomes imperative. While a broad perspective on “context” in learning can incorporate distal factors like familial issues, emotional well-being, disciplinary nuances, or even governmental policies, we will limit our definition of context for this review to the factors most urgent and immediate to the learner at the performance of a learning activity, which Ben-Eliyahu and Bernacki (2015) would also call a “microsystem.” Such microsystems include the most situated affordances available to the learner during the task, the type of the learning system, the nature and requirements of the learning activity, and the time scale at which a researcher chooses to observe for their research. For our review, we look at the context of a study in three aspects: learning environment, task type, and task duration.

The time scale of observation of a learning activity influences the granularity of the trace data that researchers may choose to record (Ben-Eliyahu & Bernacki, 2015; Newell, 1994). Ben-Eliyahu and Bernacki (2015) revived and proposed the use of bands based on a time scale to measure SRL, an idea originally developed by Newell (1994). They proposed to conceptualize the time scale of observation in SRL as events happening in three kinds of bands on the logarithmic scale of base 10—cognitive (cognitive and metacognitive events, happening over the range of seconds), rational (learner strategies and adaptive metacognitive control strategies, occurring over several minutes to hours), or social bands (e.g., use of self-report questionnaires to identify level of liking for a class, evolving over several weeks or months). This choice of granularity in trace-based measurement of SRL also needs to be informed by theory and the time bands that they aim to capture (Rovers et al., 2019). Before attempting to generalize findings from SRL studies, researchers must consider the contextual nuances of each study, the purpose of integrating a feature in a learning environment, and task constraints. Only then can we focus on reevaluating insights from a study in different contexts and achieve eventual generalization, which remains essential for theory building and validation (Winne & Baker, 2013).
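The band idea described above can be sketched in a few lines of Python. This is only an illustration: the text describes the bands qualitatively (seconds, minutes to hours, weeks or months), so the numeric cut-offs `RATIONAL_START_S` and `SOCIAL_START_S`, along with the function names, are assumptions introduced here and are not taken from Newell (1994) or Ben-Eliyahu and Bernacki (2015).

```python
import math
from enum import Enum


class TimeBand(Enum):
    COGNITIVE = "cognitive"  # events unfolding over seconds
    RATIONAL = "rational"    # strategies unfolding over minutes to hours
    SOCIAL = "social"        # changes unfolding over weeks or months


# Hypothetical cut-offs in seconds (assumptions for this sketch only).
RATIONAL_START_S = 60            # assumed boundary: one minute
SOCIAL_START_S = 24 * 60 * 60    # assumed boundary: one day


def order_of_magnitude(duration_s: float) -> int:
    """Position of a duration on a base-10 logarithmic scale of seconds."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    return math.floor(math.log10(duration_s))


def time_band(duration_s: float) -> TimeBand:
    """Assign an observed duration to one of the three bands."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    if duration_s < RATIONAL_START_S:
        return TimeBand.COGNITIVE
    if duration_s < SOCIAL_START_S:
        return TimeBand.RATIONAL
    return TimeBand.SOCIAL


# Illustrative durations: a 5-second highlight, a 45-minute study
# session, and a 6-week course module.
highlight = time_band(5)
session = time_band(45 * 60)
module = time_band(6 * 7 * 24 * 60 * 60)
```

A researcher would replace the cut-offs with values justified by the chosen theory and study design; the point of the sketch is only that the band, and therefore the granularity of the traces worth logging, follows from the time scale being observed.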

Validity issues in trace data-based measurements

Because self-regulation is a metacognition-heavy phenomenon, it seldom produces overt, dependable behavioral indicators that can be used to validate traces. Messick (1987) defined validity as “the integrated evaluative judgement of the degree to which empirical evidence and theoretical rationale support the adequacy and appropriateness of inferences and actions based on test scores.” There are three commonly considered types of validity: content validity, criterion-related validity, and construct validity. In this review, we focus on construct validity only, which is defined, in the context of measurement of SRL, as a set of concerns about whether the measurements, as they are operationally defined, actually represent the SRL processes that researchers intend to measure and no other phenomena (Winne & Perry, 2000).

The use of trace-data-based protocols relies on researchers’ interpretations, under the assumption that such protocols can represent theoretical self-regulatory processes. For example, Wong et al. (2021) used the trace number of course preparatory items accessed in a MOOC as a proxy for the SRL process of planning in Zimmerman’s model of SRL. This interpretation is fundamentally contingent on the notion that the learner visits the course preparatory item only when they intend to plan. But does a learner always undertake an action only when they enter a singular metacognitive state (Winne, 2020)? Is accessing a course preparatory item at a later point in the course also an indicator of planning? Will this interpretation hold in another MOOC or a different learning environment that contains no SRL prompt of the nature that was used in the study of Wong et al. (2021)? These are legitimate questions and deserve attention.
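How much such an interpretation depends on the researcher’s choices can be illustrated with a small Python sketch. It is not the procedure of Wong et al. (2021); the event schema (`TraceEvent`, the `"preparatory"` label), the decision to count distinct items rather than total accesses, the one-week window, and the sample data are all assumptions made here for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional


@dataclass(frozen=True)
class TraceEvent:
    """One logged access to a course item (hypothetical schema)."""
    learner_id: str
    item_type: str   # assumed labels, e.g. "preparatory", "video"
    item_id: str
    timestamp: datetime


def count_preparatory_items(
    events: Iterable[TraceEvent],
    learner_id: str,
    course_start: datetime,
    window: Optional[timedelta] = None,
) -> int:
    """Count distinct preparatory items a learner accessed.

    If `window` is given, only accesses inside
    [course_start, course_start + window) are counted.
    """
    seen = set()
    for event in events:
        if event.learner_id != learner_id:
            continue
        if event.item_type != "preparatory":
            continue
        if window is not None:
            in_window = course_start <= event.timestamp < course_start + window
            if not in_window:
                continue
        seen.add(event.item_id)
    return len(seen)


# Invented sample data: learner s1 opens two preparatory items in week 1
# and returns to preparatory material about a month later.
start = datetime(2024, 1, 8)
events = [
    TraceEvent("s1", "preparatory", "syllabus", start + timedelta(hours=2)),
    TraceEvent("s1", "preparatory", "schedule", start + timedelta(days=1)),
    TraceEvent("s1", "video", "lecture-1", start + timedelta(days=2)),
    TraceEvent("s1", "preparatory", "syllabus", start + timedelta(days=30)),
    TraceEvent("s1", "preparatory", "grading", start + timedelta(days=35)),
    TraceEvent("s2", "preparatory", "syllabus", start + timedelta(days=3)),
]

whole_course = count_preparatory_items(events, "s1", start)
first_week = count_preparatory_items(events, "s1", start, timedelta(days=7))
```

With this sample, the same learner receives a "planning" score of 3 over the whole course but 2 when only the first week counts. Neither number is more correct on its face, which is why the mapping from trace to construct needs a theoretical justification and independent validation.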

Traditional ways of validating trace libraries for SRL have involved correlating online behavior with survey instruments (Cicchinelli et al., 2018; Jamieson-Noel & Winne, 2003). These questionnaires are administered before or after a learning activity, making them a convenient way to correlate global measures of self-regulation with trace measures. Recently, we have seen the emergence of another class of validation studies that use concurrent measures like students’ verbal accounts (Bernacki et al., 2025; Fan et al., 2023) or students’ in situ self-reports (Salehian Kia et al., 2021). These approaches offer a temporal and granular measure of SRL and allow comparison of two channels of data collected throughout a learning activity, which a questionnaire administered at the beginning or end of a session cannot provide. So, which of these approaches is more suitable, and what are their benefits and limitations? We need to identify the best practices for validating trace-based studies in SRL.

Research Questions

Our review focuses on the possibility of generalizing trace-based codings across similar learning contexts and what such generalization efforts would entail. We also aim to identify ways of validating trace data-based operationalization. The research questions that drove this literature review are: (1) What theoretical models of SRL have been used for the trace-based SRL measurements? (2) In what contexts (learning environment type, task type, task duration) have these studies been done? (3) What kinds of features in the learning environment help measure and support the self-regulation of learners? (4) What operationalizations are used for mapping trace data to SRL processes? (5) How have the researchers investigated/improved the validity of trace-based operationalizations?

Methodology

Search Strategy, Filtering Criteria, and Initial Screening

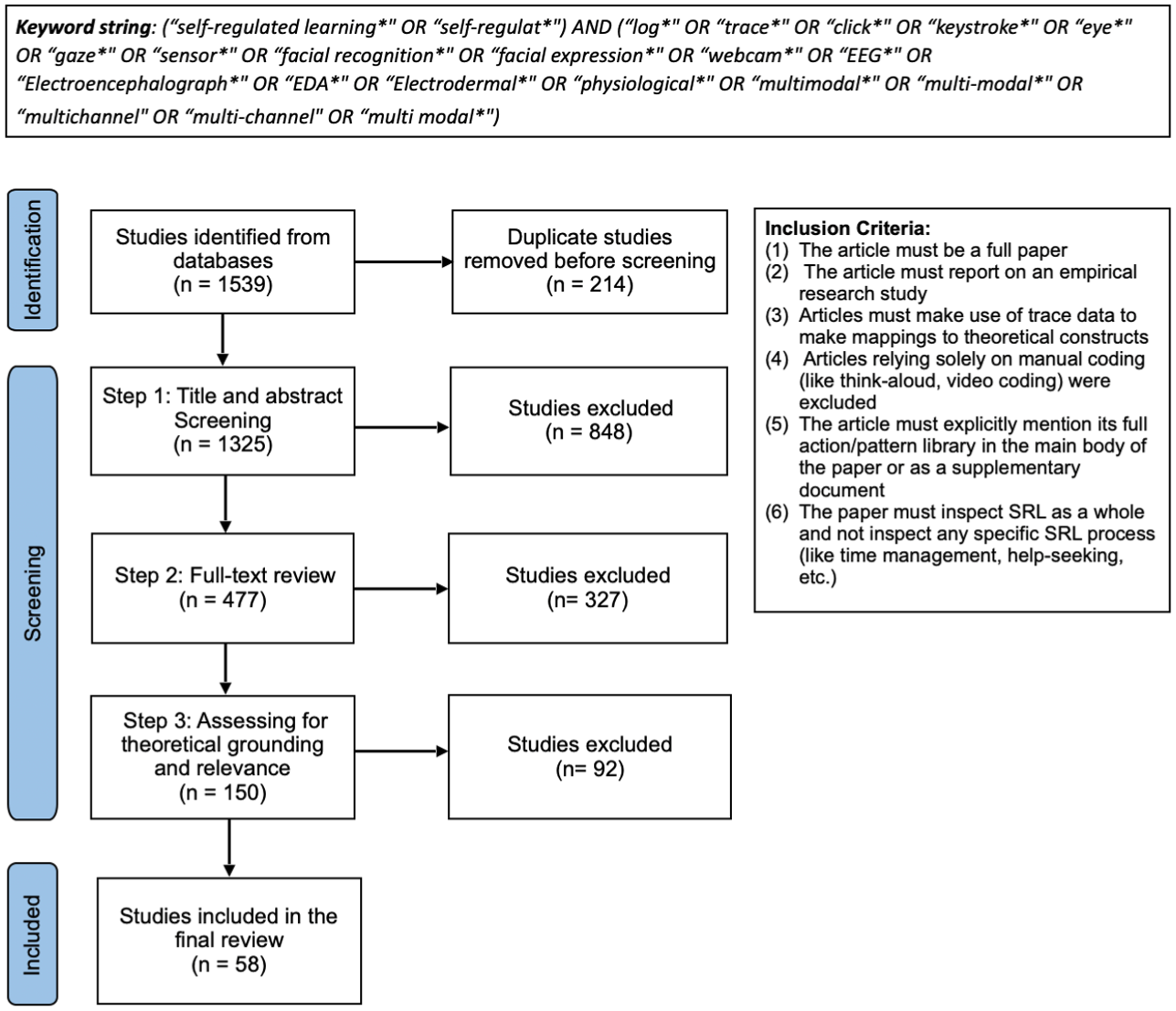

We searched for the literature in two relevant databases: SCOPUS and Web of Science Core Collection. These are two of the largest bibliographic databases and cover virtually all the major journals and conference venues in the field. We experimented with several variations of suitable keywords to generate the set of relevant literature from the databases. Our final keyword search string, inclusion criteria, and the filtering process are provided in Figure 1. We also limited the search to only those articles that contained the words “self-regulated learning,” “self-regulation,” or similar keywords. The objective of this process was to capture all the articles that have created theoretically grounded, trace-based libraries to operationalize SRL as a whole and have demonstrated the applicability of their trace libraries in a population of learners. The first search was performed on July 1, 2021. We later refreshed our corpus with two further searches conducted on May 9, 2022, and August 2, 2023. The three searches combined yielded a total of 1325 unique articles. All the articles went through an initial phase of title and abstract screening, after which 477 articles were deemed fit for the second step of filtering, which involved full-text assessment of the articles. A total of 150 papers were found suitable for further review after the full-text assessment in Step 2.

Figure 1. Filtering process for our systematic literature review.

Filtering for Theoretical Relevance

The third step of filtering entailed assessing the theoretical grounding and relevance of each of the 150 papers retrieved from the second step of filtering. We developed a coding strategy where we assessed each article on two criteria: (a) whether specific SRL theory/theories is/are mentioned within the article as the grounding theory, and (b) whether the traces are mapped to SRL constructs with adequate justification. Based on the fulfilment of these two criteria, we categorized the papers into one of the following three categories:

The filtering and coding in steps 2 and 3 were done by the first and second authors of this paper independently, and discrepancies were resolved through discussion. At the end of step 3, we categorized 42 papers into the high theoretical relevance category, 16 papers into the medium theoretical relevance category, and the rest of the papers into the low theoretical relevance category. We decided to keep the high and medium-relevance categories for our review, prompted by the concept of generic SRL models (Panadero, 2017; Puustinen & Pulkkinen, 2001; Saint et al., 2022), apart from the formal ones like Zimmerman’s. That brought the final count of the papers for review to 58, the complete list of which can be found in the appendix.

Coding of the Articles to Conform to the Dimensions of SRL Models (RQ4)

To investigate the potential of theoretical generalization of traces across models, we coded the SRL traces of our articles separately into Zimmerman’s cyclical model (Zimmerman & Moylan, 2009) and Winne & Hadwin’s COPES model (Winne & Hadwin, 1998). While Zimmerman’s model is the popular choice used for trace-based research in SRL (Saint et al., 2022; this review), Winne & Hadwin’s model is particularly prevalent in modeling self-regulated learning in computer-supported learning environments (Panadero et al., 2016). Winne & Hadwin’s model further represents some of the most contemporary thinking of this field, based on the relevant chapters in the recent editions of the handbook of educational psychology (Greene et al., 2024), the handbook of learning analytics (Winne, 2017), and the handbook of self-regulated learning and performance (Bernacki, 2017). Both models are unique and distinct in several aspects (Panadero, 2017), and generalizing the traces of the articles in our corpus across constructs of both of these models will indicate the generalizability of the theoretical mappings across multiple models, with interpretations from different studies capable of contributing to multiple SRL models.

According to Zimmerman’s model, SRL happens over three phases: forethought, performance, and self-reflection. Each of the three phases contains a set of categories of SRL processes in the second level of the model. Each of these process categories then includes specific lower-level SRL processes (Panadero & Alonso-Tapia, 2014). On the other hand, Winne & Hadwin’s model bifurcates the forethought phase into two and proposes that learning occurs in four phases: task definition, goal setting, enactment, and adaptation. Each phase is characterized by COPES—an interplay of the learner’s conditions, operations, products, evaluations, and standards, which evolve over the four phases. We reviewed the trace-based features used in each of the 58 studies included in our review and conducted two rounds of coding, once for each of the two theoretical models. For coding according to Zimmerman’s model, we reviewed the traces and first mapped them to one of the three phases, and then into one of the process categories within that phase. For coding to Winne & Hadwin’s model, we first categorized each trace-based feature into one of the four phases and then into one of the five facets of COPES that the trace represented. Two authors of this systematic review were involved in this coding process, both of whom have prior experience in the theoretical coding of trace data-based features. For both rounds of coding, after reviewing half of the papers, the authors reconvened to discuss the disagreements and settled on a single mapping for each trace-based feature after extensive discussions. The inter-rater agreements of the two reviewers for Zimmerman’s and Winne & Hadwin’s coding at that point were then measured by using Cohen’s kappa, and the agreements were substantial (k = 0.906 and k = 0.866, respectively). In coding these traces, we took careful consideration of the context of each study, as context influences how a trace could be interpreted. 
This entire process of theoretical coding forms the basis for answering our RQ4.
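For readers unfamiliar with the agreement statistic reported above, Cohen’s kappa compares the observed proportion of agreement between two coders, p_o, with the agreement expected by chance, p_e, as kappa = (p_o - p_e) / (1 - p_e). The following Python sketch implements that standard formula. The function name and the ten example codes are invented for illustration and are not the review’s data.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters assigning one nominal code per item."""
    if len(rater_a) != len(rater_b):
        raise ValueError("both raters must code the same items")
    n = len(rater_a)
    if n == 0:
        raise ValueError("at least one coded item is required")

    # Observed agreement: share of items given the same code by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each code, the product of the two raters'
    # marginal proportions, summed over all codes.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    codes = freq_a.keys() | freq_b.keys()
    expected = sum(freq_a[c] * freq_b[c] for c in codes) / (n * n)

    if expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


# Invented example: two coders map ten trace features to Zimmerman's
# three phases and disagree on one feature (index 7).
coder_1 = ["forethought", "forethought", "performance", "performance",
           "performance", "self-reflection", "self-reflection",
           "forethought", "performance", "self-reflection"]
coder_2 = ["forethought", "forethought", "performance", "performance",
           "performance", "self-reflection", "self-reflection",
           "performance", "performance", "self-reflection"]

kappa = cohens_kappa(coder_1, coder_2)  # (0.90 - 0.35) / (1 - 0.35), about 0.85
```

In the example, nine of ten codes match (p_o = 0.90) and the marginal frequencies give p_e = 0.35, so kappa is roughly 0.85. Because kappa corrects for chance, it is lower than the raw 90% agreement.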

Overall Summary of the Findings for Our Research Questions

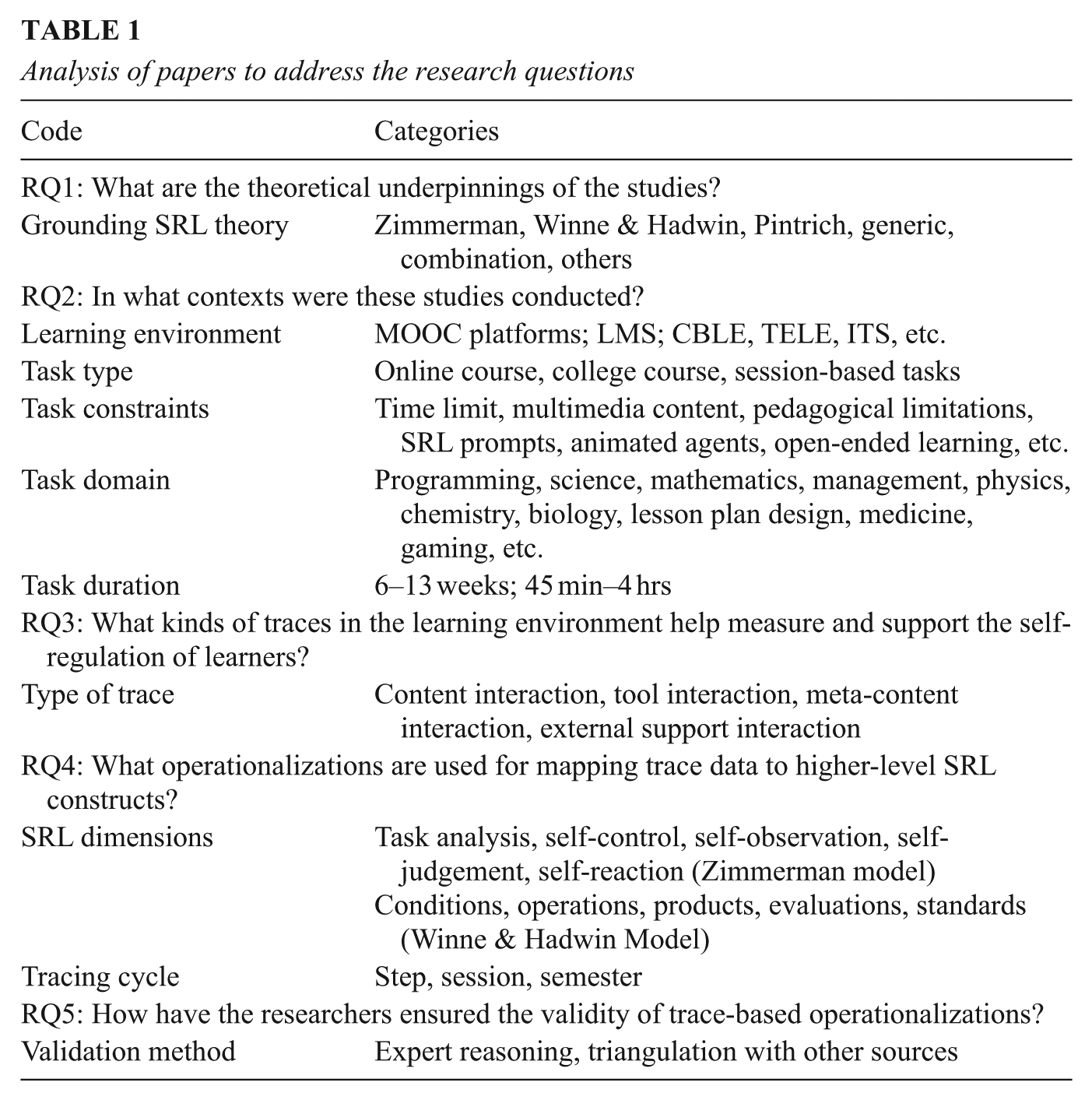

To answer our five research questions, we conceive a set of categories that summarize the overall findings of each research question. These categories are reported in Table 1. For RQ1, the code

Table 1. Analysis of papers to address the research questions

Results

RQ1—Grounding SRL Theory

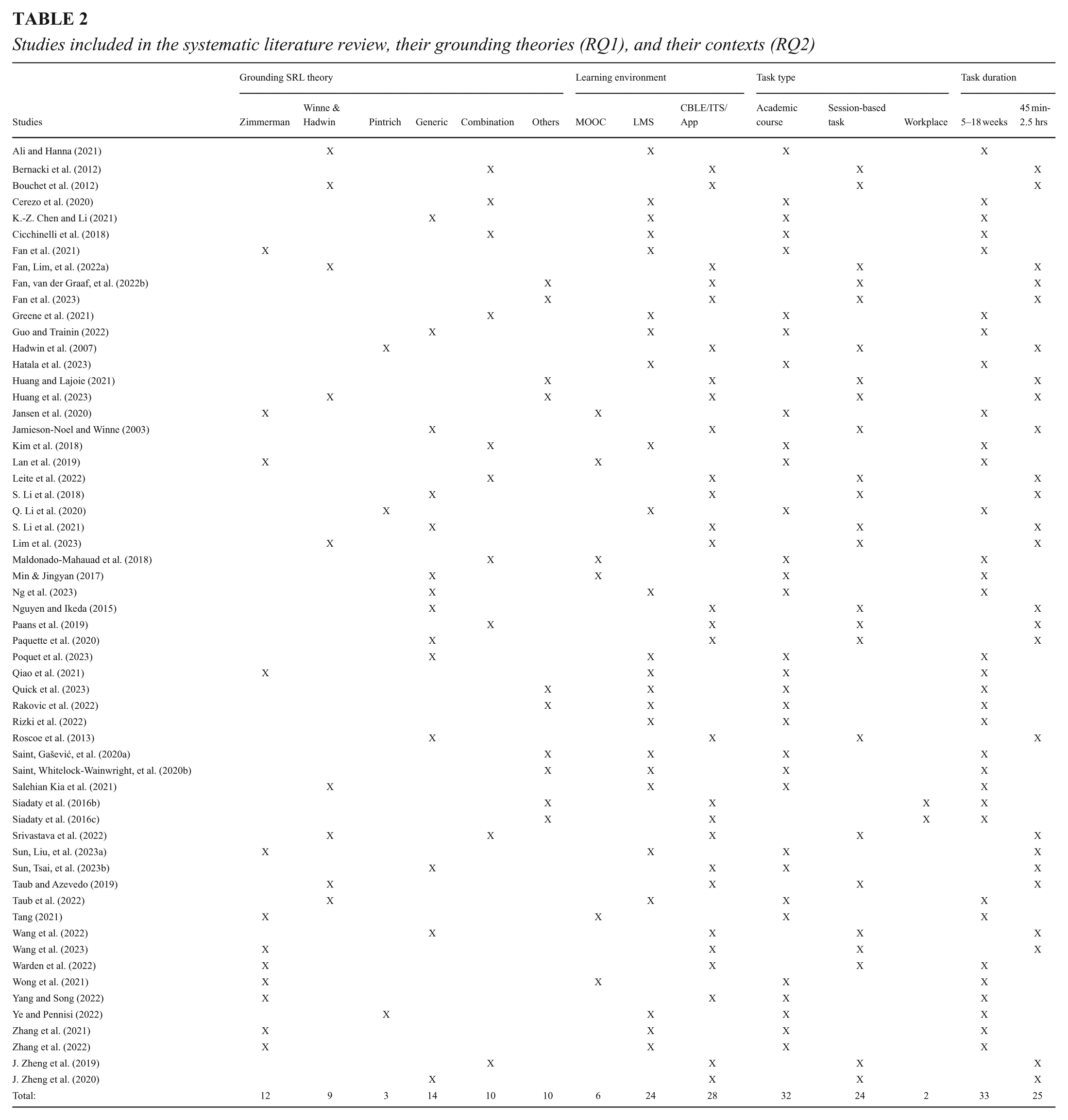

The studies show an inclination toward Zimmerman’s model of SRL, with 12 of the papers adopting it as their theoretical basis. Winne & Hadwin’s model is another popular choice of grounding SRL theory, with nine studies opting for it. Three of the papers used Pintrich’s SRL theory to inform their choice of operationalizations of trace data. A summary of these results is reported in Table 2. Some of the studies (e.g., Fan et al., 2021; Huang et al., 2023; Quick et al., 2023; Rakovic et al., 2022; Siadaty et al., 2016c) named other highly cited SRL models, such as Siadaty’s micro-level SRL framework (Siadaty et al., 2016a) or Greene and Azevedo’s SRL framework (Greene & Azevedo, 2009), as their grounding theory. Although most of these models are themselves spinoffs of the Zimmerman or Winne & Hadwin models, we have included them under “others” for this review. The majority of papers have, however, opted to map their traces to the generic phases of SRL (planning, enactment, and reflection) rather than explicitly adopting a single established theoretical model. These papers have been included under the

Table 2. Studies included in the systematic literature review, their grounding theories (RQ1), and their contexts (RQ2)

RQ2—Context of Studies

Learning environment

The studies used a variety of learning environments. Six of the studies were conducted exclusively within massive open online courses (MOOCs) on MOOC platforms. Twenty-four studies were conducted on learning management systems (LMS), which were mostly used in the context of courses in higher education. The remaining 28 studies were conducted on platforms like open-ended learning environments (OELE) and intelligent tutoring systems (ITS). Some examples of these systems are MetaTutor (Taub & Azevedo, 2019), Betty’s Brain (Paquette et al., 2020), and Learn-B (Siadaty et al., 2016c). Most of these computer-based learning environments (CBLEs) are bespoke systems that have been set up and optimized specifically to study self-regulated learning. A notable exception is the study by Yang and Song (2022), which used mobile apps during a vocabulary learning task. The summary of these findings is listed in Table 2.

Task types

The nature of a task, its objectives, and its facilities influence the self-regulation of a learner and need to be studied in closer detail. An overview of the distribution of the studies based on task type is presented in Table 2. A total of 32 studies were conducted within academic courses, typically extending over a semester. These studies include those conducted in MOOCs and formal courses in higher education. We found a number of studies that investigated SRL over a specific period, often around assignments in the course, rather than over the entire course (Salehian Kia et al., 2021; Wise & Hsiao, 2019). Such studies were also categorized under academic courses for this review. A total of 24 studies investigated self-regulation over a session or a set of sessions. These tasks include multisource essay writing (Srivastava et al., 2022), concept mapping after reading (Paquette et al., 2020; Roscoe et al., 2013), diagnosing a virtual patient in an intelligent tutoring system (S. Li et al., 2021; Wang et al., 2023), interacting with an ITS while learning the human circulatory system (Bouchet et al., 2012; Taub & Azevedo, 2019), learning in game-based environments (Warden et al., 2022), creating lesson plans for students (Huang & Lajoie, 2021; Huang et al., 2023), and vocabulary learning on a mobile app (Yang & Song, 2022), among others. Two studies were conducted in a professional workplace learning context (Siadaty et al., 2016b, 2016c).

Task constraints

Task constraints serve as mental boundaries that shape how learners approach and carry out a task. Even in relatively structured settings like online courses, learner behavior can be influenced by the specific nature of these constraints. Whether an assessment attempt is mandatory in each unit of a course (Taub et al., 2022; Zhang et al., 2021, 2022), whether all course materials are released at the start of the course (Q. Li et al., 2020) or not (Kim et al., 2018), how many graded assessments take place during a course (Chen & Li, 2021; Qiao et al., 2021), and whether the modules in a course are sequential (Quick et al., 2023) are all idiosyncrasies of courses that influence the cognitive and metacognitive activities of a learner. These constraints are even more varied in bespoke learning environments (Fan et al., 2023; Paquette et al., 2020; Taub et al., 2022; Wang et al., 2023). A list of constraints accompanying each study is included under the column “Task constraints” in our supplementary material.

Task domain

Using affordances in a task often requires detailed, domain-specific reasoning, which highlights the importance of considering the task’s domain. The studies in our review span a wide range of knowledge domains, from courses in biology (Greene et al., 2021; Rakovic et al., 2022), project management (Tang, 2021), dentistry (Lan et al., 2019), and agriculture (Ye & Pennisi, 2022) to session-based activities requiring knowledge of physics (Jamieson-Noel & Winne, 2003), environmental science (Roscoe et al., 2013), artificial intelligence and education (Srivastava et al., 2022), medicine (J. Zheng et al., 2019), and business (Warden et al., 2022). The domain knowledge concerned in each task is listed under “Task domain” in the supplementary document.

Task duration

A total of 25 studies were conducted where the task was completed in a single session or as a series of small sessions. The duration of these tasks ranged from 60 minutes to 4 hours. A number of studies focused on reading and comprehension tasks, which were typically followed by a post-test requiring learners to answer questions based on the material they had read (Bernacki et al., 2012; Fan, Lim, et al., 2022a; Fan et al., 2023; Jamieson-Noel & Winne, 2003; Taub & Azevedo, 2019). Some reading tasks involved creating concept maps after reading the content (Paquette et al., 2020; Roscoe et al., 2013). On the other hand, longer-duration tasks ranged from 5 to 18 weeks. These learning tasks were generally courses in higher education or MOOCs, or studies conducted within these courses. An exception here is the study by Rizki et al. (2022), who collected data from a countrywide learning platform for nine months but did not report the duration of the courses themselves. Another exception is the study by Yang and Song (2022) on school students’ vocabulary learning, which lasted for 7 months. The findings are summarized in Table 2.

RQ3—Types of Trace Data for Measuring and Supporting SRL

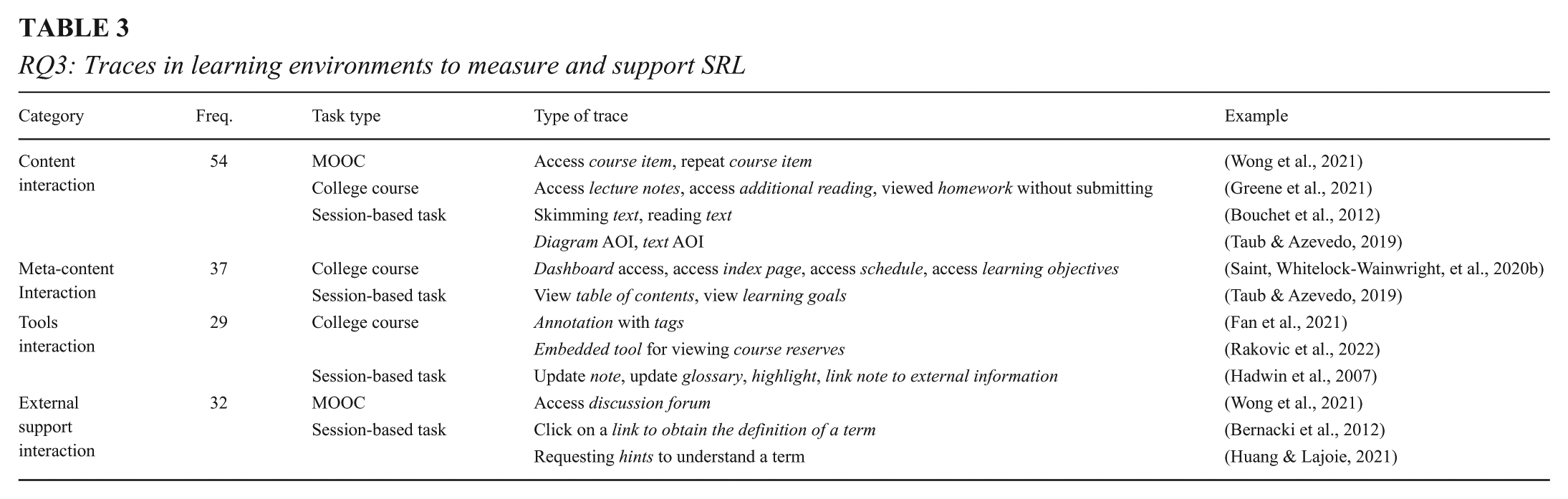

We categorized the traces into four types based on the learning environment design and the purpose they served in their tasks:

Researchers often characterize similar interactions in different ways to represent distinct higher-level SRL processes. For example, interacting with a page might be classified as thorough reading, skimming (Bouchet et al., 2012), or scrolling back (Jamieson-Noel & Winne, 2003), each reflecting a different SRL process. A few examples of traces under each category, along with their nature of interactions, are given in Table 3. The complete list is provided as a supplementary document.

RQ3: Traces in learning environments to measure and support SRL

The words in italics represent the feature in the learning environment, and the complete phrase represents the trace that was used to measure an SRL process.

Chunking of content into multiple modules

We have noticed that researchers often deliberately chunk their content into multiple modules or pages, which generates traces when learners navigate between these pages. These design choices help researchers to theoretically hypothesize SRL behaviors even before the commencement of data collection. For instance, chunking introductory content into pages like overview, guidelines, detailed specifications, marking criteria, and how to submit can help researchers operationalize SRL processes based on what the learner was focused on at the time, for how long, and their sequence of actions (Salehian Kia et al., 2021). Had this content been on a single page, it would have required additional software capabilities or other modes of data to capture such processes. A similar approach was seen in Srivastava et al. (2022), where they chunked their content into topical sets of readings. This allowed them to understand a learner’s judgment based on their relevant and irrelevant reading.
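To illustrate why chunked content simplifies trace capture, the following minimal sketch derives the visit sequence and the time spent per page from plain navigation events. The event format (a timestamp in seconds plus a page name), the page names, and the function name `page_dwell_times` are hypothetical and are not taken from any of the reviewed studies.

```python
from collections import defaultdict


def page_dwell_times(events, session_end):
    """Return (visit sequence, total seconds per page) from navigation events.

    events: list of (timestamp_seconds, page) tuples sorted by time.
    session_end: timestamp at which the session closed.
    """
    sequence = [page for _, page in events]
    dwell = defaultdict(float)
    # Each page view lasts until the next navigation event (or session end).
    following = events[1:] + [(session_end, None)]
    for (start, page), (end, _) in zip(events, following):
        dwell[page] += end - start
    return sequence, dict(dwell)


# Hypothetical log for an assignment brief split into separate pages.
events = [
    (0, "overview"),
    (40, "marking_criteria"),
    (160, "guidelines"),
    (220, "marking_criteria"),
]
sequence, dwell = page_dwell_times(events, session_end=300)
```

With a single undivided page, the same log would contain one event only, and neither the focus of attention nor the order of visits could be recovered without additional instrumentation.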

Different pedagogies prompt different design choices

The pedagogical choices for delivering instruction can impact the nature of self-regulation, and in turn, may influence what traces we can and may want to capture. We can take the example of the studies by Zhang et al. (2021, 2022), who utilized online mastery-based learning modules (OLMs) to deliver their course, to illustrate this point. A series of OLMs was designed to teach a particular topic. Each OLM was optional, containing an assessment and an instructional component. The learners were presented with the assessment at the start, where they could make an initial attempt at the test and receive a score. The learners were then allowed to go through the instructional component and could take repeated attempts at the assessment component later to improve their scores. From this context, Zhang et al. (2022) used traces like “accessing the learning materials after the first mandatory attempt” and “is the first attempt short” as indicators of planning. These interpretations of traces only work where OLMs or pedagogy with a similar instructional format is used. Another example of how pedagogy can influence trace capture is the study by Wong et al. (2021), which used video prompts in their MOOC video content to stimulate self-regulation. The course videos contained questions that were designed to instigate planning, monitoring, or reflection in learners. Another example is that of Ali and Hanna (2021), who used a hybrid learning structure in their college course. Half of their learning tasks were conducted in class, and the other half asynchronously on Moodle. In such a scenario, where only 50% of learning interactions are expected to be captured digitally, researchers have to take the pedagogy into consideration while conceptualizing their traces. Fan et al. (2021) and Saint, Whitelock-Wainwright, et al. (2020b) used a flipped classroom; Cicchinelli et al. (2018) used blended learning; J. Zheng et al. (2019) used a collaborative inquiry-learning environment; and Siadaty et al. (2016b, 2016c) conceptualized traces for a workplace environment. These examples show how the nature of instruction influences not only what traces to capture and features to create but also how to operationalize them to model self-regulated learning.

RQ4—Trace Data Mapping to Higher-Level SRL Constructs

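To present how we coded traces to the constructs of Zimmerman’s and Winne & Hadwin’s models, we represent each trace in our studies as a 3D tuple:

$$
\begin{aligned}
\text{Trace} &= \{\text{Phase},\ \text{Process Category / Facet},\ \text{Process}\} \\
\text{Phase} &\in \{\text{Task Definition},\ \text{Goals \& Plan},\ \text{Enactment},\ \text{Adaptation}\} && \text{for Winne \& Hadwin} \\
\text{Process Category} &\in \{\ldots,\ \text{Self-Judgement},\ \text{Self-Reaction}\} && \text{for Zimmerman} \\
\text{Facet} &\in \{\text{Conditions},\ \text{Operations},\ \text{Products},\ \text{Evaluations},\ \text{Standards}\} && \text{for Winne \& Hadwin}
\end{aligned}
$$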

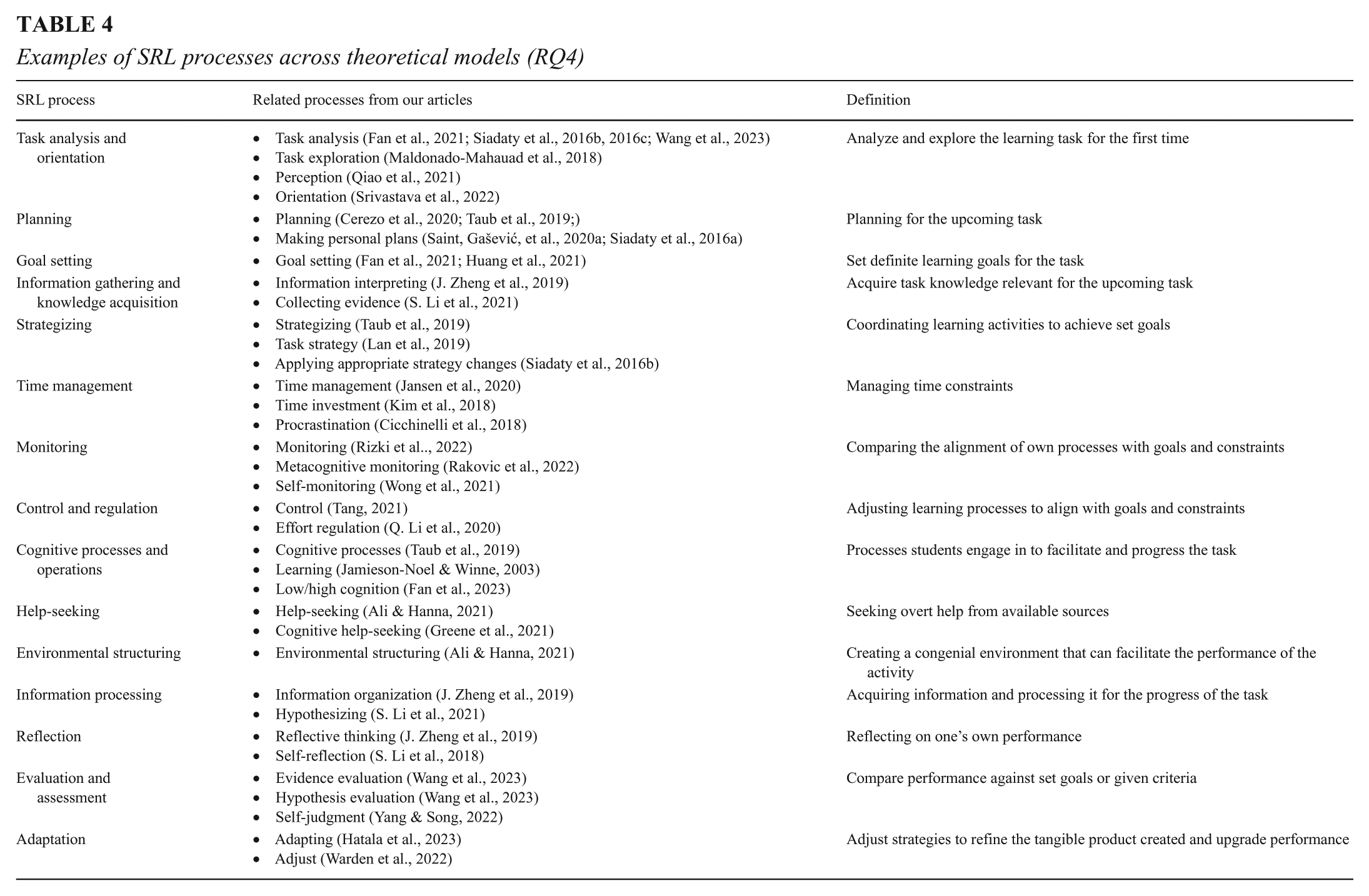

The first element of the tuple represents the phase of the task that the trace is representing in its respective theoretical model. The second element represents the process category (for Zimmerman’s model) or the facet of COPES (for Winne & Hadwin’s model). The third element represents the micro-level process that is being operationalized using the trace, as mentioned by the authors in their respective articles. A few examples of these studies categorized into this three-dimensional taxonomy can be found in Table 4. However, we strongly recommend that our readers review the comprehensive table included as a supplementary document, which comprises all the trace-based operationalizations of the articles included in this review. The interpretations form an important part of theory-driven, trace-based conceptualizations and our compilation is meant to help researchers toward this end.

Examples of SRL processes across theoretical models (RQ4)

Coding the articles for the first level of the models (phase level) was straightforward because our filtering process for this review ensured that each article explicitly indicates the phase of a theoretical model (preparatory, performance, or appraisal) from which the trace is generated. The next level of coding was harder. Although Zimmerman’s model provides a specific and exhaustive list of processes within its process categories, the nomenclature of the processes used by the researchers in their articles is not necessarily the same as that provided by Zimmerman and Moylan (2009). We resolved this by coding each process into a process category based on its theme and interpretation. For instance, Rakovic et al. (2022) interpreted the trace “viewing calendar events” as a course information gathering process, an indicator of the preparatory phase. As this process has no literal equivalent in Zimmerman’s model, we coded it under strategic planning in the task analysis process category, as the process involves analyzing and planning for the course. Coding against Winne & Hadwin’s model was harder still: no paper, even among those that cite Winne & Hadwin’s model as their theoretical grounding, states directly which facet of COPES a trace represents. For the same example of course information gathering, we coded it to the Conditions facet of Winne & Hadwin’s model, as visiting the course information pages for the first time is likely to update the conditions of the learner.
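The coding scheme described above can be sketched as a small data structure. This is an illustrative sketch only: the class name `TraceCode`, the field names, and the `validate` helper are hypothetical, the phase labels are the generic ones used at the first coding level, and only the COPES facets are checked because they are the one closed set fully enumerated in the text.

```python
from typing import NamedTuple

# Generic phase labels used at the first (phase) level of coding.
PHASES = {"preparatory", "performance", "appraisal"}
# COPES facets of Winne & Hadwin's model.
COPES = {"conditions", "operations", "products", "evaluations", "standards"}


class TraceCode(NamedTuple):
    model: str              # "zimmerman" or "winne_hadwin" (hypothetical keys)
    phase: str
    category_or_facet: str  # process category (Zimmerman) or COPES facet
    process: str


def validate(code: TraceCode) -> bool:
    """Check the phase, and the facet when the model is Winne & Hadwin's."""
    if code.phase not in PHASES:
        return False
    if code.model == "winne_hadwin":
        return code.category_or_facet in COPES
    return True


# The "viewing calendar events" trace coded against both models.
zimmerman_code = TraceCode(
    "zimmerman", "preparatory", "task analysis", "strategic planning"
)
wh_code = TraceCode(
    "winne_hadwin", "preparatory", "conditions", "course information gathering"
)
```

Storing the model alongside the tuple keeps the two codings of the same trace side by side, which makes disagreements between models visible during review.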

Prior reviews have found that the performance phase receives more attention in SRL investigations (K.-Z. Chen & Li, 2021; Tang, 2021; Viberg et al., 2020), and our review reiterates this finding. The performance phase has been investigated in all the articles reviewed. A possible reason for this could be that the cognitive actions during this phase become more overt and can be traced with relative ease. On the other hand, the appraisal phase is the least investigated phase, which was highlighted in the review by Viberg et al. (2020) as well. This, in the case of Winne & Hadwin’s model, can be attributed to the peculiarity of the adaptation phase, as the definition of adaptation in Winne & Hadwin’s model is not entirely equivalent to reflection (Greene & Azevedo, 2007).

While compiling the trace-based operationalizations and their corresponding interpretations, we took special care in keeping the context of their studies in view. A list of the SRL processes identified across the studies is given in Table 4.

Use of various tracing cycles in modeling SRL

In trace-based research, the broad goal of learning analysts is to model SRL from the start to the end of a learning task. Often dictated by their research goals, researchers choose different grain sizes to model out the learning task (Ben-Eliyahu & Bernacki, 2015; Rovers et al., 2019; Winne, 2017). This modeling usually starts from the atomic interactions left by the learners in the system in the wake of their learning activity. The atomic interactions are then mapped to SRL processes. Examples include view podcast video (control), post article (evaluation) (Qiao et al., 2021), edit discussion topics (reflection) (Tang, 2021), and set and update progress (evaluation) (Nguyen & Ikeda, 2015). Sometimes, even a pattern of actions such as looking at content AOI and then at the notes AOI (strategizing) (Taub & Azevedo, 2019) or identifying a learning gap by reading and then annotating as “confusing” (goal setting) (Fan et al., 2021) is also used. This approach models a

Use of supplementary tools and software to capture additional traces

A few learning environments have used multiple tools or software to capture trace data. Fan et al. (2021) integrated Alexandria (to host learning materials and activities) and Hypothes.is (a tool to facilitate annotations and highlighting in e-books) with the base Moodle platform. Greene et al. (2021) used the Piazza discussion forum along with their LMS. Salehian Kia et al. (2021) also linked an integrated development environment (IDE) for programming within their LMS. These integrated software systems enabled researchers to capture enhanced trace data that would have otherwise required extensive developmental changes in the native learning system.

RQ5—Methods of Validating Trace Data

We were able to identify two major approaches to validate trace data: (i) expert reasoning and (ii) comparing with other modes of data. A vast majority of the studies relied on expert reasoning for the validation of traces. For instance, Tang (2021) designed content pages corresponding to each SRL phase in Zimmerman’s model for their MOOC study and mapped traces based on task structure and system features that fostered self-regulation. Note that, for our review, studies that used trace-based operationalizations from past studies also come under the category of expert reasoning, since they still indirectly rely on the reasoning of experts. One such example is that of Wong et al. (2021), who cited previous works to justify their choices of operationalization. Researchers who use data-driven techniques look for emerging behavioral patterns in the empirical data and use expert rationale to map those patterns to SRL theory using a bottom-up approach (K.-Z. Chen & Li, 2021; Maldonado-Mahauad et al., 2018) (see the section Theory-driven and data-driven approaches can be combined to create more comprehensive trace libraries for more on data-driven techniques).

The second approach for validation involves comparison with multimodal data sources. This method involves corroborating digital traces with another measurement of SRL that has been historically relied upon as a valid interpretation of SRL, such as think-aloud protocols, self-report questionnaires, and semistructured interviews. Examples include Fan, van der Graaf, et al. (2022b) and Bernacki et al. (2025), who used think-aloud protocols to validate their trace libraries. In the case of Fan, van der Graaf, et al. (2022b), both trace data and think-aloud data were collected over a 45-minute writing task. They derived theoretically driven SRL processes from trace data and think-aloud data separately, synchronized them on the 45-minute timeline, and measured the match rates of the two channels to check the alignment of SRL processes identified from both channels. Their initial attempt gave them a median match rate of only 38.97%. They enhanced this match rate by improving their trace data library with data-driven approaches, but only up to 54.24%. This gives us an idea of how even theory-driven trace data may be susceptible to validity concerns and how keeping a critical view of the measurements and additional data triangulation efforts is necessary to improve the trace libraries. However, it must be acknowledged that a 100% alignment of trace data and think-aloud data is not realistic, as they may measure different aspects of SRL (Rovers et al., 2019). Also, there is a possibility that some digital events may co-occur with multiple verbalized SRL processes, as identified by Bernacki et al. (2025), who undertook their validation study in a learning task sampled from a classroom course in a laboratory setting. Think-aloud, like any other measurement, also suffers from limitations, including validity concerns (Young, 2009). Further, it is worth mentioning that Fan, van der Graaf, et al. (2022b) managed to detect SRL processes from 96.81% of a 45-minute task using trace data, compared to only 58.34% with think-aloud. It is unrealistic to expect that a learner will continuously verbalize their thinking, especially in long learning sessions, and trace data can overcome this limitation and help to capture many more user actions at a much finer granularity. On balance, these advantages of trace data appear to outweigh its current limitations.
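The two quantities discussed above, how much of the session a channel covers and how often two channels agree, can be sketched as follows. This is a simplified illustration under stated assumptions, not the procedure of Fan, van der Graaf, et al. (2022b): it assumes each channel is a list of `(start, end, process)` segments in seconds, discretizes the timeline into one-second bins, and defines the match rate over the seconds labeled in both channels. All function names and process labels are hypothetical.

```python
def to_timeline(segments, duration):
    """Expand (start, end, process) segments into one label per second."""
    timeline = [None] * duration
    for start, end, process in segments:
        for t in range(start, min(end, duration)):
            timeline[t] = process
    return timeline


def coverage(timeline):
    """Fraction of the session in which the channel detected any process."""
    return sum(label is not None for label in timeline) / len(timeline)


def match_rate(a, b):
    """Among seconds labeled in both channels, fraction with the same label."""
    both = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    if not both:
        return 0.0
    return sum(x == y for x, y in both) / len(both)


# Hypothetical 10-second excerpt; labels are illustrative only.
trace = to_timeline([(0, 4, "orientation"), (4, 10, "reading")], duration=10)
aloud = to_timeline([(2, 6, "orientation"), (8, 10, "reading")], duration=10)

trace_coverage = coverage(trace)
aloud_coverage = coverage(aloud)
agreement = match_rate(trace, aloud)
```

Reporting coverage and match rate separately matters because a channel can agree well on the little it captures while leaving most of the session unlabeled.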

Rather than asking students to verbalize their actions, Salehian Kia et al. (2021) asked the students to self-report their SRL phase periodically during their activity, which they later used as ground truth. The learners were asked to report every 20 minutes in the learning environment about what they were doing at that moment (planning, enacting, or adapting), and they measured the agreement of these in-situ self-reports and trace data using Cohen’s kappa. On the other hand, Jamieson-Noel and Winne (2003) compared trace data with post hoc self-report surveys on specific SRL strategies and tactics and found considerable differences between the two. Ye and Pennisi (2022) compared their LMS trace data to self-reported questionnaires collected before and after the course. The LMS trace data correlated better with the post-course self-reports. However, there was again very little correlation between the SRL scales of both measurements. Only goal-setting showed correlations between the self-report and the trace data. Other scales showed none and, in some cases, even negative correlations. Cicchinelli et al. (2018) also looked for correlations with pre-course questionnaires and found positive correlations with only the frequency of planning and monitoring processes with trace data.
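For readers unfamiliar with the agreement statistic mentioned above, Cohen’s kappa corrects the observed agreement $p_o$ for the agreement $p_e$ expected by chance, $\kappa = (p_o - p_e) / (1 - p_e)$. The sketch below computes it for two label sequences; the sample labels are invented for illustration and do not reproduce any data from the reviewed studies.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two equally long sequences of categorical labels."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("sequences must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: share of positions where both sources agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each source's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Kappa is undefined when both sources use one identical label
        # throughout; returning 1.0 here is a convention of this sketch.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical in-situ self-reports versus trace-derived phases.
self_report = ["planning", "enacting", "enacting", "adapting", "enacting", "planning"]
trace_based = ["planning", "enacting", "enacting", "enacting", "enacting", "adapting"]
kappa = cohens_kappa(self_report, trace_based)
```

In this invented example the two sources agree on four of six reports, but because “enacting” dominates both sequences, much of that agreement is expected by chance and kappa is correspondingly lower than the raw agreement.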

In summary, studies that have attempted to validate their trace libraries so far using other data sources have reported considerable mismatches between SRL processes derived from trace data and their second data channel. These mismatches were attributed to several factors, including faulty interpretations or over-interpretations (Fan, van der Graaf, et al., 2022b). An example of such misinterpretation is “highlighting during reading,” which was initially interpreted as a high-cognition process (elaboration/organization) but was later amended to a low-cognition process (reading). Additional data-driven efforts helped in fixing these theory-driven trace misinterpretations. This is an example of how a combination of theory-driven and data-driven analyses can improve the validity of our trace-based studies. Among other reasons for reported mismatches between self-report questionnaires and trace data, Salehian Kia et al. (2021) posit that distinguishing planning and adapting phases from an adjacent phase (i.e., the enactment phase) requires more information. They also found that real-time endorsements of highly self-regulated learners tended to disagree with theory-driven trace indicators of the adapting phase, which they reported as enactment. Ye and Pennisi (2022) conducted cluster analysis and semistructured interviews to delve deeper into the reasons for mismatches between self-reports and digital trace data. However, contrary to Salehian Kia et al. (2021), they found that in learners with high self-regulation, self-reports and trace data correlated better than in learners with low self-regulation. Jamieson-Noel and Winne (2003) attributed the mismatches in their study to students’ different criteria for self-reporting as compared to the experts’ reasoning for the corresponding study tactic. Students may not accurately report the use of a tactic after the study. 
Jamieson-Noel and Winne (2003), based on a prior study (Howard-Rose & Winne, 1993), also mention that students may have a better understanding of their overall study strategy than of their granular study tactics when asked in retrospect in a questionnaire. This question of the appropriate grain size of data for SRL analysis has been revisited in a recent review (Rovers et al., 2019).

Discussion

The findings of our review indicate that the challenge of balancing contextualization and generalization in trace-based measurements of SRL must be considered from the very beginning of any trace-based research, even before data collection begins. This balance can be achieved in two broad aspects—design and theory. Design of a learning environment that is deployed in a real-world setting (such as a MOOC) should aim to achieve a generic setup that can be replicated by other researchers across similar settings. That will help researchers understand self-regulation at play in authentic situations, which can help in conceptualizing traces that can be reused in other similar learning setups. Bespoke learning environments designed to prompt SRL behavior often come with enhancements that may not be characteristic of a real-world system. The design of such systems, while being contextual, can still be generic in the type of traces that they capture. The category of traces that we identified in our answer to RQ3 can help designers of such systems conceptualize trace data in their learning environments. It is worth noting that categorization of these interactions is also dependent on the instructional design and the design of the learning system. Interaction with a page of text offered as content material may not be equivalent when the same content is provided by a peer on a discussion forum, and our proposed categories of traces will be able to identify such differences. Suitable theoretical grounding depends on the extent to which a learning environment can trace learners’ actions, and our categories of traces are also targeted toward improving this tracing capability of a learning environment if taken into consideration while designing the learning environment.

Generalizability in theory starts when researchers theoretically hypothesize and map traces to the constructs of a theoretical model. Researchers should choose a theoretical model for grounding with considerable care and justification, keeping in mind the nuances each SRL model offers. Once a model is chosen, researchers should ideally aim to trace all of its SRL processes exhaustively. This is likely an iterative process, and investing multiple cycles in it is worthwhile because it ultimately strengthens the overall research. The SRL processes identified in RQ4 and our process of coding them into SRL models, coupled with the categories of traces in RQ3, can be useful toward designing learning systems that are capable of tracing the SRL processes in different learning contexts (RQ2).

The rest of the discussion section is structured into three main sections: theoretical considerations, design considerations, and validation considerations, presenting our key learnings from this systematic literature review and offering concrete suggestions for future research.

Theoretical Considerations

Technological advancements have come a long way since the first theoretical SRL models were introduced. We can now capture very granular information about the learners’ activities in a learning environment using trace data. When combined with multimodal data sources, we obtain even more granular data that can capture very subtle SRL processes as they are externalized by learners. In light of these advancements, we can and should interpret learners’ actions even more thoroughly, reevaluate SRL models in digital learning contexts, and refine them as needed. Future theoretical models should take multimodal data sources into consideration and offer guidelines on how such models can be operationalized using multimodal data.

Need for the use of holistic trace data mappings in SRL studies

Our review finds that several researchers prefer a consolidated model of SRL, combining dimensions from multiple models. A similar observation was seen in the review by Saint et al. (2022). While such an approach may be convenient for mapping trace data, it may raise questions of construct validity (Saint et al., 2022). Our suggestion for researchers is to first make adequate efforts to fit their context into the constructs of a single theoretical model. They may still decide to integrate dimensions from other theories, depending on their study. However, since constructs of self-regulation are interrelated and co-dependent, studying only specific aspects of SRL (unless warranted by research questions) in theoretical models may come with limitations. There are phases and facets of SRL (as seen in RQ4) that have received less attention from researchers. The meta-analysis by L. Zheng (2016) also indicates that there is more virtue in focusing on all the phases of self-regulation rather than specific ones. Researchers should make purposeful efforts toward comprehensive coding of events in a learning task, rather than mapping only a small set of actions or sequences of actions to SRL processes. One such notable effort is that of Fan, van der Graaf, et al. (2022b), who demonstrated that with extensive efforts, it is possible to generate a great deal of inferences about the learners’ SRL processes based on their traced actions throughout the learning session. Learners with a specific goal in mind have agency over their path to achieving that goal (Hadwin, 2008; Saint et al., 2022). Their actions have reasons, and this notion leads us to believe that with adequate effort, most learner actions can be interpreted and mapped to higher-level self-regulatory processes. Additionally, the use of dimensions of a single theoretical model sets a precedent for future researchers on how to operationalize those dimensions using trace data in another similar context. 
Prior efforts by Greene and Azevedo (2009) to elaborately operationalize the constructs of Winne & Hadwin’s model can be taken as an example for such holistic mappings, which were later referred to by many researchers. However, it is worth mentioning at this point that the goodwill of the researchers alone may not be sufficient to capture certain self-regulatory processes using trace data. Certain metacognitive processes may not compel a learner to act physically and, in turn, do not leave sufficient traces for researchers to operationalize. Researchers may consider other data channels to operationalize such SRL constructs and supplement trace data. Notable examples in our review include Taub and Azevedo (2019), who used eye-tracking, and J. Zheng et al. (2019), who coded chat messages for certain SRL processes and collaborative self-regulatory processes. Taub et al. (2022) also proposed a trace-data-based measure for operationalizing self-motivation (see section Different pedagogies prompt different design choices). Researchers should make a purposeful decision about the type of study they want to conduct and which SRL dimensions they can analyze, and then specify what the digital learning system should capture as traces. At the same time, this also serves as a motivation for advancements in software development and technological improvements for capturing enhanced traces. Future research questions in SRL should focus on thorough mappings of actions in learners to a theoretical model and offer empirical benefits of such efforts.

A thorough consideration of all aspects is necessary before choosing a theoretical model

Zimmerman’s cyclical model (Zimmerman & Moylan, 2009) and Winne & Hadwin’s model (Winne & Hadwin, 1998) emerged as the two popular models used by researchers in trace-based SRL research. Before we discuss our results further, we must outline the points of similarity and difference between these two models. Both models conceptualize SRL as a process unfolding over phases (Panadero, 2017). In the case of Winne & Hadwin’s model, however, these phases and the processes within them are not as clearly demarcated as they are in Zimmerman’s model. Zimmerman’s model has clearly defined and discrete process categories and processes within them under each of the three phases of SRL, which likely contributes to the popularity of Zimmerman’s model for trace-data-based studies (Saint et al., 2022). On the other hand, Winne & Hadwin’s model conceptualizes SRL as a free-flowing recursive process where each of the five facets of COPES (i.e., conditions, operations, products, evaluations, standards) keeps on evolving throughout a learning activity over four loosely cyclical and recursive phases (Greene & Azevedo, 2007; Panadero, 2017). The Winne & Hadwin model’s relatively flexible conceptualization could be useful to model activities that are unstructured and may involve multiple cycles of SRL (Bernacki, 2017). The model’s grounding in the information processing theory (Greene & Azevedo, 2007) makes it suitable for modeling sessions where the learner acts as an initiator as well as a reactor to stimuli. Instead of attempting to trace one of the three SRL phases, researchers can attempt to trace the dynamic unfolding of COPES using SRL processes without being restricted to a strict chronological structure to comply with and see the phases of SRL emerge in the traced data in retrospection, as it did in a recent study (Nath et al., 2024). 
Further, the smaller grain size of SRL processes compared to phases makes it more suitable for modeling using trace data (Howard-Rose & Winne, 1993; Jamieson-Noel & Winne, 2003). Few papers in our corpus explicitly investigated the dynamic nature of COPES, and that remains an interesting and open perspective to investigate in the future. The theoretical basis for modeling SRL should fit the learner, the task, and how the learner engages with it. Researchers should have a clear understanding of their context in terms of the task type, constraints, domain, and duration (as identified in our RQ2), along with other factors that can influence self-regulation (Panadero, 2017), before they make their choice for theoretical grounding.

Our review raises another question: Can and should the theoretical constructs of two models be combined conceptually to generate better traces? Let us take the example of Paquette et al. (2021). Their study in Betty’s Brain ITS used the process information seeking, which represents seeking information to progress on the task. Information seeking can occur at different phases of the activity. Depending on whether it is operationalized as reading a page for the first time or reading a related page after reviewing quiz results, information seeking can represent a trace from either the preparatory phase or the appraisal phase, respectively. SRL is cyclical and recursive, and it is not unreasonable to expect SRL processes that we may typically associate with one phase to occur in another, even in structured tasks. Researchers should consider such possibilities when creating their coding frameworks and examine the nuances of each theoretical model before making any selection. The possibility of merging SRL theories should only be considered if the uniqueness of different models can enhance our coding and help in conceptualizing SRL for the task better, rather than in a way that combines redundant constructs. Can we create separate trace libraries for a learning context using two different theoretical models? Is one model more suitable than the other for certain contexts? Can trace libraries created based on various theoretical models be combined? Can insights generated from two theoretical models enhance our understanding of self-regulated learning (SRL) in our learners? Can we generalize and recommend the right theoretical model of SRL for a set of learning tasks (say MOOCs)? These are some questions that future empirical studies should address in this regard.

Theory-driven and data-driven approaches can be combined to create more comprehensive trace libraries

We identified two main approaches to operationalizing SRL processes using trace data:

In contrast, data-driven approaches rely on analytical techniques to infer higher-level SRL processes from raw learning data. For example, Maldonado-Mahauad et al. (2018) used process mining to extract interaction patterns from MOOC log data, which were then interpreted as indicators of various SRL processes. Similarly, K.-Z. Chen and Li (2021) applied lag-sequential analysis (LSA) to identify behavioral patterns in learners categorized as high or low SRL based on questionnaire results. Their findings revealed distinct patterns that differentiated the two groups, as well as some interaction behaviors that were common across both.
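As a rough illustration of the kind of pattern extraction these data-driven techniques start from, the sketch below counts lag-1 transitions in an action sequence and converts them to conditional probabilities. It is a simplification under stated assumptions: full lag-sequential analysis additionally tests whether each transition occurs more often than expected by chance, and that step is omitted here. The action names and the function name `lag1_transitions` are hypothetical.

```python
from collections import Counter


def lag1_transitions(sequence):
    """Count lag-1 transitions and convert them to conditional probabilities."""
    # Pair each action with the action that immediately follows it.
    counts = Counter(zip(sequence, sequence[1:]))
    # Number of times each action appears as the start of a transition.
    outgoing = Counter(sequence[:-1])
    probs = {(a, b): c / outgoing[a] for (a, b), c in counts.items()}
    return counts, probs


# Hypothetical MOOC action log for one learner.
actions = ["video", "quiz", "video", "quiz", "forum", "video", "quiz"]
counts, probs = lag1_transitions(actions)
```

Computing such transition tables separately for groups of learners, for example those scoring high and low on an SRL questionnaire, is one way to surface the group-specific and shared patterns described above.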

Fan, van der Graaf, et al. (2022b) demonstrated how integrating theory-driven and data-driven approaches can improve the mapping of trace data to SRL processes. They began with a theory-driven SRL process library based on an established model and then applied process mining to think-aloud data collected concurrently from learners. This data-driven analysis allowed them to expand and refine their initial trace-based SRL process library. Such studies illustrate that researchers need not rely solely on predefined SRL processes; instead, they can iteratively enhance trace libraries based on empirical data collected during or after the learning activity.

Design Considerations

The design of a learning system can both foster self-regulatory processes in learners and support researchers in operationalizing these processes through trace data. The design of the learning environment is a crucial phase in the SRL research cycle, and each step of the design process should be grounded in theoretical justification. Equipping the learning environment with enhanced tracing software, using multimodal solutions to maximize the capture of learner behavior, using carefully hypothesized and theoretically grounded segmentation of the content, and integrating tools based on the generic categories of traces offered in this literature review are some a priori design choices that can help progress toward creating a generic design that can then be repurposed for contextualized SRL studies.

Pedagogy can guide system design choices and generate more informed traces

Pedagogy can serve both as a boundary and a frame of reference for researchers, offering opportunities to capture more meaningful trace data. While capturing trace-based measures is often viewed as a technological and theoretical challenge, the studies discussed in the subsection Different pedagogies prompt different design choices show how instructional and pedagogical design can be leveraged to operationalize SRL processes through learner actions. We suggest that researchers place particular emphasis on pedagogical and instructional design decisions to enable richer and more informative traces of SRL processes.

Additional tools and software can enhance a learning environment’s tracing capabilities

Modern digital learning systems are capable of capturing data traces at unprecedented granularity. Designers of these systems can harness this capability to support the researchers, provided there is clear communication of the research needs. It is advisable that researchers first pre-conceptualize their theoretical underpinnings clearly, which in turn can help them hypothesize ways in which traces can align with their research. This will help them to work with designers of learning systems to incorporate tools to capture their targeted data. There are several examples in our review that used custom-built tools within their learning systems to capture trace data that would otherwise be missed in standard learning systems. Features like linking notes to external information (Hadwin et al., 2007), clicking a link to obtain the definition of a term (Bernacki et al., 2012), and creating concept maps (Roscoe et al., 2013) help externalize the latent mental processes of learners. Additional integrations with learning systems can also help characterize interactions that enhance a trace. For example, characterizing “accessing a page” as reading, skimming, or scrolling back (Bouchet et al., 2012; Jamieson-Noel & Winne, 2003; Zhang et al., 2021) can help differentiate SRL processes. Instead of undertaking a major overhaul of learning systems, researchers can consider integrating lightweight, complementary tools and software to enhance data capture. Our supplementary document provides an exhaustive list of such tools and integrations.
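As a rough illustration of how a lightweight add-on could characterize page accesses, the sketch below labels each visit as reading, skimming, or scrolling back using only dwell time and visit history. The function name, the event format, and the 15-second threshold are all assumptions made for this example; they are not taken from the cited studies, and any real threshold would need theoretical and empirical justification for the specific content.

```python
def label_page_visits(events, skim_threshold=15.0):
    """Label page visits from (page_id, dwell_seconds) events in time order.

    skim_threshold is a hypothetical cut-off in seconds, not an empirical value.
    """
    seen = set()
    labels = []
    for page_id, dwell_seconds in events:
        if page_id in seen:
            # The learner returned to a page opened earlier in the session.
            labels.append("scrolling_back")
        elif dwell_seconds < skim_threshold:
            # First visit that was too short to count as reading.
            labels.append("skimming")
        else:
            labels.append("reading")
        seen.add(page_id)
    return labels


# Hypothetical session: read p1, skim p2, then return to p1.
visits = [("p1", 42.0), ("p2", 6.5), ("p1", 12.0)]
labels = label_page_visits(visits)
print(labels)
```

Keeping the rule explicit and parameterized makes the operationalization easy to report, inspect, and adjust when the same tool is reused in another context.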

Multimodal data can provide enhanced traces

Apart from multichannel data from added tools, recent studies have shown that valid measurements of self-regulation can be captured using multimodal sources like eye-tracking (Fan, Lim, et al., 2022a). Common trace data is captured only through physical interactions with the learning system, and modalities like eye-tracking can shed light on the “blind spots” between these interactions. In two different studies in the MetaTutor learning environment, Taub and Azevedo (2019) interpreted shifts of eye gaze from text content AOI to Learning Goal AOI as evidence of planning, while Bouchet et al. (2012) used time spent on a page captured using log data to categorize reading behavior during planning. These examples illustrate how the same activity within the same learning environment can be operationalized differently depending on the modality used. Taub and Azevedo (2019) were able to identify distinct SRL processes from eye-tracking data while using log data to identify others. Fan, Lim, et al. (2022a) demonstrated how combining eye-tracking data with log data can deepen the interpretation of SRL behaviors.

Chunking content into multiple pages can make SRL behavior overt

Thematic segregation of content in a learning platform into modules can help externalize learners’ SRL behaviors (see subsection Use of multimodal channels to capture traces). Learner interactions—such as opening a module, highlighting, and annotating the module—can serve as proxies for higher-level SRL processes. These design choices need to be driven by theory and expert hypotheses. Our supplementary material outlines how contemporary researchers have implemented such strategies, offering guidance for future researchers developing trace-based SRL learning environments.

Validation Considerations

Emerging validation studies in SRL indicate plenty of scope for progress in this area. Most studies have reported substantial discrepancies between trace data and the corresponding validation data sources. Researchers have attributed such mismatches to faulty interpretations or overinterpretations (Fan, van der Graaf, et al., 2022b), difficulty in distinguishing phases of SRL that tend to co-occur or occur in adjacency (Salehian Kia et al., 2021), and misalignment between students’ own criteria for self-reporting and researchers’ reasoning for a study tactic (Jamieson-Noel & Winne, 2003). Researchers have also identified learners’ internal SRL conditions as playing a part in these mismatches (Salehian Kia et al., 2021; Ye & Pennisi, 2022). Studies have also highlighted that SRL processes operationalized using traces may co-occur with multiple verbalized SRL processes (Bernacki et al., 2025; Fan, van der Graaf, et al., 2022b), opening up the possibility of a many-to-many mapping of trace data to self-reported SRL processes. In light of these findings, we need to reevaluate what validation should entail in trace-based studies in SRL.

A look at validation of traces in SRL using the lens of a modern validation framework

When examining contemporary validation theories, Cizek (2020) highlights a critical limitation: these theories fail to distinguish between two incompatible yet pertinent concerns: (a) the intended meaning of the measurement and (b) the intended use of the measurement. Cizek (2020) notes that validity concerns encompass both these issues, and each requires independent consideration. These issues seem to extend into trace-based measurements as well. We have seen that researchers often adopt theoretical rationales from other studies into their context (also highlighted in section RQ5—Methods of validating trace data). Extending Cizek’s view, we propose that validation of trace libraries in SRL research needs to consider two distinct aspects: (a) whether the trace library truly indicates the SRL processes that it aims to operationalize and (b) whether the trace library can be reused as a proxy for the corresponding SRL processes in a new setting. Researchers not only need to validate their trace libraries with relevant techniques but also should offer adequate justification as to why a trace library created in a previous context can be validly used to identify self-regulation in a new setting. Efforts undertaken by Fan and colleagues (Fan, Lim, et al., 2022a; Fan, van der Graaf, et al., 2022b; Fan et al., 2023) and Bernacki et al. (2025) to validate trace libraries by temporally aligning them to student verbalizations, and attempts by Salehian Kia et al. (2021) to validate using students’ periodic real-time endorsements, can serve as examples of how to address the first validity concern, that is, the intended meaning of trace libraries. The demonstration by Bernacki et al. (2025) that such validated trace libraries can possess substantial predictive validity in another comparable setting serves as a precedent for addressing the second validity concern, that is, whether trace libraries can be reused in analogous settings.

Validation is an urgent need in trace-based measurements

Our review acknowledges the importance of experts in interpreting learners’ actions, as the vast majority of the papers use researchers’ hypotheses and domain expertise to measure constructs of SRL models using trace data. Our results in response to RQ5 in section RQ5—Methods of validating trace data list studies that compared such expert hypothesis-driven trace libraries with other measures of self-regulation. All studies found considerable differences between expert-based trace interpretations and self-reports/think-aloud data. Fan, van der Graaf, et al. (2022b) attributed their mismatches to invalid interpretations of trace data, which were based solely on theoretical hypotheses. This reiterates the validity concerns echoed by Winne (2020) and possible fallacies of taking expert reasoning at face value. Trace data, which comes with its multitude of unmatched benefits and conveniences, requires careful attention and rigorous measures to ensure its validity. Such validation studies can improve the reusability of trace-based libraries in other relevant contexts, which can also improve the reliability of SRL studies that are informed by expert-opinionated trace operationalizations.

The validation efforts of Fan and colleagues (Fan, Lim, et al., 2022a; Fan, van der Graaf, et al., 2022b; Fan et al., 2023) and Bernacki et al. (2025), who used concurrent think-aloud protocols, and of Salehian Kia et al. (2021), who used periodic self-reports during the task, are particularly noteworthy. These authors attempted to temporally validate their trace-data-based SRL processes, keeping the temporal event view of SRL intact (Winne, 2010). There could be obvious concerns about whether these validation approaches interrupt learners’ natural flow of activity and whether such labor-intensive studies are worth undertaking. The recent multimodal study by Bernacki et al. (2025) has demonstrated that trace events validated against think-aloud protocols in controlled laboratory settings can be reapplied in classrooms for future learners and possess ample predictive validity. Researchers should thus consider these validation studies not as a burden but as an opportunity to strengthen their trace-based research. Even a small-scale but thorough validation study is likely to improve the inferences generated in naturalistic studies at scale, as identified by Bernacki et al. (2025). They also found that certain digital traces co-occurred with multiple verbalized SRL processes, providing empirical support for Winne (2020), who posited that a digital trace may be indicative of multiple SRL processes and should be associated with a probability for each possible SRL process, rather than a one-to-one mapping. This finding opens a promising direction for future work on validating trace-based SRL research.
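The probabilistic mapping suggested by Winne (2020) can be sketched in a few lines. The example below assumes that trace events have already been temporally aligned with coded think-aloud segments, so that each observation is a (trace, verbalized process) pair; it then estimates, for each trace, the relative frequency of every co-occurring process. The trace names, process labels, and data are hypothetical, and relative frequency is only one simple estimator that we assume here for illustration.

```python
from collections import Counter, defaultdict


def process_probabilities(aligned_pairs):
    """Estimate P(SRL process | trace) as relative co-occurrence frequencies.

    aligned_pairs: iterable of (trace, verbalized_process) tuples, assumed to
    come from a prior temporal alignment of log data and think-aloud codes.
    """
    counts = defaultdict(Counter)
    for trace, process in aligned_pairs:
        counts[trace][process] += 1
    return {
        trace: {process: n / sum(c.values()) for process, n in c.items()}
        for trace, c in counts.items()
    }


# Hypothetical aligned observations (labels are assumptions).
pairs = [
    ("open_notes", "planning"),
    ("open_notes", "monitoring"),
    ("open_notes", "planning"),
    ("open_notes", "planning"),
    ("highlight", "monitoring"),
]
probs = process_probabilities(pairs)
print(probs["open_notes"])
```

In this toy data, the same trace is associated with two processes at different rates, which is the kind of one-to-many pattern that a strict one-to-one coding scheme would hide.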

Continuous measures, such as think-aloud, are a better means of validating trace data compared to global questionnaires

Trace-data-based SRL research, in its current state, relies heavily on experts who hypothesize trace data and map them to SRL processes. While attempts to compare trace data against other measures of self-regulation are commendable, it cannot be denied that each measure has its share of limitations. Think-aloud data are inherently reliant on participants’ verbalization abilities and have their share of validity concerns (Young, 2009). It has also been suggested that questionnaires are not suitable instruments for measuring specific local SRL strategies or tactics in a learning task (Bernacki, 2017; Jamieson-Noel & Winne, 2003; Rovers et al., 2019; Winne & Jamieson-Noel, 2002). All the studies in our review that looked for validation of their trace-data-based operationalizations using questionnaires found considerable mismatches. Apart from possible contextual reasons listed in each study (detailed in section RQ5—Methods of validating trace data), we should not forget a fundamental point that questionnaires measure SRL from the perspective of an aptitude, while trace data measures SRL from the event perspective (Winne, 2010). Experts have also emphasized the important point that aptitudes are malleable; they can change and develop throughout a learning episode (Azevedo et al., 2010; Winne, 2010). So, researchers should not presume that aptitudes measured before a learning episode will remain constant throughout an intervention. Questionnaires collected post-intervention may correlate better with trace data, as Ye and Pennisi (2022) found; however, the grain sizes measured by questionnaires and trace data are still not the same (Jamieson-Noel & Winne, 2003; Rovers et al., 2019). Salehian Kia et al. (2021) developed a workaround by collecting periodic self-reports throughout the learning task, but they too found inconsistencies between the trace data and self-reports. 
Keeping all these results and previous suggestions in view, we propose that measurements that can capture SRL and its changes continuously (Winne, 2010), such as think-aloud data, are likely to be more effective measures for investigating the validity of trace-data-based codings. Alternative measures, such as retrospective think-aloud protocols (Pathan et al., 2021; Prokop et al., 2020) and retrospective semistructured interviews (Ye & Pennisi, 2022), can also provide continuous measures of SRL, although not on-the-fly. These suggestions have already been indicated to some extent in the literature (Bernacki, 2017). It has also been found that while students’ self-reports can provide a better account of the global measure of self-regulation, when it comes to local and specific SRL strategies and tactics, behavioral indicators (such as traces) give a more accurate account (Jamieson-Noel & Winne, 2003; Rovers et al., 2019; Winne & Jamieson-Noel, 2002). This can be an important aspect to consider before researchers decide to combine self-reports with trace-based measurements. SRL is inherently a multilevel construct (Howard-Rose & Winne, 1993), and both its higher and lower levels of conceptualization can contribute to the understanding of how people learn. It is hence worth exploring which measurements are more suitable for measuring lower-level tactics (such as scrolling through a chapter at the outset of a task or highlighting a paragraph during the task) versus those suitable for high-level phases (like planning or reviewing).

Limitations