Abstract

The importance of improving teachers’ use of culturally responsive practice (CRP) in the classroom setting has been widely recognized. Although quantitative data on teachers’ use of CRP has potential to be a helpful decision-making tool in advancing that goal, little is known about the psychometrics of classroom-based CRP measures, their utility in evaluating the impact of interventions designed to improve teacher CRP, or their use to inform teacher professional development in CRP. The current study reports findings from a systematic review of the research on the quantitative measurement of CRP using the 2020 Preferred Reporting Items for Systematic reviews and Meta-Analyses standards to document how CRP is operationalized and measured in prekindergarten–12th-grade classrooms in the United States (U.S.). A search across six databases identified 27 measures for inclusion. The vast majority of measures were teacher self-report surveys, and relatively few were student-report or external observer assessments. We examined the availability of classroom-based observational and survey instruments and critically analyzed each measure through an argument-based approach to validation. We concluded that although some CRP measures hold promise, the validity of their interpretation and use is, with some exceptions, not adequately supported by evidence. This lack of empirical evidence is exacerbated by the limitations of single-informant measurement of CRP. More multi-informant assessment approaches are needed.

With increasingly diverse student enrollments in United States (U.S.) public schools, there is growing recognition of the importance of improving teachers’ use of culturally responsive practice (CRP; Gay, 2018). CRP is an asset-based approach intended to support students’ academic and social-emotional well-being at school by incorporating students’ personal strengths, cultural knowledge, lived experiences, and home communication styles into daily classroom interactions (Gay, 2018). Importantly, CRP goes beyond curricular adaptions. CRP theory recognizes that teachers can enrich students’ classroom experience through their instructional content (what they teach) and practices (how they teach) to make learning encounters more engaging and, in turn, increase students’ access to learning and opportunity for academic achievement. In this article, we use the term CRP to encompass three closely related terms: culturally responsive teaching (Gay, 2018), culturally relevant pedagogy (Ladson-Billings, 2021), and culturally sustaining pedagogy (Paris, 2012).

The important nuances between the foundational theories and subsequent iterations have been discussed extensively by the authors themselves (see Gay, 2018, 2023; Ladson-Billings, 2014, 2021; Paris & Alim, 2014). For example, critical consciousness was conceptually present in Ladson-Billings’s culturally relevant pedagogy, but not necessarily in Gay’s culturally responsive teaching. However, our choice of CRP in the current article is not intended to indicate a preference for Ladson-Billings’s or Gay’s or Paris’s definitions but, rather, to reflect the nomenclature commonly recognized among educators in the field and to be inclusive of both instructional and relational aspects of classroom practices. The term CRP is intended to capture the constellation of critical, culturally focused educational ideologies that came both before and after the identification of CRP in the academic literature nearly three decades ago (Gay, 2000; Ladson-Billings, 1995). Since then, there have been renewed calls for CRP as a promising approach for addressing academic and discipline disparities stemming from cultural and racial bias in schools (e.g., Holcomb-McCoy, 2021).

A persistent challenge for the field is the definitional and conceptual issues surrounding CRP. There is a consensus that CRP represents a paradigm shift—rejecting the notion that when students’ cultural identities do not conform to the dominant school culture (i.e., White, middle-class norms; Carter, 2005) it is a barrier to their academic success. Instead, CRP embraces the perspective that students’ cultural differences are strengths to be leveraged for the advancement of student learning. However, variations among the conceptualizations of CRP are many, particularly since the amplified calls for equitable, racially just education over the last several years (Ladson-Billings, 2021; National Education Association, 2021).

The inclusion of CRP as a possible vehicle for racial justice in education reflects the field’s evolving understanding of CRP in both concept and practice. Historically, conversations about how to provide high-quality education to racially minoritized students have circumvented direct references to race, racism, and White supremacy in the U.S. education system (NEA Center for Social Justice, 2020). Instead, classroom racial-ethnic diversity often has been addressed using a harmful color-evasive orientation (i.e., belief that race does not matter and should not be considered in interactions or decision making; Apfelbaum et al., 2012; Hazelbaker & Mistry, 2021) or a celebratory but often superficial emphasis on multiculturalism (i.e., the recognition and appreciation of distinct ethnic and cultural groups, similar to the Contributions Approach in multicultural education; see Banks, 1993). However, these approaches, which may be more comfortable for White teachers to adopt than speaking about race explicitly (e.g., Luther, 2009), have not been shown to improve student well-being and can even perpetuate racism and discrimination in the classroom setting (Au, 2017; Ngo, 2010; Plaut et al., 2018). For example, when considering multicultural education, it is not that the original theory was designed to discuss racial, ethnic, and cultural differences at a perfunctory level but that the approach was often misrepresented when adopted by school practitioners and omitted the crucially important critical perspective (Banks, 1993; Nieto, 2017). This superficial, or whitewashed, lens is a common pitfall in the implementation of classroom-based frameworks designed to support racially minoritized students (see Compton-Lilly et al., 2021; Sandoval et al., 2016), and CRP is not immune to this problem (Ladson-Billings & Dixson, 2021; Sleeter, 2012).

Ladson-Billings (2021) recently acknowledged the tension between encouraging the adoption of CRP as a focus of school improvement initiatives and ensuring that the theoretical foundations of CRP do not get overlooked. Efforts have been made to restate the critical race theory perspective that underlies CRP (e.g., Dixson, 2021) and point to CRP as a viable tool to promote racial justice in education (e.g., Curenton et al., 2022; Holcomb-McCoy, 2021). However, there are significant feasibility concerns that make educators and researchers hesitant to draw a direct connection between CRP and racial justice. For example, when working with school districts to introduce schoolwide initiatives or classroom-based interventions that address racism directly, there is a risk of resistance to discussions regarding race and equity, particularly in a polarized sociopolitical climate (Parkhouse et al., 2019). Fortunately, since hitting a racial justice tipping point in 2020, the field has demonstrated a greater willingness to grapple with racism in the education system (e.g., Metro, 2020), which has also resulted in more scholars weighing in about the critical components of CRP. However, educators’ and researchers’ increased interest in CRP has been met with pushback among some conservative groups regarding “critical race theory”–related topics, leading some researchers to adjust the language they use to maintain their working relationships with school partners (Kaplan & Owings, 2021).

Operationalizing Culturally Responsive Practice

Related to these broader definitional challenges, the field of CRP measurement has been constrained by a “jangle” fallacy (i.e., the use of many different labels for the same or overlapping, similar constructs; Kelley, 1927), whereby terms such as multicultural education, culturally responsive teaching, culturally relevant pedagogy, and culturally sustaining pedagogy have evolved over time through expanded interpretations while also often continuing to be used interchangeably (Ladson-Billings, 2021). Moreover, the following approaches could all arguably fall under the CRP umbrella: remedying home-school dissonance by improving the alignment between students’ home and school cultural ways-of-knowing and learning (Arunkumar et al., 1999; Tyler et al., 2018); enhancing teachers’ understanding of students’ cultural histories and experience as “funds of identity” (Esteban-Guitart & Moll, 2014); exploring teaching and learning as cultural practice (Gutiérrez & Rogoff, 2003; Nasir et al., 2006); and employing critical race theory and critical literacy (Aronson et al., 2020). The fluid and expansive terminology used in relation to CRP constructs, though vital for developing the theory and key concepts, has impeded their operationalization in the form of quantitative measures.

Resolving differences among conceptual and terminological CRP approaches is foundational to creating robust measurement tools. For example, CRP may be comprised of both generic and culturally specific classroom interactions, making CRP-specific measures difficult for educational leadership to identify and select (Jensen, Grajeda, et al., 2018). Some educators draw connections between CRP and differentiated instructional approaches (e.g., Universal Design for Learning; Kieran & Anderson, 2019). This is likely to stem in part from conflation of cultural differences (i.e., group-membership-related identity differences) and individual learning differences, which could result in the inaccurate measurement of CRP (Dixson, 2021). Furthermore, CRP has also been considered by some scholars and practitioners to be “just good teaching,” which could be interpreted in various ways. Ladson-Billings (1995) posited that CRP is arguably an approach for all students—yet is not actualized in practice for racially minoritized youth because U.S. mainstream education is largely responsive to hegemonic whiteness (Tevis et al., 2022). Alternatively, in a different context, reducing CRP to “just good teaching” could reflect a tendency to rationalize color-evasive perspectives and racial redirects (i.e., minimize the importance of CRP by implying it is an instructional coincidence; Liggett et al., 2017), underestimating the need to measure CRP more directly.

Morrison and colleagues (2008) sought to operationalize CRP in prekindergarten (PK) through 12th-grade education through an international review of the literature by analyzing 45 classroom-based studies. Their review identified 12 descriptors of what CRP “looks like” in a classroom. Yet none of the 45 studies reviewed included a definition that encompassed all 12 descriptors and, most importantly, the quantitative measurement of each study’s framework (i.e., units of observation; DeCarlo, 2018) was not discussed. More recently, Aronson and Laughter (2016) presented a helpful comparison of Gloria Ladson-Billings’s culturally relevant pedagogy (1995, 2009, 2021) and Geneva Gay’s culturally responsive teaching (2000, 2018) and subsumed both under the label culturally relevant education, inspired by Dover’s (2013) teaching for social justice conceptual map. A necessary next step to move the field forward is to build upon the existing literature syntheses through comparative and critical analysis of CRP measurement constructs.

Utility of Quantitative CRP Measures

Quantitative CRP measures are needed for formative (e.g., coaching, professional development) and research (e.g., intervention effectiveness) purposes. When used toward formative goals, such measurement tools can inform teacher professional development and growth guided by relevant and timely data. Indeed, school districts are showing increased interest in promoting CRP at the classroom and school level (Dixson, 2021), and quantitative tools could help advance these districts’ initiatives and goals. Regarding utility for research, tools that validly and reliably measure CRP could help to empirically test the theory of change that improving teachers’ mindsets and beliefs will influence their practices in the classroom, which, in turn, will increase students’ academic achievement. In addition, our own interest in this study was motivated in part by the difficulty we had finding adequate quantitative CRP measures to detect effects of a CRP-focused teacher intervention that we were developing. This demonstrates the need for CRP-focused outcome measures for use in intervention effectiveness–focused studies if we are to learn what interventions or intervention elements are effective at improving teachers’ CRP. Nonetheless, there are potential pitfalls of quantifying the rich, dynamic CRP scholarship, including reductionism and essentializing minoritized groups. For example, instructional materials used to prepare preservice teachers for working with PK–12 students from backgrounds different than their own have, in the past, essentialized group-specific cultural norms and customs in ways that oversimplified the complexity of culturally responsive and sustaining practices (see Gorski, 2008). For this reason, we were in search of a measure that did not focus on a sole demographic group but, instead, captured teachers’ responsiveness and critical consciousness of students’ multiple, complex intersectional identities.

We recognize that the extent and complexity of racialized inequality cannot be quantified given the legacy of enslavement, Jim Crow, and anti-Black brutality that is deeply rooted in our nation’s history and origin story (Gillborn et al., 2018). As such, it is important to note that quantitative measures can only provide a partial picture and that mixed methods, incorporating both qualitative and quantitative approaches in complementary ways, are necessary. In sum, there is utility in quantitative measurement of culturally responsive classroom practices for the purposes of accountability and to inform teacher feedback and coaching to guide practice changes. Quantitative measures are also critical to help us determine whether intervention approaches are effective at improving educators’ CRP. However, in research contexts, these measures should be utilized as companions to qualitative data collection.

Knowledge Gaps and Barriers to Implementation of CRP Measurement

Differences in the operational definition of CRP, as well as a lack of consensus on the terminology used to describe this multifaceted practice, have likely led to the limited body of research on valid and reliable measures of CRP in PK–12 classrooms. Of the available CRP measures, it is believed that the majority are teacher self-report, meaning that teachers rate their own culturally responsive attitudes, values, beliefs, and/or proficiency in working with students in a culturally responsive manner (Larson & Bradshaw, 2017; National Council for Accreditation of Teacher Education [NCATE], 2008). These self-ratings pose various validity threats such as the introduction of social desirability bias, as raters tend to provide overly positive self-assessments (Grimm, 2010), and implicit racial biases (Gilliam et al., 2016; Worrell, 2022), of which educators may be unaware but nonetheless may impact their teaching. Efforts to guard against these biases by embedding social desirability scales in self-report surveys, or working to address implicit racial biases with raters, respectively, have had variable success (Perinelli & Gremigni, 2016; Vitriol & Moskowitz, 2021). Alternate methods of evaluation, such as student-report or observational measures of CRP, would allow researchers and administrators to assess the effectiveness of professional development efforts to increase classroom teachers’ cultural responsivity without the interference of biases.

Despite school districts’ growing interest in promoting CRP at the classroom and school level (Dixson, 2021), a challenge has been how to advise school partners and researchers about the best CRP measures to utilize. Before making practice and policy recommendations, it is essential to have a complete understanding of the current CRP measurement landscape. As such, to improve the accurate measurement of CRP and understand the quality of existing CRP measures, a systematic review of the literature is warranted. Specifically, a systematic review can document the availability and evidence of validity among classroom CRP measures, while also determining the core elements of CRP through data extraction and inductive analysis. Although there have been several broad reviews of the qualitative CRP literature (e.g., Aronson & Laughter, 2016), and reviews that were specific to content areas such as mathematics (e.g., Thomas & Berry, 2019) and English language arts (e.g., Wetzel et al., 2019), these reviews did not focus specifically on quantitative measures that could be leveraged for intervention research or be used as tools to inform changes in teacher practice. CRP also has evolved definitionally over time and will continue to do so (e.g., as it is subject to external political pressure). Therefore, a systematic review of the literature on CRP operationalization in quantitative measurement is timely and may also help build consensus regarding its definition.

Overview of the Current Study

The purpose of the current study was to identify existing CRP measures and provide information to help researchers and practitioners select specific measures that are most appropriate for achieving their measurement goals (e.g., evaluate intervention effectiveness, assess CRP among in-service and preservice teachers). We conducted a systematic review of the literature to examine the state of the science as it relates to the measurement of CRP. We intentionally searched for measures that could be used across different U.S. student populations, in lieu of group-specific measures. Our focus on measures with nationwide dissemination potential aligned with our aspiration of discouraging the predominately White U.S. teacher workforce from developing reductionist, stereotypical beliefs about cultural group membership and encouraging them, instead, to continuously develop an understanding of and proficiency in CRP as a complex practice. We sought to address a specific gap in the literature with regard to consequential validity, that is, the cumulative validity evidence in support of the proposed score interpretations and uses (American Educational Research Association [AERA] et al., 2014; Messick, 1998). The four stages of establishing a validity argument (Kane, 2006), also known as an interpretation and use argument (Haertel, 2018; Kane, 2013), informed the development of two guiding research aims.

Our first aim was to summarize the state of the science of CRP measurement, as it relates to the reliability and validity of existing instruments. Specifically, we report on evidence supporting the first two stages of interpretation and use arguments: (1) scoring and (2) generalization, including reports on classical test theory metrics (e.g., reliability, content-, convergent-, discriminant-validity) and evidence of measurement invariance. Measurement invariance (MI) testing, which helps to establish whether the measure works the same in different samples or across settings, is particularly important in CRP measurement research (relative to generally effective teaching) because CRP is highly sensitive to context, and measures of CRP in one setting may not translate well to others. MI testing can provide critical information other researchers need to know to make decisions about the valid use of a given CRP measure in their sample and setting of interest. In Aim 2, we shifted our focus to the latter stages of interpretation and use argumentation: (3) extrapolation and (4) use or interpretation. We characterized the construct of CRP as it is conceptualized and operationalized in classrooms. It is our hope that the findings will provide greater clarity and useful information for end-users aiming to utilize CRP measures in their work and for multiple purposes (e.g., research, practice).

Our study was confined to the definition of CRP as it has been operationalized in the quantitative literature thus far, leading us to highlight gaps within the CRP measurement field. We used the term CRP to include culturally responsive teaching, culturally relevant pedagogy, and culturally sustaining pedagogy, given their similarities as asset-based pedagogies, as has been acknowledged by both Gay (2018) and Ladson-Billings (2021). We recognize and include the more recent term culturally sustaining pedagogy (Paris, 2012) in our review, which has been embraced by Ladson-Billings (2014); but we generally used CRP as the umbrella term, given its broad familiarity and recognition among practitioners and researchers. Because this study is intended to provide guidance to school practitioners and researchers on how to best evaluate and possibly increase use of CRP in the classroom, it was important to assess and report on the measures’ intended use among specific samples and contexts and not merely classical reliability and validity metrics. This comprehensive approach allowed us to better understand the utility and limitations of using high-inference CRP measures for complex, real-world applications.

Method

The current study followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 recommendations, whereby we also provided a rationale for any notable restrictions to study eligibility (Page et al., 2021). After the initial identification of studies and removal of duplicate records, we followed a multistep process that included an initial screening focused on relevance and study setting, and three consecutive eligibility phases specific to study design and reporting. Figure 1 provides a visual representation of the studies identified using the PRISMA-compliant flow diagram.

Figure 1. PRISMA 2020 flow diagram.

Search Strategy

The extant literature was obtained for systematic review via six research databases. For efficiency, we used the combined search function of the EBSCOhost research platform, which included five education databases: Education Resources Information Center (ERIC); Education Research Complete; Education Full Text; Academic Search Complete; and the Psychology and Behavioral Sciences Collection. We also ran the same Boolean search terms in the PsycINFO database given the inclusion of CRP research in the educational psychology field. Notably, we did not set any publication year criteria in an attempt to capture the CRP measurement literature in its entirety. Records were last sourced via database search in January 2021 and via expert and peer review in July 2022.

The search strategy was carefully crafted using the guidance outlined in the Peer Review of Electronic Search Strategies (PRESS) for systematic reviews (McGowan et al., 2016). We consulted three research librarians with expertise in the systematic review process who ultimately suggested a stringent use of Boolean search terms. Due to the expansiveness of CRP-related terminology, we used search terms where an asterisk captured the letters at the end of the term and the connectors “AND” and “OR” combined concepts to search for terms. We provide a full list of the search term combinations related to CRP, education, school, and measurement in Table 1. Given the importance of teacher-student relationships in the classroom milieu, we limited the review to those studies designed to evaluate teacher practices in the classroom environment. For example, studies that examined CRP among school counselors or other related service professionals were not included. Moreover, only studies that were published in English and conducted in the U.S. were included in this review. Although the international literature provides an important perspective about cultivating multicultural school climates amidst global tensions between nationalism and globalization, this area of research was beyond the scope of our study, which focused on CRP in U.S. schools. Including studies conducted only within the U.S. allowed us to evaluate the quality of CRP measurement through the perspective of the United States’ geographic, racial, and sociocultural landscape.

Table 1. Search term combinations

Note. The search terms within each column were separated with the “OR” Boolean term. The terms across columns were connected by the “AND” Boolean term.
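As an illustration only, the Boolean logic described in the note above can be sketched in a few lines of Python. The function name `build_query` and the example terms are hypothetical placeholders chosen for this sketch; they are not the terms listed in Table 1, and quoting conventions for multiword phrases may differ across database platforms.

```python
def build_query(columns):
    """Join terms within a column with OR, then join the columns with AND."""
    groups = []
    for terms in columns:
        # Quote multiword phrases so they are searched as a unit (assumed convention).
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Hypothetical placeholder terms, NOT the actual contents of Table 1.
# A trailing asterisk stands for any ending of the word stem (e.g., teach* -> teacher, teaching).
example_columns = [
    ["culturally responsive", "culturally relevant"],
    ["teach*", "classroom*"],
    ["measure*", "scale*"],
]

query = build_query(example_columns)
print(query)
```

Each inner list plays the role of one table column, so adding a synonym widens a concept (OR), whereas adding a new list narrows the overall search (AND).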

Additionally, only studies that underwent a rigorous peer-review process, including peer-reviewed academic journals or chapters in edited volumes, were included. Other reports, such as unpublished manuscripts, online reports, doctoral dissertations, conference abstracts, and other gray literature, were excluded. Any study identified as retracted was also excluded. Finally, only studies that examined CRP in PK–12 were included in the review given the substantial variability of higher education settings. However, studies that specifically surveyed undergraduates about their work as preservice teachers were included.

Following the identification of studies, we utilized Zotero reference management software to remove duplicate records. Whereas some of the eligibility criteria were automatically screened through search filters (e.g., English, peer-reviewed), we also reviewed all records (title/abstract) during this initial screening. Two reviewers independently worked through a subsample comprising 10% of the eligible records; they then compared their inclusion/exclusion decisions and established 84% reliability before proceeding with the remaining records. Throughout the selection and data collection processes, reviewers utilized organizational tools from Zotero, including color-coded tags denoting study inclusion or exclusion, folders, subfolders, and shared libraries. If uncertain about whether a study met the inclusion criteria, the primary reviewer (first author, MF) would confer with her coauthors, and a broader research team of senior and mid-career researchers with expertise in systematic reviews, until the coauthors reached a consensus using the preset eligibility criteria. The first author (MF) and second author (JB) also held biweekly meetings, during which they monitored for rater drift by independently applying criteria and cross-checking with studies randomly pulled from the dataset.
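To make the double-screening check concrete, the following minimal sketch computes raw percent agreement between two reviewers' inclusion/exclusion decisions and, for comparison, Cohen's kappa (Cohen, 1960), which corrects for chance agreement. The decision lists are invented for illustration and were simply constructed to yield 84% raw agreement; the text above does not specify which statistic underlies the reported figure, and the function names are assumptions of this sketch.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Proportion of records on which both raters made the same decision."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of records.")
    return sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(rater_a)
    p_observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement from each rater's marginal category proportions.
    categories = set(rater_a) | set(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Invented decisions for 25 records (illustrative data only).
reviewer_1 = ["include"] * 10 + ["exclude"] * 15
reviewer_2 = ["include"] * 8 + ["exclude"] * 2 + ["exclude"] * 13 + ["include"] * 2

agreement = percent_agreement(reviewer_1, reviewer_2)
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"agreement = {agreement:.2f}, kappa = {kappa:.2f}")
```

In this made-up example the chance-corrected value is noticeably lower than the raw agreement, which is why the two statistics are usually reported with their names attached.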

Eligibility Criteria

The first phase of eligibility screening focused on study design, following the successful retrieval of study records. Specifically, we determined studies to be ineligible if the authors (a) did not utilize a quantitative CRP instrument; (b) included a measure, but it was unrelated to CRP; or (c) utilized a measure of CRP that was originally published and validated by another author. If a measure was relevant but originally published and validated through another study, the dataset was cross-referenced to ensure that only the original study was included; if it was not captured in the extant records, the original study was added. The same procedures were applied when measures of potential relevance were reviewed and listed in the articles’ reference list.

For the second phase of eligibility screening, we needed access to each measure’s items to address our second aim of characterizing how CRP is conceptualized and interpreted. If the items of a measure were not published or accessible, the study was excluded (see Figure 1). When coding whether a study included the published items, we first reviewed the full text to determine if the items were included in the narrative, tables, or figures. Then, we referred to the appendices; and if no measure items were reported, the title of the measure would be searched via Google to find whether the items were published elsewhere by the study investigators. To ensure that the items of measures we included in our search were fully accessible to readers, we limited our internet search time to 10 minutes per measure, exploring the first page of search results. We tested this approach with roughly 25% of the measures and found that searching past 10 minutes and searching the second page of results yielded no additional information.

The third phase of eligibility screening was focused on the evidence available to support the use of each measure in racially and ethnically heterogeneous school and classroom contexts in the United States. Thus, to be included, the methods section of each study was required to report (a) psychometric properties (i.e., at least some report of reliability or validity, which is further discussed below) and (b) a PK–12 sample that did not focus solely on the experience of one demographic group. We stipulated the latter requirement to identify CRP measures applicable to settings where there is diversity within educational settings (i.e., racial and ethnic heterogeneity within schools and classrooms), rather than across settings. To be culturally responsive in a diverse classroom is a different set of skills than to be culturally responsive to one cultural and linguistic group only. The purpose of this review was to identify measures of cultural responsiveness in the context of classroom racial-ethnic heterogeneity, whereby measures identified would be generalizable for teachers with within-classroom racial and ethnic heterogeneity. To be included in this review, a study had to report either on reliability or validity. Although evidence of both reliability and validity is preferable in measurement studies, we set criteria of at least one to reduce the risk of reporting bias. Specifically, for reliability, we looked for studies to report metrics of internal consistency, test-retest, or interrater reliability. When examining a scale or subscale, internal consistency reliability could be captured through Cronbach’s alpha (Cronbach & Meehl, 1955), McDonald’s omega (Hayes & Coutts, 2020; McDonald, 2013), or another empirically supported metric of internal consistency reliability (e.g., Phi coefficient, Theta coefficient, Spearman’s rho). Test-retest reliability was measured using Pearson’s correlation coefficient (Pearson, 1900; Rodgers & Nicewander, 2012).
For observation tools, Cohen’s kappa (Cohen, 1960) or intraclass correlations (ICC) were used to report interrater reliability. For validity, we focused on metrics indicative of internal structure as captured through factor analytic techniques (e.g., exploratory and confirmatory factor analyses [EFA, CFA]). Recognizing the importance of holistic measurement development and the power of generalizability theory (Brennan, 2010), if there was no report on internal structure, we also considered content validity (e.g., expert review), criterion validity (e.g., associations with other validated measures), and consequential validity (e.g., evidence supporting interpretation/use).
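For readers less familiar with the internal consistency metrics named above, Cronbach's alpha for a scale with k items is conventionally computed as (k / (k − 1)) × (1 − sum of item variances / variance of total scores). The sketch below applies that formula to a small invented data set; the function name and the scores are assumptions for illustration and do not come from any measure in this review.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    n_items = len(scores[0])
    if n_items < 2 or len(scores) < 2:
        raise ValueError("Need at least two items and two respondents.")
    if any(len(row) != n_items for row in scores):
        raise ValueError("Every respondent must answer every item.")
    # Sample variance of each item across respondents.
    item_variances = [variance([row[i] for row in scores]) for i in range(n_items)]
    # Sample variance of respondents' total (summed) scores.
    total_variance = variance([sum(row) for row in scores])
    return (n_items / (n_items - 1)) * (1 - sum(item_variances) / total_variance)


# Invented responses: five respondents, three Likert-type items (illustrative only).
responses = [
    [4, 5, 4],
    [2, 3, 3],
    [5, 5, 4],
    [3, 2, 3],
    [1, 2, 2],
]

alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.3f}")
```

The sketch uses sample variances throughout; because the same denominator appears in the numerator and denominator of the ratio, using population variances instead would give the same alpha.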

Data Extraction Process

We decided upon a combination of instrument characteristics and quality indicators that aligned with an argument-based approach to evaluating evidence of measures’ validity (Kane, 2013). Specifically, we used the four stages of establishing an interpretation and use argument outlined by Haertel (2018), originally conceptualized by Kane (2006), to guide our critical analysis: (1) scoring, (2) generalization, (3) extrapolation, and (4) use or interpretation. During the data extraction phase, the following data were documented, as given in Table 2: (1a) response options and test score; (1b) traditional metrics of validity, such as factor analyses of measures’ internal structure and expert review; (2a) test reliability; (2b) measurement invariance; (3a) unit of observation (i.e., what is being observed, measured, or collected) and unit of analysis (i.e., who or what inferences are being made about; DeCarlo, 2018); (3b) measurement constructs; (3c) measurement domains; (4a) intended use (coded as Research, Formative, and Summative and listed in alphabetical order, whereby Research = to inform intervention effectiveness, gather evidence for theories, and/or contribute to developing knowledge in a field of study; Formative = to monitor learning, provide ongoing feedback, and/or identify relative strengths and skill areas that need work; and Summative = to evaluate learning at the culmination of instruction by comparing it against a standard or benchmark); (4b) test applications; and (4c) evidence supporting proposed interpretation and use (coded as No, Partial, Full, or N/A whereby “No” Evidence = reported study results were not applicable to the stated uses; “Partial” Evidence = reported study results were applicable to some, but not all, of the stated uses; “Full” Evidence = reported study results were applicable to all of the stated uses; and N/A = no measure use was reported and, therefore, evidence could not be evaluated). 
We report on measurement invariance (MI) to provide evidence on the use of these measures across specified populations. We looked for MI to be examined at a minimum based on the reporter’s race and ethnicity (in the case of the student- and teacher-report measures) and the classroom racial composition (in the case of observer-report measures), though we expected MI to be examined in other aspects of identity and culture as well (e.g., language, gender). In addition, we assessed whether a measure was developed or validated using qualitative reports of validity (Creswell & Miller, 2000), including cognitive interviewing or pretesting (Drennan, 2003), which is an important step for establishing validity (i.e., measure items are interpreted by users as the developers intended).

Interpretation and use arguments for CRP measures

Note. The four stages are based on Haertel (2018) and Kane (2006). The studies are ordered alphabetically by first author. The “Use” column is ordered alphabetically, with Research = to inform intervention effectiveness, gather evidence for theories, and/or contribute to developing knowledge in a field of study; Formative = to monitor learning, provide ongoing feedback, and/or identify relative strengths and skill areas that need work; and Summative = to evaluate learning at the culmination of instruction by comparing it against a standard or benchmark. The “Ev” (evidence) column was coded as No = reported study results were not applicable to the stated uses; Partial (abbreviated as Par.) = reported study results were applicable to some, but not all, of the stated uses; Full = reported study results were applicable to all the stated uses; and N/A = measure uses not stated, so evidence could not be evaluated. MI = measurement invariance; Obs. = observation; Avg. = average score; N/R = not reported; CRP = culturally responsive practice; IRA = interrater agreement; CFA = confirmatory factor analysis; CFI = comparative fit index; TLI = Tucker Lewis index; RMSEA = root mean square error of approximation; EFA = exploratory factor analysis; CLASS = Classroom Assessment Scoring System; ICC = intraclass correlation coefficient; “=” = invariant across groups.

Reliability statistics calculated using a larger sample of 244 teachers, paraprofessionals, counselors, principals, instructional specialists, and media specialists.

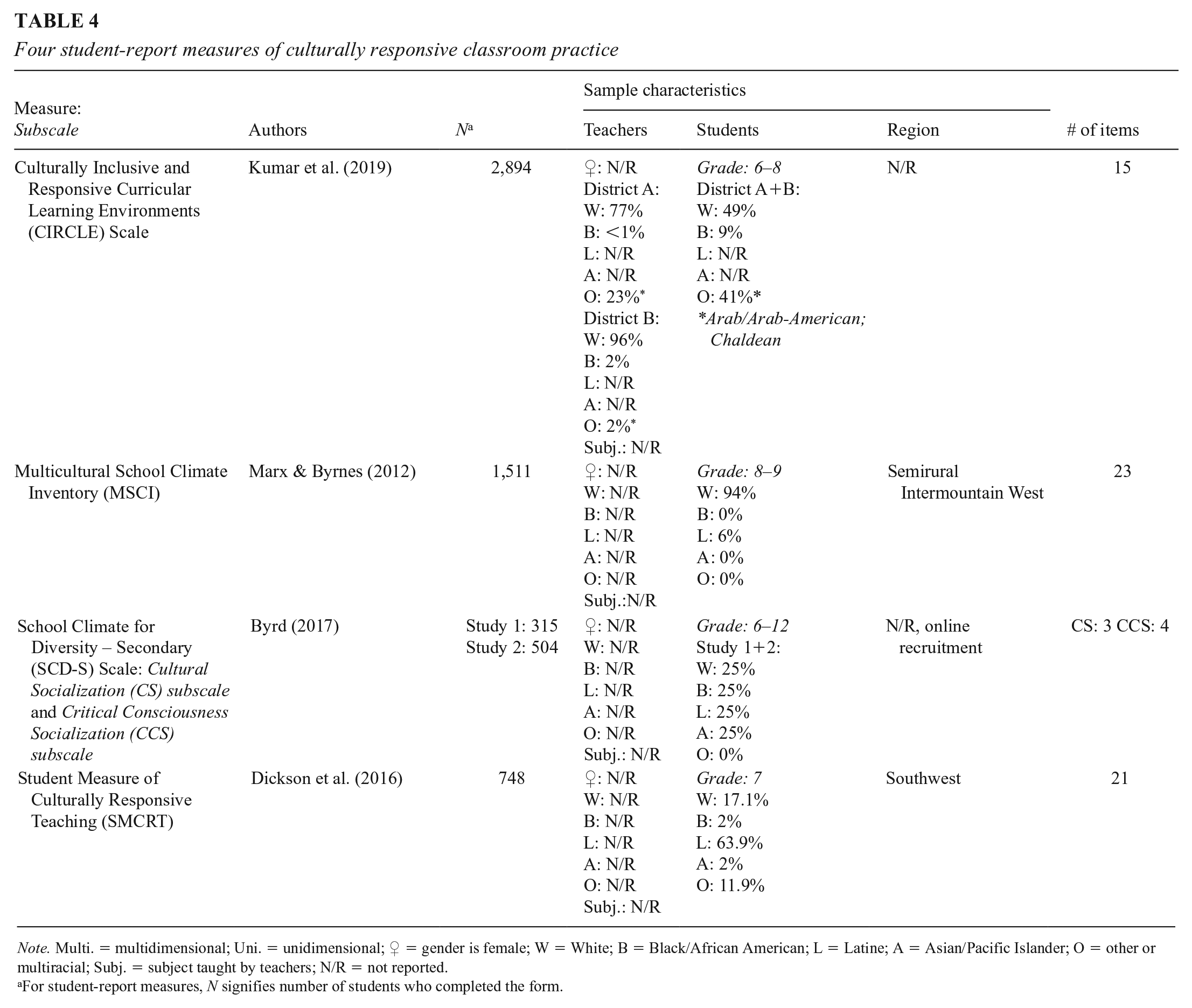

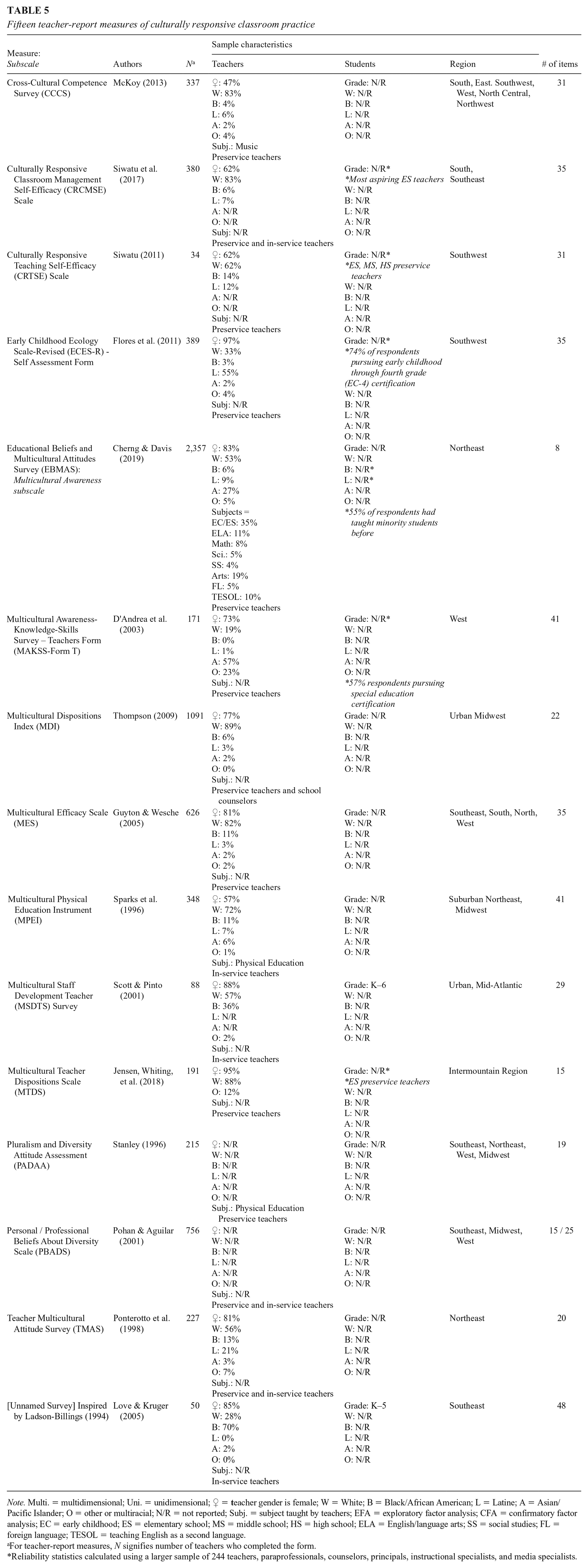

These data points were extracted to critically assess evidence of high-quality, interpretable data, an initial step towards establishing a measure’s consequential validity. However, because this study was designed to be a useful reference for practitioners, researchers, and policymakers, we also included descriptive information about each study’s sample and context in separate tables (see Tables 3, 4, and 5). Sample demographics were reported via percentage for ease of interpretation across studies; if race and gender descriptive statistics were reported in the original study numerically, we converted them to percentages. Missing summary statistics were denoted as Not Reported (N/R). Sample characterization summaries are provided in Tables 3 through 5 (refer to Supplemental Appendix A, in the online version of the journal, for further detail), which are critical to provide transparency regarding the normative sample the measure was tested with and its implications for the generalizability of measurement-related findings. These sample summaries may be of interest for those selecting a measure to use in practice or research settings.

Eight observation measures of culturally responsive classroom practice

Note. Multi. = multidimensional; Uni. = unidimensional;

For observational measures, N signifies number of teachers observed.

Four student-report measures of culturally responsive classroom practice

Note. Multi. = multidimensional; Uni. = unidimensional;

For student-report measures, N signifies number of students who completed the form.

Fifteen teacher-report measures of culturally responsive classroom practice

Note. Multi. = multidimensional; Uni. = unidimensional;

For teacher-report measures, N signifies number of teachers who completed the form.

Reliability statistics calculated using a larger sample of 244 teachers, paraprofessionals, counselors, principals, instructional specialists, and media specialists.

Quality Analysis

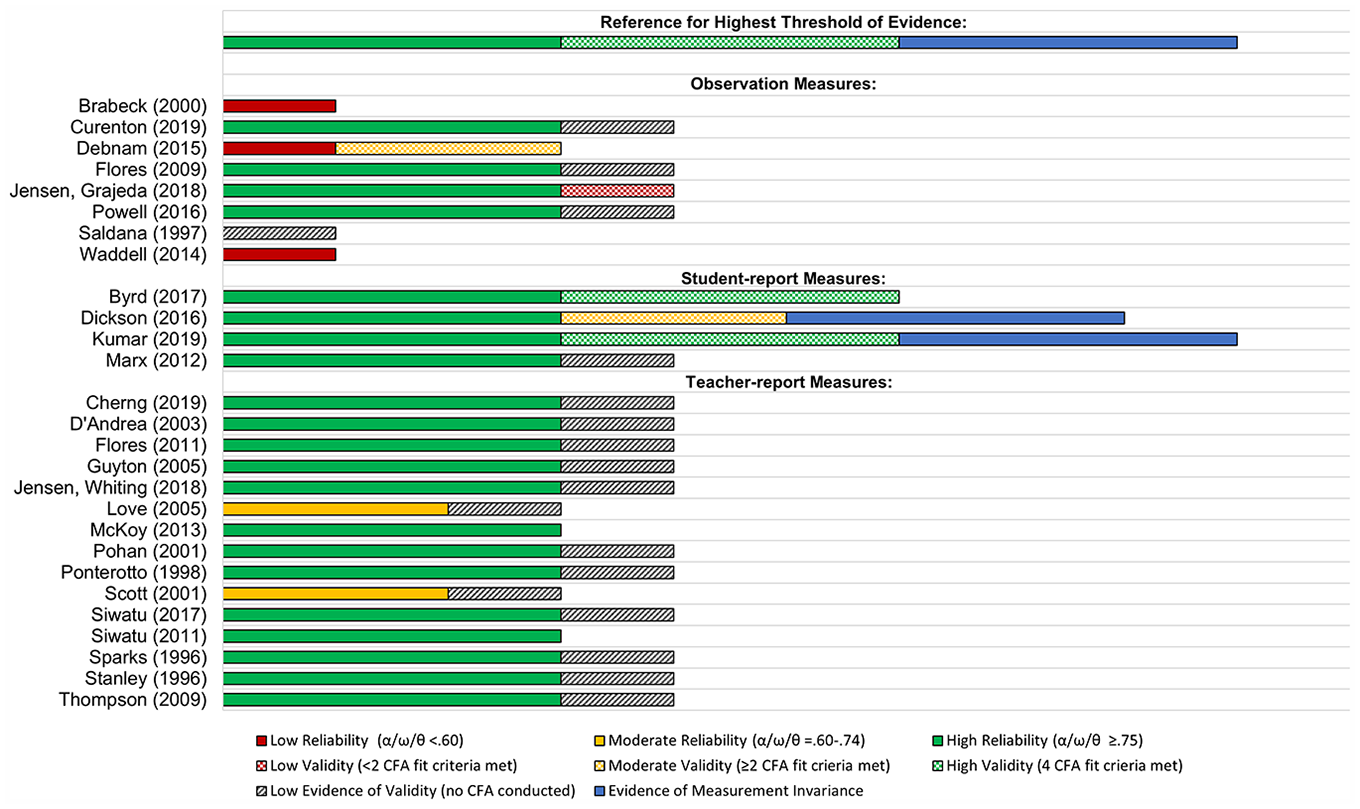

To address our first research aim, to summarize the state of the science of quantitative CRP measurement pertaining to the reliability and validity of existing instruments, we conducted a quality analysis. For ease of review and interpretation, the results of this quality analysis are presented visually in Figure 2. We designated reliability and validity as low, moderate, or high based on benchmarks from the education literature, which are outlined below. We recognize, and advise readers to consider, that these criteria are stringent for measures of teaching in general and that other methods of assessing reliability have been used, particularly in observational research (e.g., Bell et al., 2012). For example, within the rigorously designed Measures of Effective Teaching Project, some of the most commonly used, extensively studied general teaching observation measures (e.g., Classroom Assessment Scoring System [CLASS]; Framework for Teaching [FfT]; Protocol for Language Arts Teaching Observations [PLATO]; and Mathematical Quality of Instruction [MQI]) would achieve low to moderate reliability and low evidence of validity based on the standards detailed in the following paragraphs (Kane et al., 2014; Kane & Staiger, 2012). We provide this caveat to strengthen readers’ interpretation of the psychometric findings presented in this study.

Visual representation of quality analysis.

Measures were designated as having low reliability if the internal consistency coefficients (e.g., alpha, omega, phi, theta) were < .60, moderate (minimally acceptable) reliability if they were .60–.74, and high reliability if they were ≥ .75 (Taber, 2018). For test-retest reliability, a Pearson’s correlation coefficient < .70 was considered low, .70–.79 was considered moderate, and ≥ .80 was considered high (Rodgers & Nicewander, 2012). The kappa and ICC statistics, which measure interrater reliability, were assessed with ≤ .40 as low reliability, .41–.60 as moderate reliability, and ≥ .61 as high reliability (McHugh, 2012). If reliability metrics were reported for both the overall measure and its subscales, we assessed the overall (total) metric. However, if only a range was reported (e.g., three domain-level alphas, no overall alpha), we considered the upper and lower limits of the range. If the upper and lower limits of the range fell in the same quality level, the measure was given that designation (e.g., α = .76–.90 are both ≥ .75, indicating high reliability). If the upper and lower limits of the range fell in different but adjacent quality levels, we gave the measure the benefit of the doubt and defaulted to the higher designation (e.g., α = .72–.90 are moderate and high reliability, respectively, resulting in a designation of high reliability). Finally, if the upper and lower limits of the range fell in nonadjacent quality levels, we assigned the intermediate quality level (e.g., α = .45–.90 are low and high reliability, respectively, resulting in a designation of moderate reliability).
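The designation rules above can be sketched in code. The following is a minimal illustration, not the procedure used by the review team: the function and dictionary names are hypothetical, and values that fall in the small gaps between the stated bands (e.g., an alpha of .745) are assumed to belong to the lower band.

```python
# Illustrative sketch of the reliability designation rules described above.
# All names are hypothetical; cut points are taken from the text.

# For each statistic type: (lower bound of "moderate", lower bound of "high")
THRESHOLDS = {
    "internal_consistency": (0.60, 0.75),  # alpha, omega, phi, theta
    "test_retest": (0.70, 0.80),           # Pearson's r
    "interrater": (0.41, 0.61),            # kappa, ICC
}
LEVELS = ["low", "moderate", "high"]


def level_index(value, kind):
    """Return 0 (low), 1 (moderate), or 2 (high) for a single coefficient."""
    moderate_min, high_min = THRESHOLDS[kind]
    if value >= high_min:
        return 2
    if value >= moderate_min:
        return 1
    return 0  # assumption: values in the gaps between bands fall to the lower band


def classify_reliability(kind, lower, upper=None):
    """Classify one coefficient, or a reported range given by its two limits."""
    if upper is None:
        return LEVELS[level_index(lower, kind)]
    low_idx = level_index(lower, kind)
    high_idx = level_index(upper, kind)
    if high_idx - low_idx <= 1:
        # Same level, or adjacent levels: default to the higher designation.
        return LEVELS[high_idx]
    # Limits fall in "low" and "high": assign the intermediate level.
    return LEVELS[1]


if __name__ == "__main__":
    # The three worked examples given in the text for alpha ranges.
    print(classify_reliability("internal_consistency", 0.76, 0.90))
    print(classify_reliability("internal_consistency", 0.72, 0.90))
    print(classify_reliability("internal_consistency", 0.45, 0.90))
```

Encoding the levels as ordered indices keeps the "adjacent versus nonadjacent" comparison to a single subtraction, which mirrors how the range rule is worded.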

In assessing the quality of each measure’s internal structure, we used four CFA model fit criteria: factor loadings above .40 (Brown, 2015), comparative fit index (CFI) ≥ .95, Tucker Lewis index (TLI) ≥ .95, and root mean square error of approximation (RMSEA) ≤ .06 (Hu & Bentler, 1999). Measures that did not utilize confirmatory factor analytic techniques to examine internal structure were considered to have low evidence of validity; this included those measures that only reported EFA metrics. EFA is primarily intended to uncover complex patterns by exploring a dataset to parsimoniously find common factors (Watkins, 2018), whereas CFA can be used to establish internal structure by reducing measurement error and assessing the fit of the data to an a priori hypothesized measurement model (DiStefano & Hess, 2005). Measures were designated as having low validity if a CFA was conducted but fewer than two model fit criteria were met. Measures were designated as having moderate validity if they met two or three CFA model fit criteria; if all four CFA model fit criteria were met, the measure was designated as having high validity. We indicated where MI was assessed in Figure 2.
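As a rough illustration of how these internal structure designations combine, the sketch below counts how many of the four CFA criteria are met. The function name and argument names are hypothetical, the example values are invented for demonstration, and treating an unreported statistic as "not met" is an assumption made here rather than a rule stated in the text.

```python
# Illustrative sketch of the internal structure (validity) designation rules.
# Names are hypothetical; cut points are taken from the text.

def classify_internal_structure(cfa_conducted, min_loading=None,
                                cfi=None, tli=None, rmsea=None):
    """Return "low", "moderate", or "high" evidence of validity."""
    if not cfa_conducted:
        # No CFA (including EFA-only studies): low evidence of validity.
        return "low"
    criteria_met = sum([
        min_loading is not None and min_loading > 0.40,  # loadings above .40
        cfi is not None and cfi >= 0.95,
        tli is not None and tli >= 0.95,
        rmsea is not None and rmsea <= 0.06,
    ])  # assumption: an unreported statistic counts as not met
    if criteria_met == 4:
        return "high"
    if criteria_met >= 2:
        return "moderate"
    return "low"


if __name__ == "__main__":
    # Invented values for demonstration only, not drawn from any reviewed study.
    print(classify_internal_structure(True, min_loading=0.55,
                                      cfi=0.96, tli=0.95, rmsea=0.05))
    print(classify_internal_structure(True, min_loading=0.45,
                                      cfi=0.93, tli=0.91, rmsea=0.06))
    print(classify_internal_structure(False))
```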

To address Aim 2, to synthesize measurement constructs and uses (i.e., pertaining to extrapolation and use or interpretation stages of Kane’s (2006) model), we critically analyzed data extracted and reported in Table 2. Specifically, we characterized the construct of CRP as it was conceptualized, as well as how the scores of classroom CRP measures were proposed to be interpreted and used. Our analysis is reported in the Results section.

Author Reflexivity

We the authors are White researchers who conduct primarily quantitative research with a postpositivist epistemological lens at a predominantly White institution (PWI; a university where more than 50% of its student body is White). We are currently evaluating the effects of a coaching intervention designed to improve teachers’ CRP as part of a randomized controlled trial. We recognize that being White and conducting quantitative research in this space defined largely by interpretivist epistemological foundations of CRP-related constructs and theories necessarily means we bring gaps in understanding as well as biases to this work. We are aware of our positionality as White quantitative researchers in this space and, as such, are not seeking to alter or extend, but only to mirror and translate the rich tradition of interpretivist CRP scholarship to the field of education and prevention sciences, where it may be used to support formative professional development and intervention goals to improve teachers’ CRP. We bring our own experience as applied psychologists and developmental scientists committed to understanding the effects of equity initiatives and CRP for students and staff in schools. As previously mentioned, our team also has experience developing CRP measures, providing insight into the challenges of this work. Recognizing the strengths and limitations of our positionality in relation to the present research, we conducted the reporting bias and certainty assessment below to mitigate potential biases.

Reporting Bias and Certainty Assessment

Following the initial completion of the quality analysis, we utilized expert review via school-based CRP scholars. In addition to scholars within our own professional networks, we reached out to the 24 authors whose measures were included in the final synthesis and inquired as to whether they were aware of any other relevant CRP measures besides their own. Furthermore, reviewers of the manuscript as part of the peer-reviewed publication process noted two additional measures for consideration through the inclusion criteria. If these experts recommended that we investigate a measure that was not already included in the literature review, the team located the original study via research database and screened the measure through all eligibility criteria. Eight of the 12 recommended measures had already been captured in the original search and were screened out due to various eligibility criteria (e.g., non-U.S. sample, not peer-reviewed, not classroom or teaching). However, there were four recommended measures that were eligible to be screened through the inclusion criteria, two of which were ultimately included in the final review. Of the other two that were excluded, the first was screened out because we were unable to locate a peer-reviewed published article validating the measure. The unpublished manual of the Climate of Healthy Interactions for Learning and Development measure (Gilliam & Reyes, 2017) did not meet the peer-review criterion; nor was it specifically related to CRP despite its potential to capture the equitable treatment of children in early childhood settings. The second excluded measure, Reinholz and Shah’s (2018) Equity Quantified In Participation observation tool, was screened but not included. The measure is a tool that teachers can use formatively to assess student behaviors in their classrooms indicative of student engagement disaggregated by student identity characteristics. 
Although there is some assessment of teacher behavior (e.g., whether a student responded as a result of being called on by the teacher and whether the teacher elevated the students’ voice when they spoke), this is not substantively a measure of CRP and was therefore screened out in Eligibility Phase III.

The first addition was the Assessing Classroom Sociocultural Equity Scale (Curenton et al., 2019), as it was determined that this observation measure was missed in the initial search because of a search term error. The original search terms “sociocultural conscious*” OR “sociocultural competen*” missed the Curenton (2019) measure, referred to by the authors as a “sociocultural equity scale.” We reran the search adding the term “sociocultural equity” and screened all new records; the Curenton et al. (2019) study was the only record to meet inclusion criteria. The remaining screened records were incorporated into the PRISMA flowchart in Figure 1. The second measure added was the Multicultural Teacher Dispositions Scale (Jensen, Whiting, et al., 2018); it did not appear in our original search because it was missing one of the nine school-related terms (e.g., school, K–12, classroom) in the article title, abstract, and keywords (see Table 1). To our knowledge, this was the only instance of this type of exclusion.

Results

The search retrieved 4,080 records across six databases, of which 1,980 were duplicates. The reporting bias and certainty assessment added three new records, resulting in 2,103 records to be screened. Following the initial screening phase, 997 studies were sought for retrieval. However, 118 of these studies were not retrievable even after attempting to use various institutional library resources. During Eligibility Phase I, the methods sections of 879 studies were reviewed to ascertain whether they examined a specific classroom CRP measurement tool. The vast majority of Phase I studies (n = 698) did not examine a specific measure. Of the 181 studies that did examine specific measures, 52 were unrelated to CRP, and 36 reported on measures originally published elsewhere, so only the original study was included. Of the 93 remaining studies that included measures of CRP, approximately a third (n = 33) were excluded during Eligibility Phase II because the items were not accessible following a due diligence search. In Eligibility Phase III, the methods sections of 60 studies were reviewed to determine whether psychometric properties were reported and more than one demographic group was the focus. Ultimately, 27 studies were included in the review and assessed for evidence of validity and reliability: 8 observation measures (Table 3), 4 student-report measures (Table 4), and 15 teacher-report measures (Table 5).
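For readers who wish to trace the screening flow, the counts reported in this paragraph can be reconciled with simple arithmetic. The sketch below is illustrative only; the function and key names are hypothetical, and the number of Phase III exclusions is derived here by subtraction rather than stated in the text.

```python
# Illustrative reconciliation of the screening counts reported above.
# Function and key names are hypothetical.

def prisma_flow():
    retrieved, duplicates, added_by_expert_review = 4080, 1980, 3
    screened = retrieved - duplicates + added_by_expert_review

    sought_for_retrieval, not_retrievable = 997, 118
    phase1_reviewed = sought_for_retrieval - not_retrievable

    no_specific_measure = 698
    specific_measure = phase1_reviewed - no_specific_measure

    unrelated_to_crp, published_elsewhere = 52, 36
    crp_measure_studies = specific_measure - unrelated_to_crp - published_elsewhere

    items_not_accessible = 33
    phase3_reviewed = crp_measure_studies - items_not_accessible

    # Included measures by informant type (Tables 3, 4, and 5).
    included = 8 + 4 + 15
    # Derived by subtraction; this figure is not stated in the text.
    phase3_excluded = phase3_reviewed - included

    return {
        "screened": screened,
        "phase1_reviewed": phase1_reviewed,
        "specific_measure": specific_measure,
        "crp_measure_studies": crp_measure_studies,
        "phase3_reviewed": phase3_reviewed,
        "included": included,
        "phase3_excluded": phase3_excluded,
    }


if __name__ == "__main__":
    for stage, count in prisma_flow().items():
        print(f"{stage}: {count}")
```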

To gain a better understanding of the state of CRP measurement, our research was focused on addressing two primary research aims: to understand (a) whether CRP measurements produce reliable and valid data sources and (b) how scholars operationalize CRP and whether there is evidence supporting their intended use and interpretation. The results of our critical analysis are presented in Figure 2 (Aim 1) and Table 2 (Aim 2) with special attention to the content and quality of each measure.

Aim 1: Scoring and Generalization

Addressing our first research aim, we found that measures ranged widely in terms of reliability and internal structure, and very few measures (i.e., only two student-report measures) assessed MI. The Kumar (2019) student-report measure was the sole measure to meet the standards for validity and reliability described in the method and shown in Figure 2. See Table 2 for a summary of each measure’s response options, scoring procedures, validity, reliability, and MI reporting; and Figure 2 for a visual representation of the psychometric quality analysis.

Response Options/Test Score

Observer-report measures had the most variability in response options, including field notes (i.e., Powell [2016] measure), scored responses to a semistructured interview (i.e., Brabeck [2000] measure), estimates of percentage of students affected by the teacher practice (i.e., Curenton [2019] measure), duration of behavior observed (i.e., Saldana [1997] measure), and, most commonly, Likert scales via observer report. Scoring procedures were not consistently reported for observation measures; of those published, an average score was the common metric. Similarly, all four student-report measures were scored using an average score. All response options for students were via Likert scale, with the Marx (2012) measure including an additional open-ended question for students. Thirteen teacher-report measures utilized Likert-style response scales, and both Siwatu measures (2011, 2017) utilized a 0 to 100 confidence rating system. Scoring procedures were not reported for 6 of the 15 teacher-report measures.

Validity

The studies of the measures varied in terms of the indicator of validity reported (see Table 2 and Figure 2). Eighteen of the 27 measures utilized factor analytic methods to describe the variability among observed CRP variables. Whereas some studies conducted both an EFA and CFA (e.g., Dickson [2016] measure), others conducted CFAs only (e.g., Debnam [2015], Kumar [2019], and Byrd [2017] measures), or EFAs only (e.g., Marx [2012] measure). Due to the lack of evidence for internal structure, which cannot be proven using EFA techniques, those measures that only conducted EFA techniques, such as principal components factor analysis, were designated as having low evidence of validity.

Other types of validity (e.g., face and content validity) were established through qualitative reports of validity, such as expert review (e.g., Flores [2011] and Pohan [2001] measures) or pretesting (e.g., Dickson [2016] and Siwatu [2017] measures). There were also studies that used a mixed methods approach to collect qualitative data via focus groups prior to developing item content (e.g., Kumar et al., 2019), which adds to the content validity of those measures; however, post-item development pretesting or cognitive interviewing is needed to better support item interpretability. In some studies, measure developers tested convergent validity with other nonobservational measures of CRP (e.g., Brabeck [2000] and Debnam [2015] measures). Finally, in one study, which validated the Curenton (2019) measure, divergent validity was established with the Classroom Assessment Scoring System, a culturally nonspecific measure of classroom climate.

Reliability

The classroom observational measures of CRP varied widely in reliability. As reported via Cronbach’s alpha, reliability at the subdomain level ranged from relatively low reliability (e.g., Brabeck [2000] measure, α = .27–.74) to high reliability (e.g., Curenton [2019] measure, α = .74–.90). Cohen’s kappa statistics of interrater reliability were also highly variable; across the eight observation measures, kappas ranged from low agreement (e.g., Waddell [2014] measure, κ = .18–.36) to high agreement (e.g., Powell [2016] measure, κ = .84; McHugh, 2012). All four student-report measures reported reliability, and those metrics ranged from moderate to high reliability. Cronbach’s alphas ranged from .71 to .91 for subscales and .66 to .94 for full scales (overall metric). Due to the nature of student self-report, there was no interrater agreement or reliability reported. Test-retest reliability was also not reported for any of the student measures. Among the student-report measures, the Marx (2012) measure and the Dickson (2016) measure demonstrated the highest overall reliability with α = .94 and .90, respectively. Fourteen out of 15 teacher-report measures reported alpha values of .70 or greater, which is an acceptable level of internal consistency reliability, particularly for measures in development (Taber, 2018). Both alpha and theta statistics were reported in the study validating the Ponterotto (1998) measure; the theta coefficient is an index of internal consistency for a composite score (Ercan et al., 2007). Test-retest reliability coefficients were reported for the Stanley (1996) measure and Flores (2011) measure, though the test-retest assessment for the Stanley (1996) measure was done with a small subsample of 35 teachers. None of the teacher-report measure studies included students’ racial demographics.

Measurement Invariance

MI was not assessed in any of the observation or teacher-report measurement studies. Two of the student-report measures (i.e., the Kumar [2019] and Dickson [2016] measures) were assessed for MI. The Kumar (2019) measure demonstrated configural, metric, scalar, and first- and second-order latent factor intercept MI across four student racial-ethnic groups: White, Black/African American, Arab/Arab American, and Chaldean. The Dickson (2016) measure was assessed for configural, metric, and scalar MI using its second-order factor model. Dickson and colleagues (2016) reported that the sources of noninvariance in their second-order factor solution involved fewer than 20% of parameters, suggesting that the measurement of the CRP construct did not differ significantly between female and male students, Latine and non-Latine students, and immigrant and nonimmigrant students.

Aim 2: Extrapolation and Interpretation/Use

Units of Observation and Analysis

In assessing units of observation and units of analysis, some concordance was found. The purpose of seeking concordance between units of analysis and observation is to ensure that what is being measured aptly reflects the larger entity or construct being studied. Upon review, observer-report measures were aptly designed to capture objective, observable behaviors, such as student-teacher interactions (e.g., Curenton [2019] and Jensen, Grajeda, et al. [2018] measures), teacher behaviors (e.g., Powell [2016] and Waddell [2014] measures), and physical features of classrooms (e.g., Flores [2009, 2011] measures). However, the units of analysis among teacher self-report measures revealed a different purpose: to collect data on teachers’ dispositions (e.g., Jensen, Whiting, et al. [2018] and Thompson [2009] measures), self-efficacy (e.g., Guyton [2005] and Siwatu [2011, 2017] measures), and awareness (e.g., Cherng [2019] measure). Student-report measures were the least prevalent type of measure; of those identified, several were designed to capture a classroom’s overall climate or milieu (e.g., Byrd [2017], Kumar [2019], and Marx [2012] measures).

Construct and Domains

Across the 27 measures of CRP, fewer than a third explicitly used the phrase “culturally responsive” to describe the primary measurement construct (i.e., Dickson [2016], Flores [2009, 2011], Kumar [2019], Powell [2016], and Siwatu [2011, 2017] measures). There was noticeable terminological variation; each of the 24 authors used different terms to describe CRP-related constructs and domains (see Table 2). Temporal trends across the literature reflected the field’s evolving understanding of CRP as a complex, ongoing pedagogical approach rather than a finite competency. For example, the oldest measures included language regarding teachers’ comfort with (e.g., Stanley [1996] measure), sensitivity to (e.g., Ponterotto [1998] measure), and intolerance of (e.g., Brabeck [2000] measure) diversity in the classroom. In contrast, measures developed within the past 15 years tended to reflect advances in the field’s understanding of teacher dispositions, including their self-awareness and consciousness of how their beliefs, attitudes, and life experiences influence their world views and interactions with students and families (e.g., Jensen, Whiting, et al. [2018] and Thompson [2009] measures).

Interpretation and Use

To further examine the consequential validity (Messick, 1998) of the measures, we evaluated the supporting evidence for each measure’s intended use and proposed score interpretation (see Table 2; AERA et al., 2014; Kane, 2013). While nearly all 24 measure developers mentioned the intended use of their measure (i.e., formative, research, and/or summative), they were less clear about the proposed interpretation and application of scores. Over three quarters of the studies (i.e., Brabeck [2000], Byrd [2017], Cherng [2019], Curenton [2019], D’Andrea [2003], Debnam [2015], Dickson [2016], Flores [2009, 2011], Guyton [2005], Jensen, Grajeda, et al. [2018], Jensen, Whiting, et al. [2018], Kumar [2019], Marx [2012], McKoy [2013], Pohan [2001], Ponterotto [1998], Powell [2016], Scott [2001], Siwatu [2017], Thompson [2009], and Waddell [2014] measures) included some preliminary commentary about the measures’ possible applications, but very few measures provided any evidence to support these proposed real-life uses. Of the 27 measures, the validity of 5 measures (i.e., Dickson [2016], Flores [2009], Jensen, Whiting, et al. [2018], Powell [2016], and Waddell [2014] measures) was supported by partial evidence, and just 2 measures (i.e., Cherng [2019] and Ponterotto [1998] measures) included enough evidence to meet the benchmark for full support of consequential validity.

Discussion

In this systematic literature review, we took an argument-based approach to documenting evidence of validity (Kane, 2006, 2013), which involved mapping a four-stage process (Haertel, 2018) onto our two primary research aims. Taken together, the four stages of analysis provided important insights regarding the current state of the science in CRP measurement.

Scoring and Generalization

Overall, the extant CRP measurement literature underreports on strategies utilized to initially develop the measure, as well as its intended applications; greater transparency in future reporting is needed. In addition, more consistent reporting of measure metrics (i.e., scores, reliability, validity, and MI), as well as clear guidance on the proposed interpretation of scores, would support the field’s efforts to translate CRP theory into quantifiable practice. In this light, two measures stand out among others, both student-report. The first was a measure by Kumar and colleagues (2019), which proved, through a rigorous mixed methods approach, to be the most psychometrically sound against stringent classical test theory criteria. By taking a phased, exploratory approach to CRP research, Kumar and colleagues produced a measure that met traditional reliability and validity criteria. Though classical test theory criteria are just one element of an overall interpretation and use argument for a measure, the multimethod, asset-based approach that Kumar and colleagues adopted was exemplary in that it produced empirically valid results and centered student voice via focus group interviews. Although judgements about validity metrics are subjective (e.g., convergent validity vs. divergent validity vs. face validity vs. content validity), we believe that pretesting and cognitive interviewing are important strategies that can support QuantCrit efforts to humanize minoritized communities in CRP measurement research (Garcia et al., 2018). By involving key informants, particularly students themselves, in CRP operationalization and measure development, the field can center student voice to increase the quality and appropriateness of future measures. It should be noted, however, that the intended use of this student-report measure was not explicitly stated, leaving the question of consequential validity unanswered.

The second student-report measure with strong evidence of reliability and validity was the Dickson (2016) measure, which was developed using factor analyses, measure pretesting, and, like the measure by Kumar and colleagues (2019), an examination of MI. Synthesized under the Generalization stage of Table 2, evidence of MI was assessed and found only in these two studies of student-report measures (i.e., Dickson [2016] and Kumar [2019] measures). MI testing is critical because it helps to establish whether the measure works the same in different samples or across settings, which is especially important in CRP measurement research because CRP is highly sensitive to context. Overall, the sampling for many studies included in this review was relatively homogeneous, and the rigor of sampling procedures, including whether invariance procedures were reported, was inconsistent across the final included studies, suggesting a need for greater attention to MI in future CRP measurement research.

Aligned with our focus on assessing argument-based validity, it is important for readers to understand that the test administration and scoring procedures extracted from the 27 original measurement studies may not reflect the authors’ recommendations for use by others in nonresearch settings. For example, in research, scores are often transformed for statistical analyses and may be of little to no use to school-based practitioners. In our review, we found that a measure’s readiness for use was rarely stated explicitly, but it should be: If measures are ready for public use, clear guidelines on test administration and scoring procedures should be provided; otherwise, studies should state that the measures are not yet ready for public use. Similarly, if a measure is only intended to be used for self-reflection and personal growth (e.g., Flores [2009, 2011] and Waddell [2014] measures), researchers should advise against using the tool for summative evaluation or research purposes. Once the field begins to consistently report intended use arguments, we will be better positioned to discuss appropriate levels of reliability and validity in the context of measure use.

Extrapolation

Our comparative analysis revealed that the field is referencing CRP in very different ways and, in turn, making inferences based on very different indicators. Remarkably, all the studies included in our synthesis (apart from those by the same first author) used different terms to describe their CRP-related constructs. This level of variation in terminology is more common in educational research when describing topics related to inequities in school (see Berkowitz et al., 2017). We reviewed the content of the 27 CRP measures for commonalities across the ways in which CRP was operationalized in the quantitative literature. Below we note select constructs that emerged frequently in the extant CRP measurement literature, though these themes do not encompass all constructs reported in Table 2; nor are they intended to comprehensively represent all CRP concepts in their full depth and complexity.

Making sociocultural connections in the classroom during both instructional and noninstructional time was a core tenet across student-report (e.g., Byrd [2017] and Dickson [2016] measures) and observer-report measures (e.g., Saldana [1997] and Powell [2016] measures), with noticeably less focus among teacher-report measures (except for Siwatu’s [2011, 2017] measures). The presence of this theme across extant CRP measures suggests that the measurement field has placed value on applying CRP principles to curricular content (i.e., what is taught), which is consistent with school-based efforts to recenter the narratives of historically marginalized groups through revised curricula (e.g., Griffin & James, 2018; Utt, 2018). A focus on instruction and curriculum may be indicative of a greater trend that prioritizes measuring teacher practice over teacher disposition, given the field’s increased understanding of unconscious biases and other threats to data validity.

The topic of increased intergroup contact (Tropp & Saxena, 2018) was evident in half of the observation measures (i.e., Curenton [2019], Jensen, Grajeda, et al. [2018], Flores [2009], and Saldana [1997] measures), where observers were instructed to capture intergroup contact in regard to race, gender, or another phenotypic trait between students within the observed classroom. Students were also asked about intergroup contact in schools, but with less of a focus on friendship-making and more of a focus on students’ feelings of belonging (i.e., Marx [2012] measure) and teachers’ role in conflict resolution between students of different racial-ethnic backgrounds (i.e., Kumar [2019] measure). This line of questioning in the student-reports may have been driven by developmental perspectives given these measures are designed for administration to secondary-aged students.

Interestingly, intergroup contact also was discovered in teacher-report measures with more dated measures asking teachers about their own formative experiences with people from different racial and ethnic backgrounds, including cross-racial childhood friendships and exposure via travel (e.g., Guyton [2005] and Sparks [1996] measures). In contrast, the teacher-report measures developed within the past decade asked about intergroup contact in reference to teachers’ own confidence in their ability to connect and foster relationships between diverse students (i.e., Flores [2011], Siwatu [2017] measures). The conceptual differences within this theme raise interesting questions about the theory of change behind CRP’s effectiveness, including which of the constructs extrapolated from our review are reflective of CRP itself versus precipitators (e.g., teachers’ childhood experiences) or outcomes (e.g., students’ feelings of belonging) of CRP.

The critical appraisal of power, including hegemonic whiteness (Tevis et al., 2022), within CRP is a defining feature that sets the construct apart from other types of differentiated instruction and was captured in the Byrd (2017) and Curenton (2019) measures. Teachers’ critical consciousness is a two-part process of critical reflection and critical action (Jemal, 2017). As student-report and observation measures, respectively, the Byrd (2017) and Curenton (2019) measures represent the complexity of CRP measurement and the need for multiple informants to weigh in on teachers’ critical reflection (e.g., internal beliefs) and critical action (e.g., behaviors, interactions, instructional strategies). We refer to this CRP theme as critical consciousness; however, it is also related to antiracism, which we discuss further in the future directions section due to the notable absence of mentions of race across the extant CRP measurement literature.

Numerous teacher-report measures, spanning nearly three decades of research, attempted to capture whether teachers approached student diversity with humility (e.g., Cherng [2019], McKoy [2013], and Thompson [2009] measures). This theme also appeared in observational CRP measures (e.g., Powell [2016] and Jensen, Grajeda, et al. [2018] measures), though, unsurprisingly, student-report measures did not focus on this area. In another form of redressing power dynamics in the classroom, and the action-based complement to a culturally humble disposition, support for student agency (e.g., distributing classroom roles and instructional leadership opportunities) was evident in the Jensen, Grajeda, et al. (2018) observational measure.

Across measures, we observed that, similar to general educational measurement tools, extant CRP measures are designed to distinguish who teachers are and their worldviews (e.g., attitudes, beliefs, dispositions) from what they do (e.g., practice, interactions, instruction). However, the assumption that changing teachers’ mindsets and beliefs will influence their behaviors and CRP enactment has been theorized (e.g., Warren, 2018) but not yet demonstrated in the extant literature, in part due to the lack of validated CRP measures to capture intervention effects. In addition, some student-report measures may be conflating CRP with students’ perceptions of the learning environment or students’ own cultural competence (i.e., outcomes of CRP). Overall, when considered in this light, the reviewed measures may be confounding the precursors, instantiation, and outcomes of CRP. Future research should seek to clarify the causal and temporal associations between various CRP-related constructs.

Use and Interpretation

While the traditional validation of CRP measures through statistical metrics of reliability (e.g., Cronbach’s alpha) and validity (e.g., CFA) is helpful in establishing some criteria for psychometric soundness, often even state-of-the-art measures of teaching in general fail to meet strict classical test theory psychometric reliability and validity criteria. For example, the Gates Foundation spent over $500 million on the Measures of Effective Teaching study, and their measures of general teaching quality only reached low to moderate reliability and low evidence of validity based on the rigorous standards used in our quality analysis (Kane & Staiger, 2012; Kane et al., 2014; Stecher et al., 2018). To be clear, the failure to meet validity and reliability standards is not unique to CRP measures; nearly all K–12 teaching measures share this weakness.

In addition, careful consideration of the evidence in support of the measures’ intended use and score interpretation is an important and often overlooked step in assessing the validity of a measure (Kane, 2013). When reviewing the results of this stage in our analyses, we found that many scholars envisioned their CRP measures as potentially serving multiple purposes (e.g., to measure intervention effectiveness, preservice teacher performance) with aspirations for applications in practice and/or research. The potential for such applications should be tested through rigorous outcome evaluation or effectiveness trials in future research. High-inference hypotheses have the potential to be societally impactful, but substantiating these findings comes with a high burden of evidence (Bell, 2012; Kane, 2013). We recommend that researchers (re)state the measure’s intended use at each stage of development and provide the relevant information on reliability and validity for that use. We also recommend scholars take a focused approach, as was done by Cherng and Davis (2019), who identified their measure as appropriate for research use (e.g., to contribute to the knowledge base) at the time of publication. In fact, the measures that reported narrower uses tended to include at least partial evidence of validity in the study findings. We recommend a mixed-methods approach to document how, or if, a given CRP measure is being used and interpreted in practice. Both qualitative data (e.g., focus groups, participant quotes, teacher testimonials) and quantitative data (e.g., improved grades, fewer office discipline referrals) would provide users with evidence of a measure’s practical applicability and effect on theorized CRP-related outcomes.

Future Directions

Taken together, our findings indicated that there were major differences in CRP-related terminology and measurement constructs across the studies reviewed. Promisingly, publications continue to emerge introducing new CRP measures to the field. We are encouraged, for example, by Jensen and colleagues’ (2023) update to the Jensen, Whiting, et al. (2018) teacher-report measure, including improvements in their reporting of validity evidence. To better understand the processes that underlie CRP-driven changes in students, teachers, and classrooms, it is necessary to produce empirical evidence over multiple years, across different educational settings, and with diverse student and teacher populations. The field would benefit from future research delineating a clear theory of change, including which of these constructs encompasses the act of CRP itself versus what may instead be the outcomes of CRP. Below, we consider several additional recommendations for future research to deepen our understanding and measurement of CRP.

Absence of Race, Power, and Identity Foci

Many have postulated that CRP-related constructs may precede more advanced forms of critical action, such as demonstration of antiracist behavior (e.g., Cherng & Davis, 2019; Curenton et al., 2022); however, in the absence of a theoretically sound process model, the field remains limited in its ability to accurately propose and test hypothesized ways to increase the use of CRP in U.S. PK–12 classrooms. For example, the field would benefit from future research on how teachers’ own racial-ethnic identity development, much of which may be formed in childhood and adolescence (Umaña-Taylor & Rivas-Drake, 2021), affects their self-assessment of CRP. Moreover, only a handful of measures sought to explicitly capture information about teachers’ racial biases and the impacts of institutional racism in the classroom. Whereas items about race have been in circulation from early measures (e.g., “The discussion of racial and ethnic subjects is inappropriate at the elementary level,” a reverse-scored item from the Scott [2001] measure), very few measures center students of color and the injustices they may face in the classroom. The exception to this is the Curenton (2019) measure, which is specifically focused on the experience of “racially minoritized learners.”

To some, in the current sociopolitical climate, it may seem counterintuitive to encourage measures of CRP that explicitly reference racial identity/socialization, racial biases, and systemic racialized inequality. However, explicit inclusion of race in measures of CRP may provide meaningful data to move the conversation forward regarding critical race theory–related topics in schools. Specifically, psychometrically sound measurement has the potential to produce data that is reflective of the possible benefits of CRP for students of color and for White students. Such empirical support has the long-term potential to debunk the disinformation that “critical race theory” harms White students’ psychological safety in school. In future work, the field would benefit from studies that approach CRP measurement development through a critical, postpositivist lens.

Need to Triangulate Multi-Informants

Interestingly, woven throughout these themes is a mix of observable teacher behaviors, internalized teacher dispositions, and teachers’ beliefs about their own efficacy. Unsurprisingly, the results revealed that teacher self-report surveys typically ask about a teacher’s beliefs, attitudes, awareness, and self-efficacy related to CRP. However, within teacher disposition measures, there are also some items that ask teachers to report on their actions (e.g., “I frequently invite extended family members to parent teacher conferences,” an item from Ponterotto’s [1998] measure). This raises an interesting question: What would the family members of this teacher’s students report? How about the students themselves? Or an outside observer (e.g., family social worker) or school record (e.g., family-school communication log)? Although there are certainly logistical barriers to obtaining multiformat reports, there have been calls for a multi-informant approach from professional organizations (e.g., AERA, 2014) as well as from scholars who recognize that their single measure provides just one perspective (e.g., Cherng et al., 2019; Debnam et al., 2015; Guyton & Wesche, 2005). Some of the studies included in this review attempt to utilize a multi-informant approach but are working with teacher- and family-report measures that are also still in development (e.g., Powell et al., 2016).

Multi-informant approaches also allow for validity evidence to be provided at multiple levels of the individual, classroom, and school context (e.g., Jensen, Grajeda, et al., 2018). If employing (or developing) a multiformat test battery, it is important to consider whether the measures capture the construct of CRP, other related outcomes, or both (e.g., an observational measure of CRP and a student-report on school belonging). The conflation of practice versus outcome appears particularly prevalent when considering measures across reporters, suggesting that teachers may be well positioned to assess CRP precursors, observers may be best positioned to assess CRP instantiation in the classroom, and students may be optimally positioned to assess proximal outcomes of CRP.

Limitations

When developing our search strategy, we included CRP-related terminology from scholarly and practice-based sources and vetted these terms as a research team. Nonetheless, although the terms were sourced within the past year, we likely excluded studies due to missed search terms. For future reviews, we encourage others to consider the terms ethnic studies, asset-based, and Latin*. The rapidly evolving terminology related to CRP between 2020 and 2023, before which CRP nomenclature was already quite expansive, imposed challenges that we worked to address in this study but could not fully eliminate.