Abstract

The purposes of this study included conducting a meta-analysis and reviewing the study reporting quality of math interventions implemented in informal learning environments (e.g., the home) by children’s caregivers. This meta-analysis included 25 preschool to third-grade math interventions with 83 effect sizes that yielded a statistically significant summary effect (g = 0.26, 95% CI [0.07, 0.45]) on children’s math achievement. Significant moderators of the treatment effect included the intensity of caregiver training and type of outcome measure. There were larger average effects for interventions with caregiver training that included follow-up support and for outcomes that were comprehensive early numeracy measures. Studies met 58.0% of reporting quality indicators, and analyses revealed that quality of reporting has improved in recent years. The results of this study offer several recommendations for researchers and practitioners, particularly given the growing evidence base of math interventions conducted in informal learning environments.

Mastering math concepts early has long-term implications related to children’s academic and postsecondary success. Early math development is associated with later math achievement in school (Geary et al., 2018; Kiss et al., 2019), as well as various other early-developing skills, such as literacy (Duncan et al., 2007; Purpura et al., 2019). Perhaps more noteworthy, children who encounter difficulties with math early are likely to continue experiencing difficulties in later years (Chu et al., 2019; Morgan et al., 2016). Math deficits can even adversely affect students into adulthood. For example, postsecondary school enrollment and completion rates, as well as employment rates, are related to children’s math proficiency (Davis-Kean et al., 2021; Gaertner et al., 2014; Lee, 2012). Yet, on the 2020 National Assessment of Educational Progress (NAEP), U.S. scores were lower than in 2012 for 9-year-olds (10th, 25th percentiles) and 13-year-olds (10th, 25th, 50th percentiles) (NCES, 2020). These gaps in math knowledge are not limited to school-aged children and, in fact, exist prior to school entry (Burchinal et al., 2011; Chu et al., 2019).

One way to improve math achievement is to extend and enhance children’s experiences with math outside formal school settings. Developing early math knowledge and skills involves complex processes that begin prior to school entry (Sarama & Clements, 2009). Children, birth to 8 years old, spend more time in informal learning environments (e.g., the home, after-school programs, libraries, everyday experiences such as grocery shopping) than formal learning environments, such as school classrooms (Hofferth & Sandberg, 2001). For all school-aged children, a larger portion of children’s time is still spent in informal learning environments compared to the 33 hours per week spent in school (NCES, 2007–2008). This positions adults in caregiver roles (e.g., parents, grandparents, childcare providers) as critical contributors to children’s math development in early years of learning (Ginsburg et al., 2012). Thus, effective caregiver-implemented math interventions rooted in empirically validated strategies are needed. Throughout this study, we use the term “intervention” to refer to any change in the typical routine of the informal learning environment that caregivers make as a result of receiving information or recommendations in a research study.

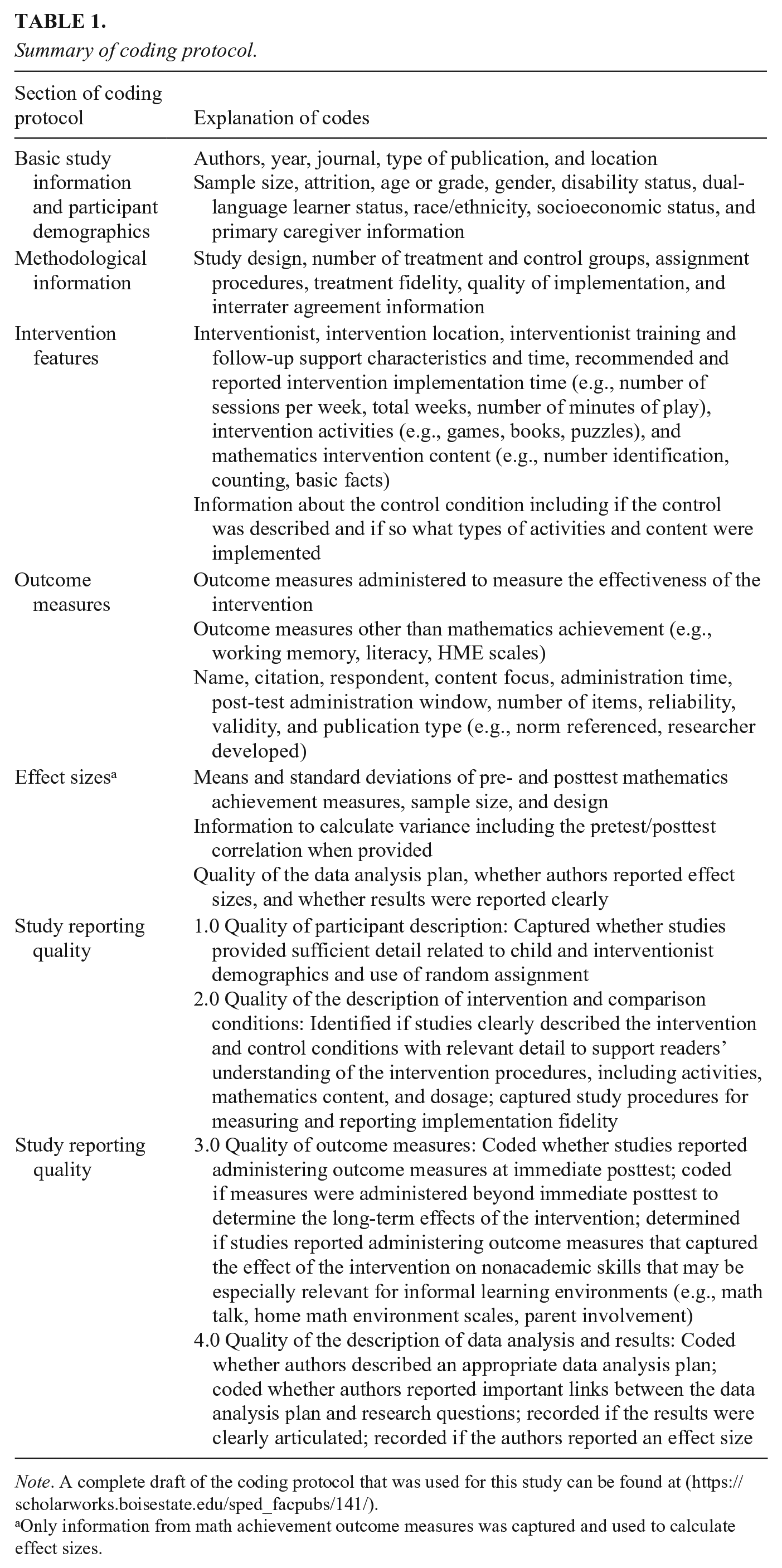

Recently, there has been an upward trend in empirical research that investigates the effectiveness of math interventions in informal learning environments (e.g., Berkowitz et al., 2015; Leyva et al., 2018; Niklas et al., 2016; Purpura et al., 2021). Given the long-term benefits of early math learning, a growing need exists to understand the characteristics of effective math interventions in informal learning environments and their potential benefits for young learners. The current meta-analysis included 25 interventions focused on early math content from preschool (e.g., counting, comparison) through third grade (e.g., basic fact fluency, comparing fractions) that were implemented by caregivers in informal learning environments. This is an emerging evidence base, so an essential step to improve research conducted with young children and caregivers is to provide recommendations for improving study reporting quality. To be clear, reporting quality is an indication of whether important features of a study’s design and analysis are clear to the reader; reporting quality is not an evaluation of the validity and accuracy of the findings based on an assessment of the design and analysis choices. It is entirely possible that the effect found in a study with low reporting quality is a valid and accurate estimate of an intervention effect. In the current study, we define indicators for study reporting quality as the recommended variables (e.g., attrition, participant demographics, intervention characteristics) that authors should present in their research reports (Applebaum et al., 2018). Thus, in addition to a meta-analysis, we also investigated the overall quality of the research report (i.e., we examined the degree to which authors reported essential and desirable information in their research reports, referred to throughout as “study reporting quality”). For a complete list of all study reporting quality indicators that we included in this meta-analysis, see Table 1.

Table 1. Summary of coding protocol.

Note. A complete draft of the coding protocol that was used for this study can be found at (https://scholarworks.boisestate.edu/sped_facpubs/141/).

Only information from math achievement outcome measures was captured and used to calculate effect sizes.

Importance of Early Math Development

Early math skills, such as counting and comparison, are building blocks to learning more complex skills, such as addition and subtraction. Children who display difficulty with these foundational skills may continue to have difficulty in other areas of math, such as computation and fraction skills (e.g., Geary et al., 2012). Kiss and colleagues (2019) found that early math skills (e.g., decomposing, numeral identification, number sequence) in first grade were significant predictors of algebra, data analysis, and geometry skills in third grade. Claessens and Engel (2013) found children’s proficiency with identifying numerals, counting, and recognizing patterns were the most consistent and significant predictors of later reading and math achievement across elementary school. Similarly, simple retrieval of basic facts and decomposing in first grade accurately predicted performance with word problems, whole and rational number computation, and fraction comparison skills in seventh grade (Geary et al., 2013). The results from longitudinal studies suggest that children who do not develop early math skills at school entry are likely to have difficulty with achievement in math in later grades (Nelson & Powell, 2018).

Although many underlying factors impact math achievement, children from low socioeconomic status (SES) backgrounds may have an increased risk of low math achievement. Fewer children who were eligible for free or reduced-price lunch (FRL) scored proficient or advanced (26%) in math compared to children who were not eligible for FRL (58%) on the 2019 NAEP (NCES, 2019). Morgan et al. (2009) found that children from families of lower SES had slower growth in math between kindergarten and fifth grade compared to children from higher SES families. Students identified as dual-language learners (DLLs) may also be at increased risk of low math performance. NAEP data show an achievement gap between DLLs and non-DLLs in math over the last two decades. Only 16% of fourth-grade DLLs scored proficient, whereas 44% of non-DLLs scored proficient (NCES, 2019). Similarly, fourth- and eighth-grade students with disabilities have lagged behind peers in math for decades (NCES, 2019). As these trends in achievement have persisted, researchers are exploring factors that may improve math outcomes for all children. For example, Galindo and Sonnenschein (2015) found that supportive home math environments (HME) can significantly decrease achievement differences related to SES. Although many factors likely contribute to achievement gaps, children considered at-risk academically may especially benefit from supplemental math interventions.

The Role of Informal Learning Environments

Informal learning, or less structured learning, can take place anywhere. Learning that happens in informal learning environments, however, takes place in spaces such as homes, sports facilities, and exhibits at libraries and museums; this learning often involves engaging in everyday experiences (e.g., cooking; Gerber et al., 2001; Pattison et al., 2017). In this study, we prioritized children’s learning in informal learning environments, whether the learning itself was formal (i.e., facilitated or designed learning, such as a math-themed museum exhibit) or informal (i.e., unstructured learning, such as free play with blocks).

Recent initiatives also recognize the importance of informal learning environments. For example, the Mathematics Learning in Early Childhood: Paths Toward Excellence and Equity report (NRC, 2009) recommended that educational partnerships should form between families and community programs to promote math learning. In 2002, the National Association for the Education of Young Children and the National Council of Teachers of Mathematics released a joint position statement with a similar emphasis. This recommendation is not surprising, because most young children come to school with some knowledge of letters and numbers. Likely, the knowledge results from caregivers providing children with learning opportunities in informal learning environments (Passolunghi & Lanfranchi, 2012; Susperreguy & Davis-Kean, 2016). In other words, math learning can happen prior to school entry. A meta-analysis of 64 studies focused on the relation between the HME and children’s math achievement reported a statistically significant relationship (r = .13, p < .001; Daucourt et al., 2021). The positive relation between the HME and children’s math achievement, together with the amount of time children spend in informal learning environments, highlights the importance of identifying effective math interventions caregivers can implement.

Math Learning in Informal Learning Environments

The body of research examining the role of informal learning environments and student achievement is growing in the United States and worldwide (e.g., Manolitsis et al., 2013; Napoli & Purpura, 2018; Sheldon & Epstein, 2005). LeFevre et al. (2009) found that HME activities from kindergarten to second grade accounted for a significant proportion of variance in math knowledge and math fluency skills, even after controlling for other variables including vocabulary, working memory, and the home literacy environment. When examining the relationship between home literacy and math practices and children’s academic achievement, the HME was predictive of numeracy and vocabulary outcomes (Napoli & Purpura, 2018). In a longitudinal study, Manolitsis et al. (2013) examined the role of home literacy and numeracy experiences on reading and math outcomes for children in kindergarten and first grade; parent literacy teaching (e.g., letter names) predicted early math achievement as strongly as parent numeracy teaching. Sheldon and Epstein (2005) examined eight family and community involvement activities across elementary and high school (e.g., parent workshops on math skills, parent conferences to discuss children’s progress in math). Only one type of involvement (home learning activities) consistently and significantly related to children’s higher math achievement.

Caregivers in informal learning environments, then, play a critical role in children’s math development (Ginsburg et al., 2012). Interventions in informal learning environments are often led by adults who have no prior experience with teaching or math, making treatment fidelity and interventionist training particularly important. Accurate interpretation of treatment effects and recommendations related to generalization depend on adherence to the quantity and quality of intervention activities (Capin et al., 2018). A vast body of research shows that when given the opportunity to play board games (Laski & Siegler, 2007; Siegler & Ramani, 2009), read books focused on number concepts (Hendrix et al., 2019; Purpura et al., 2017), and engage with math education apps (Griffith et al., 2019; Zippert et al., 2019), children in both informal and formal learning environments increase their math knowledge. This is particularly true in the preschool years, which is encouraging because early math learning promotes greater achievement more broadly, such as in literacy and science (Duncan et al., 2007).

Previous Reviews of Math Intervention Studies

Researchers have conducted syntheses to identify effective components of math interventions in formal learning environments, such as classroom settings. For example, Nelson and McMaster (2019b) focused their meta-analysis on 34 studies of early numeracy interventions for children in preschool through first grade, reporting a large (Kraft, 2020) summary effect (g = 0.64). Similarly, Charitaki et al. (2021) evaluated numeracy interventions for preschool to second-grade students and found a similarly large effect size (g = 0.61); the authors reported larger treatment effects for short-term interventions including one to nine sessions. Related to students with exceptional learning needs, Kroesbergen and Van Luit (2003) investigated 58 math intervention studies for elementary children with low math achievement; authors reported a large summary effect (d = .92) for studies that focused on early math skills (i.e., counting, number sense). Although the results of these syntheses support the use of interventions to improve math performance, only studies conducted in classrooms with teachers or researchers as interventionists were examined.

Researchers have conducted few syntheses of interventions with caregivers acting as interventionists (e.g., Fishel & Ramirez, 2005; Van Steensel et al., 2011). Van Steensel et al. (2011) conducted a meta-analysis of 30 studies that examined the effectiveness of home literacy programs with preschool and primary school children. Results indicated a statistically significant mean effect (d = 0.18). Fishel and Ramirez (2005) reviewed 24 studies that examined the effectiveness of parent tutoring on kindergarten through 12th-grade children’s academic performance, including reading, math, spelling, and homework completion. Although this systematic review included math interventions, the authors did not include participants in the preschool age band or conduct a meta-analysis, and their literature search ceased in 2003. These analyses offer meaningful insights for young learners; however, to our knowledge, there is not a meta-analysis focused on math interventions implemented in informal learning environments.

Study Reporting Quality

In addition to conducting a meta-analysis, we also reviewed study reporting quality. One criticism of meta-analysis is that the included studies may be of low quality, and the flaws of those studies can carry over into the meta-analysis (Borenstein et al., 2009). Although the quality of study reporting is not necessarily a precise measure of the quality of a study’s findings, studies with high quality of reporting can reveal limitations to the generalizability of findings, and low quality of reporting may raise concerns regarding the validity of intervention effects. The evidence base for math interventions in informal learning environments is still emerging, so now is also an appropriate time to conduct a comprehensive evaluation of study reporting quality. With any evidence base, researchers and practitioners benefit from understanding the current limitations of the field. Evaluating study reporting quality can support researchers in identifying current limitations of how information about interventions (e.g., content, implementation) and the effectiveness of interventions is reported. The results of a quality review provide essential recommendations to enhance study reporting, thereby allowing researchers and practitioners to determine if interventions are representative, generalizable, and transferrable to the context in which they support children and families (Cook & Cook, 2017).

Previous researchers have examined study quality in school-based math intervention meta-analyses. The studies reported varying results, with average study reporting quality ratings (scale of 0 to 1) ranging from, for example, 0.77 for geometry interventions for 4th- to 12th-grade students with learning disabilities (Liu et al., 2019) to 0.83 for tier 2 math interventions for students with math difficulty (Jitendra et al., 2021) to 0.87 for proportional reasoning interventions for students with math difficulty (Nelson, Hunt, et al., 2022). Wide ranges in study reporting quality across math interventions within the same meta-analysis have been documented (e.g., Stevens et al., 2018). Researchers have not conducted a review of study reporting quality of math interventions conducted in informal learning environments.

Purpose and Research Questions

This project addresses an important gap in the literature—features of effective methods that caregivers use to promote children’s math understanding. The research questions were:

What is the average treatment effect, and how variable are the effects of math interventions implemented in informal learning environments?

What variables (e.g., intervention characteristics, outcome measure content, intervention activities) predict effectiveness for the total sample of studies?

What is the reporting quality for studies included in the meta-analysis, and what trends exist across quality indicator variables?

Method

The method section provides a detailed overview of the following aspects of this meta-analysis: (a) inclusion criteria, (b) procedures we used to conduct the literature search, (c) training procedures for the abstract screening and full-text review, (d) the results of the literature search procedures, (e) article coding procedures, (f) the training for and reliability of the coding procedures, and (g) the data analysis plan (see https://scholarworks.boisestate.edu/sped_facpubs/147/ for the full review protocol).

Eligibility for Inclusion in the Meta-Analysis

We applied the following a priori inclusion and exclusion criteria throughout the literature search process to identify studies for the meta-analysis. Studies:

Investigated math intervention effects. We defined intervention as a change to the typical routine such as researcher recommendations to focus on specific math knowledge and skills in the home or engage in an activity for a specified amount of time. We included intervention programs that primarily focused on math, as opposed to early childhood or elementary holistic curricula programs (Noble et al., 2012).

Were conducted in informal learning environments, defined as home, out-of-school programs held at community spaces such as libraries and museums, and everyday experiences (Gerber et al., 2001). We excluded studies conducted only in formal learning settings, including classroom interventions and studies at university research labs or lab schools (Brown & Alibali, 2018). However, if a study included both formal and informal learning environment components, the informal learning environment component was included if the effects of that intervention could be isolated (Starkey et al., 2004).

Administered at least one math achievement outcome measure to determine intervention effectiveness. Studies that only administered measures of “math talk” were excluded (Vandermaas-Peeler et al., 2016), as were studies that only administered cognitive measures that are not math achievement measures (e.g., executive functioning, spontaneous focus on numerosity [SFON]; Braham et al., 2018). Math talk and cognitive measures were excluded from this meta-analysis because these measures represent distinctly different constructs that require separate analysis (Pigott & Polanin, 2020).

Had an adult in a caregiver role (e.g., parent, grandparent, older sibling, staff at a library or museum) as the primary facilitator of the child-directed components of the math intervention. Researchers could serve as the implementer of the parent-directed component of the intervention (i.e., leading the caregiver workshop on math activities that caregivers then implemented at home with their child).

Included participants in settings that represent early childhood education, from 3 years, 0 months (average age at the start of the intervention) to the end of third grade (average age less than 9 years, 0 months old). Studies with younger or older children were excluded (Lynch & Kim, 2017) unless data were disaggregated for the target age.

Used an experimental or quasi-experimental group design. Studies that used single-case design or qualitative methods were excluded (Linder & Emerson, 2019).

Provided appropriate information to calculate effect sizes (ESs; e.g., means, SDs, F statistics, t-tests). Studies that did not report the information needed to calculate ESs, and for which that information could not be obtained directly from authors, were excluded (e.g., Pasnik et al., 2015).

Presented results in English.
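To illustrate why the criteria above require means and SDs (or equivalent test statistics), the following is a minimal sketch of how a standardized mean difference with a small-sample correction (Hedges' g) can be computed from posttest summary statistics. The function name, argument names, and example values are hypothetical, and the formulas are the standard textbook ones; this section does not specify the exact computations used in this meta-analysis.

```python
import math

def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, var_g) for a treatment vs. comparison group contrast.

    Assumes independent groups and posttest means/SDs; this is an
    illustrative sketch, not the study's documented procedure.
    """
    df = n_t + n_c - 2
    # Pooled standard deviation across the two groups
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    # Cohen's d: raw mean difference in pooled-SD units
    d = (mean_t - mean_c) / pooled_sd
    # Small-sample correction factor J
    j = 1 - 3 / (4 * df - 1)
    g = j * d
    # Approximate sampling variance of d, then of g
    var_d = (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))
    var_g = j**2 * var_d
    return g, var_g

# Hypothetical example values (not taken from any included study)
g, var_g = hedges_g(mean_t=12.0, sd_t=2.0, n_t=20, mean_c=10.0, sd_c=2.0, n_c=20)
ci_low = g - 1.96 * math.sqrt(var_g)
ci_high = g + 1.96 * math.sqrt(var_g)
```

A study that reports none of these quantities (and no convertible F or t statistic) gives no way to place its result on this common scale, which is the practical reason for the exclusion.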

Literature Search Steps

We conducted a comprehensive literature search process to identify studies for inclusion; we did not restrict our search by publication type or year. We captured relevant studies with (a) electronic databases, (b) journal tables of contents, (c) communication with experts, (d) organizational email listservs, (e) preprints, (f) reference lists, and (g) forward citation search.

Electronic Databases Search

First, we searched electronic databases including: Academic Search Premier, Education Research Complete, ERIC, ProQuest Dissertations & Theses, PsycARTICLES, and PsycINFO with the following Boolean search string: (intervention OR activity OR training OR tutoring OR “book reading” OR tablet OR “e-book” OR “math* play” OR “informal learning”) AND (math* OR numeracy OR “number sense” OR “math talk” OR geometry OR algebra) AND (parent* OR childcare OR caregiver OR daycare OR “day care” OR day-care OR “after school” OR museum OR “home tutoring” OR home-based OR “home learning environment” OR “home math* environment” OR “home numeracy”) AND (preschool* OR prekindergarten OR “early childhood” OR kindergarten OR “first grade” OR “second grade” OR “third grade” OR elementary OR “primary school” OR “head start” OR “nursery school”).
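The search string above follows a common pattern: terms within a concept are joined with OR, and the concepts are joined with AND. As a minimal sketch of that structure, the snippet below assembles a query from term lists. The helper names are hypothetical, the term lists are abbreviated subsets of the full string, and the quoting rule (quote only multi-word phrases) is a simplifying assumption that does not reproduce every quoting choice in the original string.

```python
def or_group(terms):
    """Join terms with OR, quoting multi-word phrases, wrapped in parentheses."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"

def build_query(concept_groups):
    """Join each OR-group with AND so every concept must be matched."""
    return " AND ".join(or_group(group) for group in concept_groups)

# Abbreviated, illustrative subsets of the four concept groups
concept_groups = [
    ["intervention", "activity", "training", "tutoring", "book reading"],
    ["math*", "numeracy", "number sense"],
    ["parent*", "caregiver", "home-based", "home numeracy"],
    ["preschool*", "kindergarten", "first grade", "primary school"],
]

query = build_query(concept_groups)
print(query)
```

Keeping the terms in lists like this makes it easier to document and rerun the same search across databases.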

Journal Table of Contents

Second, we searched the electronic tables of contents for 10 relevant journals (using the same Boolean search string as the electronic databases): Curator: The Museum Journal; Early Child Development and Care; Early Education and Development; Journal of Cognition and Development; Journal of Research in Childhood Education; Journal of Research on Educational Effectiveness; International Journal of Early Years Education; International Journal of Science Education; Mind, Brain, and Education; and Visitor Studies. We also conducted a hand search of five journals (publication years 2016 to 2021) that did not allow using a Boolean search string: Children and Libraries; Cognitive Development; Early Childhood Research Quarterly; Journal of Experimental Child Psychology; and Journal for Research in Mathematics Education.

Email Communication with Experts in the Field

Third, we emailed 20 researchers who regularly conduct research related to the topic of this meta-analysis. We provided the researchers with the inclusion criteria and asked them to send any published or unpublished studies from their research labs that met inclusion criteria.

Researcher Contacts Through Relevant Organizations

Fourth, we contacted organizations maintaining an email listserv with subscribers who may have conducted studies aligned to the focus of this meta-analysis. We contacted several organizations, and the following posted our announcement to their listserv: Association of Science-Technology Center, Cognitive Development Society, International Group for the Psychology of Mathematics Education, Mathematics Cognition and Learning Society, and Visitor Studies Association. We also emailed the National Science Foundation’s Center for the Advancement of Informal Science Learning for information on former grant projects.

Preprint Publications

Fifth, we searched PsyArXiv (https://psyarxiv.com/) for preprints of studies that authors may have submitted to the repository. Based on the electronic search function of this database, we searched two-word combinations of the following terms: math, numeracy, intervention, tutoring, informal, activity, play, and home.
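As a rough illustration of the two-word search described above, the sketch below enumerates every unordered pair of the eight listed terms. Whether all pairs were searched, and in what order, is an assumption; the text states only that two-word combinations were used.

```python
from itertools import combinations

terms = ["math", "numeracy", "intervention", "tutoring",
         "informal", "activity", "play", "home"]

# Every unordered pair of distinct terms: C(8, 2) = 28 candidate searches
pairs = list(combinations(terms, 2))
queries = [f"{a} {b}" for a, b in pairs]

for q in queries:
    print(q)
```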

Reference List Search of Included Studies

Sixth, we searched the reference lists of three included studies. Because these studies were under review at journals at the time of the search, they represented the most recent research. They also represented different types of interventions, including text messaging (Napoli & Purpura, 2021), picture books (Purpura et al., 2021), and food routines (Leyva et al., 2021).

Forward Citation Search

Finally, we conducted a forward citation search of the three most cited publications, according to Google Scholar’s “cited by” function, out of all the included studies in this meta-analysis (Niklas et al., 2016; Starkey & Klein, 2000; Vandermaas-Peeler et al., 2012).

Literature Search and Review Procedures

The abstract screening and full-text review began in January 2021, ended in August 2021, and were organized and supervised by the first and second authors. Three graduate assistants (GAs) conducted the literature search. We led four 1.5-hour introductory training sessions for the GAs on the purpose and defining features of the meta-analysis, features of published early math intervention studies, and best practices for abstract screening. The GAs were pursuing master’s degrees in counseling, early and special education, and elementary education.

Training

Abstract Screening

We held a 2-hour training session that included an overview of the abstract review criteria for this meta-analysis, the process for filling out the Excel database to identify which studies should be reviewed in full text, and a group practice of applying the criteria to abstracts. Through an iterative process of applying criteria to 175 practice articles and discussing challenges with the screening tool, we finalized the screening tool. After training and practice, the GAs independently coded the remaining abstracts. All abstracts were double-coded.

Throughout the abstract screening process, we held weekly 1-hour meetings to discuss disagreements about inclusion, increase screening process reliability, and provide continuous GA follow-up support. Each week, GAs coded a set of 400–600 abstracts, and the third author calculated Kappa. Kappa is a stricter measure of interrater reliability than percent agreement and takes into account the possibility of rater guessing. Values of Kappa between 0.60 and 0.79 are considered “moderate” (McHugh, 2012). Kappa improved from the first two sets of abstracts (0.25 and 0.42, respectively) to the final two sets of abstracts (0.78 and 0.71, respectively). All discrepancies were discussed during weekly meetings resulting in 100% agreement on the final set of included and excluded studies.
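To make the contrast between percent agreement and Kappa concrete, the following minimal sketch computes both for two raters' include/exclude decisions. The function names and the example counts are hypothetical and are not data from this study; the formula is the standard Cohen's Kappa for two raters.

```python
from collections import Counter

def percent_agreement(rater_a, rater_b):
    """Proportion of items on which the two raters gave the same code."""
    return sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)

def cohens_kappa(rater_a, rater_b):
    """Cohen's Kappa: observed agreement corrected for chance agreement.

    Undefined (division by zero) if chance agreement equals 1, e.g., when
    both raters assign the same single code to every item.
    """
    n = len(rater_a)
    p_observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal proportions per code
    p_expected = sum(counts_a[c] * counts_b[c]
                     for c in set(counts_a) | set(counts_b)) / n**2
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical set of 100 abstracts:
# 8 both include, 2 only A includes, 4 only B includes, 86 both exclude
rater_a = ["include"] * 8 + ["include"] * 2 + ["exclude"] * 4 + ["exclude"] * 86
rater_b = ["include"] * 8 + ["exclude"] * 2 + ["include"] * 4 + ["exclude"] * 86

agreement = percent_agreement(rater_a, rater_b)
kappa = cohens_kappa(rater_a, rater_b)
```

In this made-up example, percent agreement is 0.94 while Kappa is about 0.69. Because most abstracts are excluded by both raters, much of the raw agreement is expected by chance, which is why Kappa is the stricter measure.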

Full Text Review

The full-text review used a training process similar to that of the abstract screening (initial training, iterative feedback loop, weekly meetings for follow-up support). The full-text review included GAs reading and scanning sections of the full text of articles and capturing data from the studies (e.g., children’s age, intervention setting, outcome measures), so the first and second authors could make final determinations about the inclusion of studies. Each GA coded 30 to 40 full-text articles each week, and all studies were double-coded. Discrepancies not discussed as part of the research team meetings were resolved between the GAs in individual meetings. Interrater agreement was 95.3% for full-text coding. All discrepancies were discussed and 100% agreement was achieved prior to analyses.

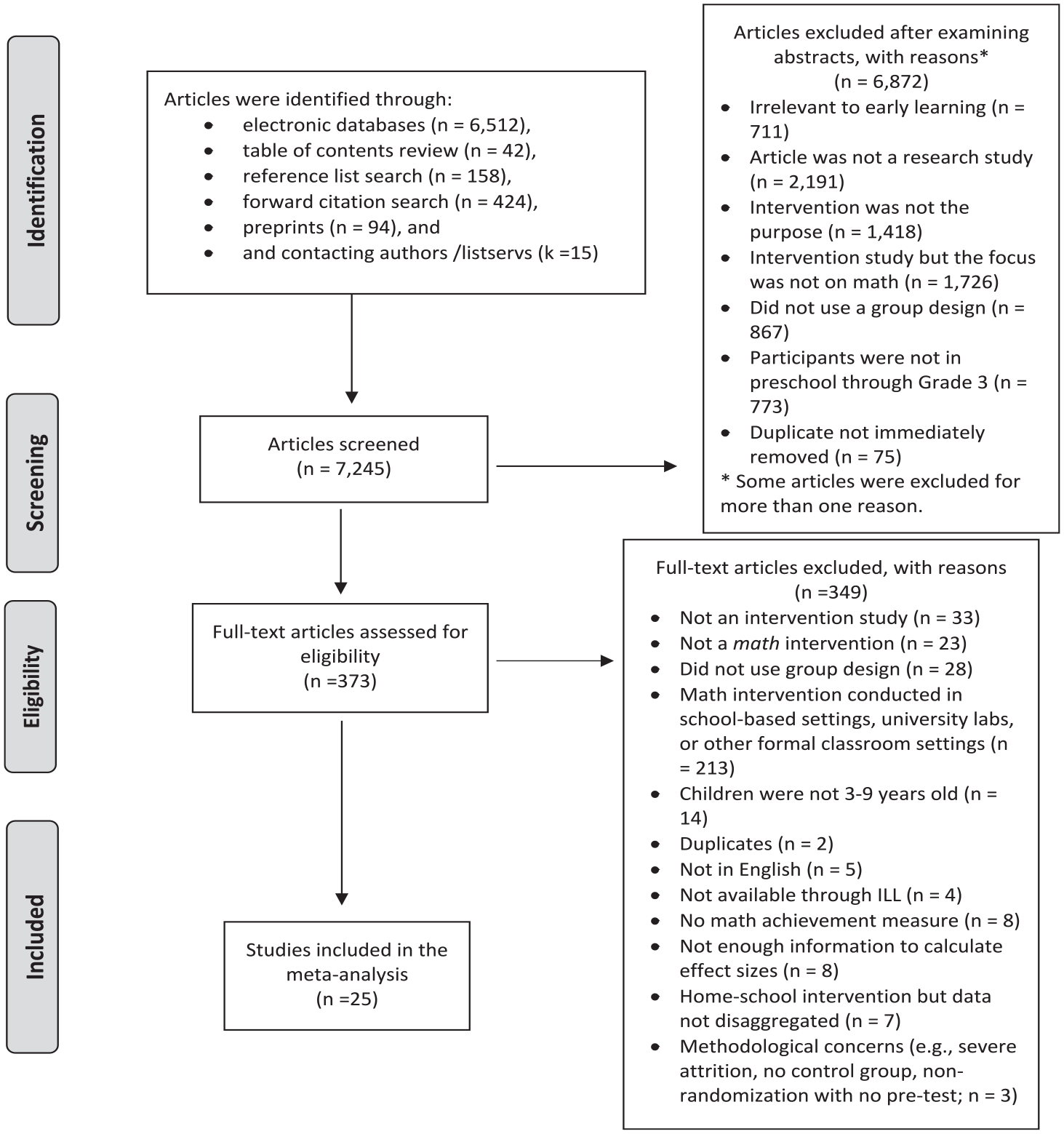

Literature Search Results

Figure 1 is a PRISMA diagram that documents the literature search results, including the abstract screening and full-text review results. In total, we captured and reviewed 7,245 abstracts. At the abstract screening stage, most studies were excluded because they did not focus on academic learning (or were generally irrelevant to early learning), represent a research study, include an intervention, have independent variables related to mathematics, use a group design, or include participants between the ages of 3 and 9 years. Duplicated studies were also removed.

Figure 1. PRISMA diagram illustrating the literature search procedures.

We identified 373 studies for full-text review. We excluded studies because they did not take place in informal learning environments; focus on testing the effectiveness of an intervention; provide child participants with mathematics content intervention, play, or support; use a group design method; include child participants between the ages of 3 and 9 years; administer a mathematics achievement measure; present effects of the home component that could be isolated from the effects of the school intervention; or provide enough information to appropriately calculate ESs. Duplicated studies were also removed.

As we reviewed studies in the full-text phase, the first and third authors made notes about methodological concerns for studies. We enforced ad hoc exclusion criteria to ensure that the studies included in this meta-analysis were of high methodological quality. Although a purpose of this study was to investigate study reporting quality, study methodological quality is different and needs to be taken into consideration above and beyond simply coding for and reporting issues. In other words, poor reporting quality makes replication difficult but does not necessarily call into question the validity of the estimated treatment effect, whereas poor methodological quality indicates that the estimated effect of the intervention is flawed. If we had allowed studies with methodological flaws to be included in the current meta-analysis, those flaws would have transferred to the meta-analysis and potentially led to a biased estimate of effect. We excluded one study with severe levels of attrition (i.e., more than 50%; Lore et al., 2016), one study that employed a treatment group–only design (Leyva et al., 2018), and one study that used nonrandomization procedures without administering a pretest and also lacked specific information needed to estimate the effect of clustering on variance estimates (Austin, 1988).

Coding Procedures

The first, second, and third authors drafted the coding protocol; authors have extensive experience creating coding protocols for systematic reviews, meta-analyses, and qualitative studies. We used an iterative process of feedback from GAs, advisory board members, and consultants to refine and finalize the coding protocol. The coding protocol comprises five sections (basic study information and participant demographics, methodological information, intervention features, outcome measures, ESs) and is briefly explained in Table 1. The full coding protocol (Nelson et al., 2021) contained nearly 200 variables and is on an open-access platform (https://scholarworks.boisestate.edu/sped_facpubs/141/). The coding protocol included a variable name, code options (e.g., forced response, open response), and the variable description or definition, with examples as applicable.

Quality of Study Reporting

Within each of the five sections of the coding protocol, we embedded study reporting quality codes, referred to as quality indicators (QIs). The coding protocol for QIs is also briefly explained in Table 1, with each individual indicator reported in the results. We coded studies for 37 QIs based on Gersten et al. (2005), which provided recommendations for reporting standards in experimental and quasi-experimental studies. We coded for QIs related to reporting quality, meaning that we recorded whether studies provided specific information to support readers’ replicability and understanding of the method and interpretation of the results (as we had previously removed studies due to methodological issues). Gersten et al. (2005) emphasized components related to special education research, but the QIs are highly relevant for all educational intervention studies. We also referred to PRISMA reporting guidelines (Page et al., 2021) to add relevant variables related to study reporting quality to the coding protocol.

The 37 QIs included variables that Gersten et al. (2005) deemed (a) essential (n = 27) and (b) desirable (n = 10). We used the essential and desirable framework, as essential QIs may be necessary for researchers and practitioners to replicate and generalize the intervention study, whereas the desirable QIs represent supplemental information that provides helpful study detail. We coded QIs across the following main categories: 1.0 participant description, 2.0 intervention and comparison condition descriptions, 3.0 outcome measures, and 4.0 data analysis and results.

Training and Reliability for Coding

The second author led coder training for the three GAs responsible for coding all included studies for sections 1 through 4 (including embedded QI codes). The first and third authors completed the section 5 coding (extracting ESs). The initial training included reviewing the variables and details of the section of the coding protocol, practicing with a set of studies, and discussing coding challenges. All studies used for practice were coded by all GAs, and the remaining studies were each coded by two GAs. At the end of training, interrater reliability using percent agreement for the sections of coding was 0.82 (section 1: study-level variables), 0.97 (section 1: child-level variables), 0.86 (section 2: methodological information), 0.89 (section 3: intervention features), 0.82 (section 4: outcome measures), and 1.00 (section 5: ESs). According to McHugh (2012), values greater than 0.80 are considered “strong” evidence of agreement. All discrepancies were discussed and 100% agreement was achieved prior to analyses.
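
As an illustration of the reliability metric named above, percent agreement is the share of coded items on which two coders assigned the same code. The following is a minimal sketch only; the function name and the example codes are hypothetical and are not data from this review.

```python
def percent_agreement(coder_a, coder_b):
    """Proportion of items on which two coders assigned the same code."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must rate the same items")
    if not coder_a:
        raise ValueError("At least one coded item is required")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


# Hypothetical codes for ten protocol variables from a single study.
coder_1 = ["RCT", "home", 1, 0, 1, "yes", 8, "parent", 1, 0]
coder_2 = ["RCT", "home", 1, 1, 1, "yes", 8, "parent", 1, 1]

# The coders disagree on two of ten items.
agreement = percent_agreement(coder_1, coder_2)
```

Percent agreement does not adjust for agreement expected by chance, which is one reason chance-corrected statistics are sometimes reported alongside it.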

Data Analysis Plan

Meta-Analysis Procedure

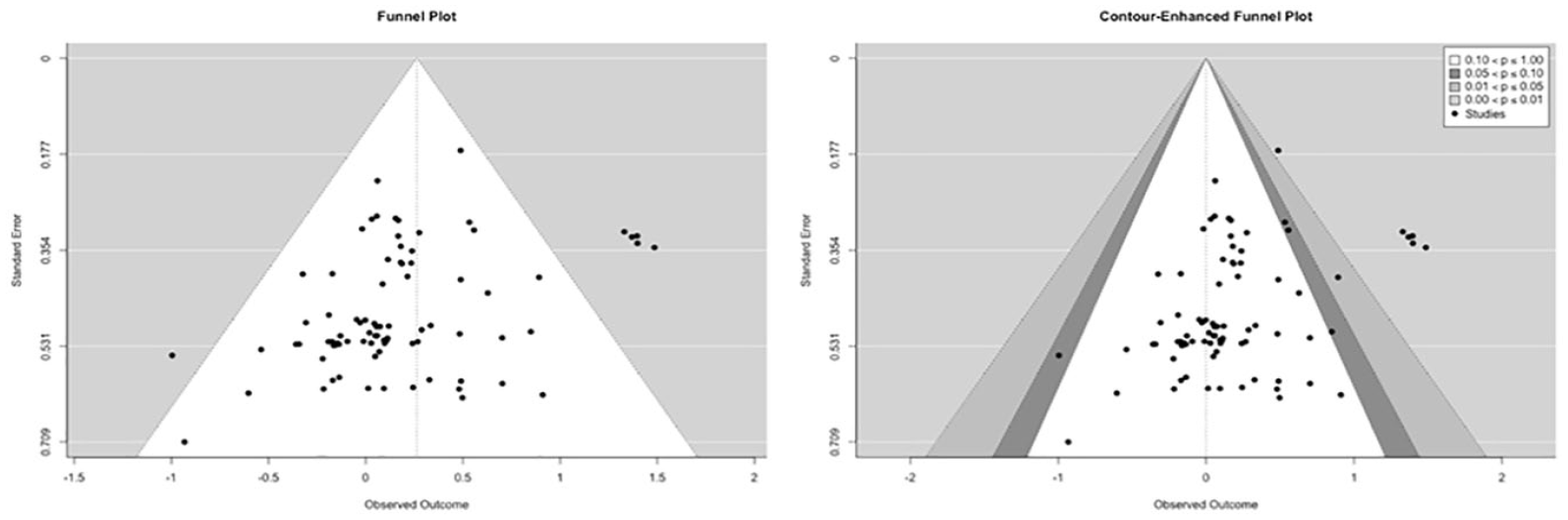

We calculated Hedges’ g for each study using procedures outlined in the What Works Clearinghouse statistical guidance documentation (WWC, 2017) and found in meta-analysis support materials (Lipsey & Wilson, 2001; Morris & DeShon, 2002). This required calculation of Cohen’s d based on means, standard deviations, and sample sizes and then correction for small sample size (thus producing Hedges’ g; Hedges & Olkin, 1985). All ESs were derived such that a positive value indicates the intervention group scored on average higher than the control group. When pre/post tests were used to calculate an ES, the correlation between the pretest and posttest was required for ES variance estimation. If this was not presented in the article, the authors were contacted. If authors were unresponsive, a value of 0.5 was used in accordance with WWC guidelines (WWC, 2017). We initially identified potential outliers in ESs as those that were more than ±3.0 SDs from the random-effects weighted mean ES (Cooper et al., 2009). We used a funnel plot and Egger’s test (Egger et al., 1997) to assess for potential publication bias. Egger’s test requires specifying a meta-analysis model in which the ESs are predicted by their standard errors. Finally, we used a contour-enhanced funnel plot to flag studies that may be outliers due to factors other than publication bias.
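
The two-step calculation described above (Cohen's d, then a small-sample correction) can be sketched as follows for the simplest case of two independent groups compared at posttest. This is an illustrative sketch under that assumption, using the standard textbook formulas; it does not reproduce the pre/post variants that require the pretest-posttest correlation, and the function name and input values are hypothetical.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, variance of g) for two independent groups at posttest."""
    df = n_t + n_c - 2
    # Pooled standard deviation across treatment and control groups.
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    # Cohen's d: positive when the treatment group scores higher.
    d = (mean_t - mean_c) / pooled_sd
    # Small-sample correction factor (Hedges & Olkin, 1985).
    correction = 1 - 3 / (4 * df - 1)
    g = correction * d
    # Large-sample variance of d, then scaled by the squared correction.
    var_d = (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))
    var_g = correction**2 * var_d
    return g, var_g


# Hypothetical summary statistics, not taken from any included study.
g, var_g = hedges_g(mean_t=12.0, sd_t=4.0, n_t=30, mean_c=10.0, sd_c=4.0, n_c=30)
```

With these hypothetical inputs, d is 0.5 and the correction shrinks it slightly; the shrinkage becomes negligible as sample sizes grow.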

We used random-effects models over fixed-effect models based on the assumption that a distribution of effects exists in the population (Borenstein et al., 2009). Several studies reported more than one ES, creating a dependency between ESs that must be accounted for to produce accurate statistical tests. To account for this type of nested data structure and dependence, we conducted multilevel meta-analyses with robust variance estimation (RVE; Hedges et al., 2010; Pustejovsky & Tipton, 2021) using the R packages metafor (Viechtbauer, 2010) and clubSandwich (Pustejovsky, 2019). RVE adjusts standard errors to account for correlations between effects within studies and is possible without precise knowledge of the correlations between effects; a value of 0.80 is assumed and sensitivity to this value assessed (Hedges et al., 2010).
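
To make the random-effects assumption concrete, the sketch below pools effects with the DerSimonian-Laird estimator of between-study variance. This is a deliberately simpler technique than the multilevel models with RVE fitted in metafor and clubSandwich for this study: it assumes one independent ES per study and ignores the within-study dependence that RVE addresses. The function name and example values are hypothetical.

```python
import math


def random_effects_pool(effects, variances):
    """DerSimonian-Laird random-effects mean, its SE, and tau-squared."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("Need at least two effects with matching variances")
    # Fixed-effect (inverse-variance) weights and mean.
    w = [1 / v for v in variances]
    sum_w = sum(w)
    fixed_mean = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed_mean) ** 2 for wi, yi in zip(w, effects))
    c = sum_w - sum(wi**2 for wi in w) / sum_w
    # Between-study variance, truncated at zero.
    tau2 = max(0.0, (q - (k - 1)) / c)
    # Random-effects weights add tau-squared to each sampling variance.
    w_star = [1 / (v + tau2) for v in variances]
    mean = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1 / sum(w_star))
    return mean, se, tau2


# Hypothetical effect sizes and sampling variances for five studies.
example_effects = [0.10, 0.45, 0.30, -0.05, 0.60]
example_variances = [0.04, 0.05, 0.03, 0.06, 0.08]
pooled, pooled_se, tau2 = random_effects_pool(example_effects, example_variances)
```

When the estimated between-study variance is zero, the random-effects weights reduce to the fixed-effect weights and the two models give the same mean.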

Variability in the distribution of effects supports investigation of potential moderators. We estimated heterogeneity (

To explain heterogeneity, we tested several moderators. Moderators included: (a) content of and type of the math achievement outcome; (b) intervention characteristics (amount of caregiver training, intervention location, number of intervention sessions, length of intervention); and (c) intervention math content and activity type. Individual moderators were assessed using the RVE-corrected multilevel meta-analysis model. Not all studies reported all moderators. Therefore, multiple imputation was utilized to impute missing moderator values using mice (van Buuren & Groothuis-Oudshoorn, 2011), and results were compared to complete case analyses. Results were similar; therefore, reported results are from complete case analyses.

Study Reporting Quality

Each QI was scored on a scale of 0 (not met) to 1 (met), similar to previous reviews of quality using these indicators (e.g., Jitendra et al., 2021; Stevens et al., 2018). We calculated average scores for each QI variable, QI category, and study. Within the text of the results section we refer to the average scores as percent of QIs met to aid in interpretability of the scores.
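
The scoring scheme above amounts to averaging 0/1 indicators along different dimensions. A minimal sketch, assuming a small hypothetical score table (the study names, QI labels, and scores are invented for illustration):

```python
from statistics import mean

# Hypothetical scores: 1 = indicator met, 0 = not met.
scores = {
    "Study A": {"QI 1.1": 1, "QI 1.2": 0, "QI 3.1": 1, "QI 4.1": 1},
    "Study B": {"QI 1.1": 1, "QI 1.2": 1, "QI 3.1": 0, "QI 4.1": 0},
    "Study C": {"QI 1.1": 0, "QI 1.2": 1, "QI 3.1": 0, "QI 4.1": 1},
}


def percent_met(values):
    """Average of 0/1 scores expressed as a percentage of QIs met."""
    return 100 * mean(list(values))


# Percent of QIs met by each study.
per_study = {study: percent_met(qis.values()) for study, qis in scores.items()}

# Percent of studies meeting each individual QI.
qi_labels = list(next(iter(scores.values())))
per_qi = {
    label: percent_met(qis[label] for qis in scores.values())
    for label in qi_labels
}

# Average percent of QIs met across studies.
overall = mean(per_study.values())
```

Category-level scores follow the same pattern, averaging only the indicators that belong to a given category.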

Results

Descriptive Results

Please see online supplementary Table S1 for an overview of the 25 studies, including the math content and intervention activities. The 25 studies included 23 publications (two publications included two studies each) and were published from 1981 to 2022, with some studies under review as of February 2022. At the time of article coding, most studies were published in peer-reviewed journals (72.0%; k = 18), with three studies (12.0%) in progress or under review at peer-reviewed journals. Four studies (16.0%) were master’s or doctoral theses. All studies were implemented solely or partially in the home learning environment. In all studies and intervention groups, the child’s primary caregiver was the interventionist.

There were 3,697 total child participants with an average age of 4.77 years, ranging from 1.58 years to 8.5 years. Refer to online supplementary Table S2 for a summary of the participants across the 25 studies, including the number of studies that reported specific demographic information. For example, 18 of the 25 studies reported information about participants’ race or ethnicity. Using race and ethnicity categories that are commonly applied to research studies conducted in the United States, the table shows that, of the U.S. studies that reported race/ethnicity information for 1,125 participants, students were predominantly Hispanic (39.11%), Black (33.10%), and White (21.42%). For non-U.S. studies, the table shows that of 871 students who had race/ethnicity information, the majority of students (94.95%) were identified as Asian. We did not identify any studies that reported including children with disabilities, and we identified only two studies that reported including children who were DLLs. Importantly, the table also shows how studies reported children’s SES. Given the variability in how studies reported SES (and the differences in how SES is defined across contexts and countries), we were unable to quantitatively synthesize and report on the number or percentage of children who were identified as being low SES.

The 25 studies represented a range of content and activities. Each study was assigned a code to describe the main intervention activity, including card or dice games (k = 6), varying activities throughout the intervention with parent and child options for choice (k = 6; e.g., activity kits with many activities), formal curriculum (k = 5; i.e., specified set of activities and sequence of lessons for caregivers to implement), number board games (k = 4), children’s math story books (k = 3), and food routines (k = 2). One study was assigned two activity codes due to two different treatment conditions receiving different interventions. Each study was also assigned codes that identified the math content addressed as part of the intervention. Most studies addressed multiple math content areas and skills, including number skills (k = 20; e.g., counting, cardinality); relations skills (k = 17; e.g., set or numeral comparison, matching quantities, numeral identification); operations skills (k = 12; e.g., decomposing numbers, simple sums); measurement, geometry, and/or spatial relations (k = 9; e.g., using measurable attributes, identifying shapes, composing shapes, navigation, spatial relations); organizing and/or sorting (k = 5; e.g., categorizing objects); and sequencing and/or patterning (k = 7; e.g., shape, color patterns).

Meta-Analysis Results

Overall Effect

For quantitative analysis, we included 83 ESs from 25 studies (average of 3.3 effects per study). The overall effect was statistically significant, g = 0.26, 95% CI [0.07, 0.45], t(19.3) = 2.92, p = 0.009. The fractional degrees of freedom are due to the application of RVE with a small sample correction (Tipton, 2015). To provide some context to this finding, educational interventions with ESs larger than 0.20 may be considered “large” (Kraft, 2020). A caterpillar plot showing the distribution of ESs can be found in Figure S1 in the online supplementary materials.

Variability in Effects

The variance of the distribution of effects was statistically significant,

Publication Bias

The funnel plot did not show substantial asymmetry (see Figure 2), though values outside the funnel were further scrutinized and ultimately retained. Egger’s test was implemented to evaluate funnel plot asymmetry. For consistency with our analytic models, a multilevel model with RVE was specified for Egger’s test. The result was a nonstatistically significant (p = 0.423) coefficient of the standard error of the ESs; therefore, we fail to reject the null hypothesis of a zero relationship between the standard error and ES. A contour-enhanced funnel plot, useful for detecting publication bias resulting from the suppression of statistically insignificant findings, does not indicate substantial issues because many effects are located in the nonsignificant funnel (see Figure 2).
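
The logic of Egger's test can be sketched as an inverse-variance weighted regression of ESs on their standard errors, where a slope far from zero suggests funnel plot asymmetry. The sketch below is the classical single-level version and is an assumption-laden simplification: it treats every ES as independent and does not apply the multilevel structure or RVE used in the analysis reported here. The function name and data are hypothetical.

```python
import math


def egger_regression(effects, std_errors):
    """Weighted regression of effect sizes on their standard errors.

    Returns (slope, intercept, standard error of the slope).
    """
    k = len(effects)
    if k < 3 or k != len(std_errors):
        raise ValueError("Need at least three effects with matching standard errors")
    # Inverse-variance weights.
    weights = [1 / se**2 for se in std_errors]
    sum_w = sum(weights)
    x_bar = sum(w * x for w, x in zip(weights, std_errors)) / sum_w
    y_bar = sum(w * y for w, y in zip(weights, effects)) / sum_w
    # Weighted sums of squares and cross-products (std_errors must vary).
    sxx = sum(w * (x - x_bar) ** 2 for w, x in zip(weights, std_errors))
    sxy = sum(
        w * (x - x_bar) * (y - y_bar)
        for w, x, y in zip(weights, std_errors, effects)
    )
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Weighted residual variance with k - 2 degrees of freedom.
    rss = sum(
        w * (y - intercept - slope * x) ** 2
        for w, x, y in zip(weights, std_errors, effects)
    )
    se_slope = math.sqrt(rss / (k - 2) / sxx)
    return slope, intercept, se_slope


# Hypothetical standard errors and effect sizes for five effects.
example_ses = [0.10, 0.15, 0.20, 0.25, 0.30]
example_es = [0.22, 0.30, 0.18, 0.35, 0.28]
slope, intercept, se_slope = egger_regression(example_es, example_ses)

# Test statistic for the slope; compare with a t distribution on k - 2 df.
t_stat = slope / se_slope
```

A nonsignificant slope, as reported above, means the data do not show a detectable relationship between ES magnitude and precision.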

Funnel and contour plots.

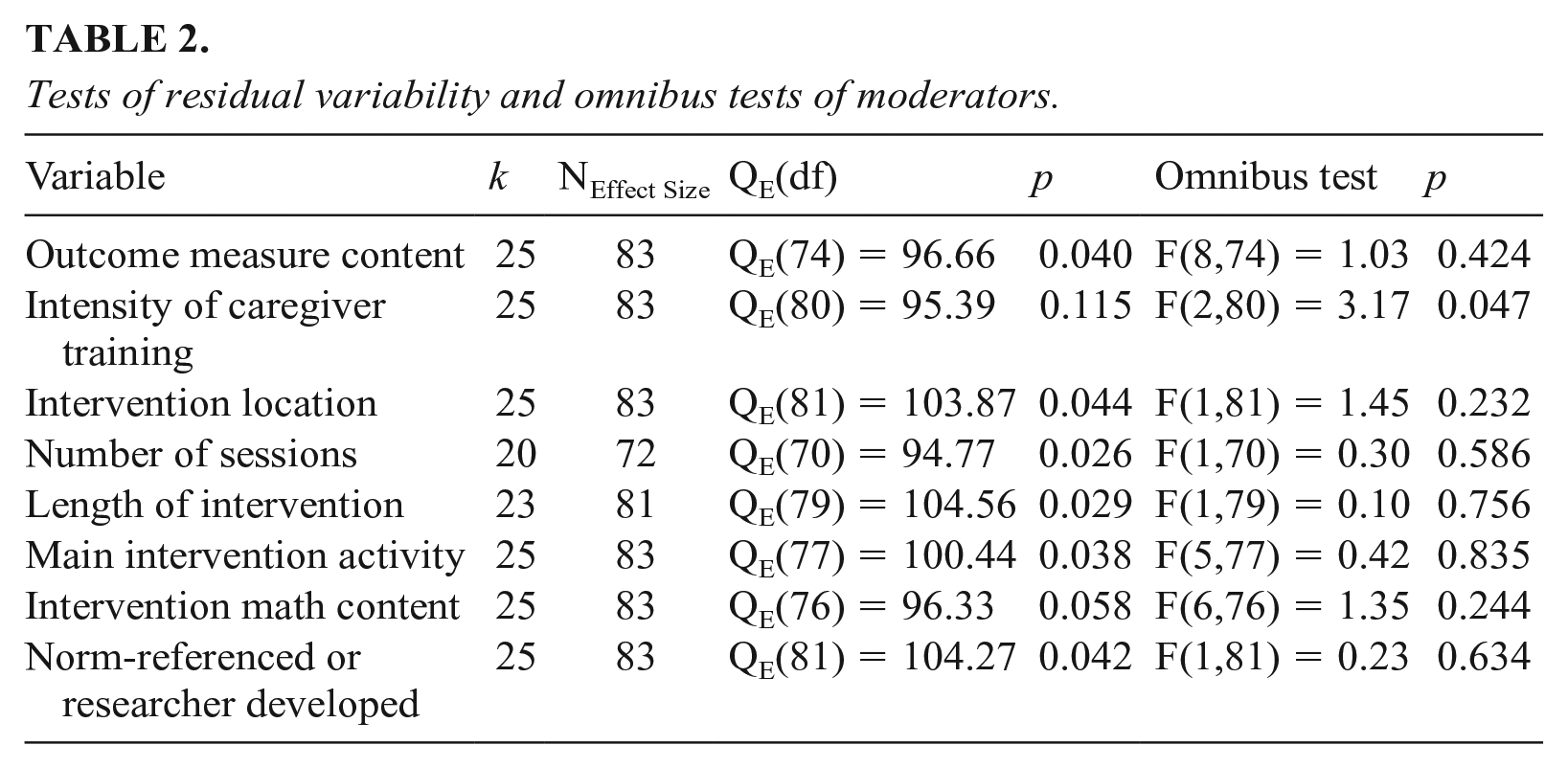

Moderators

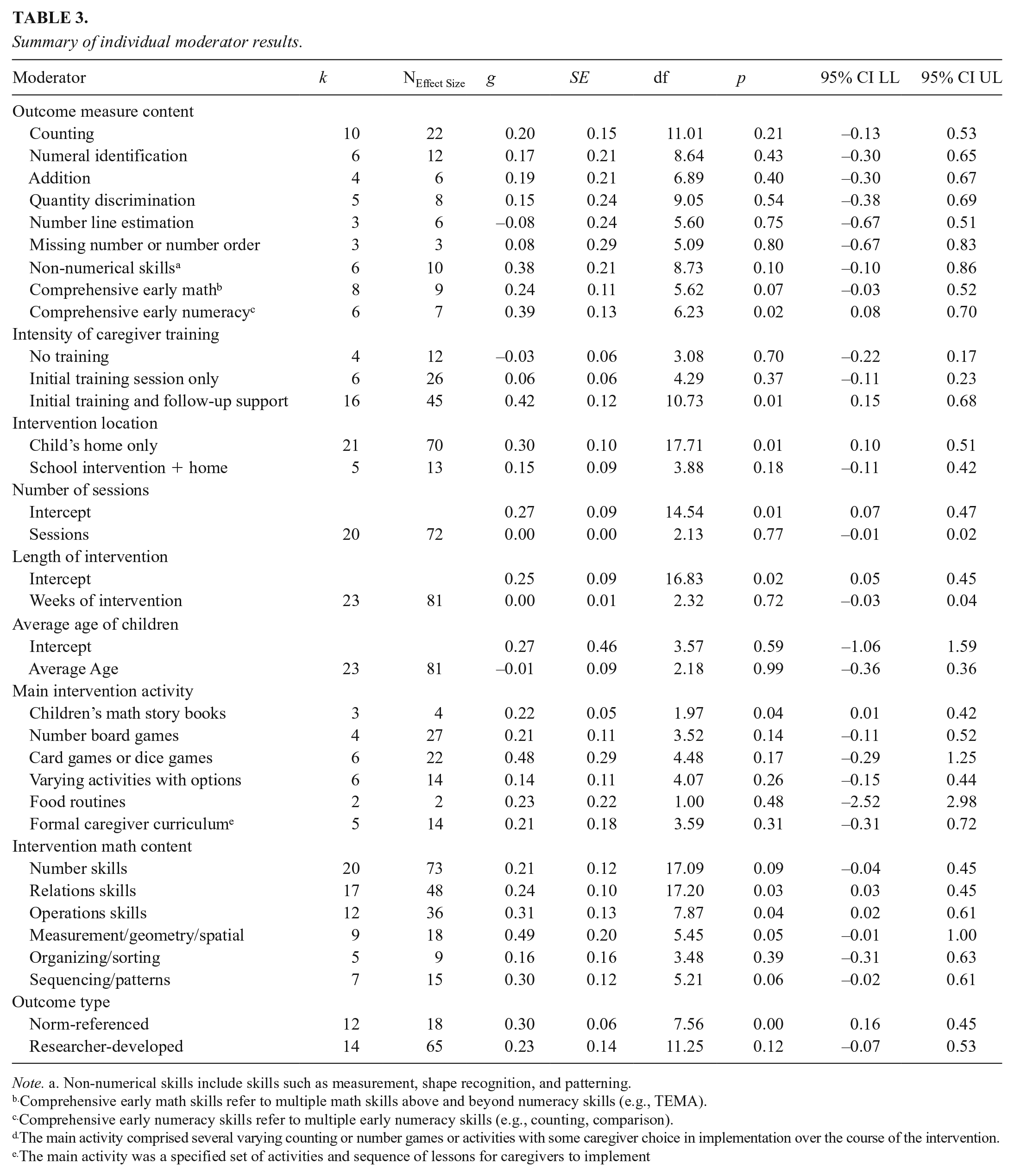

Moderators were evaluated individually. Results for categorical moderators required specifying a model with no intercept whereas continuous moderators included an intercept. As shown in Table 2, one of the omnibus tests of moderation was statistically significant (intensity of caregiver training). For intensity of caregiver training,

Tests of residual variability and omnibus tests of moderators.

The meta-regression results for each individual moderator are presented in Table 3. All analyses were conducted with and without controlling for quality of reporting variables. Results across the two sets of analyses were consistent. Because quality of reporting refers only to the information that is reported and may not capture a complete illustration of the research study that authors conducted, we report the results without controlling for quality of reporting. For math outcome measure content, comprehensive early numeracy measures (i.e., a measure that addressed multiple early numeracy skills) was the only category of outcome measures that was statistically significantly greater than zero (g = 0.39, 95% CI [0.08, 0.70], p = 0.02). Regarding use of a norm-referenced or researcher-developed measure, results with norm-referenced measures were statistically significantly different from zero whereas researcher-developed measures were not.

Summary of individual moderator results.

Note. a. Non-numerical skills include skills such as measurement, shape recognition, and patterning.

b. Comprehensive early math skills refer to multiple math skills above and beyond numeracy skills (e.g., TEMA).

c. Comprehensive early numeracy skills refer to multiple early numeracy skills (e.g., counting, comparison).

d. The main activity comprised several varying counting or number games or activities with some caregiver choice in implementation over the course of the intervention.

e. The main activity was a specified set of activities and sequence of lessons for caregivers to implement.

Of the four intervention characteristics evaluated, intensity of caregiver training and principal intervention environment each had a level that was statistically significant. For caregiver training, the use of an initial training session with follow-up support (e.g., additional training sessions, in-home coaching, text message support) was associated with an average effect that was statistically significantly different from zero (g = 0.42, 95% CI [0.15, 0.68], p = 0.01), whereas when no training or only initial training occurred, the average effects were nonsignificant.

The majority of studies examined the effect of caregivers implementing math intervention treatment conditions (n = 21) in which the home was used as the sole, primary learning environment. A subset of studies examined the effect of considering two learning environments for treatment conditions (n = 5), which included classroom-based interventions with home learning environment components. (Note that the number does not total 25 because one study included two treatment groups categorized as two different settings.) The purpose of this meta-analysis was to determine the effect of learning that occurs in informal learning environments; therefore, the main effect of treatment conditions in which children were exposed to two principal learning environments represents only the isolated effect of the informal learning environment (i.e., ESs were calculated as classroom intervention vs. classroom intervention plus home component). The average effect was statistically significantly different from zero when the intervention occurred in the home only (g = 0.30, 95% CI [0.10, 0.51], p = 0.01). When the informal learning environment serves as a component of an intervention that primarily takes place in a formal learning environment (e.g., the classroom), the additive effect of the home learning environment is positive but not statistically significant (g = 0.15, p = 0.18).

Considering the main activities that caregivers used to implement the intervention with children, the use of children’s math story books was the only activity that yielded a p-value less than 0.05; however, given that the degrees of freedom for this activity were less than 4, the result should not be characterized as statistically significant. All other activities except varying counting and number activities (i.e., caregivers were given several counting and number activities and games to choose from throughout the duration of the intervention) had average ESs larger than children’s books. Regarding math content of the intervention, only ESs pertaining to interventions that included relations skills (g = 0.24) or operations skills (g = 0.31) were statistically significant. However, nearly all math domains yielded large average effects (i.e., greater than 0.20; number; relations; operations; measurement, geometry, spatial; patterning), with only one domain yielding a medium effect (i.e., 0.05 to 0.20; organizing and sorting).

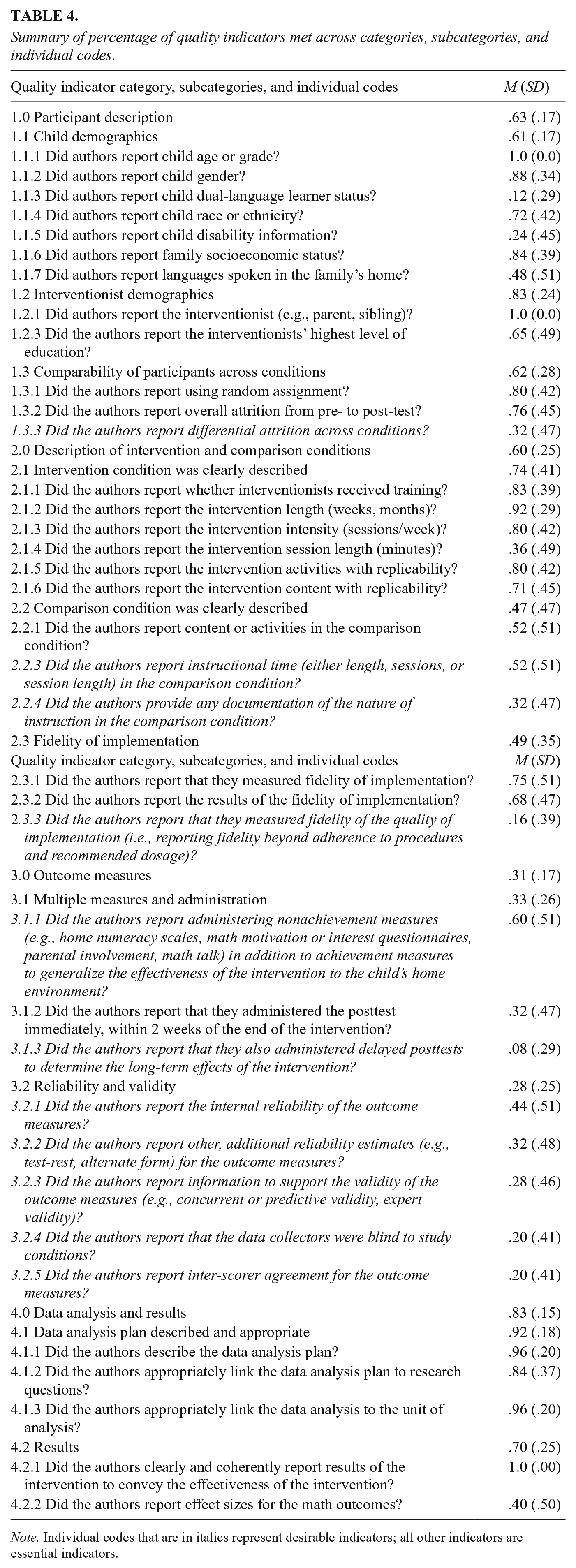

Study Reporting Quality Results

Table 4 provides the average QI score (percentage of QIs met) for each of the four main QI categories and their respective subcategories and individual QIs. The results for each study can be found in the online supplementary materials (Table S3). Studies met 58.0% (SD = 14.0%) of all QIs. The 25 studies met an average of 70.5% of the essential indicators (range = 38.5% to 88.5% QIs met across studies). Studies less often met the reporting requirements for the desirable indicators (31.3%). To explore whether more recently published research met more QIs, we divided the studies into those published before 2019 and those published in or after 2019 and compared the average percent of QIs met. The year 2019 is the median year of the included studies and, given our review ended with the beginning of 2022, includes 3 years of recently published research. We found a statistically significant difference in the average percent of QIs met, with the more recent research meeting a higher average percent of QIs.

Summary of percentage of quality indicators met across categories, subcategories, and individual codes.

Note. Individual codes that are in italics represent desirable indicators; all other indicators are essential indicators.

On average, the 25 studies met 62.8% of the QIs related to participant description (range of 23.1% to 92.3% across studies), 60.7% of the QIs related to the description of the intervention and comparison conditions (range of 15.4% to 100% across studies), 31.0% of the QIs related to outcome measures (range of 0% to 75.0% across studies), and 83.2% of the QIs related to the data analysis plan and results (range of 60.0% to 100% across studies).

Discussion

The purpose of this study was to conduct a meta-analysis of early math interventions implemented by caregivers with young children in informal learning environments, with a subsequent review of study-reporting quality of these interventions. The results of the current meta-analysis may support training and future research focused on caregivers and other early childhood professionals who support young children’s math development in informal learning environments. The results of the current quality review reveal strengths and areas of growth for reporting the results of interventions conducted in informal learning environments.

Implications of the Meta-Analysis Results

The results of this meta-analysis revealed a statistically significant average effect (g = 0.26, p = 0.009) of early math interventions conducted in informal learning environments, and an ES of 0.26 is considered “large” (Kraft, 2020). To date, there is not another published meta-analysis on math interventions conducted in informal learning environments; however, another team of researchers has a similar study in progress. The authors reviewed home-based math interventions for 3- to 5-year-old children and reported an effect of d = 0.18 (95% CI [−1.62, 1.99]; n = 8) for math outcomes (A. Cahoon, personal communication, January 13, 2022). The slight difference in results between Cahoon and the current meta-analysis could be due to diverging inclusion criteria, age of participants, and methodological considerations. In addition, the results of this meta-analysis reported a larger average effect for math interventions conducted in informal learning environments compared to a meta-analysis focused on home-based literacy interventions for young children (d = 0.18; van Steensel et al., 2011).

An ES of 0.26 is impressive for several reasons. The results reported a smaller average effect compared to meta-analyses that examined early math interventions conducted in classroom settings (e.g., g = 0.64, Nelson & McMaster, 2019b; 0.61, Charitaki et al., 2021). However, many interventions included in previous meta-analyses were conducted as part of research studies in which researchers implemented interventions, and fidelity of implementation was strictly monitored, potentially leading to larger effects. Yet, the interventions included in this meta-analysis were implemented in authentic settings, outside of laboratory settings or the structure of a classroom. Moreover, the interventions in the current meta-analysis were often nonintensive and provided caregivers with recommended dosages of intervention periods, whereas many classroom-based interventions have scripted lesson plans with specific guidelines on the number of minutes per week the intervention is implemented. Thus, smaller effects for interventions conducted in informal learning environments compared to classroom settings were not surprising. The average effect of 0.26 is equivalent to an improvement index of approximately 10.3 percentile points (WWC, 2017). These results suggest that caregivers can leverage the home learning environment without significant disruption or adaptation to the typical routine of the home while supporting children’s math development.
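
The improvement index cited above can be reproduced from the ES alone: it is the expected percentile rank of an average comparison-group child had that child received the intervention, minus 50, assuming normally distributed outcomes. The sketch below uses Python's standard normal distribution; the function name is hypothetical.

```python
from statistics import NormalDist


def improvement_index(effect_size):
    """Percentile-point gain implied by a standardized mean difference."""
    # Percentile rank under the standard normal curve, minus the median (50).
    return (NormalDist().cdf(effect_size) - 0.5) * 100


# Overall effect reported in this meta-analysis.
index = improvement_index(0.26)  # roughly 10.3 percentile points
```

Read this way, a child at the 50th percentile of the comparison group would be expected to score at roughly the 60th percentile with the intervention.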

The interventions also required very few resources or materials. Caregivers were provided with materials that are typically available for low cost (e.g., dice, number board games), and the results of this meta-analysis did not suggest that families require access to more expensive materials such as iPads to reap the same benefits of the intervention. The interventions also required very little time to implement. The average intervention length was 8 weeks, and the recommended intensity ranged from once per week to daily. It is empowering, for researchers and caregivers alike, to observe the positive impact that caregivers can have on children’s math development and understanding with nonintensive interventions. It is encouraging that low-cost, easy-to-implement interventions by caregivers with varying SES backgrounds and different levels of education were effective in increasing young children’s math achievement.

Effect of Caregiver Training

The majority of interventions in this meta-analysis were nonintensive in their training requirements, which also speaks to the practical significance of the overall effect of g = 0.26. The results show that caregivers can positively impact children’s math development and understanding, even with a minimal time commitment, and this is even more true when they are trained and supported. Based on our analyses, the average effect for initial training with follow-up support was statistically significant, whereas when no training or initial training with no follow-up support occurred, the average effects were nonsignificant. Examples of caregiver training from included studies involved a 30-minute presentation at an early childhood center parent night (Niklas et al., 2016), a brief phone call explaining the intervention materials and procedures (Flynn, 2021), and three at-home visits for the parent to practice activities with coaching support (Dulay et al., 2019). Examples of follow-up support included text message reminders three times per week (Leyva et al., 2018), researcher phone calls once per week (Libertus et al., 2020), and three classes involving a lesson and time for parent questions (Blanch, 2002).

Interventions with caregiver training and follow-up support can yield greater effects (i.e., be more influential to child learning). This is a promising finding for several reasons. First, training caregivers to implement nonintensive math interventions can be particularly meaningful for children who struggle and need additional practice opportunities to develop proficiency in math. In other words, ensuring that caregivers are provided training and support for learning in the home can contribute to minimizing achievement gaps in math that are prevalent before school entry (Burchinal et al., 2011) and can significantly decrease achievement differences related to SES (Galindo & Sonnenschein, 2015). More specifically, identifying the types of interventions and types of caregiver support needed to implement those interventions has the potential to minimize the differences in at-home support for all children. Second, the interventions typically required caregivers to attend an initial training session lasting between 30 min and 2 hours. This means that an extensive time commitment is not required by caregivers to have an impact on children’s math achievement. Finally, training can easily be controlled or manipulated as part of interventions in informal learning environments or as part of family engagement in community and school programs. For example, early childhood teachers or community leaders who offer caregiver training can do so with confidence that their efforts will likely have positive impacts on student learning. Because of the impacts on child learning, future intervention research can prioritize enhancing caregiver training and support opportunities, which can in turn increase fidelity of intervention activities. Additionally, meta-analysts may want to pursue future research focused on caregivers and other early childhood professionals who support young children’s math development in informal learning environments. 
In a meta-analysis focused on home literacy programs, the authors reported the same effects for programs that included a home visiting component compared to those programs that did not include home visiting (d = 0.18; van Steensel et al., 2011). Future research may consider further examining the effectiveness of training and follow-up support based on the specific type of support (e.g., home visiting, text messages) and quality of that support.

Effect of Intervention Outcome Measures

When considering the math content of the outcome measure, the results of the individual moderator analysis reported that comprehensive early numeracy outcomes (multiple early numeracy skills) yielded the only statistically significant effect. Although not to a statistically significant level, comprehensive early math measures (those measures that included more than just multiple numeracy skills, such as also including geometry and measurement concepts) and measures of non-numerical skills also yielded similarly large effects (Kraft, 2020). This was a surprising result because previous researchers have reported that comprehensive measures may not be sensitive enough to detect growth during an intervention (Nelson & McMaster, 2019b). Although not statistically significantly different, the results of this meta-analysis indicate that norm-referenced measures produced a larger effect than researcher-developed measures. This finding was also unexpected given that most researcher-developed measures are closely aligned to the interventions (as the measures are often developed for the purpose of measuring the impact of the intervention). Moreover, the results of school-based mathematics meta-analyses have indicated that researcher-developed measures typically produce larger effects (Gersten et al., 2009; Nelson & McMaster, 2019b). The positive impacts on math achievement broadly, and on measures that may be less sensitive to detecting growth in a short period of time, give confidence in the ability of nonintensive math interventions implemented in authentic settings to contribute to the overall growth of children’s math knowledge and skills.

Effect of Primary Intervention Location

To be included in this meta-analysis, any intervention study with a formal learning environment component (e.g., the classroom) and an informal component (e.g., the home) had to provide results in a manner that allowed us to isolate the effects of the intervention specific to the informal learning environment. It is important to acknowledge that although the interventions conducted in only the home environment yielded a larger effect (g = 0.30) than those conducted in the classroom and the home (g = 0.15), the effect of 0.15 (p = 0.18) represents the additive effect (i.e., value-add) of the informal learning environment. Although not statistically significant, this is practically significant. It may be important for researchers, practitioners, and parents to seriously consider the impact that all school-based interventions can have with a home intervention component. School-based math interventions are effective (Doabler et al., 2016; Powell & Fuchs, 2010), but perhaps home learning components that support caregivers with training represent a critical step in enhancing math outcomes. Better equipping families with efficient and effective methods for working with children at home increases the likelihood that all families can support their children in math prior to school entry.

Implications of the Quality of Study Reporting

As part of our ad hoc inclusion criteria, we excluded studies with methodology limitations that may have impacted the results of the current meta-analysis. The methodological and design decisions made by researchers can have a direct impact on the validity and accuracy of estimated effects. For example, pre/post designs with no control group are susceptible to confounding maturation effects; Lipsey and Wilson (1993) show that one-group pre/post ESs are on average nearly twice as large as comparisons with control groups. Biased effect estimates due to methodological decisions will ultimately bias average effects in meta-analysis (Borenstein et al., 2009). Methodological quality standards were enforced during the literature search process, so our quality review was specific to reporting quality (i.e., information that researchers provided in published reports of the intervention). High levels of study reporting quality are essential to enhance the generalizability, transferability, and replicability of intervention studies.

The results of this quality review (average QI rating of 0.58) were less favorable than the results of previous quality reviews of math interventions conducted in formal classroom settings (e.g., Jitendra et al., 2021; Nelson, Hunt, et al., 2022). Experts have suggested that study reporting quality may improve with the adoption of QIs across various fields (American Psychological Association, 2008). Therefore, the current quality review offers valuable insights and recommendations for future research considering that researchers had not yet conducted an evaluation of study reporting quality with interventions conducted in informal learning environments. The current review of study reporting quality found a promising result that studies published recently within the last 3 years have significantly improved in overall reporting compared to earlier publications.

Quality of Reporting Participant Information

An aspect of reporting participant information is comparability of participants across conditions. Researchers frequently reported the use of random assignment and overall attrition, which are key aspects for evaluating the internal validity of a finding. Random assignment is important because the participants are more likely to be equal at baseline on confounders and therefore provide an unconfounded estimate of effect, and having no attrition eliminates the possibility that the observed effect was due to the final sample containing only those participants that benefited most from the intervention (WWC, 2017). Studies less often met the desirable QI of reporting attrition across conditions beyond just reporting overall attrition.

Across studies, a relative strength of quality was authors’ reporting of the interventionist and their highest level of education, though not in a manner that allowed us to examine the highest level of education as a moderator of treatment effects. Providing information about interventionists allows the reader to determine whether intervention effects may have been impacted by differences between interventionists (Gersten et al., 2005). Although the results of the current study indicated that all interventionists were the child’s parent, future intervention researchers may choose to examine the role of other types of caregivers. Therefore, we recommend that future researchers continue to provide detailed information about interventionists. Future research syntheses may examine other demographic variables that we did not consider, such as gender and linguistic diversity.

Although studies frequently reported child demographics, including age, gender, race or ethnicity, and SES, studies less often reported whether children had a diagnosed disability or whether they were identified as DLLs. Previous research indicates that children from low-SES backgrounds may be at a greater risk of experiencing low math achievement (Galindo & Sonnenschein, 2015); thus, the fact that so many authors presented SES information was expected. However, achievement trends also point to lower performance by children who are DLLs (NCES, 2019). A previous synthesis on school-based early math interventions reported smaller intervention ESs for treatment groups that had a larger proportion of participants identified as DLLs (Nelson & McMaster, 2019a). It is important for readers to understand how the results of included interventions transfer to other populations, including children with diverse learning needs. Moreover, it is critical that meta-analysts have access to full participant demographics so that characteristics such as SES may be examined as moderators.

A limitation of the research base on early math interventions conducted in informal learning environments is the lack of representation of children with disabilities. When studies reported disability information, it was to inform readers that children with disabilities were excluded from the intervention (e.g., de Chambrier et al., 2019; Starkey & Klein, 2000). This was surprising because research consistently reports on the early achievement gaps that exist for children with or at-risk-of disabilities (Burchinal et al., 2011; Chu et al., 2019). Given the positive results of the current meta-analysis, informal learning environments may be a fruitful opportunity to enhance math outcomes for children who struggle with math. An extensive literature base reports on the effectiveness of school-based preschool to third-grade math interventions for students with disabilities or math difficulty (Doabler et al., 2016; Dyson et al., 2015; Fuchs et al., 2009; Powell & Fuchs, 2010; Siegler & Ramani, 2009). Yet, achievement gaps in math for many students, especially those who begin school below the 10th percentile (Morgan et al., 2009), remain or widen throughout elementary school (Nelson & Powell, 2018). Training caregivers to implement nonintensive interventions can give children who struggle with math the additional learning and practice opportunities they require to become proficient. Thus, we urge researchers to include or specifically target children with or at-risk-of disabilities in their informal learning environment research. Researchers can also report findings separately for these children to build a greater understanding of what works, for whom, and under what conditions.

Quality of Reporting Intervention and Comparison Conditions

Studies need to present detailed information about the implementation of the intervention and the nature of the comparison condition (Gersten et al., 2005) to allow study replication with different populations. Replication is an essential component of the research cycle, especially when research in this field is still emerging (Makel & Plucker, 2014). Presenting this information also allows practitioners to bridge research to practice. Although we do not expect caregivers to access research studies to identify math activities to implement with children in the home, we expect trainers and organizations to access the information to determine best practices for supporting caregivers. Studies presented high-quality information related to interventionist training, recommended intervention dosage, and included math content and activities, similar to quality reviews of school-based math interventions (Jitendra et al., 2016).

An area of growth for this literature base is for authors to increase reporting related to the comparison condition and fidelity of implementation. Studies reported the math content and activities used in the comparison condition, as well as the instructional time and nature of instruction in the comparison, less than half of the time. Previous researchers have noted similar limitations in the reporting of fidelity of implementation (Jitendra et al., 2016; Liu et al., 2019). Information about the comparison condition is important in intervention research (including in meta-analyses of interventions) because the magnitude of intervention effects may be influenced by the instruction provided in the comparison condition. Future researchers may consider collecting and reporting more detailed information about comparison conditions. Implementation fidelity is important from both a research and a practical standpoint. If interventions are found to be ineffective or to produce only negligible effects, researchers need to determine whether the intervention was, in fact, not effective and requires modification, or whether the intervention was simply not implemented as prescribed. Low fidelity of implementation can negatively influence expected treatment results (Hill & Erickson, 2019). Future researchers may review how studies measured fidelity of implementation in informal learning environments and determine whether there are best practices for accurately capturing this information. Understanding more about how caregivers adhere to recommendations for interventions may reveal important barriers to fidelity and offer suggestions for improving the feasibility of interventions.

Quality of Reporting Outcome Measures

The effects reported from a meta-analysis of interventions rely on information derived from the outcome measures; thus, authors’ reporting of this information is important for conducting a high-quality review of the literature. Overall, studies met few QIs in this category. The essential QI of immediate posttest administration was met only 32% of the time, less often than in reviews of school-based interventions (Jitendra et al., 2016; Lafay et al., 2019). However, it should be noted that all but two of the studies that did not meet this criterion simply did not report the posttest administration time. We encourage researchers to clearly report the time between the end of the intervention and the posttest administration so that readers are confident that the effects of the intervention are not due to competing hypotheses (Gersten et al., 2005).

We also promote delayed posttest data collection (or maintenance tests) so researchers can report on the long-term effects of interventions. Previous research on school-based interventions indicates that the effects of interventions fade beyond the prescribed intervention period (Bailey et al., 2020; Clements et al., 2013). It will be beneficial for researchers and practitioners to understand if fadeout effects also exist with interventions conducted in informal environments, and subsequently, researchers may consider investigating what level of ongoing support caregivers may benefit from to maintain intervention effects.

Given the focus of this meta-analysis on informal learning environments, we recorded when studies administered nonachievement measures that captured the effect of the intervention on other aspects that may also be important to consider, such as the HME (e.g., caregiver reported scales, frequency counts of activities), child or caregiver attitude or motivation related to math, and “math talk.” It was a positive finding that more than half of the studies included measures to capture the effect of the intervention on other aspects beyond achievement, especially given the evidence base that demonstrates the relationship between the HME and children’s math achievement (Daucourt et al., 2021) and caregivers’ beliefs about math (Sonnenschein et al., 2012). We recommend future researchers continue including nonachievement measures to capture the all-encompassing effects of the intervention.

Other desirable indicators that researchers may report include reliability and validity information for the outcome measures. Our quality review results indicate that reporting the reliability and validity of measures is an area for improvement, similar to previous reviews of school-based math interventions (Park & Nelson, 2022). It is important for readers to have this information because tests with low reliability or validity that are used to determine the effectiveness of an intervention represent a threat to the internal validity of the study. Moreover, when researchers use unreliable assessments, the validity of the interpretations of the results of the intervention is questionable (Weathington et al., 2010).

Quality of Reporting Data Analysis and Results

The quality of reporting related to the data analysis plan and results was a strength of the included studies, which met 83% of the QIs in this category. Previous reviews of math interventions in school contexts reported inconsistent results for this category of QIs, with some studies reporting high scores (e.g., Jitendra et al., 2015) and others reporting low scores (e.g., Jitendra et al., 2016). One area for improvement in future research is for intervention studies to report ESs, as researchers and practitioners may consider the reported ES in selecting the interventions that are likely to yield the greatest gains for children and families.

Limitations

As with any study, this meta-analysis has limitations. First, our results are limited because only 25 studies (with 83 ESs) met inclusion criteria. Although this reflects a developing area of study, our power to detect meaningful effects, particularly in meta-regressions with many predictors and interactions, was likely to be low. We also had strict inclusion criteria in order to conduct a meta-analysis; there are math intervention studies conducted in informal learning environments that were not represented in this meta-analysis (e.g., no math achievement measure). Second, the results of this meta-analysis are limited in their generalizability to different populations of students. Readers should take caution in generalizing results to older children, as the majority of studies in this meta-analysis included preschool-aged children. Moreover, the results of this meta-analysis may not generalize to children with disabilities given that none of the included studies reported including children with or at-risk-of disabilities. Also, few studies reported inclusion of children identified as DLLs. Furthermore, a common problem in meta-analysis research is missing information regarding participant demographics (Nelson, Park, et al., 2022); therefore, we were unable to conduct moderator analyses related to participant demographics (other than age). We encourage authors of future intervention studies to provide complete demographic information about the included sample so that future meta-analyses may investigate demographics as a moderator. Third, we focused this meta-analysis on achievement outcomes. Future researchers may consider investigating other, distinct outcomes such as “math talk,” literacy outcomes, or the frequency of activities conducted in the HME with separate analyses (Pigott & Polanin, 2020). Finally, the majority of the studies were conducted solely or partially in the home learning environment. Researchers have published math intervention studies conducted in other informal learning environments such as museums (e.g., Zippert et al., 2019) and grocery stores (e.g., Hanner et al., 2019); however, these studies did not meet inclusion criteria for a variety of reasons. The results of this meta-analysis may only generalize to interventions conducted, at least in part, in the home.

Conclusion

The results of this meta-analysis and quality review of early math interventions conducted in informal learning environments (viz., the home) broadly impact various education stakeholders by contributing to the evolution of this field, specifically to fundamental understandings of how caregivers can support children’s math learning. The results of this meta-analysis indicate a positive, statistically significant average effect on children’s math achievement outcomes (g = 0.26). We offer several insights for future researchers and practitioners as they continue to identify best practices for supporting children’s math learning with minimal training and cost. Regardless of the specific math content (e.g., counting, shape recognition) or activities (e.g., iPad apps, children’s books), the essential component of the intervention that researchers and practitioners should concentrate on is the degree of caregiver training and follow-up support. As previously explained, this training and follow-up support does not require extensive time or resources, which makes the potential benefits of equipping caregivers to support children broadly accessible. The results of this study also offer encouraging findings related to the improvement of the quality of research reporting in recent years, which is promising for the generalizability, transferability, and replicability of research in this area. Important next steps in this line of research include expanding the knowledge base to include diverse populations of students and enhancing the level of reporting in studies as recommended by study quality guidelines.

Supplemental Material

sj-docx-1-rer-10.3102_00346543231156182 – Supplemental material for A Meta-Analysis and Quality Review of Mathematics Interventions Conducted in Informal Learning Environments with Caregivers and Children

Supplemental material, sj-docx-1-rer-10.3102_00346543231156182 for A Meta-Analysis and Quality Review of Mathematics Interventions Conducted in Informal Learning Environments with Caregivers and Children by Gena Nelson, Hannah Carter, Peter Boedeker, Emma Knowles, Claire Buckmiller and Jessica Eames in Review of Educational Research

sj-docx-2-rer-10.3102_00346543231156182 – Supplemental material for A Meta-Analysis and Quality Review of Mathematics Interventions Conducted in Informal Learning Environments with Caregivers and Children

Supplemental material, sj-docx-2-rer-10.3102_00346543231156182 for A Meta-Analysis and Quality Review of Mathematics Interventions Conducted in Informal Learning Environments with Caregivers and Children by Gena Nelson, Hannah Carter, Peter Boedeker, Emma Knowles, Claire Buckmiller and Jessica Eames in Review of Educational Research

sj-docx-3-rer-10.3102_00346543231156182 – Supplemental material for A Meta-Analysis and Quality Review of Mathematics Interventions Conducted in Informal Learning Environments with Caregivers and Children