Abstract

In this essay, we explored the feasibility of utilizing artificial intelligence (AI) for qualitative data analysis in equity-focused research. Specifically, we compare thematic analyses of interview transcripts conducted by human coders with those performed by GPT-3 using a zero-shot chain-of-thought prompting strategy. Our results suggest that the AI model, when provided with suitable prompts, can proficiently perform thematic analysis, demonstrating considerable comparability with human coders. Despite potential biases inherent in its training data, the model was able to analyze and interpret the data through social justice perspectives. We discuss the applications of integrating AI into qualitative research, provide code snippets illustrating the use of GPT models, and highlight unresolved questions to encourage further dialogue in the field.

Keywords

As the era of artificial intelligence (AI) continues to unfold, the remarkable advancements in large language models (LLMs) have captivated the research and public community, with ChatGPT emerging as one of the hottest topics in contemporary discourse (OpenAI, 2022). Amid this technological revolution, qualitative research remains a vital methodological tool for uncovering people’s lived experiences and illuminating the need for social justice reform (Creswell & Creswell, 2017). This approach, characterized by its interpretive and naturalistic features, allows scholars to delve into and better understand complex phenomena, such as how social inequities play out in everyday life (Paris & Winn, 2014). However, the constraints inherent in qualitative research, such as the labor-intensive and time-consuming nature of data analysis, have led to scholarly inquiries into harnessing the potential of AI to facilitate the qualitative data analysis process.

In this essay, we explore the feasibility and comparability of employing AI for the analysis of qualitative interview data in equity-focused research. We used the generative pretrained Transformer 3 (GPT-3), a Transformer-based LLM, to perform thematic analysis of transcripts from in-depth interviews with first-year graduate students of color regarding their experiences during the COVID-19 pandemic. Although newer models, such as GPT-4o and GPT-o1, have emerged, GPT-3 remains the only version for which OpenAI has released detailed technical information as of October 2024 (Brown et al., 2020; OpenAI, 2023b). Understanding how GPT-3 operates not only sheds light on the mechanics of LLMs but also equips researchers with transferable knowledge and skills to interact with more advanced yet less transparent models. Because all current LLMs, including the latest versions, are based on the Transformer architecture, the methods and considerations we propose in this essay are broadly applicable across different models.

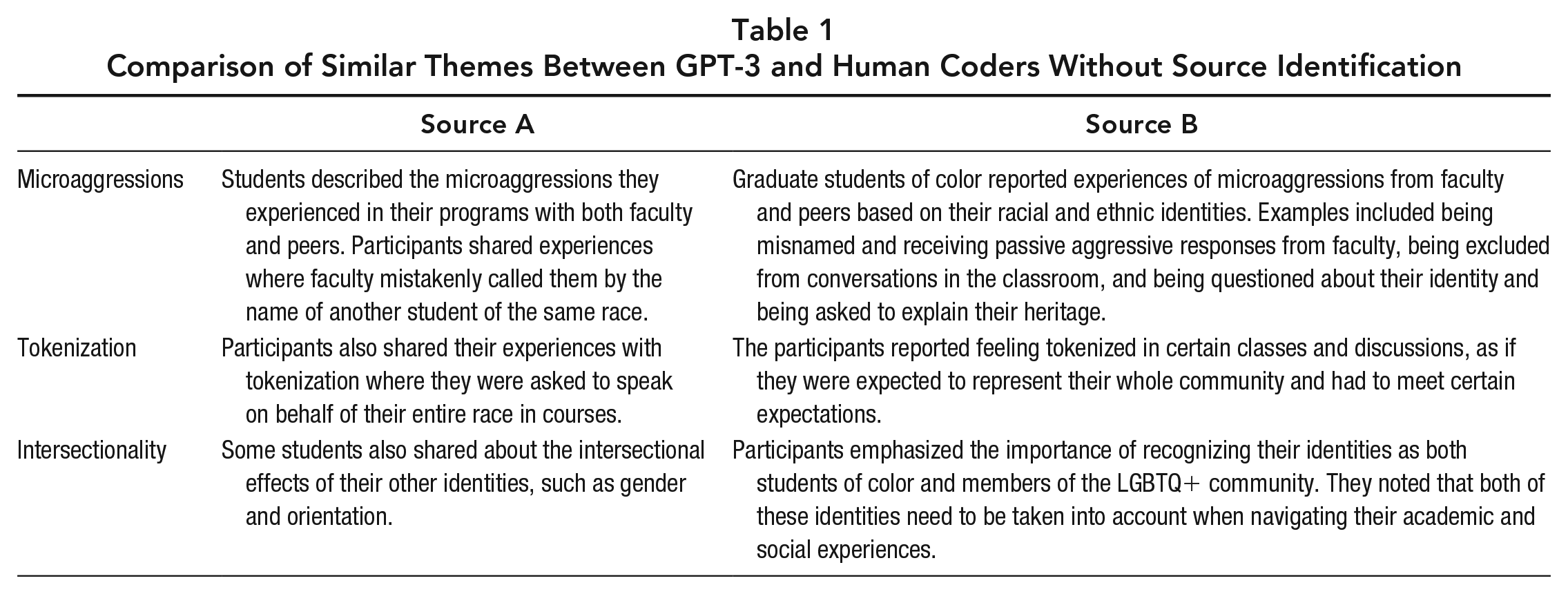

The interview transcripts were previously coded by human coders. To draw comparisons, GPT-3 was instructed to thematically analyze the same transcripts without prior knowledge of the earlier analysis process or results. Additionally, we invite readers to participate in the evaluation process that involves examining a table with parallel listings of AI and human coding results without identifying the source, encouraging readers to guess the origins of each coding. We begin with an introduction of LLMs and their capabilities and limitations, followed by an explanation of the procedures for using GPT-3 to analyze qualitative interviews, and the presentation of the outcomes. For researchers interested in applying these methods, we include code snippets that illustrate the process of using GPT models for qualitative analysis. Lastly, we discuss the potential implications of incorporating AI into qualitative research and its applications for equity-focused research.

What Are LLMs?

The integration of natural language processing (NLP) into social science research continues to garner scholarly attention (Isoaho et al., 2021). NLP is a discipline of research that focuses on the interaction between computers and humans through natural language, aiming to enable computers to understand, interpret, and generate human language in a meaningful way (Chowdhury, 2020). In recent years, the field of NLP has seen significant evolution, transitioning from a primary focus on analyzing word frequency to advancing the comprehension of word meanings through techniques such as word embeddings (Grimmer et al., 2022; Mikolov et al., 2013). Word embeddings map words into numerical vectors (sets of numbers) that capture the meanings of words based on their context and relationships with other words, enhancing how machines can interpret and process language (Mikolov et al., 2013).

Recent advancements in language models (LMs), particularly those built on Transformer architectures (Vaswani et al., 2017), have revolutionized the capacity of machines to process and generate natural language. LMs are algorithms designed to predict the next word in a sequence based on the words that come before it (Bengio et al., 2000). Traditional language models, such as n-grams, consider only a fixed number of previous words and are limited in capturing long-range dependencies and understanding the broader context of a sentence or paragraph (Jelinek, 1998). In contrast, LLMs are advanced LMs trained on vast amounts of text data with a significant number of parameters using deep learning architectures, such as Transformers. Transformers are neural networks that handle long sequences of text by focusing on important parts of the input through a mechanism called “attention,” allowing them to capture the context of words better than previous models (Vaswani et al., 2017). This architecture enables LLMs to learn complex patterns and structures in language and generate coherent and contextually accurate text across a wide range of tasks (Devlin et al., 2018). Although smaller scale LMs have been leveraged in diverse NLP tasks, including text classification and topic modeling (Devlin et al., 2018; Grootendorst, 2022; Sun et al., 2019), their deployment often requires task-specific model fine-tuning. This process demands substantial computational resources and expertise, which may limit the widespread application of LMs in social science research.

GPT-3, developed and released in June 2020 by OpenAI, stands as a landmark in the field of LLMs and exemplifies the scaling of Transformer architectures that form the foundation for more recent models. It is designed to predict the probabilities of the next word or sequence of words based on contextual information (Brown et al., 2020). With an unprecedented 175 billion parameters, GPT-3 pushes the limits of natural language understanding and generation. These parameters capture complex relationships and interactions within the data, allowing the model to represent language patterns in a highly sophisticated way (Brown et al., 2020). This advanced capability was achieved by extensively training GPT-3 on a vast and diverse corpus, including Common Crawl, WebText2, Books1, Books2, and English-language Wikipedia (Brown et al., 2020). The scaling of both model size and training data allows GPT-3 to perform a wide range of tasks without task-specific fine-tuning, marking a significant advancement in the field.

Since its introduction, GPT-3 has attracted significant scholarly attention across diverse fields and has demonstrated remarkable proficiency in executing a variety of tasks, often approaching or achieving human-level performance (Brown et al., 2020). For instance, researchers have found that GPT-3 excels in writing tasks that a typical undergraduate student would find challenging (Elkins & Chun, 2020). GPT-3 also demonstrates the ability to effectively tackle tasks commonly employed in psychology to evaluate human responses to real-world social situations, exhibiting performance equal to or surpassing that of human subjects (Binz & Schulz, 2023).

In recent years, the field of LLMs has experienced rapid evolution, with new model versions emerging in shorter intervals than before. However, despite these swift technological advancements, developers have become less inclined to release detailed model information due to increasing competition and concerns over safety and misuse (OpenAI, 2023b). Although the specific advancements associated with various newer models are largely undisclosed, there are several technical developments in the NLP field that have likely informed the newer models after GPT-3.

One significant advancement is reinforcement learning from human feedback, a technique where human feedback is used to fine-tune a model’s responses to better align with human values and preferences, which has been used in GPT-3.5 and GPT-4 (Ouyang et al., 2022). Other advancements include multimodal models that can process and generate multiple forms of data, such as text and images (OpenAI, 2023b); self-supervised learning techniques, in which models generate additional training data to enhance the training process (Xie et al., 2020); and mixture-of-experts models, in which multiple specialized “expert” networks handle specific parts of a task, enabling large-scale models to process information efficiently by focusing on specialized components (Fedus et al., 2022). Although these models generally perform better, studies have indicated that although more recent models are able to generate more human-like text, this can sometimes come at the cost of compromised performance on certain tasks (Chen et al., 2023; Ye et al., 2023).

Previous studies suggest that LLMs demonstrate multitasking abilities without model fine-tuning (Radford et al., 2019). However, achieving accurate multitask performance with LLMs requires appropriate prompting. Researchers have investigated various prompting strategies, such as few-shot prompts that provide the model with a few task demonstrations to facilitate learning within specific contexts (Brown et al., 2020) and chain-of-thought (CoT; Wei et al., 2022), a prompting strategy that provides the model with intermediate reasoning steps, thereby simplifying the process of task comprehension and completion. For specific examples of each prompting strategy, see the supplementary material available on the journal website.

Although LLMs demonstrate impressive capabilities, there are concerns regarding their potential limitations in qualitative data analysis. For example, previous research indicates that LLMs’ performance exhibits limitations in tasks requiring intricate reasoning steps, such as tasks that necessitate deep logical deductions or the understanding of nuanced implications (Shinn et al., 2023). Given the intricate logical reasoning and subjective interpretation involved in qualitative data analysis, it remains uncertain whether GPT-3 could effectively carry out this demanding task.

Moreover, because LLMs are typically trained on large corpora of human-generated text, they inevitably inherit biases deeply ingrained within human society, encompassing racial, gender, and religious prejudices (Bhardwaj et al., 2020; Brown et al., 2020). For instance, in an experimental setting where GPT-3 was prompted to describe racial groups, the resulting text tended to associate the “Black” group with lower sentiment words compared to other racial groups (Brown et al., 2020). Analogous biases were observed for gender and religious groups as well (Floridi & Chiriatti, 2020). Considering our objective to employ GPT-3 in analyzing data for equity-focused research, it remains uncertain how these inherent biases would impact GPT-3’s analysis and whether GPT-3 could interpret the text from an equity perspective.

Qualitative data analysis software, such as Nvivo and Dedoose, has been in use since the late 1980s (Creswell & Poth, 2018). These tools primarily support the mechanistic and management facets of qualitative analysis, and the intricate analytical thinking is still done by human researchers (Marshall & Rossman, 2015). LLMs, in contrast, with their remarkable natural language understanding and generation capabilities, have the potential to be leveraged for tasks such as theme identification or preliminary interpretations. Furthermore, although applications of GPT models have been demonstrated in the realm of quantitative data analysis (e.g., Code Interpreter; OpenAI, 2023a), with their performance anecdotally comparable to junior data analysts, their deployment in the field of qualitative research remains underexplored.

Recent initiatives have begun to examine the integration of NLP technologies in qualitative data analysis, although these endeavors often require substantial data preprocessing. For instance, Gamieldien et al. (2023) implemented NLP cluster analysis to categorize initial text data into clusters based on meaning similarities, subsequently using GPT-3.5 to assign thematic labels to these groups. Hamilton et al. (2023) manually identified key quotations and then used ChatGPT to identify themes in these statements. However, these data preprocessing steps might simplify the task for the model, potentially impacting the complexity of the analysis. Moreover, Siiman et al. (2023) explored the use of ChatGPT in both deductive and inductive coding by interacting with ChatGPT in a question-and-answer format to facilitate the data analysis process. These studies have all conducted comparisons between LLMs and human performances, arguing for the comparative efficacy of LLMs alongside human analysts, indicating LLMs’ potential in qualitative data analysis.

The current study aims to investigate GPT-3’s capacity to perform thematic analysis of interview data concerning the experiences of students of color during their first year of graduate studies amid the COVID-19 pandemic. We chose this task over deductive tasks, such as text classification, or inductive tasks, such as topic modeling, because these latter approaches fundamentally rely on dimensionality reduction of text data (Grootendorst, 2022), thus requiring less interpretative depth and nuanced understanding of the data’s contextual and thematic complexities from the model. Thematic analysis, in contrast, requires more complex natural language understanding and generation tasks, such as interpreting metaphors, analyzing arguments, or identifying the sentiment and intentions behind the text. In our study, we used natural language to prompt GPT-3 to conduct thematic analysis without model fine-tuning or data preprocessing. The specific questions we aimed to address are as follows:

Research Question 1: How effectively can GPT-3 identify and interpret themes in qualitative interview data when supplied with suitable prompts?

Research Question 2: To what extent can GPT-3 interpret the equity-focused interview data from a social justice perspective?

Research Question 3: How does GPT-3’s analysis of qualitative data compare to the analysis conducted by human coders?

Data Analysis Using GPT-3

Data Sources

The interview data employed in this analysis were derived from a broader investigation that explored the experiences of first-year graduate students of color during the COVID-19 pandemic. Current research has been lacking in terms of understanding the crucial first-year experiences in graduate education, particularly when it comes to students of color (Benjamin et al., 2017). The aim of the larger study was to understand both the challenges faced and the resilience demonstrated by these students, highlighting their inherent value and potential from an asset-based perspective (Ladson-Billings, 2009). This data set was chosen for its direct relevance to equity-related concerns, capturing narratives from students of varied racial and ethnic identities. Such diversity is crucial for evaluating GPT-3’s capability in processing complex and nuanced narratives.

In the larger study, researchers conducted one-on-one semistructured interviews lasting between 60 minutes and 90 minutes. Participants included individuals from diverse racial and ethnic backgrounds, including Latinx, Asian American Pacific Islander, African American, and Indigenous. Members of the research team coded and analyzed the interview data guided by critical race theory (CRT), a theoretical perspective that posits race as a socially constructed concept that is used to perpetuate racial inequalities in society (Ladson-Billings & Tate, 1995). The central feature of CRT is that racism is a normative part of life for people of color, which offers a racial equity foundation to analyze the data.

Following Creswell and Poth’s (2018) process, two members of the research team conducted a thematic coding of the data. First, they identified “narrow units of analysis” in the form of quotes that described participants’ usage of some of the tenets of CRT (Creswell & Poth, 2018). This horizontalization of data treated each piece of evidence as equally significant, facilitating an authentic representation of the participants’ experiences (Creswell & Poth, 2018). Subsequently, they used those quotes to develop “broader units,” or themes, to understand the phenomenon under examination (Creswell & Poth, 2018). Finally, those quotes and themes were used to write detailed descriptions that captured the essence of the phenomenon (Creswell & Poth, 2018). This included showcasing what the participants experienced and how they experienced the phenomenon in relation to its conditions, situations, and contexts.

Analytic memos were written after each interview to document initial thoughts and questions. The two researchers first analyzed the data independently. Through regular meetings, they compared coding, discussed and resolved discrepancies, and developed a preliminary codebook. The codebook comprised five codes related to students’ racial experiences, and no child codes were used (Table 3). Using this codebook, they performed deductive coding and achieved an interrater reliability of .80 (Brennan & Prediger, 1981). The codebook was iteratively refined throughout the study, all within the MAXQDA data analysis software.

The utilization of GPT-3 incurs costs based on the length of the document. It is important to note that the current investigation is not intended to be a comprehensive study but, rather, a preliminary exploration. Given the exploratory nature of this study, the analysis was limited to the participants’ responses to one interview question. This question, which probed the influence of race on the experiences of first-year graduate students of color (i.e., “How has race affected your experience as a first-year Master’s/Ph.D. student?”), represents a primary focus of the investigation.

Ten participants’ responses to this interview question were extracted from the original transcripts. The transcripts were highly colloquial, featuring frequent stuttering and false starts, thereby adding complexity to the data analysis task for GPT-3. The following is an excerpt from the transcript: So I’m used to that throughout my life, and I think except with family, except with a couple friends that like if I’m going to work if I’m, I was in undergrad when I was in high school, like there’s some, but it’s not that many answers is something that we used to I think, I think a class at [University Name] where five out of eight people were Latino, three were Guatemalan, so they, I don’t know, and it was really interesting.

Analysis Results

Prior to delving into the analysis process, we want to present the results conducted by human coders and GPT-3. Table 1 delineates the juxtaposition of similar findings by the two sources. Notably, whether the source is from human coders or GPT-3 is deliberately withheld from the main text. We invite readers to independently identify the sources of the text and then refer to the comparison section to verify their conclusions.

Comparison of Similar Themes Between GPT-3 and Human Coders Without Source Identification

Analysis Process

GPT-3’s analysis process comprises two sequential phases: prompt testing and data set analysis. To optimize GPT-3’s performance, we used a zero-shot CoT prompting strategy (Kojima et al., 2022). This strategy breaks down complex tasks into intermediate reasoning steps, guiding GPT-3 through each phase of analysis. In a zero-shot setting, GPT-3 performs the analysis without any task-specific fine-tuning or example demonstrations, relying solely on general instructions provided in the prompt. The CoT approach, which can be as minimal as a phrase such as “let’s think step by step” (Kojima et al., 2022) or as detailed as a sequence of specified steps, helps structure the prompt in a way that supports logical, step-by-step reasoning. This combination allows GPT-3 to carry out in-depth analysis on complex data sets without prior specialized training. Additionally, we used Davinci, the most advanced model offered by GPT-3, to further enhance our analysis.

The prompt testing phase was executed via the GPT-3 Playground, designed to facilitate user-friendly interaction with the model. The first participant’s responses were used during the prompt testing phase. Throughout the testing phase, considerable focus was directed toward refining the prompts to elicit precise and comprehensive responses from GPT-3.

Altogether, we had five trials (see Figure 1). In Trial A, a basic prompt led to a direct theme extraction, albeit lacking depth and detailed context. Notably, we did not observe initial biases in GPT-3’s generated contents even without equity-focused prompts. Considering GPT-3’s design to generate relevant text based on the input and the prompt, this result is not entirely surprising. Extracting themes from qualitative data, especially when the data are explicitly about racialized experiences, might inherently steer the model toward a more neutral or “on-topic” response. In Trial B, we used the zero-shot-CoT prompting strategy to direct GPT-3 to adhere to specific procedures. This resulted in themes that were richer and provided context, including instances such as incorrect name calling. Trial C integrated CRT into GPT-3’s analytical framework. With CRT as a guide, the model’s thematic extraction incorporated racial nuances, highlighting, for instance, that the incorrect name calling was from a White faculty member. For Trial D, we endowed GPT-3 with a professional identity, prompting it to operate as a professor specializing in racial equity. This role-play enhanced GPT-3’s output in terms of detail and precision, revealing specifics such as faculty members confusing students’ racial identities.

Inputs and outputs of GPT-3 with varied prompts.

In Trial E, we integrated qualitative analysis procedures from Merriam and Tisdell’s (2015) qualitative research textbook into the prompt and applied this prompt to the larger data set. The prompt was structured with an explicit research question, participant specifics, data source, and a structured five-step theme extraction procedure inspired by Merriam and Tisdell (2015). The analysis provided a broader perspective on microaggressions experienced by graduate students of color, encompassing diverse incidents, from misnaming to conversation exclusions to questioning about identity, and supporting them with direct quotes. This progression underscores the considerable effect of methodologically sound prompts on GPT-3’s capabilities in thematic analysis. This prompt enabled GPT-3 to produce results that were in line with our research aims (Grimmer et al., 2022).

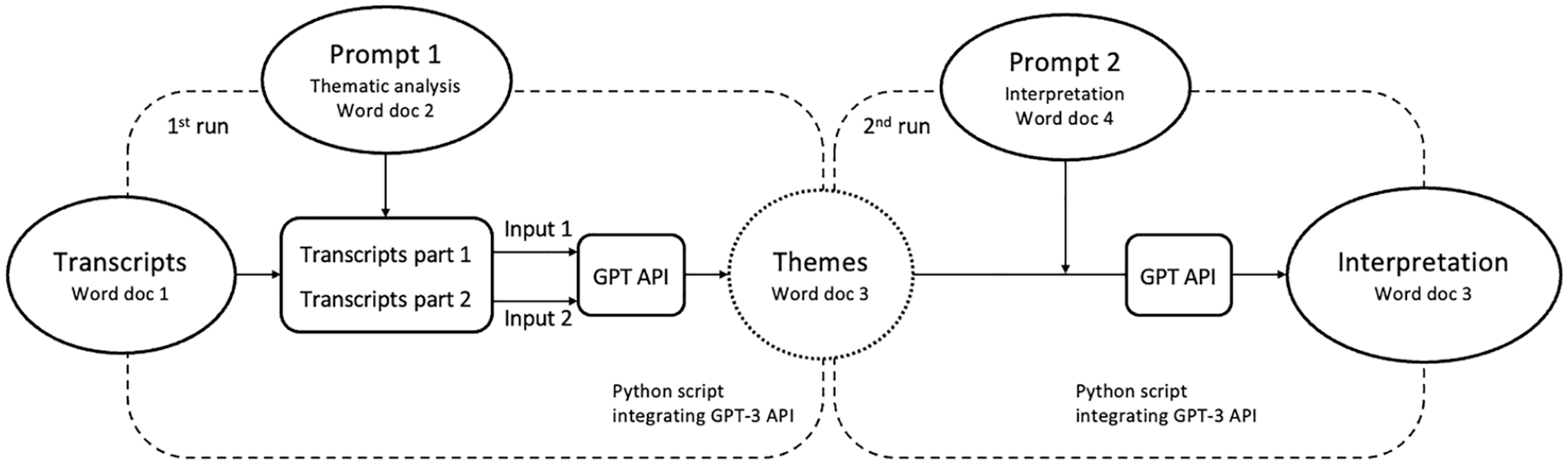

To analyze the larger data set, we developed a Python script to integrate GPT-3 Application Programming Interface (API). GPT API is an interface that allows developers to incorporate GPT models into their own applications. By using the API, we can efficiently process vast amounts of data with customized prompts, maintain greater control over model updates, and ensure data privacy and security. At the time of this analysis, the cumulative inputs and outputs of a single GPT-3 utilization were limited to approximately 3,000 words. Consequently, our transcript, which contained more than 4,000 words, was divided into two parts for analysis.

To prepare the data for analysis, we saved the transcripts and the validated prompts into two distinct Word documents (see Figure 2). Upon executing the Python script, it first calculated the total word count in the transcript document and then identified a suitable paragraph number to divide the text roughly in half. After splitting, the script merged the prompt with the first and second halves of the transcript, creating two separate inputs. These inputs were then processed by the GPT API, which generated themes that were subsequently stored in a new Word document. The extracted themes were then manually scrutinized, with similar themes combined due to the transcript being analyzed in two portions.

GPT-3 data analysis workflow.

It is worth noting that with each execution of the script, themes extracted by GPT-3 exhibit slight variations, characterized by recurrent themes and novel themes exclusive to a particular run. Such variations are not entirely surprising in unsupervised tasks in NLP because there is hardly only one correct way to read text (Grimmer et al., 2022). Furthermore, upon reflecting on the human analysis process, it became evident that this process is far from linear. Particularly during the initial stages, each examination of the transcript may reveal emergent themes.

Following the extraction of themes, we reran the script with a new prompt to guide GPT-3 in interpreting the identified themes through the lens of CRT. For this phase, the input comprised the previously generated themes combined with the new prompt, and the output was GPT-3’s interpretation of the identified themes through the lens of CRT.

Comparisons Between Human and GPT-3 Analysis

Altogether, GPT-3 identified six themes from the data, including microaggressions, implicit racial bias, intersectionality, tokenization, pressure to perform, and lack of representation. It supported these themes with relevant examples and quotes (see Table 2). Despite not having explicit instructions about CRT, GPT-3 managed to use racialized terms, such as “tokenization,” underscoring its ability to handle race-related topics. GPT-3 also generated a rational interpretation through the lens of CRT based on the themes it derived (see Table 2). In the interpretation, GPT-3 demonstrated a deep understanding of CRT themes despite the potential biases it possesses due to its training data, which may not adequately represent the lived experiences and perspectives of marginalized communities. Its ability to contemplate racial justice perspectives demonstrates its potential for use in studies focused on equity.

Themes, Example Quotes, and Interpretation by GPT-3

Human coders discerned five themes that were related to students’ racial experiences, including microaggressions, intersectionality, tokenization, racial tension, and racial stereotypes. These themes and their representative quotes can be found in Table 3.

Themes and Example Quotes by Human Coders

Comparing the human and GPT-3’s themes revealed significant congruity, with three themes—microaggressions, tokenization, and intersectionality—captured accurately by GPT-3. Although labeled differently, both the themes pressure to perform by GPT-3 and racial tension by human coders conveyed a similar underlying sentiment of burdens related to racial identities. Interestingly, GPT-3’s (Source B in Table 1) descriptions and examples closely matched those of the human coders despite not having access to human coding or writing. An instance of this is the microaggressions theme, where both human coders and GPT-3 identified instances of name calling to validate their analyses.

Nonetheless, certain limitations were observed in GPT-3’s analysis. For instance, some of the example quotes selected by GPT-3 did not fully represent their respective themes. Specifically, the quote chosen to represent intersectionality did not align completely with the theme (Table 2). This quote primarily focused on the participant’s experience with microaggressions rather than addressing the interconnected nature of multiple social identities. A more representative quote can be found in the human codebook, which more accurately illustrated the coexistence of the participant’s queer and racial identities (Table 3). Occasionally, GPT-3’s analysis tended to overweight certain participants’ opinions, such as the prevalence of microaggressions, even when one student reported not being sure about the racial influence on their graduate experience. Although these issues were identified, it is worth noting that such limitations are not exclusive to AI and can also occur in human qualitative analyses. Addressing these limitations could potentially be achieved by employing more precise and carefully constructed prompts.

Upon reflecting with the human coders about their own coding and GPT-3’s, consensus was reached that both the human coders and GPT-3 have captured important themes from the transcripts, albeit with certain omissions. For example, human coders discerned the theme associated with racial stereotyping, which was missed by GPT-3 but is salient in describing students’ experiences. Conversely, GPT-3 identified subtle themes that human coders overlooked, such as the lack of representation theme, which highlights feelings of isolation and insufficient support due to the underrepresentation of students of color in academic environments. Such observations underscore how AI can serve as a valuable supplement to human coding, ensuring a more comprehensive and sophisticated analysis of qualitative data.

Evaluating the quality of thematic coding could be challenging, primarily because there is seldom a single “correct” way to interpret text (Grimmer et al., 2022). As proposed by Grimmer et al. (2022), hand coding is the most straightforward validation approach for this kind of unsupervised learning. If the model can replicate phenomena of interest identified by human coders, the model is deemed validated. In congruence with this rationale, we compared the results produced by GPT-3 with those generated by human coders and ascertained that the salient themes of interest have been identified by GPT-3.

We also implemented an informal Turing-style test, a traditional measure of machine intelligence, to assess GPT-3’s performance (Grimmer et al., 2022; Rodriguez & Spirling, 2022). The core principle of the Turing test is that an inability to distinguish between human and machine outputs signifies a certain level of machine competency (Turing, 1950). If reviewers could not differentiate between GPT-3’s coding and human coding, the model is deemed to have achieved human-level performance. The reviewers, consisting of professors and doctoral students in the education field, were unable to discern the analysis results generated by human coders from those produced by GPT-3. The inability of the reviewers to differentiate the origins of the analysis serves as an indicator of GPT-3’s human-comparable performance. This implies that a researcher employing GPT-3 for this analysis can expect outcomes similar to what a human coder might produce.

It is worth mentioning that GPT-3 used less than 1 minute to accomplish the entire task, from processing the transcript to finalizing the interpretation, and cost less than 20 cents. In comparison, the equivalent amount of text data usually requires around 20 hours of human processing, equivalent to an average graduate student researcher’s salary of $600 at our institution. Importantly, GPT-3 can scale up to process double or triple the data in a similar time, whereas human processing would need significantly more time for similar volumes.

The Path Forward

In this essay, we have demonstrated the potential of GPT-3 to perform the intricate task of thematic analysis of qualitative data, engage in equity-focused interpretation of data, and exhibit a high level of comparability to human coders. These findings highlight the potential for LLMs and other AI techniques to be integrated into the qualitative data analysis process. However, it is important to emphasize that this integration is envisioned not as a substitution but as a complement to human expertise, aiming to leverage AI’s capabilities while ensuring human insight and ethical judgment remain at the core of the research process. Consequently, in the subsequent section, we discuss some potential applications of AI in qualitative data analysis and put forward several broader questions that, in our opinion, have not yet been exhaustively explored, with the hope of stimulating discussion in the field.

One consideration is how AI can be used in equity-focused research. The potential for AI to contribute to equity-focused research is both promising and complex. LLMs’ ability to analyze vast amounts of textual data enables the identification of patterns of bias and inequity hidden within extensive textual resources, such as legislation and policy documents, which could be challenging for human analysts to discern. Our findings, albeit preliminary, suggest that GPT-3 can manage data related to issues of race, equity, and bias, ensuring the representation of marginalized perspectives. However, the absence of bias in our analysis should not obscure the reality that biases in AI models are often subtle and context dependent. LLMs’ performance can vary depending on the task, the prompts, and the nature of the input data.

Although inherent model biases present challenges, they might not create insurmountable barriers but rather a critical consideration. Importantly, ongoing research is actively addressing biases in AI models. Notably, initiatives such as reinforcement learning from human feedback, which fine-tunes models with human feedback to align them more closely with human values, show promise in reducing biased outputs of AI models (Ouyang et al., 2022; Zhang et al., 2018). This evolving landscape of AI development holds promise for increasingly sophisticated models that are less biased and more aligned with equity goals. As we venture into future research, the responsibility is on education scholars to stay informed about technological advancements while continuing to examine the ethical dimensions of AI integration. By embracing a balanced perspective, we encourage future research to recognize AI’s potential to contribute to equity-focused research while also being critically aware of its limitations.

The second consideration pertains to AI’s potential to increase transparency in qualitative research. Qualitative research has been the subject of scrutiny for its subjectivity, primarily due to the heavy reliance on the researcher’s interpretation of data (Creswell & Creswell, 2017). Nonetheless, the researcher’s role is a vital and indispensable component of the qualitative research process (Boveda & Annamma, 2023; Orellana, 2019). The incorporation of AI, such as GPT-3, could potentially enhance the qualitative research process by rendering the analysis process more transparent. Even though the inner workings of these models are not fully clear yet and their outputs can exhibit variations, documenting prompts may shed light on analytical trajectories, possibly augmenting process transparency.

The third consideration points to the potential application of large data sets in qualitative research (Mills, 2018). The qualitative analysis process can be labor-intensive, posing challenges for the collection and analysis of extensive data sets. However, qualitative research offers value in humanizing research and amplifying individual voices on a broader scale (Denzin & Lincoln, 2011). With larger data sets, a more diverse range of participants’ voices can be included, potentially enriching the research findings. By integrating more experiences and voices from individuals, qualitative research could perhaps exert greater influence in the policymaking process, shifting the focus from solely relying on numerical data to incorporating a deeper understanding of individual narratives. LLMs are designed as multitask learners, which means that once they are trained, they can be directly applied to various tasks without additional training or fine-tuning. While recognizing the intense effort qualitative data collection demands, with continuous human monitoring to ensure accuracy and relevancy, we find it intriguing to contemplate the potential employment of AI to boost the efficiency of analyzing large-scale qualitative data sets.

Nonetheless, there are certain questions that require our attention and warrant further investigation. First, in the current exploration, we did not systematically examine how varying prompts might lead GPT-3 to produce biased results. Although our focus was not on prompts that might induce bias, we recognize the critical nature of such an inquiry when using LLMs in qualitative research. Future studies should continue research on how prompts influence LLMs’ responses, especially concerning the emergence of biases. This will help us better understand how subtle variations in language can sway the output and impact the conclusions. Furthermore, given the sensitivity of model performance to prompts, it is recommended to validate the prompts prior to each implementation. Such a validation process can offer greater control over data analysis and can, to a degree, prevent biased or skewed results.

Second, in discussing the ethical implications of using LLMs for qualitative coding, particularly in the context of social justice and equity, we must recognize that LLMs are trained on vast amounts of human-generated text from the internet and other sources. As such, LLMs’ outputs might perpetuate harmful stereotypes or biases present in the training data. This underscores the risk of undue reliance on their outputs, potentially sidelining human judgment. Therefore, a careful approach should be taken when relying solely on AI models’ coding for qualitative analysis. Although such models can offer valuable insights, the possibility of misinterpretation or bias remains. Thus, researchers should always approach the outputs of such models with a healthy degree of skepticism and critically interpret their results.

Third, the field of AI is advancing at an unprecedented pace, with new models being released rapidly. However, as competition intensifies, it is becoming less common for developers to disclose detailed model information, such as training data and model parameters, as seen with models such as GPT-3 (OpenAI, 2023b). This lack of transparency introduces potential ambiguities in the evaluation of model behaviors, creating challenges for researchers who seek to understand, critique, or improve existing technologies. The limited access to model specifications also impacts ethical considerations because researchers cannot fully assess potential biases, safety risks, or environmental impacts embedded in these systems. Education researchers exploring AI in their research should remain vigilant about these limitations, advocating for transparency and rigorously evaluating models to ensure that their applications promote equity, reliability, and ethical responsibility in education contexts.

In future applications of LLMs in qualitative data analysis, we offer several recommendations. First, our zero-shot CoT prompting strategy informed by Merriam and Tisdell (2015) demonstrated efficiency in our analyses. We suggest that researchers could build on this approach or draw inspiration from established qualitative data analysis frameworks to develop prompts that suit their specific research goals. Second, utilizing LLMs for qualitative analysis necessitates an interdisciplinary approach that encourages collaboration between qualitative and quantitative researchers. Although LLMs operate through quantitative methods by mapping linguistic meanings into numerical representations, qualitative data analysis requires a nuanced understanding of contextual and interpretive dimensions of language, capturing subtle variations in meaning, intent, and cultural significance that may not be easily quantifiable. Fostering effective communication and integrating these varied perspectives can significantly enhance the research process (Ho, 2024).

Third, it is important to understand the difference between using ChatGPT and accessing GPT models directly via API for research purposes. ChatGPT operates as a chatbot, guided by system prompts that shape its responses, and these prompts are frequently updated by OpenAI without user notification. These updates can introduce unexpected changes that may complicate the research process. In contrast, using GPT models directly through an API provides researchers with greater control over prompts and context, ensuring consistent model behavior under the same conditions. For research requiring reliability and reproducibility, we strongly recommend using the API. To support researchers in this approach, we include code snippets in the supplementary material (available on the journal website) to guide API usage of GPT models.

Finally, we must acknowledge that the application of LLMs or AI in social science research is still in its infancy. Despite our optimistic outlook on the potential use of these models to assist in qualitative data analysis, it is essential to recognize that they are far from being a definitive solution. Our findings need further empirical validation, employing data sets across diverse topics. Moreover, the “keep humans in the loop” principle should always remain central to this methodology (Grimmer et al., 2022, p. 299). Even as we harness the power of AI, we need human expertise for interpretation, decision-making, and ethical guidance. This balance between machine capability and human judgment is a stepping stone toward the equitable use of AI in research. As we continue to navigate this burgeoning field, it is imperative to stay humble (Grimmer et al., 2022) and vigilant, constantly question, and relentlessly explore, ensuring that we evolve alongside these rapidly developing technologies.

Supplemental Material

sj-pdf-1-edr-10.3102_0013189X251314821 – Supplemental material for The Feasibility and Comparability of Using Artificial Intelligence for Qualitative Data Analysis in Equity-Focused Research

Supplemental material, sj-pdf-1-edr-10.3102_0013189X251314821 for The Feasibility and Comparability of Using Artificial Intelligence for Qualitative Data Analysis in Equity-Focused Research by Yan Jiang, Lillie Ko-Wong and Ivan Valdovinos Gutierrez in Educational Researcher

Footnotes

Notes

Authors

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.