Abstract

In 2015, Wisconsin began mandating the ACT college entrance exam and the WorkKeys career readiness assessment. With population-level data and several quasi-experimental designs, we assess how this policy affected college attendance. We estimate a positive policy effect for middle/high-income students, no effect for low-income students, and greater effects at high schools that had lower ACT participation before the policy. We further find little evidence that being deemed college-ready by one’s ACT scores or career-ready by one’s WorkKeys scores affects college attendance probabilities. Pragmatically, the findings highlight the policy’s excellence and equity consequences, which are complex given that the policy has principally helped advantaged students. Theoretically, the findings shed light on students’ (dis)inclinations to update educational beliefs in light of new signals.

Keywords

Nationally (Belley & Lochner, 2007) and in Wisconsin (Smith & Hirschl, 2022), a wide gap in 4-year college attendance exists between low-income and higher-income students even when comparing students with comparable academic achievement. Economic disparities in 4-year college attendance have motivated many high-cost interventions: About 80% of precollege outreach programs in the United States target economically disadvantaged students (Swail & Perna, 2002). These programs tend to be high-touch with relatively long durations, making them expensive on a per-student basis. Hearteningly, though, this intensive approach is typically effective at boosting disadvantaged students’ college attendance rates (Harvil et al., 2012; Herbaut & Geven, 2020).

Searching for lower-cost interventions that can exist simultaneously with more intensive programs, researchers have tested the postsecondary effects of very short-duration interventions, such as Free Application for Federal Student Aid nudges, that disseminate college-related information to high school students (e.g., Bergman et al., 2019; Bird et al., 2017; Gurantz et al., 2021; Hoxby & Turner, 2013; Hyman, 2020; Oreopoulos & Dunn, 2013), generally finding smaller—although often still significant—effects compared to more intensive interventions (Herbaut & Geven, 2020). Most experimental studies of information interventions relate to financial information. However, the field of education has recently begun to accumulate quasi-experimental evidence about whether students’ postsecondary intentions adapt to new information about their academic achievement. Studies suggest that conferring somewhat arbitrary labels like “college-ready” (Foote et al., 2015) and “advanced” (Papay et al., 2016) on economically disadvantaged students boosts their probabilities of 4-year college attendance despite having little impact on economically advantaged students.

In the 2014–2015 school year, Wisconsin implemented a statewide policy mandating that all 11th-grade students in Wisconsin public schools take two sets of standardized exams that were previously optional: the ACT, a set of exams designed to assess college readiness and academic achievement generally, and the ACT WorkKeys assessments (henceforth referred to as “WorkKeys”), a set of exams designed to assess career readiness. WorkKeys “is a job skills assessment used to help individuals prepare for the workforce and help employers select, hire, train, develop, and retain a high-performance workforce. . . . It [focuses] on the direct application of basic skills to solving problems” (Department of Public Instruction, 2012, p. 139). WorkKeys measures workplace skills in three main areas: applied math, graphic literacy, and workplace documents. These areas are meant to represent foundational skills key to success in most jobs.

Both the ACT and WorkKeys send relevant signals to students. Students who attain a passing score on each of the main WorkKeys exams earn a National Career Readiness Certificate (NCRC), a “portable, evidence-based credential that certifies the essential skills for workplace success” (ACT, 2020b). Similarly, ACT deems students “college-ready” in a subject if they attain the benchmark score on the corresponding ACT subtest. For more details on these exams and the college-ready and career-ready signals they send, see Appendix A (available on the journal website).

Justifying mandatory WorkKeys, a budget request by the Wisconsin Department of Public Instruction (2012) invoked market benefits, with students better able to assess their readiness for jobs and employers better able to identify qualified job candidates. Justifying mandatory ACT, the same budget request invoked increased access for low-income students. In an interview, then State Superintendent of Public Instruction Tony Evers also invoked the ability of mandatory ACT to identify students who are well prepared for college but had not previously thought so themselves (DeFour, 2012).

Because inducing students to take the ACT is relatively low-touch and short in duration, it is inexpensive on a per-student basis. Some have argued that it is one of the least costly ways to increase college attendance rates and narrow economic gaps in college attendance (Dynarski, 2018). Thus, such interventions may present a coveted opportunity to promote equity and excellence simultaneously because doing so might disproportionately help economically disadvantaged students without hurting economically advantaged students.

However, the confluence of the two mandates in Wisconsin presents a potential tension: Mandatory WorkKeys may generate signals that the opportunity costs of college are higher than a student would have otherwise thought, whereas mandatory ACT may generate signals that a student is more academically ready for college than expected. In particular, students scoring well on the WorkKeys assessments may receive a signal that they are prepared to take a good career that does not require a college degree, thus dissuading them from continuing their education. In contrast, students scoring well on the ACT may receive a signal that they are prepared to succeed in college, thus encouraging them to continue their education. Therein lies the potential tension of an intervention that simultaneously mandates WorkKeys and the ACT.

Our study disentangles the aforementioned possibilities. First, we assess the impact of Wisconsin’s policy. Then, we partially tease out the role of each component of the policy by estimating how the policy effects differ at high schools with low versus high ACT participation before the policy. Finally, we further tease out the role of each component by testing the effects of sending potentially contradictory signals to students. All analyses consider heterogeneity by students’ levels of economic advantage. Overall, the study evaluates how viably Wisconsin’s unique mix of mandates can promote equity and excellence.

Bayesian Learning Theory

Bayesian learning is one mechanism through which the new testing regime may affect students’ college attendance behavior. According to Bayesian learning theory, each individual acts based on a set of beliefs that continually update as the individual acquires new information (Morgan, 2005; Piketty, 1995). Breen (1999) showed that the theory fits in more general rational actor models of educational continuation. Under Breen’s application, individuals use their prior beliefs to approximate the costs and benefits of continuing their education, use these approximations to maximize utility, learn new information from their utility-maximizing actions, update their beliefs according to Bayes’s rule, and start the process over with their new set of beliefs about costs and benefits. If individuals indeed make decisions about educational continuation in this way, then we expect to see educational continuation probabilities increase any time individuals learn information that strengthens their beliefs about potential success at the next level of education because this information will cause an upward Bayesian update in the individuals’ approximations of benefits. Conversely, we expect to see educational continuation probabilities decrease any time individuals learn information that strengthens their beliefs about the desirability of noncontinuation options because this information will cause an upward Bayesian update in the individuals’ approximations of the opportunity costs associated with educational continuation.

Bayesian Learning Theory and ACT College-Ready Signals

A positive effect of mandatory ACT on 4-year college attendance would be consistent with Bayesian learning theory. Students induced to take the ACT may receive information about their academic skills that allows them to update their expected probabilities of college success. Imagine an academically skilled 11th grader who does not consider himself to be capable of succeeding in college. Assume that this student would not take the ACT in the absence of the policy. The policy, then, induces him to take the test and, in turn, receive a highly explicit signal that he is prepared to succeed in college. The student may then update his impressions of his abilities, now considering himself capable of succeeding in college, and in turn feel motivated to attend college. In addition, college-ready signals may be especially beneficial to low-income students because their educational decisions and expectations are more sensitive to achievement signals than are those of higher-income students (Foote et al., 2015; Karlson, 2019; Papay et al., 2016), perhaps because the stakes of whether one succeeds in college are higher for low-income students, whose parental economic safety nets are limited (Karlson, 2019).
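The belief update in this hypothetical can be illustrated with a minimal sketch of Bayes's rule. Every number below is an illustrative assumption rather than an estimate from our data, and the function name `posterior_ready` is hypothetical.

```python
def posterior_ready(prior: float,
                    p_signal_if_ready: float,
                    p_signal_if_not_ready: float) -> float:
    """Posterior belief of being college-ready after a 'college-ready' signal.

    Applies Bayes's rule: P(ready | signal) =
        P(signal | ready) * P(ready) / P(signal).
    """
    numerator = p_signal_if_ready * prior
    evidence = numerator + p_signal_if_not_ready * (1.0 - prior)
    return numerator / evidence


# Assumed values: the student initially gives himself a 30% chance of being
# college-ready and believes the benchmark is met by 80% of ready students
# but only 20% of students who are not ready.
prior_belief = 0.30
updated_belief = posterior_ready(prior_belief, 0.80, 0.20)
print(f"prior = {prior_belief:.2f}, posterior = {updated_belief:.2f}")
```

Under these assumed inputs, the signal roughly doubles the student's belief. The theory predicts an increase in college attendance only to the extent that students treat the signal as informative in this way.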

Bayesian Learning Theory and WorkKeys Career-Ready Signals

A negative effect of mandatory WorkKeys on college attendance would be consistent with Bayesian learning theory. The career-ready signal from an NCRC may discourage college attendance by encouraging direct workforce entry. Imagine a student who does not think she has the necessary skills yet to attain a desired job. Assume that this student would not take the WorkKeys in the absence of the policy. The policy, then, induces her to take the test. If she attains passing scores in all three areas, she earns an NCRC, which may lead her to update her assessment of her career readiness. This could cause her to look into career options (for example, some bookkeeping, sales, and customer service jobs) that require WorkKeys-related skills but do not require college. In turn, she may feel motivated to seek a satisfactory job without spending money and energy on college. Such an effect from the NCRC hinges on the NCRC being salient. The field lacks evidence about the salience of NCRCs specifically, but with respect to WorkKeys scores generally, one study showed that 53% of students agreed or strongly agreed that “My WorkKeys results caused me to consider career options I had not thought about before” and that 74% agreed or strongly agreed that “After seeing my WorkKeys results, I feel confident that I have the skills to be successful in a career” (Schultz & Stern, 2013, p. 163). In addition, the foregoing effect may be especially substantial among low-income students, who are especially inclined to report skipping college on account of preferring or needing to make money right out of high school (Bozick & Deluca, 2011) and more sensitive to other indicators that work is immediately available after high school, such as high local employment rates (Bozick, 2009). 1

Related Studies

Mandatory College Entrance Exam Studies

Since Illinois and Colorado implemented mandatory college entrance exam policies in 2001, several states have followed suit, and a handful of studies have assessed the impacts of these policies. Some studies have found positive effects of mandatory ACT and SAT policies on 4-year college attendance rates using aggregate data that prevented researchers from examining effect heterogeneity based on individual characteristics (Goodman, 2016; Klasik, 2013). Another study uses College Board data to show that Maine’s mandatory policy increased 4-year college attendance rates (Hurwitz et al., 2015).

The present study is most similar to Hyman’s (2017) work, which evaluated Michigan’s mandatory ACT policy implemented in 2007. He used student-level data and found a modest positive effect on 4-year college attendance. Several contextual features of our study make it nonredundant with Hyman’s very informative study. Our investigation reflects a motivation to explore these nonredundancies. First, we assess the effects of a unique policy that jointly mandated ACT and NCRC-bearing WorkKeys: Although Michigan and Illinois mandated portions of the WorkKeys, they did not mandate the full set of assessments needed for a student to be in the running for an NCRC, and thus, explicit career-ready signals were not a part of these states’ policies. We note, though, that Michigan has mandated the full set in more recent years (Michigan Department of Education, 2021). A second difference between our study and Hyman’s is that Hyman’s study speaks to only one highly specific historical and geographic context. Specifically, the postintervention period of his study corresponds almost exactly to the Great Recession. Michigan experienced dramatic economic setbacks during this period (Hyman, 2017). Local economic conditions interact with youths’ postsecondary decisions (Bozick, 2009; Hillman & Orians, 2013; Sutton et al., 2016). Therefore, mandatory ACT may associate differently with college attendance in different historical and geographic contexts, making it worthwhile to build on Hyman’s study. Finally, our study offers additional explanation because we can estimate the effects of college-ready and career-ready signals on individuals’ college attendance probabilities.

Achievement Signaling Studies

Some existing literature suggests moderate effects of increased test scores and grades on students’ educational expectations (Andrew & Hauser, 2011; Jacob & Wilder, 2010), and there is evidence that these effects are more pronounced for more socioeconomically disadvantaged students (Karlson, 2019). What about signals more explicit than numeric grades and test scores, though? Papay et al. (2016) tested whether reaching the arbitrary “advanced” threshold in Massachusetts standardized tests affects students’ college attendance. For the most part, earning an “advanced” signal does not have an effect statistically distinguishable from zero, although an “advanced” signal in mathematics does appear to increase low-income, urban students’ college attendance probabilities. In one of the studies most relevant to the present research, Foote et al. (2015) tested the effect of ACT college-ready signals on Colorado students’ college attendance. In the aggregate, there is weak evidence that the college-ready signals boost students’ probabilities of college attendance. However, for low-income students in isolation, there is evidence of an effect, albeit evidence that is only moderately statistically precise. Our secondary analyses build on Foote et al.’s work by assessing whether their important finding replicates in our larger sample and different context.

The Present Study

We are fundamentally interested in how college attendance behavior changes under a policy that introduced two simultaneous shocks—mandatory ACT and mandatory WorkKeys—that may have brought competing signals. We gain knowledge of these patterns by answering these research questions:

Research Question 1a: How has Wisconsin’s mandatory ACT-mandatory WorkKeys policy impacted students’ probabilities of 4-year college attendance?

Research Question 1b: How does economic group moderate the impact of Wisconsin’s mandatory ACT-mandatory WorkKeys policy on 4-year college attendance?

Research Question 1c: How does a school’s prior ACT-taking rate and a student’s economic group jointly moderate the impact of Wisconsin’s mandatory ACT-mandatory WorkKeys policy on 4-year college attendance?

Research Question 2a: How does receiving ACT college-ready signals impact one’s probability of 4-year college attendance?

Research Question 2b: How does economic group moderate the impact of receiving ACT college-ready signals on 4-year college attendance?

Research Question 3a: How does receiving a WorkKeys career-ready signal impact one’s probability of 4-year college attendance?

Research Question 3b: How does economic group moderate the impact of receiving a WorkKeys career-ready signal on 4-year college attendance?

Our answers to these research questions generate policy-relevant evidence: The pertinent policy mandates both the ACT, mandatory policies of which have caught many policymakers’ eyes, and NCRC-bearing WorkKeys, which no one has studied in relation to college attendance probabilities.

Methods

Data

We merge the Wisconsin Statewide Longitudinal Data System, which records academic and demographic information on every student in Wisconsin public K–12 schools, with the National Student Clearinghouse, a national data source that tracks where and when individuals enroll in postsecondary education, covering 97% of U.S. postsecondary education institutions in the fall of 2018 (National Student Clearinghouse Research Center, 2019). When we assess the effect of the mandatory ACT-mandatory WorkKeys policy on college attendance, our target population is the set of people who attended a Wisconsin public school for their 11th-grade spring and were in 11th grade between spring 2008 and spring 2017. 2 We observe this full population (N ≈ 670,000). However, when we control for 10th-grade test scores in our interrupted time-series design, we delete the 7% of cases that are missing one or more of these scores, yielding a sample of about 622,000 students. Thanks to the administrative nature of the data, missing test scores constitute the only missingness to address in our analyses. When we assess how college-ready signals and career-ready signals impact college attendance, we keep only students from 11th-grade cohorts in spring 2015 and later because we are specifically interested in how these signals affect students who are required to take the ACT and WorkKeys.

Measures

The outcome variable is a binary indicator of having enrolled (without withdrawing before the start of the term) in an institution that grants bachelor’s degrees in the fall immediately following the academic year that one was expected to graduate high school. For brevity, we call this “college attendance,” but we recognize that the outcome does not encompass all sectors and timelines of college attendance. We focus on 4-year colleges because only they use the ACT as a criterion for admission, and therefore, mandatory ACT policies are designed to induce 4-year college attendance particularly. However, in supplemental analyses, we estimate how Wisconsin’s policy affected 2-year college attendance and a collapsed outcome (i.e., attending either a 4-year college or a 2-year college). We focus on immediate enrollments so as not to give earlier cohorts an “advantage” in terms of college attendance probabilities. The analytic sample includes both those who did and did not graduate high school to avoid overcontrol bias (Elwert & Winship, 2014). In our sample, about 36% of 11th graders attended a 4-year college immediately after they were expected to graduate high school (calculations our own; for more details, see Appendix B, available on the journal website).

The moderator variable is economic group, operationalized dichotomously with the category “low-income” if a student was recorded as receiving free- or reduced-price lunch for at least 2 years and the category “middle/high-income” otherwise. About 39% of the sample is in the former group, and about 61% is in the latter group. In Appendix C, available on the journal website, we share the rationale for this dichotomization method. In terms of control variables, we include school fixed effects and the rich set of student-level characteristics listed in Appendix D, available on the journal website.

Analytic Strategy

Statewide intervention interrupted time series

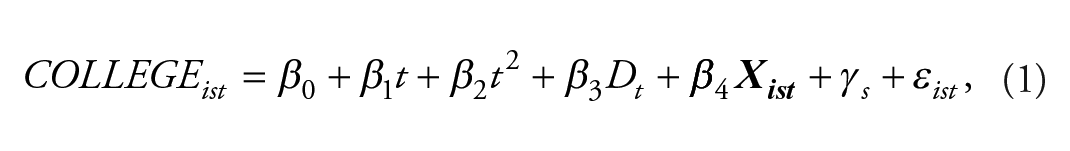

To answer Research Questions 1a through 1c, we use an interrupted time-series design, estimating the following model:

where

In more intuitive terms, we test for abrupt changes in college attendance outcomes following the intervention in spring 2015. We model the trend in college attendance outcomes before the intervention and use the continuation of this trend as the counterfactual for how college attendance outcomes would have changed over time in the absence of a mandatory ACT-mandatory WorkKeys policy. This interrupted time-series approach is more commonly applied to aggregated data than to individual-level data, but applications with individual-level data like ours (e.g., Jacob et al., 2017; Park-Gaghan et al., 2020) provide more statistical power and allow a fuller array of control variables. For more details on the identification assumptions, estimation, and inference in these analyses, see Appendix E, available on the journal website.
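The intuition above can be sketched as a small segmented regression. Because Equation 1 is not reproduced in this text, the specification below (an intercept, a linear cohort trend, and a post-policy level shift, with no covariates or school fixed effects) is an assumption, and the data are synthetic values chosen so that the true jump is known in advance.

```python
from typing import List, Sequence


def solve_linear_system(a: Sequence[Sequence[float]], b: Sequence[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        partial = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - partial) / m[r][r]
    return x


def ols(design: Sequence[Sequence[float]], y: Sequence[float]) -> List[float]:
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    k = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


POLICY_YEAR = 2015  # first 11th-grade cohort subject to the mandate


def design_row(cohort_year: int) -> List[float]:
    """Columns: intercept, cohort trend centered at the policy year, post indicator."""
    post = 1.0 if cohort_year >= POLICY_YEAR else 0.0
    return [1.0, float(cohort_year - POLICY_YEAR), post]


# Synthetic cohort-level attendance rates (NOT the paper's data): a linear
# pre-trend plus an assumed 2-percentage-point jump once the policy begins.
years = list(range(2008, 2018))
rates = [0.30 + 0.001 * (yr - POLICY_YEAR) + (0.02 if yr >= POLICY_YEAR else 0.0)
         for yr in years]

intercept, trend, policy_jump = ols([design_row(yr) for yr in years], rates)
print(f"estimated level shift at the policy year: {policy_jump:.4f}")
```

With student-level data, each row would be a student and the outcome would be a 0/1 attendance indicator; the coefficient on the post indicator plays the same role as the abrupt change described above.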

To assess effect heterogeneity by economic group (Research Question 1b), we estimate the model in Equation 1 separately for three groups: the full population, the population of low-income students, and the population of middle/high-income students. To explore the role of schools’ prior ACT-taking rates (Research Question 1c), we employ two related strategies. First, for each economic group, we estimate the model in Equation 1 among students at high schools that had preexisting mandatory ACT policies (N ≈ 77,000 students). Comparing these estimates to the estimates based on students from all high schools helps us isolate the effect of being induced to take the WorkKeys alone without also being induced to take the ACT. With that said, restricting to the schools with preexisting mandatory ACT policies sacrifices a great deal of external validity. Therefore, to conduct a second analysis with a similar logic but with a less dramatic reduction in external validity, we also split the sample into schools with below-median ACT-taking rates and schools with above-median ACT-taking rates. For each combination of economic group and ACT-taking two-quantile, we estimate the model in Equation 1. We put schools into ACT-taking two-quantiles based on the ACT-taking rate of 11th graders right before the intervention (i.e., 11th graders in spring 2014). For this analysis, we must drop about 8,000 students (1%) because they attended schools with no observations from spring 2014, probably because the schools did not exist in that year. The median student attended a high school with a spring 2014 ACT-taking rate of 58%.
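The two-quantile split can be sketched as follows. The sketch assumes that the median is taken over students (so that each school counts in proportion to its enrollment, matching the "median student" phrasing) and that schools exactly at the median are placed in the upper group; the school names, rates, and enrollments are invented for illustration.

```python
from typing import Dict, Tuple


def student_weighted_median(schools: Dict[str, Tuple[float, int]]) -> float:
    """ACT-taking rate of the school attended by the median student.

    `schools` maps a school name to (ACT-taking rate, number of 11th graders).
    """
    ordered = sorted(schools.values())  # sort by rate, then by enrollment
    total_students = sum(n for _, n in ordered)
    running = 0
    for rate, n in ordered:
        running += n
        if 2 * running >= total_students:  # cumulative share reaches one half
            return rate
    raise ValueError("no schools supplied")


def split_by_median(schools: Dict[str, Tuple[float, int]]) -> Dict[str, str]:
    """Label each school 'below' or 'above' (ties go to 'above' by assumption)."""
    median_rate = student_weighted_median(schools)
    return {name: ("below" if rate < median_rate else "above")
            for name, (rate, _) in schools.items()}


# Hypothetical spring 2014 data: (ACT-taking rate, 11th-grade enrollment).
example_schools = {
    "School A": (0.40, 100),
    "School B": (0.58, 300),
    "School C": (0.75, 200),
    "School D": (0.90, 100),
}
median_rate = student_weighted_median(example_schools)
groups = split_by_median(example_schools)
print(median_rate, groups)
```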

Nonequivalent dependent variable test

To reduce internal validity concerns, we assess the association between Wisconsin’s policy intervention and a variable that is related to college attendance but that the policy should not have influenced. The logic of this check is as follows. Say one estimates a sizeable effect of the policy on college attendance. This abrupt change in college attendance probabilities could be spurious, that is, due to some other statewide change that coincided with the policy implementation. As examples, this statewide change could be a concurrent policy shift or simply a coincidental change in the composition of 11th-grade students such that the new cohort was unusually inclined toward college. If the abrupt change in college attendance probabilities is spurious, the spurious source of change should have also impacted other outcomes that capture students’ college-orientedness. Thus, an apparent effect of the policy on a nonequivalent dependent variable would suggest that the policy effect on college attendance may be spurious. Conversely, if the estimated effect of the policy on college attendance reflects a true causal effect unplagued by coinciding changes, then the apparent impact of the policy on the nonequivalent dependent variable should be small (Shadish et al., 2002).

For a nonequivalent dependent variable, we use a binary indicator of whether the student took an Advanced Placement (AP) exam in 11th grade. Henceforth, we call this variable “AP participation.” According to Coryn and Hobson (2011), the main outcome of interest and the nonequivalent dependent variable “should consist of similar . . . latent constructs” (p. 33). AP participation meets this criterion because college attendance and AP participation share the latent construct of college-orientedness, itself a function of many determinants that the two variables share: educational aspirations and expectations, academic achievement, socioeconomic advantage, conscientiousness, future orientation, and more. Indeed, the very premise of AP exams is to offer college credit opportunities to high school students who already plan to attend college. The link between the two outcomes is not only face valid but also borne out by descriptive statistics: In our sample, 77% of 11th-grade AP participants immediately attend college compared to only 27% of 11th-grade AP nonparticipants. In addition to sharing latent constructs, AP participation and college attendance further resemble each other in that they are binary manifestations of an individual’s orientation toward college. For all these reasons, in the absence of an intervention that affects one outcome but not the other, we expect increases in AP participation to go along with increases in college attendance. Beyond sharing latent constructs with the outcome of interest, the other criterion for a nonequivalent dependent variable is that it be “predicted not to change because of the treatment” (Shadish et al., 2002, p. 509). Wisconsin’s mandatory ACT-mandatory WorkKeys policy is unlikely to have affected whether students took an AP exam because students had to take the ACT and WorkKeys in late February or early March, after they were expected to have signed up for AP exams, which take place in May but have a November registration deadline. To conduct the nonequivalent dependent variable test, we estimate the same model shown in Equation 1 except that the dependent variable is AP participation.

ACT benchmark regression discontinuity design

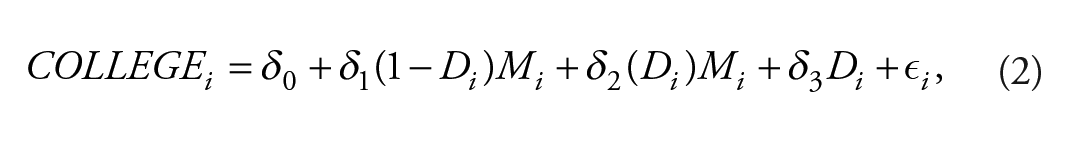

To answer Research Questions 2a and 2b, we apply a regression discontinuity design to test for abrupt changes in the probability of college attendance associated with meeting the college-ready benchmark for each ACT subject test. We do so separately for low-income students, middle/high-income students, and the full population. Our strategy is to compare the college attendance probability of students just at the cutoff to the probability projected by the local precutoff trend that describes how the probability of college attendance changes with respect to subject test score. In particular, for each subject test (English, mathematics, reading, science), we estimate the model

where
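The comparison described above (the observed attendance probability at the cutoff versus the value projected from the local precutoff trend) can be sketched as follows. This is a simplified illustration rather than the estimated model: the linear functional form, the bandwidth, the cutoff value, and the data are all assumptions.

```python
from typing import List, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Return (intercept, slope) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def jump_at_cutoff(scores: Sequence[float], outcomes: Sequence[float],
                   cutoff: float, bandwidth: float) -> float:
    """Observed mean outcome at the cutoff minus the precutoff linear projection."""
    below: List[Tuple[float, float]] = [
        (s, y) for s, y in zip(scores, outcomes) if cutoff - bandwidth <= s < cutoff
    ]
    at_cutoff = [y for s, y in zip(scores, outcomes) if s == cutoff]
    intercept, slope = fit_line([s for s, _ in below], [y for _, y in below])
    projected = intercept + slope * cutoff
    observed = sum(at_cutoff) / len(at_cutoff)
    return observed - projected


# Synthetic example (not our data): attendance rates by subject test score,
# with a hypothetical benchmark of 22 and a 4-point bandwidth below it.
test_scores = [18, 19, 20, 21, 22]
attendance = [0.30, 0.34, 0.38, 0.42, 0.50]
estimated_jump = jump_at_cutoff(test_scores, attendance, cutoff=22, bandwidth=4)
print(f"estimated discontinuity at the benchmark: {estimated_jump:.3f}")
```

With student-level records, `outcomes` would hold 0/1 attendance indicators, and the same function would return the gap between the share attending at the benchmark and the projected share.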

NCRC regression discontinuity design

To answer Research Questions 3a and 3b, we similarly apply a regression discontinuity design to test for abrupt changes in the probability of college attendance associated with meeting the cutoff WorkKeys scores for NCRC eligibility. Because NCRC eligibility is a function of three scores, we apply a binding-score regression discontinuity strategy, as described by Reardon and Robinson (2012). The binding score is the minimum of the three scale scores (with each scale score recentered so that the cutoff is set to zero). Accordingly, the binding score is an appropriate running variable because it perfectly determines treatment status: All students with a binding score of zero or greater qualify for an NCRC, and all students with a binding score below zero do not qualify for an NCRC. Our strategy is to compare the college attendance probability of students just at the cutoff to the probability projected by the local precutoff trend that describes how the probability of college attendance changes with respect to binding score. In particular, we estimate the same model as shown in Equation 2 except that the running variable is the binding score rather than a subject test score.
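The binding-score construction can be sketched in a few lines. The cutoff values and student scores below are hypothetical placeholders, not the actual WorkKeys passing scores.

```python
from typing import Sequence


def binding_score(scale_scores: Sequence[float], cutoffs: Sequence[float]) -> float:
    """Minimum of the scale scores after recentering each at its own cutoff."""
    return min(score - cutoff for score, cutoff in zip(scale_scores, cutoffs))


def qualifies_for_ncrc(scale_scores: Sequence[float], cutoffs: Sequence[float]) -> bool:
    """A student qualifies exactly when the binding score is zero or greater."""
    return binding_score(scale_scores, cutoffs) >= 0


# Hypothetical cutoffs for applied math, graphic literacy, workplace documents.
HYPOTHETICAL_CUTOFFS = (75, 75, 75)

student_a = (78, 75, 80)  # meets every cutoff; the weakest score sits at the cutoff
student_b = (80, 74, 90)  # misses one cutoff by a single point

print(binding_score(student_a, HYPOTHETICAL_CUTOFFS),
      qualifies_for_ncrc(student_a, HYPOTHETICAL_CUTOFFS))
print(binding_score(student_b, HYPOTHETICAL_CUTOFFS),
      qualifies_for_ncrc(student_b, HYPOTHETICAL_CUTOFFS))
```

Because the minimum is nonnegative only when all three recentered scores are nonnegative, a single running variable captures eligibility that depends on three tests.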

Results

Effect of Statewide Intervention

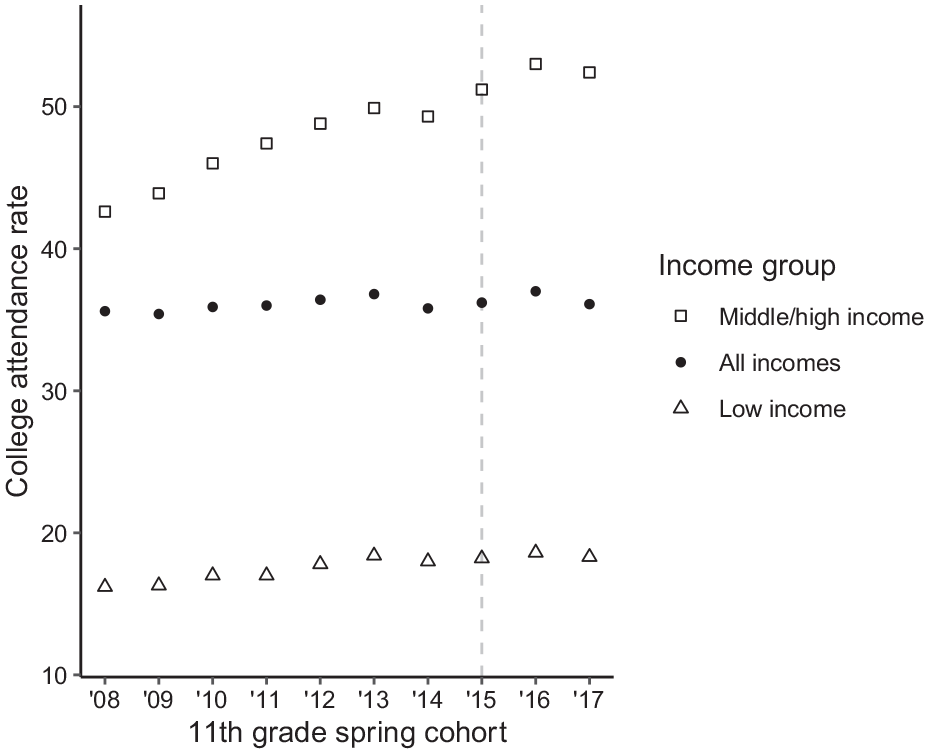

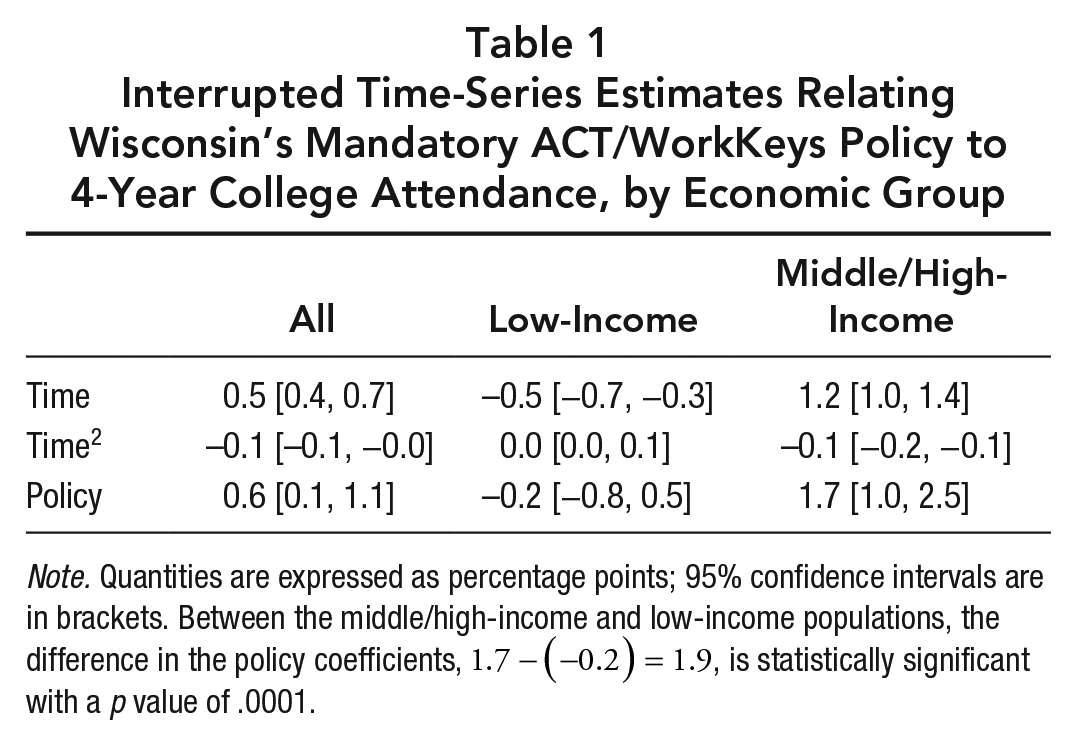

Pooling all students, the findings suggest that Wisconsin’s mandatory ACT-mandatory WorkKeys policy has had a modest but nontrivial impact on college attendance. Before inspecting the formal estimates from the individual-level interrupted time-series analysis, one can see in the middle trend of Figure 1 that the intervention coincided with a jump in the college attendance rate examined descriptively at the cohort level. This visual jump is quite small but nevertheless suggests a discontinuation of the trend that college attendance rates were following before the intervention. The formal estimates bear out what Figure 1 shows visually: The main effect of the policy is estimated at a 0.6 percentage point increase in the probability of college attendance (Table 1, first column). To one decimal place, this estimate equals the main effect of Michigan’s mandatory ACT policy, as estimated by Hyman (2017). The 95% confidence interval around our estimate excludes zero, so random chance is a weak candidate to explain the estimated effect.

Figure 1. Graphical depiction of unconditional, immediate 4-year college attendance rates (in percentages), by 11th-grade cohort and economic group.

Table 1. Interrupted Time-Series Estimates Relating Wisconsin’s Mandatory ACT/WorkKeys Policy to 4-Year College Attendance, by Economic Group

Note. Quantities are expressed as percentage points; 95% confidence intervals are in brackets. Between the middle/high-income and low-income populations, the difference in the policy coefficients is statistically significant (p = .0001).

However, investigating low-income students in isolation, we see little evidence of a policy effect on college attendance. The policy effect on low-income students’ probabilities of college attendance is estimated at −0.2 percentage points, effectively an estimate of no effect (Table 1, second column). The 95% confidence interval does not stray far from zero; even the upper limit of this interval is only a 0.5 percentage point boost, suggesting that a true effect greater than a modest 0.5 percentage points would be very surprising. For reference, even this unlikely effect size would trail behind the 4 percentage point boost seen in experimental evaluations of college access programs (Harvil et al., 2012). In terms of both practical and statistical significance, there is little evidence that the policy increased college attendance among low-income students. The null finding from the individual-level analysis is consistent with what the bottom trend of Figure 1 shows descriptively with cohort-level trends: Among low-income students, the statewide intervention did not coincide with a jump in aggregate college attendance rates.

In contrast to low-income students, middle/high-income students seem to have become more likely to attend college due to the policy. The policy effect on middle/high-income students’ probabilities of college attendance is estimated at 1.7 percentage points (Table 1, third column). This is a meaningful effect size: It corresponds to hundreds of additional middle/high-income Wisconsinites attending college directly after high school every year, and it is about two-thirds the size of the aggregate effect of class size reduction (Dynarski et al., 2010), a more expensive intervention. Thus, especially considering the principle that “effect sizes from lower-cost interventions are more impressive than similar effects from costlier programs” (Kraft, 2020, p. 246), the estimated effect of the policy is of noteworthy magnitude for middle/high-income students. In addition to the point estimate being sizable, the 95% confidence interval around this estimate excludes zero, so random chance is probably not responsible for our identification of a positive effect.

Furthermore, we can be reasonably confident that the greater policy effect estimate among middle/high-income students versus low-income students is not due to random chance given that the difference in the coefficients in the two groups is statistically significant (p = .0001).

Interested readers can consult Appendix H and Appendix I, respectively, available on the journal website, for two sets of supplemental analyses that we left out of the main article for brevity: results from a comparative interrupted time-series analysis and results related to 2-year college attendance.

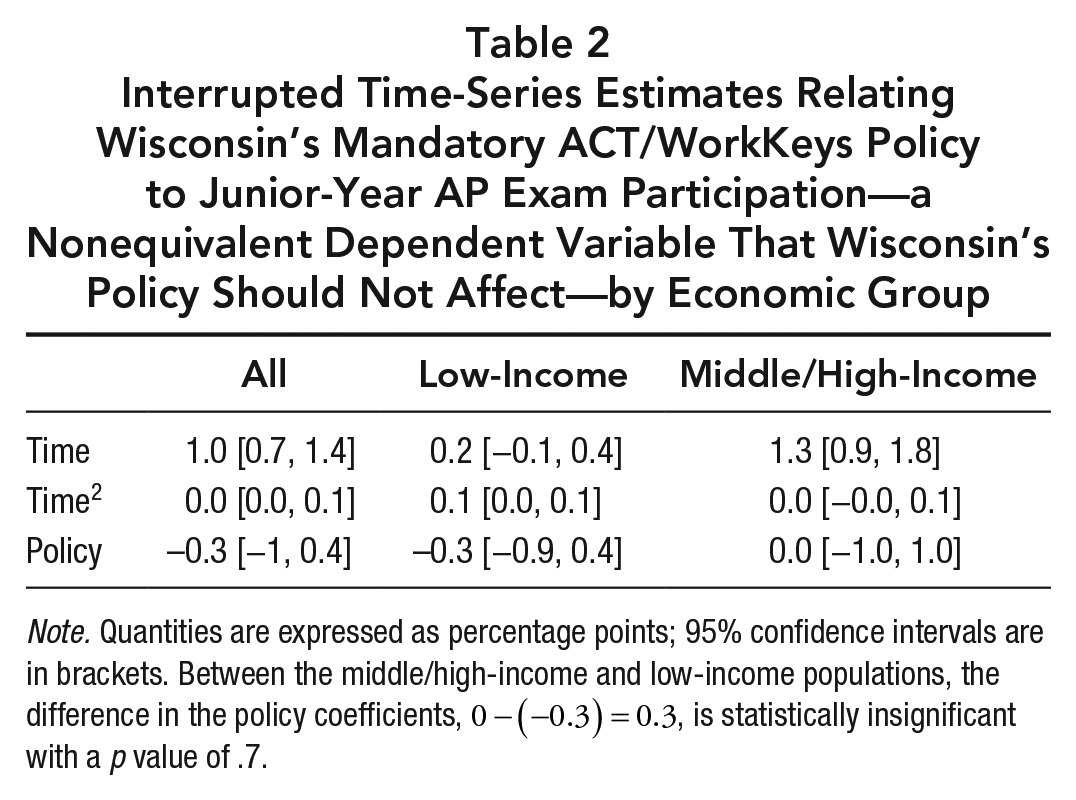

Nonequivalent dependent variable test

A nonequivalent dependent variable test can help assess whether the patterns identified previously are due to spurious factors. Although our best estimates suggest that Wisconsin’s mandatory ACT-mandatory WorkKeys policy had a positive effect on middle/high-income students’ college attendance and no effect on low-income students’ college attendance, we are aware that statewide changes simultaneous to the policy could bias our estimates if these changes are not sufficiently addressed by observed covariates and pretreatment trends. What if some other shock in 2015 increased middle/high-income students’ college-orientedness without affecting that of low-income students? What if later cohorts just happened to be composed of academically exceptional middle/high-income students but academically typical low-income students? We can get a sense of how plausible these possibilities are by seeing whether our college attendance results replicate when we consider a dependent variable, AP participation, that is related to college attendance but that Wisconsin’s policy should not have influenced.
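
As an illustration only, the logic of this test can be sketched with a deliberately simplified, cohort-level interrupted time series: fit a linear trend to the prepolicy cohorts, project it forward, and compare the postpolicy observations with the projection, once for the outcome of interest and once for the placebo outcome. The function names and all numbers below are hypothetical, and the sketch omits the individual-level covariates used in the article's models.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def its_effect(years, rates, policy_year):
    """Mean gap between postpolicy rates and the projected prepolicy trend."""
    pre = [(y, r) for y, r in zip(years, rates) if y < policy_year]
    post = [(y, r) for y, r in zip(years, rates) if y >= policy_year]
    intercept, slope = fit_line([y for y, _ in pre], [r for _, r in pre])
    return mean([r - (intercept + slope * y) for y, r in post])


# Hypothetical cohort-level rates in percent, NOT the article's data.
years = [2011, 2012, 2013, 2014, 2015, 2016]
college = [30.0, 30.5, 31.0, 31.5, 33.7, 34.2]  # departs from trend in 2015
ap_exam = [20.0, 20.4, 20.8, 21.2, 21.6, 22.0]  # stays on its prior trend

college_effect = its_effect(years, college, 2015)
placebo_effect = its_effect(years, ap_exam, 2015)
```

In this toy setup, a clear departure from trend for college attendance paired with no departure for the placebo outcome is the pattern that would ease concerns about a coincident shock; a departure in both series would point toward confounding.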

We precisely estimate a null effect of Wisconsin’s mandatory ACT-mandatory WorkKeys policy on AP participation in the aggregate and for both economic groups (Table 2). This is the logically expected result in the absence of confounding factors given that the policy intervention in 2015 happened too late to affect whether students in that year’s 11th-grade cohort took an AP exam because students needed to sign up for AP exams before they were exposed to the new mandatory tests. These null findings are encouraging in that they lessen concerns of confounding factors in general, and they are especially encouraging because they lessen concerns of heterogeneously confounding factors. Recall that the main takeaway of our college attendance results is that the policy seems to have benefited middle/high-income students but not affected low-income students at all. Therefore, the nonequivalent dependent variable test would raise particular concern if it showed greater estimates for middle/high-income students than for low-income students. This pattern does not arise. Instead, when it comes to AP participation, the estimated effects of the policy for the two economic groups are statistically indistinguishable (p = .7 for difference in coefficients); in contrast, when it comes to college attendance, the estimated effects of the policy for the two economic groups are statistically significantly different (p = .0001 for difference in coefficients).
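
For readers who want to see how a p value for a difference in coefficients can arise, the following is a minimal sketch of a two-sided z-test. It assumes the two estimates are independent (plausible when they come from disjoint groups of students, although the article does not state its exact procedure). The function name is hypothetical, and the standard errors in the example call are placeholders rather than values from Table 1.

```python
from math import erfc, sqrt


def diff_in_coefficients(b1, se1, b2, se2):
    """Two-sided z-test for b1 - b2, assuming independent estimates."""
    diff = b1 - b2
    se_diff = sqrt(se1 ** 2 + se2 ** 2)  # variances add under independence
    z = diff / se_diff
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return diff, z, p_value


# Point estimates (percentage points) are from the text; the standard
# errors are placeholders for illustration, NOT values from Table 1.
diff, z, p = diff_in_coefficients(1.7, 0.35, -0.2, 0.35)
```

The same function applies to the AP participation contrast: similar coefficients with comparable standard errors yield a small z statistic and a large p value.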

Interrupted Time-Series Estimates Relating Wisconsin’s Mandatory ACT/WorkKeys Policy to Junior-Year AP Exam Participation—a Nonequivalent Dependent Variable That Wisconsin’s Policy Should Not Affect—by Economic Group

Note. Quantities are expressed as percentage points; 95% confidence intervals are in brackets. Between the middle/high-income and low-income populations, the difference in the policy coefficients is not statistically significant (p = .7).

If the apparent effect heterogeneity in the college attendance estimates were due only to the 2015 cohort of middle/high-income 11th graders’ underlying characteristics or experiences, which caused them alone to be highly oriented toward college, then two findings from the nonequivalent dependent variable test would be surprising: (a) the null finding for middle/high-income students and (b) the statistically equivalent estimates for middle/high-income versus low-income students. In short, the results of the nonequivalent dependent variable test reduce internal validity concerns in our estimates of how the policy affected college attendance among each economic group. To reduce internal validity concerns even further, we conduct nonequivalent dependent variable tests that use student demographic characteristics as dependent variables. For brevity, the main text of this article leaves out these results, but they are available in Appendix J, available on the journal website, and are consistent with the results that come from analyzing AP participation.

Competing explanations of main findings

Overall, the foregoing findings suggest that the mandatory ACT-mandatory WorkKeys policy has had no effect on low-income students’ college attendance and a positive effect on middle/high-income students’ college attendance. There are at least two possibilities to explain the null finding among low-income students: (a) that neither mandatory ACT nor mandatory WorkKeys have any independent effect on low-income students’ college attendance and (b) that mandatory ACT and mandatory WorkKeys have competing effects that cancel each other out. To weigh these competing explanations with suggestive evidence, we conduct two analyses: one on heterogeneous policy effects by prior ACT-taking rates and another on the effects of receiving college- and career-ready signals themselves.

We assess how estimated policy effects differ with respect to schools’ prepolicy ACT-taking rates because the results can give suggestive, although not conclusive, evidence on whether the mandatory WorkKeys component of the policy plays a role in the observed patterns, as opposed to the mandatory ACT component alone being responsible. Schools with proportionally more prepolicy ACT-takers are those where we expect students to benefit the least from mandatory ACT because those students would likely be taking the ACT whether it was mandatory or not. Thus, if mandatory ACT and mandatory WorkKeys have competing effects that cancel each other out, we expect a negative effect of the policy among students at schools with very high prepolicy ACT-taking rates because the policy induces these students to take the WorkKeys but mostly does not induce them to take the ACT.
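
One stylized way to read this argument, offered as an assumption-laden sketch rather than as the article's model, is to treat the net policy effect at a school as the ACT effect scaled by the share of students newly induced to take the ACT, plus a WorkKeys effect that applies to everyone. The sketch assumes that no students took the WorkKeys before the policy; the function name and the magnitudes are hypothetical.

```python
def stylized_policy_effect(prior_act_rate, act_effect, workkeys_effect):
    """Net effect if only newly induced ACT-takers receive act_effect,
    while every student is newly exposed to the WorkKeys."""
    newly_induced_share = 1.0 - prior_act_rate
    return newly_induced_share * act_effect + workkeys_effect


# Hypothetical magnitudes in percentage points, chosen only so that the
# two components cancel at a school where half of students took the ACT.
ACT_EFFECT, WORKKEYS_EFFECT = 2.0, -1.0

at_half = stylized_policy_effect(0.5, ACT_EFFECT, WORKKEYS_EFFECT)
at_full = stylized_policy_effect(1.0, ACT_EFFECT, WORKKEYS_EFFECT)
```

Under these assumptions, the components offset each other at the school with a 50% prepolicy rate, while only the negative WorkKeys component remains at a school where every student already took the ACT, which is the pattern the cancellation hypothesis predicts.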

Quasi-experimentally testing the effects of receiving college- and career-ready signals also helps us disentangle the role of the ACT and the role of the WorkKeys. Students receiving college readiness signals from the ACT also tend to receive career readiness signals from the WorkKeys (for correlations, see Appendix K, available on the journal website). Therefore, to the extent that scoring well on both tests creates a tension from mixed signals, many students experience this tension. When it comes to the greater policy effect among middle/high-income students compared to low-income students, this contrast could be explained by the tension being unequally relevant for the two groups; for example, mandatory ACT may raise everyone’s college attendance probabilities, whereas mandatory WorkKeys may reduce only low-income students’ college attendance probabilities. We assess the possibility that college readiness signals and career readiness signals have opposite effects on college attendance and, if so, for whom. The results can help, first, disentangle competing explanations for the null effect among low-income students and the positive effect among middle/high-income students and, second, hint at whether Bayesian learning drives the observed patterns.

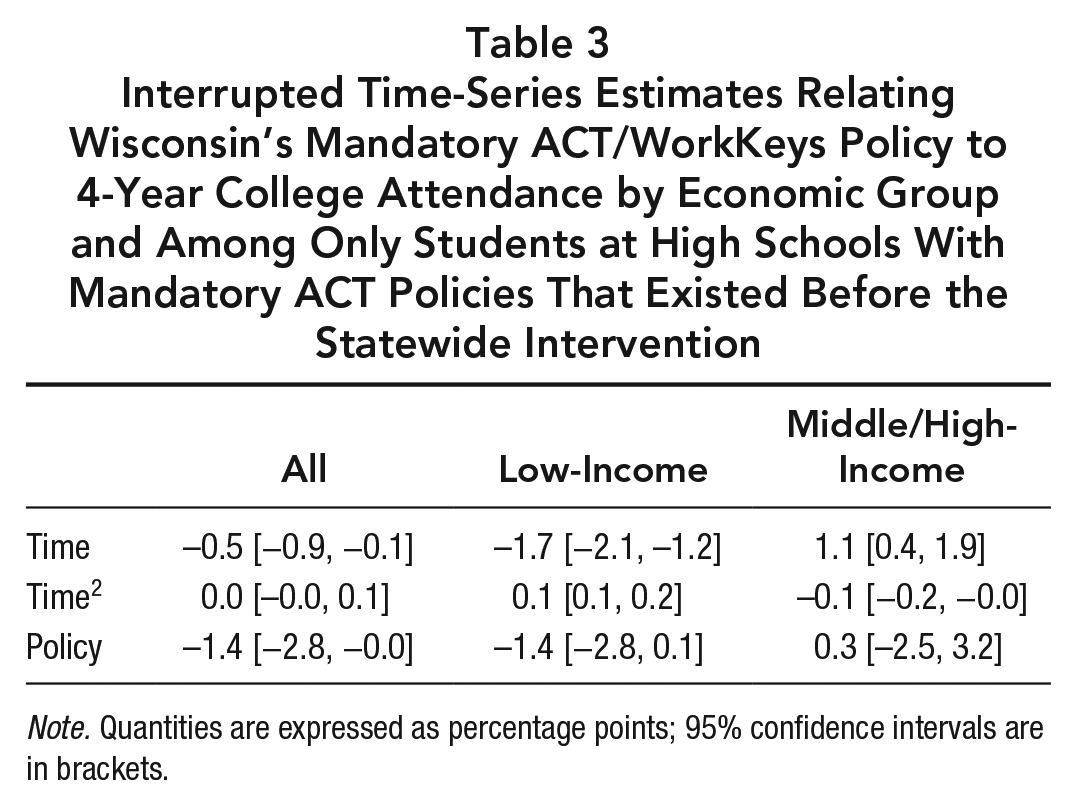

Statewide Intervention Effect Heterogeneity by Prepolicy ACT-Taking Rates

The results from all schools, shown in Table 1, differ quite starkly from the results when we restrict our analysis to students at schools with preexisting mandatory ACT policies (Table 3). Pooling low- and middle/high-income students at such schools, the estimated policy effect on college attendance is sizeable and negative, with a 95% confidence interval that excludes zero. This finding contrasts with the modest positive estimate found when analyzing all schools (Table 1), and the difference between the two estimates is statistically significant (p = .006). Among low-income students at schools with preexisting mandatory ACT policies, the estimate is sizeable and negative, contrasting with the small estimate found when analyzing all schools. However, consistent with the result from all schools, the 95% confidence interval includes zero, albeit only by a small margin. Indeed, there is not quite a statistically significant difference between the estimate for low-income students at all schools and the estimate for low-income students at schools with preexisting mandatory ACT policies (p = .16). Among middle/high-income students at schools with preexisting mandatory ACT policies, the estimate is small and positive, with a 95% confidence interval that includes zero. In magnitude, this finding contrasts with the sizeable, positive result found when analyzing all schools; however, this contrast in point estimates is not statistically significant (p = .36) due to the wide confidence interval around the estimate for schools with preexisting mandatory ACT policies. Overall, among students whose schools already mandated them to take the ACT, there is suggestive evidence that Wisconsin’s mandatory ACT-mandatory WorkKeys policy had no effect on middle/high-income students and a negative effect on low-income students.

Interrupted Time-Series Estimates Relating Wisconsin’s Mandatory ACT/WorkKeys Policy to 4-Year College Attendance by Economic Group and Among Only Students at High Schools With Mandatory ACT Policies That Existed Before the Statewide Intervention

Note. Quantities are expressed as percentage points; 95% confidence intervals are in brackets.

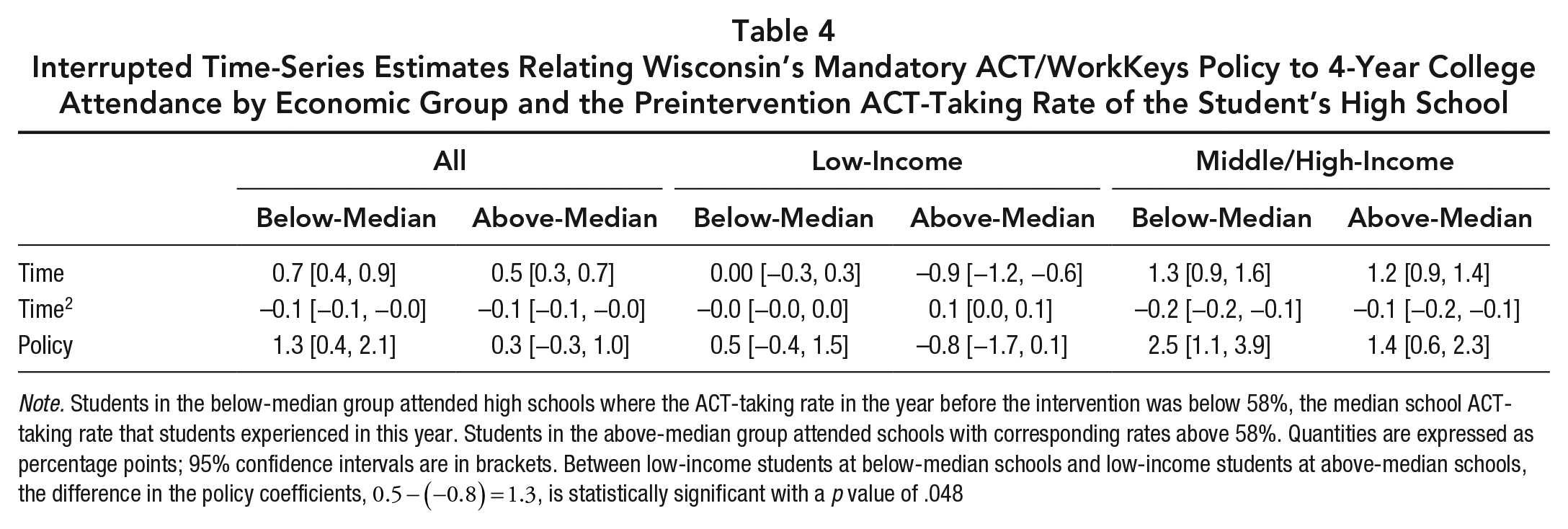

In an analysis that follows a similar logic to the preceding one but leverages information from more schools, we assess how estimated policy effects differ between students at schools with below-median ACT-taking rates and students at schools with above-median ACT-taking rates. The results from this analysis (Table 4) point to some similar patterns as the results presented in the previous paragraph. In each economic group, schools with proportionally more prepolicy ACT-takers are those where students are estimated to benefit the least from the policy, a finding consistent with what we found when analyzing schools with preexisting mandatory ACT policies. The difference in policy effect estimates between above- and below-median schools is especially pronounced among low-income students, for whom the two estimates differ by 1.3 percentage points, have opposite signs, and have a statistically significant contrast (p = .048). The corresponding contrasts among middle/high-income students and among the pooled population are not statistically significant at the .05 level, although in each case, the point estimate at above-median schools trails the point estimate at below-median schools by a full percentage point. In total, the results in this paragraph and the previous give suggestive evidence that schools with more prepolicy ACT participation saw the least benefit from Wisconsin’s policy. Without providing definitive proof, this evidence is consistent with the notion that mandatory ACT and mandatory WorkKeys had competing effects on college attendance, with mandatory ACT having some positive effects and mandatory WorkKeys having some negative effects.
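
The note to Table 4 defines the median as the school ACT-taking rate that the median student experienced, which suggests a student-weighted median rather than a simple median across schools. A minimal sketch of such a split follows; the school records, field names, and function names are hypothetical.

```python
def student_weighted_median(schools):
    """Median school ACT-taking rate, counting each school once per student."""
    ordered = sorted(schools, key=lambda s: s["act_rate"])
    total = sum(s["students"] for s in ordered)
    running = 0
    for school in ordered:
        running += school["students"]
        if running >= total / 2:
            return school["act_rate"]


def split_by_median(schools):
    """Assign schools to below-median and above-median groups."""
    cutoff = student_weighted_median(schools)
    below = [s["name"] for s in schools if s["act_rate"] < cutoff]
    above = [s["name"] for s in schools if s["act_rate"] > cutoff]
    return cutoff, below, above


# Hypothetical schools, NOT the article's data.
schools = [
    {"name": "A", "act_rate": 0.40, "students": 100},
    {"name": "B", "act_rate": 0.58, "students": 300},
    {"name": "C", "act_rate": 0.75, "students": 200},
    {"name": "D", "act_rate": 0.90, "students": 100},
]

cutoff, below, above = split_by_median(schools)
```

A school exactly at the cutoff falls in neither group here, mirroring the strict "below" and "above" wording of the table note; how the article handles such ties is not stated.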

Interrupted Time-Series Estimates Relating Wisconsin’s Mandatory ACT/WorkKeys Policy to 4-Year College Attendance by Economic Group and the Preintervention ACT-Taking Rate of the Student’s High School

Note. Students in the below-median group attended high schools where the ACT-taking rate in the year before the intervention was below 58%, the median school ACT-taking rate that students experienced in this year. Students in the above-median group attended schools with corresponding rates above 58%. Quantities are expressed as percentage points; 95% confidence intervals are in brackets. Between low-income students at below-median schools and low-income students at above-median schools, the difference in the policy coefficients is statistically significant (p = .048).

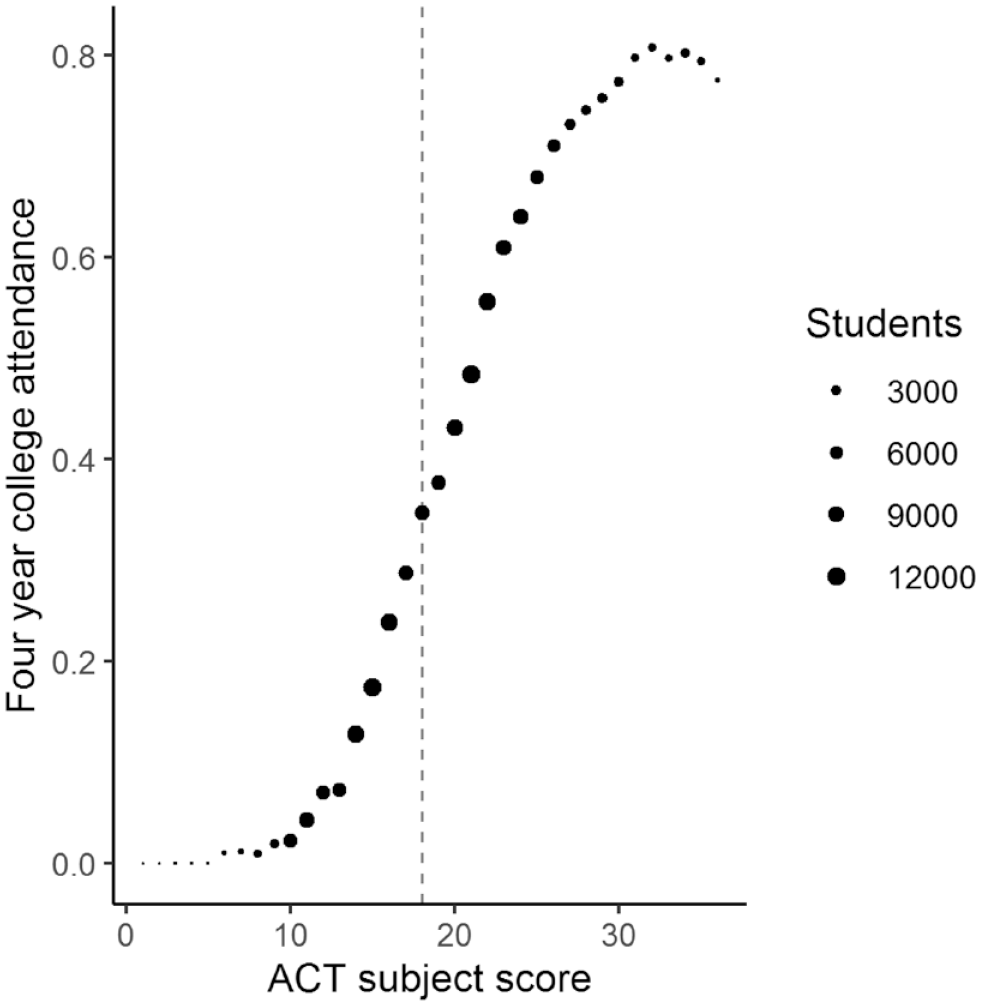

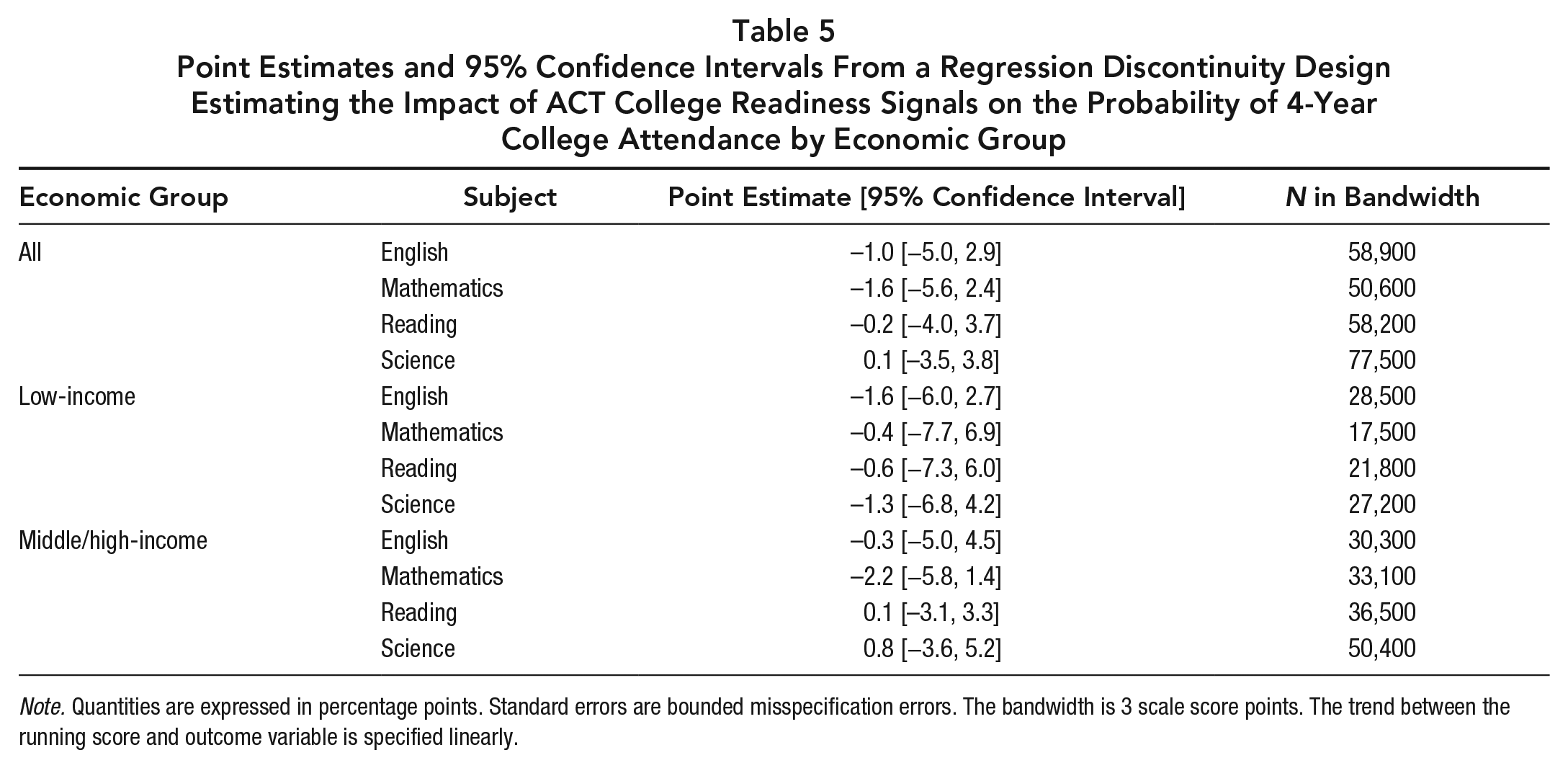

Effect of ACT College Readiness Signals

To disentangle the roles of the ACT and WorkKeys, we also estimate how receiving college readiness signals affects students’ probabilities of college attendance. We do not find good evidence of such an effect. Figure 2 shows the trends in college attendance probability with respect to ACT English scale score (Appendix L, available on the journal website, shows the trends for all combinations of subject test and economic group, with none of them differing meaningfully from the trends in Figure 2). This figure illustrates that there is no discontinuity in college attendance probabilities at the college-ready benchmark, a finding that the formal regression discontinuity estimates bear out. Ninety-five percent confidence intervals on these signal effects include zero in all cases, and point estimates are practically insignificant in all cases except a few where the estimates are implausibly negative (Table 5). There is no subject or income group for which the results indicate a positive effect of the signals on college attendance. These findings are inconsistent with the notion that mandatory ACT policies can induce college attendance by promoting Bayesian updating, although the findings do not on their own discredit the notion that mandatory ACT policies can induce college attendance via other mechanisms. We emphasize that the results from this analysis are imprecise, so even though there are no subjects for which the best estimate is a large positive effect, all 95% confidence intervals include the possibility of a large positive effect.

Probability of 4-year college attendance among students with varying ACT English scale scores.

Point Estimates and 95% Confidence Intervals From a Regression Discontinuity Design Estimating the Impact of ACT College Readiness Signals on the Probability of 4-Year College Attendance by Economic Group

Note. Quantities are expressed in percentage points. Standard errors are bounded misspecification errors. The bandwidth is 3 scale score points. The trend between the running score and outcome variable is specified linearly.
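
To make the specification in this note concrete, the following is a minimal sketch of a regression discontinuity point estimate with a linear trend on each side of the cutoff and a bandwidth of 3 scale score points. Several details are assumptions: the bandwidth is read as three score points on each side, the cutoff of 18 is used only as an example value, the attendance rates are invented, and the function names are hypothetical. The sketch returns a point estimate only and does not reproduce the bounded misspecification error approach to inference (Kolesár & Rothe, 2018).

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def rd_estimate(scores, outcomes, cutoff, bandwidth=3):
    """Jump in the fitted outcome at the cutoff, from separate linear fits
    on each side using only scores within `bandwidth` points of the cutoff."""
    left = [(s - cutoff, y) for s, y in zip(scores, outcomes)
            if cutoff - bandwidth <= s < cutoff]
    right = [(s - cutoff, y) for s, y in zip(scores, outcomes)
             if cutoff <= s < cutoff + bandwidth]
    # With the score centered at the cutoff, each intercept is the
    # fitted outcome at the cutoff itself.
    left_at_cutoff, _ = fit_line([x for x, _ in left], [y for _, y in left])
    right_at_cutoff, _ = fit_line([x for x, _ in right], [y for _, y in right])
    return right_at_cutoff - left_at_cutoff


# Hypothetical attendance rates by scale score, NOT the article's data.
CUTOFF = 18  # example benchmark value, an assumption for this sketch
scores = [15, 16, 17, 18, 19, 20]
smooth = [0.20 + 0.03 * (s - CUTOFF) for s in scores]  # no jump at cutoff
jumped = [y + (0.05 if s >= CUTOFF else 0.0) for s, y in zip(scores, smooth)]

no_signal_effect = rd_estimate(scores, smooth, CUTOFF)
signal_effect = rd_estimate(scores, jumped, CUTOFF)
```

A smooth trend through the cutoff, as in Figure 2, corresponds to an estimate near zero, whereas an effect of the college-ready label would appear as a gap between the two fitted lines at the cutoff.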

Effect of NCRC Career-Ready Signal

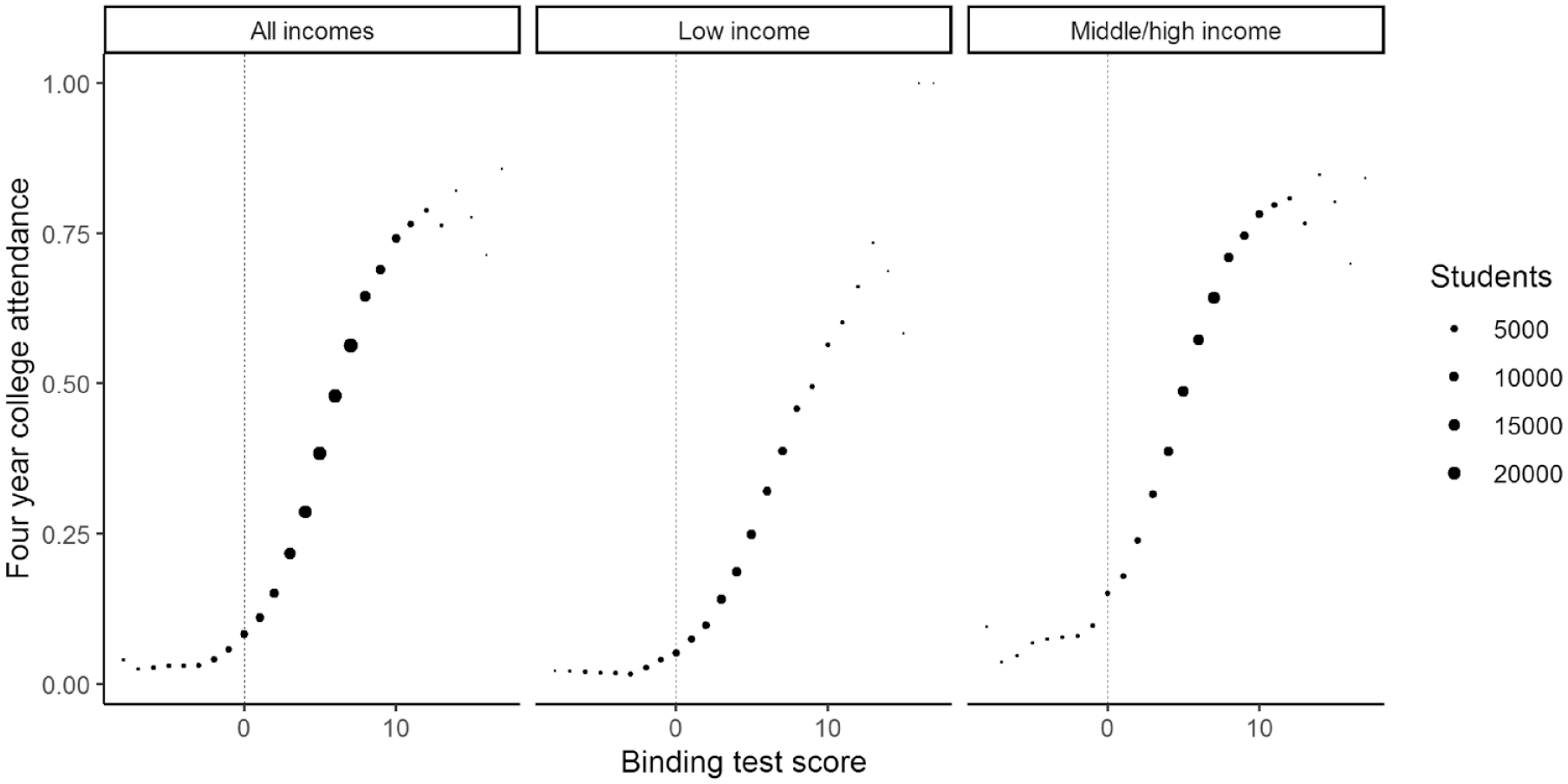

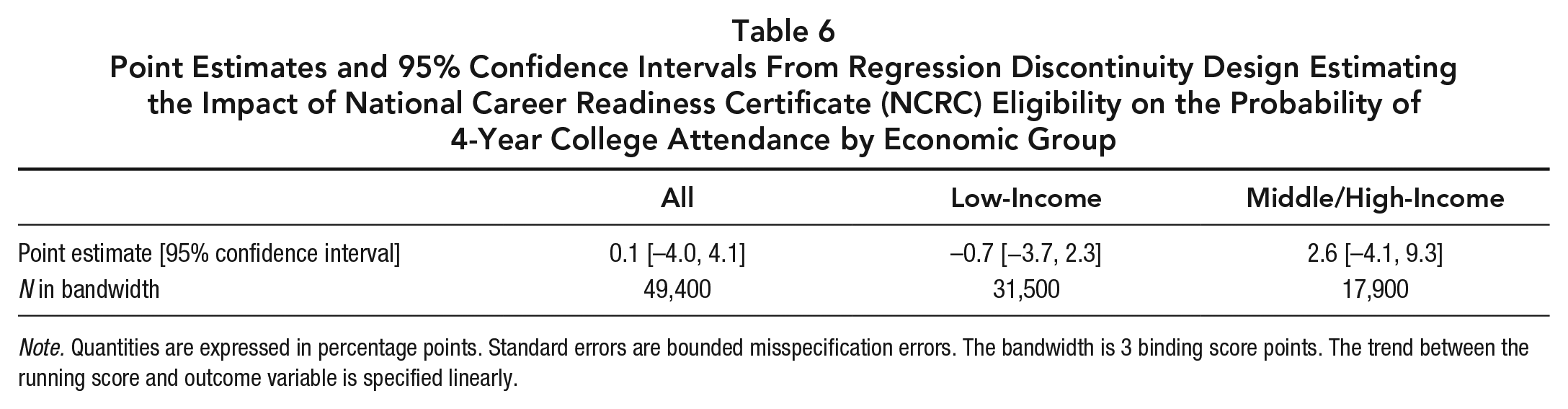

To disentangle the roles of the ACT and WorkKeys even further, we estimate how receiving career readiness signals affects students’ probabilities of college attendance. Pooling all students regardless of economic group, we do not find good evidence that being deemed career-ready with an NCRC decreases the probability of college attendance. The first panel of Figure 3 shows that college attendance probabilities increase relatively smoothly as WorkKeys binding scores increase around the cutoff, with no apparent discontinuity at the cutoff itself. This suggests that NCRC eligibility has no effect on college attendance. The formal regression discontinuity estimates confirm this result: The effect of NCRC eligibility on the probability of college attendance is estimated at a meager 0.1 percentage points (Table 6).

Probability of 4-year college attendance among students with varying binding scores by economic group.

Point Estimates and 95% Confidence Intervals From Regression Discontinuity Design Estimating the Impact of National Career Readiness Certificate (NCRC) Eligibility on the Probability of 4-Year College Attendance by Economic Group

Note. Quantities are expressed in percentage points. Standard errors are bounded misspecification errors. The bandwidth is 3 binding score points. The trend between the running score and outcome variable is specified linearly.

Evidence of an effect, especially a negative effect, is also weak for each economic group in isolation. Descriptively, the patterns in Figure 3 suggest a potentially negative effect of NCRC eligibility on low-income students’ college attendance and a potentially positive effect on that of middle/high-income students. In particular, low-income students who score just high enough to earn an NCRC are slightly less likely to attend college than those who just miss the cutoff and even more so than we would expect based on the trend before the cutoff. Conversely, middle/high-income students who make the cutoff appear more likely to attend than those who just miss the cutoff. However, the confidence intervals on the regression discontinuity estimates include zero (Table 6), perhaps due to the wide confidence intervals that arise when one accounts for a discrete running variable with appropriate conservatism (Kolesár & Rothe, 2018). Therefore, after stratifying the population by economic group, there is still not a strong case that students’ decisions to attend or forgo college are responsive to their eligibility for an NCRC. We reiterate that there is suggestive evidence that NCRC eligibility causes middle/high-income students’ college attendance probabilities to increase, the opposite direction of the hypothesized effect. As with the ACT regression discontinuity results, however, these results are imprecisely estimated.

Discussion

The policy’s greater estimated effect for middle/high-income students than low-income students runs contrary to the goals of the policy, chief among them the goal of narrowing economic disparities in higher education (Department of Public Instruction, 2012). Wisconsin intended for its mandatory ACT-mandatory WorkKeys policy to promote both excellence and equity. However, based on our findings, the policy promoted excellence at the expense of equity, at least if one considers aggregate educational production to capture the concept of excellence. If our estimates are accurate, then evaluating Wisconsin’s mandatory ACT-mandatory WorkKeys policy requires one to compare the benefits of overall increased educational production to the downsides of widened inequality, a complicated calculus (Le Grand, 1990).

States and districts contemplating mandatory testing policies should weigh the current evidence alongside prior evidence (Dynarski, 2018) that largely indicates that mandatory college entrance exam policies in isolation promote both excellence and equity. Along with the prior evidence, our own results can help these actors contrast mandatory ACT-mandatory WorkKeys policies with mandatory ACT policies in isolation. At schools where Wisconsin’s policy mostly induced students to take the WorkKeys but did not induce them to take the ACT because they probably would have been taking the ACT anyway, estimated policy effects among low-income students are negative and are less than the estimates at other schools. Although these relationships are of mixed statistical significance, they point to some interpretations that compare the two components of the policy to one another. One interpretation of the findings is that mandatory WorkKeys alone would provide less benefit (or more harm) than mandatory ACT alone. A stronger interpretation is that mandatory ACT alone would be beneficial, whereas mandatory WorkKeys alone would be detrimental. Our results do not allow us to make either interpretation with full confidence. Still, synthesizing our findings with the prior research, we appraise the evidence as supporting mandatory ACT alone above a joint mandatory ACT-mandatory WorkKeys, at least vis-à-vis college attendance and equity therein.

When it comes to the effects of state policies involving the WorkKeys, the body of evidence is thin in general, and thus we encourage evaluations in other states. Wisconsin is not the only state to fund some sort of WorkKeys requirement. For example, while Michigan initially mandated only two of the three WorkKeys assessments that determine NCRC eligibility, it now mandates all three (Michigan Department of Education, 2021). With a narrower requirement, Kentucky mandates that students who are on a career pathway take all three assessments (Kentucky Workforce Investment Board, 2012). To the best of our knowledge, the effects of these states’ WorkKeys policies on educational and career outcomes are unknown. Future research ought to estimate these effects.

Regarding the effects of informational signals, the findings suggest, albeit with statistical imprecision, that neither ACT college-ready signals nor WorkKeys career-ready signals cause students to update their beliefs in a Bayesian fashion. To people who doubt Bayesian learning theory, these findings may be unsurprising. After all, individuals can go to college whether or not they meet the college-ready benchmarks and can directly enter the workforce whether or not they are deemed career-ready. Barely meeting versus barely missing these cutoffs does not remove or impose any structural barriers to college attendance, so one who is skeptical of behavioral mechanisms like Bayesian updating may not expect any effect of the signals at all. On the other hand, to people compelled by Bayesian learning theory, the findings are perhaps surprising given that both the college-ready and the career-ready signals are very explicit. For example, if Bayesian learning theory holds water, we would expect a direct label of college readiness from a supposedly reputable source to cause students to update their beliefs about their probabilities of succeeding in college and therefore alter their propensity to attend. Potential explanations include that students pay little attention to their score reports and therefore fail to notice the signals, that students do not trust the assessments as evaluators of their college or career readiness, that many students have already developed certainty in their ability to succeed in college by 11th grade, and of course, that Bayesian learning theory is inaccurate in general. Because Bayesian learning theory has implications for the human capital payoff of policies that send signals to students, we encourage future studies that test for Bayesian updating from publicly funded exams.

Supplemental Material

Supplemental material, sj-pdf-1-edr-10.3102_0013189X221137899 for Mixed Signals? Economically (Dis)advantaged Students’ College Attendance Under Mandatory College and Career Readiness Assessments by Christian Michael Smith and Noah Hirschl in Educational Researcher
