Abstract

Social media and the Internet have catalyzed an unprecedented potential for exposure to human diversity in terms of demographics, language, culture, knowledge, opinions, talents, and the like. Access to people’s diversity gives us the possibility of exploiting skills and competences that we do not have, and that we may not even know exist (the so-called unknown unknowns), but which could be exactly what we need when looking for help in solving our current problem. However, this potential has not come with the new, much-needed instruments and skills to handle the complications that arise when trying to exploit the diversity of people. This paper presents our vision of the “Internet of Us (

A Vision for the Internet of Us

No person is an island. No one knows or is skilled in everything. Human societies empower us to reach out to people with skills and information complementary to ours; we rely on others, and they rely on us. This diversity is something we exploit in our everyday life, often without even realizing it. We ask a doctor for a diagnosis and treatment when we are ill, we call up a plumber if a pipe leaks in our house, and we look for someone who speaks our language and Chinese if we need to arrange a stay in Beijing. Embracing and exploiting diversity can help us improve. Diversity, meaning our “differences” in language (Bella et al., 2024; Giunchiglia et al., 2017a, 2023; Koch et al., 2024), knowledge (Achón et al., 2024; Giunchiglia & Bagchi, 2022; Giunchiglia & Fumagalli, 2020), routines, social practices, personality traits, competencies, skills (Girardini et al., 2023; Hume et al., 2022; Mercado et al., 2023; Schelenz et al., 2021), and so on, is a desired feature that should be treasured and sought after. Over the past decades, the Internet, globalization, and the emergence of global digital platforms have transformed our lives and transcended geographical and cultural borders. Thanks to the Internet, the Web, and social media, it is easy to connect to anybody in the world, regardless of their culture, social context, norms, habits, or language. The amount of diversity we have access to has increased exponentially. We are exposed daily to a seemingly unbounded amount of diversity, most of which is unexpected and represents the “unknown unknowns.” That is, an essentially unbounded source of knowledge that we can all potentially exploit. Yet, evolution operates on a slower timescale than that of technology. We have basically the same instruments and skills that our grandparents had 50 years ago.
Technology has provided us with increased access to diversity, but has failed to provide individuals and communities with the instruments to cope with the social challenges that arise with diversity. As social interactions increasingly shift from the physical to the virtual world, where “the same” is reinforced via biased algorithmic recommendations (Orphanou et al., 2022), this diversity in information access is inevitably restricted (Cinelli et al., 2021), and often viewed as an undesirable bug to be avoided and eliminated, or delegated to opaque systems such as GenAI systems, with all the attendant risks (see, for example, Jo, 2023; Mueller, 2024).

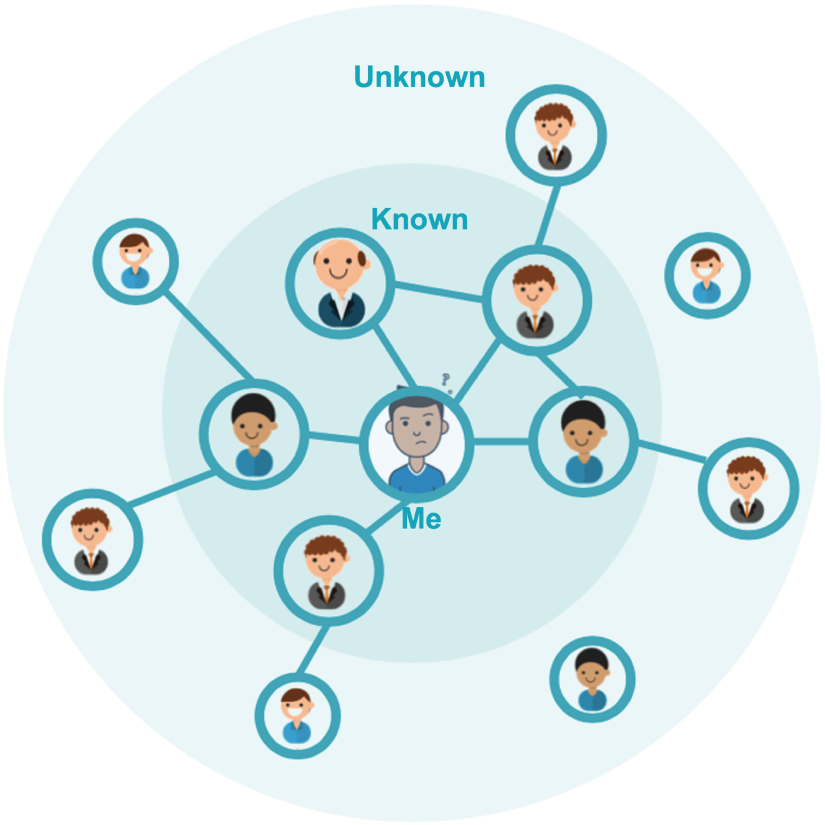

Not unlike the Internet of Things, this paper puts forward the vision of an “Internet of Us (

The underlying idea is that

IoU - asking possibly unknown people for help, about an unknown solution to a possibly unknown problem.

The remainder of this paper is organized as follows. First, we provide an overview of the main functionalities that the

A platform has been developed which implements a first version of the

The current prototype of the platform is highly modular, consisting of various components that address specific issues related to the problem solution. We provide below a short description of the different dimensions of the problem, and the components solving them, via a motivating example.

A relevant, somewhat under-specified problem. Jamal is a second-year undergraduate at the London School of Economics, curious about how artificial intelligence (AI) is shaping learning and society across cultures. Feeling that one’s local networks sometimes reinforce the same perspectives, Jamal joins the

Exploring the Unknown. One evening, Jamal posts a question in

Staying Motivated through Incentives. At first, Jamal mostly asks questions, but he soon notices that the platform rewards him for answering as well. After explaining how UK classes often focus on critical debate about AI regulation rather than purely technical training, he receives a digital badge recognizing his contribution. The recognition feels meaningful: not only has he shared something of value, but he also learns that incentives are designed to encourage active participation, both in giving and receiving support. This motivates him to contribute more frequently, echoing research showing that diversity-aware incentive mechanisms (such as badges and personalized recommendations) can sustain engagement without imposing a one-size-fits-all approach (Botta et al., 2023a, 2023b; Yanovsky et al., 2019).

Experiencing Interaction Norms. Over time, Jamal realizes that the app gently guides his behavior. When he tries to direct multiple follow-up questions to Maria from Mexico, the app reminds him that he has already contacted her several times this week and suggests reaching out to someone new. At first, he is surprised by this restriction, but he comes to appreciate it: Maria is not overwhelmed, and Jamal’s own network expands as he meets more students. Here, he sees norms in action: rules of engagement built into the system that reflect community values of fairness and reciprocity (García-Camino et al., 2009; Osman et al., 2021, 2020). For Jamal, this makes the platform feel more like a self-regulating community than a random chat service.

Adapting to the Situational Context. As Jamal becomes more active, he notices that the app adapts to his context, recognizing the different information and data that compose it (see, e.g., Busso et al., 2025). When he logs in from the library late at night, his queries are routed to peers in different time zones who are more likely to be online and responsive. During the day, however, the app prioritizes responses from nearby universities in Europe. This subtle adjustment helps Jamal always find engaged interlocutors, illustrating the importance of situational awareness in AI systems, and showing how context-aware models need localized data, since habits and schedules differ across countries (Assi et al., 2023). Without such sensitivity, the system would risk misaligning support and leaving questions unanswered.

Building the Conversational Context. As weeks pass, Jamal notices that the app not only adapts to his situation but also remembers his past interactions. It suggests reconnecting with Priya from India, who once offered him a nuanced take on fairness in machine learning, while also introducing him to new voices. This balance between continuity and novelty makes his conversations richer: he can build trust with familiar peers while still being challenged by new perspectives. In doing so, the app draws on both tie strength estimation (Perikos & Michael, 2022) and argumentation-based policy frameworks (Dietz et al., 2022; Markos et al., 2022; Michael, 2019, 2023a; Tubella et al., 2020), ensuring that recommendations are not only data-driven but also aligned with norms of diversity and fairness.

Discovering Diversity in Practice. When Jamal posts a question about “AI in healthcare”, he is amazed by the range of responses. The app deliberately diversifies who answers: younger and older students, men and women, but also - and perhaps more importantly - students with very different competencies. A medical student describes clinical uses, an engineer explains algorithmic design, and a philosophy student reflects on ethics. Jamal realizes that while visible diversity (age, gender) matters, invisible diversity (skills, values, disciplinary knowledge) often adds even greater value to his research project. This reflects the IoU’s approach of allowing communities to decide which diversity dimensions to prioritize (Harrison et al., 1998; Markos & Michael, 2022a; Tsui et al., 1992).

Balancing Diversity with Ethics. Eventually, Jamal feels ready to ask about a sensitive issue: “bias and discrimination in AI systems”. He hesitates at first, worried about receiving hostile responses, but the app allows him to post anonymously and restrict his question to a trusted pool of peers. The responses are constructive and respectful, and Jamal realizes how crucial these safeguards are. IoU’s design treats diversity as an instrumental value (Zimmerman & Bradley, 2019): it should foster inclusion and learning, but never at the expense of protection. By balancing exposure with safety, the app enacts the tension between inclusion and security that is central to ethical debates about diversity in technology (Goffman, 2009; Helm et al., 2022; Schelenz et al., 2024). For Jamal, this ethical mediation turns a potentially risky question into a meaningful dialogue.

The solution to an unknown problem. By the semester’s end, Jamal has collected enough material to write his paper on “AI-Mediated Dialogue Across Cultures.” He even organizes a cross-country student group on

Understanding and Modeling Human Diversity

A key prerequisite for achieving the goal of our platform is the ability to capture diversity within a community and to represent it in a machine-readable fashion. We have identified two main classes of diversity dimensions across which diversity is measured (Harrison et al., 1998; Tsui et al., 1991, 1992):

visible (or “observable”) demographic characteristics, for example, sex and gender, skin color/ethnicity, physical (dis)abilities, and age; and invisible (or “deep”) characteristics, for example, ethnic/cultural/socioeconomic background, personality characteristics, cognitive and interaction attitudes, social/human values, competencies and education, type of work organization, and role.

The complete list of diversity dimensions, along with descriptions of the experiments conducted to gather this data and the collected datasets, is provided in Busso et al. (2025) and Giunchiglia et al. (2022). Among the many participant dimensions that we have modeled, a few key invisible characteristics can be grouped into three abstract dimensions, usually considered as part of the general theory of the Communities of Practice: material, competence, and meaning (Røpke, 2009; Shove & Pantzar, 2005). Material relates to the tangible assets that an individual has access to. Competence incorporates an individual’s endowed skills, (background) knowledge, as well as the social and relational skills required to perform a practice. Meaning incorporates understandings, beliefs, values, lifestyle, emotions, and social and symbolic significances, which are not transferable but can be learned through socialization (Berger & Luckmann, 2016). Meaning enables the connection of material and competencies, giving rise to “behavioral routine” actions that become practices at a social level. It also acts as a “nexus,” not only within a single practice, but in linking different practices, and even in creating new ones.

Both visible and invisible characteristics are operationalized in the profile of each community member. The values associated with these characteristics position each individual within a multi-dimensional space, which can be explored to establish social relationships among community members. By imposing a distance metric on this space, we can formalize the notion of variability between individuals.
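As a minimal sketch of what imposing a distance metric on the profile space could look like, the following Python snippet computes a Gower-style distance over profiles that mix numeric and categorical characteristics. The feature names, the value ranges, and the equal weighting of dimensions are illustrative assumptions, not the platform's actual profile schema or metric.

```python
from typing import Dict, Tuple, Union

Value = Union[float, str]

# Hypothetical numeric characteristics with their assumed (min, max) ranges.
NUMERIC_RANGES: Dict[str, Tuple[float, float]] = {
    "age": (18.0, 68.0),
    "extraversion": (0.0, 1.0),
}


def profile_distance(a: Dict[str, Value], b: Dict[str, Value]) -> float:
    """Gower-style distance in [0, 1] over the characteristics both profiles share."""
    shared = [key for key in a if key in b]
    if not shared:
        raise ValueError("profiles share no characteristics")
    total = 0.0
    for key in shared:
        if key in NUMERIC_RANGES:
            low, high = NUMERIC_RANGES[key]
            # Numeric characteristic: absolute difference scaled by its range.
            total += abs(float(a[key]) - float(b[key])) / (high - low)
        else:
            # Categorical characteristic: 0 if equal, 1 otherwise.
            total += 0.0 if a[key] == b[key] else 1.0
    return total / len(shared)


# Hypothetical profiles, for illustration only.
requester = {"age": 20, "extraversion": 0.5, "discipline": "economics"}
respondent = {"age": 25, "extraversion": 0.5, "discipline": "medicine"}
distance = profile_distance(requester, respondent)
```

Averaging per-characteristic distances keeps every dimension on the same scale, so an application that decides to ignore a dimension can simply drop it from the profiles it compares.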

However, when developing specific applications for certain communities on the platform, some characteristics may become less relevant. For instance, a community centered on the ethical implications of AI may consider “material” aspects, such as owning a state-of-the-art computer, to be less critical. In contrast, a group focused on developing new algorithms for human-aware AI will view this as essential. The general approach is that the platform supports all the diversity dimensions we have identified, allowing the various applications built on top of it to decide which dimensions to utilize. Meegahapola et al. (2023) describe initial research on the explanatory power of the diversity dimensions we have chosen to model.

The richness of the profiles comes from a descriptive view of diversity, which captures the variability within a community (or a subset chosen to receive a request), but also between the person seeking support and each potential respondent to that request. Being able to compute degrees for these two types of diversity is a step towards making the members of a community aware of the diversity that exists within their ranks. A community member seeking support can then explicitly make use of this awareness by identifying which dimensions of the search space to restrict, and in what way, so that the exploration of the search space can become feasible, but not overly restrictive.
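The two degrees of descriptive diversity mentioned above can be illustrated with a small Python sketch. It assumes purely categorical profiles and a simple mismatch distance; the characteristic names and the choice of mean pairwise distance as the within-group measure are assumptions made for illustration, not the measures used on the platform.

```python
from itertools import combinations
from statistics import mean
from typing import Dict, List

Profile = Dict[str, str]


def mismatch(a: Profile, b: Profile) -> float:
    """Fraction of shared categorical characteristics on which two profiles differ."""
    keys = a.keys() & b.keys()
    if not keys:
        raise ValueError("profiles share no characteristics")
    return sum(a[k] != b[k] for k in keys) / len(keys)


def within_group_diversity(profiles: List[Profile]) -> float:
    """Mean pairwise distance among group members (needs at least two profiles)."""
    return mean(mismatch(p, q) for p, q in combinations(profiles, 2))


def requester_diversity(requester: Profile, respondents: List[Profile]) -> List[float]:
    """Distance between the requester and each potential respondent."""
    return [mismatch(requester, r) for r in respondents]


# Hypothetical community of three members.
group = [
    {"country": "UK", "field": "economics"},
    {"country": "MX", "field": "medicine"},
    {"country": "UK", "field": "medicine"},
]
community_degree = within_group_diversity(group)
per_respondent = requester_diversity(group[0], group[1:])
```

Restricting a search dimension then amounts to filtering the respondent list on that characteristic before computing either degree.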

Understanding the Needs and Constraints of Humans Seeking Support

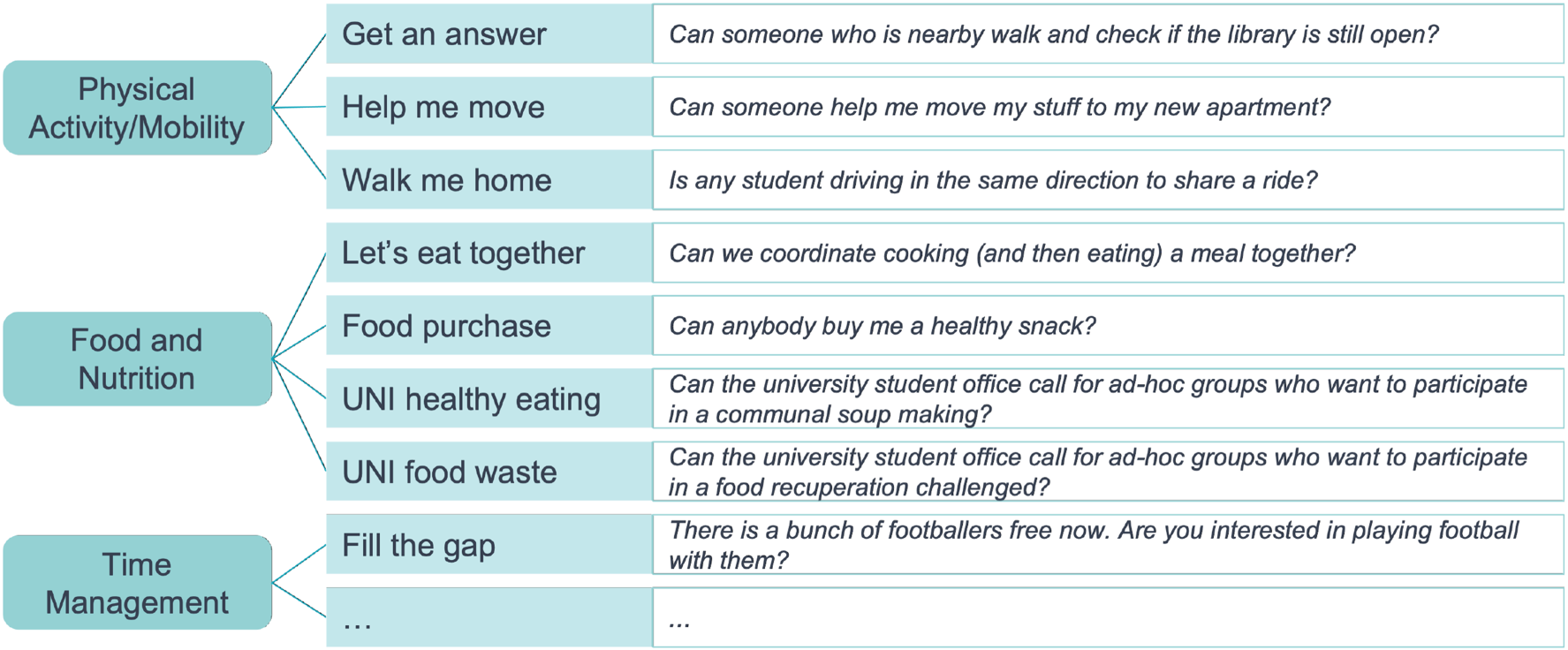

To study the perception of users when presented with diversity, we developed a technology probe (Hutchinson et al., 2003), in the form of a QA chat APP built on top of the IoU platform, with a lightweight interface (avoiding the technical complexity of a full-fledged conversational agent with natural language processing capabilities) that connects people in a community. In the application pilots, users could submit a support query in written text. They had the option to select the domain of their query, such as hobbies, arts, career, or studies. Additionally, users could specify who should be able to view their query and indicate whether the query was sensitive in nature, in which case it would be submitted anonymously. This information was then used to create a ranking of users, who were subsequently invited to respond to the query.
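The flow from a submitted query to a ranking of invited respondents might be sketched as follows. This is a simplified illustration under stated assumptions: the `Query` and `Member` classes, the per-domain affinity scores, and the sort-by-affinity ranking are all hypothetical, and the ranking actually used in the pilots is not specified here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Query:
    sender: str
    text: str
    domain: str                           # e.g. "studies" or "hobbies"
    audience: Optional[Set[str]] = None   # None means every member may view it
    sensitive: bool = False


@dataclass
class Member:
    name: str
    # Hypothetical affinity of the member for each domain, in [0, 1].
    interests: Dict[str, float] = field(default_factory=dict)


def displayed_sender(query: Query) -> str:
    """Sensitive queries are shown without the sender's identity."""
    return "anonymous" if query.sensitive else query.sender


def rank_respondents(query: Query, members: List[Member]) -> List[str]:
    """Keep members allowed to view the query, ordered by affinity for its domain."""
    eligible = [
        m for m in members
        if m.name != query.sender
        and (query.audience is None or m.name in query.audience)
    ]
    # Higher affinity first; names break ties so the order is deterministic.
    eligible.sort(key=lambda m: (-m.interests.get(query.domain, 0.0), m.name))
    return [m.name for m in eligible]


members = [
    Member("ana", {"studies": 0.9}),
    Member("ben", {"studies": 0.2}),
    Member("cat", {"hobbies": 1.0}),
    Member("dan", {"studies": 0.5}),
]
query = Query("dan", "How do you prepare for oral exams?", "studies",
              audience={"ana", "ben", "cat"}, sensitive=True)
invited = rank_respondents(query, members)
```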

The chat app was evaluated favorably in the pilots. It was appreciated for its ability to connect students from different departments, educational and cultural backgrounds, and with various skills and competencies. While the possibility of getting support from “expert users” was an acknowledged feature of the app, a major attraction for some users was contacting other students, making social connections, and creating the feeling of a community while exploring its diversity. Most of the expectations towards diversity carried an exploratory quality, where people were driven by curiosity or wished for serendipity in posing queries to people different from themselves. This need was particularly apparent in the pilots conducted during the COVID-19 pandemic. Exit surveys and focus groups conducted with students after the most recent pilot programs reinforced the finding that many users were eager to leverage diversity. They indicated that giving advice was slightly more satisfying than receiving it. Users appreciated the absence of photographs and valued the opportunity to participate anonymously. Additionally, marking a question as sensitive was seen positively and reflected the ethical principles that guided the design of the chat app.

Beyond the aforementioned pilot studies and our developed QA chat APP, the platform was also utilized by several external third parties who were interested in developing alternative use cases using our technology. One such external third party was the Spanish NGO Fundación Cibervoluntarios, which coordinates a network of more than 1,800 cybervolunteers helping citizens to level out digital gaps. In March 2020, during the lockdown caused by the COVID-19 pandemic, Fundación Cibervoluntarios set up a helpline to provide support to citizens having doubts or problems related to technology, computing devices, and their connectivity, configuration, usage, or cybersecurity. Fundación Cibervoluntarios piloted our technology to empower cybervolunteers to effectively help out citizens in difficulty. Another external third party was the Greek social cooperative enterprise CommonsLab, which leveraged our technology to implement a chat application (MaTSE: a Matchmaking Tool for Social Entrepreneurs) that brings together different constituencies in the social innovation landscape: entrepreneurs with a business idea for a social innovation venture, potential investors, and other actors who may help in the process (e.g., established entrepreneurs who could provide early feedback, etc.).

As part of our effort to utilize (and evaluate) our technology in diverse contexts, we have been involved in two additional studies. The first study sought to elicit the perspective of Afghan refugees in Germany on the QA chat APP. Afghan refugees are particularly marginalized technology users because of their experiences of political, economic, and social violence in Afghanistan and abroad. The study spanned four months in 2022 and was conducted in cooperation with an NGO for the rights of Afghan women. In conversations with 14 Afghan refugee women, it became clear that empowerment through technology is not inevitable. The QA chat APP presented significant barriers for their participation due to the “complicated” registration process and usage commands.

The second study aimed to utilize our technology to alleviate the diminished social interactions between the Greek-Cypriot and Turkish-Cypriot communities of Cyprus, particularly those affected by the language barrier. A simplified version of the QA chat APP was extended with the extra functionality of allowing each user to interact (with the APP and others) in their native language, while supporting the automatic translation of messages exchanged between the two communities. The effort itself has been met with interest from members of both communities. Still, it has also highlighted the need to be wary of unforeseen pitfalls, such as the potential of mistranslation that may be deemed offensive by the recipient of the message. The two studies have highlighted that an Internet of Us must consider diversity in digital literacy and language skills, and ensure that the app design is inclusive of low-literate and socio-economically marginalized groups, and non-homogeneous groups that might not exhibit a shared trust.

Supporting and Incentivizing the Self-Determination of Communities

Awareness of descriptive diversity and the dimensions along which it is measured supports the self-determination of communities by providing the primitive concepts against which a community can differentiate itself from other communities. A community could, for instance, self-determine as including only residents of Spain who are wine aficionados, effectively utilizing the “country of residence” and the “hobbies and leisure” features of its members as the properties that make it distinct from other communities.

The same primitive concepts support a second path to community self-determination, by providing the basis over which a community can define the norms that apply during the interaction between its members. Sociologically speaking, norms capture the rules of engagement, or the social rules of behavior of a community and its members, and are the direct application of the social values of that community. They capture community-wide provisions (e.g., do not ask the same person for support more than three times within any single week) or individual provisions (e.g., do not share a request for support with members blocked by an individual). Norms are also meant to interpret the search directives provided by members of a community. The same cue (e.g., seeking support from people nearby) can be interpreted differently if seeking support within one’s “neighborhood watch” community, or within one’s “alma mater graduates” community. Technologically speaking, norms define the protocol of exploring the search space when seeking to solve the search problem of identifying appropriate respondents to a sequence of queries.

Norms come in the form of natural language expressions in human societies, and, traditionally, in the form of logic-based expressions in formal machine-readable representations (e.g., constraint-based, García-Camino et al., 2009; time-based, Ågotnes et al., 2009; or event-based, Kowalski & Sergot, 2005). In our work, we have adopted a declarative representation of norms through conditional logic-based rules (Osman et al., 2021). The rule premises refer to profile features (such as gender, competencies, etc.) or other state properties of the platform (such as the number of active community members, the total number of queries, the level of user satisfaction, etc.) that can encode information about a community, a user, or even a task or interaction. The rule conclusions refer to the possible actions that can be performed, which include message-passing actions (such as sending a message to another user, or sending a message to an app’s user-facing interface to enable a given button), and actions that change profile features or state properties (such as the privacy setting of one’s location, or the rating for a given task). A norm engine is then designed to mediate interactions by checking their compliance with the relevant norms in place, be they community-wide norms or individual norms.
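To make the premise/conclusion structure concrete, the following Python sketch encodes the two example norms given earlier (the weekly contact cap and the block list) as conditional rules and checks a request against them. It is an illustration only: the `State` and `Norm` classes, the `"deny"` action label, and the reading of “any single week” as a sliding seven-day window are assumptions, and the sketch does not reproduce the rule language of Osman et al. (2021).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class State:
    """Hypothetical snapshot of the platform state visible to the norm engine."""
    contact_log: List[Tuple[str, str, datetime]]  # (sender, recipient, timestamp)
    blocked: Dict[str, Set[str]]                  # user -> members that user blocked


@dataclass
class Norm:
    name: str
    premise: Callable[[State, str, str, datetime], bool]  # True when the rule fires
    conclusion: str                                        # action label, e.g. "deny"


def asked_too_often(state: State, sender: str, recipient: str,
                    now: datetime, limit: int = 3) -> bool:
    # Fires when the sender already used up the weekly allowance for this recipient.
    week_ago = now - timedelta(days=7)
    recent = [t for s, r, t in state.contact_log
              if s == sender and r == recipient and t > week_ago]
    return len(recent) >= limit


def recipient_is_blocked(state: State, sender: str, recipient: str,
                         now: datetime) -> bool:
    return recipient in state.blocked.get(sender, set())


NORMS = [
    Norm("weekly-contact-cap", asked_too_often, "deny"),       # community-wide norm
    Norm("respect-block-list", recipient_is_blocked, "deny"),  # individual norm
]


def check(state: State, sender: str, recipient: str, now: datetime) -> List[str]:
    """Return the names of all norms that forbid sending this request."""
    return [n.name for n in NORMS
            if n.conclusion == "deny" and n.premise(state, sender, recipient, now)]
```

Keeping premises as predicates over the state and conclusions as action labels means that new norms can be added to the list without touching the engine loop.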

Of course, having community members aligned in their understanding of norms is crucial, but difficult in open systems. For instance, if a norm prohibits hate speech, not all members might have the same understanding of what constitutes hate speech. We have developed learning mechanisms that can learn the meaning of a norm from a community’s interactions, explain this meaning to community members, and adapt over time based on members’ feedback (dos Santos et al., 2022). Furthermore, working with norms allows us to formally analyze the outcomes of interactions (Montes et al., 2022). For instance, we can analyze whether certain norms promote/demote certain human values (Sierra et al., 2021), as well as synthesize norms to ensure the maximum promotion of given human values (Montes & Sierra, 2021).

How can we promote the growth of active, inclusive, and diverse communities, taking also into account that the platform allows for interactions with people who are unknown unknowns? In the spirit of community self-determination, the key consideration is that communities may not wish for external guidelines on inclusiveness and active participation to be applied in a paternalistic and all-knowing manner. Consequently, instead of imposing such guidelines, our platform seeks to incentivize their adoption, taking into account the diverse characteristics of different participants and communities, and avoiding the “one-size-fits-all” approach common in current systems. Past works have recognized user diversity and harnessed it for generating incentives using badges (Yanovsky et al., 2019), text messages (Segal et al., 2018), and recommended items (Ben Zaken et al., 2022). Recently, we proposed a diversity-aware multi-armed bandit approach (Botta et al., 2023a, 2023b), which reasons about users’ diversity characteristics when making action recommendations. Such reasoning was shown to lead to improved outcomes compared to a “static” approach, which ignored participants’ rich and changing profiles (cf. Section 3).
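The general idea of conditioning incentive choices on a participant's diversity characteristics can be sketched with a simple contextual epsilon-greedy bandit, shown below in Python. This is not the algorithm of Botta et al. (2023a, 2023b); it is a swapped-in textbook technique, and the profile groups, incentive names, and reward signal are hypothetical. A “static” variant would correspond to using a single group for everyone.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, Iterable, Tuple


class ContextualEpsilonGreedy:
    """Epsilon-greedy bandit with one value estimate per (profile group, incentive)."""

    def __init__(self, arms: Iterable[str], epsilon: float = 0.1, seed: int = 0):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts: Dict[Tuple[Hashable, str], int] = defaultdict(int)
        self.values: Dict[Tuple[Hashable, str], float] = defaultdict(float)

    def select(self, group: Hashable) -> str:
        """Explore with probability epsilon, otherwise pick the best-known incentive."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        return max(self.arms, key=lambda arm: self.values[(group, arm)])

    def update(self, group: Hashable, arm: str, reward: float) -> None:
        """Incrementally update the running mean reward for this group and incentive."""
        key = (group, arm)
        self.counts[key] += 1
        self.values[key] += (reward - self.values[key]) / self.counts[key]


# Hypothetical incentives and groups; reward 1.0 means the participant engaged.
bandit = ContextualEpsilonGreedy(["badge", "message"], epsilon=0.0)
bandit.update("newcomer", "badge", 1.0)
bandit.update("newcomer", "message", 0.0)
bandit.update("veteran", "message", 1.0)
choice_for_newcomer = bandit.select("newcomer")
choice_for_veteran = bandit.select("veteran")
```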

The platform’s incentivization mechanism directs participants to engage with others by seeking support, responding to support queries, and rating the responses they receive, towards becoming more active members of their community. This same mechanism could be used to incentivize participants not to apply search filters unnecessarily, and to actively request that the recipients of their query be diversified (cf. Section 6).

Modeling the Personal and the Social Context of Individual Participants

One of the aspects that makes individuals diverse, and that affects their social interactions through the application of norms, is their personal context (Bontempelli et al., 2022; Giunchiglia et al., 2017b). Due to its inherently dynamic nature, the personal context of any given platform participant cannot be populated in their profile when they first join the platform, but needs to be learned. The worldwide deployment of diversity pilots over our platform presented a unique opportunity to investigate how personal contexts can be learned across diverse communities. Short surveys about the participants’ current place, activities, social situation, and mood were collected in parallel with data from smartphone sensors and mobile APP logs. The datasets were used to investigate how diversity attributes, such as the country of residence of participants, can be adopted to build ML-based inference models from mobile data. Such an investigation allows for the systematic comparison of country-specific and country-agnostic models for tasks like activity recognition (Assi et al., 2023) or mood recognition (Meegahapola et al., 2023), and facilitates the empirical evaluation of whether model transfer is possible across communities.

The results indicate that considering country-level diversity in model learning is crucial for recognition performance, and transferring models between countries is not a simple task. In this sense, the results contribute towards highlighting the fundamental need for locally-valid data that represents the reality of the world beyond economically advantaged regions (Gatica-Perez et al., 2019), and for documenting the resulting models with respect to these issues (Gebru et al., 2021). More broadly, our empirical results emphasize the need for machine learning models to be aware of the diversity that exists between communities, and by extension, the need for this diversity to be reflected in the learning data that is available (in the participant profiles, in the case of our work).

Beyond their personal context, a profile also includes an individual’s social context, which in its simplest form comprises a list of learnable social tie strengths (Perikos & Michael, 2022) that capture the frequency and manner in which the individual has interacted with other platform participants. A more advanced form of social context comes through a set of norms that specify an individual’s policy on whether / how to interact with other participants. This policy can be thought to be more persistent than an individual’s social tie strengths, but can still be revised over time. Our work has focused on developing a formal declarative policy-representation language (Markos & Michael, 2022b) that acknowledges the key role of argumentation as a calculus for human-centric AI (Dietz et al., 2022). A policy elicitation paradigm accompanies the language (Michael, 2019, 2023a) that is cognitively light and computationally efficient (Markos et al., 2022). To support the utilization of this formal policy language, we have investigated how policies can be elicited through natural language cues (Ioannou & Michael, 2021), and how they can be integrated with machine learning models (Tsamoura et al., 2021), towards making them explainable (Michael, 2023b) and contestable (Tubella et al., 2020).

The use of individual norms to condition a user’s interactions on their personal and social context, and the dependence of the user’s context on their interactions, may lead to a vicious spiral of diversity-reducing interactions. To compensate for this possibility, we adopt a second view of diversity as a prescriptive value to be promoted, and the platform diversifies the ranking of participants that receive any given query. One might think that diversification should be applied maximally on all visible characteristics, but not on any of the invisible ones, which relate to the abilities of participants to respond to queries and should, therefore, be allowed to be constrained. This view is understandable, given that human societies have practically equated the notion of diversity with that of inclusiveness based on visible characteristics. But this, by itself, does not make this a valid view.

In certain scenarios, one may wish to select participants based on their visible characteristics, to target, for instance, a particular minority demographic group. In other scenarios, one may want to diversify based on the participants’ invisible characteristics, not necessarily as a way to avoid discrimination, but as a way to get a more varied perspective (or serendipity, cf. Section 4). In the spirit of self-determination, our diversification process allows each community to choose the profile characteristics (one or many, visible or invisible) against which to diversify, and the extent to which the diversity requirement will override a user’s own preferences (Markos & Michael, 2022a). Empirical studies on how humans perceive diversity in participant rankings have been carried out to validate the process.
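One way to picture a diversification process with a community-chosen set of characteristics and a tunable override of user preferences is a greedy, MMR-style re-ranking, sketched below in Python. The sketch is an assumption-laden illustration rather than the procedure of Markos & Michael (2022a): the `weight` parameter, the mismatch-based novelty score, and the candidate profiles are all hypothetical.

```python
from typing import Dict, List


def diversify(candidates: Dict[str, Dict[str, str]],
              relevance: Dict[str, float],
              dims: List[str],
              weight: float,
              k: int) -> List[str]:
    """Greedy MMR-style re-ranking of respondents.

    candidates: name -> profile (categorical characteristics)
    relevance:  name -> score in [0, 1] reflecting the user's own preferences
    dims:       characteristics the community chose to diversify against
    weight:     0 keeps the preference ranking, 1 ranks on diversity alone
    """
    def distance(a: str, b: str) -> float:
        return sum(candidates[a][d] != candidates[b][d] for d in dims) / len(dims)

    def gain(name: str) -> float:
        # Novelty is the distance to the closest respondent already selected.
        novelty = min((distance(name, s) for s in selected), default=1.0)
        return (1 - weight) * relevance[name] + weight * novelty

    selected: List[str] = []
    remaining = sorted(candidates)  # sorted so that ties break deterministically
    while remaining and len(selected) < k:
        best = max(remaining, key=gain)
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical candidates diversified on an invisible characteristic.
profiles = {
    "a": {"field": "medicine"},
    "b": {"field": "medicine"},
    "c": {"field": "philosophy"},
}
scores = {"a": 0.9, "b": 0.8, "c": 0.5}
by_preference = diversify(profiles, scores, ["field"], weight=0.0, k=3)
diversified = diversify(profiles, scores, ["field"], weight=0.5, k=3)
```

With `weight=0.0` the user's own preference order is kept, while `weight=0.5` moves the philosophy student ahead of the second medical student, which mirrors the trade-off between diversity and user preferences described above.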

Diversity As An Instrumental Value That Supports Fundamental Values

Any attempt to operationalize and mediate diversity in social interactions must consider the possible ethical ramifications. Technological mediation is not value-neutral, and the development of technology is never done without prioritizing different values and advancing a certain “agenda” (Brey, 2010; Friedman & Nissenbaum, 1996; Schelenz et al., 2024). Being mindful of the inevitably value-ridden nature of the technology development process, the

Our rationale follows the assumption that diversity is not an end, but a means; it has instrumental rather than intrinsic value (Zimmerman & Bradley, 2019). In the same way that diversity may promote the value of inclusiveness, it may also, if abused, demote the value of protection. This relates to the classic discussion in content moderation between the need to balance the freedom of speech and the prevention of hate speech and violence (Brown, 2021; Gillespie, 2018). On the one hand, public discourse should include as many perspectives as possible. On the other hand, public discourse should be free from abusive communication (such as, for instance, racist or sexist attacks) and protect minority positions.

Values like inclusiveness and protection are of a more fundamental nature vis-à-vis diversity (Helm et al., 2022), and they ought to be promoted for their own sake, even at the expense of diversity. Think of a request about a topic that is associated with stigma (e.g., mental health problems), where an individual may risk social exclusion just for seeking support (Goffman, 2009). Somewhat counter-intuitively, restricting the diversity of the respondents ends up enhancing the individual’s access to a diverse section of the social search space, by protecting them from hurtful and derogatory comments, and by creating a safe space within which to seek support. Accordingly, our platform implements algorithms and offers interface functionalities to interact anonymously and to restrict the respondent pool. At the same time, transparency is essential to enable individual control over personal data and to build trust in the potential of a diversity-aware platform like WeNet (Schelenz et al., 2024).

Conclusion

We have sought to make progress on an ambitious goal: to design the

It goes without saying that realizing the

Acknowledgments

The authors wish to thank all our collaborators for their fantastic support; a most likely incomplete list is: Marina Bidoglia, Andrea Bontempelli, Maria Chiara Campodonico, Carlo Caprini, Ronald Chenu Abente, Galileo Disperati, Paula Helm, Alethia Hume, Peter Kun, Meegahapaola Lakmal, Vasileios Theodoros Markos, Chaitanya Nutakki, Marcelo Dario Rodas Brites, and Donglei Song.

Funding

This research has received funding from the European Union's Horizon project “WeNet - The Internet of Us”, under grant agreement no. 823783.

Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.