Abstract

The rapid pace of technological change driven by artificial intelligence (AI) is transforming the competitive and operational landscapes of contemporary organizations. Models such as the technology acceptance model (TAM), the unified theory of acceptance and use of technology (UTAUT) and the technology–organization–environment (TOE) framework help explain adoption behaviour, but they are rarely considered in parallel with the longitudinal perspectives provided by digital maturity frameworks. This study bridges that divide by combining traditional adoption models with constructs from digital maturity, grounded in dynamic capabilities theory, to represent the processes by which firms sense, seize and reconfigure resources in response to technological disruption. The study adopts a qualitative–descriptive design to identify the characteristics and constructs that determine successful AI integration, and it incorporates a case illustration using secondary data. Four key determinants of successful AI integration were identified: executive sponsorship, mature data governance, continuous skill development, and ethical and regulatory compliance. The integrated framework illustrates how organizations progress from early adoption to mature stages and suggests that sustained capability-building leads to greater adaptability and long-term value. The case illustration shows how theory-based knowledge transfers into practice, which reinforces the findings of the study and highlights their relevance for scholars and practitioners alike. The research findings provide a holistic, empirically supported model for guiding the adoption of AI in rapidly changing digital environments.

Keywords

Introduction

Artificial intelligence (AI) has become a defining catalyst of digital transformation, disrupting industries, business models and forms of competition. While AI promises operational efficiencies, enhanced analytics and new value propositions, realizing that promise requires significant changes to organizations’ structures, processes and cultures. The ability to respond to such change has become a strategic imperative, because organizations that do not adapt to technological transformation risk losing market relevance (Kane et al., 2015; Mikalef et al., 2019). Studying AI adoption therefore requires an analytical framework that accounts not only for the acquisition of technology but also for the related capability and organizational development that must happen over time.

Established adoption frameworks, such as the technology acceptance model (TAM), the unified theory of acceptance and use of technology (UTAUT) and the technology–organization–environment (TOE) framework, have been used extensively to explore the determinants of technology adoption (Venkatesh et al., 2003). These models indicate that technology adoption is influenced by perceived usefulness, perceived ease of use, organizational readiness and external environmental pressures. While these frameworks add value to the understanding of technology adoption, they focus almost exclusively on the first stage of adoption and often neglect the processes by which organizations sustain, extend and fortify the use of a new technology over the long term. As a result, they offer limited help in shaping strategies that connect short-term implementation with an organization’s longer-term digital transformation aims.

Recently, digital maturity models (DMMs) and dynamic capabilities theory (DCT) have been increasingly acknowledged as perspectives that complement earlier approaches to technology adoption. DMMs, like those developed by Westerman et al. (2014) and Kane et al. (2015), offer structured pathways for examining an organization’s transition from initial digitization to mature, innovation-led implementation. They highlight stages of development, alignment of resources and continuous advancement. DCT, as articulated by Teece (2007) and developed in the context of digital transformation by Warner and Wäger (2019), focuses on how firms sense opportunities, seize them through action and design, and reconfigure resources to sustain competitive advantage in turbulent conditions. Taken together, these perspectives recast AI adoption as both a set of decisions and a continual process of developing the capability to use and implement AI.

While these perspectives each have useful elements, the literature remains fragmented. Technology adoption models and digital maturity frameworks are seldom integrated, despite some emerging scholarship showing how determinants of adoption link to maturity, or how dynamic capabilities direct sustained adaptation (Mikalef et al., 2019). Furthermore, there are few empirical examples of integrated models being applied to AI adoption, which limits both the advancement of theory and the ability to provide managerial guidance. This leaves an important research gap, and with it an opportunity to unite these perspectives in a cohesive framework that can guide organizations through the entire trajectory of AI-enabled transformation.

To fill this gap, the study addresses three research questions:

How can AI adoption frameworks (TAM, UTAUT and TOE) be harmonized with DMMs to articulate a roadmap for organizational adaptation?

What organizational, technological and environmental conditions play the most important role in AI adoption success across the maturity stages?

How do dynamic capabilities allow organizations to sustain AI adoption and yield long-term value in an evolving digital environment?

By combining theories of adoption, digital maturity and dynamic capabilities into an integrated framework, this research reframes AI adoption from a single technology-upgrade event into a multi-stage, multilayered capability development process. In doing so, it offers a theoretical contribution and practical guidance for organizations managing digital transformation in rapidly changing and increasingly competitive technological environments.

Literature Review

Technology Adoption Frameworks

TAM

The TAM, initially proposed by Davis (1989) and later extended by Venkatesh and Davis, remains one of the most influential frameworks for describing technology adoption. Central to the model is the notion that an individual’s intention to adopt a technology derives from its perceived usefulness (i.e., how well the technology improves their job performance) and its perceived ease of use (i.e., how much effort is involved). Over time, TAM has been applied to a number of emerging technologies, including AI-enabled systems, to analyse user behaviour across adoption and use contexts. In AI adoption, perceived usefulness is closely related to expected efficiency gains and improved decisions, while perceived ease of use is related to the intuitive usability of the system, its ease of integration and the accessibility of data.

Yet, while TAM is a strong predictor of adoption decisions, it has important shortcomings in the context of this study. First, TAM explains individual-level adoption behaviour and does not capture the dynamics of organization-wide adoption, which is shaped by group decision-making, cultural norms and strategic goals. Second, TAM does not address how technological capabilities are transformed after adoption; this matters in organizational settings where adoption is part of a broader process of learning, integration and improvement (Mikalef et al., 2019). These shortcomings highlight the need to supplement TAM with frameworks that account for organizational readiness, contextual elements and maturity trajectory.

Unified Theory of Acceptance and Use of Technology

The UTAUT, developed by Venkatesh et al. (2003), integrates eight prior models, including TAM, the theory of planned behaviour and innovation diffusion theory, into a single unified framework. UTAUT identifies four primary constructs that influence behavioural intention and use of technology: performance expectancy, effort expectancy, social influence and facilitating conditions. In the organizational context, performance expectancy refers to perceived strategic value, such as competitive advantage or operational efficiency; effort expectancy corresponds to the perceived ease of using AI systems as part of existing workflows or work processes; social influence describes the degree to which leaders’ endorsement or peer adoption matters; and facilitating conditions include technology infrastructure, training and support programmes.

The strength of UTAUT lies in its capacity to incorporate individual, organizational and social aspects, making it a more comprehensive theoretical framework than TAM. However, UTAUT has been operationalized primarily through cross-sectional measures of adoption intention and early use, not through longitudinal measures of changing patterns of use or advancing capabilities. This cross-sectional orientation is problematic for organization-level AI adoption: AI is a complex technology that is typically adopted and deployed iteratively, so a single snapshot cannot fully explain continued adoption and maturity progression. Thus, while UTAUT is a valuable way to examine technology acceptance and use, it remains an incomplete perspective on the full impact of AI in organizations.

Technology–Organization–Environment (TOE) Framework

The TOE framework offers a macro-perspective on technology adoption by examining how the technological, organizational and environmental contexts interact in the adoption process. In AI-related adoption research, the technological context includes dimensions such as the compatibility, relative advantage and complexity of AI solutions; the organizational context covers a firm’s leadership support, size and resource availability; and the environmental context encompasses competitive pressure, the regulatory environment and market uncertainty.

The generality of TOE makes it a useful framework for analysing organization-level adoption, where external influences must align with internal capabilities. For AI contexts, this alignment includes compliance with ethical imperatives, addressing workforce skill gaps and alignment of AI efforts with existing business strategies. However, TOE has been criticized for being descriptive rather than predictive and for vagueness in how its constructs are operationalized. Like TAM and UTAUT, TOE by itself does not address maturity progression or the process by which organizations build a capability for sustained AI use after initial adoption.

Taken together, TAM, UTAUT and TOE provide complementary lenses that move from individual perceptions to organizational and environmental perspectives, but none captures the iterative capability-building that characterizes AI adoption. This strengthens the rationale for combining these models with digital maturity frameworks and DCT to cover the overall journey from initial adoption through sustained strategic change.

AI Readiness Models

Adoption of AI by organizations involves more than access to sophisticated technologies; it requires organizational readiness that spans technical, structural, cultural and strategic capabilities. AI readiness models provide a systematic approach to assessing this readiness before implementation and scaling. They extend general technology readiness to include AI-related factors such as data governance, ethical oversight and algorithmic convergence (Jöhnk et al., 2020).

Among the most prominent is the AI readiness index, also known as the AI readiness model, which describes readiness across multiple dimensions. Jöhnk et al. (2020) identify the key pillars of readiness as leadership commitment and vision, data infrastructure quality, employee skills and competencies, technology integration capability, and ethical and regulatory compliance. For example, leadership commitment ensures ongoing strategic support and funding for AI planning, and a high-quality data infrastructure allows for better model training and deployment. Skills readiness is especially relevant in AI contexts, where technical ability must be accompanied by domain knowledge in order to design, interpret and manage AI-driven systems (Mikalef et al., 2019). Dwivedi highlighted the socio-technical aspect of AI readiness, arguing that technical infrastructure, such as computers and software applications, must be accompanied by a willingness to adapt, capabilities in change management and alignment of AI initiatives with organizational strategy. On this view, an organization with a capable technical infrastructure can still be a low performer in AI if a suitable culture or governance framework for responsible AI use is not in place. This socio-technical framing is consistent with more recent empirical findings that organizational agility, cross-functional collaboration and ethical governance are as important to successful AI adoption as computational capabilities.

While readiness models are a useful diagnostic, they are typically framed as pre-implementation assessments and seldom feature in longitudinal studies of adoption. As a result, they do not explain how readiness factors evolve as an organization progresses through levels of digital maturity after adoption. For instance, an organization might initially score poorly on AI skills readiness and then raise that score over time through targeted upskilling initiatives; most readiness models, however, treat readiness as static. This underlines the value of connecting readiness to maturity frameworks that track development and capability over time (Kane et al., 2015).

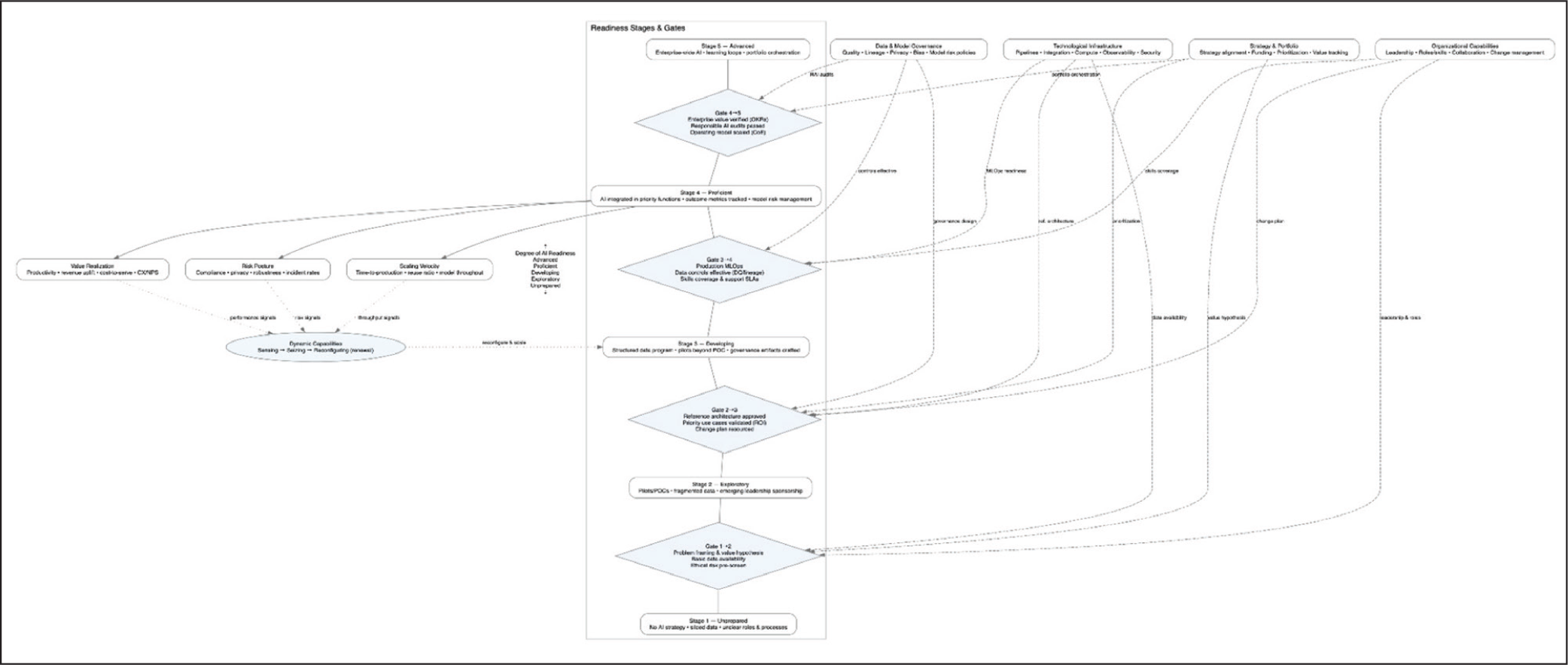

AI readiness models are important in our study because they connect elements of adoption frameworks (e.g., TAM, UTAUT and TOE) with DMMs and provide evaluative measures of an organization’s readiness to move through maturity stages, identifying the capability gaps that must be addressed to stay on a trajectory of sustained AI-enabled transformation. By framing readiness as both a precondition and a dynamic quality, the study treats readiness measurement as part of a broader strategic path for AI adoption and the capability development that underlies it. As illustrated in Figure 1, AI readiness progresses through a series of structured gates that represent sequential organizational milestones. Each gate signifies a critical transition point where leadership commitment, data infrastructure and compliance maturity are evaluated before advancing to the next stage. This gated model ensures that readiness is not perceived as a one-time assessment but as a continual process of alignment between technological capacity and strategic intent. By integrating leadership, data and ethical governance dimensions, the framework highlights how organizations systematically develop their capability foundations to achieve sustainable AI adoption and long-term digital maturity within evolving technological ecosystems.

Figure 1. Readiness Stages and Gates.
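The gated progression described for Figure 1 can be made concrete with a short sketch. The Python below is illustrative only: the stage labels, the 0 to 1 scoring scale and the gate thresholds are hypothetical assumptions, not values taken from the figure or from the readiness literature. It shows one way to express the rule that every gate evaluates leadership commitment, data infrastructure and compliance maturity before an organization advances.

```python
# Dimensions evaluated at every gate, as named in the text.
DIMENSIONS = ("leadership_commitment", "data_infrastructure", "compliance_maturity")

# Hypothetical stage labels and gate thresholds (0-1 scale), for illustration only.
STAGES = ["exploring", "piloting", "scaling", "embedded"]
GATE_THRESHOLDS = [0.3, 0.5, 0.7]  # gate i sits between STAGES[i] and STAGES[i + 1]


def current_stage(scores: dict[str, float]) -> str:
    """Return the furthest stage reachable by clearing gates in sequence."""
    stage_index = 0
    for threshold in GATE_THRESHOLDS:
        # A gate is cleared only if every dimension meets its threshold.
        if any(scores.get(dim, 0.0) < threshold for dim in DIMENSIONS):
            break
        stage_index += 1
    return STAGES[stage_index]


def blocking_dimensions(scores: dict[str, float]) -> list[str]:
    """List the dimensions that hold the organization at its next gate."""
    stage_index = STAGES.index(current_stage(scores))
    if stage_index == len(GATE_THRESHOLDS):
        return []  # every gate has been cleared
    threshold = GATE_THRESHOLDS[stage_index]
    return [dim for dim in DIMENSIONS if scores.get(dim, 0.0) < threshold]


if __name__ == "__main__":
    example = {
        "leadership_commitment": 0.8,
        "data_infrastructure": 0.55,
        "compliance_maturity": 0.6,
    }
    print(current_stage(example))
    print(blocking_dimensions(example))
```

Because the assessment can be rerun whenever scores change, a sketch of this kind treats readiness as a repeated evaluation and not a one-time score, which is the point the gated model makes.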

Digital Maturity Models

DMMs provide structured frameworks for assessing the extent to which organizations have incorporated digital technologies, processes and capabilities into their strategic and operational models. They represent digital transformation as an evolutionary process rather than a point-in-time technology decision. That is, organizations move through distinct stages in sequence, from early experimentation to the highest stage of maturity, which is fully integrated and innovation-led (Kane et al., 2015; Westerman et al., 2014). The staged approach lets firms assess their current state and determine which digital capabilities they need to develop further.

Early maturity models, such as the one presented by Westerman et al. (2014) with its four categories of beginners, fashionistas, conservatives and digital masters, consistently emphasize the interplay between an organization’s digital capabilities and its leadership capabilities. More recent models have expanded the dimensions of maturity to include agility, customer centricity, data-informed decision-making and innovation ecosystems (Schumacher et al., 2016). In the MIT Sloan–Deloitte model, Kane et al. (2015) suggest that the most important factors in assessing organizational maturity are strategic alignment, culture, talent and governance. Although organizations invest in technology, Kane et al. (2015) argue that the real source of maturity is the alignment of strategy and organizational culture, together with investment in talent development.
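The interplay of digital and leadership capabilities in the Westerman et al. (2014) typology is commonly presented as a two-by-two matrix, which can be sketched as a simple classification. In the Python below, the 0 to 1 scoring scale and the 0.5 cutoff are hypothetical assumptions made for illustration; the source does not prescribe a scoring method.

```python
def classify_maturity(digital_capability: float,
                      leadership_capability: float,
                      cutoff: float = 0.5) -> str:
    """Place an organization in one of the four categories.

    Scores and the cutoff are on an assumed 0-1 scale (illustrative only).
    """
    high_digital = digital_capability >= cutoff
    high_leadership = leadership_capability >= cutoff
    if high_digital and high_leadership:
        return "digital master"
    if high_digital:
        return "fashionista"   # strong digital capability, weak leadership capability
    if high_leadership:
        return "conservative"  # strong leadership capability, weak digital capability
    return "beginner"


if __name__ == "__main__":
    print(classify_maturity(0.8, 0.3))
```

A classification like this gives only a point-in-time position; it says nothing about how an organization moves between quadrants.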

In AI contexts, digital maturity is not only about having meaningfully deployed AI capabilities; it is also about whether those capabilities can be scaled across and between functions, whether they are integrated into enterprise systems and whether they are embedded in the organization’s decision-making processes (Mikalef et al., 2019). This kind of deployment requires mature data governance, cross-functional collaboration and adaptive leadership able to manage both the technological and the ethical dimensions. Marques and Ferreira likewise discuss ‘digital maturity for AI’ in terms that include organizational readiness. Maturity should therefore be assessed through both technical indicators, such as the types of automation and predictive analytics adopted, and organizational indicators, such as workforce readiness, innovation culture and ethical compliance mechanisms.

While these DMMs can be useful in determining an organization’s strategic direction, many are used primarily as diagnostic tools at a specific point in time, with little attention to the mechanisms that promote advancement to the next stage of maturity. The greatest conceptual gap concerns how organizations develop their own trajectory towards maturity and how they link that trajectory to technology adoption frameworks such as TAM, UTAUT and TOE.

Technology adoption frameworks identify the factors that influence the initial uptake of a technology but offer little analytical guidance on how to sustain capability development after adoption. DMMs, for their part, describe staged progression in organizational maturity without necessarily linking it to the determinants of adoption or to the readiness measures that promote engagement and use, where organizations use such frameworks at all.

For this reason, this study proposes that DMMs act as part of the connective tissue between adoption frameworks and DCT. By mapping the relevant determinants of adoption to the respective stages of maturity, organizations can better understand how readiness factors evolve and which dynamic capabilities they will need to move from one stage to the next. This integrated understanding allows maturity assessment to be more than a measure of an organization’s current status; it becomes a strategic map of the pathway to AI-enabled transformation, helping to ensure that technological investments stay aligned with the organization’s capability development objectives over time.

Dynamic Capabilities Theory

DCT provides a strategic understanding of how organizations adapt, transform and remain competitive, particularly in turbulent environments. DCT originated in Teece’s foundational work and was further developed and refined by Teece (2007). While the theory acknowledges that competitive advantage derives in part from an organization’s resource endowments, it asserts that in volatile markets advantage is created through the organization’s ability to sense opportunities, threats and emerging trends, to seize them in a timely and effective manner, and to reconfigure assets and resources so that the organization remains relevant. In AI adoption, dynamic capabilities concern not only whether or how a firm implements AI solutions but also whether or how it can develop those solutions over time.

The sensing capability involves identifying technological trends, changes in the market and future customer demand. In relation to AI, this means scanning the environment for advances in machine learning algorithms and applications (e.g., new natural language applications or ethical frameworks for AI) that could enhance business processes. The seizing capability entails mobilizing resources and processes to act on the opportunities identified through sensing. This includes launching pilot AI projects, forming strategic partnerships with technology providers and ensuring that resources, such as funding and skills, are available to deploy a viable pilot (Warner & Wäger, 2019). The last capability is reconfiguring: the organization’s ability to reshape existing assets, structures and processes so that AI is integrated into the core of operational and strategic decision-making. This often includes redesigning workflows, modifying roles and responsibilities and incorporating systems designed for continuous learning (Mikalef et al., 2019).

Within the digital transformation literature, dynamic capabilities are emerging as the mechanism that links the discrete actions associated with adoption to the capability growth associated with maturity progression over time (Warner & Wäger, 2019). Dynamic capabilities enable organizations to move beyond the adoption stage captured by TAM, UTAUT and TOE towards the sustained maturity described in DMMs, and so to realize the full potential of AI. Path dependence also matters: firms with a history of successfully adapting processes and integrating knowledge are better placed to scale AI capabilities promptly and effectively while balancing the ethical use of resources against social expectations of AI.

On the other hand, while DCT provides a strong conceptual basis for considering how organizations adapt, its current application in AI adoption research is limited in scope. Most studies of AI adoption evaluate preparation, readiness factors and initial implementation challenges without critically considering how sensing, seizing and reconfiguring capabilities develop, change or disappear across stages of maturity. This indicates a need to combine dynamic capabilities with both adoption and maturity frameworks in an integrated model that covers the entire lifecycle of AI-enabled transformation, from initial uptake to sustained AI-enabled strategic renewal.

In this research, DCT serves as the strategic enabler within the integrated framework. It provides the theoretical construct for how organizations progress through stages of digital maturity and reconfigure resources and capabilities in response to changing technological, market and regulatory environments. With dynamic capabilities embedded in the adoption process, organizations can plan for the future and ensure that decisions to adopt specific technologies form part of longer-term competitive positioning, treating AI not as a one-time tool but as a strategic asset to be developed over time.

Bringing Together Adoption Models, Readiness, Maturity and Dynamic Capabilities

The fragmented state of AI adoption research, where adoption models, readiness assessments, maturity models and dynamic capabilities are typically studied in isolation, inhibits a more comprehensive understanding of AI-enabled transformation. Technology adoption models, such as TAM, UTAUT and TOE, focus on the drivers of the initial decision to adopt (Venkatesh et al., 2003) and of early usage, but provide limited guidance on the evolution that follows adoption. Readiness models examine an organization’s preparedness for adoption (Jöhnk et al., 2020) but are generally static and fail to capture the learning and capability growth that follow adoption. DMMs track the developmental path of process and customer-facing digitalization capabilities (Kane et al., 2015; Westerman et al., 2014) but do not typically link clearly to the micro determinants of adoption behaviour or to readiness constructs. DCT (Teece, 2007; Warner & Wäger, 2019) can conceptually link adoption, maturity and capability growth, and ideally should be connected to all three; however, much of the DCT literature does not address AI.

An integrated framework that draws these notions together addresses the conceptual and empirical gaps across these streams of literature. In this framework, adoption models describe the organizational, technological and environmental influences on the decision to adopt AI. Readiness models describe the minimum viable capabilities, such as leadership buy-in, data capabilities, skills, integration capacity and governance, needed to support AI adoption. Maturity models chart the developmental path from initial adoption and experimentation towards scaled, organization-wide adoption. Dynamic capabilities (sensing, seizing and reconfiguring) support each of those maturity stages, ensuring that post-adoption capabilities stay aligned with the organization’s strategy in an uncertain and dynamic business climate.

Such an integrated model offers several benefits. First, it offers a temporal bridge between the initial point of adoption and the long-term building of capabilities, allowing organizations to conceptualize AI transformation as a lifecycle rather than an isolated event (Mikalef et al., 2019). Second, it presents an integrated structure along a diagnostic-to-strategic continuum, in which readiness models provide pre-adoption diagnostics, maturity models provide a post-adoption development framework and dynamic capabilities support the development of future capabilities in a changing technological landscape. Third, it holds theory and practice together more robustly, offering scholars a more holistic viewpoint and practitioners a plan to assess and manage AI transformation.

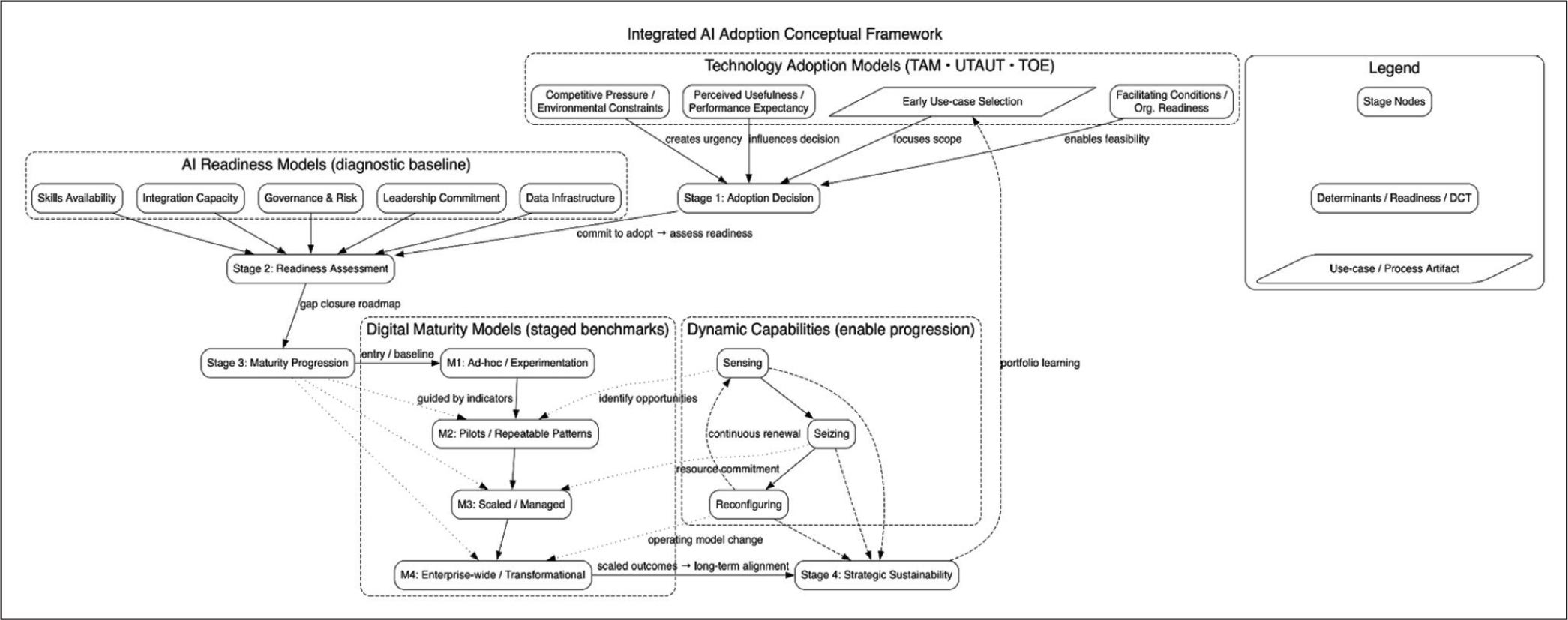

For the present article, this integrated model provides the conceptual backdrop. It situates AI adoption within a strategic pathway that starts with the influences on adoption, moves to the readiness level, continues through stages of maturity and ends with the exercise of dynamic capabilities. Framing the discussion this way moves beyond the tendency, highlighted earlier, to treat these constructs separately, and it responds to calls in the literature for multi-theoretical, longitudinal approaches to digital transformation. Figure 2 depicts the integrated framework that connects technology adoption models, digital maturity stages and dynamic capabilities into a unified pathway for AI-enabled transformation. This framework demonstrates how adoption determinants initiate organizational change, which is then strengthened through readiness building and maturity progression, culminating in sustained capability renewal. By aligning constructs from TAM, UTAUT, TOE and DCT, the model bridges short-term adoption intentions with long-term strategic adaptation. The integration highlights the interdependence between technological, organizational and environmental factors, illustrating how dynamic capabilities enable firms to continuously sense, seize and reconfigure resources in evolving digital environments.

Figure 2. Conceptual Diagram: Integration of Technology Adoption, Digital Maturity and Dynamic Capabilities Frameworks.

Methodology

This research uses a qualitative, theoretically informed methodology to examine how organizations can strategically reposition themselves in the wake of technological disruption by integrating technology adoption models, readiness models, digital maturity and dynamic capabilities. Because the objective is to develop an integrated framework rather than test a single theory, the methodology is exploratory. This approach follows research on digital transformation that develops a conceptual model and illustrates it through case analysis using secondary data (Jöhnk et al., 2020; Susanti & Salim, 2022). More specifically, the study responds directly to the call by Thordsen and Bick for theoretically integrated and empirically relevant frameworks that guide strategic decision-making in technologically complex environments, as opposed to the narrow confines of maturity assessments.

Data were drawn only from secondary sources, for breadth, consistency and reliability. Academic sources were journals indexed in Scopus or included in the ABDC journal list, published between 2018 and 2024, that examined AI adoption, organizational readiness, digital maturity and capability. Practitioner sources included reports from consulting firms such as McKinsey and Deloitte and from the World Economic Forum, which provided applied research knowledge. Selection criteria were contextual relevance to digital transformation strategy, theoretical and methodological transparency and usable data across types of engagement activity. As an added illustrative layer, the study integrated publicly available case data from corporate annual reports, industry whitepapers and verified news accounts, in line with standard practices of secondary data research.

The analysis was carried out in three stages. In the first stage, thematic coding, we sorted the reviewed literature into four overarching meta-categories: adoption influences, readiness needs, maturity indicators and dynamic capabilities. The coding was guided by, and checked against, the constructs of established models: the technology acceptance model (Davis, 1989), TOE, AI readiness models (Jöhnk et al., 2020) and dynamic capabilities (Teece, 2007). The second stage was a cross-comparative analysis to identify convergences and complementarities among the four areas, including synergistic relationships and their potential ordering. The final stage was a non-linear mapping exercise that arranged the four areas into a sequential–iterative model of organizational repositioning, covering readiness for technology, progression through maturity stages and enhancement of organizational capabilities.

Several validation techniques were applied during research and analysis to ensure methodological rigour. Triangulation across academic, industry and case data supported construct validity, while peer debriefings with subject matter experts improved conceptual clarity. Reliability was further supported by an explicit audit trail of the coding and synthesis, especially where categories were incorporated. In line with ethical research practice, we used only publicly available or appropriately licensed data and provided appropriate attribution. Generative AI tools were used to improve the flow and refinement of the writing but were not used in the analysis or in producing the research outputs. By combining conceptual synthesis with applied illustration, we both aligned with and enacted Thordsen and Bick’s call for integrative and actionable research into DMMs and their use.

Analysis

Thematic Synthesis of Literature

The review of prior research identifies four related themes that shape organizational responses to technological change: adoption determinants, readiness enablers, maturity indicators and dynamic capabilities. While prior research has examined these themes separately, this synthesis connects them as sequential and recurring phases in order to clarify the resources needed to sustain capabilities in digital transformation.

Adoption determinants, such as perceived usefulness, relative advantage, cost–benefit considerations and top management support, are the factors from which technology adoption begins (e.g., TAM, TOE and UTAUT). However, the observations of Troise and Kraus suggest that adopting organizations also respond systematically to environmental turbulence and wider regulatory forces. For example, organizations in the financial services sector adopt AI as a fast, low-cost means of meeting compliance and regulatory demands, while manufacturers respond to competitive pressure and the need for supply chain resilience. Adoption determinants are therefore not fixed; they change as organizations move along the transformation process towards operational maturity.

Empirical findings suggest that readiness is a multidimensional notion. It comprises material assets (e.g., cloud infrastructure and data integration platforms) and, equally, less tangible factors such as organizational culture and absorptive capacity (Jöhnk et al., 2020). Because of infrastructural bottlenecks, organizations in emerging markets often have to pursue incremental rather than holistic transformation.

Maturity indicators concern the extent to which digital technologies have been embedded, optimized and used for strategic advantage. Well-recognized models of digital maturity (Westerman et al., 2014) treat maturity either as a sequential journey through experimental, integrated and innovation-driven stages or as a binary state (embedded or not). Recent evidence shows that maturity is not strictly linear: organizations can and do regress or plateau because of management changes, shock events or technology transitions. In this sense, maturity is not an end state; rather, it involves the ongoing reassessment of organizational relevance in a state of dynamic equilibrium.

Dynamic capabilities connect readiness to sustained maturity by enabling organizations to reconfigure resources, redefine processes and rethink business models in response to technological discontinuities (Teece, 2007). In digitally turbulent environments, building routines for sensing opportunities, seizing them and realigning the necessary resources has been shown to significantly enhance resilience and long-term competitiveness.

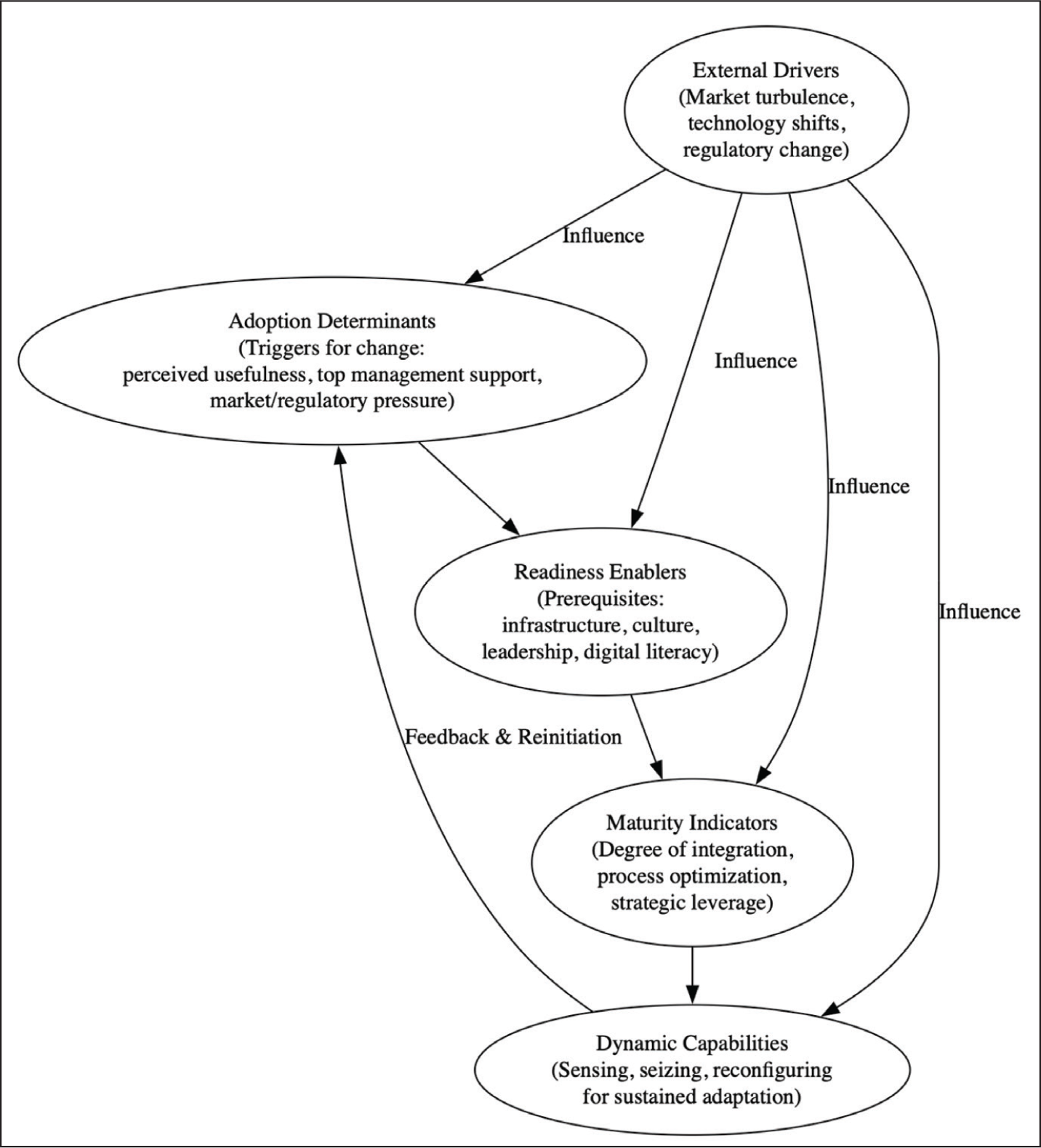

External influences, as outlined in Figure 3, play a pivotal role in shaping how organizations navigate technological turbulence and transformation. Factors such as regulatory pressures, market volatility, competitive intensity and stakeholder expectations interact to either accelerate or constrain AI adoption trajectories. The figure illustrates how these external dynamics intersect with internal readiness conditions (governance, data infrastructure and leadership vision) to influence strategic decision-making. By contextualizing external drivers within the broader adoption–maturity continuum, the model emphasizes that technological adaptation is not solely a function of internal capability but a negotiated response to evolving environmental complexities and digital ecosystem interdependencies. Studies of organizations adapting in the evolving post-pandemic context reported that dynamic capabilities enabled them to re-establish disrupted business activities faster than their competitors and gave them the chance to leapfrog those competitors in digital sophistication (Jöhnk et al., 2020).

External Drivers and Their Influence.

Cross-framework Integration

The analytical structure of this investigation is based on a deliberate meshing of three different but complementary theoretical perspectives: technology adoption models (e.g., TAM, UTAUT), DMMs and DCT. This is not simply theoretical layering but a structured meshing of perspectives that directly address the research questions for this study (RQ1–RQ3). RQ1, which investigates drivers of technology adoption, is underpinned by the adoption determinants found in TAM and UTAUT (e.g., perceived usefulness, perceived ease of use and facilitating conditions). RQ2, which investigates the organizational readiness for digital transformation, is based largely on maturity models that assess the organization’s progress from initial digitization to transformative digital leadership. RQ3, which investigates mechanisms of sustaining competitive advantage, draws on DCT’s focus on sensing, seizing and reconfiguring capabilities in volatile technology contexts (Warner & Wäger, 2019).

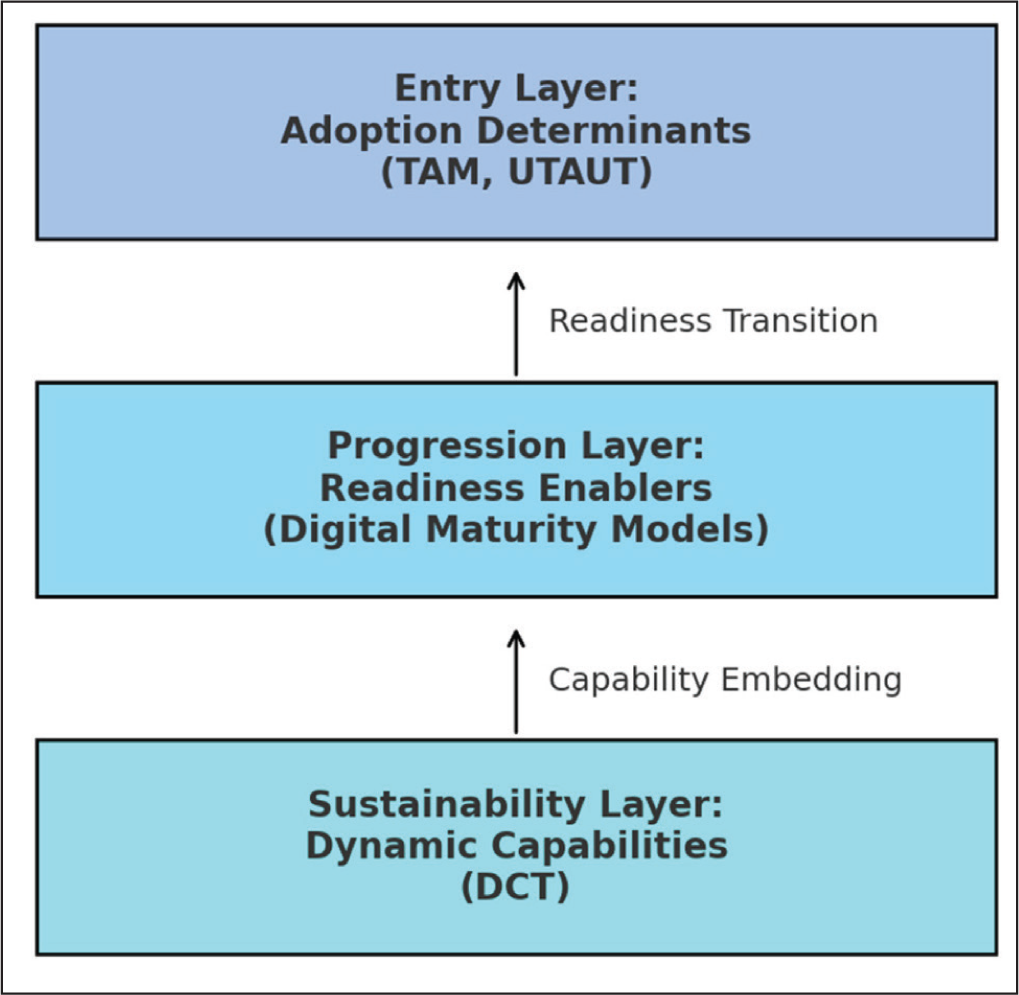

Recent research highlights the value of such integrated approaches. Loonam contended that maturity frameworks yield deeper analytical insight when used alongside adoption theories, since readiness assessments can be strengthened by behavioural adoption predictors. In a similar vein, Susanti and Salim (2022) argue that generative AI adoption is most effective when maturity stage assessments are paired with capability-based evaluations, so that readiness is not mistaken for resilience. This study contributes to that conversation by proposing a three-layered conceptual model in which adoption determinants form the entry layer, readiness enablers form the progression layer and dynamic capabilities form the sustainability layer. Transitions between the layers are treated as iterative rather than linear, because organizations return to earlier states during their transformation journey in order to recalibrate (Vial, 2019).

For analytical clarity, the conceptual model is presented as a diagram (Figure 2), which shows the interdependencies across the three frameworks. The centre of the diagram shows that readiness enablers (infrastructure investment, skill development and governance alignment) facilitate the relationship between adoption triggers and the long-term embedding of dynamic capabilities. The model is situated in the financial services industry because its two distinguishing characteristics, regulatory intensity and high rates of digital disruption, make it an ideal testbed for investigating adaptation under technological volatility.

Given this theoretical underpinning, the integrated framework will guide the case mapping in the following section. More precisely, the secondary-data cases will be coded against the three layers using organizational reports (which organizations are required to publish for compliance purposes), digital transformation assessments and sector-specific assessments. The mapping will identify adoption–readiness–capability pathways and sector-based variations in maturity plateaus, which will allow the theoretical synthesis to be empirically grounded (Bohorquez et al., 2023; Susanti & Salim, 2022).

Figure 4 illustrates the conceptual integration of technology adoption frameworks, DMMs and DCT into a cohesive analytical structure. The diagram visually represents how organizations transition from initial adoption triggers through readiness building towards sustained capability reinforcement. It emphasizes that digital transformation is neither linear nor isolated but a cyclical process of continual alignment between technology, strategy and capability development. By demonstrating the interdependencies among adoption determinants, maturity enablers and dynamic capabilities, Figure 4 underscores how sensing, seizing and reconfiguring mechanisms collectively sustain adaptability, enabling organizations to evolve strategically in volatile and technologically dynamic business environments. Furthermore, the cross-framework integration ensures that the study is more than a descriptive catalogue of adoption factors: it offers a unified process model that describes both forward and backward movement in an organization’s digital journey.

Conceptual Diagram.

Sectoral Application and Case Mapping

The empirical application of the tri-layered integration framework is set in the financial services domain, chosen because rapid technological disruption, regulatory pressure and high consumer trust intersect there. The domain has seen accelerated adoption of AI, automation and platformization, particularly in retail banking, payment systems and asset management. However, the digital transformation journey in this domain is often uneven, with maturity plateaus stemming from regulatory requirements, legacy IT architecture and a fragmented approach to capability-building (Bohorquez et al., 2023). Financial services organizations must innovate to avoid losing competitiveness while also maintaining the stability needed to comply with regulators. This tension makes the domain a relevant setting for assessing the viability of the integrated adoption–maturity–capability model.

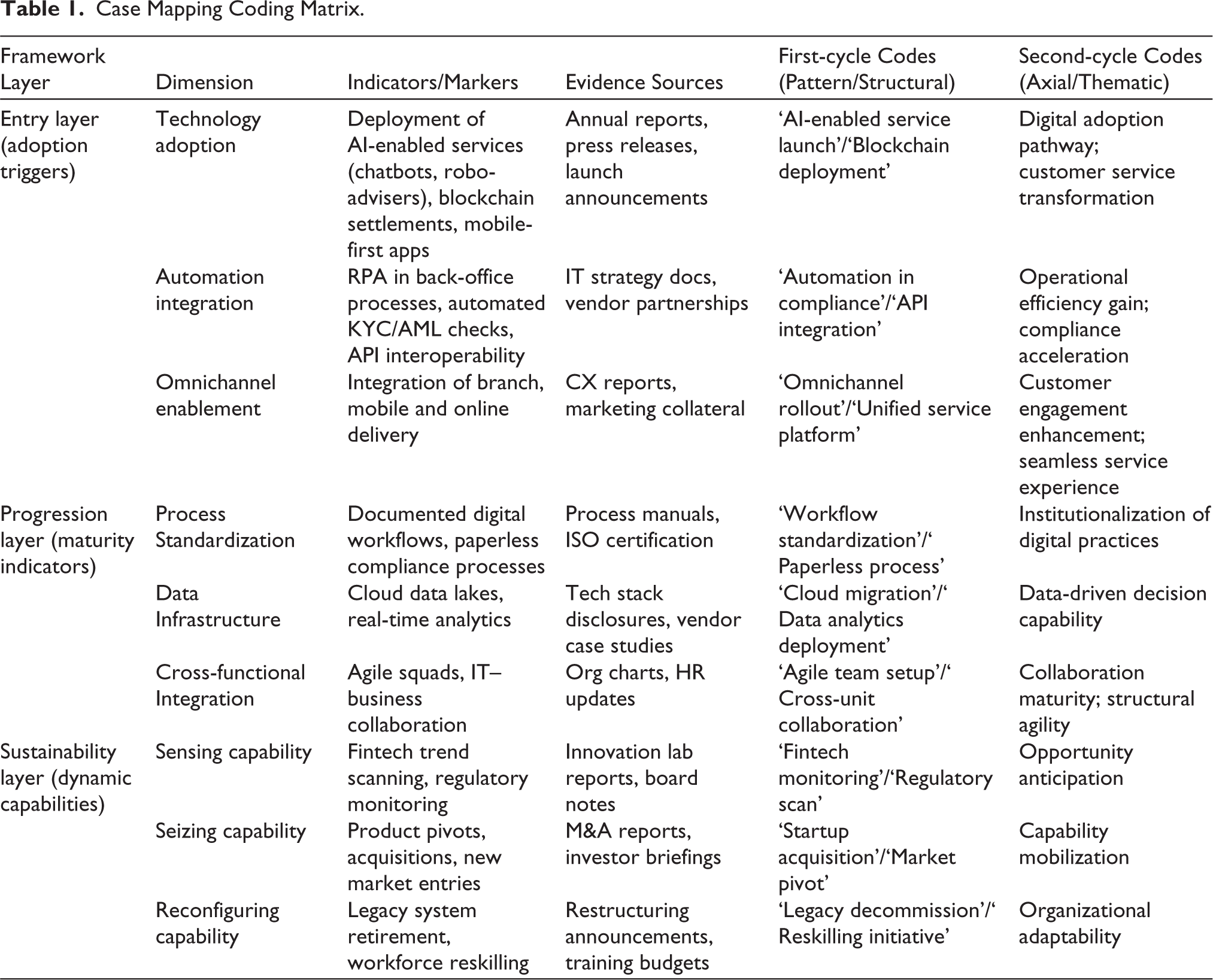

The case mapping process drew on secondary data from publicly available annual reports, investor presentations, regulatory documents and digital transformation white papers issued between 2019 and 2024 by organizations leading the sector in AI, automation and platformization. This was supplemented with reports from consulting firms such as Deloitte and McKinsey and with industry studies published in peer-reviewed outlets. Case selection aimed to ensure diversity in digital maturity, from early-stage digitalizers to digital leaders, and representation across organizational size and market geography (Susanti & Salim, 2022). The use of secondary sources aligns with common methodological approaches to qualitative case mapping in digitally oriented sectors (Yin, 2018).

The cases were coded systematically against the tri-layered framework from the Cross-framework Integration section. The entry layer was assessed through observable adoption triggers, for example, launching AI-enabled products, integrating automation into workflow and back-end functions and adopting an omnichannel service platform. The progression layer was mapped through maturity indicators, such as digitally standardized workflows, cross-functional integration and semi-standardized IT analytics infrastructure. The sustainability layer focused on evidence of robust dynamic capabilities: strategic pivots, use of open ecosystem partners and internal restructuring to support rapidly changing technology development. The coding followed a directed content analysis methodology, in which predefined categories from the integrated framework guided the extraction and coding of data segments. Patterns were identified through cross-case comparison, which revealed adoption–readiness–capability pathways as well as differences arising from sectoral, regulatory and cultural diversity.

One observation is that leading global financial technology organizations have moved rapidly through the maturity stages, while regional banks remain in a hybrid state (e.g., higher adoption intensity but limited embedding of capability). Table 1 presents the case mapping coding matrix that operationalizes the tri-layered integration framework across adoption, readiness and capability dimensions. It systematically categorizes data from secondary sources (annual reports, regulatory filings and industry analyses) into three hierarchical layers: entry (adoption triggers), progression (maturity indicators) and sustainability (dynamic capabilities). Each layer is further detailed through specific markers such as technology deployment, process standardization and capability reinforcement. The table demonstrates how patterns derived from these indicators reveal organizational pathways towards AI-enabled transformation, thereby linking theoretical constructs with empirical evidence to illustrate sectoral variations in digital maturity and adaptive capability development.

Case Mapping Coding Matrix.
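The directed content analysis described above can be illustrated with a minimal sketch. It assumes that each layer of the framework is represented by a short list of marker phrases and that a text segment is assigned to every layer whose markers it contains. The marker phrases, the function names `code_segment` and `coding_matrix` and the sample case are hypothetical; the study does not publish its codebook, and the actual coding was carried out by researchers rather than by keyword matching.

```python
from collections import Counter

# Hypothetical marker phrases per layer, loosely based on the example
# indicators named in the text. This is NOT the study's codebook.
LAYER_MARKERS = {
    "entry": ["ai-enabled product", "workflow automation", "omnichannel"],
    "progression": ["standardized workflow", "cross-functional", "analytics infrastructure"],
    "sustainability": ["strategic pivot", "ecosystem partner", "restructuring"],
}


def code_segment(segment: str) -> list[str]:
    """Return every layer whose marker phrases appear in the segment."""
    text = segment.lower()
    return [
        layer
        for layer, markers in LAYER_MARKERS.items()
        if any(marker in text for marker in markers)
    ]


def coding_matrix(cases: dict[str, list[str]]) -> dict[str, Counter]:
    """Count, for each case, how many segments were coded to each layer."""
    matrix: dict[str, Counter] = {}
    for case, segments in cases.items():
        counts: Counter = Counter()
        for segment in segments:
            counts.update(code_segment(segment))
        matrix[case] = counts
    return matrix


# Illustrative (invented) segments for a single hypothetical case.
sample = {
    "Bank A": [
        "Launched an AI-enabled product for retail customers.",
        "Announced internal restructuring and a new ecosystem partner.",
    ]
}
matrix = coding_matrix(sample)
```

A matrix of this kind makes cross-case comparison straightforward: a case with many entry-layer segments but few sustainability-layer segments would correspond to the hybrid state described for regional banks.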

This sectoral mapping serves two purposes. First, it provides empirical support for the non-linear progression proposed in the tri-layered model, in which organizations can cycle back through stages to realign and develop strategy. Second, it gives context to the global application of the framework by showing how regulatory, infrastructural and competitive factors influence transformation trajectories across sectors. Additional insights from this process informed the comparative analysis in the Discussion section, where findings were compared with other digitally oriented sectors to test the model’s wider applicability.

Sequential–Iterative Process Model Development

The process model translates the thematic findings into a staged—albeit explicitly iterative—structure that provides an explanation of how organizations go from initial AI adoption to sustained, capability-led advantage. At a conceptual level, our model integrates a temporal spine (stages of activity), with adaptive feedback loops (recalibration cycles), so that short-term decisions around adoption build towards long-term strategic renewal rather than one-off initiatives, in accordance with process views of digital transformation and capability development (Teece, 2007; Vial, 2019).

Stage A: Adoption Triggers (Entry)

The journey commences when a set of triggers (perceived usefulness, performance expectancy, environmental pressure and enabling conditions) is strong enough to prompt action. At this initial stage, TAM, UTAUT and TOE constructs dominate: decision-makers weigh perceived value gains, integration effort and contextual constraints as they select initial use cases (e.g., customer-facing AI and RPA in compliance) that promise high impact at low risk, on the premise that an emergent mindset will accommodate any changes required along the way. Key governance decisions concern scope (pilot vs enterprise), architecture (stand-alone vs API-first) and sponsorship (functional vs C-suite), which together set the early trajectory of the transformation (Davis, 1989; Venkatesh et al., 2003).

Stage B: Readiness Building (Enablement)

After creating a foothold, organizations need to turn intent into capacity. Readiness building translates activity and investment into data pipelines, platform choices, talent upskilling and responsible AI controls. The question changes from ‘can we adopt?’ to ‘can we scale and govern?’ The focus is now infrastructural (cloud/data), organizational (roles and squads) and normative (risk/ethics), which establishes the conditions under which AI stops being a point solution and becomes a managed capability. Empirical findings suggest that organizations progress through this phase by funding data quality and analytics, developing forward-looking workforce capabilities and clarifying governance, while also removing deep-seated frictions to scaling (Jöhnk et al., 2020; Mikalef et al., 2019).

Stage C: Maturity Progress (Integration)

Once readiness is achieved, organizations progress through maturity plateaus, moving from wins localized in pockets of the organization to cross-functional integration and then to enterprise orchestration. Maturity is characterized by standardized digital workflows, common data assets and product–platform thinking that enables reuse and speed. There is no linear ladder to maturity: organizations can settle on a plateau while they optimize controls and systems for stability before moving upward. Maturity can be measured by assessing integration depth, the degree of decision automation, time to deploy and the firm’s connectivity to its surrounding ecosystem, which turns ‘maturity’ into a quantifiable measure of progress (Chanias & Hess, 2016; Jöhnk et al., 2020; Schumacher et al., 2016).

Stage D: Capability Reinforcement (Renewal)

The locus of sustainable advantage lies in routinizing cycles of sensing, seizing and reconfiguring, so that the organization continuously aligns technological possibility with strategic significance. Sensing institutionalizes environmental scanning and experimentation; seizing prioritizes and scales bets using a funded portfolio logic; and reconfiguring retires existing assets, realigns the organization and refreshes skills. This is where ‘digital maturity’ becomes dynamic maturity: organizations do not simply acquire capabilities; they renew them to protect against obsolescence and to sustain successive waves of value creation (Teece, 2007; Warner & Wäger, 2019).

Transition Logic and Feedback Loops

Transitions across stages are governed by gating conditions. A→B requires proof of value and emerging architectural fit; B→C requires evidence that the data and the operational model are scalable; C→D requires evidence that appropriate governance boundaries exist for investment portfolio decisions and that there is capacity for reconfiguration. Two feedback loops are explicit. First, corrective loops trigger a regression from C or D back to B when audit, risk or performance signals expose readiness gaps (e.g., data lineage or model risk). Second, exploratory loops route back to A from any point in the process to seed new use cases when sensing reveals emergent opportunities. This dual-loop design helps to explain the non-linear nature of our observations and prevents maturity from ossifying into static checklists (Mikalef et al., 2019; Vial, 2019; Warner & Wäger, 2019).
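The transition logic can be expressed as a small state machine, sketched here under stated assumptions. The sketch tracks a single initiative rather than a whole organization, treats each gating condition as a named piece of evidence that is either present or absent, and gives the corrective loop priority over the exploratory loop. The evidence labels and the function name `next_stage` are hypothetical; the text specifies the gates and loops but not how they are operationalized.

```python
from enum import Enum


class Stage(Enum):
    A = "adoption triggers"
    B = "readiness building"
    C = "maturity progression"
    D = "capability reinforcement"


# Gating conditions for each forward transition, paraphrased from the text.
# The string labels are illustrative assumptions.
GATES = {
    Stage.A: ("proof_of_value", "architectural_fit"),
    Stage.B: ("scalable_data", "scalable_operational_model"),
    Stage.C: ("portfolio_governance", "reconfiguration_capacity"),
}
FORWARD = {Stage.A: Stage.B, Stage.B: Stage.C, Stage.C: Stage.D}


def next_stage(
    current: Stage,
    evidence: set[str],
    readiness_gap: bool = False,
    new_opportunity: bool = False,
) -> Stage:
    """Return the stage an initiative moves to, given the available signals."""
    # Corrective loop: audit, risk or performance signals send C or D back to B.
    if readiness_gap and current in (Stage.C, Stage.D):
        return Stage.B
    # Exploratory loop: sensing a new opportunity seeds a use case at A.
    if new_opportunity:
        return Stage.A
    # Forward move only when every gating condition for this stage is met.
    gate = GATES.get(current)
    if gate is not None and all(condition in evidence for condition in gate):
        return FORWARD[current]
    return current
```

For example, `next_stage(Stage.A, {"proof_of_value"})` leaves the initiative in Stage A because architectural fit has not yet been shown, whereas `next_stage(Stage.D, set(), readiness_gap=True)` returns it to Stage B.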

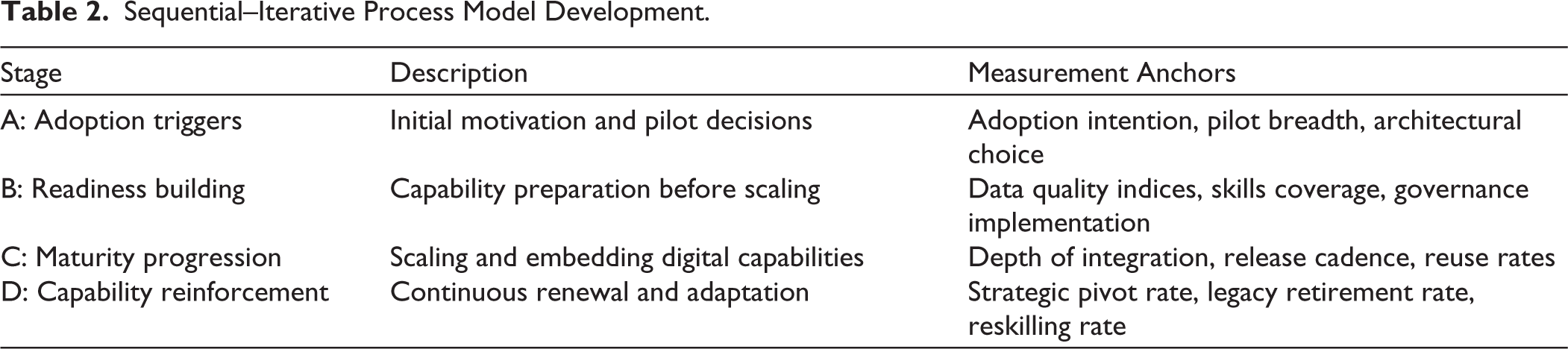

Operationalization and Measurement Anchors

Table 2 outlines the sequential–iterative process model that explains how organizations transition from initial AI adoption to sustained capability renewal. It delineates four interrelated stages—adoption triggers, readiness building, maturity progression and capability reinforcement—each associated with specific measurement anchors such as data quality indices, integration depth and reskilling rates. The table serves as both a diagnostic and an evaluative tool, enabling researchers and practitioners to assess organizational progress along the adoption–maturity continuum. By translating theoretical constructs into measurable indicators, Table 2 reinforces the model’s practical applicability for tracking digital transformation trajectories and ensuring long-term strategic adaptability.

Sequential–Iterative Process Model Development.

These markers make the model auditable and comparable across cases, converting a conceptual pathway into a performance-linked operating system (Chanias & Hess, 2016; Jöhnk et al., 2020; Warner & Wäger, 2019).

Validation of Findings

To enhance the reliability and credibility of the derived process model and thematic interpretations, we used a multifaceted approach to validation informed by methodological triangulation and theory–data convergence, both widely embraced in qualitative information systems and digital transformation studies (Eisenhardt & Graebner, 2007; Vial, 2019; Yin, 2018). Because the study was based on secondary data, rigour was established through structured cross-verification rather than statistical inference.

Cross-source Triangulation

Each coded data point from annual reports, regulatory filings, analyst briefings or industry case repositories was verified against at least one independent source. For example, reported ‘AI-driven supply chain optimization’ programmes were verified against both investor presentations and third-party analyst coverage. Although no single verification step eliminates the risk inherent in self-reported corporate narratives, cross-referencing multiple sources strengthens the factual basis of the observed transformation processes.

Coder Consistency Checks (Inter-coder Reliability)

Using Saldaña’s two-cycle methodology, the two researchers independently coded the data before holding code-reconciliation sessions to discuss differences in category placement. Inter-coder agreement exceeded 0.85 (Cohen’s κ) for all first-cycle pattern codes, which provided a degree of interpretative consistency in mapping the data to the tri-layered framework.
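For readers unfamiliar with the statistic, Cohen’s κ corrects the observed agreement between two coders for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the proportion of segments coded identically and p_e is the chance agreement implied by each coder’s category frequencies. The following is a minimal sketch of that calculation; the function name `cohens_kappa` and the sample codes are illustrative and are not the study’s data.

```python
from collections import Counter


def cohens_kappa(coder_a: list[str], coder_b: list[str]) -> float:
    """Cohen's kappa for two coders who labelled the same segments."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of segments.")
    n = len(coder_a)
    # Observed agreement: share of segments given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions per category.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Both coders used one identical category throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Invented codes for ten segments; the coders disagree on one segment.
coder_a = ["entry", "entry", "progression", "progression", "sustainability",
           "sustainability", "entry", "progression", "sustainability", "entry"]
coder_b = ["entry", "entry", "progression", "progression", "sustainability",
           "sustainability", "entry", "progression", "sustainability", "progression"]
kappa = cohens_kappa(coder_a, coder_b)
meets_threshold = kappa > 0.85  # threshold reported in the text
```

Because κ discounts chance agreement, it is a stricter criterion than raw percentage agreement, particularly when one category dominates the coding.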

Theoretical Congruence

We carefully analysed the themes and transitions in the processes of our case organizations against established constructs from DCT (Teece, 2007), DMMs (Chanias & Hess, 2016; Schumacher et al., 2016) and technology adoption theories (Venkatesh et al., 2003). This was our conceptual anchoring check: any aspects of our findings that were inconsistent with the theory were revisited to check if they were either context-specific deviations from the theory, emergent contributions to the theory or simply coding artefacts.

Negative Case Analysis

In accordance with qualitative best practice, we closely examined cases that did not match the emerging process model, such as organizations that jumped from adoption to later stages without a discernible readiness phase. Studying these outliers yielded rich insights into alternative adaptation logics, such as relying heavily on pre-existing partner ecosystems or regulator exemptions to shortcut standard capability pathways (Yin, 2018).

Stakeholder Review for Plausibility

No primary interviews were conducted for validation purposes; however, we sought interpretive validation through targeted engagement with subject matter experts in AI-enabled transformation. A selection of findings was presented at a closed practitioner–academic roundtable, where we received useful feedback on the plausibility of the stage definitions, the logic of the feedback loops and the intent of the capability classifications. Suggestions from this review (such as including regulatory compliance as a readiness gate) were incorporated into the final model.

Iterative Refinement

Finally, the process model was refined iteratively through cross-case synthesis. It was revisited in two iterations: the first after the initial synthesis that immediately followed completion of each case, and the second after the thematic identification process, which rested primarily on the literature. Each iteration evaluated the representational adequacy of the model, checking that the logic and content of the final representation were (a) empirically valid against the coded evidence and (b) theoretically consistent with extant scholarship, which strengthened both its academic contribution and its practical applicability.

Through these validation practices, our findings achieve credibility (confidence in accuracy), transferability (generality to like contexts) and dependability (continuity of interpretation over time), which meet the quality standards expected of qualitative process research on digital transformation in leading ABDC and FT50 journals (Yin, 2018).

Discussion

The results of this study advance the academic literature on organizational adjustment to technological change by demonstrating that AI-enabled digital transformation is a sequential–iterative process rather than an ordered, linear maturity process. The proposed model, comprising adoption triggers, readiness building, maturity progression and capability reinforcement, draws on several theoretical streams, reconciling technology adoption theories such as TAM and UTAUT with frameworks of digital maturity and dynamic capabilities. In doing so, it recasts adoption not as a single decision point but as the precursor to a fuller journey of capability development. Whereas TAM and UTAUT have focused mainly on the determinants of intent to adopt (Davis, 1989; Venkatesh et al., 2003), our findings highlight that sustainable advantage can be gained only if adoption triggers are linked to a systematic, structured readiness-building process. This raises the potential for hybrid models that combine pre-adoption drivers with post-adoption capability development.

In advancing the digital maturity literature, this study treats maturity as a dynamic state with potential regressions and explorations, a view that has been largely absent from traditional maturity models (Chanias & Hess, 2016; Schumacher et al., 2016). The corrective and exploratory paths reinforce the path-dependent and non-linear nature of digital transformation (Vial, 2019). Additionally, the analysis adds depth to DCT by connecting Teece’s (2007) core components of sensing, seizing and reconfiguring to the transformation phases: readiness building links to seizing, maturity development connects to routinized reconfiguring and capability reinforcement relates to the ongoing renewal of sensing capabilities.

From an applied managerial perspective, the model offers organizational leaders a navigational framework for guiding AI-enabled transformation, reducing the risk of premature scaling or capability erosion. The staged logic allows an organization to gauge its current position with clarity and to assess readiness shortfalls before progressing. This diagnostic capability also helps an organization to allocate resources so as to maximize near-term impact while maintaining long-term viability and resilience. The feedback loops legitimize the decision to pause or regress strategically when the organizational conditions for readiness do not permit forward movement. The readiness-building phase is also a vital checkpoint for reducing risk during transitions, since shortcomings in resource governance, data quality and system integration readiness, which are common in tightly regulated industries, could impede transformation. Following readiness building, the capability reinforcement stage offers a sensible approach to institutionalizing renewal: the organization takes stock of its AI innovations and decides where to reinvest in reskilling or retire legacy systems. This prevents capabilities from becoming rigid and exposing the organization to decline in the face of disruptive technologies.

The evidence base covered a range of geographic and sectoral contexts, and the model is abstract enough to be context-neutral while still offering some operational specificity. However, transferability requires consideration of sector-specific contingencies. For instance, in highly regulated industries (e.g., healthcare and finance), readiness building may be the primary component of the transformation sequence, given the complexity of compliance obligations. Digitized industries may have shorter readiness-building processes but face immediate challenges in moving to the capability reinforcement phase as they respond to rapid market changes (Jöhnk et al., 2020; Mikalef et al., 2019). The model’s global applicability is enhanced because its adoption triggers (competitive pressures, client expectations and regulatory compliance) are similarly relevant in developed and emerging markets. The relative weighting of each stage is nonetheless likely to vary with infrastructure maturity, talent availability and institutional context.

These insights also provide opportunities for future research. Longitudinal studies could empirically test the temporal logic of the model by tracing organizations through multiple transformation cycles and collecting data on the duration of each stage, the frequency of regression and the level of renewal. Sectoral comparison studies could refine the gating conditions and performance measures for specific sectors. Simulation-based studies could vary the feedback loops to examine how their level and intensity mediate transformation velocity and sustainability. In addition, combining a programme of longitudinal case studies with a quantitative maturity assessment would provide larger-scale statistical evidence on the link between readiness investment and efficiency gains.

The study used curated secondary data sources, which, while useful, cannot capture aspects of the internal organizational setting. In particular, informal governance practices, flows of tacit knowledge and the emergence of cultural resistance may also shape or impede transformation. As a result, the findings are analytically generalizable (Yin, 2018) but not statistically generalizable. These limitations underline the need for primary data collection in the next phases of research, both to capture explanatory richness and to test the conceptual robustness of the model.

Conclusion

This research contributes to both theory and practice on how organizations navigate technological change in an era of AI-enabled digital transformation. By reconceptualizing transformation as a sequential–iterative process (i.e., adoption triggers, readiness building, maturity progression, capability reinforcement), the research bridges gaps between technology adoption theories, digital maturity frameworks and the dynamic capabilities literature. The framework is not a linear maturity model implying one-way upward movement; it acknowledges non-linear progress in which organizations may return to earlier stages of capability development to address capability deficits or to respond to changes in market and regulatory environments.

From a theoretical perspective, the model advances adoption research by treating adoption as a starting point for building capabilities, extending beyond the determinants of intent laid out in TAM and UTAUT. It also reframes digital maturity as a dynamic state oriented in part towards exploratory learning, with structural feedback loops that emphasize corrective and emergent learning, and it provides a process-level mapping of sensing, seizing and reconfiguring capabilities across the whole transformation journey. This integration gives scholars a vocabulary and framework for studying technological adaptation in volatile, uncertain, complex and ambiguous contexts.

For practitioners, the stages and feedback mechanisms serve as a navigation tool that helps avoid two operational pitfalls: scaling too soon and losing adaptability through the erosion of capabilities. Gating conditions, such as data readiness, governance capability maturity and integration preparedness, provide a sound basis for decisions about transformation risk and help preserve strategic adaptability over the long term. In particular, capability reinforcement provides a roadmap for institutional reinvention that turns early-stage gains from AI adoption into resilient, future-fit capability.

Globally, the framework is applicable to a wide range of contexts while respecting sector-specific contingencies. Its structure allows organizations, whether digitally native firms seeking to move quickly up the digital maturity curve or traditional incumbents in complex compliance environments, to address gaps in compliance with data regulation and privacy laws while pursuing guided growth. The adoption triggers (competitive, client and regulatory pressures) remain the same across geographies, while sectoral and institutional differences present opportunities for local sequencing and resource allocation.

Ultimately, this research is a starting point for empirical validation through longitudinal and comparative studies and simulation-based modelling of transformation pathways. The research is limited by its reliance on secondary data, which restricts insight into the internal workings of organizations; however, the conceptual clarity, process mapping and theoretical integration provided here offer a valuable contribution to academia and a practical roadmap for leaders engaging with the complexities of AI-enabled digital transformation. The study not only responds to timely academic and managerial interests but also supports future knowledge development by suggesting a more nuanced and iterative approach to digital evolution, particularly in an era of constant technological change.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.