Abstract

Purpose:

Artificial intelligence (AI) algorithms created with machine and deep learning and combined with an extended reality (XR) headset could help train physicians in new technology without requiring an instructor to be present.

Materials and Methods:

A partial nephrectomy phantom was created from 3D-printed casts, which were designed from an anonymized patient’s computerized tomography scan. The casts were filled with water-based polymers and assembled to create the partial nephrectomy phantom. The students wore a custom-designed XR headset, to which instructions were streamed to train and observe them while they placed a bulldog clamp on the phantom’s renal artery. Machine learning models were developed from four states (clamp on artery, vein, ureter, and no structure) and used to create the educational system for instructorless surgical training (ESIST). A customized deep learning architecture was deployed in real time to analyze the video feeds and determine user progress. The computer assigned one of four classes based on the structure clamped, allowing it to simulate real circumstances, provide appropriate instructions, and track errors. Seventeen participants completed a 19-question survey on educational value and usability after performing the procedure.

Results:

High algorithm performance was confirmed using confusion matrices, with an accuracy of 99.91% for placement of the bulldog clamp on the renal artery. Regarding the value of ESIST, survey responses were strongly disagree, 1 (0.3%); disagree, 46 (15.7%); agree, 139 (47.4%); and strongly agree, 107 (36.6%). When the responses were converted to a 2-point scale, favorable responses averaged 84% across the 19 questions (range 47.5–100%).

Conclusions:

We introduced an AI system to train surgeons to place a clamp on the renal artery using a kidney phantom while wearing an XR headset. This investigation suggests AI could assist in surgical education, potentially offer a means to monitor procedural progress, and provide a pathway for autonomous learning.

Introduction

Deep neural networks (DNNs) are a class of computational models central to a subfield of artificial intelligence (AI) known as deep learning. In the medical field, DNNs have been applied to interpret radiographical images, identify pathological features, and analyze vast datasets to uncover clinically relevant insights. 1 Such capabilities have already been harnessed in medical AI to aid clinicians in managing oncological diseases. Notably, DNNs have been employed to identify gene expression signatures associated with bladder cancer, predict disease recurrence following prostatectomy, and assess urinary continence recovery, among other applications.2–7

Surgical education traditionally requires a trainee to gain experience by working directly with a skilled instructor. Becoming competent with complex medical devices does not always fit the traditional educational framework of “See One, Do One, Teach One.”8,9 Surgical instruction of residents has traditionally required their presence in the operating room (OR), an approach that has been described as “education by random opportunity” and that can result in inconsistent skill acquisition. Institutions have also added simulation to further enhance residents’ operative skills.10,11 While the availability of proctors can limit both simulation and patient-based procedural training, other factors can also affect the adequacy of the resident experience. Training can be diminished by an instructor’s own limitations and experience. In a review of how accurately general surgeons assess their own technical skills, Rizan et al. found that self-assessment improved with increasing experience, practitioner age, and the use of video playback but was impaired by stressful learning environments, unfamiliarity with assessment tools, and advanced surgical procedures. 12

The availability of large surgical video libraries has created the opportunity to use AI to identify crucial steps in procedures, eliminating the need to review entire videos exhaustively. 13 This capability, however, falls short of the complete algorithms needed to support an autonomous learning platform.

Extended reality (XR), delivered by a headset, conveys instructions and video content to the trainee’s eyes while keeping the trainee’s hands free during the procedure. 14 In theory, a proctor can be replaced by algorithms that deliver automated content and live instructions to the student. Coupling real-time XR with a first-person and/or laparoscopic camera, supplemented by other imaging, allows the system to continuously monitor the case and provide feedback and corrective measures to the student.

We sought to develop an educational system for instructorless surgical training (ESIST) that couples deep learning with an XR headset to create a closed-loop learning platform. We hypothesized that such a system could eliminate the need for a proctor to be present to train and observe a student. To test this hypothesis, ESIST was developed using a partial nephrectomy phantom to teach students to clamp the renal artery during a laparoscopic partial nephrectomy. This investigation adhered to the standardized reporting of machine learning applications in urology (STREAM-Uro) framework. 15

Materials and Methods

Hardware development

Several hardware components were developed for this investigation: kidney phantoms, an XR headset, and a computer with drivers and boards to integrate and run both the hardware and the software.

Kidney phantom

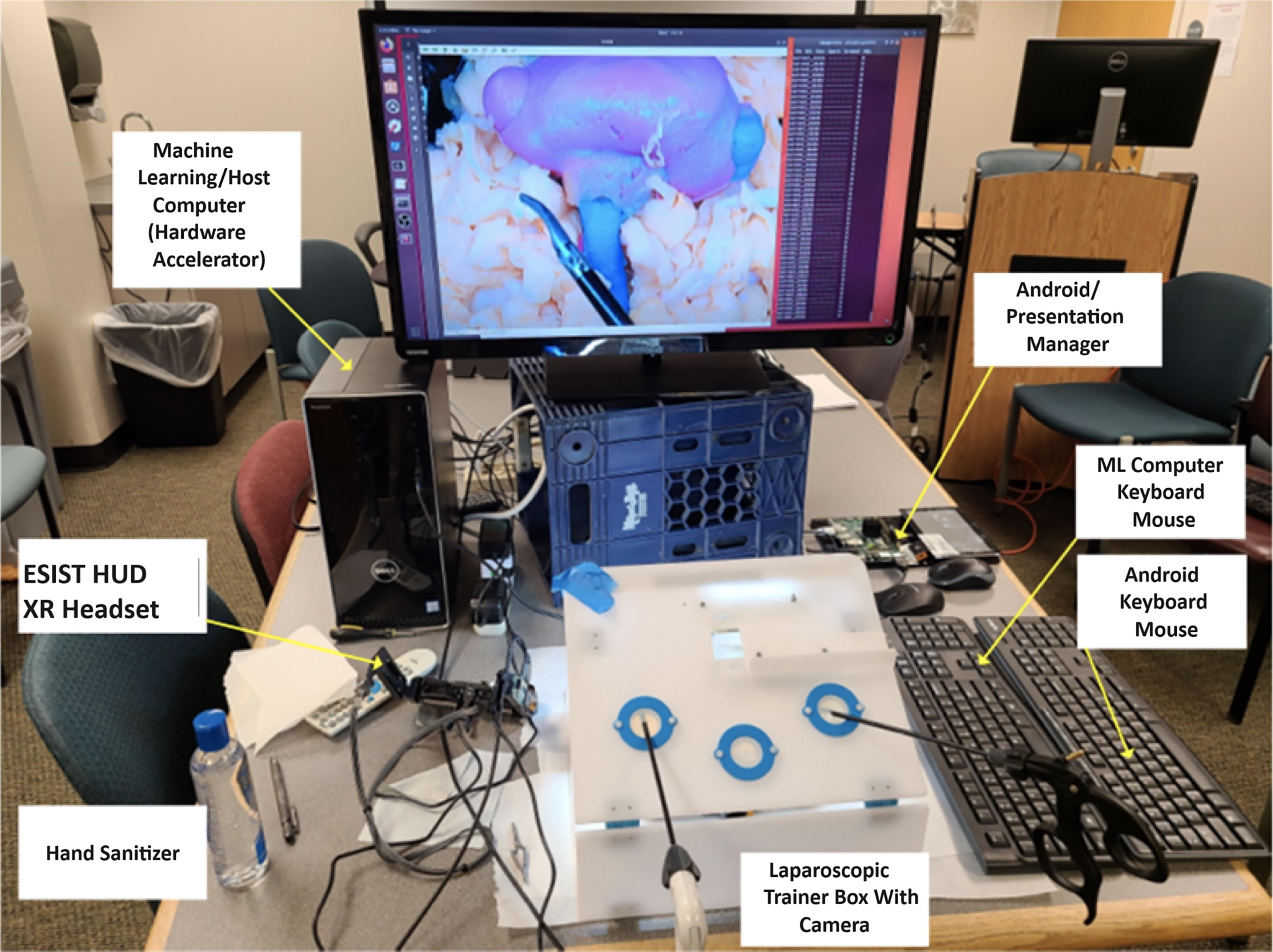

A kidney phantom was created from 3D-printed casts, which were designed from an anonymized patient’s computerized tomography (CT) scan. The casts were filled with water-based polymers and assembled to create a partial nephrectomy model with kidney tumors. Before proceeding with tumor excision, the students first exposed the renal hilum, separated the renal artery from the vein and ureter, and then placed a bulldog clamp across the artery. Clamp time was limited to 20 min to simulate the time limit used to prevent ischemic damage to the kidney. Various formulations of water-based polymers were evaluated to validate the model, including measurement of Young’s modulus and comparison of bleeding and suturing with fresh porcine kidneys. 16 The phantom consisted of renal parenchyma, upper and lower pole tumors, perinephric fat, a collecting system with pelvis and ureter, the renal artery, and the renal vein. To properly train the software, all tissues had lifelike color, texture, and deformability. The kidney phantom was placed in a standard laparoscopic training box that contained a camera and illumination (Fig. 1).
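Young’s modulus is the slope of the stress-strain curve in its linear region. The sketch below shows how a compression measurement reduces to that single number with a least-squares fit; the function name, sample geometry, and force-displacement values are hypothetical and are not the phantom data referenced above.

```python
def youngs_modulus(forces_n, displacements_m, area_m2, length_m):
    """Least-squares slope of stress (F / A) versus strain (dL / L0), in pascals."""
    stress = [f / area_m2 for f in forces_n]
    strain = [d / length_m for d in displacements_m]
    n = len(stress)
    mean_x = sum(strain) / n
    mean_y = sum(stress) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(strain, stress))
    sxx = sum((x - mean_x) ** 2 for x in strain)
    return sxy / sxx

# Hypothetical sample: 10 mm tall, 1 cm^2 cross-section, loaded in its linear range.
forces = [0.0, 0.05, 0.10, 0.15, 0.20]                  # newtons
displacements = [0.0, 0.0005, 0.0010, 0.0015, 0.0020]   # metres
E = youngs_modulus(forces, displacements, area_m2=1e-4, length_m=0.01)
print(f"E = {E / 1000:.1f} kPa")
```

Fitting a slope over several points, rather than dividing a single stress by a single strain, reduces the influence of any one noisy reading.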

Fig. 1. ESIST. A laparoscopic training box served as the training environment in which the student learned to place a bulldog clamp on the renal artery before a partial nephrectomy. The kidney phantom was created in the lab. Android Presentation Manager: see text. The AR headset is referred to as the XR headset in the text. AR, augmented reality; DNN, deep neural network; ESIST, educational system for instructorless surgical training; HUD, heads-up display; XR, extended reality.

Extended reality headset

The headset, equipped with a first-person camera (RealSense, Intel, Santa Clara, CA), allowed the student to keep both hands free while receiving instructions and served as a substitute for an instructor standing next to the student. The XR headset was designed with customized high-definition multimedia interface (HDMI) boards and an optical engine containing high-resolution see-through reflective waveguides (OE Vision, Lumus Ltd., Ness Ziona, Israel). The HDMI boards received signals through a micro-HDMI connector and converted them to display serial interface (DSI) signals with an HDMI-to-DSI bridge. The waveguide application-specific integrated circuit converted the DSI signals to digital video timing and presented them to the optical waveguide. Optical engine characteristics included 1920 × 1080 resolution per eye; a field of view of 32.7° ± 0.5° horizontal and 18.4° ± 0.5° vertical; a reflective liquid crystal display; 4,500 nit/W luminance; and ≥70% see-through transmission. The platform was optimized for the surgical workspace to provide the functionality this application requires in the OR.
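A common way to compare head-mounted displays is average angular resolution in pixels per degree. Assuming pixels are spread evenly across the nominal field of view (ignoring the ±0.5° tolerance and any optical distortion), the specifications above work out as follows.

```python
# Per-eye specifications from the text.
H_PIXELS, V_PIXELS = 1920, 1080
H_FOV_DEG, V_FOV_DEG = 32.7, 18.4   # nominal values; the ±0.5° tolerance is ignored

# Average angular resolution, assuming pixels are distributed uniformly over the field of view.
h_ppd = H_PIXELS / H_FOV_DEG
v_ppd = V_PIXELS / V_FOV_DEG
print(f"horizontal {h_ppd:.1f} px/deg, vertical {v_ppd:.1f} px/deg")
# The 32.7:18.4 field of view is close to 16:9, so both axes give about 58.7 px/deg.
```

Because the field-of-view aspect ratio nearly matches the 16:9 pixel grid, horizontal and vertical sampling are essentially equal, so overlaid text is not stretched along either axis.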

The optical engine received video feeds from miniature projectors assembled into an in-house printed frame. The transparent display provided an unobstructed view of the surgical field while allowing prompts or multimedia content to be overlaid in the user’s view (Fig. 2).

Fig. 2. XR headset developed for use with the ESIST program. Text and video instructions from the gaming computer were streamed to the see-through optics of the headset before the procedure started. The instructions appeared as an overlay on the video monitor. When the procedure began, the student looked at the monitor while step-by-step text appeared in the see-through optics. If the student performed a step incorrectly (e.g., placing the clamp on the vein instead of the artery), ESIST identified the error and streamed the correct step to the headset.

Software development

ESIST was designed to teach the students how to correctly place a bulldog clamp on the renal artery, simulating initiation of warm ischemia.

Video image acquisition and processing

Video images were recorded from the laparoscopic box camera. Correct (clamp on artery) and incorrect steps (clamp on vein, ureter, or no clamp) were used to train the ESIST platform. Two authors performed each of the four clamp placements on the phantom with the renal hilum exposed. If the clamp was placed on the vein or the ureter, automated instructions were delivered to the participants via the headset to remove the clamp and place it on the artery. Over 10 trials, 396,043 images were collected, of which 294,961 were used for training and 101,082 for validation. Quality filtering removed 96,950 images from the training dataset, leaving 198,011 images to build the model (test datasets 1–3). The 396,043 collected images fell into four categories: approximately 98,740 showed the clamp on the renal artery (correct placement), while 99,221, 97,000, and 101,082 depicted incorrect placement on the vein, ureter, or no structure, respectively. Several strategies were used to address potential class imbalance. The dataset was augmented (e.g., by rotation or mirroring) to expand minority classes, and categories that were underrepresented during interim training phases were oversampled. The final distribution was monitored to ensure that no single class dominated the training pipeline. However, because of undocumented relabeling steps, the precise final class proportions could not always be independently verified.
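The reported counts sum to 396,043 and are close to balanced, with each class between roughly 24.5% and 25.5% of the total. The authors addressed imbalance with augmentation and oversampling; inverse-frequency class weighting, shown below, is a different, commonly used alternative and is included only to make the degree of imbalance concrete. The class keys are illustrative names.

```python
# Image counts per class as reported in the text.
counts = {
    "artery": 98_740,
    "vein": 99_221,
    "ureter": 97_000,
    "no_structure": 101_082,
}
total = sum(counts.values())
n_classes = len(counts)

# Inverse-frequency ("balanced") weights: total / (n_classes * count).
# A class holding exactly 1/n_classes of the data gets weight 1.0; rarer classes get > 1.0.
weights = {c: total / (n_classes * n) for c, n in counts.items()}

for c, n in counts.items():
    print(f"{c:>12}: {100 * n / total:5.2f}%  weight={weights[c]:.3f}")
```

All weights fall within about 2% of 1.0, which indicates that the raw collection was already nearly balanced before any resampling.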

Video image analysis and model building

A customized deep learning architecture was deployed in real time to analyze the video feeds and determine user progress. Architecture customizations included a modified visual geometry group network with 16 layers (VGG16) base with three convolutional neural network (CNN) blocks, batch normalization, and skip connections; a custom preprocessing pipeline for surgical video; and 393,135 trainable parameters optimized for instrument tracking. The computer assigned one of four classes based on the structure clamped, allowing it to simulate real circumstances, provide appropriate instructions, and track errors. The machine learning (ML) model’s classification role included a real-time inference engine processing the video feeds, a finite state machine (FSM) managing state transitions, five distinct states with defined confidence thresholds, and temporal smoothing over 0.25-s windows.
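Trainable-parameter totals such as the 393,135 reported above are tallied layer by layer. The sketch below shows the standard counting rules for convolution, batch normalization, and dense layers. The channel widths, the assumption that the head sits on VGG16’s 512-channel feature map, and the 4-class dense classifier are hypothetical and do not reproduce the ESIST configuration.

```python
def conv2d_params(k, c_in, c_out, bias=True):
    """Trainable weights of a k x k 2-D convolution (plus one bias per output channel)."""
    return k * k * c_in * c_out + (c_out if bias else 0)

def batchnorm_params(channels):
    """Trainable BatchNorm parameters: one scale (gamma) and one shift (beta) per channel.
    Running mean and variance are tracked statistics, not trained weights."""
    return 2 * channels

def dense_params(n_in, n_out):
    """Fully connected layer: weight matrix plus bias vector."""
    return n_in * n_out + n_out

# Hypothetical head: three 3x3 conv + BatchNorm blocks on a frozen 512-channel backbone.
# Identity skip connections between equal-width blocks add no parameters.
blocks = [(512, 64), (64, 64), (64, 64)]   # (c_in, c_out)
total = 0
for c_in, c_out in blocks:
    total += conv2d_params(3, c_in, c_out) + batchnorm_params(c_out)
total += dense_params(64, 4)               # after global average pooling, 4 output classes
print(total)
```

With these illustrative widths the head has 369,476 trainable parameters, most of them in the first convolution that reduces 512 channels to 64; freezing the backbone is what keeps such a model in the hundreds of thousands rather than the millions.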

Several ML algorithms were tested to find the one with the best performance. These included InceptionV3, 17 ResNet50, 18 VGG18, 19 Inception-ResNet, 20 and a series of CNN architectures. Table 1 compares the performance metrics (accuracy, precision, recall, F1-scores), along with rough computational throughput of these algorithms.

Table 1. Multiple Neural Network Architectures Including InceptionV3, ResNet50, VGG16/18, a Hybrid VGG16–ResNet50, and a “Custom CNN,” Were Evaluated Before Selecting the Final Approach

CNN, convolutional neural network; FPS, frames per second; GPU, graphics processing unit; ResNet50, residual network with 50 layers; VGG16/18, visual geometry group network with 16/18 layers.

Although the deep learning neural network was effective, false positives occurred when the vein was clamped; this was remedied by adding depth perception to the red/green/blue (RGB) video. Depth perception was achieved through stereo camera calibration, real-time frame analysis at 30 fps, custom depth estimation algorithms, and integration with the FSM for temporal consistency. The red channel emphasized blood-filled vessels, the blue channel highlighted metallic instrument edges, and the green channel accentuated soft tissue. Each channel was processed at 10-bit depth.
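For a calibrated, rectified stereo pair, depth follows from disparity as Z = f·B/d (focal length in pixels times baseline, divided by disparity in pixels). The text does not give the calibration values or describe the custom depth algorithm, so the focal length, baseline, and disparities below are hypothetical; the example only illustrates why a clamp jaw hovering above a vessel can be separated from one resting on it.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Pinhole stereo relation Z = f * B / d for a rectified camera pair.
    Returns depth in metres; zero or negative disparity is treated as infinitely far."""
    if disparity_px <= 0:
        return float("inf")
    return focal_px * baseline_m / disparity_px

# Hypothetical calibration values (not those of the ESIST camera).
FOCAL_PX = 600.0     # focal length in pixels, from stereo calibration
BASELINE_M = 0.05    # distance between the two camera centres, metres

# A jaw hovering above the vessel is closer to the camera and so shows a larger disparity.
vessel_z = depth_from_disparity(300.0, FOCAL_PX, BASELINE_M)
jaw_z = depth_from_disparity(316.0, FOCAL_PX, BASELINE_M)
gap_mm = (vessel_z - jaw_z) * 1000
print(f"vessel {vessel_z * 100:.1f} cm, jaw {jaw_z * 100:.1f} cm, gap {gap_mm:.1f} mm")
```

In a 2-D RGB frame the two cases can look identical; a depth gap of a few millimetres between jaw and vessel is the kind of cue that separates true clamping from visual overlap.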

Each vessel was clamped three times from each side at varying locations, with the surrounding fat repositioned between placements. Videos were recorded at 30 fps (640 × 480 pixels), and each frame was resized, contrast-optimized, and labeled for training. A data augmentation pipeline was used to improve the model’s robustness while preserving critical surgical features. Geometric transforms included mirroring (applied to 25% of images), rotations of ±15° to 20°, and shear transformations of ±0.1 radians (applied to 15%). Intensity adjustments included contrast shifts of ±20–30% and brightness variations of ±15–25%, each calibrated against real surgical footage to avoid losing key anatomical cues. Environmental effects included blur (3 × 3 to 5 × 5 kernels) added to 10% of the images and simulated surgical smoke (10–30% opacity) applied to 15%. Multiple surgeons reviewed 1,000 augmented images and judged 98.7% to be realistic. Vessel boundaries, clamp orientation, and tissue depth cues remained >95% intact.
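The augmentation pipeline above can be expressed as a per-image parameter sampler. This minimal sketch only draws the settings; applying them to pixels (e.g., with an imaging library) is omitted. Where the text gives a range but no application rate (rotation, contrast, brightness), the sketch assumes the transform is applied to every image, treats contrast and brightness as multiplicative gains, and restricts blur kernels to 3 × 3 or 5 × 5.

```python
import random

def sample_augmentation(rng):
    """Draw one image's augmentation settings from the rates and ranges in the text.

    Assumptions (not stated in the text): rotation, contrast, and brightness are
    applied to every image, and each signed range gets a random sign.
    """
    def signed(low, high):
        return rng.choice((-1, 1)) * rng.uniform(low, high)

    return {
        "mirror": rng.random() < 0.25,
        "rotate_deg": signed(15, 20),
        "shear_rad": signed(0.0, 0.1) if rng.random() < 0.15 else 0.0,
        "contrast_gain": 1 + signed(0.20, 0.30),
        "brightness_gain": 1 + signed(0.15, 0.25),
        "blur_kernel": rng.choice((3, 5)) if rng.random() < 0.10 else None,
        "smoke_opacity": rng.uniform(0.10, 0.30) if rng.random() < 0.15 else 0.0,
    }

rng = random.Random(0)
recipes = [sample_augmentation(rng) for _ in range(10_000)]
mirror_rate = sum(r["mirror"] for r in recipes) / len(recipes)
smoke_rate = sum(r["smoke_opacity"] > 0 for r in recipes) / len(recipes)
print(f"mirrored {mirror_rate:.3f}, smoke {smoke_rate:.3f}")  # should be near 0.25 and 0.15
```

Seeding the generator makes each augmented dataset reproducible, which matters when augmented images are later reviewed for realism.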

Model building and validation

Each set of manipulations was organized as nested lists (lists within a list), and the possible combinations were sampled evenly and separately in the training data. The model was then trained until it converged. Clinical validation showed a decrease in false positives from 4.2% to 0.3%, an 89% improvement in clamp-to-vessel distinction, and 99.91% accuracy in distinguishing actual clamping from visual overlap. Only 2 ms of additional latency was introduced, preserving real-time performance.
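One reading of “lists within a list” is a nested list of manipulation factors whose Cartesian product is sampled evenly. The sketch below assumes that reading; the factor names echo the clamping protocol described earlier (clamp target, side, location, fat position) but are hypothetical labels, and the shuffled round-robin schedule is one simple way to keep every combination equally represented.

```python
import itertools
import random

# Hypothetical manipulation factors, one inner list per factor ("lists within a list").
manipulations = [
    ["artery", "vein", "ureter", "no_structure"],   # clamp target
    ["side_a", "side_b"],                            # side of the vessel approached
    ["site_1", "site_2", "site_3"],                  # clamp location along the vessel
    ["fat_in_place", "fat_moved"],                   # surrounding fat position
]

# Every combination of one value per factor: 4 * 2 * 3 * 2 = 48.
combos = list(itertools.product(*manipulations))

def balanced_schedule(combos, n_samples, seed=0):
    """Draw n_samples combinations by cycling through freshly shuffled copies of
    the full list, so draw counts differ by at most one between combinations."""
    rng = random.Random(seed)
    schedule = []
    while len(schedule) < n_samples:
        block = list(combos)
        rng.shuffle(block)
        schedule.extend(block)
    return schedule[:n_samples]

schedule = balanced_schedule(combos, 1000)
print(len(combos), len(schedule))
```

Shuffling within each pass avoids presenting combinations in a fixed order, while completing full passes keeps the counts balanced (here, each combination appears 20 or 21 times).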

Workflow automation and finite state models

A “workflow automation” program (WAP) was developed that accepted CSV files describing the training scenarios and generated C# code files implementing a WAP finite state machine decision tree. A second FSM was added on top of the raw deep learning model (the unrefined CNN output before the FSM layer) to stabilize predictions by reducing frame-level variance in the class probabilities over multiple images. In creating this FSM, criteria were established to determine the confidence of the raw DNN’s predictions. These included five distinct states (unknown, vein, artery, ureter, no clamp), confidence thresholds for transitions, temporal smoothing over 0.25-s windows, and a frame count weighting system. The WAP smoothed noisy frame-by-frame predictions and created a structured sequence of recognized surgical steps. Potential drawbacks included delayed transitions if the clamp was moved very rapidly, reliance on consistent labeling across consecutive frames, and the possibility that mislabeled data would compound errors within the FSM window.
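The WAP turned CSV scenario rows into C# code for a finite state machine. As a language-neutral illustration, the Python sketch below loads a hypothetical CSV transition table directly into a dictionary and steps through it instead of generating code; the column names, state names, and instruction text are assumptions, not the WAP’s actual file format.

```python
import csv
import io

# Hypothetical scenario table; the real WAP input format is not given in the text.
SCENARIO_CSV = """state,detected,next_state,instruction
start,no_clamp,start,Expose the hilum and place the bulldog clamp on the renal artery.
start,vein,error_vein,Clamp is on the renal vein. Remove it and clamp the artery.
start,ureter,error_ureter,Clamp is on the ureter. Remove it and clamp the artery.
start,artery,done,Correct: the renal artery is clamped.
error_vein,no_clamp,start,Clamp removed. Now place it on the renal artery.
error_ureter,no_clamp,start,Clamp removed. Now place it on the renal artery.
"""

def load_fsm(text):
    """Build a transition table {(state, detected_class): (next_state, instruction)}."""
    table = {}
    for row in csv.DictReader(io.StringIO(text)):
        table[(row["state"], row["detected"])] = (row["next_state"], row["instruction"])
    return table

def step(table, state, detected):
    """Advance the FSM; an unlisted (state, class) pair leaves the state unchanged."""
    return table.get((state, detected), (state, None))

fsm = load_fsm(SCENARIO_CSV)
state = "start"
for detected in ["no_clamp", "vein", "no_clamp", "artery"]:
    state, instruction = step(fsm, state, detected)
    if instruction:
        print(f"[{state}] {instruction}")
```

Keeping the scenario in a table means new steps or errors can be added as rows without touching the stepping logic, which is the same scalability argument made for the WAP.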

To smooth the output, the class probabilities over the prior 0.25 s were summed for each class, and the differences between these summed probabilities were determined. The maximum of the minimum differences across all classes in that 0.25-s clip was then compared with the prediction threshold for the current class from the raw ML model. In addition, the frame counts for the classes were weighted as a form of linear windowing, emphasizing the most recent classifications over more distant ones. The DNN output was sent to the WAP through a serial port; the WAP used a gaming engine (Unity Technologies, San Francisco, CA) to perform decision-tree processing before selecting which instruction to display to the user. The WAP also delivered both static and live video images, such as educational diagrams and premade video clips, to the student via the XR headset.
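The smoothing rule above can be approximated as follows. This is a simplified interpretation rather than the authors’ exact formula: class probabilities over roughly 0.25 s (8 frames at 30 fps) are combined with linear weights that favor recent frames, and the reported state changes only when the leading class beats the runner-up by more than a per-class threshold. The class names, threshold values, and the `SmoothedClassifier` name are hypothetical.

```python
from collections import deque

CLASSES = ("no_clamp", "artery", "vein", "ureter")
FPS = 30
WINDOW = round(0.25 * FPS)  # frames in a ~0.25-s window (8 at 30 fps)

class SmoothedClassifier:
    """Linearly weighted temporal smoothing of per-frame class probabilities."""

    def __init__(self, thresholds, initial="unknown"):
        self.frames = deque(maxlen=WINDOW)   # only the most recent WINDOW frames are kept
        self.thresholds = thresholds         # hypothetical per-class margins
        self.state = initial

    def update(self, probs):
        """Add one frame's probabilities and return the (possibly unchanged) state."""
        self.frames.append(probs)
        n = len(self.frames)
        # Weights rise linearly from 1/n (oldest frame) to 1 (newest frame).
        weights = [(age + 1) / n for age in range(n)]
        scores = {c: sum(w * f[c] for w, f in zip(weights, self.frames)) for c in CLASSES}
        best, runner_up = sorted(CLASSES, key=scores.get, reverse=True)[:2]
        # Normalize by the total weight so the margin is a weighted-average difference.
        margin = (scores[best] - scores[runner_up]) / sum(weights)
        if margin > self.thresholds[best]:
            self.state = best
        return self.state

artery_frame = {"no_clamp": 0.1, "artery": 0.8, "vein": 0.05, "ureter": 0.05}
spurious_vein = {"no_clamp": 0.1, "artery": 0.2, "vein": 0.65, "ureter": 0.05}
smoother = SmoothedClassifier({c: 0.3 for c in CLASSES})
for probs in [artery_frame] * 5 + [spurious_vein]:
    state = smoother.update(probs)
print(state)  # artery
```

A single spurious vein frame after a run of confident artery frames does not flip the state, whereas a sustained run of vein frames does; this trade-off is also the source of the delayed transitions noted as a drawback.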

The WAP was further programmed to generate a log file of states during the procedure. This log file was used to calculate a performance score by assigning points for each state achieved (positive points for correct actions, such as clamping the renal artery, and negative points for incorrect actions, such as clamping the ureter) and by tracking the time spent in each state. The resulting grade was displayed to the user at the end of the procedure and exported as a report card to track performance.
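The log-based grading can be sketched as follows. The point values, time allowance, and overtime penalty are hypothetical placeholders; the text specifies only that correct states earn positive points, incorrect states earn negative points, time in each state is tracked, and (per the Discussion) excess procedure time is penalized.

```python
# Hypothetical point table: positive for correct states, negative for errors.
POINTS = {"artery": 10, "vein": -5, "ureter": -10, "no_clamp": 0}
TIME_LIMIT_S = 120          # hypothetical allowance before time penalties begin
PENALTY_PER_10_S = -1       # hypothetical deduction for every full 10 s over the limit

def score_log(entries, end_time):
    """entries: chronological list of (timestamp_s, state) transitions.
    Returns (score, seconds spent in each state)."""
    time_in_state = {}
    score = 0
    previous = None
    for i, (t, state) in enumerate(entries):
        t_next = entries[i + 1][0] if i + 1 < len(entries) else end_time
        time_in_state[state] = time_in_state.get(state, 0) + (t_next - t)
        if state != previous:               # award points once per entry into a state
            score += POINTS.get(state, 0)
        previous = state
    overtime = max(0, end_time - entries[0][0] - TIME_LIMIT_S)
    score += PENALTY_PER_10_S * int(overtime // 10)
    return score, time_in_state

log = [(0, "no_clamp"), (35, "vein"), (50, "no_clamp"), (70, "artery")]
print(score_log(log, end_time=140))
```

For this example log, the vein error (-5) and the correct artery clamp (+10) net 5 points, and 20 s of overtime deducts 2, giving a score of 3.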

Software/hardware integration

Students were trained by presenting them with portions of the procedure (correct and incorrect states) via the XR headset, using a gaming engine with appropriately timed prompts and instructive images. The headset was connected to the “Android Presentation Manager” (DART-6UL, Variscite, Lod, Israel), which ran the compiled WAP executable. The Android Presentation Manager received the DNN class information from the desktop computer and displayed the appropriate surgical instructions through the XR headset’s see-through optics (Fig. 3).
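The text does not describe how class information is encoded on its way from the desktop computer to the Android Presentation Manager. Purely as an illustration, the sketch below assumes a hypothetical newline-terminated ASCII message (class index, confidence) and shows how a receiver can hold a partially received line until the rest arrives, a routine concern on serial links. The message format and function names are assumptions.

```python
CLASSES = ("unknown", "no_clamp", "artery", "vein", "ureter")

def encode_state(state, confidence):
    """Hypothetical ASCII message for one update: '<class index>,<confidence>\\n'."""
    return f"{CLASSES.index(state)},{confidence:.3f}\n".encode("ascii")

def decode_stream(buffer):
    """Split received bytes into complete messages; return (messages, leftover bytes).
    A trailing partial line is returned as leftover to be prepended to the next read."""
    *lines, leftover = buffer.split(b"\n")
    messages = []
    for line in lines:
        idx, conf = line.decode("ascii").split(",")
        messages.append((CLASSES[int(idx)], float(conf)))
    return messages, leftover

# The second message is cut off mid-transmission.
stream = encode_state("vein", 0.91) + encode_state("artery", 0.97)[:3]
msgs, rest = decode_stream(stream)
print(msgs, rest)
```

A delimiter-based format like this lets the receiver resynchronize after a dropped byte at the next newline, at the cost of a few bytes per message.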

Fig. 3. ESIST signal flow between the XR headset, the Android computer hosting the WAP, and the desktop computer hosting the DNN hardware accelerator. WAP, workflow automation program.

System testing and participant survey

Seventeen participants were trained by ESIST and completed the bulldog clamp procedure (Fig. 4). After the procedure, each completed a 19-question survey using a 4-point scale ranging from strongly disagree to strongly agree. Participants included surgeons and residents (n = 11), OR nurses and technicians (n = 5), and a medical device clinical applications specialist (n = 1).

Fig. 4. Seventeen students were trained and tested by ESIST. Instructions appeared in the XR headset while the student looked at the monitor, which displayed the kidney images.

The kidney phantom development was supported by NIH NIBIB grant 1R41EB026358-01A1, while ESIST was supported by a National Science Foundation Grant (1913911). The study was conducted in the Viomerse lab (160 Office Parkway, Pittsford, NY 14534). The initial phantom technology was developed at the University of Rochester Medical Center Departments of Neurosurgery (J.J.S.) and Urology (601 Elmwood Ave, Rochester, NY 14642).

Results

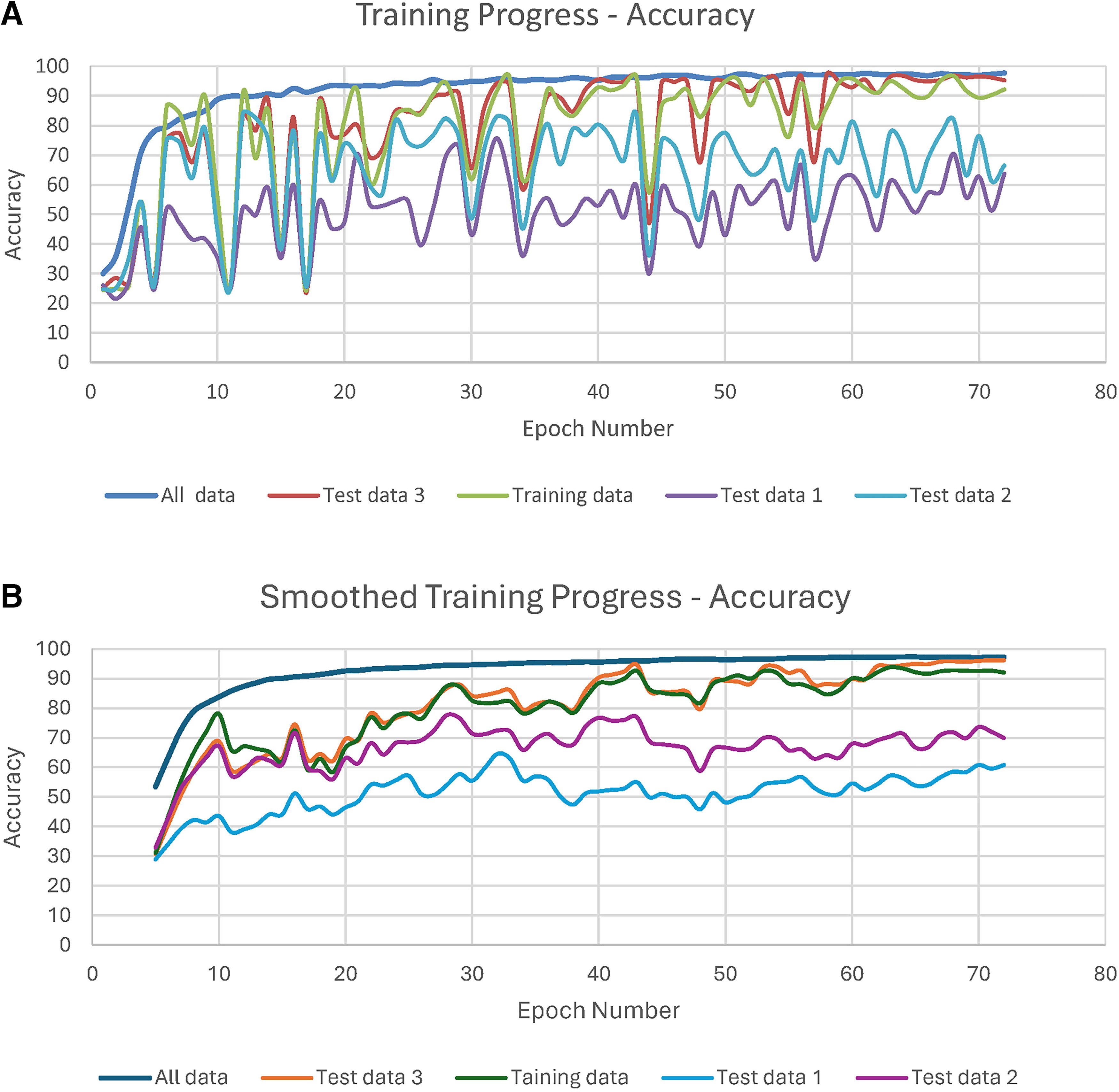

The model was trained until it converged and the loss no longer improved. By epoch 70, the loss had decreased to 0.10, and it remained stable for an additional 20 epochs (Fig. 5). Performance was consistent across the validation sets, with no signs of overfitting in the validation metrics. Periodic testing of the model’s performance during training revealed overfitting on several of the datasets. The FSM refined the model by reducing statistical variance over multiple frames, allowing more consistent predictions and reaching an accuracy of 96% for the four states (Fig. 6a, b). These refinements reduced prediction variance by 47%, enhanced temporal consistency checking, introduced a weighted frame counting system, and produced clear transition criteria between states. Cross-validation also showed consistent performance.
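One common way to formalize “trained until it converged” is patience-based early stopping. The paper does not state that it used this rule; the sketch below shows that, with a patience of 20 epochs, a synthetic loss curve that reaches 0.10 at epoch 70 and then plateaus would stop at epoch 90, consistent with the 20 stable epochs described above. The function name and synthetic curve are hypothetical.

```python
def early_stopping_epoch(losses, patience=20, min_delta=0.0):
    """Return the 1-based epoch at which training would stop: the first epoch at which
    the loss has failed to beat its best value by more than min_delta for `patience`
    consecutive epochs. Returns None if the rule never triggers."""
    best = float("inf")
    since_best = 0
    for epoch, loss in enumerate(losses, start=1):
        if loss < best - min_delta:
            best, since_best = loss, 0
        else:
            since_best += 1
            if since_best >= patience:
                return epoch
    return None

# Synthetic curve: loss falls linearly to 0.10 by epoch 70, then stays flat.
losses = [1.0 - 0.9 * e / 70 for e in range(1, 71)] + [0.10] * 25
print(early_stopping_epoch(losses, patience=20))
```

A nonzero `min_delta` makes the rule ignore tiny fluctuations, so a noisy plateau is still recognized as convergence.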

Fig. 5. The model was trained until the loss stopped decreasing and stabilized.

The confusion matrices generated for the validation data demonstrated a progressive increase in the accuracy of recognizing bulldog clamp placement on the renal artery, reaching 99.91% as more datasets (8,468, 12,606, and 80,022 clips) were added (Fig. 7a–c). Accuracy was further validated with per-class precision and recall metrics, temporal consistency measurements, and real-world performance.
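Per-class precision and recall, like overall accuracy, follow directly from a confusion matrix. The sketch below uses made-up counts (not the study’s matrices), with rows as true classes and columns as predicted classes; the class names are illustrative.

```python
CLASSES = ("artery", "vein", "ureter", "no_clamp")

def per_class_metrics(cm):
    """cm[i][j] = number of frames whose true class is i and predicted class is j."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    accuracy = sum(cm[i][i] for i in range(n)) / total
    metrics = {}
    for i, name in enumerate(CLASSES):
        tp = cm[i][i]
        predicted = sum(cm[r][i] for r in range(n))   # column sum: all frames predicted as i
        actual = sum(cm[i])                           # row sum: all frames truly of class i
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[name] = (precision, recall, f1)
    return accuracy, metrics

# Illustrative counts only.
cm = [
    [95, 3, 0, 2],
    [4, 94, 1, 1],
    [0, 1, 98, 1],
    [1, 0, 0, 99],
]
acc, m = per_class_metrics(cm)
print(f"accuracy {acc:.3f}")
for name, (p, r, f) in m.items():
    print(f"{name:>8}: precision {p:.3f} recall {r:.3f} F1 {f:.3f}")
```

Reading the artery row and column separately shows whether errors come from missed artery clamps (low recall) or from other structures being called the artery (low precision), which overall accuracy alone hides.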

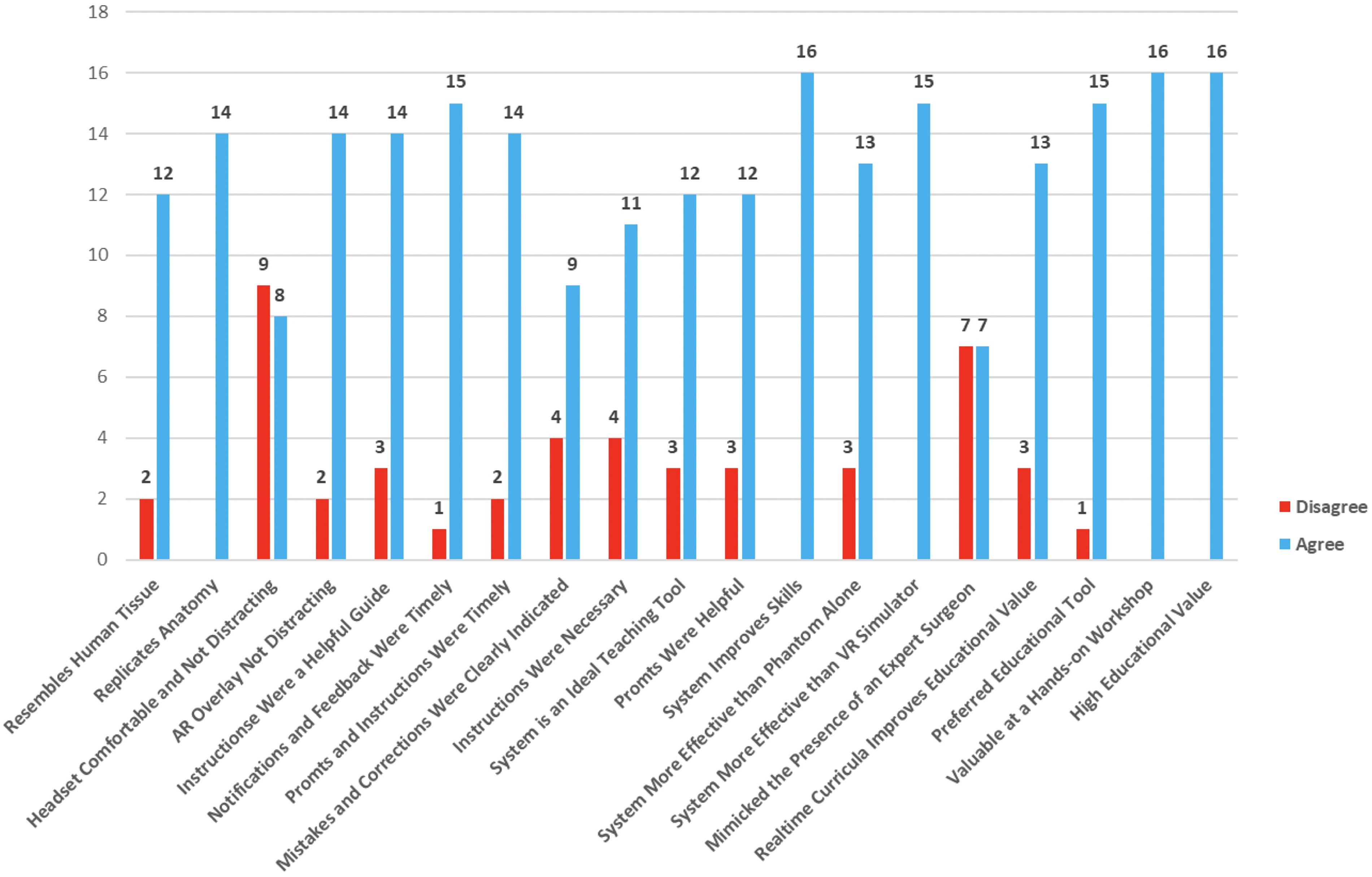

Bulldog clamp placement on the renal artery was successful in all 17 participant attempts, with no false positives. Regarding the educational value of the ESIST system, 1 response (0.3%) was strongly disagree, 46 (15.7%) disagree, 139 (47.4%) agree, and 107 (36.6%) strongly agree (Table 2). The responses were converted to a 2-point scale (strongly disagree/disagree vs. agree/strongly agree) (Fig. 8). Agree and strongly agree responses averaged 84% across the questions (range 47.5–100% per question). Agreement was 80% or higher for 15 questions and 90% or higher for 5 questions. All respondents said the setup of the kidney phantom replicated relevant human anatomy, while 87% thought the simulated tissue resembled the texture and behavior of live human tissue. In total, 52.9% found the headset comfortable, and 12.5% were distracted by the overlay in the see-through optics. Of the respondents, 73.3% said the system was an ideal teaching tool for the procedure, while all respondents thought practice with ESIST had high educational value and would be more effective than a virtual reality simulator.
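The pooled survey figures follow from the raw counts. The sketch below reproduces the 90.7% response rate reported with Table 2 and the 84% favorable proportion after collapsing the 4-point scale to 2 points; the per-question range (47.5–100%) would require the per-question counts in Table 2.

```python
# Response counts on the 4-point scale, pooled over all 19 questions.
counts = {"strongly disagree": 1, "disagree": 46, "agree": 139, "strongly agree": 107}

answered = sum(counts.values())                      # responses actually given
possible = 17 * 19                                   # participants x questions
favorable = counts["agree"] + counts["strongly agree"]

print(f"answered {answered}/{possible} ({100 * answered / possible:.1f}%)")
print(f"favorable {favorable}/{answered} ({100 * favorable / answered:.1f}%)")
```

The favorable share is computed over answered responses rather than possible ones, so skipped questions do not count as disagreement.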

Fig. 8. Survey responses for the ESIST platform, categorized as agree (blue) and disagree (red). There were 19 questions, but not every question was answered by all participants.

Table 2. Survey Results for 17 Users of the ESIST System

Of the possible 323 responses, 293 (90.7%) were answered.

ESIST, educational system for instructorless surgical training; VR, virtual reality; XR, extended reality.

Discussion

ESIST was designed as a “closed-loop” learning system in which the software interacted with the continuous video feed from the laparoscopic camera and automatically displayed instructive prompts and corrections through the XR headset. The deep learning techniques extracted features from the kidney phantom images to build predictive models that ran in real time. These models used the relevant image features to classify clamp placement (off, or on the vein, artery, or ureter). The final model was then validated with participants, who were surveyed about usability, headset comfort, and the ability of this automated system to provide adequate instruction. Fivefold cross-validation was complemented by external datasets, user performance metrics, and statistical significance analyses. To further support the validity of our results, we believe our datasets were robust, as they comprised 396,043 images collected from standardized training sessions. A power analysis indicated that this sample size would detect effect sizes of 0.1 with 95% confidence (α = 0.05, power 1 − β = 0.8). 21 Data were collected by two expert surgeons (>10 years’ experience) performing standardized procedures. We elected to study only four states: correct artery clamp (n = 98,740), vein clamp (n = 99,221), ureter clamp (n = 97,000), and no clamp (n = 101,082). Furthermore, we evaluated five deep learning architectures through fivefold cross-validation following protocols established by Sheth et al. 22 The final hybrid architecture combined elements validated by Devanish et al. 23 We also conducted three validation studies following Myllyaho et al. 24 Finally, our findings aligned with Morris et al.’s meta-analysis showing that AI-based surgical training can reduce learning curves by 35–50%. 25
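The power analysis above does not specify the statistical test. Assuming a two-sided, two-sample comparison of means with a standardized effect size (Cohen’s d) and the usual normal approximation, the required sample per group can be computed as below; under these assumptions, detecting d = 0.1 at α = 0.05 with 80% power needs 1,570 observations per group. The function name is illustrative.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(effect_size, alpha=0.05, power=0.8):
    """Per-group sample size for a two-sided, two-sample comparison of means
    (normal approximation): n = 2 * (z_{1 - alpha/2} + z_{power})^2 / d^2."""
    z = NormalDist().inv_cdf
    return ceil(2 * (z(1 - alpha / 2) + z(power)) ** 2 / effect_size ** 2)

print(n_per_group(0.1))   # small standardized effect, d = 0.1
```

Because the required sample scales with 1/d², halving the detectable effect size roughly quadruples the number of observations needed.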

Using automation theory, all possible correct and incorrect actions that could be performed during the surgical procedure were listed in the WAP Excel spreadsheet and combined into a finite state machine format. 26 The WAP systematically mapped each possible action (clamping the artery, vein, ureter, or no structure) to a prespecified “action code.” These codes were automatically annotated in each video sequence, allowing the FSM to scale if additional steps or complexities were introduced. Scaling required only assigning new action codes and additional confidence thresholds, without altering the fundamental FSM logic.

ESIST was designed to automatically generate the WAP code which tracked the surgeon’s decision-making process during each step of the procedure and thereby enabled autonomous training. The “grading guide” assigned negative points for errors and excess procedure time, allowing ESIST to grade the user. Workflow automation (WA) simplified the compilation of this information into an executable program.

Abid et al. noted that automatic machines remain largely under the control of their operators, whereas autonomous systems can adapt to large changes in their environment without input from an external user. 27 Aspects of this work relevant to ESIST include automated performance assessment, real-time feedback, objective skill evaluation, and integration with the surgical workflow. In a multidisciplinary survey of 40 surgical education stakeholders, Vedula et al. found consensus that AI-enabled metrics should be used to assess a surgeon’s proficiency in skill acquisition and to provide meaningful, actionable feedback. 28

Differences in surgical skill can account for as much as 25% of the variation in patient outcomes; therefore, improved training combined with performance monitoring is critical. 29 In a review of publications on the use of AI to improve surgical skills, Cacciamani et al. highlighted the work of French, who created a model that provides immediate formative feedback and predicts the technical skill level of surgeons within seconds.30,31 That study used video and tool motion data from 98 surgeons but was limited to peg transfer, suturing, and circle cutting. In a more clinically realistic setting, Hung et al. trained ML algorithms using automated performance metrics, instrument kinematic event data, and hospital length of stay. 32 However, they encountered limitations in interpretability, overfitting, training time, and memory usage.

Surgical instruction and procedure assessment have advanced with the availability of wearable head-mounted cameras. A first-person headset camera will be necessary to monitor open surgery and hand movements once DNNs are developed for more complicated open surgical procedures and can handle motion degradation of the images. Stone et al. described beta testing of an AR headset for remote training in prostate biopsy and rectal spacer insertion. 14 The authors demonstrated the successful use of a first-person camera whose live operative images were displayed in real time to a remote instructor. A telepresence platform enabled instructor-student interaction. The ESIST program described in this investigation seeks to do the same but replaces the remote instructor with an AI program.

Pugh et al. used multisensory wearables to record and analyze videos. A surgeon performing a simulated bowel repair wore electroencephalogram (EEG) sensors, motion tracking sensors, and audio-recording equipment. Preliminary results showed that AI interpretation improved with additional data streams. 33 Adding motion tracking, EEG, or other sensor streams could further enhance the ESIST platform by supplying additional signals for skill assessment. For instance, concurrent motion data might help confirm that a clamp is actively being placed rather than merely hovering over a vessel. Preliminary internal tests suggested synergy between video data and kinematic metrics, but no conclusive results were obtained during the study.

Nithipalan et al. explored the use of mixed reality (MR) for remote feedback and guidance during transrectal ultrasound biopsy simulation. 34 Students wore the Vuzix M4000 smart glasses (Vuzix, Rochester, NY), which were connected to a remote instructor using a cloud-based MR platform (Help Lightning, Birmingham, AL). The enhanced performance characteristics of the XR headset designed in the current study could prove to be essential versus using off-the-shelf devices, which have inferior optics that were designed for industrial rather than medical uses. Higher resolution and a substantially larger field of view will be necessary when building more complex autonomous surgical training programs.

In contrast with the current study, which prospectively created autonomous supervised deep learning algorithms in a controlled environment using an anatomical model, Khanna et al. proposed a method for automated identification of key steps in robot-assisted radical prostatectomy (RLAP) using AI. 35 Full-length RLAP videos were manually annotated to identify 10 critical surgical steps to train a novel AI computer vision algorithm, which achieved 92.8% overall accuracy for the full-length video. Zohar et al. proposed using semi-supervised learning, rather than fully supervised training of the deep model, to detect out-of-body and nonrelevant segments in surgical videos and reported a mean detection accuracy above 97% after several training-annotating iterations. 36 While the work of these investigators highlights the value of the vast stores of recorded video, an advantage of the current system and methodology is that it allows students to become proficient in a procedure outside the OR, thereby further reducing the risk of surgical errors. The transition from autonomous training on a phantom to training on patients would also need validation.

The surveys indicated that participants wanted to use the system, as more than 80% agreed that ESIST was valuable. ESIST improves on older systems through (1) real-time classification and feedback delivered via XR, (2) robust AI-based assessment that identifies clamp misplacement instantly, (3) an FSM-based temporal smoothing mechanism, and (4) automated performance tracking. Nonetheless, this investigation has several limitations. Only 50% of users believed that the system mimicked the presence of an expert surgeon, underlining the need for ongoing refinement and acceptance testing (Fig. 8). The platform also taught and assessed only a small but important component of a partial nephrectomy: securing the renal artery. An entire procedure would require many more video files to be analyzed and additional DNNs to be built. However, an advantage of the current methodology is the ability to create reproducible, complex anatomical models using lifelike phantoms. This consistency may allow more accurate ESIST development and training. In addition, 12.5% of the participants found the headset distracting. Future headset designs could reduce distraction through adjustable opacity, better-optimized instruction placement, and reduced latency in feedback delivery.

Conclusions

An AI system using DNNs was developed to autonomously train surgeons in performing a critical step of laparoscopic partial nephrectomy while providing corrective measures and skills assessment. The XR headset allowed the user to keep both hands free, as instructions and corrective prompts were projected to the student’s eyes. This preliminary proof of principle should encourage others to explore this approach, which prospectively created video content using an anatomical model, as a way to improve education, potentially measure procedural skills, and provide a pathway toward more autonomous learning.

Footnotes

Acknowledgments

All work was conducted in the Viomerse laboratories in Pittsford, NY. The technology utilized to create the phantoms was developed at the University of Rochester Medical Center, Departments of Neurosurgery and Urology, and is licensed to Viomerse, Inc.

Authors’ Contributions

J.J.S.: Conceptualization (lead), funding acquisition (lead), investigation (supporting), supervision (lead), writing (supporting). N.N.S.: Writing (lead), visualization (lead), conceptualization (supporting). S.H.G.: Data curation (supporting), formal analysis (lead), funding acquisition (supporting), investigation (lead). K.Z.: Data curation (lead), formal analysis (supporting), investigation (supporting), writing (supporting). M.P.W.: Funding acquisition (lead), project administration (lead), resources (lead).

Author Disclosure Statement

J.J.S. is president of Viomerse but receives no compensation. N.N.S. is a part-time employee of Viomerse and serves as the chief medical officer. S.H.G. is a full-time employee of Viomerse and serves as the chief technology officer. M.P.W. is a full-time employee of Viomerse and is the company CEO. All the above have ownership in Viomerse.

Funding Information

The kidney phantom development was supported by NIH NIBIB grant 1R41EB026358-01A1, while ESIST was supported in part by a National Science Foundation Grant (1913911). All the work was conducted in the Viomerse lab (160 Office Parkway, Pittsford, NY 14534). The initial hydrogel technology was developed at the University of Rochester Medical Center, Departments of Neurosurgery and Urology (601 Elmwood Ave., Rochester, NY 14642).