Abstract

This article proposes a systemic perspective for understanding disinformation as a communicative phenomenon embedded in socio-technical environments, particularly social media platforms. Drawing on systems theory and platform studies, it reframes disinformation not as a mere collection of false claims, but as an adaptive system composed of elements situated within a dynamic environment and shaped through relational dynamics. Platforms are understood not as passive hosts, but as structurally coupled systems—primary environments that co-evolve with disinformation systems, reinforcing their circulation through algorithmic affordances and economic incentives. In addition, the article presents a methodological framework that enables researchers to identify and analyze disinformation as a systemic process across diverse contexts. This approach supports a more nuanced understanding of disinformation's resilience and impact, encouraging situated, cross-platform, and relational analyses that move beyond traditional content-based models.

Introduction

In recent years, disinformation has emerged as a critical issue in public discourse, reshaping political landscapes, public health measures, and societal trust in institutions (Benkler, Faris and Roberts, 2018; Cinelli et al., 2020; Tucker et al., 2018). The phenomenon has also gained attention because of social media platforms, which do more than simply host disinformation: they actively amplify it (Author, 2024). Through algorithmic curation, engagement-based incentives, and virality-driven metrics, platforms provide what may be called “structural advantages” to disinformative content, enhancing its reach, visibility, and circulation over more accurate or contextualized information. This systemic support makes platforms not passive intermediaries that merely circulate content, but active agents in the spread of disinformation, offering the incentives, momentum, and reach that underpin the phenomenon as it stands today (Hilary and Dumebi, 2021).

Yet despite the volume of research on disinformation, conceptual challenges persist. Some authors have treated disinformation as an empirical object that can be defined in particular contexts, for example, as “fake news” (see Fallis, 2009; Wardle and Derakhshan, 2017). Others have focused on its societal effects (Lazer et al., 2023; Marwick and Lewis, 2017). Despite these contributions, a fundamental difficulty remains in defining what qualifies as disinformation. As studies have demonstrated, disinformation is not always an obvious or overtly false claim: it can also occur when truthful information is framed in misleading ways, stripped of context, or selectively emphasized to produce false or harmful interpretations (Fallis, 2011; Wardle and Derakhshan, 2017). Thus, while many works have sought to detect, classify, or debunk disinformation, relatively few have explored its deeper theoretical status or systemic properties. This gap reveals the need for a more comprehensive framework, one that understands disinformation not as isolated content, but as part of an evolving communicative process embedded in technological, political, and social infrastructures.

To address this complexity, the present article proposes a framework that views disinformation as a dynamic system, structurally coupled (Maturana and Varela, 1992) with social media platforms. Platforms are not mere environments for content distribution; through dynamics such as platformization and algorithmic mediation (Gillespie, 2010; Van Dijck, Poell and De Waal, 2018), they are integral to the constitution of disinformation practices. A systemic perspective allows us to move beyond the analysis of content alone and to examine the relational conditions, including infrastructural design, power dynamics, and economic incentives, that allow disinformation to emerge, persist, evolve, and proliferate on social media platforms (Kuo and Marwick, 2021). It therefore calls for integrating content analysis, the dynamics of platform governance, and social context in order to understand and respond to the multifaceted phenomenon of disinformation.

This article thus offers a theoretical reflection on disinformation from a systemic perspective, considering it not as a set of isolated pieces of content, but as part of broader communicative, technological, and sociopolitical dynamics. At the same time, it aims to contribute methodologically by offering a framework that helps researchers apply this perspective in practice. Our framework is based on three interconnected dimensions: (a) elements, such as the content, actors, and infrastructures involved in disinformation; (b) environment, which includes platform dynamics and the sociopolitical context; and (c) dynamics, which refers to the relational patterns and feedback loops that sustain disinformation over time.

The difficult concept of disinformation

A changing concept

As explained above, disinformation today is a phenomenon intrinsically connected to social media platforms. In this context, one of the most widely cited characteristics in definitions of disinformation is its association with false or misleading content. This focus appears in the literature through several related concepts, such as misinformation (Wardle and Derakhshan, 2017), fake news (Allcott and Gentzkow, 2017; Tandoc Jr., Lim and Ling, 2017), propaganda (Benkler, Faris and Roberts, 2018) and, sometimes, conspiracy theories (Phillips and Milner, 2021). The conceptual debate centers on two features: (a) content that is, to some degree, false and (b) the intention to deceive by creating and spreading this problematic content. From this perspective, disinformation generally refers to false or misleading information shared with the intent to deceive or manipulate, whereas misinformation is typically understood as false information shared without malicious intent. This distinction, highlighted by Wardle and Derakhshan (2017), is crucial for diagnosing the nature of information disorders, as it signals differences in motivation, culpability, and potential remedies. Fallis (2011) also argues that the intention to mislead is a key factor, proposing that even true statements can constitute disinformation if they are used manipulatively to produce a false belief in the audience. However, intention is often difficult to ascertain when content spreads, which makes the distinction between misinformation and disinformation hard to apply rigorously in empirical research or content moderation practices. As a result, the two terms are frequently used interchangeably or confused with one another in both academic and public discourse, which can obscure the analytical clarity needed to address the phenomenon effectively.

The two characteristics identified above became more fluid and harder to pin down as research on empirical cases evolved. Researchers have frequently noted that strategies such as cherry-picking, reframing, context shifting, and manipulation can give false impressions to truthful content (Marwick and Lewis, 2017), and that misleading impressions can even depend on the audience's own perception and reasoning (Pennycook and Rand, 2019). This shift appears in the work of several other authors as well. Vraga and Bode (2020), for example, emphasize the need to approach misinformation (and, by extension, disinformation) as a bounded and context-dependent phenomenon, shaped by the interplay of expert consensus and the best available evidence at a given moment. Rather than relying on fixed notions of truth or falsity, they argue for definitions that account for how knowledge evolves over time, especially in rapidly changing domains like health and politics. Their argument also highlights the difficulty of drawing clear boundaries between accurate and inaccurate information, one of the key challenges that motivate the systemic and relational approach proposed in this article. It signals a move away from a binary notion of truth versus falsehood toward an understanding of disinformation as a rhetorical and strategic practice. Hameleers (2023) likewise critiques object-based and moralizing framings of disinformation, emphasizing contextualization as a way to understand the phenomenon. In the same direction, more recent works such as Bastos and Tuters (2023) and Starbird et al. (2019) point to more complex relations in the study of disinformation. Both underline the idea of disinformation as a participatory process rather than a semantic problem: content depends on individual agents and on collectives of agents not only to circulate, but also to become meaningful.
These approaches shift the focus from disinformation as problematic content to disinformation as a social construction, which aligns with our core argument.

In recent scholarship, the term “influence operations” has gained traction as a way to describe coordinated and strategic attempts to steer public opinion across media ecosystems, beyond single acts of disinformation. Lanuza and Ong (2024) define these operations in the Philippine context as “new campaign tactics” that systematically bypass legacy media by targeting multiple platforms and orchestrating narratives through influencer networks and political brokers. Meanwhile, Wijayanto et al. (2024) highlight how such operations combine automated actors, monetization incentives, and narrative adaptation, producing emergent systemic mechanisms that are more complex than conventional disinformation. Rather than isolating individual pieces of false content, this approach focuses on the strategic orchestration of communication practices, often distributed across platforms and actors and embedded in complex political and economic incentives. This reconceptualization underscores the need to examine disinformation as a dynamic, adaptive system, a view that closely resonates with our systemic framework.

Types of disinformation

While the general concept of disinformation has been changing, much has also been written about types of disinformation based on the characteristics of the content. This is the case with “fake news,” a term some authors adopt to describe particular manifestations of disinformation (Allcott and Gentzkow, 2017; Allcott, Gentzkow and Yu, 2018; Tandoc et al., 2017). In these cases, “fake news” refers mostly to fabricated news stories that mimic journalistic formats in order to deceive. While this framing has gained traction in public and academic debates, it focuses narrowly on a subtype of disinformation that simulates the appearance of legitimate news content. Consequently, it does not encompass the full range of disinformation practices, which may include memes, videos, manipulated images, or misleading narratives that do not adhere to traditional news conventions.

Another conceptual category often discussed in the literature, and sometimes used as a synonym for disinformation, is “propaganda.” Some authors use this term to describe communicative strategies that aim to influence public opinion through selective or manipulative dissemination of information (Benkler, Faris and Roberts, 2018). Propaganda shares important similarities with some conceptions of disinformation, especially in its strategic and often intentional deployment of persuasive narratives. However, propaganda encompasses a broader range of communicative actions, including not only falsehoods but also selectively framed truths, omissions, and appeals to emotion or ideology. In this sense, propaganda is less concerned with the veracity of content and more with its persuasive intent and political utility. Authors such as Stanley (2015) and Jowett and O’Donnell (2012) emphasize that propaganda operates through sustained campaigns designed to shape beliefs and behaviors, often by mobilizing identities, fears, and ideological commitments. While disinformation can be one of the tools used within propaganda, the latter represents a more encompassing framework that situates misleading content within broader communicative and political strategies.

Conspiracy theories are another form of disinformation, representing a distinct subtype characterized by their tendency to offer totalizing, often unfalsifiable explanations that attribute major events to the covert actions of powerful groups (Barkun, 2013). While they fulfill the same function of misleading and manipulating perception, conspiracy theories are not synonymous with the broader concept of disinformation, which also includes other forms (Wardle and Derakhshan, 2017). These narratives are frequently sustained within affective communities, where emotional resonance and identity play central roles in their circulation and resistance to correction (Marwick and Lewis, 2017). Uscinski (2019) further emphasizes that belief in conspiracy theories is shaped by cognitive biases and partisan identity, not necessarily by gullibility, which complicates efforts to define intent or fully contain their spread. Therefore, while conspiracy theories may play a central role in certain disinformation systems, they constitute just one among multiple forms of disinformative practice.

Taken together, the various conceptualizations of disinformation, whether framed as misinformation, fake news, propaganda, or conspiracy theories, highlight different facets of the phenomenon. Each emphasizes certain elements, such as intent, format, or political function. However, these perspectives often struggle to encompass the full complexity and dynamism of disinformation in contemporary digital environments. They tend to focus on the object itself rather than on the contextual dependencies and environmental conditions that shape the phenomenon. This is an important limitation, connected mostly to the tendency to conceptualize disinformation as a static object (something to be identified, labeled, or countered) rather than as a dynamic process or systemic effect. By treating disinformation as an object, these frameworks risk overlooking the relational, infrastructural, and adaptive dimensions that are central to its operation within networked media ecologies. As we argue in this article, more recent works have begun to understand disinformation as a process-oriented, dynamic concept.

This critical perspective is echoed in the work of Kuo and Marwick (2021), who argue that disinformation must be approached through a critical lens that accounts for historical inequalities, power structures, and media infrastructures. Rather than isolating false content, they emphasize disinformation as a sociopolitical process embedded in broader systems of oppression and platform governance. Similarly, Diaz Ruiz (2023) adopts a market-shaping approach to disinformation, framing it as a consequence of platform-driven attention economies and commercial imperatives. In both accounts, disinformation is not simply an object but a process, the product of systemic forces (economic, technological, and social) that shape how information is produced, circulated, and legitimized online.

This discussion shows that the understanding of disinformation is changing, and this article moves in the same direction. We propose to understand disinformation as a system, that is, as part of a communicational and social mechanism with its own elements, dynamics, and effects. This system is structured through networks of actors, narratives, power relations, and, of course, local contexts. Disinformation thus encompasses a set of coordinated operations that emerge, articulate, and gain meaning within specific structures of mediated communication. It is enacted through practices of sharing, engagement, reinforcement, and repetition that unfold in particular technological and social contexts (Bastos and Tuters, 2023). Through this approach, we emphasize disinformation on social media platforms as a phenomenon with dynamic, adaptive, and systemic characteristics. It involves not only the production of problematic content but also its amplification, normalization, and instrumentalization by a range of actors, including users, influencers, algorithmic systems, and platform infrastructures. In this sense, disinformation cannot be effectively addressed by targeting isolated instances of content alone; it must be understood and confronted as a process embedded in and sustained by larger socio-technical systems, above all platforms.

Disinformation as a system

To understand disinformation as a system, it is necessary to revisit General Systems Theory, particularly the work of Ludwig von Bertalanffy (1975; see also Capra and Luisi, 2014), who defines a system as a set of interacting components organized to achieve a specific function or to maintain a state of equilibrium through exchanges with its environment. Systems are thus more than the sum of their parts, as the interactions between their elements often give rise to emergent phenomena. In this framework, systems are not static but dynamic entities marked by interdependence, feedback loops, and adaptability. These properties enable systems to adjust and evolve in response to external stimuli, a feature that is crucial for understanding the behavior of communicative phenomena in digital environments. Building upon this foundation, Niklas Luhmann (1995) further developed systems theory in the realm of social communication, proposing that social systems are composed not of individuals or actions, but of communications that reproduce themselves through recursive processes. In Luhmann's view, a social system maintains its boundaries and coherence by continuously generating and connecting its own elements (communications) in response to environmental stimuli.

This framework is particularly relevant to the study of disinformation, which can be seen as a self-reinforcing communicative process that selects, amplifies, and reorganizes content in ways that maintain the system's internal coherence and responsiveness. Understanding disinformation as a system involves studying not only its parts but also the dynamics that emerge from the interactions among these parts, other systems, and the environment. It also means perceiving disinformation as a system that changes in relation to other systems, such as media, politics, or the economy, without losing its internal logic. Moreover, disinformation, as we understand the phenomenon today, exists in structural coupling with social media platforms. This means that platforms function both as part of the environment and as autonomous systems with which disinformation maintains a co-evolving relationship. The concept of structural coupling comes from Maturana and Varela (1992), who use it to describe how two autonomous systems become mutually interdependent through recurrent interactions: the coupled systems remain operationally independent and preserve their internal logic, yet influence and adapt to each other over time. Within the context of disinformation, structural coupling is particularly useful for understanding the tight interdependence between social media platforms and disinformation, which closely interact, respond to each other's feedback, and co-evolve.

These theoretical foundations help us understand disinformation not as isolated content or intentional deceit, but as a complex, adaptive communicative system that evolves through continuous feedback among platform algorithms, user behaviors, monetization models, affective dynamics, and sociopolitical contexts. In this sense, disinformation becomes a system capable of self-regulation, reinforcement, and mutation, which makes it resilient and difficult to dismantle through traditional interventions such as fact-checking or content moderation alone. This perspective resonates with the approaches proposed by Lelo (2024) and Kuo and Marwick (2021), who highlight how disinformation is not only a communicative artifact but part of larger infrastructural and political processes.

A systemic perspective allows us to understand disinformation from a different point of view—not as a discrete object to be identified or classified, but as a set of evolving practices, elements, and dynamics that emerge from the interactions between problematic content and the infrastructures of social media platforms. This approach shifts the focus from static definitions toward the relational processes that sustain disinformation over time. However, applying this perspective in practice can be challenging, as it requires researchers to account for complex interdependencies, shifting boundaries, and context-specific interactions. In what follows, we outline a set of steps that can help guide empirical investigations grounded in these systemic principles. To help develop this approach, we divide the disinformation system into three core dimensions that encompass the phenomenon.

Elements

The elements are the material parts of the system, such as content, actors, and agents: the tangible objects that connect to each other and produce, circulate, and legitimize content. Elements are components that can be individualized and that directly influence the phenomenon. Understanding disinformation from this perspective requires identifying the material components that serve as the building blocks of the system. These blocks can be systems themselves, yet act as a single agent within a particular phenomenon (e.g., the financing models that support a particular disinformation narrative, as described by Alves and Nichols, 2024). They can also be actors. Disinformation systems involve a wide range of agents operating with diverse motivations, resources, and strategies, including state-sponsored operatives (Gaw et al., 2025), political consultants and brokers (Sastramidjaja and Wijayanto, 2022), influencer networks (Udupa, 2024), coordinated inauthentic behavior (Giglietto et al., 2020), algorithmic amplification, and ordinary users who may unknowingly participate in its spread. As Soriano and Gaw (2021) suggest, such actors often operate within complex infrastructures and informal arrangements, forming what some have called a “human infrastructure of misinformation.” A systemic perspective must therefore account not only for actors’ individual roles, but also for their position within relational, evolving networks that span platform architectures, cultural contexts, and political economies.

Disinformation also materializes in various forms, each with distinctive characteristics and impacts. Other examples of elements include the types of problematic content described in the literature, such as fake news and its formats (Gomes and Dourado, 2019), conspiracy theories (Douglas et al., 2019), memes (Dupuis and Williams, 2019), or negationism (Santini and Barros, 2022).

It is important to note, however, that, as we have argued, disinformation does not depend solely on false content. It can also involve framing truthful information in misleading ways or cherry-picking truthful content. This form of disinformation relies on selective emphasis, omission of context, or manipulative presentation of facts to mislead or manipulate audience perception. Because disinformation operates as a system, these truthful pieces can be connected to an untruthful narrative (see, e.g., Authors, 2023, on problematic narratives about elections). In such cases, truthful content, framings, and omissions are also elements of that particular system.

In sum, each system may have different elements, but they share a common role in supporting disinformation. They also interact with each other, creating different dynamics, which we explore under Dynamics below. These elements often serve as entry points for analysis, as they tend to be the most visible parts of the system. The idea of disinformation as a system highlights how these diverse components work in tandem, forming a complex network of interaction and influence that underpins the phenomenon's effectiveness and resilience.

Environment

In systems theory, understanding a system's environment is essential because systems do not exist in isolation: they adapt, evolve, and sustain themselves through continuous exchanges with their surroundings. As Luhmann (1995) argues, the environment provides the conditions of possibility for the system's operations while remaining structurally distinct. In the case of disinformation, the environment is not merely a backdrop, but a constitutive force that shapes how communicative elements are selected, amplified, and circulated. From this perspective, the environment is everything external to the system. Parts of the environment may interact with a specific system, but not all of the environment does so (Maturana and Varela, 1992). Certain parts of the environment, such as other systems, are directly connected to a particular system. These systems, while autonomous, interact recurrently with one another and with their shared environment (Luhmann, 1995). This form of connection helps each system adapt to complex inputs (Seidl, 2004).

Our argument is that social media platforms function both as a coupled system and as the primary environment for disinformation systems. They serve as the interface through which disinformation systems connect to other systems (economic, political, and cultural) and gain the resources and visibility necessary for their reproduction. In particular, they provide infrastructure, financing, visibility, and connectivity for disinformation.

To understand the environment in which disinformation systems operate, it is essential first to define and critically explore what platforms are and how they function. According to Gillespie (2010), platforms are socio-technical infrastructures that mediate the relationship among users, content, and algorithms. They are not neutral intermediaries but rather actors that curate, rank, and modulate visibility through algorithmic systems designed primarily to maximize engagement and economic return (D’Andrea, 2020). Platforms, in turn, are connected to social, economic, and political systems, which form a secondary environment to which disinformation is also connected. Platforms mediate disinformation's relationship with this wider environment (Couldry and Hepp, 2017), helping it adapt.

Van Dijck, Poell and De Waal (2018) introduce the concept of platformization to describe this pervasive influence of digital platforms across various sectors of society. Platforms do not merely distribute content; they actively influence the logic by which content acquires visibility, legitimacy, and economic value. Central to this process are dynamics of datafication, algorithmic governance, and the monetization of user-generated content and interactions (D’Andrea, 2020).

The dynamics of platforms also include their business model, which is fundamentally based on the extraction and commodification of user data. Platforms leverage these data to produce value, primarily through targeted advertising, content personalization, and attention monetization. Consequently, platforms are structured to favor content that stimulates user engagement, frequently privileging sensational, emotional, polarizing, or misleading information because of its effectiveness in capturing attention. As Diaz Ruiz (2023) and Kuo and Marwick (2021) argue, this structural tendency creates an environment highly conducive to disinformation, as the same mechanisms intended to optimize platform profitability also facilitate and stimulate the production and spread of disinformation.

Moreover, platforms cannot be analyzed as isolated technological entities. They are deeply embedded within broader systems such as global capitalism, surveillance economies, digital colonialism, and geopolitical power structures (Madrid-Morales and Wasserman, 2022; Ricaurte, 2019). Platforms, thus, also translate and reinforce existing power relations and inequalities in their dynamics with other systems (such as disinformation). This broader connection suggests that disinformation thrives particularly well through structural coupling with platforms, as the success of content depends less on its accuracy and more on its ability to align with economic incentives and algorithmic logics—logics that both reflect and mediate the dynamics of the broader environment. These exchanges shape and reshape disinformation, as the system adapts to the inputs provided by platforms.

While the “environment” category in our framework highlights the infrastructural and technological conditions that shape disinformation circulation, it is equally crucial to recognize that these processes are deeply embedded in broader social, cultural, and political dynamics. Like social media platforms, disinformation does not operate in a vacuum; its form, resonance, and impact are shaped by local histories, normative structures, and political tensions. For instance, Banaji et al. (2022) examine how religious nationalism in India provides fertile ground for emotionally charged disinformation, while Cabañes and Santiago (2023) show how “networked propaganda” in the Philippines draws on culturally resonant narratives to manipulate public discourse. In both cases, platforms are embedded in, and influenced by, other social systems. These cases illustrate that the disinformation environment is defined not only by platform logics or moderation policies, but also by the meanings, values, and social cleavages that shape how information is received and acted upon. By integrating these perspectives, the proposed framework emphasizes that any robust analysis of disinformation systems must account for both the socio-technical infrastructure and the cultural-political conditions that give disinformation its traction.

Dynamics

The third dimension comprises the dynamic interactions between the elements of disinformation and their environment. As we have argued, while disinformation and platforms interact and adapt to each other, they create dynamics that also produce effects on other systems. Examples of these dynamics include polarization and echo chambers (Rhodes, 2021; Sunstein, 2017).

Polarization refers to the process through which individuals or groups increasingly adopt divergent ideological positions, which may be accompanied by growing hostility towards each other (Bail et al., 2018; Garimella and Weber, 2017). The literature has often pointed out how social media platforms intensify this phenomenon through their operating logics (Kubin and von Sikorski, 2021). Disinformation has also been linked to increased polarization, as it follows the same logic and is often designed to target specific groups (Volcan et al., 2024). Thus, polarization can be understood as a dynamic that emerges from the interaction between disinformation and social media platforms: disinformation exploits the algorithmic visibility mechanisms of social media platforms and, in return, drives users to interact more, which generates more revenue for platforms. These dynamics also contribute to echo chambers.

An echo chamber is a space where people mostly encounter information and opinions that confirm what they already believe, while opposing views tend to be ignored or dismissed (Nguyen, 2020; Sunstein, 2007). Again, research has shown that social media platforms foster the emergence of this phenomenon through the interplay of human agents and algorithms (Rhodes, 2021). At the same time, because disinformation also circulates through identity-driven controversies, it both thrives in and reinforces these echo chambers (see Diaz Ruiz and Nilsson, 2023). These dynamics create environments where contradictory information is discredited, further enabling the formation of echo chambers.

In these cases, polarization and echo chambers are not merely consequences but often integral dynamics of the relationship between platforms and disinformation, shaped through processes that involve users, content, and algorithmic systems. In such contexts, disinformation is not just tolerated but reinforced: networked users act as amplifiers, while platforms, driven by engagement metrics, algorithmically boost such content (Tufekci, 2015; Vosoughi, Roy and Aral, 2018). This creates feedback loops in which polarization and echo chambers fuel disinformation, and disinformation, in turn, deepens ideological divides and strengthens echo chambers.

Another important dynamic that emerges from the interaction between social media platforms and disinformation is coordinated behavior aimed at spreading disinformation, often referred to in the literature as “coordinated inauthentic behavior.” It is a strategic process within disinformation ecosystems in which actors, whether individuals, bots, or algorithms, deliberately exploit platform infrastructures to amplify misleading content, frequently simulating authentic communities or groups. As Giglietto et al. (2020) show in their analysis of the 2019 European elections, such coordination often involves networks of pages and accounts working together to increase the visibility and perceived legitimacy of politically charged disinformation, leveraging the virality mechanisms of platforms like Facebook. Similarly, Howard et al. (2018) argue that this behavior not only distorts public discourse but also artificially manufactures consensus, simulating organic support for false narratives and intensifying polarization. Coordinated inauthentic behavior should thus also be understood as a systemic dynamic: a tactic structurally embedded within disinformation systems, made possible and amplified by the affordances of social media platforms. These affordances are the functional and interactional possibilities enabled or constrained by platform design, such as liking, sharing, tagging, algorithmic recommendation, or visibility modulation. They structure how content circulates, how users engage with one another, and how communication is shaped within the platform (Bucher and Helmond, 2018).
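To make the idea of coordination more concrete, the Python sketch below flags pairs of accounts that repeatedly share the same URL within a short time window, a simplified heuristic loosely inspired by the coordinated link-sharing literature. It is not the procedure used by Giglietto et al. (2020): the function name `coordinated_pairs`, the 60-second window, the minimum of two co-shares, and the sample records are all hypothetical assumptions chosen for readability.

```python
from collections import Counter
from datetime import datetime, timedelta
from itertools import combinations

# Hypothetical share records: (account, url, timestamp). Illustrative only.
SHARES = [
    ("page_a", "https://example.org/story1", datetime(2019, 5, 20, 10, 0, 0)),
    ("page_b", "https://example.org/story1", datetime(2019, 5, 20, 10, 0, 30)),
    ("page_c", "https://example.org/story1", datetime(2019, 5, 20, 14, 0, 0)),
    ("page_a", "https://example.org/story2", datetime(2019, 5, 21, 9, 0, 0)),
    ("page_b", "https://example.org/story2", datetime(2019, 5, 21, 9, 0, 45)),
]


def coordinated_pairs(shares, window=timedelta(seconds=60), min_co_shares=2):
    """Return account pairs that shared the same URL within `window`
    on at least `min_co_shares` distinct URLs (assumed thresholds)."""
    # Group shares by URL so only posts of the same link are compared.
    by_url = {}
    for account, url, ts in shares:
        by_url.setdefault(url, []).append((account, ts))

    co_shares = Counter()
    for posts in by_url.values():
        # Count each account pair at most once per URL.
        pairs_for_url = set()
        for (acc1, t1), (acc2, t2) in combinations(posts, 2):
            if acc1 != acc2 and abs(t1 - t2) <= window:
                pairs_for_url.add(tuple(sorted((acc1, acc2))))
        co_shares.update(pairs_for_url)

    return {pair: n for pair, n in co_shares.items() if n >= min_co_shares}


print(coordinated_pairs(SHARES))  # {('page_a', 'page_b'): 2}
```

From a systemic perspective, such pairs are only a starting point: the resulting network still has to be read against the platform affordances and the political context in which it emerged.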

These are some examples of phenomena that can be seen as part of the emergent dynamics between social media platforms and disinformation. Rather than being treated as isolated or exceptional, cases such as echo chambers, polarization, and coordinated inauthentic behavior help show how disinformation works systemically, shaped by the logics, features, and incentives of platforms. These dynamics emerge from the complex interaction between algorithms, users, and broader sociopolitical contexts (Kuo and Marwick, 2021). Thinking of them as emergent helps us move beyond linear explanations and better grasp how disinformation evolves inside and through platforms. Research into these relationships may also reveal dynamics that have not yet been fully mapped, underscoring how adaptable and context-dependent these systems are.

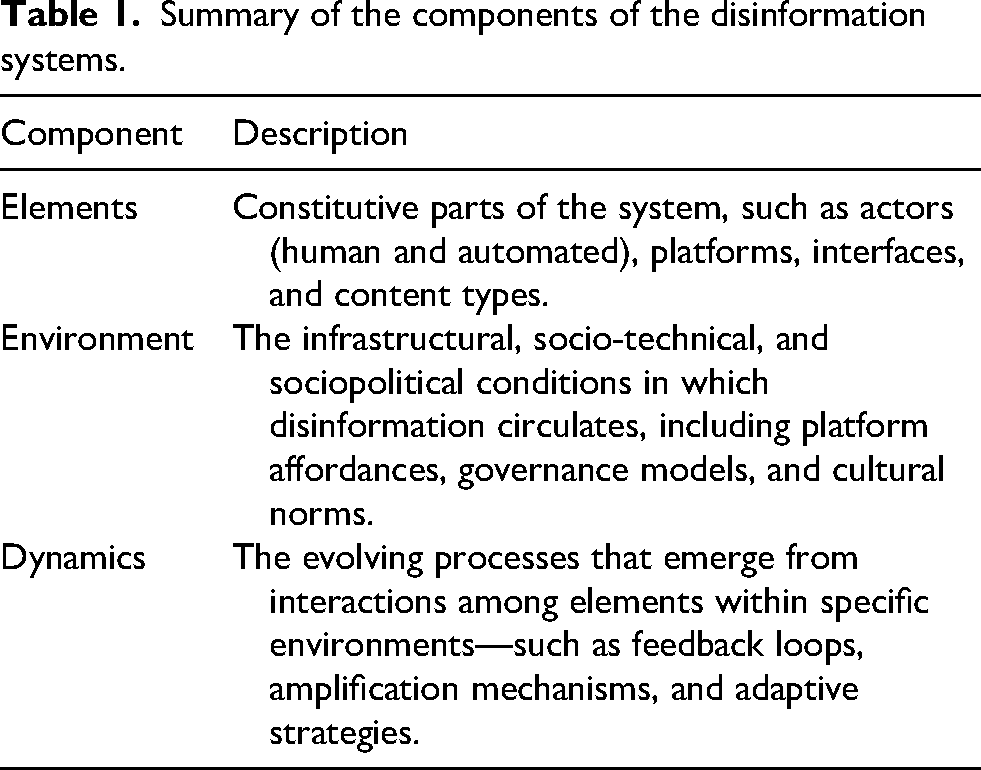

Finally, looking at disinformation from a systemic perspective means recognizing that, even when we focus on one specific aspect (content, technological infrastructure, or patterns of interaction), we still need to keep the broader system in view (Table 1 summarizes the proposal). These parts are deeply interconnected and only make full sense in relation to one another. Ignoring that interdependence can lead to oversimplifications that miss the very mechanisms driving disinformation's evolution and persistence. Disinformation, thus, is not merely a collection of falsehoods or bad actors but a dynamic communicative structure, shaped by and shaping the platforms, technologies, and social contexts through which it moves.

Table 1. Summary of the components of the disinformation system.

Studying disinformation on social media platforms through a systemic perspective

Now that we’ve discussed the key elements that make up the phenomenon of disinformation on social media platforms—its content, environments, and relational dynamics—we can shift focus to how this perspective informs research design. In this section, we will explore how to prepare a study that takes seriously the systemic and emergent nature of disinformation within platformed environments. Rather than isolating variables or seeking linear causality, this approach calls for methodological choices that are sensitive to complexity, interdependence, and context.

From this perspective, the focus must remain on the specific manifestations of disinformation, such as a viral narrative, a coordinated network, or a platform feature, without abstracting them from the ecosystems that sustain and shape them. As General Systems Theory holds, a system is defined not only by its internal components but also by its exchanges with its environment (Bertalanffy, 1975; Capra and Luisi, 2014). In the case of disinformation, these environments include the platform architectures, algorithmic logics, user behaviors, and sociopolitical conditions in which it circulates.

Research design

If we take disinformation as a system, our research design also needs to reflect that complexity. The first step is to decide where the system begins and ends in each study, which defines the elements, environment, and dynamics to be included in the research. Defining these boundaries helps situate the analysis without trying to capture everything at once. It is therefore important to clearly delimit the particular disinformation system on which the study focuses. This delimitation anchors the analysis in a concrete material phenomenon: it allows the researcher to define what, exactly, is being analyzed, and to make the complexity of the system more manageable.

This boundary-setting can happen at different levels, depending on the goals and scope of the study. For instance, Amaral et al. (2022) offer a grounded, micro-contextual analysis of anti-vaccination discourse on Twitter in Brazil and Germany. By comparing narrative constructions across national contexts, the study reveals how disinformation practices are shaped by localized cultural, political, and linguistic factors, as well as by the specific configurations of actors and audience engagement within each platform ecosystem. This illustrates how systemic elements, such as platform affordances and actor strategies, interact in distinct ways depending on the sociopolitical environment, and reinforces the need for analytical frameworks capable of capturing disinformation's contextual embeddedness and adaptive nature. A macro-level approach, on the other hand, can be seen in the work of Giglietto et al. (2020), who investigated coordinated link-sharing behavior across multiple platforms during the 2018 and 2019 Italian elections. They identified cross-platform networks working in sync to manipulate visibility and media narratives, showing how disinformation systems can operate at scale. Although these studies do not explicitly adopt a systemic approach, they take into account the elements, environment, and dynamics of the system and thus provide valuable examples for our discussion. While macro studies may examine phenomena that unfold across various platforms and contexts, micro studies offer more localized, fine-grained insights. What matters in both cases is understanding disinformation as a mappable phenomenon, one in which we can identify elements and trace relational dynamics. Still, it is crucial to keep in mind that this mapping is always a snapshot in time: the system is dynamic, and its configurations will shift and adapt. The point is not to create a rigid model but to make the system visible enough to analyze.

Once the phenomenon is defined, the next step is to map and analyze the elements that make up the system, as well as their relationships with social media platforms. This means identifying not just the actors involved—such as users, influencers, bots, or organizations—but also the content, narratives, and mechanisms through which disinformation circulates. It's also essential to examine how these elements interact with platform infrastructures: what features or affordances are being used (or manipulated), and how those shape the visibility, reach, and impact of disinformation.

At this stage, the researcher should be guided by some key questions: Who or what are the main agents and objects in this system? Where are they operating? And how are they connected? These questions help structure the analysis and bring the system into focus—not as a static entity, but as a set of evolving relationships that produce specific communicative effects. This mapping is what allows us to see disinformation not as isolated content or actors, but as part of a broader, dynamic configuration that's shaped by the platforms themselves.
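One way to keep these three questions explicit during fieldwork is to record the mapping in a simple, structured form. The Python sketch below is a hypothetical illustration, not a tool proposed in this article: the `Element` and `SystemMap` classes, their fields, and the example entries are assumptions that mirror the questions of who or what, where, and how connected.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Element:
    """An agent or object in a delimited disinformation system (hypothetical schema)."""
    name: str
    kind: str      # e.g. "account", "bot", "narrative", "organization"
    platform: str  # where the element operates


@dataclass
class SystemMap:
    """Records elements and their relations for one delimited system."""
    elements: dict = field(default_factory=dict)   # name -> Element
    relations: list = field(default_factory=list)  # (source, target, relation type)

    def add_element(self, element: Element) -> None:
        self.elements[element.name] = element

    def relate(self, source: str, target: str, relation: str) -> None:
        # Only connect elements that have already been identified in the map.
        if source not in self.elements or target not in self.elements:
            raise KeyError(f"unknown element in relation {source!r} -> {target!r}")
        self.relations.append((source, target, relation))

    def by_platform(self) -> dict:
        """'Where are they operating?': group element names by platform."""
        grouped = {}
        for element in self.elements.values():
            grouped.setdefault(element.platform, []).append(element.name)
        return grouped

    def neighbours(self, name: str) -> list:
        """'How are they connected?': outgoing, then incoming relations of one element."""
        outgoing = [(t, r) for s, t, r in self.relations if s == name]
        incoming = [(s, r) for s, t, r in self.relations if t == name]
        return outgoing + incoming


# Hypothetical example entries, for illustration only.
system = SystemMap()
system.add_element(Element("influencer_x", "account", "Twitter"))
system.add_element(Element("anti_vax_narrative", "narrative", "Twitter"))
system.add_element(Element("group_y", "group", "Telegram"))
system.relate("influencer_x", "anti_vax_narrative", "promotes")
system.relate("anti_vax_narrative", "group_y", "reposted_in")

print(system.by_platform())
# {'Twitter': ['influencer_x', 'anti_vax_narrative'], 'Telegram': ['group_y']}
print(system.neighbours("anti_vax_narrative"))
# [('group_y', 'reposted_in'), ('influencer_x', 'promotes')]
```

Keeping relations as explicit source, target, and type triples makes the map easy to move into network-analysis software later; a spreadsheet with the same three columns would serve the same purpose.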

The next step is to map the environment of the disinformation system. Here, the researcher needs to look at the broader infrastructural and contextual conditions in which the phenomenon unfolds. This includes the platforms where disinformation circulates, their affordances (such as algorithms, sharing features, and visibility metrics), and the rules, norms, or policies that govern interactions within those spaces. It also involves situating the phenomenon in its social, political, and cultural context, since these conditions often shape what kind of content spreads, whom it resonates with, and how it is framed.

Mapping the environment means asking questions like: Which platforms are involved, and how do their structures shape the flow of disinformation? What features are being used to amplify or conceal content? What external events, crises, or political disputes are influencing how disinformation is produced and received? How does this phenomenon connect to power and economic relations? This kind of mapping does not just describe the setting; it helps reveal how the environment actively participates in shaping the system's dynamics. The works of Gillespie (2010) and Van Dijck, Poell and De Waal (2018) cited in this discussion can help researchers understand these structures and their agency. Other works, such as Ricaurte (2019), can help situate the environment that shapes both platforms and disinformation within a broader perspective on power and colonialism.

Finally, the researcher needs to focus on the dynamics of the system. To map them, the focus shifts to how the elements and environments interact over time: how content circulates, how actors coordinate, and how patterns emerge from these interactions. This involves looking at flows, feedback loops, amplification strategies, and moments when the system reorganizes or adapts in response to internal or external pressures (such as fact-checking, moderation, or political events). Key questions here include: What triggers the spread of certain narratives? How do actors and platforms respond to interventions? What patterns or rhythms emerge in the circulation of disinformation? Mapping these dynamics requires attention not just to static relationships but to processes, evolutions, and adaptations. This is where disinformation reveals itself as an emergent system: it is not just about who shares what, but about how these actions connect and escalate across time and space, often producing effects that exceed the sum of their parts. Tracking these dynamics can help uncover how disinformation persists, mutates, and reinforces itself, even in the face of resistance. One example is the study by Amaral et al. (2022), which discussed how anti-vaccination narratives based on disinformation changed over time in the German and Brazilian Twitterspheres, shifting strategies and frames according to the political context. This adaptation to specific environments is an important dynamic of the disinformation system.
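Tracking adaptation often comes down to observing how the composition of a narrative changes across time windows. The Python sketch below assumes that posts have already been coded, manually or automatically, with a frame label; it computes the share of each frame per month and lists the months in which the dominant frame changes. The function names, frame labels, and dates are hypothetical and do not reproduce the data of Amaral et al. (2022).

```python
from collections import Counter, defaultdict
from datetime import date

# Hypothetical coded posts: (publication date, frame label). Illustrative only.
CODED_POSTS = [
    (date(2021, 1, 5), "health_risk"),
    (date(2021, 1, 18), "health_risk"),
    (date(2021, 1, 25), "freedom"),
    (date(2021, 2, 2), "freedom"),
    (date(2021, 2, 14), "freedom"),
    (date(2021, 2, 20), "health_risk"),
    (date(2021, 2, 27), "conspiracy"),
]


def frame_shares_by_month(posts):
    """Return {(year, month): {frame: share}}, with shares summing to 1 per month."""
    counts = defaultdict(Counter)
    for day, frame in posts:
        counts[(day.year, day.month)][frame] += 1
    shares = {}
    for month in sorted(counts):  # chronological order
        total = sum(counts[month].values())
        shares[month] = {frame: n / total for frame, n in counts[month].items()}
    return shares


def dominant_frame_changes(shares):
    """List (month, previous_frame, new_frame) whenever the most frequent frame changes.
    Ties are resolved by whichever frame appears first in that month."""
    changes = []
    previous = None
    for month, dist in shares.items():
        top = max(dist, key=dist.get)
        if previous is not None and top != previous:
            changes.append((month, previous, top))
        previous = top
    return changes


shares = frame_shares_by_month(CODED_POSTS)
for month, dist in shares.items():
    print(month, {f: round(s, 2) for f, s in sorted(dist.items())})
print(dominant_frame_changes(shares))  # [((2021, 2), 'health_risk', 'freedom')]
```

A shift in the dominant frame is only a signal; interpreting it as adaptation still requires relating it to the environment, for instance to moderation waves or political events occurring in the same period.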

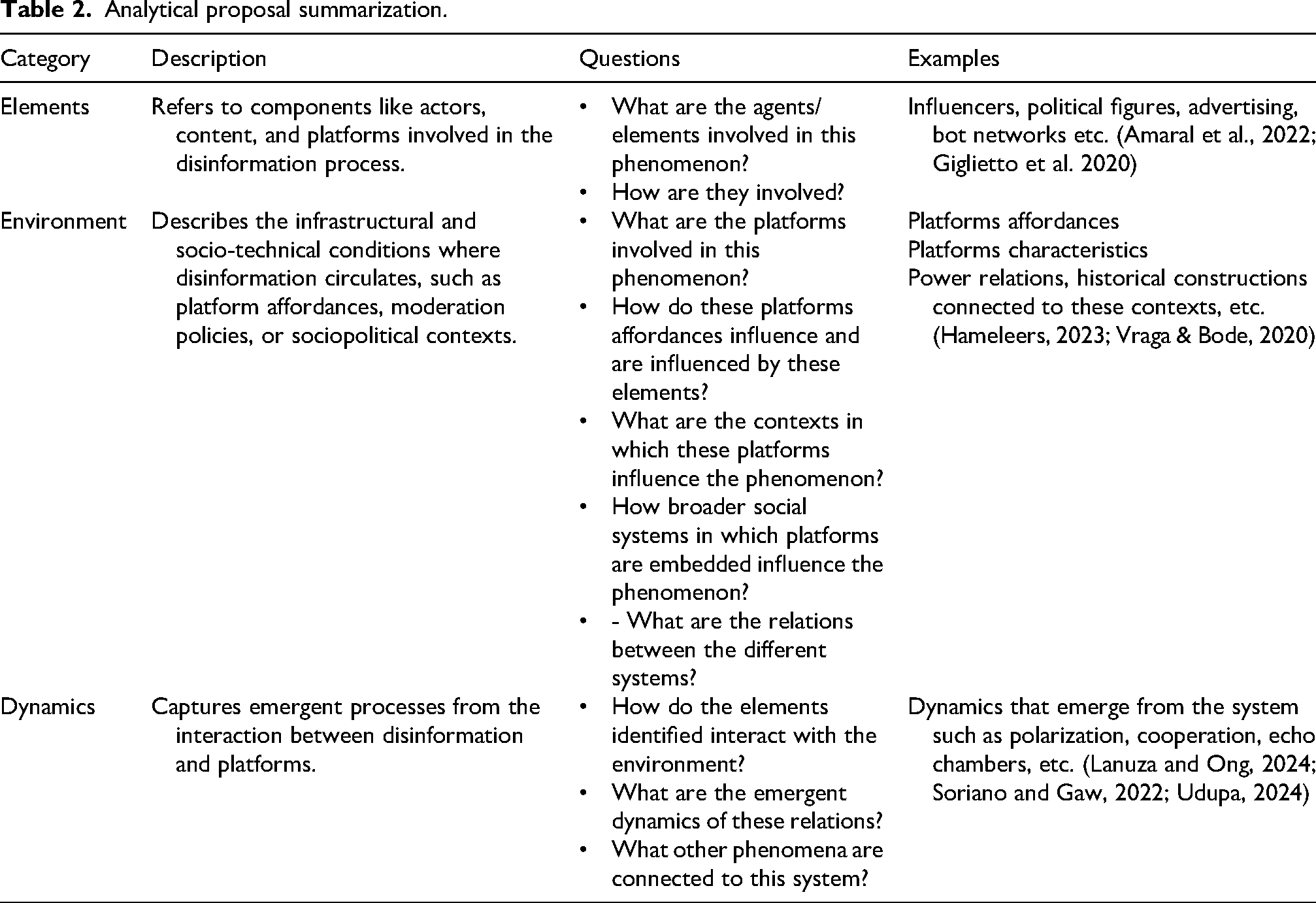

To operationalize the systemic perspective on disinformation and facilitate its application in empirical research, we propose a methodological synthesis in Table 2. This table systematizes the key elements discussed throughout the article—such as structural coupling, platform affordances, and feedback dynamics—into a set of guiding questions that researchers can use to analyze disinformation not as isolated content but as a phenomenon embedded in broader communicative systems. The goal is to offer a flexible analytical framework that can be adapted to diverse case studies, platforms, and research objectives. By highlighting relevant dimensions—like the interaction between users and algorithms, the relationship between specific narratives and sociopolitical environments, and the patterns of amplification and adaptation—this tool helps scholars translate conceptual insights into research design choices. In this way, we aim to bridge theoretical abstraction and methodological application, encouraging more context-sensitive and system-aware approaches to the study of disinformation.

Table 2. Summary of the analytical proposal.

Finally, it is also possible to focus on a specific dimension of the disinformation system—be it the content (elements), the technological infrastructure (environment), or the interactions (dynamics). However, it is essential to maintain awareness of the system as a whole. Each part is interconnected and gains meaning in relation to the others. Overlooking this interdependence risks simplifying the phenomenon and obscuring the very mechanisms that allow disinformation to evolve and persist. Therefore, adopting a systemic perspective is crucial for comprehensively understanding disinformation.

Conclusion

This article proposed a systemic perspective to understand disinformation as part of broader communicative, technological, and sociopolitical dynamics—rather than as isolated content or intentional deception. Drawing from systems theory (Bertalanffy, 1975; Luhmann, 2005) and platform studies (Gillespie, 2010; Van Dijck, Poell and De Waal, 2018), we emphasized the importance of analyzing the interactions between different elements—such as users, algorithms, and narratives—as key to understanding how disinformation circulates, gains visibility, and persists. Disinformation is not a stable object; it is a phenomenon shaped through relationships, feedback loops, and the affordances of digital platforms.

Beyond the theoretical discussion, we also offered a methodological contribution by translating this perspective into a framework that can support empirical research. A systemic approach requires both theoretical and methodological flexibility: while it is necessary to define the observable phenomenon—whether a specific piece of content, a coordinated campaign, or a pattern of distribution—it must be analyzed in relation to the networked, affective, and institutional configurations that give it meaning and effectiveness. This means attending to the elements, the environment, and the dynamics of the system.

Finally, it is important to emphasize that the disinformation system is not sustained solely by producers or platforms, but also by user engagement and demand. Audiences actively participate in shaping disinformation dynamics—whether through sharing, commenting, or reinforcing certain narratives—which contributes to the amplification and normalization of problematic content. In this sense, users are not merely passive recipients but integral components of the system, participating in feedback loops that reinforce its persistence. Moreover, disinformation does not operate exclusively within social media platforms. Traditional media, interpersonal relationships, and broader cultural and political norms all contribute to shaping the environment in which disinformation circulates. These cross-contextual influences underscore the need for systemic analyses that go beyond platform boundaries, capturing how narratives are rearticulated across news media, social circles, and everyday discursive practices. Researchers adopting this framework should therefore attend not only to infrastructural affordances and actor strategies, but also to the cultural resonances and social mechanisms that enable disinformation to gain traction and evolve.

Studying disinformation systemically is therefore not just about tracing connections between actors or messages, but about understanding the processes of adaptation, amplification, and resonance that unfold across multiple communicative and technical layers. This perspective is essential to grasp not only how disinformation spreads, but also why it continues to thrive within the architecture of digital platforms.


Acknowledgments

This research was partially supported by the National Council for Scientific and Technological Development (CNPq), through grant numbers 406504/2022-9 (National Institute of Science and Technology on Information Disputes and Sovereignty, INCT/DSI), 405965/2021-4, and 302489/2022-3.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Conselho Nacional de Desenvolvimento Científico e Tecnológico (grant numbers 302489/2022-3, 405965/2021-4, 406504/2022-9).

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.