Abstract

Artificial intelligence (AI)-based drug repurposing is an emerging strategy to identify drug candidates to treat rare diseases. However, cutting-edge algorithms based on deep learning typically do not provide a human-understandable explanation supporting their predictions. This is a problem because it hampers biologists’ ability to decide which predictions are the most plausible drug candidates to test in costly lab experiments. In this study, we propose rd-explainer, a novel AI drug repurposing method for rare diseases that obtains possible drug candidates together with human-understandable explanations. The method is based on graph neural network technology, and explanations are generated as semantic graphs using state-of-the-art explainable AI (XAI). The model learns features from current background knowledge on the target rare disease structured as a knowledge graph, which integrates curated facts and their evidence on different biomedical entities such as symptoms, drugs, genes, and ortholog genes. Our experiments show that our method outperforms the state-of-the-art models it was compared against. We investigated the application of XAI on drug repurposing for rare diseases and show that our method is capable of discovering plausible drug candidates based on testable explanations.

Introduction

Developing new drugs is a challenging effort that often ends with the drug never reaching the market. Recent studies have shown that around 90% of drugs fail to be approved during their clinical development (Sun et al., 2022). This leads to an expenditure of both time and money that yields no financial return. The situation is even worse in the case of rare diseases, as pharmaceutical companies may consider it risky to invest large amounts of resources into developing drugs that only a small percentage of the population will need. Nonetheless, in total, human beings are affected by approximately 7,000 rare diseases, of which only 5% have an effective treatment (Haendel et al., 2020); in Europe alone, between 27 and 36 million people suffer from rare diseases (Rare diseases).

In this scenario, drug repurposing strategies have appeared as a possible approach to solve these issues. By reusing drugs that have already been approved, companies can avoid many of the costly and time-consuming steps of clinical trials. In this context, innovative approaches to drug repurposing, such as computational strategies and artificial intelligence (AI)-driven methodologies, have emerged as promising solutions to address these challenges. Graph-based drug repurposing is another notable strategy that has gained attention in recent years. By constructing intricate networks of molecular interactions, genes, proteins, and diseases, this approach unveils hidden relationships and connections that might otherwise go unnoticed (Guney et al., 2016).

Still, many people remain skeptical about AI-driven decisions, especially machine learning (ML) and deep learning, as many of them come with no explanation that can help to understand the reason why they should be trusted (also called black-box AI). This issue is especially significant in the healthcare field, where decisions may have an important impact on people’s lives. Also, giving valid explanations can help researchers to point in the right direction in the generation of hypotheses that are testable in labs and enable a solid knowledge discovery. Furthermore, the EU General Data Protection Regulation is requesting the AI industry to fulfill the “right to explanation” (Goodman & Flaxman, 2017). This “right to explanation” implies that when a decision is significantly affected by an automated process/algorithm, the individual can demand an explanation. In recent years, many different tools have appeared to try and cover this gap in the emerging explainable AI (XAI) research area (Huang et al., 2023; Pezeshkpour et al., 2019; Ribeiro et al., 2016).

In this study, we explore whether AI can be used to produce both predictions and explanations in computational drug repurposing for rare diseases and, if so, how helpful these explanations can be for hypothesis generation. The main objective of this work was to develop and implement a pipeline to find marketed drugs that can be used to treat the symptoms of a rare disease. Our approach is based on cutting-edge AI algorithms used in computational drug repurposing, such as graph ML using knowledge graphs (KGs) and graph neural networks (GNNs), and on XAI methodology to provide the explanations supporting the drug predictions made by AI models. The approach was evaluated by selecting Duchenne muscular dystrophy (DMD) as a case study, a genetic disorder that is the most common form of muscular dystrophy (Szigyarto & Spitali, 2018). We demonstrate the generalizability of our approach by applying the pipeline to different rare diseases.

Related Work

Knowledge Graph-Based Drug Repurposing

State-of-the-art computational drug repurposing approaches make use of graph-based structures and AI techniques to find potential drug candidates. One of the main advantages of using graph structures is that they can easily incorporate information from different sources. This is especially important in the domain of rare diseases, where information is distributed and often scarce. The ability to integrate as much relevant data as possible can confer a significant advantage. An example of this is the recent study of Al-Saleem et al. (2021), where a knowledge graph was used to discover drug candidates to treat COVID-19.

Different ML algorithms can be used to analyze knowledge graphs, including matrix factorization, random-walk approaches (node2vec; Grover & Leskovec, 2016), geometric embeddings (DistMult; B. Yang et al., 2014), and GNNs (Ferrari et al., 2022; Yue et al., 2020), each with its own advantages and disadvantages (see Table 1). In our study, we used a combination of random-walk approaches and GNNs because, in contrast to other methods (such as matrix factorization or geometric embeddings), they can easily incorporate new information without the need to retrain the ML model. This is especially relevant in the field of drug repurposing, where new information about drugs, genes, and diseases is continually being published (Hsieh et al., 2021; Sadeghi et al., 2022; Zhang et al., 2022).

Comparison of Different Graph-Based Machine Learning Methods in Drug Repurposing.

One of the graph-based methods that can provide explanations of the predictions, also called local explanations, is (Graph)LIME (Huang et al., 2023), an adaptation of the popular and more general explainability method LIME (Ribeiro et al., 2016). The idea behind this method is the following: when trying to get an explanation for a given prediction, (Graph)LIME performs small perturbations to the features of nodes, and sees how the predictions vary with respect to the initial prediction. The more the prediction changes, the more the model is relying on that feature to obtain its prediction. This way, explanations in this model are given in the form of a set of node features. Among its drawbacks, this method can only be used in node classification tasks. Another explainability method is CRIAGE (Pezeshkpour et al., 2019) where explanations are given as a set of rules.

Several other explainability methods have been proposed for Graph ML, including PGExplainer (Luo et al., 2024) and GRETEL (Xie et al., 2022). PGExplainer generates explanations by learning a probabilistic mask over graph structures, making it more flexible in terms of capturing various graph features. GRETEL, on the other hand, is designed to provide global explanations, making it different from other methods that focus on local interpretability.

Finally, the method chosen in this work is GNNExplainer (Ying et al., 2019). It works as follows: given an initial prediction (link prediction, node classification, or graph classification) obtained through a GNN, GNNExplainer finds a subset of node features and edges that are responsible for the prediction. This subset is obtained by training an edge mask and a node feature mask. This method was chosen because explanations are provided in the form of a subgraph that can be easily understood. Additionally, it is a posthoc, GNN model-agnostic XAI method, which means that if more sophisticated GNNs are developed in the future, they can be easily incorporated into the pipeline. These features make it a popular method in the research community (Kim, 2023; Pfeifer et al., 2022; Sun et al., 2023). However, as a posthoc method, its explanations might not always be faithful to the model’s decision-making process: if a GNN has been trained on noisy data, GNNExplainer may highlight irrelevant edges or nodes simply because they correlate with predictions. Another major drawback is that it lacks consistency: explanations for the same prediction can change significantly when GNNExplainer is run several times. A summary of the methods can be found in Table 2.

Summary of Explainability Methods in Graph ML.

Note. ML = machine learning.

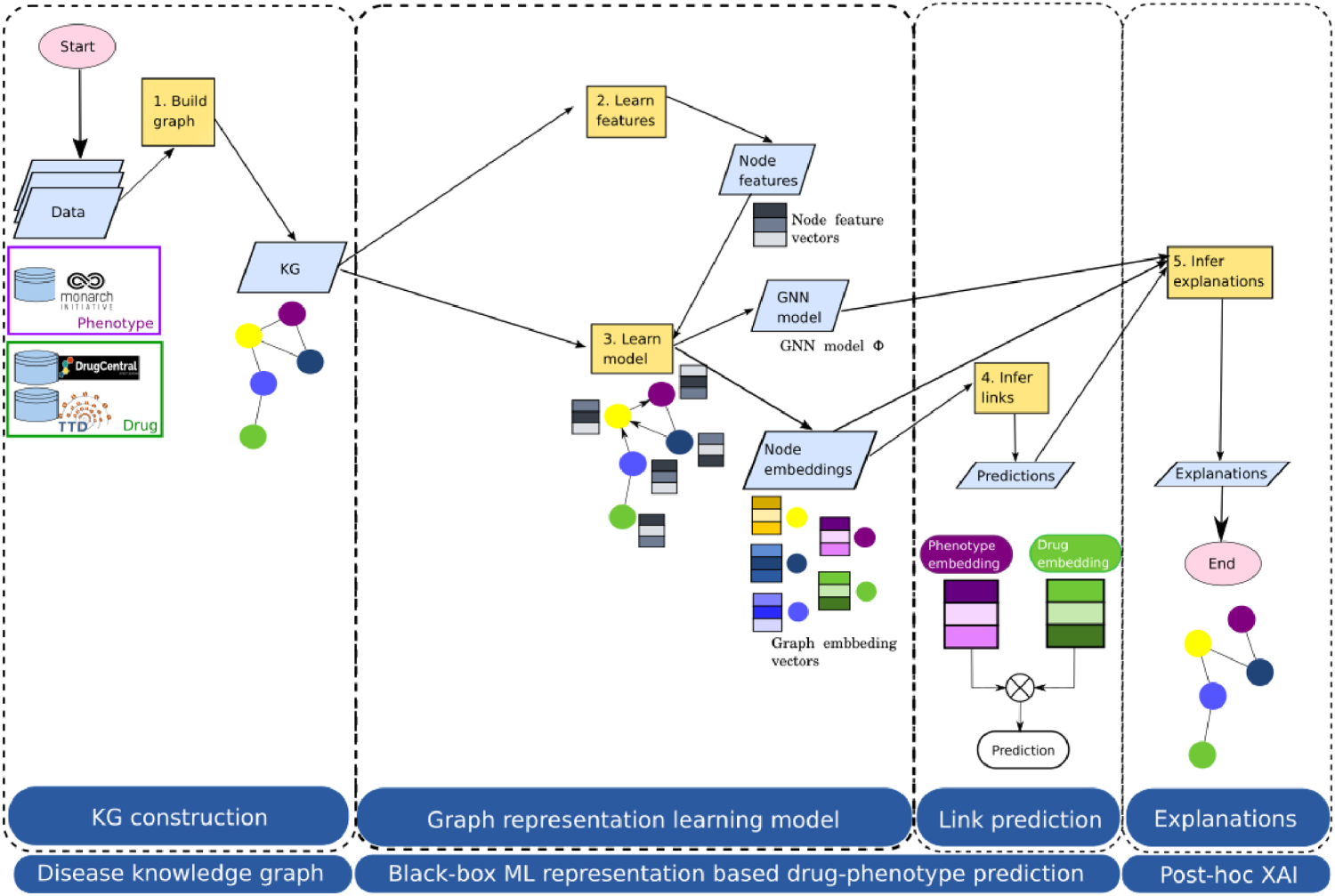

rd-explainer Method Overview

rd-explainer is a drug repurposing method we developed for rare diseases and its pipeline is illustrated in Figure 1. rd-explainer has three modules: the Knowledge Graph Construction module constructs a KG for the specific rare disease and drug repurposing task, the Prediction module trains a GNN model and predicts drug candidates for the rare disease symptoms, and the Explainer module computes the most important semantic subgraphs that explain the connection between the predicted drug and the symptom. First, disease-related information is gathered from different data sources: Monarch Initiative knowledge base ( Monarch Initiative Explorer ) for disease pathology, and DrugCentral (Avram et al., 2021) and Therapeutic Target Database (Zhou et al., 2022) for disease druggability. This information is then preprocessed and captured as a knowledge graph. Next, for each node in the graph, a feature vector is obtained that will be used as input for the GNN model. This is done by making use of a method known as edge2vec (Gao et al., 2019) to consider the different edge semantics in the KG for node embedding learning. We used the version extracted from GitHub (accessed in 2021). 1 The next step is to build and train the GNN model, which is done using the GraphSAGE framework for learning graph representation (Hamilton et al., 2017). Next, link prediction is performed for each drug–symptom node embedding pair using the dot product as a scoring function. Finally, we produced prediction explanations as semantic graphs using GNNExplainer (Ying et al., 2019), a recent and, to our knowledge, one of the first XAI methods for obtaining explanations from GNN predictions.

rd-explainer drug repurposing method pipeline developed in this work.

Data Sources

Data were obtained from three different sources: Monarch ( Monarch Initiative Explorer ) (accessed in 2021), DrugCentral (Avram et al., 2021) (2021 version), and Therapeutic Target Database (TTD) (Zhou et al., 2022) (November 8th, 2021 version). Monarch is a knowledge base built on semantic principles, unifying gene, variant, genotype, phenotype, and disease data across different species. Its primary aim is to establish links between genes and phenotypes, thereby facilitating computational exploration of human disease biology. Monarch was chosen because it contains curated information across different species. This way, because rare diseases are often less studied than common diseases, incorporating information from other species can maximize the amount of knowledge in the graph. However, Monarch does not specialize in drug information.

Drug information was incorporated from DrugCentral (drug-target information) and from TTD (drug–disease information). DrugCentral is a comprehensive online database that provides information about approved drugs, active ingredients, and other pharmaceutical products. One of its major features is that it is open source and its data is freely available to anyone. For this project, we made use only of the drug-target information (as it is the main piece of information that is not present in Monarch) downloaded as a tsv file from their site (Zhou et al., 2022). 2 Similarly, TTD is a database that specializes in drugs and their respective therapeutic targets. Once more, this database is freely accessible and its information can be easily downloaded in the csv format (in this project, we just made use of the drug–disease information (Zhou et al., 2022), 3 once again because it is the information missing in Monarch).

Knowledge Graph Construction

To extract information from Monarch, the BioKnowledge Reviewer (Queralt-Rosinach et al., 2020) tool was used. This tool was originally created to collect knowledge from several sources and create a knowledge graph that could be later used for hypothesis generation. It works by using several seeds (node identifiers [IDs]) as input to query the Monarch API and constructing the graph based on the neighborhood of those seeds. After introducing the seeds in the BioKnowledge Reviewer pipeline, the final output is the rare disease research question-specific knowledge graph structured in two dataframes (stored as csv files). One of them contains a list of nodes with their respective name, IDs, semantic entity type, synonyms, and description. The second file contains the list of edges, again containing the IDs of the entities participating in each link and other edge information such as type of edge, supporting evidence, and reference date. Monarch was our main source of information and therefore served as a starting point to create the rest of the graph. This way, data from other data sources were modified to fit Monarch’s standards by unifying the identifiers. Finally, the graphs were constructed using the NetworkX Python library (NetworkX). With this library, the dataframes extracted using the BioKnowledge Reviewer were converted into a Graph object.
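The conversion from the node and edge tables into a graph object can be sketched as follows. This is a minimal, dependency-free illustration and not the study's code, which used NetworkX. The column names (`id`, `semantic_type`, `subject_id`, `relation`, `object_id`) and the relation labels are hypothetical; only the three identifiers are taken from the text.

```python
import csv
import io
from collections import defaultdict

# Toy stand-ins for the two csv files produced by BioKnowledge Reviewer.
# Column names and relation labels are assumptions made for this sketch.
NODES_CSV = """id,name,semantic_type
HGNC:2928,DMD,GENE
MONDO:0010679,Duchenne muscular dystrophy,DISO
HP:0003560,Muscular dystrophy,DISO
"""

EDGES_CSV = """subject_id,relation,object_id
HGNC:2928,causes,MONDO:0010679
MONDO:0010679,has_phenotype,HP:0003560
"""


def load_graph(nodes_csv, edges_csv):
    """Return (nodes, adjacency) built from a node table and an edge table."""
    # Index node attributes by identifier.
    nodes = {row["id"]: row for row in csv.DictReader(io.StringIO(nodes_csv))}
    adjacency = defaultdict(list)
    for row in csv.DictReader(io.StringIO(edges_csv)):
        source, target = row["subject_id"], row["object_id"]
        # Skip edges whose endpoints are missing from the node table.
        if source not in nodes or target not in nodes:
            continue
        adjacency[source].append((row["relation"], target))
    return nodes, dict(adjacency)


nodes, adjacency = load_graph(NODES_CSV, EDGES_CSV)
```

Keeping the edge type next to each target preserves the edge semantics that edge2vec later relies on.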

We integrated data into two different knowledge graphs to perform the experiments. Each one of them was constructed using different (number of) node seeds to extract information from Monarch. The first one (KG A) uses only two seeds: DMD seed (HGNC:2928), corresponding to the human gene that causes the disease; and DMD seed (MONDO:0010679), corresponding to the disease itself. The second graph (KG B) extends KG A by including as seeds all phenotypes of the rare disease (in total, 27 more seeds). The seeds used for the construction of each graph can be found in Tables S1 and S2 in the Supplemental materials. The idea of creating two different graphs is to find out if the performance of the model and the quality of the explanations increase by incorporating more (phenotypic) information.

ML Model and XAI

Node Features

At this point, none of the nodes has any specific node features. It is possible to run a GNN relying only on graph information, that is, network topology (this is done, e.g., by using the node degree as graph feature); nonetheless, this resulted in poor performance (results not shown). To increase the efficiency of the model, edge2vec was used to produce a specific embedding for each node that captures information about its neighborhood. edge2vec (Gao et al., 2019) is a tool that generates node embeddings based on the node neighborhood and types of edges connecting each node. After executing edge2vec, each node was given a unique feature vector. Since edge2vec is an unsupervised method that does not use task-specific labels, these embeddings serve as general-purpose representations of the graph structure rather than encoding direct knowledge of the downstream task. This approach ensures that the GNN still needs to learn task-relevant patterns, rather than relying only on the precomputed embeddings.

Data Splitting

As in any other machine learning task, the data need to be split into training, validation, and test sets. However, when tackling a link prediction task, there are different ways to perform this split. In link or edge prediction tasks, edges can be divided into two groups: message-passing edges and supervision edges. Message-passing edges are the ones that will be used by our GNN to obtain the embeddings, while supervision edges are the ones that will be used to test the performance of our model (CS224W; DeepSNAP). Additionally, when creating the supervision edges it is necessary to include negative examples by applying negative sampling. Negative sample edges are those not present in the original graph, that is, pairs of entities known to be unconnected or for which no link is known. The goal is for the neural network to learn to distinguish true (positive) edges from false (negative) ones. In general, one negative edge is created for each true edge (CS224W; DeepSNAP).
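Negative sampling with one negative per positive edge can be sketched as below. This is an illustrative, uniform-random sampler written for this section; the function name and its parameters are assumptions, and the DeepSNAP implementation used in the study may differ in detail.

```python
import random


def sample_negative_edges(positive_edges, nodes, ratio=1, seed=0):
    """Draw `ratio` absent (negative) directed edges per positive edge."""
    rng = random.Random(seed)
    known = set(positive_edges)
    node_list = sorted(nodes)
    target = ratio * len(positive_edges)

    # Guard against asking for more negatives than the graph can supply.
    known_non_loops = {edge for edge in known if edge[0] != edge[1]}
    max_possible = len(node_list) * (len(node_list) - 1) - len(known_non_loops)
    if target > max_possible:
        raise ValueError("not enough unconnected node pairs to sample from")

    negatives = set()
    while len(negatives) < target:
        u, v = rng.choice(node_list), rng.choice(node_list)
        # Reject self-loops and pairs that are already linked in the graph.
        if u != v and (u, v) not in known:
            negatives.add((u, v))
    return sorted(negatives)
```

Raising `ratio` above 1 gives the larger negative sets used later when testing different negative sampling sizes.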

In this work, we selected the all-graph transductive split (CS224W; DeepSNAP). This method divides the data as follows: in the training set, the supervision edges and message-passing edges are the same. In the validation set, the message-passing edges are the same as those in the training set, while the supervision edges are different from the training supervision edges. Finally, in the test set, the message-passing edges consist of the validation edges, and the supervision edges are distinct from both the training and validation supervision edges.

This method is one of the standard settings for link prediction tasks, as the whole graph can be seen in all dataset splits (CS224W). The split proportion we used was 80% of edges for training set, 10% for validation set, and 10% for test set. The training set was used to train the model, the validation set to select the best hyperparameters, and the test set to obtain the global performance of the model.
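The split described above can be sketched as follows. The function is a hypothetical illustration rather than the DeepSNAP call used in the study. In particular, treating the test-time message-passing edges as training plus validation edges is our reading of the description and should be taken as an assumption.

```python
import random


def split_edges(edges, train_frac=0.8, val_frac=0.1, seed=0):
    """Split edges 80/10/10 and assign message-passing and supervision roles."""
    rng = random.Random(seed)
    shuffled = list(edges)
    rng.shuffle(shuffled)

    n_train = int(train_frac * len(shuffled))
    n_val = int(val_frac * len(shuffled))
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]

    return {
        # Training: message-passing and supervision edges coincide.
        "train": {"message": train, "supervision": train},
        # Validation: same message-passing edges, new supervision edges.
        "val": {"message": train, "supervision": val},
        # Test: validation edges also carry messages (assumption, see lead-in).
        "test": {"message": train + val, "supervision": test},
    }
```

Because supervision edges never overlap across splits, the test score reflects links the model was never trained to predict, even though the whole node set is visible throughout.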

To avoid data leakage, node features were obtained by running edge2vec only in the train split; this way, no information from the validation or test set is seen during the training. This procedure was just used during the evaluation of the model to ensure our experiment was unbiased.

GNN Model

We first utilized a GNN algorithm to learn vector representation embeddings for nodes in our knowledge graphs. Then, we applied these node embeddings for drug–phenotype link prediction. The GNN algorithm that we used in this work is called GraphSAGE (Hamilton et al., 2017). GraphSAGE performs inductive graph representation learning by leveraging rich node attribute information. The main advantage that was brought by GraphSAGE is its scalability: instead of working with full batches (the whole graph is seen during the training) it works with mini-batches. Each mini-batch is a subset of computational graphs (a computational graph is the individual GNN that is built for each node) of

The final model consists of a GraphSAGE-based neural network that processes node embeddings through two graph convolutional layers using mean aggregation. The first SAGEConv layer transforms the input features into a 264-dimensional hidden representation, followed by batch normalization, LeakyReLU activation, and dropout (0.2) to prevent overfitting. The second SAGEConv layer maps the hidden representation to a 64-dimensional output space, which serves as the final node embeddings. Link prediction is performed by computing the dot product between the embeddings of node pairs. The model is trained for 150 epochs using the Binary Cross-Entropy with Logits Loss function and optimized with a learning rate of 0.07.
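For reference, the architecture above can be written compactly as follows. This is a sketch that assumes the mean-aggregation form used by PyTorch Geometric's `SAGEConv`, with bias terms omitted. The symbols $\mathbf{x}_v$ (input edge2vec features), $\mathcal{N}(v)$ (neighbors of $v$), $E_s$ (supervision edges, positive and negative), and $y_{uv}$ (edge label) are notation introduced here, not taken from the original text.

```latex
\begin{aligned}
\mathbf{h}_v &= \mathrm{Dropout}_{0.2}\Big(\mathrm{LeakyReLU}\Big(\mathrm{BN}\Big(
  \mathbf{W}^{(1)}_{1}\mathbf{x}_v + \mathbf{W}^{(1)}_{2}\,
  \tfrac{1}{|\mathcal{N}(v)|}\textstyle\sum_{u\in\mathcal{N}(v)}\mathbf{x}_u
  \Big)\Big)\Big) \in \mathbb{R}^{264},\\
\mathbf{z}_v &= \mathbf{W}^{(2)}_{1}\mathbf{h}_v + \mathbf{W}^{(2)}_{2}\,
  \tfrac{1}{|\mathcal{N}(v)|}\textstyle\sum_{u\in\mathcal{N}(v)}\mathbf{h}_u
  \in \mathbb{R}^{64},\\
\hat{y}_{uv} &= \sigma\big(\mathbf{z}_u^{\top}\mathbf{z}_v\big),\\
\mathcal{L} &= -\frac{1}{|E_s|}\sum_{(u,v)\in E_s}
  \Big[y_{uv}\log\hat{y}_{uv} + (1-y_{uv})\log\big(1-\hat{y}_{uv}\big)\Big].
\end{aligned}
```

The last line is the binary cross-entropy applied to the sigmoid of the dot-product logit, which is what a "with logits" loss computes in one numerically stable step.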

Drug–Phenotype Link Predictions

The GNN model generates embeddings for individual nodes within the graph as its final output. By applying the dot product between distinct node pairs and applying a sigmoid function, we obtain a value that shows the likelihood of a link existing between those nodes. Consequently, we obtain dot products between each drug and every phenotype in the graph, and rank them in descending order. The top-ranked dot products are considered the most promising targets. Links that were already present on the graph were removed from the ranking.
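The scoring and ranking step can be illustrated with a small sketch. The function names, the toy embeddings, and the placeholder drugs `drug_x` and `drug_y` are hypothetical; aprindine and the arrhythmia identifier are reused from elsewhere in this article purely as an example of a link already present in the graph.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def rank_candidates(embeddings, drugs, phenotypes, known_links, top_k=3):
    """For each phenotype, rank drugs by sigmoid(dot product), skipping known links."""
    ranking = {}
    for phenotype in phenotypes:
        scored = []
        for drug in drugs:
            if (drug, phenotype) in known_links:
                continue  # already in the graph, so not a new indication
            dot = sum(a * b for a, b in zip(embeddings[drug], embeddings[phenotype]))
            scored.append((drug, sigmoid(dot)))
        scored.sort(key=lambda pair: pair[1], reverse=True)
        ranking[phenotype] = scored[:top_k]
    return ranking


# Toy 2-dimensional embeddings (the model in this work produces 64 dimensions).
embeddings = {
    "aprindine": [1.0, 1.0],
    "drug_x": [1.0, 0.0],
    "drug_y": [0.0, 1.0],
    "HP:0011675": [2.0, 1.0],
}
ranking = rank_candidates(
    embeddings,
    drugs=["aprindine", "drug_x", "drug_y"],
    phenotypes=["HP:0011675"],
    known_links={("aprindine", "HP:0011675")},
)
```

In this toy case aprindine has the largest dot product but is dropped because its link already exists, so `drug_x` is reported first.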

Graph-Based Prediction Explanations

We applied GNNExplainer to generate explanations for every drug–phenotype prediction. To do so, we adapted the pipeline code (from PyTorch Geometric version 2.0.4) to generate explanations for the link prediction task, which was not implemented in the authors’ version (Ying et al., 2019) (see pseudocode in Algorithm 1 in the Supplemental materials). However, this XAI algorithm has a robustness problem in the explanations it produces (Agarwal et al., 2023) and, additionally, it may produce disconnected graphs that affect the interpretability of explanations by domain users. To mitigate these issues, we developed the following procedure. First, we assume that a complete explanation is one that connects the two targeted nodes: if drug A can treat phenotype B, there must be some common pathway that allows A to interact with B. The procedure therefore runs GNNExplainer for several iterations. In each iteration, NetworkX is used to check whether a path exists between both nodes in the subgraph generated by GNNExplainer. If no path is found, the procedure continues with the next iteration; if a path exists, it stops iterating and that subgraph is taken as the final explanation. If no subgraph satisfies the “pathway” condition, the last subgraph is returned as a possible explanation.
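The retry procedure can be sketched as follows. Here `run_explainer` is a hypothetical callable standing in for one GNNExplainer run that returns the explanation as a list of edges. The path check is a plain breadth-first search over an undirected view of those edges, used in place of the NetworkX call; whether edge direction was respected in the study is not stated, so the undirected view is an assumption.

```python
from collections import deque


def has_path(edges, source, target):
    """Breadth-first search over an undirected view of the explanation edges."""
    neighbours = {}
    for u, v in edges:
        neighbours.setdefault(u, set()).add(v)
        neighbours.setdefault(v, set()).add(u)
    seen, queue = {source}, deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            return True
        for nxt in neighbours.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


def explain_with_retries(run_explainer, drug, phenotype, max_iterations=10):
    """Rerun the explainer until the subgraph connects drug and phenotype.

    Returns (subgraph, is_complete). If no run yields a connecting path,
    the last subgraph is returned and flagged as incomplete.
    """
    subgraph = []
    for _ in range(max_iterations):
        subgraph = run_explainer(drug, phenotype)
        if has_path(subgraph, drug, phenotype):
            return subgraph, True
    return subgraph, False
```

Returning the completeness flag alongside the subgraph makes the later split into complete and incomplete explanations a by-product of the same loop.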

In total, seven phenotypes were selected to evaluate the explanations (Muscular Dystrophy [HP:0003560], Respiratory Insufficiency [HP:0002093], Arrhythmia [HP:0011675], Congestive Heart Failure [HP:0001635], Dilated Cardiomyopathy [HP:0001644], Cognitive Impairment [HP:0100543], and Progressive Muscle Weakness [HP:0003323]). These phenotypes were selected to cover all the main areas that are affected by the disease (muscular, respiratory, cardiac, and intellectual symptoms). For each prediction obtained in these phenotypes (three drug predictions per phenotype), an explanation was obtained. This process was done for the predictions coming from KG A and for those coming from KG B. This makes a total of 42 explanations (21 for each graph).

Regarding the parameters of GNNExplainer, because the graphs are highly connected, explanations were generated by using the 1-hop neighborhood of the graph. Using a higher

Additionally, the maximum size of the explanations was set to 15 edges. This avoids overly complex explanations with many edges that might be impossible for researchers to comprehend. This was done by keeping only the edges whose contribution values are among the 15 highest.
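A minimal sketch of this size limit is shown below. It assumes the learned edge mask is available as a mapping from each edge to its contribution value; the function name and the data layout are hypothetical.

```python
def top_k_edges(edge_mask, k=15):
    """Keep the k edges with the highest contribution values."""
    ranked = sorted(edge_mask.items(), key=lambda item: item[1], reverse=True)
    return [edge for edge, _ in ranked[:k]]
```

If the mask holds fewer than `k` edges, all of them are kept.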

Finally, the maximum number of iterations was set to 10. In other words, if after 10 iterations GNNExplainer has not found an explanation that connects the drug candidate with the targeted phenotype it will conclude that no “complete” explanation was found, and the last explanation produced by GNNExplainer will be the one that will serve as the final answer. This parameter can be increased or reduced depending on the expectations of the researcher. A large number of iterations increases the chances of finding a complete explanation at the cost of more computational time. In contrast, reducing the number of iterations reduces the computational time, which can be useful if a researcher wants to obtain explanations for a large number of predictions.

Evaluation and Metrics

Evaluation of GNN Model

Other evaluations were developed to further assess the performance of the model. These evaluations include the testing of different negative sampling sizes (

Evaluation of Explanations

The evaluation of the explanations was done manually, following a two-step process. First, they were classified as complete or incomplete explanations based on the appearance of a connection between the drug and the phenotype. We developed a function to visualize the explanations as semantic graphs based on PyTorch Geometric's visualization function 4 (see the Section “Visualization of Explanations” in the Supplemental materials for further details). If the explanation contains a link between the drug and the phenotype, it is considered to be a complete explanation. These explanations are considered to be the most useful as they can be easily understood and interpreted. In contrast, explanations where there is no link between drug and phenotype (where there are two separate clusters) or where one of the target elements (either the drug or the phenotype) is missing are considered incomplete explanations. Several illustrative examples are provided in the Supplemental materials (see the Section “Complete/Incomplete Explanation Example”).

During the second step, we evaluated the explanations using an objective and a subjective approach. First, complete explanations were reviewed and a manual search was performed to check whether the explanation proposed by the model had already been described in the literature (objective evaluation). This process was only performed for predictions that have supporting evidence in the literature and that were classified as complete explanations. The literature examination was performed using PubMed and Google Scholar during the first half of 2022. Finally, each explanation was assessed against the domain knowledge of rare disease researchers (subjective evaluation).

Results

Rare Disease KG Topology and Representation for Drug Repurposing

We generated two different drug repurposing knowledge graphs for the DMD rare disease. KG A contains 10,786 nodes, 93,905 directed edges. The average node degree of the graph (

Table Showing Features of KG A and KG B.

KG = knowledge graph.

In the case of KG B (built from 29 seed nodes: the KG A seeds extended by 27 phenotypes of DMD), the total number of nodes is 83,665, with a total of 1,984,774 directed edges. The average degree in this case is 34.43, and the node with the highest degree is the physiological process “Protein Binding”, with a total degree of 4,817. The diameter of the graph is 7, which shows one of the features of scale-free networks: despite increasing the number of nodes roughly 8 times and the number of edges roughly 20 times, the diameter of KG B only increased by one unit with respect to KG A. In this case, the clustering coefficient is equal to 0.48, showing that KG B is more clustered. Table 3 shows a summary of the features of both graphs.

The schema of the knowledge graph, which is the same for KG A and KG B, can be seen in Figure 2 and shows how the eight different node types interact with each other. The schema contains 24 and 29 different edge types for KG A and KG B respectively, which are not included in this figure for clarity, but are listed in the Supplemental materials S3 and S4.

Schema of the knowledge graph. Node types are: drugs or chemical compounds (DRUG), genes (GENE), symptoms/phenotypes or diseases (DISO), gene variants (VARI), genotypes (GENO), gene orthologs (ORTHO), anatomical structures (ANAT), and biological processes (PHYS).

In total, two GNNs were used, one trained on KG A and one trained on KG B. Hyperparameter optimization was performed using Ray Tune, and the optimal values can be found in Table S5 in the Supplemental materials. These hyperparameters were obtained by training several GNN models (random search) on KG A and were later used to train a GNN model on KG B.

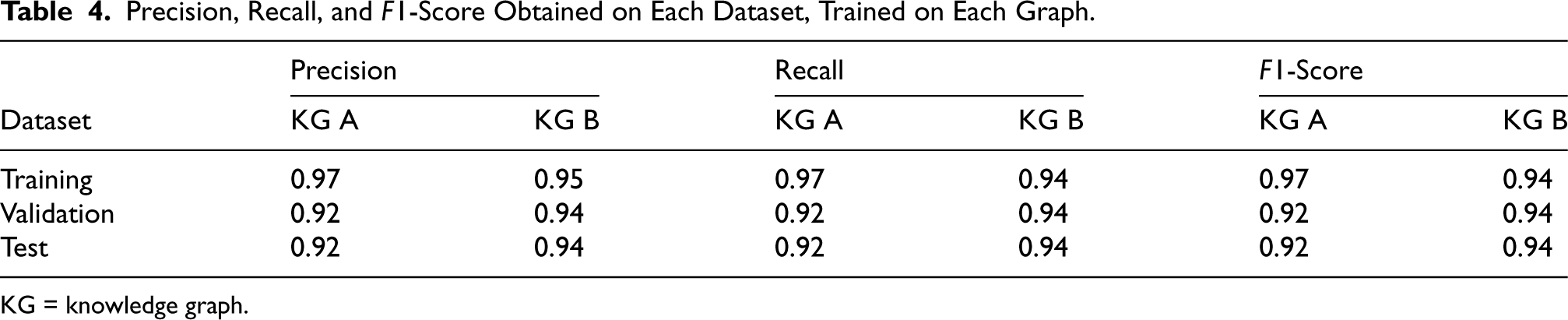

To measure link prediction performance, the scores obtained were precision, recall, and the

AUROC on the test dataset using KG A. AUROC = area under the ROC curve; KG = knowledge graph.

AUROC on the test dataset using KG B. AUROC = area under the ROC curve; KG = knowledge graph.

Precision, Recall, and

KG = knowledge graph.

First, we evaluated our GNN model applying different strategies and compared its performance with the state-of-the-art graph embeddings used in drug repurposing methods. Then, we evaluated our approach based on its ability to predict drugs that have already been reported in the literature for a new symptom or phenotype.

We performed a regular 10-fold cross-validation and a biased 7-fold cross-validation evaluation in KG A. The regular 10-fold cross-validation obtained an average AUPRC of 0.98 and an average AUROC of 0.98. For the biased 7-fold cross-validation, in each fold four symptoms (along with the edges connected to those symptoms) were removed from the training set. The performance of the model was then tested on the removed symptoms. In this case, the average AUPRC was 0.75 and the AUROC was 0.8.
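The biased cross-validation can be sketched as follows. How symptoms were assigned to folds is not stated in the text, so taking consecutive groups of four is an assumption, as are the function name and the representation of edges as `(source, target)` pairs.

```python
def symptom_holdout_folds(edges, symptoms, per_fold=4):
    """Build folds in which all edges touching the held-out symptoms are test edges."""
    symptoms = list(symptoms)
    folds = []
    for start in range(0, len(symptoms), per_fold):
        held_out = set(symptoms[start:start + per_fold])
        # Every edge connected to a held-out symptom leaves the training set.
        test = [e for e in edges if e[0] in held_out or e[1] in held_out]
        train = [e for e in edges if e[0] not in held_out and e[1] not in held_out]
        folds.append({"held_out": held_out, "train": train, "test": test})
    return folds
```

Because the held-out symptoms have no training edges at all, this setting is considerably harder than the regular cross-validation, which is consistent with the lower scores reported above.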

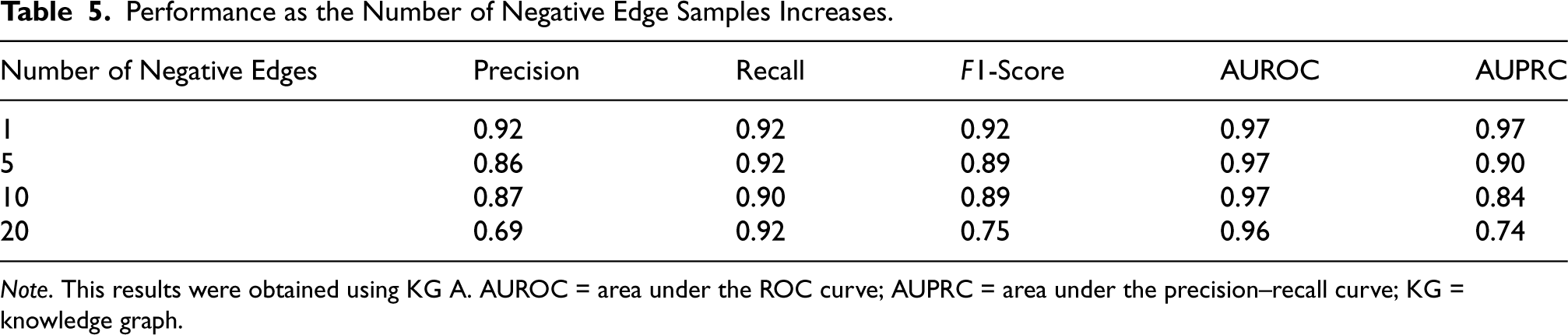

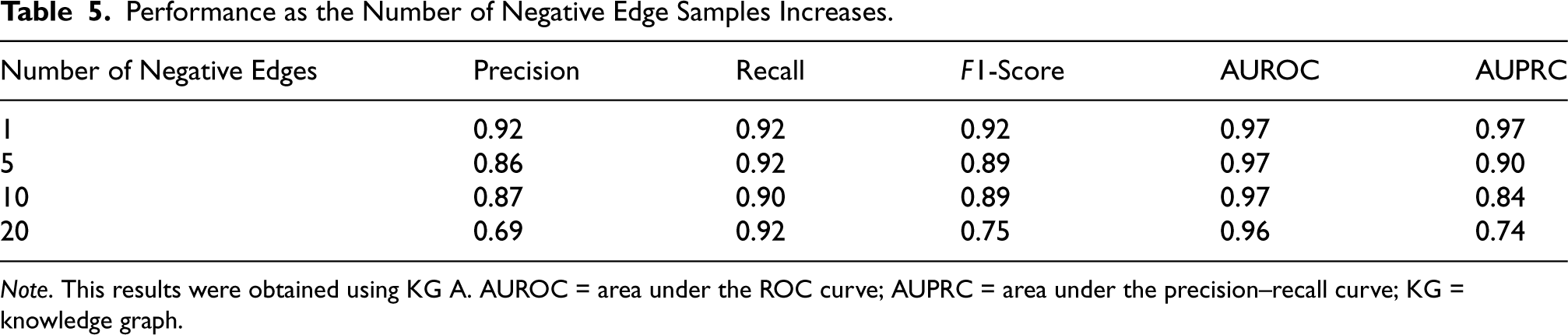

The performance of the pipeline was evaluated for a different number of negative edges. This evaluation was only performed in KG A due to the large increase in the number of edges in the evaluation tests (and the consequential increase in the computational time). The results can be seen in Table 5. It is seen that as the number of negative edges increases, the PR curve is affected while the ROC curve remains mostly intact, a result that has been previously reported (Junuthula et al., 2016).
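A short worked example shows why the two curves react differently. At a fixed operating point, the true-positive rate and false-positive rate (the axes of the ROC curve) do not depend on how many negatives are sampled, whereas precision does. The rates 0.9 and 0.05 below are illustrative values chosen for this sketch, not results from this study.

```python
def precision_at(tpr, fpr, negatives_per_positive):
    """Precision implied by fixed TPR/FPR at a given negative-to-positive ratio.

    With P positives and N = r * P negatives:
    precision = TPR * P / (TPR * P + FPR * N) = TPR / (TPR + FPR * r).
    """
    return tpr / (tpr + fpr * negatives_per_positive)


for ratio in (1, 10, 100):
    print(ratio, round(precision_at(0.9, 0.05, ratio), 3))
```

With these illustrative rates, precision falls from about 0.95 at one negative per positive to about 0.64 at ten and about 0.15 at one hundred, while the ROC point stays at (0.05, 0.9).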

Performance as the Number of Negative Edge Samples Increases.

Note. These results were obtained using KG A. AUROC = area under the ROC curve; AUPRC = area under the precision–recall curve; KG = knowledge graph.

Finally, the performance of rd-explainer (tested in KG A) was also compared to other state-of-the-art methods, including edge2vec, GraphSAGE, ComplEx, DistMult, and TransE. The results can be seen in Table 6 and show that rd-explainer outperformed all other methods on the evaluation metrics measured.

Prediction Performance Metrics Comparing rd-explainer with Other State-of-the-Art Graph Embedding Methods Including edge2vec, GraphSAGE, ComplEx, DistMult, and TransE.

Note. The best results are highlighted in bold. In the headings, P stands for precision, R for Recall, and

We also evaluated the prediction performance based on the capacity of our method to discover marketed drugs already reported to be used for a new phenotype. First, we listed for each of the seven selected phenotypes the three drugs with the highest scores. Because the objective is to find new indications for drugs; if any of the reported drugs already appears in the graph as a treatment for the targeted symptom, this drug will be skipped and the next one with the highest score will be selected. For example, if aprindine is selected as the drug with the highest score to treat arrhythmia, but the relation “aprindine is a substance that treats arrhythmia” is already present in our graph, aprindine will not be reported as a possible drug candidate.

For each possible drug candidate, a literature search was performed to find preliminary evidence that the drug had already been used to treat the symptom. If the drug was contraindicated to treat the symptom (or if it could cause the symptom), this was also annotated. The results for each drug candidate obtained using KG A can be found in Table S9 in the Supplemental materials. Additionally, Table 7 summarizes the percentage of drugs with supporting evidence, contraindication evidence, or no evidence at all. We found that only about a fifth of drug candidates had supporting evidence in the literature, and that most candidates (65.43%) did not have any evidence at all. A small percentage of them are actually contraindicated to treat the targeted symptom/phenotype. Finally, the amount of supporting/contraindicating evidence is summarized in Table S7.

Percentage of Drugs Containing Supporting Evidence, Contraindication Evidence, or No Evidence at All for Both graphs A and B.

KG = knowledge graph.

The same approach was followed for KG B. Information regarding the drug candidates for each symptom (as well as supporting evidence) can also be found in Table S10 in the Supplemental materials. Additionally, the percentage of drugs with supporting evidence, contraindication evidence, or no evidence at all can be seen in Table 7. In this case, the number of drug candidates with evidence has increased relative to the drug candidates obtained with KG A (27% in B vs. 21% in A), and the number of drug candidates without evidence has been reduced (58% in B vs. 65% in A). The number of drug candidates with contraindications remains almost the same (13% in A vs. 14% in B).

Evaluating explanations is a difficult task, and many different benchmarks have recently appeared for this purpose (Markus et al., 2021). In this work, we followed two different approaches to evaluate the explanations: a more subjective one, where each explanation was evaluated against our own biological knowledge; and a more objective one, where a manual literature search and curation was performed to check whether the suggested explanation had already been reported. We selected seven phenotypes (muscular dystrophy, respiratory insufficiency, arrhythmia, dilated cardiomyopathy, congestive heart failure, progressive muscle weakness, and cognitive impairment) and their top three predictions; explanations were then produced from the models trained on both KGs. The selection of these phenotypes aimed to cover the diverse systems affected by the disease. Each explanation was analyzed and, if possible, compared with the one found in the literature.

Explanations were classified as complete or incomplete. Complete explanations are those that show a connection (path) between the drug candidate and the targeted symptom/phenotype (Figure S3 in the Supplemental materials). They are considered complete because they allow for an easy human-understandable interpretation. In contrast, incomplete explanations are those made up of two separate clusters (one for the drug and one for the phenotype) (Figure S4) or of a single cluster in which either the drug or the phenotype is missing (Figure S5).
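The distinction between complete and incomplete explanations amounts to a reachability check on the explanation subgraph. The following sketch shows one way such a check could be written; it assumes the explanation is available as a list of node pairs and treats edges as undirected, and the function names and node labels are hypothetical.

```python
from collections import deque
from typing import Dict, Iterable, Set, Tuple

Edge = Tuple[str, str]


def build_adjacency(edges: Iterable[Edge]) -> Dict[str, Set[str]]:
    """Build an undirected adjacency map from a list of edges."""
    adjacency: Dict[str, Set[str]] = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)
    return adjacency


def classify_explanation(edges: Iterable[Edge], drug: str, phenotype: str) -> str:
    """Return "complete" if a path links drug and phenotype, else "incomplete"."""
    adjacency = build_adjacency(edges)
    # Single cluster in which the drug or the phenotype is missing.
    if drug not in adjacency or phenotype not in adjacency:
        return "incomplete"
    # Breadth-first search from the drug node.
    seen = {drug}
    queue = deque([drug])
    while queue:
        node = queue.popleft()
        if node == phenotype:
            return "complete"
        for neighbor in adjacency[node] - seen:
            seen.add(neighbor)
            queue.append(neighbor)
    # Drug and phenotype sit in two separate clusters.
    return "incomplete"


# Hypothetical explanation subgraphs (node names are placeholders).
path_like = [("drug_A", "gene_B"), ("gene_B", "gene_C"), ("gene_C", "phenotype_D")]
two_clusters = [("drug_A", "gene_B"), ("gene_C", "phenotype_D")]
missing_node = [("drug_A", "gene_B"), ("gene_B", "gene_C")]

print(classify_explanation(path_like, "drug_A", "phenotype_D"))
print(classify_explanation(two_clusters, "drug_A", "phenotype_D"))
print(classify_explanation(missing_node, "drug_A", "phenotype_D"))
```

Only the first subgraph contains a path from the drug to the phenotype; the other two correspond to the two incomplete cases described above.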

The global analysis of the completeness of the generated explanations can be seen in Table 8 (number of complete and incomplete explanations for each type of supporting evidence) and Table 9 (amount of supporting evidence for each type of explanation). This analysis took into account the explanations from both graphs. As can be seen in Table 8, in total the same number of complete and incomplete explanations were obtained (21 each). However, looking at each category separately shows that, when there is evidence, GNNExplainer tends to produce complete explanations (68%); conversely, when there is no supporting evidence or when the drug is contraindicated, the resulting explanation is usually incomplete (62% and 70%, respectively). As can be seen in Table 9, when a complete explanation is created, almost two-thirds of the time it has supporting evidence (62%), whereas when the explanation is incomplete, it has supporting evidence only about one-quarter of the time (28%).

Number and Percentage of Complete and Incomplete Explanations in Each Evidence Type.

Number and Percentage of Explanations with No Evidence, with Supporting Evidence, and with Contraindications in Each Type of Explanation.

An additional analysis was performed, this time considering each graph separately. This can be seen in Table 10 and Table S7 in the Supplemental materials. There is a clear difference between the explanations obtained in graphs A and B. First, KG A explanations are more likely to be complete (72% in A vs. 28% in B), while KG B produces more incomplete explanations (72% in B vs. 28% in A) (Table 10).

Number and Percentage of Complete and Incomplete Explanations in Each Evidence Type and in Each Graph.

KG = knowledge graph.

An example of an explanation produced by rd-explainer can be seen in Figure 5. This explanation is classified as complete and suggests why doxorubicin should be considered to treat respiratory insufficiency: it is a drug that targets CHRM1, a gene that interacts with DAG1, which causes the disease. Throughout this section, explanations have been classified as complete or incomplete. However, being complete does not make an explanation a good one. For example, an explanation of the type “Drug A targets Gene B, Gene B interacts with Gene C, and Gene C causes Disease D” can make biological sense, as in Figure 5. On the other hand, an explanation of the type “Drug A treats Disease B, Disease B is caused by Gene C, Gene C causes Disease D” does not make full biological sense (Drug A could treat Disease B by targeting a gene other than Gene C, in which case the same treatment could not be applied to Disease D). In fact, this is what is observed in Figure S6 in the Supplemental materials, where disopyramide is said to treat muscular dystrophy through the following explanation: disopyramide treats urinary incontinence, alteration of the DMD gene can cause urinary incontinence, and the DMD gene has muscular dystrophy as a phenotype. In this case, a person may have urinary incontinence for several reasons, and disopyramide may be able to treat one of them, but not necessarily the one caused by alteration of the DMD gene.

Explanation of drug candidate doxorubicin as possible treatment for respiratory insufficiency. Classified as complete explanation.

The objective evaluation is undoubtedly less biased. Nonetheless, subjective evaluations are also valuable, since some drug–phenotype interactions are not fully understood (especially when a drug produces an undesired side effect) and so are not well established in the literature. In such cases, analyzing the proposed explanations using expert domain knowledge might shed light on the interaction and help to formulate a hypothesis that can be tested in a wet laboratory.

After applying the objective evaluation, only one explanation (levosimendan–progressive muscle weakness) was found to have supporting evidence (levosimendan treats the disease by increasing the affinity of troponin C for calcium), and the explanations for two links (doxorubicin–respiratory insufficiency and sorafenib–respiratory insufficiency) contained unclear interactions (both were contraindications). The results of this evaluation are summarized in Table S6 in the Supplemental materials. Regarding the subjective evaluation, 17 out of 21 explanations were found to be good explanations (they were in accordance with biological reasoning), such as the one for doxorubicin–respiratory insufficiency in Figure 5, and 4 were considered bad explanations (they did not make biological sense): the previously mentioned disopyramide–muscular dystrophy in Figure S6 and the explanations in Figures S7 to S9.

To show that this method can be extended to other rare diseases, it was also tested on Alzheimer’s disease (AD) and amyotrophic lateral sclerosis (ALS) type 1. Although Alzheimer’s disease is not a rare disease, it has several subtypes with very low prevalence. Therefore, for the Alzheimer’s knowledge graph we used as seeds the general disease (MONDO:0004975) and all its causal genes that were present in Monarch (APP [HGNC:620], APOE [HGNC:613], PSEN1 [HGNC:9501], and PSEN2 [HGNC:9509]). The result is a knowledge graph that specializes in Alzheimer’s disease and that we can use to focus on the symptoms of the rare types of the disease. For the ALS type 1 knowledge graph we used as seeds the disease (MONDO:0007103) and its causal gene according to Monarch (SOD1 [HGNC:11179]). Table 11 shows the GNN performance for both diseases, again with a high AUROC and AUPRC.

Table Showing Different Performance Metrics Tested in AD and ALS.

AD = Alzheimer’s disease; ALS = amyotrophic lateral sclerosis; AUROC = area under the ROC curve; AUPRC = area under the precision–recall curve.

The same approach that was followed for DMD was followed for both diseases: for each symptom we analyzed the three drug candidates with the highest score (these drug candidates should not appear in the knowledge graph); then a literature search was performed to check if the drug candidates had been reported by the scientific community. The complete list of phenotypes as well as drug candidates and scores for each phenotype can be found in Tables S11 and S12 in the Supplemental materials. These tables also contain whether drug candidates had supporting evidence in the literature.

Among the predictions, it is worth mentioning pexidartinib, a drug candidate that was proposed by the model to treat memory impairment in AD and that is currently undergoing a clinical trial as a drug that could potentially be beneficial for the disease (Ancidoni et al., 2021).

We integrated disease-specific knowledge graphs with GNNs and XAI for interpretable drug repurposing. We found that state-of-the-art XAI methods based on GNNs support in silico predictions of candidate repurposable drugs for rare diseases by providing interpretable reasoning paths that suggest a mechanism of action. We developed rd-explainer, a method for performing computational drug repurposing specifically for rare diseases. It uses cutting-edge deep learning methods such as edge2vec and GNNs to provide drug–symptom/phenotype predictions with high performance scores, and a modified version of GNNExplainer to provide explanations as semantic graphs that make the results interpretable. We also found that these explanations differ in how useful they are for generating testable hypotheses: paths linking drug and phenotype nodes are more understandable than isolated clusters because they resemble human reasoning; adding semantics to relations adds biological meaning that helps to formulate a hypothesis and design the experiment in the laboratory; and removing relations that do not contribute to the learning process yields clearer semantic graphs. We tested the generalizability of our method by running it on two additional diseases: ALS and AD. ALS type 1 was selected to test the pipeline on another monogenic disease with less available information. AD was selected because it is a common disease with rare subtypes that can be caused by several genes, which allowed us to test the pipeline on a polygenic and multifactorial disease. Our results indicate that the pipeline performs well on both mono- and polygenic diseases. rd-explainer is a researcher-centered drug repurposing method for rare disease drug research, and its main advantage is its interpretability: the main motivation of this study was to provide explanations underlying AI predictions. 
rd-explainer provides explanations as semantic graphs, a type of explanation that resembles human reasoning. This is in line with current research on user-centric XAI (Wang et al., 2023). This not only has high value in supporting rare disease researchers to formulate evidence-based hypotheses that are testable in the wet laboratory (reducing cost, time, and risk), but also helps to gain new disease knowledge and speed up robust drug research. Our approach combined state-of-the-art AI and XAI methods used in drug repurposing: knowledge graphs, which naturally represent known associations among biological entities with expressive semantics and supporting curated evidence; graph learning; and graph-based XAI methods. The advance for the field of rare diseases is that we provide interpretable predictions through a pipeline that seamlessly integrates a graph learning model with an explainer, combining results on both model performance and explanation accuracy to mitigate the black-box problem and promote XAI adoption in the field (Borile et al., 2023a). The BioKnowledge Reviewer tool provides rare disease-specific knowledge graphs for disease biology data collection using the Monarch knowledge base API (Queralt-Rosinach et al., 2020) and, thus, disease context. We argue that a tool or approach that can collect associations from a virtual, federated knowledge graph via APIs could extend this feature to any biomedical association, such as for drug data collection, and improve data- and knowledge-driven research. Another advantage of the rd-explainer method is its modular implementation: different parts of the workflow (data, features, GNN, and explanations) can be modified independently and the pipeline can still be run. For example, to use another node feature embedding algorithm instead of edge2vec, one can modify just that component of the pipeline and still run the rest of the workflow.

Our results showed that rd-explainer is a highly performant graph ML-based drug repurposing method. Our method builds rare disease-specific models trained on a newly generated KG for the disease of focus, enriched with data for the prediction task. Compared with state-of-the-art AI-based drug repurposing approaches, rd-explainer performed best on the metrics evaluated. Throughout this paper, we have compared rd-explainer with various AI methods that employ different techniques for their predictions, including GNNs such as GraphSAGE, random-walk embeddings such as edge2vec, and geometric embeddings using models like ComplEX, DistMult, and TransE. By combining random-walk models (edge2vec) with GNNs (GraphSAGE), rd-explainer achieves superior results in the link prediction task. In particular, edge2vec outperforms GraphSAGE, suggesting that the performance of rd-explainer can be attributed primarily to the random-walk model, with the GNN providing an additional performance boost. This level of performance rivals other models developed for drug repurposing, such as deepDR (AUROC

Our new predictions are plausible drug candidates, since they are consistent with recent findings in the literature. We showed that rd-explainer can provide new and interesting drug–phenotype predictions. For example, sunitinib, one of the drugs that appears to be a good candidate to treat disease symptoms according to both models (using KG A and KG B), has been considered a good drug candidate for treating DMD and in 2019 appeared to be in preclinical trials (Vitiello et al., 2019). This drug belongs to the group of tyrosine kinase inhibitors, and several other drugs in this category have been proposed by our model (fedratinib, sorafenib, bosutinib, ruxolitinib, and midostaurin). Similarly, mezlocillin, an antibiotic used to treat Gram-negative bacterial infections, has also been proposed by our model, while gentamicin, another Gram-negative antibiotic, was in clinical trials to treat DMD in 2019 (Vitiello et al., 2019). Thus, even when the model does not produce drug candidates that are themselves undergoing a clinical trial or treating the disease, it produces drug candidates that participate in similar biological processes (i.e., tyrosine kinase inhibitors, Gram-negative antibiotics).

Importantly, explanations for hypothesis generation may enable a move toward a lab-in-the-loop framework. Regarding the interpretability and utility of the explanations, only one of the 21 explanations examined was supported by evidence in the literature. Nonetheless, this does not mean that the explanations are useless. A good example is the explanation for the methylprednisolone–muscular dystrophy link (Figure S10 in the Supplemental materials). The explanation is simple: “Methylprednisolone treats DMD, DMD has Muscular Dystrophy as a phenotype; thus, methylprednisolone can treat Muscular Dystrophy.” In this case, the explanation does not contain supporting evidence but still makes sense. In the literature, methylprednisolone is said to be a good candidate for the treatment of muscular dystrophies because it interacts with the glucocorticoid receptor, which leads to the activation of anti-inflammatory signaling and the inhibition of proinflammatory signaling (Quattrocelli et al., 2021). The explanation proposed by rd-explainer does not provide the underlying causative mechanism that relates methylprednisolone and muscular dystrophy, but a researcher can still see that muscular dystrophies and methylprednisolone are interrelated. This illustrates how, even though an explanation may lack comprehensive supporting evidence, it can still provide valuable directional cues for further, more precise investigation. Another important aspect is that rare disease findings from the lab can be fed back into the knowledge graph to update and improve the disease-specific AI model, supporting continual learning and enabling precise experimental design. In addition, this synergy fosters collaboration between computational and wet lab researchers to increase efficiency in disease-specific drug research (Queralt-Rosinach et al., 2020).

Finally, we found that knowledge graph topology has an impact on explainability. KG A usually produces more complete explanations, while in KG B incomplete explanations are more numerous. This could be due to the difference in the graph structure itself: graph A has a smaller clustering coefficient than graph B (see Section 4.1), which leads to more edges being present in the subgraphs produced by GNNExplainer. Because the 15 edges with the highest scores are selected, it is more likely to find a path between drug and phenotype in KG A than in KG B. Another interesting difference is that explanations generated with KG A tend to have a higher “sensitivity,” while explanations generated with KG B tend to have a higher “specificity.” When an incomplete explanation is produced using graph A, it is very unlikely that the explanation will have supporting evidence (none of the incomplete explanations in KG A had supporting evidence). Similarly, when a complete explanation is produced in KG B, it is very likely that the explanation has supporting or contraindication evidence (67% of complete explanations had supporting evidence and 33% had contraindication evidence). For this reason, if one remains skeptical about the explanations themselves, the completeness of an explanation might be used as a filter or validation step. For example, an incomplete explanation obtained with KG A is unlikely to be trustworthy, whereas a complete explanation obtained with KG B suggests that there is some interaction between the drug and the phenotype. 
Our findings are aligned with recent studies in which the influence of clustering coefficient and topology has been observed on embedding-based predictions (Gupta & Sardana, 2015; Robledo et al., 2022); here we extend these observations to their impact on graph-based explanations.
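The effect of keeping only a fixed number of the highest-scoring edges can be illustrated with a small sketch. It assumes the explainer's output is available as a mapping from edges to importance scores; the function name `top_k_edges`, the node names, and the scores are hypothetical, and the default `k=15` simply mirrors the cutoff mentioned in the text.

```python
from typing import Dict, List, Tuple

Edge = Tuple[str, str]


def top_k_edges(edge_mask: Dict[Edge, float], k: int = 15) -> List[Edge]:
    """Keep the k edges with the highest importance scores, best first."""
    ranked = sorted(edge_mask, key=edge_mask.get, reverse=True)
    return ranked[:k]


# Hypothetical edge importance scores for one drug-phenotype prediction.
mask = {
    ("drug", "gene_1"): 0.9,
    ("gene_1", "gene_2"): 0.8,
    ("drug", "other_gene"): 0.6,
    ("gene_2", "phenotype"): 0.3,
}

kept_small = top_k_edges(mask, k=3)
kept_large = top_k_edges(mask, k=4)
print(kept_small)
print(kept_large)
```

With the smaller cutoff, the low-scoring edge that reaches the phenotype is dropped and the explanation becomes incomplete, which is one way a fixed cutoff can interact with graph structure.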

Limitations and Future Directions

An important limitation of this study is that we only utilized one XAI method, which is not model agnostic. XAI is a hot research topic in AI, where new and more sophisticated methods are frequently published (Saranya & Subhashini, 2023). It would be good to extend our study to other XAI types to check how applicable they are given the unique characteristics of rare diseases, including limited data, lack of knowledge, and lack of a gold drug–phenotype standard. Additionally, the data used in the pipeline is from 2021, so updating the pipeline on recent data in the future could further strengthen its applicability. Another important limitation is the lack of standard benchmarking and metrics to systematically evaluate explainers and explanations. Currently, some initial efforts are underway in this direction (Agarwal et al., 2022, 2023; Borile et al., 2023b; Daza et al., 2024; Fel et al., 2022; Yuan et al., 2023), but there is still a lack of a common standard (Alam et al., 2023). Another limitation is the known reproducibility issue of our explainer (Agarwal et al., 2023), which may imply that the explanations are different each time it is used, and may reduce the confidence and reliance on the explanations. We did several experiments to try and bring consistency to explanations; for example, executing GNNExplainer several times and using the mean mask as the final mask or increasing the number of epochs (results not shown). However, this did not solve the issue. This experience makes us strongly recommend working on the standard evaluation of explanations by the XAI community to foster trust in the application of AI in bioinformatics and biomedicine. Additionally, many times the explanation would consist of a subgraph in which the two targeted nodes would be disconnected from each other, which might bring confusion and could be seen as a “bad” explanation. 
Therefore, we recommend work toward methods that prioritize or focus on providing just connecting paths, such as metapath-based ones (Fu et al., 2020; Himmelstein et al., 2017; Jiménez et al., 2024; Mayers et al., 2022; Noori et al., 2023), and toward improving path visualization for user interpretation (Himmelstein et al., 2022; Wang et al., 2021, 2023). In addition, while we focused primarily on integrating a graph ML model with an explainer, a clear line of research will be to work on the interpretability and reproducibility of explanations in the context of the drug repurposing task. The inconsistency of the explanations could be affected by the size and complexity of our data. It could make the users of this pipeline skeptical about its explanations and, for this reason, more investigation should be conducted on this element of the pipeline to make it more robust. To improve this, ontologies could be incorporated into knowledge graphs to increase the quality and interpretability of our data. Ontologies help to standardize data according to the meaning shared by a community, thus enhancing interpretability by domain users. Importantly, the formal description of knowledge embedded in ontologies can be used to verify data consistency and to infer implicit knowledge in the graph (Alshahrani et al., 2017). Nonetheless, knowledge graph and ontology changes pose a great interoperability challenge for the community, which must keep downstream bioinformatics and data science workflows and analyses up to date (Hegde et al., 2024; Unni et al., 2023). Finally, our work could be made more “FAIR” (Wilkinson et al., 2016), that is, understandable not only by humans but also by machines, by providing our drug repurposing KG for DMD from a FAIR data point (da Silva Santos et al., 2023) and rd-explainer from WorkflowHub (da Silva et al., 2020).
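The mask-averaging experiment mentioned in this section (running the explainer several times and using the mean mask as the final mask) can be sketched as follows. This is only an illustration of the averaging step, assuming each run returns one importance score per edge in the same edge order; the function name `mean_mask` and the example values are hypothetical, and no explainer is actually called.

```python
from statistics import mean
from typing import List, Sequence


def mean_mask(masks: Sequence[Sequence[float]]) -> List[float]:
    """Average edge importance masks from several explainer runs, edge by edge."""
    if not masks:
        raise ValueError("at least one mask is required")
    length = len(masks[0])
    if any(len(mask) != length for mask in masks):
        raise ValueError("all masks must score the same edges")
    # zip(*masks) groups the scores that each run assigned to the same edge.
    return [mean(edge_scores) for edge_scores in zip(*masks)]


# Hypothetical masks from three runs over the same three edges.
runs = [
    [0.9, 0.1, 0.5],
    [0.7, 0.3, 0.5],
    [0.8, 0.2, 0.5],
]

averaged = mean_mask(runs)
print(averaged)
```

Averaging smooths run-to-run variation in individual scores, although, as noted above, it did not resolve the inconsistency in our experiments.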

Conclusion

We present an application of XAI in state-of-the-art computational drug repurposing for rare diseases. Our knowledge graph-based deep learning method provides human-understandable explanations for the drug–symptom/phenotype link prediction task, and we showed that graph XAI can be applied to rare diseases. The rd-explainer method is an innovative approach that makes the most of the available disease-specific knowledge and generates context-aware predictions with explanations. Our GNN-based method is highly performant, and a share of its drug predictions is supported by evidence in the literature. The key contribution of our study is that our method provides explanations in the form of semantic graphs that can help rare disease researchers make informed decisions about which candidate drugs to validate experimentally. However, we found that data topology affects explanations, highlighting the importance of investigating further how best to represent graphical knowledge for robust model performance and explanation accuracy. rd-explainer is generalizable to other rare diseases and provides computer-aided guidance for biologists to accelerate translational research. Finally, future research should advance the standard mechanisms needed to evaluate explainability, foster adoption by domain experts, and mitigate the black-box problem of trust in AI, especially in biomedicine, where decisions can have an important impact on people’s lives.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the European Projects: BIND https://bindassociation.org/ Grant no. 847826 and EJPRD https://www.ejprarediseases.org/ Grant no. 825575.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.