Abstract

The swift progression of artificial intelligence, along with the rising demand for in-home assistance for individuals facing a loss of autonomy, has driven a significant increase in research within the domain of ambient assisted living (AAL). A key challenge in developing assistive technologies in an AAL context is the automatic recognition of the user’s ongoing activity. Most existing Human Activity Recognition (HAR) approaches operate at a level of abstraction (coarse granularity) that is insufficient for developing efficient assistive technologies: the majority focus on identifying broad categories of activities, such as eating or sleeping. While this level of identification is sufficient for monitoring general behavior, it does not support meaningful, real-time, actionable assistance. In this paper, we propose a comparative study of two novel algorithmic approaches for hand gesture recognition, intended to serve as core components of a fine-grained activity recognition model. To this end, we defined 13 atomic hand gestures commonly used in cooking activities. The first model we introduce uses inertial data collected from a standard wristband equipped with a triaxial accelerometer and gyroscope, and applies machine learning techniques to analyze it. The second model is based on a less conventional approach, employing photoplethysmography (PPG) sensors, which are rarely used for activity recognition. We detail the design and implementation of both approaches and the experiments conducted. Finally, we present a comparative analysis of the results, showing the potential of such approaches for AAL.

Keywords

Introduction

Ambient assisted living (AAL) refers to a vast range of applications, infused with artificial intelligence (AI), designed to support individuals with impairments in various activities of daily living (ADLs) (Jovanovic et al., 2022). These systems employ diverse AI approaches to learn about their users and autonomously make decisions. AAL technologies primarily aim to enhance safety, comfort, and offer real-time assistance in daily activities (Purohit et al., 2022). A conventional solution is the implementation of fully automated systems to take over user tasks. However, this can potentially reduce the individual’s autonomy, motivation, and interest, and may even accelerate cognitive decline (Bouchard, 2017). An alternative, more user-centric approach is an assistive system that functions like a guardian angel, dynamically monitoring and supporting individuals with loss of autonomy and cognitive disorders, rather than completely taking over their activities. This system is designed to identify erroneous or risky behaviors and provide appropriate guidance, such as advice, suggestions, or reminders, enabling users to independently manage situations (Roberge et al., 2022). In emergencies, it can intervene directly, such as disconnecting a stove’s power. The primary goal is to deliver on-demand assistance based on real-time sensor data, thereby maintaining cognitive stimulation and personal dignity (Facchinetti et al., 2023).

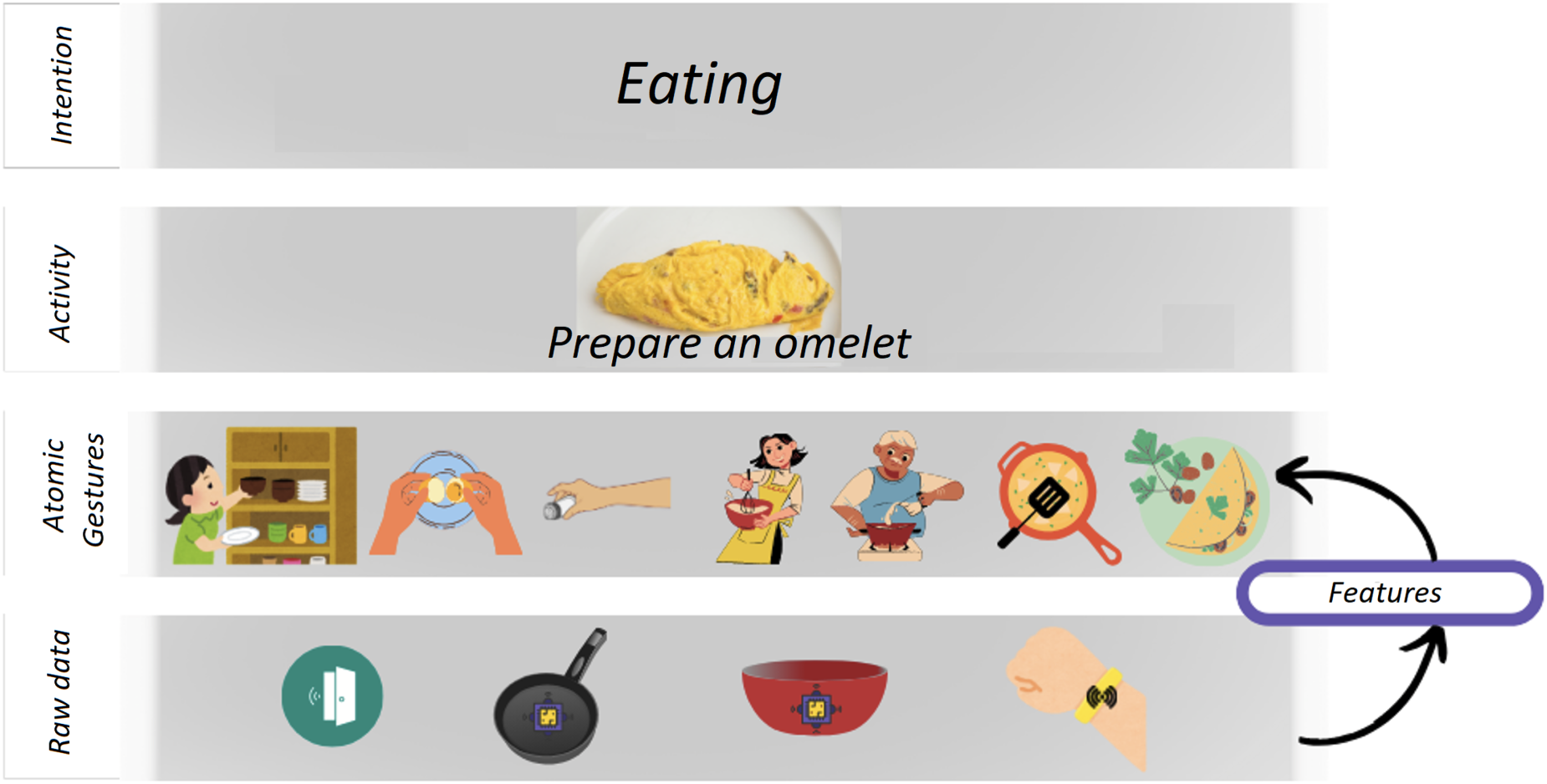

A key challenge in developing assistive technologies in an AAL context is the automatic recognition of the user’s ongoing activity. Most current research focuses on monitoring high-level activities, such as eating or sleeping. Figure 1 shows the different layers involved in a fine-grained Human Activity Recognition (HAR) model. The bottom layer consists of the sensors, which can be wearable sensors, distributed sensors (such as RFID or infrared sensors), or cameras. These sensors produce raw data, from which significant features are extracted. In our fine-grained setting, these features are then used to infer atomic hand gestures, such as whip or flip. These gestures are the basic steps that make up recipes or cooking activities, and a specific sequence of atomic gestures forms a cooking activity, such as “prepare an omelet” in this example. Finally, the user has an intention or goal in carrying out this activity; here, the intention is clear: they want to eat. Most research follows a similar model with only three layers, inferring the high-level activity directly from raw sensor data collected over an extended period (Wang and Cook, 2022). This kind of high-level recognition suffices for monitoring the general behavior and daily routines of users in order to track the evolution of their condition. However, for individuals with cognitive impairments, such as those with Alzheimer’s disease, effective real-time assistance requires finer-grained recognition of specific activities and their component steps (Yang et al., 2018). For instance, an assistive system that helps a person cook pasta marinara needs to know the exact activity (not just cooking, but cooking pasta marinara), the current step (the person is about to boil water), and, finally, when the user needs help.
While vision-based algorithms using cameras (Beddiar et al., 2020) could provide such granularity, privacy concerns, particularly among older adults, limit their use. Consequently, there is a growing interest in fine-grained recognition using wearable sensors (Diraco et al., 2023).

Human activity recognition process by layers.

Existing research on fine-grained recognition often relies on common technologies, such as accelerometers and gyroscopes in wrist-worn devices. In our recent work (Roberge et al., 2022), we followed this approach and proposed a new assistive activity recognition system to help people perform detailed cooking activities. Maintaining the ability to prepare meals independently is crucial for individuals with cognitive impairments and their caregivers, as it is a key instrumental activity of daily living (IADL). Meal preparation not only fulfills the basic need to feed oneself but also boosts self-esteem and upholds social roles. Nonetheless, difficulties in performing this task, coupled with associated safety risks such as burns and fire hazards, pose significant barriers to the autonomy of these individuals and their ability to remain living at home (Yaddaden et al., 2020). Our research therefore focuses on assistive technologies for cooking in the kitchen, a significant part of older adults’ lives. For this purpose, we decomposed cooking activities into 13 atomic gestures, such as mixing, whipping, and pouring. We then used a wrist-worn device with accelerometers and gyroscopes in a first recognition model to identify these atomic gestures. As a first contribution, we propose an algorithmic approach specifically designed to serve as the core component of a fine-grained activity recognition model enabling such assistive technologies. This approach analyzes the movements of hand gestures captured by wearable sensors, whose precise patterns, taken together, correspond to fine-grained activities. We constructed a fully labeled dataset as a basis for learning atomic gestures and conducted experiments with 21 participants, yielding promising results. Despite these positive outcomes, some limitations remained.
For example, we had to run our algorithm with two sliding windows of different lengths: one second and three seconds. Specifically, we executed two instances of a random forest (RF) classifier: the first processed one-second chunks, while the second processed three-second chunks. The rationale was that relying solely on a three-second window caused some one-second gestures to be misclassified, particularly similar gestures such as cut and whip. Note that this two-window method was used only in our initial experiment with accelerometers and gyroscopes. In the subsequent experiment, which combined accelerometers and photoplethysmography (PPG) sensors, a single instance of the algorithm with a three-second window was sufficient: the additional sensors enabled accurate recognition of all gestures.
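The two-window scheme can be sketched as follows. This is a minimal illustration, not the authors’ implementation: the 50 Hz sampling rate, the one-second step between windows, and the function name `sliding_windows` are hypothetical, and each window stream would feed its own RF instance.

```python
from typing import Iterator, List, Sequence, Tuple

def sliding_windows(signal: Sequence[float], rate_hz: int, window_s: float,
                    step_s: float) -> Iterator[Tuple[int, List[float]]]:
    """Yield (start_index, samples) for fixed-length windows over a 1-D signal."""
    size = int(round(window_s * rate_hz))
    step = int(round(step_s * rate_hz))
    if size <= 0 or step <= 0:
        raise ValueError("window and step must each span at least one sample")
    for start in range(0, len(signal) - size + 1, step):
        yield start, list(signal[start:start + size])

RATE_HZ = 50  # hypothetical sampling rate, not taken from the study
signal = [float(i) for i in range(10 * RATE_HZ)]  # 10 s of dummy samples

# Stream for the first classifier instance: one-second windows.
short_windows = list(sliding_windows(signal, RATE_HZ, window_s=1.0, step_s=1.0))
# Stream for the second classifier instance: three-second windows.
long_windows = list(sliding_windows(signal, RATE_HZ, window_s=3.0, step_s=1.0))
print(len(short_windows), len(long_windows))  # 10 8
```

Here the three-second windows overlap because the step is shorter than the window; whether the original system overlapped its windows is not stated.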

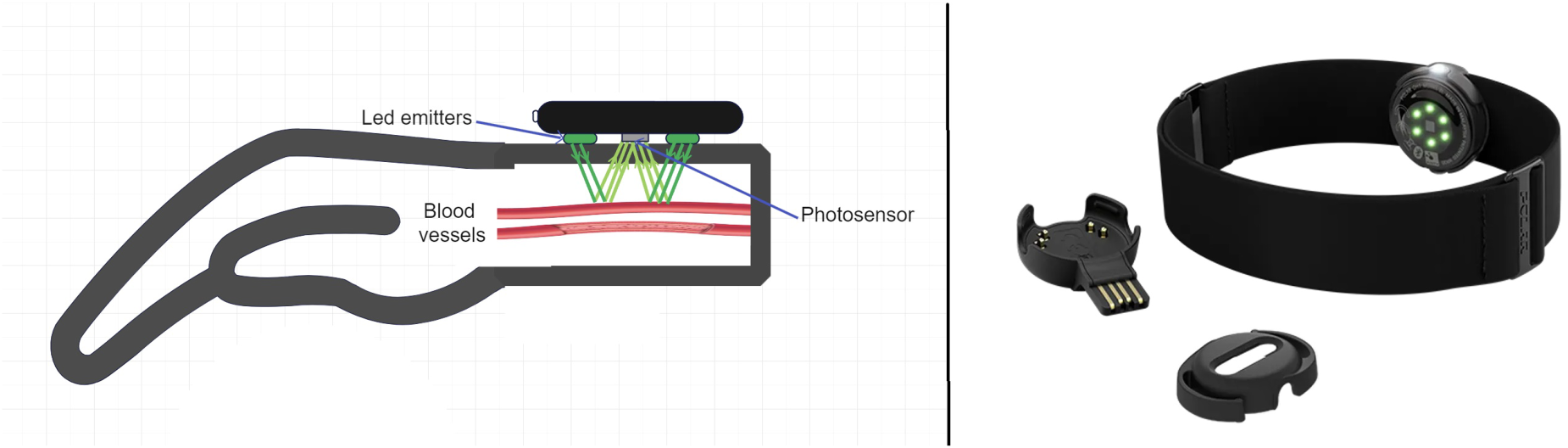

In recent years, PPG sensors have gained attention as a non-invasive and convenient means of capturing vital cardiovascular information (Ebrahimi and Gosselin, 2023). By measuring variations in light absorption due to blood flow, PPG enables the extraction of valuable physiological signals such as heart rate and blood volume changes. These sensors have been widely used for heart rate monitoring, blood pressure estimation, sleep monitoring, and research in human–computer interaction (HCI) (Castaneda et al., 2018). However, this technology has rarely been applied to activity recognition, especially in smart home applications. Common practice is to filter the PPG signal recorded at the wrist to remove noise, mainly caused by hand and arm movements, so that physiological monitoring remains accurate. We hypothesized that this ‘noise’, caused by hand movements, might be precisely what is needed to characterize hand gestures. Testing this hypothesis is the focus of our second contribution presented here. The operation of a PPG sensor is schematically depicted in Figure 2.

Schematic showing the operation of a photoplethysmography (PPG) sensor.

This paper focuses on leveraging accelerometers, gyroscopes, and PPG for fine-grained HAR, combining them with machine learning techniques to build a recognition approach. Our objective is to demonstrate the potential of these sensors in combination, especially PPG, which has rarely been used in this context, for recognizing specific activities such as different types of kitchen gestures. We therefore investigated two research questions.

The first question we address (RQ1) is: can accelerometers, gyroscopes, and PPG sensors be used with enough precision to recognize atomic hand gestures in the context of fine-grained activity recognition?

The second question (RQ2) is: can accelerometers, gyroscopes, and PPG on a wrist, used alone or in combination with other sensors, be precise enough to develop an activity recognition model?

To answer these questions, we developed two novel algorithmic approaches for fine-grained activity recognition based on accelerometers, gyroscopes, and PPG. Our two models are based on datasets collected from a wristband equipped with accelerometers and gyroscopes in our first approach, and with accelerometers and a PPG sensor in our second approach. To build the datasets, we used a set of 13 atomic cooking gestures performed by recruited participants. We conducted one experiment per approach, each with 21 participants, for a total of 42. We obtained promising and insightful results, showing the potential of both approaches using these sensors. The labeled data and our results have been made publicly available online to the scientific community.

The remainder of this paper is organized as follows: Section 2 provides an overview of related work in the field, highlighting key achievements and existing gaps. Section 3 details the methodology employed in both our approaches, including the data acquisition with sensors, feature extraction, and the machine learning framework. Section 4 presents experimental results and discussions, shedding light on the performance of both of our proposed models. Finally, Section 5 concludes the paper with a summary of findings, implications for future research, and potential applications of fine-grained HAR in real-world scenarios.

To ensure clarity and precision in this study, it is essential to define key terms and concepts used throughout the discussion. This section provides a comprehensive list of definitions to establish a shared understanding of the terminology, particularly those that may have specific meanings within the context of this research. By standardizing these definitions, we aim to facilitate accurate interpretation and avoid potential ambiguities.

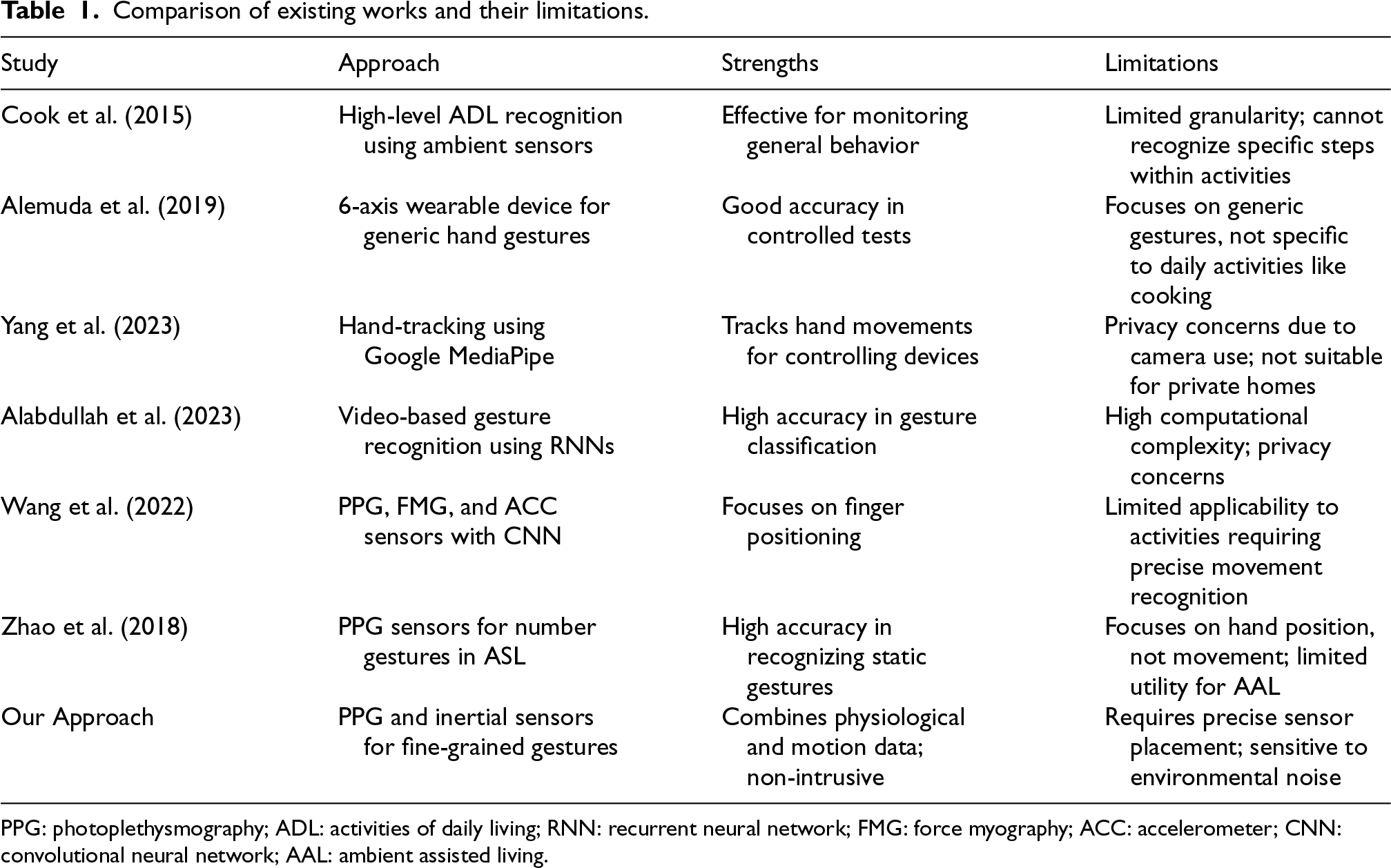

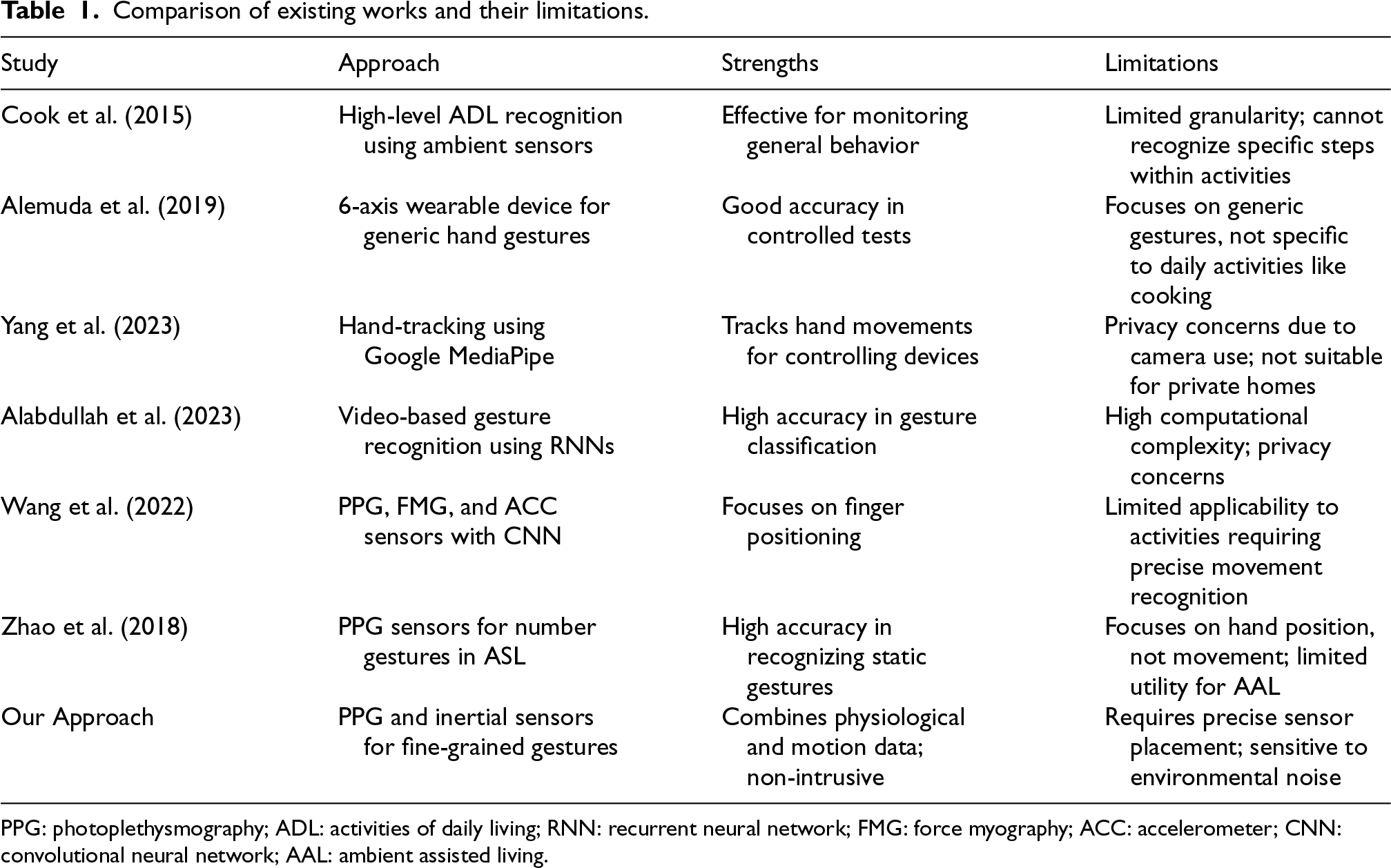

In the literature, the main problem (Alrazzak and Alhalabi, 2019; Das et al., 2023; Fellger et al., 2020; Kolkar and Geetha, 2021; Sangavi and Hashim, 2019) in developing assistive technologies adapted to home care concerns AI models capable of recognizing a person’s ongoing ADLs in real time, such as preparing a meal, washing hands, or taking medication. More generally, the problem of ADL recognition in smart homes relates to a fundamental question in AI (Das et al., 2023): how can the actual ongoing activities, as well as their progress (steps), be identified so that this identification can be used in an assistive decision-making process? Typically, a recognition system takes as input a sequence of observations (low-level inputs coming from sensors), interprets these inputs (extracting and interpreting the relevant information), and then applies an algorithm that matches the observed input sequence with one of the activity models (signatures) contained in a knowledge base (Table 1).
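To make the matching step concrete, here is a minimal sketch that compares an observed sequence of atomic gestures against activity signatures using the longest common subsequence (LCS). LCS is only one simple matching technique chosen for illustration, not the method of any cited work; the signatures (`prepare_omelet`, `make_tea`) and their gesture names are hypothetical. The score is the fraction of a signature’s steps already observed, which doubles as a rough progress estimate.

```python
from typing import Dict, List, Sequence, Tuple

def lcs_length(a: Sequence[str], b: Sequence[str]) -> int:
    """Length of the longest common subsequence of two gesture sequences."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0] * (len(b) + 1)
        for j, y in enumerate(b, start=1):
            cur[j] = prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1])
        prev = cur
    return prev[-1]

def best_match(observed: Sequence[str],
               signatures: Dict[str, List[str]]) -> Tuple[str, float]:
    """Return the activity whose signature is best covered by the observations."""
    scores = {name: lcs_length(observed, sig) / len(sig)
              for name, sig in signatures.items()}
    best = max(scores, key=lambda name: scores[name])
    return best, scores[best]

# Hypothetical knowledge base of activity signatures (ordered atomic gestures).
SIGNATURES = {
    "prepare_omelet": ["crack", "whip", "pour", "flip"],
    "make_tea": ["pour", "stir", "drink"],
}
observed = ["crack", "whip", "idle", "pour"]  # "idle" is not part of any signature
print(best_match(observed, SIGNATURES))  # ('prepare_omelet', 0.75)
```

Because LCS tolerates interleaved extra gestures such as “idle”, a partially completed recipe still scores well, and the missing steps indicate what the user has yet to do.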

Comparison of existing works and their limitations.


PPG: photoplethysmography; ADL: activities of daily living; RNN: recurrent neural network; FMG: force myography; ACC: accelerometer; CNN: convolutional neural network; AAL: ambient assisted living.

The activity recognition process in smart homes involves multiple steps (Civitarese, 2019), typically broken down into data collection, data preprocessing, feature extraction, and activity classification. Initially, raw data is gathered from various sensors placed in the environment or worn by the user. Commonly used sensors include accelerometers, gyroscopes, cameras, and wearable devices that track movements, location, and physiological signals such as PPG.

Next, the collected data undergoes preprocessing to remove noise and irrelevant information. This includes data cleaning, handling missing values, and normalizing sensor readings to ensure consistency. Techniques such as filtering, segmentation, and windowing are commonly applied at this stage to prepare the data for analysis.
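The cleaning and filtering steps above can be illustrated with a minimal sketch, assuming missing samples are marked as `None`: interior gaps are filled by linear interpolation, and the result is smoothed with a centered moving average. Both are generic choices for illustration; the function names and the window width are hypothetical.

```python
from typing import List, Optional, Sequence

def fill_missing(samples: Sequence[Optional[float]]) -> List[float]:
    """Linearly interpolate interior gaps (None); repeat the nearest value at the edges."""
    known = [i for i, v in enumerate(samples) if v is not None]
    if not known:
        raise ValueError("signal has no valid samples")
    out: List[float] = []
    for i, v in enumerate(samples):
        if v is not None:
            out.append(float(v))
            continue
        before = [k for k in known if k < i]
        after = [k for k in known if k > i]
        if not before:
            out.append(float(samples[after[0]]))
        elif not after:
            out.append(float(samples[before[-1]]))
        else:
            lo, hi = before[-1], after[0]
            y0, y1 = float(samples[lo]), float(samples[hi])
            out.append(y0 + (y1 - y0) * (i - lo) / (hi - lo))
    return out

def moving_average(x: Sequence[float], width: int = 3) -> List[float]:
    """Centered moving average; the window is truncated at the signal edges."""
    half = width // 2
    out = []
    for i in range(len(x)):
        segment = x[max(0, i - half): i + half + 1]
        out.append(sum(segment) / len(segment))
    return out

print(fill_missing([None, 1.0, None, 3.0, None]))   # [1.0, 1.0, 2.0, 3.0, 3.0]
print(moving_average([0.0, 0.0, 3.0, 0.0, 0.0]))    # [0.0, 1.0, 1.0, 1.0, 0.0]
```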

The third step involves extracting meaningful features from the preprocessed data. These features can include statistical measures (mean, variance), temporal characteristics (duration, frequency), and spatial characteristics (location, orientation). The goal is to transform the raw sensor data into a set of descriptors that can be used for activity classification.
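As an illustration of statistical and temporal features, the sketch below computes the mean, variance, duration, and dominant frequency of one window. These four features are examples only, not the feature set used in this study, and the dominant frequency uses a naive discrete Fourier transform to stay dependency-free.

```python
import math
from typing import Dict, Sequence

def window_features(x: Sequence[float], rate_hz: float) -> Dict[str, float]:
    """Mean, variance, duration and dominant frequency of one window of samples."""
    n = len(x)
    mean = sum(x) / n
    centered = [v - mean for v in x]
    variance = sum(c * c for c in centered) / n
    # Naive DFT over bins 1..n//2 of the mean-removed window.
    best_bin, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):
        re = sum(c * math.cos(2 * math.pi * k * t / n) for t, c in enumerate(centered))
        im = -sum(c * math.sin(2 * math.pi * k * t / n) for t, c in enumerate(centered))
        mag = math.hypot(re, im)
        if mag > best_mag:
            best_bin, best_mag = k, mag
    return {
        "mean": mean,
        "variance": variance,
        "duration_s": n / rate_hz,
        "dominant_freq_hz": best_bin * rate_hz / n,
    }

# A 2 Hz oscillation sampled at a hypothetical 50 Hz for one second.
wave = [math.sin(2 * math.pi * 2 * t / 50) for t in range(50)]
feats = window_features(wave, rate_hz=50)
print(feats["dominant_freq_hz"], feats["duration_s"])  # 2.0 1.0
```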

The core of the recognition process involves applying machine learning or deep learning algorithms to classify the extracted features into specific activities. Commonly used classifiers include decision trees (DTs), naive Bayes classifiers (NBCs), RFs, and support vector machines (SVMs). Advanced techniques such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs) are increasingly used for their ability to capture spatial and temporal dependencies in the data.

Finally, classification results are post-processed to improve accuracy and reliability, using techniques such as smoothing, voting, or probabilistic methods. Validating the results against reference data ensures the robustness and accuracy of the system. Note that the presented figure shows the classical approach in the literature. In our specific case, instead of using large time windows (for instance, four hours covering an afternoon) to infer the high-level activity (for instance, watching TV) directly at the last step, we use the raw data and small time windows (such as three seconds) to infer atomic gestures, which are then used in a fine-grained activity recognition model.
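Voting-based post-processing can be sketched as a sliding majority vote over consecutive window predictions, which removes isolated label flips. This is a generic smoothing technique shown for illustration; the window width and the tie-breaking rule (keep the original prediction when it is among the most frequent labels) are assumptions.

```python
from collections import Counter
from typing import List, Sequence

def majority_smooth(labels: Sequence[str], width: int = 3) -> List[str]:
    """Replace each window prediction by the majority label of its neighbourhood."""
    half = width // 2
    smoothed = []
    for i, current in enumerate(labels):
        neighbourhood = labels[max(0, i - half): i + half + 1]
        counts = Counter(neighbourhood)
        top = max(counts.values())
        if counts[current] == top:
            smoothed.append(current)  # ties keep the original prediction
        else:
            smoothed.append(next(lab for lab in neighbourhood if counts[lab] == top))
    return smoothed

preds = ["whip", "whip", "cut", "whip", "whip", "pour", "pour", "pour"]
print(majority_smooth(preds))
# ['whip', 'whip', 'whip', 'whip', 'whip', 'pour', 'pour', 'pour']
```

A wider window suppresses more noise but also delays the detection of genuine transitions between gestures, which matters for real-time assistance.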

Recent existing works and their limitations

Recent studies have explored various approaches to improving the recognition of ADLs in smart homes, each with its own strengths and limitations (Civitarese, 2019; Cook and Krishnan, 2015; Nagpal and Gupta, 2023; Nguyen et al., 2021; Wang and Cook, 2021; Yang et al., 2018).

Professor Diane Cook and her team at Washington State University (Cook et al., 2015; Wang and Cook, 2021, 2022; Wang et al., 2023) have spent over a decade exploring the ADL recognition problem from various perspectives. Their efforts have yielded diverse approaches for identifying high-level ADLs from low-level ambient sensor data, employing techniques such as DT classifiers, NBCs, RF, and SVMs. They have also addressed multi-resident ADL recognition (Wang and Cook, 2021) and recently introduced a method for detecting activity start and end times. While these methods are effective at recognizing high-level activities and segmenting them in time, they fall short of identifying specific low-level actions or steps within an activity, which is crucial for providing real-time assistance. Closer to our proposal, Alemuda and Lin used a 6-axis wearable device to capture hand gestures and applied machine learning algorithms such as DTs and logistic regression for gesture recognition (Alemuda and Lin, 2017). Their method demonstrated good accuracy in controlled tests. However, its main limitation is that it targets generic hand gestures rather than gestures specific to daily activities such as cooking, which limits its practical applicability for daily living assistance. Cheng and his co-researchers developed a hand-tracking system using Google MediaPipe to control household devices, aiming to assist older adults (Yang et al., 2023). Their system tracks hand movements using a mobile device camera. However, reliance on a camera raises privacy and complexity concerns, making such a system less suitable for widespread use in private homes, especially among older users.
Alabdullah and his collaborators used video streams and advanced image processing techniques to detect and classify hand gestures (Alabdullah et al., 2023). They combined adaptive median filtering, gamma correction, saliency maps, and RNNs for feature extraction and classification. Despite high accuracy, the use of video data introduces significant computational complexity and privacy issues, and the system’s computational demands may not be feasible for real-time applications in typical home environments. Wang and his fellow researchers proposed an innovative method combining PPG, force myography (FMG), and ACC sensors on a wrist platform, using a multi-head attention mechanism within a CNN for gesture recognition (Wang et al., 2022). However, this approach focuses primarily on finger positioning rather than on movement itself, which limits its applicability to activities requiring precise movement recognition. Additionally, misclassification due to user posture was noted as a significant problem. Zhao and his collaborators used commercial PPG sensors to classify number gestures in American Sign Language, achieving notable accuracy (Zhao et al., 2018); this is one of the rare works using PPG sensors for gesture recognition. However, like Wang et al., they focus on hand position rather than movement, which limits the utility of their approach in our AAL context.

In summary, while recent advances have made significant progress in activity recognition, limitations such as insufficient granularity, privacy concerns, and the fact that most applications target non-AAL contexts call for more targeted research. We therefore need better-adapted models capable of recognizing fine-grained actions, preserving privacy, and operating in real time in an assistive technology context.

Two approaches for fine-grained gestures recognition

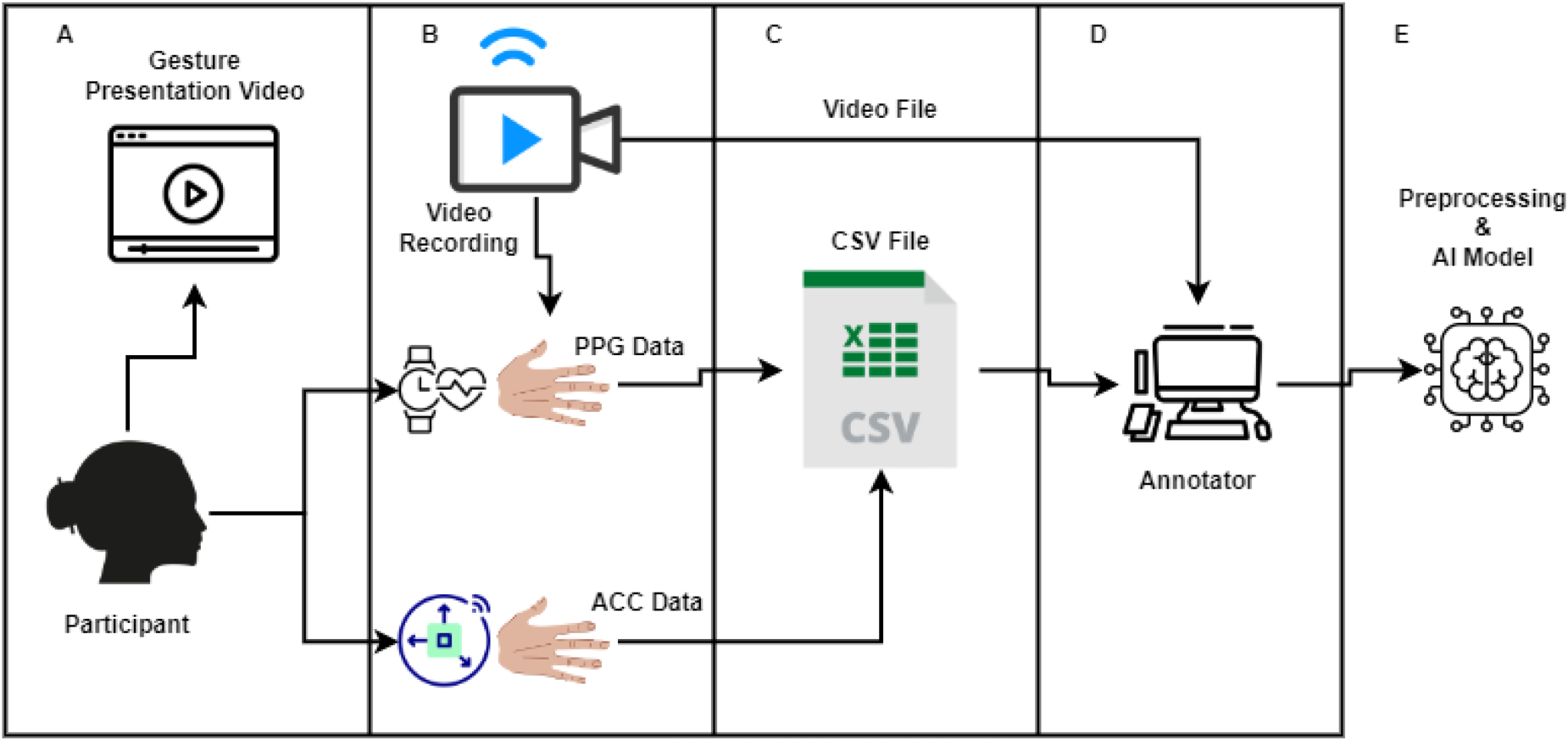

We propose two approaches for fine-grained gesture recognition (basic actions), which constitute the first step in developing a fine-grained activity recognition approach. In our work, we focus on the utilization of sensors and machine learning techniques to accurately identify atomic gestures performed by individuals in a cooking context. Figure 3 illustrates the software architecture of our system, which integrates various sensors and machine learning algorithms to achieve accurate gesture and activity recognition.

Software architecture of our system.

As shown in the figure, the user first watches a demonstration video of a kitchen gesture and replicates it, while the bracelet records the gesture and transmits it to a computer over a Bluetooth Low Energy (BLE) connection: the accelerometer and gyroscope axis data in the first approach, or the blood-flow variations and physical movements captured by the PPG sensor and accelerometers in the second. The data are recorded in a CSV file, while a video of the participant’s hand movements is saved separately. We then annotate the gestures using the recorded video. Finally, the annotated data are preprocessed to train an AI model capable of recognizing hand gestures from the sensor data.

The final goal of the system is to serve as the first pillar of a fine-grained activity recognition system that identifies the specific tasks performed and their steps. Rather than implementing fully automated systems that could limit individuals’ autonomy, the idea is to deploy an assistive system that acts as a “guardian angel.” This system will dynamically monitor, in real time, the activities of individuals with declining autonomy and cognitive abilities. Its role is to identify erroneous or risky behaviors and offer appropriate advice, suggestions, or reminders that enable the user to rectify the situation autonomously. In emergencies, the system can also intervene directly to ensure the individual’s safety. The primary goal is to provide on-demand assistance based on real-time information collected by sensors, while maintaining cognitive stimulation and dignity for the individual. Fine-grained activity recognition is a key challenge in developing such assistive technologies, as it requires a precise understanding of the gestures performed and the atomic steps accomplished.

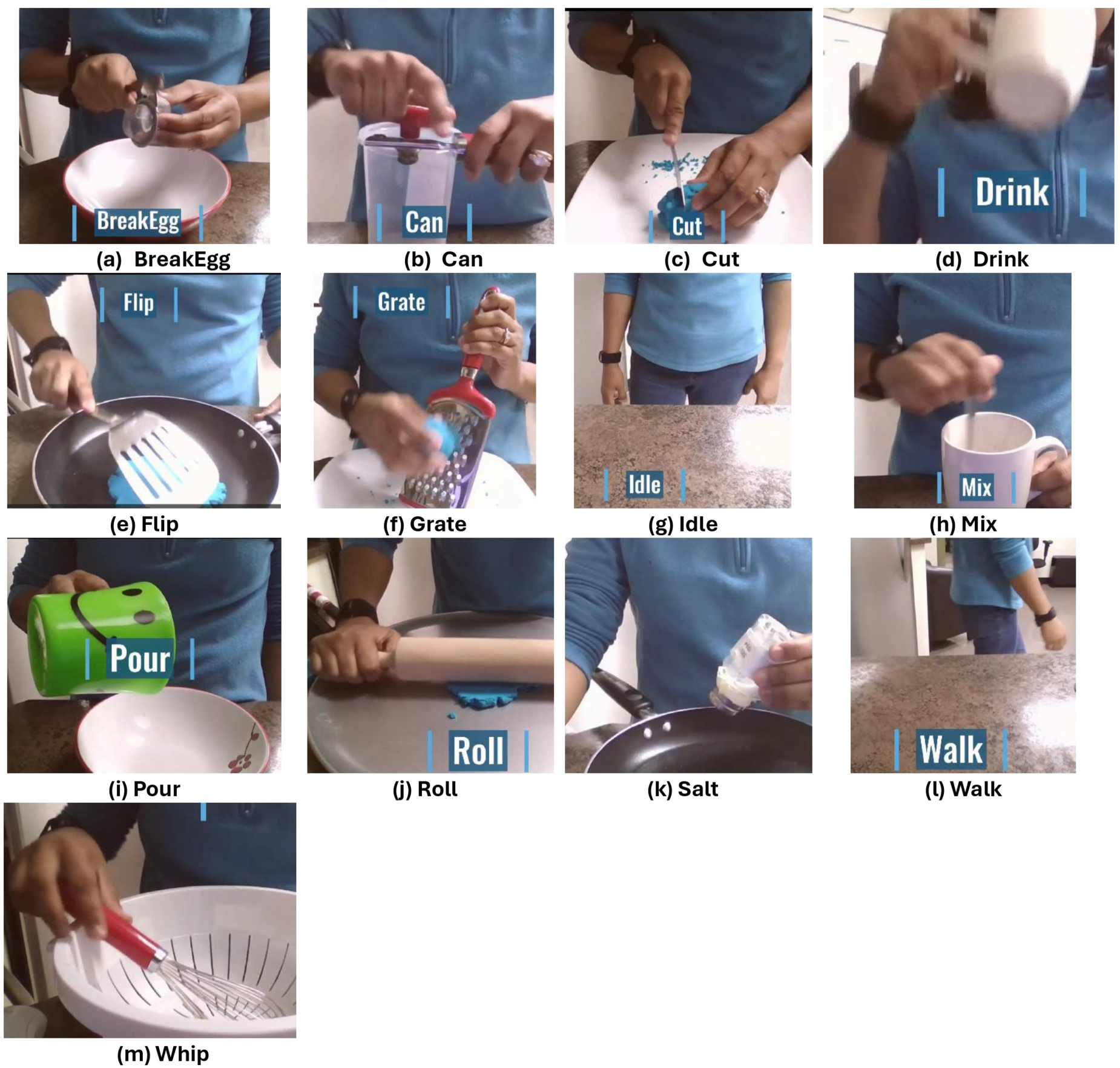

To develop our two models, we used a predefined set of 13 cooking gestures. Figure 4 shows the set of 13 atomic kitchen gestures used in our study. This visual representation helps to understand the specific movements that the system classifies. We chose the cooking context because of its crucial importance for the autonomy of individuals with impairments: being able to prepare a meal is well known to be one of the first indicators of autonomy (Yaddaden et al., 2020). For each approach, we created a labeled dataset of the 13 atomic kitchen gestures performed by recruited participants, and these data have been made available to the scientific community.¹ The results demonstrate the potential of both accelerometer-gyroscope and PPG sensors for gesture recognition, underscoring the value of this approach for assistive technologies. One original aspect of this paper is that it promotes the use of PPG sensors, which are rarely used for this purpose, for activity and gesture recognition in assistive technologies.

Kitchen gestures set.

Developing a robust recognition system hinges on the quality of the training dataset, so the careful construction of an accurate and comprehensive dataset is a key aspect of any project in this domain. We therefore devised a strict experimental protocol aimed at ensuring the integrity of the collected data. This protocol can be summarized as follows:

1. Participants sign a consent form allowing data collection and filming of their hand gestures.
2. Participants are asked to remove any bulky or interfering equipment.
3. Participants put on the wristband.
4. A team member tests the system and starts data acquisition by performing several practice gestures.
5. Once all devices are synchronized and the practice runs are successful, the acquisition program starts and participants are filmed with a mobile phone.
6. Participants choose to perform the gestures seated or standing.
7. Each participant is presented with a predefined series of cooking gestures to be performed in random order, following instructions displayed on a monitor.
8. Participants perform one brief gesture (a clap) at the start of the video to facilitate synchronization.
9. Participants perform the predetermined gestures, as outlined in the accompanying table.
10. Steps 8 and 9 are repeated for each gesture.

Before each experiment, participants signed a consent form granting permission to collect their gestural data using the wristband and its embedded sensor, and to film their hands during each gesture so that the data could be annotated from the video footage captured during the experiment. Participants could perform each gesture seated or standing, depending on their comfort in either posture. Varying postures across users aimed to bring the data closer to the real-life conditions under which people perform gestures in daily life. All hand gestures were executed and recorded in a controlled setting. Each participant performed at least 20 repetitions of each gesture (10 with each hand). Wristband placement and orientation were kept uniform across all participants and for both hands. This protocol enabled the collection of inertial data from multiple repetitions of various gestures performed by the 21 participants,² all stored in CSV files.
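For reproducibility, the random gesture order required by the protocol and the repetition count can be generated from a seed. The sketch below is hypothetical: the gesture names are placeholders for the 13 gestures of Figure 4, and grouping the 10 repetitions per hand into consecutive blocks is an assumption, since the protocol does not specify how hands were ordered.

```python
import random
from typing import List, Tuple

def session_plan(gestures: List[str], reps_per_hand: int = 10,
                 seed: int = 0) -> List[Tuple[str, str]]:
    """Return a reproducible, randomized (gesture, hand) sequence for one participant."""
    rng = random.Random(seed)
    order = list(gestures)
    rng.shuffle(order)  # random gesture order, fixed by the seed
    plan: List[Tuple[str, str]] = []
    for gesture in order:
        for hand in ("left", "right"):
            plan.extend([(gesture, hand)] * reps_per_hand)
    return plan

GESTURES = [f"gesture_{i:02d}" for i in range(1, 14)]  # placeholder names
plan = session_plan(GESTURES, seed=7)
print(len(plan))  # 260 = 13 gestures x 2 hands x 10 repetitions
```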

The established protocol has been instrumental in the construction of diverse datasets, all of which are readily accessible online for further research and utilization.

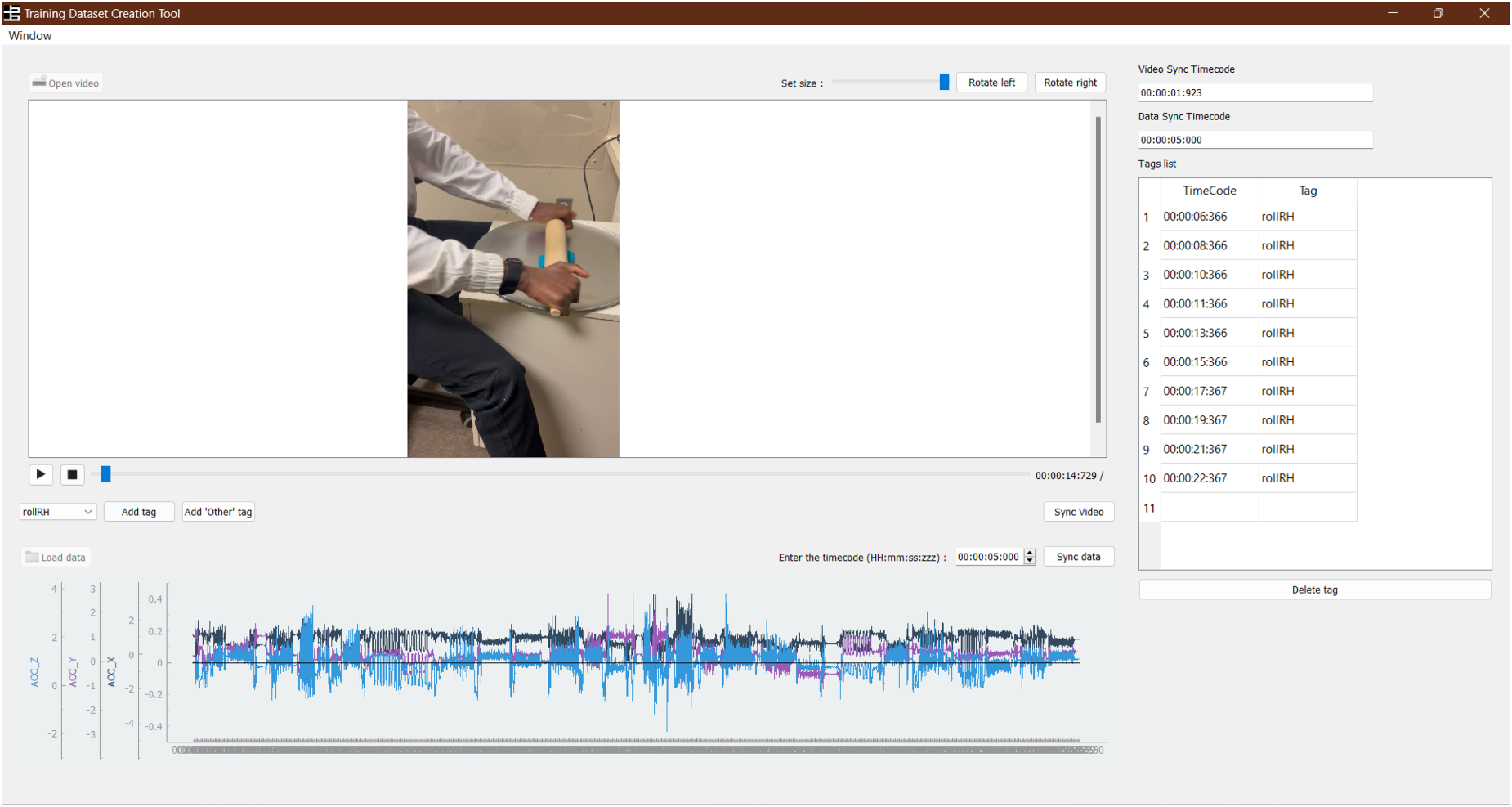

Gestures were annotated with a custom annotation program, depicted in Figure 5. For each recording, the video footage of the gesture was loaded along with its corresponding CSV file, and the two were synchronized; the swift movement that participants performed at the start of the video served as the synchronization point. Using the program, each discrete movement was tagged at its onset with a label chosen from a predefined list. Once all gestures were annotated and consolidated into a single file, the software generated an output file mirroring the input CSV file, but with labels added at the timecodes corresponding to the start of each gesture. This process was repeated for all participant files.
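The synchronization and labeling steps can be sketched as follows, assuming the clap appears in the sensor stream as the sample with the largest acceleration magnitude and that gesture onsets are exported as video timestamps in seconds. The function names, the 50 Hz rate, and the data layout are hypothetical; as in the output file described above, only onset rows receive a label.

```python
import math
from typing import List, Optional, Sequence, Tuple

def clap_index(acc_xyz: Sequence[Tuple[float, float, float]]) -> int:
    """Row index of the largest acceleration magnitude, taken as the clap."""
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in acc_xyz]
    return max(range(len(magnitudes)), key=lambda i: magnitudes[i])

def label_onsets(n_rows: int, rate_hz: float, clap_row: int, clap_video_s: float,
                 onsets: Sequence[Tuple[float, str]]) -> List[Optional[str]]:
    """Place each label on the sensor row matching its video onset time."""
    labels: List[Optional[str]] = [None] * n_rows
    for video_s, name in onsets:
        row = clap_row + round((video_s - clap_video_s) * rate_hz)
        if 0 <= row < n_rows:
            labels[row] = name
    return labels

acc = [(0.0, 0.0, 1.0)] * 200
acc[3] = (6.0, 2.0, 3.0)  # simulated clap spike at row 3
clap = clap_index(acc)
labels = label_onsets(len(acc), 50, clap, clap_video_s=2.0,
                      onsets=[(3.0, "whip"), (4.5, "pour")])
print(clap, labels.index("whip"), labels.index("pour"))  # 3 53 128
```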

Annotator application.

We conducted two distinct experiments, one for each approach. The first experiment, with the inertial sensors, was conducted with 21 participants. In that case, 17 features were extracted for each of the six axes of the input data, starting from the labeled rows corresponding to each gesture’s timeframe. This produced a new dataset with 102 columns (attributes, 6 × 17) and n rows, where n is the total number of annotated gestures; the accompanying table lists the extracted features.
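The construction of the feature table can be sketched as below, assuming a gesture spans from its labeled onset row to the next onset (or to the end of the file). The three features are a hypothetical subset of the 17 used in the study, so each output row has 6 × 3 values plus the label instead of 6 × 17 = 102.

```python
import statistics
from typing import Callable, Dict, List, Optional, Sequence, Tuple

AXES = ["acc_x", "acc_y", "acc_z", "gyr_x", "gyr_y", "gyr_z"]
# Hypothetical subset of per-axis features (the study used 17 per axis).
FEATURES: Dict[str, Callable[[List[float]], float]] = {
    "mean": statistics.fmean,
    "std": statistics.pstdev,
    "range": lambda v: max(v) - min(v),
}

def segments(labels: Sequence[Optional[str]]) -> List[Tuple[str, int, int]]:
    """Turn onset labels into (label, start, end) spans ending at the next onset."""
    onsets = [(i, lab) for i, lab in enumerate(labels) if lab is not None]
    ends = [i for i, _ in onsets[1:]] + [len(labels)]
    return [(lab, start, end) for (start, lab), end in zip(onsets, ends)]

def feature_table(rows: Sequence[Sequence[float]], labels: Sequence[Optional[str]]):
    """One output row per annotated gesture: per-axis features followed by the label."""
    header = [f"{axis}_{name}" for axis in AXES for name in FEATURES]
    table = []
    for label, start, end in segments(labels):
        values: List[object] = []
        for a in range(len(AXES)):
            column = [row[a] for row in rows[start:end]]
            values.extend(fn(column) for fn in FEATURES.values())
        table.append(values + [label])
    return header, table

rows = [[float(i)] * 6 for i in range(10)]  # dummy six-axis samples
onset_labels: List[Optional[str]] = [None] * 10
onset_labels[2], onset_labels[6] = "whip", "pour"
header, table = feature_table(rows, onset_labels)
print(len(header), len(table))  # 18 2
```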

The left part of Figure 2 shows a representation of the Polar OH1+ armband used in our work.

The second experiment, with the PPG sensor mounted on a wristband, was conducted in the same controlled environment using the same experimental protocol. A total of 20 participants followed the protocol. As in the first experiment, the data collection team typically consisted of two researchers: one initiated the various scripts and monitored their proper functioning during data collection, while the other guided and assisted the participant throughout the process. To obtain high-quality data, and assuming that the orientation of the Polar OH1+ sensor on either hand may influence data quality, particularly for the recognition of specific gestures, our team took several factors into account:

This section presents the classification approaches used for fine-grained gesture recognition in our AAL system. Following sensor data preprocessing and feature extraction, we developed and evaluated two distinct classification approaches: one based on accelerometer and gyroscope data, and another combining accelerometer and PPG data. For each approach, we detail the selected classification algorithms, their optimization parameters, and the validation strategies employed. Our methodology aims to maximize recognition accuracy while keeping computational complexity suitable for AAL environments.

Inertial sensors based approach

For the accelerometer and gyroscope-based approach, the dataset was preprocessed and new features were extracted so that a supervised learning approach could be applied to this multi-class classification problem. The dataset was divided into a training set (70%) and a test set (30%), and a MinMaxScaler was applied to the training and test attributes to normalize the data. Slow gestures were added to the fast-gesture dataset as an “other” class, because slow-gesture data can be shortened to fit the shorter temporal window; the reverse was not possible given the minimum recording duration of fast gestures. Walking and immobility were labeled as “other” in the slow-gesture dataset. This data preparation was performed twice, once for slow gestures and once for fast gestures, resulting in a total of 6607 gestures.
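A dependency-free sketch of the 70/30 split and min-max normalization follows. It assumes the scaler bounds are fitted on the training set only and then applied to both sets, a common way to avoid leaking test information (the text does not state how the scaler was fitted); the helper names and the seed are hypothetical. In practice, scikit-learn’s `train_test_split` and `MinMaxScaler` provide the same operations.

```python
import random
from typing import List, Sequence, Tuple

def split(X: Sequence[Sequence[float]], y: Sequence[str], test_frac: float = 0.3,
          seed: int = 42) -> Tuple[List[List[float]], List[str], List[List[float]], List[str]]:
    """Shuffle indices and return (X_train, y_train, X_test, y_test)."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    n_test = round(len(X) * test_frac)
    test_idx, train_idx = idx[:n_test], idx[n_test:]
    return ([list(X[i]) for i in train_idx], [y[i] for i in train_idx],
            [list(X[i]) for i in test_idx], [y[i] for i in test_idx])

def minmax_fit(X: List[List[float]]) -> List[Tuple[float, float]]:
    """Per-column (min, max) computed on the training set."""
    return [(min(col), max(col)) for col in zip(*X)]

def minmax_apply(X: List[List[float]], bounds: List[Tuple[float, float]]) -> List[List[float]]:
    """Scale each column to [0, 1] with training bounds (test values may fall outside)."""
    return [[(v - lo) / (hi - lo) if hi > lo else 0.0 for v, (lo, hi) in zip(row, bounds)]
            for row in X]

X = [[float(i), 10.0 * i] for i in range(10)]
y = ["cut"] * 5 + ["whip"] * 5
X_tr, y_tr, X_te, y_te = split(X, y)
bounds = minmax_fit(X_tr)
X_tr_s, X_te_s = minmax_apply(X_tr, bounds), minmax_apply(X_te, bounds)
print(len(X_tr), len(X_te))  # 7 3
```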

After data preparation, 5668 slow gestures and 939 fast gestures were obtained. The main objective of the study was to accurately classify these gestures. To achieve this, six classifiers were selected, including classification and regression trees, k-Nearest Neighbors (

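The leave-one-subject-out (LOSO) strategy is a cross-validation technique in which the model is trained on data from all participants except one, whose data are used for testing. This process is repeated for each participant, so that every participant serves once as the test subject.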
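The LOSO procedure can be sketched as follows, with a small k-nearest-neighbours classifier (one of the classifier families mentioned above) standing in for the actual models. The participant IDs, feature values, and k = 3 are hypothetical; the point is that each participant’s samples are held out together, so the score measures generalization to people not seen during training.

```python
import math
from collections import Counter
from typing import Dict, Sequence

def knn_predict(X_train: Sequence[Sequence[float]], y_train: Sequence[str],
                x: Sequence[float], k: int = 3) -> str:
    """Majority label among the k training samples closest to x (Euclidean)."""
    nearest = sorted(zip((math.dist(row, x) for row in X_train), y_train))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def loso_accuracy(X: Sequence[Sequence[float]], y: Sequence[str],
                  groups: Sequence[str], k: int = 3) -> Dict[str, float]:
    """Leave-one-subject-out: train on all other participants, test on one."""
    scores: Dict[str, float] = {}
    for held_out in sorted(set(groups)):
        train = [i for i, g in enumerate(groups) if g != held_out]
        test = [i for i, g in enumerate(groups) if g == held_out]
        X_tr = [X[i] for i in train]
        y_tr = [y[i] for i in train]
        correct = sum(knn_predict(X_tr, y_tr, X[i], k) == y[i] for i in test)
        scores[held_out] = correct / len(test)
    return scores

X = [[0.0, 0.0], [5.0, 5.0], [0.1, 0.0], [5.1, 5.0], [0.0, 0.2], [5.0, 5.2]]
y = ["whip", "pour"] * 3
groups = ["p1", "p1", "p2", "p2", "p3", "p3"]
print(loso_accuracy(X, y, groups))  # {'p1': 1.0, 'p2': 1.0, 'p3': 1.0}
```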

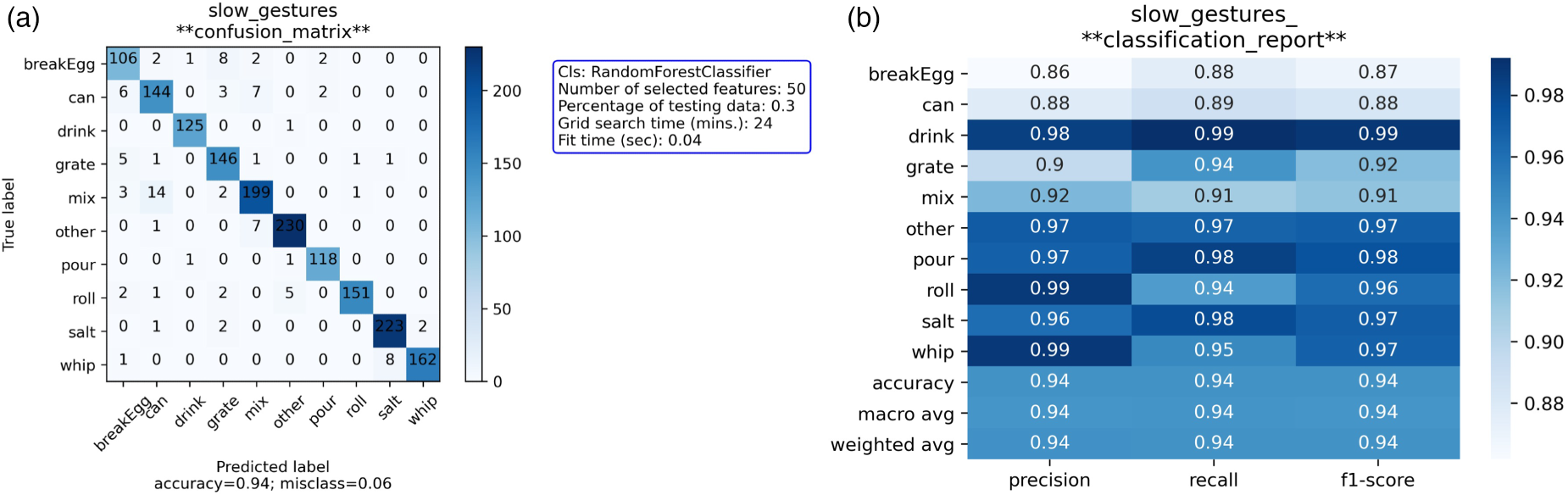

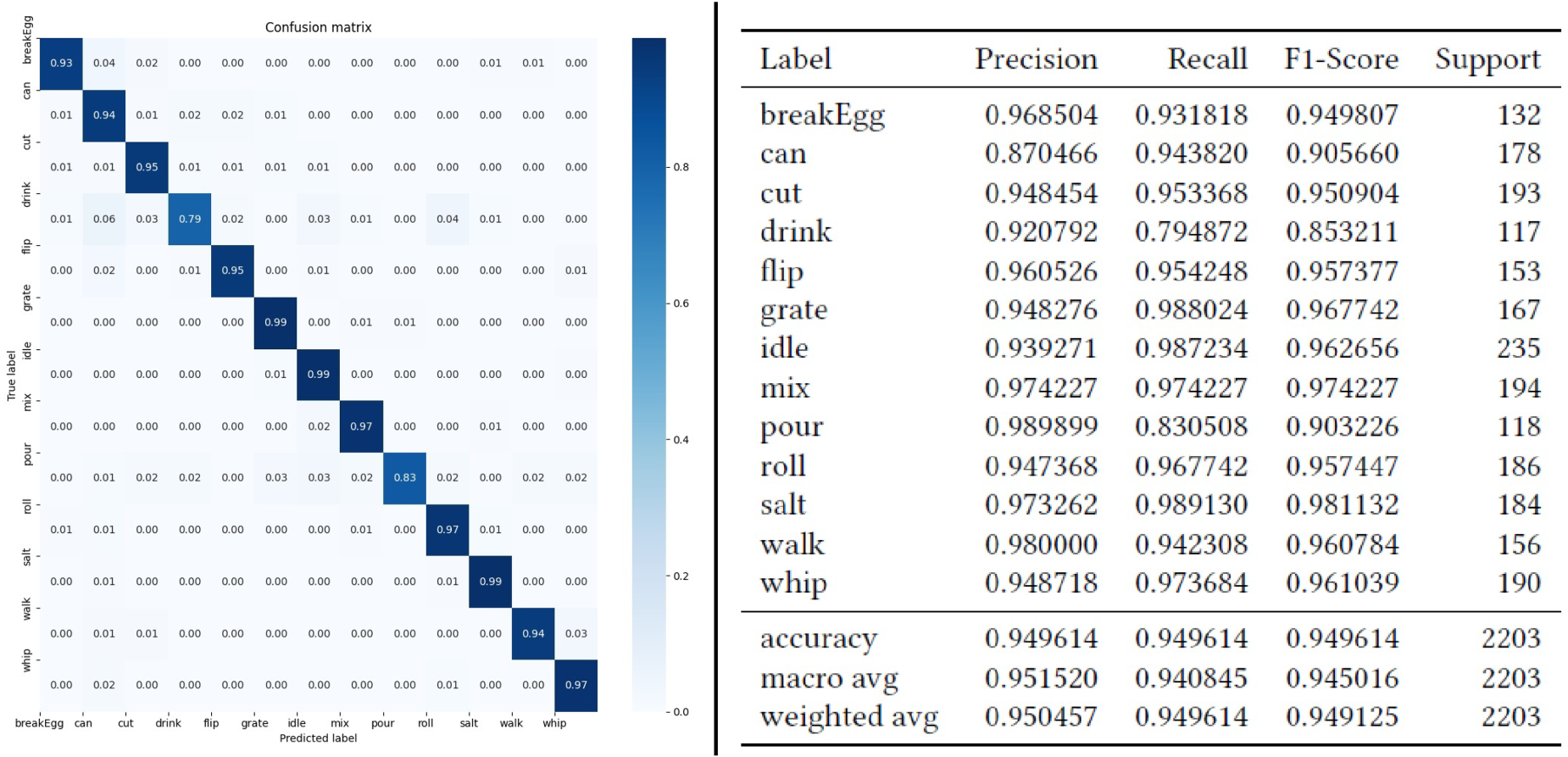

These results are promising for the development of a comprehensive model for fine culinary activity recognition. Furthermore, avenues for future work include adding a mechanism for prioritizing gesture types in cases of simultaneous recognition of multiple gestures. Figure 6 illustrates the performance of the classifiers through a confusion matrix and a classification report. The confusion matrix (a) visualizes the accuracy of gesture recognition across different categories, while the classification report (b) provides detailed metrics on precision, recall, and F1-score for each gesture type.

(a) Slow gestures confusion matrix; (b) Slow gestures classification report.
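The per-class precision, recall, and F1-score in a classification report follow directly from the confusion matrix (rows are true classes, columns are predicted classes). A minimal sketch with hypothetical labels:

```python
from typing import Dict, List, Sequence, Tuple

def confusion_matrix(y_true: Sequence[str], y_pred: Sequence[str],
                     classes: Sequence[str]) -> List[List[int]]:
    """Counts with true classes as rows and predicted classes as columns."""
    index = {c: i for i, c in enumerate(classes)}
    m = [[0] * len(classes) for _ in classes]
    for t, p in zip(y_true, y_pred):
        m[index[t]][index[p]] += 1
    return m

def per_class_report(m: List[List[int]],
                     classes: Sequence[str]) -> Dict[str, Tuple[float, float, float]]:
    """(precision, recall, F1) for each class."""
    report = {}
    for i, c in enumerate(classes):
        tp = m[i][i]
        fp = sum(row[i] for row in m) - tp  # predicted c, but the true class differs
        fn = sum(m[i]) - tp                 # true c, but predicted as another class
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[c] = (precision, recall, f1)
    return report

classes = ["cut", "whip"]
m = confusion_matrix(["cut", "cut", "whip", "whip"], ["cut", "whip", "whip", "whip"], classes)
print(m)  # [[1, 1], [0, 2]]
rep = per_class_report(m, classes)
```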

For data collection with a bracelet equipped with PPG and accelerometers, participants performed a series of cooking gestures to create a dataset. Once the dataset was constructed, we tested the ability of our model to recognize each gesture from the data. The process began with encoding the categorical variables, followed by partitioning the dataset into two distinct subsets: 80% for training and 20% for testing. Using an RF algorithm with 100 trees, we trained the model on the training set and obtained an accuracy of 94.96%.
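Encoding the categorical gesture labels and scoring predictions can be sketched in a few lines. The sorted-label mapping below is one convention (scikit-learn’s `LabelEncoder` also assigns codes in sorted order); the labels and predictions are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

def encode_labels(labels: Sequence[str]) -> Tuple[List[int], Dict[str, int]]:
    """Map each distinct label to an integer code, in sorted label order."""
    mapping = {label: code for code, label in enumerate(sorted(set(labels)))}
    return [mapping[label] for label in labels], mapping

def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the reference labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

codes, mapping = encode_labels(["whip", "cut", "whip", "idle"])
print(codes, mapping)  # [2, 0, 2, 1] {'cut': 0, 'idle': 1, 'whip': 2}
print(accuracy([0, 1, 2, 2], [0, 1, 2, 0]))  # 0.75
```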

The RF algorithm was selected as our primary classifier due to its exceptional performance and several key advantages. In our initial classifier comparison, it achieved near-perfect accuracy for gesture recognition, demonstrating its robustness and reliability. The algorithm’s ensemble learning approach, which combines multiple DTs, provides superior generalization capabilities and resilience against overfitting compared to single-model classifiers. Its decision-making process can be understood through feature importance analysis, which highlights how different sensor measurements contribute to gesture classification. For instance, accelerometer data in the

The results showed that every gesture class had at least 100 occurrences, with “idle” being the most frequent class (235 occurrences) and “drink” the least frequent (117 occurrences). The “drink” gesture had the poorest recognition performance, likely because it is performed more slowly than the other gestures.

The study achieved an accuracy of 94.96% in recognizing hand gestures, which suggests the potential of wearable sensors such as PPG to improve environments for independent living, especially for older adults. The successful use of PPG sensors for gesture recognition highlights their versatility and their ability to detect subtle hand movements.

Figure 7 illustrates the feature importances derived from the RF model. This visualization helps to identify which features were most influential in the classification process, providing insights into the model’s decision-making process.

Feature importances.
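Feature importances of the kind shown in Figure 7 can be read from a fitted scikit-learn `RandomForestClassifier` through its `feature_importances_` attribute, which holds impurity-based importances that sum to 1. The sketch below ranks them. The feature names (`ppg_mean`, `acc_x_std`, and so on) and the toy data are hypothetical placeholders, not the features of our model, and we do not claim Figure 7 was produced this way.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier


def ranked_importances(model: RandomForestClassifier, feature_names):
    """Return (name, importance) pairs sorted from most to least important."""
    pairs = zip(feature_names, model.feature_importances_)
    return sorted(pairs, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    names = ["ppg_mean", "ppg_std", "acc_x_std", "acc_z_mean"]  # hypothetical features
    X = rng.normal(size=(200, 4))
    y = (X[:, 2] > 0).astype(int)  # only the third feature carries class information
    model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)
    for name, score in ranked_importances(model, names):
        print(f"{name:12s} {score:.3f}")
```

Impurity-based importances can favour high-cardinality or correlated features, so permutation importance (`sklearn.inspection.permutation_importance`) on held-out data is a useful cross-check.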

Obtained results

The results obtained with each of the two approaches provided valuable insights into the ability of these technologies to recognize hand gestures associated with specific activities.

With inertial sensors, the models achieved a hand gesture recognition accuracy of around 85%. By analyzing accelerometer and gyroscope data, the models captured the characteristic movements of participants as they performed gestures. The model predictions agreed closely with the performed gestures, giving satisfactory accuracy in atomic action recognition. A key distinction in our study was made between short and long gestures. Short gestures, defined as those that can be completed in less than one second, were more easily identified and distinguished by inertial sensors. In contrast, long gestures, requiring more than three seconds, were harder to recognize precisely because it is difficult to maintain a consistent movement trajectory over a prolonged period.
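Segmenting a continuous sensor stream into fixed-length windows is one common way to handle gestures of different durations: a 1 s window would target short gestures and a 3 s window long ones. The sketch below cuts a signal into windows of a given duration with configurable overlap. The 50 Hz sampling rate, the 50% overlap, and the function name `sliding_windows` are assumptions for illustration; the actual windowing parameters are not given here.

```python
from typing import List, Sequence


def sliding_windows(
    signal: Sequence[float], fs_hz: float, window_s: float, overlap: float = 0.5
) -> List[List[float]]:
    """Split `signal` into windows of `window_s` seconds with fractional `overlap`.

    Trailing samples that do not fill a complete window are dropped.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    size = int(round(window_s * fs_hz))
    step = max(1, int(round(size * (1.0 - overlap))))
    return [list(signal[start:start + size]) for start in range(0, len(signal) - size + 1, step)]


if __name__ == "__main__":
    fs = 50.0  # assumed sampling rate (Hz)
    stream = [float(i) for i in range(500)]  # 10 s of placeholder samples
    short = sliding_windows(stream, fs, window_s=1.0)  # candidate windows for short gestures
    long_ = sliding_windows(stream, fs, window_s=3.0)  # candidate windows for long gestures
    print(len(short), len(long_))
```

Running two window lengths side by side is one way to realize the two separate processing instances that the inertial approach required; features extracted from each window would then feed the classifier.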

On the other hand, PPG sensors also showed promising performance, with an average accuracy in hand gesture recognition approaching 90%. By exploiting variations in blood flow detected by PPG sensors, the models were able to accurately identify gestures associated with different activities, adding a physiological dimension to gesture recognition and improving overall model accuracy.

Comparing the two approaches, PPG sensors exhibited a slight improvement in accuracy over inertial sensors. This difference may be attributable to the ability of PPG sensors to detect subtle variations in blood flow, which provide better discrimination between hand gestures. Nevertheless, inertial sensors also demonstrated a strong ability to recognize gestures, highlighting their robustness in capturing movements. An important observation was that accelerometer-based recognition confused certain gesture types, owing to the sensitivity of these sensors to vibrations and extraneous movements. Moreover, with the PPG sensor approach, we were able to recognize short (one second) and long (three seconds) gestures using a single function within our algorithm. With the inertial sensor (ACC), by contrast, we had to implement two distinct instances within our algorithm, one for short gestures and another for long gestures, to obtain similar results. This difference highlights an advantage of PPG: it allows gestures to be processed in a more unified way, regardless of their duration.

In summary, the results obtained with both approaches confirm the feasibility of fine-grained activity recognition through hand gesture analysis. Each approach has its own advantages in terms of accuracy and ability to capture gesture characteristics, which underlines the importance of choosing the technology according to the needs of the specific application. Finally, combining several such sensors, which can all be embedded in the small footprint of a wristband, appears worthwhile. Our comparison of the two approaches suggests that combining PPG and inertial sensors could offer synergistic advantages in gesture recognition accuracy and robustness. Integrating data from both sensor types could improve activity detection and reduce classification errors, yielding a more comprehensive and reliable solution for monitoring human activities in smart environments.
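One simple form of the sensor combination discussed above is feature-level (early) fusion: summary features computed separately from time-aligned PPG and inertial windows are concatenated into a single vector before classification. The sketch below is hypothetical. The choice of mean, standard deviation, and range as features, the channel names, and the assumption that the two windows are already synchronized are ours, not part of the reported experiments.

```python
import statistics
from typing import Dict, Sequence


def window_features(prefix: str, window: Sequence[float]) -> Dict[str, float]:
    """Simple per-window statistics for one sensor channel."""
    return {
        f"{prefix}_mean": statistics.fmean(window),
        f"{prefix}_std": statistics.pstdev(window),
        f"{prefix}_range": max(window) - min(window),
    }


def fuse(channels: Dict[str, Sequence[float]]) -> Dict[str, float]:
    """Concatenate features from several time-aligned channels (early fusion)."""
    fused: Dict[str, float] = {}
    for name, window in channels.items():
        fused.update(window_features(name, window))
    return fused


if __name__ == "__main__":
    ppg = [0.50, 0.52, 0.55, 0.53]  # placeholder PPG samples
    acc_z = [9.7, 9.9, 10.4, 9.8]  # placeholder vertical acceleration (m/s^2)
    print(fuse({"ppg": ppg, "acc_z": acc_z}))
```

Early fusion keeps a single classifier, such as the RF used here; the alternative, late fusion, trains one model per sensor and combines their predicted probabilities.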

Advantages and limitations of each method

The accelerometer and gyroscope-based approach offers several significant advantages. First, it captures device movement and orientation precisely. This precision is particularly useful for detecting position changes and sudden movements, providing a solid foundation for applications such as physical activity tracking. This approach can also recognize simple gestures and basic activities, making it versatile in many contexts. However, it also has limitations. It may struggle with more complex and detailed gestures, often requiring elaborate algorithms to interpret the data and recognize fine-grained activities accurately. Thus, while effective for simple tasks, it may be less suitable for applications that require finer motion recognition.

In contrast, the accelerometer and PPG-based approach offers distinct advantages of its own. In addition to motion data, it measures blood flow variations and thus captures detailed physiological signals. This capability gives it an edge in recognizing intricate gestures, especially in fine-grained activities such as cooking, where precision is crucial. Moreover, the physiological data it collects can improve activity recognition accuracy, enabling a deeper understanding of user actions and supporting more advanced applications in areas such as health and well-being.

However, the accelerometer and PPG-based approach is not without its limitations. PPG sensors are sensitive to ambient light variations, which can impact signal quality. Blood flow measurements can also be affected by physiological factors such as blood pressure, heart rate, and skin temperature, potentially introducing variability in gesture recognition accuracy. Furthermore, the quality of PPG signals may degrade during rapid or intense movements, complicating the differentiation between similar gestures performed at varying speeds. Sensor placement on the wrist must be precise and consistent, as even slight variations can influence blood flow readings and subsequently affect gesture recognition performance.

Environmental limitations: uncontrolled environment challenges

While our sensor-based approach demonstrates promising results in controlled experimental settings, deploying it in real, uncontrolled environments introduces a variety of challenges. This section examines the limitations inherent to our approach, including potential issues related to sensor accuracy, environmental variability, and user behavior. Understanding these constraints is critical for evaluating the feasibility of real-world implementation and identifying areas for future research and improvement. By addressing these limitations, we aim to provide a balanced perspective on the applicability of our system in practical settings.

Combining a PPG sensor with an accelerometer for gesture recognition presents several limitations when the system is deployed in an uncontrolled environment. First, environmental noise can significantly affect the reliability of the PPG sensor. Variations in ambient light, motion artifacts caused by rapid or irregular movements, and changes in skin contact due to perspiration or varying pressure can distort the signal. These factors reduce the sensor’s ability to accurately capture the physiological data linked to gestures.

Second, the accelerometer’s data can also be affected by extraneous movements or vibrations not related to the intended gestures. For example, activities such as walking, interacting with other objects, or environmental vibrations may introduce noise, making it challenging to distinguish between gesture-related and unrelated movements.

Additionally, individual differences, such as variations in physiology, gesture execution styles, or sensor placement, may further complicate recognition. Without precise calibration or adaptive algorithms, these differences can lead to inconsistent performance across users.
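A simple form of per-user calibration is to standardize each user's features with that user's own mean and standard deviation, so that differences in baseline signal level or amplitude between individuals are partly removed. This z-score sketch illustrates one possible calibration step and is not part of our pipeline; `normalize_per_user` is a hypothetical helper.

```python
import statistics
from collections import defaultdict
from typing import Dict, List, Sequence


def normalize_per_user(values: Sequence[float], users: Sequence[str]) -> List[float]:
    """Z-score each value using the mean and population std of its own user's values."""
    by_user: Dict[str, List[float]] = defaultdict(list)
    for v, u in zip(values, users):
        by_user[u].append(v)
    stats = {u: (statistics.fmean(vs), statistics.pstdev(vs)) for u, vs in by_user.items()}
    normalized = []
    for v, u in zip(values, users):
        mean, std = stats[u]
        # A user whose signal is constant gets 0.0 instead of a division by zero.
        normalized.append((v - mean) / std if std > 0 else 0.0)
    return normalized


if __name__ == "__main__":
    # Two users with very different signal amplitudes for the same gesture pattern.
    print(normalize_per_user([1.0, 3.0, 10.0, 30.0], ["A", "A", "B", "B"]))
```

Under a LOSO evaluation, the held-out user's statistics would have to come from a short calibration recording rather than from that user's test data, otherwise the evaluation leaks information.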

Finally, uncontrolled environments often feature dynamic contexts, such as varying user activities or unpredictable external factors, which can degrade the model’s ability to generalize and perform consistently. Addressing these limitations requires advanced signal processing, noise-reduction techniques, and robust machine learning models capable of adapting to diverse and complex conditions.
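As an elementary example of the noise reduction mentioned above, a centered moving-average filter attenuates high-frequency vibration while preserving slower, gesture-scale variations. It is a stand-in chosen for simplicity; a practical system would more likely use a designed filter (for example, a Butterworth low-pass applied with zero-phase filtering) together with artifact-specific methods. The window length and the placeholder trace are assumptions.

```python
from typing import List, Sequence


def moving_average(signal: Sequence[float], window: int = 5) -> List[float]:
    """Centered moving average over `window` samples (a basic low-pass filter).

    Near the edges the window is truncated to the samples that exist.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed


if __name__ == "__main__":
    # Placeholder accelerometer trace: slow drift plus alternating vibration noise.
    trace = [0.1 * i + (0.5 if i % 2 else -0.5) for i in range(12)]
    print([round(v, 2) for v in moving_average(trace, window=3)])
```

Longer windows suppress more noise but also blur short gestures, so the window length trades noise rejection against temporal resolution.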

Connection and contribution to previous works

Our work on fine-grained hand gesture recognition for culinary activities continues the trajectory of several previous studies while providing original contributions. We build on existing research that has explored the use of inertial sensors (accelerometers and gyroscopes) for activity recognition, as demonstrated by Mahsa Sadat Afzali Arani and her team (Arani et al., 2021). In their recent work, they investigated the impact of combining bio-signals with a dataset acquired from inertial sensors to recognize human daily activities. They used the PPG-DaLiA dataset, which consists of 3D-accelerometer, electrocardiogram, and PPG signals acquired from 15 individuals performing daily activities. However, we go further by focusing specifically on more sophisticated kitchen gestures, allowing for a more detailed and contextual understanding of these daily activities.

Our approach innovatively explores the potential of PPG sensors for gesture recognition, a field still underexplored, as highlighted by the results from the Cerebrovascular Disease Research Center at Hallym University (Ryu et al., 2024). While most previous studies have focused on accelerometers and gyroscopes, we are opening new perspectives for less intrusive and potentially more accurate recognition systems by integrating PPG data. Furthermore, our work is inspired by research on sensor data fusion, as mentioned earlier, but applies it in an original way to the recognition of kitchen gestures. This combination of inertial sensors and PPG potentially allows us to improve the robustness and accuracy of recognition by leveraging the complementary advantages of each sensor type. By focusing on culinary activities, our research complements recent work on technological assistance for meal preparation (Giroux et al., 2015; Salloum and Tekli, 2022; Zarshenas et al., 2022), a crucial aspect of autonomy for older adults or individuals with disabilities. We provide finer granularity in gesture recognition, which could enable more precise and contextual assistance in this specific domain. Additionally, our data collection methodology, involving participants performing real kitchen gestures, introduces a new dataset to the community and follows the line of previous studies, such as those mentioned in the work of Caetano Mazzoni Ranieri and his team (Ranieri et al., 2021).

Regarding the Journal of Reliable Intelligent Environments, which focuses on theoretical developments and lessons learned from the deployment of intelligent environments, our work contributes to the fields of modeling human activity in the context of assistance in smart homes, people-centered computing, the development of assistive tools, and the adaptiveness and autonomy of the user. Our proposition builds on our previous work published in 2021 (Demongivert et al., 2021), which contributed to the architecture of event-oriented activity recognition. Our contribution also builds on recently published papers in the journal by Ferrari et al. (2021, 2023), which proposed a deep learning model for personalization in sensor-based HAR. In their research, they investigated the benefits that personalization can bring to deep learning techniques in the HAR domain, aiming to verify if personalization applied to both traditional and deep learning techniques could lead to better performance than classical approaches (i.e., without personalization). These previous publications each tackled specific aspects (distribution, personalization, etc.) of the significant challenge of HAR in intelligent environments. This paper specifically addresses the challenge of recognizing fine-grained gestures for precise activity recognition, which is crucial in the context of assistive technologies. Our contribution is innovative because it explores the use of new sensors for activity recognition, potentially bringing new possibilities to the field. The experiment with real users and the freely available data we provide will also contribute significantly to the scientific community working on this issue.

Finally, our work aligns well with the general research in the field of AAL, which is pursued by well-known teams around the world, such as the team of Professor Diane Cook (Wang and Cook, 2021, 2022; Wang et al., 2023) at Washington State University, the team of Professor Sylvain Giroux in Canada (Gagnon-Roy et al., 2024; Giroux et al., 2015; Zarshenas et al., 2022), the team of Professor Juan Carlos Augusto (Ali et al., 2023; Manuel et al., 2022), and many more. We aim to make a specific contribution by focusing on fine-grained recognition of kitchen gestures. This approach paves the way for more precise ambient assistance systems tailored to the real needs of users in their daily lives, particularly for older adults or individuals with disabilities.

Relevance of our research to the community of the Journal of Smart Cities and Society

Our work on fine-grained cooking gesture detection aligns with and builds on work already published in the Journal of Smart Cities and Society, while making some new contributions.

Building on the work of Voskergian and Ishaq (2023), who applied IoT and deep learning to intelligent e-waste management, the current work applies similar technologies, this time to the recognition of daily cooking activities. Our work complements theirs by demonstrating how such technologies can enhance the autonomy of older adults or individuals with disabilities in intelligent homes.

In addition, our novel use of the PPG sensor for gesture detection was inspired by the work of Meijer et al. (2023) on using electrodermal activity as a continuous well-being monitor. We take their work one step further by showing that physiological signals can be used not only for well-being monitoring but also for accurate detection of ADLs.

Last but not least, our work aligns with that of Borrohou et al. (2023) on data cleaning and outlier detection for machine learning. We apply those principles to the particular application of cooking gesture classification, thereby improving the robustness and reliability of assistive systems for the smart city.

In brief, this work follows up on these previous efforts and goes a step further, contributing new implementations to the development of intelligent assistive technologies for urban environments and community contexts.

Conclusion and perspectives

As mentioned in the introduction, one of the most significant challenges in developing assistive technologies within an AAL context is the automatic recognition of the user’s ongoing activities. Most existing HAR approaches operate at a coarse level of granularity, which is inadequate for creating effective assistive technologies. These approaches primarily focus on identifying broad activity categories, such as eating or sleeping. While such identification is sufficient for monitoring general behavior, it falls short of delivering real-time, actionable assistance. In this paper, we presented an original work that constitutes a step toward a fine-grained HAR model based on PPG and inertial sensors, opening new perspectives for assisting older adults and monitoring gestures in smart environments with wearable sensors. Our experiments suggest that the combined use of these sensors could improve the accuracy of activity recognition models, offering promising opportunities for applications such as personalized assistance, enhanced security, preserved autonomy, and proactive medical monitoring. Our results in atomic action recognition show an accuracy of 94.96% (Figure 7) in recognizing fine hand gestures, which is essential for providing effective and reliable assistance. Using PPG sensors, traditionally employed to monitor heart rate, to detect subtle hand movements opens new perspectives for autonomous living assistance by offering non-intrusive solutions. Moreover, all the datasets we created and our results have been made freely available to the scientific community so that other researchers can use the collected data to test other models and extend our findings.

In the future, we plan to investigate hybrid approaches to improve the model’s robustness, adapt the technologies to specific needs, and explore new application areas. We also plan to build a complete activity recognition system on top of our gesture recognition application, which will allow us to infer the ongoing daily activity, detect abnormal movements or emergency situations, and provide real-time personalized guidance in cooking tasks. One possible solution is a high-level activity recognition model able to recognize a fixed list of recipes, defined for instance in description logic, and using Allen’s temporal logic (Allen, 1983), which describes temporal relationships between events and thus helps to model and recognize sequential, coherent activities.
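To illustrate how Allen's temporal logic could relate recognized gestures, the sketch below classifies the relation between two time intervals (for example, the start and end times of two detected gestures) into one of Allen's thirteen basic interval relations. Representing intervals as `(start, end)` pairs with `start < end`, the function name, and the gesture names "pour" and "stir" are assumptions made for illustration.

```python
from typing import Tuple

Interval = Tuple[float, float]  # (start, end) with start < end


def allen_relation(a: Interval, b: Interval) -> str:
    """Return the Allen relation that holds from interval a to interval b."""
    a1, a2 = a
    b1, b2 = b
    if not (a1 < a2 and b1 < b2):
        raise ValueError("each interval must satisfy start < end")
    # Disjoint or touching intervals.
    if a2 < b1:
        return "before"
    if a2 == b1:
        return "meets"
    if b2 < a1:
        return "after"
    if b2 == a1:
        return "met-by"
    # From here on the intervals share more than a single point.
    if a1 == b1 and a2 == b2:
        return "equals"
    if a1 == b1:
        return "starts" if a2 < b2 else "started-by"
    if a2 == b2:
        return "finishes" if b1 < a1 else "finished-by"
    if b1 < a1 and a2 < b2:
        return "during"
    if a1 < b1 and b2 < a2:
        return "contains"
    return "overlaps" if a1 < b1 else "overlapped-by"


if __name__ == "__main__":
    # Hypothetical gesture timings in seconds.
    pour = (0.0, 5.0)
    stir = (5.0, 9.0)
    print(allen_relation(pour, stir))  # meets
```

A recipe model could then be expressed as constraints over these relations between expected gestures, for example requiring that one gesture is "before" or "meets" the next.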

Footnotes

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.