Abstract

In electromyogram-based hand gesture recognition, accuracy may degrade in practical use for various reasons, such as electrode positioning bias and differences between subjects. In addition, electromyogram signals change with arm posture even for identical hand gestures, which is also an important issue. We propose an electromyogram-based hand gesture recognition technique that is robust to diverse arm postures. The proposed method uses accelerometer and electromyogram signals simultaneously to recognize hand gestures correctly across various arm postures. For the recognition of hand gestures, the electromyogram signals are statistically modeled considering the arm postures. In the experiments, we compared hand gesture recognition that took the arm postures into account with recognition that disregarded them. When the varied arm postures were disregarded, the recognition accuracy for hand gestures was 54.1%, whereas the proposed method achieved an average recognition accuracy of 85.7%, an improvement of 31.6 percentage points. Using accelerometer and electromyogram signals simultaneously compensated for the effect of different arm postures on the electromyogram signals and therefore improved the recognition accuracy of hand gestures.

Introduction

Hand and arm gestures, along with facial expressions, are forms of nonverbal communication. In particular, hand gestures are the most frequent type of nonverbal communication and represent diverse intentions. Numerous studies have been conducted to identify the intentions behind those gestures. Among them, vision-based studies that employ camera sensors such as time-of-flight (ToF) or RGB cameras1,2 have improved the recognition accuracy of hand gestures by incorporating the depth and color of images. However, those approaches are sensitive to ambient brightness and can recognize hand gestures only within the field of view of the camera sensors. In addition, there have been studies that employed accelerometer, gyroscope, magnetoresistive, and flexure sensors embedded in data gloves to track hand motions or to recognize hand gestures.3,4

Besides cameras and data gloves, electromyogram (EMG) signals have also been used in studies on the recognition of hand gestures. The surface EMG signal is an electric signal generated in muscles making intentional motions and is measurable from the surface of the skin. Studies employing the EMG signal to recognize hand gestures are particularly popular in fields related to the control of prosthetic hands for patients.5–11 In particular, in Atzori et al., 10 the first NINAPRO database, with EMG of 27 intact subjects performing 10 successive repetitions of 52 hand, wrist, and forearm gestures, was described in detail and characterized with global analyses and benchmark classification results. The results showed that the nonlinear support vector machine (SVM) and multi-layer perceptron (MLP) classifiers perform better than the linear SVM and linear discriminant analysis (LDA). Also, a versatile embedded platform for EMG acquisition and SVM-based gesture recognition was implemented for real-time processing. 11 Recently, many studies have aimed to improve myoelectric control of prostheses.12–14 It was reported that the displacement of the EMG electrodes and the position of the arm during the use of the prosthesis affect the classification performance.12,13 Also, detailed information about removing commonly associated noise and artifacts from EMG signals was provided, and the advantages and disadvantages of the various analytic methodologies were discussed to help researchers choose a better method for analyzing EMG signals. 14

Besides prosthetic use for amputee subjects, another aim of EMG-based hand gesture recognition is to infer user intentions for the human–computer interface (HCI) and smart device interaction. Studies on active and intuitive recognition of hand gestures for healthy people are in progress. Research on hand gesture recognition based on EMG began with the recognition of simple wrist motions and has evolved into the recognition of motions of individual fingers.15,16 Furthermore, the works by Naik and Nguyen 17 and Tsujimura et al. 18 aimed at the recognition of multi-finger movements, like those involved in playing rock–paper–scissors, are currently in progress. In addition, the recognition of sign languages has been proposed by Kosmidou and Hadjileontiadis, 19 Zhang et al., 20 Li et al., 21 Cheng et al., 22 and Su et al., 23 and studies that employ EMG-based hand gesture recognition technologies to control the hand movement or driving motions of robots have been performed.24,25 However, despite the high gesture recognition accuracy reported in much of the literature, accuracy still degrades in practical use. One of the major causes of such performance degradation is the change in EMG signals depending on the posture of the arm. The effect of arm posture on the performance of myoelectric control algorithms in both trans-radial amputees and able-bodied subjects was investigated. 26

To obtain information about arm postures, accelerometer signals have been used jointly with EMG signals in recognizing hand gestures. The use of accelerometer signals serves two purposes. One is to recognize complicated and numerous hand gestures, such as sign languages and dexterous prosthetic hand control.19–21,23,27–29 The other is to prevent the performance degradation of hand gesture recognition due to various arm postures, that is, even for the same hand gesture, the recognition accuracy decreases as the arm posture changes. 30

In this study, we aim to improve the robustness of hand gesture recognition to arm posture changes. Furthermore, we target interaction for able-bodied subjects, such as HCI and smart device control. EMG-based hand gesture interaction for healthy subjects in daily life has attracted increasing attention, but accelerometer and EMG sensor fusion techniques have not been widely applied to hand gesture interaction. Although the commercial product MYO (Armband, Thalmic Labs Inc., 2015) is a recently introduced forearm band for EMG- and accelerometer-based gesture recognition in interactive applications, accelerometer and EMG sensor fusion for hand gesture recognition is still at an initial stage. In addition, most researchers, as well as MYO, acquire EMG signals from the forearm. The reason is that most muscles related to hand gestures are anatomically located in the forearm, and thus good EMG signals bearing the gesture information can be obtained there. Currently, however, most commercial wearable bands, such as Fitbit and Jawbone, are wrist-type bands that are readily accepted by common users since they can be worn easily like fashion accessories. To integrate EMG-based interaction functions in wrist-type bands, we need to record the signals from the wrist area, not from the forearm. Here, we address this problem.

Our previous pilot study 31 demonstrated that hand gesture recognition can be improved by more than 20% for eight hand gestures when arm postures are considered. In that study, only EMG signals were used, without accelerometer signals, and we investigated only the effect of various arm postures on the performance degradation. Moreover, the EMG signals were recorded from the forearm. Also, although we used both accelerometer and EMG signals in Rhee et al., 25 that study was mainly concerned with the directional control of a moving robot, such as forward, backward, left, and right movements. This control was done using the accelerometer signal only, and EMG signals were used for a single hand gesture, the fist. Inspired by, but unlike, the previous studies, in this study we make hand gesture recognition robust to arm posture changes with accelerometer and EMG signals acquired only from the wrist area.

We propose a hand gesture recognition method that uses EMG and accelerometer signals simultaneously to overcome the degradation of performance across various arm postures. For this research purpose, we designed a sensing device that records accelerometer and EMG signals simultaneously. The accelerometer signals had distinct values corresponding to different arm postures. Thus, we can identify the arm postures using the accelerometer. Also, EMG signals for different hand gestures had different amplitudes and frequencies, and the recognition of the hand gestures was based on such distinct characteristics. Features that are typically used for accelerometer and EMG signals are the mean values 20 and the waveform lengths, 10 respectively. For the classification, nine arm postures are recognized using the 1-nearest neighbor method based on the Euclidean distance, and eight hand gestures are classified using maximum likelihood estimation. EMG signals are statistically modeled using Gaussian models considering nine arm postures. In the experimental results, we compared the cases that took the arm postures into account with the cases that disregarded them for the recognition of hand gestures. Furthermore, the performance was evaluated using other existing classifiers such as linear/nonlinear SVM and

Experiment environment

Figure 1(a) shows the device used to measure EMG and accelerometer signals simultaneously. The device consists of a four-channel EMG sensor, sensor connector, three-axial accelerometer sensor, battery, Bluetooth module, and micro-controller unit (MCU), with dimensions of 12 cm in width and 6 cm in length. The EMG analog front end depicted in Figure 1(b) has four differential data acquisition channels. Each channel consists of an instrumentation amplifier, a low-pass filter, a high-pass filter, and a second amplifier. The instrumentation amplifier is chopper-stabilized to remove the flicker noise. The output of the instrumentation amplifier is then passed through a high-pass filter and a low-pass filter, designed to pass frequencies in the 10–400 Hz range, and is then amplified again. A digital notch filter was used in preprocessing to eliminate power line noise (60 Hz). The power consumption is about 100 mW, which is very high compared with devices reported in the literature. Thus, with four 1.2 V batteries, the working duration is about 1.5 h. Both EMG and accelerometer signals are sampled at 512 Hz using a 12-bit analog-to-digital

Experimental environment for acquiring both EMG and accelerometer signals: (a) acquisition device, (b) analog front end, (c) EMG sensor position, and (d) signal acquisition wearing the acquisition device.
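As an illustration of the digital 60 Hz notch step mentioned in the device description, the following is a minimal sketch of a second-order (biquad) notch filter at the 512 Hz sampling rate. The source does not specify the filter design, so the biquad form, the quality factor `q=30.0`, and all function names here are assumptions chosen for illustration only.

```python
import math

def notch_coefficients(f0, fs, q):
    """Normalized biquad notch coefficients (assumed design, not from the source)."""
    w0 = 2.0 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = (1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0)
    a = (-2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)
    return b, a

def apply_biquad(x, b, a):
    """Filter sequence x with a direct-form I biquad."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y

def rms(x):
    """Root-mean-square value of a sequence."""
    return math.sqrt(sum(v * v for v in x) / len(x))

FS = 512.0  # sampling rate stated in the text
b, a = notch_coefficients(60.0, FS, q=30.0)  # q is a hypothetical choice

# Synthetic test tones: 60 Hz power line hum and a 100 Hz in-band component.
hum = [math.sin(2.0 * math.pi * 60.0 * n / FS) for n in range(2048)]
tone = [math.sin(2.0 * math.pi * 100.0 * n / FS) for n in range(2048)]

hum_out = apply_biquad(hum, b, a)
tone_out = apply_biquad(tone, b, a)
```

After the initial transient, the 60 Hz tone is strongly attenuated while the 100 Hz tone passes almost unchanged. A narrower or wider notch can be obtained by changing `q`.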

Figure 2 illustrates the eight hand gestures and the nine arm postures. The hand gestures comprise flexion motions of five individual fingers, grab, pointing, and pinch. The arm postures are constructed by distinguishing between the angles of the elbow while the upper arm was anchored on the body and the angles during the supination and pronation of the wrist. The arm posture in

Model of (a) hand gestures and (b) arm postures.

Figure 3 presents the EMG and the accelerometer signals of hand gestures obtained from diverse arm postures. The arm postures were

Changes of raw EMGs and waveform lengths for different arm postures (

Proposed method

The proposed method in this study uses EMG and accelerometer signals simultaneously to prevent the degradation of hand gesture recognition due to varying arm postures. The signal processing consists of the feature extraction of the EMG and accelerometer signals, statistical modeling of hand gestures, and recognition.

Arm posture recognition using accelerometer

The accelerometer sensor can measure the three-dimensional motion of an object in the

Accelerometer signals for nine arm postures.

Let

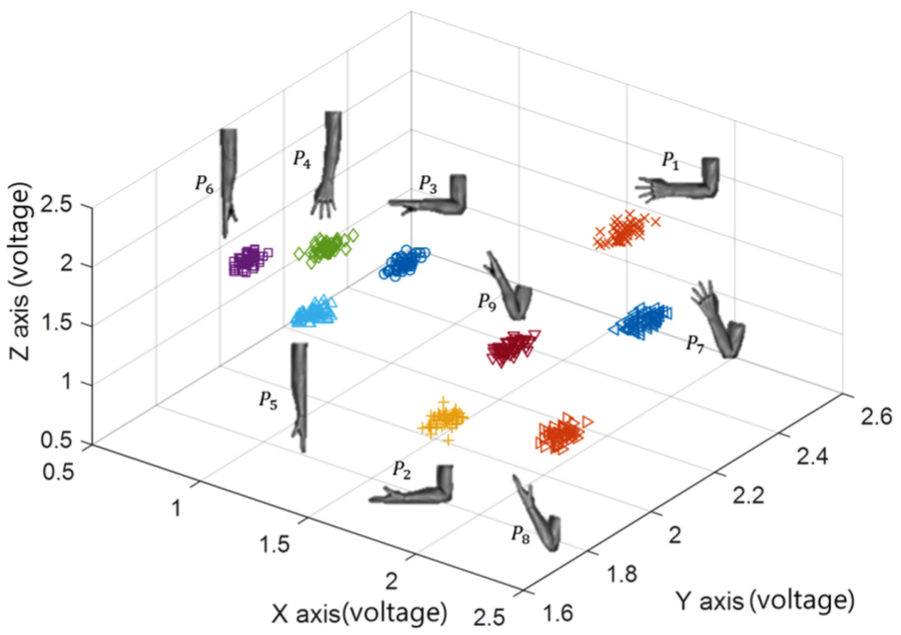

Figure 5 shows the mean values of the accelerometer signals in the

Feature distribution of accelerometer signals.
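The posture recognition step described in this section (mean values of the three accelerometer axes as features, classified with a 1-nearest neighbor rule on the Euclidean distance) can be sketched as follows. The training features, posture labels, and function names are hypothetical and serve only to illustrate the idea.

```python
import math

def mean_feature(window):
    """Per-axis mean of a window of (x, y, z) accelerometer samples."""
    n = len(window)
    return tuple(sum(sample[axis] for sample in window) / n for axis in range(3))

def nearest_posture(feature, training_set):
    """1-NN: label of the training feature closest in Euclidean distance."""
    _, label = min(training_set, key=lambda item: math.dist(feature, item[0]))
    return label

# Hypothetical training features (in units of g) for three illustrative postures.
training_set = [
    ((0.0, 0.0, 1.0), "P1"),
    ((1.0, 0.0, 0.0), "P2"),
    ((0.0, 1.0, 0.0), "P3"),
]

# A short hypothetical window of accelerometer samples.
window = [(0.9, 0.1, 0.1), (1.1, -0.1, 0.0)]
feature = mean_feature(window)
posture = nearest_posture(feature, training_set)
```

Here the window is assigned to the posture whose stored feature lies closest to the window mean. In practice the training set would hold features recorded for each of the nine arm postures.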

Hand gesture recognition using EMG and accelerometer signals

The waveform length can quantify the dynamic complexity of EMG signals.32,33 The waveform length is simply the cumulative difference of signals. Let the EMG signal be measured from channel

Then, we calculate the histogram,

Figure 6 shows bins and the maximum value of the waveform length. Where

Bins and the maximum value of the waveform length.

Figure 7 shows the histograms of the waveform length obtained by repeatedly performing the eight hand gestures. The shape of the histogram reveals the placement of large values in the center while the rest of the values decrease bi-directionally along the lateral axis. Different distributions of the waveform lengths were observed even for the identical hand gestures depending on the arm postures. From the shape of the histograms, the underlying distribution is assumed to be Gaussian with the mean

Histograms of the waveform lengths of four-channel EMGs for eight hand gestures.
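To make the feature and its Gaussian modeling concrete, the sketch below computes the waveform length as the cumulative absolute difference between successive samples and fits a mean and variance to repeated trials. The use of the absolute difference without normalization and of the population variance are assumptions, since the exact definitions are not recoverable from the text; the function names are illustrative.

```python
import statistics

def waveform_length(signal):
    """Cumulative absolute difference between successive samples of one channel."""
    return sum(abs(b - a) for a, b in zip(signal, signal[1:]))

def fit_gaussian(values):
    """Mean and (population) variance of waveform lengths from repeated trials."""
    return statistics.fmean(values), statistics.pvariance(values)

# Hypothetical single-channel segment and hypothetical per-trial waveform lengths.
segment = [0.0, 1.0, -1.0, 2.0]
wl = waveform_length(segment)          # |1-0| + |-1-1| + |2-(-1)|
mean, var = fit_gaussian([2.0, 4.0, 6.0])
```

One such (mean, variance) pair would be estimated for each channel, hand gesture, and arm posture.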

Figure 8 shows the box plot of the waveform length for hand gestures

Box plot of the waveform length for hand gestures: (a)

For the discrimination of hand gesture, we employed maximum likelihood estimation (MLE), a parametric estimation method that maximizes the value of the likelihood function for the probability model of the observed samples and the observations. Assuming the EMG signals in different channels are independent, we use the likelihood function,

Then, the hand gesture is estimated by maximizing
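The decision rule described above (channels assumed independent, Gaussian models selected by the recognized arm posture) can be sketched as follows. Summing per-channel log-likelihoods is equivalent to maximizing the product of the per-channel Gaussian likelihoods. The model values, gesture names, and posture labels are hypothetical.

```python
import math

def gaussian_log_pdf(x, mean, var):
    """Log of the univariate Gaussian density."""
    return -0.5 * (math.log(2.0 * math.pi * var) + (x - mean) ** 2 / var)

def classify_gesture(features, posture, models):
    """Pick the gesture maximizing the summed per-channel log-likelihood.

    models[posture][gesture] is a list of (mean, variance), one per EMG channel.
    """
    def log_likelihood(gesture):
        params = models[posture][gesture]
        return sum(gaussian_log_pdf(x, m, v) for x, (m, v) in zip(features, params))
    return max(models[posture], key=log_likelihood)

# Hypothetical two-channel models for two gestures under two arm postures.
models = {
    "P1": {"grab": [(10.0, 4.0), (20.0, 4.0)], "pinch": [(5.0, 4.0), (8.0, 4.0)]},
    "P2": {"grab": [(16.0, 4.0), (30.0, 4.0)], "pinch": [(10.0, 4.0), (19.0, 4.0)]},
}

features = (10.5, 19.0)  # hypothetical waveform lengths of one trial
result_p1 = classify_gesture(features, "P1", models)
result_p2 = classify_gesture(features, "P2", models)
```

The same feature vector is assigned to different gestures depending on the posture-specific model, which illustrates why disregarding the arm posture can lead to misclassification.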

Experimental results

Eight subjects performed eight hand gestures combined with nine arm postures in the experiments. The subjects had no physical problems in making hand gestures and were fully aware of the experimental process. The subjects performed 10 trials for each hand gesture and then rested for 1 min to avoid the accumulation of physical fatigue. Each trial was executed in 1–2 s, and the subjects rested for 5 s between trials. Ten trials took about 1 min. In the experiments, we informed the subjects of the movement timing by beeping. Thus, in these experiments, the start timing is known. The segmentation covers 1 s after the start of the beep. Thus, the classification delay is approximately 1 s. The results obtained from the experiment were classified into cases that solely employed EMG signals and those that simultaneously employed EMG and accelerometer signals for comparison. The parameters

In Figure 9, the hand gestures for

Sampled results of hand gesture recognition.

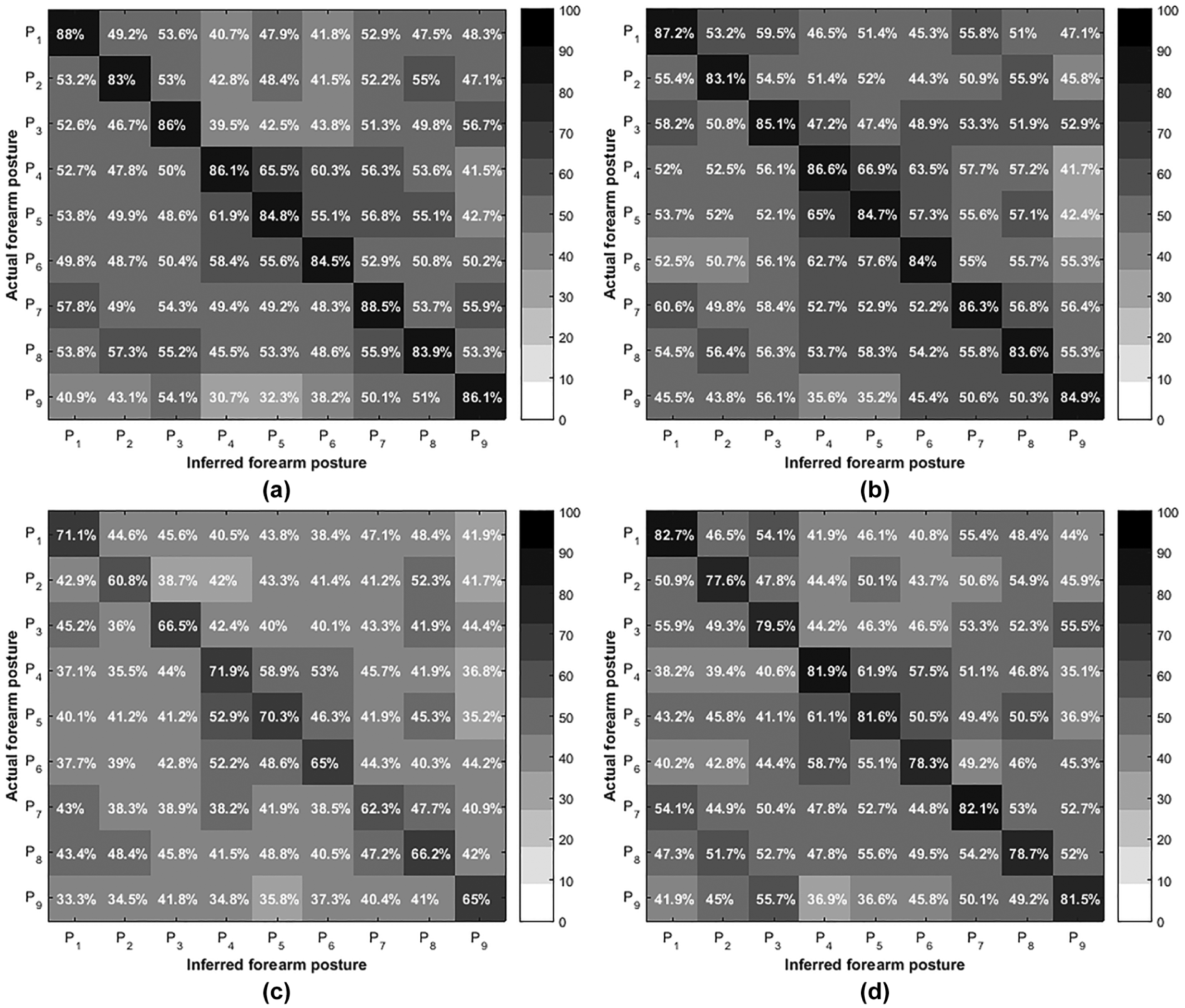

The experimental results for the average recognition accuracy of eight hand gestures performed for each of nine arm postures are presented in Figure 10. In order to verify the performance of the proposed method, we compared it with the existing classifiers such as linear, nonlinear SVM, and kNN. The

Comparison of average recognition accuracies over subjects and eight hand gestures for various classifiers for correct and incorrect arm posture recognition: (a) MLE, (b) kNN, (c) linear SVM, and (d) nonlinear SVM (average accuracy over eight hand gestures for correct arm posture recognition: 85.7 (MLE), 85.0 (kNN), 66.6 (linear SVM), and 80.4 (nonlinear SVM)).

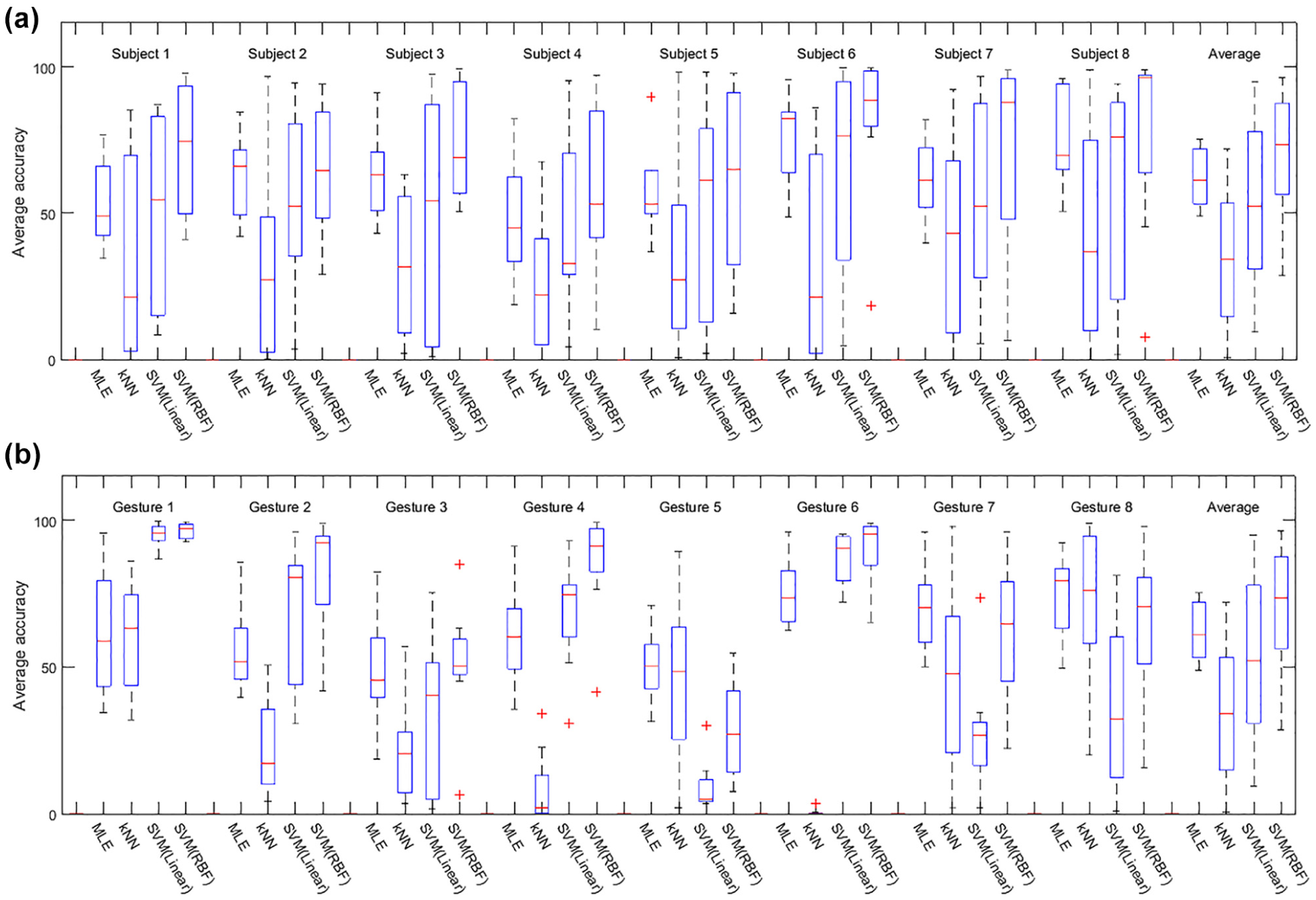

Figure 11 illustrates the average recognition accuracies for hand gestures recognized with and without taking the corresponding arm postures into account, for each hand gesture and each subject. Table 1 summarizes the accuracy averaged over all subjects, eight hand gestures, and nine arm postures, together with the performance improvement obtained by considering the arm posture. In Figure 11, the white bar indicates the average accuracy without considering the arm postures, and the black bar represents the improvement obtained by taking the corresponding arm postures into account. The average recognition accuracy of hand gestures with correct arm posture inference was 85.7%, which is 31.6 percentage points higher than without considering the corresponding arm postures.
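As a worked check of the figures quoted above, using the 54.1% baseline accuracy reported in the abstract, the absolute gain and the corresponding relative gain can be written out as follows. The relative gain is derived here and is not stated in the source.

```latex
\begin{align*}
\Delta_{\text{abs}} &= 85.7\% - 54.1\% = 31.6 \text{ percentage points},\\
\Delta_{\text{rel}} &= \frac{85.7 - 54.1}{54.1} \approx 0.584 \quad (\text{about } 58.4\%).
\end{align*}
```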

Comparing the average recognition accuracy of eight hand gestures using MLE, kNN, SVM with linear kernel and SVM with RBF kernel. The white bar means the average accuracy without considering the arm postures. The improvement by considering the arm postures is represented by the black bar: (a) average accuracy of eight hand gestures for each subject and (b) average accuracy of each hand gesture over subjects.

Average accuracy (mean ± SD, %).

SD: standard deviation; MLE: maximum likelihood estimation; kNN:

Although the degradation of the recognition performance of hand gestures can be overcome by considering the arm posture, this requires separate training models of hand gestures for the different arm postures. We investigated the effect of the training model on the performance under two scenarios for building the models. In the first scenario, the training model is built using EMG signals from one specific arm posture and the recognition performance is evaluated for all other arm postures, that is, the training model is a local training model. In the second scenario, the training model is built using EMG signals from all nine arm postures and the performance is investigated for each posture, that is, the training model is a global model. The results of the two scenarios are shown in Figures 12 and 13, respectively. The recognition accuracies are lower than those in Figure 11, and the performance is better in the second scenario than in the first, except for kNN. Also, we can observe that the performance of MLE varies less across subjects and hand gestures than that of the other three classifiers.

Average recognition accuracy of hand gestures for the scenario building the training models: training with one posture (a local training model) and testing with all other postures: (a) average accuracy of eight hand gestures for each subject and (b) average accuracy of each hand gesture over subjects.

Average recognition accuracy of hand gestures for the scenario building the training models: training with all postures (a global training model) and testing with each posture: (a) average accuracy of eight hand gestures for each subject and (b) average accuracy of each hand gesture over subjects.

In addition, we have evaluated the effect of electrode position bias on the performance under the correct arm posture inference. The electrode position bias is generated by moving the center of EMG sensor arrays upward, downward, right, or left by 5 mm. The original center is set to that in Figure 1(c) and we define this position as

Effects of electrode position bias on the recognition accuracies: (a) positions of electrode center and (b) recognition accuracies according to electrode position mismatch during the training and the test modes.

Controlling display devices using hand gestures

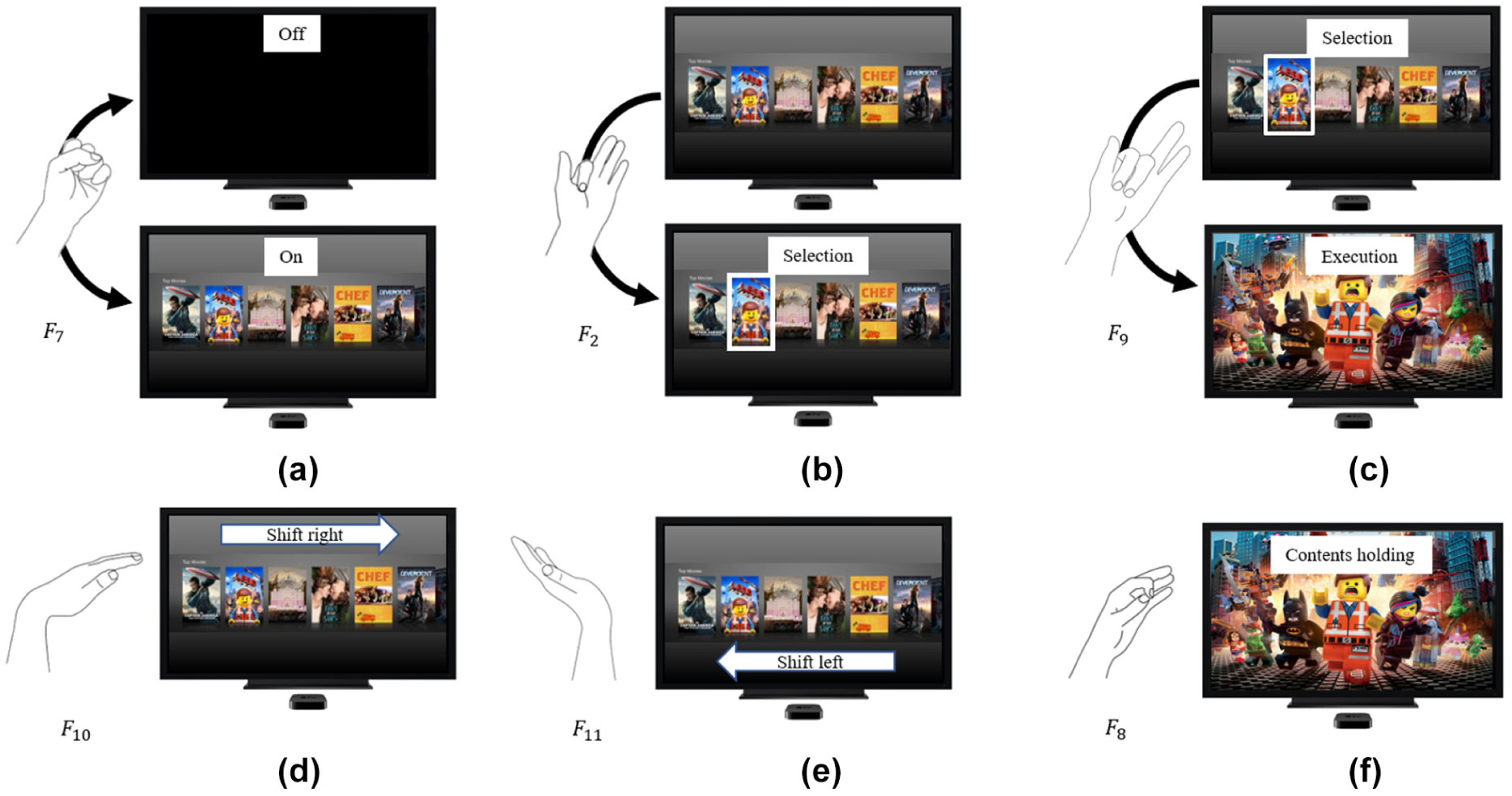

A human interactive model controlling display devices was established to evaluate the proposed method. Figure 15 shows the hand gestures and the corresponding commands for various display controls. The grab gesture

Six hand gestures for display control and their commands: (a) display on/off, (b) contents selection, (c) contents execution, (d) contents shift right, (e) contents shift left, and (f) contents holding.

Seven males and one female participated in the experiments. The subjects performed the experiment in the order of Figure 15(a)–(f). The experimental results for the average accuracy of six hand gestures performed for each of nine arm postures are presented in Figure 16. The diagonal elements of the matrix are the average recognition accuracies of hand gestures for the correct arm posture. The off-diagonal elements correspond to the average recognition accuracies of hand gestures for different arm postures. Figure 17 shows the average accuracy for each hand gesture and each subject with and without considering arm postures, and the performance improvement is summarized in Table 2. The white bar indicates the average accuracy without considering the arm postures. The improvement is represented by the black bar. The average recognition accuracy of hand gestures for display control with the correct arm posture was 90.3%, which is 28.7% higher than without considering the arm postures.

Average recognition accuracies of six hand gestures for various arm postures.

Comparing the average recognition accuracy of six hand gestures: (a) average accuracy of six hand gestures and (b) average accuracy of various subjects.

Average accuracy of six hand gestures for display control (mean ± SD, %).

SD: standard deviation; MLE: maximum likelihood estimation.

Discussion and conclusion

In this article, we have described a hand gesture recognition method that uses EMG and accelerometer signals recorded simultaneously from the wrist area to overcome the degradation of performance across various arm postures. Changes in EMG signals due to arm postures have been reported widely in the literature, and many researchers have tried to solve the issue by using accelerometer signals. Peng 28 showed the relative movement of the muscle and sensor caused by the rotation of the arm, and Fougner 30 studied how the gravitational and biomechanical effects of limb position affect the EMG signal. Our approach also used accelerometer signals, and we have verified experimentally that the recognition performance improves by 31.6 percentage points for eight hand gestures. In this study, EMG signals were measured on the wrist, unlike in previous studies,27–30 and our results indicated that gravity, biomechanics, and muscle rotation due to arm posture have a significant effect on the signals measured on the wrist.

The main contribution of the article is not simply the improvement of the recognition accuracy for hand gestures. Considering EMG-based interaction in wrist-type wearable bands, we have acquired both EMG and accelerometer signals from the wrist area, not the forearm. Unlike conventional studies, we have made hand gesture recognition robust to arm posture changes with accelerometer and EMG signals acquired only from the wrist area. Although other issues remain to be solved to realize EMG-based interaction in wrist-type bands, such as the integration of EMG sensors in a wearable band, the conductance improvement, the user-friendly design, and others, our research is the first attempt to recognize eight hand gestures robustly across nine arm postures with weak EMG signals from the wrist area. However, the hand gestures we used are not as practical as those in the Army's standardized hand signals for close range engagement (CRE) operations and the NINAPRO database. We will conduct research using practical hand gestures in the future.

Another contribution is the use of the MLE-based classifier, where EMG signals are statistically modeled using Gaussian models considering nine arm postures. MLE is known as the statistically optimum estimator when the probabilistic model is correct. Also, this classifier does not require additional tuning parameters that affect performance once the probabilistic model is obtained. In the case of Gaussian modeling, only the mean and the variance of the features are needed. When updating the training model with new data, only Gaussian modeling is needed, that is, updating the mean and the variance. Some classifiers may need to recalculate decision boundaries with the whole updated data set. Also, the MLE-based classifier is computationally efficient since most of the cost lies in calculating the likelihood in equation (6). Although we have assumed that the EMG signals in different channels are independent for mathematical convenience, further performance improvement may be expected if the features are modeled using a multi-dimensional Gaussian model. More importantly, to improve the robustness to the variation of arm postures, a method and an experimental model need to be developed for continuous changes in arm posture, not just discrete changes.
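The remark above that updating the training model only requires updating the mean and the variance can be illustrated with an incremental estimator. The sketch below uses Welford's online algorithm, which is a technique chosen here for illustration and is not described in the source; the class name and the use of the population variance are assumptions.

```python
class RunningGaussian:
    """Incrementally updated mean and population variance (Welford's algorithm)."""

    def __init__(self):
        self.n = 0       # number of samples seen so far
        self.mean = 0.0  # running mean
        self.m2 = 0.0    # running sum of squared deviations from the mean

    def update(self, x):
        """Fold one new feature value into the model without storing past data."""
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def variance(self):
        """Population variance of the samples seen so far (0.0 if empty)."""
        return self.m2 / self.n if self.n else 0.0

# Hypothetical waveform lengths arriving one trial at a time.
model = RunningGaussian()
for value in [2.0, 4.0, 6.0]:
    model.update(value)
```

Each new trial changes only three stored numbers per channel, gesture, and posture, whereas a boundary-based classifier may need to be retrained on the whole data set.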

Footnotes

Handling Editor: Gianluigi Ferrari

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Science and ICT (No. 2017046246).