Abstract

The growing emphasis on “fit” as a hiring criterion introduces the potential for a new, subtle form of discrimination (Bertrand & Duflo, 2017). Analysis of 1,901 U.S. Supreme Court oral arguments from 1998 to 2012 documents that voice-based snap judgments predict court outcomes. Male petitioners who rank below median in perceived masculinity are 7 percentage points more likely to win. This negative correlation between perceived masculinity and winning cases in the Supreme Court is more pronounced in masculine industries. Perceived femininity of women lawyers also predicts court outcomes. Democrats favor men with less masculine-sounding voices. Perceived masculinity explains additional variance in Supreme Court decisions beyond what is predicted by the best random forest prediction model. A de-biasing experiment using information and incentives in factorial design is consistent with misperceptions and taste for masculine-sounding lawyers explaining the negative correlation between perceived masculinity and Supreme Court wins.

1. Introduction

Courts routinely distinguish between immutable and mutable characteristics, and between being a member of a legally protected group as opposed to behavior associated with that group, which is not protected (Yoshino, 2006). Legal theorists suggest that discrimination, once aimed at entire groups, now aims at subsets that refuse to cover, that is, to assimilate to dominant norms (Goffman, 1963). Mutable characteristics have recently entered economic models of identity formation (Austen-Smith & Fryer, 2005; Bertrand & Duflo, 2017; Neumark, 2018). For example, African-Americans cannot be fired for their skin color, but they can be for wearing cornrows. This distinction between being (immutable) and doing (mutable) incentivizes assimilation. Should we understand such legally sanctioned differential treatment to be harmful? Are individuals “punished” for not conforming when it comes to mutable characteristics (Yoshino, 2000)? Building on Kenji Yoshino’s notion of “covering,” we use the phrase “punish non-conformity” to capture how society might indirectly burden individuals whose voices deviate from a perceived norm.

The primary focus of our empirical analysis is judicial outcomes, namely which attorney prevails at the Supreme Court. This emphasis motivates a selection puzzle rooted in discrimination theory. In principle, if law firms or parties aim to maximize the probability of winning, they should promote or retain lawyers best suited to succeed before the Court. Classic models of taste-based or statistical discrimination (Becker, 1957; Phelps, 1972) suggest that if firms discriminate in favor of “masculine-sounding” voices merely due to preference or misbeliefs, then we would observe a mismatch if those favored traits do not align with actual performance. By testing whether perceived voice masculinity (or femininity) correlates negatively with success, we create an “outcomes test,” akin to the approach used in labor-market discrimination studies (Charles & Guryan, 2008). Put simply, if market participants rationally rewarded masculine voices, we would not expect to find that those same voices underperform at the Supreme Court. Yet that is precisely the pattern our data reveal. This incongruity points to a selection dynamic, in which firms appear to “choose the wrong attorneys” from a pure win-maximization standpoint, and underscores the broader significance of how extralegal traits (like voice) can affect both the makeup of the advocacy pool and the ultimate decisions handed down by the Justices. The selection puzzle we describe is purely suggestive; we do not have a controlled experiment manipulating the voice of advocates at the moment they are being selected by firms.

This study defines voice as a “mutable characteristic” because, although not trivially changed, vocal registers and pitch are subject to deliberate training and self-conscious modification. Professional speech coaching (e.g., Margaret Thatcher’s documented pitch-lowering exercises (Atkinson, 1984)) illustrates that people can—and do—adjust elements of their vocal style over time, placing voice in a middle ground between strictly immutable traits like race or age, and freely chosen characteristics like clothing. By “mutable,” we thus highlight how speakers can face social pressure to “cover” or conform their voice, even if such transformations demand substantial effort.

Likewise, our analysis of “perceptions” relies on external assessments: we ask a broad panel of online participants (via Amazon Mechanical Turk) to rate how “masculine” or “feminine” a single spoken phrase sounds—“Mr Chief Justice, (and) may it please the Court?”—thus standardizing word choice and capturing how everyday listeners might rapidly form impressions of an advocate’s voice. Because it is infeasible to poll the Justices themselves, these crowd-sourced ratings serve as a proxy for snap judgments any audience member (including the Court) could form upon hearing a brief utterance.

Our central inquiry is whether these externally measured, potentially mutable vocal cues correlate with attorney success in the Supreme Court. If extralegal traits—here, “perceived masculinity” or “perceived femininity”—predict outcomes net of conventional factors (e.g., case facts, attorney credentials), it raises questions about the subtle influences that mutable identity markers might have in high-stakes judicial settings.

Political scientists and legal scholars have long debated the extent to which Supreme Court decision-making is driven by legal doctrine versus extra-legal or attitudinal factors (Segal & Spaeth, 2002; Epstein & Knight, 1997; Epstein et al., 2013). Most of that work, however, emphasizes either rationally strategic behavior or broad ideological preferences. By contrast, we align more closely with a growing subfield that examines unconscious biases and heuristics—for example, how Justices might rely on System 1 thinking (Kahneman, 2011; Rachlinski et al., 2009a) even in a high-level adjudicative context. In this sense, our approach differs from purely rationalist extralegal accounts: we investigate a truly extraneous influence—vocal traits—that can shape outcomes in a way that does not appear to fit conventional strategic or attitudinal models. Scholarly work on facial appearance (Todorov et al., 2005; Benjamin & Shapiro, 2009; Berggren et al., 2010), emotional cues (Schubert et al., 2002), and cognitive biases (Rachlinski et al., 2009a) underscores that courts—like other deliberative bodies—can be susceptible to impressions that extend beyond the strict letter of the law.

Hence, while prior scholarship offers robust evidence of extra-legal judicial decision-making, it generally locates that behavior within an explicitly rational framework (e.g., Justices optimizing personal policy goals or responding strategically to political constraints). Here, by contrast, the role of vocal cues suggests a less canonical mechanism: snap judgments or involuntary biases that operate beneath conscious strategies. Positioning our findings within this newer subfield of judicial behavior helps illustrate that subtle, potentially irrational factors can still affect which side prevails—even in a context where law and policy are presumed dominant.

Against this backdrop, we examine voice-based perceptions as one more factor that might correlate with Supreme Court outcomes, echoing research showing that first-impression judgments—however fleeting—can influence real-world decisions (Ambady & Rosenthal, 1992). Our measure of “masculinity” in voice is used to explore whether an attribute that is tangential to legal merits can nonetheless predict who wins and who loses. Although we do not claim that judicial reasoning explicitly turns on an advocate’s vocal register, the data reveal consistent associations between perceived vocal traits and voting patterns, adding to the empirical record that external cues may have a subtle but significant impact in high-level adjudication.

Moreover, these findings hold relevance beyond the Supreme Court. Situations ranging from lower-court litigation to corporate hiring frequently involve consequential interactions where snap judgments about individuals arise—be it in job interviews, negotiations, or high-stakes presentations (Schroeder & Epley, 2015; Klofstad et al., 2012). In each case, a person’s vocal qualities can spark biases (positive or negative) that shape decisions. Hence, although the Supreme Court is an elite and idiosyncratic institution, our results resonate with a broader phenomenon: voice-based heuristics can help or hinder individuals in settings where outcomes hinge on persuasion, credibility, or perceived competence.

We use “first impressions” to refer to the immediate, real-time judgments formed when an advocate begins speaking before the Court, rather than any assertion that the Justices come in with no prior knowledge of the lawyer’s identity or legal arguments. Indeed, many Supreme Court advocates appear repeatedly, and the Justices typically read briefs in advance (Hazelton & Hinkle, 2022). However, social cognition research (Ambady & Rosenthal, 1992) indicates that even well-informed decision-makers can form or update rapid heuristics upon encountering someone’s live voice or demeanor.

Scholars have extensively studied the “elite Supreme Court bar,” in which a small cadre of repeat advocates shape both advocacy strategy and judicial perception. While Lazarus (2008) focuses on the modern rise of specialized SCOTUS practitioners, a long-standing political-science literature (McGuire, 1993; 1995; Spriggs et al., 1995) emphasizes the repeat-player phenomenon, documenting how multiple appearances can foster ongoing relationships with the Justices. We recognize that many attorneys return for multiple cases and, accordingly, some of our specifications incorporate lawyer fixed effects, allowing us to examine whether within-lawyer variation in perceived vocal traits still predicts outcomes. Meanwhile, our dataset also includes a substantial fraction of single-appearance advocates, preserving variation along the “novelty” dimension.

Moreover, even for repeat players, the immediate auditory cues—pitch, resonance, intonation—can still prompt a new or reinforced perception during oral argument. We do not claim that these vocal impressions override ideology or the substantive content of briefs, but rather propose that voice-based attributes may be one additional extralegal influence on Justices’ reactions and, ultimately, on case outcomes. Thus, “first impressions” here reflects the psychologically grounded process triggered upon hearing an advocate’s live speech, highlighting how vocal qualities can still matter in a highly prepared, information-rich environment.

While the classical Becker (1957) theory suggests that complete markets would eradicate prejudice, as discriminatory employers would ultimately fail, evidence suggests otherwise. Numerous studies, including correspondence tests (Bertrand & Mullainathan, 2004) and analyses linking labor market inequality to prejudicial attitudes (Charles & Guryan, 2008), indicate persistent discrimination. Yet, distinguishing between prejudice and statistical discrimination remains complex, often hindered by limited data on productivity (Heckman & Siegelman, 1993; Neumark, 2018). Innovative experiments across various contexts (List, 2004; Mobius & Rosenblat, 2006; Rao, 2014) have attempted to dissect these discrimination forms, but real-world, high-stake settings remain challenging to study. We contribute to this discourse by analyzing 1,901 oral arguments in the U.S. Supreme Court between 1998 and 2012, focusing on how snap judgments of lawyers’ voices correlate with court outcomes. Our analysis leverages voice samples (e.g., Sample 1 (https://goo.gl/ZPdCkU) and Sample 2 (https://goo.gl/mbhuLF)) holding fixed the words spoken – “Mr Chief Justice, (and) may it please the Court?” – to measure perceived masculinity and its association with case success.

For the analysis of observational ratings, participants simply rate already-existing voice clips (with or without reversing the clips) on perceived traits. We treat these ratings as a measurement of how typical listeners hear an attorney’s voice. In a randomly assigned 2 × 2 design (Information/No-Information × Incentive/No-Incentive), participants guess who won the case under varying levels of feedback and rewards. By observing changes in their correlation between perceived win rates and perceived masculinity, we can isolate how much of the correlation might stem from taste-based preference versus misbelief.
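The random assignment to the four cells of the 2 × 2 design can be sketched as follows. This is a minimal illustration only: the balanced-assignment scheme, the function name `assign_arms`, and the rater identifiers are assumptions, since the text states only that assignment was random.

```python
import random
from collections import Counter

# The four cells of the 2 x 2 design: (information, incentive).
ARMS = [(info, incentive) for info in (False, True) for incentive in (False, True)]

def assign_arms(participant_ids, seed=0):
    """Randomly assign participants to the four cells in near-equal numbers.

    Balanced assignment is an assumption made for this sketch; the paper
    only says that the design was randomly assigned.
    """
    rng = random.Random(seed)
    n = len(participant_ids)
    # Repeat the arm list enough times to cover everyone, trim, then shuffle.
    pool = (ARMS * (n // len(ARMS) + 1))[:n]
    rng.shuffle(pool)
    return dict(zip(participant_ids, pool))

# Hypothetical rater ids, for illustration only.
assignment = assign_arms([f"rater_{i}" for i in range(8)])
counts = Counter(assignment.values())
```

With eight hypothetical raters, each of the four cells receives two participants; comparing the masculinity-win correlation across cells is then what separates the information margin from the incentive margin.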

Our research design deliberately uses only the standard opening phrase, “Mr Chief Justice, (and) may it please the Court?”, in order to hold word choice constant and ensure that any perceived “masculinity” or “femininity” reflects vocal qualities rather than differences in lexical content. Moreover, we reverse some clips so that even English comprehension is removed, which further confirms that the acoustic signal alone drives our measured impressions.

Dietrich et al. (2019) offer evidence that this short standardized sample does not “miss out” on relevant vocal information: they analyze entire oral arguments, without restricting to a single sentence, and rely on direct pitch measurements instead of listener ratings. Crucially, they reach the same conclusion: an advocate’s higher-pitched voice correlates with a higher likelihood of winning at the Supreme Court. Together, these approaches underscore that voice-based cues meaningfully predict Court outcomes, even beyond any specific word choices or rhetorical content.

Our results align with a growing body of empirical work suggesting that judicial outcomes can be influenced by factors not captured in traditional legal or attitudinal models (see, e.g., Chen & Jess, 2017; Rachlinski et al., 2009b). If vocal traits—which do not bear on the substantive merits of a case—correlate with winning, then no purely doctrinal or ideological account can fully explain how justices decide. This underscores the difficulty of building comprehensive models of judicial behavior and complicates the longstanding debate over whether decisions reflect law, policy, or personal biases (Segal & Spaeth, 2002). See also Epstein et al. (2018), Waterbury (2024), and the experiments by Rachlinski, Wistrich, and Guthrie for a few examples of how in-group bias, attractiveness, and extralegal factors can enter judicial decision-making.

More crucially, these findings raise questions of fairness and equal treatment under the law. If an advocate’s voice—something arguably tangential to the legal issues at stake—affects a party’s likelihood of prevailing, then systemic reliance on such traits calls into question the principle that cases should be judged solely on their legal merits. From a normative perspective, allowing vocal attributes to shape outcomes challenges the fundamental expectation that litigants stand on equal footing regardless of personal characteristics.

This issue resonates with broader worries about implicit bias and the subtle ways in which perceived identity markers (gender presentation, race, accent) can permeate judicial or jury decisions. Whether or not these biases are deliberate, their presence in Supreme Court advocacy is particularly consequential, given the Court’s role as the final arbiter of crucial legal and policy questions.

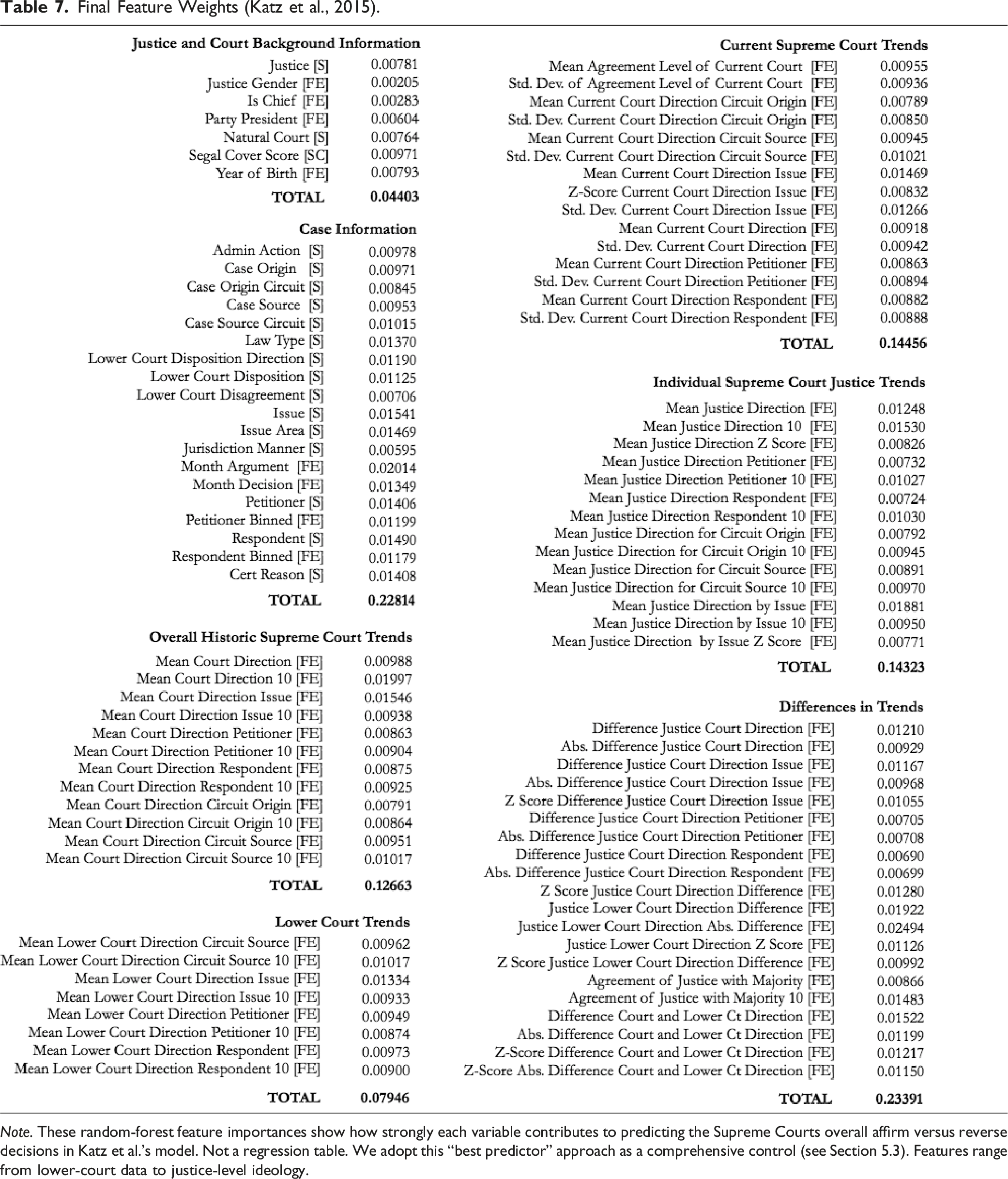

Katz et al. (2017) develop a time-evolving random forest classifier for Supreme Court outcomes, harnessing the Supreme Court Database (SCDB) across two centuries (1816–2015). They encode legal issues, court/Justice characteristics, and historical patterns (e.g., reversal rates) into a rich feature set. By retraining or ‘growing’ the random forest each term, Katz et al. show roughly 70% accuracy in predicting affirm-or-reverse decisions across vastly different Court compositions.

In our work, we adapt the Katz framework not to maximize predictive accuracy per se, but to serve as a comprehensive control for all known correlates of Supreme Court outcomes (ideology, issue area, lower-court data, etc.) and then test whether vocal traits add explanatory power. Our main interest is causal inference: isolating whether lawyers’ perceived masculinity (or femininity) has an independent effect above and beyond the Katz-style best-guess model.

The random forest (RF) model itself is used primarily as a “best guess” baseline, akin to how one might include a highly flexible function of observables in a regression, to ensure that any remaining correlation with masculinity/femininity is not due to omitted variables.
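One simple way to see what "explanatory power beyond the baseline" means is to compare baseline residuals across lawyers with below-median and above-median perceived masculinity. The sketch below is a descriptive check under stated assumptions, not the estimation used in the paper: `residual_gap`, the toy outcomes, the constant baseline probability, and the masculinity scores are all hypothetical.

```python
from statistics import mean, median

def residual_gap(won, baseline_prob, masculinity):
    """Mean baseline residual for below-median minus at-or-above-median masculinity.

    won:           1 if the lawyer's side won, else 0
    baseline_prob: predicted win probability from a baseline model
                   (standing in here for a Katz-style random forest)
    masculinity:   normalized perceived-masculinity rating
    """
    residuals = [w - p for w, p in zip(won, baseline_prob)]
    cutoff = median(masculinity)
    low = [r for r, m in zip(residuals, masculinity) if m < cutoff]
    high = [r for r, m in zip(residuals, masculinity) if m >= cutoff]
    return mean(low) - mean(high)

# Toy values, invented purely for illustration.
won = [1, 0, 1, 0, 1, 0]
baseline_prob = [0.6] * 6
masculinity = [-1.0, 1.0, -0.5, 0.5, -1.5, 1.5]

gap = residual_gap(won, baseline_prob, masculinity)
```

A positive gap means that less masculine-sounding lawyers win more often than the baseline predicts. In the toy data this holds by construction; in real data the gap would need standard errors and the full set of controls.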

This paper proceeds as follows: Section 2 provides theoretical background; Section 3 describes our experimental methods and data; Section 4 presents baseline associations between voice impressions and Supreme Court outcomes, exploring the dynamics of lawyer variation and extending the analysis to female lawyers; and Section 5 offers concluding remarks.

2. Background and Theory

Previous research has extensively analyzed the nuances of speech, including aspects like vowels, pitch, diction, and intonation. However, the impact of speech variation, particularly beyond lexical choices, on real-world behavior remains underexplored. Our study addresses this gap by investigating the significance of vocal cues in high-stakes policy-making environments, specifically the U.S. Supreme Court. We delve into whether these vocal cues hold relevance even when advocates (i.e., lawyers) deliver identical sentences, thus bringing a new dimension to understanding the influence of speech in critical decision-making settings.

First impressions based on voice have been shown to significantly influence decisions in diverse contexts, ranging from mate and leader selection to housing choices, consumer behavior, and even financial markets, as evidenced by vocal analysis in earnings conference calls (Nass & Lee, 2001; Klofstad et al., 2012; Purnell et al., 1999; Scherer, 1979; Tigue et al., 2012; Mayew & Venkatachalam, 2012). These observations align with the broader behavioral literature on intuitive (System I) versus analytical (System II) thinking. Notable examples include studies showing that facial cues can predict electoral outcomes (Todorov et al., 2005; Benjamin & Shapiro, 2009; Berggren et al., 2010) and research demonstrating the influence of nonverbal behavior on teacher evaluations based on silent video clips (Ambady & Rosenthal, 1992). Intriguingly, Schroeder and Epley (2015) found that even in the presence of visual information, voice-based impressions carry more weight for employers in assessing job applicants. This body of work underscores the powerful role of vocal cues in shaping perceptions and decisions, setting the stage for our exploration of their impact in the high-stakes setting of the U.S. Supreme Court.

There are compelling arguments from various disciplines as to why voice impressions should ostensibly hold no sway in Supreme Court decisions. A rational perspective, as posited by Posner (1973), suggests that factual information should supplant initial impressions. Ideologically, some argue that court outcomes are largely political, with predetermined results (e.g., Cameron, 1993). Legally, it is advocated that decisions should hinge solely on the legal merits of arguments (Kornhauser, 1999). Economically, consistent correlations between voice and court outcomes are unlikely as law firms and advocates are expected to adapt their strategies to negate such biases (Becker, 1957; Knowles et al., 2001).

However, from a behavioral standpoint, the nuances of speech can reveal significant insights into one’s personality and identity (Babel et al., 2013; Hodges-Simeon et al., 2010; McAleer et al., 2014; Bordalo et al., 2014). Past research underscores the potential impact of vocal cues in diverse environments, including courtrooms (Schubert et al., 2002) and presidential debates (Gregory & Gallagher, 2002). Furthermore, the alignment of a lawyer’s spoken words with a SCOTUS judge’s linguistic style has been shown to predict the judge’s vote (Danescu-Niculescu-Mizil et al., 2012). This body of evidence suggests that despite theoretical arguments to the contrary, voice impressions may indeed play a role in high-stakes legal settings, a hypothesis our study aims to explore.

In the last few decades, the Supreme Court bar has been characterized by the emergence of an elite group of private sector attorneys not witnessed since the early 1800s (Lazarus, 2008). Elite Supreme Court legal practices are increasingly led by “rainmakers” and landing a Supreme Court case is incredibly important to them. There are a few specialists, most working on behalf of businesses, who have enjoyed heightened success (Fisher, 2013). A new Supreme Court Pro Bono Bar has also emerged (Morawetz, 2011).

Perceived masculinity may work in a lawyer’s favor at the stage when firms select their oral advocates. However, firms that excessively prioritize masculinity in their selection criteria may inadvertently create a negative correlation between perceived masculinity and actual performance in court.

Positive selection may occur because deep-voiced individuals are perceived as having many positive attributes (Klofstad et al., 2012; Tigue et al., 2012; Apple et al., 1979; Buller et al., 1996). Margaret Thatcher and George H. W. Bush were coached to be less shrill (Kramer, 1987). Via humming exercises, Thatcher made her voice more masculine by the amount equivalent to half (https://goo.gl/8bMkut) the male-female difference (Atkinson, 1984), though her natural voice occasionally slipped out (https://goo.gl/WNDgr0) (Nallon, 2014). As women have entered the workforce and positions of authority, their voices have moved closer to a masculine standard (Pemberton et al., 1998) and they have been rewarded for this (Case, 1995). This phenomenon is not limited to women or leaders: in an employment discrimination case involving Sears, job applicants were asked: “Do you have a low-pitched voice?” (EEOC v. Sears, Roebuck & Co., 628 F. Supp. 1264, 1300). The employer preferred employees with masculine voices, even if they performed worse on the job (Case, 1995).

More formally, suppose there are two advocates M and F, who either win or lose. Consider the following utility:

Individuals will choose advocate F over M if and only if the difference in the probability of F winning rather than M winning exceeds the relative taste individuals have for advocates with voice M:

Suppose individuals are more likely to choose M over F. There are two reasons individuals may choose M over F: information and taste, i.e., statistical discrimination (information, $\pi_F < \pi_M$) or prejudice (taste, $d > 0$). Information can be used to update one’s beliefs about $\pi_F - \pi_M$, and any resulting changes in behavior would be due to information. Likewise, incentives to choose correctly erode the effect of taste on choices ($\pi_F - \pi_M > d/\alpha$). Any resulting changes in behavior would be due to the existence of a preference (taste) for a type of advocate independent of the economic consequences. Incentives increase $\alpha$, so any response would imply that $d > 0$.
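A utility specification consistent with the choice rule $\pi_F - \pi_M > d/\alpha$ stated in the text can be written as follows. This is a sketch under assumptions rather than the authors' exact formula: it supposes that $\alpha > 0$ scales the payoff from winning, that $\pi_v$ is the probability that advocate $v \in \{M, F\}$ wins, and that the taste term $d$ enters additively for voice M.

```latex
\begin{align}
U(v) &= \alpha\,\pi_v + d \cdot \mathbf{1}[v = M], \qquad v \in \{M, F\}, \\
U(F) > U(M) &\iff \alpha\,\pi_F > \alpha\,\pi_M + d
             \iff \pi_F - \pi_M > \frac{d}{\alpha}.
\end{align}
```

Under this form, raising $\alpha$ shrinks the threshold $d/\alpha$ toward zero, so a chooser with $d > 0$ eventually switches to whichever advocate is believed more likely to win.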

In the experiment, if subjects perceive masculine voices to be more likely to win, their prior beliefs are that $\pi_M > \pi_F$.

As $\alpha \to \infty$, only a large $d$ (on the order of 10 million US dollars) explains the negative correlation between perceived masculinity and court outcomes. Further, in industries with higher $d > 0$, masculine voices would do worse as firms indulge in taste $d$ at a cost of $\alpha \Delta\pi_v$.

In sum, this theoretical analysis motivates a debiasing experiment where we randomize both the information and the incentives. We will investigate whether information reduces the correlation between perceptions of masculinity and perceptions of winning. We will then investigate if incentives further reduce this correlation. Providing incentives in the model is analogous to increasing the stakes, which would reduce the influence of taste on choices, but only if there is a positive taste for masculine lawyers. If the only reason that decision makers prefer masculine lawyers is due to misbeliefs, then only information would affect decisions. In the model, this would mean that d/α would be 0 regardless of the size of α.
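The logic of the two treatment arms can be illustrated numerically with the choice rule from the model (choose F when π_F − π_M > d/α). The sketch below is illustrative only: the function name `chooses_F` and all probabilities, taste values, and stakes are hypothetical numbers picked to show the mechanism.

```python
def chooses_F(pi_F, pi_M, d, alpha):
    """Return True when F is chosen under the rule pi_F - pi_M > d / alpha."""
    return (pi_F - pi_M) > d / alpha

# Case 1: pure taste. Beliefs are accurate (F is more likely to win), d > 0.
taste_low_stakes = chooses_F(0.55, 0.45, d=0.5, alpha=1)    # taste outweighs the win gap
taste_high_stakes = chooses_F(0.55, 0.45, d=0.5, alpha=10)  # higher stakes outweigh taste

# Case 2: pure misbelief. d = 0, but the chooser wrongly believes M wins more.
misbelief_low_stakes = chooses_F(0.40, 0.60, d=0.0, alpha=1)
misbelief_high_stakes = chooses_F(0.40, 0.60, d=0.0, alpha=10)  # incentives change nothing
after_information = chooses_F(0.55, 0.45, d=0.0, alpha=1)       # corrected beliefs do
```

In the taste case the choice flips only when the stakes rise; in the misbelief case it flips only when beliefs are corrected. This is the asymmetry that the information and incentive arms are designed to detect.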

3. Experiment

This study’s design builds on a rich interdisciplinary tradition examining how minimal voice cues influence judgments in high-stakes settings. Research in sociophonetics shows that brief audio clips can convey potent social signals (Purnell et al., 1999; Babel et al., 2014). In parallel, psychology experiments on “thin slices” of expressive behavior demonstrate that even a few seconds of speech or nonverbal interaction can reliably affect evaluators’ perceptions and subsequent decisions (Ambady & Rosenthal, 1992). Meanwhile, scholarship specific to the U.S. Supreme Court (e.g., Epstein et al., 2010) highlights the potential impact of oral argument dynamics on case outcomes, including how advocates’ manner or style may subtly shape judicial impressions. Our methodology—extracting standardized, short voice samples (“Mr Chief Justice, may it please the Court”) and collecting crowd-sourced ratings—follows the broader logic of using minimal cues to uncover bias or discrimination (Bertrand & Mullainathan, 2001). By situating the data-collection approach within these literatures, we aim to illuminate whether similarly rapid, voice-based judgments might correlate with real legal outcomes in the highest court.

3.1. Design

Oral arguments at the SCOTUS have been recorded since the installation of a recording system in October 1955. The recordings and the associated transcripts were made available to the public in electronically downloadable format by the Oyez Project (https://www.oyez.org/), which is a multimedia archive at the Chicago-Kent College of Law devoted to the SCOTUS and its work. The audio archive contains more than 110 million words in more than 9000 hours of audio synchronized, based on the court transcripts, to the sentence level. Oral arguments are, with rare exceptions, the first occasion in the processing of a case in which the Court meets face-to-face to consider the issues. Usually, counsel representing the competing parties of a case each have 30 minutes in which to present their side to the Justices. The Justices may interrupt these presentations with comments and questions, leading to interactions between the Justices, the lawyers and, in some cases, the amici curiae, who are not a party to the case but nonetheless offer information that bears on the case to assist the Court.

This paper presents an analysis of clips taken from the period 1998–2012. Over 80% of the advocates featured in these clips argued only once before the SCOTUS, and 169 advocates in the sample (about 15%) were female. We hired 748 MTurk raters from the US to evaluate 1,901 audio clips from 1,085 lawyers. Each rater evaluated 60 random clips, producing roughly 20 ratings for each clip. Six clips were randomly chosen to be played twice for each rater, resulting in each rater providing a total of 66 evaluations.
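The sample sizes reported above can be cross-checked with a few lines of arithmetic. The calculation assumes that every hired rater was retained and that clips were drawn uniformly, neither of which is stated in the text, so the per-clip figure is best read as an upper-end approximation of the "roughly 20" reported.

```python
raters = 748
clips_per_rater = 60   # distinct clips per rater; the 6 repeated plays are excluded
repeated_clips = 6
clips = 1901
lawyers = 1085
female_advocates = 169

# Average number of ratings per clip if all raters are kept (an assumption).
ratings_per_clip = raters * clips_per_rater / clips

# Total evaluations each rater provides, including the repeated clips.
evaluations_per_rater = clips_per_rater + repeated_clips

# Share of female advocates among all lawyers in the sample.
female_share = female_advocates / lawyers

print(f"{ratings_per_clip:.1f} ratings per clip")
print(f"{evaluations_per_rater} evaluations per rater")
print(f"{female_share:.1%} female advocates")
```

Dropping raters who fail the attention checks, and rating male and female lawyers in separate blocks, would both pull the realized per-clip count away from this simple average.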

A little over half (382) of the 748 MTurk raters were female. Two-thirds were aged between 20 and 35 years old, and one-third were older than 35. Likewise, one-third indicated they had some college education, whereas one-third claimed to have a bachelor’s degree. The median annual income of those who completed the survey was about $40,000. Their racial and geographical distribution broadly reflects that of the US population.

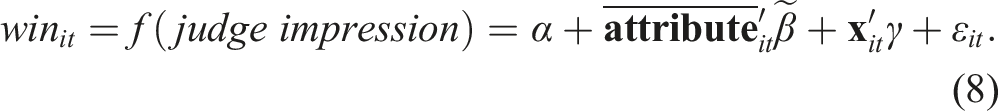

The raters were asked to use headphones and to rate on a Likert scale from 1 (low) to 7 (high) the characteristics of masculinity, attractiveness, confidence, intelligence, trustworthiness, and aggressiveness. These six traits were selected based on previous research on listeners’ perceptual evaluations of linguistic variables (Eckert, 2008; Campbell-Kibler, 2010; McAleer et al., 2014). They are also similar to the ones used in Todorov et al. (2005), which presented subjects with pictures of electoral candidates’ faces and asked them to rate their perceived attributes. That study found that only perceptions of competence predicted election outcomes. To assess whether raters were making global judgments about candidates that were not specific to competence, the authors also elicited judgments of candidates’ intelligence, leadership, honesty, trustworthiness, charisma, and likability. We take a similar approach by analyzing how judgments of masculinity, while correlated with judgments of other voice attributes, are the only ones that predict court outcomes in a consistent and robust manner. Subjects were also asked to predict whether the lawyer would win.

Male and female lawyers were rated in separate blocks, such that participants either rated male advocates or female advocates but not both, so raters would not be comparing females and males on the degree of masculinity. Female lawyers were rated in terms of femininity instead of masculinity.

We randomized the order and polarity of the questions across raters (e.g., “very masculine” and “not at all masculine” would appear on the left and right of a 7-point scale, respectively, or on the right and left in the opposite polarity); the order and polarity were held fixed for any particular rater to minimize cognitive fatigue. Across experimental designs, to ensure that raters were paying attention, we included listening attention checks. Raters who failed these checks were dropped from the sample. There were six alertness trials, three with beeps and three without. The beep came at the beginning of the lawyer’s voice. In these trials, subjects were asked whether they heard a beep, but not to rate the lawyer’s voice.
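The exclusion rule for the alertness trials can be sketched as a simple filter. The text does not state the exact failure threshold, so requiring all six answers to be correct is an assumption, as are the trial ordering, the function names, and the rater ids below.

```python
# Ground truth for the six alertness trials: three with a beep, three without.
# The ordering is hypothetical.
BEEP_PRESENT = [True, False, True, False, False, True]

def passes_checks(answers, truth=BEEP_PRESENT):
    """True if the rater answered every beep question correctly.

    Requiring all six correct is an assumption made for this sketch.
    """
    return len(answers) == len(truth) and all(a == t for a, t in zip(answers, truth))

def drop_inattentive(answers_by_rater):
    """Keep only raters who pass the alertness trials."""
    return {rid: ans for rid, ans in answers_by_rater.items() if passes_checks(ans)}

# Toy responses (hypothetical rater ids and answers).
answers_by_rater = {
    "rater_a": [True, False, True, False, False, True],  # all correct
    "rater_b": [True, True, True, False, False, True],   # one false alarm
}
kept = drop_inattentive(answers_by_rater)
```

A looser threshold (for example, allowing one miss) would only change the comparison inside `passes_checks`.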

Several clips were repeated to check for intra-rater reliability. Raters were also asked to rate the quality of the recording. While there was no upper limit on how long a subject could spend on each trial, each trial lasted a minimum of 5 seconds: subjects were not allowed to proceed to the next trial until 5 seconds had elapsed (and all the questions were completed). This ensured that subjects had enough time to complete the ratings and discouraged them from speeding through the trials. An example of what each questionnaire looked like is provided in Figure 1. No information regarding the identity of the lawyer or the nature of the case was given to the participants.


Figure 1. Screenshot of experiment. Note. This sample page illustrates how MTurk participants rated attorneys’ vocal clips. Raters heard a brief audio segment (“Mr Chief Justice, may it please the Court?”), then indicated perceived masculinity, attractiveness, confidence, intelligence, trustworthiness, and aggressiveness on a 1–7 scale. They also guessed whether the lawyer won the case. The instructions, randomized question order, and forced minimum listening time are described in Section 3.1.

We also reversed the voice clips so the sentence no longer sounded anything like English.


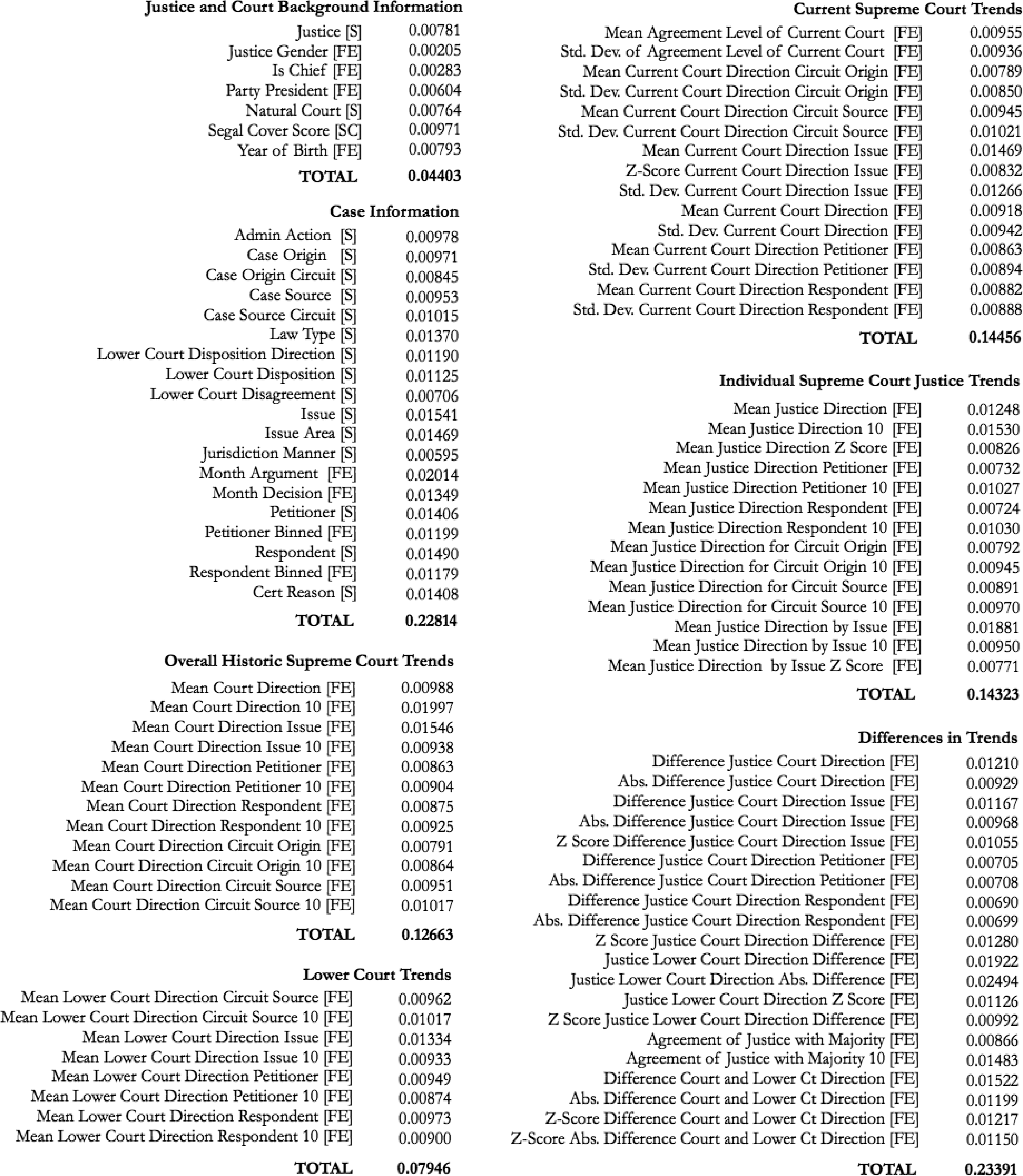

As Figure 2 shows, most attributes exhibit some correlation, but generally a very weak one. The only personality attribute that shows a strong correlation is masculinity. This result suggests that masculinity may be more salient, innate, or stereotyped than other attributes, such as aggressiveness, confidence, competence, attractiveness, intelligence, or trustworthiness.

Figure 2. Correlation in voice perceptions across experiments (forward vs. reversed clips). Note. We compare raters’ impressions when the audio clip was played forward versus reversed (stripping English content). Each point represents a trait (masculinity, confidence, etc.) and shows the correlation of that trait across forward versus reversed conditions. See Section 3.1 for details on the reversed condition. Higher correlation suggests that perceived masculinity or femininity is robust to the language’s intelligibility. The number reported is Pearson’s r.
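The forward-versus-reversed comparison for a single trait can be sketched as a Pearson correlation of clip-level mean ratings. Computing the correlation at the clip level is an assumption about how the comparison was made, and the clip ids and scores below are toy values invented for illustration.

```python
from collections import defaultdict
from math import sqrt

def clip_means(ratings):
    """Average the scores for each clip; ratings is a list of (clip_id, score)."""
    by_clip = defaultdict(list)
    for clip_id, score in ratings:
        by_clip[clip_id].append(score)
    return {clip: sum(scores) / len(scores) for clip, scores in by_clip.items()}

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

def forward_reversed_r(forward, reversed_):
    """Correlate clip-level mean ratings across the two playback conditions."""
    f, r = clip_means(forward), clip_means(reversed_)
    shared = sorted(set(f) & set(r))  # clips rated in both conditions
    return pearson_r([f[c] for c in shared], [r[c] for c in shared])

# Toy masculinity ratings on the 1-7 scale (hypothetical).
forward = [("a", 6), ("a", 7), ("b", 3), ("b", 4), ("c", 5), ("c", 5)]
reversed_ = [("a", 6.0), ("b", 3.0), ("c", 4.5)]
r_masculinity = forward_reversed_r(forward, reversed_)
```

Repeating the calculation for each rated trait would give one correlation per trait, which is the kind of quantity the figure reports.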

Some of these data were previously analyzed in an earlier study by the authors (Chen et al., 2016). We expand on these earlier analyses in six directions. First, we include female lawyers. Second, we explore mechanisms behind the observed correlations by merging additional datasets for heterogeneity analyses. For example, the heterogeneity in judge votes assuages concerns that omitted case characteristics are associated with both voice masculinity and win rates. Third, we examine how lawyers change their voice over time. Fourth, we implement a de-biasing experiment with a factorial design. One factorial arm provides information to raters after each voice rating: raters are asked whether they believe the lawyer will win and are then immediately told whether the lawyer actually won, before the next voice clip is played. The other factorial arm provides incentives for raters to guess correctly whether the lawyer will win. Our de-biasing experiment complements the observational data by disentangling the roles of statistical discrimination and prejudice, revealing that both factors significantly contribute to the correlation between voice perception and court success. Fifth, we examine whose perception of masculinity matters. Sixth, we use machine learning to predict Supreme Court votes and find that our results hold when controlling for the best prediction model of Supreme Court votes. When we introduce masculine perceptions as a feature, the model also selects masculinity as a feature.

3.2. Measurement

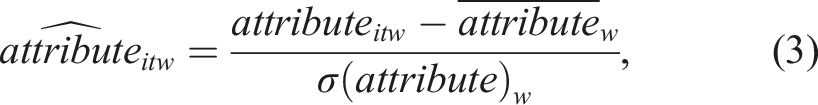

We measure perceived masculinity in three ways. Within-rater normalization entails adjusting for cross-subject variability in the cardinality and spread of ratings. Let $\text{attribute}_{itw}$ be subject $w$’s perception of a given attribute of advocate $i$ in case $t$, where attribute refers to any one of the six traits (i.e., attractiveness, confidence, etc.) and the perceived likelihood of winning. The normalized rating is given by

Our second measure uses raw ratings, which give more weight to raters who provide more signal amid greater variance in their ratings. 4

A third measure of voice attribute uses the average scores of each lawyer, matching only one voice measure to each oral argument. To do this, we take the average ratings across raters as follows:

There are 33,666 individual ratings, with roughly 20 ratings per oral argument. The standard deviation of the raw ratings is about 1.5. For robustness, we present sets of analyses using all three measures in Section 4, but the raw ratings are our preferred measure because they weight more heavily the raters who provide more signal amid greater variance in their ratings. We use the raw ratings when conducting mechanism analyses in Section 5.
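The three measures described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: it assumes that within-rater normalization is a z-score using each rater's own mean and (population) standard deviation, and the toy `ratings` list, the identifiers, and the function names are all hypothetical.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical toy data: (case_id, rater_id, raw masculinity rating on the 1-7 scale).
ratings = [
    ("case_a", "r1", 6), ("case_a", "r2", 4), ("case_a", "r3", 5),
    ("case_b", "r1", 4), ("case_b", "r2", 3), ("case_b", "r3", 1),
    ("case_c", "r1", 5), ("case_c", "r2", 2), ("case_c", "r3", 3),
]

def within_rater_normalize(rows):
    """Measure 1 (assumed form): z-score each rating with that rater's own mean and spread."""
    by_rater = defaultdict(list)
    for _, rater, value in rows:
        by_rater[rater].append(value)
    stats = {rater: (mean(vals), pstdev(vals)) for rater, vals in by_rater.items()}
    out = []
    for case, rater, value in rows:
        mu, sd = stats[rater]
        # A rater who gives every clip the same score carries no signal; map to 0.
        out.append((case, rater, (value - mu) / sd if sd > 0 else 0.0))
    return out

def average_by_case(rows):
    """Measure 3: one score per oral argument, the mean rating across raters."""
    by_case = defaultdict(list)
    for case, _, value in rows:
        by_case[case].append(value)
    return {case: mean(vals) for case, vals in by_case.items()}

normalized = within_rater_normalize(ratings)  # measure 1
raw = ratings                                 # measure 2: ratings used as-is
case_means = average_by_case(ratings)         # measure 3
```

Because the raw measure skips the rescaling step, a rater who uses the full 1-7 range contributes more variation than one who clusters around a single value, which is the weighting property the text attributes to the preferred measure.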

3.3. Empirical Strategy

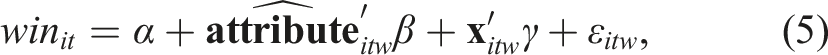

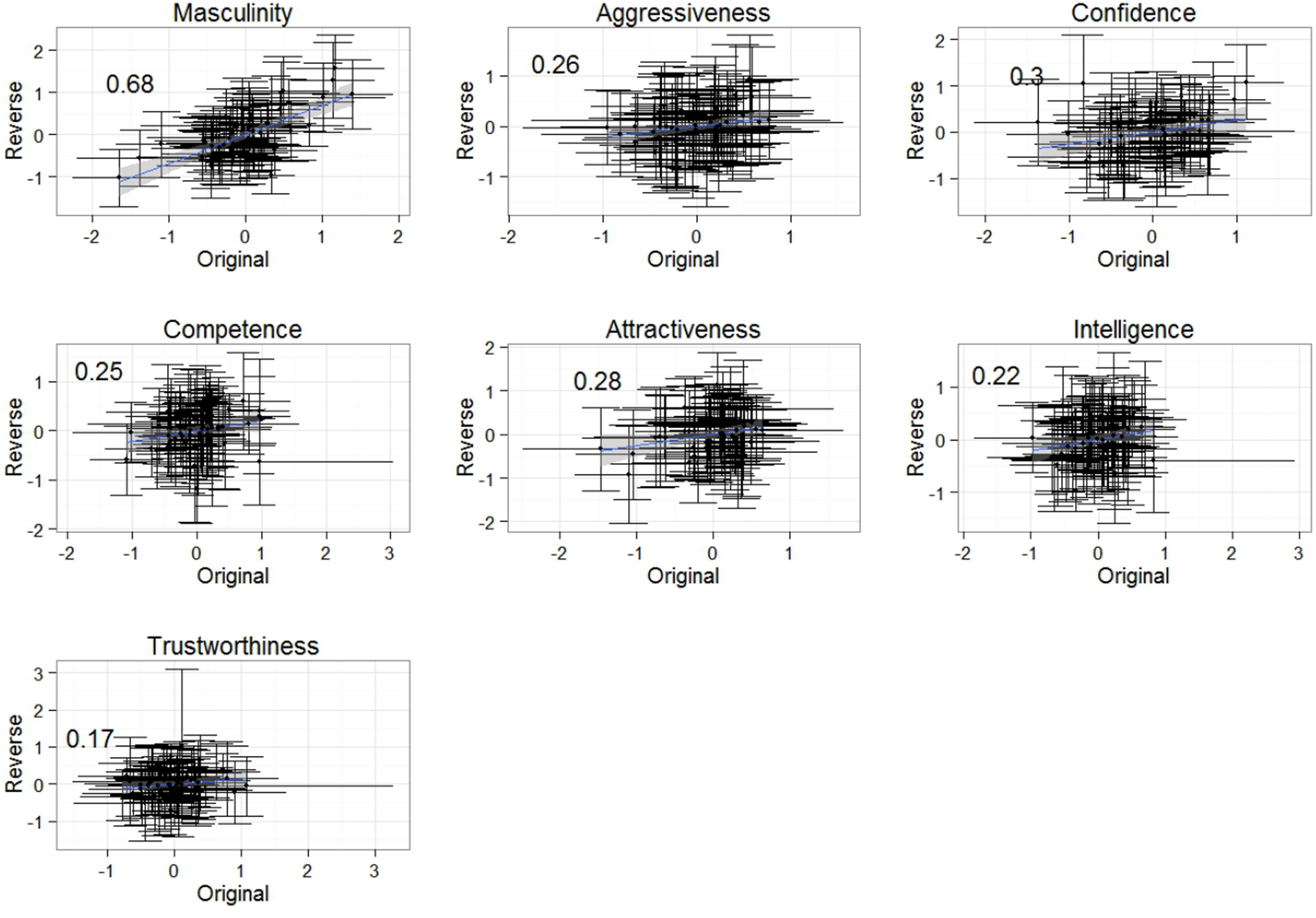

We consider two empirical specifications to explore whether first impressions of lawyers’ voices predict Supreme Court outcomes. In the micro-level strategy, we estimate the following equation:

3.3.1. Control Variables

We further expand our analysis by including in some regressions additional covariates denoted by the vector

Attorney Characteristics: These comprise SCOTUS clerkships, age, number of clerkships, law review, other graduate degree, law school tier, masculinity of first name (the number of males divided by the number of females with that name in the census), number of previous SCOTUS oral arguments, years since graduation, number of admitted courts, number of practice areas, firm size, office size, and whether they are a law firm partner.

Case-Level Controls: These controls are case category, region of origin, and lower-court characteristics–reversal of trial court, opinion length, disagreement, political division, ideology, and number of self-certainty words that proxy for confidence.

Industry or Client Type: For some specifications, we track whether the advocate’s client is a government entity, nonprofit, or private corporation, along with indicators for “masculine” industries (e.g., penal institutions, telecommunications).

In other specifications, we adopt a machine-learning approach (inspired by Katz et al., 2015) in which these controls feed into a random forest to create a high-quality “predicted probability of winning.” We then include that prediction in a simpler regression with vocal masculinity as the variable of interest. This approach ensures that the entire information set—attorney plus case-level covariates—is accounted for, while enabling us to see whether vocal traits retain an independent correlation.

Because our main goal is to isolate the effect of voice net of attorney quality or case factors, we do not emphasize every control’s individual coefficient in the main text. Instead, we show how adding these covariates affects the estimated coefficient on vocal traits—a standard approach in causal-inference frameworks (see Oster, 2019).

In certain specifications, we use attorney fixed effects rather than explicit variables, effectively removing all time-invariant differences across that attorney. In specifications with lawyer fixed effects, we effectively control for any stable personal attributes, including facial appearance. Thus, while Chen (2018) finds that face and voice both matter in cross-sectional predictions, our within-lawyer approach shows that mutable vocal traits can still affect outcomes beyond any time-invariant characteristics—such as facial features—associated with each attorney. Where feasible, we add year or case fixed effects, further netting out variations specific to a particular term or dispute.

We adjust the standard errors of the regression estimates for clustering at the case level (and also multi-way clustering at the lawyer level and, later, the judge level). Petitioners (the first lawyer to speak and arguing on behalf of the plaintiff) and respondents (the second lawyer to speak and arguing on behalf of the defendant) are presented separately. 5
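To make the clustering adjustment concrete, the following sketch computes a one-way cluster-robust standard error for the slope in a simple regression of the win indicator on a rating. It is only an illustration under simplifying assumptions: a single regressor plus an intercept, no finite-sample correction, and no multi-way clustering. The function name and the toy data are hypothetical.

```python
from collections import defaultdict
from math import sqrt

def ols_slope_clustered(x, y, cluster):
    """OLS slope of y on x (with intercept) and its one-way cluster-robust standard error."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    x_dev = [xi - x_bar for xi in x]
    sxx = sum(d * d for d in x_dev)
    slope = sum(d * (yi - y_bar) for d, yi in zip(x_dev, y)) / sxx
    intercept = y_bar - slope * x_bar
    resid = [yi - intercept - slope * xi for xi, yi in zip(x, y)]
    # Sum the score contributions within each cluster before squaring, so that
    # correlated errors among ratings of the same case are not treated as independent.
    score = defaultdict(float)
    for d, e, g in zip(x_dev, resid, cluster):
        score[g] += d * e
    variance = sum(s * s for s in score.values()) / sxx ** 2
    return slope, sqrt(variance)

# Hypothetical toy data: four cases, three raters each; the outcome is constant within a case.
rating = [6, 5, 7, 3, 2, 4, 5, 6, 4, 2, 3, 1]
won = [0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 1, 1]
case_id = ["a"] * 3 + ["b"] * 3 + ["c"] * 3 + ["d"] * 3

slope, clustered_se = ols_slope_clustered(rating, won, case_id)
```

Since every rating of the same advocate shares one case outcome, treating the roughly 20 ratings per argument as independent would overstate precision; summing scores within a case is what guards against that.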

In the case-level strategy, we estimate the following equation:

In these regressions we cannot control for intra-rater rating correlations nor rater characteristics. For these reasons, the aggregated regression is generally viewed as too conservative in terms of statistical precision (Bertrand et al., 2004). We provide regression results using both the micro- and case-level strategies. Importantly, the interpretation of the magnitudes of the association will differ depending on the level of aggregation.

3.4. Data Generating Process

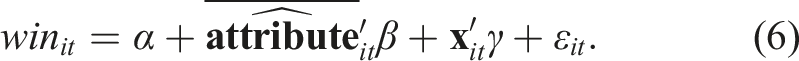

The underlying data-generating process is halfway between the micro-level regression with 20 raters per advocate and the case-level regression with one rating per advocate. In the Supreme Court, each of nine Justices forms his or her own perception. The corresponding micro-level strategy is:

The outcome of the case is a function of the nine separate impressions of the advocate.

Differences between the case outcome and the judge votes would be suggestive of whether the swing voter’s behavior is linked to perceived masculinity.
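A small simulation can illustrate the idea that the case outcome aggregates nine separate impressions. The sketch below is purely illustrative and is not the paper's specification: the linear vote probability, the noise in each Justice's perception, and every parameter value (`base`, `beta`, `noise_sd`) are assumptions chosen for the example.

```python
import random

def simulate_case(masculinity, rng, base=0.5, beta=-0.05, noise_sd=1.0):
    """Return (votes for the advocate, win indicator) for one hypothetical case."""
    votes = 0
    for _ in range(9):  # nine Justices, each forming a separate impression
        perceived = masculinity + rng.gauss(0.0, noise_sd)
        # Assumed linear vote probability around the scale midpoint (4), clipped to [0, 1].
        p_vote = min(1.0, max(0.0, base + beta * (perceived - 4.0)))
        votes += rng.random() < p_vote
    return votes, votes >= 5  # the case is won with at least five votes

def win_share(masculinity, n_cases=2000, seed=0):
    """Share of simulated cases won by an advocate with the given masculinity rating."""
    rng = random.Random(seed)
    return sum(simulate_case(masculinity, rng)[1] for _ in range(n_cases)) / n_cases

low_voice_share = win_share(2.0)   # advocate rated 2 on the 1-7 scale
high_voice_share = win_share(6.0)  # advocate rated 6 on the 1-7 scale
```

Under these assumptions, a modest per-Justice difference in vote probability is amplified by majority rule, which is one reason vote-level and case-level magnitudes need not coincide.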

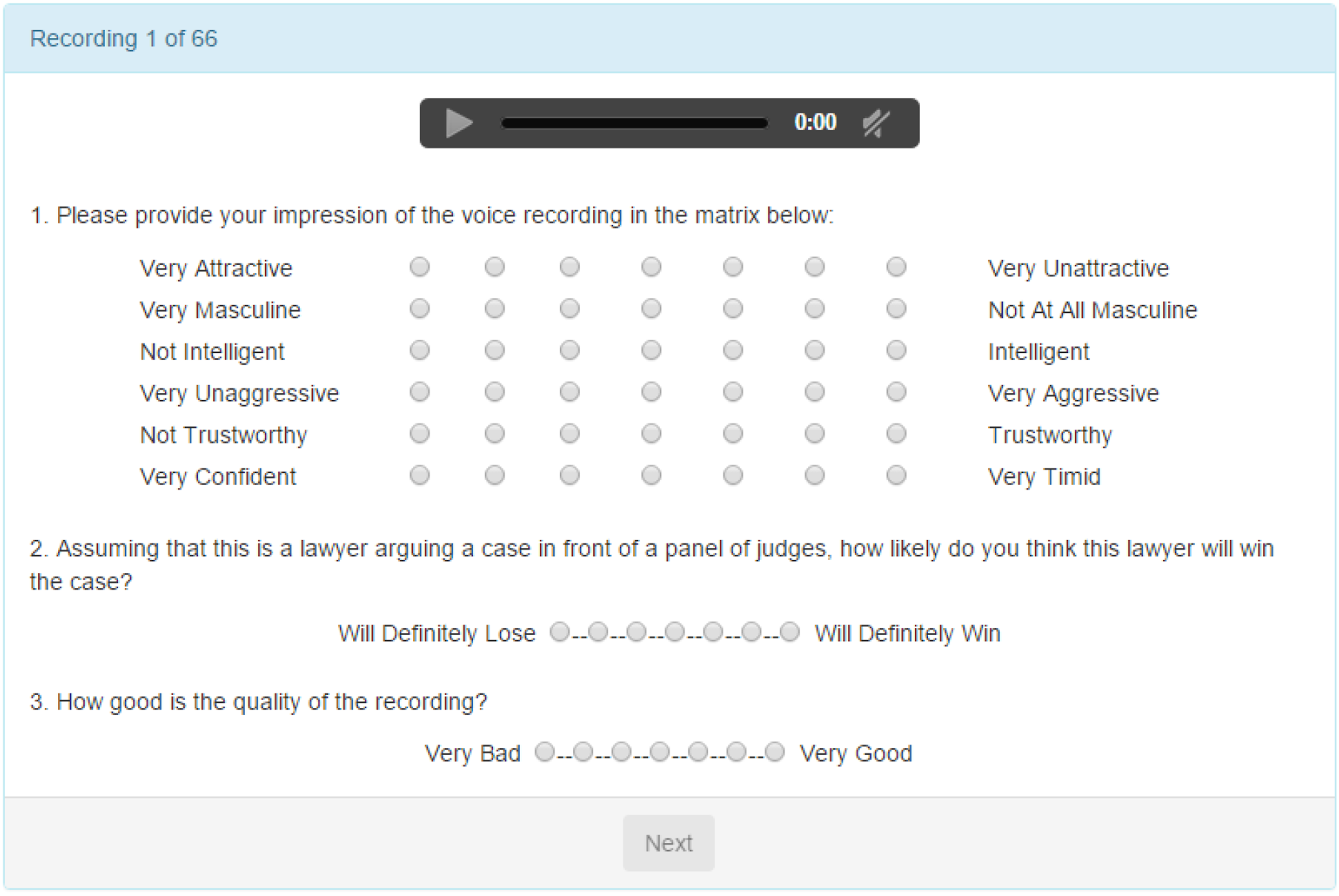

Data simulations of equation (8) and comparing β and

4. SCOTUS Outcomes and Perceived Masculinity

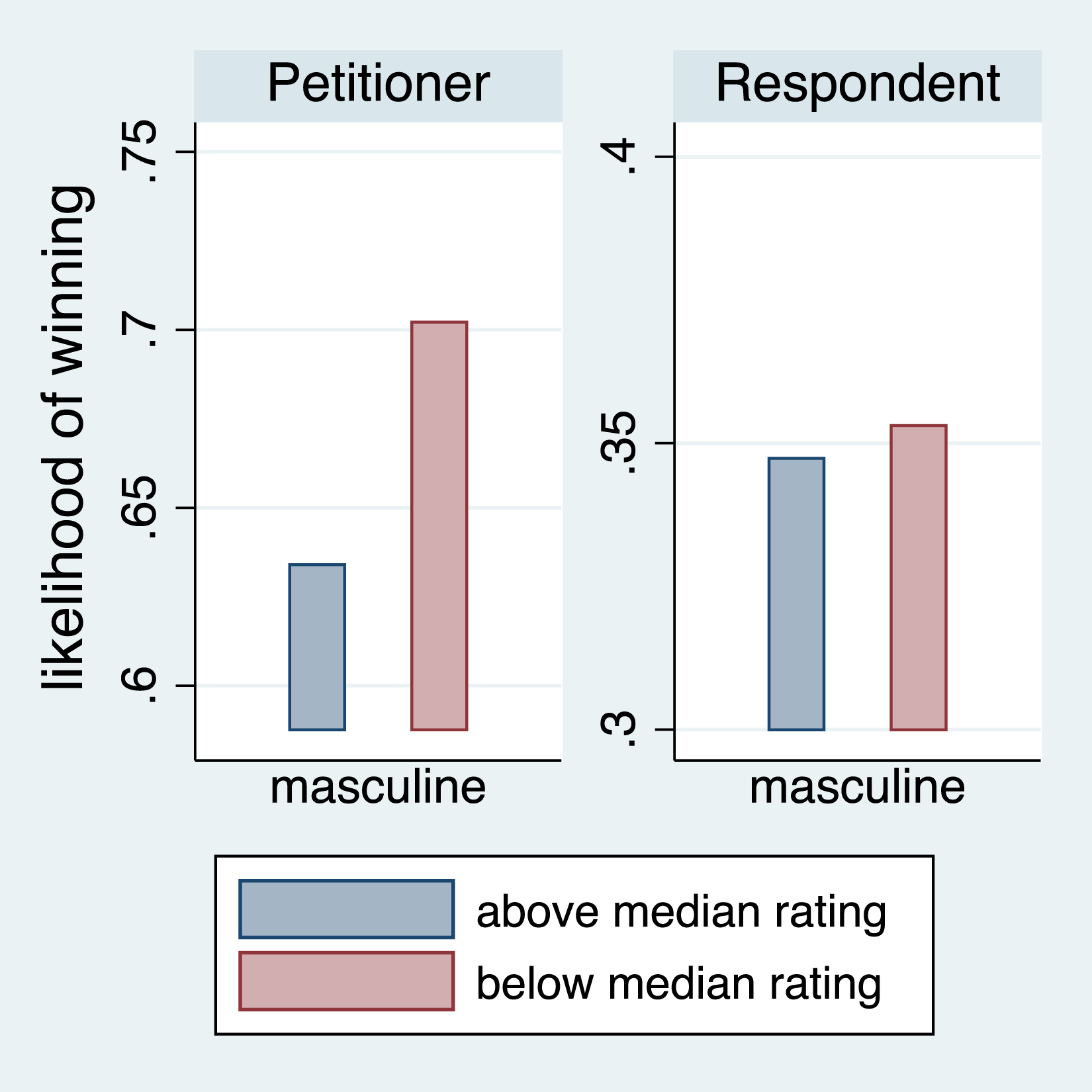

We can discretize the continuous rating measures at the lawyer level and observe that a below-median masculinity rating corresponds to a roughly 7 percentage-point greater likelihood of winning (Figure 3). No association between perceived masculinity and court outcomes is found among the lawyers for the respondent, consistent with the primacy of first impressions.

Perceived masculinity and win rate by petitioner-respondent status. Note. We group attorneys by above versus below median perceived masculinity (1-7 scale) and show the fraction of cases won. Petitioner lawyers argue first, Respondent lawyers second. Bars indicate mean outcomes. Source: 1998-2012 SCOTUS audio data. See Section 4 for a full discussion of sample construction.
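The median split described above amounts to the following computation. The sketch assumes one average rating per lawyer and assigns ties at the median to the "above" group; both the tie rule and the toy numbers are hypothetical and are not the paper's data.

```python
from statistics import mean, median

def win_rate_by_median_split(lawyer_ratings, wins):
    """Return (win rate below the median rating, win rate at or above it)."""
    cut = median(lawyer_ratings)
    below = [w for r, w in zip(lawyer_ratings, wins) if r < cut]
    above = [w for r, w in zip(lawyer_ratings, wins) if r >= cut]
    return mean(below), mean(above)

# Hypothetical lawyer-level average masculinity ratings and case outcomes (1 = win).
lawyer_ratings = [3.1, 3.8, 4.2, 4.9, 5.3, 5.8]
wins = [1, 1, 0, 1, 0, 0]

below_rate, above_rate = win_rate_by_median_split(lawyer_ratings, wins)
gap_in_points = 100 * (below_rate - above_rate)  # difference in percentage points
```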

Since female lawyers are coached to be more masculine (Starecheski, 2014), this raises the question of whether our findings extend to female lawyers. The anecdote from Starecheski’s NPR piece (2014)—in which a lawyer deliberately sought to deepen her voice—demonstrates how legal professionals may feel compelled to modify their vocal presentation. A broader empirical literature shows that advocates, politicians, and other speakers consciously adjust pitch and resonance over time. For instance, Kramer (1987) and Atkinson (1984) document Margaret Thatcher’s deliberate pitch-lowering exercises, while Pemberton et al. (1998) find that working women often shift toward lower pitch in response to workplace norms. Moreover, Case (1995) analyzes litigation over hiring decisions allegedly contingent on an applicant’s vocal depth, indicating that deeper or more ‘authoritative’ tones can be rewarded in certain professional environments. Together, these works illustrate that while voice modification is not trivial, it is perceived as sufficiently feasible that attorneys, politicians, and other public figures invest in coaching and training.

Studies on voice-based social biases have repeatedly observed significant differences in how listeners react to voices of different (perceived) genders (Babel et al., 2014). We thus examine male and female lawyers separately for the association between perceived masculinity (femininity) and court outcomes.

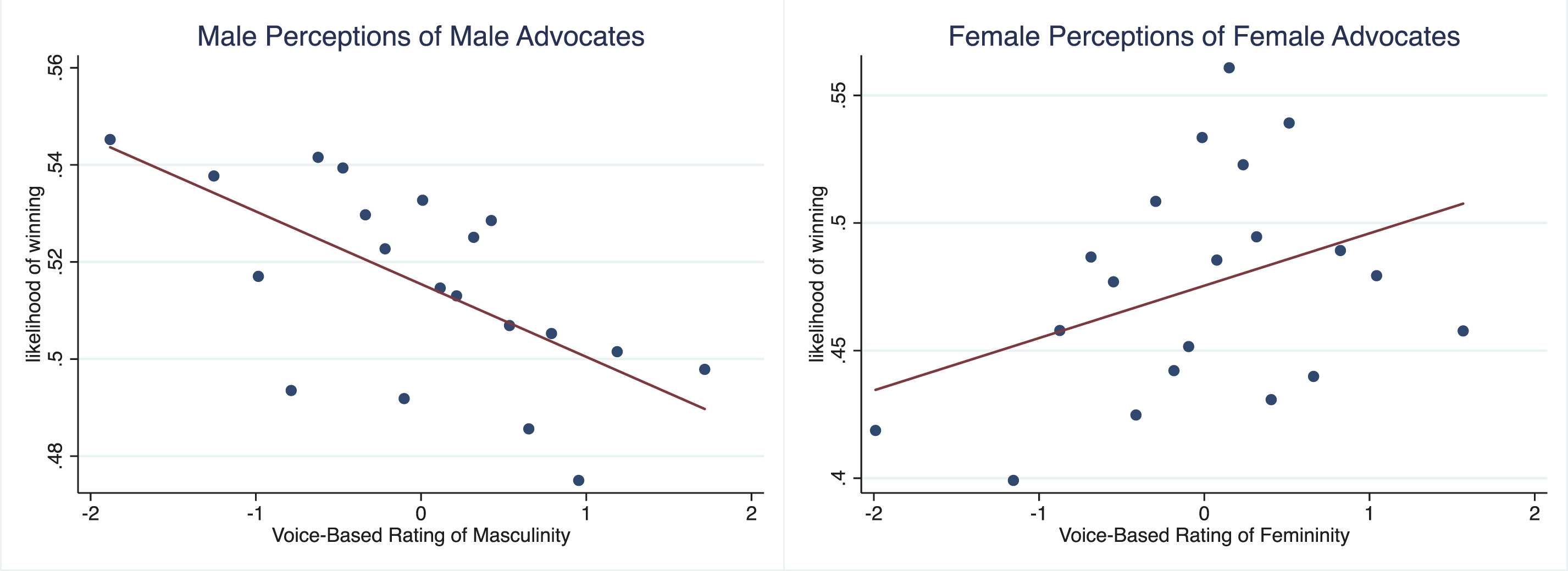

The results extend to females: while an increase in perceived masculinity among male lawyers correlates with a decrease in the likelihood of winning, the same degree of increase in perceived femininity in female lawyers correlates with an increase in the likelihood of prevailing in a court case (Figure 4). If “masculine” were the opposite of “feminine,” then the pooled results would be stronger.

Graphical illustration of gender and predicted outcomes. Note. Illustrates how male versus female attorneys fare given different ranges of perceived vocal masculinity/femininity. X-axis = perceived masculinity/femininity (from lower to higher). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Y-axis = predicted probability of winning.

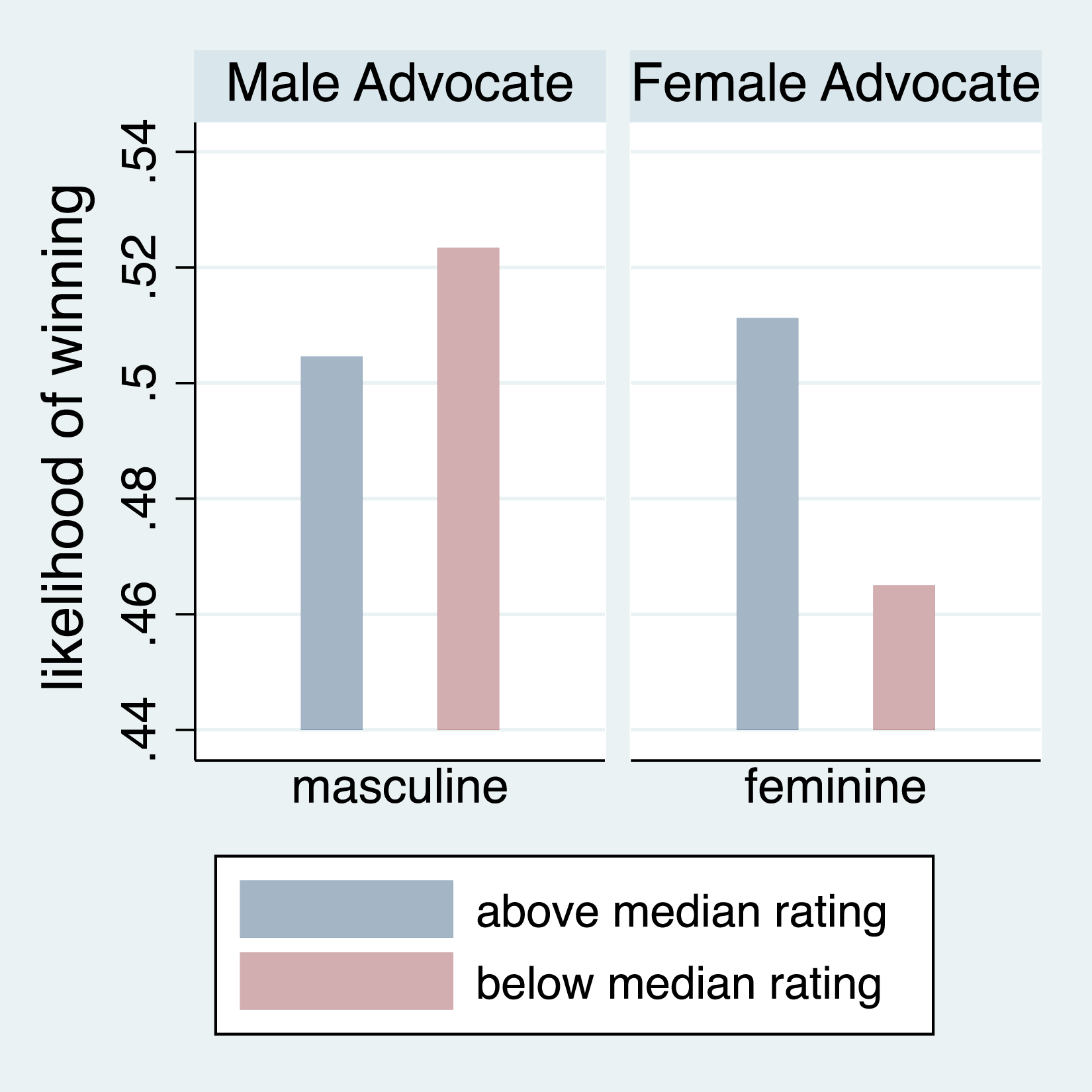

Another interpretation of our results, where we discretize the continuous rating measures at the lawyer level, is that a below-median masculinity rating corresponds with a roughly 2 percentage-point greater likelihood of winning for males, but a below-median femininity rating corresponds with an approximately 5 percentage-point lower likelihood of winning for females (Figure 5).

Perceptions of masculinity (femininity) and advocate win rates. Note. We group male attorneys by above versus below median perceived masculinity (1-7 scale) and likewise female attorneys by above versus below median perceived femininity. Bars indicate mean outcomes. Source: 1998-2012 SCOTUS audio data.

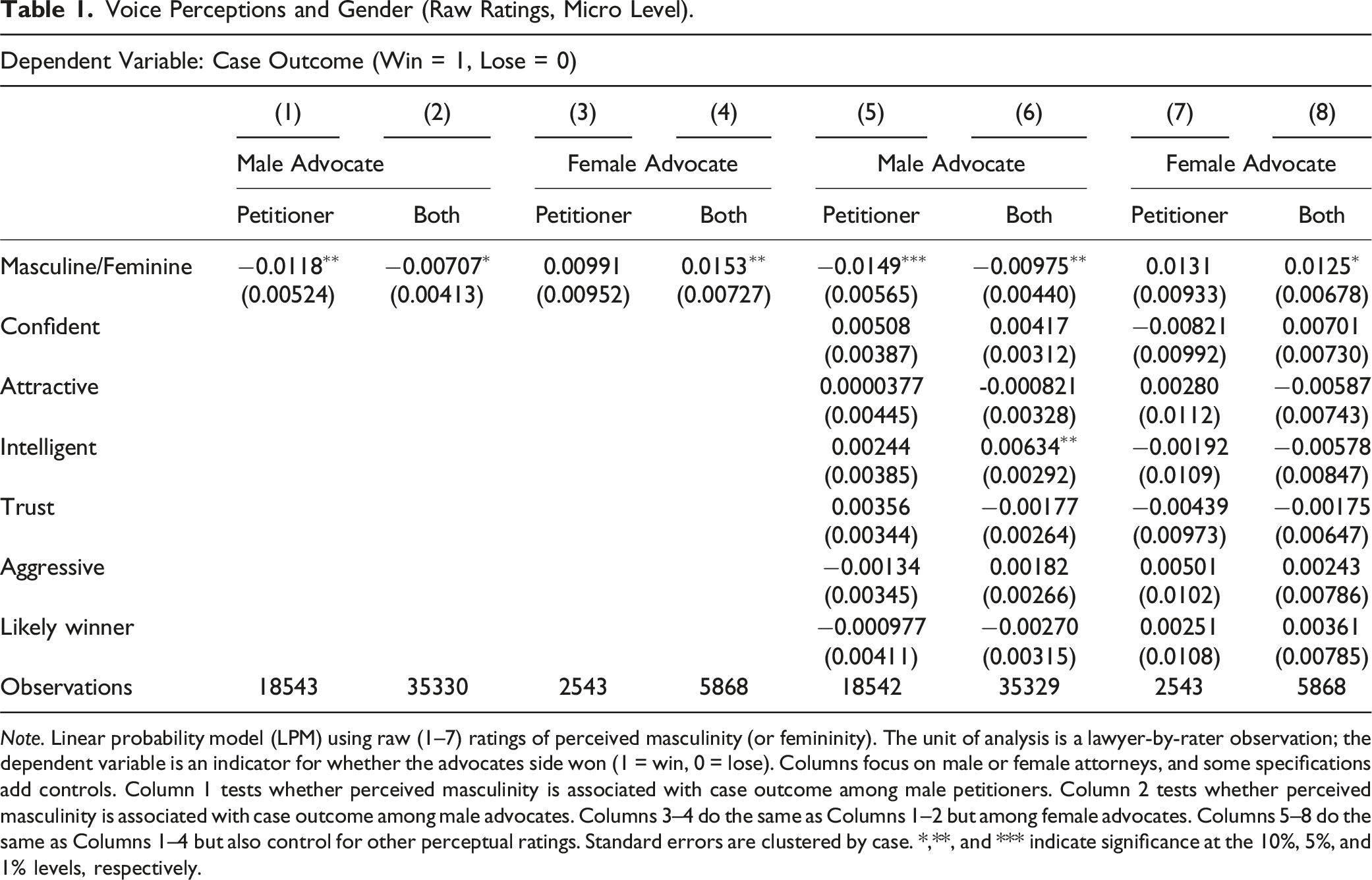

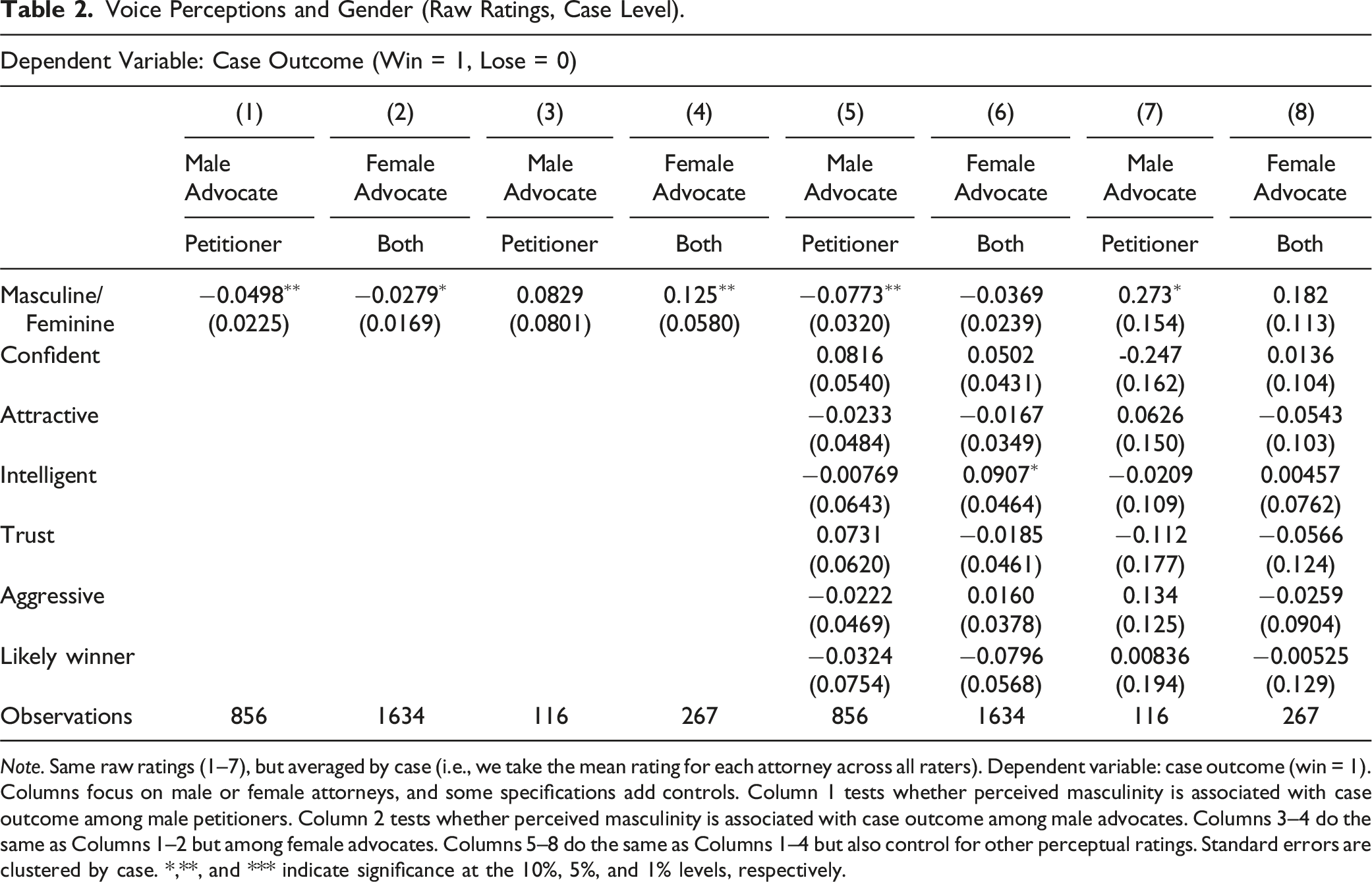

Voice Perceptions and Gender (Raw Ratings, Micro Level).

Note. Linear probability model (LPM) using raw (1–7) ratings of perceived masculinity (or femininity). The unit of analysis is a lawyer-by-rater observation; the dependent variable is an indicator for whether the advocate’s side won (1 = win, 0 = lose). Columns focus on male or female attorneys, and some specifications add controls. Column 1 tests whether perceived masculinity is associated with case outcome among male petitioners. Column 2 tests whether perceived masculinity is associated with case outcome among male advocates. Columns 3–4 do the same as Columns 1–2 but among female advocates. Columns 5–8 do the same as Columns 1–4 but also control for other perceptual ratings. Standard errors are clustered by case. *, **, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

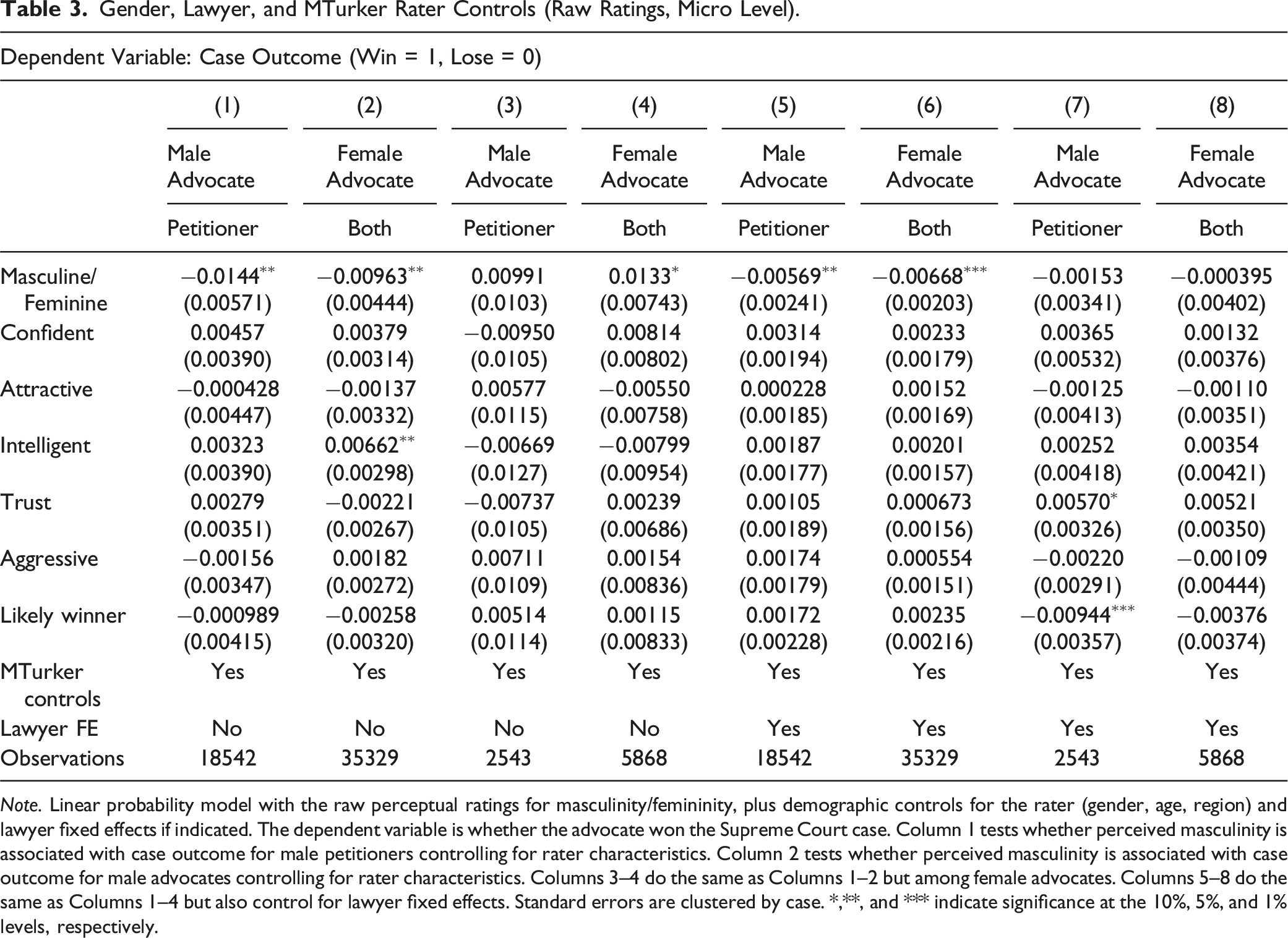

Voice Perceptions and Gender (Raw Ratings, Case Level).

Note. Same raw ratings (1–7), but averaged by case (i.e., we take the mean rating for each attorney across all raters). Dependent variable: case outcome (win = 1). Columns focus on male or female attorneys, and some specifications add controls. Column 1 tests whether perceived masculinity is associated with case outcome among male petitioners. Column 2 tests whether perceived masculinity is associated with case outcome among male advocates. Columns 3–4 do the same as Columns 1–2 but among female advocates. Columns 5–8 do the same as Columns 1–4 but also control for other perceptual ratings. Standard errors are clustered by case. *,**, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

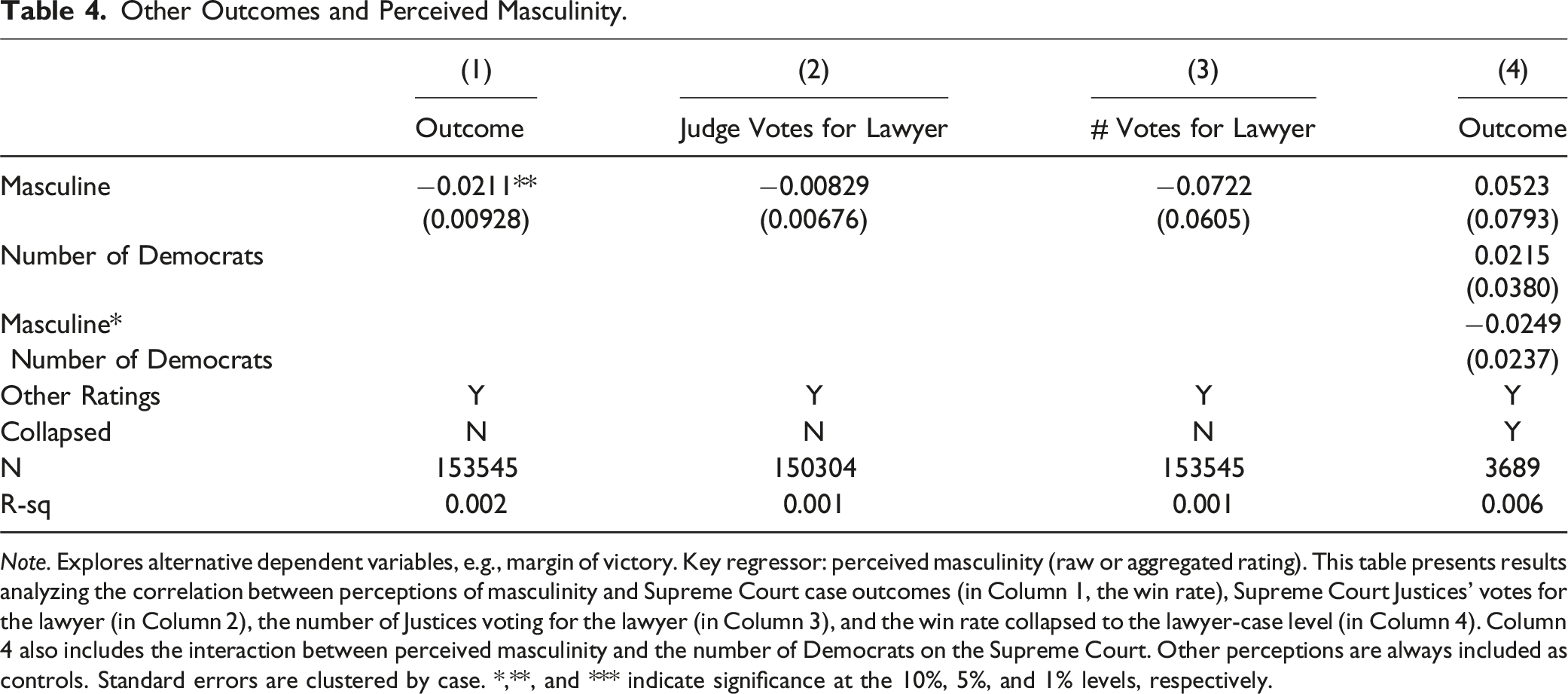

Gender, Lawyer, and MTurker Rater Controls (Raw Ratings, Micro Level).

Note. Linear probability model with the raw perceptual ratings for masculinity/femininity, plus demographic controls for the rater (gender, age, region) and lawyer fixed effects if indicated. The dependent variable is whether the advocate won the Supreme Court case. Column 1 tests whether perceived masculinity is associated with case outcome for male petitioners controlling for rater characteristics. Column 2 tests whether perceived masculinity is associated with case outcome for male advocates controlling for rater characteristics. Columns 3–4 do the same as Columns 1–2 but among female advocates. Columns 5–8 do the same as Columns 1–4 but also control for lawyer fixed effects. Standard errors are clustered by case. *,**, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

To benchmark our findings, the 0.86 percentage-point difference in court outcomes attributed to a one-standard-deviation change in our voice-based measure of perceived masculinity is equivalent to nearly one-quarter of the gender gap. When analyzed at the case level, a one-standard-deviation change corresponds to a 5.2 percentage-point difference in court outcomes (when not including further controls). 6

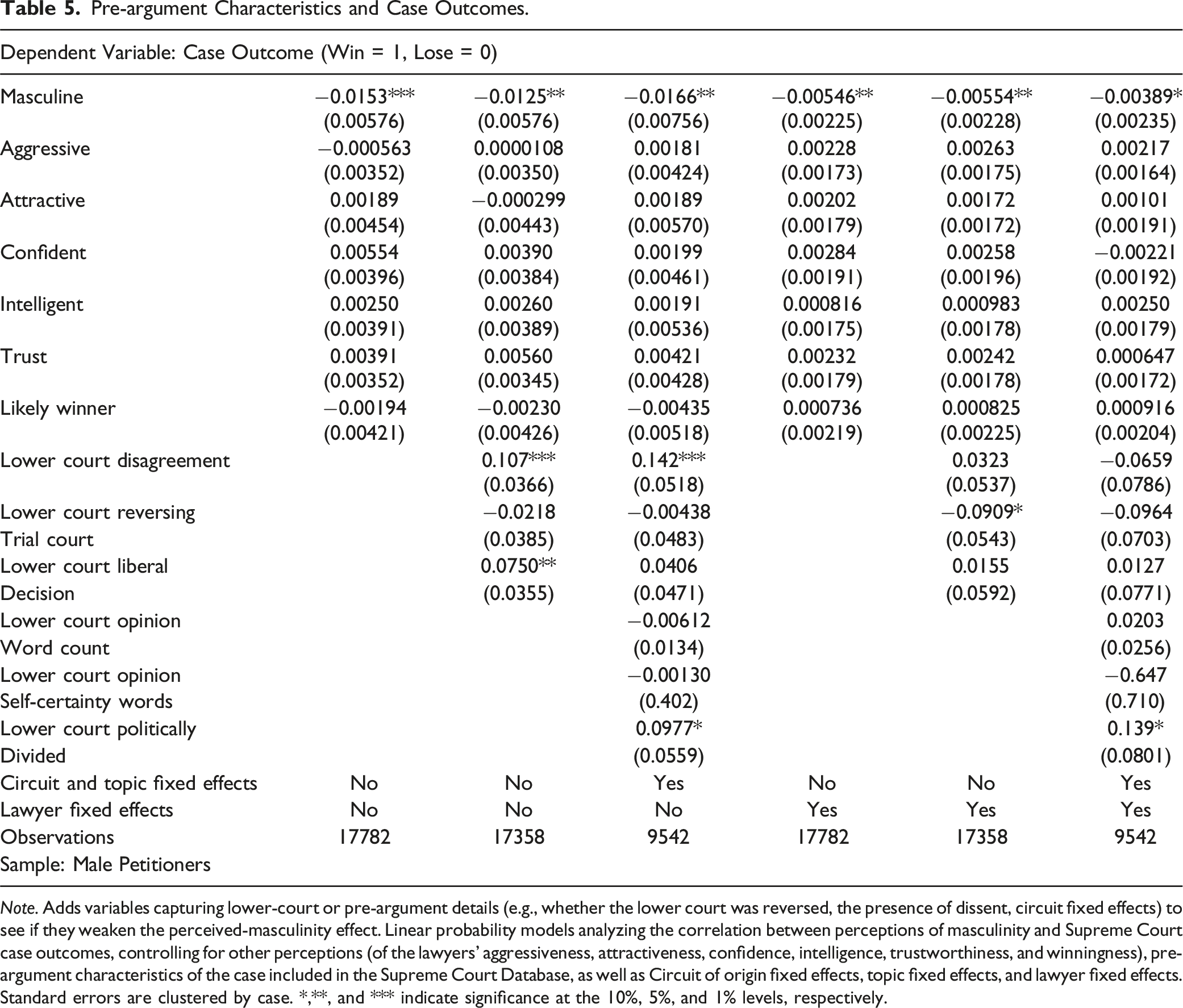

Other Outcomes and Perceived Masculinity.

Note. Explores alternative dependent variables, e.g., margin of victory. Key regressor: perceived masculinity (raw or aggregated rating). This table presents results analyzing the correlation between perceptions of masculinity and Supreme Court case outcomes (in Column 1, the win rate), Supreme Court Justices’ votes for the lawyer (in Column 2), the number of Justices voting for the lawyer (in Column 3), and the win rate collapsed to the lawyer-case level (in Column 4). Column 4 also includes the interaction between perceived masculinity and the number of Democrats on the Supreme Court. Other perceptions are always included as controls. Standard errors are clustered by case. *,**, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

Our reference to heuristics or biases draws on the large body of behavioral research showing that people often rely on simplifying mental shortcuts—particularly when facing uncertainty or complexity (Tversky & Kahneman, 1974; Kahneman, 2011). In the context of Supreme Court adjudication, these cognitive shortcuts can become more influential precisely when a case is legally or ideologically “hard”: that is, when doctrinal precedent does not squarely resolve the issue or when the Justices are evenly divided in their ideological leanings. Without a clear rule or consensus, extralegal factors—such as an advocate’s vocal pitch or perceived confidence—can inadvertently shape which side appears “slightly more persuasive.”

Rachlinski et al. (2009b) have documented how System 1 thinking (Kahneman, 2011), that is, fast, intuitive decision-making, can affect judges, even in relatively stable legal domains. In the Supreme Court, where cases often involve high-stakes or novel legal questions, these snap judgments or intuitive heuristics may loom larger when Justices find little doctrinal clarity or experience near-even splits. In such scenarios, judges may, consciously or not, rely on “soft” cues like voice timbre, demeanor, or emotional tone (Schubert et al., 2002).

While the significance of gendered language and courtroom behavior is not new, prior scholarship has typically focused on broad differences between male and female attorneys rather than on acoustic details (e.g., pitch, resonance, or intonation) that vary even within gender categories. Our findings suggest these voice-based impressions may be especially potent in marginal or contested cases, contributing to the broader conversation about how cognitive heuristics operate when Justices lack unequivocal doctrinal signals or when ideological divisions are sharp. In other words, heuristics involving vocal attributes could serve as an additional tipping factor—not displacing legal reasoning, but accentuating or reducing the perceived credibility of each side’s argument when the legal question remains uncertain.

Hence, in “hard” cases, the Court’s decision-making may involve a blend of System 2 deliberation (analytical parsing of precedent, legal tests) and System 1 intuitions (rapid, sometimes unconscious assessments of the advocate’s presentation). By illuminating how perceived vocal cues correlate with votes and outcomes, this study underscores the subtle extralegal elements that can influence even the highest judicial forum. That influence, we propose, is most visible in close cases, where small psychological nudges—including the advocate’s voice—may help Justices tip from one outcome to the other.

While the focus on language and gender in the courtroom is not new, previous studies have focused primarily on the gendered language performance of witnesses (O’Barr & Atkins, 1980) or the discursive practices in the courtroom (O’Barr, 1982). Scholars have long examined the significance of oral argument at the Supreme Court, tracing how the substance and style of advocacy can shape judicial decision-making. In particular, Chief Justice Roberts (2005) and Johnson et al. (2006) explore how advocate performance can persuade Justices and influence case outcomes, laying the groundwork for subsequent empirical studies on this topic. Other scholars find that Supreme Court outcomes are correlated with authors’ coding of emotional arousal in the behavior of Justices, lawyers, and their voices (Schubert et al., 1992); with the number of questions asked by Justices (Epstein et al., 2010); and with measurements of the emotional content of questions using linguistic dictionaries (Black et al., 2011). By building on these sources, we emphasize the continuity in research linking advocacy style and real-world appellate outcomes, setting the stage for our own focus on how voice characteristics might matter at the margin.

A wealth of scholarship already documents the ways gender may influence judicial outcomes, including analyses of whether female versus male attorneys fare differently before the Court (e.g., Szmer et al., 2010). However, much of that research examines aggregate gender categories—male versus female attorneys—without disentangling the precise mechanisms at work, such as differences in rhetorical style, experience levels, or vocal delivery.

By contrast, the design here isolates vocal perceptions specifically, holding lexical content constant through a set phrase—“Mr Chief Justice, may it please the Court?”—that every advocate utters. This approach is intended to filter out differences in argument quality or strategy and focus on how acoustic features of speech alone (e.g., pitch, resonance) might shape listeners’ impressions of “masculinity” or “femininity.” In effect, we are looking at one dimension of how gendered signals can emerge—even among attorneys of the same gender—beyond simply classifying a lawyer as male or female. We do not dispute the prior literature’s demonstration that gender matters at the Court. Instead, we contribute a more granular question: How much do subjective impressions of an advocate’s voice—when they utter the identical words—predict case outcomes, above and beyond the broader male/female attorney effect?

Hence, although existing studies have addressed gender’s role in Supreme Court advocacy, none, to our knowledge, have systematically examined how differences in perceived vocal traits (despite identical phrasing) correlate with real vote and win/loss patterns. By spotlighting that narrower factor, we capture a dimension of extralegal influence—voice-based snap judgments—that operates at a level of detail previous “direct effects” research has not isolated.

Masculinity is a quality or set of practices that is stereotypically, though not exclusively, connected with men. Women may engage in masculine practices equally as often, although such practices are usually either not noticed or censured. The performative nature of “masculinity” makes possible the existence of non-masculine men and masculine women (Kiesling, 2007; Eckert & McConnell-Ginet, 2003; Butler, 1990; Kessler & McKenna, 1978). Different cultures may also construct different notions of masculinity that are reflected in stereotypical ways of talking and thinking about men and masculinity.

The following sections identify three channels for the presence of these correlations. The next section investigates the role of judicial ideology and further assuages concerns about omitted case characteristics. The section after that investigates the role of firms and the potential channel of information and taste in selecting lawyers with masculine voices.

5. Why Does Perceived Masculinity Predict SCOTUS Outcomes: The Role of Justices

5.1. Is Voice Masculinity a Response to Case Weakness?

Pre-argument Characteristics and Case Outcomes.

Note. Adds variables capturing lower-court or pre-argument details (e.g., whether the lower court was reversed, the presence of dissent, circuit fixed effects) to see if they weaken the perceived-masculinity effect. Linear probability models analyzing the correlation between perceptions of masculinity and Supreme Court case outcomes, controlling for other perceptions (of the lawyers’ aggressiveness, attractiveness, confidence, intelligence, trustworthiness, and winningness), pre-argument characteristics of the case included in the Supreme Court Database, as well as Circuit of origin fixed effects, topic fixed effects, and lawyer fixed effects. Standard errors are clustered by case. *,**, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

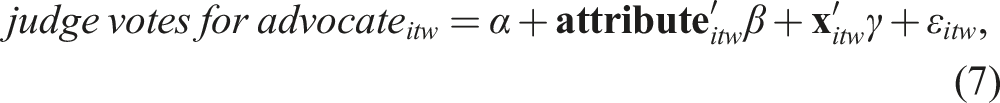

5.2. Do Justices Differ in How Their Votes Correlate with Masculine Voices?

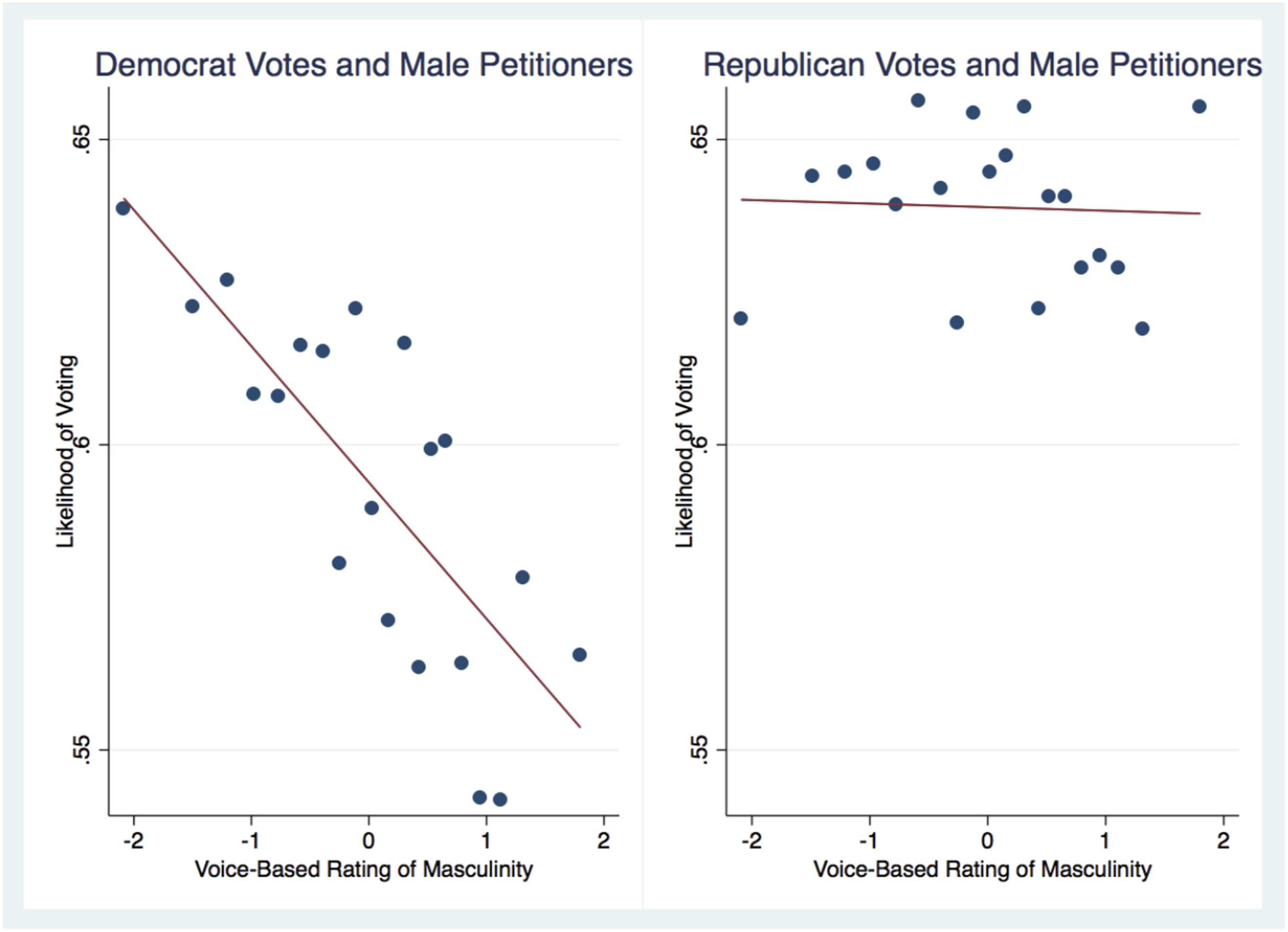

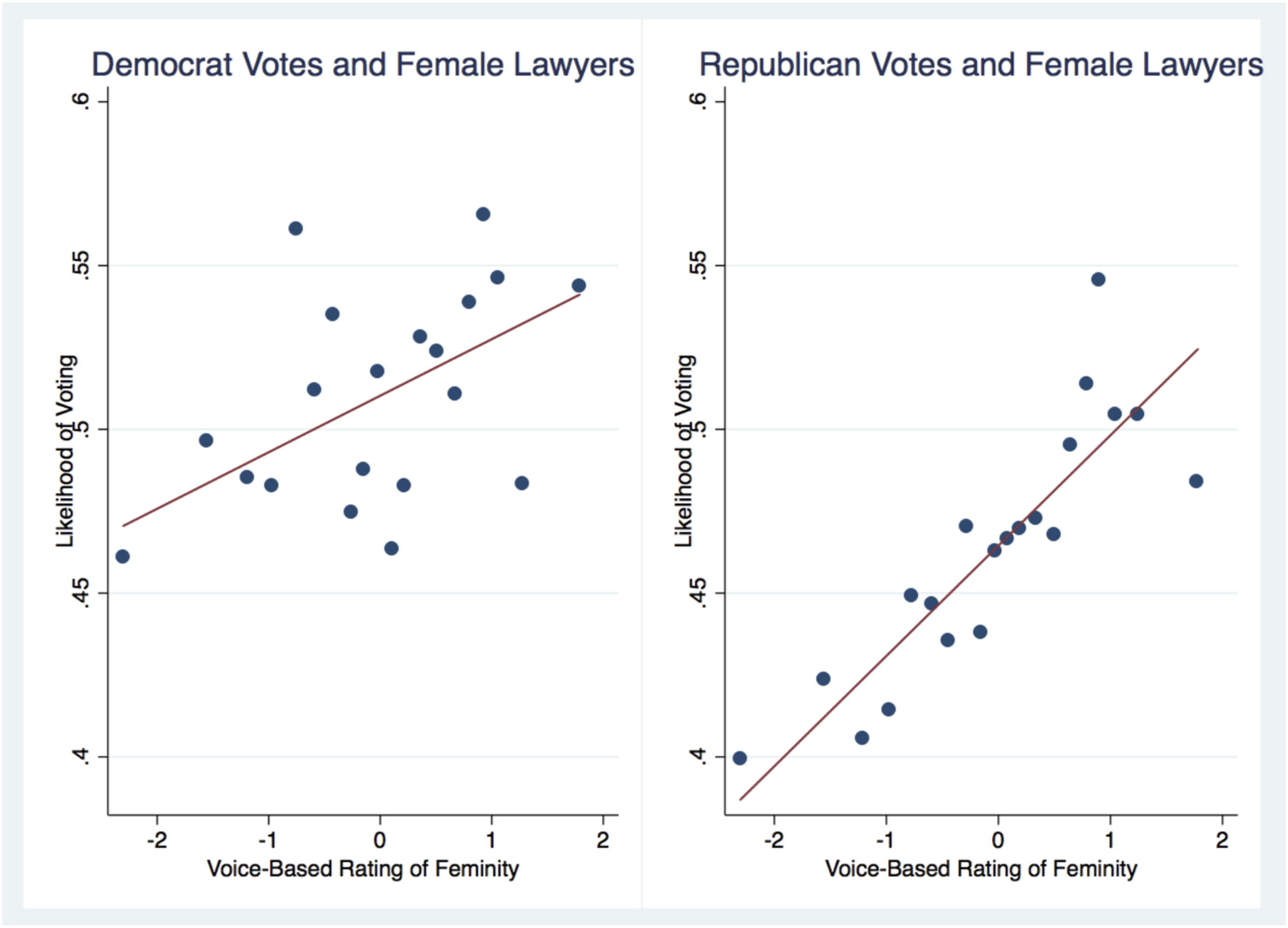

Votes of Democrats, but not Republicans, are negatively associated with perceived masculinity (Figure 6). The higher intercept for Republicans shows that Republicans favor male petitioners more than Democrats do (Szmer et al., 2010). The slope on the left side indicates that Democrats disfavor masculine males relative to less-masculine males, and the lack of a gradient for Republicans indicates that the adverse outcome for masculine males can be entirely attributed to Justices appointed by Democrats. All analyses in this sub-section regress:

Political party and response to masculinity (p < .01). Note. Shows how Justices of different party of appointment respond to male petitioners’ perceived masculinity (X-axis). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Y-axis measures predicted probability of Justice voting in favor.

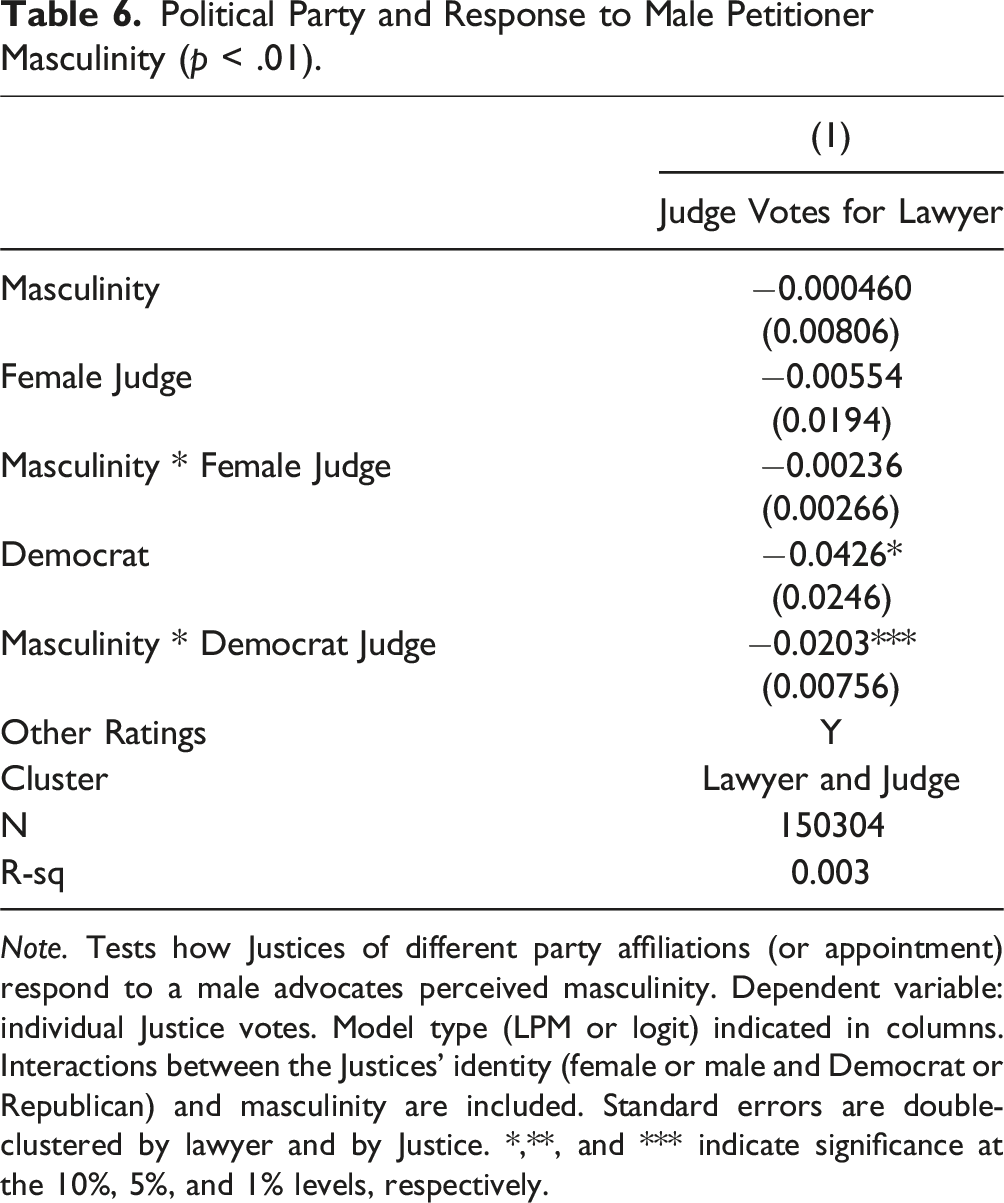

Political Party and Response to Male Petitioner Masculinity (p < .01).

Note. Tests how Justices of different party affiliations (or appointment) respond to a male advocate’s perceived masculinity. Dependent variable: individual Justice votes. Model type (LPM or logit) indicated in columns. Interactions between the Justices’ identity (female or male and Democrat or Republican) and masculinity are included. Standard errors are double-clustered by lawyer and by Justice. *, **, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

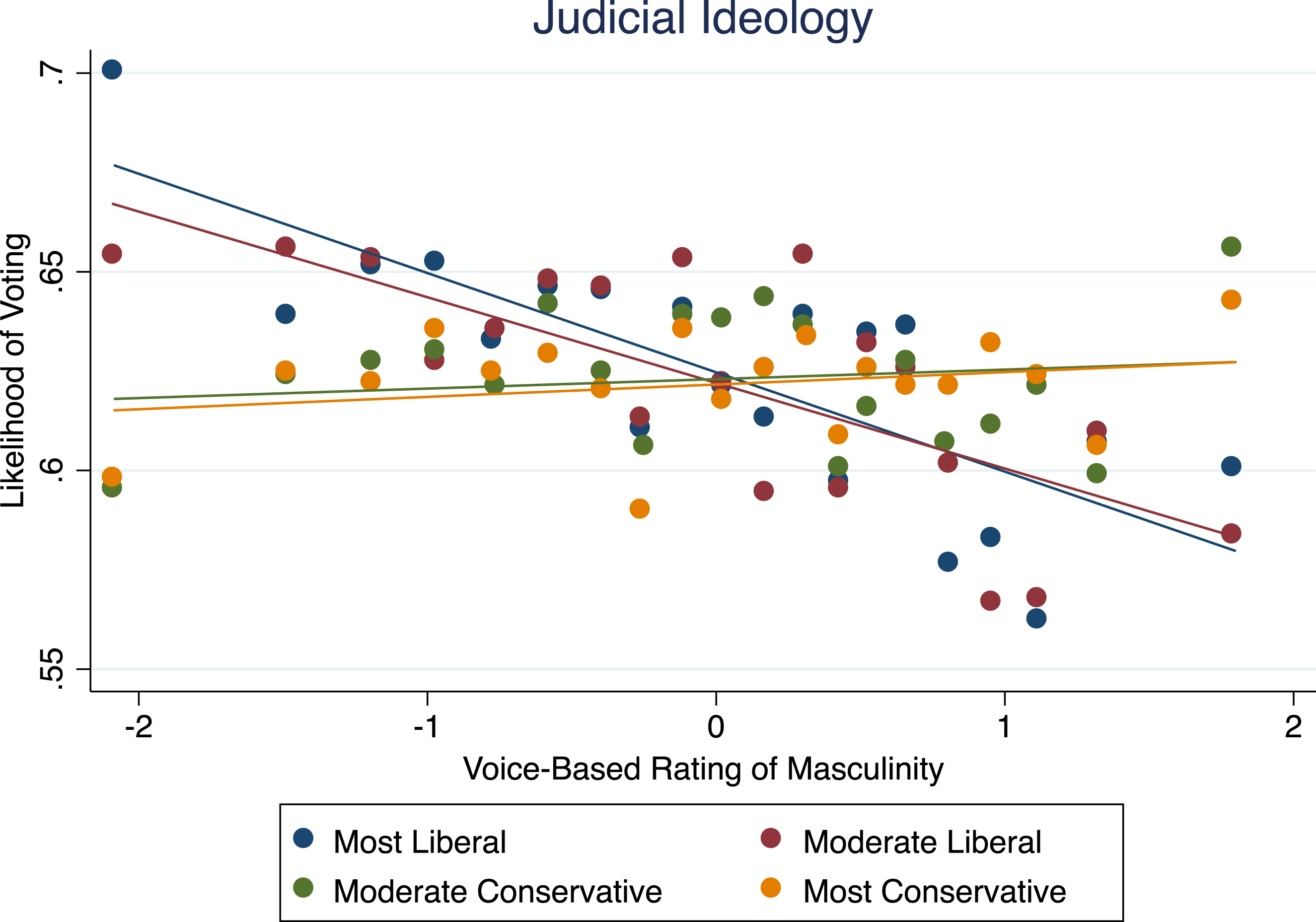

If we replace party with Justices’ ideology scores (Martin & Quinn, 2002) or include both together, we find that ideology (p < .01) is more important than party, which is rendered insignificant (Figure 7).

Judicial ideology and response to male petitioner masculinity (p < .01). Note. Shows how Justices of different ideological leanings respond to male petitioners’ perceived masculinity (X-axis). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Y-axis measures predicted probability of Justice voting in favor. Judges are grouped by quartile of ideology.

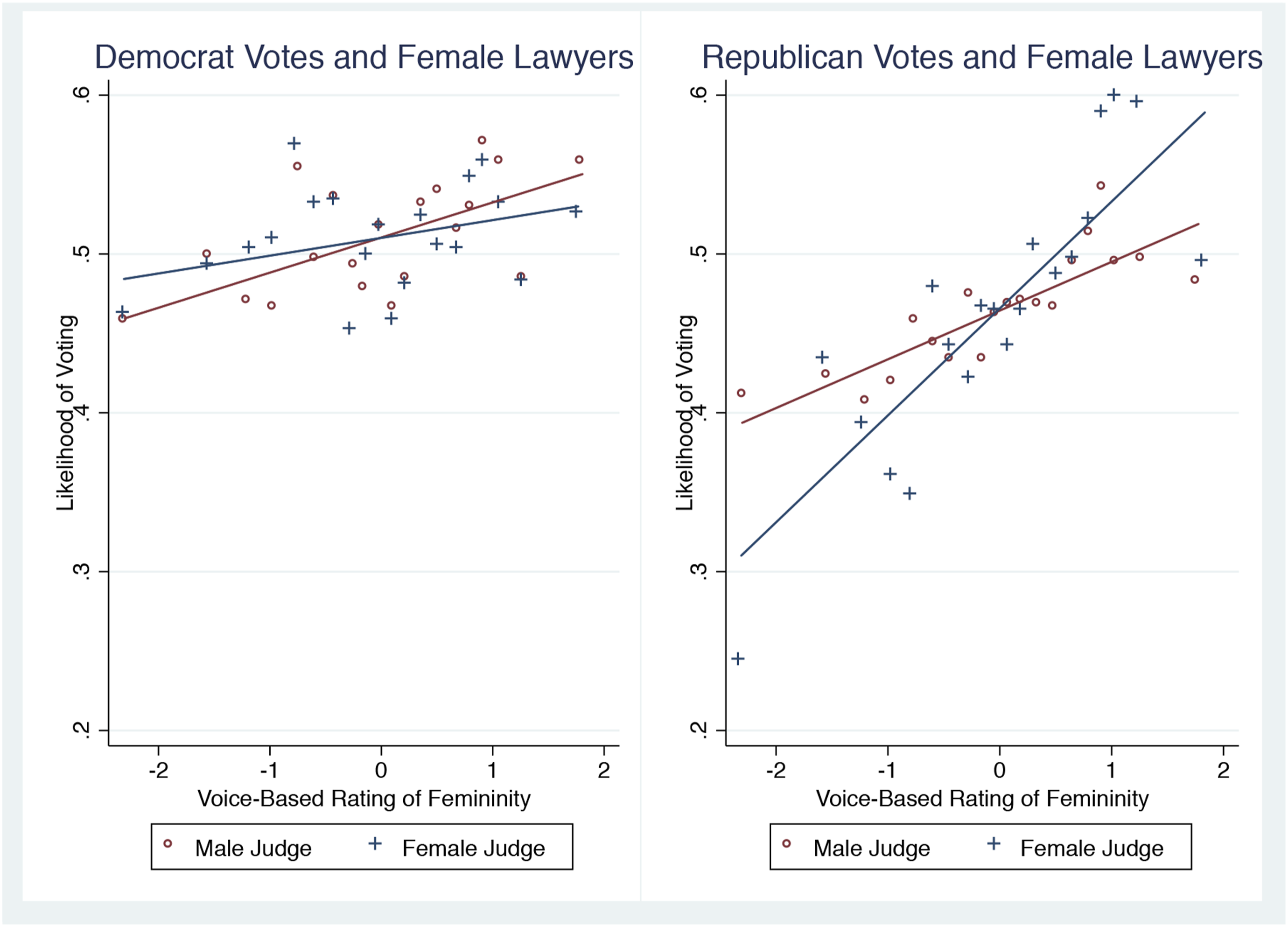

We also find that Republicans vote for feminine-sounding females more than Democrats do (Figure 8). The difference is significant at p < .1. The basic pattern is again robust to considering the Justice’s gender (Figure 9).

Political party and response to femininity (p < .1). Note. Shows how Justices of different party of appointment respond to female advocates’ perceived femininity (X-axis). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Y-axis measures predicted probability of Justice voting in favor.

Political party and response to femininity (p < .01). Note. Shows how Justices of different gender and different party of appointment respond to female advocates’ perceived femininity (X-axis). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Y-axis measures predicted probability of Justice voting in favor.

5.3. How Does Predictive Power Compare with Previous Best Multivariate Models?

Final Feature Weights (Katz et al., 2015).

Note. These random-forest feature importances show how strongly each variable contributes to predicting the Supreme Court’s overall affirm versus reverse decisions in Katz et al.’s model. Not a regression table. We adopt this “best predictor” approach as a comprehensive control (see Section 5.3). Features range from lower-court data to justice-level ideology.

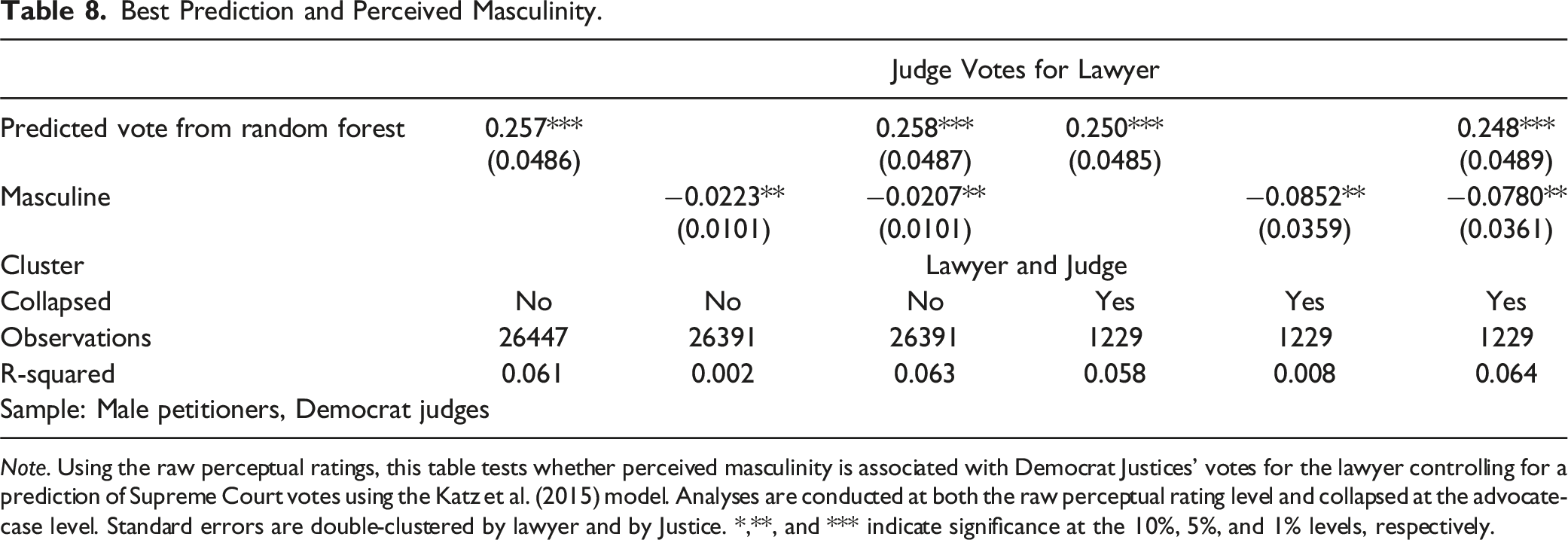

We use this prediction of Justices’ votes. Intuitively, if the predictive model were perfect, i.e., we essentially control for the correct decision or true case quality, then no extraneous factors should matter. We therefore ask two questions: Does perceived masculinity have an explanatory effect above and beyond this best predictor? If so, by how much does the R-squared improve?
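The second question, how much the R-squared improves, can be illustrated by comparing two nested linear fits: one using only the model's predicted probability and one that adds perceived masculinity. The sketch below is a hedged illustration using plain least squares solved from the normal equations; the variable names (`katz_prediction`, `masculinity`, `vote`) and all numbers are hypothetical, and nothing here reproduces the random forest itself.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def r_squared(columns, y):
    """R-squared from an OLS fit of y on an intercept plus the given regressor columns."""
    rows = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(bj * rj for bj, rj in zip(beta, r)) for r in rows]
    y_bar = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot

# Hypothetical toy data: model-predicted probability, masculinity rating, observed vote.
katz_prediction = [0.8, 0.6, 0.7, 0.3, 0.4, 0.5, 0.9, 0.2]
masculinity = [5, 3, 6, 4, 2, 6, 3, 5]
vote = [1, 1, 0, 0, 1, 0, 1, 0]

r2_base = r_squared([katz_prediction], vote)
r2_full = r_squared([katz_prediction, masculinity], vote)
r2_gain = r2_full - r2_base  # in-sample gain from adding masculinity
```

In-sample R-squared can never fall when a regressor is added, so the size of the gain (and the significance of the added coefficient) is what carries information, not its sign.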

Best Prediction and Perceived Masculinity.

Note. Using the raw perceptual ratings, this table tests whether perceived masculinity is associated with Democrat Justices’ votes for the lawyer controlling for a prediction of Supreme Court votes using the Katz et al. (2015) model. Analyses are conducted at both the raw perceptual rating level and collapsed at the advocate-case level. Standard errors are double-clustered by lawyer and by Justice. *,**, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

Why does perceived masculinity do so well relative to the best prediction model? Previous models perform best on cases on which the Court was in agreement (9–0) and perform worst on cases with high levels of disagreement among members of the Court (5–4) (Katz et al., 2017). In fact, in close cases affirming the lower court, the model predicts the outcome with only 25% accuracy. 8 The interpretation that perceived masculinity improves prediction accuracy in hard cases is consistent with perceived masculinity being relevant to the swing voter.

5.4. How Much Do Perceptions Explain Beyond Objective Acoustic Data?

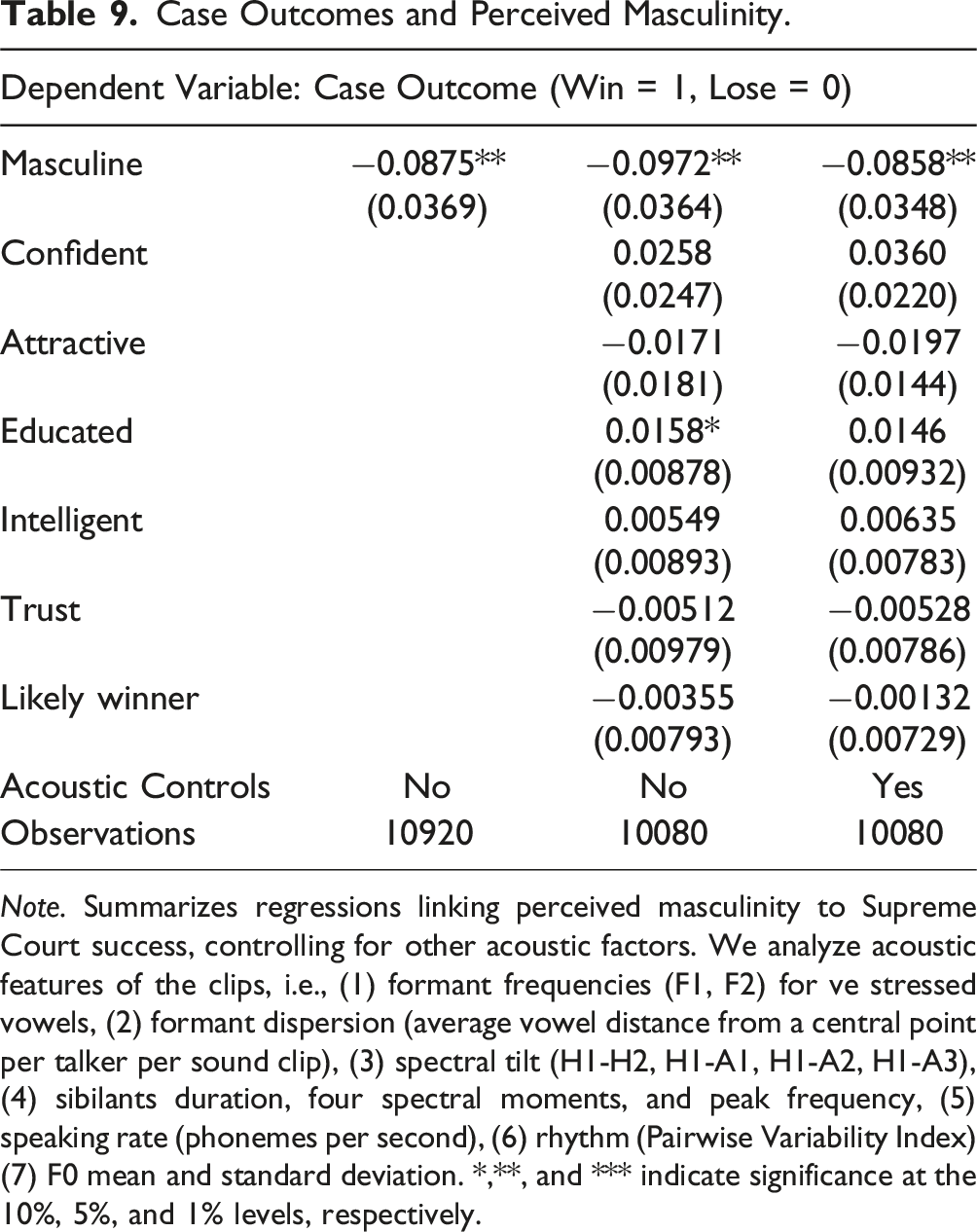

A contemporaneous study finds that lawyers’ pitch is associated with Supreme Court outcomes (Dietrich et al., 2019). Here, we analyze acoustic features of the clips using a set of phonetic traits deemed important by the phonology literature: (1) formant frequencies (F1, F2) for five stressed vowels (/i, ɪ, ɔ, ej, ʌ/); (2) formant dispersion (average vowel distance from a central point per talker per sound clip); (3) spectral tilt (H1-H2, H1-A1, H1-A2, H1-A3); (4) sibilant duration, four spectral moments, and peak frequency; (5) speaking rate (phonemes per second); (6) rhythm (Pairwise Variability Index); (7) F0 mean and standard deviation.
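Two of the listed features are simple enough to sketch directly. The example below computes speaking rate and a Pairwise Variability Index from segment durations. It assumes the normalized variant of the index (mean absolute difference between successive durations, scaled by their mean and multiplied by 100); the text does not say whether the raw or normalized variant was used, and the durations are hypothetical.

```python
def speaking_rate(n_phonemes, duration_seconds):
    """Speaking rate in phonemes per second."""
    return n_phonemes / duration_seconds

def normalized_pvi(durations):
    """Normalized Pairwise Variability Index over successive segment durations."""
    pairs = list(zip(durations, durations[1:]))
    # Each term is the absolute difference of neighbors divided by their mean duration.
    terms = [abs(a - b) / ((a + b) / 2) for a, b in pairs]
    return 100 * sum(terms) / len(terms)

# Hypothetical vowel durations (seconds) from one clip.
vowel_durations = [0.08, 0.12, 0.07, 0.15, 0.10]
clip_rate = speaking_rate(24, 2.0)          # 24 phonemes in a 2-second clip
clip_rhythm = normalized_pvi(vowel_durations)
```

A perfectly even sequence of durations yields an index of zero, and larger values indicate more alternation between long and short segments.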

Case Outcomes and Perceived Masculinity.

Note. Summarizes regressions linking perceived masculinity to Supreme Court success, controlling for other acoustic factors. We analyze acoustic features of the clips, i.e., (1) formant frequencies (F1, F2) for five stressed vowels, (2) formant dispersion (average vowel distance from a central point per talker per sound clip), (3) spectral tilt (H1-H2, H1-A1, H1-A2, H1-A3), (4) sibilant duration, four spectral moments, and peak frequency, (5) speaking rate (phonemes per second), (6) rhythm (Pairwise Variability Index), (7) F0 mean and standard deviation. *, **, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

6. Why Does Perceived Masculinity Predict SCOTUS Outcomes: The Role of Firms

6.1. Is Masculinity of Voice Differentially Correlated with Court Outcomes Across Firms?

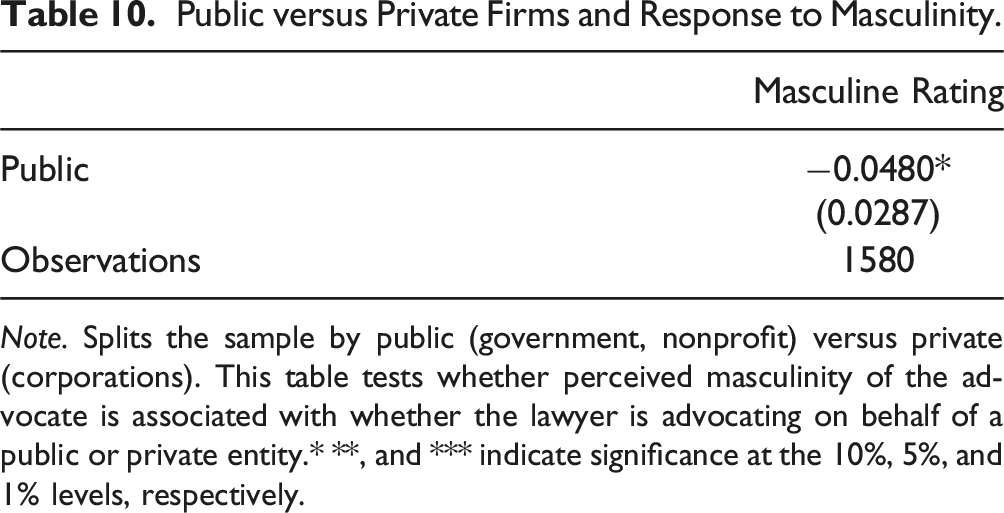

We now address the role of firm selection of lawyers with masculine voices more directly using the available data. In the model, industries that value d > 0 more highly should observe a steeper correlation between perceived masculinity and losses because these firms have a greater relative taste for M lawyers than for F lawyers.

Public versus Private Firms and Response to Masculinity.

Note. Splits the sample by public (government, nonprofit) versus private (corporations). This table tests whether perceived masculinity of the advocate is associated with whether the lawyer is advocating on behalf of a public or private entity. *, **, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

Public versus private firms and response to masculinity. Note. Illustrates how attorneys arguing on behalf of the government or a private firm fare given different ranges of perceived vocal masculinity. X-axis = perceived masculinity/femininity (from lower to higher). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Y-axis = predicted probability of winning.

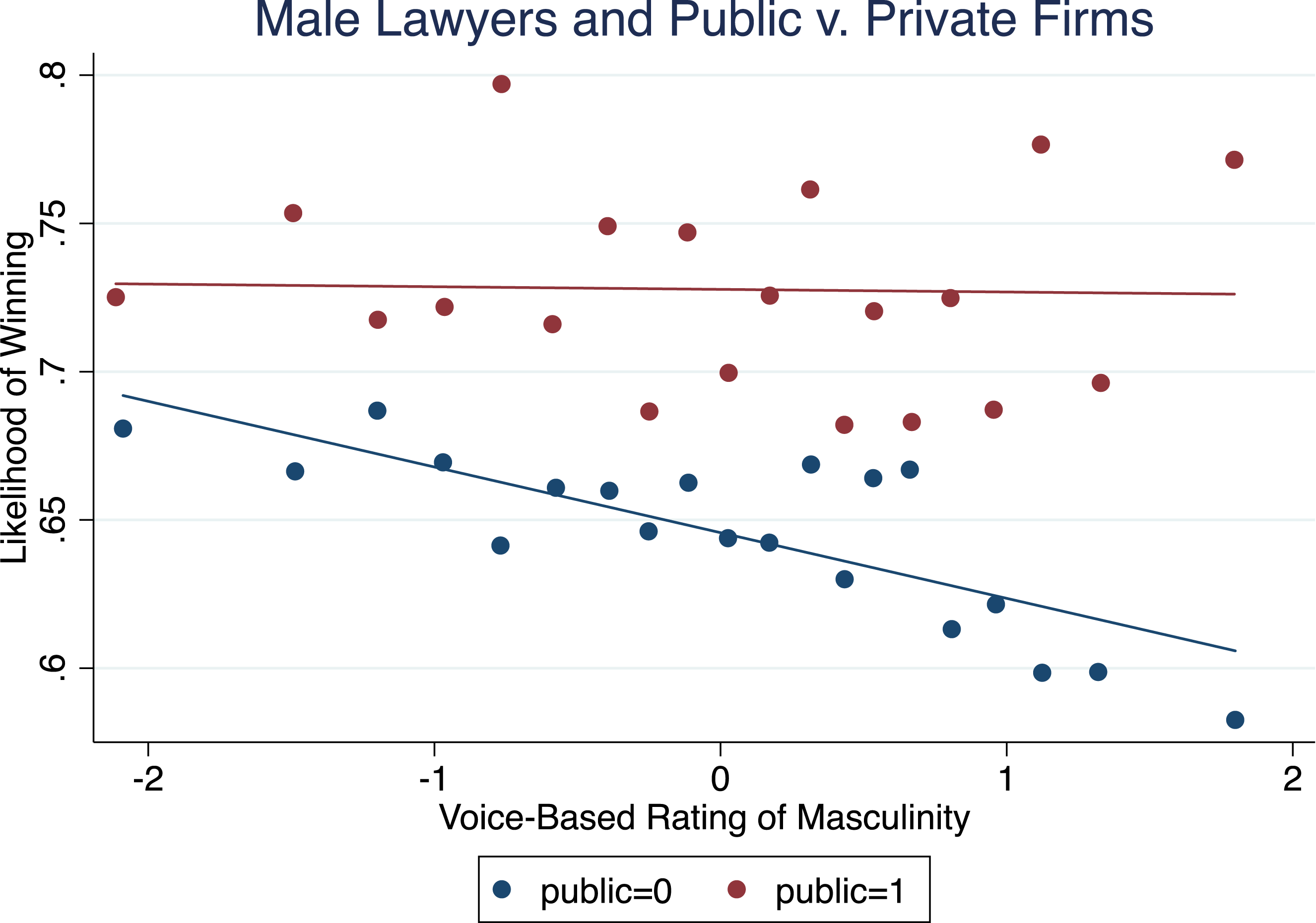

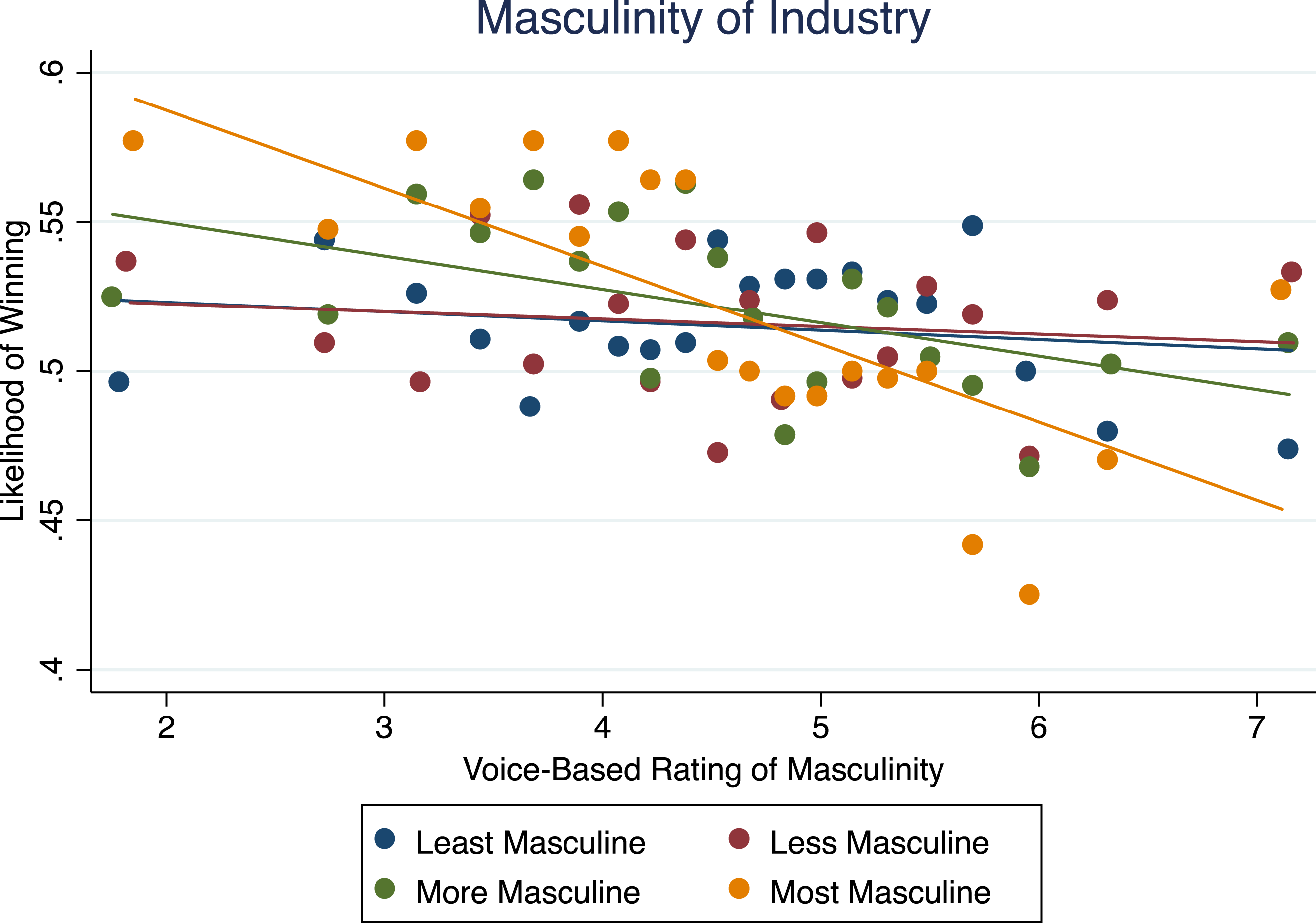

Masculinity of industry and response to masculinity (p < .05). Note. We classify petitioner industries by their average perceived masculinity and plot the correlation between perceived masculinity (X-axis) with case win rates (Y-axis). Each bin on the X-axis represents an equal-sized subset of lawyers by rating. Data suggest that in industries with the highest overall “masculine-sounding” lawyers, perceived masculinity negatively correlates with success. Section 6.1 details these groupings.

To be more systematic, we next construct an average masculinity rating for every class of petitioners. Perceived masculinity is negatively correlated with outcomes for industries that have the most masculine-sounding lawyers. Figure 11 presents a gradient for four industry quartiles according to masculinity. The steepest gradient is for the most masculine and the flattest for the least masculine, which is consistent with differences in d > 0. 9

6.2. Information and Incentives

We now turn to our de-biasing experiment. Let $y_{ij}$ represent attitudes in treatment $j = 1, 2, 3, 4$ and recording $i$. Let $F_j$ be an indicator variable that takes a value of 1 if raters receive information about whether the attorney on the recording won the case, and 0 otherwise. $I_j$ indicates whether the raters were given an incentive to choose correctly ($I_j = 1$) or not ($I_j = 0$).

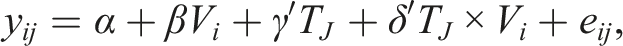

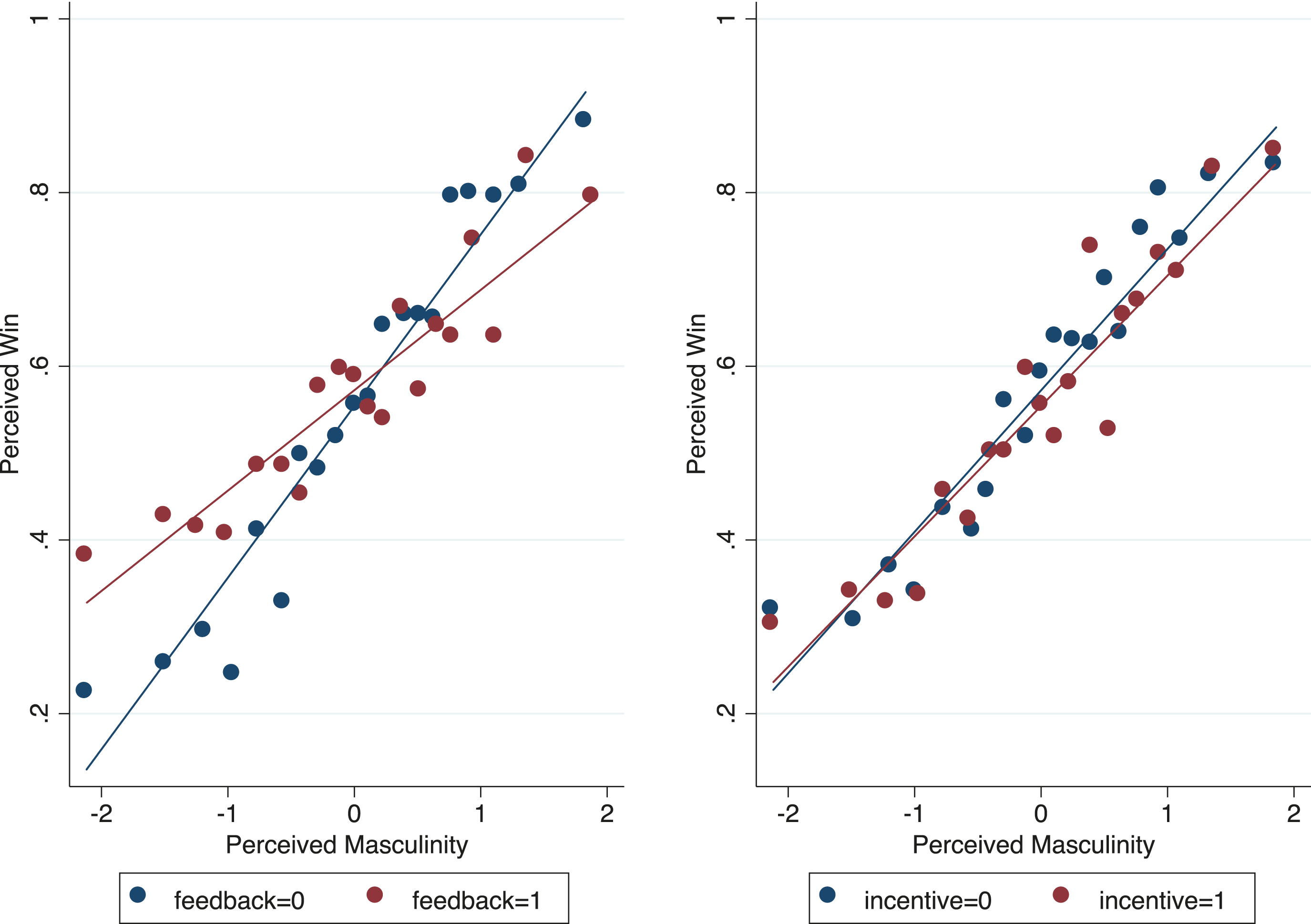

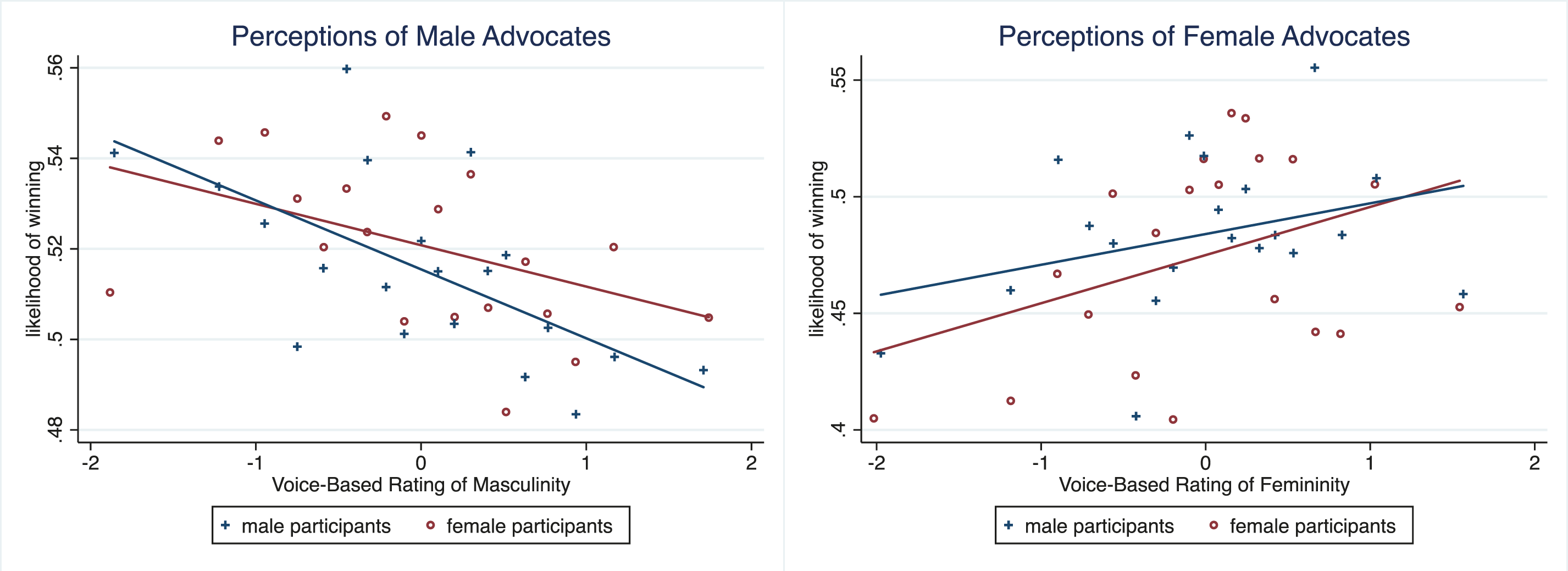

The estimation equation takes the following form:

Figure 12 shows that information reduces the correlation between perceived masculinity and perceived win by 40% (p < .05). In fact, all ratings’ positive correlations with perceived wins are affected by information but not incentives. Recall that none of the other ratings predicted actual wins, but raters generally associated all the attributes with a greater likelihood of winning.

Feedback (p < .01), incentives. Note. De-biasing Experiment: Incentives and Feedback. Each binscatter line depicts the change in correlation between perceived masculinity and perceived win rate under different experimental conditions: (1) No Feedback (regardless of incentive), (2) Feedback (regardless of incentive), (3) No Incentive (regardless of feedback), (4) Incentive (regardless of feedback). Incentives = small bonus for accurate guesses. Feedback = telling participants the actual outcome after each rating. See Section 6.2 for the 2 × 2 design.
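One way to read the 2 × 2 design is as a comparison of slopes across the four treatment cells: within each cell, regress perceived win on perceived masculinity, then contrast the slopes by feedback, by incentive, and by their interaction. The sketch below illustrates that logic only. It is not the paper's estimating equation, the function names are hypothetical, and the synthetic slopes are made-up values rather than the experiment's estimates.

```python
def slope(x, y):
    """OLS slope of y on x with an intercept."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    return sxy / sxx

def factorial_contrasts(cells):
    """cells maps (feedback, incentive) to (masculinity ratings, perceived-win ratings)."""
    s = {key: slope(*data) for key, data in cells.items()}
    feedback_effect = (s[1, 0] + s[1, 1]) / 2 - (s[0, 0] + s[0, 1]) / 2
    incentive_effect = (s[0, 1] + s[1, 1]) / 2 - (s[0, 0] + s[1, 0]) / 2
    # Interaction: does the feedback effect differ when incentives are present?
    interaction = (s[1, 1] - s[0, 1]) - (s[1, 0] - s[0, 0])
    return s, feedback_effect, incentive_effect, interaction

# Synthetic data with made-up slopes per cell, for illustration only.
scale = [1, 2, 3, 4, 5, 6, 7]
assumed = {(0, 0): 0.10, (1, 0): 0.06, (0, 1): 0.10, (1, 1): 0.04}
cells = {key: (scale, [b * v for v in scale]) for key, b in assumed.items()}

slopes, feedback_effect, incentive_effect, interaction = factorial_contrasts(cells)
```

A negative feedback contrast corresponds to information weakening the link between perceived masculinity and perceived win, and a negative interaction corresponds to incentives mattering more once feedback is also provided.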

When we fully interact the treatments in Figure 13, we observe that incentives further reduce the correlation. Recall that incentives to choose correctly erode the effect of taste on choices ($\pi_F - \pi_M > d/\alpha$). Providing incentives should bring $d \to 0$. The existence of the effect (p < .05, 30% of association with feedback) also means $d > 0$ (else $d/\alpha$ would be 0 regardless of the size of $\alpha$).

Incentives (p < .05) with feedback. Note. De-biasing Experiment: Incentives and Feedback. Each binscatter line depicts the change in correlation between perceived masculinity and perceived win rate under different experimental conditions: (1) No Feedback + No Incentive, (2) Feedback + No Incentive, (3) No Feedback + Incentive, (4) Feedback + Incentive. Incentives = small bonus for accurate guesses. Feedback = telling participants the actual outcome after each rating. See Section 6.2 for the 2 × 2 design.
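The logic of the 2 × 2 comparison can be illustrated with a short Python sketch. It computes the slope of perceived win on perceived masculinity within each of the four cells, decomposes those slopes into feedback, incentive, and interaction contrasts, and expresses a treated slope as a proportional reduction relative to the control cell. The row layout and all function names are assumptions made for illustration; this is not the authors' estimation equation or code.

```python
from statistics import mean


def slope(pairs):
    """Least-squares slope of perceived win on perceived masculinity."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("no variation in perceived masculinity")
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx


def cell_slopes(rows):
    """rows: (feedback, incentive, perceived_masculinity, perceived_win).

    Returns {(feedback, incentive): slope} for each cell of the design.
    """
    cells = {}
    for f, i, masc, win in rows:
        cells.setdefault((f, i), []).append((masc, win))
    return {cell: slope(pairs) for cell, pairs in cells.items()}


def factorial_contrasts(s):
    """Saturated 2 x 2 decomposition of the four cell slopes."""
    base = s[(0, 0)]
    return {
        "baseline": base,
        "feedback": s[(1, 0)] - base,
        "incentive": s[(0, 1)] - base,
        # Extra change when both treatments are applied together.
        "interaction": s[(1, 1)] - s[(1, 0)] - s[(0, 1)] + base,
    }


def proportional_reduction(base, treated):
    """Share of the baseline slope removed by a treatment."""
    return 1 - treated / base
```

Under this reading, a feedback slope that is 60% of the control slope corresponds to a 40% reduction, and a negative interaction term corresponds to incentives reducing the slope further once feedback is present.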

6.3. Whose Perceptions of Masculinity Predict Outcomes?

Regardless of the reason for Justices to vote differently according to voice masculinity, lawyers should adjust, unless another audience, like employers, matters. We now investigate two questions about whether perceptions of voice differ by rater characteristics. First, do certain individuals’ perceptions of masculinity (possibly those of law firm employers) predict court outcomes? Second, do certain individuals (possibly law firm employers) perceive masculine voices to be more likely to win?

We begin by analyzing the raters’ gender to benchmark our findings. Previous studies find that members of the same sex may use certain criteria to rate each other, which differ from those used to rate members of the opposite sex (Babel et al., 2014). Table A.5 shows that partial correlations among perceived attributes of men and women differ when rated by men or by women.

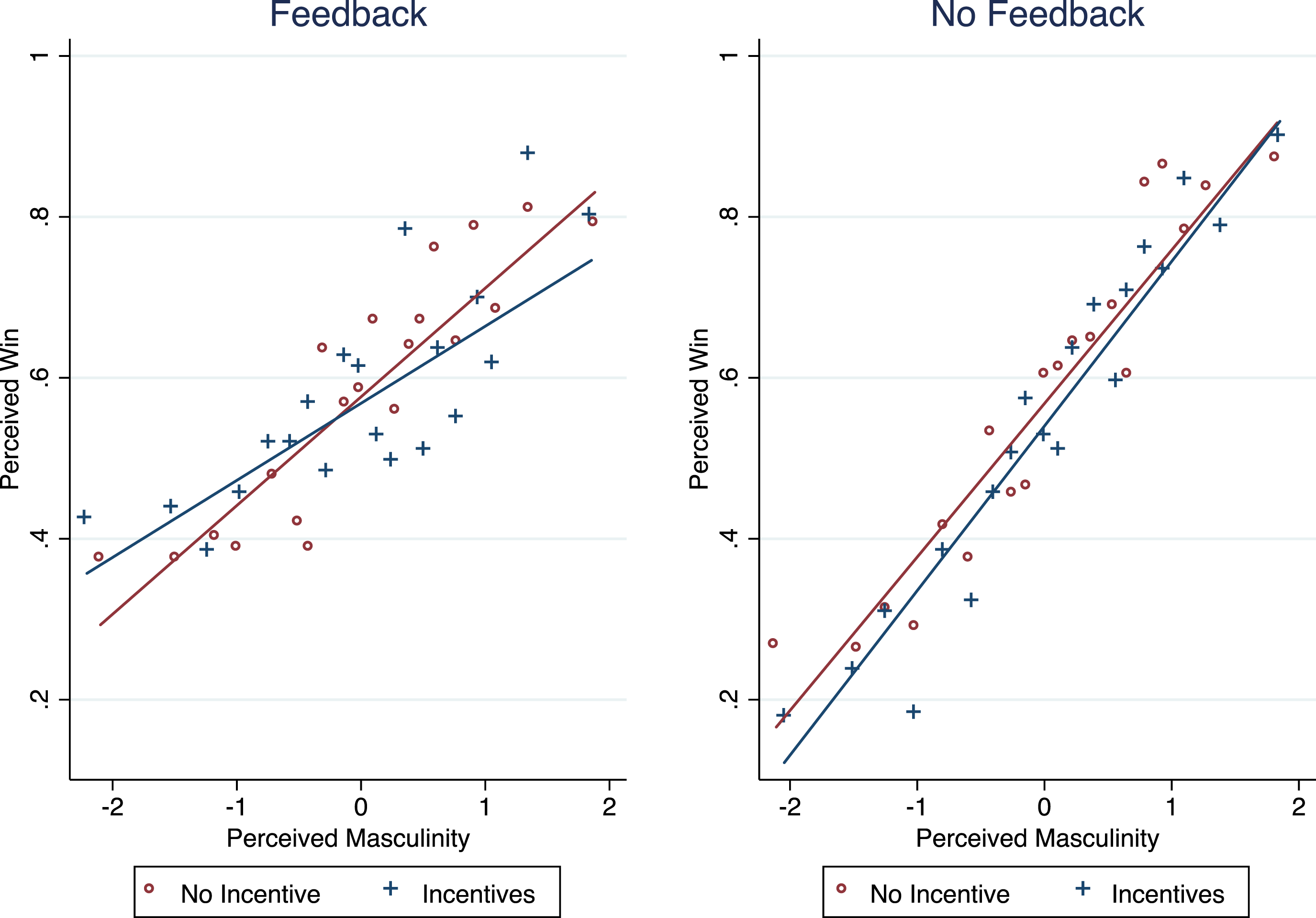

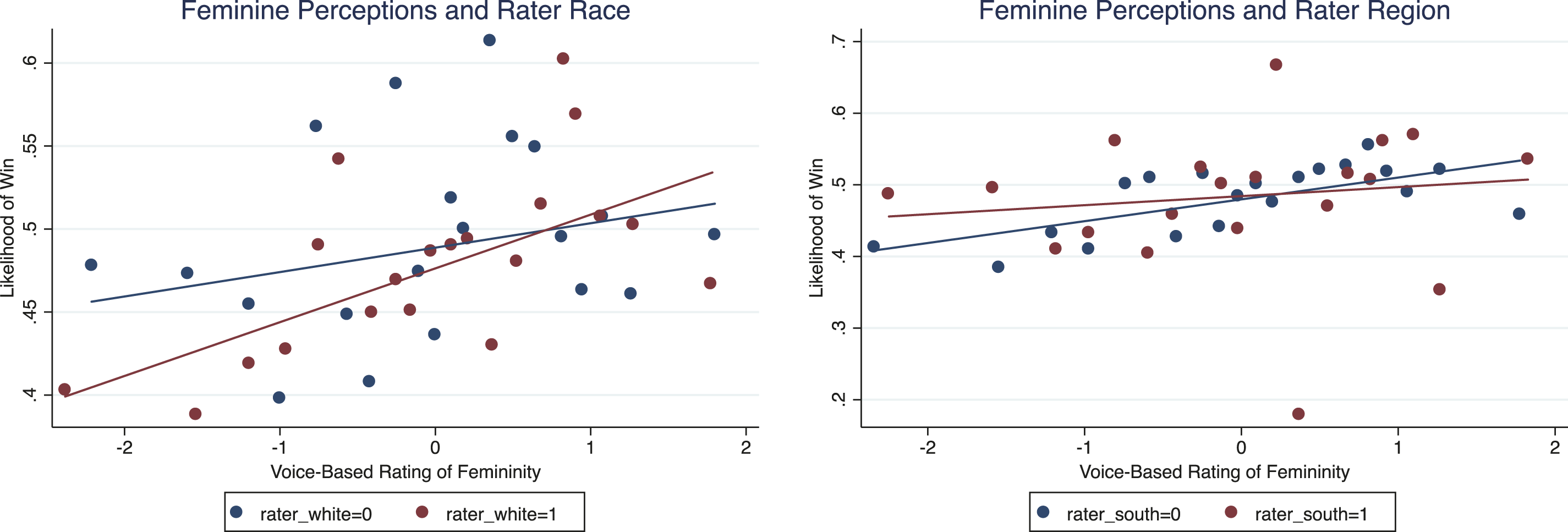

The associations stem more (but not significantly more) from the judgments made by male participants rating male lawyers and female participants rating female lawyers (Figure 14).

Voice-based perceptions and court outcomes by advocate and participant gender. Note. Shows how male versus female raters perceive male versus female advocates, and how these differing perceptions correlate with Court outcomes (for instance, male raters of male advocates versus female raters of female advocates). Y-axis = average share of cases won. Section 6.3 describes the gender match analysis in detail.

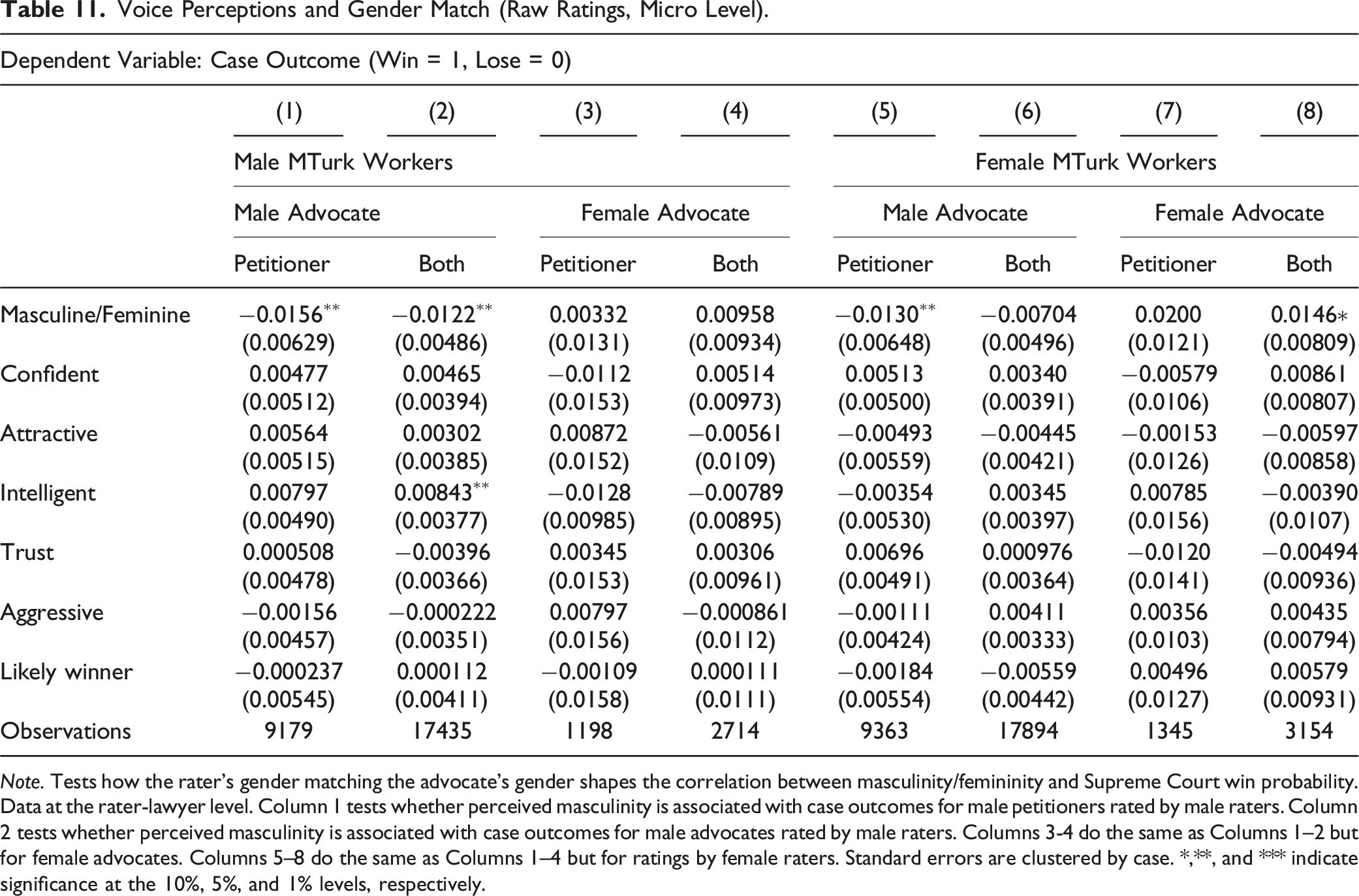

Voice Perceptions and Gender Match (Raw Ratings, Micro Level).

Note. Tests how the rater’s gender matching the advocate’s gender shapes the correlation between masculinity/femininity and Supreme Court win probability. Data at the rater-lawyer level. Column 1 tests whether perceived masculinity is associated with case outcomes for male petitioners rated by male raters. Column 2 tests whether perceived masculinity is associated with case outcomes for male advocates rated by male raters. Columns 3–4 do the same as Columns 1–2 but for female advocates. Columns 5–8 do the same as Columns 1–4 but for ratings by female raters. Standard errors are clustered by case. *, **, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

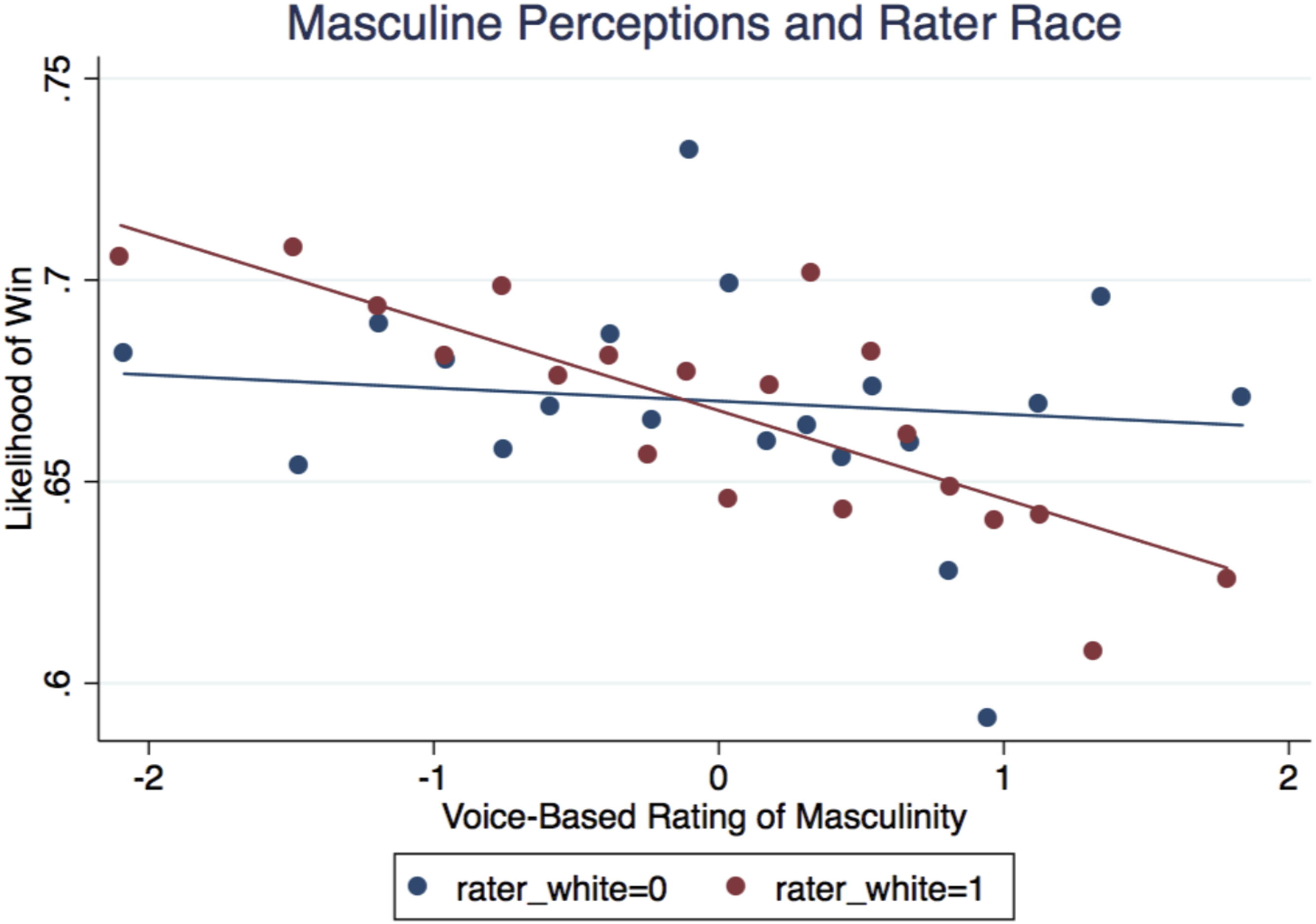

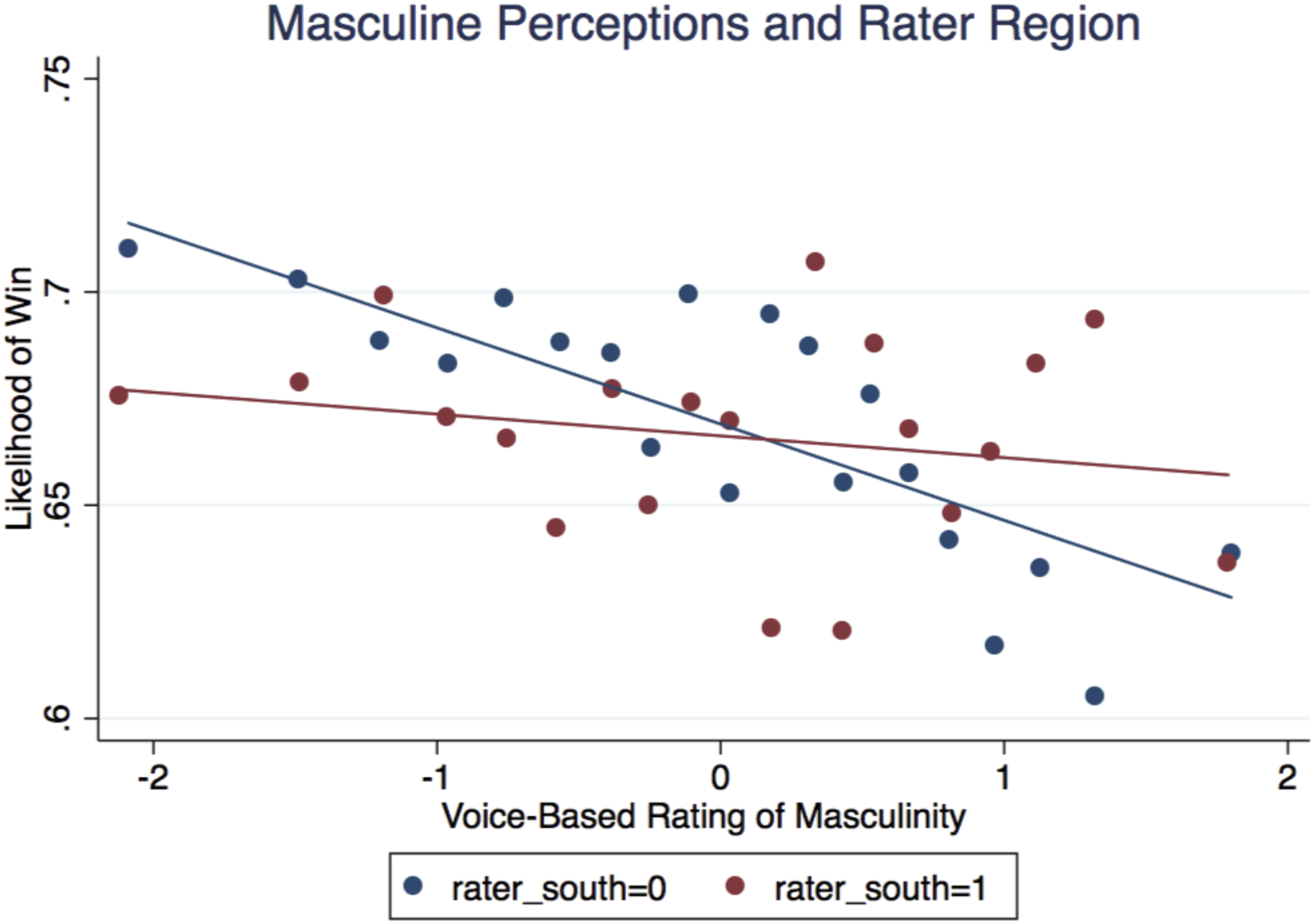

Next, we investigate other demographic characteristics that play a bigger role than gender. Specifically, we observe greater heterogeneity according to the rater’s self-identified race and region (Figures 15 and 16). White raters’ (p < .05) and non-Southerners’ (p < .05) perceptions of masculinity are more significantly predictive of court outcomes than other demographic groups’ perceptions.

If White non-Southerners are more involved in the process of selecting oral advocates, the stronger correlations would be consistent with the important role of the firm in explaining the negative correlation between masculinity and court outcomes.

White raters’ perceptions of masculinity predicted court outcomes (p < .05). Note. Shows how White versus non-White raters perceive male advocates, and how these differing perceptions correlate with Court outcomes. Y-axis = average share of cases won. For more on rater demographic splits, see Section 6.3.

Non-Southerners’ perceptions of masculinity predicted court outcomes (p < .05). Note. Shows how Southerner versus non-Southerner raters perceive male advocates, and how these differing perceptions correlate with Court outcomes. Y-axis = average share of cases won. For more on rater demographic splits, see Section 6.3.

Notably, White raters’ and non-Southerners’ perceptions of femininity are also more predictive of court outcomes, though the difference is less salient than for masculinity (Figure 17).

White non-Southerners’ perceptions of femininity also predict court outcomes (p > .1). Note. Shows how White versus non-White raters and Southerner versus non-Southerner raters perceive female advocates, and how these differing perceptions correlate with Court outcomes. Y-axis = average share of cases won. For more on rater demographic splits, see Section 6.3.

When we examine raters’ perceptions of winning and masculinity, we see that poor individuals (p < .05) and non-Whites (p < .05) are significantly less likely to correlate masculinity with winning. Poor individuals (p < .05), males (p < .1), and the less-educated (p < .05) are significantly less likely to correlate femininity with winning. If these individuals are less likely to be selecting oral advocates, the results are consistent with the role of firms in selecting masculine-sounding males as Supreme Court oral advocates. Perceptions of masculinity are subjective, and not everyone perceives the same voice as masculine. Different stereotypes persist, which may drive behavioral responses.

6.4. What Are Lawyers Doing Over Time?

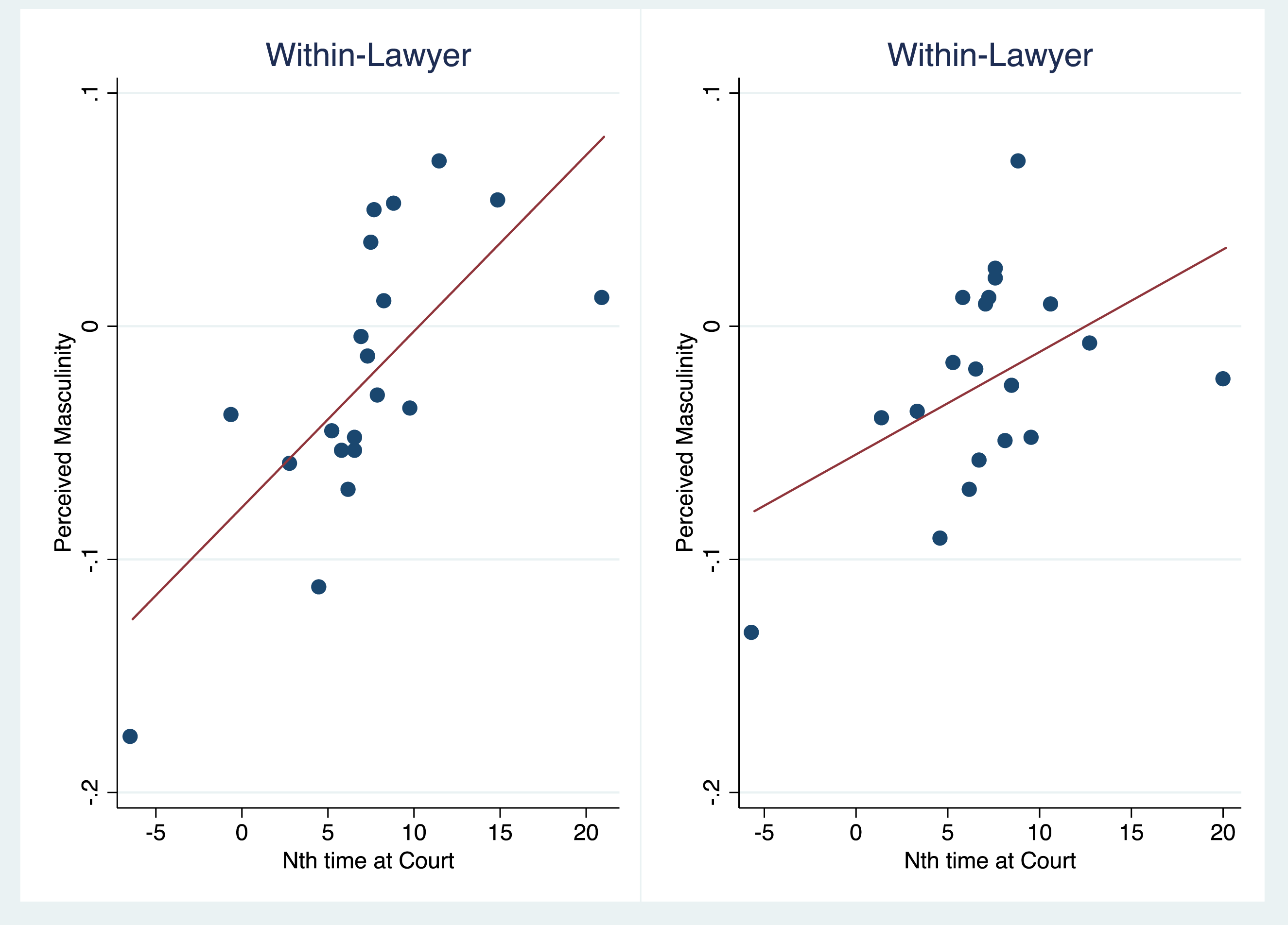

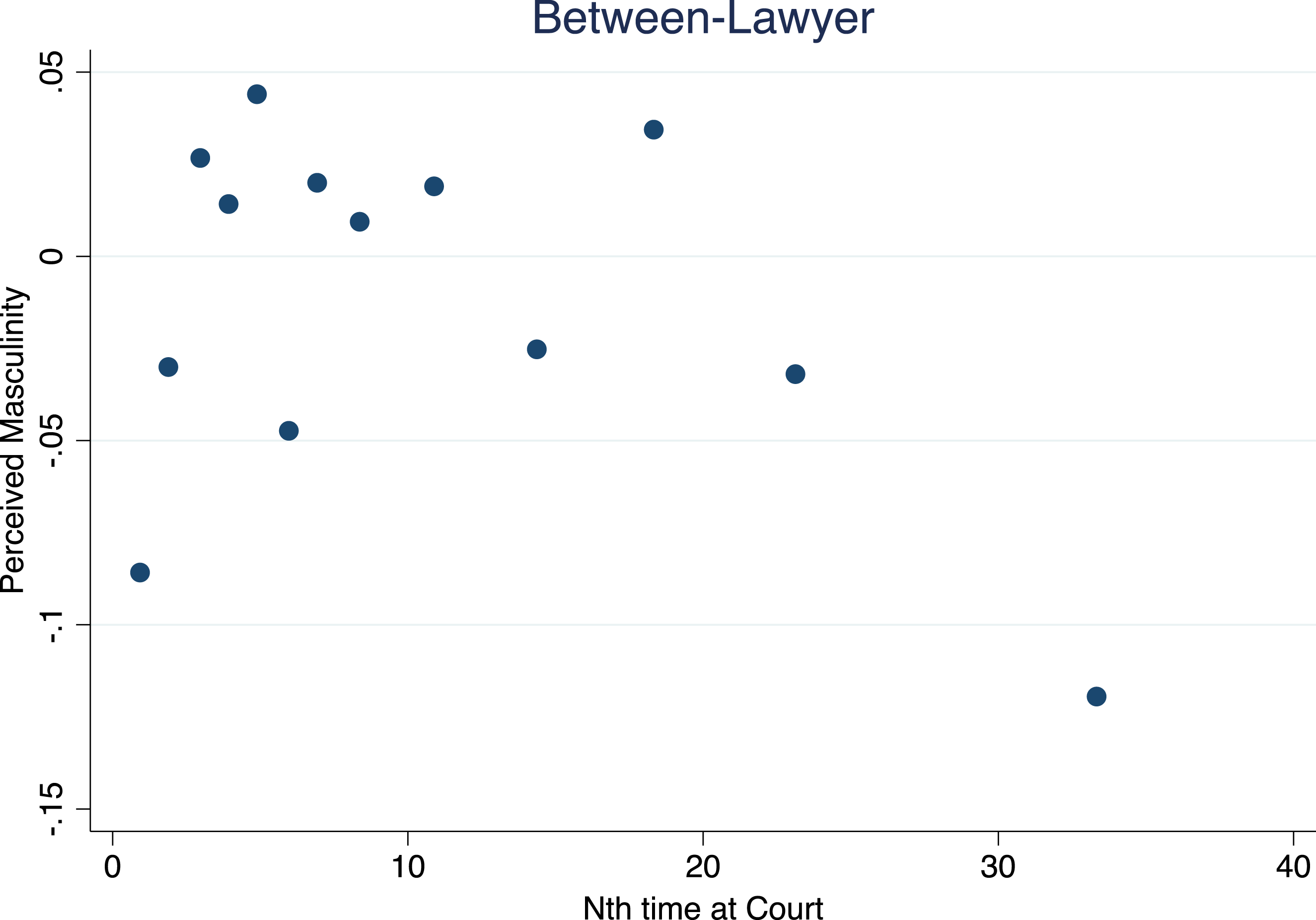

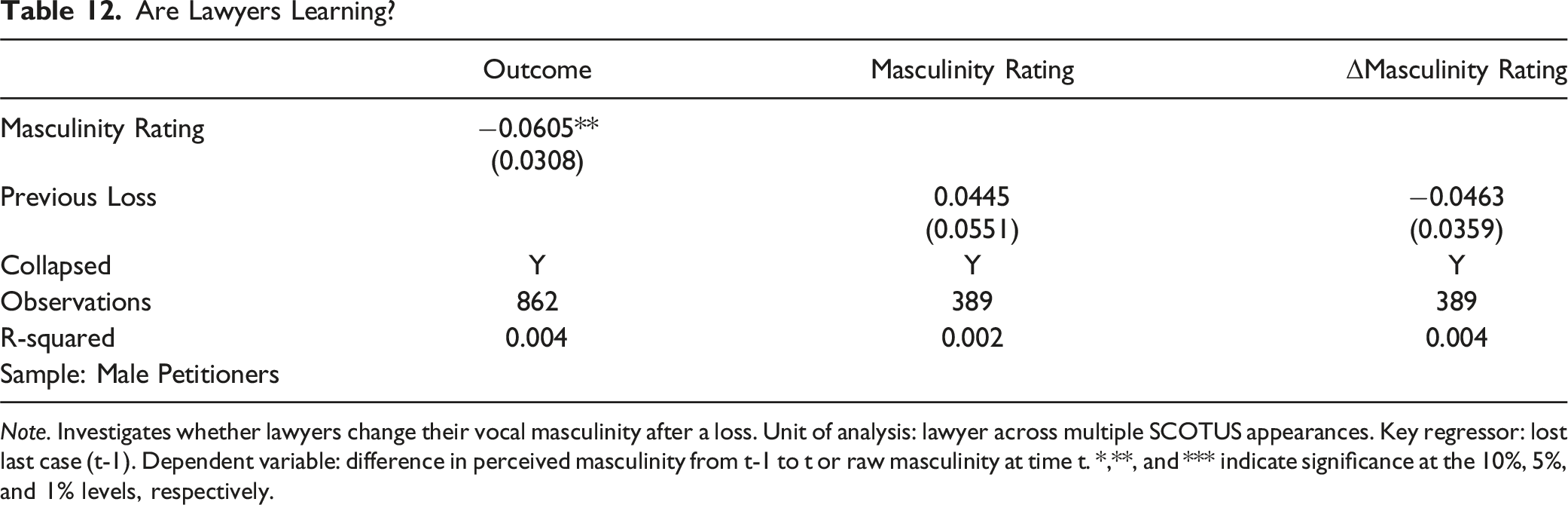

Voice appears to be mutable, and perceived masculinity is negatively correlated with winning in the Supreme Court. Do lawyers learn over time to make their voice less masculine? On the contrary, lawyers’ perceived masculinity increases over time as they appear before the SCOTUS more often. This is surprising, since it suggests that lawyers are not learning and instead adapt their voice in the opposite direction. Figure 18 visualizes how individual lawyers become more masculine over time. The left side presents the raw correlation, residualizing only on lawyer fixed effects. The right side also controls for years since law school graduation. Figure 19 presents the cross-sectional variation without lawyer fixed effects.

Masculine ratings and order of appearance. Note. We track how advocates’ perceived masculinity changes across multiple appearances before the SCOTUS. X-axis = ordinal index of each argument (first, second, third appearance, etc.). Y-axis = average perceived masculinity. These graphics are residualized on lawyer fixed effects. The right-side graphic also residualizes for years since law school graduation. Section 6.4 explores whether lawyers become more or less masculine-sounding over time.

Cross-sectional variation. Note. We track how advocates’ perceived masculinity changes across multiple appearances before the SCOTUS. X-axis = ordinal index of each argument (first, second, third appearance, etc.). Y-axis = average perceived masculinity. These graphics are not residualized on lawyer fixed effects to illustrate the cross-sectional variation. Section 6.4 discusses how different cohorts or experience levels show variation in average ratings.
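Residualizing on lawyer fixed effects amounts to subtracting each lawyer's own mean from both the rating and the appearance index before fitting a slope, so that only within-lawyer changes remain. The Python sketch below contrasts that within-lawyer slope with a pooled, cross-sectional slope. The toy data layout and function names are illustrative assumptions and do not reproduce the authors' estimates or controls.

```python
from collections import defaultdict
from statistics import mean


def demean_by_lawyer(rows):
    """rows: (lawyer_id, appearance_index, rating).

    Returns (x_dev, y_dev) pairs: deviations from each lawyer's own means.
    """
    groups = defaultdict(list)
    for lawyer, idx, rating in rows:
        groups[lawyer].append((idx, rating))

    deviations = []
    for obs in groups.values():
        mx = mean(x for x, _ in obs)
        my = mean(y for _, y in obs)
        deviations.extend((x - mx, y - my) for x, y in obs)
    return deviations


def within_slope(rows):
    """Slope of rating on appearance index after removing lawyer means."""
    dev = demean_by_lawyer(rows)
    sxx = sum(x * x for x, _ in dev)
    if sxx == 0:
        raise ValueError("no within-lawyer variation in appearance index")
    return sum(x * y for x, y in dev) / sxx


def pooled_slope(rows):
    """Cross-sectional slope that ignores which lawyer each row belongs to."""
    xs = [idx for _, idx, _ in rows]
    ys = [rating for _, _, rating in rows]
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
```

The two slopes can differ in sign when lawyers with many appearances have systematically different voices from lawyers with few, which is why the within-lawyer and cross-sectional figures are reported separately.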

Are Lawyers Learning?

Note. Investigates whether lawyers change their vocal masculinity after a loss. Unit of analysis: lawyer across multiple SCOTUS appearances. Key regressor: lost last case (t-1). Dependent variable: difference in perceived masculinity from t-1 to t or raw masculinity at time t. *,**, and *** indicate significance at the 10%, 5%, and 1% levels, respectively.

7. Discussion

Our approach integrates dual-process theories of cognition (Kahneman, 2011) and existing research on judicial behavior (Rachlinski et al., 2009a), proposing that vocal attributes act as an extralegal “System 1” heuristic in situations of uncertainty.

Even when Justices are extensively briefed, the sheer complexity of many cases—plus the limited time for oral argument—can encourage “on the spot” intuitions. A brief, deeply pitched utterance might subconsciously convey authority or confidence, while a higher, more “feminine” pitch might signal approachability or clarity (Klofstad et al., 2012). Our data show that these impressions, formed in seconds, correlate with final votes and case outcomes.

We hypothesize that voice may be a proxy for attorney quality (confidence, competence) or a stand-in for agreement with the Justice’s worldview. If a Justice is uncertain about the legal merits, or perceives both sides’ doctrinal arguments as roughly equal, vocal cues might tilt the balance. To test this, our empirical design includes controls for actual attorney credentials (e.g., prior SCOTUS arguments, clerkship experience), perceptions of confidence and intelligence, among other traits, and ideological salience (e.g., whether the case involves politically charged subject matter). If voice retains a significant effect even after these controls, it suggests the heuristic is not merely substituting for measured attorney skill or ideological alignment.

Our analysis also splits the sample by case complexity or level of disagreement (e.g., 5–4 vs. 9–0 decisions) to see whether the voice-based correlation is stronger in ambiguous or closely contested scenarios. Consistent with Guthrie et al. (2007) and Schubert et al. (2002), the data indicate that extralegal signals become more salient when the law does not provide a clear resolution or the Court is split ideologically. In these moments, “System 2” deliberation may be insufficient to entirely override the immediate, intuitive impressions formed as an advocate begins to speak.

While major ideological commitments or well-settled precedent can dominate a Justice’s vote, System 1 impressions can shape the trajectory of oral argument—e.g., by influencing the tone or aggressiveness of questioning. This dynamic can subtly color how the Court processes each side’s argument, even if, by opinion-writing stage, the Justice attempts a more deliberative approach.

We include appointing-president partisanship as one measure of judicial attitude, consistent with long-standing empirical practice in political science (Segal & Spaeth, 2002; Sunstein et al., 2006). The party of appointment has historically served as an imperfect yet widely accepted proxy for a Justice’s underlying ideological orientation—especially across decades. To refine this approach, we supplement the appointing-president variable with Martin–Quinn scores (Martin & Quinn, 2002), yielding a continuous measure of ideology over our entire sample.

Regarding the ideologies of the court of origin, we draw on the feature set developed by Katz et al. (2017), which leverages the Supreme Court Database (SCDB) and encodes each vote and case through a rich collection of variables. These include (i) basic categorical fields—such as petitioner, respondent, issue, case origin, and lower-court disposition—converted into numerous binary (dummy) indicators; (ii) chronological markers (term, month of argument, days from argument to decision); (iii) engineered features capturing each Justice’s historical behavior (e.g., reversal rate, proportion of liberal/conservative votes, dissent rate) and the difference between a Justice’s tendencies and the Court’s overall patterns; and (iv) court-of-origin groupings (federal circuits vs. state or administrative courts). By binarizing SCDB variables and adding these summary statistics, Katz et al. track both the ‘who, what, and where’ of a case and each Justice’s prior voting trajectory. Although they do not incorporate a specialized metric for state supreme court ideology, their random forest accommodates case origin, circuit, or state court category, and it infers broader patterns of judicial ideology via historical vote distributions. By including court of origin dummy indicators and year in the feature set, the random forest automatically incorporates time-varying ideological measures of the court of origin. This design ensures a comprehensive set of controls for case-level, judge-level, and procedural factors when predicting or explaining Supreme Court outcomes.
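Two of the feature types described above, binarized categorical fields and a Justice's historical rate computed from earlier votes, can be sketched in a few lines of Python. The field names (`issue`, `origin`) and sample values are made up for illustration; they are not actual SCDB column names, and this is not Katz et al.'s code.

```python
def one_hot(records, fields):
    """Convert the given categorical fields into 0/1 dummy columns.

    records: list of dicts; fields: names of the categorical keys to binarize.
    """
    # Collect the observed levels of each field in a stable order.
    levels = {f: sorted({r[f] for r in records}) for f in fields}
    encoded = []
    for r in records:
        row = {}
        for f in fields:
            for level in levels[f]:
                row[f"{f}={level}"] = 1 if r[f] == level else 0
        encoded.append(row)
    return encoded


def running_rate(votes):
    """Share of *prior* votes equal to 1, for each vote in chronological order.

    Returns None for the first vote, which has no history.
    """
    rates, total = [], 0
    for k, v in enumerate(votes):
        rates.append(total / k if k else None)
        total += v
    return rates
```

Using only prior votes when computing the running rate keeps the feature free of information from the decision being predicted, which matters for any forward-looking prediction exercise.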

Our study relies on audio from 1998–2012 primarily because, during that period, Oyez.org provided high-quality, consistently labeled recordings that reliably matched transcripts at the individual speaker level. While Oyez originated at Chicago-Kent, it has since migrated hosting and consolidated its archive; we accessed the site under its historical affiliation at the time of data collection. Although Oyez now offers more recent material, that content was not fully synchronized or standardized at our project’s inception. Looking forward, additional analyses already support the generality of our findings. Dietrich et al. (2019), for instance, adopt a broader window without restricting to a single sentence or relying on human-subject impressions, and still find that higher-pitched advocates are likelier to succeed. Similarly, Chen (2018) leverages the entire archive from 1955 onward, combining facial and vocal cues to improve prediction of Supreme Court outcomes. These replications suggest that, despite the narrower 1998–2012 range here, pitch and other acoustic qualities are robustly correlated with judicial decision-making across a wider historical sweep.