Abstract

This study investigates human–machine interaction in the workflow of automatic speech recognition (ASR)-assisted simultaneous interpreting of speeches with different delivery rates. Specifically, the study examines how student interpreters interact with ASR-generated text and how that text differentially affects interpreting performance across speech rates. To address these questions, caption-viewing patterns (with ASR live captions) and interpreting performance at varying speech rates were collected and analysed. The findings indicate that ASR-generated text significantly improves interpreting performance at the faster speech rate, whereas no notable improvement is observed at the slower speech rate. The ways in which student interpreters resorted to live captions were found to be subject to individual differences. The study highlights the need for targeted training to help student interpreters utilise live transcriptions to facilitate their performance.

Keywords

1. Introduction

The rise of technology and artificial intelligence is transforming the workflow of interpreting. Rather than being fully replaced by technology, interpreters are likely to transition towards a future in which they are assisted by and increasingly dependent on technology (Chen & Kruger, 2024). In the research domain, discussions about the integration of technologies have mainly focused on computer-assisted interpreting (CAI) systems. The current definition of CAI highlights features such as automatic speech recognition (ASR) and automatic term retrieval, with the aim of supporting interpreters at various stages of the interpreting process (Fantinuoli, 2021; Liu et al., 2024). Prior studies examining the interaction between human interpreters and ASR-generated texts (Defrancq & Fantinuoli, 2021; Desmet et al., 2018; Fantinuoli, 2021; Pisani & Fantinuoli, 2021; Wang et al., 2025; Yuan & Wang, 2023) have mainly focused on the types of information, such as numbers and proper nouns, that are most beneficial for interpreters. Some studies (e.g., Cheung & Li, 2022; Su & Li, 2024; Yuan & Wang, 2023) have investigated the effect of ASR captions from the perspective of cognitive load and cognitive processing patterns. Others (e.g., Wang et al., 2025) have explored the effect of ASR based on trainee interpreters’ perceptions and attention distribution.

However, no empirical studies have examined the interaction with AI-powered technology in interpreting of speeches with different speech rates. The manipulation of input rate in this study is based on the assumption that simultaneous interpreting (SI) quality may suffer as input rate increases (Liu et al., 2024) and that ASR-generated captions may provide supportive assistance when task difficulty rises. The study also responds to Wang et al.’s (2025) call for further research to explore live transcription’s impact across various settings.

2. Literature review

2.1 SI as a complex information processing task

The integration of machine-generated text was proposed due to the multitasking nature of SI and the demands SI makes on interpreters’ cognitive resources, especially for student interpreters due to their limited experience. SI is a complicated multitasking process that involves active listening, message analysis, delivery, and other subtasks such as segment recognition, adjustment, self-monitoring, and so on, all of which happen almost simultaneously (Camayd-Freixas, 2011). In interpreting studies, scholars (Gile, 2009; Seeber, 2011) have investigated the mental processes underlying interpreting and developed theoretical models to describe them. Two representative models are Gile’s (2009) Effort Model and the Cognitive Load Model developed by Seeber (2011). The Effort Model is “a set of models that attempt to explain interpreting difficulty that could help with the selection and development of strategies and tactics towards better interpreting performance” (Gile, 1995, p. 159). For SI, the Effort Model can be represented as SI = L (listening and analysis) + P (Production) + M (Memory) + C (Coordination effort). Among them, each Effort deals with a different speech segment and each Effort is determined by their interaction with each other (Gile, 2009). A smooth interpreting process requires the total processing capacity to be below the interpreter’s total available capacity and each active Effort to be sufficient for performing the corresponding task (Gile, 2009). Building on this foundation, Seeber (2011) proposed the Cognitive Load Model, which conceptualises SI as a complex multitasking activity that requires the interpreter to engage in language comprehension and language production at the same time. Each of these tasks can be further decomposed into perceptual, cognitive, and response components. 
A key contribution of the Cognitive Load Model is its recognition of the conflict and overlap between comprehension and production processes during interpreting (Seeber, 2013). This model also sets the stage for the integration of the multimodality approach in interpreting research (Zhu & Aryadoust, 2022).

Apart from traditional visual modality, technological developments and the emergence of ASR-generated live captions have given rise to a new modality of practicing SI—the visual reading of live transcriptions. This is where the concept of multimodality becomes relevant, referring to the interplay between different representational modes such as written and spoken word (Korhonen, 2010). To have a closer look at this process, interpreters are required to simultaneously process visual input (reading captions), auditory input (listening to the speaker), and produce verbal output in another language. These modalities operate concurrently and impose substantial demands on cognitive resources. Interpreting with ASR assistance, therefore, merits scholarly attention, as it introduces an additional cognitive dimension beyond the conventional cognitive load (see Seeber, 2017).

2.2 Empirical studies of CAI

The goal of ASR integration into interpreting is to present interpreters with elements deemed difficult to interpret such as numbers, specialised terminology, and names (Desmet et al., 2018; Gile, 2009; Prandi, 2023). Currently, there are three types of CAI tools, all of which are centred around ASR technology. However, these tools differ in how they present text: some display real-time captions of the source language (e.g., Wang et al., 2025; Yuan & Wang, 2023), others provide target text generated through machine translation (MT) (e.g., Chen & Kruger, 2024; Su & Li, 2024; Wang & Wang, 2019), and some offer automated selection of numbers and glossary translations (e.g., Defrancq & Fantinuoli, 2021; Desmet et al., 2018; Liu et al., 2024; Pisani & Fantinuoli, 2021). Recent research on CAI tools focuses on three main topics: (1) the general question of whether CAI tools can enhance interpreting (e.g., Su & Li, 2024; Wang & Wang, 2019); (2) the kind of information that contributes to the benefit of CAI tools (e.g., Defrancq & Fantinuoli, 2021; Desmet et al., 2018; Liu et al., 2024; Pisani & Fantinuoli, 2021; Yuan & Wang, 2023); and (3) potential workflows of ASR-assisted interpreting (e.g., Chen & Kruger, 2024; Sihee, 2025).

Various ASR-related studies have proven that ASR live captions are capable of improving interpreters’ accuracy in providing non-contextual information like numbers and proper nouns (Defrancq & Fantinuoli, 2021; Desmet et al., 2018; Pisani & Fantinuoli, 2021; Yuan & Wang, 2023). Desmet et al. (2018) demonstrated that texts that offer non-contextual stimuli, such as numbers and names, can enhance interpreters’ accuracy and performance. The same benefit also applies to live captions generated by ASR. The study conducted by Defrancq and Fantinuoli (2021) showed that the rendition of numbers improved with the assistance of ASR software for student interpreters. Pisani and Fantinuoli’s (2021) experiment on the impact of ASR confirmed that ASR effectively provides support during the interpretation of speeches dense in numbers. A recent eye-tracking experiment conducted by Yuan and Wang (2023) among student interpreters also suggested that interpreters would put special focus on information chunks that include numbers and proper nouns. Their performance data showed that ASR improves interpreters’ accuracy in rendering numbers and proper names.

However, in addition to the positive impact on interpreting accuracy, two studies have highlighted the potential negative effects of CAI tools on fluency. Wang and Wang (2019) found a dual effect of CAI on fluency but were unable to establish a clear trend regarding how the tool affects interpreters’ fluency. Later, Cheung and Li (2022) found that although CAI tools improved the accuracy of student interpreters’ target texts, they led to a decrease in fluency.

Apart from the quality assessment approach, studies are starting to understand CAI from more diverse angles. For example, Jin (2025) looked at CAI as a new workflow of interpreting practices and proposed adopting respeaking using ASR captions into translation and interpreting training programmes. Chen and Kruger (2024) introduced a computer-assisted consecutive interpreting (CACI) workflow, formally incorporating automatic respeaking technologies, including speech recognition (SR) and MT, into the interpreting process. Wang et al. (2025) investigated CAI tools from trainee interpreters’ feedback and categorised the attention division while interpreting with ASR texts.

3. Research questions and hypothesis

The purpose of the study is to investigate how live captions facilitate SI of speeches delivered at fast and slow rates. Moreover, as the interpreters were given the option of looking at the captions when needed, the study also investigates interpreters’ caption-viewing patterns when using ASR live transcriptions. To address these objectives, the study poses the following research questions:

RQ1. To what extent do captions influence interpreters’ performance across varying speech rates in terms of accuracy, completeness, and overall quality?

RQ2. How do interpreters’ viewing duration and frequency of ASR-generated captions vary across different speech rate conditions?

RQ3. How do interpreters interact with live captions as reflected in their individual viewing patterns?

RQ1 examines the potential dual effects of ASR live captions on interpreters. On one hand, findings may indicate that ASR captions interfere with interpreters’ performance by increasing cognitive load and reducing accuracy. On the other hand, captions could serve as a supportive aid, particularly in challenging scenarios such as fast rates. RQ2 and RQ3 investigate the role of captions in SI under varying levels of input difficulty (fast vs. slow). To address RQ2, the study will analyse the correlation between caption-viewing duration and caption-viewing frequency across different input rates. For RQ3, the research aims to identify patterns in interpreters’ reliance on live captions and determine the specific circumstances under which they choose to utilise, or disregard, the captions.

Based on the research questions, the study proposes the following hypotheses:

H1. ASR captions are expected to enhance interpreters’ performance across both speech rate conditions. However, the facilitative effect is predicted to be stronger at faster speech rates, suggesting that captions are particularly beneficial when interpreters are engaged in more challenging tasks.

H2. Interpreters’ caption-viewing behaviours, including reading frequency and duration, are expected to vary with speech rate. Specifically, interpreters are predicted to view captions more frequently and for longer periods during fast speech than during slow speech.

4. Research design

4.1 Participants

Twelve postgraduate students (female: 9; male: 3) majoring in translation and interpreting from the world’s top-tier T&I programmes gave their consent to participate in this study. All participants have received at least 1 year of professional SI training. All the participants have Chinese as their first language (L1) and English as their second language (L2).

4.2 Materials

The materials were selected from two TED talks on the topic of artificial intelligence, a subject frequently emphasised in the Translation and Interpreting (T&I) curriculum. The speeches were first transcribed and then edited for experimental purposes. Sentences or content that relied solely on charts, images, or other visual inputs were removed. To examine the potential effect of ASR-generated text, low-frequency words were retained in accordance with the word frequency effect theory (Clark, 1992), as interpreters were expected to seek visual cues to support memory recall for such terms.

The edited speeches were recorded by a university instructor who is a native English speaker with clear and comprehensible pronunciation, minimising comprehension difficulties due to accent. To ensure natural delivery and prevent any artificial modification that might affect speech quality or clarity, the speaker produced the speeches at two controlled rates. The fast and slow delivery rates were 156.5 and 113.4 words per minute (wpm), respectively. These rates were chosen based on prior research (Han & Riazi, 2017; Korpal, 2016; Pio, 2003; Shlesinger, 2003), with slow speeches ranging between 100 and 130 wpm and fast speeches between 140 and 170 wpm.

The readability of the speeches was quantified using the Flesch Reading Ease score, a widely used index of textual complexity. The Flesch Reading Ease scores for the four tasks were as follows: for the fast delivery tasks, 65.17 for the task without captions and 64.72 for the task with captions, and for the slow delivery tasks, 71.84 without captions and 70.59 with captions. These results indicate that the speeches in both fast and slow conditions exhibit comparable levels of readability. This uniformity ensures that the study’s outcomes are primarily influenced by the presence or absence of live transcription and by speech rate, rather than by differences in speech complexity.
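For readers who wish to reproduce a readability check of this kind, the following is a minimal sketch of the standard Flesch Reading Ease formula, 206.835 − 1.015 × (words per sentence) − 84.6 × (syllables per word). The syllable counter is a rough vowel-group heuristic and the function names are illustrative assumptions; the source does not state which tool produced the reported scores, so results from this sketch may differ slightly from them.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate (heuristic, an assumption of this sketch):
    count groups of consecutive vowels and discount a trailing silent 'e'."""
    lower = word.lower()
    count = len(re.findall(r"[aeiouy]+", lower))
    if lower.endswith("e") and not lower.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(total_words: int, total_sentences: int,
                        total_syllables: int) -> float:
    """Standard Flesch Reading Ease formula; higher scores mean easier text."""
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


def score_text(text: str) -> float:
    """Tokenise naively on sentence-final punctuation and alphabetic runs."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    return flesch_reading_ease(len(words), len(sentences), syllables)
```

Calling `score_text` on each edited transcript would give one score per task, which can then be compared across the four conditions.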

4.3 Procedure

The experiment adopted a 2 × 2 within-subject design. The independent variables were speech rate (fast vs. slow) and caption condition (with vs. without live captions). The dependent variables were interpreters’ caption-viewing behaviour and SI performance. Each participant completed four interpreting tasks covering all combinations of the two factors—that is, slow and fast speeches performed with and without captions.

This study adopted a screen-based observational design. Data were collected through two sources: audio recordings of the SI output and video recordings of the interpreting process. The live captions were displayed on a computer screen positioned to the left of the participant. Prior to the experiment, participants were briefed to avoid turning towards the monitor unless they intended to consult the captions for assistance. Head and eye movements were analysed as indicators of whether the interpreter sought assistance from the live captions. The entire interpreting process was video recorded to track participants’ interactions with the live captions. During SI, turning towards the screen was taken to indicate that the interpreter was seeking support from the ASR-generated text, whereas refraining from looking at the screen suggested that the interpreter either did not find the captions useful or preferred to rely on conventional interpreting strategies. To control for potential order effects, participants were randomly assigned to one of two task sequences: slow-slow-fast-fast or fast-fast-slow-slow. The final distribution was balanced, with an equal number of participants completing the fast-first and slow-first sequences.
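The design and the counterbalancing step described above can be sketched as follows. This is an illustration under stated assumptions rather than the procedure actually used: the participant identifiers, the fixed seed, and the shuffle-then-split method are hypothetical, and the source does not say how the caption conditions were ordered within each speech rate.

```python
import itertools
import random

RATES = ("slow", "fast")
CAPTIONS = ("without captions", "with captions")

# The four cells of the 2 x 2 within-subject design.
CONDITIONS = list(itertools.product(RATES, CAPTIONS))

# The two task sequences named in the procedure.
SEQUENCES = (
    ("slow", "slow", "fast", "fast"),
    ("fast", "fast", "slow", "slow"),
)


def assign_sequences(participant_ids, seed=0):
    """Randomly split participants into two equally sized sequence groups."""
    ids = list(participant_ids)
    if len(ids) % 2:
        raise ValueError("balanced assignment needs an even number of participants")
    rng = random.Random(seed)  # fixed seed only so the sketch is reproducible
    rng.shuffle(ids)
    half = len(ids) // 2
    assignment = {pid: SEQUENCES[0] for pid in ids[:half]}
    assignment.update({pid: SEQUENCES[1] for pid in ids[half:]})
    return assignment
```

With twelve participants, `assign_sequences(range(1, 13))` places six in the slow-first group and six in the fast-first group.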

4.4 Apparatus

The study used Iflytek ASR software as the captioning apparatus (see Figure 1). Iflytek provides ASR that converts audio streams into text in real time. The software applies a large-scale language model, intelligent segmentation, and punctuation prediction. According to the official specifications provided by Iflytek (iFLYTEK, n.d.), the system achieves an SR accuracy rate of approximately 97.5% and offers a low latency of around 200 ms. Iflytek ASR is also commonly used in academic research: a review of the current status of CAI tools (Guo et al., 2023) found that 11 of the 27 empirical studies reviewed used Iflytek ASR-related software. Moreover, the software has a simple interface in which only the caption page is shown on the screen, eliminating other on-screen elements that could interfere with the results of the study.

Screenshot of Iflytek ASR software.

4.5 Data collection and analysis

The recorded interpreting output of each participant was transcribed using Microsoft Teams, and the resulting transcripts were evaluated for accuracy and completeness. The transcripts of both the fast and slow speech conditions were divided into 22 segments. The weighting of each segment score was determined according to the distribution of information and sentence density within that segment. A professional interpreter with 5 years of interpreting experience was invited to assess each segment for accuracy and completeness. The overall interpreting performance score was then calculated using a weighted formula: Accuracy (60%) + Completeness (40%) = Total Performance Score (Wu, 2011). Total scores were rounded up to the nearest decimal place if the digit following the decimal was 5 or greater.
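The scoring scheme can be illustrated with a short sketch. The 60/40 weighting comes from the formula above; the segment weights, the 0–100 scale, and the function names are hypothetical, since the source does not report the actual weight assigned to each of the 22 segments.

```python
def weighted_mean(scores, weights):
    """Combine per-segment scores using per-segment weights."""
    if len(scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(s * w for s, w in zip(scores, weights)) / total_weight


def total_performance(accuracy, completeness, w_accuracy=0.6, w_completeness=0.4):
    """Total score = 60% accuracy + 40% completeness."""
    return w_accuracy * accuracy + w_completeness * completeness


# Hypothetical example with two segments, the second weighted three times as heavily.
accuracy = weighted_mean([80, 60], [1, 3])
completeness = weighted_mean([90, 70], [1, 3])
total = total_performance(accuracy, completeness)
```

In this sketch the same segment weights are applied to both criteria; whether the study weighted accuracy and completeness segments identically is an assumption.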

After collecting the data, the descriptive statistics were visualised using boxplots, whereas the statistical analysis was conducted using

5. Findings

Based on the qualitative data collected from the interview, captions had several positive influences. First, they provided useful information such as numbers and other details. For example, 25% of the participants highlighted how captions are particularly helpful for rendering large numbers. Second, captions played a complementary role in participants’ interpreting. ASR captions helped relieve interpreters’ short-term memory load by filling in missing information and aiding in maintaining the flow of interpretation. This support allowed interpreters to keep up with the speech and shorten their eye-voice span (EVS). As one participant mentioned, captions also assisted in understanding the structure of the speech and grasping its meaning.

Regarding the negative influence of ASR-generated texts, captions pose a challenge to interpreters’ multitasking skills. Feedback indicates that most participants struggle to balance listening, processing the meaning, and reading the caption. A total of 37.5% of the participants reported that they could not hear properly when focusing on the caption. Behavioural patterns observed in the study support this: 40% of the time participants spent looking at the caption silently, indicating that the other modality was interrupted by the caption. One participant also mentioned missing entire sentences when focusing too much on the caption to avoid missing information, resulting in distraction from listening to the speaker.

Moreover, latency and self-correction inherent in ASR technology have frequently been mentioned by participants as shortcomings in the modality of SI with captions. The long latency and ASR self-correcting function create visual interference for the interpreter. Nearly all participants mentioned that the long latency could be annoying if not managed properly. This finding aligns with Sridhar et al.’s (2013) concern that live captions can create problems due to the high latency associated with long segments.

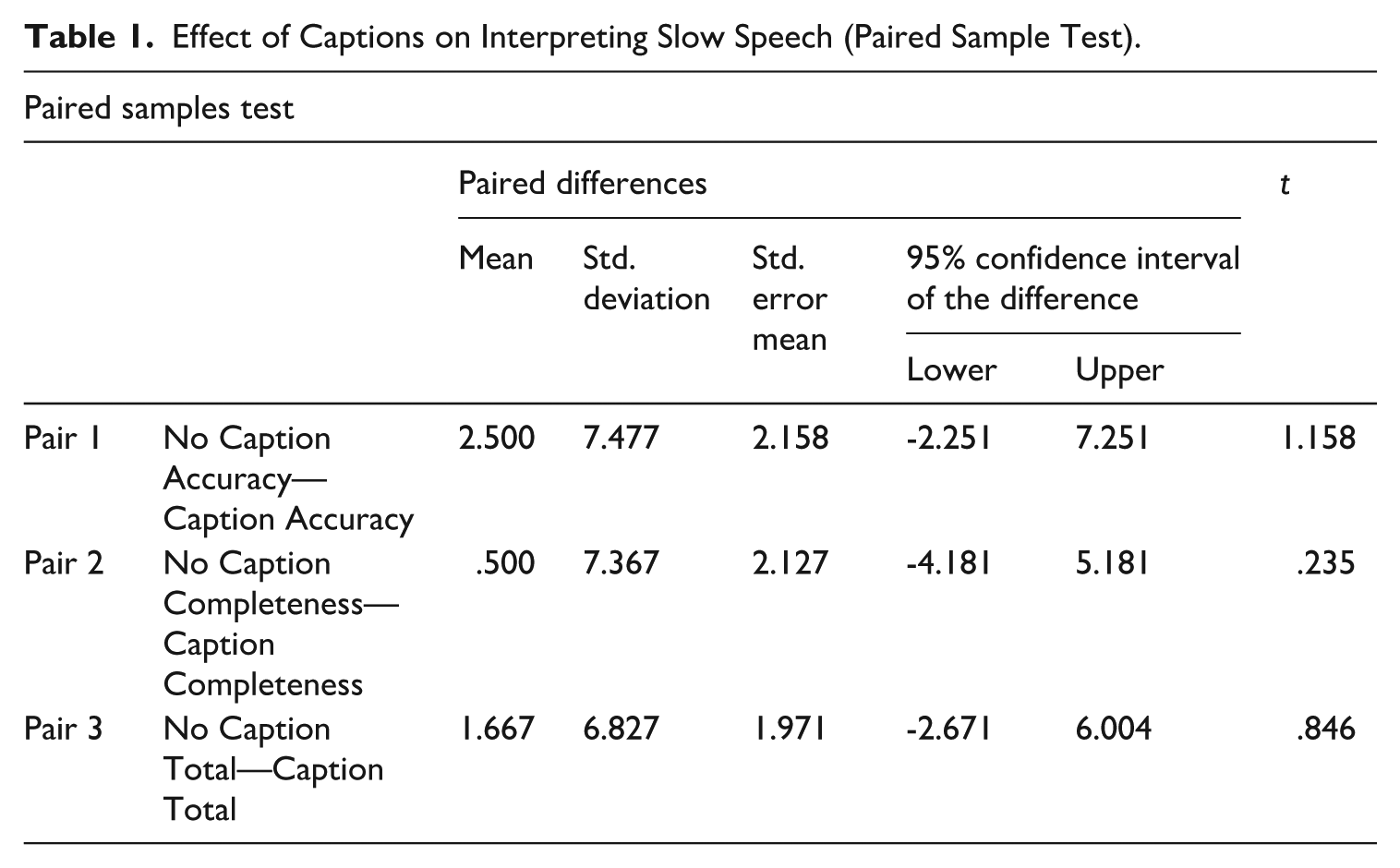

5.1 Different effects of ASR captioning on interpreting of fast and slow speeches

5.1.1 Insignificant effect of caption in enhancing interpreting of slow speeches

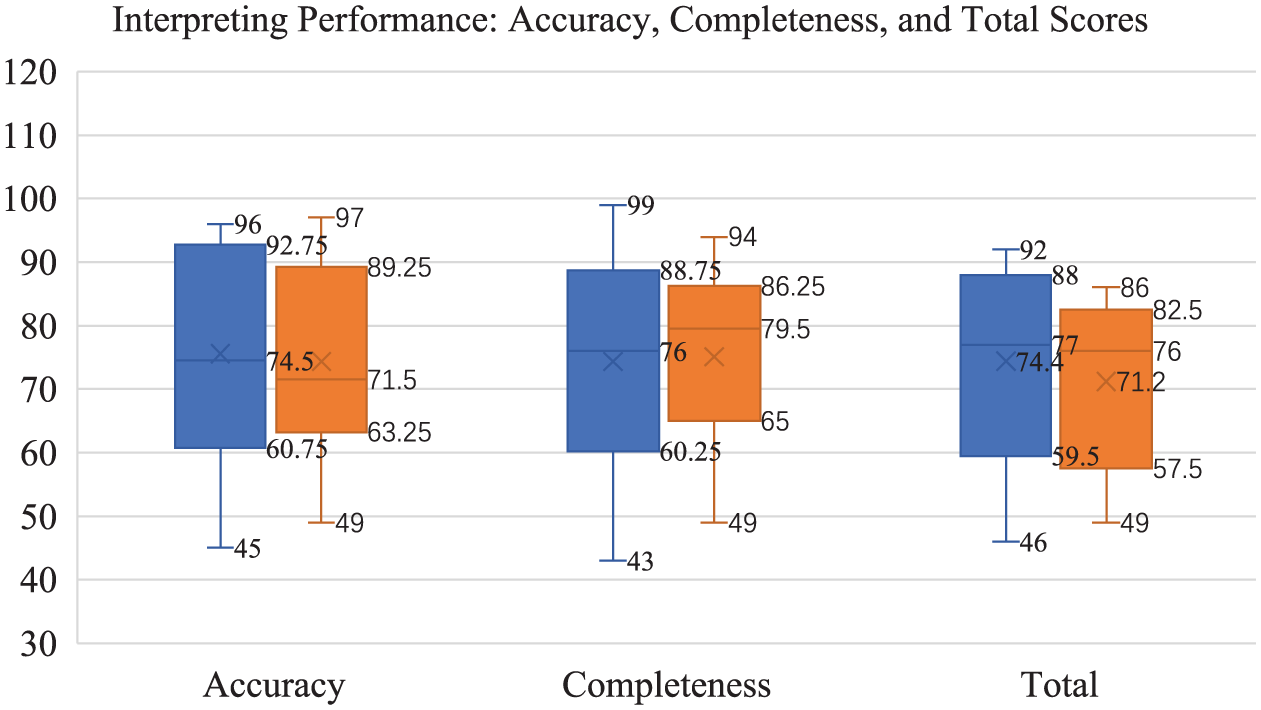

Descriptive data for the slow speech rate condition were summarised in Figure 2, which illustrates performance differences between the No Caption and Caption conditions across three key metrics: Accuracy, Completeness, and Total Score. The No Caption condition exhibited wider score distributions and more outliers, suggesting greater variability in interpreter performance. This dispersion may reflect individual differences in ability or the influence of external factors. In contrast, the Caption condition demonstrated slightly lower median and mean scores, along with a narrower interquartile range. This pattern implies that although live captions may have reduced performance variability, potentially by standardising cognitive load, they did not enhance overall accuracy under slow speech conditions.

Boxplot of Interpreting Performance Metrics: Accuracy, Completeness, and Total Scores.

The results are further confirmed by the

Effect of Captions on Interpreting Slow Speech (Paired Sample Test).

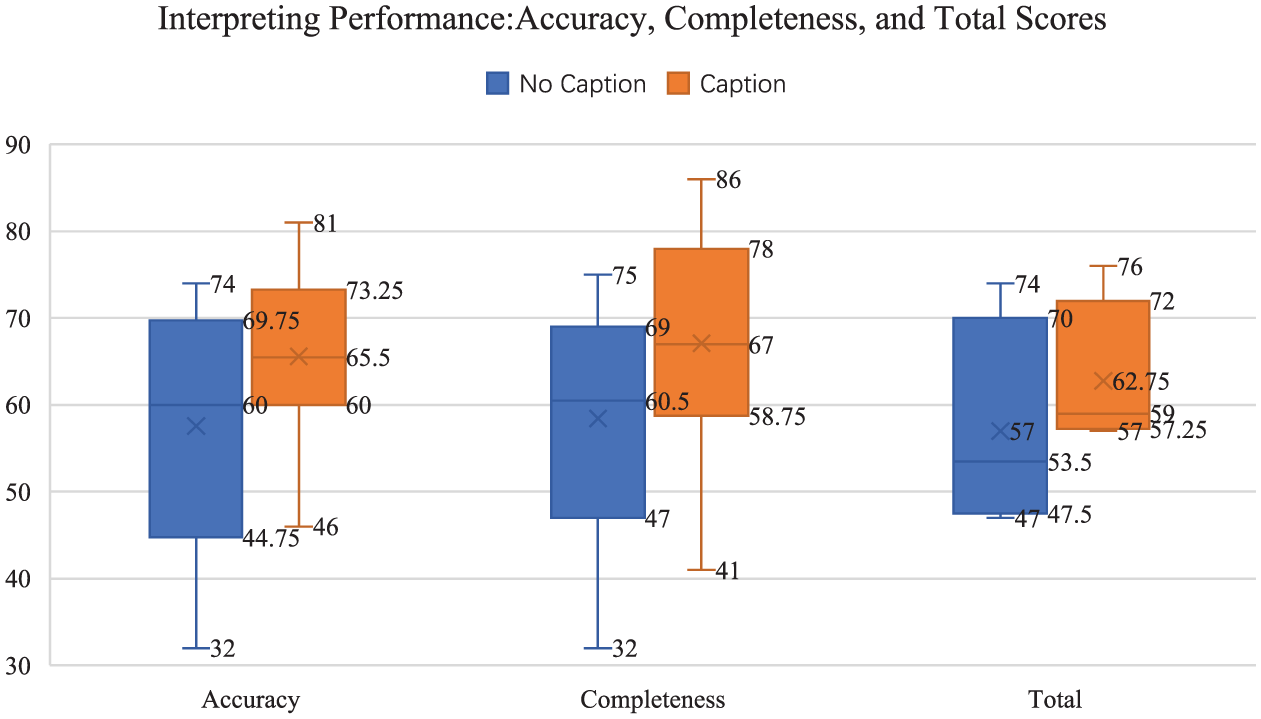

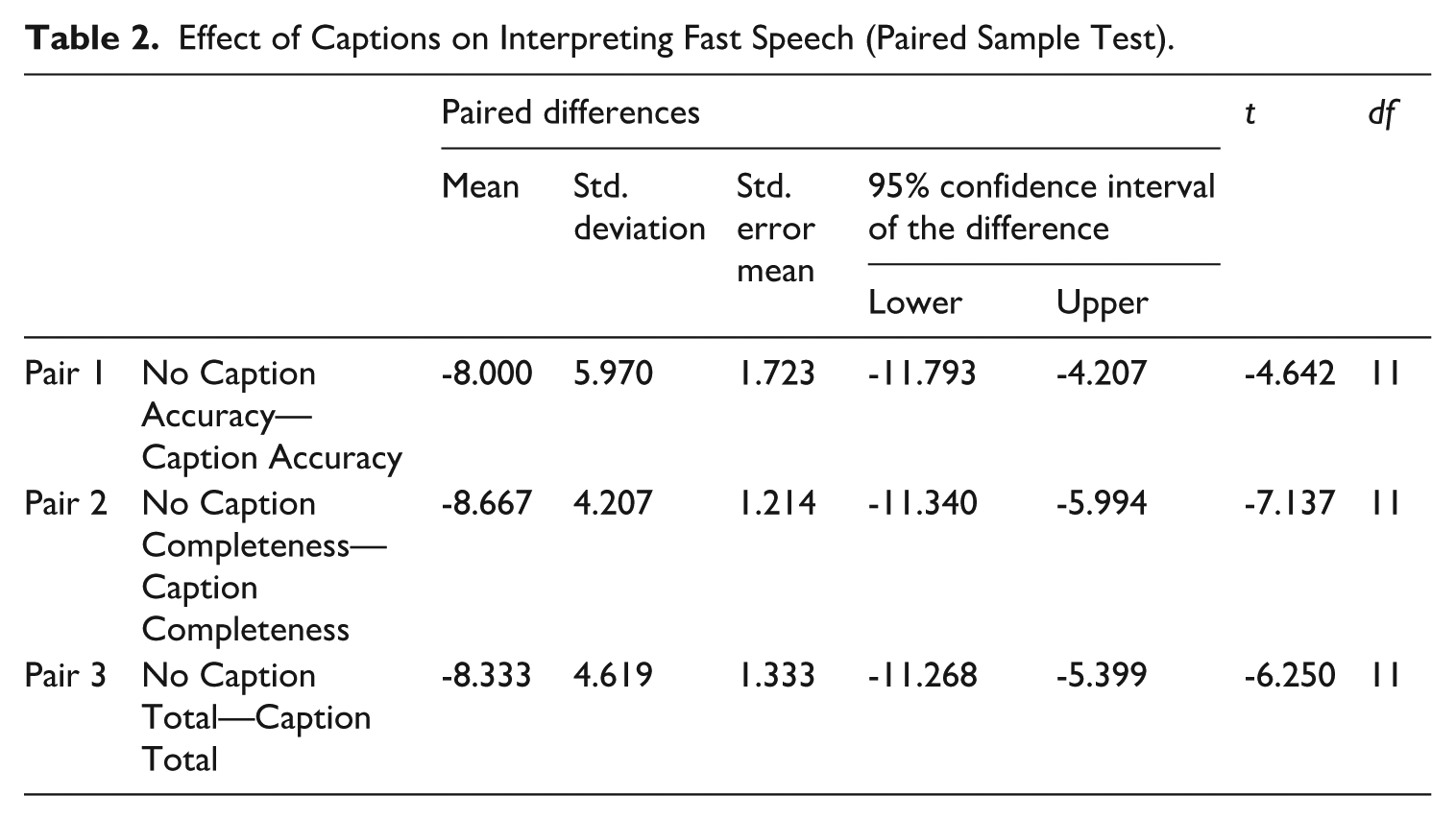
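The paired-sample comparison named in the table caption above can be sketched as follows, assuming it is a paired-samples t-test; the source names only a "Paired Sample Test", so the choice of test is an assumption, and the scores in the usage note are invented for illustration. The sketch uses only the standard library and returns the t statistic and degrees of freedom; a p-value would additionally require the t distribution.

```python
import math
import statistics


def paired_t(with_captions, without_captions):
    """Paired-samples t statistic for the same participants in two conditions."""
    if len(with_captions) != len(without_captions):
        raise ValueError("each participant needs a score in both conditions")
    diffs = [a - b for a, b in zip(with_captions, without_captions)]
    n = len(diffs)
    if n < 2:
        raise ValueError("at least two participants are required")
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    return t_stat, n - 1
```

For example, `paired_t([3, 5, 7], [1, 2, 3])` compares three hypothetical participants and returns a positive t statistic with 2 degrees of freedom, indicating higher scores in the first condition.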

5.1.2 Significant effect of caption in enhancing interpreting of fast speeches

In contrast to interpreters’ performance at the slow input rate, both descriptive and statistical data reveal a significant effect of ASR-generated live transcription on interpreters’ performance at the fast input rate. The boxplot (see Figure 3) compared the performance between the No Caption and Caption conditions across three measures: Accuracy, Completeness, and Total scores. The Caption condition generally resulted in higher median and mean scores for Accuracy, Completeness, and Total measures, suggesting that participants may have performed better with captions. However, the No Caption condition exhibited greater variability and a wider range of scores, indicating that performance without captions was more inconsistent. The differences between the conditions were relatively modest, with the Caption condition showing a slight advantage in consistency and overall scores.

Interpreting Performance: Accuracy, Completeness, and Total Scores.

According to the

Effect of Captions on Interpreting Fast Speech (Paired Sample Test).

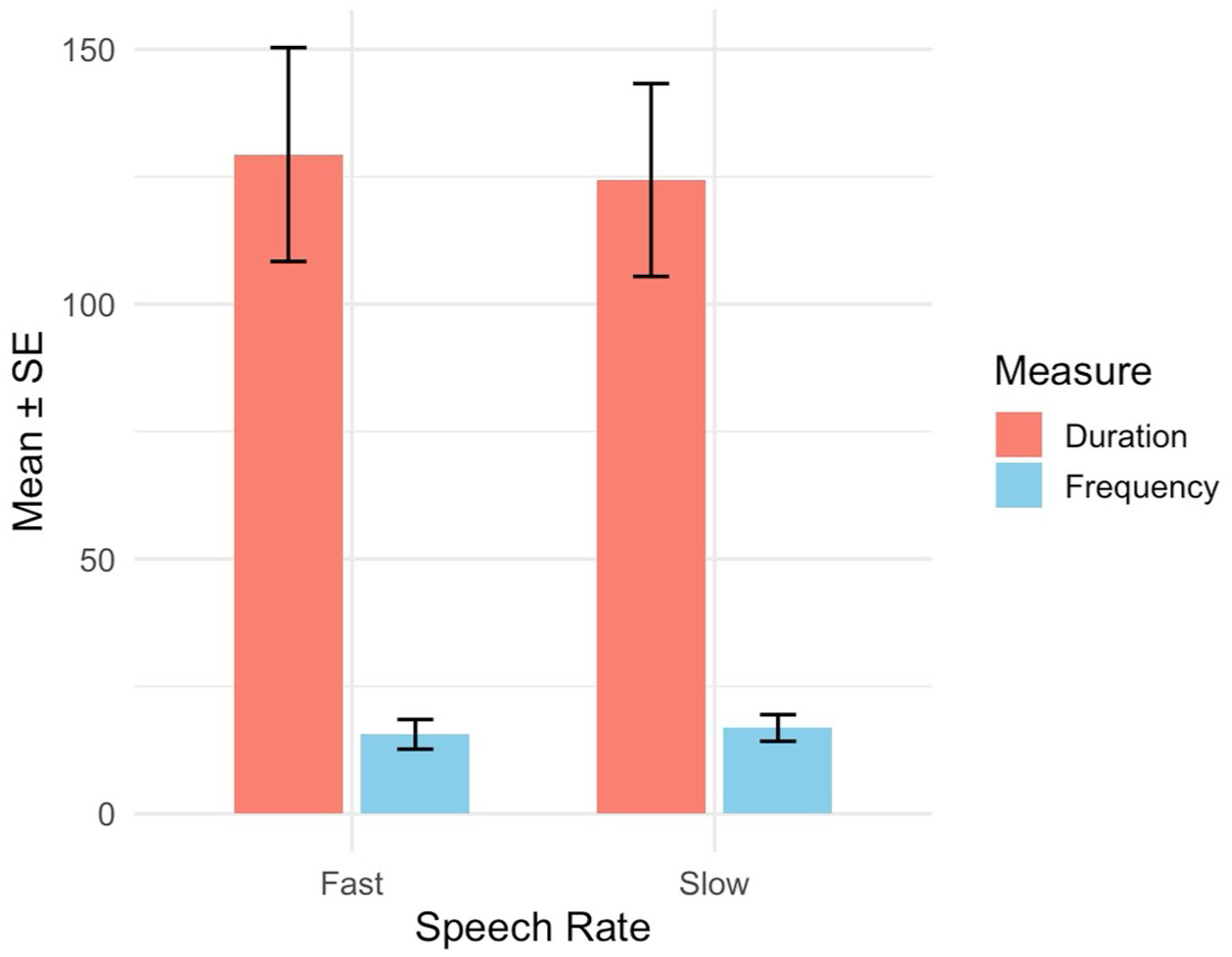

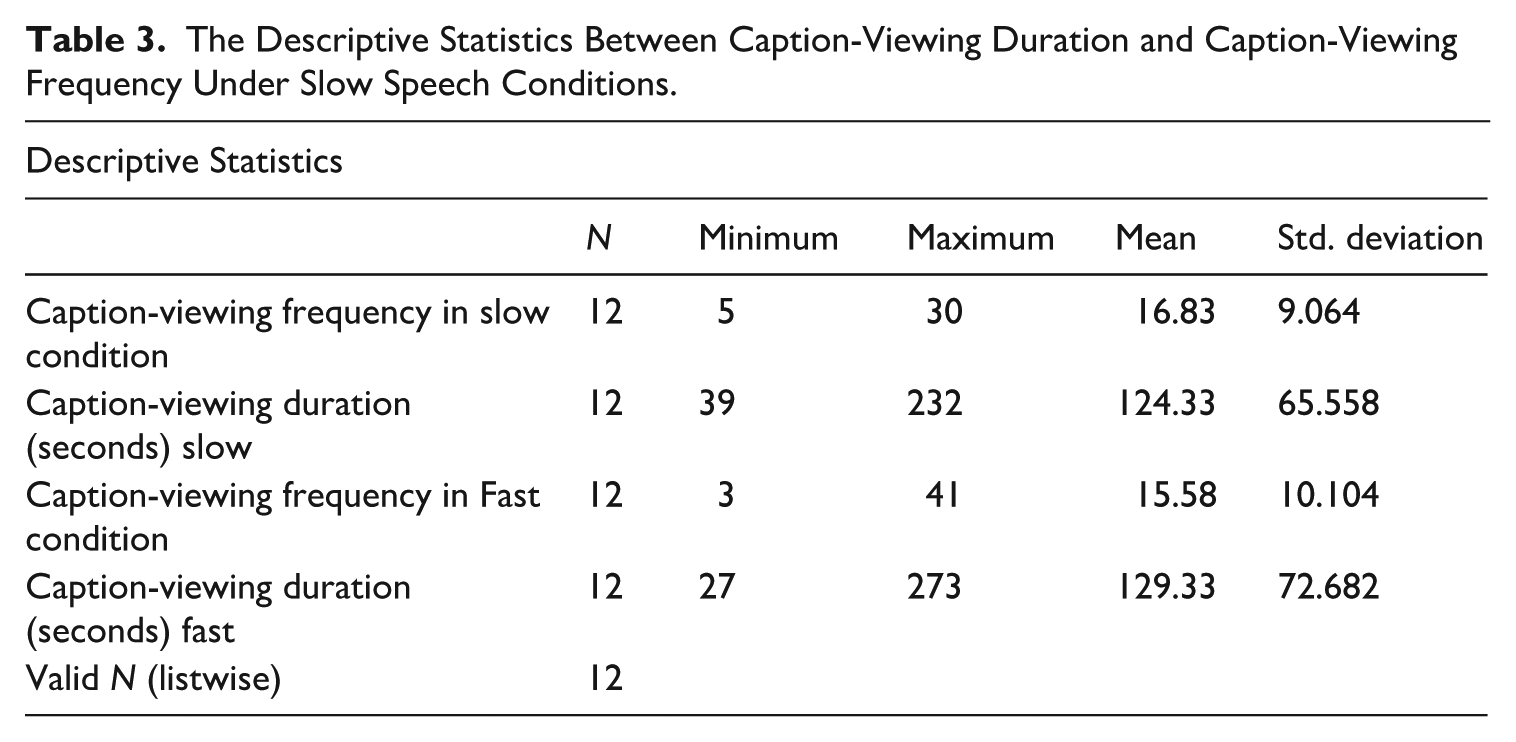

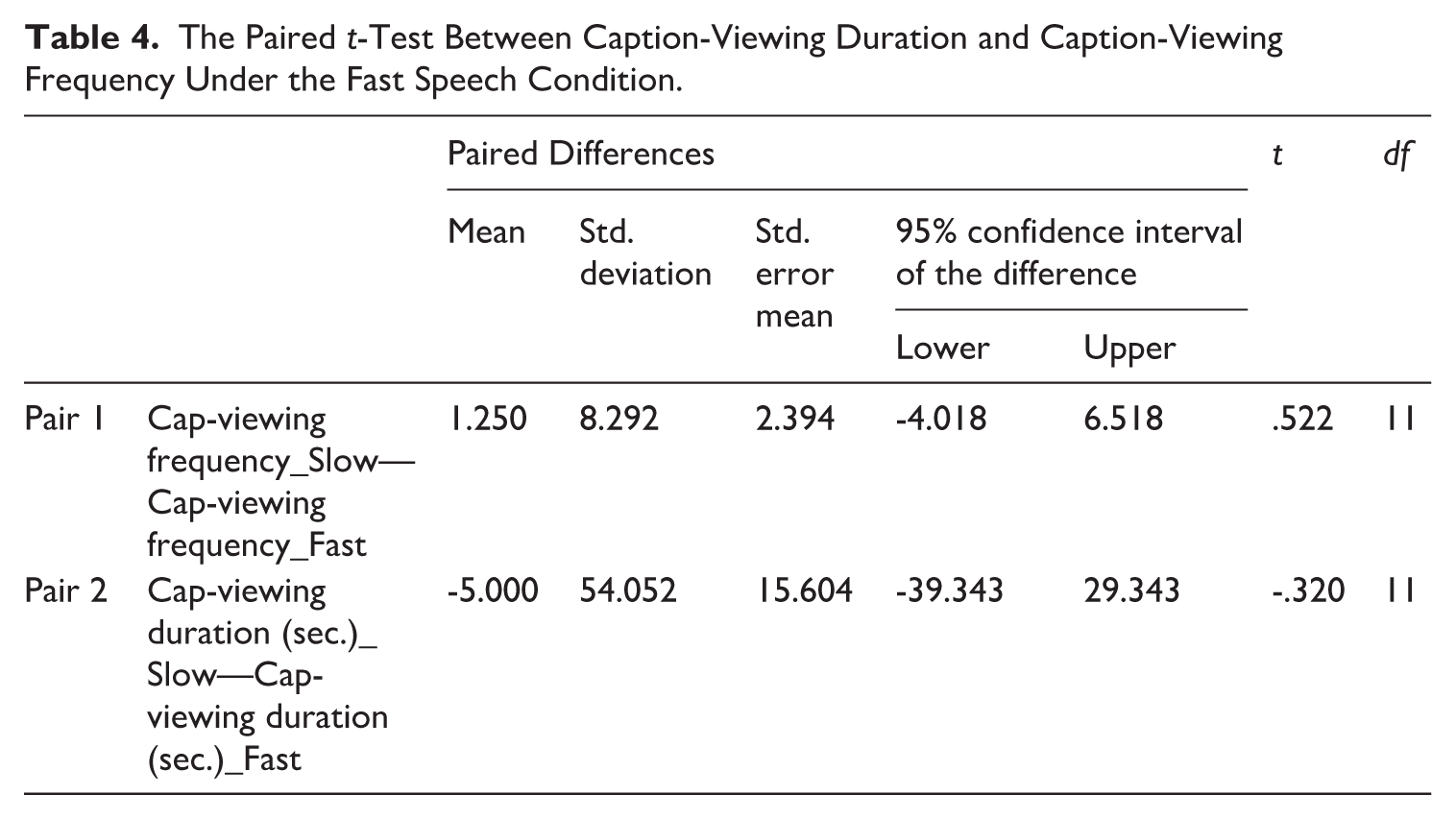

5.2 Caption-viewing frequency and duration of ASR captions

To address RQ2, which examines how interpreters’ viewing duration and frequency of ASR-generated captions vary across speech rate conditions, the proportion of caption-viewing duration was calculated to indicate the extent to which participants referred to the captions during the task. The data (see Figure 4, Tables 3 and 4) showed that interpreters sought help from captions to varying degrees. The results differed from H2, which assumed that interpreters would look at the captions more frequently and for longer durations at the fast rate. Instead, individual differences in gazing behaviour were observed among the participants. Both the descriptive data and statistical analysis indicated that there was no significant correlation between caption-viewing duration, caption-viewing frequency, and speech rate.
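The two caption-viewing measures can be sketched as follows, assuming that each turn towards the monitor has been annotated from the video as a non-overlapping (start, end) interval in seconds. The annotation format and the function name are assumptions of this sketch, not the coding scheme reported in the study.

```python
def caption_viewing_metrics(gaze_intervals, task_duration):
    """Return (frequency, proportion) for one participant and one task.

    frequency  : number of separate turns towards the caption monitor
    proportion : share of the task duration spent looking at the captions
    """
    if task_duration <= 0:
        raise ValueError("task_duration must be positive")
    for start, end in gaze_intervals:
        if end < start:
            raise ValueError("each interval must end after it starts")
    frequency = len(gaze_intervals)
    viewing_time = sum(end - start for start, end in gaze_intervals)
    return frequency, viewing_time / task_duration
```

For instance, two looks lasting 10 s and 30 s during a 100 s task give a frequency of 2 and a viewing proportion of 0.4.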

Graph of Caption-Viewing Pattern.

The Descriptive Statistics Between Caption-Viewing Duration and Caption-Viewing Frequency Under Slow Speech Conditions.

The Paired

The boxplot and statistical data both indicate that there is no standard caption-viewing pattern for trainee interpreters to interact with the live transcription. Participants viewed captions slightly more frequently in the Slow condition (

The experiment results also align with what has been observed during the task. Different participants employed different strategies for using captions. Some looked at the caption when they anticipated what the speaker was going to say, allowing them to avoid cognitive overload and focus on the captions. In contrast, some participants used the caption for difficult sections and for unfamiliar content and looked away when they anticipated easier content. It was observed that the interpreters actively used the captions as a complementary resource to support their listening comprehension. To conclude, the findings suggest that interpreters flexibly integrated ASR-generated captions into their comprehension process, using them strategically to balance cognitive load and optimise interpreting performance.

5.3 The observation of patterns of interpreters’ reliance on captions

The caption-viewing data in section 5.2 revealed that caption-viewing habits were highly individual. This section describes three distinct gazing patterns identified through observation: (1) a high gazing frequency, which indicates that the interpreter’s flow was disturbed and that they were struggling to manage the modalities; (2) a low gazing frequency combined with long gazing durations, which indicates high reliance on the captions; and (3) moderate caption-viewing frequency and duration, which indicates a balanced mode of working with live captions.

Struggle between looking and not looking. The first type of gazing pattern reflected the interpreter’s challenge in balancing the modalities of speaking, reading, and listening. In this case, interpreters often fell into the cycle where they struggled to speak while simultaneously processing the meaning of the generated captions. For instance, the caption-viewing frequency data for one participant indicated that he frequently turned to the monitor for each segment. When these data were cross-referenced with the audio recordings, it became evident that he either looked at the segment, then looked away to generate a translation, or he attempted to translate the information without initially consulting the caption, subsequently turning to the monitor for confirmation.

High reliance on captions. In contrast to the high frequency of caption viewing observed in some participants, others displayed a lower frequency of caption viewing but with longer durations spent looking at the monitor. For one such participant, this behaviour suggests a strong reliance on the live captions, as she continuously focused on the text while interpreting. Two possibilities might explain this situation. First, the interpreter could have been experiencing cognitive overload, leading her to prioritise reading over listening. Alternatively, she might have been attempting to balance all modalities. Her SI performance provides further insight: she appeared to abandon listening to the audio and resorted to sight translation, likely due to cognitive overload.

Balancing between looking and listening. When combined with the interpreting performance data, a moderate caption-viewing frequency and duration (with a frequency near 15 and duration around 50%) appears to be the optimal mode of using captions. In these cases, interpreters effectively balanced prioritising listening to understand the speech while allocating additional attention to the captions. The captions served as a valuable tool, enhancing their performance. An explanation for this balance is that these interpreters were highly skilled in multitasking and capable of managing a significant cognitive load.

6. Discussion

The study aimed to investigate whether the extent of ASR support remains the same as the delivery rate changes and how interpreters use live captions to aid their performance. Given the multitasking nature of SI, the study used ASR captions as a means of facilitating interpreters’ performance. Student interpreters were asked to interpret with and without ASR live transcriptions. The study aimed to determine whether captions remain helpful in both conditions and in which condition they play a bigger role.

6.1 SI with ASR captions: enhancing effect for interpreting under demanding conditions

Prior studies (Defrancq & Fantinuoli, 2021; Desmet et al., 2018; Pisani & Fantinuoli, 2021; Wang et al., 2025; Yuan & Wang, 2023) have demonstrated that ASR captions can reduce error rates and enhance interpreting performance. The present study contributes insights by revealing that the degree of assistance provided by ASR captions varies according to speech rate. Consistent with H1, the results presented in section 5 indicate that captions can facilitate performance in demanding situations, supporting previous studies on ASR-assisted interpreting. The variation in facilitating effects suggests that ASR captions modify interpreters’ conventional SI workflows, prompting them to develop new strategies that yield differential outcomes.

The study found an aiding effect during demanding tasks, in this case, at the fast speech rate. The increased rate heightened the information density of the speech, creating a problem trigger that prevented interpreters from hearing the subsequent segment while processing the previous information (Gumul, 2018). At the higher speech rate, ASR texts served as a complementary resource, allowing interpreters to retrieve missed content. In this respect, the function of ASR captions is consistent with the findings of Seeber et al. (2020), who demonstrated that text could offload short-term memory, leading to fewer omissions and more accurate translation.

Apart from offsetting the challenges associated with short-term memory, ASR captions also had a psychological effect, as revealed in the semi-structured interviews and caption-viewing data. For example, one participant reported that the captions provided a sense of reassurance, alleviating her anxiety about missing information. In addition, a participant mentioned that she was not actively seeking information when she noticed that the captions appeared too slowly and could be a distraction. Despite this awareness, the data showed that she still instinctively turned towards the monitor during SI and spent a significant amount of time (65.7%) engaging with it. These data suggest that, rather than merely serving as a complementary tool to aid lexical retrieval during comprehension (Seeber et al., 2020), the captions may have offered psychological comfort. Interpreters may have continued to look at the text appearing on the screen, even when not actively seeking information, because the captions provided a sense of reassurance that helped alleviate their stress. The fear of making mistakes is a significant source of stress for interpreters (Korpal, 2016). With the presence of text, this stress can be mitigated, as the captions offer a backup that reduces the anxiety associated with missing or misinterpreting information. This may explain why participants, even when not actively using the information from the captions, were inclined to glance at the monitor: it provided them with a sense of security.

The non-significant aiding effect of ASR live transcriptions for slow speeches may be attributed to a ceiling effect, similar to the interpretation offered by Su and Li (2024), who found no significant impact of ASR caption assistance on the accuracy scores of professional interpreters compared with student interpreters. The explanation is that slow delivery rates were already manageable for the participants, allowing them to perform well without external support. Consequently, the potential benefits of ASR captions were diminished due to the relatively low task difficulty.

6.2 Highly individualised caption-viewing patterns highlight the need for systematic training

Previous studies (Cheung & Li, 2022; Defrancq & Fantinuoli, 2021; Desmet et al., 2018; Liu et al., 2024; Pisani & Fantinuoli, 2021; Yuan & Wang, 2023) have examined interpreters’ information prioritisation patterns in live transcription viewing as indicators of reading behaviour. This study approached reading patterns from a different perspective: caption-viewing frequency and caption-viewing duration. In terms of caption-viewing strategies, the findings align with those of Wang et al. (2025), who observed that interpreters adopt highly individualised strategies in allocating their attention to ASR transcriptions. H2, which assumed that student interpreters would look at the screen more often and for longer periods as the input rate rises, is therefore rejected. Instead, the study found no correlation between caption-viewing behaviour and speech rate.

The observed individual variability in caption-viewing patterns may be attributed to the fact that trainee interpreters have not yet developed a standardised workflow for this particular interpreting modality. The fluctuating caption-viewing frequency and duration suggest that live captions may interfere with interpreters’ original multitasking processes and potentially destabilise their performance. The disruption may stem from live transcriptions adding a further dimension of cognitive demand (Wang et al., 2025). A closer examination of the experiment revealed a common pattern in some participants’ behaviour. During the task, these participants repeatedly turned to the monitor to read the captions, silently absorbing the content. After grasping the meaning, they would look away from the monitor to formulate a translation. This cycle continued as they alternated between reading the captions and generating their translations, departing from the normal conduct of SI. This process revealed the competing efforts involved in SI, where participants had to navigate the conflicting demands of speaking, listening, and reading. As some participants reported after the task, they occasionally found themselves unable to listen while reading the captions. This highlights the disruptive impact that reading captions can have on the interpreting process, interrupting the natural flow and increasing cognitive load. Similar concerns have been raised by Chen and Kruger (2024) and Wang et al. (2025). This scenario illustrates how visual stimuli can disrupt active listening: during interpreting, participants’ active listening conflicted with the simultaneous reading of captions, since active listening demands a high level of concentration and deep processing throughout the discourse (Setton & Dawrant, 2016), and the combination overwhelmed their ability to coordinate all the necessary cognitive efforts. The results therefore point to the need for systematic training in ASR-integrated interpreting. Although recent studies (see Chen & Kruger, 2024) have introduced the new modality of ASR-integrated interpreting, it has not yet been formally included in interpreting training programmes.

7. Conclusion

This study explored human–machine interaction in ASR-assisted English-Chinese SI, focusing on the utility of ASR-generated live captions for speeches of varying rates. By assessing interpreting performance and collecting caption-viewing data, the study found that the presence of ASR-generated text significantly enhanced the performance of trainee interpreters under fast speech conditions. The live captions served as a crucial aid, helping to offload working memory and alleviate stress, which resulted in fewer errors and less fragmented sentences. The limited effects of live captions at slow rates may be attributed to a ceiling effect (Su & Li, 2024): the task was less challenging, so additional support had minimal impact. The study also found that the pattern of using captions to enhance performance was highly individualised, with no statistically significant relationship observed between speech rate and either caption-viewing duration or caption-viewing counts. Three caption reading patterns were identified: (1) high frequency of brief caption viewings, indicating disrupted interpreting flow and challenges in modality management; (2) low frequency but long-duration caption viewings, suggesting a high reliance on the captions; and (3) moderate frequency and duration of caption viewings, reflecting a balanced use of live captions.

This study has several limitations. The absence of eye-tracking devices limited the ability to determine, on a statistical basis, which parts of the ASR-generated text interpreters focused on and found useful. In addition, the sample was limited to trainee interpreters, so the observed differences in interpreting performance at different rates may be specific to this group. Future research could explore the use of ASR-generated captions among both professional and trainee interpreters. Experienced interpreters may not experience the performance deterioration observed in trainees.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.