Abstract

The 2024 Indian General Election was marred by an unprecedented wave of disinformation—deepfakes, doctored visuals, and communal hoaxes—disseminated across multilingual, mobile-first platforms. While fact-checkers have become central to electoral integrity globally, little is known about how they operate in high-risk, linguistically fragmented, and digitally unequal contexts like India. Addressing this gap, this study examines how Indian fact-checkers acted as retroactive gatekeepers during the election, intervening after misinformation had already gone viral. Drawing on semi-structured interviews with fact-checkers from six organizations and ethnographic observation within one fact-checking organization's newsroom, the study explores how sociotechnical conditions—language diversity, infrastructural asymmetries, and platform algorithms—influenced verification routines, editorial decisions, and audience engagement. Fact-checkers prioritized claims based on virality, emotional salience, and harm potential, while navigating political polarization and informal editorial structures. Multilingualism emerged as both a logistical hurdle and a disinformation amplifier, with fact-checkers relying on informal networks to bridge gaps. Audiences were reconceptualized as collaborators, with participatory tip lines and mobile-optimized literacy materials used to build trust and reach. Despite the availability of AI detection tools, practitioners preferred manual verification due to reliability concerns in vernacular settings. The study expands gatekeeping theory by foregrounding the reactive, participatory, and context-specific nature of fact-checking in the Global South. It offers practical insights for building inclusive verification ecosystems by investing in vernacular infrastructure, community partnerships, and preventive media literacy. 
This research contributes to underexplored areas of election-related disinformation, fact-checking, and gatekeeping in non-Western democracies.

Introduction

The convergence of advanced digital technologies, the virality of political content, and the participatory character of social media has significantly transformed the information ecosystem in India, the world's largest democracy. Among the most urgent concerns in this ecosystem is the proliferation of electoral disinformation: false or misleading content intended to influence public opinion and distort democratic participation. This disinformation is not merely abstract or rhetorical; it has led to tangible harms, including communal violence, distrust in democratic institutions, and the viral spread of synthetic content. For instance, deepfake videos of political figures such as Prime Minister Narendra Modi and Delhi Chief Minister Arvind Kejriwal circulated widely during the 2024 General Election, while false videos accusing Muslim men of contaminating food and water containers stoked communal hatred and triggered mob attacks (Mehrotra & Upadhyay, 2025). These harms are exacerbated by India's vast and uneven digital ecosystem: with over 800 million internet users and an internet penetration rate of 55.3% (The Indian Express, 2025), misinformation can travel rapidly across mobile-first platforms such as WhatsApp and YouTube, particularly in vernacular languages and loosely regulated digital spaces. This combination of technological access, political polarization, and low media literacy makes India especially vulnerable to the societal impacts of disinformation, rendering electoral periods such as the 2024 General Election high-risk moments for democratic participation.

In the Indian context, these campaigns are increasingly shaped by multilingual circulation patterns, encrypted platforms such as WhatsApp, and the strategic deployment of synthetic media during critical political events. As misinformation spreads rapidly across digital channels, fact-checking organizations have emerged as vital actors working to uphold electoral integrity. Yet, the pressures, methods, and audience engagement strategies that characterize Indian fact-checking during elections remain underexplored within global disinformation scholarship.

This study investigates how professional fact-checkers in India navigated the challenges of political disinformation during the 2024 General Election, a period marked by sophisticated deepfakes, doctored visuals, and community-targeted hoaxes. Drawing on semi-structured interviews with fact-checkers from six Indian organizations, we examine how verification decisions are shaped by sociotechnical conditions including language barriers, digital divides, and platform infrastructures. We focus on how these fact-checkers operate as retroactive gatekeepers—media actors who intervene after misinformation has already circulated widely—complicating traditional gatekeeping models while introducing new participatory and educational roles.

By foregrounding the experiences of fact-checkers in India, this study contributes to interdisciplinary debates on disinformation and emerging media technologies. Building on gatekeeping theory, we explore how fact-checkers balance institutional values and technological constraints in an environment where algorithmic curation, virality, and audience participation redefine the boundaries of professional verification. In contrast to prior studies that focus on Western democracies with high levels of media literacy or regulatory safeguards (Cazzamatta & Sarısakaloğlu, 2025), and the broader body of misinformation scholarship that has predominantly examined such contexts through psychological and communication lenses (Ha et al., 2019), this paper offers insights from a setting where institutional trust is volatile, digital literacy is uneven, and information flows are multilingual and highly politicized.

This article is situated at the intersection of media and technology, industry and management, and society and culture. It focuses on timely issues such as how AI and ICTs reshape public discourse, challenge democratic resilience, and demand new modes of media literacy and verification. Our findings reveal that Indian fact-checkers rely not only on journalistic routines but also on collaborative strategies with local reporters, participatory input from audiences, and informal multilingual networks to verify claims and educate users. These insights extend conceptual understandings of gatekeeping, highlight the frictions of fact-checking in the Global South, and offer critical lessons for the design of inclusive, proactive misinformation countermeasures.

Taken together, these insights show that verification practices are not only reactive but deeply embedded in sociotechnical systems shaped by language diversity, infrastructural gaps, and platform affordances. By analyzing how fact-checkers in India integrate journalistic routines, community input, and technological tools, this study contributes to ongoing conversations about the governance of information in digitally mediated democracies, extending gatekeeping theory to account for the retroactive and participatory dimensions of verification work in contexts where digital participation is high but institutional and technological resources remain uneven.

Literature Review

Conceptualizing Misinformation, Disinformation, and Fake News

The scholarly literature has emphasized the need to distinguish between related terms such as misinformation, disinformation, and fake news. Wardle and Derakhshan (2017) define misinformation as false or misleading content shared without the intent to deceive, while disinformation refers to false content shared deliberately with the intent to mislead. Fake news, although widely used in public discourse, has been criticized for its imprecision and politicization (Egelhofer & Lecheler, 2019). Tandoc et al. (2018) provide a comprehensive typology of fake news, including satire, parody, fabrication, manipulation, advertising, and propaganda. In this study, we adopt the term disinformation when referring to politically motivated and intentionally misleading content—particularly relevant to election contexts in India—while acknowledging that interviewees often used these terms interchangeably.

Electoral Disinformation in Global and Indian Contexts

Disinformation around elections has been widely documented across the globe, from the United States (Allcott & Gentzkow, 2017) to Brazil (Batista Pereira et al., 2022). Comparable patterns of election-related disinformation have been observed in Germany, Portugal, Taiwan, and the EU, where technological affordances, partisan biases, and algorithmic amplification extend misinformation's reach (Baptista & Gradim, 2022; Wang, 2020; Zimmermann & Kohring, 2020). In the Indian context, election-related disinformation has frequently taken communal, casteist, and hyper-nationalist forms, often amplified by encrypted platforms such as WhatsApp. Studies on the 2019 Indian General Election reveal how political actors, including major parties, actively leveraged platforms such as Facebook and WhatsApp to circulate misleading content (Arabaghatta Basavaraj, 2022; Das & Schroeder, 2021; Udupa, 2019). Scholars have highlighted how digital infrastructures complicate detection and correction due to linguistic fragmentation and rapid dissemination in closed networks (Farooq, 2018; Laskar & Reyaz, 2021).

Badrinathan and Chauchard (2024) argue that Western-developed correction models may not always translate effectively to Global South contexts due to differences in platform usage, trust in institutions, and exposure dynamics. For example, peer-to-peer corrections on WhatsApp were shown to significantly reduce belief in misinformation in India, even without source citations—a finding that contrasts sharply with U.S.-based studies where motivated reasoning dampens corrective effects (Badrinathan & Chauchard, 2024).

Recent research also draws attention to infrastructural and linguistic constraints in India. Seelam et al. (2024) report that fact-checkers in India often struggle to serve rural populations due to language barriers, resource shortages, and lack of awareness. Their study notes that many rural users remain unaware of fact-checking services altogether and that fact-checkers rely heavily on local stringers and multilingual networks to detect and debunk region-specific misinformation.

The communalization of disinformation during crises such as the COVID-19 pandemic adds another layer to understanding India's digital misinformation landscape. Arya and Kanozia (2025) document how narratives targeting Muslims, such as the Tablighi Jamaat incident, led to real-world violence and xenophobic trends on platforms like Twitter. Disinformation included pseudoscientific remedies, vaccine misinformation, and conspiracy theories tied to religion, caste, and national identity, revealing the complex sociocultural roots of India's misinformation ecosystem.

Fact-Checking and the Evolution of Gatekeeping

Gatekeeping theory, which focuses on how information reaches its audience, has evolved over time, with roots tracing back to Kurt Lewin's early work in social psychology (Lewin, 1947). The theory gained prominence in the field of journalism through the seminal work of David Manning White (1950), who examined the role of newspaper editors as “gatekeepers” in controlling the flow of information to the public. White's gatekeeping model focused on the selection and rejection of news stories based on editorial judgments.

Subsequently, Shoemaker and Reese (1996) expanded the theory by introducing the concept of “gate watching,” acknowledging that audiences, as well as media professionals, participate in the gatekeeping process through their choices in consuming and sharing information. Gatekeeping involves several key principles, including the selection of news based on newsworthiness criteria, the framing of stories through editorial decisions, and the role of gatekeepers in shaping public perceptions (Shoemaker & Vos, 2009). This theory continues to be influential in understanding how information is filtered and disseminated in the media landscape.

In the digital age, the traditional role of editorial gatekeepers has expanded as user-generated content and citizen journalism proliferate through online platforms (Hermida, 2012). The democratization of information flow challenges the traditional top-down model, with audiences participating in the dissemination process. Thorson and Wells (2015) created the “curation of flows” framework which places journalistic curation next to four groups of people who are also engaged in curating: individual media consumers; social others embedded in online and offline networks; strategic communicators; and algorithms that shape and present digital content. Wallace (2018) provides a similar framework for digital gatekeeping where she too identifies four gatekeeper archetypes: journalists, platform algorithms, strategic professionals, and individual amateurs who are interacting with each other in the process of information selection and dissemination.

Fact-checking, which started out as a focused look into how newsrooms use technology to get facts out to the public, has since emerged as a subfield of journalism that draws on gatekeeping routines (Graves & Anderson, 2020). The role of fact-checkers during high-stakes political events, such as elections, highlights their function as information gatekeepers. Shoemaker and Vos (2009) define gatekeeping as the process of selecting, filtering, and shaping content before it reaches the public; in the digital era, however, this process has been complicated by the participatory nature of online platforms (Thorson & Wells, 2015; Wallace, 2018). Singer (2014) argues that audiences are playing a growing role in the gatekeeping process, one that can be understood, in relation to news media, as “secondary gatekeeping.”

Singer (2023) further proposes that fact-checkers should be understood as retroactive gatekeepers who intervene after misinformation has already spread. Unlike traditional editors, they do not control publication but instead act as corrective agents in a fragmented media ecosystem. Shin et al. (2025) similarly frame fact-checking as part of a broader epistemic infrastructure embedded in platform governance and algorithmic mediation. They emphasize that verification systems are increasingly hybrid, combining human discretion with machine processes, and that fact-checkers now operate within sociotechnical networks that both enable and constrain their authority.

This expanded view of gatekeeping reflects broader transformations in media power. As Shin et al. (2025) argue, the epistemic authority of fact-checkers now depends not only on their journalistic expertise but also on how they interface with algorithmic sorting, platform policies, and audience expectations. Thus, gatekeeping is no longer solely about content selection but also about the infrastructural and procedural standards by which knowledge is validated in digitally mediated societies.

AI, Detection Tools, and Technological Tensions

Recent studies show that transformer-based models like BERT and GPT have improved detection accuracy, but real-time implementation remains challenging due to computational demands (Cazzamatta & Sarısakaloğlu, 2025; Gondwe, 2025). In the Global South, additional challenges include low-resource languages, lack of localized training data, and skepticism toward algorithmic tools (Mandava & Bhadra, 2025).

Dierickx et al. (2024) document how fact-checkers in the Nordic region are cautious about AI tools, often preferring manual verification due to concerns about reliability and ethical opacity. These concerns resonate with Indian fact-checkers interviewed in Seelam et al. (2024), who emphasized the importance of local judgment, multilingual literacy, and manual curation in verification workflows.

Building on the literature reviewed, this study examines how Indian fact-checkers responded to the surge of disinformation during the 2024 General Election. While earlier work has explored global fact-checking trends and institutional routines, less is known about how Indian professionals navigate election-specific challenges, platform constraints, and linguistic barriers in real time. This study seeks to address that gap by answering the following questions:

RQ1: What factors influenced how fact-checkers determined which election-related news and claims to verify during the 2024 Indian General Election?

RQ2: What factors shaped the gatekeeping role of fact-checkers in managing election-related misinformation during the 2024 Indian General Election?

RQ3: How did fact-checkers conceptualize their audience during the 2024 Indian General Election?

RQ4: What roles did fact-checkers play in countering misinformation during the 2024 Indian General Election?

Methodology

This study employed qualitative, semi-structured interviews with domain experts in fact-checking in India during the 2024 General Election, complemented by direct ethnographic observation. A purposive sampling strategy was adopted to ensure representation across Indian fact-checking organizations. Initially, we identified 19 operational fact-checking initiatives, including both independent fact-checking organizations and fact-checking teams embedded within legacy media outlets. Upon follow-up, we found that two of these organizations were no longer active, reducing the final sampling frame to 17.

Of the 17 organizations contacted, seven participants agreed to take part. The final sample included representatives from six active organizations, with three participants working in newsroom-based fact-checking teams and the rest affiliated with independent fact-checking organizations.

While the sample size is small, it covers more than a third of the active fact-checking initiatives in India during the election (six of 17), with wide representation across organizational structure, language, and editorial orientation. We prioritized depth over breadth, in line with qualitative research norms that value information-rich cases. No additional participants were pursued after the initial round for two reasons: (1) resource constraints during the election period and (2) the emergence of thematic saturation, the point at which new data no longer yield novel insights. Assessed following Guest et al. (2006), saturation was reached after the fifth interview, with the last two interviews confirming and refining core categories.

To supplement these interviews, the first author also spent multiple days embedded within one of the participating fact-checking newsrooms. This observational period allowed for an immersive understanding of everyday workflows, editorial decision-making, and informal verification routines during the election period.

The interview protocol combined structured and semi-structured elements. Participants were asked a consistent set of core questions across themes such as verification criteria, organizational influence, audience interaction, and platform strategies. The semi-structured format allowed for follow-up probes, and several participants elaborated on unexpected themes such as pressures from platform algorithms or regional misinformation trends. The full questionnaire is provided in the Appendix.

Sample questions included:

How do you decide what content to fact-check during an election?

What organizational or political factors shape your verification priorities?

How do you think about your audience? Who are you fact-checking for?

What are some of the key challenges you face during election cycles?

Interviews were conducted in English or Hindi, depending on participant preference. Remote interviews, held via Zoom or Google Meet, each lasted between 45 and 70 minutes; two further interviews were conducted in person. Transcripts were manually produced and anonymized to remove all identifying details, following ethical research guidelines (Baez, 2002).

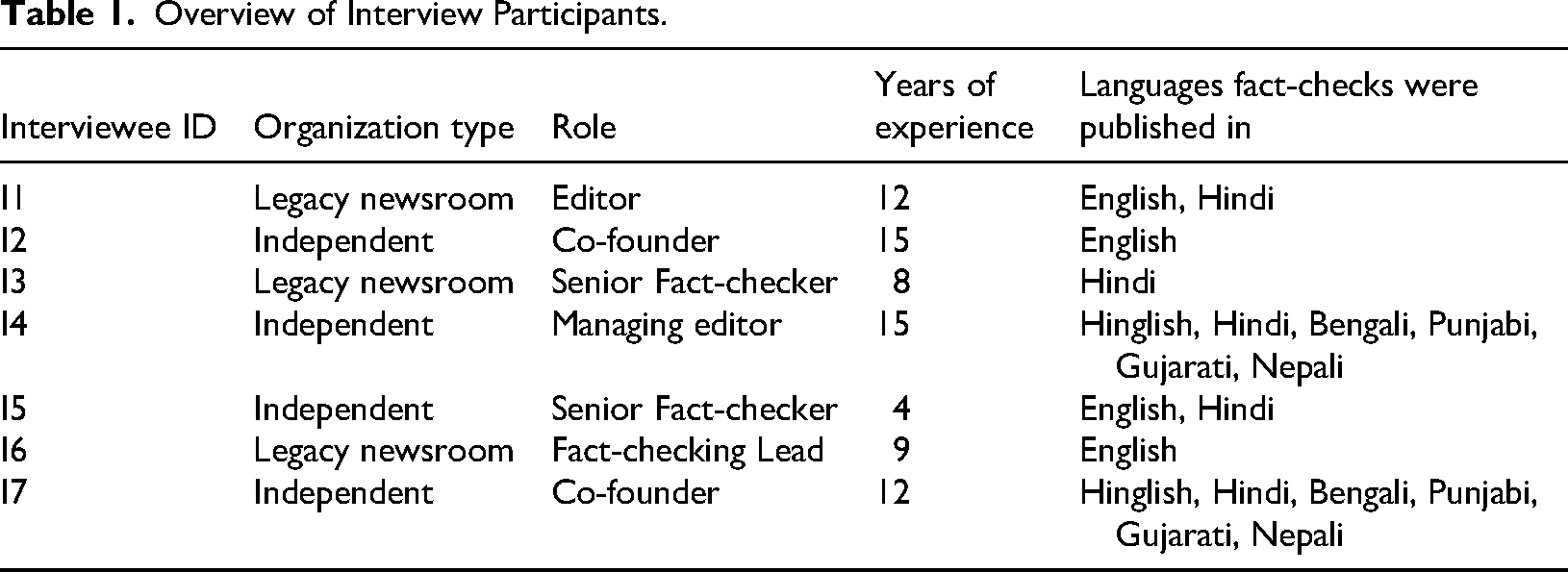

Overview of Participants

All interviewees held senior editorial or founding roles, such as editors, senior fact-checkers, or co-founders, and brought between 4 and 15 years of experience in journalism, digital verification, or both. The group reflected significant diversity across organizational structures and linguistic profiles: one participant worked exclusively in Hindi; two operated in English only; two in both Hindi and English; and one spanned a multilingual remit including English, Hindi, Bengali, Punjabi, Gujarati, and Nepali. Table 1 summarizes the characteristics of the participants.

Table 1. Overview of Interview Participants.

Data Analysis

Data were analyzed using qualitative content analysis with an inductive approach. The authors independently reviewed all transcripts to generate initial codes. These were then discussed collaboratively to refine a shared codebook. Codes captured recurring topics such as verification criteria, editorial pressure, linguistic strategies, and audience feedback. As themes began to coalesce, the researchers grouped related codes into high-level categories that reflected both institutional and platform-level dynamics. Intercoder reliability was maintained through discussion-based consensus and memo-writing exercises, which helped resolve interpretive discrepancies and ensure analytical rigor. This process adhered to qualitative best practices as described by Schreier (2012) and Tracy (2019), prioritizing reflexivity, thematic clarity, and transparency.

Findings

This section presents the key findings, organized around the four research questions guiding the study. Drawing from in-depth interviews with fact-checkers across India, the results are structured thematically to foreground how verification practices, audience conceptualization, and institutional constraints shaped fact-checking routines during the 2024 Indian General Election. Representative quotes illustrate key points, while additional statements offer contextual depth.

RQ1: What Factors Influenced Fact-checkers’ Decisions to Verify Election-Related Claims?

Fact-checkers distinguished among several categories of false or misleading content. Misinformation was understood as content shared without malicious intent; for instance, a forwarded message about home remedies for weight loss, though inaccurate, was not considered inherently harmful. Malinformation referred to exaggerated or misleading information rooted in partial truths. As Interviewee 1 explained, “Malinformation is not fully false but is framed in such a way that it exaggerates certain aspects while omitting crucial context.”

Disinformation, in contrast, was identified as the most problematic during elections due to its deliberate intent to mislead: Disinformation is done specifically to mislead the users… it is manipulated with an agenda to deceive. (Interviewee 2)

The significance of this category became evident during the election cycle, as political actors exploited doctored videos, out-of-context quotes, and fabricated claims. One fact-checker explained: Political leaders are often misquoted… sometimes a real speech is clipped just before they clarify a statement, making it seem like they said something completely different. (Interviewee 6)

Disinformation was particularly potent due to its ability to stoke emotional reactions. Several fact-checkers emphasized that emotionally charged content spreads faster and can be harder to correct once it becomes entrenched in public discourse. Virality and potential harm, in turn, guided which claims were prioritized for verification:

When we find a political claim, we check whether it has been seen by just a few people or if it has already gone viral. If it's viral and it's hurting people… that's why these claims circulate widely—because people want to believe them. (Interviewee 6)

Particularly concerning were false opinion polls and manipulated visuals, which fact-checkers described as attempts to shape voter perception. These forms of disinformation were seen as timed to influence undecided voters by simulating electoral momentum. “This is done to influence undecided voters and make them think the election result is already decided.” (Interviewee 4).

Several fact-checkers also noted that misinformation was often localized, requiring them to monitor regional political dynamics and cultural references closely. This made real-time prioritization difficult without regional expertise.

RQ2: What Shaped the Gatekeeping Role of Fact-checkers?

While acknowledging that disinformation can emerge from all political camps, the participants emphasized institutional safeguards against partisan pressures. Fact-checkers invoked fairness and transparency as integral to their legitimacy. As Interviewee 4 put it, “Our credibility depends on being consistent. If we only fact-check one side, we lose the trust of the audience.”

Fact-checkers explained that they primarily focus on Hindi and English but acknowledged that their linguistic coverage is constrained by team capacity and resource limitations. Although some staff possessed regional language proficiency, the volume of multilingual misinformation far outpaced their ability to respond. “Not knowing a language poses a significant barrier to effective fact-checking,” said Interviewee 1.

Many fact-checkers used tools like Google Translate to get an initial sense of non-Hindi content but emphasized that these tools fall short for vernacular or hyper-local contexts. Interviewee 4 pointed out, “Automated translation tools like Google Translate are not always effective, particularly for vernacular languages and hyper-local content.” To address this, several organizations relied on personal networks—friends, stringers, and local reporters—who could interpret, verify, and contextualize claims in underrepresented languages.

An additional challenge cited was the repetition of the same disinformation across languages, requiring fact-checkers to debunk identical content multiple times. “The falsehoods persist across languages,” Interviewee 1 explained. “Even after being debunked in Hindi and English, we keep seeing the same videos circulated with new captions.” This linguistic recycling added to fact-checkers’ workload and delayed their response times.

Strategies to mitigate these challenges included scanning content for recurrent keywords or tropes across languages, using collaborative cross-language verification teams, and creating basic glossaries of common misinformation terms. Interviewee 3 explained, “We use local stringers and local correspondents who are both able to verify the facts and translate content. In situations where we lack local resources, we reach out to personal contacts or friends who may be proficient in the language in question.”
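The keyword-and-glossary strategy participants described can be illustrated with a minimal Python sketch. It assumes a hand-built glossary, whose entries below are hypothetical placeholders rather than terms reported by participants, that maps surface forms in different languages and scripts to a shared trope label, so that the same falsehood recycled with new captions can be flagged regardless of language. Matching is naive substring search after Unicode normalization; this is not how any participating organization is reported to have implemented the practice, and it would miss paraphrases, transliterations, and misspellings.

```python
from __future__ import annotations

import unicodedata
from collections import defaultdict

# Hypothetical glossary for illustration only: surface forms in different
# languages/scripts mapped to one shared trope label.
GLOSSARY = {
    "rigged": "vote_rigging",
    "धांधली": "vote_rigging",      # Hindi word for rigging/fraud
    "evm": "voting_machine",
    "ईवीएम": "voting_machine",     # "EVM" written in Devanagari
}


def normalize(text: str) -> str:
    """Unicode-normalize (NFC) and case-fold so equivalent spellings compare equal."""
    return unicodedata.normalize("NFC", text).casefold()


def flag_tropes(message: str, glossary: dict[str, str] = GLOSSARY) -> dict[str, list[str]]:
    """Return each trope label found in the message with the glossary terms that matched it.

    Uses plain substring matching, so short terms can also match inside longer words.
    """
    text = normalize(message)
    hits: dict[str, list[str]] = defaultdict(list)
    for term, trope in glossary.items():
        if normalize(term) in text:
            hits[trope].append(term)
    return dict(hits)


if __name__ == "__main__":
    # The same two tropes, once in English and once in Hindi.
    print(flag_tropes("EVM machines were rigged in this booth"))
    print(flag_tropes("इस बूथ पर ईवीएम में धांधली हुई"))
```

Because several surface forms map to a single label, a debunk already published for a trope in Hindi or English could in principle be surfaced when the same trope reappears in another language; in practice, such a list would need the native-speaker curation that participants obtained through stringers and personal contacts.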

The widespread nature of region-specific misinformation and the active translation of false content by bad actors illustrate how multilingualism is not merely a barrier to verification—it is also a mechanism of disinformation proliferation. These findings highlight the urgent need for regional resourcing and vernacular literacy within fact-checking institutions.

Some organizations used informal Slack channels or WhatsApp groups to coordinate decisions, while others relied on individual fact-checkers to take initiative. Interviewee 5 explained, “There isn’t always time to hold a meeting, especially during elections. You have to act quickly based on your judgment.”

RQ3: How Did Fact-checkers Conceptualize and Engage Their Audience?

Fact-checkers' participatory engagement with audiences continued during the elections. Audiences were encouraged to report suspicious claims via WhatsApp or websites, a strategy fact-checkers saw as enhancing both speed and trust. Some organizations established dedicated hotlines or used social media polls to identify circulating falsehoods. Interviewee 1 remarked, “We rely on our readers as much as they rely on us. It's a two-way street now.”

Workshops and webinars were also cited as important tools to build media literacy and reduce long-term susceptibility to misinformation. Several fact-checkers mentioned efforts to translate their materials into multiple languages to ensure accessibility. Interviewee 7 noted, “We're trying to create media literacy campaigns that speak to different age groups and language groups. It's slow, but it's working.”

RQ4: What Roles Did Fact-checkers Play in Countering Electoral Disinformation?

Fact-checkers saw themselves as “retroactive gatekeepers” who provided second-order corrections after misinformation had already spread. This reactive stance was a structural feature of their role. Some described themselves as “circuit breakers” who helped interrupt the flow of disinformation before it could reach saturation.

Deepfakes and edited visuals posed unique difficulties, particularly given their emotional salience and believability. As Interviewee 7 put it, “Once people see a video, even if you prove it's fake, the damage is already done.”

Fact-checkers cited examples where political commentary intersected with ableist or health-shaming narratives, compounding the harm. One notable example involved a meme about a political leader with diabetes, which used imagery to mock his condition while questioning his competence. Such content was seen as simultaneously spreading political disinformation and reinforcing harmful stereotypes.

In response, some organizations collaborated with local content creators or stringers to build vernacular fact-checking capacity. Interviewee 3 described partnering with rural journalists to translate and disseminate verified information in local dialects. This strategy was seen as essential for expanding reach beyond metropolitan areas.

Together, these findings illustrate how Indian fact-checkers navigated a complex, multilingual, and politically charged information landscape. Their roles extended beyond verification to include editorial judgment, educational outreach, and participatory engagement—often under resource-constrained and rapidly evolving conditions. Despite institutional and infrastructural limitations, fact-checkers devised flexible, audience-centered strategies to combat disinformation and safeguard electoral integrity.

Discussion

This study underscores how fact-checkers in India acted as retroactive gatekeepers during the 2024 General Election. They responded to an overwhelming volume of election-related disinformation by making editorial decisions rooted in contextual urgency, virality, and anticipated harm. In doing so, their work bore strong similarities to traditional journalistic gatekeeping but was distinguished by its retrospective nature, limited institutional support, and urgent digital pacing.

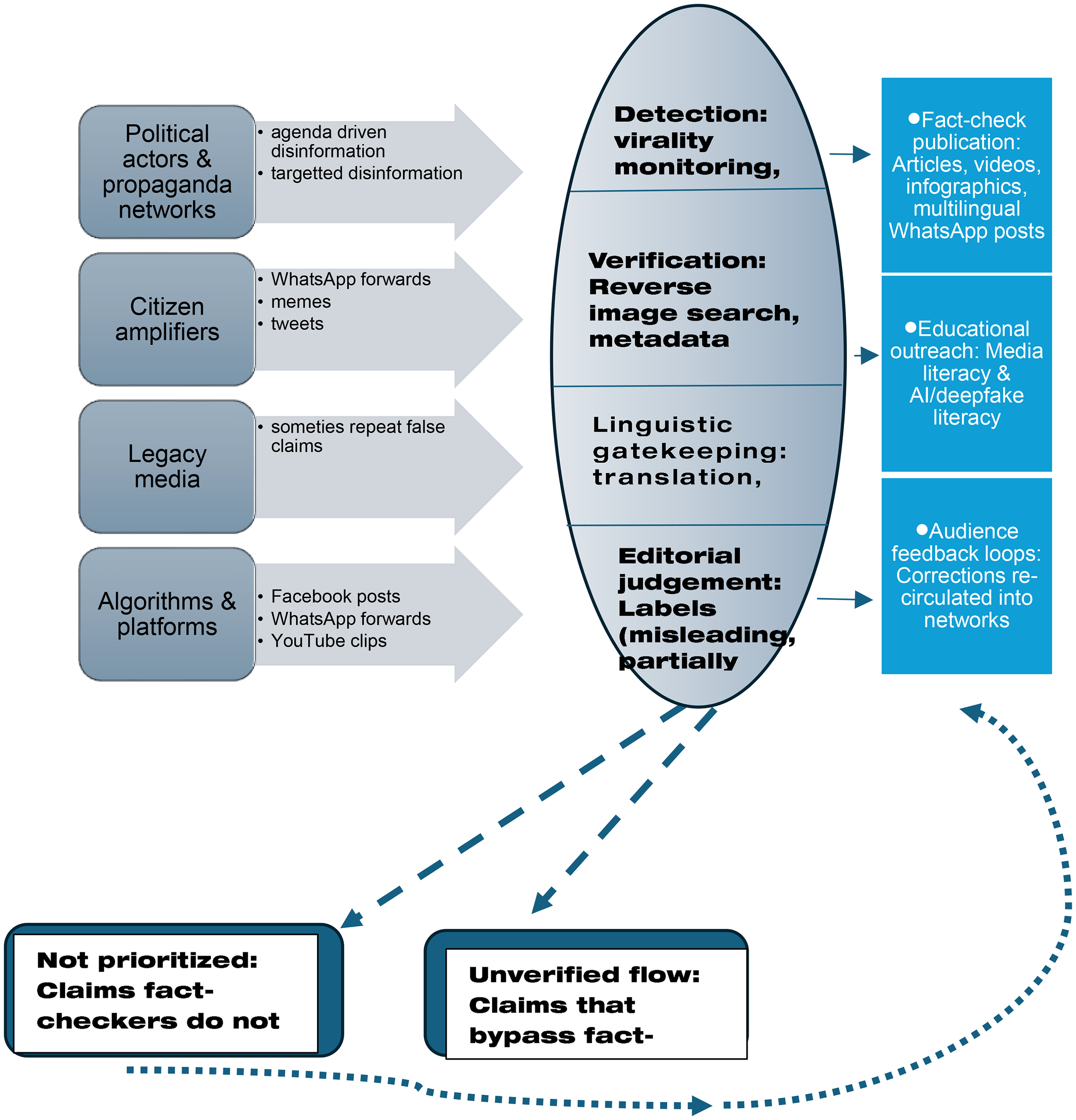

This study advances gatekeeping theory by extending Singer's (2023) notion of fact-checkers as retroactive gatekeepers into the Global South. While existing work highlights the temporal dimension of gatekeeping—intervening only after information circulates—we show that retroactive gatekeeping in India is also linguistic, improvisational, and participatory. Fact-checkers operated as linguistic gatekeepers, filtering and translating disinformation across multiple vernaculars; relied on informal, rapid editorial routines instead of standardized newsroom structures; and engaged audiences as collaborators through participatory tip lines and WhatsApp forwards (Figure 1).

Figure 1. Fact-checking as an act of retroactive gatekeeping in a multilingual environment.

These dimensions expand the theoretical frame of retroactive gatekeeping beyond Western democracies, situating it within a typology of multilingual, resource-constrained, and audience-centered practices that are increasingly central to understanding information governance in the Global South.

Participants saw themselves as distinct from journalists but aligned with journalistic values such as objectivity, accuracy, and public service. Unlike traditional editors, they responded to viral claims in real time, often relying on informal editorial coordination, WhatsApp groups, and rapid verification under pressure. These fact-checkers supplemented journalism rather than replacing it, acting as circuit breakers and information correctors rather than initiators of news agendas.

Notably, while recent scholarship has explored the promise of AI-assisted verification tools, participants in this study did not foreground such technologies in their routines. Their preference for manual verification methods and reliance on personal networks echoes broader skepticism toward automated detection systems—particularly in multilingual, resource-limited contexts where algorithmic tools often fall short (Dierickx et al., 2024; Seelam et al., 2024).

Linguistic complexity emerged as one of the most persistent challenges, shaping both the limits of and the innovations in Indian fact-checkers’ election-time routines. Although multilingualism complicates fact-checkers’ gatekeeping, there may be ways to manage it. Lessons can be drawn from health communication practice in India, where health practitioners also find it difficult to navigate the linguistic divide. First, drawing on the health communication literature, we recommend making linguistic proficiency a key job requirement for fact-checkers. Second, a basic translation guide, together with visual literacy materials, covering common words used to sensationalize or spread disinformation would help fact-checkers flag such content. Third, fact-checkers could take a cue from India's national television broadcaster, Doordarshan, which has navigated this challenge over the years. To accommodate multilingualism, Doordarshan broadcasts news in several languages in addition to Hindi, English, and the prominent regional language of each state. The broadcaster does not translate or dub all of its programming but prioritizes broadcasting news in multiple languages. Similarly, fact-checkers who reported that they had begun disseminating information via WhatsApp could make these streams multilingual.

The participatory nature of fact-checking also surfaced clearly in the 2024 elections. Interviewees noted that their role extended beyond debunking to teaching audiences how to identify misinformation. This reflects Bro and Wallberg's (2015) “journalism-as-communication” model and Singer's (2023) view of fact-checkers as retroactive gatekeepers who engage audiences as collaborators. Educational initiatives, live webinars, and targeted WhatsApp campaigns were tailored to the populations most vulnerable to disinformation, particularly elderly and rural voters.

Nevertheless, fact-checkers continued to face structural challenges posed by the digital architecture of social media. Echo chambers and algorithm-driven feeds remained formidable barriers. Disinformation during the 2024 elections circulated rapidly within homophilic networks, often outpacing fact-checks. Moreover, fact-checkers encountered cases where political and health misinformation intersected, such as false claims about vaccines made during political rallies, reinforcing prior concerns that the boundaries between issue-specific types of misinformation blur during election cycles.

In addition to audience engagement and linguistic challenges, institutional differences among fact-checking organizations also shaped how verification work was conducted during the election.

A notable institutional distinction that shaped fact-checking practices during the 2024 Indian General Election was the difference between fact-checking units embedded within legacy news organizations and those operating as independent organizations. While both pursued the same core verification goals, their structural environments influenced their workflows and audience strategies in important ways. Fact-checkers based in legacy newsrooms often worked within formal editorial hierarchies and benefited from institutional infrastructure, including access to newsroom resources and broader media distribution platforms. However, they were also more constrained by organizational protocols and timelines.

In contrast, independent fact-checking organizations exhibited greater flexibility and improvisation in their routines, especially in navigating India's multilingual information ecosystem. For example, one independent fact-checker described reaching out to friends and “friends of friends” who knew a particular regional language in order to verify a viral claim—highlighting how informal networks often filled resource gaps. Additionally, independent organizations placed particular emphasis on their educational role, frequently organizing media literacy workshops and creating vernacular outreach content tailored to rural or older populations. These efforts extended their function beyond verification toward proactive, community-centered engagement. While newsroom-based units often aligned more closely with traditional journalistic gatekeeping models, independent fact-checkers occupied hybrid roles as both retroactive gatekeepers and media educators, adapting flexibly to evolving digital and sociolinguistic challenges.

However, these findings are highly context-dependent. India's linguistic complexity, reliance on closed messaging apps like WhatsApp, and lack of comprehensive fact-checking infrastructure make it distinct from many Western and East Asian democracies. Therefore, while the study offers useful insight into the broader functions of gatekeeping and verification, its conclusions should not be generalized without caution.

A limitation of this study is that it foregrounds the voices of fact-checkers but not those of audiences themselves. Although our data show that users contributed tips and engaged with fact-checks, we did not capture how audiences perceived or acted upon these interventions. Future research should examine the reception side of retroactive gatekeeping—whether fact-checks reshape trust, change beliefs, or influence sharing practices—particularly across diverse linguistic and demographic groups. Incorporating audience perspectives would provide a fuller picture of the efficacy and limits of fact-checking in participatory environments.

Implications for Democratic Societies

While rooted in the Indian context, the findings of this study may be cautiously relevant to other democratic societies confronting the rising tide of electoral disinformation. The weaponization of misinformation, particularly via closed networks like WhatsApp or Telegram, is not unique to India. Democracies across the Global South and Global North alike have witnessed how misinformation exploits social divisions and erodes trust in electoral processes (Baptista & Gradim, 2022; Zimmermann & Kohring, 2020). In the 2016 U.S. presidential election, for instance, approximately 25 percent of election-related tweets linked to hyper-partisan or false information (Allcott & Gentzkow, 2017; Bovet & Makse, 2019). In Brazil's 2018 elections, disinformation campaigns on WhatsApp blended religious appeals with political propaganda, influencing voter perceptions (Dourado & Salgado, 2021), while fact-checkers struggled to counter these narratives because of partisan resistance and the speed of spread (Batista Pereira et al., 2022).

Within Asia, India's experience finds echoes in Indonesia, where election-related disinformation has stoked ethnic and religious tensions (Kajimoto & Stanley, 2018), and in Malaysia, where linguistic and religious divides make minority groups especially vulnerable to targeted narratives (Ong & Tapsell, 2022). Even advanced digital environments like Singapore and Japan contend with structural challenges: the former with balancing state regulation and speech rights, and the latter with an aging population's vulnerability to misinformation (Kajimoto & Stanley, 2018). These comparative insights suggest that while the Indian case is distinct in its linguistic and infrastructural complexities, the models of participatory, multilingual, and retroactive fact-checking observed here may offer adaptable strategies for other democracies—provided they are contextualized within local socio-political environments.

Practical Implications

The study yields several actionable recommendations for fact-checking organizations and media policy stakeholders. These implications build on the distinctive challenges of retroactive gatekeeping in multilingual and high-velocity election environments.

Taken together, these recommendations underscore the need for an inclusive and participatory verification ecosystem that is multilingual and collaborative. Moreover, improving media literacy among users may help curb the spread of misinformation.

Limitations

This study is based on a relatively small sample of seven interviews with expert fact-checkers across six organizations. Although participants represent a range of regional and organizational backgrounds, the sample may not fully capture the diversity of fact-checking practices in India, especially those emerging informally or in grassroots contexts. Further, the interviews were conducted in the immediate aftermath of a high-stakes election period, so participants’ recollections may reflect peak-period pressures rather than sustained, routine operations. Additionally, while interviewees reflected on challenges like multilingualism and audience engagement, this study did not directly incorporate perspectives from audiences themselves, which may limit insight into user reception and impact.

Finally, given the distinct socio-political media ecosystem in India, including factors such as the digital divide and regional media variation, caution is warranted in generalizing the findings to other national contexts. Nevertheless, this study offers important insights into the flexible and adaptive roles that fact-checkers can play in safeguarding election integrity under rapidly evolving digital conditions. This study shows that retroactive gatekeeping in India is reactive, multilingual, and participatory, reshaping verification in the Global South.

Footnotes

Ethical Considerations

This study was approved by the Towson University Institutional Review Board (IRB Protocol #2260). Semi-structured interviews were conducted with informed consent, and all identifying information was anonymized to protect participants. The ethnographic observation component focused solely on professional newsroom settings and did not involve private communications. All procedures adhered to established ethical standards for qualitative research to ensure participant confidentiality, autonomy, and dignity.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Towson University (FDRC Grant received by Enakshi Roy).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Appendix

Questionnaire used for interviewing participants for this study.

Section 1: verification priorities and editorial judgment

1. What kinds of election-related content did you prioritize for fact-checking during the 2024 General Election?
2. How do you define fake news, misinformation, or disinformation in the electoral context?
3. What criteria guide your decision to verify a political claim? Are there any formal or informal guidelines in your organization?
4. How do you ensure neutrality and avoid ideological bias when selecting content to verify?

Section 2: fact-checking process

5. What tools and techniques did you rely on most during the election to verify content such as visuals, videos, or quotes?
6. On average, how long does an election-related fact-check take?
7. How are fact-checkers trained to handle high-volume or politically sensitive misinformation during elections?

Section 3: organizational context and ecosystem

8. How would you describe the differences between fact-checking units within legacy newsrooms and independent organizations?
9. Was there any collaboration or competition across organizations during the election? How did you handle overlapping claims?

Section 4: audience engagement and platform challenges

10. How did your audience contribute to your work during the election (e.g., submitting claims, giving feedback)?
11. What role did platforms like WhatsApp play in both spreading and verifying election misinformation?
12. How did regional languages and the digital divide affect your fact-checking efforts?

Section 5: key challenges and reflections

13. What were the biggest challenges your team faced during the 2024 election, such as volume of claims, speed, platform constraints, or political pressure?
14. Looking ahead, what would improve the reach and impact of fact-checking during future elections?
15. Is there anything else you would like to add?