Abstract

The recent surge of false information accompanying the Russian invasion of Ukraine has re-emphasized the need for interventions to counteract disinformation. While fact-checking is a widely used intervention, we know little about citizen motivations to read fact-checks. We tested theoretical predictions related to accuracy-motivated goals (i.e., seeking to know the truth) versus directionally-motivated goals (i.e., seeking to confirm existing beliefs) by analyzing original survey data (n = 19,037) collected in early April to late May 2022 in nineteen countries, namely Austria, Belgium, Brazil, Czech Republic, Denmark, France, Germany, Greece, Hungary, Italy, Netherlands, Poland, Romania, Serbia, Spain, Sweden, Switzerland, UK, and USA. Survey participants read ten statements about the Russian war in Ukraine and could opt to see fact-checks for each of these statements. Results of mixed models for three-level hierarchical data (level 1: statements, level 2: individuals, and level 3: countries) showed that accuracy motivations were better explanations than directional motivations for the decision to read fact-checks about the Russian war in Ukraine.

Keywords

Russia’s invasion of Ukraine in early 2022 was accompanied by a sudden increase in false information. At the same time, fact-checkers from across the globe rushed to publish fact-checks (Suárez 2022) and debunking efforts continued to abound in the following months. However, despite a wealth of fact-checks on the supply side, and an abundance of research on the effectiveness of fact-checks, we know very little about motivations to read fact-checks among the general public, especially in the context of the Russia-Ukraine war. Given that people may selectively share fact-checks (Amazeen et al. 2019; Shin and Thorson 2017), or avoid fact-checks when they are not aligned with their beliefs (Hameleers and van der Meer 2020), it is crucial to explore why people may choose to (not) read fact-checks. After all, corrective information may not reach the segments of the audience that need corrections most given their initial support for misinformed claims. A better understanding of people’s motives for selecting or avoiding fact-checks may allow for a more targeted approach to corrective information, and contribute to strategies intended to overcome barriers to selecting fact-checks. Especially in times of high information need and uncertainty about the veracity of information, as is the case in global crises, it is crucial to assess the extent to which people engage in the cognitive effort needed to verify information.

In this paper, we therefore study why citizens read fact-checks about the Russian war in Ukraine and how this might differ across countries. We build on previous research distinguishing between two types of (unconscious) reasoning that might be at play (Kunda 1990; Taber and Lodge 2006): (a) accuracy-motivated goals, which relate to wanting to arrive at the most accurate conclusions, and (b) directionally-motivated goals, which relate to wanting to confirm one’s existing beliefs. Research so far finds that directional goals are more prominent motivators for reading fact-checks than accuracy goals (Walter et al. 2021). However, the precedence of directional goals in extant work might be due to a number of specific conditions, namely the partisan nature of the messages studied (Taber and Lodge 2006), the focus on party ideology, and the predominance of studies conducted in the United States (Jerit and Zhao 2020). Under these circumstances, directional goals are primary drivers of fact-checking motivations. When it comes to other contexts and issues that severely affect citizens irrespective of partisanship, fact-checking motivations may play out differently. The Russian war in Ukraine is a case in point for several reasons. First, in many countries the war severely affected entire systems beyond partisanship, via harm to the global economy or the presence of an imminent threat. In the early stages of the war, which is the time frame of this study, directional motivations for consulting fact-checks may therefore be less pertinent. Second, the war is likely to trigger uncertainty and anxiety among many citizens. These same emotions have been found to reduce partisan effects on information processing and make individuals more open to corrective information (Weeks 2015). Similar to the case of the Covid-19 pandemic, high uncertainty and fast-changing events might trigger a need for orientation that steers individuals toward more accurate information (Van Aelst et al. 2021). In the case of the Russian war in Ukraine it is therefore conceivable that accuracy goals play an overall larger role in motivating individuals to read fact-checks.

The extent to which accuracy goals trump directional goals in this context might differ across countries. Some countries display a pro-Russian bias in the context of the war, whereas others are more supportive of Ukraine (Hameleers et al. 2023). Such differences might affect the relative prominence of directional motivations. Similarly, some countries are geographically closer to the war areas, and therefore more directly affected by the war. Such proximity might trigger accuracy motivations among their citizens more strongly because of the higher costs of being misinformed.

To investigate accuracy- and directionally-motivated fact-checking behaviors, we analyzed original survey data collected in nineteen countries, namely Austria, Belgium, Brazil, Czech Republic, Denmark, France, Germany, Greece, Hungary, Italy, Netherlands, Poland, Romania, Serbia, Spain, Sweden, Switzerland, UK, and USA in spring 2022. Survey participants (N = 19,037) read ten statements about the Russian war and were subsequently given the choice to consult fact-checks for any of these statements. The statements varied in terms of whether they were true, false, or unverified, and whether they had a pro-Ukrainian or pro-Russian bias. This variation was intended to mimic the actual information ecology as closely as possible, reflecting the diversity of information people were exposed to in the first weeks after the Russian invasion of Ukraine. In studying accuracy and directional motivations, we included a cross-country comparison to understand how decisions to read fact-checks differ across countries in the context of a global crisis. The overarching research question of this study is: Why do individuals read fact-checks in the context of the Russian war in Ukraine?

Theory and Hypotheses

Motivations to Read Fact-Checks About the War

Research on corrective information has mostly focused on the effectiveness of fact-checks in refuting misperceptions (Walter and Tukachinsky 2020). Fact-checks offer an evaluation of political claims as well as a factual correction of suspicious statements made in various offline and digital media (Graves and Amazeen 2019). Different presentation formats, such as long-form fact-check articles or videos, have been shown to be effective (Young et al. 2018). While fact-checks seem effective when participants are forcibly exposed to them, actively choosing to view them might not be a behavior that occurs frequently in today’s digital media environments (Graves and Amazeen 2019; Robertson et al. 2020). Therefore, it is crucial to explore what motivates citizens to verify information, because fact-checks can only have a positive effect on belief correction if they are selected in the first place.

The selection of fact-checks may be contingent upon different motivations to seek out information. We can discern two specific, if unconscious, motivations: the motivation to be accurate versus the motivation to confirm prior beliefs (Taber and Lodge 2006), the latter of which is also referred to as motivated reasoning (Kunda 1990). Motivated reasoning can be understood as the guiding influence of people’s existing beliefs and identities on the selection and processing of information (Chaiken 1980; Kunda 1990). People may show a tendency to seek out information that confirms prior beliefs and identities, while avoiding conflicting information.

In contrast to directionally-motivated goals, accuracy goals are more likely to drive individuals toward accurate information, irrespective of whether it confirms or challenges existing beliefs (Kunda 1990). Individuals who are motivated by accuracy goals exert more cognitive energy in processing information and seek to reach more correct conclusions (Nir 2011). Evidence in support of accuracy motivations shows that accuracy goals might be amplified under specific conditions, namely under uncertainty and when an issue is particularly relevant. When people are uncertain about the veracity of information, and do not believe that they have sufficient knowledge about the topic, they are more inclined to select additional information to manage their feelings of uncertainty (Berger and Kellermann 1994). In the case of the Russian war in Ukraine, uncertainty is likely amplified because the crisis situation poses a severe threat to many citizens (e.g., nuclear threat, expansion of the war to other areas). In support of this, previous work shows that in times of crisis and uncertainty, citizens’ need for orientation increases (Lowrey 2004; Van Aelst et al. 2021), which might trigger accuracy goals.

Since accuracy and directional motivations are not directly observable and difficult to assess in a cross-country survey, we rely on a number of indicators and proxies as further described in the following sections. We formulate partly competing hypotheses relating to directional motivations versus accuracy motivations, as supported by extant literature. In times of high information need and uncertainty about the veracity of information, as is the case in global crises like the war in Ukraine, it remains an open question which motivational processes are more likely to lead citizens toward fact-checks. In this study, we seek to answer this question empirically, by simultaneously testing directional motivations and accuracy motivations.

Directional Motivations to Fact-Check

Previous research has shown that the selection of fact-checks is related to motivated reasoning (Hameleers and van der Meer 2020; Walter et al. 2021). Fact-checking messages are less likely to be selected when the claims they respond to are congruent with people’s existing beliefs (Hameleers and van der Meer 2020). Fact-checks are also selectively shared by partisans to achieve directional goals. Specifically, partisans are more likely to share fact-checks that either favor their preferred candidate or delegitimize opposed candidates (Shin and Thorson 2017). People are also more likely to reject and counter-argue corrective messages when they run counter to their views (Thorson 2016). Overall, it thus seems that people may not consult corrective information when this threatens their prior beliefs, while they might do so if it helps to reassure their prior beliefs and identities—a strategy referred to as “affirming fact-checking” (Walter et al. 2021).

Against this backdrop, we expect that existing beliefs related to the ideological and attitudinal basis of statements concerning the Russian war in Ukraine may bias the selection of fact-checks. Irrespective of its actual veracity, the more people’s prior beliefs align with the scrutinized statement, the less likely they should be to select a fact-check that might cast doubts on these beliefs. Likewise, people may resort to affirming fact-checking, meaning that they select a fact-check that they expect to further discredit a statement that they already believe to be false. Yet, Edgerly et al. (2020) found that this may also work the other way around: People may be most likely to verify information when they already believe that the headline is true. This effect can be explained as motivated by a confirmation bias: If people expect that they will encounter discrepant views in corrective information, they may be likely to avoid the verification. We deviate from this expectation for two main reasons. First, we study fact-checking intentions in an international context where partisan biases and partisan motivated reasoning are expected to play a less central role. Second, we focus on an unfolding crisis event for which information was surrounded by high levels of uncertainty. In addition, it should be stressed that selecting a fact-check is not the same as verifying information: Actual exposure to the fact-check may lower uncertainty, but people do not know beforehand whether the verdict rates the information as true or false. In this context, we expect that fact-checking serves as a way to mitigate uncertainty and gain knowledge in times of high need for factual information, which outperforms the need to avoid the discomfort of discrepant views.

To assess the biasing role of motivated reasoning in the context of the Russian war in Ukraine, we consider two factors, namely (a) people’s evaluation of the statement’s veracity and (b) the alignment between people’s stance on the war (i.e., whether they demonstrate a pro- or counter-Russian bias) and the pro- or counter-Russian bias of the statement. We introduce the following hypotheses on the role of directional motivated reasoning in the selection of fact-checks:

H1: Individuals are more likely to select a fact-check if (a) they believe that the statement it checks is false and (b) the ideological stance of the statement contradicts their ideological beliefs.

Accuracy Motivations to Fact-Check

Despite the potential relevance of directional motivations, several studies highlight the importance of accuracy motivations under specific conditions—several of which are met in the context of the Russian war in Ukraine. Compared to directional motivations, which are relatively directly indicated by an alignment between people’s existing beliefs and the ideological stance of the fact-checked statement, accuracy motivations are less directly observable. We therefore rely on a number of indirect indicators of accuracy motivations as well as conditions that make it more likely for accuracy motivations to be present.

Seminal research suggests that uncertainty is a key condition under which accuracy motivations arise. This is because uncertainty, especially when paired with relevance, triggers a need for orientation (Matthes 2005; Weaver 1980), which is the psychological need to seek out relevant information in the face of unfamiliar situations. The need for orientation is closely related to accuracy motivations in the sense that accuracy-motivated behaviors are one way that the need for orientation can be satisfied. Under conditions of uncertainty about political issues, the news media are seen as a key source of relevant information that can provide orientation (Matthes 2005). Recent literature also suggests a link with verification behaviors, such that people are more likely to verify information when they experience uncertainty (Walter et al. 2021; Weeks 2015). Verifying information can fulfill the need for orientation because it can serve to reduce uncertainty when confronted with inconsistent, unavailable, or ambiguous information (Brashers 2001). In line with this, it has also been suggested that need for orientation, even more than the strength of ideology, predicts the sharing of fact-checking information (Amazeen et al. 2019). Thus, the higher individuals’ need to be updated about mediatized events and issues, the more relevant it is for people to be certain that information is true. Fact-checks may offer an informational cue to reduce uncertainty about the veracity of information. Moving beyond sharing intentions (e.g., Amazeen et al. 2019), need for orientation should thus also play a role in the actual selection of fact-checking information. As a contribution to existing work in this field (e.g., Amazeen et al. 2019; Edgerly et al. 2020), we propose that people with a stronger need for orientation are more motivated to be accurately and completely informed on issues, which should also result in a stronger reliance on additional information that reduces uncertainty. 
We thus interpret fact-checking that takes place as a result of uncertainty as accuracy-motivated fact-checking.

If accuracy motivations are present, then we expect that the more people are uncertain about the veracity of content received in the information ecology, the more they should be motivated to verify the information and avoid the state of discomfort caused by uncertainty. If people are motivated by accuracy goals, then uncertainty should trigger the choice to read fact-checks that challenge (false) information. Uncertainty can arise at different levels and in this paper we make a broad distinction between situational versus dispositional uncertainty. Situational uncertainty refers to uncertainty that arises from the conditions and circumstances of a given situation. In the context of the Russian war in Ukraine, situational uncertainty may arise because the information ecology is threatened by mis- and disinformation and it is unclear which statements about the war can be trusted. Dispositional uncertainty, in contrast, is attributed to the individual and can be understood as individual differences in experience or tolerance of uncertainty. While it is clear that individuals and situations interact with each other in complex ways, making a clear distinction between situational versus dispositional uncertainty allows us to highlight their respective contributions to individual-level motivations to seek out fact-check information.

Situational Uncertainty

At the situation level, we rely on a number of global measures capturing uncertainty related to the veracity of information about the Russian war in Ukraine in the information ecology. As Russia invaded Ukraine, fact-checkers noted an alarming amount of false information and recurring disinformation narratives (Suárez 2022). Citizens have likely encountered at least some false claims, rumors, or disinformation narratives related to the war, which suggests uncertainty in the information environment. Moreover, disinformation campaigns often explicitly seek to sow doubt about unfolding events. We expect that when people believe that information on the Russian war in Ukraine is generally false or even deliberately deceptive, they may be more uncertain about the extent to which they should accept claims about the war. To resolve this undesirable state of uncertainty, they might choose to read fact-checks that offer additional information on the claims they are exposed to.

We can explain this in light of deception detection and the truth default theory (Levine 2014). According to this theory, people have a tendency to accept the honesty of information, unless they are alerted to suspicion in their environment. We expect that perceived misinformation acts as a trigger event for deviating from the truth default, signaling that the honesty of information cannot be taken at face value, and emphasizing the need for a critical outlook on potentially deceptive content. Consequently, perceiving that there is a high base rate of false information should correspond with uncertainty about the acceptance of information as true (see also Hameleers 2023). After all, in such a context, any information could be false. As a strategy to deal with the high risk perceptions related to false information, individuals who believe that misinformation prevails are more likely to select fact-checks that help them to navigate their information environment.

Against this backdrop, we hypothesize:

H2a: Individuals are more likely to select fact-checks if they believe that there is more false information in the information ecology.

Next to general beliefs related to false information about the Russian war in Ukraine, we map the perceived causes of false information. These perceived causes have previously been summarized under misinformation versus disinformation beliefs, which can be understood as the perception that information is either unintentionally false (misinformation beliefs) or deliberately dishonest and deceptive (disinformation beliefs; for a conceptualization, see Hameleers, Brosius, Marquart, et al. 2021). Previous research suggests that individuals distinguish between these two causes of false information in the context of the Russian war in Ukraine and that they believe that false information in this context is more likely to be caused by manipulative intent (i.e., disinformation) than by a lack of access to conflict areas or experts (Hameleers et al. 2023). This aligns with the persuasion knowledge model, which states that source motives, such as the motive to deceive, are critical for the perceived accuracy of (false) claims, especially in a misinformation context where it is unclear who the source is (Amazeen and Krishna 2022; Friestad and Wright 1994). If the perceived motive of the source is to try and deceive, then individuals may be more likely to judge the claims put forward as false.

When causes of false information are seen as intentional, the crisis of untruthfulness is more severe, and a stronger need to restore certainty may be triggered. We therefore expect that when people are inclined to believe that false information is spread due to intentional deception, they experience a stronger need to restore certainty by selecting fact-checking messages. Hence, we hypothesize:

H2b: Individuals are more likely to select fact-checks if they believe that false information is spread intentionally.

In addition to beliefs related to the overall information ecology, uncertainty at the statement level may matter for motivations to read fact-checks. Following the Russian invasion of Ukraine, a number of specific claims were circulated which varied in their degree of certainty and truthfulness. Some prominent statements were false (e.g., “The U.S. is funding biological weapons research in Ukraine.”), while others were unverifiable at the time (e.g., “Russia is committing genocide in Ukraine.”). Extrapolating from previous research on uncertainty and fact-checking (Li and Wagner 2020), we expect that participants will be selective with regard to the statements they verify depending on how certain they are about the truthfulness of the respective statement. Being uncertain about the veracity of claims may be a more constructive state than being certain, especially when one is certain that a false claim is true. In line with previous literature problematizing the state of being actively misinformed (Hochschild and Einstein 2015), we view uncertainty as potentially positive because individuals who feel uncertain about claims may be more open to corrective information (Damstra et al. 2023).

Seeking out additional information in the form of a fact-check is one strategy to resolve the discomfort of not being sure about the veracity of information (Walter et al. 2021). If accuracy motivations are present, then we expect that individuals are more likely to follow this strategy in the face of uncertainty about the veracity of specific statements.

H3: Individuals are more likely to select fact-checks if they are more uncertain about a statement’s veracity.

Dispositional Uncertainty

People vary in the degree to which they can tolerate uncertainty and whether they want to understand issues deeply. One individual difference that is key in this regard is the need for cognition (NfC). Individuals with a higher NfC enjoy solving puzzles, thinking deeply, deliberating long, and considering issues from different angles (Cacioppo and Petty 1982). NfC has been argued to capture accuracy goals (Nir 2011) because people with a high NfC seek out both confirming and disconfirming information and process information more deeply even if it challenges their existing views. In support of this, research on news consumption found that individuals with a higher NfC are more likely to voluntarily expose themselves to news they might disagree with (Tsfati and Cappella 2005). These findings suggest that individuals with a higher NfC may be more inclined to read fact-checks because they want to learn more about different angles to a problem and they are less afraid that fact-checks might challenge their beliefs. We therefore propose:

H4: Individuals with a higher NfC are more likely to select fact-checks.

Another condition for accuracy motivations related to individual-level uncertainty is personal relevance (Matthes 2005; Weaver 1980). Uncertainty is more likely to trigger accuracy motivations when individuals feel like the issue at hand matters to them personally. Seminal literature shows that when people are highly involved in an issue, they are more likely to process information in a systematic way instead of relying on heuristics (Chaiken 1980). Crises highlight the urgency and relevance of political issues and this in turn affects people’s information-seeking behaviors. In the case of the 9/11 terrorist attacks, individuals who perceived terrorist threats to be more severe relied more heavily on news media for information about the issue (Lowrey 2004). During the Covid-19 pandemic, individuals who were more concerned about the pandemic were more likely to seek out additional information from the news (Van Aelst et al. 2021). Based on these findings, we hypothesize:

H5: Individuals are more likely to select fact-checks if the issue is more relevant to them.

Cross-Country Differences

The extent to which accuracy goals and directional goals motivate citizens to read fact-checks in the context of the war might differ across countries. The impact of false information on citizens is contingent upon the media systems and political systems of their countries, which determine how widely and effectively false information is able to spread. Cross-country research shows that low levels of polarization and populist communication, as well as high levels of media trust and strong public service broadcasting, are conditions that protect countries against misinformation (Humprecht et al. 2020). Western European democracies, like Austria, Belgium, Denmark and the Netherlands, display these characteristics. In a country where false information does not easily reach the public, citizens might not need the same level of accuracy motivations in order to be accurately informed as in countries that offer less structural protection from false information. Countries described as being more vulnerable to disinformation are those that display higher levels of polarization, populist communication, and social media news use, as well as low levels of trust. This pattern is found in polarized pluralist countries, such as Greece, Italy, Portugal and Spain. In such countries, misinformation is expected to be relatively more prevalent, which might affect the degree to which citizens seek out verifying information.

Several resilience indicators discussed by Humprecht et al. (2020), namely ideological polarization and press freedom, also seem relevant to the case of the Russian war in Ukraine. In addition, countries differ in their degree of pro-Russian stances on this topic (Hameleers et al. 2023), which might create conditions that make it more or less likely for directionally-motivated information seeking to occur among citizens. Countries also differ in many structural aspects, such as the information ecology, that might make individual-level accuracy goals more likely to occur. We therefore study three country-level factors that we expect to be most closely related to citizens’ inclination to read fact-checks in the context of the war, namely, country-level polarization, press freedom, and geographical proximity to the war areas:

RQ: What is the relationship between country-level resilience to misinformation (i.e., polarization, press freedom, and geographical proximity) and citizens’ choice to read fact-checks?

Methods

Data

Data for this study come from a sample of 19,037 citizens from nineteen countries, namely Austria, Belgium, Brazil, Czech Republic, Denmark, France, Germany, Greece, Hungary, Italy, Netherlands, Poland, Romania, Serbia, Spain, Sweden, Switzerland, UK, and USA. The country selection was based on available resources and expertise in the respective countries at the onset of the war. That is, we selected countries where an infrastructure was in place that would facilitate a quick survey launch in order to capture citizen perceptions amidst an unfolding crisis.

Data collection was conducted from late April to early May 2022 by the international research company Kantar, which also translated the original English-language survey into the respective country languages. Median completion time was thirteen minutes and twenty-four seconds, and participants received a small compensation in the form of voucher points in line with the survey company’s policies. The total count of valid survey completions amounted to 19,037, with 53 percent of the participants self-identifying as female. The average age of survey participants was 48.96 years (SD = 16.31). The average completion rate across all countries was 65.9 percent. Completion rates per country can be found in Supplemental Table A1.

The sampling strategy was as follows: The survey company invited its panel members through its own digital channels. Incentives were provided to participants, which could be accumulated and exchanged for gifts, with a value of approximately one euro per completion. The panel operates on a voluntary opt-in basis. Participation statistics are carefully monitored to ensure that panelists are not overwhelmed or engaged in multiple surveys simultaneously.

For each country sample, the survey company implemented soft quotas on gender, education, and age to ensure that the sample compositions approximated the population composition in the respective country. The quotas were derived from the latest national census of the respective countries. Toward the conclusion of the study, these quotas were slightly relaxed to meet the desired total number of participants. The target and achieved quotas per country can be found in Supplemental Table A2.

The project received ethics approval from the review board of the University of Amsterdam. The questionnaire (anonymized link: https://osf.io/pruda/?view_only=188fca5107ca40639936bfa810bbe5d5) as well as research questions and variables (anonymized link: https://osf.io/vkx7m?view_only=af62c3921e904e9c9f20e04bc624628f) were pre-registered on the platform of the Open Science Framework. 1

Procedure

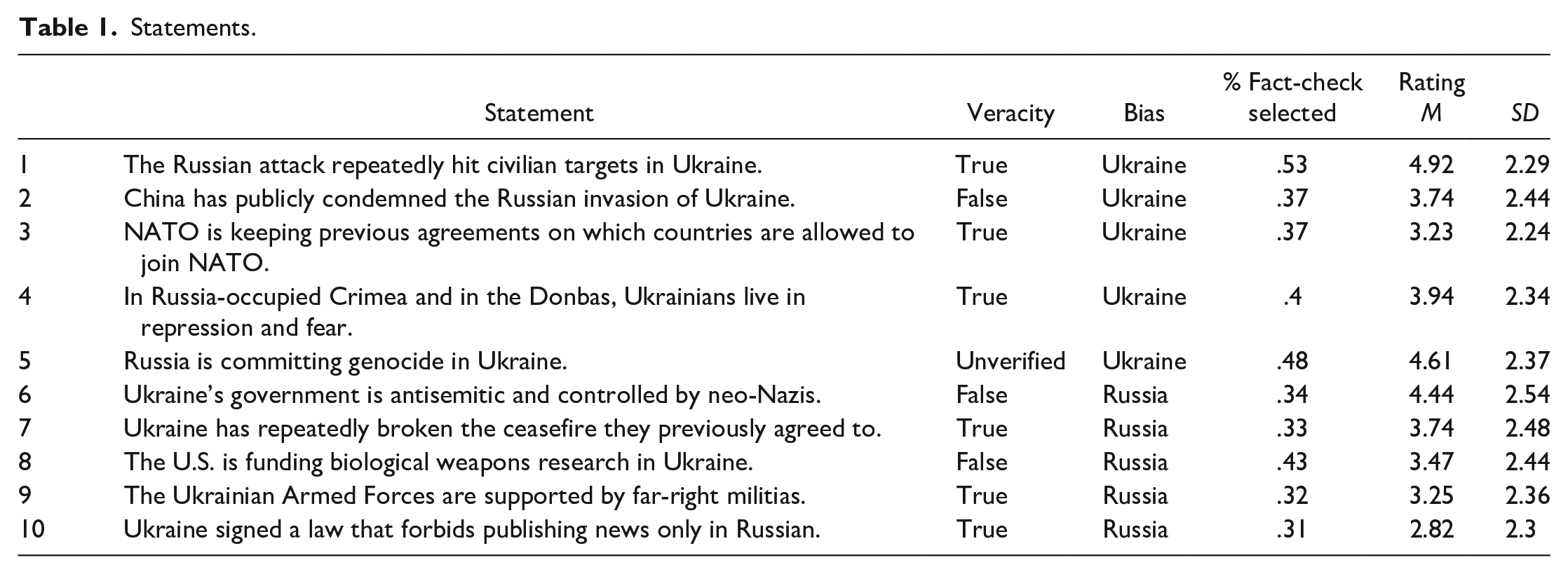

Participants were presented with ten statements about the Russian war in Ukraine (see Table 1), which varied in terms of whether they were true, false, or unverifiable, and whether they favored Ukraine or Russia. Participants rated each statement in terms of how certain they were that it is true or false. 2 They then answered a number of other questions about their attitudes toward the Russian war in Ukraine, and measures of NfC. At the very end of the survey, participants were informed that the ten statements had been fact-checked and they could opt to see fact-checks for any of these statements. Participants were free to select as many fact-checks as they wanted, and they received the fact-checks they selected on subsequent screens.

Table 1. Statements.

Measures

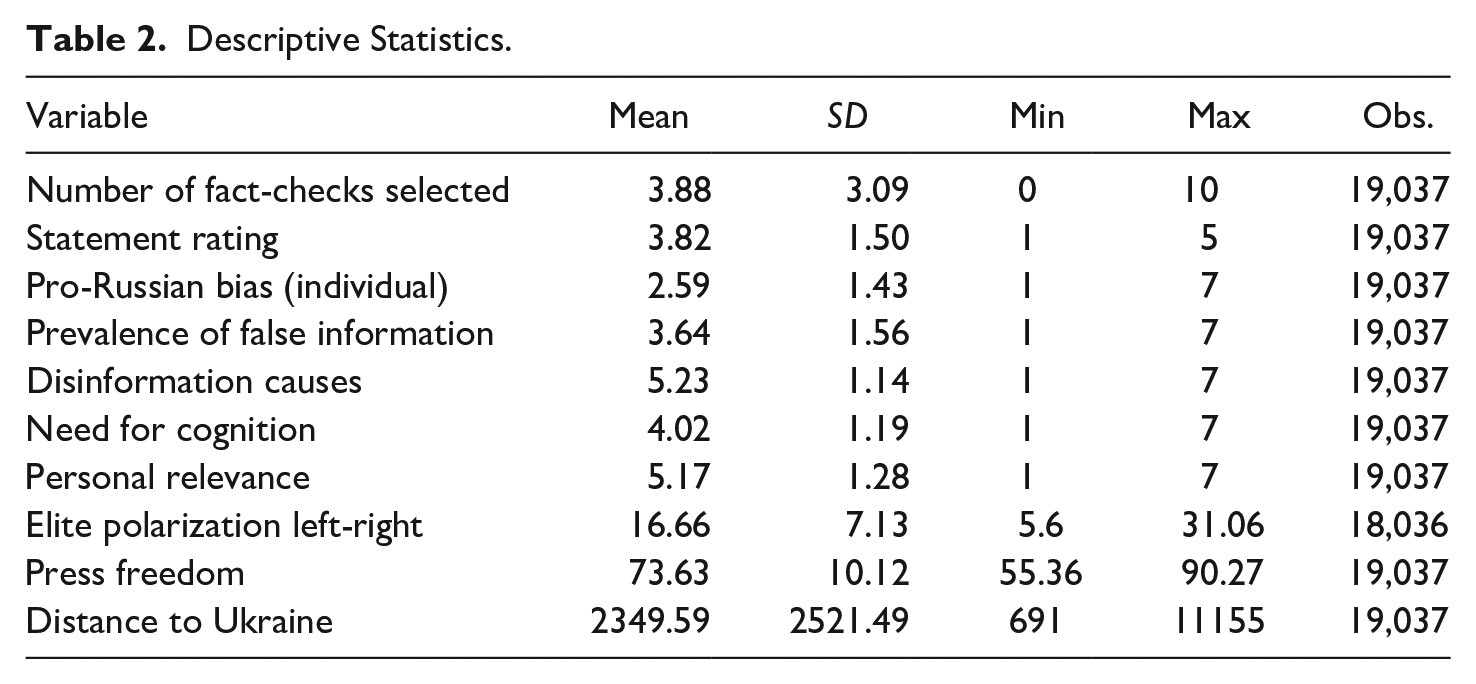

Table 2 displays descriptive statistics for predictive measures included in the main analyses. Unless stated otherwise, all variables at the individual level were measured on a seven-point scale (1 = completely disagree, 7 = completely agree).

Table 2. Descriptive Statistics.

Outcome Variable

The key outcome variable is a binary measure at the statement-level. It captures whether or not a fact-check was selected for a respective statement. On average, participants opted to see four out of ten fact-checks (see Table 1).
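The nesting behind this outcome can be made concrete with a small reshaping sketch. It assumes a hypothetical wide-format export with one row per respondent and ten 0/1 columns named `fc_1` to `fc_10`; these column names, the `respondent_id` and `country` fields, and the toy values are illustrative assumptions and not the authors' data format.

```python
from typing import Dict, List

N_STATEMENTS = 10  # ten statements per respondent, as described in the Procedure


def to_long(wide_rows: List[Dict]) -> List[Dict]:
    """Turn one row per respondent into one row per respondent-statement pair."""
    long_rows = []
    for row in wide_rows:
        for s in range(1, N_STATEMENTS + 1):
            long_rows.append({
                "country": row["country"],              # level 3
                "respondent_id": row["respondent_id"],  # level 2
                "statement": s,                         # level 1
                "selected": int(row[f"fc_{s}"]),        # binary outcome
            })
    return long_rows


# Toy respondent (hypothetical values): chose fact-checks for statements 1, 4, 7, 9
toy = {"country": "NL", "respondent_id": 1}
toy.update({f"fc_{s}": int(s in {1, 4, 7, 9}) for s in range(1, N_STATEMENTS + 1)})

long_rows = to_long([toy])
n_selected = sum(r["selected"] for r in long_rows)
```

In this layout each respondent contributes ten rows, so the statement-level outcome sits at level 1, with respondents at level 2 and countries at level 3.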

Statement Rating

Per statement, respondents indicated how certain they were that the statement is true or false on a five-point scale (1 = very certain it’s false; 5 = very certain it’s true).

Pro-Russian Bias (Statement)

Each statement either favored the Russian or Ukrainian perspective. The direction of the bias for each statement is presented in Table 1, column “Bias.” We coded this into a variable capturing pro-Russian bias (0 = pro-Ukrainian bias, 1 = pro-Russian bias).

Prevalence of False Information

To measure perceptions related to the prevalence of false information, we relied on the conceptualization of Hameleers, Brosius, Marquart, et al. (2021), adjusted to the context of the Russian war. 3 Respondents indicated their agreement with a total of six statements. 4 We obtained our measure by averaging across the six responses (M = 3.64, SD = 1.56, Cronbach’s alpha = .94).
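The composite measures in this section are item averages accompanied by Cronbach's alpha. A minimal sketch of both computations is given below, using the standard alpha formula with sample variances; the function names and the example responses are hypothetical, and this is not the authors' analysis code.

```python
from statistics import mean, variance
from typing import List, Sequence


def scale_score(responses: Sequence[float]) -> float:
    """Composite score for one respondent: the mean across items."""
    return mean(responses)


def cronbach_alpha(data: List[Sequence[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items matrix.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score))
    """
    k = len(data[0])
    item_variances = [variance([row[j] for row in data]) for j in range(k)]
    total_variance = variance([sum(row) for row in data])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical responses of four respondents to three seven-point items.
responses = [[2, 3, 2], [5, 5, 6], [4, 4, 3], [7, 6, 7]]
scores = [scale_score(row) for row in responses]
alpha = cronbach_alpha(responses)
```

Alpha approaches 1 when the items move together across respondents, which is why it is reported alongside each averaged scale.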

Causes of False Information

To capture causes of false information, we followed the conceptual distinction between misinformation as false information driven by a lack of expert knowledge and/or empirical evidence (Vraga and Bode 2020) and disinformation as being motivated by goal-directed deception or manipulation (e.g., Freelon and Wells 2020).

To construct perceived disinformation causes, we averaged across responses on the following four items: “False information is spread (c) due to strategic aims of political actors, (d) to disrupt the societal order, (e) to make financial gains, and (f) to hide reality from the people” (M = 5.23, SD = 1.14, Cronbach’s alpha = .79).

Pro-Russian Bias (Individual)

We measured individual-level pro-Russian bias by asking to what extent respondents agreed with four statements, two of which were supporting Russia, while the other two were supporting Ukraine. The mean pro-Russian bias was 2.59, SD = 1.43, Cronbach’s alpha = .76.

Need for Cognition

Participants indicated their agreement with five statements taken from Tsfati and Cappella (2005). 5 We obtained our measure by averaging across all items (M = 4.02, SD = 1.19, Cronbach’s alpha = .79). Just like Tsfati and Cappella (2005), we used a drastically shortened battery of items to tap NfC. We specifically used statements that are related to the cognitive effort invested in deliberating about the quality and value of information.

Personal Relevance

To obtain our measure of personal relevance, we averaged across respondents’ agreement with the following three items inspired by Hameleers, Brosius, Marquart, et al. (2021): (a) The war in Ukraine is an important global issue, (b) The war in Ukraine is an important issue for my country, and (c) The war in Ukraine is an important issue to me personally (M = 5.28, SD = 1.32, Cronbach’s alpha = .79).

Country-Level Variables

Polarization was proxied as elite polarization as proposed by Gidron et al. (2020). This metric assesses the divergence in left-right ideology scores among political parties within each country. A higher value on this measure signifies increased ideological polarization within that country. The ideology scores themselves are derived from data provided by the Comparative Manifesto Project (Lehmann et al. 2022). 6

Press freedom

To account for geographical proximity, the distance in kilometers between each country and Ukraine was determined using data from the CEPII Gravity database (Conte et al. 2022).

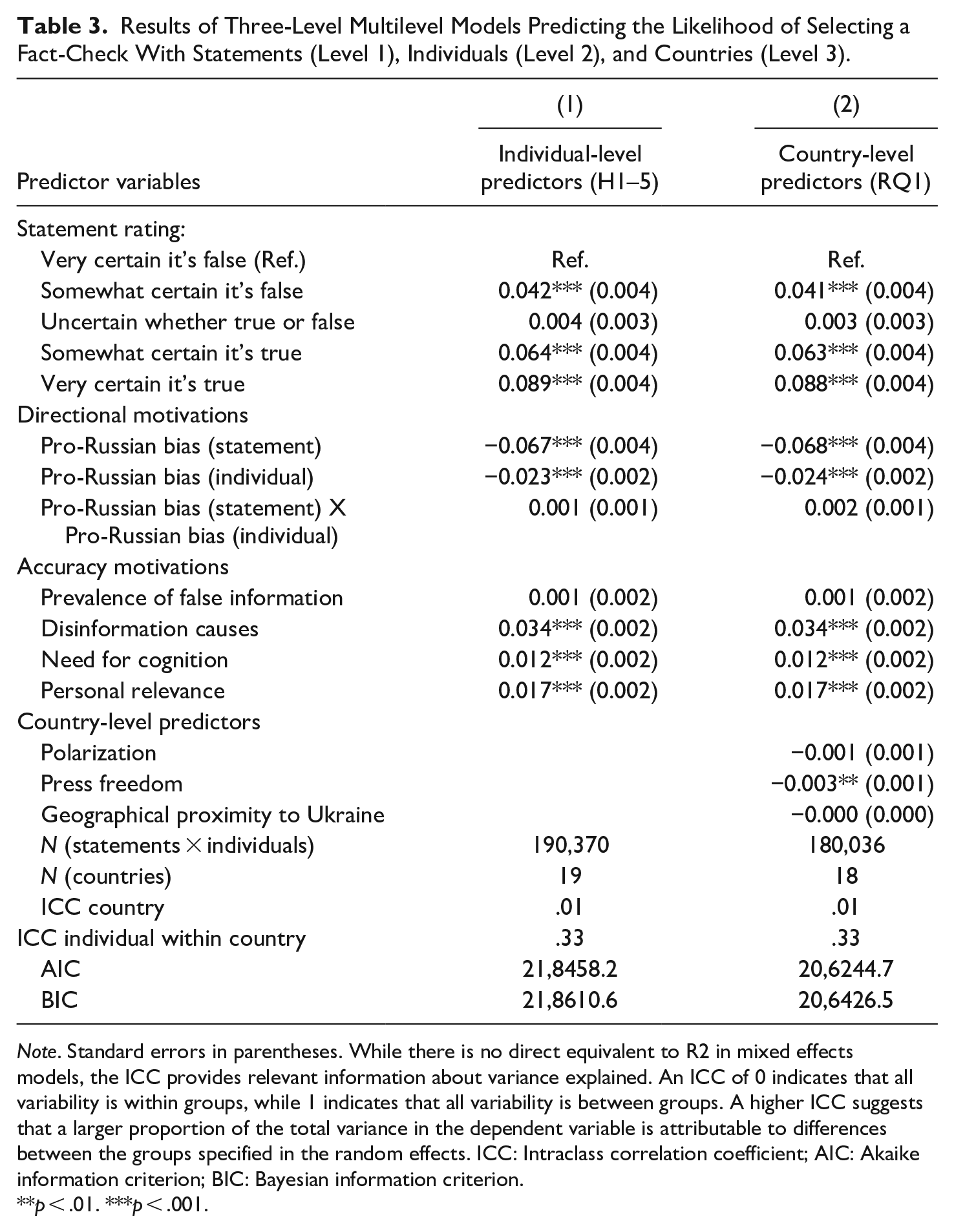

Analytical Strategy

The data are structured such that fact-checks are nested within individuals (i.e., each individual saw ten statements for which they could select fact-checks) and individuals are nested within countries. The outcome of interest (i.e., fact-check selection) was measured at the lowest level, namely the statement level, while the predictors were measured at various levels. We therefore estimated mixed models for three-level hierarchical data with statements at level 1, individuals at level 2, and countries at level 3. All models are linear probability models with random intercepts and fixed slopes, fitted using maximum likelihood estimation (see Table 3). Regression coefficients can thus be interpreted as changes in the probability of selecting a fact-check per one-unit increase in the predictor. In the Supplemental Information file we report post-hoc power analyses and robustness checks.
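The specification described above can be sketched in standard multilevel notation. The symbols below are our own shorthand and do not appear in the original text: $s$ indexes statements, $i$ individuals, and $j$ countries, and $\mathbf{x}_{sij}$ collects the predictors from all three levels.

$$
y_{sij} = \beta_0 + \boldsymbol{\beta}^{\top}\mathbf{x}_{sij} + v_j + u_{ij} + \varepsilon_{sij},
\qquad v_j \sim \mathcal{N}(0, \sigma_v^2),\quad u_{ij} \sim \mathcal{N}(0, \sigma_u^2),\quad \varepsilon_{sij} \sim \mathcal{N}(0, \sigma_\varepsilon^2)
$$

Here $y_{sij}$ equals 1 if a fact-check was selected and 0 otherwise, $v_j$ is the country-level random intercept, and $u_{ij}$ is the individual-level random intercept. Because the outcome is binary and the model is linear, the expected value of $y_{sij}$ is the probability of selection, so each element of $\boldsymbol{\beta}$ is the change in that probability associated with a one-unit change in the corresponding predictor.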

Table 3. Results of Three-Level Multilevel Models Predicting the Likelihood of Selecting a Fact-Check With Statements (Level 1), Individuals (Level 2), and Countries (Level 3).

Note. Standard errors in parentheses. While there is no direct equivalent to R2 in mixed effects models, the ICC provides relevant information about variance explained. An ICC of 0 indicates that all variability is within groups, while 1 indicates that all variability is between groups. A higher ICC suggests that a larger proportion of the total variance in the dependent variable is attributable to differences between the groups specified in the random effects. ICC: Intraclass correlation coefficient; AIC: Akaike information criterion; BIC: Bayesian information criterion.

**p < .01. ***p < .001.
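For a three-level random-intercept model, the ICC mentioned in the table note is commonly defined from the variance components. The following is a sketch under that assumption, with $\sigma_v^2$, $\sigma_u^2$, and $\sigma_\varepsilon^2$ denoting the country-level, individual-level, and residual (statement-level) variances; this notation is ours, and the text does not state which of the two variants is reported.

$$
\mathrm{ICC}_{\text{country}} = \frac{\sigma_v^2}{\sigma_v^2 + \sigma_u^2 + \sigma_\varepsilon^2},
\qquad
\mathrm{ICC}_{\text{individual}} = \frac{\sigma_v^2 + \sigma_u^2}{\sigma_v^2 + \sigma_u^2 + \sigma_\varepsilon^2}
$$

The first expression is the share of total variance that lies between countries; the second is the share shared by statements rated by the same individual within the same country.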

Results

Table 3 displays results of our regression analyses. Model 1 tests H1–5 and Model 2 tests RQ1.

Directional Motivations (H1a & H1b)

We did not find support for H1a that people are more likely to select a fact-check if they believed that the statement was false. Instead, individuals who thought that the statement was true were significantly more likely to choose to see a fact-check (B = 0.09, SE = 0.004, p < .001). We also did not find that reading fact-checks was promoted by an alignment between ideological beliefs and the ideological stance of the statement (H1b). While pro-Russian attitudes and pro-Russian statement bias were negatively associated with the likelihood of selecting a fact-check, the interaction effect was not significant. This means that we did not find evidence for directional motivations in fact-checking behavior. 7

Accuracy Motivations

Uncertainty About the Information Ecology (H2a & H2b)

We did not find that individuals were more likely to select fact-checks if they believed that there was more false information in the information ecology (H2a). When asked about the specific causes for false information, however, we found that individuals were more likely to select fact-checks if they thought that false information was spread deliberately (i.e., disinformation causes, B = 0.034, SE = 0.002, p < .001). With every one-step increase in perceived disinformation causes, the probability of selecting a fact-check increased by 3.4 percentage points. Because perceived disinformation causes is measured on a seven-point scale, someone with the highest score on perceived disinformation causes has a 24 percentage point higher probability of selecting a fact-check than someone with the lowest score. This finding lends support to H2b.

Uncertainty About Statements (H3)

We found mixed results for the hypothesis that individuals select fact-checks if they are more uncertain about the statements’ veracity (H3). Individuals who were uncertain about the veracity of a statement were not more likely to select a fact-check than those who were very certain that it was false. However, those who were somewhat certain that a statement was true (B = 0.064, SE = 0.004, p < .001) were 6.4 percentage points more likely to select a fact-check than those who were very certain that it was false, and those who were somewhat certain that a statement was false (B = 0.042, SE = 0.003, p < .001) were 4.2 percentage points more likely to do so. Those who were very certain that a statement was true were 8.9 percentage points more likely to select a fact-check than those who were very certain that it was false (B = 0.089, SE = 0.004, p < .001). While we did not expect this, the result does suggest that people are willing to challenge their existing beliefs, especially if they are very certain that they got it right.
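The comparisons above treat the five-point statement rating as a categorical predictor with "very certain it's false" as the reference category. A minimal sketch of such dummy coding follows; the function and column names are hypothetical, and the labels for the three middle scale points are inferred from the wording of the results rather than quoted from the questionnaire.

```python
# Scale endpoints are from the Measures section; labels 2-4 are assumptions.
RATING_LABELS = {
    1: "very certain it's false",  # reference category
    2: "somewhat certain it's false",
    3: "uncertain",
    4: "somewhat certain it's true",
    5: "very certain it's true",
}


def dummy_code(rating: int, reference: int = 1) -> dict:
    """Return one 0/1 indicator per non-reference rating category."""
    if rating not in RATING_LABELS:
        raise ValueError(f"rating must be one of {sorted(RATING_LABELS)}")
    return {
        f"rating_{level}": int(rating == level)
        for level in RATING_LABELS
        if level != reference
    }


# A respondent who is very certain the statement is true:
indicators = dummy_code(5)
```

With this coding, each reported coefficient is the difference in selection probability between one rating category and respondents who were very certain the statement was false.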

Need for Cognition (H4) and Personal Relevance (H5)

In line with H4, we found that each one-step increase in NfC was associated with a 1.2 percentage point higher probability of selecting fact-checks (B = 0.012, SE = 0.002, p < .001), meaning that those with the highest NfC were 8 percentage points more likely to select a fact-check than those with the lowest NfC. In line with H5, we found that people were 1.7 percentage points more likely to select a fact-check with each one-step increase in the importance of the issue to them (B = 0.017, SE = 0.002, p < .001). Those who experienced the problem as most important were 12 percentage points more likely to select a fact-check than those who thought it was least important.
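The endpoint comparisons reported for perceived disinformation causes, NfC, and personal relevance scale the per-unit coefficient by the width of the seven-point scale. The sketch below reproduces that arithmetic; the helper name is hypothetical. The reported figures of 24, 8, and 12 correspond to multiplying by seven. If the contrast is instead taken between scale points 1 and 7, which lie six intervals apart, the implied differences are somewhat smaller.

```python
def endpoint_difference(coefficient: float, n_units: int) -> float:
    """Change in predicted probability implied by moving n_units on a predictor
    in a linear probability model."""
    return coefficient * n_units


# Coefficients as reported in the Results section.
coefficients = {
    "perceived disinformation causes": 0.034,
    "need for cognition": 0.012,
    "personal relevance": 0.017,
}

for name, b in coefficients.items():
    by_seven = endpoint_difference(b, 7)  # seven scale points
    by_six = endpoint_difference(b, 6)    # six intervals between 1 and 7
    print(f"{name}: {by_seven:.3f} (x7) vs {by_six:.3f} (x6)")
```

Either way, the ordering of the three predictors by effect size is unchanged.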

Country-Level Predictors (RQ1)

Finally, we tested to what extent country-level variables were associated with citizens’ choice to read fact-checks (Model 2). While polarization and geographical proximity did not emerge as significant, we do observe a very small, negative, and significant association between press freedom and the decision to choose a fact-check (B = −0.003, SE = 0.001, p = .008). In our data, press freedom ranges from fifty-five in Greece to ninety in Denmark, which means that citizens living in Greece are about 11 percentage points more likely to read fact-checks than citizens living in Denmark, because of their country-level differences in press freedom.
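The Greece-Denmark comparison follows from multiplying the press freedom coefficient by the difference between the two countries' scores. As a worked sketch, where $\Delta\hat{p}$ is our own notation for the implied difference in selection probability:

$$
\Delta\hat{p} = B \times (x_{\text{Greece}} - x_{\text{Denmark}}) = -0.003 \times (55 - 90) = 0.105
$$

This is about 10.5 percentage points, consistent with the figure of roughly 11 reported above given that the coefficient is rounded to three decimals.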

Discussion

This study set out to investigate directional motivations and accuracy motivations in the selection of fact-checks about the Russian war in Ukraine. Our cross-country results show a clear pattern where accuracy motivations trump directional motivations. Specifically, we do not find evidence for directional motivations seeing that individuals are not more likely to read fact-checks when they think that the claim they check is false (affirming fact-checking). Alignment between their own ideological stance and the ideological bias of the statement also did not affect inclinations to read fact-checks in the direction that would point to directional goals. Instead, accuracy motivations seem to play a relatively larger role seeing that individuals are more likely to read fact-checks when they experience more uncertainty about information related to the war, when they have a higher need to understand issues deeply and when the issue at hand is more relevant to them personally.

These findings are in line with seminal research on the need for orientation, which highlights the importance of uncertainty and issue relevance for drawing individuals toward reliable information (Matthes 2005; Weaver 1980). When individuals experience unfamiliar and uncertain circumstances that are also highly relevant to their lives, they show a stronger tendency to turn to the news media for clarity and guidance. Similar to previous crises, like the Covid-19 pandemic (Van Aelst et al. 2021), the Russian war in Ukraine has likely triggered uncertainty among many citizens at a time when being accurately informed about the crisis situation was paramount. Especially in the early weeks after the Russian invasion of Ukraine, which is the timeframe of this study, events in the crisis area were quickly unfolding, threatening to further escalate, or spread across country borders. These circumstances have likely triggered various emotions among citizens, which may impact information processing. While reliance on emotions in information processing has been linked to misperceptions in the context of the Covid-19 pandemic and the 2020 U.S. presidential elections (Young et al. 2022), our findings show a different picture. In the context of the Russian war in Ukraine, high levels of uncertainty appear to have triggered an increased need for orientation, which individuals were able to satisfy (at least partly) by reading fact-checks about the war. In line with our expectation that fact-checks can reduce uncertainty, we found that individuals in our study were more drawn to fact-checks if they thought that false information about the war was due to deliberate deception. They also read fact-checks if they displayed a higher NfC and if the issue was more relevant to them personally. All of these findings are indicative of accuracy goals because individuals seem to read fact-checks in an attempt to reduce uncertainty, rather than for the purpose of confirming existing beliefs.
Citizens seem to reach for fact-checks in order to learn more about the truth (informing fact-checking), even when the fact-checks might cast doubt on what they currently believe to be true. Fact-check articles tend to focus on false statements rather than true statements (Lee et al. 2023). Politifact, for example, concludes in 89 percent of its fact-check articles that the claim contained at least some degree of falsehood. We expect that citizens know this intuitively. If they choose to read a fact-check, then there is a high chance that the fact-check will conclude that the statement is false. If citizens think a statement is true, and they choose to read a fact-check that they expect to conclude that the statement is false, then they are not affirming knowledge, but they are willing to challenge their existing beliefs for the sake of greater accuracy. We find that this is the case in the context of the Russian invasion of Ukraine.

Hence, although extant literature has assumed that people may be more vulnerable to false information when their prior beliefs align with deceptive information, our findings indicate that in an unfolding global crisis, a lack of accuracy motivations may make people more vulnerable to false information. In line with this, an important implication of our study is the need to enhance critical thinking and media literacy skills that can trigger more certainty about verification tasks and instill a stronger motivation to verify.

Our study extends existing research in a number of ways. First, very few studies have compared the different motivations—accuracy versus directional motivations—in the selection of fact-checking information. A notable exception is a two-study paper by Walter et al. (2021) which focused on motivations to verify partisan statements in the context of the U.S. While they did not find strong support for the influence of accuracy motivations on intentions to fact-check, they showed that directional motivations were indirectly related to fact-checking intentions. The findings of our study diverge from this pattern and highlight the importance of cross-country and cross-topic research. Our study provided a cross-country comparison on a topic that was less clearly divided along partisan lines, but of high and immediate relevance to many citizens. Under these conditions it seems that accuracy motivations are more likely to drive people toward reading fact-checks, and this is a pattern we observe across countries.

A relevant country-level difference we found is the relationship between press freedom and motivations to read fact-checks: These motivations were most pronounced under conditions of lower press freedom (i.e., in Greece). We interpret this as a corrective response from individuals to the higher uncertainty they encounter in the trustworthiness of information. Countries with lower levels of press freedom are more vulnerable to misinformation (Humprecht et al. 2020), which should correspond to a stronger incentive for citizens to read fact-checks about the information they receive. This also corresponds with Amazeen’s (2020) conceptualization of fact-checking as a democracy-building tool that especially emerges in the face of threats to democratic institutions—of which low press freedom is a salient threat.

It is worth noting that our country selection is bound to have implications for the generalizability of our findings. In terms of strengths, the country contexts included in this study vary in key macro-level characteristics and allow us to study how country-level differences, such as press freedom, relate to the individual-level behavior of reading fact-checks. Overall, we find that country-level differences are less predictive of motivations to read fact-checks than individual-level predictors. That said, the included countries are all democratic and industrialized, and mostly European (with the exception of Brazil and the United States). Only one of the countries, namely Serbia, is clearly pro-Russian. It is therefore not entirely clear to what extent our results would generalize to countries beyond the West and in particular to those that are allied with Russia. In line with our focus on conditions and contexts in this study, a future direction for similar research is to focus not on if, but when accuracy motivations or directional motivations are relatively more important, depending on differences between countries of a wider global selection.

Like all studies, this study is not free of limitations. The data were collected as part of a survey, which raises questions about ecological validity. In the real world, when people scroll through their news feeds, they come across an abundance of information from different sources. In our study we mimicked a news feed such that we presented all ten statements on one screen and allowed the individuals to choose which information they wanted to engage with further. However, the amount of information they had to process was limited and stripped of any other cues, such as information on sources, which has been shown to be an important factor in judging credibility (Amazeen and Krishna 2022), or markers of engagement (e.g., number of likes or shares). While it is conceivable that a more realistic study design or a field study would attenuate the effect magnitudes, it is not immediately clear that it would also change the pattern of results.

A second limitation may be related to social desirability. The choice to select fact-checks may have been affected by respondents’ desire to present themselves as knowledgeable and interested citizens, and they might have censored their attitudes toward the war. Anticipating this, we minimized social desirability in a number of ways: We carefully phrased the survey questions such that they reflected both pro-Russian and pro-Ukrainian perspectives (see Methods section). We also highlighted at several points in the survey that people may have different opinions on the issue, and that “there are not right or wrong answers” in the statement ratings and that a “best guess is as good as the ‘right’ answer.” In line with this, we see in our measure of attitudes toward the war that respondents have made use of the full range of answer options, and that the dispersion of this measure is comparable to that of other, non-sensitive measures in the survey.

Third, recent studies suggest that priming people to think about the accuracy of statements reduces their likelihood to share false information (Pennycook and Rand 2022). In our study, we asked respondents to rate the veracity of statements, which may have primed accuracy goals, which in turn encouraged them to choose to read fact-checks. Because we use observational data we are unable to account for this possibility. Future research could test this in an experimental design which is able to counterbalance this.

Another limitation is that we rely on self-reported and indirect measures of motivated reasoning processes. Accuracy motivations in particular are hard to observe, and we therefore relied on a number of proxies. Although our measures are theoretically grounded and we believe we have tapped the difference between accuracy and directional motivations, it may be impossible to measure (partially subconscious) processing mechanisms in a survey. Future research may draw on more observational or unobtrusive measures to map motivations beyond direct awareness.

Despite the above limitations, our study makes important contributions to the literature. First, our study is based on a unique cross-country dataset that was collected at a critical time, namely a few weeks after the Russian invasion of Ukraine. These data allow us to understand how citizens across countries were dealing with the uncertainty of an unfolding crisis situation. We find that fact-checking information is one strategy that citizens use to reduce uncertainty in times of increased disinformation. Most importantly, with respect to societal relevance, we find that fact-checks can serve to challenge existing beliefs, give citizens an opportunity to distinguish between what is true or false information, and ultimately help them come closer to the factual truth.

Supplemental Material

Supplemental material, sj-docx-1-hij-10.1177_19401612241233533 for Why do Citizens Choose to Read Fact-Checks in the Context of the Russian War in Ukraine? The Role of Directional and Accuracy Motivations in Nineteen Democracies by Marina Tulin, Michael Hameleers, Claes de Vreese, Toril Aalberg, Nicoleta Corbu, Patrick Van Erkel, Frank Esser, Luisa Gehle, Denis Halagiera, David Nicolas Hopmann, Karolina Koc-Michalska, Jörg Matthes, Sabina Mihelj, Christian Schemer, Vaclav Stetka, Jesper Strömbäck, Ludovic Terren and Yannis Theocharis in The International Journal of Press/Politics

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The collection of data in Romania was supported by the Interdisciplinary PhD school of SNSPA. The research presented in this paper is a part of the project “THREATPIE: The Threats and Potentials of a Changing Political Information Environment” which is financially supported by the NORFACE Joint Research Programme on Democratic Governance in a Turbulent Age and co-funded by FWO, DFF, ANR, DFG, NCN Poland, NWO, AEI, ESRC and the European Commission through Horizon 2020 under grant agreement No. 822166. The collection of data in the Czech Republic, Hungary and Serbia was supported by the Economic and Social Research Council [ES/S01019X/1]. The collection of data in Italy was supported by the Italian Ministry of Research and University under the PRIN research program (“National Projects of Relevant Interest”, 2017) (grant number: 20175HFEB3).

Supplemental Material

Supplemental material for this article is available online.
