Abstract

In algorithmically mediated societies, fact-checking has transitioned from a journalistic corrective to a foundational infrastructure shaping how public knowledge and civic trust are produced. This study explores how verification practices are increasingly embedded in sociotechnical systems that blur the boundaries between human discretion and algorithmic decision-making. Situating fact-checking at the intersection of media theory and political communication, the analysis examines the epistemic role of human fact-checkers, the rise of hybrid human-AI verification models, and the global institutionalization of fact-checking networks. Special attention is given to the ethical, cultural, and procedural tensions that arise as verification systems are reconfigured through platform governance, algorithmic opacity, and shifting audience expectations. This study conceptualizes fact-checking as a proactive, pluralistic form of civic epistemology, one that must reconcile speed with transparency, automation with human judgment, and global standards with local knowledge cultures. Methodologically, the study adopts a theory-building approach grounded in conceptual synthesis and multi-level analysis across micro, meso, and macro domains.

Keywords

Introduction

In an era marked by contested truths and algorithmically amplified misinformation, fact-checking has gained renewed urgency (Gondwe, 2025). Digital media ecosystems now circulate information through fragmented and opaque channels, blurring the lines between fact and falsehood and undermining the authority once held by institutional gatekeepers (Shibuya et al., 2025; Shin, 2023). While the internet was once viewed as a democratizing force, it has paradoxically become a catalyst for epistemic instability (Schellmann, 2023). Social media platforms and algorithmic curation systems frequently prioritize engagement over accuracy, accelerating the spread of falsehoods (Choi & Ferrara, 2024). The production and validation of knowledge have moved beyond traditional institutions of expertise and are now shaped by algorithmic infrastructures, platform policies, and attention economies (Marres, 2018; Mihailidis & Viotty, 2017). Within this landscape, fact-checking has evolved into a form of epistemic intervention—one that not only addresses specific inaccuracies but seeks to restore the procedures and norms through which truth claims are constructed, contested, and validated (Seyfert, 2023; Westlund et al., 2024).

Two guiding questions inform this discussion: What lessons can be learned from conventional fact-checking methods? How are human fact-checkers adapting to the AI age's challenges? These questions raise deeper theoretical issues about epistemic authority, media legitimacy, and the organization of truth in digitally mediated societies. They invite reflection on how verification practices are shaped by broader social, technological, and political forces. Fact-checking is not simply a set of procedural tasks—it is embedded within systems of knowledge production that determine who has the power to define what counts as credible information. The study engages with these questions to examine how fact-checking is evolving under the pressures of platform governance, algorithmic media systems, and global information flows. It foregrounds the dynamic tensions between established verification paradigms (Dierickx et al., 2024)—anchored in journalistic commitments to objectivity, transparency, and epistemic rigor—and the emergent hybrid modalities shaped by algorithmic infrastructures, platform governance, and shifting institutional configurations. Fact-checking today represents an active and contested space of intervention, where foundational questions about truth, authority, and credibility are negotiated through shifting combinations of human judgment, institutional norms, and technological mediation. In this context, we conceptualize epistemic infrastructure as the underlying sociotechnical systems, institutional arrangements, and procedural norms that enable—or constrain—how knowledge is constructed, evaluated, and circulated. Much like a physical infrastructure that supports the flow of water or electricity, epistemic infrastructures shape the flow of information and the legitimacy of knowledge claims.

The algorithmic curation tools used by platforms like Google News influence what is surfaced as credible content, just as editorial policies in traditional newsrooms once did. Unlike traditional editorial filters, however, these new infrastructures operate through opaque algorithms, crowd-sourced flagging systems, and third-party fact-checking partnerships—often with little transparency or accountability. Understanding fact-checking as an epistemic infrastructure highlights its role not merely as a reactive tool to counter falsehoods but as a foundational component of how truth is institutionally constructed and publicly maintained in digital ecosystems. This conceptualization also aligns with the foundational principles of traditional fact-checking (accuracy, accountability, and transparency), which operated under established editorial norms and performance standards. By linking these journalistic principles to the evolving notion of epistemic infrastructure, the study underscores their continuity and transformation in algorithmically mediated verification systems.

This shift from editorial gatekeeping to infrastructural governance represents more than a change in tools; it marks a deeper epistemological transformation in how truth is organized and sustained. Epistemic infrastructures—composed of algorithms, protocols, and platform logics—do not simply support verification but actively shape the conditions under which it occurs. In the AI era, fact-checking no longer operates merely at the level of content correction; it functions within complex systems that classify knowledge, encode legitimacy, and automate evidentiary processes. By foregrounding this infrastructural turn, we can better understand how verification itself is being redefined—not only in terms of who verifies, but how credibility is computed, communicated, and contested across digital environments.

Building on this infrastructural lens, the present study contributes to emerging work in algorithmic epistemology by conceptualizing fact-checking as a form of epistemic infrastructure in its own right. We examine how verification practices are shaped by hybrid arrangements of human and machine labor, highlighting the interpretive and ethical dimensions of fact-checking in algorithmically mediated environments. Our analysis proceeds across three levels: micro (the epistemic labor of individual fact-checkers), meso (the institutional norms of fact-checking organizations), and macro (the broader architectures of platform governance and global information flows). Together, these perspectives offer a more comprehensive understanding of how truth is negotiated, institutionalized, and contested in contemporary digital contexts. To pursue this inquiry, the study engages in a theory-building effort that synthesizes insights from infrastructure studies, science and technology studies, and communication scholarship. Through conceptual analysis and illustrative examples, it develops an integrative model of fact-checking as epistemic infrastructure. This theoretical scaffolding enables the articulation of key mechanisms—such as human-AI hybridity, algorithmic mediation, and institutional practices—that govern the evolving construction of truth.

Traditional fact-checking practice

The practice of fact-checking originated as a journalistic safeguard, grounded in normative commitments to accuracy, evidential rigor, and public accountability (Reiter & Vertolli, 2023). Prior to the digital age, fact-checking was a largely institutionalized process carried out within editorial departments of newspapers and magazines (Amazeen, 2020). It operated under clearly defined professional routines, relying on internal protocols, specialized verification staff, and hierarchies of journalistic oversight. These traditional methods were developed to uphold standards of truthfulness and mitigate the risk of publishing erroneous or misleading information. As such, fact-checking was not only a corrective mechanism but also a preventive layer embedded within journalistic production cycles. At its core, traditional fact-checking involves a series of procedural tasks: identifying factual claims within a piece of content, verifying the accuracy of those claims against authoritative sources, and ensuring consistency with documented evidence.

These tasks require careful attention to sourcing, context, and linguistic precision. The process typically includes cross-referencing with government records, scholarly publications, archival materials, and expert testimony. The aim is not merely to detect errors or fake news but to protect the integrity of the published work by ensuring that each claim can be substantiated by credible evidence. This model of verification is often referred to as procedural or source-based fact-checking, distinguished from more interpretive or rhetorical analyses of content. Source-based verification rests on the assumption that facts can be objectively retrieved and authenticated through access to authoritative repositories of information. In this framework, the credibility of the source often determines the credibility of the claim. As such, traditional fact-checking relies heavily on institutional trust—in governments, scientific bodies, and mainstream media organizations—to delimit the boundaries between reliable and unreliable information (Carlson, 2017).

Historically, fact-checking has also functioned as a gatekeeping practice, reinforcing journalistic authority and legitimizing editorial judgment. In legacy newsrooms, dedicated fact-checkers were part of editorial hierarchies, often working behind the scenes to uphold standards of professionalism. While they rarely received public recognition, their role was considered essential to the production of trustworthy journalism. Their work was predicated on institutional values such as impartiality, transparency, and accountability—values that, while aspirational, provided a normative foundation for verification practices (Zelizer et al., 2021). The institutionalization of fact-checking in this period was shaped by a number of social and technological conditions. First, the relatively slow pace of information dissemination allowed for more thorough verification before publication. News cycles were measured in hours or days, not seconds, providing fact-checkers with adequate time to investigate claims. Second, journalistic organizations operated within relatively stable frameworks of audience trust, where the authority of professional media was not yet undermined by competing digital platforms. Third, editorial departments were hierarchically organized, enabling the implementation of standardized verification protocols across newsrooms (Craft et al., 2017).

Traditional fact-checking also involved a body of tacit knowledge and interpretive heuristics acquired through professional training and newsroom experience. Fact-checkers developed intuitive skills for assessing the reliability of sources, detecting inconsistencies in narrative structure, and identifying potentially problematic claims (Shibuya et al., 2025). This knowledge, though not always formally codified, was integral to the process of verification. The emphasis was not only on factual correctness but also on contextual accuracy, that is, on ensuring that claims were not misleading by omission or rhetorical framing (Graves, 2018).

While traditional methods provided a robust model for verifying claims in print media, they were largely constrained by institutional structures and limited in scope. Their effectiveness was tied to the editorial ecosystem in which they were embedded. As the information environment began to shift with the advent of digital technologies, these methods faced increasing limitations (Kavtaradze, 2024). The rise of user-generated content, social media platforms, and real-time news cycles introduced epistemic conditions that challenged the applicability of conventional verification frameworks. The traditional model, oriented toward pre-publication verification within professional media organizations, struggled to adapt to a decentralized and temporally compressed media environment.

Scholars have noted that one of the core challenges facing traditional fact-checking is its inability to scale in proportion to the volume and velocity of misinformation circulating online (e.g., Graves, 2018; Plantin et al., 2018). In the legacy media environment, verification was a deliberative and time-intensive process; in contrast, the current information ecosystem demands rapid response and constant monitoring. The procedural rigor of traditional methods often becomes impractical in this context, especially when misinformation spreads within minutes and reaches global audiences before fact-checking institutions can intervene.

A further limitation of conventional fact-checking practices stems from their underlying epistemological assumptions (Kavtaradze, 2024). The model presumes that factual claims can be easily separated from interpretive or normative statements and that verification can proceed on the basis of clear empirical evidence. Yet, many contemporary forms of misinformation are embedded in value-laden narratives, symbolic politics, or emotionally charged discourse that elude simple factual correction. As such, traditional fact-checking often finds itself operating within a narrowed epistemic frame, ill-equipped to address the rhetorical strategies and affective dynamics of digital misinformation (Molina et al., 2021).

Traditional fact-checking has also come under scrutiny for its reliance on elite sources and institutional knowledge systems. Critics have argued that this reliance may unintentionally reproduce existing power hierarchies and marginalize alternative epistemologies (Sahebi & Formosa, 2025). When fact-checking organizations defer primarily to governmental or scientific authorities, they risk reinforcing dominant narratives at the expense of pluralistic knowledge perspectives (Shin, 2022). In polarized media environments, such reliance can fuel public skepticism, particularly among groups that perceive these institutions as ideologically biased or untrustworthy.

Despite these limitations, traditional fact-checking retains methodological and normative relevance. Its emphasis on rigorous sourcing, methodological transparency, and editorial accountability offers important principles for designing effective verification systems in the digital age. Recent studies have suggested that blending conventional practices with digital innovations—such as open-source verification tools, collaborative platforms, and real-time monitoring systems—can enhance the credibility and responsiveness of fact-checking efforts (Brandtzaeg & Følstad, 2017; Shin, 2025). Traditional methods serve as a foundational framework upon which new verification strategies can be built. One illustrative example of this synthesis is the adaptation of traditional verification principles within collaborative digital journalism initiatives.

Projects like First Draft and Bellingcat have leveraged crowdsourced intelligence and open-source methods to conduct forensic investigations while maintaining the epistemic rigor associated with conventional journalism. These models demonstrate that traditional fact-checking can be recontextualized within new media environments without losing its core ethical commitments. In addition, the growth of structured fact-checking databases and transparency reporting mechanisms reflects an effort to institutionalize some of the foundational values of traditional methods within digital infrastructures. Organizations such as PolitiFact and FactCheck.org have formalized rating systems, claim databases, and source transparency protocols that echo earlier newsroom verification standards. These innovations not only preserve journalistic accountability but also provide new affordances for public engagement and information literacy (Amazeen, 2020).

While digital transformation has reconfigured the material and institutional conditions under which verification occurs, the foundational logics of traditional fact-checking—careful sourcing, contextual precision, procedural transparency—remain vital to navigating the epistemic challenges of the current media environment (Shibuya et al., 2025). They remind us that verification is not merely a technical task but a socially situated process that involves ethical judgment, interpretive skill, and public accountability. Understanding the evolution and limitations of traditional fact-checking is, therefore, crucial for assessing both the potential and the pitfalls of emerging AI-assisted verification systems, which often promise efficiency but risk losing the deliberative and normative dimensions of human-centered verification.

The transition toward hybrid and automated models must be informed by a critical engagement with the legacy practices they seek to supplement or replace. Traditional fact-checking offers not just a set of techniques but a repository of normative commitments and professional values that remain relevant, even as the modalities of information production continue to evolve. As subsequent sections examine, integrating these legacy frameworks with digital innovation is essential for building verification systems that are not only scalable but also epistemically and ethically sound.

Traditional fact-checking methods, grounded in editorial norms and performance criteria, exemplify a commitment to accuracy, transparency, and professional accountability. These journalistic values connect directly to the evolving concept of epistemic infrastructure. While traditional verification relied on established institutional practices, epistemic infrastructure captures how such values are now embedded, and often contested, within algorithmically mediated systems. This transition underscores the shift from human-centered gatekeeping to platform-driven governance, revealing both continuity and transformation in the principles that underpin verification. Read together, the two frameworks clarify how legacy journalistic practices continue to inform, and in some ways constrain, the design of contemporary verification systems.

Epistemic infrastructure and the foundations of truth verification

The concept of epistemic infrastructure in AI fact-checking can be productively understood through an extension of the broader literature on information infrastructure. While the concepts of epistemic infrastructure and information infrastructure are closely related, they operate at distinct levels. Information infrastructure refers to the material and technical systems (servers, protocols, databases, and digital platforms) that enable the storage, distribution, and retrieval of data. In contrast, epistemic infrastructure refers to the normative, procedural, and institutional arrangements that govern how knowledge is classified, validated, and made credible within those systems. For example, while a content management system or a platform's search algorithm would be part of an information infrastructure, the underlying rules and value systems that determine what content is deemed “truthful” or “authoritative” (such as fact-checking partnerships, credibility scoring systems, or AI model training datasets) form part of the epistemic infrastructure. Clarifying this distinction is essential to understanding how AI technologies are not just conduits of information but active participants in the construction of public knowledge.

Scholars such as Star and Ruhleder (1996), Bowker and Star (1999), Hanseth and Lyytinen (2008), and Edwards et al. (2009) have shown that information infrastructures are not merely technical systems, but layered, evolving, and deeply embedded socio-material assemblages that support the circulation, coordination, and organization of information across institutional domains. In this view, infrastructures are not passive or neutral backdrops but active mediators of knowledge, practice, and power. Similarly, epistemic infrastructure refers to the sociotechnical systems that make knowledge claims visible, credible, and actionable—shaping not only how information flows but also what constitutes legitimate knowledge and how truth is operationalized (Shin, 2025). Epistemic infrastructure emphasizes the conditions under which knowledge is constituted, validated, and legitimized within those systems. In the context of AI fact-checking, this includes not only the computational architecture—databases, algorithms, APIs—but also semantic schemas, ground truth corpora, ontologies, interpretive frameworks, institutional procedures, and user interfaces that collectively scaffold the processes of verification. These components work in concert to structure how claims are evaluated, which sources are prioritized, and what forms of evidence are deemed credible within algorithmic systems.

Drawing from Star and Ruhleder's (1996) criteria—such as embeddedness, transparency, reach, learned as part of membership, and becoming visible upon breakdown—AI fact-checking systems constitute infrastructures precisely because they are routinized, taken for granted, and broadly distributed across multiple contexts, yet reveal their epistemic scaffolding only when they malfunction. System errors, such as misclassification of claims, reinforcement of bias, or failure to offer explanations, expose the normative logics embedded in these infrastructures and prompt critical reflection on their underlying assumptions.

A central element of epistemic infrastructure in AI fact-checking is the materialization of truth claims into computable formats. These systems do not assess truth in abstract or deliberative terms; rather, they operationalize verification through structured knowledge representations such as knowledge graphs and annotated ground-truth corpora.

For example, in Google's Fact Check Tools, a knowledge graph might represent a network of relationships connecting political figures, events, and legislative actions. This allows the system to verify claims like “Senator X voted against Climate Bill Y” by tracing connections between entities (Senator X → vote → Bill Y) and aligning them with authoritative sources such as congressional records or verified news outlets. Similarly, an annotated ground-truth corpus might consist of a dataset of prior fact-checked claims, labeled as “true,” “false,” or “misleading,” often accompanied by source evidence and contextual notes. Datasets such as FEVER (Fact Extraction and VERification) provide such corpora, enabling systems to learn patterns of claim-evidence relationships and perform textual entailment. A claim such as “The Eiffel Tower is taller than the Empire State Building” could be refuted by comparing it with evidence-labeled sentences from a reference corpus such as Wikipedia or encyclopedic archives. These components function as epistemic anchors that allow AI systems to conduct entailment reasoning (e.g., does the retrieved evidence logically support or refute a claim?), consistency checks (e.g., has the speaker made contradictory claims in the past?), and source triangulation (e.g., are multiple reliable sources converging on the same fact?).
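The triple-tracing and source-triangulation logic described above can be illustrated with a minimal sketch. It is not the implementation of any existing tool: the `Triple` type, the toy `KNOWLEDGE_GRAPH`, the `OPPOSITE_RELATION` table, the source names, and the two-source threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triple:
    """A claim reduced to a (subject, relation, object) edge."""
    subject: str
    relation: str
    obj: str


# Hypothetical toy graph: each edge maps to the sources that attest it.
KNOWLEDGE_GRAPH = {
    Triple("Senator X", "voted_against", "Climate Bill Y"): frozenset(
        {"congressional_record", "news_outlet_a"}
    ),
    Triple("Senator X", "voted_for", "Budget Bill Z"): frozenset(
        {"congressional_record"}
    ),
}

# Hypothetical table of mutually exclusive relations.
OPPOSITE_RELATION = {"voted_against": "voted_for", "voted_for": "voted_against"}


def verify(claim: Triple, min_sources: int = 2) -> str:
    """Label a claim by counting independent sources for and against it."""
    supporting = KNOWLEDGE_GRAPH.get(claim, frozenset())
    refuting = frozenset()
    opposite = OPPOSITE_RELATION.get(claim.relation)
    if opposite is not None:
        contradiction = Triple(claim.subject, opposite, claim.obj)
        refuting = KNOWLEDGE_GRAPH.get(contradiction, frozenset())
    # Source triangulation: require several sources and no conflicting edge.
    if len(supporting) >= min_sources and not refuting:
        return "supported"
    if len(refuting) >= min_sources and not supporting:
        return "refuted"
    return "not enough evidence"


if __name__ == "__main__":
    print(verify(Triple("Senator X", "voted_against", "Climate Bill Y")))
    print(verify(Triple("Senator X", "voted_for", "Climate Bill Y")))
    print(verify(Triple("Senator X", "voted_for", "Budget Bill Z")))
```

Even in this toy form, the verdict depends on choices made before any claim arrives: which relations exist in the schema, which sources are admitted to the graph, and how many of them count as enough.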

Yet, the ability to encode knowledge in such representations depends on prior assumptions about what kinds of information are structured, which relationships matter, and which forms of evidence are countable or computable. As Shin (2021) has argued, classification systems and databases are not merely tools of organization; they are also moral and ethical projects. They inscribe values into infrastructure—privileging certain modes of knowledge, institutional sources, and verification logics, while rendering others invisible or unintelligible. In the context of AI fact-checking, this may manifest in the prioritization of institutional authority (e.g., established media organizations or government agencies), scientific rationalism (e.g., deductive forms of inference), or algorithmic efficiency (e.g., simplified classification schemas).

Just as information infrastructures shape the accessibility and flow of data, epistemic infrastructures shape which forms of knowledge are recognized, how they are interpreted, and how they circulate through algorithmic systems. In AI fact-checking, this occurs through algorithmic mediation, where machine learning models assess claims according to pre-defined heuristics and probabilistic logics. These logics often remain opaque, raising concerns about epistemic opacity, automation bias, and the erosion of interpretive agency. Increasingly, these systems are embedded in broader content moderation infrastructures, recommendation algorithms, and policy enforcement tools (Gillespie, 2022). As a result, epistemic infrastructures are not merely technical scaffolds for knowledge verification—they become mechanisms of governance, influencing the visibility of information, the credibility of sources, and the normative boundaries of truth itself (Bartsch et al., 2025).

This evolving role of AI systems in shaping knowledge raises critical questions about the politics of verification. As media scholars have noted (e.g., Amazeen, 2020; Graves, 2018), algorithmic systems do not simply reflect reality—they participate in constructing it. The deployment of AI verification tools in platform governance, for instance, contributes to what has been termed infrastructural epistemology: the formation of truth regimes not through deliberation but through technical infrastructure. Looking ahead, one of the central challenges in the development of epistemic infrastructures is how to design systems that are reflexive, pluralistic, and accountable. These infrastructures should avoid reductive truth models and instead accommodate ambiguity, context, and diverse epistemologies. This includes participatory approaches to knowledge modeling, in which diverse stakeholders co-create ontologies and verification criteria; explainable AI systems that render reasoning processes transparent and subject to critique; layered models of truth assessment that allow for degrees of evidentiary support; and open, federated infrastructures that resist centralization and promote democratic oversight.

Epistemic infrastructure should thus be conceived not as a fixed system but as a dynamic sociotechnical ecology—one that is constantly shaped by interactions between computational logics, human judgment, institutional norms, and cultural values (Shin, 2025). As AI fact-checking systems continue to evolve, attention to the design and governance of their epistemic infrastructures will be essential to ensuring that such systems promote not only efficiency and scale but also epistemic justice, transparency, and public trust.

Algorithmic epistemic infrastructure

The transition from human-centered gatekeeping to AI-driven governance demands a robust conceptual framework for understanding how truth is now infrastructurally produced. The theory of algorithmic epistemic infrastructure is introduced as a foundational lens through which to analyze the evolving systems of fact-checking and verification in the digital age. The aim is to shift the analytic focus from tools and outputs to the underlying systems that mediate the production of truth in contemporary digital environments (Ananny & Crawford, 2018; Plantin et al., 2018).

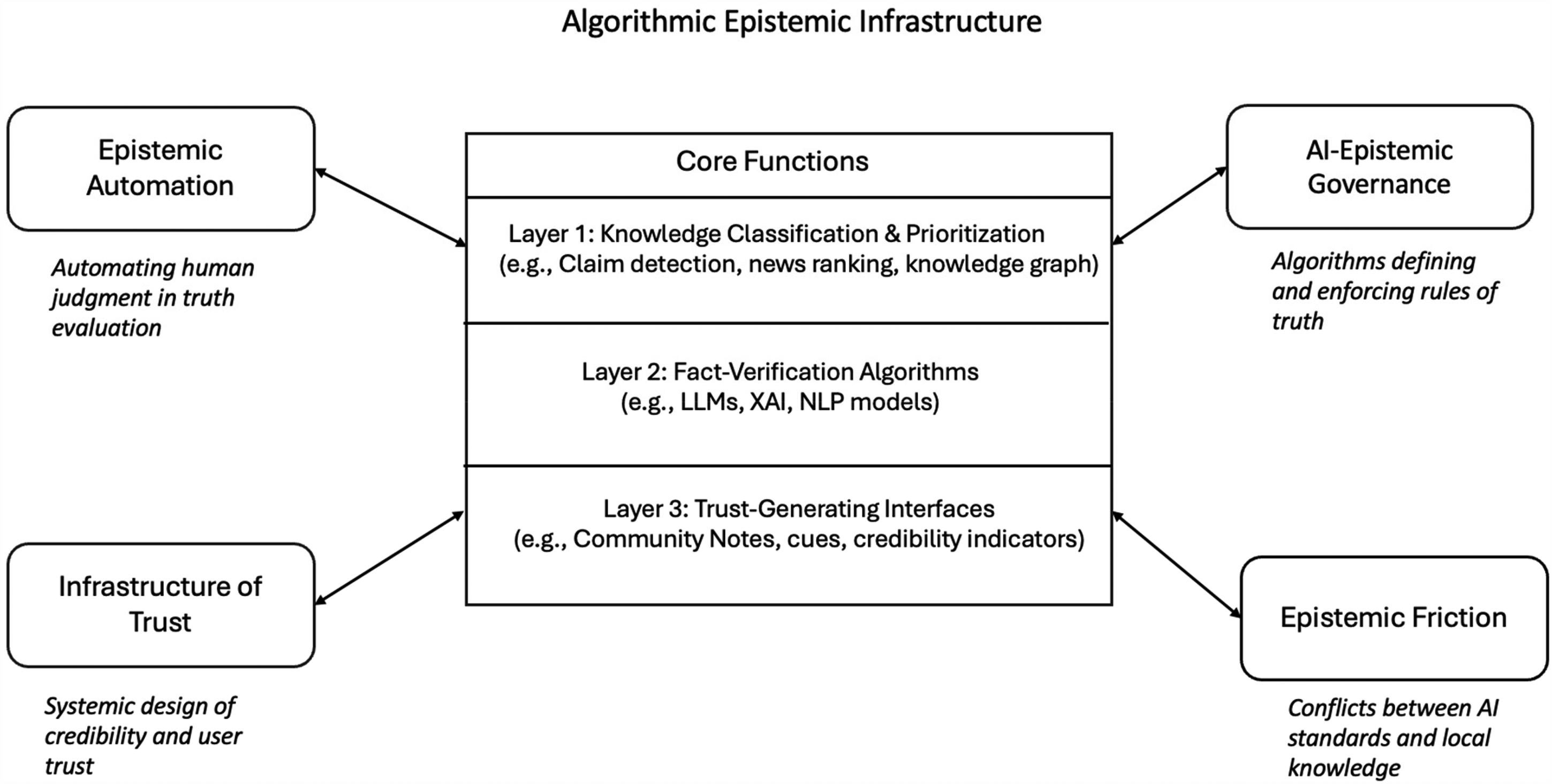

Figure 1 illustrates how various layers of verification—ranging from human judgment and institutional norms to algorithmic tools and platform governance—interact to shape the construction of truth in digitally mediated environments. The framework is structured across three interrelated domains: (1) epistemic labor (micro level), where individual fact-checkers exercise discretion and interpretive agency; (2) institutional practices (meso level), including organizational routines and professional standards; and (3) infrastructural systems (macro level), which include AI systems, content moderation mechanisms, and platform architectures. Arrows indicate the recursive influence between these layers, showing how infrastructures not only support but also shape verification practices over time. Importantly, transparency is an implicit but central thread across all three levels—particularly within infrastructural systems—where it represents both a normative requirement and a design challenge for building accountable verification ecosystems.

Figure 1. Epistemic infrastructure of AI fact-checking.

At the center of this theory is the concept of algorithmic epistemic infrastructure, a layered and dynamic system composed of interdependent components that collectively produce, regulate, and legitimize factual knowledge:

Knowledge Classification and Prioritization

Automated Verification Technologies: This includes large language models (LLMs), natural language processing (NLP) systems, and claim-matching pipelines that classify and score information. These technologies rely on probabilistic inference rather than interpretive reasoning and are shaped by optimization metrics that often prioritize scale, speed, and engagement over epistemic accuracy (Zerilli et al., 2019).

Trust-Building Interfaces

This infrastructure does not operate in isolation. It is dynamically shaped and constrained by four surrounding epistemic forces. These forces not only structure the operational logics of the infrastructure but also influence how truth is conceptualized, contested, and institutionalized:

Epistemic Automation

AI-Epistemic Governance

Infrastructures of Trust: Emphasizes the design and institutional mechanisms through which systems cultivate perceived legitimacy and credibility. Trust is not simply a user disposition but a condition engineered through systemic affordances, interface design, and procedural transparency. The reliability of fact-checking outputs depends as much on the infrastructure of trust as on the accuracy of verification technologies themselves (Ehsan et al., 2021).

Epistemic Friction: Refers to the resistance and dissonance that arise when universal algorithmic standards collide with culturally and linguistically specific ways of knowing. Friction is a critical diagnostic of the limits of algorithmic generalization and a reminder of the situated nature of epistemic practices. It exposes the risks of epistemic homogenization and highlights the importance of maintaining pluralism and contextual sensitivity within AI-mediated verification systems (Shin, 2025).

These four elements converge to shape and co-constitute the algorithmic infrastructure at the core of the model. Rather than functioning solely as a neutral conduit for information verification, this infrastructure is inherently recursive—it actively produces and configures the epistemic conditions under which truth is not only mediated but also recognized, legitimized, and institutionalized. In this sense, truth emerges not merely as an output of computational operations but as an outcome of infrastructural configurations, governance protocols, and embedded sociotechnical imaginaries. This conceptualization of algorithmic epistemic infrastructure yields three significant implications for understanding the formation of epistemic authority and legitimacy in the context of AI-driven systems:

From Judgment to Procedure

From Institutional to Infrastructural Power: Epistemic authority now resides as much in the design of interfaces, training data, and classification models as it does in traditional institutions of knowledge. Power is diffused across technical architectures, automated systems, and proprietary standards, complicating the landscape of accountability and redress (Seaver, 2021).

From Legitimacy to Contestability

Understanding fact-checking as a form of algorithmic epistemic infrastructure enables a shift in perspective from evaluating discrete verification outcomes to interrogating the systems that produce them. It foregrounds the embedded logics, institutional commitments, and normative stakes that underlie AI-mediated verification processes. This theoretical framework provides a critical foundation for analyzing the epistemological transformations underway in contemporary digital ecosystems. Algorithmic epistemic infrastructure is not simply a tool of automation—it is an emerging site of epistemic governance that reshapes the relationships between truth, power, and legitimacy in the digital age. Recognizing and critically engaging with this infrastructure is essential for ensuring that the future of verification remains accountable, inclusive, and democratically legitimate.

In this evolving hybrid landscape, ethical and practical tensions arise around issues such as algorithmic opacity, accountability, and bias. When large language models flag content as misinformation based on probabilistic heuristics without human review, they risk misclassifying content and reinforcing systemic biases. These challenges are amplified by the interpretive gap between computational logics and human expectations of transparency and explanation. Addressing these tensions requires more than technical refinement: it demands institutional accountability mechanisms, robust human oversight, and design choices that prioritize interpretability. Hybrid verification models must therefore grapple not only with questions of efficiency but also with the deeper epistemological and ethical stakes of automation in the public sphere.

While hybrid systems hold the promise of augmenting human judgment with computational efficiency, they also risk obscuring the role of human discretion. It remains essential to interrogate which elements of human oversight—interpretation, ethical evaluation, and contextual reasoning—are most vital to preserve. When AI systems surface claims for verification or generate explanatory annotations, what safeguards ensure that human reviewers retain meaningful control over the outcome? Clarifying the interplay between algorithmic decision-making and editorial authority can strengthen transparency and accountability. Rather than displacing human judgment, hybrid models must be designed to complement it, preserving the interpretive capacity and normative grounding that are central to democratic truth-making practices.

Empirical illustrations: AI fact-checking in global contexts

Recent developments in countries with explicit regulatory or platform governance frameworks—such as South Korea, the European Union, and the United States—offer illustrative examples of how AI fact-checking systems operate in real-world contexts. In South Korea, the 2023 Digital Platform Transparency Act introduced reporting obligations for platform companies and encouraged the integration of AI-assisted fact-checking into domestic platforms like Naver and Kakao. During the 2022 presidential election, Naver deployed an automated misinformation detection engine that flagged high-velocity political claims and routed them to partner fact-checking organizations for human review. This hybrid system demonstrated how national platforms can implement real-time verification pipelines that combine machine speed with editorial oversight, aligning with South Korea's broader regulatory emphasis on information integrity and civic responsibility.

In the EU, the Digital Services Act (DSA, 2022) has mandated algorithmic transparency and systemic risk assessments for very large online platforms (VLOPs). Meta, Google, and TikTok have responded by scaling up AI-driven verification pipelines. Meta, for example, has expanded its machine-learning classifiers to prioritize fact-checking efforts across multilingual content in coordination with third-party fact-checking partners such as Correctiv and Pagella Politica. The system flags potentially misleading posts for rapid review, but the uneven application of AI standards across cultural and linguistic boundaries has exposed epistemic frictions—highlighting ongoing challenges of automation bias, transparency, and contextual sensitivity in algorithmic truth-making.

In the United States, where platform self-regulation dominates, major tech companies have implemented internal hybrid fact-checking systems often guided by public pressure and civil society partnerships rather than government mandate. Google's Fact Check Tools utilize structured knowledge graphs to automate verification, while Meta's Third-Party Fact-Checking Program partners with certified organizations like PolitiFact and FactCheck.org to review AI-surfaced claims. During the COVID-19 pandemic and the 2020 presidential election, these systems were stress-tested by waves of health and electoral misinformation. Although they helped stem the viral spread of false claims, criticism emerged over algorithmic opacity, ideological bias, and inconsistent enforcement, particularly from political actors who challenged platform legitimacy. These controversies underscore the tension between automation and accountability in US media environments, where the absence of a unified regulatory framework complicates efforts to harmonize verification standards.

These cases highlight how national and supranational infrastructures shape the design, implementation, and reception of AI fact-checking systems. They illustrate how algorithmic epistemic infrastructures interact with political cultures, platform ecologies, and institutional constraints, substantiating the theoretical argument that verification today is not merely a technical function but an infrastructural and contested civic practice.

Conclusion

This study has situated fact-checking within a broader sociotechnical and epistemological framework, demonstrating that the practice of verification is not merely an ancillary journalistic task but a foundational mechanism for sustaining public knowledge infrastructures. Through a critical analysis of traditional fact-checking methods, the evolving role of human fact-checkers, and the global institutionalization of verification practices, this study has provided a comprehensive account of the enduring significance and ongoing transformation of verification in digitally mediated societies. One of the key theoretical insights advanced herein is that verification must be understood as an epistemic institution—constituted through a confluence of human judgment, methodological standards, and infrastructural mediation (Shin, 2025).

While algorithmic automation is advancing, it does not render conventional fact-checking obsolete. Conventional fact-checking continues to offer indispensable normative and methodological resources, particularly in terms of procedural transparency, deliberative rigor, and reflexive accountability. These epistemic values remain vital in contemporary information ecosystems, where speed-driven circulation, platform logics, and content automation often outpace critical scrutiny (Sahebi & Formosa, 2025). The study has also emphasized the irreplaceable role of human discretion in the verification process. As epistemic agents, human fact-checkers exercise interpretive labor that transcends the capabilities of computational systems. Their engagement with discursive ambiguity, moral reasoning, and sociocultural context positions them not only as correctives to misinformation but as active participants in the construction of public credibility. The hybridization of verification—wherein human discretion is increasingly entangled with AI-assisted tools—raises important theoretical questions regarding epistemic authority, interpretability, and the boundary between human and machine judgment.

The global diffusion of fact-checking practices reveals the situatedness of verification within diverse institutional, linguistic, and political contexts. Moreover, the interaction between platform governance and local political climates adds further complexity to global fact-checking practices. While major platforms often implement universal moderation policies, these policies frequently intersect with distinct national regulations, cultural sensitivities, and political dynamics. In some contexts, fact-checking efforts may be perceived as politically motivated or aligned with dominant regimes, particularly when local governments exert pressure on platform moderation standards. These entanglements challenge the perceived neutrality of fact-checking and highlight the importance of understanding verification as a culturally embedded and politically situated practice.

Rather than a universal technique, verification must be understood as a culturally contingent and infrastructurally embedded practice—one that is shaped by asymmetries in resources, platform dependencies, and socio-political pressures. This perspective challenges reductive or technocratic models of verification and foregrounds the need for context-sensitive, pluralistic, and participatory frameworks. The study's conceptual trajectory also gestures toward the epistemological and political stakes of emerging verification systems. As subsequent sections of this volume will explore, the integration of AI into fact-checking introduces new ontologies of truth production—marked by algorithmic abstraction, predictive classification, and machine-led evidentiary logics. These developments necessitate a critical reassessment of the foundational assumptions underlying verification: What does it mean for an algorithm to “verify” a claim? What kinds of epistemic authority are conferred by automated systems? How do human-AI hybrid systems reconfigure trust, transparency, and accountability in public knowledge environments?

Such questions point to the necessity of approaching verification not as a closed technical solution but as an evolving epistemic practice—one that is deeply entangled with broader transformations in media infrastructure, platform governance, and democratic culture. Future research and institutional development must continue to engage with the tensions between procedural efficiency and interpretive depth, between automation and deliberation, and between global standardization and local specificity. This study argues that fact-checking should no longer be regarded merely as a defensive measure or a reactive tool against misinformation.

To position fact-checking as a proactive tool, academia and industry must reframe it as part of a broader epistemic strategy aimed at cultivating informational resilience. This includes designing preemptive interventions such as algorithmic inoculation, media literacy campaigns, and early-warning systems that anticipate and flag emerging misinformation patterns before they reach virality. Embedding fact-checking frameworks into content recommendation engines, platform design norms, and news production workflows can help reduce the visibility of misleading information at the point of exposure. Such anticipatory approaches emphasize prevention over correction and frame verification as an integral part of healthy information ecosystems, not just a corrective afterthought. This study reconceptualizes fact-checking not merely as a media practice but as a form of epistemic infrastructure—shaping how legitimacy, credibility, and truth claims are algorithmically produced and governed.

Future studies

As AI fact-checking systems become more deeply embedded in epistemic infrastructures, future research must grapple with the ethical, political, and practical tensions that hybrid verification models introduce. Central among these is the challenge of preserving human interpretive judgment within algorithmic workflows, ensuring that human discretion is complemented—not eclipsed—by automated processes. Scholars should investigate how the design of fact-checking platforms either facilitates or inhibits key epistemic values such as transparency, explainability, and normative accountability. These design choices—ranging from interface cues to backend classification schemas—directly influence how truth is rendered legible to both users and institutions.

Equally important is the need to examine how these systems operate across varying political regimes and cultural settings, where the sociotechnical affordances of platforms intersect with local knowledge norms, governance structures, and linguistic ecologies. Participatory and community-based verification models, which foreground pluralism and deliberation, may provide valuable counterweights to centralized algorithmic decision-making. Comparative studies that map these diverse configurations will be critical in understanding how verification systems scale globally while retaining contextual legitimacy.

A critical research agenda should consider how micro-level human discretion, meso-level institutional routines, and macro-level infrastructural governance interact to co-produce the architecture of public knowledge. These layers should not be treated as discrete domains, but rather as interlocking processes that shape the production and circulation of credible information in digital societies. Understanding verification as an evolving epistemic infrastructure requires attention to how these levels converge in specific technological, institutional, and cultural arrangements. Addressing this complexity will require interdisciplinary collaboration across communication theory, science and technology studies, human-computer interaction, and normative ethics. Such cross-cutting inquiry will be essential for designing verification systems that are not only technically robust but also inclusive, reflexive, and democratically legitimate.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.