Abstract

The prevalence of disinformation in media ecosystems has spurred researchers from various disciplines and media professionals to seek effective methods for verifying information at scale. Automated fact-checking has emerged as a promising solution to combat disinformation. However, fully automated tools have not yet materialized. This technographic case study of a start-up company, “X,” investigated the challenges of developing such tools. By conceptualizing automated fact-checking as a technological innovation within journalistic knowledge production, the article uncovered the reasons behind the gap between “X's” initial enthusiasm about AI's capabilities in verifying information and the actual performance of such tools. These reasons span disciplinary boundaries, relating both to the technological aspects of automated fact-checking and to the requirement that such tools be epistemically authoritative. The study revealed significant hurdles faced by the start-up, including issues with the accuracy of its AI editor and with the tool's adoption by the industry. Key obstacles included the elusive nature of truth claims, the rigidity of so-called binary epistemology (ascribing true/false values to information claims), data scarcity, algorithmic deficiencies, the limited transparency of results, and incompatibility between the tool and the industry's needs. While focused on a single company's experience, the study offers valuable insights for researchers and practitioners navigating the evolving landscape of automated fact-checking.

Introduction

Automated fact-checking, an emerging form of media production and technological innovation within journalism, has been discussed in academia, in the industry press, and at journalist conventions for several years (Dickson, 2020; Mantzarlis, 2015; Marr, 2021; Mok, 2020). As one of the pioneering initiatives put it, “our eternal quest, the ‘Holy Grail,’ is a completely automatic fact-checking platform that can detect a claim as it appears in real-time, and instantly provide the voter with a rating about its accuracy” (Hassan et al., 2015, p. 1). The metaphor of the Holy Grail here refers to a technological means of verifying information. Usually, this is done by human fact-checkers who are trained to produce knowledge by identifying checkworthy information claims, verifying their accuracy, and communicating their assessments of the claims' factual value (Carlson, 2022; Singer, 2021). Several organizations have announced that they are developing automated tools for fact-checking (for example, Full Fact in the United Kingdom, Aos Fatos in Brazil, Chequeado in Argentina, and Factinsect in Austria). Almost a decade after it emerged as an idea, automated fact-checking has thus become a collective effort of scientists and practitioners from various disciplines, most notably computer science and journalism, to develop new technologies for information verification using artificial intelligence (AI) (Graves, 2018).

Despite the hype, fully automated solutions for fact-checking do not yet exist (Azevedo et al., 2021; Chu, 2020). There seem to be insurmountable challenges to creating automated tools that can emulate the epistemic authority of fact-checkers, which Gieryn (1999) defines as “the legitimate power to define, describe, and explain bounded domains of reality” (p. 1). The main goal of this technographic case study was to identify such challenges using the example of one of the few start-up companies in this field, here named “X.” The Norwegian start-up tried to create an AI editor that would allow media professionals to find false information in written text and automatically provide evidence for correcting it. However, after several years of work, the company still did not have an automated fact-checking tool ready. Hence, this study asks:

By analyzing a rich set of qualitative data, including stakeholder interviews, documents, promotional materials, and technographic notes, the study found that the epistemic authority of the AI editor was mainly hindered by challenges related to its accuracy. However, technologies do not have to perform flawlessly to be successfully adopted, as humans can tolerate technological malfunctioning (Norman, 2013). For example, despite their tendency to “hallucinate” incorrect results, users have quickly embraced conversational AI tools such as ChatGPT (Wu et al., 2023; Zhang et al., 2023). Moreover, even had the AI editor been perfectly accurate, the company would still have needed to convince fact-checkers that the tool would add value to their work (Nakov et al., 2021). “X” would have needed to prove the relative advantage of the tool, as well as its compatibility with fact-checkers’ norms and needs (Rogers, 2003). Thus, “X” also had to overcome challenges related to the adoption of the AI editor as an innovation.

In this study, “X”’s AI editor is explored as a technological innovation that must hold epistemic authority as a knowledge production tool in order to materialize successfully. This entails looking beyond the tool’s technical characteristics and considering the cross-disciplinary context within which automated fact-checking is emerging. Thus, the study adopted an interdisciplinary approach to identifying the challenges of developing the AI editor, which also made it possible to document the state of the art of automated fact-checking technologies.

Importantly, existing news ecosystems are increasingly damaged by information disorder (Wardle, 2018), and some consider manual fact-checking a resource-intensive and unfeasible counterstrategy (Nakov et al., 2021). The urgency of involving AI in the fight against disinformation is even greater given the concerning practices in which AI is used to produce fake news (Santos, 2023). Documenting an attempt to create an automated fact-checking tool and the corresponding challenges can help scientists and practitioners understand the bottlenecks of automated fact-checking and set realistic agendas for designing information verification technologies.

In terms of structure, this paper first presents the case, describes the emergence of automated fact-checking as journalistic knowledge production, and discusses the need for an interdisciplinary approach to exploring the related challenges. This is followed by methodological clarifications about technography as an advantageous approach for studying emerging technology (Berg, 2022). The article then presents the study findings, reporting the challenges “X” faced while developing an AI editor. Finally, the paper discusses why an interdisciplinary approach is better suited to exploring automated fact-checking technologies and the question of their epistemic authority.

Presenting the case: “Grammarly for fact-checking”

The start-up company “X” was founded in 2019 by a Norwegian computer scientist and a team after they developed an algorithm for finding patterns in false information using machine learning and neural networks. After obtaining patents, “X” decided to create an automated fact-checking tool, aiming to build a “Grammarly for fact-checking,” a reference to the AI-based assistant that reviews English spelling and grammar.

The company website initially stated that “X” was automating fake news detection using AI-based technology. Later descriptions pointed to the automatic identification of checkworthy claims and the extraction of evidence to verify facts. This soon gave way to “the world's best research tool for journalists,” which would help human fact-checkers conduct research faster and better. From 2019 to the early summer of 2022, these descriptions bounced back and forth around the idea of automated fact-checking. Eventually, “X” developed its intelligent text editor, the AI editor, into a prototype. However, at the time of data collection, the AI editor had neither been launched publicly nor adopted by any media organization. Moreover, as the website iterations showed, the company had distanced itself from the ambition of creating an automated fact-checking tool.

Similar developments can be observed among other automated fact-checking initiatives. For example, Squash, an automated fact-checking platform based on the ClaimBuster algorithm, was shut down, with its initiators stating that it made too many mistakes and “isn’t quite ready for prime time” (Adair, 2021). The British fact-checking organization Full Fact has also been developing an automated fact-checking system. However, instead of an autonomous system, the organization created several tools for claim detection and claim-evidence matching, which still required human integration (Abels, 2022). The Argentinian organization Chequeado also created an internal prototype to fact-check political speeches, although it is not yet available for public use.

It is common for innovation-oriented start-ups to modify their products, adjust them to the needs and demands of the market, or even switch target markets (Ries, 2017). However, these dynamics within innovation-driven start-ups also shed light on inherent contradictions in the products they try to develop. In the case of “X,” the company had to put its original plan of creating an automated fact-checking tool on hold and instead focus on developing a research-assisting tool for journalists. This marked a significant shift, as it lowered expectations about the epistemic potential of AI-based technology to automatically determine the veracity value of truth claims. Before elaborating on the epistemic potential or authority of automated fact-checking, the following section describes the context of its emergence as a technological innovation at the intersection of computer science and journalistic knowledge production.

The emergence of automated fact-checking

Since the early 2000s, fact-checking, a core journalistic practice, has evolved into a separate genre of media production and a global professional movement (Juneström, 2020). This evolution is often described through the distinction between ex-ante and ex-post fact-checking (Graves & Amazeen, 2019). The former is practiced in traditional newsrooms before journalists publish their stories. The latter refers to the work of newly established independent organizations, or of units within legacy media organizations, that systematically assess “the validity of claims made by public officials and institutions with an explicit attempt to identify whether a claim is factual” (Walter et al., 2020, p. 351). However, fact-checkers cannot verify everything manually because of the increasing amount and speed of information flow (Saeed et al., 2022). In response, some consider automation the logical next step in journalistic information verification (Hassan et al., 2017; Nakov et al., 2021).

The literature on automated information verification centers on two main topics: fake news detection and automated fact-checking (Santos, 2023; Školkay & Filin, 2019; Wu et al., 2014; Zeng et al., 2021). As both deal with assessing information veracity using AI, they partly overlap. Nevertheless, they also differ. Some consider fake news detection an umbrella term for “deception, hoax, clickbait, and credibility detection” that evaluates and classifies mostly digital media content as either fake or genuine (Özgöbek & Gulla, 2018, p. 1). Automated fact-checking, meanwhile, is a more intricate and meticulous process, as explained below.

There is no agreement on what automated fact-checking entails or should entail, as researchers suggest different ways of automating information verification. According to Graves (2018), automated fact-checking involves automating at least three core elements: claim identification, verification, and correction. These elements can be broken down into smaller tasks, such as monitoring media content, identifying checkable and checkworthy claims, retrieving evidence from an authoritative source, making a judgment about the veracity of the claim, correcting the claim if necessary, and producing an explanation of the decision (Babaker & Moy, 2016; Guo et al., 2022; Hanselowski, 2020; Zeng et al., 2021). Most of the automated fact-checking pipelines found in the literature follow these steps, adding or removing specific stages or renaming them (Abumansour & Zubiaga, 2023; Barrón-Cedeño et al., 2020; Hassan et al., 2017; Nakov et al., 2021; Zeng et al., 2021). Some focus on one or several steps of the verification flow, such as claim detection or evidence retrieval (Allein & Moens, 2020). Others emphasize the ability of automated fact-checking tools to generate explanations (Kotonya & Toni, 2020) or adopt a human-in-the-loop approach in the context of human-assisted automated fact-checking (Nakov et al., 2021).
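To make the staged structure concrete, the following is a minimal, hypothetical Python sketch of a pipeline organized around Graves's (2018) three core elements: claim identification, verification, and correction. The keyword cues standing in for a checkworthiness classifier, the in-memory evidence store, and all function names are illustrative assumptions; the sketch does not represent “X”'s AI editor or any published system, in which each stage would rely on trained models and external sources.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical in-memory evidence store: lowercased claim -> (verdict, source).
# Real systems would retrieve evidence from authoritative sources with trained models.
EVIDENCE = {
    "the border was closed in 2020": ("supported", "official gazette (hypothetical)"),
}

# Hypothetical cue words standing in for a trained checkworthiness classifier.
CHECKWORTHY_CUES = ("percent", "increased", "closed", "million")


@dataclass
class Result:
    claim: str
    verdict: str
    source: Optional[str] = None
    explanation: str = ""


def identify_claims(text: str) -> List[str]:
    """Stage 1: split text into sentences and keep those that look checkworthy."""
    sentences = [s.strip() for s in text.split(".") if s.strip()]
    return [s for s in sentences if any(cue in s.lower() for cue in CHECKWORTHY_CUES)]


def verify(claim: str) -> Result:
    """Stage 2: look up evidence; a missing match is not treated as 'false'."""
    match = EVIDENCE.get(claim.lower())
    if match is None:
        return Result(claim, "no evidence found",
                      explanation="Absence of evidence is not evidence of falsity.")
    verdict, source = match
    return Result(claim, verdict, source, explanation=f"Matched evidence from {source}.")


def correct(result: Result) -> Result:
    """Stage 3: flag disputed claims so that a human editor drafts the correction."""
    if result.verdict == "disputed":
        result.explanation += " A correction should be drafted by a human editor."
    return result


def run_pipeline(text: str) -> List[Result]:
    """Chain the three stages for every claim found in the text."""
    return [correct(verify(claim)) for claim in identify_claims(text)]


for r in run_pipeline("The border was closed in 2020. It rained today. Prices increased by 5 percent."):
    print(r.claim, "->", r.verdict)
# The border was closed in 2020 -> supported
# Prices increased by 5 percent -> no evidence found
```

One design choice is worth noting: when no matching evidence is found, the sketch returns “no evidence found” rather than “false,” because failing to retrieve evidence does not establish that a claim is untrue.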

Despite the lack of an agreed-upon definition of automated fact-checking, there is a consensus in the literature that such systems should broadly follow the steps of manual fact-checking (Graves, 2018; Guo et al., 2022). As a form of knowledge production, manual fact-checking stands out for making epistemic assessments about the veracity value of truth claims (Steensen et al., 2022). Hence, fact-checking often results in truth claims being categorized along a true/false binary. For example, in a traditional newsroom, fact-checking might end with a journalist reporting that “the increased gun violence in the country made the neighboring state close the border.” An ex-post fact-checker, by contrast, would pick up this information, scrutinize the country's gun violence statistics and all the variables that might have led the neighboring state's administration to close the border, and assess whether the claimed link between gun violence and the border closure is true, false, or somewhere in between. By doing this, fact-checkers exercise a higher degree of epistemic authority: instead of merely reporting facts, they also evaluate the truth value of claims.

Thus, fact-checkers demonstrate their epistemic authority, which can be understood as “a set of relationships between knowledge creators and those pursuing this knowledge” (Carlson, 2022, p. 67). This relationship is based on trust between the information sender and receiver, although such authority is neither given nor static but rather constantly contested and reconstructed (Carlson, 2020; Zagzebski, 2012). Media professionals need to establish such authority or its perception to achieve the status of the “credible spokespersons of real-life events” (Zelizer, 1992, p. 8).

Similarly, if developers of automated fact-checking systems want their tools to be accepted as credible sources of knowledge, they must establish such epistemic authority by ensuring that end users trust and adopt their technology as an innovation. However, as the next section describes, AI-based fact-checking technologies face many challenges in being adopted as epistemically authoritative tools.

The quest for an interdisciplinary exploration of automated fact-checking and its challenges

Even though automated fact-checking is an innovation that emerged at the intersection of various fields, most of the literature stems from computer science. Few studies conceptualize the role of automated technologies in fact-checking as a knowledge production practice or discuss journalistic authority in relation to it (Johnson, 2023; Juneja & Mitra, 2022). Empirical knowledge about what prevents such systems from materializing successfully is similarly scarce, although several studies pinpoint the challenges of existing systems. For example, Guo et al. (2022) highlight ambiguity in labeling fact-checks, the quality of sources, subjectivity, bias and quality issues in datasets, the multimodality and multilingualism of the content that needs to be checked, the faithfulness of such systems, and their reactive nature, as they respond to disinformation rather than prevent its spread. Zeng et al. (2021) point to the conceptual difficulty of defining a claim as a unit of knowledge and to the fact that automated systems are developed within narrow domains, which makes it impossible to use them to verify information on other topics. The same study also mentions problems with annotating data to train algorithms, the scalability and integrity of systems, and their limited interpretability and generalizability, which may lead automated fact-checking tools to make decisions based on wrong evidence (Zeng et al., 2021). Beyond these challenges, Nakov et al. (2021) also discuss the difficulty of integrating contextual information into automated systems. Information verification often depends on contextual information related to claims. Accordingly, translating contextual information into a machine-readable format is of crucial importance.

However, these challenges primarily concern how automated fact-checking can work as a technological innovation. Yet the literature is unequivocal that innovation is a socioeconomic as well as a technological process (Nowotny, 2006) and that an innovation does not need to work seamlessly to be adopted. Conversely, seamless technological functioning does not guarantee the successful diffusion or adoption of an innovation (Rogers, 2003). As Pacheco et al. (2017) note, “innovation depends on several players, factors and dimensions,” and “this is why innovation became an object of study and practice of several approaches, sources and fields” (p. 313).

Accordingly, hindrances to automated fact-checking should also be sought beyond the technological realm. As automated fact-checking tools are often tailored to fact-checkers, who have the perceived authority to label information claims as true or false, it is important to look beyond the disciplinary boundaries of computer science. Moreover, as Li (2023) notes, exploring “the communication models of emerging media calls for an urgent expansion of interdisciplinary perspectives” (p. 6). Hence, it is necessary to examine the broader context in which automated fact-checking emerges as an innovation at the intersection of computer science and journalistic information verification, as well as the challenges it faces in this process.

Qualitative technographic case study: Methodological clarifications

Documenting the challenges of automated fact-checking, a technology that is only now developing, requires a methodological approach that goes beyond describing the current state of the art and critically explores how its creators imagine and interpret it (Berg, 2022). A qualitative technographic case study is one such approach; as the design of this study, it is explained in detail below.

Historically, technography meant “a treatise on the art of rhetoric” or “written economic, sociological, or historical accounts of specific processes or devices” (Purdon, 2018, p. 6). However, qualitative researchers from various fields have recently defined it as “an ethnography of technology” (Jansen & Vellema, 2011, p. 169). As Kien so succinctly states, “Technography = Technology + Ethnography” (2008, p. 1101). This method has been used to collect and analyze various types of data about technology adoption within agriculture, mobility studies, and health sciences, to name a few (Arora & Glover, 2017; Hildebrand, 2020; Rezazade Mehrizi et al., 2021).

Berg (2022) offers an important clarification, stating that digital technography is a particularly useful method for studying emerging technologies: “instead of concentrating on how technologies participate in everyday life, digital technography is oriented toward understanding how they are imagined as participants in everyday life” (p. 828). In digital technography, three conceptual aspects stand out: (a) specification, or how the technology is presented in promotional materials as a new solution; (b) valorization, or how the creator values the solution; and (c) anticipation, or “how and why certain technologies are supposed to bring about change” (Berg, 2022, p. 830). Thus, technography requires special emphasis on the technology itself and on the developers' promotional materials and evocative stories, in which the articulation of specification, valorization, and anticipation is combined with the researcher's reflexive use of the technology.

Data material and analysis

Only a few companies are currently developing automated fact-checking tools with the aim of commercializing them. Thus, outsiders such as researchers cannot easily access these tools. “X”'s employees kindly allowed me to test their technology and were willing to share their experiences. This enabled me to explore in depth the challenges their innovation faced as a “single significant case” (Patton, 2015, p. 411), rather than aiming for results generalizable to all automated fact-checking initiatives.

The case study draws on three types of textual data (interviews, documents, and promotional materials) in addition to the author's reflexive technographic notes, fusing reflections on “X”'s AI editor with the perspectives of the company. I conducted semi-structured, in-depth interviews with company-related stakeholders, including all four full-time employees and three external partners of “X” who worked with the company on various collaborative tasks. In total, I conducted seven interviews: in person (3), via Zoom (3), or by phone (1), with the remote formats accommodating COVID-19 pandemic-related recommendations in Norway. Interviews lasted 40–45 min on average, except for a phone interview with one of the external actors, which lasted 15 min. All interviews were conducted in English and were audio recorded. I used the automated service Otter.ai to transcribe the interviews and then cleaned the transcripts. The data were anonymized, and written or oral informed consent was obtained before each interview. The study was approved by the Norwegian Agency for Shared Services in Education and Research (Sikt) for the ethical collection, storage, and sharing of data with project participants.

The interviews were complemented by rich data from documents and promotional materials, which were either provided by the employees via e-mail or collected by archiving the company's website with the Internet Archive's Wayback Machine. This amounted to more than 30 documents, including blog posts, newsletters, home page screenshots, product descriptions, and two texts written by another external actor related to the company. All documents were written in English. Additionally, I had access to the AI editor prototype as a researcher, and I tested it and took notes on it throughout the entire data collection period (almost a year), which made it possible to observe the tool longitudinally.

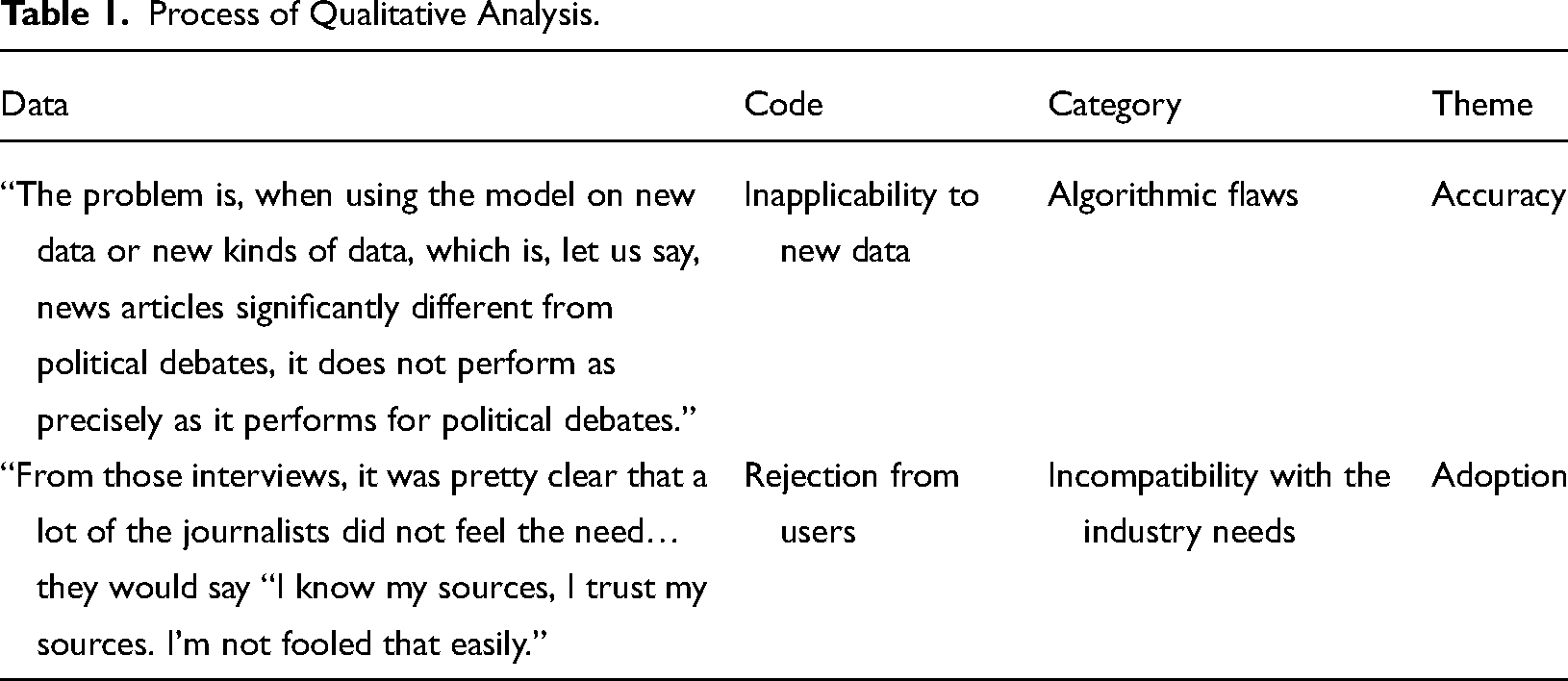

The collected textual data were analyzed thematically, which involved coding the interview transcripts, documents, and technographic notes in the qualitative analysis program NVivo. Using axial coding, the data were organized and categorized to identify relationships and connections between concepts (Saldaña, 2009). First, snippets of the textual data were coded with brief descriptions of challenges either mentioned by the interviewees or presented in the documents and technographic notes. Then, the identified challenges were grouped into categories describing broader thematic areas. Table 1 shows examples of the interrelation between data material, codes, challenge categories, and interpretative themes.

Table 1. Process of Qualitative Analysis.

Findings

Based on the analysis, the AI editor developed by “X” faced hindrances regarding accuracy and adoption. Regarding accuracy, four main challenges stood out: (1) the elusiveness of truth claims, (2) the rigidity of binary epistemology, (3) the lack of (quality) data, and (4) algorithmic deficiencies. Regarding adoption, the AI editor faced two main challenges: (5) a lack of transparency in explaining the results and (6) incompatibility with the industry’s needs. As the concluding section of the paper explains, the challenges identified in this study are by no means an exhaustive account of the hindrances “X” faced as a start-up. This study focuses only on challenges related to the AI editor as an innovative tool envisioned as capable of verifying information in the context of journalistic knowledge production.

Elusiveness of truth claims

Three of the four interviewed employees noted that precision in claim detection, the first step of fact-checking, was one of the main challenges for their AI editor. This problem was more evident when the tool dealt with topics newly emerging in public discourse. As one employee noted, when the tool dealt with topics related to the data the prototype had been trained on, the results were quite solid, “but the moment it goes into more philosophical claims or something that hasn’t been written about before, that is definitely something we need to think about.” Another employee elaborated on the elusive nature of claims: “[T]his prototype automatically marks, highlights claims and sentences that you need to fact-check. And that is very, very difficult. (…) [T]hat's probably one of the biggest challenges for this tool. How do you define what is a claim? What is a claim worth fact-checking in a text? It can vary from day to day.”

“Elusive” refers to the unstable epistemic nature of truth claims, which makes it difficult for the AI editor to differentiate between claims and non-claims, checkable and non-checkable claims, or checkworthy and non-checkworthy claims. Similar difficulties with automated claim detection have been reported elsewhere: Nakov et al. (2021) point out that one explanation for the inability of automated fact-checking tools to accurately identify checkworthy claims is the context-dependent nature of those claims.

Finding claims and determining their checkability or checkworthiness is complicated, even for human fact-checkers (Uscinski & Butler, 2013). It requires making value judgments, comprehending contextual information, and dealing with uncertainty about the relevance and factuality of bits of information. To be regarded as claims, such bits should be statements containing descriptive, interpretive, evaluative, or analytical inferences (Steensen et al., 2022). However, not all claims are checkworthy or checkable. Whether a claim is relevant for fact-checking depends on who said what, when, and how (Graves, 2017). Certain claims are easy to recognize as relevant for fact-checking, but if a message contains information with more interpretative features or concerns topics that are only just emerging, deciding on its checkworthiness may take more effort. As the experience of “X” showed, when AI is involved in such a unilinear reasoning process, this task becomes even more complicated.

Rigidity of binary epistemology

The AI editor allowed users to automatically sort claim-related sources into “supporting,” “neutral,” and “disputing” categories. Although the meaning of these categories was never precisely defined, this left space for interpretative flexibility, meaning that the phenomenon of interest, “as it is at present, is not determined by the nature of things; it is not inevitable” (Hacking, 1999, p. 6). Labeling sources as “supporting” or “disputing” means that the algorithmic decision is not inevitable and that there is space for human interpretation when assessing the truthfulness of claims. However, this categorization appeared only in a later stage of the tool's development.

Initially, the AI editor was based on a “binary epistemology, in which dichotomies like true/false, reliable/unreliable, and accurate/inaccurate structure the approach” to verification (Steensen et al., 2022, p. 2121). The tool highlighted claims in green or red to mark them as “true” or “false.” As the earliest version of the company's website stated, “X” “provides evidence in the form of a summary containing important points for classifying certain claims as true or false.”

Later, the website also encouraged users to “copy-paste any claim (f.ex., from Facebook) and click check claim. You will get a result—true or false.” Soon, all direct references to sorting claims into true or false categories disappeared from the website. The prototype itself also switched to categorizing sources as “supporting,” “neutral,” and “disputing.”

This was an important change from an epistemic standpoint. As the company employees noted, using definitive categories such as “true” and “false” while determining the veracity of claims was problematic. One employee explained it as follows: “When you say something is true or false, it also provokes a reaction. Because there's nothing like a universal truth (…) It is a slippery slope for us.”

In this remark, the employee highlights the complicated nature of truth as a philosophical and practical category. Interestingly, one analyzed document showed a similar transformation within organizational thinking. The document mentioned that interpreting the terms “true” and “false” can be a politically or culturally complicated issue and suggested: “[the language] … must be precise in how it frames results, never classifying a claim as ‘false,’ but rather as ‘being challenged.’”

Employees also confirmed that there had been an internal discussion in the company about using definitive categories, including colors, to assess claims. One employee stated: “[Using such colors] might not be the best because we don’t want to push the user too much in the direction to make a definitive decision.”

This shift to more flexible epistemic categories indicates a reassessment of human agency in deciding the veracity of claims. Had the AI editor retained the original true–false dichotomy, there would have been less space for interpretative flexibility or epistemic ambiguity, a necessary component of manual fact-checking in the real world.
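The contrast between the early true/false output and the later “supporting,” “neutral,” and “disputing” categories can be illustrated with a small, hypothetical Python sketch. It assumes that each retrieved source has already been assigned a stance score between -1 (disputes the claim) and 1 (supports it) and uses an arbitrary neutrality margin of 0.3; neither the scores nor the margin come from “X”'s prototype. The binary function forces a single verdict, whereas the stance summary only reports how the sources line up and leaves the judgment to the user.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical stance scores in [-1, 1] for sources retrieved for one claim:
# positive = the source supports the claim, negative = the source disputes it.
# The scores and the 0.3 margin are illustrative assumptions only.


def binary_verdict(scores: List[float]) -> str:
    """Binary epistemology: collapse all evidence into a single true/false label."""
    return "true" if sum(scores) >= 0 else "false"


def stance_label(score: float, margin: float = 0.3) -> str:
    """Label one source; scores close to zero stay 'neutral' instead of being forced."""
    if score >= margin:
        return "supporting"
    if score <= -margin:
        return "disputing"
    return "neutral"


def stance_summary(scores: List[float], margin: float = 0.3) -> Dict[str, int]:
    """Count sources per category and leave the final judgment to the human user."""
    counts = Counter(stance_label(s, margin) for s in scores)
    return {k: counts.get(k, 0) for k in ("supporting", "neutral", "disputing")}


scores = [0.8, 0.1, -0.6, -0.05, 0.4]
print(binary_verdict(scores))  # → true
print(stance_summary(scores))  # → {'supporting': 2, 'neutral': 2, 'disputing': 1}
```

With these example scores, the binary function returns “true” even though one source disputes the claim and two are neutral, while the stance summary keeps that disagreement visible for the user to weigh.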

Lack of (quality) data

According to the company employees, a major bottleneck constraining the performance of their tool was access to data. They emphasized that the scarcity and insufficient quality of existing data prevented the AI editor from performing accurately. At the time of data collection, the prototype's algorithm was trained on manual fact-checks from member organizations of the International Fact-Checking Network. The “X” employees explained that they used different data sets designed for research purposes to develop the prototype. These included 30,000 articles produced by American fact-checkers for PolitiFact and Snopes and a database about the United States presidential election. However, one employee clarified: “From the experimental studies we did on the data from political debates, it performs pretty well, with over 80% accuracy. The problem is using the model on new data or new kinds of data (…) [T]hen it doesn’t perform as precisely.”

Thus, when the AI editor checked claims about newly emerging topics or topics not sufficiently discussed in public discourse, it faced major accuracy problems. This was also highlighted in my interviews with the external stakeholders. One of the partners observed that a primary concern regarding the AI editor's performance was how well it would work with random texts.

Moreover, even if the tool successfully determined a claim’s veracity, there was always a risk that this verdict could be challenged by information that was unavailable when the algorithm was trained. One “X” employee noted that a main issue was the availability of, and access to, relevant information; without guaranteed access to all information, full automation of fact-checking is difficult to achieve: “And there is always the issue of if you cannot find something, that does not mean it does not exist, right? So, we might say something is false based on what we found, but there might be something else that is not available to us. Yeah, how do we access that? That's the most difficult part.”

There were other issues with data sets (for example, human errors in training data). While assessing the overall performance of the AI editor, one of the employees noted that “it's strong, but there are some challenges with using data sets that have been made by humans.” Even if the data sets were regarded as high quality, they could still contain certain geographical, linguistic, socioeconomic, political, or ideological biases that would affect the tool. The “X” employees noted that their algorithm was mostly trained on data produced by international fact-checkers who “most likely will lean to the left [politically]. So, it's the objectivity of the data that we train our AI [with]” that posed a risk. An external partner of “X” also highlighted the problem of bias: “We all have examples of AI or machine learning engines being tricked by biased data (…) [S]o, there are some kinds of risks that need to be front and center.”

In terms of flaws in human-produced data sets, the question of bias is important. If automated fact-checking is considered a typical AI pipeline that takes data sets as input, one can expect the algorithms to replicate the corresponding biases in their output. There are at least four major points where biases can enter AI pipelines: data creation, problem formulation, data analysis, and output evaluation/validation (Srinivasan & Chander, 2021). In the context of automated fact-checking, such biases translate into a risk of algorithmic deficiencies and wrong results that damage the epistemic authority of the tool.

Algorithmic deficiencies

Simply put, the algorithm is the set of rules that determines how an automated fact-checking tool performs. For the AI editor, optimal performance would mean coming as close as possible to the human ability to decide whether a given piece of information can be regarded as true or false. Inaccurate performance would erode trust in the tool's epistemic authority and damage the company's reputation. One partner explained this as follows: “In this game, if you kind of don’t hit the nail a few times or come with false negatives or false positives in fact-checking, that will hurt your reputation.”

The algorithm's accuracy was also mentioned as a challenge by “X” employees, although not everyone saw it as a major problem, as one employee's statement shows: “[n]othing will be 100% precise. Even manual fact-checking is never precise. But people can make mistakes as well. So, I cannot give you a guarantee that [the tool will be] 100% precise, but it might come close to matching human-level performance.”

Thus, if human-produced data are always flawed and the algorithm is trained on such data, the result will be algorithmic deficiencies and, consequently, more false fact-checks, which will then be used as new training data. This cycle of flawed data and inaccurate results could ultimately undermine the epistemic authority of the tool and, eventually, of the entire company, as the tool would simply keep producing inaccurate results.

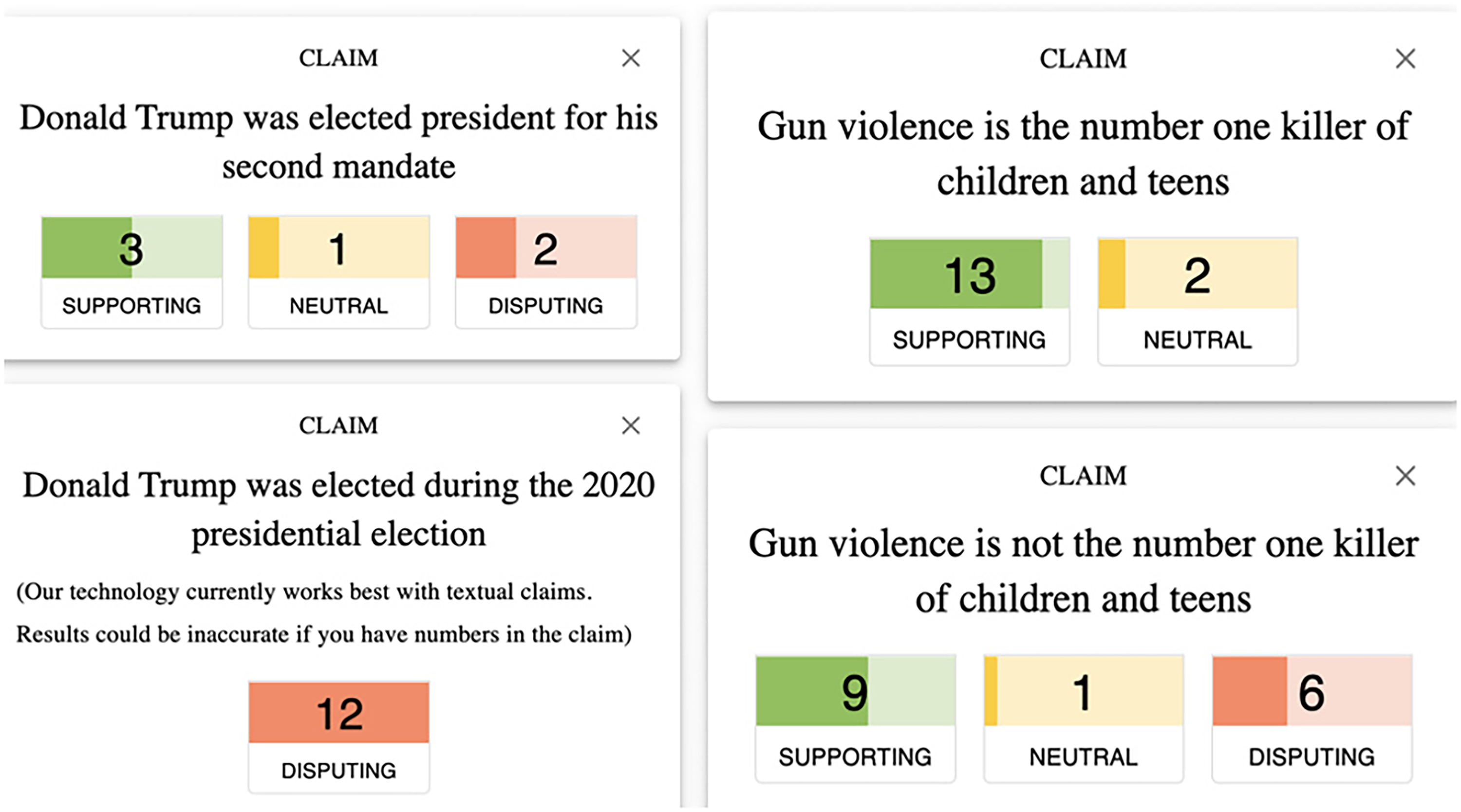

Another reason that the tool produced inaccurate results seems to be the algorithm's language sensitivity. The tool’s accuracy depended on how claims were formulated in terms of language. While testing the tool, I noticed that similar claims that were formulated differently yielded different results and that claims with contrasting content did not yield contrasting results, as shown in Figure 1.

Inconsistency of results.

In summary, the setbacks described above concerned the tool's accuracy; they had to be resolved for the AI editor to work as a technological innovation. However, as the company wanted to monetize its tool, it also had to ensure that the fact-checking industry would adopt the AI editor. Accuracy issues aside, the company still had to overcome barriers to technology adoption by media practitioners.

Lack of transparency

Even if the AI editor could distinguish true claims from false ones, “X” needed to consider other aspects of how the algorithm functioned. One such aspect was transparency: explaining the tool's decisions to users. This was problematized in one of the analyzed documents, which mentioned that the company needed “to ensure the highest possible degree of transparency to maintain trust in methodology.” User testing of the prototype and the feedback the company received from test users supported these concerns. As one employee noted, when the company tested the tool with journalists, the moment the journalists saw claims marked in red (meaning they were not true), they immediately started questioning the mechanism behind the decision: “And even if we had everything right, they were still very, very skeptical.”

Transparency was an issue with the tool throughout the claim identification and verification stages. One way “X” dealt with this issue was to focus on the explainability potential of the algorithm behind the tool. Explainability in machine learning is understood as the ability of a model to justify its decisions; in the case of automated fact-checking, it can be understood as “the full set of rules used to arrive at the final judgment” about the veracity of a claim (Kotonya & Toni, 2020, p. 5).

One of the blog posts published on the company website stated: “[w]hen facts alone are insufficient, our goal is to create explainable AI for automatic fact-checking and extra insights.”

Despite this promise, during testing of the AI editor, it was unclear which section of the referential text the tool based its decision on when allocating a source to the “supporting” or “disputing” category. The tool was inconsistent in marking the exact snippets of information in the referential text that would back up its results. Accordingly, from the user's perspective, the tool was not transparent enough in explaining its results. Such an additional layer of clarity could have been critical to the successful adoption of this technology by a media industry that does not necessarily have expertise in how AI systems work. In the interviews, the employees described bringing such clarity into their algorithm as a matter of urgency for the company: “[this is] something that we should do pretty soon is to have a kind of transparency. Okay, how does our AI work? but in an explanation that data journalists can understand or that I can understand without knowing AI in depth.”

Thus, “X” was faced with technological challenges related to creating an explainable AI algorithm. This was necessary to make the behavior of the AI editor “more intelligible to humans by providing explanations,” as this is what largely determines whether the user understands and trusts the tool (Gunning et al., 2019, p. 1).

Incompatibility with industry needs

As one of the external partners noted in the interview, “X” had to precisely identify which market they wanted to enter. Initially, they planned to develop the tool primarily for the media industry in Norway. According to this partner, there were two main problems the company had to face—Norway is a small market, and focusing only on the media industry was limiting: “Are you going to be a Norwegian ‘mom and pop’ store? (…) [P]art of the challenge was [that] they were focused more on the journalistic part. And so, if they were going after other markets, what is the messaging for that?”

The geographical aspect of the target market seemed a relatively easy issue to solve. Considering the availability of training data and the size of the potential client base, the company focused on developing the tool primarily in English, targeting international clients. However, selecting the target industry where their technology would be adopted proved to be a longer and bumpier road. When asked about the major challenges for the company, an employee responded: “I think it is communicating the value to the right, first client… We are discussing this in a lot of directions - health researchers or journalists have different needs… We are looking at different verticals to see which fields could use our AI the fastest and get the most value from it. And that is such a challenge …”.

Difficulties in finding a place in the sun became immediately apparent as the company tried to secure a pilot client for its technology. Soon after it was established, “X” contacted a major news agency in Norway. The agency representatives asked them to create a tool like “Grammarly” for fact-checking, helping journalists analyze text and find mistakes. The company worked on making its tool more intuitive and improving the user experience. However, after they showed the prototype to the potential client, the news agency suddenly retreated. One employee commented: “[t]hey were suddenly like, hmm, maybe we don't need this… it might be useful for other newspapers, but not for us.”

This reaction led the team to investigate journalists’ needs further. The employee quoted above explained that they did not give up on their chosen pilot client. Instead, “X” decided to conduct in-depth interviews with the agency's journalists. They learned that, for international stories, the news agency relied on trusted content published by major legacy media organizations. Reflecting on whether journalists needed AI tools to tackle fake news in their day-to-day work, the employee noted: “a lot of the journalists did not feel the need (…). [T]hey would say, ‘I know my sources, I trust my sources. I’m not fooled that easily.’” Another employee commented: “[T]hey were saying ‘No, we are good at understanding what is fake or what is not. And we don’t encounter that often in Norway.’”

The industry reaction suggests that the AI editor, as an innovation, faced challenges of compatibility, which Rogers (2003) describes as the degree to which an innovation fits with the existing values, experiences, and needs of potential adopters. These challenges pushed “X” to diversify its client base, aiming at industries besides media that are also confronted with the disinformation problem (for example, business, finance, and security services). Moreover, the company decided to diversify the range of tools it wanted to create. One internal document identified the company's next step as bringing “current solutions to customers with similar problems: investors, military security analysts, etc.,” and developing “new products for new markets like gaming, education.”

Adhering to such a strategy came with consequences. One major consequence was “X”'s step-by-step departure from its original goals. Developing several so-called “low-hanging fruit” products meant diverging from the initial plan of creating “cutting-edge technology” capable of information verification with sufficient accuracy and adoption potential. Eventually, this departure led the company to repackage its technology as an assisting tool that helps journalists research faster and better, rather than a tool that checks facts automatically.

Discussion and conclusion

This technographic case study documented the challenges of automated fact-checking through the example of a Norwegian start-up company, “X,” that attempted to create an AI editor for journalistic information verification. Drawing on this example, the study conceptualized automated fact-checking as an innovation within journalistic knowledge production. By documenting the challenges that the AI editor faced as such an innovation, the article contributes to the exploration of automated fact-checking theoretically, methodologically, and practically.

Theoretically, the article argues for conceptualizing automated fact-checking as a technology that adheres to the epistemic authority of human fact-checkers while establishing itself as a credible tool for describing real-life events (Zelizer, 1992). This is necessary for creating trust between technology and information receivers (Carlson, 2020). Hence, studying automated fact-checking requires moving beyond the computer science approach, which narrowly focuses on the technical challenges of creating such tools. Instead, the article argues for an interdisciplinary lens that pays sufficient attention to the context in which such tools emerge, namely journalistic knowledge production. Methodologically, the article advocates a qualitative approach and demonstrates the advantages of technography as a study design for exploring perspectives on, and the value and potential of, emerging technologies from developers’ point of view (Berg, 2022). The findings can also be read through Berg’s (2022) key methodological aspects of technographic studies: specification, valorization, and anticipation.

Iterations on “X”’s website, which served as a space for promoting its product, reflected both the challenges the start-up experienced while designing the AI editor and its shifting approach towards automated fact-checking. The company came to frame its technology as “assisting” rather than “automated,” lowering expectations about the tool's epistemic authority (specification). Despite the less enthusiastic response from the news industry, the company did not abandon the prospects for its technology. Instead, confident in its value, “X” adapted to the situation and searched for potential clients in other industries that need assistance in dealing with disinformation (valorization). As challenges piled up during the creation of the AI editor, “X” ultimately had to choose between continuing its efforts to create an automated fact-checking tool and diversifying its products by pivoting from the initial plan; it chose the latter (anticipation). Practically, although the case study documents the experience of one company, the limited number of organizations working on automated fact-checking tools worldwide (Abels, 2022) means that this study sheds light on common practical limitations of infusing AI into epistemically demanding practices like information verification. Thus, the trajectory of “X,” which invested its hopes and resources in large-scale automation but later turned to increased human agency, is an excellent example of the ongoing struggles within the fact-checking industry and academia over how much automation is desirable and realistically achievable in complex epistemic practices such as fact-checking.

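In response to the research question, this study identified challenges related both to the accuracy of the AI editor and to its adoption by the news industry.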

Specifically, the elusiveness of truth claims, the rigidity of binary epistemology, the lack of access to data, and algorithmic deficiencies hindered “X”'s ability to automate fact-checking successfully, at least for the time being. The company was also confronted with obstacles to the news industry's adoption of the AI editor, which influenced how “X” would develop its tool(s). The lack of transparency in explaining results and the tool’s incompatibility with industry needs encouraged the company to work on other “low-hanging fruits”: tools that could expand its target market.

These challenges were not standalone issues. They were inherently connected, and together they eventually led the company to diverge from its initial goal of building tools with epistemic authority and instead to reevaluate the role of human agency in assessing claims’ veracity. For example, without improving the quality and quantity of data used to train the algorithm, it was impossible either to detect checkworthy claims with sufficient accuracy or to determine their veracity accurately. Moreover, solving one set of challenges did not automatically solve the others, yet no set of challenges could be solved without solving the others. Ultimately, if the accuracy-related challenges were not dealt with, the company would have difficulty convincing the media industry to adopt its tool for information verification.

Limitations and future research

It should be noted that the challenges identified in this study are not exhaustive, and other organizations working on similar tools might experience different sets of problems. This predicament stems from the emerging nature of AI tools and the organizational peculiarities of innovation-oriented start-ups. Such start-ups are not dealing with established technologies; as a result, different actors are trying to resolve different information verification issues with different technologies and approaches (Guo et al., 2022). “X” itself encountered other challenges, too, such as competition, mobilizing human and financial resources, branding, and other organizational or circumstantial issues (for example, the COVID-19 pandemic). Considering the aim of the study and its specific interest in the phenomenon of automated fact-checking, this article only reported the issues related specifically to the company's tool, the AI editor.

Finally, many areas remain underexplored in automated fact-checking as an emerging media technology and innovation within journalistic knowledge production. For example, how can automated systems help tackle the information disorder? How can such systems uphold ethical standards in journalism? How will end users of fact-checks react to machine-driven truth-seeking in day-to-day meaning-making? In parallel with seeking answers to these questions, those working on automated fact-checking tools must also address the challenges identified in this case study.

Ethical statement

The Norwegian Agency for Shared Services in Education and Research (Sikt) has approved the research project for the ethical collection, storage, and sharing of data with project participants. Written or oral consent was obtained prior to recording, storing, and processing data from all human participants in the study. None of the study participants are identified directly or indirectly throughout the article.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.