Abstract

Artificial intelligence (AI) has the potential to disrupt the advertising industry, as marketers and brands can leverage its power to create highly engaging, personalized content. However, AI is prone to bias and misinformation and can be used to manipulate. Therefore, lawmakers such as the European Union aim to enforce AI disclosure messages to protect consumers. The implications of such disclosures, however, have not yet been studied. This paper draws on existing theories of persuasion knowledge, disclosure theory, inferences of manipulative intent, and AI aversion to develop a model of consumer attitudes toward AI disclosures in Instagram advertisements. A three-condition between-subjects online experiment (Nfinal = 161) was conducted to test the model, and the data were analyzed using a moderated mediation model. AI disclosures led to a direct decrease in advertising attitude. In addition, AI disclosures led to a decrease in brand attitude only when consumers had high AI aversion. There were no direct effects of AI disclosures on source credibility. These effects were mediated by inferences of manipulative intent. However, participants who viewed the AI disclosure had lower inferences of manipulative intent than participants who did not view it. Furthermore, no differences were found between AI disclosures pertaining to the use of AI in creating the image and those pertaining to the text. Implications are discussed from both theoretical and managerial viewpoints, highlighting why the use of AI for advertising on social media should be limited as that use becomes more transparent in the future.

Introduction

For marketers, AI-generated content (AIGC) is often highly effective and efficient, as AI can rapidly create content tailored to specific consumers based on consumer data (Chen et al., 2019). When consumers are presented with this content on social media, however, they may be more susceptible to persuasion (Ienca, 2023). One way to protect consumers from unwanted persuasion attempts using AI is to include disclosures indicating the use of AI-generated content (hereafter, AI disclosures). Disclosures can inform consumers and increase the transparency of AIGC (Ienca, 2023), which is why the European Union has recently passed the Artificial Intelligence Act, which aims to enforce the disclosure of AIGC (European Parliament, 2023). To comply with this new regulation, Meta, the parent company of, among others, Instagram and Facebook, has introduced several AI disclosures (Meta, 2024). Instagram specifically, which generated an advertising revenue of 23.1 billion dollars in 2023 (Statista, 2024), now asks brands to add its so-called AI-info disclosure label when using AI for advertising purposes (Instagram, n.d.). This label signals that the advertisement was produced (or assisted) by AI. From a scientific point of view, however, the effect of AI disclosures on consumer behavior and marketing outcomes remains unclear.

Previous research on disclosures in marketing showcases both positive outcomes, due to honesty (De Jans et al., 2018), and negative outcomes because they identify the sender's persuasive intent (Boerman et al., 2017). However, the effects of disclosures are context dependent (Boerman et al., 2017), and there is limited research on disclosures in an AI context (Wu et al., 2022). This is probably because there is limited knowledge on consumers' attitudes toward AI (Campbell et al., 2022; Van Noort et al., 2020; Wu et al., 2022) and AI-generated advertisements (Wu et al., 2022).

Therefore, we argue that it is essential to understand the effect of AI disclosures on consumers' attitudes, such as advertising attitude, brand attitude, and source credibility, in a for-profit context (Arango et al., 2023; Biehal et al., 1992; Ismagilova et al., 2020; Mitchell & Olson, 1981). Moreover, this study expands the literature on AI disclosures by proposing factors that may influence consumers' attitudes when they face AI disclosure cues. Since AI can generate both images and text, AI disclosures could pertain to the use of AI to create either the image or the text. Previous research has indicated that consumers may be more accepting of AI-generated texts than AI-generated images, as images contain valuable information for consumers (Bellaiche et al., 2023; Liu et al., 2020). The type of AI use that is disclosed (image or text) could therefore affect how the advertisement is perceived and thus lead to different marketing outcomes.

Additionally, a study on AIGC in a charity context revealed consumers had increased inferences of manipulative intent when an ad contained an AI disclosure (Arango et al., 2023). Inferences of manipulative intent occur when consumers feel they may be manipulated, which often leads to negative consumer attitudes (Campbell, 1995). Since AI can be used to manipulate, consumers may dislike AI advertisements as they trigger their inferences of manipulative intent.

Finally, a factor that may influence consumers' attitudes when facing AIGC is AI aversion. AI aversion is the negative bias or belief consumers have toward AI (Dietvorst et al., 2015). Therefore, consumers with high AI aversion may already be skeptical of using AI and dislike AIGC advertisements more than consumers with low AI aversion.

Through a between-subjects experimental study, we aim to investigate the effect of AI disclosures in Instagram advertising by answering the following research questions: (1) “How does an AI disclosure affect advertising attitude, brand attitude, and source credibility?” and (2) “To what extent can this be explained by inferences of manipulative intent and AI aversion?” The current experimental study distinguishes between two types of AI disclosure: one stating that the text was generated using AI (AI text disclosure) and one stating that the image was generated using AI (AI image disclosure). Both disclosure types are compared to a control condition in which no involvement of AI was disclosed. These questions aim to advance our theoretical understanding of the mechanisms through which AI disclosure cues affect consumers, as well as to provide practical guidelines on how to effectively integrate AI disclosures. These guidelines will benefit, among others, marketers; policymakers and lawmakers considering mandatory AI disclosures; brands determining their own AI disclosure policies; and social media platforms.

AI-generated ads and consumer attitudes

Consumers' attitudes toward advertisements generated by AI are an important consideration for brands and have commonly been measured in terms of advertising attitude, brand attitude, source (brand) credibility, and purchase intention.

Advertising attitude, defined as consumers' affective assessment of an advertisement (Biehal et al., 1992), can influence brand attitude, brand preference, and purchase intention (Biehal et al., 1992; Mitchell & Olson, 1981). Previous research indicates primarily negative advertising attitudes toward AIGC products, such as AI-generated music (Shank et al., 2023), artwork (Bellaiche et al., 2023), and chatbots (Luo et al., 2019). In advertising, consumers were found to be negative about AI disclosure despite valuing AI's effectiveness (Wu et al., 2022; Wu & Wen, 2021). AI disclosures in charity advertisements also led to reduced donation intentions (Arango et al., 2023), suggesting a negative effect of AI on advertising attitude.

Brand attitude, consumers' affective assessment of a brand (Biehal et al., 1992; Shimp, 1981), is crucial as it can influence brand preference and purchase intention (Biehal et al., 1992; Mitchell & Olson, 1981). However, research on the impact of AIGC on brand attitude is scarce and contradictory, with some studies showing no difference in terms of attitude if AI is used (Kirkby et al., 2023) and others indicating a negative effect (Arango et al., 2023).

Source credibility, the amount of trust a consumer has in the creator of an advertisement (Umeogu, 2012), is important as it can lead to higher purchase intention (Ismagilova et al., 2020). However, machines tend to be considered less credible than humans (Dietvorst et al., 2015), and AI disclosures have been found to reduce source credibility in various contexts (Liu et al., 2023).

In sum, previous research suggests that advertisements with an AI disclosure are more likely to result in negative advertising attitude, brand attitude, and source credibility, similar to the effects of other disclosure cues that activate persuasion knowledge and lead to negative consumer attitudes.

Persuasion knowledge and AIGC

Persuasion knowledge refers to the knowledge consumers hold about the intentions and methods advertisers employ to persuade (Friestad & Wright, 1994). Persuasion knowledge is activated when consumers become aware of an intent to persuade, for example, when viewing an advertisement (Friestad & Wright, 1994). Consumers can then employ various strategies to manage and resist persuasion (Friestad & Wright, 1994; Rahmani, 2023). This can lead to negative outcomes such as a decreased brand attitude and purchase intention (Rahmani, 2023). Thus, activating persuasion knowledge allows consumers to resist persuasion.

Various cues can serve to trigger persuasion knowledge (Friestad & Wright, 1994). For example, aspects pertaining to the source of a message, such as source familiarity, can trigger persuasion knowledge. Therefore, AI disclosures may serve as a cue as they pertain to the creator of the advertisement. In addition, disclosures themselves may also serve as a cue.

Disclosures are messages that reveal information to consumers, usually regarding the nature of an advertisement. They are often legally mandated on ethical grounds, so that consumers can better understand the content they are viewing and make informed decisions (Boerman & Van Reijmersdal, 2016). Disclosures in marketing have been studied in various contexts, with contradictory outcomes.

Consumers seem to have a general negative disposition toward disclosures. This may be because disclosures serve as a cue triggering persuasion knowledge (Boerman et al., 2017). This was found in a study on advertising disclosures in covert marketing, where consumers' brand attitude decreased as the disclosure triggered their persuasion knowledge (Campbell et al., 2013). Thus, disclosures could lead to negative effects due to activating persuasion knowledge.

However, disclosures can also be seen as an honest revelation, which can increase consumer attitudes (De Jans et al., 2018). This was also found in a study on the disclosure of retouched photo advertisements, where consumers had higher brand attitudes toward disclosed ads due to their transparency (Semaan et al., 2018). Thus, disclosures could also lead to positive effects if consumers view them as an honest revelation.

Nevertheless, considering the ample literature on the negative perspective consumers have regarding the use of AI in advertisements (Bellaiche et al., 2023; Wu et al., 2021), we argue that it is likely that the AI disclosure will lead to a decrease in advertising attitude, brand attitude, and source credibility. Therefore:

Inferences of manipulative intent and AI-generated content

A possible explanation for consumers' negative attitude toward AI disclosures may be an increase in inferences of manipulative intent. Inferences of manipulative intent can be defined as the perceived inappropriateness, unfairness, or manipulation in trying to persuade the consumer (Campbell, 1995). Advertisements do not have to be deliberately manipulative to be considered as manipulative by consumers (Held & Germelmann, 2019). Thus, consumers may infer manipulation when viewing AIGC disclosed advertisements.

Disclosures in advertisements in general may lead to higher inferences of manipulative intent (Thomas et al., 2013). For AI disclosures, this may be heightened as AI can deliberately be used to create false images and realities to manipulate consumers (Arango et al., 2023; Nah et al., 2023). Thus, consumers may find using AI manipulative, and disclosing AI may trigger their inferences of manipulative intent.

The literature on inferences of manipulative intent makes it clear that high inferences of manipulative intent result in negative advertising attitude, brand attitude, and purchase intention (Campbell, 1995). Furthermore, high inferences of manipulative intent can decrease source credibility, because consumers who feel they are being manipulated trust the source less (Umeogu, 2012). Hence, the reduction in advertising attitude, brand attitude, and source credibility when viewing AIGC-disclosed advertisements may be caused by inferences of manipulative intent. Therefore:

AI aversion and AI-generated content

A factor that may influence consumers' negative attitude toward AI disclosures is AI aversion, which can be defined as negative bias or beliefs consumers hold regarding algorithms such as AI (Dietvorst et al., 2015). Although AI can be very effective, consumers still seem to distrust it and prefer humans (Dietvorst et al., 2015). AI aversion can be caused by various factors. For example, consumers may be unfamiliar with AI and how it works and have concerns regarding privacy that may negatively influence their view of AI (Mahmud et al., 2022).

Conversely, some consumers may have high trust in AI, but this has been shown to recede once they become aware of any faults made by AI (Dietvorst et al., 2015). AI has been found to be prone to faults such as spreading false information, raising questions about its efficiency and reliability (Huh et al., 2023). Consumers especially seem to lack trust in AI's subjective skills (Wu & Wen, 2021), as consumers find AI to lack creativity, authenticity, and emotion (e.g., Van Noort et al., 2020). Since these subjective skills are usually central in creating advertisements, it is likely AI aversion will further influence consumers' negative attitudes toward AIGC disclosed advertisements.

Advertising with an AI disclosure may trigger consumers' AI aversion and lead to negative outcomes (Sundar, 2020). Previous AI aversion research found that consumers with troubled feelings toward AI perceived advertisements made by AI as more unnerving (Wu & Wen, 2021). Disclosing AI usage has also been shown to trigger aversion and reduce consumers' acceptance of AI recommendations (Ochmann et al., 2020). Finally, AI aversion was shown to affect the relationship between social trust and perceived risks in using algorithmic recommendation systems (Wu et al., 2024). Therefore:

In addition, previous research found that consumers felt more manipulated when viewing AIGC (Arango et al., 2023). These feelings may be amplified when consumers have high AI aversion, as they are already skeptical of AI. This skepticism may make them more critical of the content and thereby strengthen the relationship between AI-disclosed advertisements and inferences of manipulative intent. Therefore:

Types of AI disclosures

Different types of AI disclosures may influence consumer attitudes toward advertisements with an AI disclosure. Since AI is capable of generating both images and text, AI disclosures could pertain to the use of AI in creating either the image or the text. However, there is a lack of studies on AIGC texts and images, and on how AI-generated images and AI-generated texts differ in their effects on consumer attitudes.

Nevertheless, consumers may be more critical of the images than of the text they view in social media advertisements. Images on social media hold important information for consumers about advertised products and services, as they convey more about abstract experiences such as changes in attitude (Liu et al., 2020). This allows consumers to form opinions about a brand and its products or services (Liu et al., 2020), which may also carry over to their attitudes toward the advertisement and the brand (Mitchell & Olson, 1981). Thus, consumers often use images to infer information and form attitudes.

These effects may be enhanced on social media, as there is a stronger emphasis on graphic-based content (Gretzel, 2017). This was also found in a study comparing consumer engagement with images and text on social media, where consumers engaged more with images (Moran et al., 2019). Another study on the effects of captions and images on body image found only a negative effect of images on body image and not captions (Tiggemann et al., 2020). Therefore, images may be more important on social media and have a stronger effect on consumers.

Research on AIGC images and texts is limited. However, because Instagram relies heavily on pictorial content (Zappavigna, 2016), it can be expected that generating false images with AI would lead to more negative consumer attitudes than generating the text with AI. Therefore:

Furthermore, images are often seen as depictions of reality; however, advertising images can also be manipulated to persuade. Since AI can manipulate reality by editing or generating images (Nah et al., 2023), consumers may find using AI in images to be more manipulative. Therefore:

Moreover, consumers with high AI aversion may have an even stronger negative attitude toward AI-generated images than toward AI-generated text, as they are already distrustful and critical of AI. The negative effects of AI images and AI aversion may therefore compound, with AI aversion strengthening the negative effect of AI image disclosures on consumer attitudes. Therefore:

Lastly, since AI images may be manipulated and consumers with high AI aversion may be more critical of AI, these consumers may find the use of AI-created images even more manipulative. The negative effects of AI images and AI aversion on inferences of manipulative intent may therefore compound, with AI aversion intensifying the feelings of manipulation that AI image disclosures evoke. Therefore:

Methods

To answer the research questions above, a between-subjects online experiment with three conditions (AI disclosure type: text, image, or control) was conducted to test the effect of these conditions on marketing outcomes (i.e., advertising attitude, brand attitude, and source credibility), with inferences of manipulative intent as a mediator and AI aversion as a moderator. The study was preregistered at aspredicted.org (https://aspredicted.org/GKG_XYM) and received ethical approval before data collection from the Tilburg University Ethics Review Board, Research Ethics and Data Management Committee (REDC2023.48a). The data, jamovi file, and supplemental material can be found in the following OSF folder: https://osf.io/8fjpz/?view_only=dcdf0d9382174bdc8459a66c5f6c755f.

Participants

Sample size was determined using G*Power 3.1. To compare three conditions (f = 0.25, α = .05, 1 − β = .80), 159 participants were needed. From the initial sample (Ninitial = 231), participants were removed after failing the attention check (n = 12), failing the manipulation check (n = 31), or indicating that they never use Instagram (n = 6). The final sample consisted of Nfinal = 161 participants (120 women, 1 other, Mage = 22.32). Most participants had a university education (n = 87), a higher vocational education (n = 44), or a high school education (n = 24). In total, 55 participants were randomly allocated to the control condition, 55 to the AI image disclosure condition, and 51 to the AI text disclosure condition.
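To make the sample-size calculation reproducible outside G*Power, the sketch below computes the power of the omnibus one-way ANOVA F test for three groups. It is a minimal re-implementation under stated assumptions, not G*Power itself: it assumes G*Power's fixed-effects convention (noncentrality λ = f²·N, with k − 1 and N − k degrees of freedom) and an effect size of Cohen's f = 0.25. Because it uses only the Python standard library, the regularized incomplete beta function is evaluated with a standard continued-fraction routine. All function names are hypothetical.

```python
import math

def _betacf(a, b, x, max_iter=300, eps=3e-14, tiny=1e-300):
    """Continued fraction for the regularized incomplete beta (modified Lentz)."""
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))            # even step
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        h *= d * c
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))   # odd step
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h

def betainc(a, b, x):
    """Regularized incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    log_front = (math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                 + a * math.log(x) + b * math.log1p(-x))
    front = math.exp(log_front)
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b

def f_cdf(f, d1, d2):
    """CDF of the central F distribution with (d1, d2) degrees of freedom."""
    if f <= 0:
        return 0.0
    return betainc(d1 / 2.0, d2 / 2.0, d1 * f / (d1 * f + d2))

def f_critical(alpha, d1, d2):
    """Upper-alpha critical value of F(d1, d2), found by bisection."""
    lo, hi = 0.0, 1000.0
    for _ in range(200):
        mid = (lo + hi) / 2.0
        if f_cdf(mid, d1, d2) < 1.0 - alpha:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

def ncf_cdf(f, d1, d2, lam):
    """Noncentral F CDF as a Poisson(lam / 2) mixture of incomplete beta terms."""
    if lam == 0:
        return f_cdf(f, d1, d2)
    x = d1 * f / (d1 * f + d2)
    half = lam / 2.0
    total, j = 0.0, 0
    while True:
        weight = math.exp(-half + j * math.log(half) - math.lgamma(j + 1))
        total += weight * betainc(d1 / 2.0 + j, d2 / 2.0, x)
        if j > half and weight < 1e-16:   # remaining Poisson mass is negligible
            break
        j += 1
    return total

def anova_power(f_effect, n_total, k, alpha=0.05):
    """Power of the omnibus one-way ANOVA F test, assuming lambda = f**2 * N."""
    d1, d2 = k - 1, n_total - k
    lam = f_effect ** 2 * n_total
    return 1.0 - ncf_cdf(f_critical(alpha, d1, d2), d1, d2, lam)

print(f"{anova_power(0.25, 159, 3):.3f}")
```

Under these assumptions, the power at N = 159 should come out at roughly .80. Evaluating `anova_power` over increasing equal group sizes is one way to locate the smallest adequate sample.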

The participants were sampled using convenience sampling. A subsample (n = 115) was recruited at the researchers' university through the Human Subjects Pool of the Tilburg University School of Humanities and Digital Sciences, where students participated voluntarily in exchange for academic credit. The other participants (n = 46) were recruited through the researcher's personal network and received separate access to the experiment so that the data from both samples could be checked for differences.

Participants were eligible if they were aged between 18 and 34 and used Instagram (Dixon, 2023). Only active Instagram users were included, to ensure familiarity with and comprehension of the content.

Materials

The participants completed the experiment in Qualtrics. A fictitious backpack brand, “Stash Gear,” was created with a fictitious Instagram profile and advertisement to control for prior brand or product affiliation and appreciation. The choice of a backpack was based on previous research in similar disclosure studies (Beckert et al., 2021) and on the fact that backpacks are useful for people aged 18–34, are gender-neutral apparel, and are already widely advertised on Instagram, which makes them relatable. All images representing the product, the profile, and the stimuli are available in the OSF folder. Photoshop was used to create the brand, profile, and advertisement, using royalty-free images sourced from the website Pexels. The caption text was modeled on the captions used by real backpack brands on Instagram. The profile and advertisements per condition can be found in Appendix A.

Measurements

Inferences of manipulative intent were measured using the scale developed by Campbell (1995). The scale has seven items (ω = .81), including “The way this ad tries to persuade people seems acceptable to me” and “This ad was fair in what was said and shown.” The first six items were measured on a 7-point Likert scale ranging from 1 (completely agree) to 7 (completely disagree), and the last item was measured on a 7-point semantic differential scale (fair/unfair).

AI aversion was measured using the AI Attitude Scale (AIAS-4) developed by Grassini (2023). The scale has four items (ω = .86), which were measured on a 10-point Likert scale ranging from 0 (not at all) to 10 (completely agree), with items such as “I believe AI will improve my work” and “I think AI is positive for humanity.”

Brand attitude was measured using a five-item (ω = .94), 7-point semantic differential scale developed by Spears and Singh (2004). The scale used the following semantics: unappealing/appealing, bad/good, unpleasant/pleasant, unfavorable/favorable, and unlikeable/likable.

Advertising attitude was measured using an eight-item (ω = .90) 7-point semantic differential scale developed by Machleit and Wilson (1988). The scale used the following semantics: unfavorable/favorable, good/bad, enjoyable/unenjoyable, not fond of/fond of, dislike very much/like very much, irritating/not irritating, well-made/poorly made, and insulting/not insulting.

Source credibility was measured by letting participants react to eight claims, as developed by Newell and Goldsmith (2001). For example, items included “I trust Stash Gear” and “Stash Gear is honest.” Reactions to the claims were measured using a 7-point Likert scale (ω = .90) ranging from 1 (completely agree) to 7 (completely disagree).

Manipulation, attention, and sincerity checks were also included. The manipulation check asked: “What was the first line of the caption under the post?” Participants could choose from four options: three corresponding to the three conditions and “I don't know.” Participants who chose any option other than the one matching their condition were removed from the sample.

The attention check was as follows: “What unique feature did the caption explain that the backpack in the advertisement has?”. Participants could choose from waterproof, fireproof, made from recycled materials, or lockable zippers. Participants who chose any option other than “waterproof” were removed from the sample.

Sincerity was checked by asking participants, “How sincere were you in your answers?”. The participants could choose from a 7-point Likert scale, ranging from 1 (completely honest) to 7 (completely dishonest). Participants who chose any option above three were removed from the sample.
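The exclusion rules above can be summarized as a single filter. The sketch below is a minimal illustration, assuming hypothetical field names and condition labels (`condition`, `manipulation_answer`, and so on); it is not the Qualtrics export format or the authors' cleaning script.

```python
from dataclasses import dataclass

@dataclass
class Response:
    condition: str             # hypothetical labels: "control", "image", "text"
    manipulation_answer: str   # option chosen for the first caption line
    attention_answer: str      # backpack feature chosen from the four options
    sincerity: int             # 1 = completely honest ... 7 = completely dishonest
    uses_instagram: bool       # False if the participant reported never using Instagram

def passes_checks(r: Response) -> bool:
    """Apply the exclusion rules from the Participants and Measurements sections."""
    return (
        r.manipulation_answer == r.condition     # must recognize their own condition
        and r.attention_answer == "waterproof"   # only correct attention-check option
        and r.sincerity <= 3                     # answers above 3 are excluded
        and r.uses_instagram
    )

sample = [
    Response("image", "image", "waterproof", 1, True),      # kept
    Response("text", "dont_know", "waterproof", 2, True),    # fails manipulation check
    Response("control", "control", "fireproof", 1, True),    # fails attention check
    Response("text", "text", "waterproof", 5, True),         # fails sincerity check
    Response("control", "control", "waterproof", 2, False),  # never uses Instagram
]
kept = [r for r in sample if passes_checks(r)]
print(len(kept))
```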

Procedure

The experiment was pretested (N = 35) to check whether the manipulation was salient and to prevent errors. The pretest indicated that the instructions were clear and revealed no issues.

Participants gave their consent after reading an informed consent form (see Supplemental material on OSF). Then, participants were shown the Instagram profile of “Stash Gear” for 30 s to ensure they spent enough time viewing the profile. Afterwards, each participant was randomly assigned to one of the three conditions. Depending on the condition, participants saw the Instagram advertisement with the AI image disclosure, with the AI text disclosure, or with no disclosure. Participants could only proceed after viewing the ad for 20 s, to ensure they had spent enough time viewing it.

After viewing the advertisement, participants completed the scales measuring inferences of manipulative intent, AI aversion, advertising attitude, brand attitude, and source credibility. Afterwards, they reported demographics such as age, Instagram activity, educational level, and gender. At the end of the experiment, the manipulation was revealed, and participants were given the opportunity to receive the outcome of the study or to withdraw their data.

Data analysis

The data collected via Qualtrics were exported to jamovi (version 2.4.8), further prepared for analysis, and checked for assumptions (The jamovi project, 2023). The moderated mediation model was tested using the GLM mediation analysis from the jamm module.

Influential cases were checked using Cook's distance, multicollinearity was checked using the collinearity test, normality was checked using the Shapiro–Wilk test, heteroskedasticity was checked using the Breusch–Pagan test, and linearity was checked using the Q–Q plot of residuals. Full outcomes of the assumption checks can be found in the OSF folder. No issues were found with normality or multicollinearity. However, issues were found with influential cases, heteroskedasticity, and linearity. Therefore, bias-corrected bootstrapping was applied.
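Bias-corrected bootstrapping can be illustrated on a simple mediation model. The sketch below is not the jamm implementation: it is a minimal, hypothetical example that resamples cases with replacement, re-estimates the indirect effect a·b by ordinary least squares, and shifts the percentile cut-offs by the bias-correction constant z0. It ignores the moderator and the contrast coding used in the actual models, and the data are simulated.

```python
import random
from statistics import NormalDist, fmean

def indirect_effect(x, m, y):
    """a*b for a simple mediation model X -> M -> Y, both paths estimated by OLS."""
    mx, mm, my = fmean(x), fmean(m), fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    smm = sum((mi - mm) ** 2 for mi in m)
    sxm = sum((xi - mx) * (mi - mm) for xi, mi in zip(x, m))
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    smy = sum((mi - mm) * (yi - my) for mi, yi in zip(m, y))
    a = sxm / sxx                                          # X -> M slope
    b = (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)   # M -> Y slope, controlling for X
    return a * b

def bc_bootstrap_ci(x, m, y, n_boot=1000, level=0.95, seed=1):
    """Bias-corrected (BC) bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimate = indirect_effect(x, m, y)
    boots = []
    while len(boots) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]         # resample cases with replacement
        try:
            boots.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
        except ZeroDivisionError:                          # degenerate resample: draw again
            continue
    boots.sort()
    normal = NormalDist()
    below = sum(bv < estimate for bv in boots) / n_boot
    below = min(max(below, 0.5 / n_boot), 1 - 0.5 / n_boot)  # keep inv_cdf finite
    z0 = normal.inv_cdf(below)                              # bias-correction constant
    z = normal.inv_cdf(0.5 + level / 2)
    lo_p, hi_p = normal.cdf(2 * z0 - z), normal.cdf(2 * z0 + z)
    lo = boots[round(lo_p * (n_boot - 1))]
    hi = boots[round(hi_p * (n_boot - 1))]
    return estimate, lo, hi

sim = random.Random(42)
x = [i % 2 for i in range(200)]                  # hypothetical 0/1 disclosure indicator
m = [0.8 * xi + sim.gauss(0, 1) for xi in x]     # simulated mediator
y = [-0.8 * mi + sim.gauss(0, 1) for mi in m]    # simulated outcome
est, lo, hi = bc_bootstrap_ci(x, m, y)
print(f"indirect = {est:.3f}, 95% BC CI [{lo:.3f}, {hi:.3f}]")
```

Resampling whole cases keeps each participant's X, M, and Y values together, and the bias correction shifts the percentile cut-offs when the bootstrap distribution is not centered on the point estimate; a plain percentile interval omits that shift.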

For descriptive purposes, differences between the samples gathered from the researcher's network and the human subject pool were checked. Independent-samples Welch's t-tests revealed significant differences in AI aversion and age. On average, the human subject pool sample (M = 7.13, SD = 1.48) had higher AI aversion than the personal network sample (M = 6.30, SD = 1.60), Mdif = 0.83, t(77.1) = 3.03, p = .003, 95% CI [0.29, 1.38], d = .54. The human subject pool sample (M = 21.6, SD = 2.82) was also younger on average than the personal network sample (M = 24.2, SD = 3.55), Mdif = −2.56, t(68.9) = −4.38, p < .001, 95% CI [−3.73, −1.39], d = −.80. No other differences were observed between the two samples.
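The Welch statistics can be checked from the reported summary statistics alone. The sketch below assumes the subsample sizes from the Participants section (n = 115 and n = 46) and, as an assumption, computes d by dividing the mean difference by the square root of the average of the two variances, which is consistent with the reported effect sizes. A p-value would additionally require the t distribution, which the Python standard library does not provide, so it is omitted.

```python
import math

def welch_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Welch's t, Welch-Satterthwaite df, and a Cohen's d from group summaries."""
    v1 = sd1 ** 2 / n1                      # squared standard error, group 1
    v2 = sd2 ** 2 / n2                      # squared standard error, group 2
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    # Assumption: d standardizes by the root of the average of the two variances.
    d = (m1 - m2) / math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    return t, df, d

# AI aversion: human subject pool (n = 115) vs. personal network (n = 46)
t, df, d = welch_from_summary(7.13, 1.48, 115, 6.30, 1.60, 46)
print(f"t({df:.1f}) = {t:.2f}, d = {d:.2f}")
```

With the rounded means and SDs reported above, this yields values close to the reported t(77.1) = 3.03 and d = .54; the small gaps reflect rounding of the inputs.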

Results

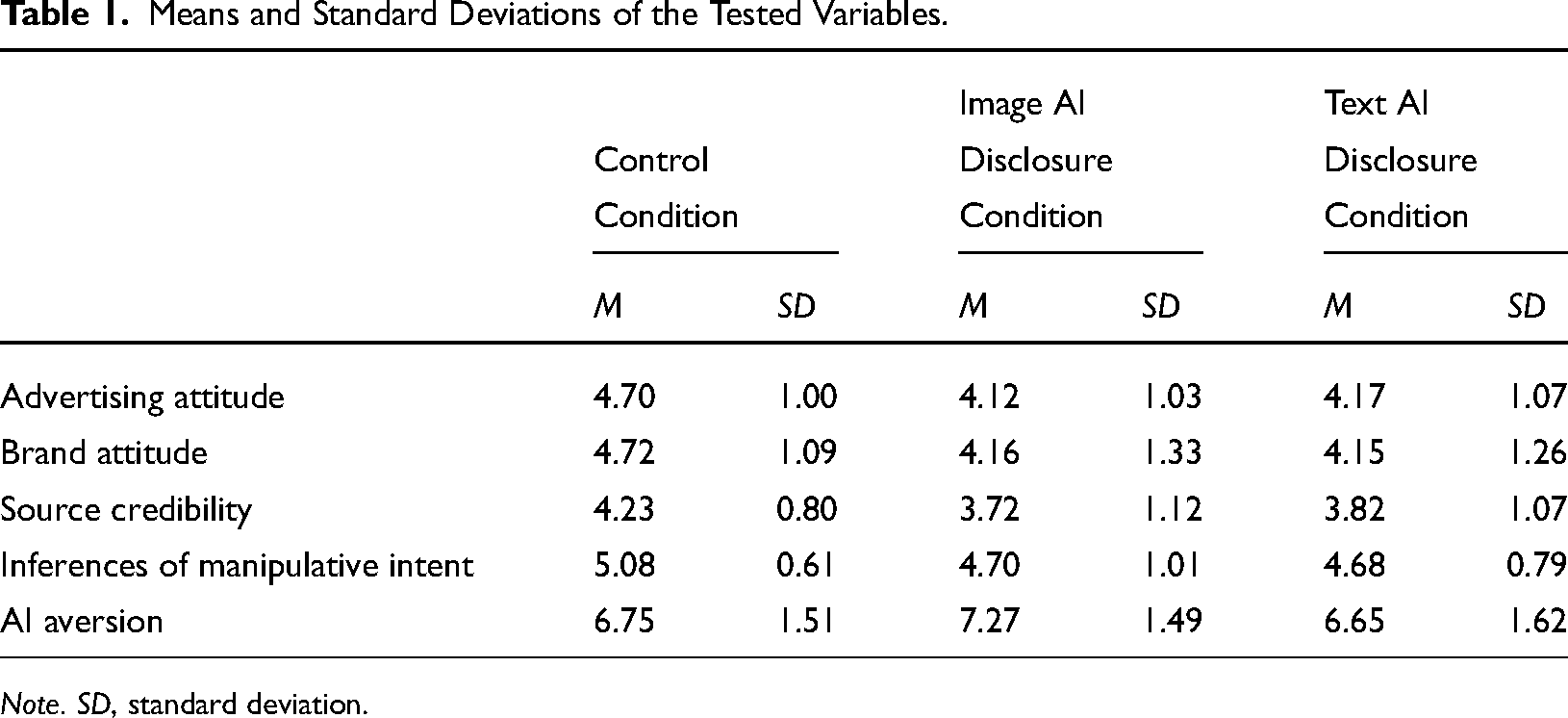

To test the hypotheses, a moderated mediation model was built for each outcome variable (i.e., advertising attitude, brand attitude, and source credibility) using Helmert contrasts: the first contrast compares the control condition with the mean of the two AI disclosure conditions, and the second compares the AI image disclosure with the AI text disclosure. In all models, AI disclosure was the predictor variable, with AI aversion as a moderator and inferences of manipulative intent as a mediator. Full results of the moderated mediation can be found in the jamovi file in the OSF folder. The means and standard deviations of all variables can be found in Table 1.
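One common way to implement Helmert contrasts is sketched below, assuming the levels are ordered control, AI image, AI text. This coding is an assumption, not a description of jamovi's internals. Each contrast column compares one level with the mean of the levels that follow it; because the model with an intercept and both contrast columns is saturated, solving it against the three condition means recovers the contrasts exactly, whatever the group sizes. The condition means used here are hypothetical.

```python
from fractions import Fraction

def helmert_codes(k):
    """Helmert codes: column j contrasts level j with the mean of the later levels."""
    rows = []
    for level in range(k):
        row = []
        for j in range(k - 1):
            if level < j:
                row.append(Fraction(0))                  # earlier levels drop out
            elif level == j:
                row.append(Fraction(k - 1 - j, k - j))   # the level being contrasted
            else:
                row.append(Fraction(-1, k - j))          # later levels share the weight
        rows.append(row)
    return rows

def solve(matrix, rhs):
    """Solve a square linear system exactly with Gauss-Jordan elimination."""
    n = len(matrix)
    aug = [list(row) + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[-1] for row in aug]

# Hypothetical condition means, ordered control, image, text.
means = [Fraction("5.0"), Fraction("4.5"), Fraction("4.25")]
codes = helmert_codes(3)                          # rows: control, image, text
design = [[Fraction(1)] + row for row in codes]   # intercept + 2 contrast columns
b0, b1, b2 = solve(design, means)
print(b0, b1, b2)   # mean of cell means, control - mean(AI), image - text
```

Under this coding, the first coefficient equals the control mean minus the average of the two disclosure means, so a positive value indicates that the control condition scored higher; the second coefficient equals the image mean minus the text mean.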

Table 1. Means and Standard Deviations of the Tested Variables.

Note. SD, standard deviation.

Effect of AI disclosure (present/absent) on consumers’ attitudes

Direct effect

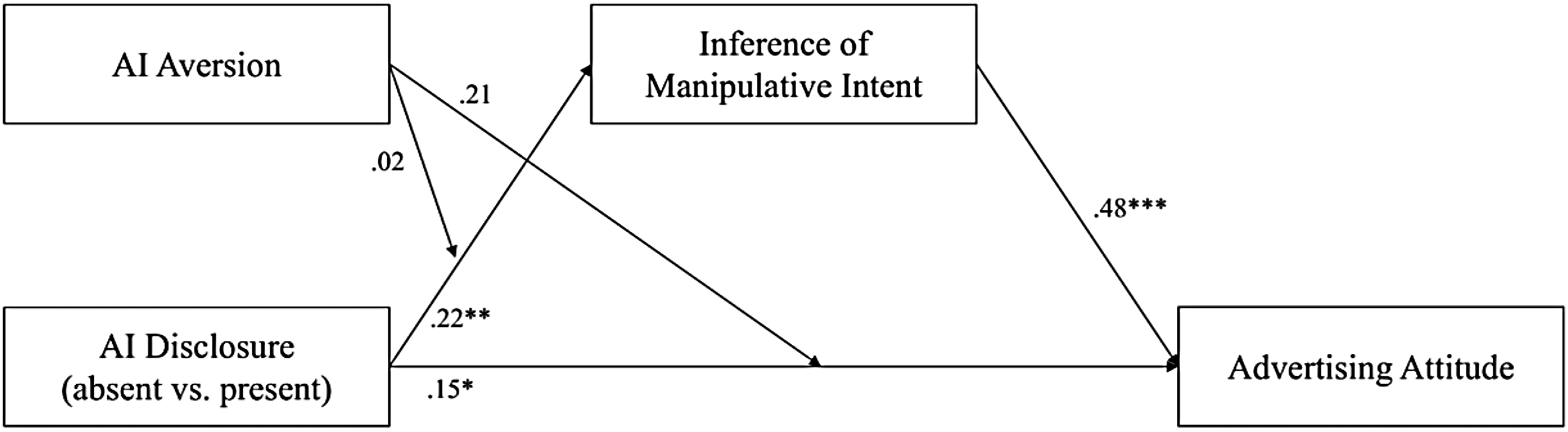

There was a significant direct effect of AI disclosure on advertising attitude (b = 0.33, SE = 0.16, 95% CI [0.01, 0.64], β = .15, p = .04), whereby the presence of an AI disclosure decreased advertising attitude. This supports H1a. However, there was no significant direct effect of the presence of an AI disclosure on brand attitude (b = 0.33, SE = 0.17, 95% CI [0.002, 0.67], β = .12, p = .06). Therefore, H1b is rejected. In addition, there was no significant direct effect of the presence of an AI disclosure on source credibility (b = 0.16, SE = 0.13, 95% CI [−0.10, 0.40], β = .07, p = .23). Therefore, H1c is rejected.

Mediating effect of inferences of manipulative intent

There was a significant mediation effect of inferences of manipulative intent on the relationship between AI disclosure and advertising attitude (b = 0.24, SE = 0.08, 95% CI [0.09, 0.40], β = .11, p = .004). This indirect effect remained significant at high levels of AI aversion (b = 0.26, SE = 0.12, 95% CI [0.05, 0.51], β = .12, p = .03) but not at low levels of AI aversion (b = 0.21, SE = 0.12, 95% CI [0.02, 0.52], β = .09, p = .07). Therefore, H2a is accepted; because the direct effect of AI disclosure on advertising attitude was also significant, the mediation is partial. The model accounts for 10.9% of the variance.

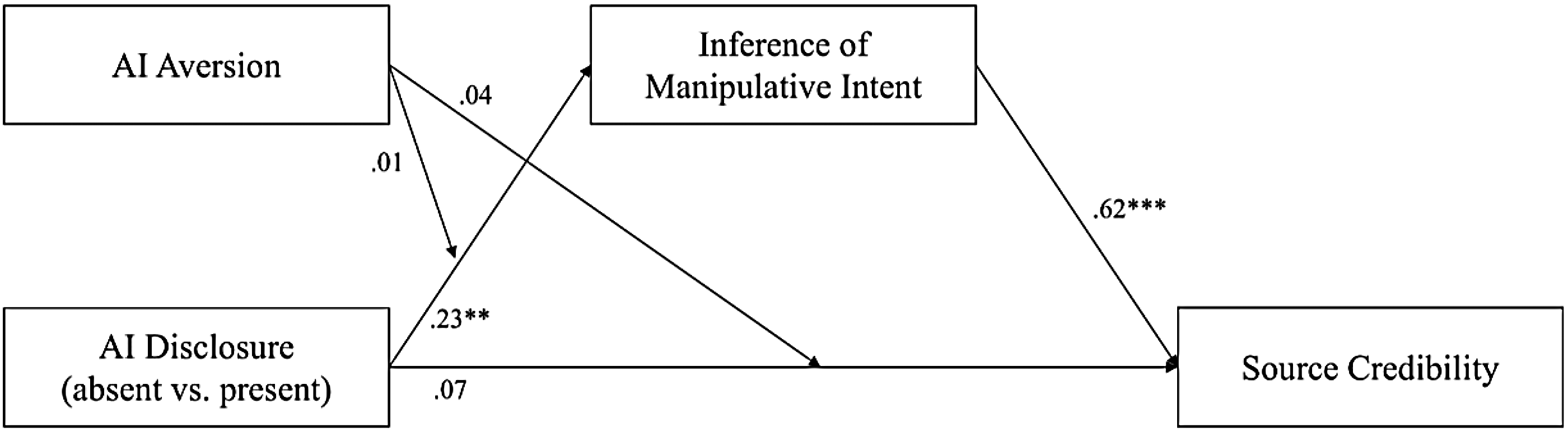

In addition, there was a significant mediation effect of inferences of manipulative intent on the relationship between AI disclosure and brand attitude (b = 0.29, SE = 0.09, 95% CI [0.12, 0.48], β = .11, p = .002). The mediation effect remained significant at both low (b = 0.28, SE = 0.13, 95% CI [0.05, 0.56], β = .11, p = .04) and high levels of AI aversion (b = 0.30, SE = 0.14, 95% CI [0.02, 0.60], β = .11, p = .04). Therefore, H2b is accepted as a full mediation, as there is no significant direct effect of AI disclosure on brand attitude. The model accounts for 9.6% of the variance.

Furthermore, there was a significant mediation effect of inferences of manipulative intent on the relationship between AI disclosure and source credibility (b = 0.30, SE = 0.09, 95% CI [0.14, 0.51], β = .14, p = .001). The effect remained significant at both low (b = 0.29, SE = 0.14, 95% CI [0.04, 0.58], β = .14, p = .04) and high levels of AI aversion (b = 0.31, SE = 0.15, 95% CI [0.04, 0.60], β = .15, p = .03). Therefore, H2c is accepted as a full mediation, as there is no significant direct effect of AI disclosure on source credibility. The model accounts for 5% of the variance.
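The conditional indirect effects at low and high AI aversion follow from the model coefficients. The sketch below assumes that AI aversion (W) moderates both the path from a disclosure contrast (X) to inferences of manipulative intent (M), M = a0 + a1X + a2W + a3XW, and the direct path, Y = c0 + c1X + c2W + c3XW + bM, and that "low" and "high" denote one standard deviation below and above the mean, a common convention. The coefficients are hypothetical, and only point estimates are computed; confidence intervals for these quantities would come from bootstrapping.

```python
def conditional_indirect(a1, a3, b, w):
    """Indirect effect of X on Y through M at moderator value w: (a1 + a3*w) * b."""
    return (a1 + a3 * w) * b

def conditional_direct(c1, c3, w):
    """Direct effect of X on Y at moderator value w: c1 + c3*w."""
    return c1 + c3 * w

def probe(a1, a3, b, c1, c3, w_mean, w_sd):
    """Conditional indirect and direct effects at -1 SD, the mean, and +1 SD of W."""
    points = {"low": w_mean - w_sd, "mean": w_mean, "high": w_mean + w_sd}
    return {label: (conditional_indirect(a1, a3, b, w), conditional_direct(c1, c3, w))
            for label, w in points.items()}

# Hypothetical coefficients, with W mean-centered (mean 0, SD 1.5).
a1, a3, b, c1, c3 = 0.4, 0.02, 0.6, 0.3, 0.2
effects = probe(a1, a3, b, c1, c3, w_mean=0.0, w_sd=1.5)
index_mm = a3 * b   # index of moderated mediation
for label, (indirect, direct) in effects.items():
    print(f"{label:>4}: indirect = {indirect:.3f}, direct = {direct:.3f}")
```

The product a3·b, often called the index of moderated mediation, summarizes how much the indirect effect changes per unit of W.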

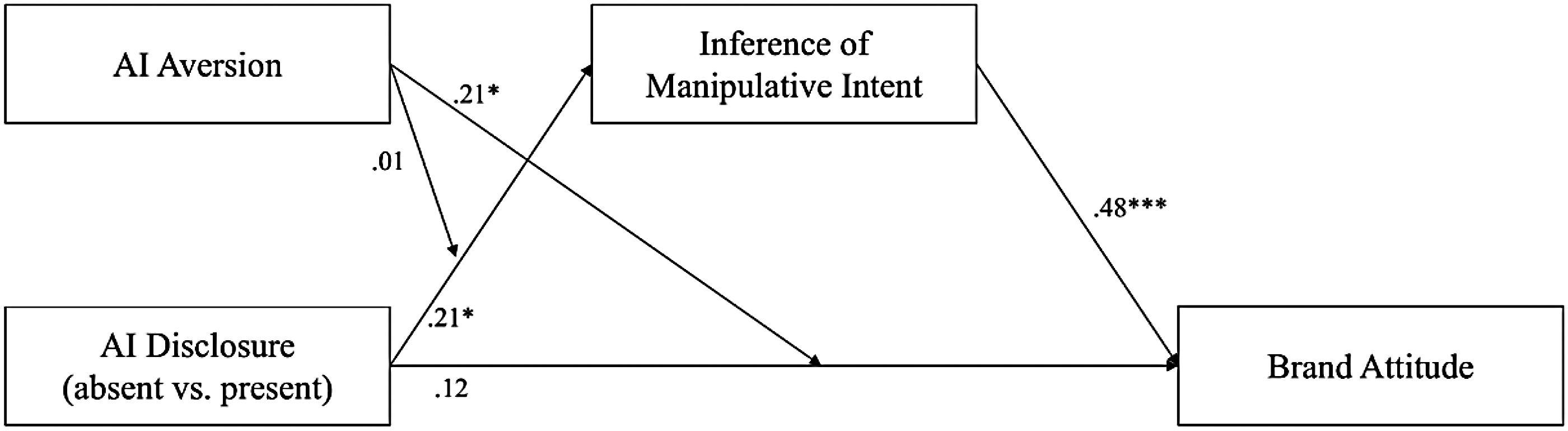

Moderating effect of AI aversion on the direct effects

There was no significant moderation effect of AI aversion on the direct effect of AI disclosure on advertising attitude (b = 0.30, SE = 0.16, 95% CI [−0.004, 0.59], β = .21, p = .06). Therefore, H3a was rejected. However, there was a significant moderation effect of AI aversion on the direct effect of AI disclosure on brand attitude (b = 0.36, SE = 0.15, 95% CI [0.06, 0.66], β = .21, p = .02). Therefore, H3b was supported. Finally, there was no significant moderation effect of AI aversion on the direct effect of AI disclosure on source credibility (b = −0.05, SE = 0.08, 95% CI [−0.20, 0.12], β = −.03, p = .54). Therefore, H3c was rejected.

Moderating effect of AI aversion on inferences of manipulative intent

AI aversion did not significantly moderate the relationship between AI disclosure and inferences of manipulative intent in the advertising attitude model (b = 0.03, SE = 0.09, 95% CI [−0.14, 0.19], β = .02, p = .77), the brand attitude model (b = 0.01, SE = 0.09, 95% CI [−0.16, 0.20], β = .01, p = .93), or the source credibility model (b = 0.01, SE = 0.09, 95% CI [−0.16, 0.21], β = .001, p = .93). Therefore, H4a, H4b, and H4c were rejected. The complete models with coefficients can be seen in Figures 1–3.

Moderated mediation of the effect of AI disclosure on brand attitude. Note. Scores represent standardized regression coefficients (β). *p < .05, **p < .01, and ***p < .001.

Moderated mediation of the effect of AI disclosure on advertising attitude. Note. Scores represent standardized regression coefficients (β). *p < .05, **p < .01, and ***p < .001.

Moderated mediation of the effect of AI disclosure on source credibility. Note. Scores represent standardized regression coefficients (β). *p < .05, **p < .01, and ***p < .001.

Differences between AIGC image disclosures and AIGC text disclosures

Direct effect

There was no significant difference between the AIGC image and text disclosures in advertising attitude (b = 0.004, SE = 0.18, 95% CI [−0.38, 0.33], β = .002, p = .98). Therefore, H5a was rejected. There was no significant difference between the AIGC image and text disclosures in brand attitude (b = 0.04, SE = 0.23, 95% CI [−0.37, 0.54], β = .01, p = .85). Therefore, H5b was rejected. There was no significant difference between the AIGC image and text disclosures in source credibility (b = −0.12, SE = 0.17, 95% CI [−0.46, 0.21], β = −.05, p = .49). Therefore, H5c was rejected.

Mediating effect of inferences of manipulative intent

There was no significant mediation effect of inferences of manipulative intent between AI disclosure type and advertising attitude (b = 0.003, SE = 0.11, 95% CI [−0.20, 0.21], β = .00, p = .98). Therefore, H6a was rejected. There was also no significant mediation effect of inferences of manipulative intent between AI disclosure type and brand attitude (b = 0.001, SE = 0.13, 95% CI [−0.25, 0.26], β = .00, p = .99). Therefore, H6b was rejected. Furthermore, there was no significant mediation effect of inferences of manipulative intent between AI disclosure type and source credibility (b = 0.002, SE = 0.14, 95% CI [−0.27, 0.27], β = .00, p = .10). Therefore, H6c was rejected.

Moderating effect of AI aversion

None of the moderating effects of AI aversion at the second level of the contrast (AIGC image vs. AIGC text disclosure) were significant, all ps > .55. Therefore, H7a, H7b, H7c, and H8 were all rejected.
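The phrase "second level of the contrast" implies that the three conditions were entered into the models as two contrast-coded variables. The paper does not report its coding scheme, so the sketch below assumes the conditions are a no-disclosure control, an AIGC image disclosure, and an AIGC text disclosure, coded with Helmert-style contrasts (the names `CODES`, `contrast_coefficients`, and `predicted_mean` are hypothetical). The first contrast compares either AI disclosure with the control, and the second compares the text disclosure with the image disclosure; under these codes each coefficient is a difference between unweighted condition means.

```python
from fractions import Fraction

# Hypothetical Helmert-style codes (D1, D2); the paper does not report its scheme.
CODES = {
    "control": (Fraction(-2, 3), Fraction(0)),      # no AI disclosure
    "ai_image": (Fraction(1, 3), Fraction(-1, 2)),  # AIGC image disclosure
    "ai_text": (Fraction(1, 3), Fraction(1, 2)),    # AIGC text disclosure
}


def contrast_coefficients(mean_control, mean_image, mean_text):
    """Intercept and contrast coefficients implied by CODES for given cell means."""
    b0 = (mean_control + mean_image + mean_text) / 3  # unweighted grand mean
    b1 = (mean_image + mean_text) / 2 - mean_control  # contrast 1: any AI disclosure vs. none
    b2 = mean_text - mean_image                       # contrast 2: text vs. image disclosure
    return b0, b1, b2


def predicted_mean(condition, coefs):
    """Cell mean reproduced by the regression b0 + b1*D1 + b2*D2."""
    d1, d2 = CODES[condition]
    b0, b1, b2 = coefs
    return b0 + b1 * d1 + b2 * d2


if __name__ == "__main__":
    # Made-up cell means for illustration only (not the study's results).
    means = {"control": Fraction(4), "ai_image": Fraction(3), "ai_text": Fraction(7, 2)}
    coefs = contrast_coefficients(means["control"], means["ai_image"], means["ai_text"])
    print([str(c) for c in coefs])  # ['7/2', '-3/4', '1/2']
    for cond in CODES:
        assert predicted_mean(cond, coefs) == means[cond]
```

Because both contrasts sum to zero and are mutually orthogonal, the image-versus-text comparison (H5 to H8) can be estimated in the same model as the overall disclosure effect without the two contrasts overlapping.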

Discussion

This study investigated consumer responses (i.e., advertising attitude, brand attitude, and source credibility) toward Instagram advertisements carrying different AI disclosures (i.e., AI image or AI text disclosures), and whether these effects were driven by inferences of manipulative intent or influenced by AI aversion. The results indicate that AI-disclosed advertisements on Instagram lead to a more negative advertising attitude but have no direct effect on brand attitude or source credibility. However, our study reveals that the perceived manipulative intent of the advertisement plays a crucial role behind these results. Consumers felt less manipulated when viewing an AI disclosure, which led to an increase in source credibility and brand attitude, but to a decrease in advertising attitude. In addition, AI aversion moderated only the effect on brand attitude: consumers with high AI aversion showed a lower brand attitude when viewing the AI disclosure. Finally, we found no differences in marketing outcomes between AI image and AI text disclosures. These results and their implications are discussed below.

The decrease in advertising attitude when viewing AI disclosures aligns with previous research indicating consumers hold more negative views of AI (Wu et al., 2021) and may find it unnerving (Wu & Wen, 2021). However, the findings do not align with previous research indicating that advertising attitude may carry over to brand attitude (Shimp, 1981), as there was no effect of AI disclosures on brand attitude. These outcomes align with previous research that found no effect of AI disclosures on brand attitude when AI was used to create content aligned with the brand's identity (Kirkby et al., 2023). Therefore, it is possible that consumers view the use of AI as an extension of the brand. This may also be why there was no effect of AI disclosure on source credibility. Although previous research found consumers tend to distrust AI (Dietvorst et al., 2015) and find AIGC less credible (Liu et al., 2023), previous research also indicates consumers may find AI more credible when they know humans are still involved (Aoki, 2021). Therefore, consumers may still view the source as credible if they believe the brand was actively involved in using AI to create the advertisement.

These results could also be explained by the fact that source credibility and brand attitude might take more time to develop than advertising attitude. Advertisements generate immediate impressions of a product, whereas assessments of the brand and its credibility often rely on more analytical processing of advertising information (Petty et al., 1983). The absence of effects of AI disclosures on these outcomes should thus be interpreted cautiously, as undesired effects might emerge over time as potential customers are increasingly exposed to AI in advertising.

Inferences of manipulative intent were found to be an underlying mechanism of consumer attitudes toward AI disclosures; however, participants reported lower levels of inferred manipulative intent when viewing the AI disclosure. This is interesting as it contradicts previous research in which AI disclosures increased consumers' inferences of manipulative intent (Arango et al., 2023). This difference might be explained by context, as Arango et al. (2023) conducted their study in a charity context rather than a for-profit one. Furthermore, our results suggest consumers may not find AI advertisements to be manipulative. Previous research indicates consumers may be more accepting of manipulated advertisements as they become more prevalent in our society (Campbell et al., 2022). Therefore, consumers may have grown accustomed to AI manipulation in advertisements and may not find AIGC to be overtly manipulative. In addition, consumers may have appreciated the honesty of disclosures, which might also have lowered their inferences of manipulative intent. This also aligns with previous research in which consumers' perceived deceptive intent decreased when viewing disclosures (Beckert et al., 2021). Thus, AI disclosures reduce consumers' inferences of manipulative intent, which may be due to consumers appreciating disclosures and not finding AIGC in advertising to be manipulative.

Interestingly, although participants inferred lower manipulative intent in the presence of AI disclosures, they still displayed a lower advertising attitude, which seems contradictory given previous findings that higher inferences of manipulative intent lead to a decrease in advertising attitude (Campbell, 1995). We hypothesize that this discrepancy reflects an acknowledgment of honesty by potential consumers: they recognize that the brand is honest about its use of AI but still do not consider AI acceptable for selling products online.

The moderating role of AI aversion was also explored in this study. The results indicate that AI aversion moderated only the relationship between AI disclosure and brand attitude, meaning consumers with high AI aversion also had a reduced brand attitude when viewing the AI disclosure. This is in line with previous research indicating AI in advertising may lead to negative outcomes by triggering AI aversion (Sundar, 2020). Consumers with high AI aversion may distrust AI use and be more critical of it and, by extension, of the brand.

However, AI aversion did not moderate the effect of AI disclosure on source credibility. This means consumers, even those with high AI aversion, do not find the source less credible when it uses AIGC. This is contrary to previous research in which AI aversion negatively impacted the relationship between consumers' trust and perceived danger in AI recommendations (Wu et al., 2024). As previously stated, this may be due to the transparency of disclosures (De Jans et al., 2018) or to consumers' beliefs about brand involvement in AI usage (Aoki, 2021).

Similarly, the results also indicate that AI aversion was not an influencing factor in the decrease in advertising attitude. This is contrary to previous research in which disclosing AI was correlated with AI aversion, which in turn negatively impacted the acceptance of AI-based job recommendations (Ochmann et al., 2020). Since the decrease in advertising attitude due to AI disclosures cannot be explained by inferences of manipulative intent or AI aversion, other factors that fell outside the scope of this study may be at play. We suggest that factors such as creativity, humanness, and advertising effort deserve attention in future research.

Previous research indicates consumers may find AI incapable of certain subjective skills, such as creativity (Wu & Wen, 2021). This may lead to consumers viewing AIGC as less creative. Since creativity is an important factor in advertising, this may lead to negative reactions (Dahlén et al., 2008). In addition, using AI in content creation may be seen as less authentic.

The use of AI may be seen as effortless or lazy. This is in line with previous research on AI-created artwork that found consumers preferred artwork disclosed as created by humans as they perceived it to have cost more effort (Bellaiche et al., 2023). Previous research found consumers make assumptions about the quality of a brand or product based on the effort consumers believe was put into the advertisement (Kirmani, 1990). Therefore, the use of AI may lead to negative reactions if consumers believe using AI in advertisements is lazy.

Moreover, consumers may find AI lacking a sense of humanness. Emotions are what make us human and differentiate us from AI (Borau et al., 2021). Since AI is incapable of having emotions, consumers may dislike it and perceive it as cold. Previous research has indicated consumers generally prefer humans over AI (Dietvorst et al., 2015). This may lead to negative reactions when consumers learn that an advertisement was made not by a human but by AI.

Surprisingly, the results indicated that AI aversion did not influence the relationship between AI disclosure and inferences of manipulative intent, suggesting that perceived manipulative intent is independent of prior attitudes toward the use of AI. This raises important questions regarding the perception of AIGC on social media and how this perception affects attitudes and behavior. We strongly encourage further research on this matter.

Finally, whether the disclosure stated that the text or the image in the ad was generated with the help of AI did not seem to matter. This is contrary to previous research that found more negative effects of AIGC images than AIGC texts (Bellaiche et al., 2023). However, Liu et al. (2023) also highlighted that AI disclosures prompted consumers to pay more attention to the content of AI-generated news. Therefore, it is possible that the AI disclosure prompted consumers to evaluate the advertisement in a similar fashion, rendering the subject of the disclosure irrelevant. Future studies using eye-tracking methods might provide more insights on this matter.

Managerial implications

The outcomes of this study may be relevant to policymakers and lawmakers considering mandatory disclosures of AIGC, to social media platforms developing AI disclosures, and to brands determining their policy on AIGC. Enforcing AI disclosures may have negative implications for brands. Disclosing AI may lead to negative advertising outcomes, as consumers dislike AI-generated advertisements and consumers with high AI aversion also find the brand less favorable. This could reduce the effectiveness of advertising.

For brands, the findings indicate a potential challenge in balancing transparency with consumer sentiment, as mandatory AI disclosures may lead to undesired advertising outcomes. Consumers, regardless of their level of AI aversion, may react negatively to AI-generated advertisements, and if they perceive such advertisements as manipulative, this may alter their attitude toward the ad, the brand, and its credibility. Although this study shows apparent positive effects of disclosing AIGC, these should not be taken for granted as a reliable causal link. We argue that marketing agencies need to carefully assess their communication strategies regarding AIGC, potentially adopting approaches that mitigate consumer concerns regarding AI usage while promoting transparency.

Furthermore, social media platforms in the process of developing AI disclosure policies should consider how such disclosures will be perceived by users and how they may affect both user engagement and brand partnerships. While the disclosure obligation is necessary to stimulate potential consumers' critical thinking, it might be highly detrimental to the platform, as it affects how much brands are willing to rely on the platform for marketing.

From a policymaking standpoint, the study highlights the challenges of enforcing mandatory AI disclosures. While transparency is important for helping consumers understand the use of AI, the negative effects on brands cannot be ignored. If consumers react poorly to AI-generated content, required disclosures may reduce consumer trust, lower brand favorability, and weaken the impact of advertising. Policymakers must find a balance between transparency and protecting brands. This could involve flexible rules, gradual disclosure requirements, or educating consumers about AI to reduce their aversion. By doing so, regulations can promote responsible AI use without harming brands or disrupting markets.

Theoretical implications

This is one of the first studies to investigate the effects of AI disclosures in a for-profit context on consumer attitudes measured by brand attitude, advertising attitude, and source credibility. The outcomes of this study expand the literature on AI in advertising, the use of AIGC, and AI disclosures. In addition, it expands the literature on persuasion knowledge, inferences of manipulative intent, and AI aversion in the context of AIGC.

The outcomes further establish the notion that disclosures are context-specific, and in an AIGC context, disclosures seem to present a paradox: disclosing the use of AI lowers inferred manipulative intent, which in turn leads people to consider the source of the advertisement more credible and the brand more agreeable, yet their attitude toward the advertisement itself still declines. We argue that these results need further investigation, as a hidden variable might explain this paradox. We encourage future research to assess whether the disclosure of AIGC affects potential customers' perceptions of the humanness of the advertisement campaign, the effort put into the ad, its perceived quality, or its creativity, as these all have a potential impact on marketing outcomes.

In addition, our study is one of the first to show that AI image and text disclosures in advertisements affect marketing outcomes similarly. In a society that may grow more accustomed to manipulation in advertisements and where AI involvement in advertising may become more frequent, this study indicates that the current understanding of persuasion knowledge may not adequately capture consumers' reactions to AIGC advertisements and disclosures. Finally, this study shows how AI aversion can influence brand attitude when AIGC is disclosed in advertisements; however, it does not influence advertising attitude or source credibility.

Limitations

Despite careful preparation, several limitations should be noted when interpreting the study's results. Only one product and advertisement were used, which may be a limitation, as other products may yield different results. Future research should focus on a wider variety of products to establish whether results differ across products. Previous research on AI influencer brand endorsements found consumers may find technological products more suitable for AI influencers (Arsenyan & Mirowska, 2021). Therefore, it could be interesting to investigate different products in AIGC advertisements.

Another limitation is that only advertisements on Instagram were investigated. In addition, this study only investigated the difference between AI image and text disclosures. Future research could investigate AI disclosures on different social media platforms and in different media, such as videos or even offline media. TikTok is currently one of the fastest-growing social media platforms and is also working on integrating AI disclosures (Hutchinson, 2023). Since TikTok is primarily video-based, it could be interesting to investigate AI disclosures in advertisements on TikTok.

Moreover, this study tested one design of AI disclosures. However, previous research has indicated different arrangements of AI disclosures could affect how disclosures are processed (Sun & Evans, 2022). For example, disclosure images such as labels or certificates may lead to different types of information processing than textual disclosures (Sun & Evans, 2022). Therefore, future research could focus on testing different designs of disclosures.

Finally, this study made use of a fictitious brand for the advertisement. Therefore, different results may occur for existing brands. Previous research has indicated consumers react negatively when brands act contrary to expectations or moral norms (Reimann et al., 2018). Consumers may not expect AI advertisements from existing brands and may therefore respond more negatively to them. Future research could focus on replicating the study with existing brands.

Conclusion

The outcomes of this study reveal a paradox for disclosures in the context of AI. Although consumers seem to appreciate the transparency of AI disclosures, they still dislike advertisements using AI. Factors outside the scope of this study may explain this decrease, as consumers do not seem to perceive these advertisements as manipulative. Possible factors to further investigate include authenticity, creativity, humanness, and advertising effort. Furthermore, consumers with high AI aversion also dislike brands that use AIGC advertisements. However, consumers, even those with high AI aversion, still find brands using AIGC in advertisements credible. Finally, there were no differences between AIGC image and AIGC text disclosures, suggesting that consumers are affected more by the use of AI in general than by its specifics.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical statement

This study received ethical approval before data collection from the Tilburg University Ethics Review Board, Research Ethics and Data Management Committee (REDC2023.48a).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.