Abstract

Building on first-order and second-order cultivation frameworks, we examine how exposure to news and entertainment media portrayals of artificial intelligence (AI) is associated with the public’s quantitative estimates of AI development and future outlooks on artificial general intelligence (AGI). Survey data from 713 respondents in the United States were analyzed using partial least squares structural equation modeling (PLS-SEM). Exposure to AI news was associated with higher estimates of AGI development and more hopeful emotional responses, whereas exposure to negatively framed AI news was associated with fear and pessimism. Exposure to science fiction content was not associated with quantitative estimates but was positively associated with emotional optimism. These results suggest that both news and entertainment science fiction media narratives contribute to the ambivalent yet meaningful construction of audience imaginaries of AGI.

Keywords

A tabloid newspaper recently ran a sensational headline: “Terrifying study reveals AI robots have passed Turing test,” accompanied by the statement: “The dystopian lessons in every sci-fi movie from ‘Terminator’ to ‘Ex Machina’ appear to be coming true” (Cost, 2025). Such portrayals illustrate how media coverage often frames artificial general intelligence (AGI) as an imminent existential threat. AGI refers to a hypothetical class of AI systems capable of learning, reasoning, and flexibly applying knowledge across a wide range of tasks and domains at a level comparable to, or exceeding, human intelligence, rather than being limited to narrow or task-specific functions (McLean et al., 2023; Raman et al., 2025). Unlike existing forms of narrow AI embedded in everyday technologies, AGI remains a not-yet-realized concept whose feasibility and developmental timeline are widely debated. In contrast to today’s task-specific AI systems, AGI is typically characterized by general problem-solving capacity, the ability to transfer knowledge across contexts, and autonomous goal pursuit.

Despite its speculative status, AGI is increasingly discussed by leading experts and industry leaders and has become a frequent reference in public discourse and media narratives. However, it remains unclear how the public perceives AGI and whether these perceptions accurately reflect the technical realities and implications associated with this advanced level of AI. Research consistently shows that public understanding of AI often diverges from technical realities, with perceptions heavily influenced by sensational news coverage and entertainment-driven narratives (Ittefaq et al., 2025; Nader et al., 2024). Public perceptions of AGI matter because exaggerated optimism or fear can affect policy debates and regulatory responses in ways that do not align with AI’s actual capabilities and risks (Nader et al., 2024). News media tend to portray AI as a powerful yet ambivalent force, whereas entertainment media – particularly science fiction – frequently depict AI through emotionally charged narratives ranging from benevolent helpers to existential threats. Communication research has long examined the role of media in cultivating public understandings of science and technology through repeated exposure and symbolic representations (Bauer, 2005; Choi, 2024; Choi et al., 2025; Kirkpatrick et al., 2023; Nisbet and Goidel, 2007; Yang et al., 2025). However, relatively little empirical work has examined how media exposure relates to public perceptions of AGI specifically.

This study seeks to fill this gap by examining how public understandings of this speculative stage of AI advancement are subject to cultivation effects. Specifically, it investigates the associations between repeated exposure to media narratives and (a) beliefs about how close current AI development is to achieving AGI, as well as (b) evaluations of AGI’s future impact framed in dystopian versus techno-optimistic terms. These two dimensions correspond to first-order and second-order cultivation processes, respectively (Shrum et al., 2011).

Literature review

Cultivation theory: overview and evolution

Cultivation theory posits that long-term, cumulative exposure to television content constructs individuals’ perceptions of social reality, especially when the portrayed world consistently features specific themes, values, or social roles (Gerbner, 1998; Gerbner et al., 1980, 1986). At the heart of cultivation theory is the idea that television functions as a centralized system of storytelling that presents a steady, repetitive, and compelling system of dominant images and messages about social reality and worldviews. These recurring portrayals gradually cultivate distorted perceptions of the world, particularly among heavy viewers, who are more likely to believe that “people cannot be trusted” (Gerbner, 1998: 185) and that the world is a “dangerous place” (Gerbner et al., 1980: 23). This process underlies what Gerbner and his colleagues (Gerbner et al., 1980) described as the mean world syndrome – a tendency for frequent television viewers to perceive the world as more dangerous, mean, threatening, and untrustworthy than it actually is. This perception arises not from specific incidents, but from the overrepresentation of violence, crime, and victimization in television programming (Grabe and Drew, 2007).

Traditional cultivation theory assumed television to be a dominant, uniform, and pervasive medium, with viewers engaging in habitual, non-selective consumption. Accordingly, total television viewing was treated as a sufficient predictor of worldview formation (Gerbner et al., 1986; Shrum, 1995). However, as media environments have become increasingly fragmented and personalized, the predictive utility of this model has declined (Hermann et al., 2021). In response to these changes, cultivation theory has undergone three major developmental phases (Potter, 2022). The first phase emphasized shared worldview formation through cumulative exposure to television content. The second phase shifted toward individual-level effects, recognizing variability in media effects based on personal viewing patterns. The third and current phase centers on genre- and content-specific exposure, acknowledging that cultivation outcomes depend on selective engagement with narrative genres such as crime dramas and reality television (Hawkins and Pingree, 1981; Potter, 1991a). This theoretical progression reflects cultivation theory’s adaptation to contemporary, individualized media consumption habits.

Cultivation theory has continued to evolve to account for genre-specific and content-specific exposure, rather than relying solely on total television viewing. Scholars increasingly agree that this approach provides a more accurate understanding of media’s gravitational influence on viewers’ perceptions of reality (Hermann et al., 2021). In line with this development, recent research has broadened the scope of cultivation studies to include diverse content genres and various media platforms. Within the realm of science and technology, the cultivation literature has examined how media cultivate public perceptions and attitudes on science and technology-related topics, such as the moral acceptability of different types of biotechnology (Bauer, 2005), beliefs about gender stereotypes in video gaming (Ellithorpe et al., 2025), and both perceived risks of agricultural biotechnology and attitudes toward science-related decision-making (Brossard and Shanahan, 2003). For instance, Bauer (2005) examined how symbolic distinctions in elite press coverage of biotechnology contributed to public perceptions of red and green biotechnology across Europe. Brossard and Shanahan (2003) examined how media use and attention to television and newspaper coverage of biotechnology controversy were associated with authoritarian views of science governance and support for public participation.

Media cultivation and first-order and second-order cultivation

Cultivation researchers (Hawkins and Pingree, 1981; Potter, 1991a) suggest that the psychological processes underlying cultivation operate through three core mechanisms: learning, construction, and generalization. Audiences initially acquire incidental information from repeated exposure to media content. This information is then used to construct beliefs about the real world, and those beliefs may be reinforced by direct experiences. Building on this premise, cultivation scholars distinguish between first-order and second-order effects (Busselle and Van den Bulck, 2020; Potter, 1991b; Shrum, 2001; Shrum et al., 2011). First-order effects involve the formation of factual beliefs, such as how frequently certain events occur, based on repeated exposure to media content. Shrum (1996), drawing from Tversky and Kahneman’s (1973) availability heuristic, proposed that cultivation effects occur through construct accessibility – the ease with which information that is frequently portrayed in media can be retrieved from memory. His experimental results demonstrated that heavy viewers not only had higher frequency estimates of real-world phenomena (e.g. crime, marital discord, and particular occupations) but also responded more quickly. Thus, first-order cultivation reflects differences in “real-world beliefs across levels of viewing,” typically measured by the estimated prevalence of attributes frequently depicted in the media content (Shrum, 1996: 483).

Second-order effects, by contrast, refer to broader evaluative beliefs, as well as attitudes, moral judgments, or emotional responses (Busselle and Van den Bulck, 2020; Ellithorpe et al., 2016; Potter, 1991b; Shrum et al., 2011). Second-order cultivation can occur independently of first-order cultivation, although the two types of cultivation judgments may occur sequentially (Potter, 1991b; Shrum et al., 2011). According to Shrum et al. (2011), generalized beliefs such as materialism can be formed through online processing, in which evaluative judgments are constructed during media exposure. Yet, these generalized beliefs may also stem from repeated first-order estimations. When individuals frequently estimate a high occurrence of certain events, they may construct overarching beliefs about the nature of the world. For example, when individuals are repeatedly exposed to negatively valenced media messages (e.g. terrorism), they may form more accessible mental constructs related to threat or insecurity. Over time, these constructs can generalize into second-order beliefs – such as a perception that the world is a dangerous place (Coenen, 2020). Thus, frequent exposure to content emphasizing violent or threatening events increases the accessibility of related exemplars in memory, which in turn affects both frequency estimates and evaluative worldviews.

Exposure to AI news and first-order and second-order cultivation

In the context of AGI examined here, first-order cultivation effects refer to belief-based judgments about the current state of technological development, particularly in areas where people lack direct personal experience. For example, people may form beliefs about the extent to which today’s AI systems can develop emotions such as sympathy, joy, or sadness, or possess self-awareness, capabilities often attributed to human-level intelligence (Cardon, 2006; Raman et al., 2025). These beliefs about AGI, a technology not yet realized, may reflect perceptions of currently observable AI technology, even though the realization of AGI remains speculative and has been debated for more than a decade. Baum et al. (2011) found that while expert forecasts varied, many anticipated human-level AGI within a few decades. However, Müller and Bostrom (2016) reported wide disagreement among AI researchers, with some expecting AGI by 2040 and others predicting that it could take centuries or might never materialize. In public discourse, such beliefs are often informed less by technical understanding than by cumulative exposure to media narratives that frame AGI as either imminent or distant.

From a cultivation perspective, repeated exposure to media coverage of AI technological breakthroughs and related narratives, such as AI systems passing several standardized tests, creating art, or replacing professionals, may lead audiences to overestimate AGI’s current development stage. This aligns with the concept of first-order judgments, as individuals begin to perceive AGI as already capable of human-like reasoning or emotion, even in the absence of empirical confirmation. Priest (1995) showed that variations in access to scientific information were associated with differing public perceptions of biotechnology debates. Priest’s findings suggest that sustained media exposure cultivates not only awareness but also specific factual beliefs about the status and progress of science. Extending this logic to AGI, the first-order cultivation effect may help account for how the volume of AI news corresponds with audience estimations of AGI capability in the absence of direct experience. Based on this reasoning, we propose the following hypothesis:

H1a: Greater exposure to AI-related news is positively associated with higher perceived development of AGI capabilities.

Second-order cultivation effects involve emotional reactions such as fear, hope, or optimism about AGI’s long-term impact, as well as beliefs about whether AGI will improve or disrupt human life. Such outcomes are less about estimating technical progress and more about interpreting what AGI means for society as a whole. Several studies have examined how AI-related media exposure relates to public attitudes toward AI. For example, Choi et al. (2025) demonstrated that greater exposure to AI information was positively associated with public support for AI. They also found that negative emotions (anger and fear) toward AI were negatively associated with support for AI while positive emotions (hope) led to greater public support for AI. Yang et al. (2025) found that attention to science news was positively associated with trust in AI scientists and AI companies. Li and Zheng (2024) also demonstrated that the amount of social media use predicted positive attitudes toward AI technology indirectly through reduced perceived AI threat.

Although public discourse often emphasizes AGI’s risks and limitations, media narratives have also presented aspirational imaginaries that frame AGI as a transformative societal force. Kampmann (2024) examined how a tech firm constructed a “doctor in your pocket” metaphor to enhance its AI product’s appeal, despite unresolved flaws. Cheng et al. (2025) found that metaphors like “friend,” “teacher,” and “assistant” encouraged public trust and predicted AI adoption. These metaphor-driven framings inform intuitive models of AI’s role and trustworthiness, projecting visions of advanced AI systems, including AGI, as benevolent helpers. Related representations, such as the “universal translator” or “librarian of infinite knowledge,” also signal future-oriented hope.

Several news framing studies complement cultivation theory by demonstrating that framing in the broader media context is associated with how individuals evaluate the future risks, opportunities, and societal implications of AI. For example, Choi (2024) found that the way temporal cues are embedded in balanced news coverage about AI, specifically whether consequences are framed as near or distant in the future, can influence perceived risk severity and support for AI development. Choi’s (2024) findings suggest that news framing affects not only how the public perceives the current state of AI but also how they mentally simulate its potential future impacts. Brewer et al. (2022) also found that individuals who reported greater exposure to news about AI were more likely to express supportive attitudes toward AI technologies, and that science fiction viewing was associated with both positive and negative evaluative frames in open-ended responses. These findings suggest that media exposure, whether informational or fictional, may correspond with emotional responses and attitudinal orientations toward AI, depending on the nature and framing of the content. Brossard and Shanahan (2003) examined whether attention to television and newspaper coverage of agricultural biotechnology was associated with authoritarian attitudes toward science policy among New York State residents. Although they found no direct relationship between media exposure and authoritarianism, they detected indirect effects through concern, scientific knowledge, and fear of science. The authors attributed the non-significant direct media effect to the limited amount of biotechnology content available on television, which may have failed to reach the threshold for producing direct cultivation effects.

Building on previous empirical findings, we expect exposure to AI-related news to be associated with stronger positive emotional responses and, via this enhanced positive affect, with more optimistic expectations about AGI’s potential societal outcomes:

H1b: Greater exposure to AI-related news is associated with stronger emotional responses toward AGI.

H1c: Greater exposure to AI-related news is associated with stronger beliefs about AGI’s future societal impact, both positive and negative.

Selective exposure to negative AI news and cultivation

Traditional cultivation theory assumed the ubiquity and uniformity of television’s message system. However, media portrayals of AI today are not uniform in tone. Some highlight benefits, while others focus on risks (Ittefaq et al., 2025). Ittefaq et al. (2025), using LDA topic modeling on news articles from 12 countries between 2010 and 2023, found that in 2023, 21% of AI-related headlines were negatively framed, 13% were positive, and the remaining 66% were neutral. In addition, audience news and media consumption have become highly personalized through feed algorithms on social media platforms. A Twitter-based content analysis by Kouloukoui et al. (2025) revealed that users express both optimism about AI’s potential and concern about its ethical implications. In such a fragmented media environment, individuals’ preexisting views and experiences are likely to condition how cultivation effects emerge. For example, while general exposure to news may foster the so-called mainstreaming effect – a convergence of groups with otherwise divergent views toward more similar beliefs through shared media exposure (Morgan et al., 2025) – exposure to negatively framed AI news, particularly through algorithmic recommendations, may instead pull audiences away from these shared beliefs and toward more polarized and dystopian perceptions of AI. Nisbet and Goidel (2007) explain that media influence involves more than simple exposure. In their study of public perceptions of stem cell research, they found that exposure to science-related information, whether through news or entertainment media, interacted with individuals’ prior orientations such as ideological or moral beliefs. As a result, people may form different attitudes even when they encounter the same content.

In the context of AI, news stories that focus on negative aspects of AI, including job displacement, algorithmic bias, surveillance, or existential threats, may receive more attention from audiences who hold skeptical views about AI (Lim et al., 2023). This assumption is based on the idea that audiences do not always encounter media content randomly. Instead, they may self-select into content streams that align with their existing concerns, or they may receive news tailored to their interests and ideological perspectives through algorithmic curation (Cardenal et al., 2019; Thorson and Wells, 2016). Audiences with skeptical or dystopian views may attend more to and more readily recall threat-focused content, whereas those supportive of AI advancement may engage more with narratives portraying AI as transformative or beneficial. For this reason, selective exposure to one’s preferred news frames about AI may reinforce existing beliefs. For example, individuals who already hold skeptical or dystopian views are more likely to be influenced by negative news narratives, which may heighten anxieties and reinforce negative beliefs about AGI’s societal implications.

Some cultivation research further shows that the valence of media messages influences emotional and cognitive responses. Andersen et al. (2024) demonstrate that media exposure cultivates a generalized sense of societal threats such as violent crime and climate change. They interpret these results as reflecting the negativity bias of news media systems, which disproportionately emphasize negative events such as crime and conflict and thereby tend to foster negative emotions such as anxiety about the real world. They also explain that “an apprehensive and pessimistic outlook on society” develops through repeated exposure to such media messages (Andersen et al., 2024). Their argument applies directly to AI, as people may develop exaggerated fears regarding AI’s societal consequences when exposed to consistently negative portrayals of AI. Monteverde et al. (2025) note that a major newspaper has reported on the dark side of AI-enabled devices, asking whether these AI assistants are “fun time-savers or the beginning of an Orwellian nightmare” (p. 2). Andersen et al. (2024) also propose expanding cultivation beyond the mean world syndrome to what they call “scary world syndrome,” in which media produce generalized societal fears. These frameworks help explain why repeated and sensationalized portrayals of AI in media may foster public anxiety about AI as an inevitable and threatening societal force. Consistent with these theories, we expect that repeated exposure to negatively framed AI content, particularly when filtered through existing schemas or predispositions, will heighten negative emotional responses and reinforce pessimistic beliefs about AGI. Therefore, we propose the following hypotheses:

H2a: Selective exposure to negatively valenced AI news coverage is positively associated with stronger negative emotional responses toward AGI.

H2b: Selective exposure to negatively valenced AI news is positively associated with stronger beliefs about negative societal consequences of AGI.

Perceived AGI capability and emotional responses

Research on cultivation effects suggests that there may be a relationship between first-order and second-order effects (Potter, 1991b). Potter distinguished between first-order measures – people’s quantitative estimates of occurrences (e.g. crime rates, job loss) – and second-order measures – people’s generalized beliefs or attitudes about social reality. His analysis suggested that these two effects are only weakly correlated at a general level but may be related under certain conditions or within specific subgroups. Potter (1991b) found asymmetric patterns in which first-order perceptions often serve as a cognitive basis for second-order beliefs.

Building on this reasoning, people’s emotional responses toward AGI may depend on perceptions of AGI’s capability or humanlikeness. However, it is unclear whether perceived AGI capability (AGICAPA) evokes positive (e.g. hope, excitement) or negative (e.g. fear, anxiety) emotions. Given this lack of clear directional expectation and the distal nature of AGI perception, no directional hypotheses were proposed. Instead, the following research question was posed:

RQ1: How is perceived AGI capability (AGICAPA) associated with individuals’ emotional responses – both positive and negative – toward AGI?

Cultivation from science fiction content

Cultivation effects extend beyond news media into entertainment content, as fictional narratives contribute to audiences’ imaginaries of future realities driven by advanced technologies. Cultivation scholars argue that fictional content influences beliefs via psychological processes distinct from those of news media (Appel, 2008; Appel and Richter, 2010). Bilandzic and Busselle (2008) found that repeated engagement with science fiction narratives can result in genre-consistent beliefs, particularly when audiences are transported into the narrative world and accept the story as realistic within its context. While news prompts viewers to build their worldview from factual cues, fictional storytelling reinforces belief systems through emotional and narrative engagement. Appel (2008) showed that regular exposure to fictional television was associated with “just world” beliefs – the perception that the world is fair and just – contrasting the “mean world” view often linked to crime-focused or violent media. These just-world beliefs were not fostered by non-fictional media.

Fictional portrayals of AI and humanoids in books, movies, and streaming media play a significant role in how the public understands and imagines AGI. Recent titles such as Black Mirror, Blade Runner 2049, Ex Machina, Her, The Creator, and Westworld offer compelling portrayals of AGI and its societal implications. For many individuals, science fiction serves as a primary frame of reference for imagining what AI could become. In the case of AGI, an emergent technology not yet fully realized, these fictional depictions may have a substantial impact on public expectations, concerns, and beliefs about the future. Monteverde et al. (2025) observe that portrayals of AI in film increasingly mirror real-world experiences, as people struggle to distinguish human voices from AI assistants. Kirby (2003) explains that science fiction films “naturalize the images and depictions embedded within their narratives” (p. 273), making even speculative or inaccurate science appear realistic to audiences. This blurring of boundaries reflects a media-driven projection of AI’s future, whereby individuals apply fictional portrayals to their real-world expectations, despite having limited direct experience with AGI:

H3a: Greater consumption of science fiction content featuring AI or humanoid technologies is associated with higher perceived development of AGI capabilities (first-order cultivation).

Science fiction, in particular, constructs shared imaginaries of social and human futures by presenting immersive narratives about intelligent machines, humanoids, and speculative forms of human–machine coexistence. These portrayals often oscillate between utopian visions emphasizing progress and human flourishing and dystopian scenarios foregrounding loss of control, existential threat, or moral ambiguity. However, dystopian depictions of AI rarely portray futures of total annihilation. Instead, they frequently retain elements of human agency, moral possibility, or affective continuity, embedding cautionary visions within narratives that preserve a residual sense of hope.

Short’s (2005) analysis of cyborg cinema provides a useful theoretical lens for understanding these mixed realities. Although the films examined in that analysis do not depict AGI in a strict technical sense, some figures, such as replicants or autonomous systems, display cognitive and moral capacities that audiences may readily interpret as human-level intelligence. Short argues that even when cyborg narratives are dominated by dystopian themes, they function as cultural spaces for negotiating human values rather than merely rehearsing technological catastrophe. In doing so, these narratives affirm that humanity’s moral legacy may persist through technological creations while simultaneously articulating anxieties about loss, displacement, and extinction.

This perspective aligns closely with communication research suggesting that the societal influence of science fiction does not depend solely on whether portrayals are optimistic or cautionary, but rather on repeated exposure, narrative immersion, and affective engagement with speculative technologies. Brewer et al. (2022) demonstrate that audiences interpret AI-themed science fiction through distinct interpretive frames. Viewers adopting a social progress frame expressed greater support for AI, whereas those interpreting the same content through a Pandora’s box frame expressed greater skepticism. Crucially, their findings indicate that science fiction operates less as a source of direct persuasion and more as a context for familiarization and imaginative rehearsal, influencing how audiences emotionally and cognitively process AI-related information regardless of narrative valence.

Extending cultivation theory, Ellithorpe et al. (2016) argue that repeated exposure to mediated narrative content influences perceptions through cognitive mechanisms such as construal level. Concrete construals strengthen first-order cultivation effects, including perceived prevalence or realism, whereas abstract construals guide second-order judgments such as generalized beliefs, values, and societal expectations. Applied to AI-centered science fiction, this framework suggests that repeated engagement with speculative narratives may heighten emotional responses toward AI and contribute to beliefs about its broader societal significance, even when portrayals are ambiguous or predominantly negative. Accordingly, the present study does not assume that exposure to AI-centered science fiction leads to uniformly positive or negative evaluations of AGI. Rather, it proposes that repeated consumption of science fiction content fosters emotional engagement and perceived societal relevance through processes of familiarity, normalization, and affective involvement with speculative technologies. These emotional responses are expected to mediate the relationship between science fiction exposure and beliefs about AGI’s future societal role, independent of whether fictional narratives portray AI as benevolent or threatening:

H3b: Greater consumption of science fiction content featuring AI or humanoid technologies is associated with stronger emotional responses toward AGI.

H3c: Greater consumption of science fiction content featuring AI or humanoid technologies is associated with stronger beliefs about AGI’s future societal impact.

Methods

We tested the hypothesized paths specified in our structural model using partial least squares structural equation modeling (PLS-SEM). We prepared a self-administered online survey questionnaire on Qualtrics and launched it on the Prolific platform, which manages panels resembling the demographics of the UK and US populations (Douglas et al., 2023). Purposive sampling was employed based on respondents’ experience with generative AI (GenAI): those who reported using GenAI services, including ChatGPT, Gemini, or Copilot, for longer than 1 month were allowed to complete the survey. This criterion ensured that respondents had baseline AI literacy, though it may have introduced bias toward technologically engaged individuals. Respondents received a monetary reward of approximately US$3 for their voluntary participation.

The total sample for analysis was 713 respondents. The sample included 49.2% females and 49.2% males, with an average age of 37 years (SD = 12). Approximately 61% of the sample was non-Hispanic White, 8.3% was Hispanic, 13.5% was African American, and 10.9% was Asian American. In terms of education level, 51% had a 4-year college degree or higher. About 24.4% of respondents had an annual household income of US$100,000 or more. As for the most frequently used GenAI service, 73.8% used ChatGPT, 10.8% used Gemini, and 7.6% used Copilot.

To analyze the collected data, we employed PLS-SEM using SmartPLS 4.0 because it provides greater statistical power in testing more complex models and offers robust model estimation for non-normal data (Hair et al., 2021). PLS-SEM is also well suited to exploring theoretical extensions of established theories such as media cultivation theory, in this case the applicability of cultivation models to predicting people’s long-term outlook on AI.

Measures

Before responding to AGI-related items, participants were provided with a standardized definition of AGI to ensure a shared understanding of the concept. This definition was adapted from the prior literature (McLean et al., 2023) and presented as follows.

Artificial General Intelligence (AGI), also known as human-level AI, refers to AI systems capable of performing tasks at least as well as, or even better than, humans. Unlike narrow AI, AGI is expected to function as an autonomous agent capable of achieving complex goals across a wide range of environments, without relying on task-specific programming.

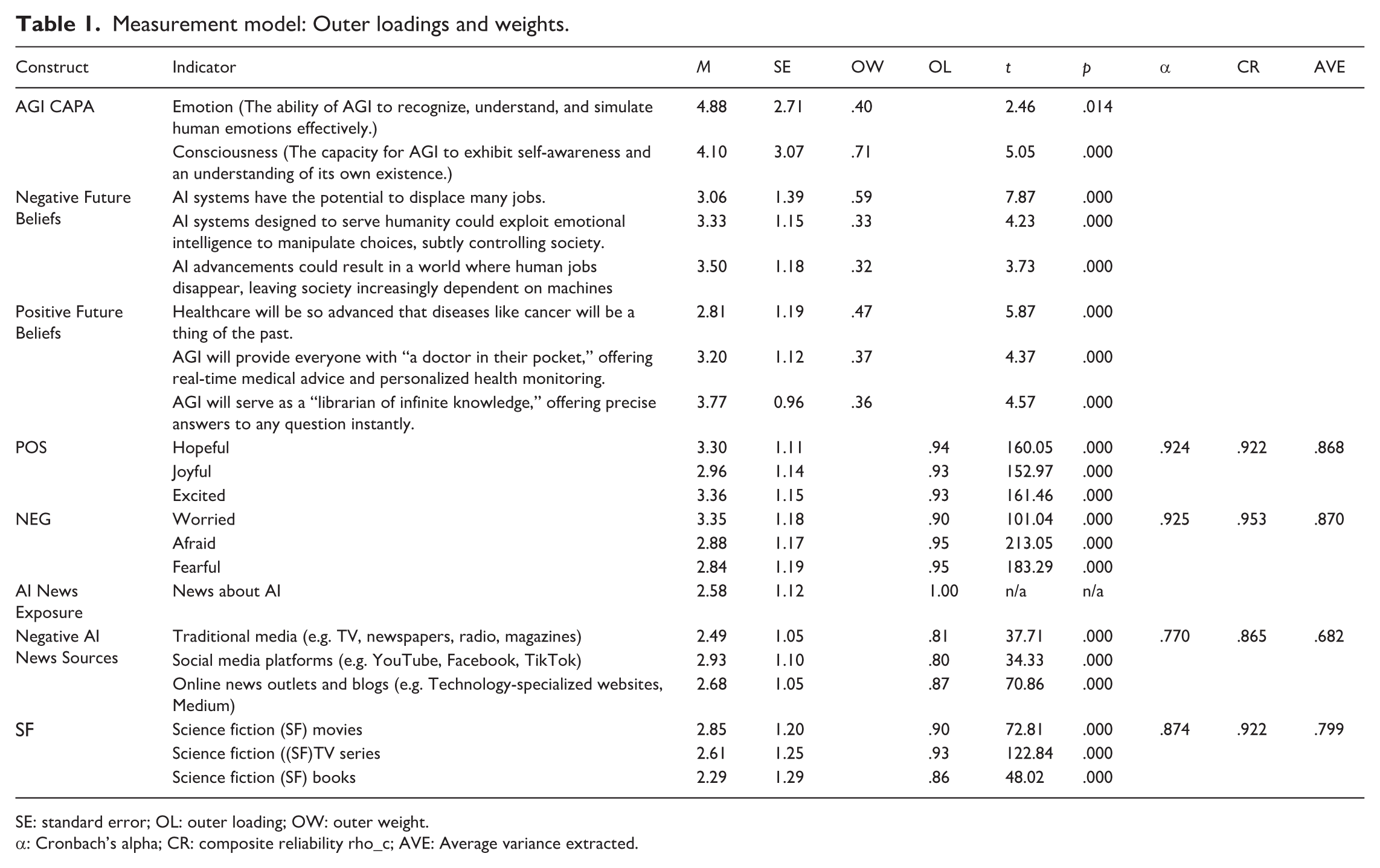

Table 1 summarizes the measurement model and constructs employed to test the proposed SEM framework.

Measurement model: Outer loadings and weights.

SE: standard error; OL: outer loading; OW: outer weight.

α: Cronbach’s alpha; CR: composite reliability rho_c; AVE: Average variance extracted.

Exposure to AI-related news

Participants were asked “Over the past year, how frequently have you come across news stories about AI from news sources?” on a 5-point scale (1 = Not at all, 5 = A great deal).

Selective exposure to negative AI news content

Selective exposure was measured by asking participants how much attention they pay to negative AI information from different information sources: traditional media (TV, radio, newspaper, magazine) and social media platforms (YouTube, Facebook, and TikTok). Each item was rated on a 5-point scale (never, rarely, sometimes, often, and always). These items served as indicators of selective exposure to non-fictional AI content. While this measure captures subjective attention rather than objective exposure, it reflects individuals’ self-identified focus on negatively valenced coverage, a common proxy in selective exposure research (Cardenal et al., 2019).

Consumption of science fiction content featuring AI or humanoids

Inspired by Bilandzic and Busselle’s (2008) narrative engagement framework, we asked participants, “Over the past year, how much have you consumed the following types of content – movies, television series, and books – that depict AI or humanoid technologies?” on a 5-point scale (1 = Not at all, 5 = A great deal). The three answers formed a reflective measure of exposure to science fiction content about AI.

AGI capability estimation (AGICAPA) (First-order judgments)

As a proxy for first-order judgments, participants estimated the current level of AGI development across two core capabilities: emotion and consciousness. Emotion referred to the ability of AGI to recognize, understand, and simulate human emotions effectively, while consciousness denoted AGI’s capacity for self-awareness and understanding of its own existence. Each capability was rated on an 11-point scale, where 0 indicated “no progress made” and 10 indicated “already achieved.” An 11-point scale was selected to enhance measurement sensitivity and better approximate interval-level data, as recommended by Leung (2011). This approach aligns with that of Spreng and Page (2001), who used 11-point scales to assess cognitive judgments of expected and perceived performance. Both our AGI capability estimation and their measures involve cognitively demanding, abstract judgments about uncertain states. In such cases, an 11-point scale offers the granularity and precision necessary to detect subtle differences in subjective estimates and to support continuous modeling in SEM frameworks. This construct was treated as a formative measure, representing participants’ quantified estimations of AGI’s current technological capabilities. It was conceptually distinct from attitudinal or evaluative responses and reflected the type of factual beliefs or perceived reality central to first-order cultivation theory.

Emotional responses toward AGI (Second-order judgment #1)

Participants’ emotional reactions to the development and societal use of AGI were measured as second-order cultivation judgments, including both negative and positive emotional responses. Negative emotions followed the framework of Zhan et al. (2024), who conceptualized fear of AI as a multidimensional emotional response. Participants rated the extent to which AGI made them feel worried, afraid, and fearful on a 5-point Likert-type scale. Positive emotions were designed to capture enthusiasm- and hope-related responses, which reflect motivational and goal-directed states elicited by future-oriented appraisals (Vogelaar et al., 2024). Participants rated how much AGI made them feel hopeful, joyful, and excited on a 5-point Likert-type scale.

Beliefs in AGI’s positive and negative societal impact (Second-order judgment #2)

Respondents rated their agreement on a 5-point Likert-type scale with four metaphor-based statements (Cheng et al., 2025; Kampmann, 2024) representing AGI’s anticipated positive impact across domains such as healthcare, information access, and communication. A sample statement is “AGI will provide everyone with a doctor in your pocket” (Kampmann, 2024). Each statement was treated as a formative indicator, reflecting distinct and non-interchangeable facets of societal benefit. While all four items were intended to contribute to the construct, the item using the “universal translator” metaphor failed to demonstrate a statistically significant outer weight and was subsequently excluded. The remaining three items, which focused on medical advancement, personalized AI-driven health support, and infinite access to knowledge, exhibited significant outer weights (β > .10, p < .01) and formed the final formative measure of projected positive AGI impact. As for beliefs in AGI’s negative social impact, respondents were asked to rate their agreement with three negative scenario statements, for instance, “AI advancements could result in a world where human jobs disappear, leaving society increasingly dependent on machines.”

Results

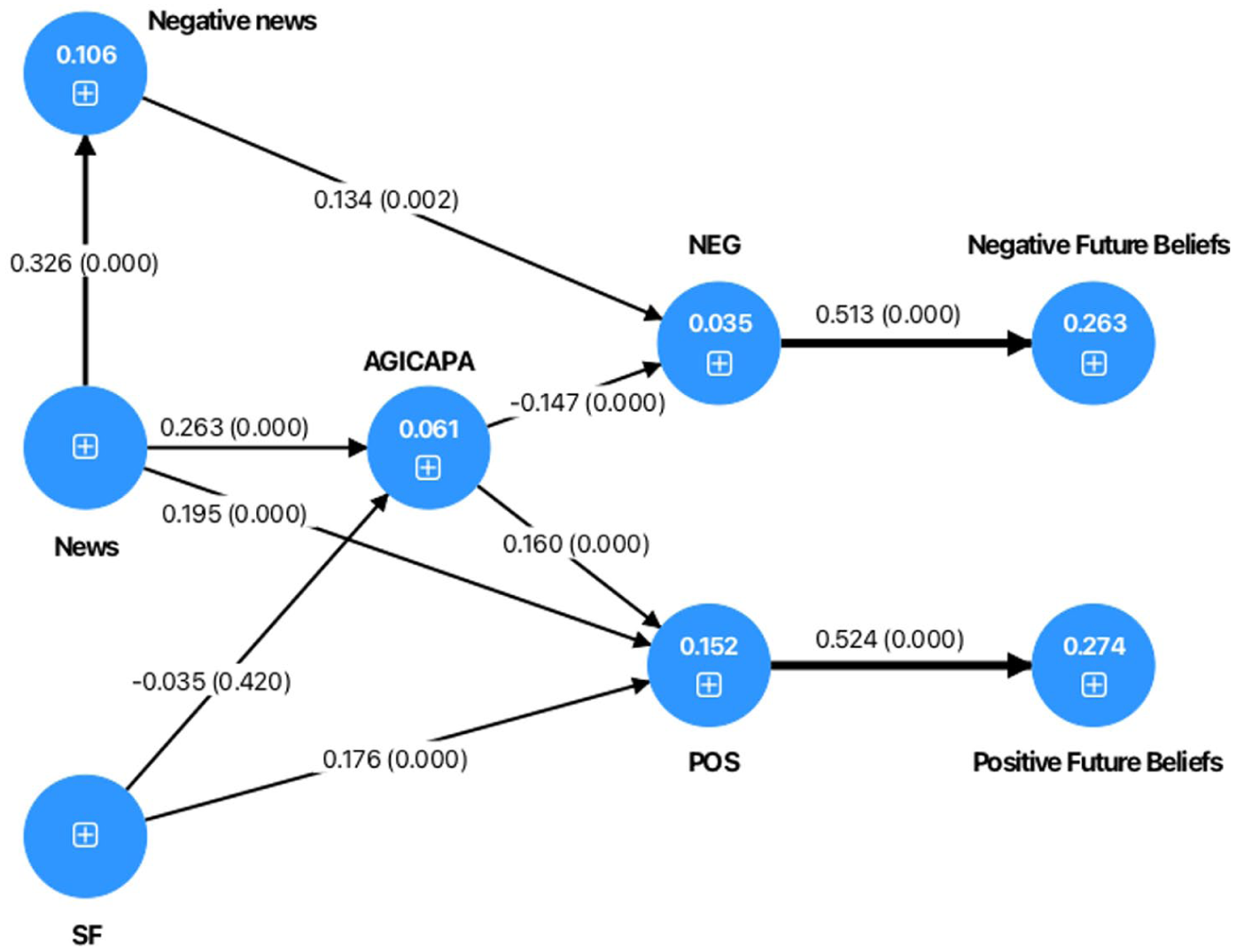

The hypothesized model has three formative constructs and four reflective constructs. First, the PLS-SEM algorithm was used to test the validity and reliability of the four reflective constructs. Cronbach’s alpha, composite reliability, and average variance extracted (Table 1) exceeded the conventional cut-off values, indicating good construct validity and reliability. Discriminant validity was assessed using the heterotrait-monotrait ratio of correlations (HTMT). The HTMT values for all construct pairs were well below the conventional cut-off level (.90). For the three formative constructs, the outer weights of the formative indicators were all larger than .10. Once the measurement model was confirmed to be reliable and valid, the structural model was analyzed to examine the path coefficients and indirect effects between latent constructs. Bootstrapping with 10,000 sub-samples was performed to obtain the t-values, the significance of path coefficients, and the indirect relationships among constructs (Figure 1).
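
The reliability and discriminant validity criteria named above can be illustrated with a minimal Python sketch. It is not the SmartPLS implementation used in this study: it applies the standard textbook formulas for composite reliability (rho_c), average variance extracted (AVE), and HTMT, and the loadings and item correlations below are hypothetical values chosen only for illustration.

```python
from itertools import combinations
from math import sqrt


def composite_reliability(loadings):
    """rho_c from standardized outer loadings of one reflective construct."""
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)  # indicator error variances
    return total ** 2 / (total ** 2 + error)


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr is a full item correlation matrix (list of lists); items_a and
    items_b are the row/column indices of each construct's items.
    Each construct needs at least two items.
    """
    def mean(values):
        return sum(values) / len(values)

    hetero = [abs(corr[i][j]) for i in items_a for j in items_b]
    mono_a = [abs(corr[i][j]) for i, j in combinations(items_a, 2)]
    mono_b = [abs(corr[i][j]) for i, j in combinations(items_b, 2)]
    return mean(hetero) / sqrt(mean(mono_a) * mean(mono_b))


# Hypothetical loadings for a three-item reflective construct.
example_loadings = [0.80, 0.85, 0.90]
example_cr = composite_reliability(example_loadings)
example_ave = average_variance_extracted(example_loadings)

# Hypothetical correlations: items 0-1 form construct A, items 2-3 construct B.
example_corr = [
    [1.0, 0.7, 0.3, 0.3],
    [0.7, 1.0, 0.3, 0.3],
    [0.3, 0.3, 1.0, 0.6],
    [0.3, 0.3, 0.6, 1.0],
]
example_htmt = htmt(example_corr, [0, 1], [2, 3])

print(round(example_cr, 3), round(example_ave, 3), round(example_htmt, 3))
```

With these made-up inputs, the HTMT value falls well under the .90 cut-off. Note that this form of HTMT requires at least two items per construct, so it does not apply directly to a single-item measure.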

Direct path coefficients in the hypothesized model (p-values in parentheses).

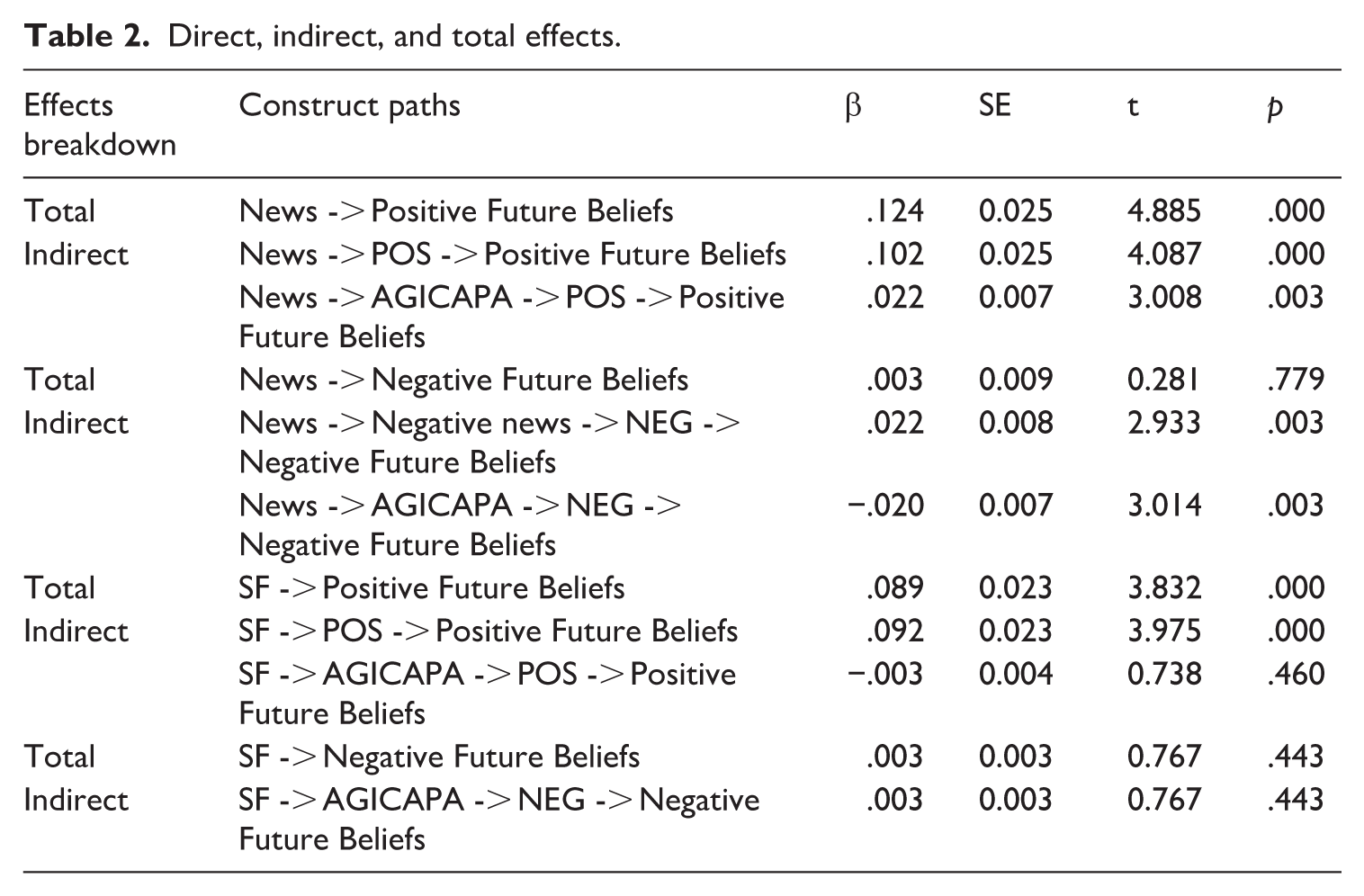

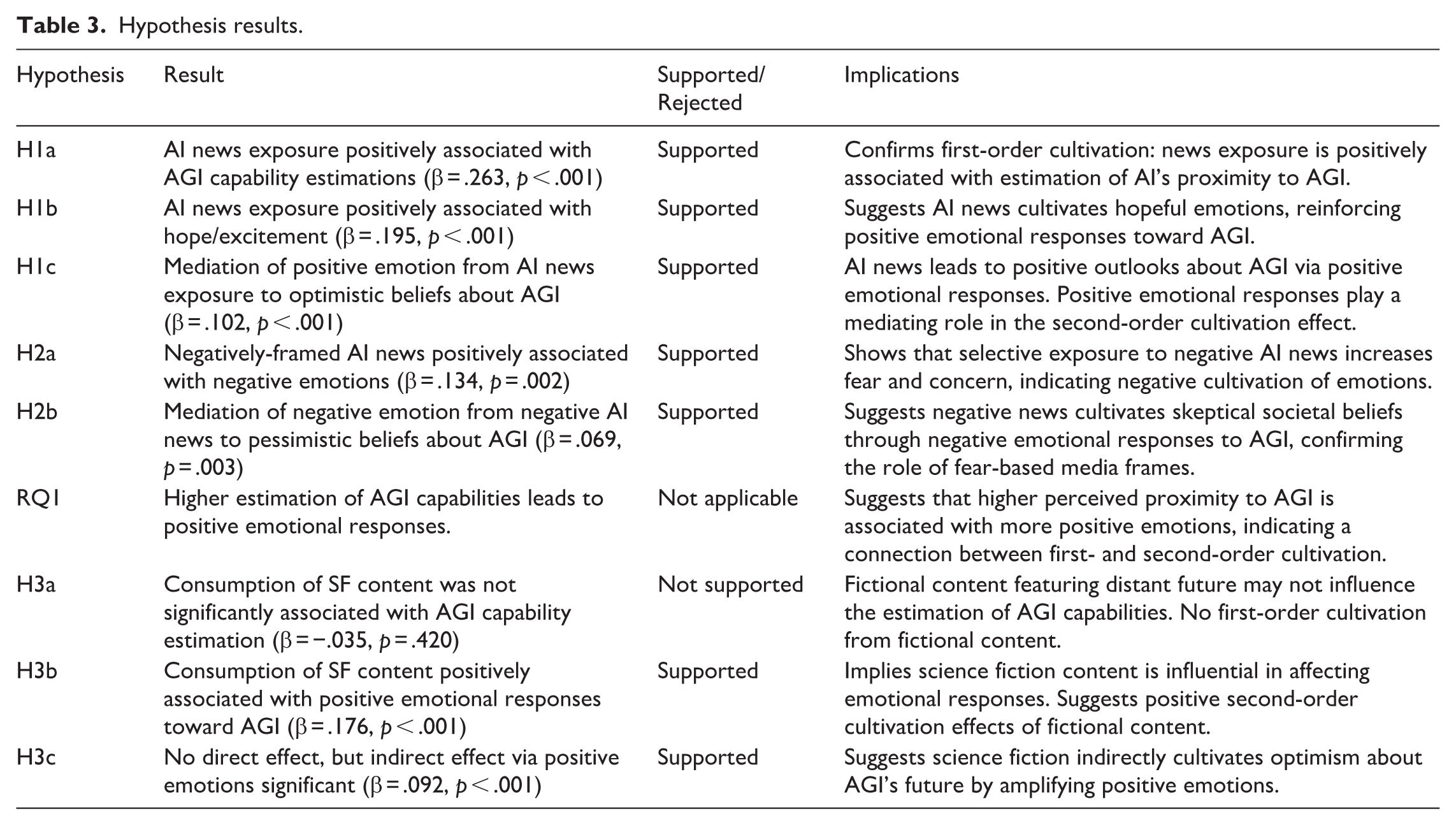

H1a hypothesized the first-order cultivation effect. The results supported H1a: respondents who were more exposed to AI-related news were more likely to believe that current AI technology is closer to achieving AGI (β = .263, p < .001). H1b and H1c tested the second-order cultivation hypothesis. Exposure to AI news was positively associated with positive emotions, such as hope and excitement (β = .195, p < .001). AI news exposure was also positively associated with optimistic beliefs about AGI’s societal impact via positive emotional responses (β = .102, p < .001). These findings suggest that positive emotional responses play a mediating role in second-order cultivation effects.

H2a and H2b tested the route through which AI news exposure relates to negative outlooks on AGI. First, greater exposure to AI-related news was significantly associated with greater exposure to negatively framed AI news (β = .326, p < .001). Exposure to negatively framed AI news was significantly related to negative emotional responses to AGI (β = .134, p = .002), which in turn were related to negative beliefs about AGI (β = .513, p < .001). Mediation analysis indicated that the path from negative AI news exposure to negative beliefs via negative emotions was significant (β = .069, p = .003). Thus, both H2a and H2b were supported.
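
As a check on the mediation result, the indirect effect in a path model is the product of the coefficients along the mediated route. The short Python sketch below uses only the two direct coefficients reported above; the function and variable names are our own illustrative choices, and the significance test itself (bootstrapping in SmartPLS) is not reproduced here.

```python
def indirect_effect(*path_coefficients):
    """Product of the path coefficients along one mediated route."""
    product = 1.0
    for coefficient in path_coefficients:
        product *= coefficient
    return product


# Direct coefficients reported for the H2b route.
neg_news_to_neg_emotion = 0.134    # negative AI news -> negative emotions
neg_emotion_to_neg_belief = 0.513  # negative emotions -> negative beliefs

h2b_indirect = indirect_effect(neg_news_to_neg_emotion, neg_emotion_to_neg_belief)
print(round(h2b_indirect, 3))  # 0.069
```

The product, .134 × .513 ≈ .069, matches the reported indirect effect for H2b.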

RQ1 asked how the first-order effect relates to second-order cultivation in terms of affective valence toward AGI. The analysis indicated that the estimation of AGI capability was significantly associated with positive emotion toward AGI (β = .160, p < .001). It was also negatively associated with negative emotional responses to AGI (β = −.147, p < .001), indicating that higher AGI capability estimates went together with more favorable emotional responses toward AGI. The direct, indirect, and total effects of the structural model are summarized in Table 2.

Direct, indirect, and total effects.

The third set of hypotheses (H3a, H3b, and H3c) tested the cultivation effects of SF content consumption on AGI capability estimation, emotional responses, and positive outlooks about AGI. The results indicated that science fiction content consumption was not significantly associated with estimations of AGI’s current capabilities (β = −.035, p = .420). Thus, H3a was not supported, indicating no first-order effect. In contrast, science fiction content was positively associated with hopeful and enthusiastic emotions toward AGI (β = .176, p < .001), supporting H3b. Moreover, its indirect effect on optimistic societal beliefs, mediated by positive emotions, was significant (total effect: β = .092, p < .001), supporting H3c (see Table 3).

Hypothesis results.

Discussion

Grounded in media cultivation theory, this study investigated how consumption of news and science fiction media content relates to individuals’ expectations about AGI’s societal impact, particularly through first- and second-order cultivation processes (Busselle and Van den Bulck, 2020; Shrum et al., 2011). First-order cultivation was assessed through individuals’ estimations of current AGI development, while second-order cultivation was examined through emotional responses and generalized beliefs reflecting broader worldviews about AGI’s long-term societal consequences. Our findings extend cultivation theory into the public’s perception of the not-yet-realized technology – AGI – by examining both informational and narrative forms of media exposure, and by incorporating cognitive and affective dimensions of audience perception.

Regarding the effects of AI-related news exposure (H1a and H1b), the analysis results supported the first- and the second-order cultivation hypotheses that the overall amount of exposure to AI-related news significantly predicts public beliefs about the pace of AI development toward AGI and its long-term consequences. These results suggest that media coverage of AI predominantly contributes to positive emotional responses. The public appears to draw emotional cues from how news stories portray AI, with more frequent exposure corresponding to a greater sense of optimism about the technology’s potential. This positive affective response contrasts with narratives from some AI founders and venture capitalists who have expressed concern that extensive media coverage might generate public anxiety or resistance toward AI. Instead, our findings indicate that news media exposure may foster positive feelings rather than fear. Furthermore, news media exposure was associated with a generally hopeful outlook, perhaps reflecting optimistic themes that emphasize innovation, convenience, and problem-solving potential. This result is consistent with those of recent studies examining the relationship between media use and positive attitudes toward AI (Brewer et al., 2022; Choi et al., 2025; Yang et al., 2025). This may be because negative coverage of AI is proportionally small (about 20%) compared with positive and neutral coverage of AI (Ittefaq et al., 2025). Another possibility is that even when AI news coverage includes both risks and benefits, as Choi (2024) revealed, audiences exposed to distant-future temporal framing may process the information more abstractly and focus more on the benefits than the risks, resulting in more positive emotional reactions.

H1c further extended this relationship by showing that positive emotions mediated the association between news exposure and optimistic future beliefs about AGI. This indirect pathway underscores how emotional responses may link current media exposure to generalized expectations about AGI’s societal impact. Importantly, this supports the notion of second-order cultivation effects (Potter, 1991b), where media cultivate not just specific beliefs but also broader, generalized beliefs. These findings suggest that long-term exposure to AI news may cultivate generalized, future-oriented beliefs, such as AGI’s ability to eliminate disease or provide universal access to knowledge, particularly when audiences respond to such content with hope or enthusiasm.

The results of H2a and H2b suggest that exposure to negatively framed AI news may reinforce second-order cultivation effects on negative emotional responses and generalized pessimistic beliefs about AGI’s anticipated societal consequences. These results are consistent with the assumptions of the Social Amplification of Risk Framework (SARF), which posits that repeated exposure to negatively framed news can act as an amplification station that heightens emotional concern and reinforces generalized beliefs about future risks (Zhang and Pang, 2025). Mediation results about H2b confirmed that exposure to negative AI content explained the link between overall AI news and negative future beliefs. These findings support recent arguments that audience-driven or algorithmically curated exposure may reinforce pre-existing anxieties (Cardenal et al., 2019). The evidence of selective exposure in cultivation effects offers a refinement to cultivation theory by foregrounding affective selectivity and algorithmic gatekeeping (Thorson and Wells, 2016).

While second-order cultivation effects were presumed to emerge from first-order factual beliefs derived from media exposure (Gerbner et al., 1982), empirical findings have been mixed (Coenen, 2020; Potter, 1991b). Potter (1991b) also noted that prior studies had shown that second-order effects are not necessarily dependent on first-order beliefs. The findings for RQ1 suggest that second-order cultivation effects may be related to first-order cultivation in the context of AGI. One interpretation is that individuals who believe current AI technology has progressed closer to the AGI level tend to experience positive, hopeful emotions toward AGI. In some cases, individuals may rely on preexisting evaluative beliefs (second-order) when generating perception-based estimates (first-order), especially when those attitudes are already firmly established. Potter (1991b) argued that the directionality between first- and second-order effects is not fixed but may operate in a reciprocal or context-dependent way. The directionality of first-order and second-order cultivation needs to be examined using experimental designs in future research.

The test of H3a revealed that exposure to fictional portrayals of AI did not appear to correspond with participants’ beliefs about how far AI had developed at the time of the survey. This finding indicates that SF content may not operate in the same way as news content with respect to cognitive estimations of technological development. One possible explanation is that audiences may view science fiction narratives as imaginative or as depicting not-yet-realized possibilities, rather than as accurate representations of real-world capabilities. As a result, they may treat these stories separately from their understanding of current AI progress. This interpretation aligns with prior media effects research, which observes that audiences tend to apply different interpretive frameworks to fictional and non-fictional content (Busselle and Van den Bulck, 2020). The survey items in this study measured the general consumption of science fiction media without reference to specific titles or immersive experiences. Without contextual cues such as widely recognized dystopian or utopian AI narratives, it may be less likely that the cognitive dimensions of fictional content were fully reflected in participant responses. This limitation could have resulted in the absence of a first-order cultivation effect of fictional content. In addition, the non-significant effects of SF exposure may reflect the fragmented nature of AI portrayals across genres, which present both utopian and dystopian narratives rather than a unified message capable of cultivating shared perceptions. This finding is also reminiscent of Hawkins and Pingree’s (1981) critique of the cultivation assumption that television content conveys a uniformity of messages. As with the varied genres they analyzed, science fiction portrayals of AI may be too varied to support consistent first-order effects across viewers.

The non-significant effects of science fiction content may also reflect how audiences cognitively respond to the psychological distance of speculative narratives. Ellithorpe et al. (2016) found that distal content tends to elicit abstract construal, which reduces reliance on salient media cues and weakens first-order cultivation effects. In contrast, more proximal portrayals such as those in news media are more likely to prompt concrete construal and foster heuristic judgments. Given that SF typically presents AI in distant or imagined contexts, audiences may view such content as less relevant when forming judgments about AGI’s current capabilities. This interpretation supports our findings and suggests that both narrative distance and cognitive stance help explain the limited cultivation effects of fictional media.

The test of H3b further shows that individuals who reported higher consumption of science fiction content tended to report more positive emotional responses. Although dystopian portrayals are common in AI-themed fiction, the results may reflect the audience’s underlying interest in technological innovation and speculative futures. Individuals frequently engaging with science fiction may already hold favorable dispositions toward emerging technologies, which could correspond with an emotionally positive outlook. In testing H3c, the indirect path from SF content to positive future outlook through positive emotion was significant. Because the path from SF to AGICAPA was not significant, these results suggest that emotional responses may link science fiction content consumption to generalized optimism about AGI’s future more strongly than to specific estimates of current technological progress.

Overall, findings indicate that science fiction media consumption was more consistently associated with affective responses than with specific beliefs about AGI. While not linked to beliefs about current AGI progress or pessimistic outcomes, science fiction was related to emotionally grounded optimism about technological possibilities. Fictional media, even when presenting dark or cautionary themes, may foster imaginative engagement that supports more hopeful visions of the future.

Implications

Theoretical implications

The findings of this study extend cultivation theory to not-yet-realized, speculative technologies such as AGI, where public perceptions are formed less by direct experience than by mediated narratives. Traditional cultivation models emphasized cumulative exposure to television as a centralized system of storytelling (Gerbner, 1998; Gerbner et al., 1980), but contemporary media environments are fragmented and diverse, requiring content- and genre-specific approaches (Hermann et al., 2021; Potter, 2022). This study contributes to understanding how cultivation processes align with different media forms and informational settings. First, the amount of exposure to AI-related news is associated with the first-order cultivation of beliefs about AGI capabilities. Second, selective exposure to negative coverage and consumption of science fiction narratives contribute to the formation of both negative and positive outlooks toward AGI. Third, fictional entertainment content was not associated with higher estimates of current AI development toward AGI but was related to positive emotions and optimistic future outlooks. The results suggest that informational cues, such as news exposure, tend to correlate with current estimated beliefs regarding AGI’s perceived proximity (first-order effect), whereas fictional portrayals from SF are more commonly linked to emotional responses and broader, futuristic beliefs about AGI.

The results also contribute to framing and emotion research, as exposure to negative AI news heightened fear and pessimism, consistent with arguments that affective information channels cultivate outcomes more powerfully than neutral content (Choi et al., 2025). This supports calls to integrate framing perspectives with cultivation models to capture how interpretive structures and emotional pathways mediate media effects (Busselle and Van den Bulck, 2020). At the same time, the indirect effect of science fiction content through positive emotions mirrors Appel and Richter’s (2010) research. This suggests that fictional portrayals operate as symbolic environments that cultivate hopeful imaginaries of AI’s future, even when audiences recognize their speculative nature.

These contributions underscore that cultivation in the context of AGI is less about distorting observable realities and more about the construction of sociotechnical imaginaries of what might be possible (Nader et al., 2024). By situating cognitive estimations and affective responses within this dual cultivation framework, the study shows that media effects on emerging technologies are not only cumulative and long-term but also differentiated by genre, framing, and emotional resonance. This theoretical extension provides a foundation for future work to examine how mediated imaginaries of speculative technologies influence public trust, governance debates, and cultural expectations of science and technology.

Practical implications

The results of this study carry important practical implications across policy, media, public communication, and the technology industry. Policymakers should recognize that public imaginaries of AGI are cultivated less by technical realities than by mediated narratives, which often emphasize either overly optimistic or dystopian scenarios. This tendency underscores the need for balanced and transparent communication in regulatory debates so that governance frameworks are informed by realistic assessments rather than by hype or fear. Journalists and media practitioners likewise bear responsibility for presenting AI developments with balance and context, avoiding sensational headlines that distort expectations, and clearly distinguishing between the capabilities of current GenAI and the speculative status of AGI. Science communicators and educators can design interventions to strengthen AI literacy, encouraging critical engagement with both news coverage and fictional portrayals, and helping audiences contextualize what is speculative versus what is technically achievable. For the technology industry, the findings suggest that public optimism and skepticism toward AI are not solely based on user experience but are strongly cultivated by media discourses; firms introducing new AI systems should therefore communicate capabilities and limitations clearly, resisting the temptation to reinforce exaggerated claims about AGI being imminent.

Limitations and suggestions for future research

As a cross-sectional survey, this study shares the limitations common to correlational designs. First, the significant path coefficients identified in the structural equation modeling analysis should not be interpreted as evidence of causality. Prior cultivation research has shown that individuals with pessimistic worldviews may selectively seek content that reinforces those concerns (Andersen et al., 2024). Moreover, our analysis did not account for subtle framing cues in news content, such as temporal references, which may influence public emotions and beliefs, as demonstrated by Choi (2024). Future research using experimental or longitudinal designs would be better suited to assess causal direction and explore how individuals select and interpret media content.

Second, the study relied on self-reported measures of media exposure. Although self-report remains a common approach in cultivation research, it may not capture individuals’ actual media use. This limitation is particularly important when assessing selective exposure, as participants may underreport or overreport their attention to certain types of news coverage or entertainment genres. Moreover, respondents’ ability to identify negative or positive coverage may itself be influenced by preexisting attitudes and emotional orientations. Individuals predisposed toward optimism about AI may fail to recognize favorable coverage as positively framed, whereas skeptics may not perceive alarmist content as a negative exaggeration. Future work should triangulate self-reports with content-based or behavioral measures to validate selective exposure more rigorously.

Third, although the study provided respondents with a standardized definition of AGI prior to AGI-related measures, it remains possible that participants’ responses reflected extrapolations from more familiar forms of AI, particularly GenAI systems with which they had direct experience. Because AGI remains a speculative and not-yet-realized technology, respondents may have anchored their judgments to existing AI applications rather than to AGI as a distinct conceptual category. This potential conflation is an inherent challenge in studying public perceptions of hypothetical or future technologies, where mediated representations and experiential reference points play a central role in interpretation.

Fourth, the study focused exclusively on respondents with prior experience using GenAI tools. While this ensured that participants had some experiential reference point with AI, it also introduced potential bias. GenAI users are likely to be more technologically engaged and more attentive to AI-related media discourse, meaning their perceptions of AGI may systematically differ from those of nonusers. In addition, varying levels of AI literacy may have influenced how participants interpreted AGI-related concepts despite the provided definitions. Future studies should incorporate comprehension checks and include comparisons between users and nonusers to better assess how experience and familiarity shape AGI beliefs.

Finally, future studies should more systematically conceptualize and evaluate the emotional valence and framing of AI-related media content across both news and entertainment contexts. While this study examined audience responses to media exposure, it did not include a systematic content analysis of the specific AI coverage participants may have encountered. This limits the ability to assess whether audience attitudes reflect the actual tone, emphasis, and framing used in media portrayals. Given that early cultivation research often assumed consistently negative portrayals – such as recurring depictions of crime in television dramas – making similar assumptions about AI content may be premature without empirical evidence. Although we cited Ittefaq et al. (2025), who found that only 21% of global AI headlines in 2023 were negatively framed, further content analyses are needed to track how AI is portrayed across media outlets, genres, and time periods.

Footnotes

Acknowledgements

This work was supported by a Page Legacy Scholar Grant (No. 2023DIG02) from the Arthur W. Page Center for Integrity in Public Communication at the Donald P. Bellisario College of Communications at The Pennsylvania State University.

Author’s note

Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of Penn State.

Data availability statement

The dataset underlying this study was submitted as part of the manuscript review process and is available from the corresponding author upon reasonable request.

Ethical approval

This study was approved by the Institutional Review Board (IRB) of Syracuse University (No. 24-375) on December 2, 2024.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a Page Legacy Scholar Grant (No. 2023DIG02) from the Arthur W. Page Center for Integrity in Public Communication at the Donald P. Bellisario College of Communications at The Pennsylvania State University.

Informed consent statement

All participants provided written informed consent prior to enrolment in the study. This research was conducted ethically in accordance with the World Medical Association Declaration of Helsinki.