Abstract

The rapid advancement of generative artificial intelligence (AI) has made AI-generated content (AIGC) increasingly prevalent. However, misinformation created by AI has also gained significant traction online, while individuals often lack the skills and attributes needed to distinguish AIGC from traditional content. In response, current media practices have introduced AIGC labels as a potential intervention. This study investigates whether AIGC labels influence users’ perceptions of credibility, accounting for differences in prior experience and content categories. An online experiment simulating a realistic media environment was conducted with 236 valid participants. The findings reveal that the main effect of AIGC labels on perceived credibility is not significant. However, both prior experience and content category show significant main effects (P < .001): participants with greater prior experience reported higher perceived credibility, and nonprofit content was perceived as more credible than profit-oriented content. Two significant interaction effects were also identified: between content category and prior experience (P < .001), and between AIGC labels and prior experience (P = .048). Specifically, participants with limited prior experience exhibited notable differences in trust depending on the content category (P < .001), while those with extensive prior experience showed no significant differences in trust across content categories (P = .06). This study offers several key insights. First, AIGC labels serve as a viable and replicable intervention that does not significantly alter perceptions of credibility for AIGC. Second, by reshaping the choice architecture, AIGC labels can help address digital inequalities. Third, AIGC labeling extends alignment theory from implicit value alignment to explicit human–machine interaction alignment. Fourth, the long-term effects of AIGC labels, such as the potential for implicit truth effects with prolonged use, warrant further attention. Lastly, this study provides practical implications for media platforms, users, and policymakers.

Introduction

The rise of AI-generated fake news has turned AI into a super-spreader of misinformation. According to NewsGuard, a misinformation tracking organization, the number of websites hosting AI-generated fake articles surged by over 1,000% between May and December 2023, increasing from 49 to more than 600 (Verma, 2023). The rapid commercialization of AI, combined with its technical flaws, has significantly raised users’ exposure to AI-generated misinformation (Monteith et al., 2024). As early as the GPT-2 era, studies revealed that individuals struggled to distinguish between AI-generated and human-created poetry (Köbis & Mossink, 2021). While generative AI holds remarkable potential, it is far from perfect and often exhibits biases, such as discrimination against women and Black communities, issues that even advanced models like ChatGPT have not fully resolved (Fang et al., 2024). To address such biases, researchers have proposed value alignment as a corrective framework (Weidinger et al., 2023). Furthermore, studies consistently show that distinguishing AI-generated content (AIGC) from human-created content is becoming increasingly challenging (Partadiredja et al., 2020). As generative AI evolves at an unprecedented pace, its technical shortcomings may eventually diminish, leaving society with a new challenge: discerning AIGC from human-created material in an ever-more-convincing media landscape.

For media consumers, the core issue is not merely differentiating between AI and humans but determining whether specific content is AI- or human-generated. This distinction directly impacts information security and users’ right to informed decision-making online. To tackle this challenge, behavioral scientists have advocated the use of content labeling as a nudging intervention (Morrow et al., 2022). AIGC labels have already been implemented on platforms like TikTok and Xiaohongshu, aiming to mitigate the misinformation problem. While these labels may help address the chaos caused by AI-generated misinformation in online ecosystems, they could also influence users’ perceptions of content, social media platforms, and source credibility. As users navigate environments where AIGC and human-generated content (HGC) coexist, the effectiveness and unintended consequences of AIGC labels warrant further exploration.

Literature Review

Behavioral science interventions: Label nudging

Public opinion is largely shaped by online content disseminated through algorithm-driven platforms. The design of today's online ecosystems primarily aims to capture users’ limited attention rather than fostering truth, freedom, and democratic discourse. To address this issue, researchers have proposed two additional intervention measures from a behavioral science perspective: nudging and boosting. These measures aim to restructure online environments to promote informed decision-making and democratic development (Lorenz-Spreen et al., 2020). Nudging interventions can reshape choice architectures, improving the cognitive quality of content and facilitating its dissemination. The first type, educational nudging, integrates cognitive cues into the decision-making environment to inform rather than actively guide behavior. Examples include alerting users to unclear content sources or showing how many readers have viewed a particular article. The second type involves fine-tuning the arrangement of online content, such as platforms explicitly distinguishing content types (e.g., commercial posts vs news) to enhance source transparency (Lorenz-Spreen et al., 2020). Compared to boosting interventions, nudging is simpler and more effective, making it a practical approach for a broad audience.

Today, AI is capable of producing content indistinguishable from that created by humans (Partadiredja et al., 2020), and it is even used to generate misinformation. This makes nudging interventions for AIGC particularly crucial. Platforms like TikTok have adopted AIGC labels as a key measure to address this issue. Another approach is embedded interventions, such as watermarking techniques, which help distinguish AIGC from HGC. This method mitigates traditional technical vulnerabilities while preserving visual quality with minimal disturbance (Jiang et al., 2023).

The speed and adaptability of new technologies and their users often outpace content regulation efforts (Lorenz-Spreen et al., 2020). It is evident that focusing solely on misinformation will not resolve the challenges posed by AIGC. A comprehensive solution requires combining AIGC labels with nudging interventions, addressing these issues through the lens of human–machine interaction.

While discussions around generative AI and large language models often emphasize value alignment, less attention has been given to alignment in human–machine interactions. Nudging theory underscores the importance of restructuring online environments to foster digital democracy. The intent behind AIGC labels is to help users differentiate between AIGC and HGC, aligning their cognitive perceptions at the intersection of human–machine interaction. This approach not only addresses the challenges posed by AI but also redefines the role of digital tools in shaping a fair and informed society.

Fact-checking, accuracy warning labels (Pennycook & Rand, 2022), and sponsorship disclosure labels (Feng et al., 2021) in traditional news consumption are also categorized as nudging interventions. Some researchers have operationalized boosting interventions by providing alternative news sources and nudging interventions by implementing “verified” labels (Thornhill et al., 2019). Others have used the presence or absence of fact-checking alerts as two levels of a nudging variable, finding that fact-checking alerts reduce users’ willingness to share misinformation (Nekmat, 2020). Additionally, studies have compared the effects of truth pledges and fact-checking guidelines on users’ ability to discern information and their willingness to share misinformation. These interventions, which emphasize individual agency, fall under the broader category of boosting interventions rather than nudging (Tu, 2024).

Traditional fact-checking labels typically alert users to the accuracy of content in an explicit manner, directly presenting verification results and focusing on post hoc corrections. In contrast, AIGC labels do not assess the authenticity of content but instead inform users that the content is AI-generated. These labels function by altering users’ cognitive load and emphasize preemptive marking and in-situ intervention rather than retrospective corrections (Liu et al., 2023).

Importantly, fact-checking and AIGC labels are not mutually exclusive. Both can coexist within platform governance frameworks, complementing each other to address challenges in the digital information ecosystem.

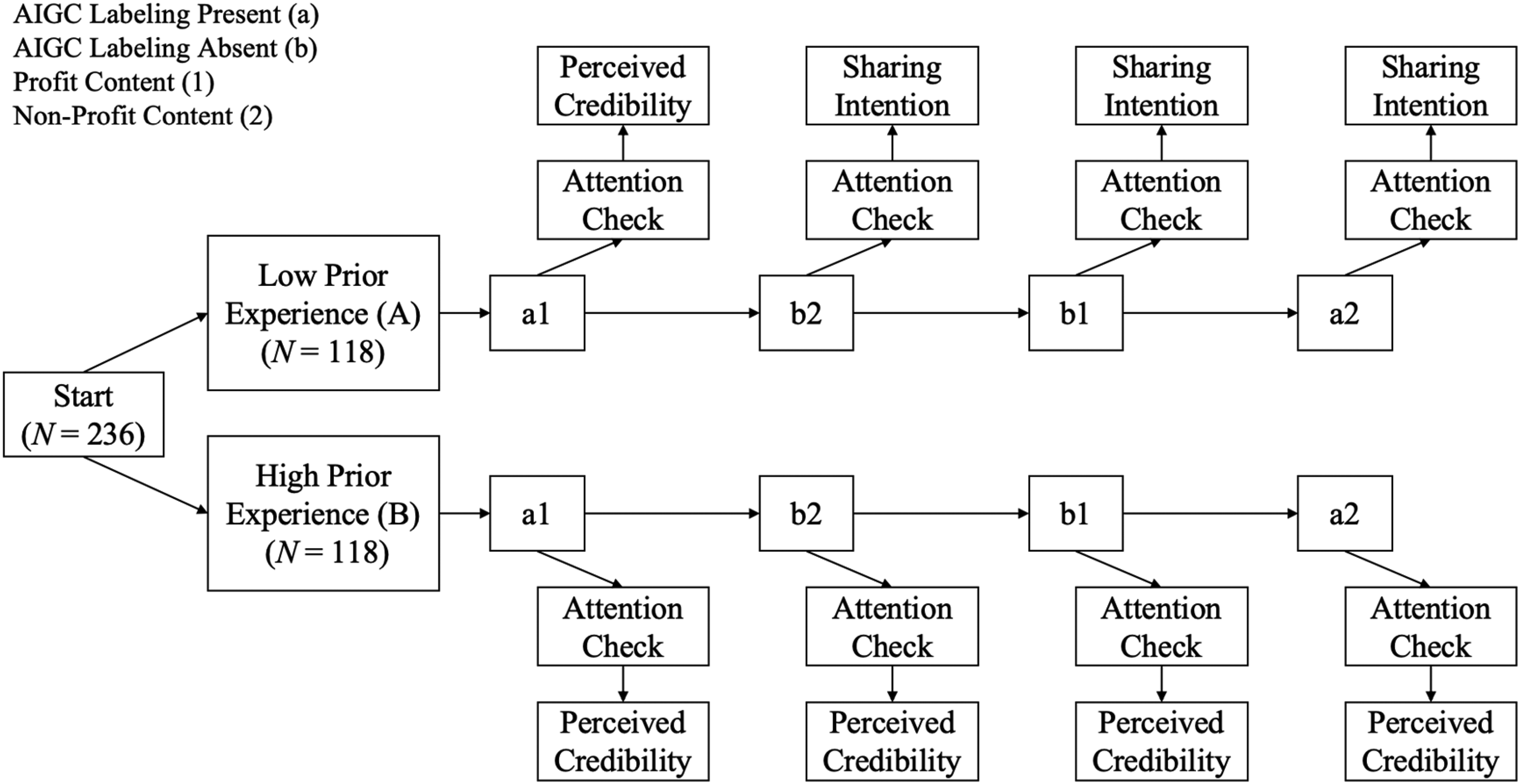

Figure 1. Experiment procedure.

Figure 2. First selection situation of participants.

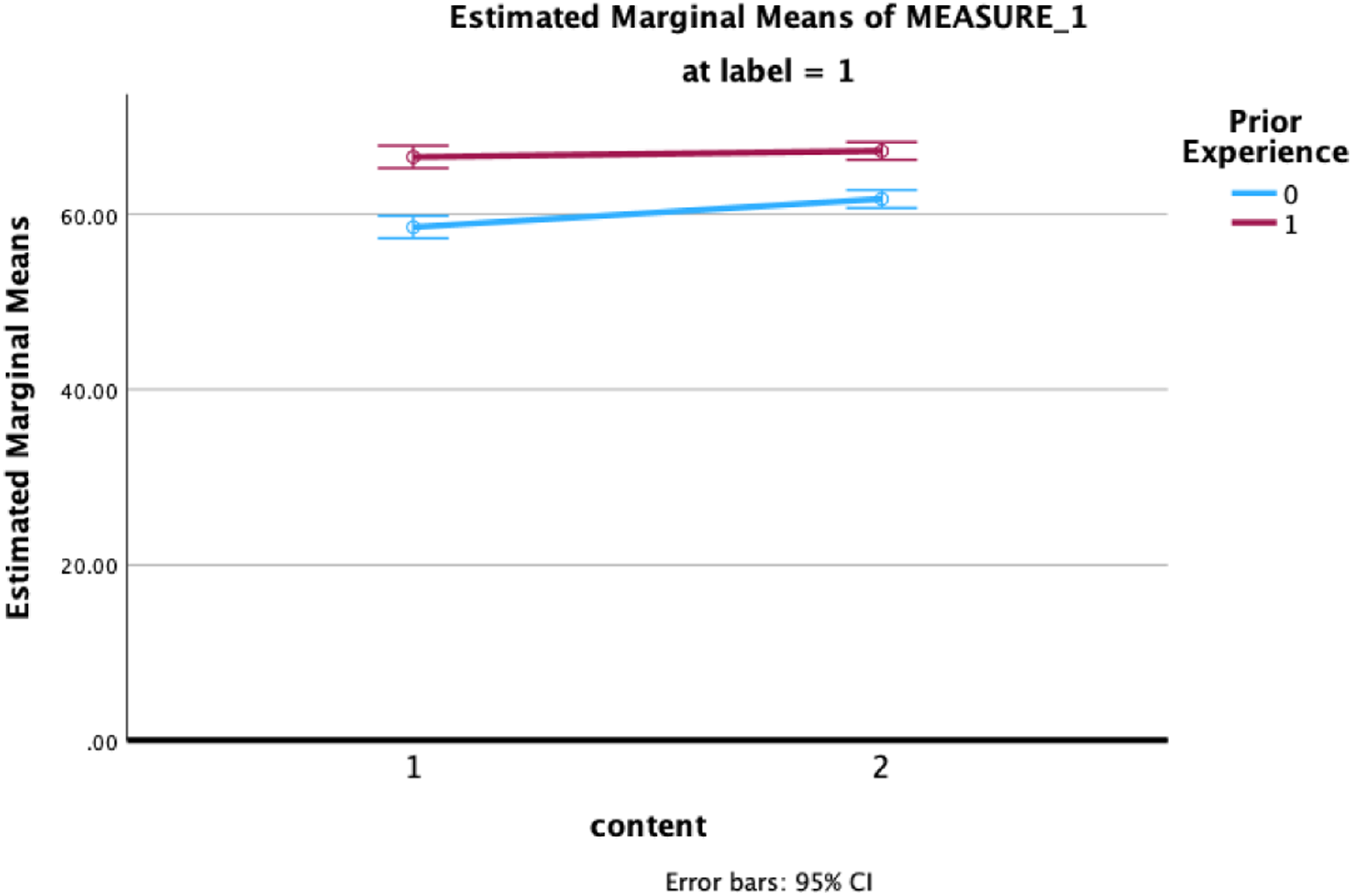

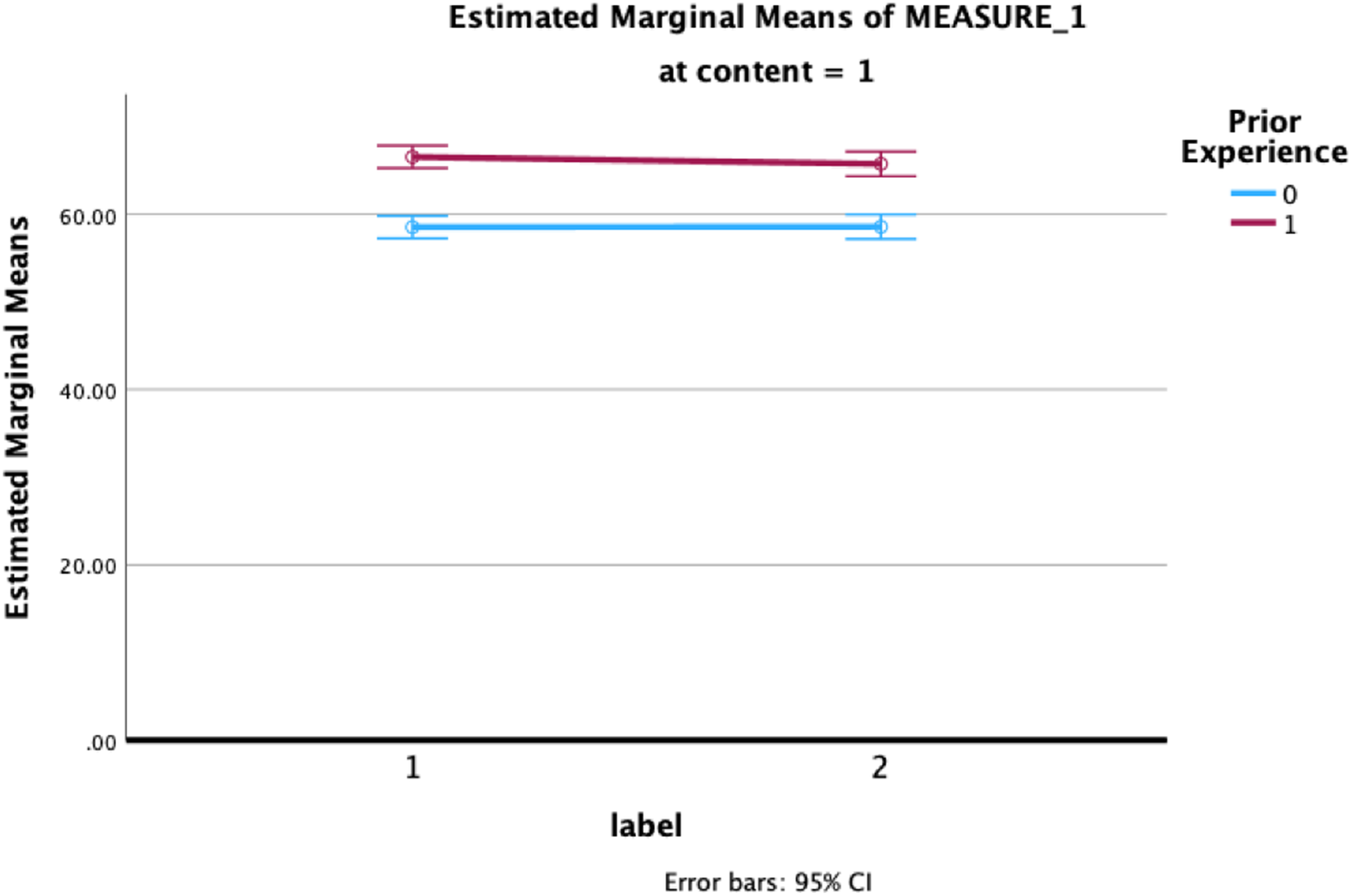

Figure 3. Estimated marginal means between content category and prior experience under AIGC labeling present.

Perceived credibility of AI-generated content

Trust is a broad and elusive concept, yet it plays a critical role for individuals seeking to purchase unfamiliar products or services online. Trust helps reduce the uncertainty inherent in most online transactions, especially in scenarios involving risk and information asymmetry. As such, trust serves as an essential mechanism to mitigate the uncertainty and complexity of interactions and relationships (Chang et al., 2019).

Evaluating whether information sources or content are trustworthy or credible plays a key role in interpreting and assimilating information (Huschens et al., 2023). In communication studies, the foundational concept of “information” was defined by information theory, with Shannon's linear communication model outlining the relationships among the source, message, channel, and receiver. Credibility research began with Hovland's work on source credibility, which he characterized as a universal feature of communication sources, comprising two components: expertness and trustworthiness (Hovland & Weiss, 1951). Miller further proposed that the credibility of information is directly influenced by the medium through which it is transmitted, introducing the concept of medium credibility (Miller & Kurpius, 2010). Building on these foundations, researchers have categorized credibility into three dimensions: source credibility, medium credibility, and information credibility (van der Kaa & Krahmer, 2014).

For computer-mediated interactions, Fogg and Tseng defined credibility as a perception—specifically, the perceived credibility of computer products or systems (Fogg & Tseng, 1999). In studies of computer-mediated communication, trust is often measured through users’ perceptions of credibility. In the era of AI, researchers have developed credibility scales tailored to AIGC. These scales assess four dimensions: competence, trustworthiness, clarity, and engagement. The first two dimensions evaluate source credibility, while the latter two focus on the credibility of the content itself (Huschens et al., 2023). This framework highlights the multifaceted nature of credibility and its relevance in understanding user trust in AI-mediated communication.

AI-generated content, AIGC labels, and perceived credibility

AIGC labels, akin to traditional news author bylines, inform users that the content is created with the involvement of AI. Previous research has explored the credibility of AI-assisted journalism by comparing the perceived credibility of different content authorship sources and news topics. Findings indicate that news consumers perceive journalists and AI as equally trustworthy and competent. However, journalist-authored content is rated significantly higher in credibility than AIGC. Meanwhile, journalists themselves perceive computers to possess greater expertise than news consumers acknowledge. News topics also influence perceived credibility, with sports content rated lower than financial content (van der Kaa & Krahmer, 2014).

Other studies using online experiments have shown that human-generated news content is rated higher in credibility and expertise compared to AIGC, although no significant differences were found in terms of readability (Liu, 2020). Conversely, research on Twitter bots suggests that writing bots can serve as reliable information sources, with no significant differences in credibility between bot-generated and human-authored content (Edwards et al., 2014).

These findings indicate that for news content, the trust levels for AIGC and HGC are not significantly different. In some cases, users may even perceive AIGC as more competent. However, for advertising content, sponsorship disclosure labels negatively affect the perceived credibility of both the advertisement and its publisher. Users exposed to explicit sponsorship disclosures rate the advertisement and its influencer as less credible (Vogel et al., 2020). This may be due to the association of sponsorship labels with marketing intent, which undermines trust in the influencer and the advertisement. Based on these insights, we hypothesize that there may be an interaction effect between content category and AIGC labels. Specifically:

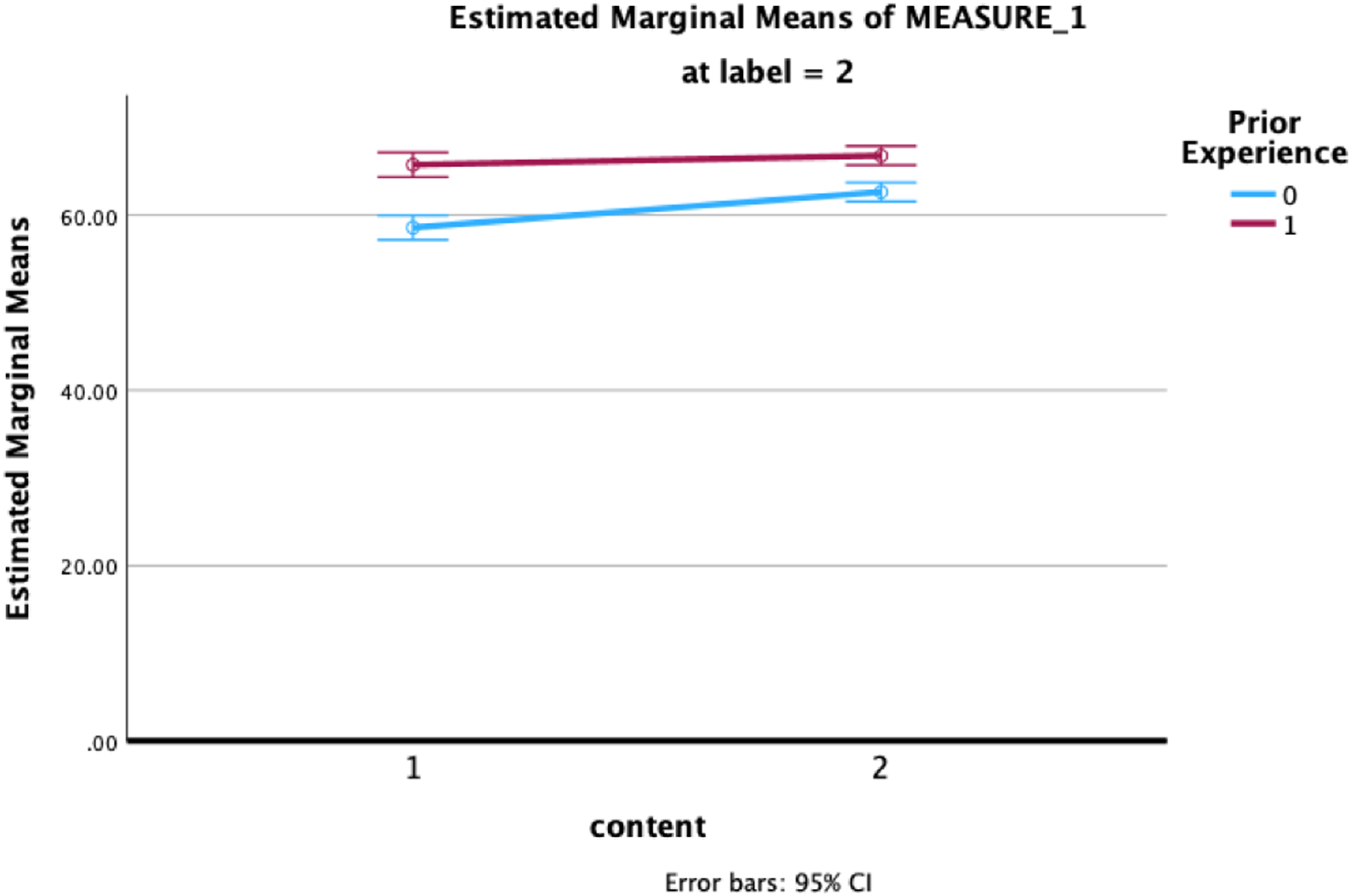

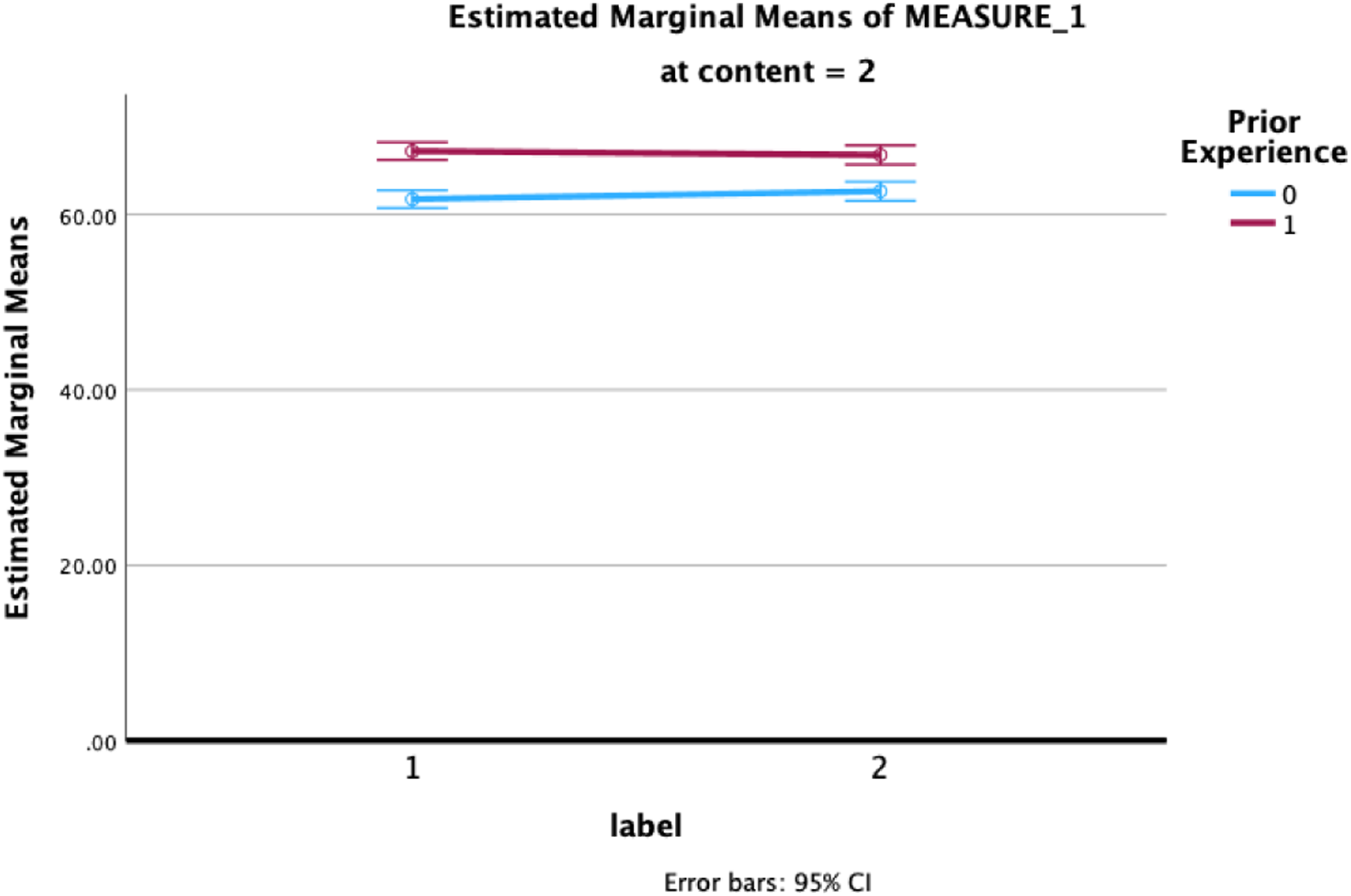

Figure 4. Estimated marginal means between content category and prior experience under AIGC labeling absent.

Influence of personal background: Prior experience and cultural factors

The rapid development and commercialization of generative AI tools like ChatGPT have deepened individuals’ awareness and usage of these technologies. However, disparities in economic development and internet accessibility lead to significant variations in users’ knowledge and experience with generative AI. This experience, referred to as prior experience, represents the cognitive understanding gained through sustained observation or interaction with a subject (Taylor & Todd, 1995). Prior experience significantly influences behaviors on social media, including users’ willingness to share news (Lee & Ma, 2012). For instance, research on personal health applications found that prior experience positively affects the time users take to complete tasks (Sox et al., 2010).

Similarly, studies on prior knowledge suggest that users with high engagement and extensive prior knowledge find content with objective and non-extreme attributes more credible. Conversely, users with limited prior knowledge are more influenced by source validation. This indicates a potential interaction effect between content attributes and prior experience. Based on this, we hypothesize:

In addition to prior experience, cultural background is another critical factor affecting users’ perceptions of AIGC. Hofstede's cultural dimensions framework outlines six cultural dimensions: power distance, uncertainty avoidance, individualism/collectivism, masculinity/femininity, long-term/short-term orientation, and indulgence/restraint (Hofstede, 2011). For example, in cultures with high uncertainty avoidance, users may be more inclined to trust content with explicit explanatory labels. In contrast, in low uncertainty avoidance cultures, such labels might have less influence on decision-making (Merkin, 2006). Future research should further explore the impact of cultural context on the effectiveness of labels, particularly in cross-cultural environments.

Figure 5. Estimated marginal means between AIGC labeling and prior experience under profit-oriented content.

Content categories of AI-generated content: Commercial and nonprofit

AIGC labels do not exist in isolation but are embedded within specific contexts, meaning their effectiveness may be influenced by the nature of the content itself (Martel & Rand, 2023). Based on their profit-oriented nature, online content can be broadly categorized into commercial and nonprofit content, a common classification method that aligns with the typical content found on social media (Lou & Alhabash, 2018).

Commercial content, such as advertisements and brand promotions, often involves users’ economic interests, making it more likely to trigger skepticism and vigilance (Vogel et al., 2020). Nonprofit content, including news, educational material, and public service announcements, is often associated with social identity or moral motivations, making it more likely to be perceived as a credible source. According to the Elaboration Likelihood Model (ELM), individuals process information through either the central or peripheral route (Kitchen et al., 2014). Commercial content, given its direct relevance to users’ economic interests, is more likely to prompt central route processing, where users engage in deeper cognitive evaluation, affecting their perception of credibility. Conversely, nonprofit content, perceived as less personally consequential, may rely more on peripheral route processing, influencing users’ perceived credibility differently. This dynamic may also interact with users’ prior experience, as AIGC labels, being part of the context, may interplay with content categories and prior experience to influence trust.

Research on advertisements, the primary example of commercial content, suggests that disclosure labels help consumers recognize sponsored posts as advertisements, leading to distrust or negative attitudes (Feng et al., 2021). This raises questions about how nudging interventions, such as AIGC labels, influence user trust in commercial content like advertisements.

For nonprofit content, such as news, studies have shown that humans often struggle to distinguish between AI-generated and human-written news content. While human-written news is perceived as clearer, AI-generated news is sometimes rated higher in credibility (Clerwall, 2014). Other research indicates consistent credibility perceptions across different content sources (van der Kaa & Krahmer, 2014). As a new nudging intervention, the impact of AIGC labels on user trust in both types of content requires further investigation.

This study selects news and advertisements as representatives of nonprofit and commercial content, respectively, and proposes the following hypotheses:

Based on the above, the study seeks to address the following research questions:

Figure 6. Estimated marginal means between AIGC labeling and prior experience under non-profit content.

Methods

Experiment design and procedure

This study employs a 2 (AIGC labeling: present or absent) × 2 (content category: profit-oriented or non-profit content) × 2 (prior experience: high or low) three-factor mixed experimental design. Prior experience is treated as a between-subjects variable, while AIGC labeling and content category are within-subjects variables. The study was conducted as an online experiment. As shown in Figure 1, participants were divided into two groups based on their frequency of using generative AI: high prior experience and low prior experience. Participants who did not fit into either category were excluded from the study. Each group was then randomly assigned to read four sets of experimental materials, categorized by the presence or absence of AIGC labels and the type of content (profit-oriented or non-profit).

The process unfolds as follows:

Participants are recruited and screened based on the operational definition of prior experience, and they complete an informed consent form. Participants who meet the criteria for prior experience fill out a generative AI cognition and usage questionnaire. Both the high and low prior experience groups are presented with four sets of experimental materials as outlined above. After reading each set of materials, participants undergo an attention check to ensure they carefully reviewed the content. This is followed by a measurement of their perceived credibility. The process is repeated for all four sets of materials. At the end of the experiment, participants complete a demographic questionnaire.
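The within-subjects part of the design can be illustrated with a short sketch. It enumerates the four cells formed by crossing AIGC labeling with content category and shuffles their order per participant. The per-participant shuffling, the seed scheme, and all identifier names are assumptions made for illustration; the study only states that the four sets of materials were assigned randomly.

```python
import itertools
import random

# Levels of the two within-subjects factors described in the design.
LABELING = ("label_present", "label_absent")
CONTENT = ("profit_oriented", "non_profit")


def within_subject_conditions():
    """Return the 2 x 2 = 4 cells every participant reads."""
    return list(itertools.product(LABELING, CONTENT))


def presentation_order(participant_id, seed=0):
    """Shuffle the four cells reproducibly for one participant.

    Randomizing presentation order is an assumption of this sketch,
    not a documented detail of the experiment.
    """
    rng = random.Random(f"{seed}-{participant_id}")
    order = within_subject_conditions()
    rng.shuffle(order)
    return order
```

Calling `presentation_order(7)` twice returns the same ordering, which keeps a hypothetical assignment auditable after the fact.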

Recruitment and participants

The operational definition of prior experience

Long-term practice and frequent usage are critical factors for enhancing individual performance, with exceptional performance rooted in extensive prior experience (Ericsson et al., 1993). Extended practice duration and frequent task engagement enable individuals to better understand environmental patterns, thereby improving the reliability of intuitive decision-making (Kahneman & Klein, 2009). Related studies have shown that users’ prior usage frequency significantly influences their subsequent perceptions (Bolton & Lemon, 1999). Against the backdrop of the rapid commercialization of generative AI, user behavior exhibits a polarized pattern: a substantial number of high-frequency, short-term users coexist with a smaller group of low-frequency, long-term users. Based on this reality and the experimental data, this study operationally defines prior experience primarily through usage frequency, supplemented by usage duration.

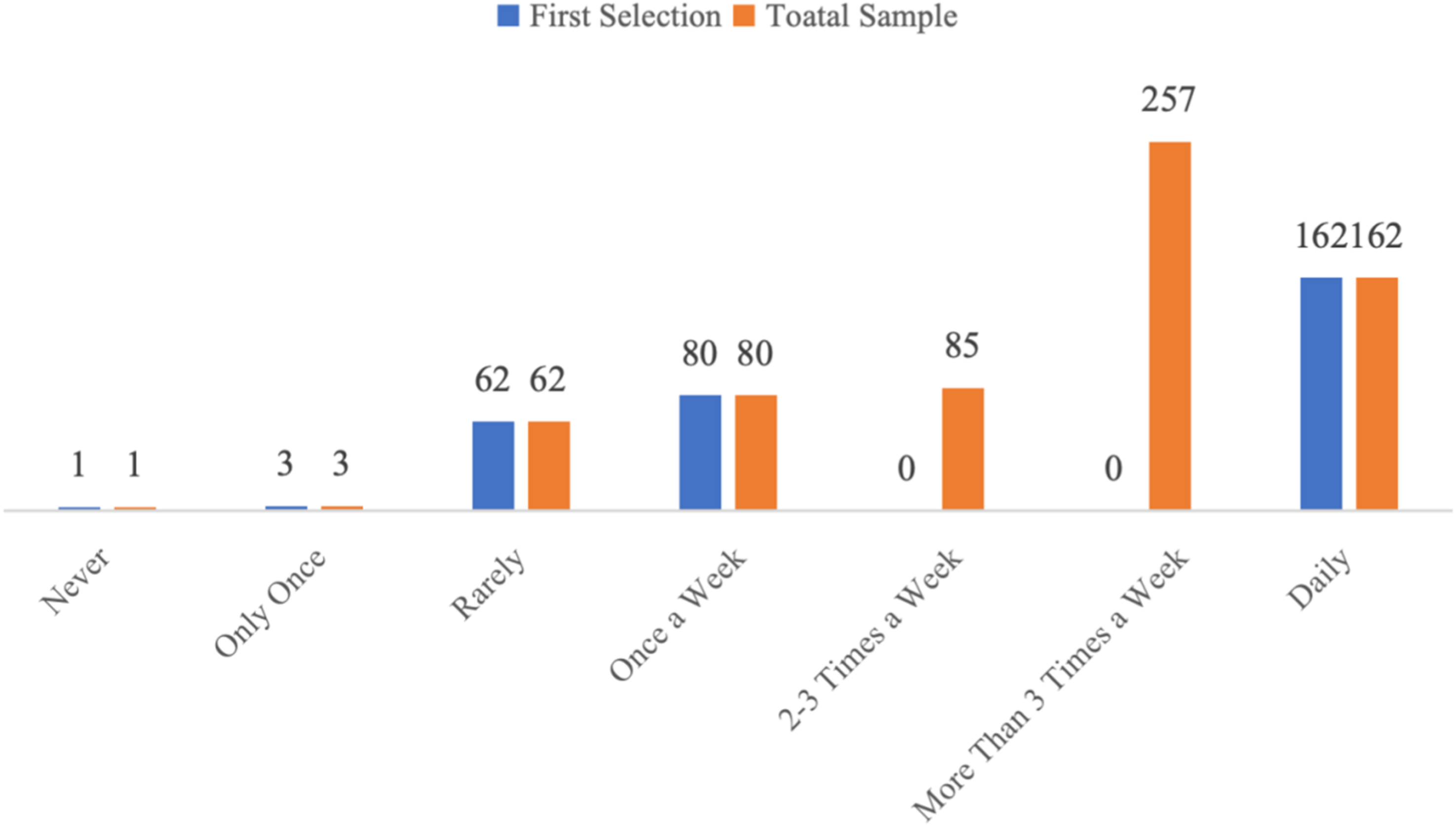

As shown in Table 1, the usage frequency of “more than three times per week” is the most common among the total sample, with some participants using generative AI tools daily. Meanwhile, participants with a usage frequency of “less than three times per week” are relatively fewer and are dispersed across a range from “never used” to “two to three times per week,” reflecting a “long-tail distribution.” To meet experimental requirements and ensure reasonable classification, this study defines prior experience according to the following criteria:

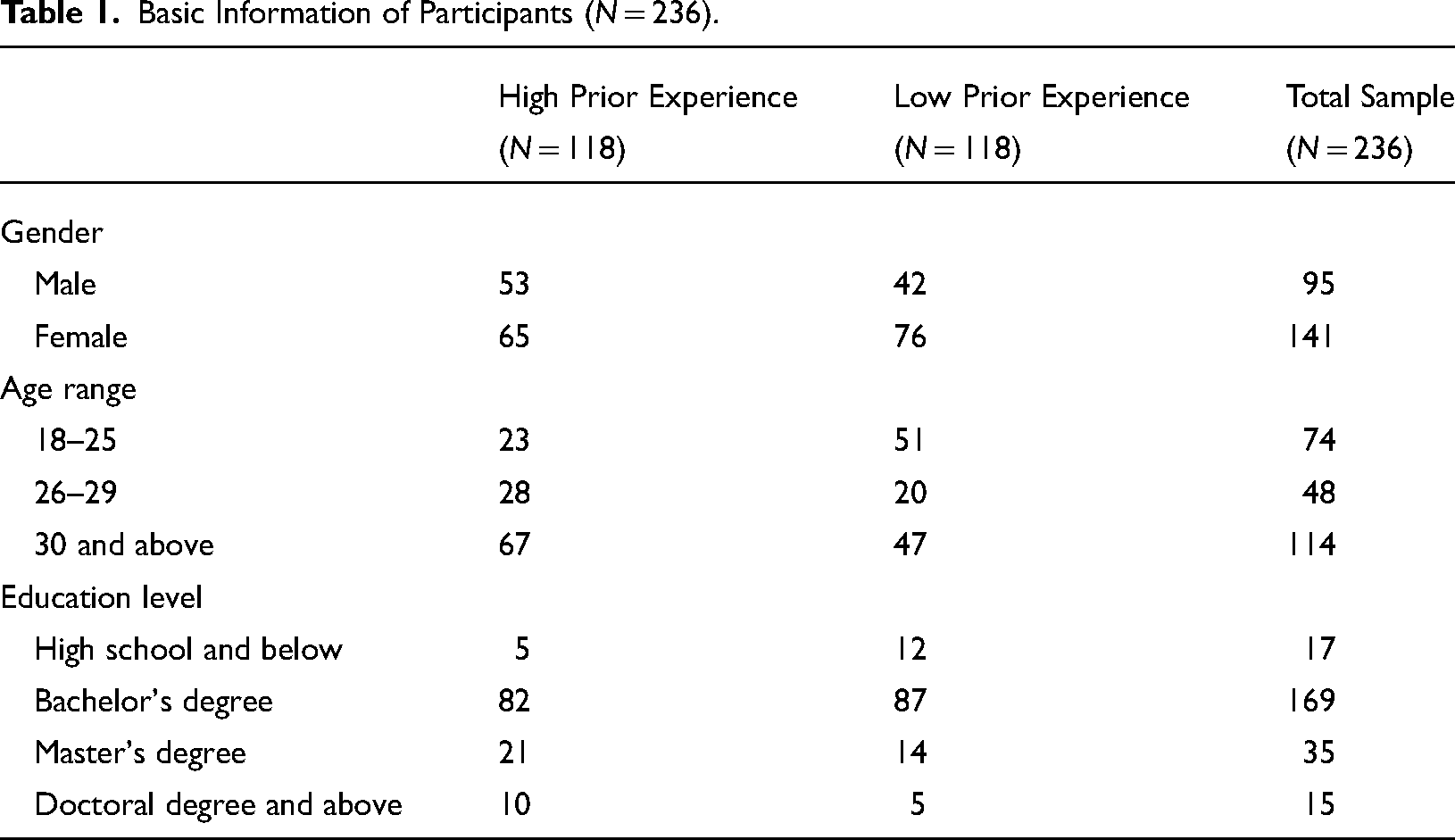

Table 1. Basic Information of Participants (N = 236).

High prior experience: Users with a daily usage frequency and more than one year of usage duration, indicating long-term and in-depth practice with generative AI.

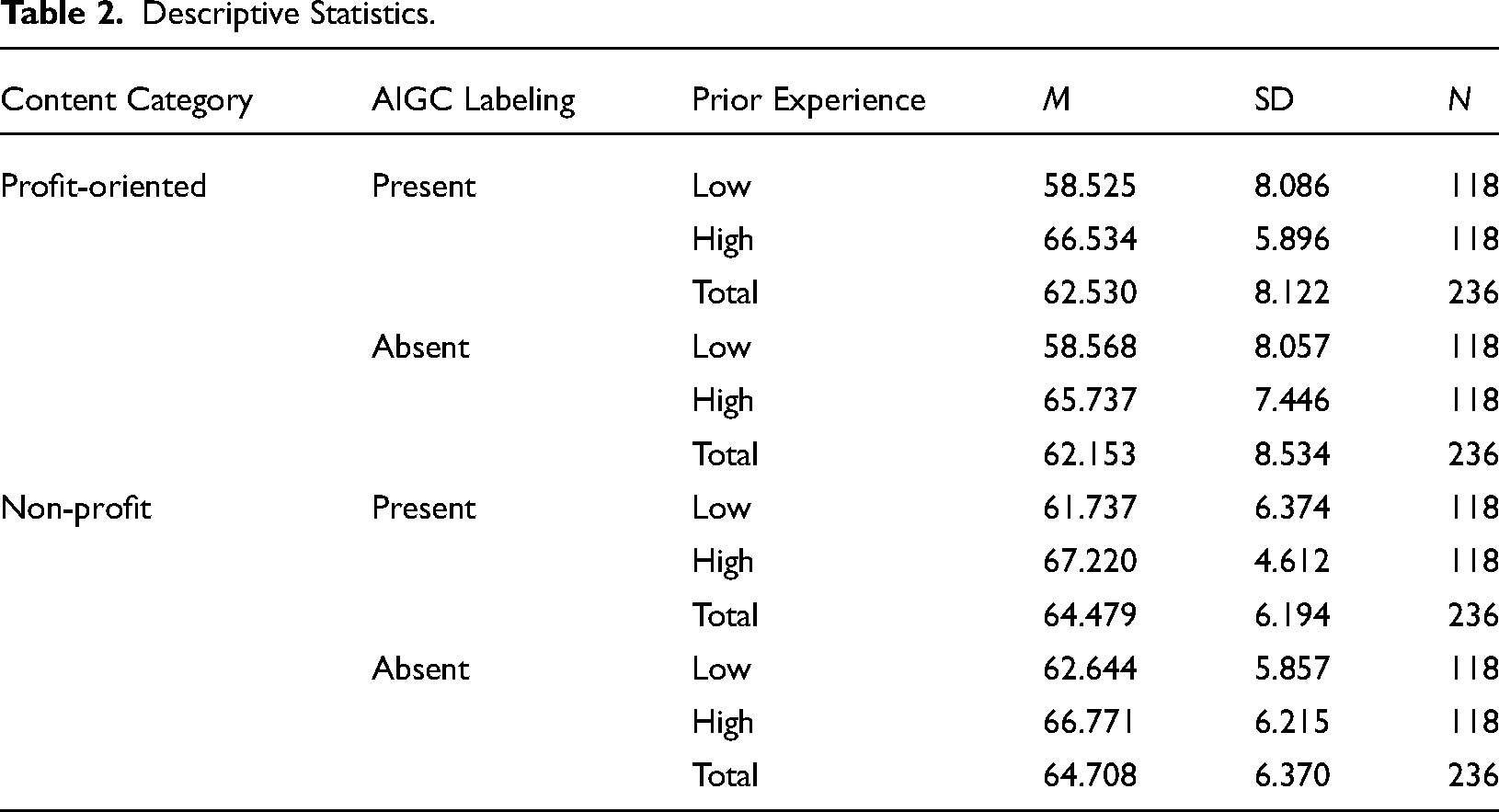

Table 2. Descriptive Statistics.

Low prior experience: Users with a usage frequency ranging from “never used” to “once a week.”
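The two criteria can be read as a simple screening rule, sketched below. Expressing usage frequency as uses per week (daily = 7, "once a week" = 1, "never used" = 0) is an assumption of this sketch, as are the function and parameter names; the questionnaire itself used categorical response options.

```python
from typing import Optional


def classify_prior_experience(uses_per_week: float, years_of_use: float) -> Optional[str]:
    """Map self-reported usage onto the two prior-experience groups.

    Returns "high", "low", or None for participants who fit neither
    definition and would therefore be excluded.
    """
    # High: daily use sustained for more than one year.
    if uses_per_week >= 7 and years_of_use > 1:
        return "high"
    # Low: anywhere from never used up to once a week.
    if uses_per_week <= 1:
        return "low"
    # Everything in between (e.g., two to three times per week) is excluded.
    return None
```

Under this reading, a participant who uses generative AI three times per week, or daily for less than a year, is assigned to neither group.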

Table 3. Independent Samples t-Test for Gender Differences in Perceived Credibility.

Participants and attention checks

Prior to the main experiment, the minimum sample size was calculated using G*Power (Faul et al., 2007). The power analysis indicated that with an effect size (f) of 0.25, a significance level (α err prob) of 0.05, and a statistical power (1-β err prob) of 0.95, a minimum of 36 participants is required, with at least 18 participants per experimental group. Considering the nature of online experiments, a larger sample size was targeted. The study targeted online users, recruiting participants from December 2023 to April 2024 via the data platform Credamo (https://www.credamo.com). Initially, 730 participants were recruited. After manually excluding 80 participants who provided random responses, 650 participants remained. Using the operational definition of prior experience, participants were categorized based on their generative AI usage frequency. As shown in Figure 2, among the 650 participants, 146 were classified as having low prior experience and 162 as having high prior experience.

Further filtering based on attention check questions left 118 participants with low prior experience and 133 with high prior experience. To balance the two groups, 118 of the 133 participants with high prior experience were randomly selected using SPSS, resulting in a final sample of 236 participants (95 males and 141 females), of whom 219 had at least a university-level education. There were some differences in gender distribution, age structure, and educational levels between the two groups, which may explain the differences in prior experience. The experiment commenced after participants signed an informed consent form, with all participants voluntarily taking part. Compensation was provided upon completion of the experiment.
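The sample funnel and the balancing step can be traced with a few lines of arithmetic. The counts below are those reported in the text; the participant IDs, the seed, and the `balance_groups` helper are hypothetical, and the actual random selection was carried out in SPSS rather than with this code.

```python
import random

recruited = 730
after_random_response_exclusion = recruited - 80   # 650 remain
classified = {"low": 146, "high": 162}             # others fit neither group
after_attention_check = {"low": 118, "high": 133}


def balance_groups(groups, seed=0):
    """Randomly downsample every group to the size of the smallest one."""
    rng = random.Random(seed)
    n = min(len(ids) for ids in groups.values())
    return {name: sorted(rng.sample(ids, n)) for name, ids in groups.items()}


# Hypothetical participant IDs standing in for the real records.
ids = {"low": list(range(118)), "high": list(range(1000, 1133))}
balanced = balance_groups(ids)
final_n = sum(len(members) for members in balanced.values())   # 118 + 118
```

Downsampling the larger group keeps the two between-subjects cells equal in size, at the cost of discarding 15 otherwise valid high-experience responses.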

Table 4. Pairwise Comparisons for Age Differences in Perceived Credibility.

Note. * indicates statistical significance (P < .05).

Materials

AIGC labeling

AIGC labeling is a central focus of this study. The first issue to address is the format and placement of the AIGC label. Social media platforms such as Douyin (TikTok) and Xiaohongshu use labels like: “Content suspected to be AI-generated, please verify carefully,” “Author declaration: Content generated by AI,” and “Suspected to contain AI-generated information, please verify authenticity.” Existing AIGC labels can thus be categorized as either accuracy prompts or author declarations indicating AI involvement in content generation.

Unlike accuracy prompts, which advise users to verify content carefully, the AIGC label in this study simply informs users that the content involves AI generation without suggesting skepticism. It also differs from author declarations: rather than relying on authors to disclose AI involvement, it restructures the online environment so that the label itself serves an informative function. Based on the existing labeling used by social media platforms, the AIGC label in this study reads: “⚠ (a warning sign) Content generated by AI,” positioned at the lower left corner of the AIGC.

Content category

As this study focuses on AIGC labeling, the specific AIGC must be produced using generative AI to ensure sufficient external validity. The AIGC labeling needs to function in conjunction with specific content categories, and a common classification standard is the distinction between profit-oriented and non-profit content (Dineva et al., 2020; Sánchez-Torné et al., 2023). Based on this classification, content categories are divided into profit-oriented and non-profit content.

Profit-oriented content, represented by advertisements, is generated using ChatGPT. These advertisements are based on fictional brands and categorized according to clothing, food, housing, and transportation. Detailed content and prompts are provided in Appendix A. Non-profit content, represented by news, is synthesized using summaries from four major news platforms (Xinhua News Agency, China News Service, People's Daily, and The Paper; see Appendix D). The common news topics identified from these platforms include sports, military, finance, technology, politics, culture and tourism, ecology, and international news. Baidu's AI model Wenxin Yiyan, known for its proficiency in processing Chinese language materials, is used to generate fictional news content. Detailed content and prompts are available in Appendix A. The average word count for profit-oriented content is 557 words, while non-profit content averages 638 words. This difference is considered negligible given the fast reading speed of human participants (Rayner et al., 2010).

Outcome measures

Building on several existing credibility scales (Appelman & Sundar, 2016; Flanagin & Metzger, 2007; Winter & Krämer, 2014), Huschens et al. (2023) developed a perceived credibility scale specifically designed to measure trust in AIGC through a series of pretests (N = 60) and formal tests (N = 606). This scale encompasses four dimensions: competence, trustworthiness, clarity, and engagement, with Cronbach's α ranging from 0.79 to 0.91, and robust CFA results supporting its reliability and validity (Huschens et al., 2023).

For this study, we adopted this scale to measure perceived credibility. The scale was first translated by a doctoral student in education (certified with China's TEM-8 English proficiency). It comprises 11 items presented in a 7-point Likert format, including statements such as accurate, complete, knowledgeable, trustworthy, reliable, clear, confusing, understandable, interesting, and maintaining attention. The first six items primarily measure source credibility, while the latter five assess information credibility (Huschens et al., 2023). Scores are summed to range from 11 to 77, with higher scores indicating higher perceived credibility. The item confusing is reverse-coded.
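The scoring rule can be sketched as follows: eleven 7-point responses are summed after reverse-coding the item "confusing", which gives the stated range of 11 to 77. The position of the reverse-coded item within the questionnaire is not given in the text, so `reverse_index` is an assumption that the caller must supply, and the function name is illustrative.

```python
from typing import Sequence

N_ITEMS = 11
SCALE_MIN, SCALE_MAX = 1, 7


def credibility_score(responses: Sequence[int], reverse_index: int) -> int:
    """Sum eleven Likert responses, reverse-coding one item.

    reverse_index is the zero-based position of the "confusing" item;
    its actual position in the questionnaire is an assumption here.
    """
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} responses, got {len(responses)}")
    total = 0
    for i, response in enumerate(responses):
        if not SCALE_MIN <= response <= SCALE_MAX:
            raise ValueError(f"response {response} is outside the 1-7 range")
        # Reverse-coding maps 1 -> 7, 2 -> 6, ..., 7 -> 1.
        total += (SCALE_MIN + SCALE_MAX - response) if i == reverse_index else response
    return total
```

A respondent who rates every positive item 7 and "confusing" 1 reaches the maximum of 77, while a uniform midpoint response of 4 yields 44.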

In the pretest phase (N = 11), no issues were identified with the translation or adaptation of the scale. During the formal measurement phase (N = 236), the scale demonstrated good reliability and validity, with a Cronbach's α of 0.845. The Cronbach's α values after item deletion ranged from 0.821 to 0.848. The Kaiser–Meyer–Olkin (KMO) value was 0.912, and Bartlett's test of sphericity was significant (P < .001). These results confirm the scale's appropriateness for the present study.
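For readers unfamiliar with the reliability coefficient reported here, a minimal sketch of Cronbach's α follows, using the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores). The toy data in the usage note are invented for illustration and are not the study's responses; the study's own value was computed in SPSS.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items score matrix.

    Each row is one respondent; each column is one scale item.
    Sample variances (n - 1 denominator) are used throughout.
    """
    k = len(rows[0])
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)
```

With three invented respondents answering two items, `cronbach_alpha([[1, 2], [2, 3], [3, 5]])` evaluates to 18/19, about 0.947; items that move together push α toward 1.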

Demographic questions

At the end of the experiment, participants are asked about their demographic information, including gender (male, female), age (under 18, 18–25, 26–29, 30 and above), date of birth (specific date selection), educational level (high school or below, bachelor's degree, master's degree, doctoral degree or above), and their primary city of residence (provincial and municipal selection).

Statistical analysis

Statistical analysis is performed using SPSS (version 29; IBM Corp). Initially, descriptive analyses are conducted on demographic and outcome data. Subsequently, t-tests and one-way analysis of variance (ANOVA) are used to compare the effects of gender, age, and education level on perceived credibility. Finally, a repeated-measures ANOVA is conducted to analyze the effects of prior experience (high or low), AIGC labeling (present or absent), and content category (profit-oriented or non-profit) on perceived credibility. When interaction effects are significant, simple effects analysis is performed to understand specific differences. The results of the repeated-measures ANOVA and simple effects tests are reported in the results section.

Results

Descriptive statistics among variables

Based on the measurements of the four sets of materials, the perceived credibility of all four sets is relatively high among participants. Specifically, as shown in Table 2, the highest perceived credibility is observed in the non-profit content with the AIGC labeling, with an average score of 67.220. Conversely, the lowest perceived credibility is noted in the profit-oriented content without the AIGC labeling, with an average score of 62.153. These results indicate that participants generally perceive the credibility of online content to be quite high.

Demographic characteristics and perceived credibility

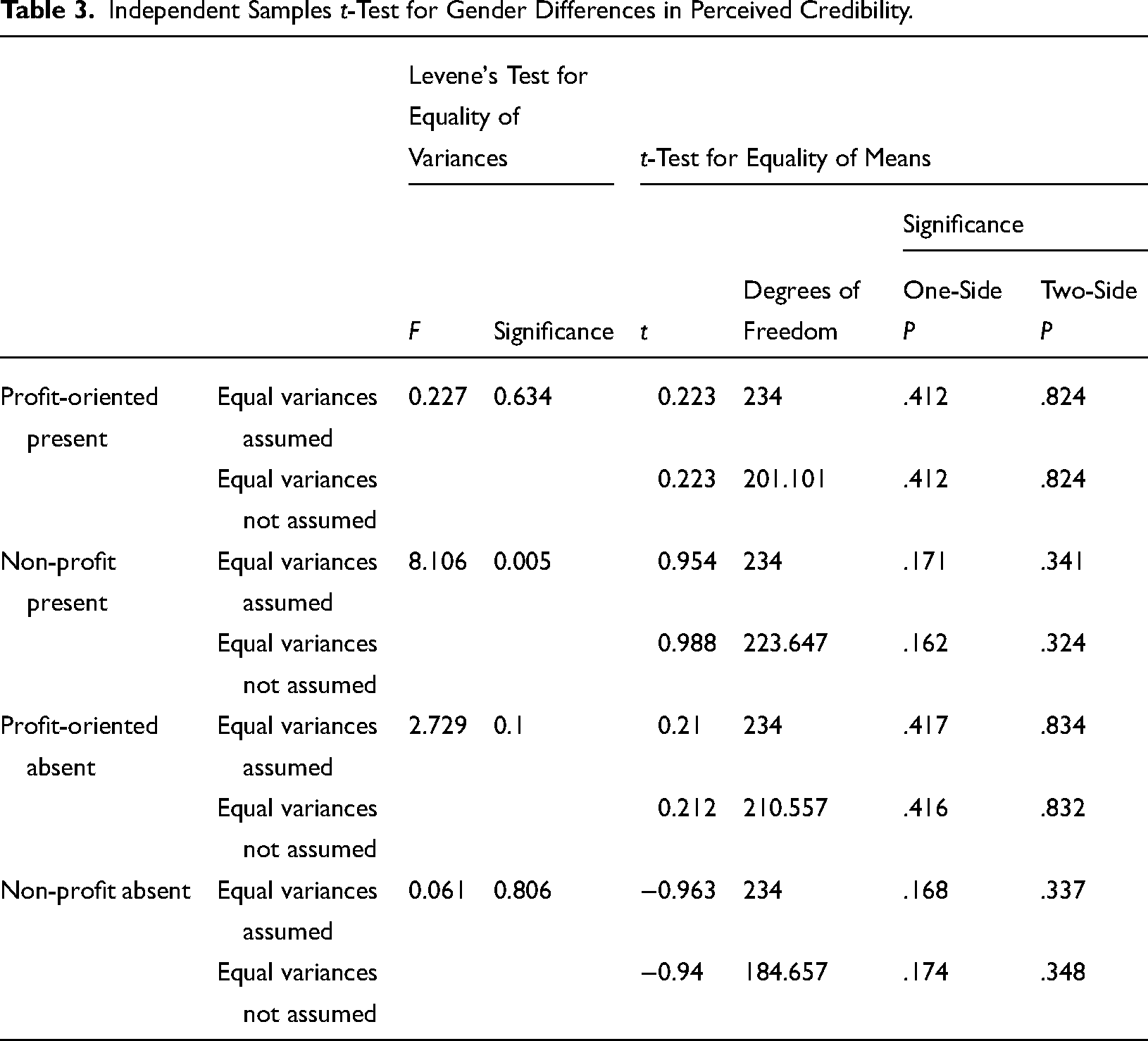

Gender

An independent samples t-test was conducted to examine the effect of gender on perceived credibility. As shown in Table 3, the results were not significant (P > .05), indicating that gender does not significantly influence perceived credibility.

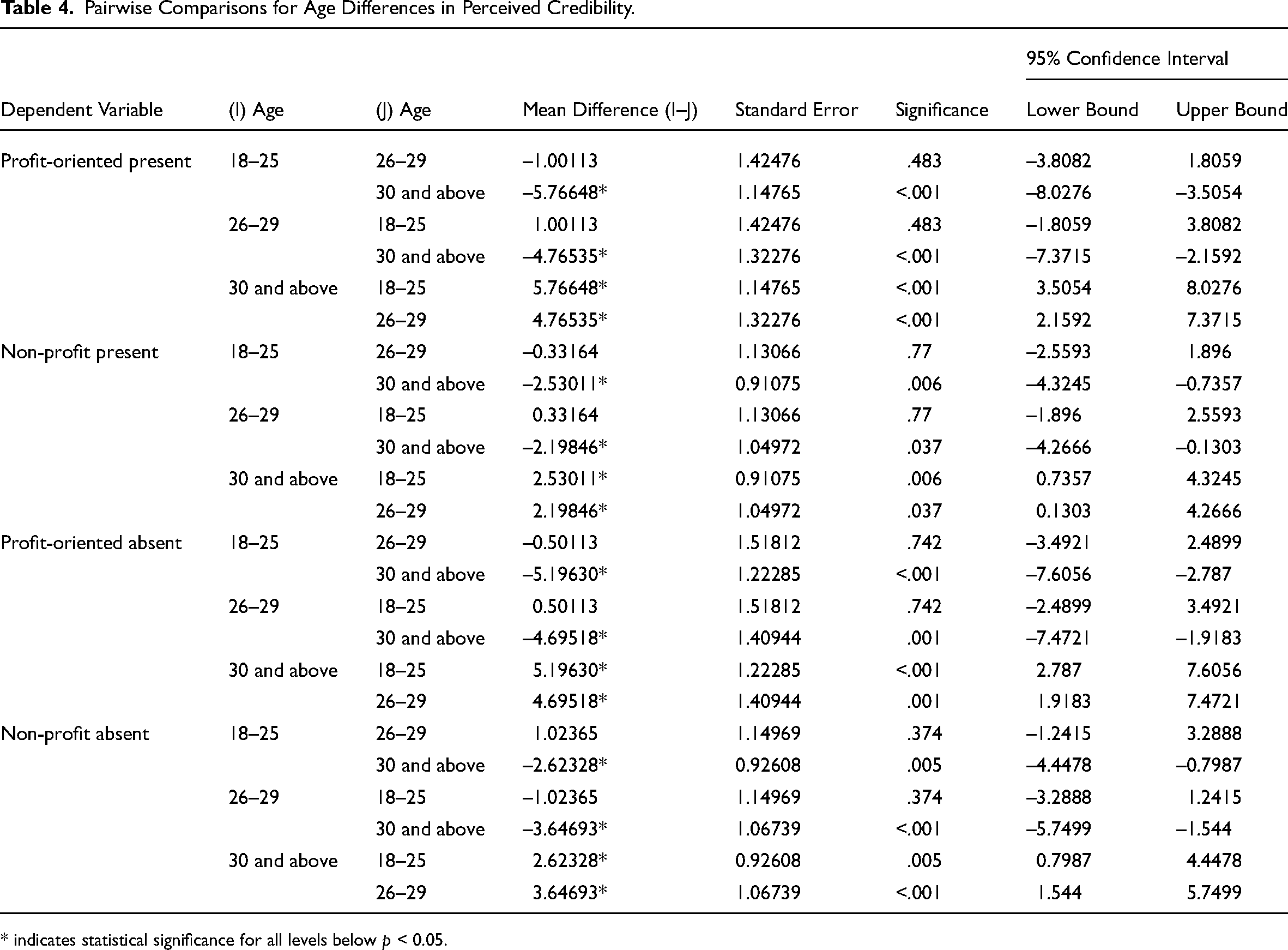

Age

A one-way ANOVA was performed to analyze the impact of age on perceived credibility. The results showed that age differences significantly affect perceived credibility. Specifically, participants aged 30 and above exhibited significantly different perceived credibility compared to the other two age groups, while no significant differences were observed between the 18–25 and 26–29 age groups. For all four sets of experimental materials, significant differences were found between participants aged 30 and above and those aged 18–25 (P < .001, P = .006, P < .001, P = .005) and between participants aged 30 and above and those aged 26–29 (P < .001, P = .037, P = .001, P < .001). As shown in Table 4, participants aged 30 and above had higher perceived credibility scores.

Table 5. One-Way ANOVA Analysis for Educational Differences.

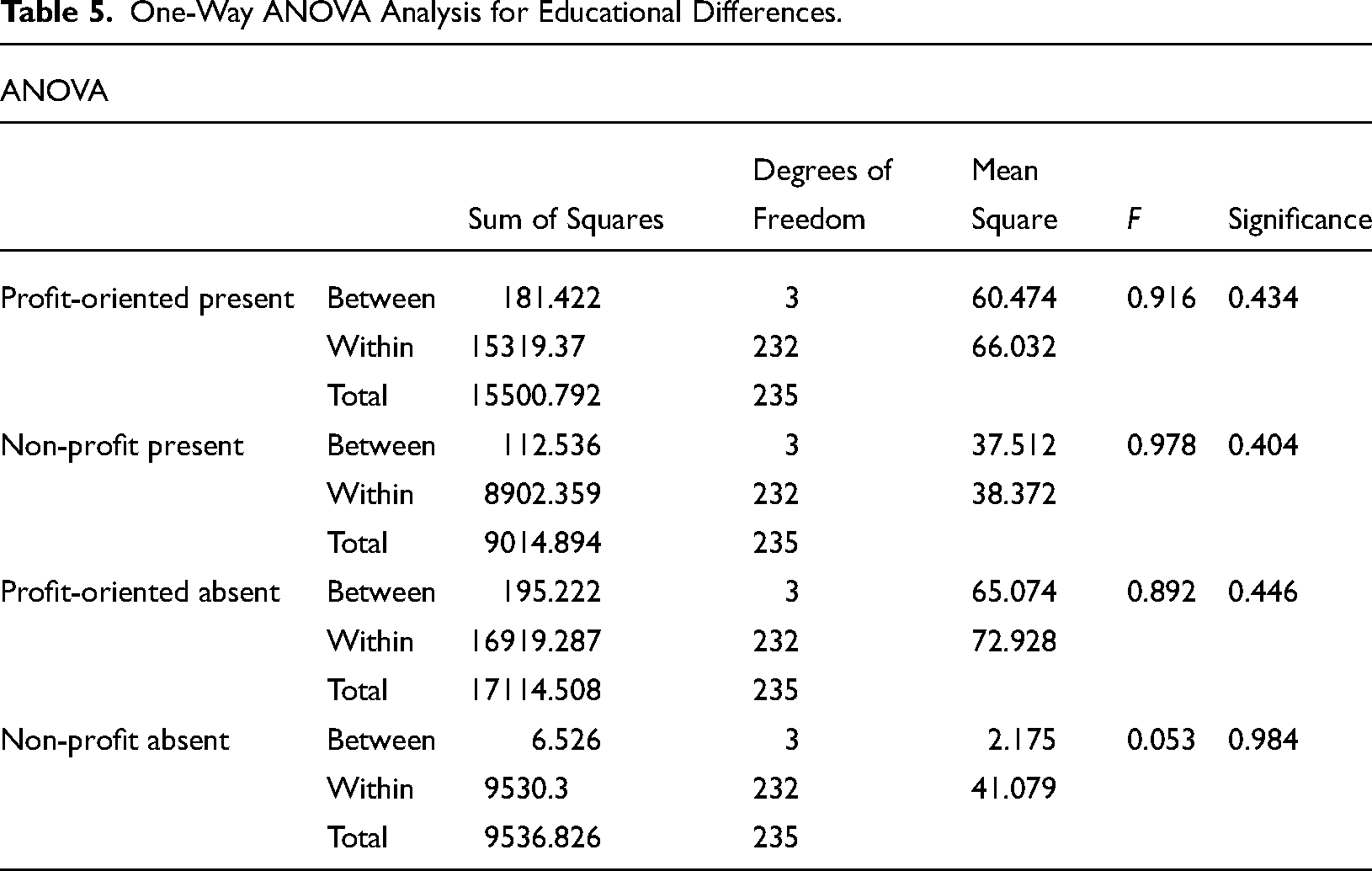

Education

The results of the one-way ANOVA for education level, as shown in Table 5, indicate that educational attainment does not significantly affect perceived credibility (P > .05).

In summary, age differences significantly influence perceived credibility, while gender and educational level do not.

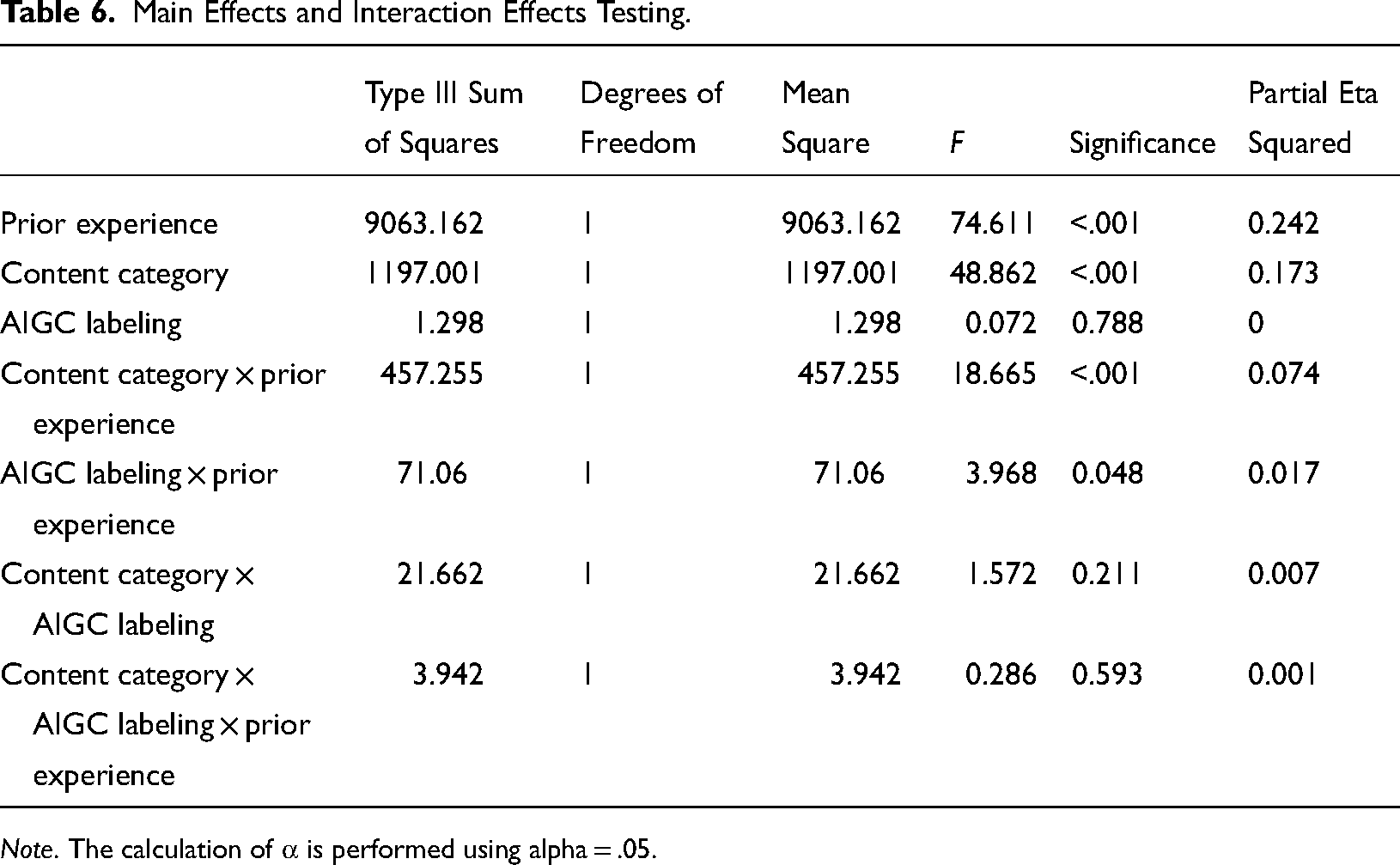

AIGC labeling, prior experience, content category and perceived credibility

Main effects. As shown in Table 6, the main effect of prior experience is significant (F(1, 234) = 74.611, P < .001, η² = 0.242). Participants with high prior experience (M = 66.566, SD = 0.507) report significantly higher perceived credibility than participants with low prior experience (M = 60.369, SD = 0.507). The main effect of content category is also significant (F(1, 234) = 48.862, P < .001, η² = 0.173): participants perceive non-profit content (M = 64.593, SD = 0.338) as significantly more credible than profit-oriented content (M = 62.341, SD = 0.442). However, the main effect of AIGC labeling is not significant. Thus, prior experience and content category significantly influence perceived credibility, whereas the presence or absence of an AIGC label does not.

Main Effects and Interaction Effects Testing.

Note. Significance was evaluated at α = .05.

Interaction effects and simple effects analysis. The interaction between content category and prior experience is significant (F(1, 234) = 18.665, P < .001, η² = 0.074), as is the interaction between AIGC labeling and prior experience (F(1, 234) = 3.968, P = .048, η² = 0.017). Both pairs of factors therefore jointly influence perceived credibility.
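The reported effect sizes can be cross-checked against the reported F statistics. The sketch below assumes that the η² values are partial eta squared (the text does not state which variant was used) and applies the standard identity η²p = F × df_effect / (F × df_effect + df_error). The function name `partial_eta_squared` is illustrative and is not part of the original analysis.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F statistics and effect sizes as reported in the text, all with df = (1, 234).
reported = {
    "prior experience": (74.611, 0.242),
    "content category": (48.862, 0.173),
    "content category x prior experience": (18.665, 0.074),
    "AIGC labeling x prior experience": (3.968, 0.017),
}

for effect, (f_value, reported_eta) in reported.items():
    computed = partial_eta_squared(f_value, df_effect=1, df_error=234)
    print(f"{effect}: computed {computed:.3f}, reported {reported_eta:.3f}")
```

Under that assumption, each recomputed value rounds to the effect size given in the text.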

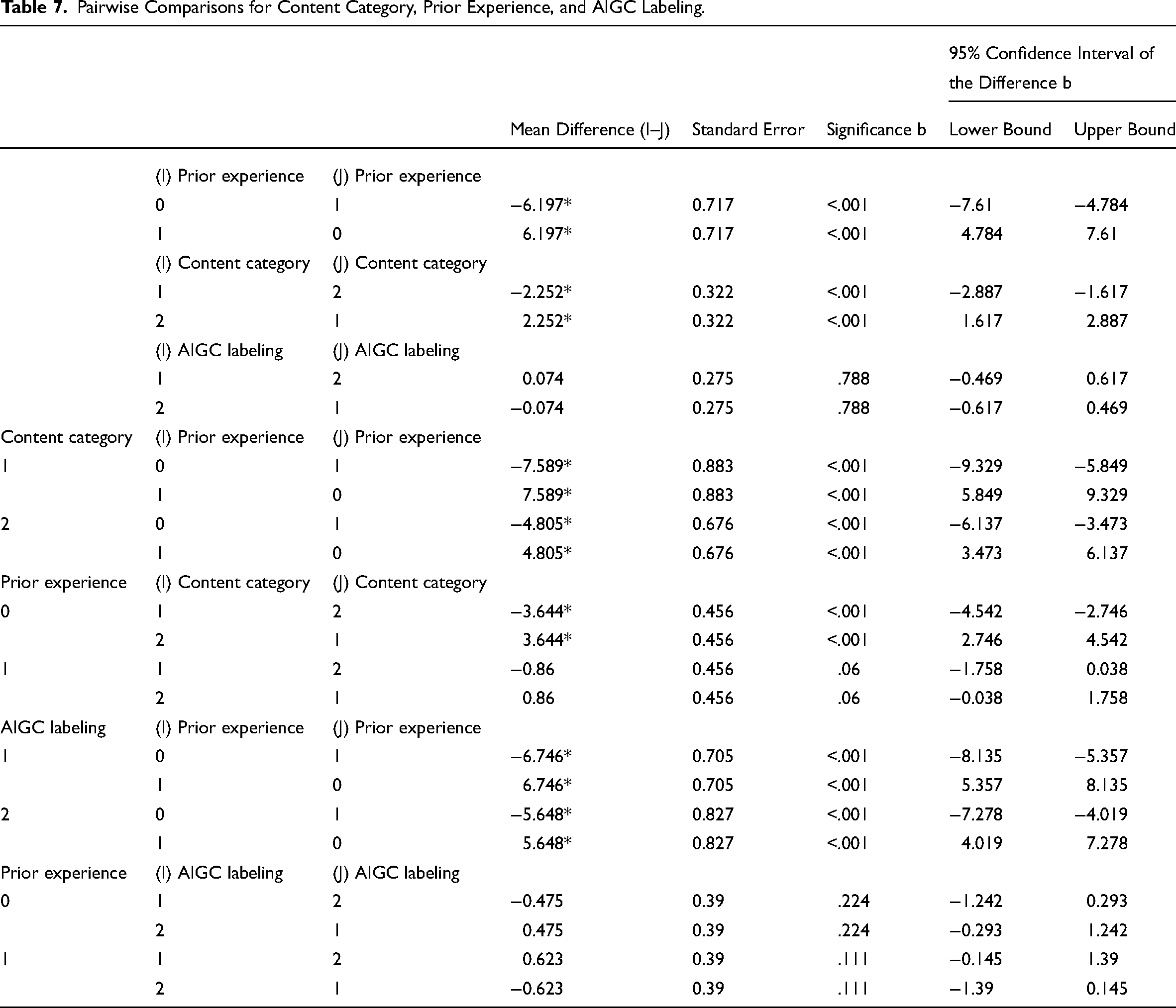

Content category and prior experience. As shown in Table 7, Figure 3, and Figure 4, participants with high prior experience perceive profit-oriented content as significantly more credible than participants with low prior experience do, with an average difference of 7.589 (P < .001). The same pattern holds for non-profit content, with an average difference of 4.805 (P < .001). Among participants with low prior experience, non-profit content is perceived as significantly more credible than profit-oriented content, with an average difference of 3.644 (P < .001). Among participants with high prior experience, however, the difference between non-profit and profit-oriented content is not statistically significant, with an average difference of 0.86 (P = .06). Thus, as prior experience increases, the credibility gap between non-profit and profit-oriented content narrows: non-profit content is still rated slightly higher, but the difference is no longer statistically significant.

Pairwise Comparisons for Content Category, Prior Experience, and AIGC Labeling.

AIGC labeling and prior experience. As shown in Table 7, Figure 5, and Figure 6, there is a significant difference in perceived credibility between participants with high and low prior experience when AIGC labeling is present, with an average difference of 6.746 (P < .001). Similarly, when AIGC labeling is absent, there is a significant difference of 5.648 (P < .001) between participants with high and low prior experience. This indicates that the presence or absence of AIGC labeling does not change the significant difference in perceived credibility based on prior experience, though the difference is slightly larger when AIGC labeling is present. For participants with low prior experience, the difference in perceived credibility between content with and without AIGC labeling is 0.475, which is not statistically significant. Similarly, for participants with high prior experience, the difference is −0.623, which is also not statistically significant. These findings suggest that the presence of AIGC labeling does not significantly affect perceived credibility, regardless of the level of prior experience.
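The simple-effect differences reported for both interactions can be checked for internal consistency with the main effects. The sketch below assumes an equally weighted 2 × 2 breakdown, in which the main-effect gap equals the average of the two simple-effect gaps and the interaction contrast is the same whichever factor is held fixed. All variable names are illustrative, the numbers are taken from the text, and the label differences are used with the signs as reported.

```python
# Gaps between high and low prior experience, as reported in the text.
gap_profit, gap_nonprofit = 7.589, 4.805      # by content category
gap_labeled, gap_unlabeled = 6.746, 5.648     # by AIGC labeling

# Marginal means for the main effect of prior experience.
mean_high, mean_low = 66.566, 60.369
main_gap = mean_high - mean_low

# With equal weighting, the main-effect gap is the average of the simple-effect gaps.
avg_by_category = (gap_profit + gap_nonprofit) / 2
avg_by_label = (gap_labeled + gap_unlabeled) / 2

# Interaction contrasts computed along each factor should agree.
category_gap_low, category_gap_high = 3.644, 0.86   # non-profit vs profit-oriented
label_diff_low, label_diff_high = 0.475, -0.623     # signs as reported

contrast_category = gap_profit - gap_nonprofit
contrast_category_alt = category_gap_low - category_gap_high
contrast_label = gap_labeled - gap_unlabeled
contrast_label_alt = label_diff_low - label_diff_high

print(round(main_gap, 3), round(avg_by_category, 3), round(avg_by_label, 3))
print(round(contrast_category, 3), round(contrast_category_alt, 3))
print(round(contrast_label, 3), round(contrast_label_alt, 3))
```

Each line of output prints values that agree to three decimals, so the reported simple effects are consistent with the reported marginal means under the equal-weighting assumption.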

Discussion

Principal findings

First, AIGC labels represent a feasible and replicable nudging intervention. This study finds that the presence of AIGC labels does not significantly impact users’ trust, meaning people do not lose trust in content or posts with AIGC labels, nor in the media platforms hosting such content. AIGC labels effectively address the issue of distinguishing AIGC from HGC (Kreps et al., 2022). Cognitive neuroscience evidence further suggests that AIGC labels enhance cognitive processing, enabling users to make more deliberate judgments (Liu et al., 2023), which aligns with the primary objective of this research. Another possible explanation for the labels’ effects is the “default trust bias,” where users may interpret content as credible without engaging in deeper verification (Kuhn et al., 2023). However, this interpretation conflicts with the cognitive neuroscience evidence. Our findings indicate that AIGC labels cause slight changes in perception, but these changes are not statistically significant. Given the elusive nature of trust as a variable, there may be more complex mechanisms at play.

Second, AIGC labels may serve as a tool to reduce digital inequality. From a long-term perspective, they can enhance users’ exposure to and understanding of AIGC. As generative AI becomes increasingly commercialized, AIGC will become more prevalent online. Labeling such content with AIGC tags could effectively increase users’ prior experience with generative AI, boosting trust in both the content and the platforms hosting it. Mrkva et al. (2021) show that choice architecture (nudging) significantly affects decision-making, with consumers of lower socioeconomic status benefiting more from nudges than their higher-status counterparts. Today, anyone with internet access can utilize generative AI tools, learn effective prompting skills (Giray, 2023), and harness AI to assist in various tasks (Meskó, 2023). AIGC labels thus have the potential to democratize access to AI and improve digital literacy across diverse populations.

Third, AIGC labels expand the discussion of traditional alignment theories by introducing a focus on the “explicit dimension” from the perspective of human–computer interaction. Traditional alignment theories predominantly emphasize the algorithm itself, including value alignment (Gabriel, 2020), moral alignment (Liang et al., 2021), and ethical considerations (Weidinger et al., 2023). However, alignment can also be considered through the lens of human–computer interaction interface design (Terry et al., 2023). If traditional alignment theories focus on the “implicit dimension,” human–computer interaction alignment broadens the theoretical boundary to the “explicit dimension,” offering more intuitive and actionable value. AIGC labels exemplify this approach by enhancing users’ ability to distinguish between AIGC and HGC through an interactive interface. By informing users that content is AI-generated, the labels empower them with knowledge and indirectly enhance their ability to differentiate content types.

Fourth, as suggested in previous research on nudging interventions like warning labels, there is a potential “implied truth effect” associated with nudging (Pennycook et al., 2020). This effect occurs when, in the absence of warning labels, people may assume that information is true. Similarly, AIGC labels may also exhibit an implied truth effect. The experimental results reveal that in the interaction between AIGC labels and prior experience, users with limited prior experience perceive higher credibility in the absence of labels, while those with extensive prior experience perceive higher credibility in the presence of labels. Although these differences are not statistically significant, they indicate that the implied truth effect could be a relatively universal phenomenon in nudging interventions. Furthermore, prior experience appears to be a crucial factor influencing whether the implied truth effect takes hold, with users who have greater prior experience being more susceptible to it.

Practical implications

Despite certain limitations, this study offers valuable practical insights for media platforms, users, and governments.

Practical guidance for media platforms

The findings highlight that users’ prior experience and content types significantly influence their interpretation of AIGC labels. Platforms can leverage this understanding to customize generative AI labels based on user characteristics. For high-experience users, labels can include more technical details, such as the generative model used and the data sources. For low-experience users, labels should be simplified, incorporating visual aids like colors or dynamic icons to enhance clarity and intuitiveness.

In terms of content distribution strategies, for nonprofit content, labels can emphasize educational functions to encourage active user engagement. For commercial content, labels should provide detailed disclosures about the AI-generated information to reduce user skepticism. These approaches enhance platforms’ ability to manage content transparency, allowing businesses to achieve more precise audience targeting and improving content dissemination effectiveness (Sunstein, 2022). AIGC labels thus become a powerful tool to support targeted communication strategies.

Enhancing user experience

The study reveals that users with limited prior experience struggle to interpret labels effectively, indicating the need for platforms to invest in user education. Developing educational modules, such as short videos or interactive tutorials, can improve users’ understanding of AIGC labels. Platforms can also provide feedback mechanisms, such as credibility ratings, to guide user interpretation.

Enhancing user education can mitigate the risk of misinformation and optimize user experience. Additionally, integrating interactive label designs—such as “click to learn more” features—can foster greater user engagement during content consumption, maximizing the nudging potential of labels for diverse user groups (Thunström, 2019).

Implications for government policies and regulations

This study provides a theoretical foundation for establishing industry standards for AIGC labels. Governments and industry associations should work toward formulating unified labeling guidelines to standardize the identification of generative AI content. Encouraging businesses to adopt transparent label designs can reduce the risk of misinformation and misleading content.

Such measures would help build a transparent and trustworthy digital communication environment, curbing the spread of false and misleading information. Drawing on nudging theory, this study demonstrates that label design can serve as an effective policy intervention. By incorporating labels as a regulatory tool within information governance frameworks, policymakers can promote compliance among content platforms and foster the sustainable development of the digital ecosystem.

Limitations and future research

This study acknowledges several limitations, which provide opportunities for future research:

1. Simulated experimental environment

The simulated experimental environment used in this study may not fully capture the complexity of user interactions on actual social media platforms, such as the effects of liking, commenting, and sharing on content credibility. Future research could incorporate more realistic interaction scenarios to better reflect the nuanced dynamics of social media usage.

2. Sequence effect

The study did not strictly control for sequence effects in the experimental design. While repeated-measures ANOVA was used to account for sequence effects as a covariate, and the results showed no significant impact, improvements to the experimental design are necessary. For instance, employing a Latin square design could better balance sequence effects in future experiments. Additionally, while the operational definition of prior experience in this study is theoretically grounded, it lacks direct support from authoritative literature, warranting further refinement.

3. Design of AIGC labels

This study did not explore the content, style, or placement of AIGC labels, leaving questions about the optimal design unanswered. Effective AIGC labels should inform users without imposing excessive cognitive demands or diverting attention, to avoid negative outcomes like cognitive overload. Future research should examine how different label designs influence user trust and engagement, identifying best practices for AIGC label implementation.

4. Sample limitations

The study sample is not globally representative, which may limit the applicability of the proposed AIGC label guidelines for diverse internet users worldwide. Future research should include larger and more diverse samples, considering unique cultural, religious, and social contexts across different countries to ensure the global relevance of the findings.
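The Latin square counterbalancing suggested under the sequence-effect limitation could be set up as follows. This is a minimal sketch, not the procedure used in this study: it builds a balanced (Williams-type) Latin square for an even number of conditions, so that each condition appears once in every position and follows every other condition exactly once. The condition labels and the helper names `balanced_latin_square` and `order_for` are hypothetical.

```python
def balanced_latin_square(n: int) -> list[list[int]]:
    """Return an n x n balanced Latin square of condition indices (n must be even)."""
    if n < 2 or n % 2 != 0:
        raise ValueError("this construction requires an even number of conditions")
    # First row follows the pattern 0, 1, n-1, 2, n-2, ...
    first_row = [0]
    low, high = 1, n - 1
    for position in range(1, n):
        if position % 2 == 1:
            first_row.append(low)
            low += 1
        else:
            first_row.append(high)
            high -= 1
    # Every other row shifts the first row by a constant, modulo n.
    return [[(c + shift) % n for c in first_row] for shift in range(n)]


# Hypothetical labels for the four sets of experimental materials.
conditions = [
    "non-profit, labeled",
    "non-profit, unlabeled",
    "profit-oriented, labeled",
    "profit-oriented, unlabeled",
]
square = balanced_latin_square(len(conditions))


def order_for(participant_index: int) -> list[str]:
    """Assign presentation orders by cycling participants through the rows."""
    row = square[participant_index % len(square)]
    return [conditions[i] for i in row]


for participant in range(4):
    print(participant, order_for(participant))
```

Cycling participants through the rows keeps the orders evenly distributed whenever the sample size is a multiple of the number of conditions.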

Conclusion

This study explores whether AIGC labels influence human trust and whether this impact is moderated by individual traits (such as prior experience) and content categories. The findings indicate that AIGC labels are a practical and effective nudging intervention, helping users differentiate between AIGC and HGC without significantly affecting trust. However, a “one-size-fits-all” labeling approach is not recommended. Instead, AIGC labeling strategies should be tailored to users’ individual characteristics, content category differences, and developmental stages.

First, regarding user traits, AIGC labels should be used more strategically for users with limited prior experience, while for those with extensive prior experience, a more universal approach may be appropriate. However, caution is needed to address potential implied truth effects.

Second, regarding content categories, for commercial content, it may be worth considering reducing the prominence of AIGC labels or adopting more subtle approaches, such as invisible watermarks (Jiang et al., 2023), to minimize trust disparities. For nonprofit content, greater platform involvement may be required to ensure quality control from the source.

At present, AIGC labels do not cause significant differences in trust and remain a viable nudging intervention. They can help users build awareness of AIGC, indirectly enhancing prior experience. However, the long-term effects of AIGC labels require further investigation.

Footnotes

Acknowledgments

This research was supported by the National Social Science Fund of China (24BXW041) and the Beijing Normal University Student Growth and Development Research Program Key Project (BNUTSQHJH24ZD11).

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical statement

This study received ethical approval from the Ethics Committee of the School of Journalism and Communication at Beijing Normal University on April 22, 2024 (Approval No: BNUJ&C20240422002). Prior to participation, informed consent was obtained from all participants. They were informed that their data would be used anonymously, that they could withdraw from the study at any time without penalty, and that no identifiable personal information would be collected or reported. To ensure confidentiality, all data were anonymized during collection and reporting.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Beijing Normal University Student Growth and Development Research Program Key Project (grant number BNUTSQHJH24ZD11) and the National Social Science Fund of China (grant number 24BXW041).