Abstract

The practice of fact-checking involves using technological tools to monitor online disinformation, gather information, and verify content. How do fact-checkers in the Nordic region engage with these technologies, especially artificial intelligence (AI) and generative AI (GAI) systems? Using the theory of affordances as an analytical framework for understanding the factors that influence technology adoption, this exploratory study draws on insights from interviews with 17 professionals from four Nordic fact-checking organizations. Results show that while AI technologies offer valuable functionalities, fact-checkers remain critical and cautious, particularly toward AI, due to concerns about accuracy and reliability. Despite acknowledging the potential of AI to augment human expertise and streamline specific tasks, these concerns limit its wider use. Nordic fact-checkers show openness to integrating advanced AI technology but emphasize the need for a collaborative approach that combines the strengths of both humans and AI. As a result, AI and GAI-based solutions are framed as “enablers” rather than comprehensive or end-to-end solutions, recognizing their limitations in replacing or augmenting complex human cognitive skills.

Introduction

The role of fact-checking organizations has become increasingly important in recent decades, as journalism has grappled with radical changes in the media environment, both internally and externally. The concept of fact-checking encompasses both simple and complex definitions, ranging from straightforward claim verification to a nuanced process that involves different stages such as identifying claims, verifying them, and then creating and disseminating an argument that either confirms or disconfirms the claim (Graves & Amazeen, 2019; Nakov et al., 2021). Fact-checking shares many similarities with the verification practices inherent to journalism: it was first assimilated into internal procedures for verifying facts prior to publication before becoming an independent subgenre of journalism (Graves & Amazeen, 2019; Mena, 2019; Micallef et al., 2022; Singer, 2021).

Fact-checking and journalism are associated with the pursuit of truth, a widely accepted ethical standard underpinned by principles of accuracy, fairness, and integrity (Ward, 2005). These principles are associated with the time-consuming nature of investigative journalism and open-source journalism, both of which appear to reinforce values such as public interest, democracy, and accountability (Müller & Wiik, 2023). Differentiating from journalism, the epistemology of fact-checking is additionally committed to transparency as an umbrella standard for fact-checking practices, promoted by the International Fact-Checking Network (IFCN; Humprecht, 2020). This epistemology also covers complex concepts that professionals navigate, such as what defines a fact and how to determine or nuance the truth or falsity of a claim. Reporting facts is hence synonymous with establishing truth by providing trustworthy information using credible sources and evidence (Haider & Sundin, 2022).

Fact-checkers are confronted with the complex nature of information disorders, which manifest themselves in different forms. These include misinformation (unintentionally misleading content), disinformation (deliberate falsehoods), conspiracy theories (collective beliefs that construct new social realities), alternative facts (emotionally driven subjective truths), fake news (deliberate disinformation), and propaganda (which blurs the line between truth and falsehood) (e.g., Barrera et al., 2020; Derakhshan & Wardle, 2017; Douglas & Sutton, 2023; Gelfert, 2018; Guess & Lyons, 2020). The complexity of disentangling truth from falsehood is also exacerbated by the nature of the claim being fact-checked. For example, audiovisual content, which often has a more significant impact than text, can be more challenging for fact-checkers in that images can be manipulated, fabricated, or decontextualized (Montoro Montarroso et al., 2023; Weikmann & Lecheler, 2023).

Consequently, fact-checking is widely acknowledged as a time-consuming process, with artificial intelligence (AI)-based technology increasingly recognized as a valuable tool for improving the efficiency and accuracy of fact-checking. These systems can be used to assist professionals in specific tasks such as media monitoring, evaluating the newsworthiness of claims, geolocation, and multimedia verification (e.g., Dierickx et al., 2023; Gutiérrez-Caneda & Vázquez-Herrero, 2024; Nakov et al., 2021). The launch of ChatGPT in November 2022 has sparked discussions about the potential of large language models (LLMs), considering how their user-friendly interface and conversational capabilities can lower the threshold for applying AI tools to fact-checking.

However, the hype surrounding LLMs and the tendency to exaggerate technology capabilities (LaGrandeur, 2024) may obscure the significant challenge these systems pose for fact-checking practices. The opacity of the models, combined with their ability to produce plausible but inaccurate information and their failures in logical deduction, raises ethical concerns about their accuracy and reliability (Augenstein et al., 2023). Underpinning these considerations is the issue of trust in AI systems, which remains a complex challenge to overcome while being essential for optimizing the user experience (Jacovi et al., 2021).

Although there is an important body of research on the technical capabilities of AI in fact-checking (Dierickx et al., 2023), significant gaps remain in understanding the factors that influence AI integration, particularly in the context of the recent rise of generative AI (GAI). This paper examines fact-checking practices in the four independent organizations active in the Nordic region, which share two main characteristics: they are small organizational structures, and they are deeply rooted in journalism. In particular, this study explores how fact-checkers engage with AI technologies, specifically focusing on the Nordic countries, where the ethical standards of transparency in fact-checking are echoed in media systems that traditionally prioritize values of openness, transparency, public trust, and a commitment to democratic values (Enli & Syvertsen, 2020; Schrøder et al., 2020). In these countries, (self-)media regulation combined with a high level of media literacy also contributes to resilience against disinformation (Andersson, 2023; Grönvall, 2023).

Considering the benefits of the theory of affordances for examining the interaction between artefacts and actors with specific goals and capabilities (Kammer, 2020), we explore four complementary affordances: (1) functional affordances, which refer to the specific purposes for which the technology is used (Hartson, 2003); (2) perceived affordances, which include fact-checkers’ representations and expectations of the technology (Best, 2009; Norman, 2013); (3) contextual affordances, which relate to the socioprofessional and organizational contexts in which fact-checking takes place (Best, 2009); and (4) fitness-for-use affordances, defined as the fit between the tool's offerings and fact-checkers’ user needs (Das et al., 2023; Zhang, 2008). Hence, this study is articulated around the following research question: RQ. How do the affordances inherent in AI-based technologies transfer into the professional practices of Nordic fact-checkers?

This research is based on two rounds of interviews with fact-checkers from the four Nordic fact-checking organizations, conducted before and after the launch of ChatGPT. After a literature review that provides the basis for analyzing the theory of affordances in fact-checking, the methodology is described in detail. The paper then presents the results and discusses their practical implications, emphasizing the importance of human supervision in maintaining professional standards.

Literature review

The theory of affordances has received notable attention in media and communication studies, shifting its focus from its original perceptual–psychological framework to a tool for understanding the characteristics of new media technologies (Kammer, 2020). When applied to the study of technology, the framework emphasizes the importance of specific characteristics of different technologies while embracing the cultural and social forces that shape their actual use (Hutchby, 2001). This approach avoids the pitfalls of both technological determinism and social constructivism by suggesting that technology frames rather than determines agency (Hutchby, 2001).

Originally proposed by ecological psychologist Gibson ([1979] 2015), affordances refer to the inherent qualities or characteristics of an object that enable or constrain interactions or uses by subjects. Affordances are both material and “imagined by users” (Nagy & Neff, 2015, p. 1) and depend on the ability to perceive possible affordances (Gibson, [1979] 2015, p. 119). Therefore, affordances should be considered through the complementary lenses of perception, intentionality, representations that influence users’ actions, and the context of technology use (Pond et al., 2019). In other words, affordances relate to the inherent qualities within a technology that shape users’ interactions (Gaver, 1991). However, this perspective can sometimes overlook the complex interplay between technology, social construction, and human agency (Nagy & Neff, 2015).

The theory of affordances is flexible and has been applied in different theoretical contexts, sometimes without a clear definition (see Nagy & Neff, 2015). In interaction design and evaluation, Hartson (2003) proposed four complementary types of affordances: cognitive, physical, sensory, and functional. Cognitive affordances refer to the user's understanding and manipulation of the technology, while physical affordances refer to tangible features that enable physical engagement. Sensory affordances provide cues that facilitate user understanding, and functional affordances relate to the utility or purpose of the technology (Hartson, 2003). Learning is essential to fully appreciate potential affordances when using complex systems, such as AI-based software (Hutchby, 2001, pp. 448–449). Pond et al. (2019) highlighted the importance of developing perceptual and interpretive skills in technological contexts, suggesting that the interaction between skilled users and technology enables a wide range of potential outcomes. Considering the broader environment and material conditions in which users operate is also critical when using the theory as an analytical framework (Tenenboim-Weinblatt & Neiger, 2018).

Applying the theory of affordances to the use of AI and GAI technology in fact-checking is valuable to shed light on how human fact-checkers perceive and engage with AI-based tools in their professional environments (Best, 2009). In this study, we propose that affordances are context-specific, drawing on prior knowledge, in that technology frames rather than determines possibilities for action (e.g., Hutchby, 2001). Therefore, we focus on four complementary affordances—functional, perceived, contextual, and fitness-for-use—as they offer a nuanced framework for understanding how AI-based tools interact with fact-checkers’ professional needs, perceptions, and contexts.

Functional affordances—tools for fact-checking

Functional affordances refer to the specific purposes for which the technology is used (Hartson, 2003). From this perspective, there is already a plethora of fact-checking tools designed to streamline various tasks within the fact-checking process or to augment the activities of human fact-checkers (Lindén et al., 2022; Micallef et al., 2022; Westlund et al., 2022). They can be seen as valuable for speeding up the time-consuming process of fact-checking, but the proliferation of tools evokes two contrasting reactions in fact-checkers: feeling overwhelmed by the constant stream of innovation and product development, or feeling excited about experimenting with new products (Larssen, 2020).

Due to limited time to explore new tools or learn how to use them, fact-checkers often rely on the same digital tools for tasks such as web searches, image verification and geolocation (Dierickx & Lindén, 2023; Picha Edwardsson et al., 2021; Samuelsen et al., 2023). The use of disparate tools contributes to fragmented practices and increases fact-checkers’ workload. Furthermore, multiple tools perform similar tasks but often produce different results (Micallef et al., 2022).

Trust is an important factor in the choice of tools. One of the most popular tools in the fact-checking community is the InVID-WeVerify verification plugin, developed through a partnership between academia and the French news agency AFP, which underlines its credibility and reliability (Beers et al., 2020). This open-source tool was initially designed for multipurpose use in fact-checking, allowing the benchmarking of similar tools for image and video verification (Marinova et al., 2020; Teyssou et al., 2017).

Some fact-checking organizations integrate open-source intelligence (OSINT) techniques and tools into their workflows to collect and analyze content from publicly available sources, for instance, for geolocation or satellite imagery (Hassan et al., 2018; Pastor-Galindo et al., 2020). The NGO Bellingcat is a notable pioneer in this field, seamlessly blending OSINT techniques with traditional journalism to enhance its investigative capabilities (Müller & Wiik, 2023). While incorporating OSINT into fact-checking requires new methods and skills to complement existing journalistic practices, the level of proficiency with OSINT tools can vary among fact-checkers (Müller & Wiik, 2023).

Large language models (LLMs) are likely to add extra layers to these capabilities, as they can be used for a wide range of tasks that can streamline fact-checking processes and improve overall efficiency, including extracting data from large amounts of information, providing contextual information, detecting disinformation, analyzing data, summarizing text, or supporting multilingual operations (Chen & Shu, 2023; Cuartielles et al., 2023; Yenduri et al., 2024). Nevertheless, technology alone cannot solve all the difficulties that fact-checkers may encounter, especially when reviewing manipulated videos and decontextualized photographs (Himma-Kadakas & Ojamets, 2022; Montoro Montarroso et al., 2023; Weikmann & Lecheler, 2023).

Perceived affordances—what to use tools for

Perceived affordances include fact-checkers’ representations and expectations of the technology (Best, 2009; Norman, 2013). Fact-checkers are generally more open to new technology than journalists overall and may be able to adapt to technological developments more quickly (Weikmann & Lecheler, 2023). This is also true in the Nordic countries, where research has highlighted contrasting attitudes between journalists and fact-checkers toward technology (e.g., Larssen, 2020; Picha Edwardsson et al., 2021; Samuelsen et al., 2023). While the potential of technology to augment fact-checking practices is clear to fact-checkers, various barriers often impede its integration into daily routines.

Many tools that fact-checkers rely upon, such as search engines, automatic transcription, and machine translation, are in fact AI-powered, although fact-checkers are often unaware of their AI-driven nature (de Haan et al., 2022; Dierickx & Lindén, 2023). Moreover, knowledge about how these tools function can be scarce, so fact-checkers may not fully understand their limitations and capabilities. Lack of access to and trust in these tools are other factors explaining the nonuse of technology by fact-checkers (Beers et al., 2020). The most challenging issues for using AI in fact-checking are related to algorithmic bias, lack of transparency, dependence on data quality, and the potential that these tools present for generating misinformation (Gutiérrez-Caneda & Vázquez-Herrero, 2024). In addition, the systems’ complexity affects their ability to be fully understood by their users (Procter et al., 2023).

All of these concerns are related to the question of trust toward AI systems, which is recognized as a critical factor in enabling human–AI interaction (Jacovi et al., 2021), and fact-checking is no exception (Juneja & Mitra, 2022; Kavtaradze & Kalsnes, 2024). This is the main reason why, despite the increasing integration of AI into fact-checking processes, there remains a general skepticism among fact-checkers about fully automated approaches, even as fact-checkers become more familiar with AI technology (Juneja & Mitra, 2022; Micallef et al., 2022; Nakov et al., 2021).

Skepticism may also stem from the so-called algorithm aversion, where fact-checkers tend to view human accuracy as superior to algorithmic accuracy (Liu et al., 2023a). Furthermore, despite significant advances, researchers agree that these technologies still lag behind human capabilities in many ways (Das et al., 2023). Indeed, the use of algorithmic fact-checking tools can activate a machine heuristic, fostering the (mis)belief that automated fact-checking services provide a more impartial review of claims than humans (Liu et al., 2023b; Moon et al., 2023).

Fact-checkers face a significant challenge in understanding the tools they use and the algorithms within them, which requires a level of explainability that is not necessarily provided (Juneja & Mitra, 2022). Such a lack of transparency may be due to the reluctance of machine learning developers to share details about their products and capabilities (Školkay & Filin, 2019). At the same time, users of current GAI tools are generally aware that these systems are based on AI and acknowledge the opacity of their inner workings as well as their limitations (Cuartielles et al., 2023; Wolfe & Mitra, 2024).

Contextual affordances—where the fact-checking takes place

Contextual affordances refer to the socioprofessional and organizational contexts in which fact-checking takes place (Best, 2009). In this regard, key factors such as the professional background and skills of fact-checkers, regulatory perspectives, resource availability, geographical context, or an organizational culture of innovation are likely to collectively shape the use and integration of technology within fact-checking practices. While research has explored these complex dynamics, gaps remain in understanding their full implications and interconnections, particularly concerning the use of AI-based technology in fact-checking. In addition, research often considers fact-checking as a global phenomenon, given the importance of the IFCN, which has established a set of core principles and standards for fact-checking organizations worldwide, promoting a solid commitment to the ethical principle of transparency in methods and funding to maintain high standards in fact-checking practices (Graves, 2016, 2018; Lauer & Graves, 2024).

These global standards have been embraced by fact-checking organizations worldwide, including in the four organizations based in the Nordic countries. In these countries, as mentioned previously in this paper, fact-checkers seem more open to digital and AI-based technology, although empirical evidence is still lacking. In Norway, for instance, Faktisk has been at the forefront of developing innovative technological solutions to combat misinformation (Samuelsen et al., 2023; Steensen et al., 2023). The four Nordic fact-checking organizations also comply with IFCN standards and suggested working methods, from media monitoring to disseminating the checked claims online (Grönvall, 2023).

In a study conducted with 38 employees of 29 fact-checking organizations across six continents, Wolfe & Mitra (2024) found that the use of GAI in fact-checking depends on the organizational infrastructure and the work of fact-checkers. The authors also discussed the value tensions between fact-checking and GAI, highlighting the importance of transparency, fairness, accountability, and reliability in fact-checking. The findings underline the interconnectedness of different affordances and show that the usefulness of a tool (functional affordance) can influence the quality of information (perceived affordance) without diminishing the importance of essential soft skills such as creativity, empathy, and personal integrity (fitness-for-use affordance). Research also suggests that despite the different contexts in which it develops, fact-checking is a culture that extends beyond organizational and national boundaries as they converge in terms of professional routines and challenges (Mahl et al., 2024).

Fact-checking practices combine traditional journalistic skills with critical thinking and evidence-based analysis to verify the accuracy and reliability of online claims, particularly on social media platforms and websites (Himma-Kadakas & Ojamets, 2022; Picha Edwardsson et al., 2021). While increasing knowledge of information gathering, investigative journalism methods and social media are seen as valuable assets for fact-checkers, their skills are likely to evolve with technological developments. This means they must be familiar with technology and trained to adapt to a dynamic context (Brandtzaeg et al., 2016; Himma-Kadakas & Ojamets, 2022; Nguyen et al., 2018). There is a need among professionals to develop better skills and profiles to effectively use AI in fact-checking (Gutiérrez-Caneda & Vázquez-Herrero, 2024). In addition, linking fact-checkers to journalism may suggest that traditional journalistic skills are valued more than technological ones (Samuelsen et al., 2023).

Fitness-for-use affordances—considering the user needs

Fact-checkers use tools that respond to their user needs to fulfil specific tasks. However, most of the digital tools they use are not specifically designed for journalism. When it comes to AI-based solutions, there is still a gap in considering the user needs, practices, and values of journalists and fact-checkers (Beers et al., 2020; Dierickx et al., 2023). Nevertheless, these systems offer a promising response to combating online disinformation, as they can help monitor potentially harmful content, identify claims worth checking, select claims for verification, retrieve evidence, create and distribute articles, and manage suggestions from readers (Liu et al., 2023a; Nakov et al., 2021). On the other hand, it supposes that fact-checkers learn how to use these tools effectively, considering that they are not error-free (Johnson, 2023).

Research in computer science has shown considerable interest in AI-based fact-checking tools, but many solutions remain vague, lack empirical grounding, and often overlook the needs of fact-checkers (Dierickx et al., 2023; Schlichtkrull et al., 2023). AI-based technology still needs to be improved in many ways to be integrated into fact-checking, and the current limitations are related to more than just credibility and scalability issues (Liu et al., 2023b; Nakov et al., 2021).

First, determining the truth of a claim is a complex and controversial task. Second, despite significant advances, fact-checking technology still faces many limitations. For example, sensitivity to context can lead to difficulties in developing effective algorithms (Graves, 2018); relying on previously published predictions can perpetuate biases or stereotypes (Schlichtkrull et al., 2023); and complex concepts such as virality are difficult to formalize in computer code (Liu et al., 2023a). Furthermore, the technology's reliance on available data for verification is problematic, as data are not always accessible, generalizable, or of sufficient volume to train systems (Das et al., 2023). In addition, large amounts of training data can contain biases and errors (Leiser, 2022), which can affect the accuracy of fact-checking results.

Outside the research world, a few AI-powered companies propose their services to fact-checkers, including automated fact-checking, credibility, and authenticity assessments. These systems are not fully automated—which remains an unreachable goal due to the importance of human judgment in the fact-checking process—and they tend to reduce the complex dynamics of information disorders to a decreasing quality of media content, either in terms of factuality or credibility (Kavtaradze & Kalsnes, 2024).

Fact-checking cannot be fully automated because it requires subjective judgment and contextual knowledge (Liu et al., 2023a). Therefore, rather than playing a central role, AI-based technology can make a partial but significant contribution to fact-checking by acting in a complementary way and at scale (Školkay & Filin, 2019). Successful interaction between human fact-checkers and automated systems means keeping humans in the loop, allowing automated solutions to scale while harnessing human cognitive strengths for complex tasks (Demartini et al., 2020). Underlying this is the challenge of developing reliable and trustworthy AI-based tools to support human fact-checkers (Montoro Montarroso et al., 2023).

Research on the integration of AI in newsrooms has also highlighted the need to support human–AI collaboration while maintaining journalistic integrity and ensuring that AI systems are aligned with journalistic principles and values (Komatsu et al., 2020). In a study conducted in a Swedish newsroom about the potential of using machine learning for optimizing headlines for search engines, Stenbom et al. (2023) underlined the importance of considering ethical implications, including issues related to bias, accuracy, and transparency. They also stressed the need for journalists to develop new skills to work effectively with AI. We may assume that these observations also apply to fact-checking organizations when grounded in journalism, in terms of professional practice and/or organizational model.

Using LLMs in fact-checking poses additional challenges insofar as their potential in the fight against information disorders is not without risks, which can complicate effective use (Chen & Shu, 2023; Dierickx et al., 2024; Jones et al., 2023). Artificial hallucinations, which relate to the generation of unintended fabricated content, challenge the credibility and reliability of generated content in fact-checking contexts (Cuartielles et al., 2023; Dwivedi et al., 2023; Ji et al., 2023; Jones et al., 2023; Rawte et al., 2023). Furthermore, the opaque decision-making process of LLMs and other GAI tools raises concerns about their transparency and accountability (Augenstein et al., 2023).

Method

To explore and understand how Nordic fact-checkers perceive and engage with AI-based technologies, this study adopted a qualitative approach. We used two rounds of semistructured interviews to explore the evolving relationship between human fact-checkers and AI-based technologies in the four fact-checking organizations established in Denmark, Finland, Norway, and Sweden that form the fact-checking landscape in the Nordic countries. These organizations are or were members of the IFCN, and they are members of the European Digital Media Observatory through the Nordic Observatory for Digital Media Information Disorder (NORDIS).

The Danish and Norwegian organizations are also verified members of the European Fact-Checking Standards Network (EFCSN), which aims to promote excellence in fact-checking at the European level. Being a member of the IFCN or the EFCSN means adhering to ethical standards that include not only transparency about the methods used by the fact-checkers but also transparency about funding and finances.

In Finland, FaktaBaari is a fact-checking service that has operated since 2014 and is actively involved in digital media literacy programs to build resilience to information disorder. TjekDet, founded in Denmark in 2016, strives to provide accurate information and insight into false claims in the Danish public sphere, with the aim of strengthening democratic discourse. It is recognized as a public service and has been state-funded since 2023 (Grönvall, 2023). Faktisk was established in Norway in 2017 by a consortium of three major media companies, primarily focusing on scrutinizing claims in Norwegian media and public discourse. Today, the Norwegian fact-checking organization is owned by six news media companies and has developed a media literacy division. In Sweden, Källkritikbyrån is an independent fact-checking organization that has operated since 2019. Two international organizations, the French news agency AFP and the UK-based technology company Logically, also operate in the region through a limited number of local correspondents. However, due to their narrower focus and scope in the region, they are not included in this study.

The four examined fact-checking organizations regularly exchange knowledge of their respective practices and perspectives within the NORDIS network, through regular meetings and several joint fact-checking projects. In Finland and Sweden, the two organizations employ three fact-checkers, while in Norway and Denmark, between 10 and 15 fact-checkers are involved in the organizations. Beyond their small structure, these organizations share two further features: they are involved in media literacy programs, and they follow a newsroom model that reflects the democratic-corporatist perspective characteristic of the Nordic countries (Graves & Cherubini, 2016; Larssen, 2020). This newsroom model reflects the journalistic mission that underpinned their creation, where most employees identify themselves as both journalists and fact-checkers (Dierickx & Lindén, 2023). In addition, the Finnish and Swedish organizations operate as niche entities, due to their modest size (Grönvall, 2023).

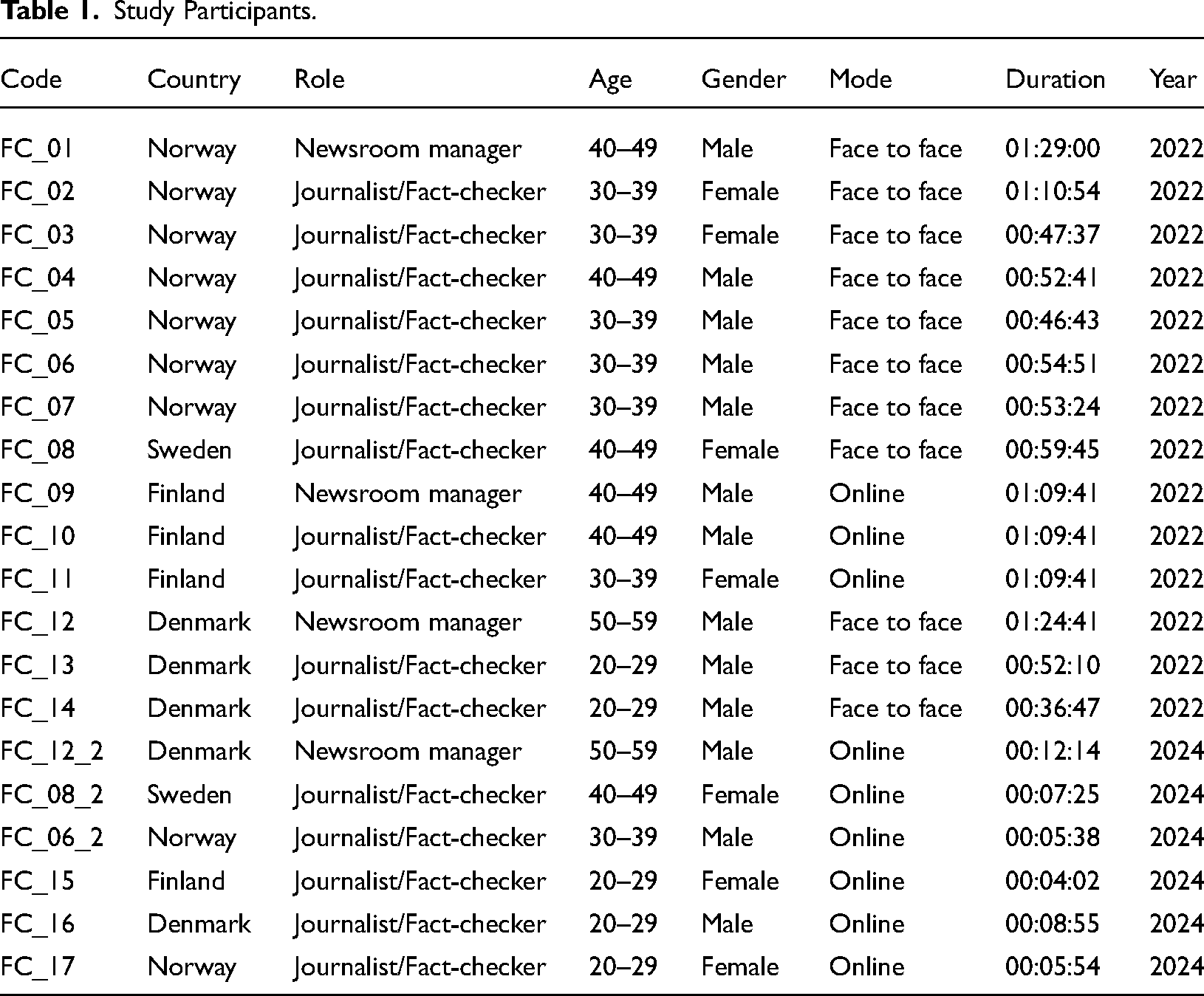

This study is based on two rounds of semistructured interviews. The first round was conducted in the spring of 2022 and involved 14 professionals from these four organizations (about one-third of the fact-checkers), as part of a broader study that focused on the user needs of the fact-checkers (Dierickx & Lindén, 2023). The interview guide consisted of 29 open-ended questions and aimed to gain insights into subjective experiences (Busetto et al., 2020). The interviews were organized around four overarching themes: professional background, responsibility, routines, and interactions with technology. Given that our group of interviewees represents approximately one-third of the total staff in the four examined organizations, it can be considered a representative sample (Table 1).

Table 1. Study Participants.

The second round of interviews, conducted in the winter of 2023–2024, focused on fact-checkers’ perceptions and engagement with GAI technology, a rapidly developing and novel field. Given the small size of fact-checking organizations in the Nordic countries and the exploratory nature of the study, a sample of six participants was considered a significant proportion of the active professionals, with the aim of complementing the interviews conducted in the first round. Also, while the small sample size and niche focus may limit generalizability, the data collected was consistent from the perspective of an exploratory study.

Although four of these participants had also participated in the first round of interviews, longitudinal characteristics were not explored as we focused primarily on professional applications and practices. The second round consisted of online interviews conducted by a research assistant, which focused on three core questions about the use, purpose, and perception of GAI tools.

All interviews were audio-recorded with prior consent, transcribed, and translated into English when necessary. The transcriptions were coded using Taguette, an open-source qualitative data analysis tool (Rampin & Rampin, 2021). Taguette facilitated the organization and refinement of the data according to a set of codes related to our analytical framework. An inductive approach allowed the research findings to emerge organically from the themes inherent in the raw data, while a deductive approach involved focusing on the research questions and theoretical constructs derived from the theory of affordances.

Results

This analysis explores four types of affordances to gain insight into how fact-checkers discuss and use AI in their work. Rather than providing an exhaustive list of all AI-based tools used by Nordic fact-checkers (Dierickx & Lindén, 2023), the aim was to develop a comprehensive framework for understanding the factors that either encourage or discourage the use of AI systems, in line with the research question. It is noteworthy that in the first round of interviews, respondents rarely used the term “artificial intelligence,” focusing instead on the potential for automating fact-checking processes and the specific functionalities of the technology that are nevertheless related to the field of AI.

Functional affordances

The fact-checkers in the four Nordic organizations show a consistent reliance on digital tools, each of which serves different functions. For example, CrowdTangle is (or was until its closure) widely used for social media monitoring, Google and the Wayback Machine for web searches, and TinEye and the InVID plugin for reverse image searches and multimedia verification. Despite the widespread use of these tools, many fact-checkers remain unaware of whether they contain AI, highlighting the need for greater transparency about the technological underpinnings of these tools while at the same time showing the mundane characteristics of AI.

Large language models and GAI tools appear to be game changers, as our respondents reported functional affordances that go beyond their basic capabilities of content generation. These technologies are being used to support secondary tasks such as generating code, creating visualizations, and improving written content, but not in all organizations. For example, the Norwegian fact-checkers reported using ChatGPT for preliminary data analysis and content creation tasks such as generating Python coding prompts, creating visualizations from large data sets, and brainstorming. Perplexity AI is used by some fact-checkers for research purposes, mainly to efficiently gather sources. DALL-E and Midjourney are mainly used for testing and keeping abreast of technological developments relevant to fact-checking (FC_17). Future uses may include text-to-speech applications to make textual fact-checking more accessible (FC_06_2). Generative AI tools are also being explored for large-scale data analysis and research to make these processes more efficient (FC_17).

GAI is used to a limited extent in the Finnish fact-checking organization, where tools are used for data analysis and occasionally for search engine tasks using LLM-based tools such as Perplexity AI to find information that is not readily available through traditional search methods. However, these applications are still at an experimental stage. As the Finnish fact-checker noted, “I haven't found anything where it would be useful at the moment. Except maybe sometimes I need to find sources that I cannot find. Because it's unreliable and I'm a better writer than the generative AI, I just don't know what to use it for” (FC_15).

In the Danish fact-checking organization, GAI tools may not be used to gather evidence, as fact-checking relies on human expertise. ChatGPT is mainly used to translate longer emails into English and to improve the language of written content. In Sweden, the use of GAI systems is minimal due to a lack of knowledge and skepticism about their effectiveness, which relates to the perceived affordances discussed next.

Perceived affordances

Fact-checkers widely recognized the potential of AI to detect fake or manipulated content and acknowledged its ability to complement or augment human know-how. In particular, they expressed the potential for automation to streamline specific tasks, such as data collection and monitoring: “Everyone has slightly different search terms because journalists are interested in different things. I think that process, in general, could be done with some automation. For example, just collecting all the interesting results in one place” (FC_06); “Maybe (automated) analysis of the tips we get, because sometimes there are a lot of things that are more or less the same and it's easy to check. So that will be something we might look at and also linking claims to data” (FC_03); “I guess some of the work in terms of finding claims that are not from tips can be somewhat repetitive (…) So I think there's a possibility to enhance that workflow” (FC_07).

Nordic fact-checkers generally show openness to technological development and AI while remaining critical of the technology's limitations, insofar as fact-checking is a complex process that requires critical human input: “Automation could be helpful as a tool within the fact-checking process but, at least for the foreseeable future, will have to have a human touch because it's complicated, because it's not mathematics” (FC_01).

Therefore, AI-based technology should be seen as an enabler rather than a complete solution. While technology is likely to support their work, it is not a panacea for all the challenges they face and needs to be balanced with human knowledge and expertise (see Himma-Kadakas & Ojamets, 2022): “You have to be able to break everything down into the smallest pieces and then check them one by one” (FC_03); “You have to interpret a lot of information, (coming) from very many different places, sources and platforms” (FC_08); “Sometimes you have to think outside the box” (FC_13).

Fact-checkers reported unsatisfactory results with AI-based tools, pointing to the limitations of generic, general-purpose solutions that are not tailored to the specific needs of newsrooms. Concerns about accuracy and reliability remain critical, highlighting the importance of human oversight and judgment in verifying claims to maintain quality standards in fact-checking practices. As one respondent noted, “The tools in general are not magic wands. Most of the time you don't get good results back when you use them” (FC_11).

Surprisingly, the only resistance we found was in Norway, where the fact-checking organization is involved in several AI-based technology experiments, for instance, in partnership with academia or through the NORDIS network. There, one fact-checker explicitly expressed fears about the increasing automation of the fact-checking process: “I'm pretty much against automation because it takes away my job. I'm not really into it, but repetitive tasks, you know?” (FC_05). We also observed that this fact-checker has a strong attachment to his job: “I love my job. Please don't automate it too much” (FC_05).

Fact-checkers are open to integrating GAI technologies into their workflows but approach them with caution. In Sweden, GAI systems are seen as having the potential to support data collection and research, but they will not complement manual verification (FC_08_2). Similarly, in Denmark, there is a perception that GAI could help, for instance, by scanning the media for statements by public figures and identifying potentially false claims for further investigation (FC_16).

Overall, there is limited confidence in the results provided by GAI tools. These technologies are also perceived as posing several challenges that are difficult to address in fact-checking, mainly because of the reliability issues associated with GAI systems, either in terms of training data or artificial hallucinations, which can cause confusion or reduce trust: “We will create a lot of additional work for ourselves just going through the possible hallucinations, checking all the sources and so on” (FC_06_2).

One possible barrier relates to the consequences of using AI-generated content, in the sense that it might be perceived as decreasing trust in the veracity of information. Thus, the cautious attitude toward GAI stems not only from these trust concerns but also from the potential for AI to be used for propaganda or fraud, highlighting the need for fact-checkers to assess AI-generated content critically: “I see AI as more of a problem area that needs to be investigated” (FC_08_2).

Contextual affordances

The organizational structure and internal culture of fact-checking organizations, rooted in political fact-checking, emphasize quality and the impact of fact-checking on media literacy. Limited human and financial resources prevent these organizations from fact-checking everything, leading to a focus on human soft skills, similar to those in journalism. These constraints also hinder large-scale experimentation and integration of AI tools.

In Finland, for example, resource limitations are a significant barrier to AI adoption: “We don't have that many automated tools because of our resource problems. We haven't been able to integrate them. We thought it would take some time to learn how to use them and to invest our time in them” (FC_10). In Sweden, fact-checking is more in line with traditional journalistic practices and relies on established tools and methods combined with the usual digital tools for fact-checking multimedia content—“I use the phone. I use people. I try to talk to people, because the people would know” (FC_08).

In Norway, four respondents mentioned having programming skills or a willingness to learn, as their organization uses automated workflows for monitoring and data scraping tasks: “I know Python and SQL and some basic HTML and some JavaScript and so on. I use that mostly for one-offs, in terms of using it for specific projects” (FC_07); “We have a few people who are trained programmers and we also have a developer” (FC_03). In addition to exploring the potential of GAI, there is a strong emphasis on mastering these tools, particularly in areas such as prompt engineering to improve the efficiency and accuracy of the results (FC_17).

Danish fact-checkers favor critical and analytical approaches over technical ones, although one fact-checker reported exploring OSINT methods and tools as additions to existing knowledge: “I look at OSINT technologies to try and verify videos and images” (FC_14). While technology is used, the focus remains on rigorous analysis and verification before turning to technical solutions. Beyond the inherent functionalities of a tool or technology, fact-checkers implicitly express the need for a balanced approach that takes into account both technological advances and the essential human skills required for fact-checking. The functionalities of the technology therefore complement or extend the skills of fact-checkers, which include research and analysis. These require not only contextual knowledge, as in “You have to be able to find the facts and you have to be able to evaluate the facts” (FC_04), but also strong critical thinking.

Critical thinking is recognized as a fundamental skill for fact-checkers, enabling them to question claims, identify bias, and assess the validity of information. In the Danish fact-checking organization, for example, the preferred candidate profile emphasizes people who can provide deep insights into how society works: “They are much more able to observe problems, ask critical questions about their research and have a broader knowledge to see connections in data” (FC_01). These observations are consistent with existing research on fact-checking practices, where journalistic skills are also highly valued (see Brandtzaeg et al., 2016; Himma-Kadakas & Ojamets, 2022; Samuelsen et al., 2023).

Furthermore, in line with previous findings, fact-checkers expressed a need for a better understanding of how machine learning works and how GAI can be used through prompt engineering, which consists of optimizing the instructions provided to the system in natural language (Gutiérrez-Caneda & Vázquez-Herrero, 2024). The national or regional context does not seem to be a factor that favors the use or adoption of AI technology. Rather, the contextual factors to consider are largely related to the intrinsic characteristics of the organization in terms of available resources, habits of practice, and organizational engagement with technological developments and experimentation.

Fitness-for-use affordances

Fitness-for-use affordances are closely related to how fact-checkers perceive the capabilities and limitations of AI technology. A key issue is the lack of transparency and explainability of AI systems, which often raises concerns about trustworthiness (Jacovi et al., 2021). While this opacity is a recognized problem, it does not necessarily prevent the use of AI tools. One respondent noted this dual challenge: “We're part of Facebook's third-party fact-checking programme, so you get a feed of facts to check (…) You could ask this: ‘The algorithm understands the Danish phrases, the topic.’ (…) I don't know if they even know how it works (…) Is it something it learns from our ratings?” (FC_12).

Trustworthiness remains a key issue for GAI systems and depends on their ability to provide accurate and reliable outcomes. In this regard, fact-checkers argue that these advanced tools cannot independently perform fact-checking tasks without compromising the credibility of the organization: “I don't think a generative AI tool would ever be able to do fact-checking on its own. At least not if you want to be a trusted fact-checking media organisation” (FC_16). Further, the inherent complexity of fact-checking tasks requires human involvement, positioning GAI technology as a complement to human skills rather than a replacement. Concerns about reliability, such as artificial hallucinations, further emphasize the need for human expertise to ensure accuracy and credibility, as outlined in the literature review (see Augenstein et al., 2023; Cuartielles et al., 2023).

Despite these challenges, there is recognition of the potential of AI to support certain aspects of fact-checking, such as monitoring and large-scale data analysis, which are complex and time-consuming due to the multiple platforms involved (FC_01_2, FC_06_2). As AI technologies evolve, fact-checkers expect these tools to become more integrated into their workflows, while continuing to rely on human judgment for thorough investigations (FC_16). This also suggests that emerging trends are constantly being scrutinized and explored to enhance their practical utility in fact-checking processes (FC_06_2).

Discussion and conclusion

This study provides nuanced answers to the research question: “How do the affordances inherent in AI-based technologies transfer into the professional practices of Nordic fact-checkers?” By examining four complementary affordances, we assembled a comprehensive framework for addressing this broad and complex question. However, several limitations should be acknowledged. The study focuses on perceived affordances rather than the effectiveness of AI tools and does not fully address all the external factors that influence the adoption of rapidly evolving technologies. The emphasis on individual perspectives may not fully represent the diversity of experience within fact-checking organizations, and the general examination of AI technology does not explore its practical applications within fact-checking workflows in depth. Despite these limitations, the study makes a significant contribution to understanding how Nordic fact-checkers approach and experience AI technologies, and how their practices and perspectives converge.

The examination of functional affordances showed that AI-based technologies are mainly used for authenticating images or videos. While AI and GAI technologies are addressed distinctly in our interviews, fact-checkers agreed that technology can support and augment workflows and practices as complements rather than stand-alone solutions. GAI tools, including LLM-based search engines such as Perplexity AI, are approached as a starting point for further exploration or as a means of handling secondary tasks.

Regarding the perceived affordances, fact-checkers are open to AI technologies while being aware of their limitations in terms of accuracy and reliability. In particular, the skepticism about GAI is consistent with previous observations about automated fact-checking (Juneja & Mitra, 2022; Micallef et al., 2022; Nakov et al., 2021). At the same time, fact-checkers show an openness that is also consistent with previous research, seeing the technology as having the potential to support fact-checking workflows and practices. Such an open but cautious position is reflected in the conclusions of the pioneering study conducted by Cuartielles et al. (2023) within Spanish fact-checking organizations. In addition, the expressed openness to technology indicates that fact-checkers may be more receptive to technological advances than their journalist counterparts, as suggested by Samuelsen et al. (2023).

The examination of contextual affordances suggests that the practices and culture within an organization play a crucial role in shaping professionals’ attitudes and behaviors toward technology. The Nordic context does not seem to play a significant role in the way fact-checkers approach technology, as the findings are in line with recent studies in the field. It can be concluded that fact-checkers are generally more receptive to AI as part of their investigative toolkit and that it should become more integrated in the near future, especially for tasks related to monitoring and verification. No significant differences in perspective were observed between newsroom managers and fact-checkers, suggesting a strong alignment on these issues as well as a managerial commitment to fact-checking practices.

The analysis of the fitness-for-use affordances showed that a lack of transparency or explainability in AI and GAI systems does not play a decisive role in their adoption or rejection. However, it does encourage caution. Fact-checkers have consistently emphasized the indispensability of human judgment and critical analysis in evaluating the credibility and accuracy of claims. This underscores the fundamental role of the human factor in upholding transparency, accountability, and ethical integrity throughout the fact-checking process. This caution also triggered a demand for strategies for more efficient use of GAI, including prompt writing to enhance the accuracy of the results.

Ethical considerations appeared throughout the analysis and are related to the four types of affordances examined in this paper: functional affordances involve ethical dilemmas related to technological limitations for performing certain tasks; perceived affordances relate to challenges of accuracy and trust; contextual affordances suggest that ethical challenges should be addressed by providing clear guidance, whether ethical or practical; and fitness-for-use affordances highlight the need for an AI tool that adequately meets the needs of fact-checkers. This transversal approach makes a fruitful contribution to the existing literature and paves the way for further research in this area.

While AI technologies can assist with several aspects of the fact-checking workflow, they are not a substitute for human judgment and critical analysis. The evolving nature of these technologies suggests that they may become even more integrated into fact-checking workflows without replacing the essential role of human fact-checkers in conducting thorough investigations and maintaining editorial standards. Despite the many drawbacks of GAI tools, most of our respondents do not reject them, highlighting the need for ongoing review and adaptation for integration in fact-checking.

The findings of this exploratory research are consistent with previous research on the use of verification technologies, which are often fragmented due to the diversity of tools (Micallef et al., 2022), influenced by the availability of resources and tools (Picha Edwardsson et al., 2022), and dependent on the fit with users’ needs, values, and skills (Beers et al., 2020; Das et al., 2023; Nakov et al., 2021). The primary barrier to the widespread adoption of GAI tools is the need to enhance the trustworthiness and reliability of their results. This indicates a shared perspective among the global fact-checking community, which can also be understood as a community of practice through the lens of an organized international network (Brookes & Waller, 2023; Lauer & Graves, 2024).

While the primary findings of this exploratory research emphasize the importance of human oversight as a safeguard for professional standards of accuracy and reliability, further research is needed to explore risk mitigation strategies to optimize the integration of AI technologies into fact-checking workflows and practices.

Footnotes

Acknowledgements

The authors would like to thank our research assistant at the University of Helsinki, master's student Charlotte Lackman, for excellent help with the interviews. This research was funded by EU CEF Grant No. 2394203.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical statement

This research adhered to ethical guidelines to protect the rights and welfare of participants. All subjects were informed about the nature and scope of the study, which focused on using AI technology in fact-checking. They were made aware of their right to withdraw from the study without consequence. Informed consent was obtained, and participants were assured that their anonymity would be preserved throughout the research.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the EU CEF (grant number 2394203).