Abstract

This article explores the triadic relationship between media, the public, and AI technology, focusing on AI's portrayal in news media and ethical controversies depicted in science fiction. It discusses public perceptions of AI through media content and entertainment. Subsequently, the essay analyzes AI's impact on news production and media creation, highlighting the benefits and concerns of AI applications, such as personalization and automation. Four ethical paradoxes of AI in media are presented, addressing human morality, journalism's gatekeeping, the echo chamber effect, and responsible AI. The essay suggests that AI should be viewed as an essential part of the media environment, advocating for meaningful interactions between AI and humans to foster industry development. The intelligence of AI, derived from human-created knowledge, should be leveraged to create a balanced perception of AI.

Introduction

Since the official release of ChatGPT at the end of 2022, the development of AI has accelerated rapidly, and AI has become increasingly important in people's lives (Abdullah et al., 2022). From an information technology perspective, AI refers to a computer's capacity to perform various information-processing tasks such as perception, association, prediction, planning, and motor control by imitating human brain functions (Boden, 2016, p. 1). However, today's AI issues are no longer just technical; AI is transforming our lives and working processes, much like the diffusion of all previous communication technology innovations.

AI has brought multiple challenges. The complexity of the technology, ethical issues, data privacy concerns, and more have aroused active discussions. The One Hundred Year Study on Artificial Intelligence 2021 Study Panel Report (Littman et al., 2021) indicates that considerable progress has been achieved in the last 5 years in many communications-related areas such as speech recognition and generation, language translation, natural language processing, image/video generation, and gaming. Specifically, the application of AI in news production has been a major focus of many communication studies. Recent research (Gutiérrez-Caneda et al., 2023) explores the actual use of generative AI tools like ChatGPT by journalists to assist in news production. The study indicates that journalists believe AI tools can significantly reduce production time and make more rapid use of big data to produce graphic and text content (including podcast content creation from text-to-speech and automatic subtitle generation from video to text). However, journalists also acknowledge that these applications raise numerous ethical issues concerning journalistic ethics, privacy, and intellectual property rights, owing to the lack of professional news gatekeeping and ethics.

Even though the development of AI has impacted the world, many people may not necessarily feel positively about the innovation. Communication research attempts to explore how people feel about AI. Cave et al. (2019) surveyed 1,078 UK residents, and according to their results, the most common visions of the impact of AI elicit significant anxiety. When reporting their views, people are more worried than excited about the use of AI. More specifically, people are worried that AI will take away human jobs, relationships, and social life.

Media presentation and public perception of AI

The media may play a role in amplifying the public's negative feelings towards AI. Ouchchy et al. (2020) conducted a qualitative study, analyzing 254 news articles about AI written after 2012 to identify public concerns. Even though the tone of most articles is neutral, public concerns about the topic still exist. More specifically, the public worries about the lack of transparency in AI technologies and the undesired results caused by their biases. In addition, people anticipate that advances in AI technology will have a massive impact on future unemployment rates and on economies in general.

At the end of 2022, ChatGPT provided a more user-friendly AI conversation interface, making it the first experience of generative AI and large language models (LLMs) for many users. Skjuve et al. (2023) conducted a survey of 194 users (primarily from the UK, with an average age of 34 and a high educational background) who were using ChatGPT in January 2023. The survey explored both positive and negative experiences, revealing that most users had positive experiences, including obtaining detailed information and assistance with work or studies, and rated ChatGPT’s entertainment and creativity positively. However, the authors suggested that this early post-launch survey might be influenced by the wow factor. Another study at the beginning of 2023 investigated public reactions to ChatGPT (Leiter et al., 2024). Analyzing 300,000 tweets and 150 scientific articles, the study found that social media highlighted ChatGPT’s powerful functionalities, often accompanied by joyful emotions. However, these positive perceptions and emotions declined later, and non-English-speaking countries exhibited more negative evaluations and emotions. In that study, most scientific articles agreed that ChatGPT could be beneficial in healthcare but posed threats and ethical concerns in education.

In addition to surveys, media presentations, and social media posts, scholars have diverse evaluations of ChatGPT. Some research found that students using ChatGPT for homework produced many erroneous pieces of information (Church, 2024), indicating an over-reliance on AI at the expense of fact-checking. Another study explored the pros and cons of using ChatGPT in art creation education (Bender, 2023), pointing out that AI could help distinguish between creative skillsets and creative mindsets. While AI has proven powerful in the former, it raises concerns over copyright and originality, though the study still believed in the irreplaceability of human creators. Furthermore, Dunn et al. (2023) noted that using AI in health communication must address transparency, trust, and misinformation issues, yet they believed it could enhance health information production.

Overall, while there is limited empirical data on the public's direct use of generative AI and ChatGPT, the general public initially held positive evaluations and emotions toward the newly launched ChatGPT. However, these evaluations declined with subsequent technology debuts, which suggests that the initial enthusiasm may have been due to the wow factor. Interestingly, users in specific fields, such as education, communication, and art creation, expressed more concerns and skepticism toward ChatGPT. Non-English-speaking users also rated ChatGPT lower, possibly due to limitations of its language-processing algorithms in non-English environments. It is also crucial to note potential sample selection biases in these user experience studies: the findings may only represent the thoughts of those willing to try the technology, while many other audiences might have reservations or technical constraints regarding generative AI usage.

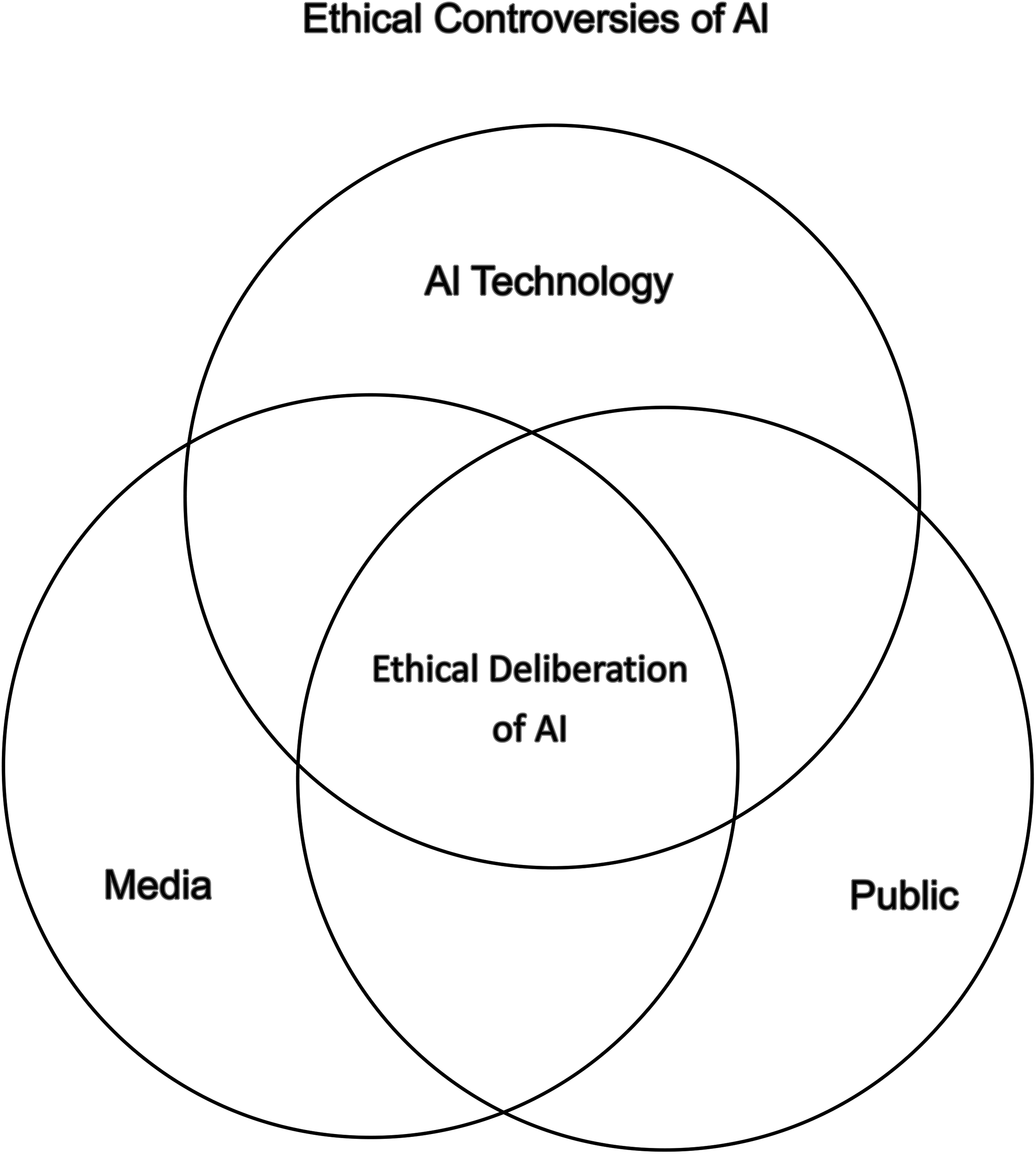

This essay focuses on the ethical deliberation of AI and suggests that it can be explored through the triadic relationship of media, public, and AI technology (see Figure 1). In other words, the ethical controversies of AI include three relationships: the relationship between media and the public, between media and AI technology, and between the public and AI technology. The previously discussed research findings on the public's noticeable negative perceptions of AI technology are indeed worth delving into. After all, before the launch of ChatGPT at the end of 2022, the actual use of AI technology by workers was relatively limited, and empirical studies published after the end of 2022 remain scarce. Therefore, the subsequent question is, if most people have not had sufficient experience using AI, why does the public already exhibit strongly negative feelings toward AI?

Figure 1. The triadic relationship of media, public, and AI technology.

Examining why people have reservations or anxiety about generative AI (or even broadly AI technology) is not a new issue. Human beings have not always greeted the emergence of new technologies with unreserved enthusiasm. Resistance to new technologies has been common throughout the development of technological society. Past research indicated that the advent of computers in the last century transformed human life, but in the early stages of this transformation, nearly a quarter of the population experienced computerphobia (Heinssen et al., 1987). This fear is distinct from a negative attitude towards computers; it represents a profoundly emotional response, where resistance and avoidance of computers can be seen as a reaction to a feared object (Heinssen et al., 1987). Some scholars refer to this as technostress (Tarafdar et al., 2010), focusing on the impact of technology on productivity and its effects within organizations.

Today, users in an information society may have become accustomed to the assistance of computers and enjoy their convenience, yet research indicates that many people still experience technological anxiety towards subsequent innovations deemed as “new technology.” For instance, Yang and Forney (2013) discussed how social factors influence technological anxiety in mobile shopping; Tsai et al. (2020) attempted to use the technology acceptance model (TAM) to explore how elderly individuals with needs overcome anxiety to accept wearable cardiovascular systems. In other words, technological anxiety and continuous technological progress have become a norm in the information society. This essay focuses on generative AI, one of the latest and most scrutinized technologies in contemporary society, which unsurprisingly becomes a new target for technological anxiety. These technological anxieties and fears are likely to manifest in the public's perception of AI technology.

Communication scholars have proposed various theoretical perspectives on how audiences perceive the external world. If we adopt the cultivation perspective (Gerbner, 1998), we might explain the public's fear and apprehension towards AI technology by reviewing the media presentation of AI. ChatGPT is not the first presentation of AI technologies, and audiences had been exposed to the media's portrayal of AI-like entities (such as Siri, the AI-based chatbot, and Amazon’s Alexa, the intelligent voice assistant) well before the critical technology development point in 2022. Although news reports from the past 5 years might be a proximate informational cause, we believe exploring AI imagery in entertainment texts such as science-fiction films also offers an interesting observation.

The ethical reflection of AI from existing Sci-Fi film

Following the perspective of the Elaboration Likelihood Model (ELM) (Kitchen et al., 2014), public anxiety about AI can potentially be divided into two categories: rational concerns and emotional anxieties. Rational concerns include worries about job displacement, data leaks, and privacy violations (Cave et al., 2019; Littman et al., 2021; Ouchchy et al., 2020). Emotional anxieties, on the other hand, pertain to more abstract and difficult-to-articulate issues, such as ethical controversies and questions about the essence of humanity. Although binary distinctions may not be absolute, some AI ethical controversies or oppositions are relatively abstract and lack specific reasons. One example is the notion that AI robots will completely replace humans, as often depicted in science fiction movies.

There are many science-fiction films about artificial intelligence, each with its own themes and plots, and their styles vary greatly. Nevertheless, scholars have studied the portrayal of AI in science fiction films and the associated public moral debates about AI (Hermann, 2023). In her research, Hermann analyzed multiple films and TV series with AI themes, particularly focusing on AI as human-like characters and their plots. She argues that science-fiction films reflect humans' imagination and fear about the science and technology of the future, and that moral questions are revealed in the gap between expectation and anxiety. Science fiction is a major genre in the film industry, and it is frequently connected with horror and thrills. The portrayals in sci-fi films also illustrate the threats and moral issues of future science (Hermann, 2023). Many masterpieces demonstrate critical insight into the ethical/moral dilemmas of the coming science and technology, and, most importantly, they express their own opinions and stances toward those issues.

Science-fiction films are not constrained by the limitations of technological developments in the real world. In fact, many classic or archetypal stories in science fiction films may predate the actual development of AI technology. While it is indeed challenging to identify any single science fiction film as the best case for audience imagination of AI technology, these films collectively may present certain metaphors for technological anxieties.

One important and popular topic discussed in science-fiction films is the relation between humans and their creations (Hermann, 2023, p. 322). Androids, robots, cyborgs, and simulants are all human creations in different forms, built with multiple cutting-edge technologies, and they are all expected to help, serve, assist, and “be” human. In many sci-fi films, the creation of human-like or human-form artifacts is always related to the development of science and technology. The audience gazes at the screen and admires the creativity and diligence of human beings. Seeing the creations' multifunctionality, high calculation speed, and delicate human appearance, viewers come up with the idea, “They just look like us.” At that same moment, another question comes to mind: “Are they human?” The key issue here is the similarity between humans and their creations. To answer this question, we can start from the opposite direction and ask, “What's the difference between humans and their creations?”

By examining two outstanding sci-fi films in film history, Mary Shelley's Frankenstein (released in 1994) and Edward Scissorhands (released in 1990), we may gain some insight into that question (Hermann, 2023). Mary Shelley's Frankenstein, the most classic and renowned sci-fi artifact in human history, depicts the mad scientist Victor Frankenstein using human corpses and remains to assemble a human body; through the power of science, he creates a monster, popularly called “Frankenstein” after its creator, a 2.5-m-tall, scar-covered, crippled human-form creature. In the story, the scientist soon realizes that his creation is forbidden by God and begins his journey of self-salvation to eliminate “Frankenstein.” Another classic sci-fi movie, Tim Burton's Edward Scissorhands, tells the story of an unfinished robot trying to fit into human society after the death of its creator and father. The poor robot is adopted by a warm-hearted family, but the very nature of the robot still causes many misunderstandings and difficulties when it interacts with ordinary people.

The grotesque appearance and enormous stature of Frankenstein's monster, and the sharp fingers of Edward Scissorhands, may not be the fundamental reasons people fear them. Instead, it seems that people are more frightened by the fact that these beings resemble humans. In both films, the human-like creations encounter the same situation: they cannot be accepted in human society for one reason, the plain fact that they are not real human beings. This fact has two facets: they don’t have an ordinary human appearance (even though they are human-like), and they don’t know how to interact with humans properly. Both directors offer some solutions and workarounds for the appearance, and by the end of each story, the creations have been taught the most important concepts of ethics/morality/norms, so that they are able to interact with humans. At the end of the films, the public finally appreciates the creations and embraces them, and, most interestingly, the public also realizes that the human villain in the film is less human than the creations. In other words, the films present us with a society with deficient ethical behaviors, standards, and norms, and the creations help us to understand it through their limited knowledge of ethics.

Besides the desire to create (Hermann, 2023), humans also show significant eagerness to make their creations human by teaching them ethics/morality/norms. Rather than telling us what is right or wrong, the films prefer to show us an extreme scenario and make us reflect.

Does AI resemble the scientific monsters of science fiction films, like Frankenstein or Edward Scissorhands? What are the reasons behind the public's fear of these creations that are stronger than us? Or perhaps the question should be, as depicted in movies, will these artificial beings only be accepted by humans once they have learned to interact with real humans? The “uncanny valley” hypothesis suggests that humans instinctively fear objects that closely resemble humans; the closer the resemblance, the greater the fear. This is because humans can perceive subtle differences, even when these creations are highly similar to us. Future research might benefit from analyzing recent science fiction films related to AI. We believe that the portrayal of AI in entertainment media can help us understand the public's deep-seated emotional responses to AI.

It is worth noting that while we support Hermann's analysis of science-fiction films and her perspective on AI narratives, the aforementioned two science-fiction films may not be the best examples of the current public imagination of AI technology. Moreover, cultural differences across regions should also be a key factor in the analysis of AI narratives.

The relationship between media and AI: AI in media production

In this article, we consider the media, the public, and AI technology to form a triadic interactive relationship on ethical deliberation. The aforementioned news media reports and the portrayal of AI in science fiction films belong to the first dimension of interaction between the media and the public. Although it is unclear whether public selective preferences or media framing reports are the cause or effect, the negative presentation of AI technology is a plausible explanatory approach that also warrants further empirical research to deeply explore the causal relationship.

The interaction between media and AI technology is the second dimension discussed in this article. Communication researchers are interested in the advantages and potential challenges that AI technology brings to media applications and communication research. How should media industry professionals view AI? What benefits and obstacles does it bring to the media industry? Answering these questions means researchers must clarify some fundamental aspects of the media and their responses to new technologies.

Generally, when we refer to AI, we mean human-made technology that can display human intelligence using ordinary computer programs. However, with the rapid advancement of AI technology, the concept now requires a much more accurate definition. Wang and Siau (2018) categorized AI as strong and weak. Weak AI can be characterized as task-specific. In contrast, strong AI has human-like flexibility in realizing different types of tasks. Zhou (2023) categorized AI as weak AI, general AI, and superintelligence. According to him, weak AI systems, such as speech recognition and feedback systems, do not have self-awareness. General (strong) AI is capable of matching human cognitive operations. It can understand contexts and can transfer this understanding among several different contexts or domains. Superintelligence is a speculative form of machine intelligence that is superior to human intelligence. This categorization draws on the insights of Bostrom (2014) and Searle (1980), which compare narrow AI and general-purpose AI. Because narrow AI is skillful only in specific tasks, AI researchers are attempting to construct general-purpose AI that imitates human flexibility (Littman et al., 2021, pp. 29–33).

Building on the previous discussion of AI technology, we then ask, what are the advantages and disadvantages of AI in media? The advantages of AI-produced news, as pointed out by Noain-Sánchez (2022), include the algorithm-driven personalization of content according to the interests of its users; support for journalists in analyzing enormous files of information; the ability to identify inappropriate content and harmful comments; and the provision of video footage with descriptions. Researchers (Jamil, 2023; Tao, 2023; Tejedor & Vilà, 2021) highlight the substantial benefits of AI in translation, textual analysis, and news source comparison.

However, the pitfalls are also clearly visible. First, AI-generated content is not original and does not contain creative insights and new ideas. Second, algorithmic bias exacerbates biases and disparities based on gender, age, and race within social and cultural frameworks (Wang, 2023). Third, the lack of transparency surrounding the “algorithmic black box” makes it difficult for many individuals to comprehend its operations (Noain-Sánchez, 2022). Fourth, a continuing problem for regulation is that there is still no widely agreed-upon definition of what counts as moral judgment. Given the various ethical frameworks applied in different countries and regions, such definitional uncertainty further impairs our ability to identify ethical decision-making in AI. Assigning responsibility for those decisions is even more challenging (Singh et al., 2023).

In the above discussion, scholars primarily consider AI in the context of news production (Jamil, 2023; Tao, 2023; Tejedor & Vilà, 2021). However, AI applications in media extend beyond news. Recently, OpenAI introduced Sora, a model that quickly creates short videos from text commands, making video production more efficient and reducing costs (Liu et al., 2024). At the same time, Vartiainen & Tedre (2024) identify eight concerns regarding the use of text-to-graphic generative AI tools that must be considered for future directions: (1) issues of copyright, privacy, and ownership in AI training and usage, (2) neutrality, fairness, and equity in constructing initial models, (3) unprecedented unethical use, (4) the influence of generative AI tools not only on intermediary actions but also on dynamic power structures, (5) overreliance on and excessive trust in AI, (6) the potential of large AI tools to reinforce and deepen international inequalities, (7) unclear impacts of AI on humans, and (8) the environmental burden of establishing and maintaining large AI systems.

Regarding the advantages and threats brought by AI applications in media, scholars have proposed three different approaches in response. First, from the training perspective, regarding AI-generated content, researchers argue that we can enhance AI access to information, provide AI with quality learning content, and disclose what copyrighted materials are being utilized to develop AI systems (Lai, 2023; Ouchchy et al., 2020). Second, from the management perspective, we could educate future AI regulators on how to manage the use of AI (Noain-Sánchez, 2022) or engage professionals qualified in the ethics of AI in that role (Ouchchy et al., 2020). This approach aims to prevent AI from being used inappropriately without being fully understood (Tao, 2023). Last, from the regulatory perspective, AI needs further regulation. Governments should create coherent policy and regulatory frameworks for AI (Ouchchy et al., 2020).

The paradoxes of AI in media applications

Previous researchers have identified numerous advantages and disadvantages of AI technology in media applications and proposed corresponding strategies. However, we believe that some of these discussions contain logical and conceptual contradictions and paradoxes. These paradoxes exist within the triadic relationship of the public, media, and AI technology.

The paradox of AI learning human morality: how can AI learn from humans when humans themselves don't understand how morality works?

One commonly proposed viewpoint is that AI needs to learn human morals and ethics to ensure that the decisions it makes conform to these ethical standards. For example, Littman et al. (2021) argued that to maintain AI as ethical, fair, and consistent with values, it requires robust regulatory models capable of integrating its behaviors into human normative institutions and processes. Despite significant progress in making AI more interpretable—and avoiding opaque models in high-risk environments where possible—accountability requires more than causal explanations of decision-making processes; it demands normative explanations of how and why decisions align with human values (Littman et al., 2021, p. 24).

However, according to Haidt (2001, 2012), human morality is based on intuition rather than rational thinking, and it varies across cultures. In the above discussion of AI ethics, a prevalent argument is that AI must learn from humans and understand human cultural morality to avoid making judgments based solely on computer logic. However, this argument contains a clear paradox: it assumes that humans possess a clear and even correct set of ethical values that AI, as a social entity similar to humans, must learn.

From the perspective of moral psychologist Haidt's moral foundations theory (2001, 2012), the human moral system itself does not operate in a clear-cut manner. He posits that the human moral system is determined by two factors: logical thinking, which involves rational judgment, and intuitive judgment within the social and cultural environment. Haidt often uses the metaphor of the rider and the elephant, where logical thinking is the rider, and intuitive judgment is the elephant. In his writings, Haidt illustrates through various moral judgment cases that moral views in human society are often formed through intuitive judgment. It is akin to the elephant moving based on instinct, while the logical thinking system merely follows and explains moral behavior, much as the rider wielding a stick seems to direct the elephant but actually follows its movements.

If we infer from Haidt's viewpoint (2001, 2012), we find that expecting AI to learn human morality is paradoxical because humans do not possess a clear or specific set of moral values for AI to learn. Of course, we are not suggesting that universal moral values are unimportant. Acts such as murder, theft, and nonconsensual sexual behavior are indeed unacceptable in most cultures. However, the reality is that moral judgments in human societies are highly diverse and context-dependent. Compiling a consistent and comprehensive moral system that AI can universally apply is nearly impossible.

To extend Haidt's famous metaphor (2001, 2012), if human moral judgments are like the relationship between the rider and the elephant, how can we expect a highly functional autonomous vehicle to intelligently navigate the correct path simply by following the elephant and rider's meandering in the jungle? After all, even the rider cannot decide where to go. The notion that AI should learn human morality is itself a false proposition because human moral judgment is based on moral intuition, with cognition merely providing post hoc rationalization.

The paradox of accuracy and objectivity in AI automated news production: how can AI automate the production of true and objective news when there is still a desire for human judgment in news gatekeeping?

Some scholars argue that the use of AI in news production has consistently faced questions about the accuracy and objectivity of its content (Jamil, 2023; Tejedor & Vilà, 2021). These concerns arise from the poor quality of AI data sources, including distrust in news sources and the low accuracy of data. Jamil (2023) also noted potential ethical issues in AI news production: (1) protecting sources and ensuring data transparency, (2) accuracy and misinformation, (3) biases and manipulations in existing data, and (4) sensitivity to political, religious, and cultural contexts. The point of these arguments is that the news gatekeeping processes of traditional journalism are necessary.

However, it is worth considering that issues of source credibility and report objectivity also often involve human judgment in the traditional news industry. This human judgment includes decisions made by sources, journalists, editors, and the stance of the news organization. These judgments can be part of the gatekeeping process guided by professional journalism ethics, but they can also reflect biases stemming from political and economic considerations.

We may argue that AI and big data might come closer to so-called accuracy and objectivity. AI and big data may remove human biases and provide objectivity, but at the same time they may conflict with the gatekeeping process, privacy protection, and source protection in journalism ethics. When AI is applied to news reporting, it brings the advantages expected by data journalism, including real-time, rapid, and large-scale data integration. Even the credibility of sources emphasized in journalism can be planned through relevant settings in AI's data retrieval, such as configuring AI to consolidate information only from government or official databases. In other words, AI news can automate news production, reduce human intervention, and achieve an objective and truthful presentation through data and primary sources. If AI can automate news from various credible public databases, can't we expect these reports, free from personal biases and political and economic pressures, to provide a unique perspective?

We can certainly understand that the lack of human gatekeeping in AI-generated news may bring about some concerns. The pursuit of objectivity, data accuracy, and real-time rapid AI news may conflict with the concepts of privacy and source protection in traditional journalism. For example, the footage of a major car accident late at night and the personal information of the perpetrator and victims might be rapidly reported as real-time news by AI through highway monitoring systems, police, or hospital reports. However, such reporting raises the question of whether the information infringes on privacy or requires careful consideration of reporting standards; publishing it without that consideration could lead to privacy violations.

From a data perspective, AI should learn that the specific clues about sources, events, and people involved are key to judging the credibility of the news while learning that privacy is also important. Therefore, AI news should collect news information from various big data sources, including image data for verification and even data records. The more, the finer, and the more comprehensive these primary data collections, image acquisitions, and data retrievals are, the better in terms of data accuracy.

The paradox of free choice, customization, and echo chambers: can the echo chamber phenomenon created by AI and algorithms be seen as a customized service and an expression of personal free will?

This paradox indicates that while AI and algorithms might indeed create echo chambers and information cocoons, this personalized information provision, which limits choice, might also be the most customized and genuinely reflective of the audience's true preferences.

Social media studies describe the echo chamber effect as a situation in which audiences are exposed only to content that reinforces their own beliefs, which in turn causes polarization and biases (Quattrociocchi et al., 2016; Terren & Borge-Bravo, 2021). Littman et al. (2021, pp. 17–18) mention that AI recommendation systems lead to information polarization and the formation of echo chambers, causing audiences to lose diversity in their digital content consumption. The third common paradox is that we often criticize AI and algorithms for delivering partial, stance-specific information based on user preferences, resulting in information cocoons and echo chambers. Critics argue that echo chambers exacerbate people's specific biases, as continually receiving information that aligns with their views makes them feel supported by the majority and prevents diverse opinions from entering their echo chamber. This criticism is valid; research in political communication has indeed shown that news messages provided by big data algorithms with specific stances can intensify people's party leanings.

But is it truly wrong to receive only preferred information? A satisfied user of a highly customized service might question this. If freedom of speech can be seen as a form of free will, giving every audience member the right to choose and express themselves autonomously, then can the personalized services provided by AI and algorithms be considered a result of personal choice? These algorithms merely infer users' true preferences from their digital footprints, then automatically filter and produce customized messages that match those preferences. For example, after watching Netflix for a while, viewers who dislike horror movies will no longer be recommended any horror content. Can this not be viewed as a form of personal choice?

Some might argue that the controversy lies in the fact that AI and algorithms deprive viewers of the opportunity to make each choice, offering only preselected content. However, algorithms do not force audiences to accept specific types of news content. On the contrary, they are faithful servants, recording all the master's preferences, even those the audience themselves might not be aware of or willing to acknowledge. By repeatedly analyzing viewing behavior, AI and algorithms continually adjust the preselected content. In other words, the audience is not entirely passive, and the limited choices can be an expression of their free will.

The paradox of responsible AI between providers and users: is responsibility a regulatory tool or a means to regulate people?

The European AI Act introduces the important concept of responsible AI, asserting that AI development must consider social responsibilities beyond technology (Helberger & Diakopoulos, 2023). It clearly states that this responsibility should fall on AI content creators and digital service providers, not the users. The question of who should bear the responsibility for AI is a profound one. Generative AI services, which charge fees and derive commercial benefits, should assume commercial responsibilities and be capable of economic compensation in the event of legal disputes. However, this does not mean that users are free from responsibility.

Historically, when new communication technologies were introduced, we often believed that users bore the responsibility of media literacy, as humans are the ultimate users of these technologies. Human will is the final decisive factor in the decision-making process, determining the integration of new technologies into social life. The question, then, is whether responsible AI is meant to regulate the designers and producers or the users. The former focuses on laws and policies, while the latter emphasizes ethics and literacy.

This question is especially pressing for generative AI, which relies on continuous interaction with users. For example, when a user generates a poster, they provide various textual prompts on design style, content, and visual elements so that the AI can create the most suitable poster. If this poster involves issues like plagiarism or intellectual property disputes, it is hard to claim that the user is completely exempt from responsibility. Another common scenario is liability in accidents involving autonomous vehicles. Drivers must understand the relevant usage regulations of autonomous vehicles. In short, autonomous driving is not foolproof, and users must adhere to safety regulations.

We believe that future research in media applications of AI will further explore the question of AI responsibility. The European AI Act leans towards holding designers, producers, and operators accountable, while the media literacy perspective emphasizes that audiences have both the ability and responsibility to use AI wisely (Helberger & Diakopoulos, 2023). So, who should be responsible? Should responsibility be shared equally when disputes arise, or should different levels of responsibility be assigned?

The current trend leans towards placing responsibility on AI technology and digital content producers, favoring legislative approaches for better enforcement. Conversely, placing responsibility on users would lean more toward media literacy education. Legal regulations emphasize enforceability but often lag behind rapid technological advancements. Media literacy, which emphasizes education in principles, can adapt to fast-evolving technologies but often lacks effective enforcement, so its principles risk becoming mere slogans.

The controversy over responsible AI is unlikely to be resolved by a polarized debate on responsibility attribution. It inherently involves preemptive regulations on the technology side and self-discipline on the user side. The proportion of responsibility should be determined on a case-by-case basis, similar to determining fault in traffic accidents, where neither party is entirely blameless. The most widely accepted principle currently is that AI technology must comply with existing media regulations, such as intellectual property rights and privacy laws, and users must disclose which AI tools were used in content production and research processes. These issues require further exploration by media researchers, particularly in how AI tools should be regulated in different political, social, and cultural contexts, and how AI impacts media production and media research.

The four aforementioned paradoxes reflect the dual relationship of competition and cooperation among the public, media, and AI technology. These are also issues that future research can delve into under different scenarios. In conclusion, as we enter an era of cooperation between humans and humanoid robots and of collaboration between media and AI, readers may find themselves in an age of coexistence with AI.

Conclusion

This article examines AI issues through the triadic relationship of media, the public, and AI technology. The first section begins by discussing the portrayal of AI in news media, also referencing the analysis of ethical and moral controversies of AI in classic science fiction films. This section focuses on the interaction between media and the public, understanding public perceptions of AI technology through informational content and entertainment texts.

The second section analyzes the interaction between AI and the media, listing recent advantages and disadvantages of AI used in news production and image and video generation. It summarizes the benefits and corresponding concerns in areas such as personalization, informational efficiency, gatekeeping in journalism, automated production, and media content creation. Scholars propose coping strategies, considering roles such as the trainer, manager, and regulator to reflect on the interaction between media and AI.

Within the triadic relationship of media, public, and AI technology, empirical data on public evaluations of generative AI remains scarce. Existing research, focusing on early user experiences with the initial versions of ChatGPT and related social media posts, indicates predominantly positive evaluations and emotions towards AI technologies, especially regarding information provision and entertainment features (Bender, 2023; Church, 2024; Dunn et al., 2023; Skjuve et al., 2023). However, these studies also point out that evaluations are more negative, and ethical concerns more prominent, in specific fields such as education, communication, and artistic creation, as well as among non-English users. This article posits that novelty may be a significant factor in the positive evaluations, and the attitudes of nonusers toward ChatGPT remain unknown, highlighting a limitation in the existing empirical research.

The public's direct experience of AI and their understanding of AI through media presentations are likely to differ. With the significant reduction in AI technology's entry barriers today, emerging technologies are no longer unattainable innovations for the general public but have become a part of everyday life. We also anticipate that more studies on AI applications will emerge in various fields.

This article presents four paradoxes related to AI ethics. Firstly, it explores the complexity and irrationality of human morality, juxtaposed with the expectation that AI can learn morality from humans. Secondly, it examines the potential contradiction between the traditional gatekeeping process in journalism and the accuracy and objectivity of information, suggesting that news produced by AI and big data could potentially eliminate human interference. The third paradox addresses the echo chamber effect brought about by AI and algorithms, questioning whether it can be considered an expression of audience free will. The final paradox discusses the concept of responsible AI emphasized in the European AI Act, debating whether it is solely an obligation of technology and service providers or also a responsibility that users must meet through AI literacy.

These four ethical paradoxes regarding AI applications in media currently have no definitive conclusions, and both sides of each paradox have valid and invalid points. They therefore warrant deeper exploration in future research as AI technology continues to evolve. This essay's overall stance leans towards viewing AI as playing an indispensable role in the media environment, where media organizations and professionals must recognize that establishing meaningful, positive interactions with AI is beneficial for industry development. Meaningful and positive interactions may involve assisting AI in learning the morals, ethics, and culture of the media industry and human society. While these rules may not be entirely clear, just as humans are not always clear about their own moral standards or their origins, continuous training will eventually allow AI to approach human expectations.

The intelligence and algorithms of AI ultimately derive from the various languages, texts, and data created by humans. We might consider AI as a dialogue window that integrates human social civilization. Interacting with AI essentially involves engaging with the knowledge created by humanity. When we truly regard AI as a partner, providing it with correct concepts and developing algorithms that align with individual or organizational cultures, the perception of AI by the media and the public will not be confined to the extremes of fear and over-reliance.

The story of “The Wizard of Oz” might be a prophecy about AI and humanity. The Tin Man and the Scarecrow are metaphors for two types of AI technology: one powerful but lacking emotion, and the other similar to humans but without true intelligence. The seemingly least capable character, Dorothy, is the core of the entire adventure team. It is through the cooperation of Dorothy, the Tin Man, and the Scarecrow that they reach the castle of the Wizard of Oz.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.