Abstract

As the world's fourth-largest Muslim country with a growing community of social media users, Bangladesh has been experiencing frequent online religious misinformation, inspiring violence against minorities and threatening interreligious harmony. Using an exploratory sequential mixed-methods design that combines a qualitative thematic analysis with a quantitative content analysis, we answer two research questions. We found three ways users engage with misinformation: their topics of discourse, reactions, and appraisal. Users’ discourse revolves around religious, radical, and political issues. Radical issues (60.4%) dominate users’ discourse, followed by political issues (37.1%). Users’ reactions are primarily negative (94.1%), exhibiting different destructive behaviors. Alarmingly, the negative reactions are more than seventeen times the positive reactions (5.5%). Results for misinformation appraisal suggest that 69.3% of users believe misinformation, and only 25.9% can identify and deny misinformation. Nearly half of the users (48.21%) concomitantly discuss radical issues, react negatively, and trust misinformation. This research suggests that religious misinformation-led violence may have more political connections than religious ones.

Introduction

This research endeavors to comprehend the engagement patterns of social media users with religious misinformation in Bangladesh. In this study, we embrace a simplified and adaptable perspective on misinformation, defining it as information that may or may not be true, with or without intent, yet ultimately misleads individuals. This viewpoint aligns with Treen et al. (2020), who characterized misinformation as “misleading information that is created and spread, regardless of whether there is intent to deceive” (p. 3).

Following the Ramu violence in 2012 (Al-Zaman, 2021a), the proliferation of online religious misinformation in the country escalated, leading to interreligious tensions and violence. Beyond socio-political issues, such as Islam's growing presence in society and social media, the burgeoning number of social media users, and digital communalism, the business interests of social media companies also exacerbate this crisis. For instance, Facebook algorithms are engineered to stimulate user interactions, occasionally tolerating misinformation and other harmful content on the platform: the more users engage, the more profit Facebook accrues (Hao, 2021). Furthermore, Facebook's moderation of harmful content primarily targets English-speaking and Western nations, thereby permitting social turmoil fueled by such content in the Global South. Bangladesh's religious landscape is similarly affected (Hasan et al., 2022).

When religious misinformation intertwines with social media and targets religious minorities, its recurrence, potency, and reach amplify, significantly impacting society. Additionally, most misinformation directed at religious minorities demonstrates political motivations aimed at dehumanizing and exploiting them, rendering them more vulnerable and anxious (Liton, 2021). Consequently, religious misinformation on social media has emerged as a new tool for marginalizing minorities (Sharma, 2022).

Religious misinformation appears to exert a persistent, intense, and widespread influence (e.g., mob violence, interreligious tension, and minority expulsion) on society (Sharma, 2022; Shwapon, 2015, 2016), warranting closer examination. Nonetheless, a comprehensive understanding of the phenomenon is still lacking. In this study, we employ a mixed-methods research approach to investigate how Bangladeshi social media users engage with religious misinformation that contributes to communal tension. The findings reveal that nearly half of the users simultaneously endorse radical views, react negatively, and trust misinformation. In the subsequent section, we delve into some relevant literature.

Social media and religion in Bangladesh

For a large share of social media users in Bangladesh, social media is the internet, demonstrating social media's pervasiveness and relative importance (Amit et al., 2020). Facebook (93.05%) is the most used social media platform, followed by YouTube (3.99%), indicating the immense popularity of Facebook (StatCounter, 2022). Therefore, the nexus of social media, especially Facebook, with significant social and political phenomena in Bangladesh is worth discussing. Recently, “the advent of social media and video-sharing platforms have brought more opportunities for techno-spiritual practices for faith-based communities” in Bangladesh (Rifat, Peer, et al., 2022, p. 2). This suggests that social media is an extension of religious institutions for some.

Researchers studied the link between social media and religious radicalization in Bangladesh. For example, Amit et al. (2020) investigated the interconnection between social media use and Islamic extremism among Bangladeshi youths. They found that 33.4% of youths encountered online extremist and radical material. However, they assume this percentage is under-reported due to youths’ incapability of differentiating between religious and radical online content. Regardless of youths’ proper understanding of religion, 26.8% of them “considered the material (radical contents) to be authentic and accurate and claimed to have absorbed it,” and another 25.1% found it exciting and shared with others (pp. 235–236). In 90.6% of cases, Facebook provides such content.

One type of Islamic content demands separate discussion because of its immense influence over Muslims online in recent years: waz, a type of Islamic sermon delivered at large congregations known as waz mahfils (Rifat, Amin, et al., 2022; Rifat, Prottoy, et al., 2022). A waz mahfil is an outdoor event organized more frequently in winter, when there is no monsoon rain. Many grassroots content creators capture videos of such congregations and upload them on Facebook and YouTube. As a result, the prevalence and popularity of waz have been increasing continuously since the 2010s (Al-Zaman, 2021b).

Preachers in such congregations are commonly addressed as Huzur, an Arabic word for respected persons. In Bangladesh, persons who appear to have Islamic knowledge are called Huzur, despite the fact that a large portion of them are less educated and prejudiced (Hashmi, 2000). Their sermons in waz mahfils commonly feature extreme provocations, such as jihad against the infidels, religious hatred against the Hindus and Jews, and misogynistic remarks against women. Also, these often contain religious misinformation and misinterpretations of holy texts, along with political provocations. This offline misinformation spreads online fast when these videos are uploaded to social media platforms. As a large portion of the country's Muslim population is sensitive to their religion and follows and trusts religious preachers, they are vulnerable to such misinformation.

Religious misinformation on social media

Misinformation is information that may or may not be true and is spread with or without intent, but that ultimately misleads people (Treen et al., 2020). Religious misinformation is misinformation related to one or more facets of religion, such as religious figures, practices, scriptures, and beliefs. It is frequent in three South Asian countries: Bangladesh, India, and Pakistan (Mahfuzul et al., 2020). A study of online religious misinformation in Bangladesh shows that users react to such misinformation more emotionally than reasonably, and destructive behavior is more common among them when interacting with it (Al-Zaman, 2021a). Furthermore, most users tend to believe in online religious misinformation, making the situation precarious (Mahfuzul et al., 2020).

Religious misinformation attracted more scholarly attention worldwide during the pandemic because of its ubiquity and impact (Druckman et al., 2021; Levin, 2020; Nagar & Ashaye, 2022). Religious leaders, mullahs, and congregations became crucial sources of COVID-19 information across religious traditions in this period (Levin, 2020), which in some countries helped proliferate misinformation. Following an extensive survey of 18,132 respondents, Druckman et al. (2021) found that people with higher religiosity tend to believe misinformation the most, inspiring vaccine hesitancy and fake remedies (Barua, 2022; Hegarty, 2021; Nagar & Ashaye, 2022).

Besides other reasons, studying religious misinformation is imperative as it survives in the presence of contrary but authentic evidence, making it more alarming (Barrett, 2014). The present study is more interested in religious misinformation generated and disseminated to produce interreligious tension in Bangladesh. South Asian scholars call it communalism.

Misinformation, social media, and communalism

Communal online misinformation

Communalism in the South Asian context chiefly indicates the extreme support for a particular religion that produces interreligious tension between communities and inspires religious violence (Pathan, 2009). Historically, communalism has existed in the Indian subcontinent to varying degrees. However, as social media usage increases steeply, communalism, like other offline issues, has found a new nest in social media, which has transformed communalism's previous strengths, modes, and intensities. Anonymity, rapid and low-cost information production, wide reach within minimum time, and persuasive content design have made social media an ideal weapon for communalism. More specifically, religious misinformation on social media has become one of the main tools of communalism: blasphemy, the act of insulting or disrespecting religious entities (e.g., figures, practices, scriptures, and beliefs), is a companion in this process.

Communal online misinformation leading to violence

Interreligious violence led by online misinformation connects the four discussed concepts—blasphemy, communalism, misinformation, and social media. To elucidate their connections, the following real-life pattern suffices.

Muslim perpetrators anonymously commit blasphemy on social media, using a fake online profile or hacking others’ accounts. Such blasphemous content includes doctored photos, statuses and comments, shared videos and photos, and private messages. For example, in the Nasirnagar violence, Rashraj Das, an illiterate Hindu fisherman, was accused of posting a doctored image on Facebook that allegedly insulted Islam. It caused mass hysteria among the local Muslims, who later vandalized the Hindu households. However, subsequent investigations found that Rashraj's compromised social media account was used to frame him (Shwapon, 2016).

In many similar cases, police investigations found Muslim men, unknown culprits, and/or various interest groups (e.g., Islamic groups, ruling party men, and local groups) behind the conspiracies (Hasan & Macdonald, 2022). The interest groups frequently exploit ordinary Muslims’ religious sentiments and mobilize them toward violence, encouraging vigilante behavior. Misinformation-led mob lynching is an example of offline vigilantism.

Social media users’ vigilantism is called “digilantism” or “netilantism” (Chang & Poon, 2017; Martin, 2007). Chang and Poon (2017) found that “netilantes most perceived the criminal justice system as ineffective, possess the highest level of self-efficacy in the cyber world, and are the only role who perceived netilantism as capable of achieving social justice” (p. 14). It helps explain how and why religious misinformation leads to online and offline aggressiveness and violence: The users perceive that committing blasphemy and defaming religion is a crime, and the alleged perpetrators committed it. However, they have trust issues with the authorities (e.g., the government and law enforcement agencies), and they (the authorities) would leave the perpetrators unpunished. This line of thinking provokes them to act to deliver justice: burning and lynching in offline space and verbal aggressiveness in online space.

Apart from blasphemous content, users on social media also express communal attitudes through their language. Rashid (2022) compiled a dataset that included toxic religious Bangla terms and phrases by analyzing Facebook public comments. He found 148 types of religious or communal toxic textual expressions: 106 contained mid-level, three high-level, and 35 extreme-level toxicity. The eight major contexts of such toxic expressions are anti-Arab, anti-Hindu sentiment, anti-Islam sentiment, anti-Pakistan sentiment, intra-Islamic conflict, Islamic extremist bashing, non-Muslim bashing, and context-free. The analysis found that Islam biddweshi (anti-Islamic), murti puja (idolatry), Islam biddwesh (anti-Islam), Hindu jongi (Hindu militants), dharma beboshayider (religion merchants), and Iyahudi Nasara (Jewish and Christians) are among the top bigrams, suggesting the dominance of Muslims’ toxicity and negative attitudes against other religions.

The overall discussion on the various facets of social media, religion, and misinformation in the context of Bangladesh suggests that the presence of religion on social media is visible, undeniable, and worth studying. Also, faith-based misinformation on social media threatens the country's interreligious coexistence. Unfortunately, the lack of relevant research creates a knowledge vacuum (Sharma, 2022). This study answers two interrelated research questions to help fill this gap:

RQ1: How do social media users engage with religious misinformation?

RQ2: What are the frequencies of users’ different types of engagement?

Research methodology

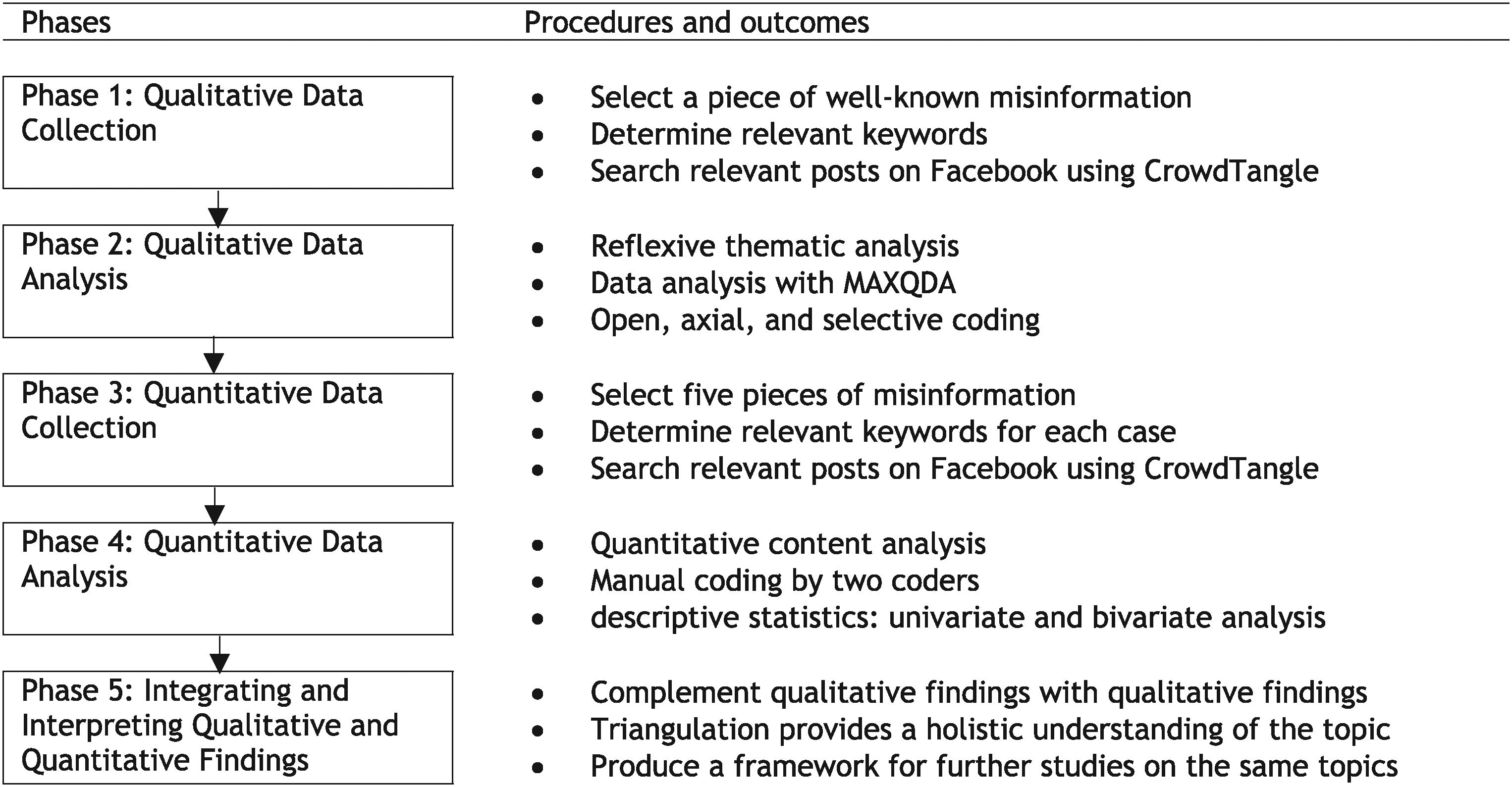

Five rationales for a mixed-methods analysis inspired this study: triangulation (the confirmation of results by different methods); complementarity (results from one method are used to enhance, elaborate, or clarify results from another method); initiation (where new insights are obtained which will stimulate new research questions); development (results from one method shape another method); and expansion (expanding the breadth and the range of the research by using different methods for different lines of inquiry) (Johnson et al., 2007). Therefore, we adopted the exploratory sequential mixed-methods design proposed by Creswell and Clark (2017) (Figure 1).

Figure 1. A mixed-methods design for the present study.

Our primary aim in the qualitative part of this research was to develop a conceptual framework to understand the issue under investigation and other similar issues. The quantitative part tests the generalizability and explains the qualitative findings more rigorously (Creswell & Clark, 2017; Creswell & Creswell, 2018; Teddlie & Tashakkori, 2015). In other words, we qualitatively explored how social media users engage with religious misinformation based on a smaller sample (1,819 users’ comments) drawn from one relevant and representative case of religious misinformation.

Based on the results of the qualitative analysis, we quantitatively analyzed another set of data with a larger sample (7,350 users’ comments) from five other cases of religious misinformation. This part has four aims: assessing the generalizability of qualitative insights, complementing qualitative findings, generating statistical insights into the qualitative findings, and providing a comprehensive framework for analyzing similar cases. Following an accepted practice in mixed-methods designs, the qualitative part of this study deals with RQ1, and the quantitative part deals with RQ2 (Creswell & Creswell, 2018).

Each piece of religious misinformation included in this research has six features: it originated from or was disseminated through social media, or both; it was directly connected to religion; it contained hostile or blasphemous elements that can yield interreligious disharmony; it led to offline consequences, including protest, violence, tension, intervention, arrest, and imprisonment; later investigations proved it to be misinformation; and it attracted remarkable media and public attention both offline and online. The following four sections discuss qualitative and quantitative methods and their findings. The results from both qualitative and quantitative methods have been integrated into the discussion section to explain the studied phenomenon in depth (Teddlie & Tashakkori, 2009).

Qualitative method

Data collection

Following an emergent design, this part relied on a qualitative thematic analysis of social media text (Braun & Clarke, 2006). We selected a piece of well-known religious misinformation in Bangladesh for data collection.

On October 20, 2019, a piece of religious misinformation spread in Bhola, a coastal district of Bangladesh. The misinformation claimed that a 25-year-old Hindu man named Biplob Chandra Badiya defamed Allah and the Prophet (PBUH) on Facebook (The Daily Star, 2019). It stirred mass outrage among local Muslims and inspired them to protest. Meanwhile, police asserted that Biplob had lodged a police complaint before the protest, stating that his Facebook account, “Biplob Chandra Shuvo,” was compromised and that the hackers were spreading blasphemous remarks using it. Police also claimed in media reports that the alleged hackers threatened Biplob over the phone when he was at the police station. The two accused, Sharif and Emon, both Muslim men, were arrested later (UNB News, 2019).

However, protesting Muslims denied all these claims, dismissed the police's account as fabricated, and believed that the Hindu man was purposively demeaning their religion. They further believed that the police and the government were shielding the culprits. The situation soon escalated, and an angry mob of local Muslims attacked the police and police station. The conflict killed four protesters, leaving a hundred more injured. The protest, supported by the All-Party Muslim Unity Council, later proposed six-point demands, including hanging Biplob for his blasphemy and the acting Superintendent of Police (SP) and Officer in Charge (OC) for killing and injuring the protesters (The Daily Star, 2019).

Before independent fact-checkers, including BD Fact Check, Fact-Watch, Jachaai, and Rumor Scanner, debunked this misinformation, it led to a series of violent events now known as the Bhola violence. This misinformation fulfilled the inclusion–exclusion criteria of this study described in the previous section, making it a strong sample case.

After selecting the incident, we conducted a keyword search in CrowdTangle, a public insight tool owned and operated by Facebook (CrowdTangle Team, 2021). Our keyword search strategy was the following: (“Mohanabi,” “kotukti,” “songhorsho,” “hamla”) AND (“Bhola”) [Translations: (“Prophet,” “insult,” “conflict,” “attack”) AND (“Bhola”)]. These keywords were produced based on our inclusion and exclusion criteria and a pilot observation that we initially conducted to understand what keywords best represent the case.
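The logic of the search string can be sketched in a few lines of Python. This is only an illustration of the Boolean structure, not the CrowdTangle interface: the function name `matches_query` and the sample post titles are hypothetical, the terms are matched in transliterated form, and the sketch assumes that the four terms in the first group are alternatives (OR), which the search string above does not state explicitly.

```python
# Terms copied from the search string in the text (transliterated form).
# Assumption: the first group is combined with OR; the second group is
# joined to it with AND, as written in the text.
EVENT_TERMS = ("mohanabi", "kotukti", "songhorsho", "hamla")
PLACE_TERMS = ("bhola",)


def matches_query(text: str) -> bool:
    """Return True if text has at least one event term AND one place term."""
    lowered = text.lower()
    has_event = any(term in lowered for term in EVENT_TERMS)
    has_place = any(term in lowered for term in PLACE_TERMS)
    return has_event and has_place


# Hypothetical post titles, used only to exercise the filter.
posts = [
    "Bhola songhorsho: police statement",  # event term + place term
    "Mohanabi kotukti protest in Dhaka",   # event term only
    "Bhola weather update",                # place term only
]
selected = [p for p in posts if matches_query(p)]
print(selected)
```

Only the first sample title satisfies both groups, which is the behavior an AND between the two groups implies.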

We searched for the available news posts shared on Facebook about the misinformation between October 20 and 30, 2019. We set the end date (i.e., October 30) considering two factors: the misinformation had been debunked by this date, and the interest of the public and news media in this incident had plummeted. We searched only Facebook pages. While searching for relevant data, we set the language to Bengali and the country of relevance to Bangladesh. This way, we found 114 posts that generated 91,235 interactions (e.g., comments, shares, and likes); all were news links.
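The search settings described above amount to four filters applied together. The following is a minimal sketch of that filtering, assuming post records stored as dictionaries; the field names (`account_type`, `language`, `country`, `posted_on`) and the three sample records are hypothetical and do not reflect the export format of any particular tool.

```python
from datetime import date

# Collection window stated in the text (both end dates inclusive).
WINDOW_START = date(2019, 10, 20)
WINDOW_END = date(2019, 10, 30)


def keep_post(post: dict) -> bool:
    """Apply the stated filters; all field names here are hypothetical."""
    return (
        post["account_type"] == "page"   # Facebook pages only
        and post["language"] == "bn"     # Bengali
        and post["country"] == "BD"      # Bangladesh
        and WINDOW_START <= post["posted_on"] <= WINDOW_END
    )


# Hypothetical records, used only to exercise the filter.
posts = [
    {"id": 1, "account_type": "page", "language": "bn",
     "country": "BD", "posted_on": date(2019, 10, 20)},
    {"id": 2, "account_type": "group", "language": "bn",
     "country": "BD", "posted_on": date(2019, 10, 25)},
    {"id": 3, "account_type": "page", "language": "bn",
     "country": "BD", "posted_on": date(2019, 10, 31)},
]
kept_ids = [p["id"] for p in posts if keep_post(p)]
print(kept_ids)
```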

Data preparation

Of the collected news items, we filtered out 15 for at least one of the following reasons: they were duplicates, were ambiguous, did not mention the misinformation in any way, or revealed that the violence was rooted in a piece of misinformation. We randomly chose five posts from the remaining items, aligning with our research objectives. We scraped the comments from these posts using Comment Exporter (http://exportcomments.com), a paid data harvesting platform specialized in social media data extraction. In total, we extracted 2,811 comments. We created a dataset of the comments using Microsoft Excel. Afterward, we cleaned the data, excluding duplicate comments (n = 311), comments from duplicate users (n = 275), and comments containing advertisements, photos, mentions, and spam links (n = 406). Our final sample included 1,819 comments.
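The sample-size accounting in this paragraph can be checked with a short calculation. The sketch below uses only the counts reported above; the variable names are illustrative, and it assumes the three exclusion categories are mutually exclusive, which is what the reported totals imply.

```python
# Counts reported in the text.
posts_found = 114
posts_filtered_out = 15
comments_extracted = 2811

# Exclusion categories and their reported sizes (assumed non-overlapping).
exclusions = {
    "duplicate comments": 311,
    "comments from duplicate users": 275,
    "advertisements, photos, mentions, and spam links": 406,
}

posts_eligible = posts_found - posts_filtered_out

remaining = comments_extracted
for reason, count in exclusions.items():
    remaining -= count
    print(f"after excluding {reason}: {remaining}")

final_sample = remaining
print(f"eligible posts: {posts_eligible}, final comments: {final_sample}")
```

The running total ends at 1,819 comments, matching the final sample reported above.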

Data analysis

First, we imported the comments into MAXQDA (VERBI Software, 2019). Then, following the reflexive thematic analysis method, which relies on an inductive approach to data analysis, we analyzed the data for relevant themes (Braun & Clarke, 2006). Reflexive thematic analysis is “analyzing qualitative data to answer broad or narrow research questions about people's experiences, views and perceptions, and representations of a given phenomenon” (Brulé, 2020).

In this exploratory research, we used a grounded theory coding approach (Charmaz & Belgrave, 2016), combining open, axial, and selective coding in the three coding cycles to generate codes, categories, and themes from the raw data (Allen, 2017). We conducted sentence-by-sentence coding of the data to extract meaning. However, not all sentences conveyed relevant insights. Also, many sentences conveyed more than one insight, leading us to assign them more than one code. At first, we and another trained coder coded 10% (n = 182) of the data separately. We resolved the coding issues and disagreements by mutual consent and thus determined the intercoder reliability (O’Connor & Joffe, 2020).
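For readers unfamiliar with intercoder checks, the sketch below shows how the 10% subsample size follows from the final sample and how agreement between two coders could be quantified. The text reports resolving disagreements by consensus and does not name a reliability statistic, so Cohen's kappa is used here purely as one common option. The label lists are hypothetical, and the sketch assumes a single code per unit, whereas the study allowed multiple codes per sentence.

```python
from collections import Counter

# Subsample size: 10% of the 1,819 comments in the final sample.
subsample_size = round(0.10 * 1819)


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders assigning one label per unit."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and equal in length")
    n = len(labels_a)
    # Observed agreement: share of units with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each coder's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    categories = set(freq_a) | set(freq_b)
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels for six units, for illustration only.
coder_a = ["radical", "radical", "political", "religious", "radical", "political"]
coder_b = ["radical", "political", "political", "religious", "radical", "political"]
kappa = cohen_kappa(coder_a, coder_b)
print(subsample_size, round(kappa, 3))
```

With multi-label coding, a per-code kappa or a set-based agreement measure would be a closer fit than this single-label version.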

Qualitative findings

We found three ways users engage with religious misinformation: topic-related engagement, reactional engagement, and appraising misinformation. Appendix 1 briefly describes the themes and categories.

Topics of discourse

Religious issues

With religious issues, we imply issues linked to religions’ core ideas, such as religious actions and rituals (e.g., namaz, puja), sacred religious texts (e.g., the Quran), religious monuments (e.g., temples, mosques), religious ways of life (e.g., prohibitions, brotherhood), and religious entities (e.g., the prophets, clergy).

Many users explained the necessity of a Muslim brotherhood, an ummah, that can counter conspiracies against Islam and Muslims. We found among these users fear of humiliation and defeat, survival instinct, self-loathing, and attempts at rejuvenation. For example, some users talked about how Muslims have recently become targets of violence worldwide, a phenomenon known as Islamophobia. They see it as a threat that is making Muslims feeble and helpless. Comments in which users helplessly pray to Allah indicate such a tendency: “Oh Allah! Please be kind to us. Eliminate the enemies of Islam on behalf of us.” Some users implied that Muslims must regain their declining strength and reputation.

We also found some indications of Hindu revivalist discussion. However, such comments did not explicitly support the Hindu brotherhood but still provided an impression of their revivalist thinking. The fundamental reason for this, the users suggested, was to safeguard their threatened Hindu lives in Bangladesh, a country of and for Muslims.

Besides religious conspiracies and revivalist comments, we found some users interested in religious cohesion among religious communities. The basis of this was introducing Islam as a religion of peace, not violence and hostility. Their discourse revolves around specific ideas, such as Islam is peaceful, Muslims are good, “religion is for humans, humans are not for religion.” Such ideas are more relevant to Islamic mysticism and a more humane tradition called Sufism. They also talked about violence against religious minorities and suggested religious tolerance as a countermeasure for this. Some users advised other Muslims to follow the path of the Prophet (PBUH). Such remarks seem constructive and helpful for greater benefits.

Political issues

Users were concerned and talked extensively about issues like South Asian geopolitics and international, national, and local political issues. For example, indicating Islamic extremism and its possible aftermath, one user commented: “Some people [Islamists] are trying to make Bangladesh another Afghanistan.”

Among users, two other groups, pro- and anti-Islamic, criticized Bangladeshi political bodies. Pro-Islamic groups, for example, were not happy with the role of the government that allegedly let the perpetrators who insulted Islam go unpunished. In this regard, they presumed that the government is anti-Islamic, implying that the government is subduing Muslims’ protests with the help of police to shield the Hindu man who “insulted” the Prophet (PBUH). Moreover, they accused the government of being pro-India and pro-Hindutva: “Infidels surround the Bangladeshi government and its institutions. These Islamophobes work for the interest of only the BJP and Awami League.”

They vehemently criticized the ruling party and its members and their actions and policies in their (users’) comments, manifesting their frustration regarding Bangladeshi politics. As they perceived that the government was not supporting the holy cause of the protesting Muslims, they started talking about the illegitimacy of the ruling party that manipulated elections, the growing despotism in the political system, and the increasing power of the oppressive law enforcement (e.g., the police).

Some users deduced that Muslims consciously propagate misinformation for their political and worldly interests, such as evicting Hindus from their land to capture it to satisfy their hatred against Hindus. Some users believed that disparaging Islamic symbols and persons, such as the holy Quran and the Prophet (PBUH), is part of a greater plot against Muslims and Islam. The conspirators might be Bangladeshi Hindus allied with Indian Hindus. We found that such thoughts remind many users of Islamic revivalism.

Many users tended to argue that the alliance of the government, Hindus, and seculars would endanger Islam and Muslims. This triad conducts an anti-Islamic campaign to protect and expand Hindutva, suppressing the Islamic environment in Bangladesh. The alleged patronization of the Hindu perpetrators indicates the rising Hindutva ideology in India and Bangladesh. They think, “Awami League and Bharatiya Janata Party (BJP) both are the saviors of Hinduism but in two different countries, which makes them allies against Muslims” and “they [the government, Hindus, and seculars] are the agents of the Jews.”

Users strongly criticize India, the BJP, and Hindutva for two more reasons. One, India is selling the “plight” of Bangladeshi Hindus to bolster the stronghold of Hindutva. Two, India's Hindu nationalist ruling party, the BJP, according to the users, knows well that only Muslims can prevent its expansionist agenda in South Asia. Therefore, it targets Islam to defame Muslims and undermine their authority. It is another reason some Muslim users called for a Muslim brotherhood to resist Hindutva's influence. From the users’ comments, Islam seems to be an essential source of political agency to them.

Radical issues

Radical topics consist of two issues: hatred and actions. Some users exhibit intense hatred against others using various verbal expressions. Much of such hatred is related to religion and politics, containing racial, ethnic, and religious slurs (e.g., Islam biddwesh (anti-Islam), Islam biddweshi (anti-Islamic), Iyahudi Nasara (Jewish and Christians), jongi (militants)). We also found extreme hatred towards seculars, Hindus, and media in the dataset, expressed through slang such as khankir pola (son of a whore), magir pola (son of a whore), kuttar bacha (son of a bitch), jaroj (bastard), motherchod (motherfucker), chutmarani (whore), and bainchod (sister fucker). However, not only did Muslims admonish Hindus, but we also found evidence where Indian Hindus vilified Bangladeshi Muslims using pejorative terms.

Many users endorse moderate and extreme actions in response to religious misinformation. Most of them talk about Islamic jihad, Islamic justice, and revenge. The concept of jihad is conceived both broadly (e.g., a war against the infidels in the world) and narrowly (e.g., punishing the culprits of the country who insult Islam): “Hey Muslims, brothers, prepare for a jihad.” They also argue that Bangladesh is a country of Muslims, and non- and anti-Islamic sentiments should not be tolerated here. It seems to be a common expression from Muslim social media users in cases of online religious discourse.

A part of this sentiment seems to be influenced by the perceived absence of Islamic justice against blasphemy and religious desecration, which fails to bring anti-Islamists to justice. As a result, they endorse their own versions of Islamic justice. Some users proposed different punishments for the alleged persons, including hanging, throwing into the fire, cross-firing (i.e., extrajudicial killing), killing brutally, and slaughtering. Comments like “throw the culprit dog into the fire” are common throughout the dataset. One user commented: “Those who humiliated the Prophet (PBUH) have no right to live.”

Interestingly, not only were Muslim users calling for extreme punishment of the alleged Hindu man, but we also found comments demanding extreme actions against the protesting Muslims: “These extremely insane people [protesting Muslims] should be killed like dogs.” It suggests that destructive behavior is shared among all engaged groups in the discussion.

Reactions of users

Positive reactions

Some users expressed positive attitudes toward the followers of other religions, political institutions, ideologies, and events. Such attitudes include the most common positive reactions, such as love, interest, and serenity. Even if they criticize, they do it constructively, indicating their control over emotions. All categories of users (e.g., pro-Islamists, anti-Islamists, seculars, pro-Hindus) show positive attitudes. Expressions of religious cohesion discussed earlier are also positive reactions that fall under this section. One user commented, “Humans are not for religion. Rather, religion is for the betterment of humanity.” Interestingly, both positive and violent users claimed they were inspired by the same religious texts and preaching, demonstrating the possibility of different interpretations of the same text.

Negative reactions

Some comments express noteworthy negative emotional reactions, such as anger and hatred. Such reactions also suggest how users react to the same issues differently due to their different thought processes. A large share of the users expressing negative emotions seems to trust misinformation. There might be two reasons. First, their religious belief was hurt by the incident of insulting their Prophet (PBUH), no matter who committed the blasphemy. Second, they were alarmed by a series of violent events in which the government, as discussed earlier, did not take a proactive role in punishing the offender.

Additionally, seculars’ apparent bias toward supporting Hindus seems frustrating for many users. For these reasons, many unleashed hatred with offensive language and intense anger. Some users expressed disgust and disappointment to a moderate degree, but the reasons are the same. Some angry comments also highly criticized religious extremism, Islamists, and deceived Muslims. These comments are presumably from the seculars, Hindus, some Muslims, and others aware of the misinformation.

Misinformation appraisal

Trust

Many users trust misinformation, suggesting they fail to separate facts from fiction. Two groups of believers can be discerned based on their reasoning skills: users who trust misinformation but lack reasoning skills and users who trust misinformation but possess reasoning skills. Comments that lack reasoning are relatively shorter and hostile with slurs and other hate expressions. Some believers are firm in their position, proposing extreme actions against the Hindu perpetrator. Many who tend to trust misinformation demand justice according to the blasphemy law: “Who insulted our Allah and Prophet (PBUH) should be punished. They encourage the interreligious strife.”

In contrast, users from the second group tend to argue with reasons why it is not a case of misinformation and why Hindu people might have committed blasphemy against Islam. Also, instead of directly analyzing misinformation, they try to support their position by bringing other relevant issues into the discussion. Users believing misinformation again show that Islam is not a mere religion but a key source of political agency for many Muslims.

Denial

Like the previous category, some users identified the misinformation based on proper reasoning, while some outright denied it without providing enough explanation in favor of their position. It is essential to mention two key arguments here. First, one group of users who explained their position argued that misinformation propagation is religiously, politically, and morally disgraceful and must be dealt with. A user stated: “Hacking religious minorities’ social media accounts and spreading rumors that cannot be the acts of good Muslims. […] Only those who are the country's enemies can do such heinous works. They should be brought to justice immediately.” Second, referring to previous incidents of misinformation propagated by Muslim perpetrators targeting Hindu and Buddhist minorities in Bangladesh, another group of users described the present misinformation as manufactured. While some of these users strongly labeled all Islamic organizations in Bangladesh as “terrorist groups,” some users congratulated the Hindu man for bravely complaining about the issues to the police and tolerating such a nuisance.

Doubt

Some users were doubtful and could neither deny nor trust misinformation through proper reasoning: “It can or cannot be fake news.” Some tried to be logical but still failed to demystify the misinformation properly. Some referred to the previous incidents of religious misinformation that targeted religious minorities in Bangladesh to suggest that this might also be an incident of misinformation. Although some users tried to assess the misinformation and related events based on available reasons and evidence, they could not reach any conclusion. Such doubtfulness may often lead them to believe the misinformation.

No appraisal

Some comments did not overtly assess the truth value of misinformation statements, that is, whether they are true or false. Most such commenters were either reluctant to discuss the topic directly or lacked adequate skills to analyze the claim. In such cases, some users preferred to avoid the main topic and decided not to enter the core argument of the discussion. For example, the following comment does not convey anything about the quality of the claim, and neither denies nor accepts the proposition of misinformation: “A valuable virtue like humanity cannot be expected from beasts because it can only be found in real human beings.” Some comments with no appraisal were difficult to distinguish because of their ambiguity.

Quantitative method

Data source and collection

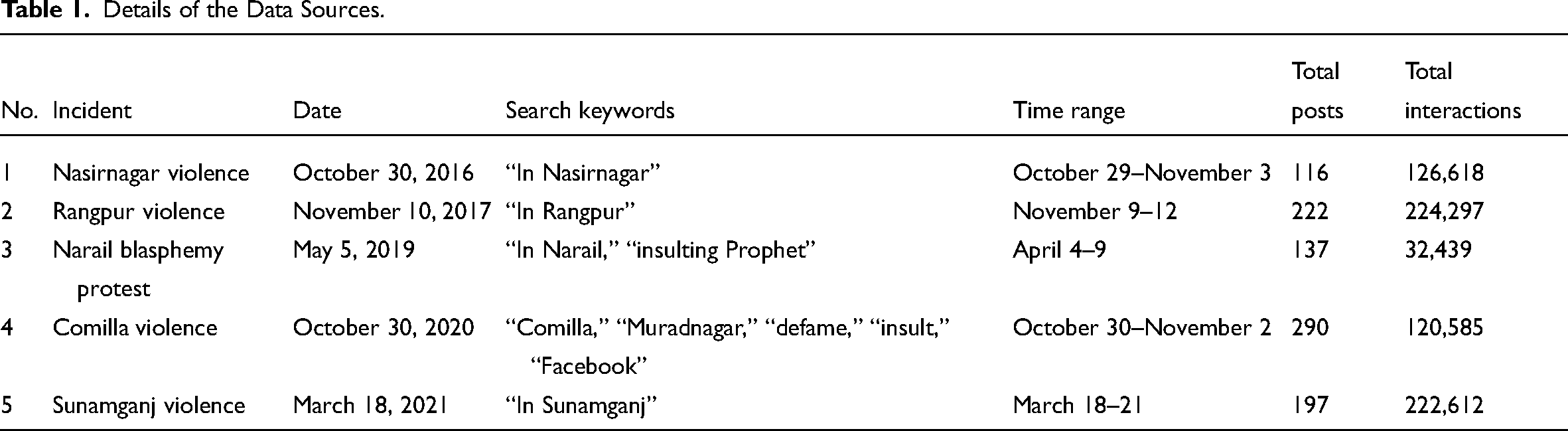

Based on the findings of qualitative analysis, this quantitative part devised a content analysis method to answer RQ2. Here, we analyzed the public comments on five well-known and impactful cases of online religious misinformation in Bangladesh that led to offline violence (Table 1). This analysis relied on a larger sample than the qualitative part (Allen, 2017; Krippendorff, 2004; Wimmer & Dominick, 2014). Although there are more similar cases of misinformation, we excluded them due to their limited extent, impacts, and/or online interactions.

Table 1. Details of the Data Sources.

We used CrowdTangle to search for posts related to each misinformation event, applying keyword searches with different keywords relevant to the events. We set the time range as 3–5 days. From the qualitative data analysis experience, we found that it usually takes less than a week to find the real story behind a piece of misinformation. Therefore, we decreased the search time range in the quantitative data collection phase. We found 962 (M = 192.4, SD = 69.52) posts that contained and/or endorsed the claims of misinformation or did not debunk them. All posts generated 0.73 million interactions (M = 145,310, SD = 80,490.88).

Data preparation and analysis

We randomly selected 23 (2.39%) Facebook posts out of 962. These posts generated 10,862 comments. We used the qualitative phase's data collection technique here. In the data cleaning process, we filtered out 288 instances of spam, photos, ads, and links, 2,015 duplicate comments, 974 comments from the same users, and 235 blank, fragmented, or vague comments, leaving 7,350 comments for analysis.
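The cleaning step above can be checked with simple arithmetic. The following is a minimal sketch that uses only the counts reported in the text; the variable names are illustrative and are not taken from the study's materials.

```python
# Counts reported in the data preparation paragraph.
raw_comments = 10_862
removed = {
    "spam, photos, ads, and links": 288,
    "duplicate comments": 2_015,
    "comments from the same users": 974,
    "blank, fragmented, or vague comments": 235,
}

# Comments remaining after all four filters are applied.
final_sample = raw_comments - sum(removed.values())

# Size of a 10% subsample, as used for the intercoder reliability check.
intercoder_subset = round(final_sample * 0.10)

print(final_sample)       # 7350
print(intercoder_subset)  # 735
```

The remaining 7,350 comments match the sum of the category frequencies reported in the quantitative findings, and 10% of that figure matches the 735 comments coded by both coders.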

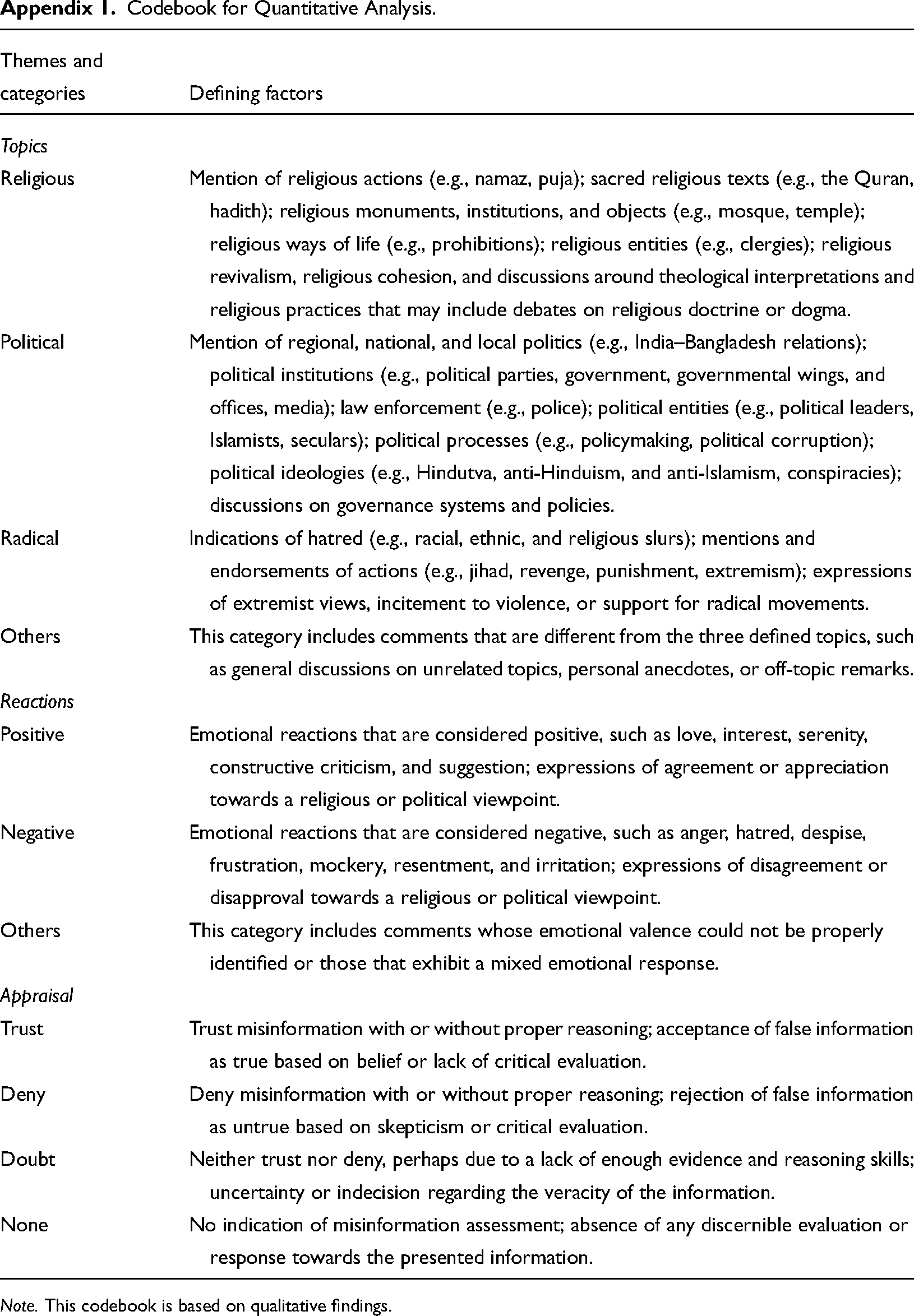

Based on the findings of the qualitative part, we prepared a codebook to guide quantitative content analysis (see Appendix 1). We added the “others” category for the topic and reaction variables to capture any topics and reactions in the dataset beyond those found in the qualitative analysis. If new categories emerged, we intended to add them to the final framework with an explanation.

We sought help from two trained coders to code the data. After discussing the coding criteria, both coders coded 10% (n = 735) of the comments, producing an almost perfect and statistically significant intercoder agreement (Cohen's kappa value 0.930 for topics, 0.911 for reactions, and 0.906 for appraisals). The values indicate that our coding is 82%–100% reliable (McHugh, 2012, p. 279).
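For readers unfamiliar with the statistic, the sketch below shows how Cohen's kappa is computed from two coders' labels for the same items. The function name and the ten example labels are hypothetical and serve only as an illustration; they are not the study's data, and the study does not state which software was used.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labeled the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of items.")
    n = len(coder_a)
    # Observed agreement: share of items given identical labels.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions,
    # summed over all categories.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / (n * n)
    if expected == 1.0:
        raise ValueError("Kappa is undefined when both coders use one identical category.")
    return (observed - expected) / (1 - expected)


# Hypothetical topic labels from two coders (illustration only).
coder_a = ["radical", "radical", "political", "religious", "radical",
           "political", "radical", "political", "radical", "religious"]
coder_b = ["radical", "radical", "political", "religious", "radical",
           "radical", "radical", "political", "radical", "religious"]

kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 3))  # 0.833
```

In this toy example the coders agree on nine of ten items (observed agreement 0.9) while chance agreement is 0.4, so kappa is lower than raw agreement.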

Quantitative findings

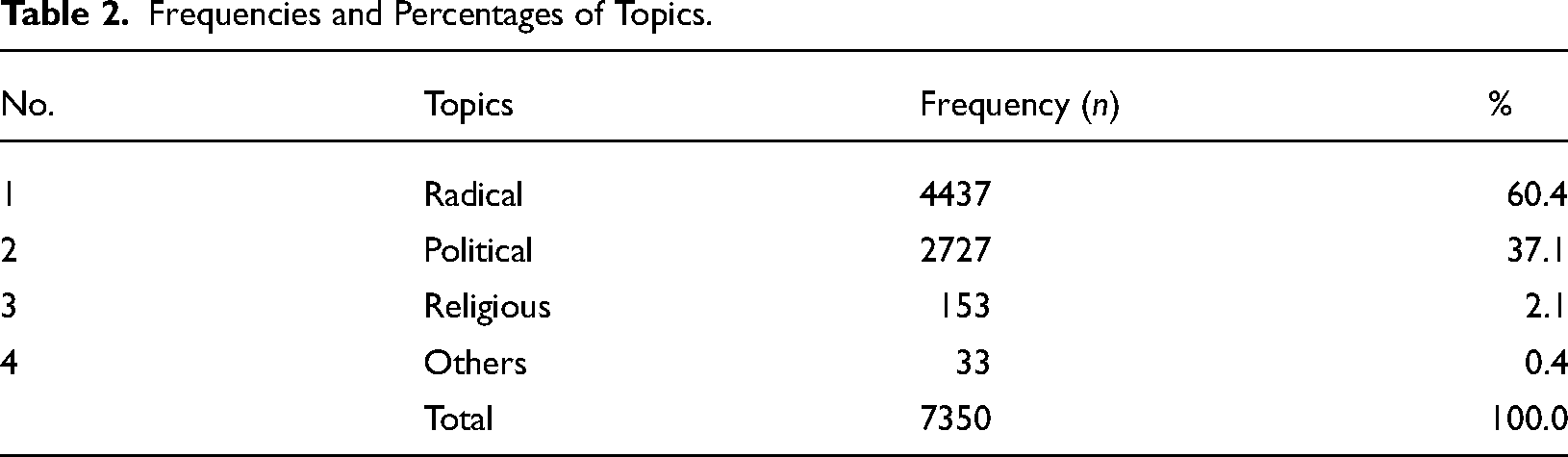

Topics of discourse

The quantitative analysis found that radical issues, namely hatred and extreme actions (n = 4,437; 60.4%), are more prevalent in users’ discourse when they engage with misinformation, followed by political issues (n = 2,727; 37.1%), which is roughly three-fifths of the share of radical issues (Table 2).

Table 2. Frequencies and Percentages of Topics.

These results indicate the dominance of radical issues. On the other hand, compared to radical and political issues, religious issues (n = 153; 2.1%) have limited space in users’ discourse. The analysis also found only 0.4% (n = 33) other issues. This result suggests the minimal presence of topics beyond these three dominant topics in users’ discourse. It further implies that the qualitative findings are generalizable to other similar cases.
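The reported topic percentages can be reproduced from the frequencies given in the text. This is a minimal sketch; the dictionary keys are illustrative labels, while the counts are those reported above.

```python
# Topic frequencies reported in the text.
topic_counts = {"radical": 4_437, "political": 2_727, "religious": 153, "others": 33}

total = sum(topic_counts.values())
shares = {topic: round(100 * count / total, 1) for topic, count in topic_counts.items()}

# Size of the political share relative to the radical share.
political_to_radical = topic_counts["political"] / topic_counts["radical"]

print(total)                           # 7350
print(shares)                          # {'radical': 60.4, 'political': 37.1, 'religious': 2.1, 'others': 0.4}
print(round(political_to_radical, 2))  # 0.61
```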

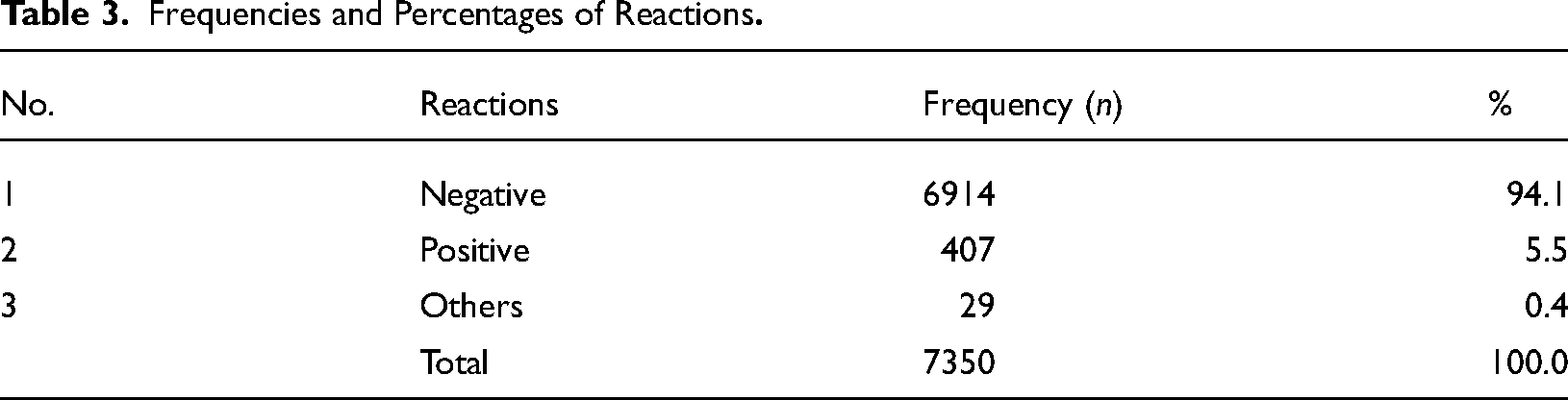

Reactions of users

For the reaction variable, the findings show that users almost always respond negatively (n = 6,914; 94.1%) when engaging with religious misinformation (Table 3). Conversely, only 5.5% (n = 407) of cases show positive reactions: negative reactions are roughly seventeen times as frequent as positive ones. As with topics of discourse, the analysis also found only 0.4% (n = 33) of other reactions. This implies that the qualitative findings for reactions are generalizable.

Table 3. Frequencies and Percentages of Reactions.

Misinformation appraisals

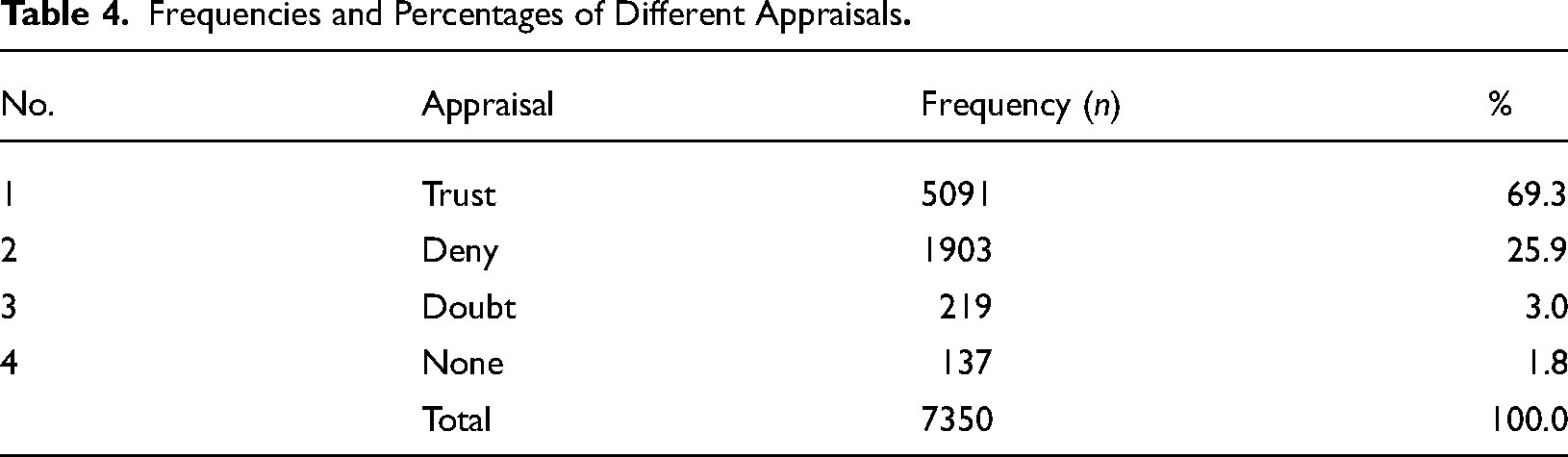

Most users tend to trust misinformation (n = 5,091; 69.3%), comprising more than two-thirds of the total share (Table 4). Users who deny misinformation (n = 1,903; 25.9%) number far fewer than half of the believers. Only 3% (n = 219) of the users doubt misinformation while evaluating it, making their decision-making problematic. No instances of misinformation assessment were found for 1.8% (n = 137) of users in the dataset, which may suggest a lack of information processing skills or enthusiasm.

Table 4. Frequencies and Percentages of Different Appraisals.

Topics and reactions

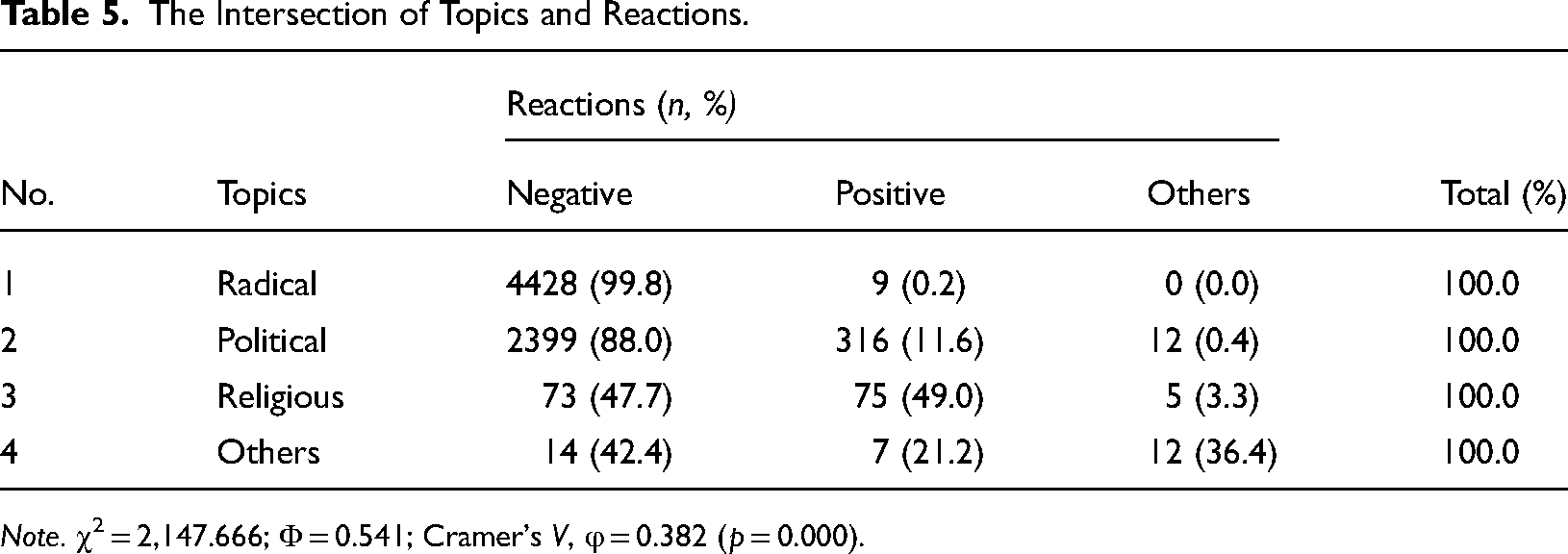

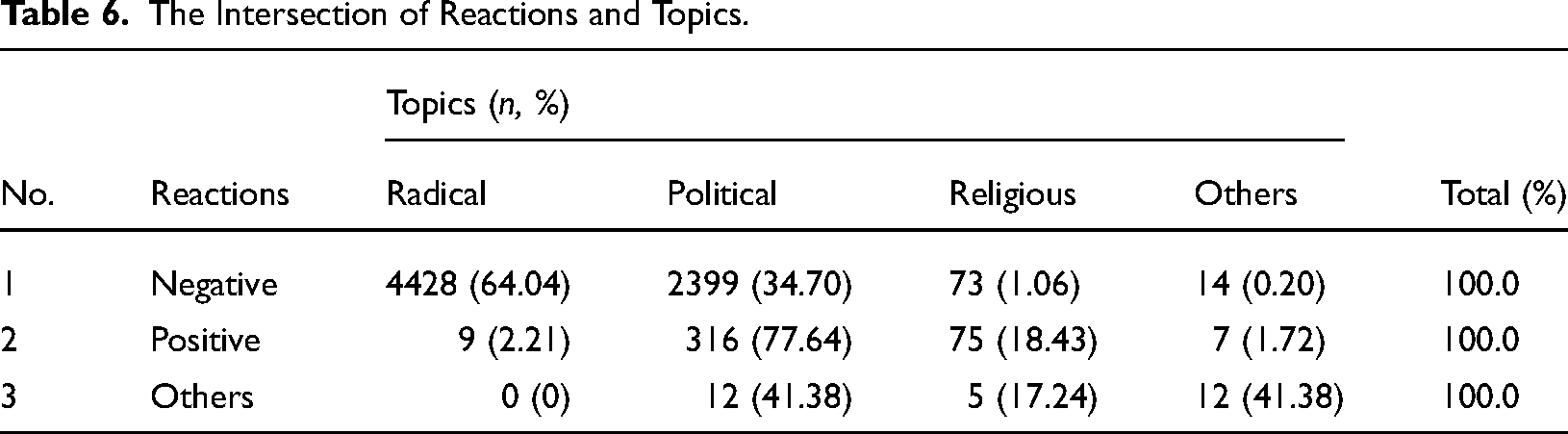

The contingency tables of topics and reactions show a few interesting findings at their intersections. Comments on radical issues are almost entirely negative (99.8%) (Table 5). Political issues in discourse also provoke users to react negatively (88%). However, Table 6 suggests that political issues account for a smaller share of all negative reactions (34.70%) than radical issues (64.04%). Conversely, political issues account for a far larger share of all positive reactions (77.64%) than radical issues (2.21%).

Table 5. The Intersection of Topics and Reactions.

Note. χ² = 2,147.666; Φ = 0.541; Cramér's V = 0.382 (p < .001).
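The effect sizes in this note follow from the chi-square statistic and the sample size. The sketch below recomputes them under two assumptions that the text does not state explicitly: that N = 7,350 (the sum of the reported category frequencies) and that the table crosses four topic categories with three reaction categories, "others" included. The function names are illustrative.

```python
import math


def phi_coefficient(chi2, n):
    """Phi: square root of chi-square divided by the sample size."""
    return math.sqrt(chi2 / n)


def cramers_v(chi2, n, n_rows, n_cols):
    """Cramér's V: phi rescaled by the smaller table dimension minus one."""
    return math.sqrt(chi2 / (n * (min(n_rows, n_cols) - 1)))


N = 7_350          # assumption: cleaned sample size implied by the frequencies
CHI2 = 2_147.666   # reported chi-square for topics by reactions

phi = phi_coefficient(CHI2, N)
v = cramers_v(CHI2, N, n_rows=4, n_cols=3)  # assumption: 4 topics x 3 reactions

print(round(phi, 3))  # 0.541
print(round(v, 3))    # 0.382
```

Under analogous assumptions (4 × 4 for topics by appraisals, 3 × 4 for reactions by appraisals), the same functions also reproduce the values in the notes to the other two intersection tables.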

Table 6. The Intersection of Reactions and Topics.

For religious issues, users exhibit slightly more positive reactions (49%) than negative reactions (47.7%) (Table 5), which is noteworthy, although such a minor difference may be negligible. Because the “others” categories in both topics and reactions have a minimal presence in the frequency and percentage analysis, we do not discuss them in detail.
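The two intersection tables appear to present the same cross-tabulation in two ways: percentages within each topic (Table 5) and percentages within each reaction (Table 6). The sketch below illustrates the difference with a small hypothetical table; the counts are invented for illustration and are not the study's data, and the function names are assumptions.

```python
# Hypothetical counts for illustration only; NOT the study's data.
table = {
    "radical":   {"negative": 90, "positive": 10},
    "political": {"negative": 60, "positive": 40},
}


def row_percentages(crosstab):
    """Share of each reaction within a topic (each row sums to 100)."""
    result = {}
    for topic, row in crosstab.items():
        row_total = sum(row.values())
        result[topic] = {reaction: 100 * count / row_total for reaction, count in row.items()}
    return result


def column_percentages(crosstab):
    """Share of each topic within a reaction (each column sums to 100)."""
    column_totals = {}
    for row in crosstab.values():
        for reaction, count in row.items():
            column_totals[reaction] = column_totals.get(reaction, 0) + count
    return {
        topic: {reaction: 100 * count / column_totals[reaction] for reaction, count in row.items()}
        for topic, row in crosstab.items()
    }


by_topic = row_percentages(table)
by_reaction = column_percentages(table)

print(by_topic["political"]["negative"])     # 60.0
print(by_reaction["political"]["negative"])  # 40.0
print(by_reaction["political"]["positive"])  # 80.0
```

In this toy table, political comments are mostly negative within their own topic, yet they make up a minority of all negative comments and a large majority of all positive ones. This is the same pattern the text describes when it contrasts Table 5 with Table 6.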

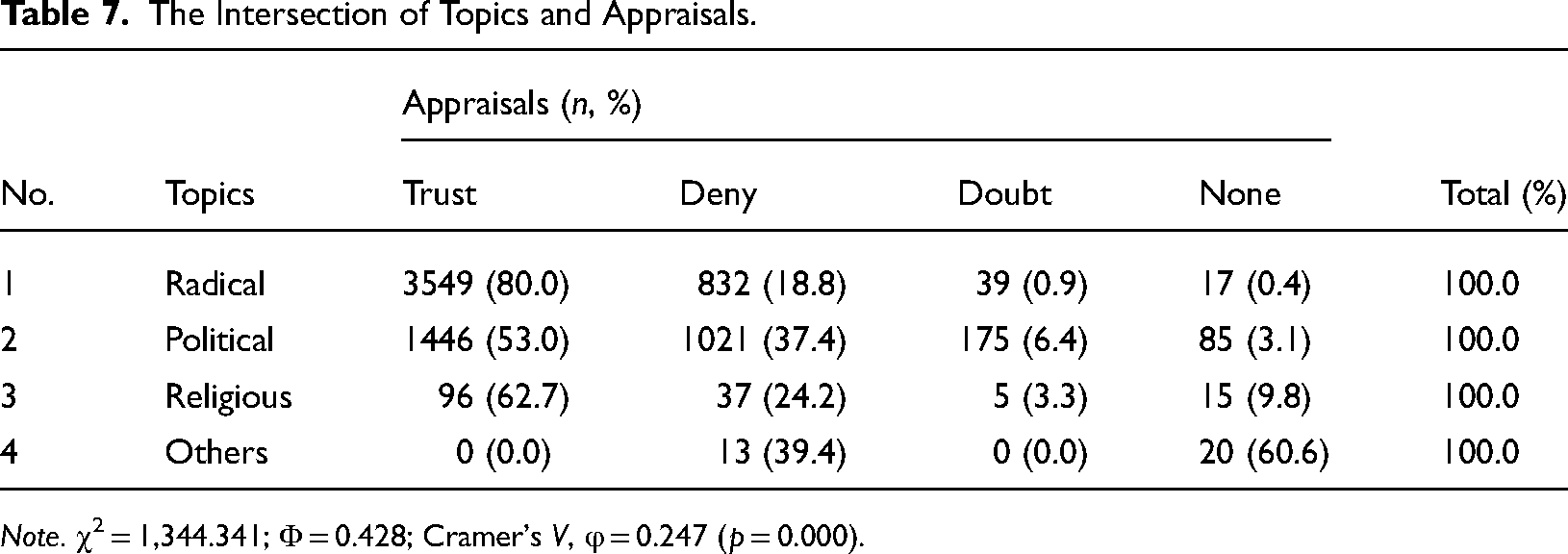

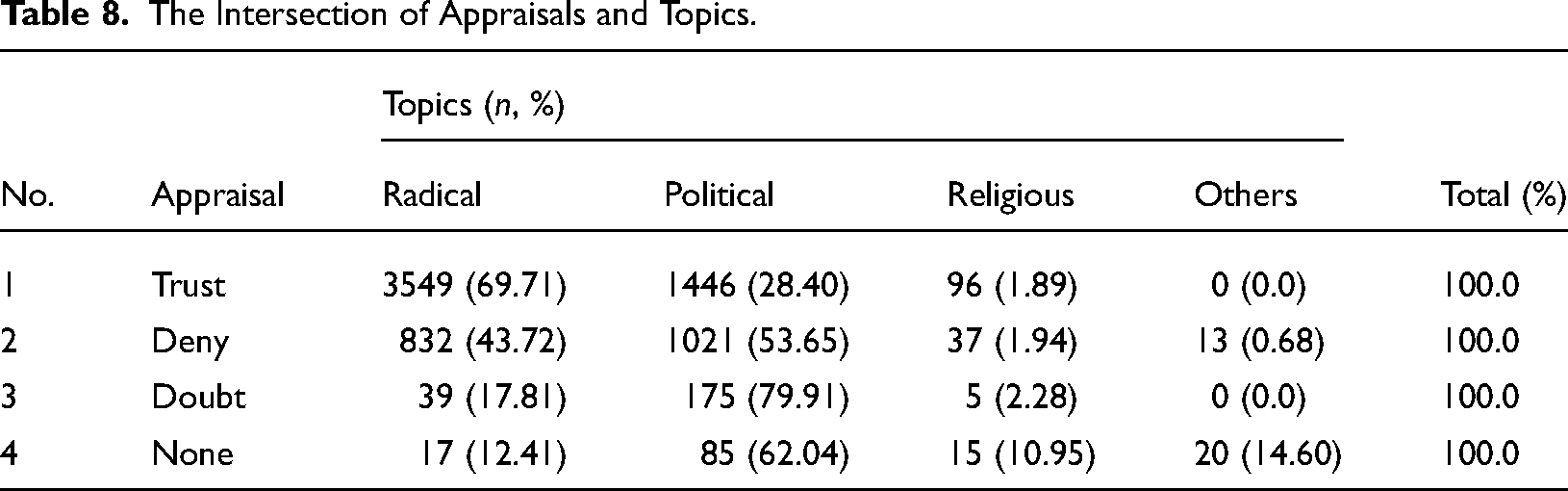

Topics and appraisals

Most users who talk about radical issues (80%) believe misinformation (Table 7). Users talking about political issues (53%) also trust misinformation more than they deny or doubt it. However, Table 8 suggests that users who talk about political issues account for a smaller share of those who trust misinformation (28.40%) than users who talk about radical issues (69.71%).

Table 7. The Intersection of Topics and Appraisals.

Note. χ² = 1,344.341; Φ = 0.428; Cramér's V = 0.247 (p < .001).

Table 8. The Intersection of Appraisals and Topics.

In contrast, users who talk about politics (37.4%) exhibit a better misinformation-identification capacity than users who talk about radical issues (18.8%) (Table 7). Another result in the same table suggests that users who talk about religious issues (n = 96; 62.7%) are more likely to trust misinformation than those who talk about political issues but less likely than those who talk about radical issues. Users who talk about politics make up the largest share of the doubt category (79.91%). They also account for most of the comments with no misinformation assessment (62.04%) (Table 8).

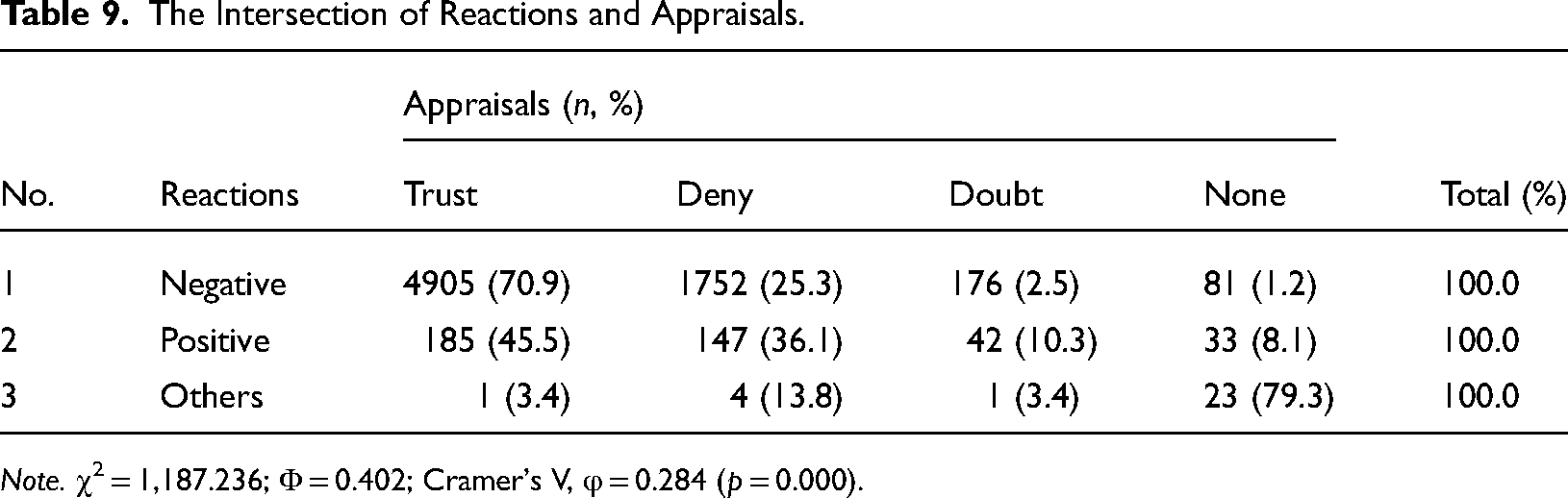

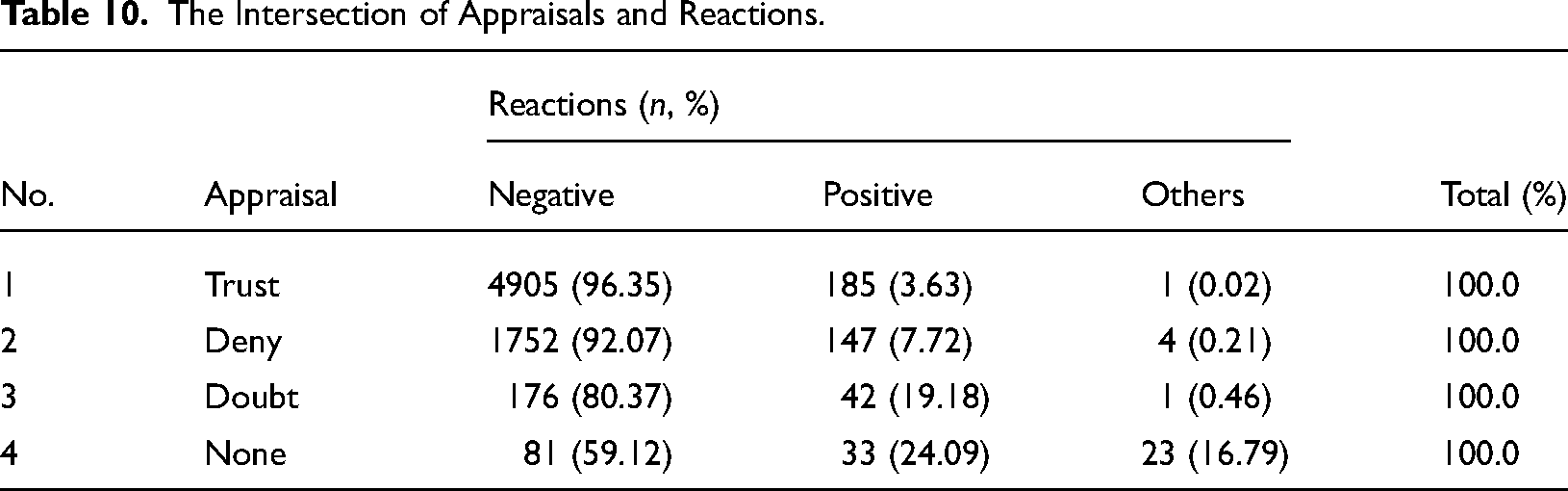

Reactions and appraisals

Users who express negative reactions (70.9%) predominantly trust misinformation (Table 9). Users who react positively also trust misinformation (45.5%) more than they deny it (36.1%) but are less likely to trust it than negative users. It also suggests a close relationship between reacting negatively and trusting misinformation. In misinformation appraisal, Table 10 shows that users of all appraisals have their highest shares of negative reactions, while trust (96.35%) has a comparatively higher proportion than others. Overall, the quantitative findings support the qualitative findings, suggesting that the categories for each variable are generalizable.

Table 9. The Intersection of Reactions and Appraisals.

Note. χ² = 1,187.236; Φ = 0.402; Cramér's V = 0.284 (p < .001).

Table 10. The Intersection of Appraisals and Reactions.

Discussion and conclusion

Objectives and key findings

The present study asks two interrelated research questions to understand social media users’ engagement with religious misinformation. A mixed-methods analysis suggests that users’ engagement can be defined from three perspectives: their topics of discourse, their reactions to misinformation, and their misinformation appraisal capacities.

This study has four major findings. First, users’ topics of discourse mostly revolve around religious, political, and radical issues. Radical issues, such as hatred and punishment endorsement, dominate the discourse, followed by political issues. Second, reactions to misinformation are overwhelmingly negative, with only a few positive reactions. Third, nearly three-quarters of the users cannot identify misinformation, nearly three times the misinformation deniers. Fourth, users who talk about radical issues almost always react negatively and mostly trust misinformation. Similarly, almost all users who trust misinformation react negatively. Altogether, users who talk about radical issues, react negatively, and trust misinformation comprise nearly half of all users.

The subsequent discussion sheds further light on these findings and other noteworthy insights, extending the understanding of these issues.

Radicality, aggressiveness, and digilantism

The prevalence of radical issues in users’ discussions indicates a negative tendency. Radical issues include hatred against others: the targets are followers of other religions and beliefs (e.g., Hindus and Buddhists, seculars, and atheists). Also, punishment endorsements, such as hanging or killing the alleged perpetrators who insulted Islam, are frequent in the dataset, which indicates users’ vigilante behavior (Chang & Poon, 2017; Smallridge et al., 2016). Furthermore, since almost all radical comments are negative, we infer that hate speech, frustration, anger, and other negative reactions are commonplace.

Such intense behavior may stem from users’ personal religious beliefs, which play a significant role in online engagement, specifically with religious misinformation. These beliefs shape interactions within diverse religious communities on platforms like Facebook and Reddit and influence the online religious landscape (Chopra, 2023). Since religion is a sensitive matter to its followers, they may exhibit extreme behavior when they feel their belief is hurt, and blasphemous religious misinformation is more likely to instigate this feeling. This extreme religious sentiment is more common among Muslims (Rahman et al., 2015).

Extreme online language, such as hate speech, is a form of destructive aggressive communication called destructive symbolic aggression (Littlejohn & Foss, 2009). It differs from destructive physical aggression, which indicates physical actions like violence and vandalism: One can lead to another, often making them entwined. Destructive symbolic aggressive communication, a form of destructive behavior, is categorized into hostility and verbal aggressiveness. Both may define social media users’ radical verbal expressions when engaging with religious misinformation. Hostility explains users’ irritability, negativity, resentment, and suspicion. We found several indications of these behaviors among users. A group of users, for instance, were extremely unhappy with the authorities for not punishing the perpetrators for blasphemy and hostility against Islam.

Another key feature of users’ communication behavior is verbal aggressiveness. It incorporates a range of negative verbal and, to some extent, non-verbal expressions: character attacks, competence attacks, mocking, background attacks, appearance attacks, and threats (Infante & Rancer, 1996). Four reasons inspire verbal aggressiveness: psychopathology or repressed hostility, disdain for others, social learning of aggression, and deficiency in argumentative skills (Infante et al., 1992). This study found evidence of users’ lack of proper argumentative skills and, to some extent, disdain for others. However, the findings cannot confirm the other reasons for aggressiveness, leaving space for further investigation. Again, although verbal aggressiveness incorporates some self-concept and identity-related issues (e.g., character, competence, and appearance), it does not include other identity markers (e.g., race, ethnicity, gender, and nationality) that this study found.

At least two large user groups show destructive behavior: (a) those who believe that others are targeting their religion and they are becoming the victims, but the authority is silent instead of taking proper actions; (b) those who believe that conspirators are trying to frame minorities to harass and exploit them for greater interests. Generally, users from the first group trust misinformation, while users from the second group deny or doubt it. The first group of users tends to use words and phrases like Islam biddweshi (anti-Islamic), Malaun (accursed), bidhormi (infidel), and Iyahudi Nasara (Jewish and Christian). In contrast, the second group of users uses words like jongi (militant) and dharma beboshayi (religion merchants). These expressions are similar to what Rashid (2022) found while analyzing Facebook's public comments.

Users’ aggressive behavior manifests and peaks through justice endorsement, which we previously noted as digilantism. Two major groups of users demand punishments: (a) the first group believes that perpetrators committed crimes against religion and demands exemplary punishment of the alleged perpetrator; (b) the second group believes that some fundamentalists are conspiring against religion and demands punishments for the conspirators. Users in the first group trust misinformation, as do most users who talk about radical issues (80%), while users in the second group deny it, as does a smaller share of users who talk about radical issues (18.8%). What is common among them is that both groups demand mild and extreme punishments, such as imprisonment, beating, shooting, hanging, and burning. The proponents of punishments against misinformation producers believe the judicial system is neither pro-Muslim nor flawless. Instead, they believe it serves other religions and institutions. The judicial system in Bangladesh is indeed affected by many problems, such as corruption, creating predicaments in achieving proper justice (Hossain, 2019; Tahmina & Asaduzzaman, 2018).

The second group of users demands punishments against the religious extremists who commit crimes against religious minorities and support them online and offline. These users believe religious extremists and politicians conspire against minorities to drive them out of Bangladesh, a severe crime that must be brought to justice. Since the judicial system is faulty, they also propose their own forms of punishment. Believing that lawlessness, corruption, and bias exist in the judicial process provides both groups a moral ground for justice endorsement and digilantism (Chang & Poon, 2017).

Political Islam, agency, and oppression

In political and radical discussions, it is important to understand two groups of political commenters: those who trust misinformation and express negative reactions, and those who deny misinformation.

The first group of users consists mainly of the critics of political entities and ideas (e.g., the ruling party, party men, and anti-religious and anti-Islamic political ideologies). Some of them believe that Awami League, the current ruling party, is the friend of Hindu-dominated India and the enemy of Islam and Muslims. The reasons can be manifold: the party's apparently secular stance, its historical–political alliance with India, its less supportive attitude toward Islamic political entities and their demands, and its alleged Hindu patronization. Some other social–political factors contribute to this: two of them could be corruption and political uncertainty.

In the last decade, Bangladesh experienced a gradual drop in the Corruption Perceptions Index, with increasing corruption in both private and public sectors. The country's rank dropped from 120 in 2011 to 147 in 2021 among 183 countries worldwide (Transparency International, 2022). Political corruption further intensifies the situation: electoral manipulation, corruption in government offices, and corruption of political leaders are a few examples of political corruption in Bangladesh (Riaz, 2019, 2021; TIB, 2021). Even during the COVID-19 pandemic, widespread corruption in the health sector disrupted proper healthcare services (Julqarnine et al., 2020; TIB, 2021). The five indicators (electoral process and pluralism, functioning of government, political participation, political culture, and civil liberties) suggest that Bangladesh falls short of becoming a full or flawed democracy. These factors have transformed Bangladesh into a hybrid regime (Riaz, 2019; Riaz & Parvez, 2021), positioning the country at 75th in the Democracy Index ranking (The Economist Intelligence Unit, 2022).

Moreover, corruption and lack of justice may increase political uncertainty and pessimism among people. Corruption and lack of justice coupled with political pessimism lead to social frustration, as Riaz and Parvez (2018) suggested. These could be reasons for the hostility toward political entities and ideologies that we found among users, which often surpasses the other issues related to misinformation.

In recent years, India has been experiencing rising extremism in Hinduism with the help of the ruling party, the BJP. The possibility of Hindu domination further terrorizes Bangladeshi Muslims. The assumed conspiracy and patronization of Hindutva as two political affairs thus may create fear among Bangladeshi Muslims, threatening Muslim authority and sovereignty. These may also inspire them to trust misinformation and react negatively. We need more empirical studies to unravel and explain this complex nexus.

However, Islam cannot be understood without a holistic understanding of Bangladesh's historical and contemporary religiopolitical and social conditions. From the users’ discussion, we presume that Islam is a form of political agency to them. Islam could also be their last resort against all challenges, such as corruption, social insecurity, political uncertainty, rising Hindutva, and India's aggression. Contemporary Bangladesh is experiencing a revival of Islamism (Islam & Saidul Islam, 2018): Islamic symbols, sentiments, institutions, groups, activities, and practices are becoming ubiquitous, creating an Islamic ambiance in society (Hasan, 2020; Rahman, 2019). As a source of political agency, Islam could contribute to this revival.

The second group of users is important because denial of misinformation is higher among political comments than in the two other topic categories (radical and religious). Al-Zaman (2021b) identified that users’ identification capacity of COVID-19-related political misinformation (trust 27.91%, deny 35.27%) is higher than other types of misinformation, including religious (trust 94.72%, deny 2.31%). The results suggest that users in Bangladesh possess higher political knowledge and interpretive ability, but this ability is largely absent regarding religious issues. The present study adds to previous knowledge that users are more likely to identify religious misinformation if they assess it from a more political perspective.

Political comments denying misinformation are primarily dominated by common reasonings that warrant extended discussion. First, when these users deny misinformation, they usually refer to previous incidents of similar misinformation devised to frame religious minorities and the political games of interest groups behind such conspiracies. Consequently, most of their reasoning concerns political motives of displacing religious minorities from their lands, which is at least a five-decade-old practice. Interestingly, this practice seemingly regenerates using social media and religious misinformation. Therefore, the politics of land grabbing seems essential to understanding online religious misinformation in Bangladesh (Barkat, 2018; Panday, 2016; Yasmin, 2015).

Many believe such land grabbing is a part of corrupt politicians’ complex political games and multilayered interests (Panday, 2016). In hundreds of incidents of land grabbing, media and non-profit organizations indicated the involvement of politicians (Shwapon, 2015). Land grabbing is one of the most crucial factors responsible for the Hindu exodus from Bangladesh (Panday, 2016), and Barkat (2018) established a link between land grabbing, violence, systematic oppression, and minority expatriation. Focusing on Hindu minorities who suffer the most from systematic deprivation, he found 11.3 million Hindus expatriated from Bangladesh between 1964 and 2013.

Despite ample existing evidence, including media reports and witnesses, limited academic scholarship has investigated social media's use as a political tool in the systematic and structural violence against minorities in Bangladesh. In recent years, religious misinformation has been propagated on social media intentionally to serve specific political purposes of interest groups. For example, Muslims’ attack on Hindu households in Sunamganj was a plotted incident inspired by political interests (Liton, 2021). The local political leader of the ruling party produced the misinformation, gathered local Muslims led by his political strongmen, and encouraged the furious Muslims to attack households. Investigations found different political motives behind similar attacks: some are connected to the ruling party, some are connected to Islamic and other extremist groups, and some are connected to property-related issues. This discussion suggests that religious misinformation-led violence may have more political connections than religious ones.

Limitations and future research

This study has a few limitations. First, the themes that emerged from the qualitative analysis were not mutually exclusive, meaning that they overlap at some points. This is an unavoidable problem in similar social media research. Second, Facebook often relies on its third-party fact-checkers to flag and remove misinformation content. It is conceivable that much data regarding misinformation can be lost in this way. We suspect this study suffers from such a data loss: the data that could have been added to the sample could have yielded more insights. Third, CrowdTangle has limited access to Facebook data and other limitations for tracking public data (Fraser, 2022). A methodological triangulation for data collection could have mitigated this gap to a certain extent. However, due to time and resource constraints, it was not possible. Fourth, this study focused only on Bangladesh, a small South Asian country with a Muslim majority, a growing social media user community, and frequent interreligious violence led by online misinformation. Therefore, the findings of this study may not be generalizable to other countries with entirely different social-religious settings.

Despite these limitations, this study unravels an essential but poorly studied research problem. Moreover, it offers some novel findings that would be helpful for the academic understanding of a complex web of online radicality, politics, misinformation, and religious violence. We hope it will encourage and guide future researchers to explore online religious misinformation more comprehensively in the Bangladeshi context. More specifically, in response to a dearth of empirical evidence, we encourage researchers to investigate the diversity of online religious misinformation and their connections to events, ideologies, and actors more precisely as the first step in understanding this problem from the ground. Researchers may also endeavor to explore who the creators of misinformation are, who benefits, who is attacked by the religious misinformation, what specific religions are involved in political domination, and how users of religious information are attracted, immersed, or satisfied. An understanding of the intermediaries or moderating factors that affect this involvement process with religious misinformation can also yield valuable insights.

Footnotes

Acknowledgments

I thank Dr. Geoffrey Rockwell, Dr. Gillian Stevens, and Dr. Harvey Quamen for their cordial cooperation and contributions.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical statement

The research did not involve any human participants and received a research ethics exemption from the University of Alberta Research Ethics Board, No. Pro00121333, on June 13, 2022.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Data availability

A data paper along with the dataset can be found here: https://doi.org/10.1016/j.dib.2023.109439

Codebook for Quantitative Analysis.

| Themes and categories | Defining factors |
|---|---|
| Topics | |
| Religious | Mention of religious actions (e.g., namaz, puja); sacred religious texts (e.g., the Quran, hadith); religious monuments, institutions, and objects (e.g., mosque, temple); religious ways of life (e.g., prohibitions); religious entities (e.g., clergies); religious revivalism, religious cohesion, and discussions around theological interpretations and religious practices that may include debates on religious doctrine or dogma. |
| Political | Mention of regional, national, and local politics (e.g., India–Bangladesh relations); political institutions (e.g., political parties, government, governmental wings, and offices, media); law enforcement (e.g., police); political entities (e.g., political leaders, Islamists, seculars); political processes (e.g., policymaking, political corruption); political ideologies (e.g., Hindutva, anti-Hinduism, and anti-Islamism, conspiracies); discussions on governance systems and policies. |
| Radical | Indications of hatred (e.g., racial, ethnic, and religious slurs); mentions and endorsements of actions (e.g., jihad, revenge, punishment, extremism); expressions of extremist views, incitement to violence, or support for radical movements. |
| Others | This category includes comments that are different from the three defined topics, such as general discussions on unrelated topics, personal anecdotes, or off-topic remarks. |
| Reactions | |
| Positive | Emotional reactions that are considered positive, such as love, interest, serenity, constructive criticism, and suggestion; expressions of agreement or appreciation towards a religious or political viewpoint. |
| Negative | Emotional reactions that are considered negative, such as anger, hatred, despise, frustration, mockery, resentment, and irritation; expressions of disagreement or disapproval towards a religious or political viewpoint. |
| Others | This category includes comments whose emotional valence could not be properly identified or those that exhibit a mixed emotional response. |
| Appraisal | |
| Trust | Trust misinformation with or without proper reasoning; acceptance of false information as true based on belief or lack of critical evaluation. |
| Deny | Deny misinformation with or without proper reasoning; rejection of false information as untrue based on skepticism or critical evaluation. |
| Doubt | Neither trust nor deny, perhaps due to a lack of enough evidence and reasoning skills; uncertainty or indecision regarding the veracity of the information. |
| None | No indication of misinformation assessment; absence of any discernible evaluation or response towards the presented information. |

Note. This codebook is based on qualitative findings.