Abstract

This article highlights the role of epistemic norms in mitigating the spread of misinformation. The mixed-methods study includes exploratory reconstructions and survey experiments. Two intervention approaches proved effective in reducing the sharing of misinformation, but only one significantly differentiated between true and false information. This study contributes to the literature on normative countermeasures and is the first to emphasize epistemic norms. Although misinformation is fundamentally an epistemic issue, scholars rarely refer to the rich epistemic literature for conceptualization and theory. The article also draws on focus theory and additional social literature to ensure a holistic framework. In addition to underscoring the role of epistemic norms, we suggest temporal proximity as a new factor to consider in focus theory-based measures. Ethical, empirical, and practical implications are discussed, emphasizing the need to control for countermeasures’ effect on true information sharing, which may threaten to suppress public discourse.

Concerns about the online dissemination of misinformation have prompted efforts by academics and industry to explore various countermeasures. A range of methods were tested and found effective in limiting the spread of misinformation to varying extents (Rastogi and Bansal, 2023; Roozenbeek et al., 2023). Some studies explored human-focused solutions, while others focused on automated moderating tools (Da Silva et al., 2019; Guo et al., 2022; Molina et al., 2021; Record and Miller, 2022b; Uscinski and Butler, 2013; Vaccaro et al., 2020). Some studies investigated top-down organizational gatekeeping tools, while others adopted a user-centered approach to help individuals make responsible decisions (Delellis and Rubin, 2020; Hoes et al., 2024; Nekmat, 2020; Prike et al., 2024; Vraga et al., 2022).

Some studies specifically focused on social norms, identifying norm changes as a cause for the dissemination of misinformation and proposing norm-based interventions as possible solutions (Gershon, 2010; Hoes et al., 2024; Pennycook et al., 2020, 2022; Prike et al., 2024). In a systematic review of countermeasures for vaccine misinformation, Roozenbeek et al. (2023) conclude that social-norm nudges have “promising, albeit preliminary results” (p. 195), while more generally, the research on misinformation countermeasures is still “in its infancy” (Roozenbeek et al., 2023: 189). The norms-based solution can be an alternative to the involvement of moderation and censorship by mega-corporations, which is seen by some as a controversial solution (Karppi and Nieborg, 2021; Miller, 2024a; Record and Miller, 2022b).

In addition to providing new evidence to the social-norms countermeasure literature, this study addresses two additional gaps—one theoretical and one empirical. The theoretical gap arises from researchers largely overlooking the specific role of epistemology and epistemic norms in the spread of misinformation. Recognizing the close connection between epistemic standards and the technological affordances of inquiry, dissemination, and validation within the sociotechnical environment (Amico-Korby et al., 2024; Bruckman, 2022; Godler et al., 2020; Miller and Record, 2013, 2017; Nguyen, 2023; Record and Miller, 2018, 2022a), social epistemologists theorize that a major driver of misinformation online is the instability of social media-sharing norms and their lack of epistemic checks (Marin, 2021; Record and Miller, 2022a; Rini, 2017). Sharing is a relatively new speech act, unlike telling or promising, for example. It directs others’ attention to content and expresses the sharer’s perception of its shareworthiness (Arielli, 2018). However, media ideologies regarding sharing—specifying what posts are appropriate to share, in which medium, by whom, and to whom—have not yet stabilized (Arielli, 2018; Gershon, 2010, 2020) and largely do not pertain to epistemic features of the candidate posts to be shared, such as their veracity and evidential support (Record and Miller, 2022a).

Unlike the theoretical philosophical literature, most empirical studies dealing with misinformation adopt a social or psychological perspective (e.g. Ecker et al., 2022; Pennycook et al., 2022; Roozenbeek et al., 2023; Wardle and Derakhshan, 2017), overlooking two millennia’s worth of philosophical scholarship on, and conceptualization of, knowledge. In this study, we test how people’s inclination to share misinformation is related specifically to epistemic norms (Record and Miller, 2022a), namely, norms that regulate communicative acts and pertain to the attainment of epistemic goals such as knowledge, truth, and justification. As discussed below, epistemic norms are often regarded as a subtype of social norms, but with their own unique value.

A common methodological weakness in empirical studies of sharing behavior creates an empirical gap. Most experiments do not examine whether measures to reduce the spread of misinformation also reduce the spread of true information. Tools that do not discriminate true from false information may demotivate people from engaging in public discourse and could be democratically dysfunctional (Hoes et al., 2024). Examples of empirically comprehensive studies that did use such controls are those of Pennycook et al. (2020, 2022), and more recently, Hoes et al. (2024), whose study focused on that exact distinction.

While this second gap might seem technical, it has social and ethical implications as well. Interventions employed by the industry have the potential to limit free speech and dissolve the socially desirable public political discourse. Studies have already found that people become too skeptical of true information (Hoes et al., 2024) or “too scared to share” (Weeks et al., 2024) and refrain from participating in political discourse when they are too afraid of the potential backlash.

We thus conducted two studies to examine the role of epistemic norms in sharing misinformation. We started with exploratory, qualitative research, which used reconstruction interviews (Reich and Barnoy, 2016, 2020) to identify social media users’ normative accounts for sharing misinformation; we used these explanations to construct epistemic normative interventions to be tested in Studies 2a and 2b. Study 2a consisted of an online survey experiment that tested the effect of interventions that set social epistemic norms of responsible sharing on participants’ willingness to share a post containing misinformation; Study 2b consisted of a preregistered replication, which tested the effect of the same measures on the sharing of both true and false information.

In the following section, we start by discussing the concept of epistemic norms, the distinction between them and other social norms, and the studies that explore norms that might be considered epistemic. We then move on to discuss why people share misinformation, leading to the first research question. We close the literature review with a short discussion of the countermeasures that were explored, which leads to our second research question.

Epistemic norms and focus theory

Existing accounts in epistemology and related fields of sociology and the history of knowledge characterize epistemic norms as norms that aim at the production of epistemic goods, such as truth, knowledge, and justified belief (“epistemic,” from the Greek word for knowledge: episteme).

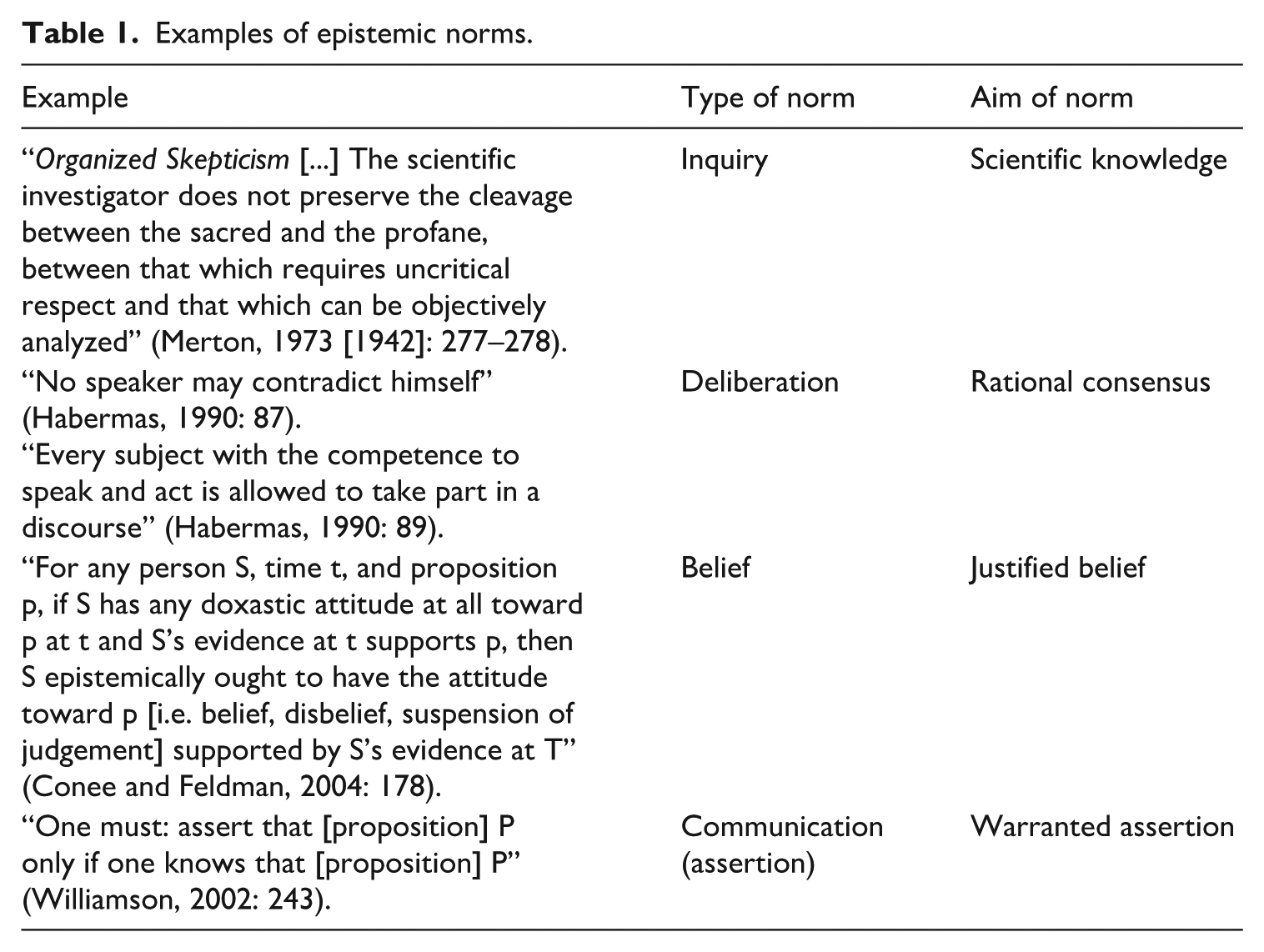

There are different classes of epistemic norms; the four most prominent classes are detailed here and summarized in Table 1, alongside examples. One prominent class governs inquiry, particularly scientific inquiry, and these norms typically aim at generating knowledge, as opposed to error or poorly substantiated claims (Friedman, 2020; Longino, 1992, 2002; Merton, 1973 [1942]; Miller, 2021, 2024b; Oreskes, 2019). Another related class of epistemic norms is norms of public deliberation, which aim at rational consensus or communal knowledge (Habermas, 1990; Longino, 1992, 2002). Another class is norms of belief, which aim at justified belief (Conee and Feldman, 2004; Clifford, 1877). While these philosophical accounts of epistemic norms are highly theoretical and idealized, there is a growing body of empirical work testing philosophical claims about such epistemic norms (Kneer, 2018; Turri, 2021).

Table 1. Examples of epistemic norms.

A fourth class of epistemic norms, most relevant for this study, is the epistemic norms of communication. Different speech acts, like asserting and conjecturing, are governed by different kinds of norms (Marsili, 2025; Record and Miller, 2022a), and at least for some of them the governing norms are epistemic in nature. For example, epistemic norms of assertion specify the epistemic conditions under which it is permissible for someone to make an assertion (Marsili, 2025); knowingly asserting false content violates the norm of assertion. Moreover, some kinds of speech acts (e.g. assertions) are governed by more demanding epistemic norms than other kinds of speech acts (e.g. conjectures). Accordingly, one must pass a higher evidentiary bar, or enjoy a higher level of justification, in order to permissibly tell a hearer that something is the case, than in order to conjecture that something is the case.

Beyond speech act distinctions, epistemic norms also have unique general characteristics distinguishing them from other social norms. Henderson (2020), for example, described them as “widely shared sensibilities about state-of-the-art ways of pursuing projects of individual veritistic value” (p. 281). And yet, for the most part, they are viewed as a subcategory of social norms that specifically address epistemic conditions such as states of knowledge, levels of evidentiary support, and so on.

An important distinction is that the philosophical literature does not address the epistemic as merely descriptive norms—that is, how people actually behave—but as injunctive ones—that is, what behaviors people approve or disapprove and expect of each other (Gimpel et al., 2021). Accordingly, epistemic norms are manifested, first, in common tendencies to generate, and to avoid generating, speech acts with certain kinds of contents; second, in common forms of reactions to speech acts, such as questions about whether and how speakers satisfy the relevant epistemic norms, or in criticism of speech acts that violate such norms; and third, in responses to such criticisms, in the form of justifications or excuses of apparent violations of the norm (Green, 2017; Williamson, 2002).

In general, epistemic norms play an important role in explaining the reliability of central forms of communication (Graham, 2020; Green, 2009). Social epistemologists theorize that a major catalyst for the online spread of misinformation is that epistemic norms of sharing content on social media are unstable and lack epistemic checks; namely, factors such as the validity and truth of the post do not play a salient role in determining whether it is normatively appropriate to share the post. Social epistemologists further theorize that even when epistemic factors play some role, norm instability leads to users’ conflicting and miscalibrated assessments of shared information’s truthfulness and validity, as well as the credibility and responsibility of those posting it. These, in turn, shape the beliefs they form from the post and their subsequent decision to share it or not (Frost-Arnold, 2021; Marin, 2021; Record and Miller, 2022a; Rini, 2017).

Record and Miller (2022a) theorize that a general template for the norm of sharing is that one should share a post only if that post is worthy of attention (p. 40). They argue, however, that this formulation is thin because different users, in different contexts, have conflicting interpretations of what is worthy of attention and what a call for the audience’s attention entails, for example, whether sharing entails endorsement of the shared content by the sharer (Allen, 2014; Arielli, 2018; Marsili, 2021; Perry, 2021). Moreover, this formulation is not epistemic, in that it lacks checks on the epistemic standing of the content being shared.

If the instability of epistemic norms and lack of epistemic checks contribute to people’s sharing misinformation, then stabilizing countermeasures which set epistemic standards of evaluation might be effective in mitigating such spread. An effective countermeasure to misinformation could be one that communicates consistent epistemic norms to users.

Bringing norms to users’ attention to influence their behavior is not a new idea; it was summarized more than three decades ago in Cialdini et al.’s (1991) focus theory. According to focus theory, the ability of a norm to affect an individual’s behavior depends on their focus on it. Social norms compete with other factors that shape human behavior, and their effect is increased when they are triggered—“made salient or otherwise focused upon” (Cialdini et al., 1991: 204). Throughout their set of nine experiments, a systematic and powerful effect of focusing users on norms was demonstrated, though the effect varied depending on factors such as how the norms were primed, the use of physical arousal, environmental factors, and more. A recent replication of the study supported the general effect, though not in all variations (Bergquist et al., 2021).

Applying Cialdini et al.’s theory to social epistemic norms and their role in the spread of misinformation leads to two primary expectations. First, epistemic norms will likely play a role in people’s account of their decision to share misinformation. While they might present a variety of reasons for doing so in hindsight, merely encountering questions on an issue that is inherently epistemic—misinformation—will focus their accounts on related issues. Second, countermeasures that focus users’ attention on epistemic norms will affect their willingness to share misinformation.

Gimpel et al. (2021) have already applied the focus theory to the study of misinformation countermeasures. Their study found that social norms brought into focus indeed impact users’ reported behavior, and that they can be “guided in their behavior by highlighting desirable behavior and making transparent what other users are doing” (Gimpel et al., 2021: 213). A few other studies also applied epistemic norms when studying misinformation countermeasures, though usually implicitly, without an epistemic theory or conceptualization. The most prominent example is Pennycook et al.’s (2022) promotion of the “accuracy norm.”

In this study, we extend prior research to develop a framework that acknowledges the relevance of focus theory and social norms, while emphasizing the undeniable relevance of epistemic conceptualization to the study of misinformation countermeasures. Having presented the two theories that frame this study, and before moving to our method and results, we set some specific expectations based on existing studies that explored both reasoning for sharing misinformation and relevant countermeasures.

Why do people share (mis)information?

In addition to social norms, individuals pursue various goals that shape their beliefs and values. Among these goals are epistemic and accuracy goals, which compete with moral, system, status, existential, and belonging goals (Van Bavel and Pereira, 2018). Studies on misinformation have often attributed individuals’ belief systems to “partisan reasoning,” wherein people prioritize beliefs aligning with their ideology, linking social media echo chambers to the spread of misinformation driven by political motivations (Van Bavel and Pereira, 2018; Clayton et al., 2019; Enders and Smallpage, 2019; Kahne and Bowyer, 2017; Origgi, 2018; Schaffner and Roche, 2016).

However, while political reasoning is often the cause for people’s adoption of false beliefs, evidence suggests that other reasons are responsible for people’s decision to share misinformation. According to Pennycook et al.’s (2022) analysis, it is mostly lack of attention that prevents people from even evaluating veracity, and that leads them to share misinformation. So, while individuals may possess the ability to discern between true and false information, even when it contradicts their pre-existing beliefs, lack of attention leads them to intuitively decide whether to share a post without evaluating its veracity.

While additional studies explore motivations that support the idea of unintentional sharing, others address more directly the cases where sharing of misinformation could also be deliberate. Petersen et al. (2023), for example, identified the “need for chaos” tendency. In this case, epistemic norms might not play a role at all. Globig and Sharot (2024) suggest that information sharing is a value-based choice: as with other economic decisions, users weigh their options based on gain–loss potential. Appling et al. (2022) identified multiple motivations for sharing, including “sharing for humor.”

While there are many studies exploring the reasoning, most of them infer these reasons based on effectiveness of countermeasures (Pennycook et al., 2020) or analysis of the spread of the content (Globig and Sharot, 2024); studies that collect people’s testimonies are very scarce. This is understandable, as with sensitive issues, such as sharing misinformation, self-testimonies are very likely to be more biased than usual. Hence, in this study, we use the reconstructions method to mitigate the levels of self-reporting social desirability bias (Reich and Barnoy, 2020).

To understand the role that epistemic norms play in individuals’ decisions to share misinformation, we present the first research question (RQ1): Which epistemic norms-related reasoning do people use when asked about their decision to share a post that contains misinformation? While individuals may not explicitly discuss epistemic norms in interviews, our methodology focuses on identifying representations of these norms, as detailed in the “Method” section.

Countermeasures to the spread of misinformation

Understanding why people share misinformation is crucial for developing effective countermeasures. Various strategies have been proposed to combat the spread of misinformation. In a systematic review of countermeasures for vaccine misinformation, Roozenbeek et al. (2023) identified 8 categories with 14 subcategories of approaches. Some target individuals to influence their decision-making, while others are employed at the organizational level. Despite the detailed categories, the authors note that research on misinformation is still “in its infancy” (Roozenbeek et al., 2023: 189).

One category mentioned is social-norm nudges, which Roozenbeek et al. (2023) find to have “promising, albeit preliminary results” (p. 195). These countermeasures, grouped under “Nudging,” include accuracy primes explored by Pennycook et al. (2022) and Altay et al. (2022). Both approaches operate under the premise that misinformation sharing is often unintentional, stemming from a lack of attention or carelessness. By prompting individuals to consider accuracy-related social norms, such as that “Most responsible people think twice before sharing content with their friends and followers” (Andi and Akensson, 2021), they are more likely to refrain from spreading misinformation.

However, social norm nudges remain understudied (Hoes et al., 2024; Prike et al., 2024; Roozenbeek et al., 2023). Moreover, within the surveyed studies, none seem to explicitly acknowledge the role of epistemic norms. While Pennycook et al.’s (2022) accuracy norm can be easily categorized as an epistemic one, there is still a need to broaden both the theoretical and empirical scope to encompass various types of epistemic norms and assess their impact comprehensively. This research gap motivates our RQ2, which investigates the effectiveness of interventions aimed at making various epistemic norms more salient.

To explore the effectiveness of epistemic norm-based interventions, we present RQ2: Does exposing participants to stories debunking norms that permit the sharing of misinformation reduce their willingness to share a post containing misinformation? The specific designs of the survey experiments, including the format of the manipulation, are provided in Supplemental materials 5a–5c.

Method

While existing research provides a solid foundation, our study aims to address specific gaps by employing a mixed-methods approach. This article relies on two studies: The first (S1) is an exploratory qualitative study, which includes reconstruction interviews meant to identify the reasons people had for sharing misinformation. The second (S2) study included an online survey experiment (S2a) and a replication (S2b), meant to test whether normative interventions that defeat reasons identified in the first study are effective in reducing users’ willingness to share a post.

We designed the mixed-methods series of studies using the “Sequential Exploratory” strategy (Guetterman, 2015), which emphasizes the quantitative phase. First, qualitative data is collected and analyzed, and then the quantitative data collection is designed based on the results of the qualitative phase. Qualitative research is effective in uncovering unknown factors in emerging research questions, such as those related to misinformation sharing. However, quantitative methods are essential for validating causality and enabling broader generalizability.

In this study, we use a survey experiment—a design that combines the strengths of randomized experiments and population-based survey sampling, thereby improving both causal inference and external validity (e.g. Schachter and Weisshaar, 2024; Sniderman, 2018; Sniderman and Druckman, 2011). As Mullinix et al. (2015) explain, such studies “combine experiments’ causal power with the generalizability of population-based samples” (p. 109).

Study 1

This study included a set of reconstruction interviews with a sample of 25 Israeli Facebook users. As a qualitative exploratory study, the sample was not meant to achieve representativeness, but rather diversity (Jansen, 2010) (for details on the sampling process see Supplemental materials 1a). For each participant, before the interview we tracked several posts they had recently shared on social media, one of which manifestly contained misinformation. The user was first asked to recreate and report on different aspects of sharing the posts, specifically their reasons and motivations. Then we revealed to them that one of the posts clearly contained misinformation and asked them about it. A thematic analysis of their answers, which included their attempts to defend the sharing despite its violation of the epistemic norm of truth, constituted the basis for the norm-debunking stories presented to subjects in Study 2.

The primary benefit of the reconstruction method (Reich and Barnoy, 2016, 2020) over traditional interviews is that the interviewees are presented with something they produced (in our case, a post containing misinformation). This mitigates self-reporting biases and yields more reliable answers, as interviewees invest their cognitive efforts in remembering rather than reflecting (Barnoy, 2022). The thematic analysis was based on Kacen and Krumer-Nevo’s (2010) seven-stage analysis method (for full details on the analysis method see Supplemental materials, 1b).

Study 2

Experiment 2a

Following the “Sequential Exploratory” strategy (Guetterman, 2015) of mixed-methods studies, we designed six interventions based on the results of Study 1. The interventions tested whether making salient norms that debunk reasons identified in Study 1 would affect participants’ willingness to share a post containing misinformation. Participants (N = 1001, a representative sample of the Israeli population) were randomly assigned to one of the between-participant interventions or to the control group, using Qualtrics’s randomizer. Screenshots of all posts, the full texts of the interventions (stories and labels), and a visualization of the survey flow are provided in Supplemental materials 5a–5c.

The primary interventions consisted of four different stories, disguised as a literacy test, each expressing a rejection of a defense offered by an agent who shared false content. A fifth, reverse intervention normatively supported the justifications and excuses in the story. This condition was designed to test whether affirming the reasoning behind sharing misinformation, rather than challenging it, would increase participants’ willingness to share. The sixth intervention tested the “auto-pilot” theme, with a label on the post itself reading, “Facebook identifies that this post contains scientific information. Should it be shared? For additional information click here,” encouraging users to pause and think before sharing.

The survey flow began with a standard consent form, followed by the different story versions. Then, participants were asked to complete a survey on social media use and behavior. Participants were shown a post containing misinformation—a report about an alleged study claiming that moderate consumption of alcoholic drinks could protect against COVID symptoms—and were asked to share it. The report also included a fabricated academic journal, university, and email address. Participants were presented with a seemingly genuine request to share the post, alongside an incentive for those who agreed (participation in a lottery). The pretext for the sharing request was follow-up research and a need to recruit participants.

After indicating whether they agree to share the post, participants were asked to report if they noticed any misinformation in the post (“noticing misinformation”). Finally, they were told that the request to share was not genuine. It was hypothesized that the exposures would lower participants’ willingness to share unchecked information (potentially misinformation), except in the case of the reverse intervention.

At the end of the survey, a list of socio-demographic questions was presented, including political orientation, level of activity on social media, level of religiosity, and level of education as control variables (see Supplemental materials, 2a–2c for full details on the measurement and analysis).

Experiment 2b

Experiment 2b included the same interventions as Experiment 2a, with three key changes. The first change involved making the falsity harder to detect. Unlike S2a, the post did not include a fabricated academic journal, university, or email address, only the false claim about alcohol consumption. The second change was the addition of a follow-up question to assess participants’ ability to recall the content of the post. Their responses were coded to develop the “content understanding” measure.

The third change was that we introduced the Falsity factor: At the post-sharing stage, participants (N = 1543; a representative sample of the Israeli population) were randomly assigned, using Qualtrics’s randomizer, to either a misinformation condition, in which they were asked to share a post that contained misinformation, or to a true information condition, in which they were asked to share a post containing true information (yielding a 2 × 7 design). The true post included an authentic screenshot from the World Health Organization’s (WHO) web page, claiming that moderate alcohol consumption does not prevent COVID-19 symptoms; the misinformation post contained a manipulation of the same screenshot, edited to claim the opposite—that moderate consumption of alcoholic drinks does prevent COVID-19 symptoms (similar to S2a). Participants could easily find the correct information by searching online.
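As a rough illustration of the 2 × 7 layout described above, the following Python sketch enumerates the 14 cells and assigns participants by simple randomization. The condition labels, the seed, and the `assign` helper are hypothetical names chosen for this example; the study itself used Qualtrics’s randomizer, whose exact configuration is not described here.

```python
import itertools
import random

# Hypothetical labels: six interventions plus a control group (7 levels).
INTERVENTIONS = ["control", "story_1", "story_2", "story_3", "story_4",
                 "reverse", "label"]
# Falsity factor introduced in Experiment 2b (2 levels).
FALSITY = ["misinformation", "true_information"]

# All cells of the 2 x 7 between-participant design.
CELLS = list(itertools.product(INTERVENTIONS, FALSITY))


def assign(participant_ids, seed=0):
    """Assign each participant to one intervention and one falsity level.

    Simple (unrestricted) randomization is an assumption of this sketch;
    it does not guarantee equal cell sizes.
    """
    rng = random.Random(seed)
    return {
        pid: (rng.choice(INTERVENTIONS), rng.choice(FALSITY))
        for pid in participant_ids
    }


if __name__ == "__main__":
    assignment = assign(range(1543), seed=42)
    print(len(CELLS), "cells;", len(assignment), "participants assigned")
```

Because the two factors are drawn independently, each participant lands in exactly one of the 14 cells, which is what allows the intervention effect to be compared across true and false posts.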

Intervention unification in the analysis

Following the standard distinction in ethics (Austin, 1961), epistemology (Williamson, 2015), and the law (Greenawalt, 1986), between two types of defenses—justifications and excuses—these interventions were grouped into two categories in the analysis: justification intervention and excuse intervention, depending on the defense that appeared in them: Justifications are defenses in which a person denies that an action was bad, whereas excuses involve a denial of responsibility. Accordingly, justification interventions involved descriptions where the agent is described as claiming that sharing false content was not bad; excuse interventions involved descriptions where agents did not deny that sharing false content was bad, but rather denied that it was they who were responsible for the violation.

To ensure this grouping did not distort the results, we analyzed both the grouped and ungrouped variables and compared them. We found that this grouping did not significantly alter the main findings, while facilitating cross-study comparisons throughout the article. In the Supplemental materials (3c and 3d), we present the model with separate interventions, along with a comparison of effect sizes between the two models (with and without the grouping).

In both survey experiments, we report the best-fitting model, determined by likelihood ratio chi-square tests comparing the fit of the logistic regression models. Complete tables comparing the models are available in the Supplemental materials (4).
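A likelihood ratio test between two nested logistic regression models can be sketched as follows. The log-likelihood values and the difference in the number of parameters used here are hypothetical placeholders, not figures from this study, and the function names are illustrative. The chi-square tail probability is computed with the standard library only, for integer degrees of freedom.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    y = x / 2.0
    if df % 2 == 0:
        a, q = 1.0, math.exp(-y)             # closed form for df = 2
    else:
        a, q = 0.5, math.erfc(math.sqrt(y))  # closed form for df = 1
    # Recurrence Q(a + 1, y) = Q(a, y) + y**a * exp(-y) / Gamma(a + 1)
    while a < df / 2.0:
        q += y ** a * math.exp(-y) / math.gamma(a + 1)
        a += 1.0
    return q


def likelihood_ratio_test(loglik_reduced, loglik_full, df_diff):
    """Return the LR chi-square statistic and its p-value.

    loglik_reduced: log-likelihood of the model with fewer predictors
    loglik_full:    log-likelihood of the model with more predictors
    df_diff:        number of additional parameters in the full model
    """
    stat = 2.0 * (loglik_full - loglik_reduced)
    return stat, chi2_sf(stat, df_diff)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    stat, p = likelihood_ratio_test(-680.0, -672.5, df_diff=3)
    print(f"LR chi-square = {stat:.2f}, p = {p:.4f}")
```

A small p-value indicates that the added predictors improve fit beyond what chance would explain, which is the criterion for preferring the larger model.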

Results

Study 1—reasons for sharing misinformation

The analysis resulted in five common explanations people provided for sharing misinformation despite their violation of the epistemic norm of truth. Two were grouped under “excuses,” two under “justifications,” and the fifth referred to the way social media is used. Since this study is exploratory, we only include here a summary of the findings, and we expand on it in the Supplemental materials (1b and 1c).

“Excuses” included two repeating types of explanations people used, which were meant to relieve themselves of responsibility for the act of sharing: (1) “I am not a journalist” and (2) “reliable sources.” The former is a claim that, unlike journalists, a regular user does not have the responsibility to verify information before sharing it. The latter is a claim that since the author of the post they shared is perceived as reliable (based on past acquaintance or reputation), there was no reason to doubt the veracity of the content.

“Justifications” included two types of explanations that instead of relieving users from responsibility for sharing, suggested that sharing the post is justified, despite it containing misinformation. One type of justification is that the shared post, despite containing misinformation causes (3) “no harm”; the second type asserts that the content, despite containing falsehoods, represents a (4) “greater truth”—for instance, while it may be true that a certain statement was not uttered by a particular politician, the statement still represents the politician’s actual views.

The final theme identified concerns the way in which social media is used. The claim was that sharing is often an incidental act, decided on a sort of (5) “auto-pilot” mode, as the platform’s structure does not encourage users to stop and think before sharing. Instead, the form and format of the app or website often encourage immediate sharing. For full details on frequencies, quotes, and additional insights, see Supplemental materials 1b and 1c.

Study 2—exposure intervention associated with the willingness to share information

Experiment 2a

Participants identified themselves in political and religious terms, with the highest frequency in non-religious (N = 408; 40.76%), and right-wing oriented (N = 363, 36.26%). Most of them (75.82%) reported their activity level on social media as negligible to moderate (see Supplemental materials 2a for descriptive statistics of all control variables).

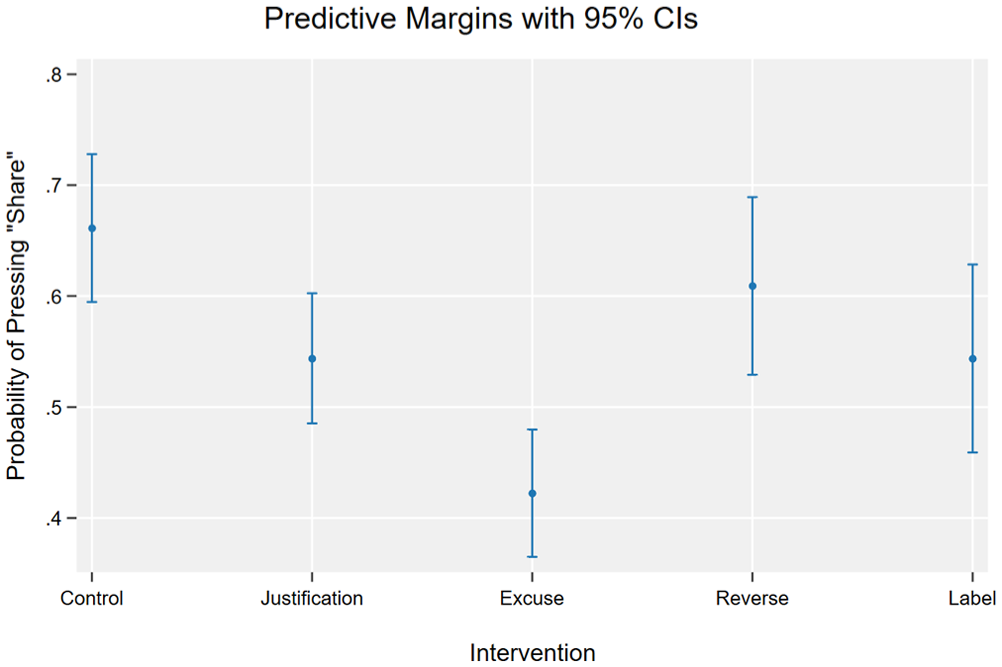

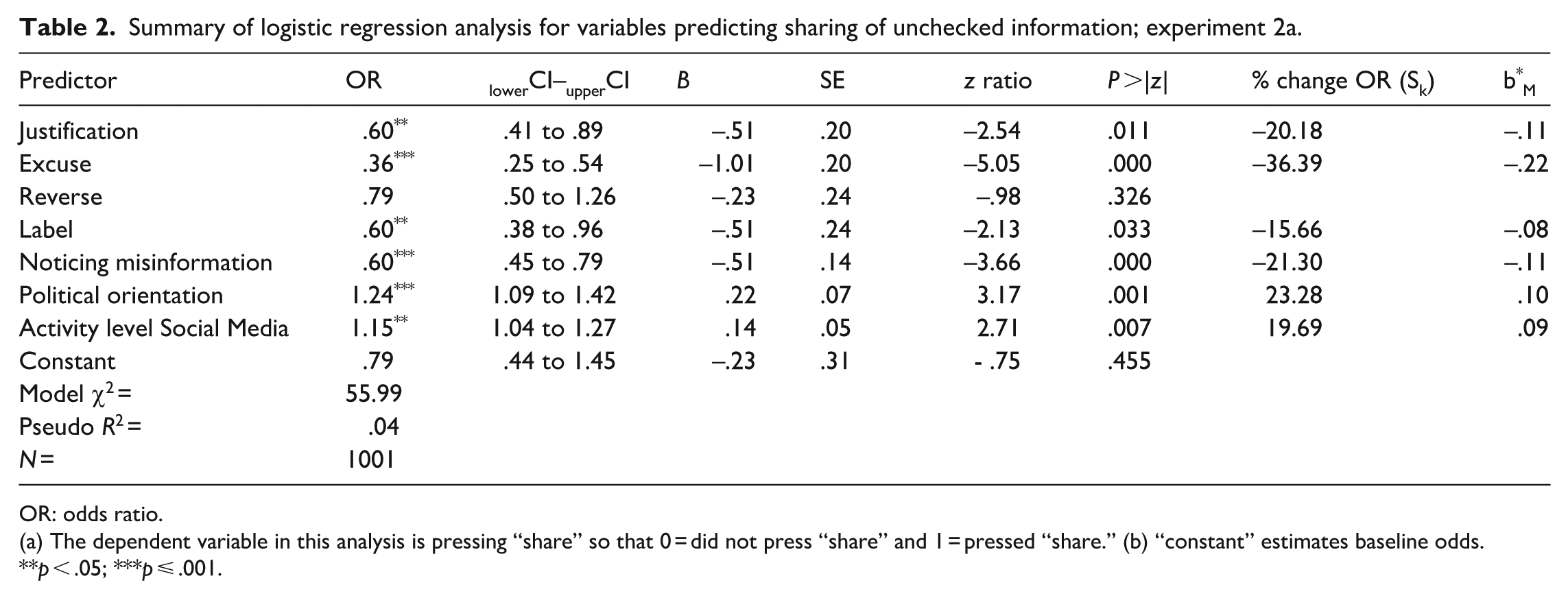

Main measure (willingness to share): In total, 54.15% of participants (N = 542) were willing to share the post. In line with our hypothesis, three of the four exposure interventions—excuse, justification, and label—demonstrated a protective effect compared with the control group (no intervention) when examining the association with willingness to share. The probability of pressing “share” was lowest in the excuse condition (odds ratio [OR]: .36, confidence interval [CI] = [.25–.54], p < .001), followed by the justification condition (OR: .60, CI = [.41–.89], p = .011) and the label condition (OR: .60, CI = [.38–.96], p = .033); see Figure 1 and Table 2. By contrast, the reverse intervention did not differ significantly from the control group (OR: .79, CI = [.50–1.26], p = .326).

Figure 1. Predicted probabilities of willingness to share unchecked information under each intervention.

Table 2. Summary of logistic regression analysis for variables predicting sharing of unchecked information; Experiment 2a.

OR: odds ratio.

(a) The dependent variable in this analysis is pressing “share,” where 0 = did not press “share” and 1 = pressed “share.” (b) “constant” estimates the baseline odds.

*p < .05; ***p ⩽ .001.

Most participants reported that they did not notice any misinformation in the post (N = 680; 67.93%). Almost half (46.11%; N = 148) of the participants who reported noticing misinformation in the post were willing to share it.

Alongside the experimental intervention, two other measures were found to be associated with a higher probability of willingness to share: Political orientation (OR: 1.24, CI = [1.09–1.42], p = .001) and Activity level on social media (OR: 1.15, CI = [1.04–1.27], p = .007). Noticing misinformation in the post was also found to lower the probability of sharing (OR: .60, CI = [.45–.79], p < .001). The effect size of each predictor is represented in two columns in Table 2: (a) the percentage change in the odds per standard deviation unit increase on the predictor and (b) Menard’s (1995) approach, representing the predicted change in logits in standard deviation units per standard deviation unit increase on each predictor (b*M). Both allow us to assess the relative influence of each predictor on the outcome.
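The two effect-size measures can be sketched as follows. This is an illustration under stated assumptions, not the authors' computation: it uses the commonly cited form of Menard's standardized logistic coefficient, b*M = b · s_x · R / s_logit(Ŷ), and every numeric input other than the reported odds ratio (the predictor's standard deviation, R, and the standard deviation of the predicted logits) is hypothetical. The function names are also hypothetical.

```python
import math

def pct_change_in_odds_per_sd(b, sd_x):
    """Percentage change in the odds for a one-SD increase in the predictor."""
    return 100.0 * (math.exp(b * sd_x) - 1.0)

def menard_standardized(b, sd_x, r, sd_logit_pred):
    """Menard-style standardized coefficient, assuming b* = b * s_x * R / s_logit(Y-hat).

    b             : unstandardized logistic coefficient (log odds ratio)
    sd_x          : standard deviation of the predictor
    r             : correlation between observed outcome and predicted probability
    sd_logit_pred : standard deviation of the predicted logits
    """
    return b * sd_x * r / sd_logit_pred

# Reported OR for Activity level on social media (Experiment 2a)
b_activity = math.log(1.15)

# Hypothetical values, for illustration only (not taken from the article)
sd_activity = 1.30
r_model = 0.25
sd_logit = 0.55

pct = pct_change_in_odds_per_sd(b_activity, sd_activity)
b_star = menard_standardized(b_activity, sd_activity, r_model, sd_logit)
print(f"% change in odds per SD: {pct:.1f}")
print(f"Menard-style b*M: {b_star:.3f}")
```

Because both measures rescale each coefficient by the spread of its predictor, they put predictors measured on different scales on a comparable footing, which is what allows their relative influence to be compared.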

Experiment 2b

Participants identified themselves in political and religious terms; the most frequent categories were non-religious (N = 572; 37.07%) and right-wing oriented (N = 604; 39.14%). Most of them (78.67%) reported their activity level on social media as negligible to moderate (see Supplemental materials 3a and 3b). Most participants reported that they did not notice any misinformation in the post (N = 1143; 74.08%).

Main measure (willingness to share)

In total, 55.67% of participants (N = 859) were willing to share the post. Interestingly, almost half of participants who reported they noticed misinformation in the post pressed “share” nonetheless (43%; N = 172). The coding of participants’ answers regarding content understanding revealed that most did not fully understand the content they were asked to share (zero understanding, n = 588; 38.11%; partial understanding, n = 626; 40.57%) with only 21.32% of them fully understanding it.

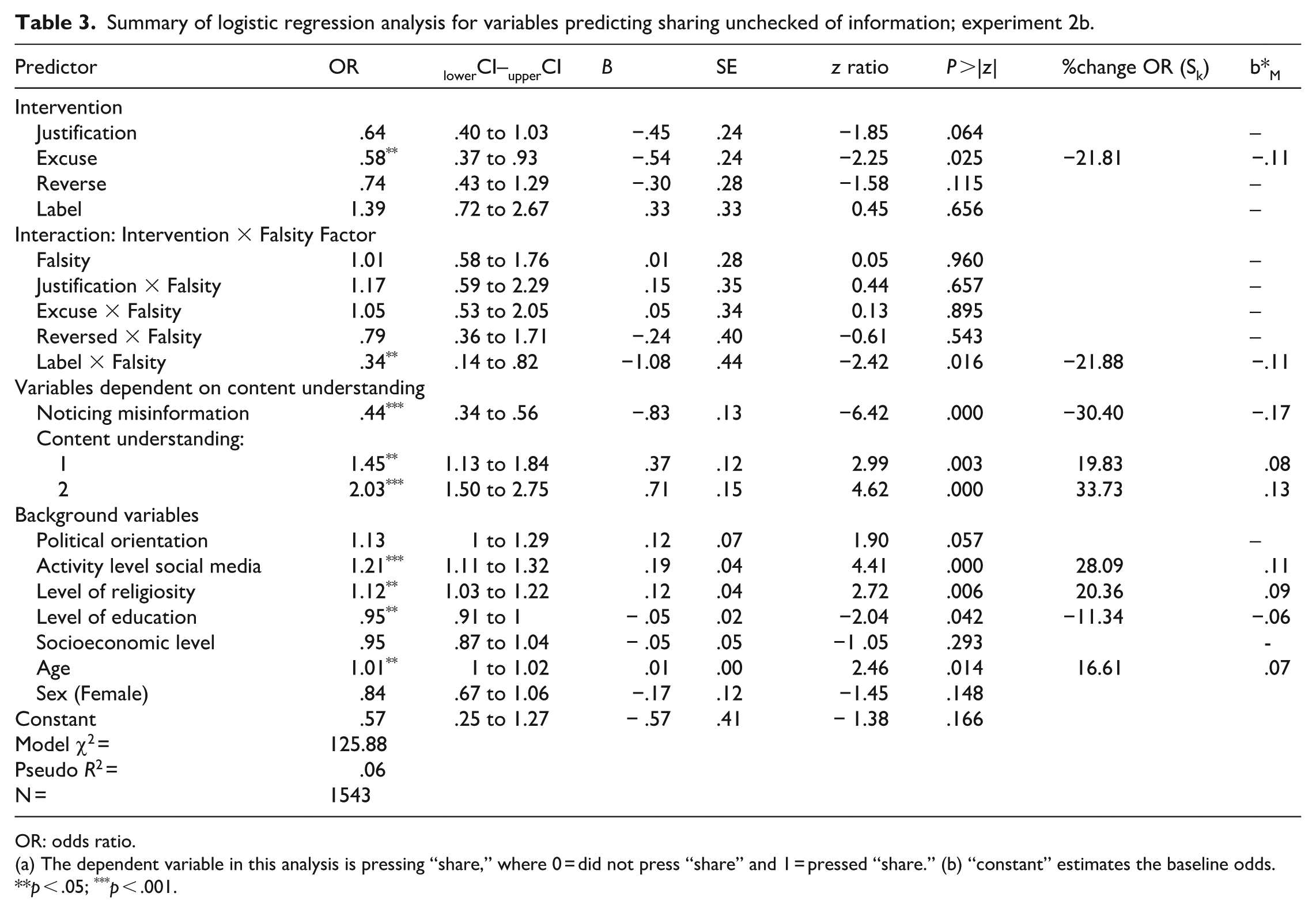

As in Experiment 2a, we found a protective effect of exposure to Excuse: when participants were asked to share unchecked information (either true or false), the probability of pressing “share” was lower in the Excuse condition than in the control group (OR: .58, CI = [.37–.93], p = .025). Exposure to the Justification, Reverse, and Label interventions did not yield any difference from the control group (see Table 3). Here, possible interactions between the Falsity factor and each of the interventions were also tested.

Table 3. Summary of logistic regression analysis for variables predicting sharing of unchecked information; Experiment 2b.

OR: odds ratio.

(a) The dependent variable in this analysis is pressing “share,” where 0 = did not press “share” and 1 = pressed “share.” (b) “constant” estimates the baseline odds.

*p < .05; ***p < .001.

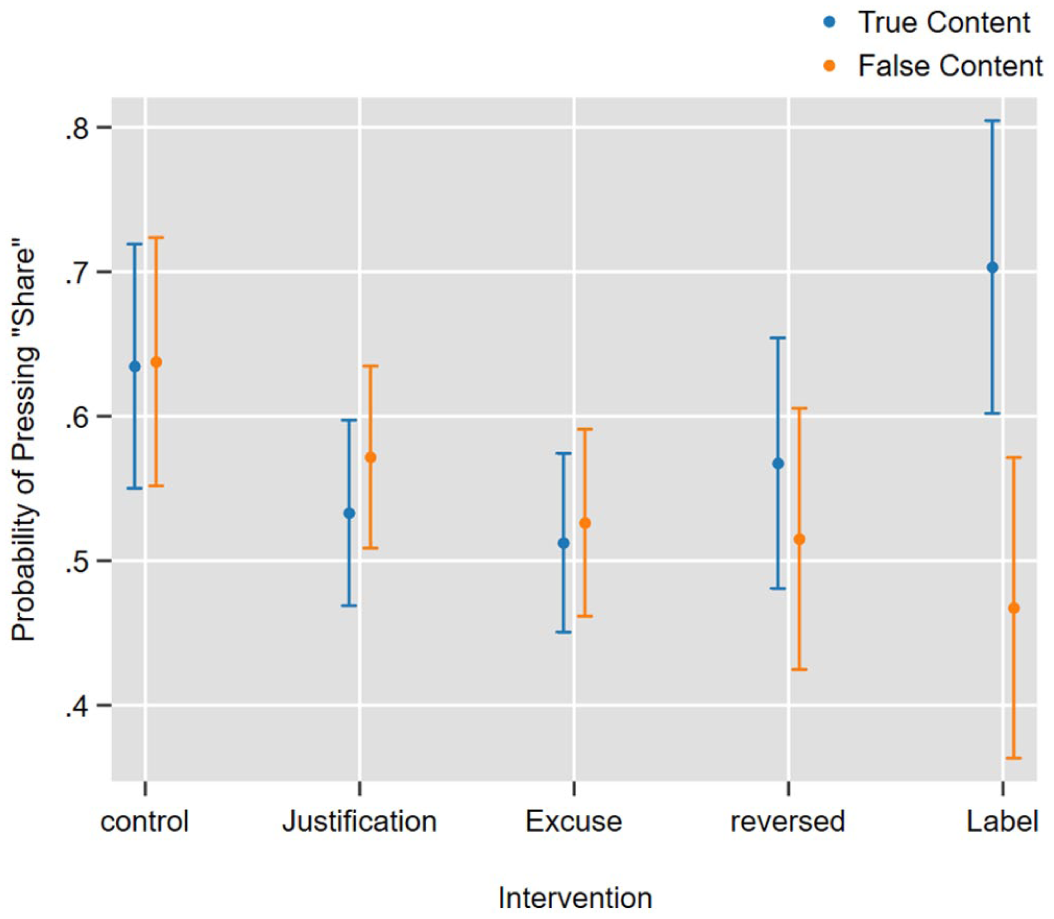

The only intervention found to significantly interact with the Falsity factor (namely, to reduce the willingness to share misinformation, but not true information) was exposure to Label (OR: .34, CI = [.14–.82], p = .016). Sharing of true information after exposure to Label was slightly higher than in the control group, whereas sharing of false information was significantly lower than in the control group (see Table 3 and Figure 2).
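In a logistic model with an interaction term, the interaction odds ratio acts as a multiplier on the intervention's odds ratio when the post is false. The sketch below illustrates that arithmetic. It assumes dummy coding with the control group and true posts as reference categories, which the text does not state explicitly. The interaction OR of .34 is the reported value, while the Label main-effect OR of 1.20 is a hypothetical placeholder (the text only says sharing of true information was "slightly higher" than in the control group).

```python
import math

def conditional_odds_ratios(or_main, or_interaction):
    """Odds ratio of an intervention vs. control, separately for true and false posts.

    Assumes logit = b0 + b_main*Intervention + b_false*False + b_int*Intervention*False,
    with true posts coded 0 and false posts coded 1.
    """
    b_main = math.log(or_main)
    b_int = math.log(or_interaction)
    return {
        "true_post": math.exp(b_main),           # effect when False = 0
        "false_post": math.exp(b_main + b_int),  # effect when False = 1
    }

OR_INTERACTION = 0.34  # reported Label x Falsity interaction
OR_LABEL_MAIN = 1.20   # hypothetical main effect, for illustration only

effects = conditional_odds_ratios(OR_LABEL_MAIN, OR_INTERACTION)
for condition, value in effects.items():
    print(f"Label vs. control, {condition}: OR = {value:.2f}")
```

With any main-effect OR close to 1, the product for false posts falls well below 1, which matches the described pattern of roughly unchanged sharing of true information and reduced sharing of false information.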

Figure 2. Predicted probabilities of willingness to share unchecked information given each intervention and the Falsity factor.

Noticing misinformation in the post was also found to lower the probability of sharing (OR: .44, CI = [.34–.56], p < .001). Surprisingly, Content Understanding did not have a protective effect; instead, it was associated with a higher probability of sharing unchecked information. The odds of sharing among participants who fully understood the content were about twice those of participants who did not understand it at all (OR: 2.03, CI = [1.50–2.75], p < .001). Adding possible interactions between Content Understanding and the exposure interventions to the model yielded no significant effects.
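An odds ratio of 2.03 doubles the odds of sharing, which is not the same as doubling the probability. The sketch below shows how the implied change in probability depends on the starting point. The baseline probabilities are hypothetical and chosen only for illustration, and the helper name `apply_odds_ratio` is an assumption.

```python
def apply_odds_ratio(baseline_prob, odds_ratio):
    """Return the probability implied by multiplying the baseline odds by an odds ratio."""
    odds = baseline_prob / (1.0 - baseline_prob)
    new_odds = odds * odds_ratio
    return new_odds / (1.0 + new_odds)

OR_FULL_UNDERSTANDING = 2.03  # reported in Experiment 2b

# Hypothetical baseline sharing probabilities for participants with no understanding
for baseline in (0.20, 0.50, 0.70):
    implied = apply_odds_ratio(baseline, OR_FULL_UNDERSTANDING)
    print(f"baseline {baseline:.2f} -> implied {implied:.2f}")
```

The closer the baseline probability is to 1, the smaller the absolute increase that the same odds ratio produces.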

Alongside the experimental factors, Activity level on social media was again associated with a higher probability of sharing unchecked information (OR: 1.21, CI = [1.11–1.32], p < .001). Level of religiosity was also associated with a higher probability of sharing unchecked information (see Table 3).

Replication of results

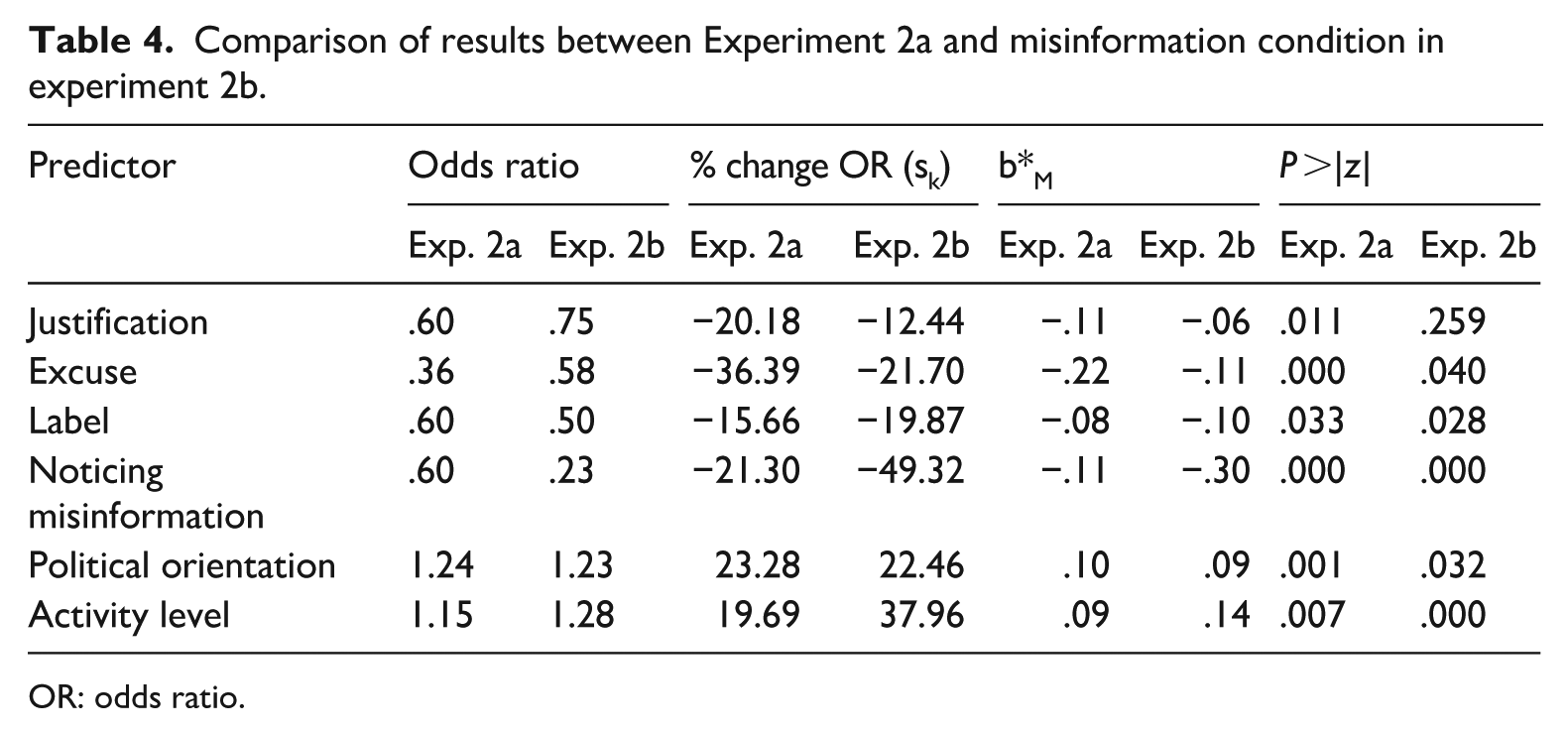

Table 4 presents a comparative analysis of the results from Experiment 2a and the misinformation condition in Experiment 2b, aiming to evaluate the replication of the primary findings. This assessment is based on both statistical significance and effect sizes, as outlined in Table 4, and provides insight into the consistency and robustness of the observed effects across the two survey experiments.

Table 4. Comparison of results between Experiment 2a and the misinformation condition in Experiment 2b.

OR: odds ratio.

When examining the misinformation conditions separately to establish replicability between Experiments 2b and 2a, statistical analysis reveals significant results. While the initial model in Experiment 2a included 4 predictors and the subsequent model in Experiment 2b expanded to 10 predictors (as determined by likelihood ratio chi-square tests comparing logistic regression model fit), the results demonstrate substantial convergence. With the exception of the Justification predictor, statistical significance (p < .05) was observed across predictors, confirming a robust replication of the original results. The directional trends of the predictors remained consistent, with most effect sizes showing similar patterns of influence on the likelihood of pressing Share.

Discussion

This study provides new evidence supporting the hypothesis linking epistemic norms of sharing and the spread of online misinformation (Record and Miller, 2022a). The study employed two separate methods to fulfill two separate purposes. The first was an exploratory qualitative set of reconstructions with users who shared misinformation, designed to identify their accounts of their actions while focusing on the part that epistemic norms play in these accounts. The qualitative approach allowed us to identify specific, previously unknown norm-related accounts.

Based on the first study, we were able to answer RQ1 by identifying five explanations that people presented when asked about their decision to share misinformation. Two of them were grouped under “excuses,” as they relieve the users of responsibility but do not try to justify the mere publication of the misinformation: (1) “I am not a journalist” and (2) “reliable sources.” By contrast, we considered another two to be “justifications,” as they claim that even the resulting spread of misinformation might not be problematic, since it (3) causes “no harm” or because it represents a (4) “greater truth.” The final theme, (5) “auto-pilot,” refers to the incidental nature of sharing and to the app’s design and structure, which according to interviewees encourage immediate sharing without any checks.

While these themes may suggest a decision-making mechanism, they do not test the effectiveness of specific countermeasures. Thus, to answer RQ2, we built a series of interventions based on these themes, which were tested in two survey experiments, yielding a fairly consistent result. Two types of interventions had no significant effect on users’ willingness to share a post containing misinformation: the intervention meant to demotivate them by debunking the “justifications” for sharing (in line with themes 3 and 4), and a reverse intervention, meant to test if users’ willingness to share the post will increase by supporting the justifications or excuses. The intervention designed to demotivate users’ willingness to share by debunking the “excuses” proved effective. However, by including both true and false posts in the second experiment, we discovered that the intervention did not create a distinction in the sharing behavior of false versus true information, leading participants to share less overall, regardless of the content’s veracity. The only intervention that proved efficient in discerning true and false information and reducing the willingness to share the latter was the “label.” The label was designed to counter the problem mentioned in theme 5, by introducing an epistemic norm according to which users should pause and think before sharing.

Two other notable findings concern users’ ability to understand and identify misinformation. Almost half of the participants in both experiments who reported noticing misinformation in the post were still willing to share it. When testing whether participants understood the post’s content, we found that the majority of them decided on whether to share or not even though they did not fully understand what they were asked to share.

Implications and conclusions

We find that focus theory can help explain why only one of the interventions interacted significantly with the Falsity factor. Focus theory posits that social norms shape behavior more effectively when they are salient at the moment of decision-making (Cialdini et al., 1991). While all interventions in our experiment were designed to foreground specific epistemic considerations (e.g. truthfulness or source reliability), most of them were embedded earlier in the survey and followed by a series of intervening questions before the sharing task.

By contrast, the “label” intervention was placed directly on the post and prompted users to consider its scientific content while deciding on whether to share it. This immediacy likely made the relevant epistemic norm more salient at the critical moment, in line with the expectations of focus theory. The result also aligns with the distraction hypothesis, which suggests that even minimal disruptions that slow users down can help reduce the spread of misinformation (Pennycook et al., 2022). Building on these insights, we introduce temporal proximity as a refinement to the focus theory model in digital environments: for a normative prompt to be effective, it must not only address a relevant epistemic norm but also appear as close as possible to the point of decision. In high-speed, attention-fragmented contexts like social media, salience depends not just on content but on timing.

The other interventions, by contrast, put users, in a more general and implicit way, into a mindset of being careful when sharing information online. Further studies are needed to determine if this is indeed the case, yet our holistic view of both the qualitative and quantitative data leads us to adopt this as the most likely explanation. Another option is that the label represents a normative intervention that acts in conjunction with other psychological mechanisms. If this is the case, then normative-psychological measures could prove to be the most adequate solution, not only increasing overall epistemic vigilance but also triggering a need to verify among some users.

Another theoretical contribution of this study is to the broader discussion of the relationship between misinformation and epistemic norms. While previous studies emphasized norms that are clearly epistemic, such as accuracy (Pennycook et al., 2022), an explicit discussion about the distinct role of epistemic norms is still needed. Furthermore, the findings raise doubt about whether the problem is the destabilization of the norms in online environments (Record and Miller, 2022a). The different types of effects that similar interventions had might suggest it is a more profound disagreement about these injunctive norms.

As revealed in the second experiment, some interventions reduced the willingness to share posts, regardless of whether they contained misinformation. This finding exposes an ethical dilemma that was hardly discussed in previous studies (Kozyreva et al., 2023) but is in line with Hoes et al.’s (2024) recent findings. While in some cases, being deterred from sharing due to fear of backlash (Brian et al., 2023) can be beneficial, reducing the sharing of true information along with misinformation might be counterproductive from a democratic perspective. These interventions could be misused as soft measures to limit public discourse and can undo some positive social developments that social media triggered, such as broadening public political engagement.

On the other hand, the post at hand includes scientific information, determined by expertise, and it is controversial to begin with, as it originates from the WHO’s myth-busting section (meaning such a myth exists). From an epistemic perspective, reduced willingness to share content based on limited evidence may represent a form of desired epistemic vigilance or humility, in which case the overall reduction in the spread of misinformation would justify these “side effects.” As Gimpel et al. (2021) argue, even in regular news the main problem is the oversharing of misinformation, let alone in expert-related content. Therefore, in a similar discussion, they concluded that the “Overall effect of improving reporting by a combination of different SN messages is beneficial” (p. 213), even if it reduces the sharing of true information.

The results also point to an important empirical lesson for future studies. Many previous studies testing countermeasures against online misinformation did not include a control to test whether the interventions also reduced the willingness to share true information.

How can our interventions be applied in practice? Of all the interventions, the implementation of Label is the most straightforward. Some research indicates that warning labels effectively reduce false beliefs and misinformation sharing (Martel and Rand, 2024). However, difficult normative questions arise about who (or what) decides which posts to label and according to what moral and epistemic standards. Questions also arise regarding the reliability of the posts’ detection methods (Ling et al., 2023). Future research is needed about the effectiveness of different label designs as well as their efficiency over time. The other interventions can be implemented as community norms, enforceable either by community members expressing disapproval of their violation or by community officials imposing sanctions on violators. In Wikipedia and some Reddit communities, for example, this approach has proven effective (Bruckman, 2022; Reagle, 2010).

The findings also align with certain social epistemologists’ conceptualizations of epistemic rationality and knowledge as social rather than individual (Longino, 2002; Miller, 2021, 2024b; Miller and Pinto, 2022; Solomon, 2001). For these scholars, “[epistemic] normativity, if it is possible at all, must be imposed on social processes and interactions, that is, that the rules or norms of justification that distinguish knowledge (or justified hypothesis-acceptance) from opinion must operate at the level of social as opposed to individual cognitive processes” (Longino, 1992: 201).

This social view also accords with our finding that about half of the participants who identified the misinformation shared the post nonetheless.

Finally, we also suggest future studies explore the epistemic norms governing online conversation in other places, as some epistemic norms are culturally variable (Gerken, 2014). An international study with comparative samples could expose whether these cultural normative differences remain relevant in online environments. In addition, as norms are likely to evolve over time, a longitudinal study might also yield important insights.

Supplemental Material

sj-docx-1-nms-10.1177_14614448251385917 – Supplemental material for The role of epistemic norms in mitigating the spread of misinformation

Supplemental material, sj-docx-1-nms-10.1177_14614448251385917 for The role of epistemic norms in mitigating the spread of misinformation by Aviv Barnoy, Shirel Bakbani-Elkayam, Boaz Miller and Arnon Keren in New Media & Society

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Israel Science Foundation, Grant Number 984/23.

Supplemental material

Supplemental material for this article is available online.
