Abstract

Background:

Accurate diagnosis and spatial characterization of craniofacial fractures is critical for treatment planning and precise fracture reduction. Since augmented reality (AR) has potential for better diagnostic evaluation than traditional three-dimensional (3D) reformats, we tested whether our accessible mobile-based AR model improves diagnostic accuracy and spatial understanding and decreases task cognitive load when clinicians evaluate facial fractures.

Methods:

Clinicians (n = 30) in specialties managing craniofacial trauma assessed a database of mandibular and maxillofacial complex fractures of varying severity using computed-tomography slices supplemented with either traditional 3D reformats (control) or the AR model (experimental), completed diagnostic and spatial characterization tasks, and were evaluated quantitatively and qualitatively on diagnostic accuracy, task cognitive load, and weighted preference for the traditional versus AR model.

Results:

Most clinicians (83%) preferred the AR model overall. Control and experimental groups had equivalent diagnostic sensitivity and specificity. Less experienced clinicians found the AR model required less effort, was less frustrating, and was preferred for fracture displacement characterization. The AR model had no significant impact on more experienced clinicians. All clinicians found the AR model allowed more intuitive manipulation of the 3D object. Those with less experience preferred the AR model over traditional imaging for diagnostic and educational purposes, whereas more experienced clinicians found that the AR model did not significantly alter their established approach to fracture evaluation.

Conclusions:

Our mobile-based AR model may be preferable to traditional 3D reformats for spatial assessment tasks and for decreasing task cognitive load, most notably for less experienced clinicians, for whom perioperative practices are less established.

Introduction

Craniofacial trauma continues to be a significant cause of morbidity and mortality worldwide. In the latest global review of facial fractures from 2017, there were over 7.5 million new facial fractures globally and 117,402 years of life lost due to disability, translating to approximately 6.5% loss of normal health status for each affected individual. 1 Accurate diagnosis and classification of facial fractures is essential for proper management. However, in complex craniofacial trauma with multiple comminuted fractures and overlapping fracture patterns, diagnoses can be difficult even for an experienced radiologist or surgeon. Missing concomitant fractures or inaccurate spatial assessment may lead to inadequate surgical exposures or poor fracture reduction. This can subsequently lead to devastating functional and esthetic complications.2,3

The current standard imaging modality for managing traumatic craniofacial fractures is computed tomography (CT), which provides isotropic submillimeter resolution and continues to improve in both resolution and accessibility. Many institutions supplement the two-dimensional (2D) images with some form of volume or surface rendering for better 3D visualization. 4 However, conventional 3D renderings are generally static and non-immersive, lacking the characteristics of the real-world captured environment that allow for true spatial engagement of an operator with a 3D object. 5 Some groups are bypassing these limitations via 3D printing, creating physical models for surgical simulation and pre-operative planning. 6 3D printing has proved valuable in craniofacial surgery, especially in dental implant surgery and mandibular reconstruction. 7 However, limitations of 3D printing such as equipment costs, resolution loss, accessibility, environmental considerations, and turnaround time remain prohibitive for widespread use by most surgeons.7,8

Augmented reality (AR) has the potential to provide immersive interaction with a 3D object without the limitations of 3D printing. 7 Current applications include improving understanding of a diagnosis, virtual planning, and virtual practice simulations. 9 Compared to traditional CT scans and static 3D renderings, AR allows for better visualization and dynamic interactions with 3D data. 10 If applied to complex craniofacial trauma, for which 3D displacement of fracture fragments (impaction, rotation, etc.) is particularly difficult yet important to assess, AR has the potential to significantly improve pre-operative planning, and in turn, post-operative outcomes.

The scant literature on the application of AR to traumatic facial fractures led us to develop a relevant, novel, and accessible AR model. Preliminary studies verified the accuracy and precision of our AR model using our software application, Radiology with Holographic Augmentation (RadHA), which permits real-time AR projection of CT, MRI, and ultrasound DICOM and 3D files for pre-operative planning and intra-operative guidance using the Microsoft HoloLens platform. 11 We further refined our AR system for pre-operative planning and equipped it with measurement tools comparable to current standard picture archiving and communication systems (PACS). 12 In the present study, we adapted that system into an accessible AR model utilizing the Merge Cube interface and directly assessed its clinical relevance to craniofacial trauma by testing the model’s impact on diagnostic accuracy, spatial assessment, and user cognitive load for clinicians caring for patients with traumatic craniofacial fractures.

Methods

Fracture Database: A database of traumatic craniofacial fractures was compiled using de-identified historical CT scans obtained from Zuckerberg San Francisco General Hospital. The database consisted of a comprehensive variety of clinically relevant craniofacial fractures including naso-orbito-ethmoid complex, zygomatico-maxillary complex, Le Fort I/II/III, and various mandible fractures. All scans contained multiple contiguous 0.625 mm axial images obtained through the head, including the entire face, from the vertex to the base of the skull. The images were stored as DICOM files. The UCSF Center for Advanced 3D+ Technologies (CA3D+) converted the DICOM files using voxel rendering into 3D models (.obj files) for use with our AR model.
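The .obj format named above is a plain-text list of vertices and faces. The conversion pipeline itself is not described here, so the following is only a minimal sketch of the final serialization step, assuming a triangle mesh has already been extracted from the CT volume. The function name `mesh_to_obj` and the example triangle are hypothetical and are not part of the study software.

```python
from typing import Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]  # zero-based indices into the vertex list


def mesh_to_obj(vertices: Sequence[Vertex], faces: Sequence[Face]) -> str:
    """Serialize a triangle mesh as Wavefront OBJ text."""
    lines = []
    for x, y, z in vertices:
        # One "v" record per vertex, coordinates in millimeters (assumed unit).
        lines.append(f"v {x:.3f} {y:.3f} {z:.3f}")
    for a, b, c in faces:
        # OBJ face indices are 1-based, so shift each index by one.
        lines.append(f"f {a + 1} {b + 1} {c + 1}")
    return "\n".join(lines) + "\n"


# Hypothetical single triangle whose edges match the 0.625 mm slice spacing.
triangle_obj = mesh_to_obj(
    [(0.0, 0.0, 0.0), (0.625, 0.0, 0.0), (0.0, 0.625, 0.0)],
    [(0, 1, 2)],
)
```

A real mesh derived from a facial CT would contain many thousands of such records, but the file layout is the same.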

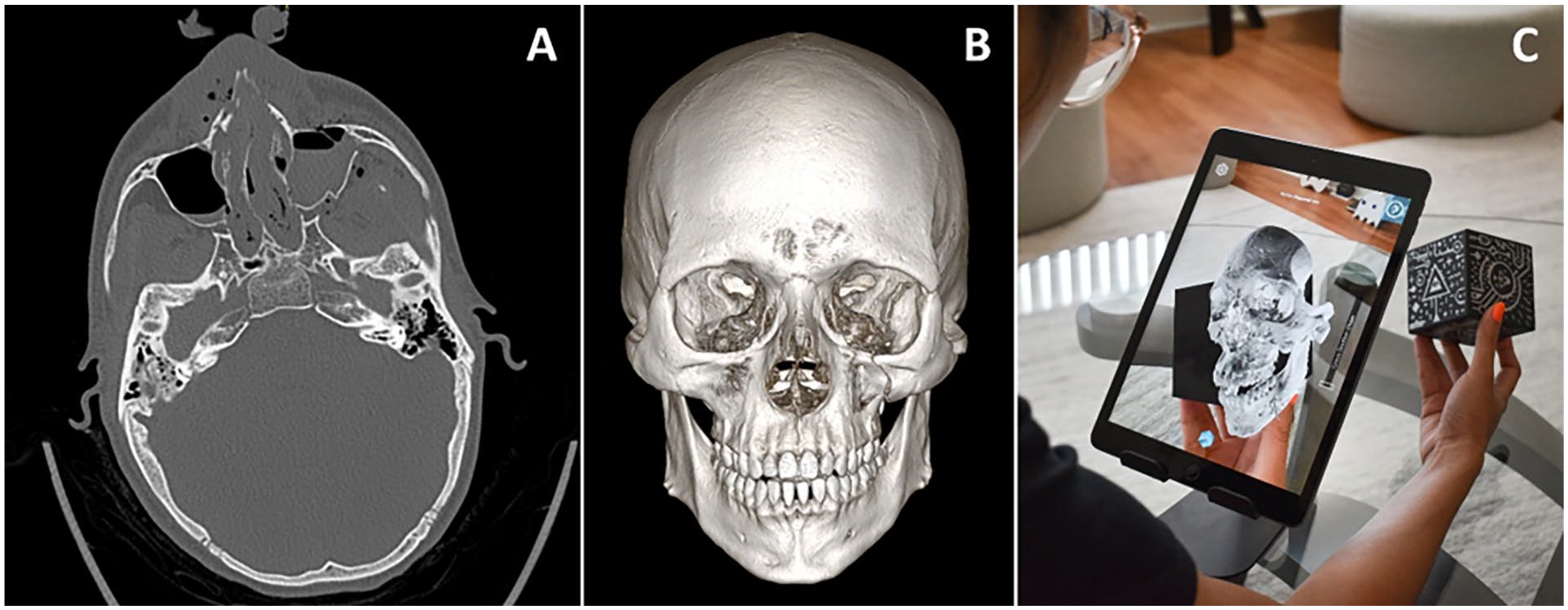

Interface: Conventional CT scan 2D images and standard 3D volume renderings were viewed on RadiAnt DICOM Viewer (Version 2021.2.2, Medixant, Jan 19, 2022) using a standard desktop monitor, keyboard, and mouse setup (Figure 1). The AR model was viewed using the Merge Cube platform and an iOS tablet as the viewing interface (Figure 1). The virtual 3D model was displayed on the tablet screen as an interactive projection of the physical Merge Cube through the tablet’s camera. The viewing software developed specifically for this research project was adapted from the RadHA software used in our preliminary studies. Most AR models are inaccessible to general users because they require a dedicated interface like Microsoft HoloLens. We introduced a more accessible viewing interface through our software, which allows users to interact with AR models through any phone or tablet when paired with a low-cost Merge Cube. The Merge Cube retailed at 29.99 USD at the time of writing. The software was developed internally and therefore had no retail cost. A video demonstrating the AR interface is included in supplemental files.

Figure 1. Interfaces. (A) Conventional CT scan 2D axial image. (B) Standard 3D volume rendering on RadiAnt DICOM Viewer. (C) Augmented reality interface with surface rendering using the Merge Cube platform paired with software developed for this research study.

Test Subjects: Test subjects included residents and faculty in plastic surgery, otolaryngology, and oral maxillofacial surgery involved in the care of traumatic craniofacial fractures. All residents and faculty within the appropriate UCSF departments were eligible for recruitment into the study. Subjects provided written informed consent to participate (UCSF IRB 20-32917).

Testing Diagnostic Accuracy: Each subject analyzed 6 scans from the fracture database. In randomized order of difficulty and user interface, 3 scans were analyzed using standard 3D rendering and 3 scans were analyzed using the AR model. For all scans, 2D CT slices were provided. Using the provided interface, the subjects were tasked with identifying all individual fractures and fracture complexes. A comprehensive standardized list of all potential fractures and patterns was provided to all subjects for language uniformity. Variables measured were the number of correct diagnoses, number of incorrect diagnoses, number of false positives and negatives, and time to task completion. Correct diagnoses were based on reads from an attending neuroradiologist. Stratified parametric statistical analysis (Student’s t-test) was used to compare accuracy of diagnoses between AR and traditional views.
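The protocol above can be illustrated with a short sketch that randomizes the 3/3 interface assignment and scores one subject's read against the reference read. The exact randomization and scoring procedures used in the study are not specified, so everything below is an assumption: the function names, the fracture labels, and the rule that every item on the standardized list counts as either present or absent in the reference read.

```python
import random
from typing import Dict, List, Set, Tuple


def assign_interfaces(scan_ids: List[str], seed: int) -> List[Tuple[str, str]]:
    """Shuffle scan order and split scans evenly between the two interfaces."""
    rng = random.Random(seed)
    scans = list(scan_ids)
    half = len(scans) // 2
    interfaces = ["standard_3d"] * half + ["ar"] * (len(scans) - half)
    rng.shuffle(scans)       # random presentation order
    rng.shuffle(interfaces)  # random interface for each position
    return list(zip(scans, interfaces))


def score_read(reported: Set[str], reference: Set[str],
               checklist: Set[str]) -> Dict[str, float]:
    """Score one read; assumes reported and reference are subsets of checklist."""
    tp = len(reported & reference)               # correctly identified
    fp = len(reported - reference)               # reported but absent
    fn = len(reference - reported)               # present but missed
    tn = len(checklist - reported - reference)   # correctly not reported
    return {
        "true_positives": tp,
        "false_positives": fp,
        "false_negatives": fn,
        "true_negatives": tn,
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
    }


# Hypothetical scan identifiers and fracture labels, for illustration only.
plan = assign_interfaces(["s1", "s2", "s3", "s4", "s5", "s6"], seed=0)
checklist = {"nasal", "zmc_left", "le_fort_i", "mandible_body", "mandible_angle"}
result = score_read(
    reported={"zmc_left", "nasal"},
    reference={"zmc_left", "mandible_body"},
    checklist=checklist,
)
```

In this made-up read, one fracture is found, one is missed, and one is reported in error, giving a sensitivity of 1/2 and a specificity of 2/3.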

Testing User Cognitive Load: After each scan, subjects participated in a NASA task load index (TLX) survey to assess subjective mental workload. NASA TLX is a standardized tool designed to quantify mental demand, temporal demand, effort, and frustration level. 13 Additional TLX questions using the NASA TLX format were used to assess weighted preference for either the standard 3D or AR interface compared to 2D slices when performing individual fracture diagnosis, fracture complex diagnosis, or spatial characterization of the fractures. Survey data were stratified or weighted by clinical experience (≤2 years vs ≥3 years). The levels for stratification were based on preliminary survey results that demonstrated a significant difference in clinician approach to AR before and after 2 years of clinical experience. A parametric statistic (Student’s t-test) was used to compare differences in cognitive load between AR and traditional views.
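The stratified comparison described above can be sketched as follows. This is an illustration under stated assumptions, not the study's analysis code: it assumes an independent two-sample Student's t-test with pooled variance (the text does not say whether the test was paired), and the record layout, helper names, and scores are all hypothetical. It returns only the t statistic and degrees of freedom; obtaining a P value additionally requires a t distribution, for example via `scipy.stats.ttest_ind`.

```python
from math import sqrt
from statistics import mean, variance
from typing import Dict, List, Tuple


def stratum(years: int) -> str:
    """Map years of clinical experience onto the two strata used in the study."""
    return "<=2" if years <= 2 else ">=3"


def scores(records: List[Dict], group: str, interface: str, subscale: str) -> List[float]:
    """Collect one TLX subscale for one experience stratum and one interface."""
    return [r[subscale] for r in records
            if stratum(r["years"]) == group and r["interface"] == interface]


def student_t(a: List[float], b: List[float]) -> Tuple[float, int]:
    """Two-sample Student's t statistic (pooled variance) and degrees of freedom."""
    n_a, n_b = len(a), len(b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / df
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return t, df


# Hypothetical frustration scores on a 0-100 scale; not study data.
records = [
    {"years": 1, "interface": "standard_3d", "frustration": 40},
    {"years": 2, "interface": "standard_3d", "frustration": 50},
    {"years": 1, "interface": "standard_3d", "frustration": 60},
    {"years": 1, "interface": "ar", "frustration": 20},
    {"years": 2, "interface": "ar", "frustration": 30},
    {"years": 1, "interface": "ar", "frustration": 40},
    {"years": 5, "interface": "standard_3d", "frustration": 30},
    {"years": 8, "interface": "standard_3d", "frustration": 40},
    {"years": 4, "interface": "standard_3d", "frustration": 50},
    {"years": 5, "interface": "ar", "frustration": 30},
    {"years": 8, "interface": "ar", "frustration": 40},
    {"years": 4, "interface": "ar", "frustration": 50},
]

t_junior, df_junior = student_t(
    scores(records, "<=2", "standard_3d", "frustration"),
    scores(records, "<=2", "ar", "frustration"),
)
t_senior, df_senior = student_t(
    scores(records, ">=3", "standard_3d", "frustration"),
    scores(records, ">=3", "ar", "frustration"),
)
```

Running the test separately within each stratum is what allows an effect that is present only in less experienced clinicians to surface instead of being diluted in the pooled sample.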

Gathering Qualitative Data: After completing fracture identification and cognitive load tasks, subjects were asked qualitative questions regarding perceived benefits or limitations of the AR interface. Questions covered specifics about the settings in which the providers thought the AR interface was most helpful, where they would personally use the technology if easily accessible, whether the technology could supplant current common practices, and what the limitations of the technology were. Answers to qualitative questions were transcribed, coded thematically, and represented using key quotes.

All underlying research materials including the DICOM files, surveys used, and data collected can be accessed by contacting the corresponding author.

Results

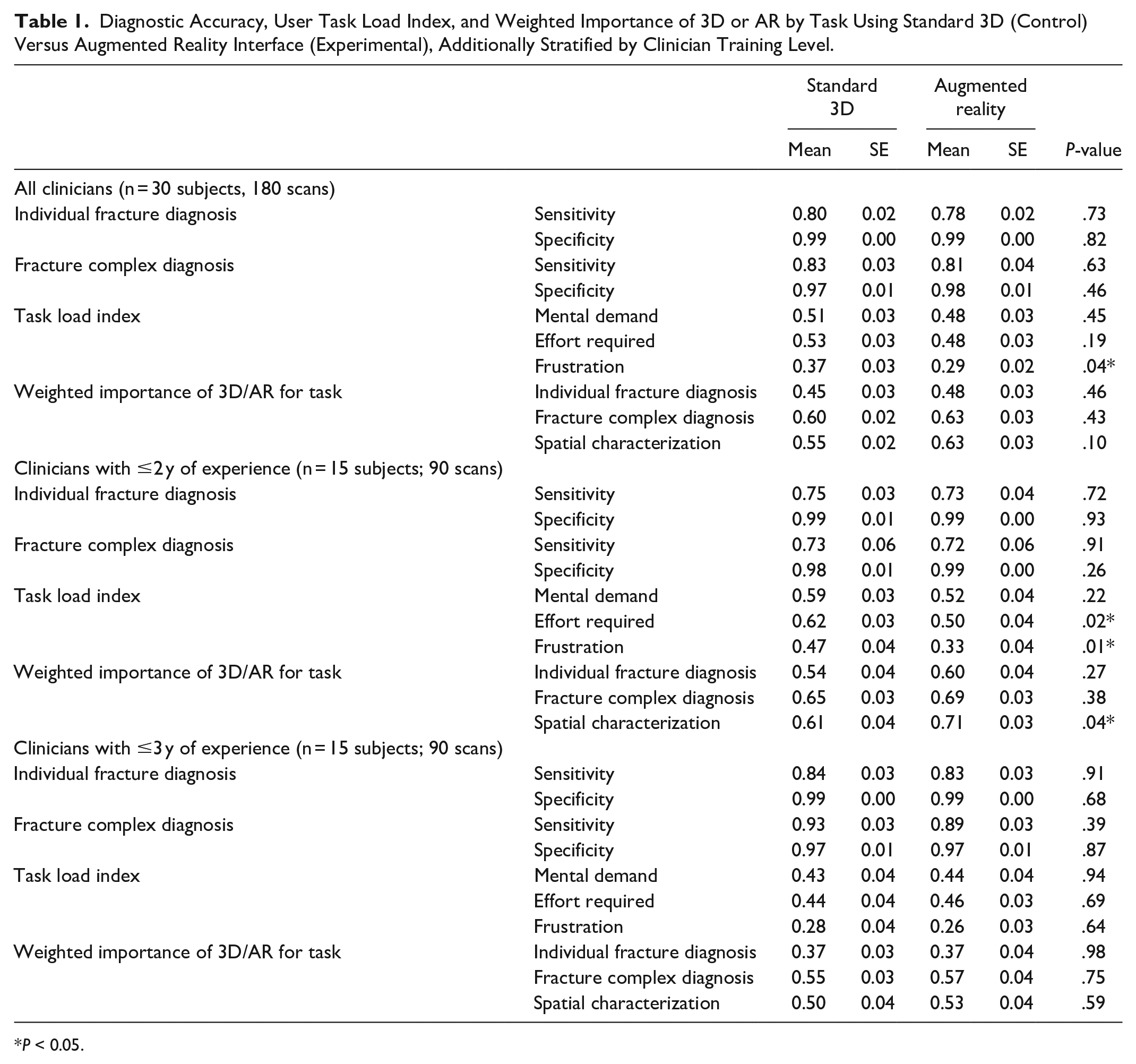

Of the 30 residents and faculty (15 plastic surgeons, 7 otolaryngologists, and 8 oral maxillofacial surgeons) who participated in the study, 83% preferred the AR model over the standard 3D model. There was no significant difference in diagnostic accuracy (individual fracture and fracture complex diagnosis sensitivity or specificity) between the AR model and standard 3D model when scans of craniofacial trauma were reviewed. For TLX markers, frustration was 21.6% lower with the AR model than with the standard 3D model (P = .04), but there was no significant difference in mental demand or effort required. There was also no significant difference between the standard 3D and AR in their weighted importance for the tasks of individual fracture diagnosis, fracture complex diagnosis, and spatial characterization (Table 1).

Table 1. Diagnostic Accuracy, User Task Load Index, and Weighted Importance of 3D or AR by Task Using Standard 3D (Control) Versus Augmented Reality Interface (Experimental), Additionally Stratified by Clinician Training Level.

P < 0.05.

When stratified by training level, clinicians with ≤2 years of training found that the AR model required 19.4% less effort (P = .02) and was 29.8% less frustrating (P = .01) for evaluating craniofacial trauma scans. These less experienced clinicians also preferred the AR model 16.4% more for spatial characterization tasks (P = .04). For clinicians with ≥3 years of training, there was no significant difference in any measured outcomes between AR model and standard 3D model (Table 1).

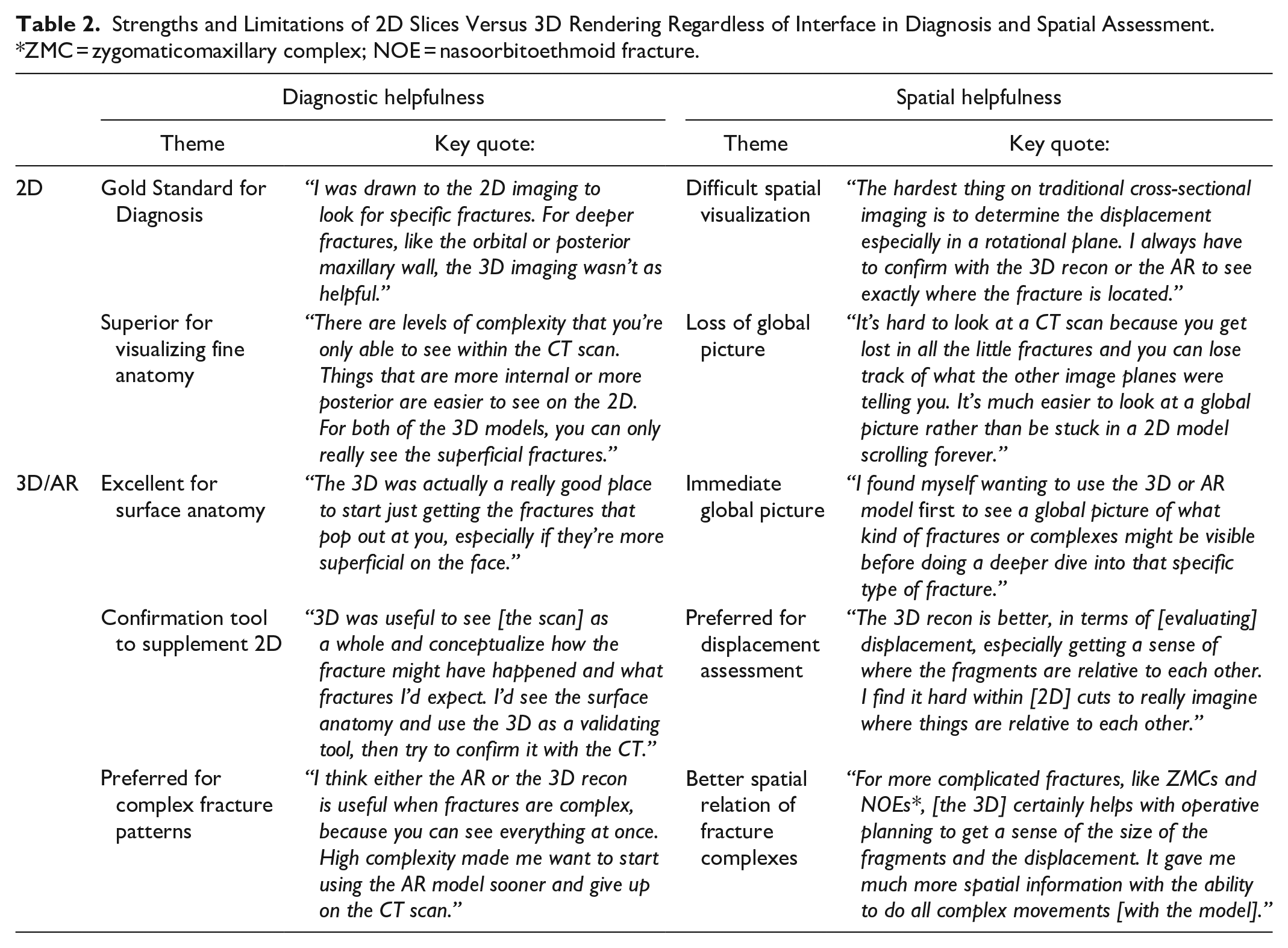

Qualitative assessment provided nuance on the impact of AR not captured in the quantitative measures. Subjects first confirmed the overall strengths and limitations of 2D slices versus any 3D rendering, whether viewed in the standard 3D or AR format (Table 2). Their responses indicated that 2D slices remained the gold standard for diagnosing fractures given their higher image resolution, ease of viewing deeper structures, and visualization of soft tissue elements. Limitations of 2D views were difficulty visualizing the global picture and the spatial relation of different image elements across the hundreds of slices. Conversely, 3D renderings on any interface provided excellent global views of the fractures and their spatial relations, but lacked the detail contained in 2D slices and were most helpful for superficial fractures. The 3D images were especially helpful for diagnosing fracture complexes where spatial assessment is key. Finally, 3D was most often used as a confirmatory supplemental tool to the 2D slices rather than being considered any kind of replacement.

Table 2. Strengths and Limitations of 2D Slices Versus 3D Rendering Regardless of Interface in Diagnosis and Spatial Assessment. *ZMC = zygomaticomaxillary complex; NOE = nasoorbitoethmoid fracture.

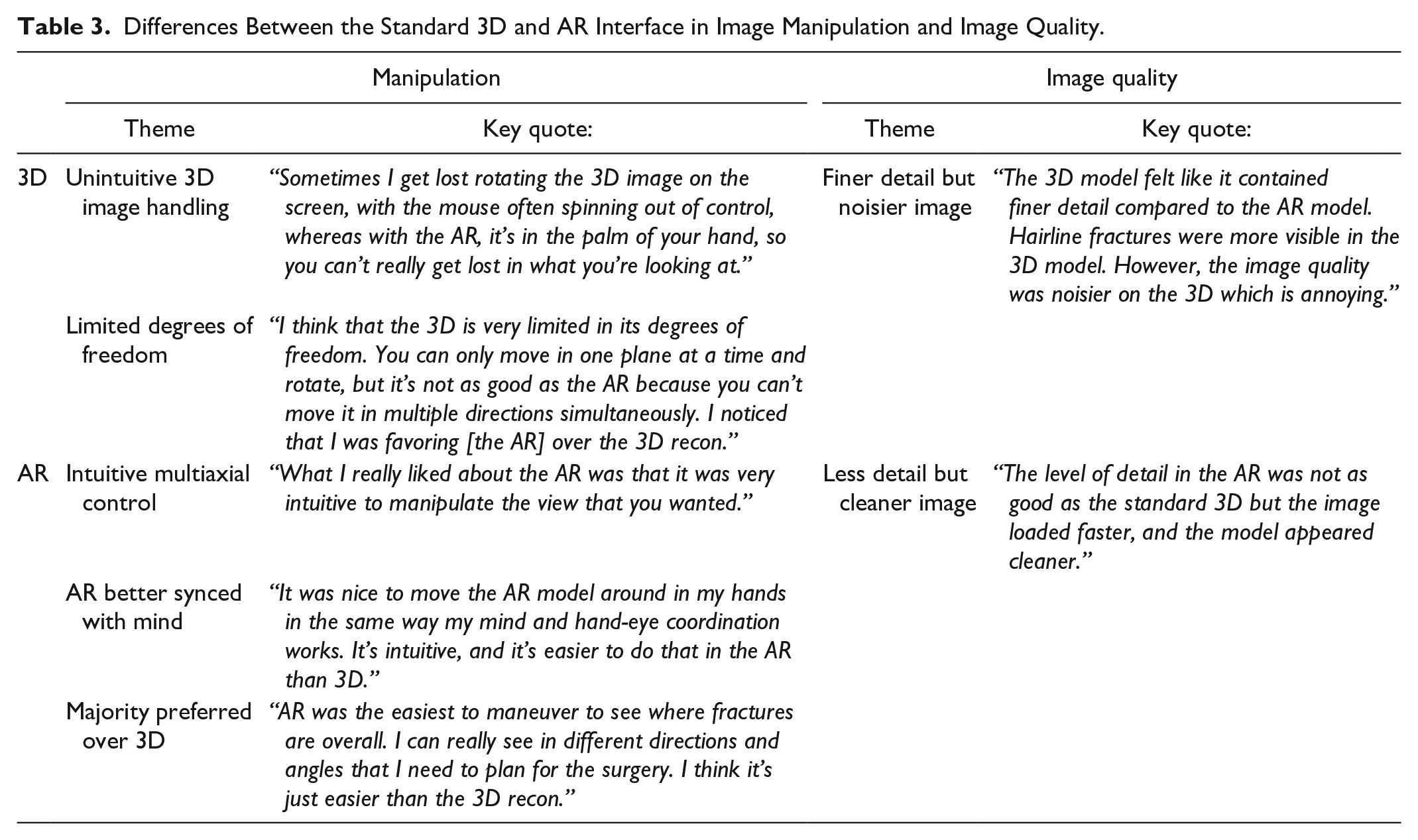

The primary distinction between the standard 3D interface and the AR interface was the ease of manipulation (Table 3). When handling a 3D object using a standard keyboard and mouse setup, subjects found the object difficult to maneuver precisely, could not rotate it along the exact desired axis, became disoriented more easily, and could not perform multiple control tasks such as zooming and rotation simultaneously. By contrast, all subjects found the AR interface to be highly intuitive and equivalent to handling a tangible 3D object. Subjects were able to view the object at the exact preferred angle with simultaneous zoom and rotation in real-time synchronization with their hand. A recurrent theme was that AR matched “just how the mind works” compared to the standard 3D interface. Subjects preferred the AR interface overall.

Table 3. Differences Between the Standard 3D and AR Interface in Image Manipulation and Image Quality.

There was some concern about image quality differences between the standard 3D and AR interfaces because the 3D object in the standard interface was a volume render whereas the object in the AR interface was a surface render (Table 3). This arises from a difference in the way 3D objects require processing to be compatible with their respective interface. According to our study subjects, volume renders appear to contain finer detail but more noise, whereas surface renders are blockier but have smoother edges. However, users were evenly split regarding their image quality preference between volume and surface renders, so we do not believe it significantly impacted the study.

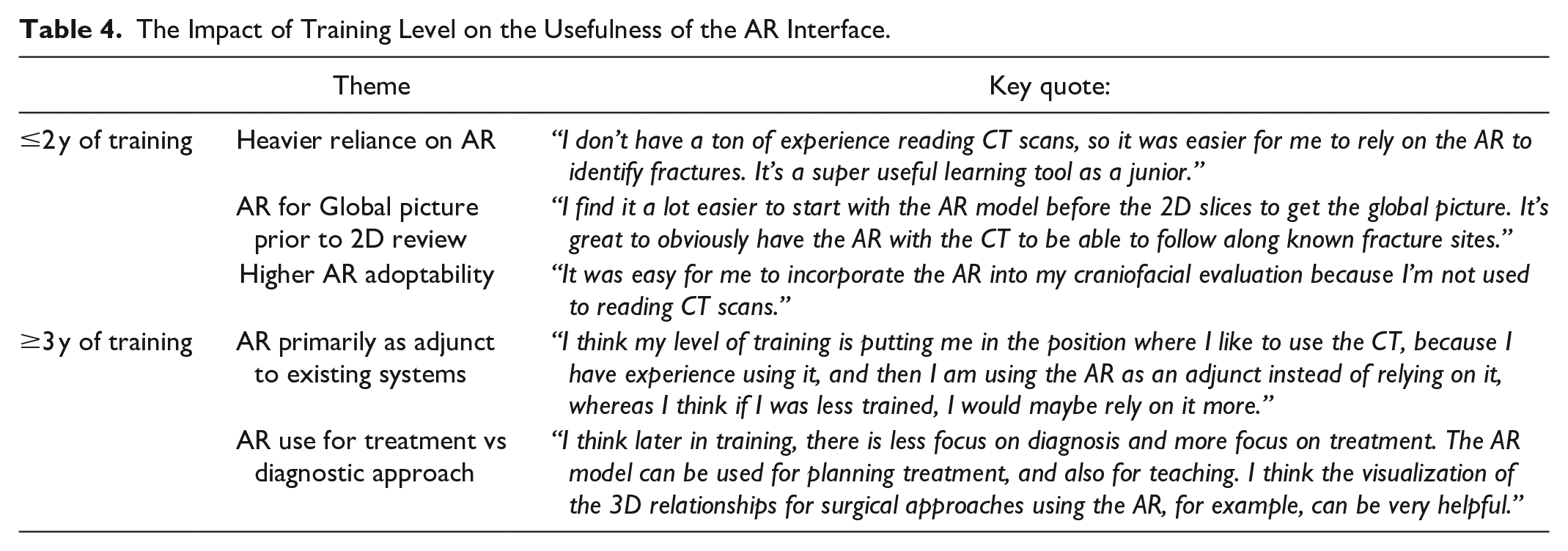

Lastly, there was a significant difference in the way the AR interface impacted clinicians based on their years of experience (Table 4). For those in their first 2 years of residency, AR was most helpful as a diagnostic tool. They were often still having difficulty navigating 2D slices and visualizing the spatial relation of 2D elements. Consequently, the AR interface was often their preferred tool for understanding the global picture and more heavily relied on for confirmation or even initial identification of individual fractures and fracture complexes. These residents were also less likely to have a set system for evaluating craniofacial fractures and were thus more amenable to introducing a new element like the AR interface into their initial diagnostic workup.

Table 4. The Impact of Training Level on the Usefulness of the AR Interface.

More experienced clinicians found the AR interface to be more helpful during the treatment rather than the diagnostic phase (Table 4). They were more likely than residents with less experience to have an established personal diagnostic system and were thus less receptive to a new AR interface. They were more likely to use the AR interface as an adjunct to their existing system and only relied on the AR as a confirmatory tool for diagnoses they had already identified. However, they became more reliant on the AR interface during treatment planning. They also found the AR interface to be useful for better assessment of the spatial relationship between fracture fragments, such as the degrees of displacement and angles of rotations which are important considerations for operative planning.

Discussion

Our study shows that AR is a novel and accessible interface that is preferred over the current gold standard of 3D viewing interfaces for evaluating craniofacial fractures. There was no significant difference in diagnostic accuracy between the AR interface and standard 3D, which is consistent with our prior studies regarding the accuracy and precision of our AR model. 11 The AR interface did not provide additional raw data compared to the standard 3D model and was predominantly a supplemental tool to the gold standard 2D slices, so improvement in diagnostic accuracy using the AR model was not necessarily expected, but the study at least demonstrated non-inferiority of the AR model for diagnostic tasks.

Despite similar diagnostic accuracy, however, most clinicians found the AR interface to be easier to navigate than traditional 3D scans, leading to decreased frustration among all study subjects. Our qualitative data indicated that this finding was largely attributed to how natural handling a 3D object was in AR. Using the Merge Cube allowed for more intuitive multiaxial control of a 3D object than a standard keyboard and mouse setup. This decrease in user cognitive load while completing assigned tasks led most clinicians to prefer the AR model over the standard 3D viewing interface when evaluating craniofacial fractures.

An unexpected outcome was the degree to which clinicians’ experience impacted their preference for the AR model over standard 3D. Clinicians with ≤2 years of experience found that the AR model required less effort than standard 3D, was less frustrating, and was preferable when completing spatial assessment tasks. These differences were not found for clinicians with ≥3 years of experience. Although all clinicians acknowledged the more intuitive handling of 3D objects using the AR model, those with less experience much more frequently preferred the AR model over the standard 3D model when completing diagnostic tasks. Our qualitative data indicate this finding is likely attributable to less experienced clinicians’ heavier reliance on 3D objects for diagnosis and more experienced clinicians’ resistance to altering their existing system for diagnosis. Less experienced clinicians frequently expressed difficulty using 2D slices alone for diagnostic tasks, were thus more reliant on 3D objects, and in turn relied more on the AR model, which had superior 3D object handling. By comparison, more experienced clinicians already had a well-established diagnostic system using standard 2D slices, only used 3D objects as a confirmatory supplemental tool, and thus did not find the AR model to be more helpful in diagnostic tasks even if they preferred it to standard 3D interfaces. Our qualitative findings indicated that both groups preferred AR, particularly the intuitive manipulation of the AR interface compared to standard 3D models.

The experience discrepancy provides important insight as to when the AR model may be most useful in managing craniofacial trauma, with less experienced clinicians gravitating toward the AR model more as a diagnostic and educational tool, supplementing their lack of experience reviewing CT scans with an interface that more intuitively controls 3D objects. By comparison, more experienced clinicians found less value in the AR model for the initial diagnosis, but often suggested that there could be a greater role for AR in the operating room as a real-time 3D object manipulating tool for surgical planning. However, a limitation of this study was that it focused primarily on the impact of AR as a diagnostic tool, so it was not able to elucidate the role of AR in the operating room, a setting that may better capture the impact of AR for more experienced providers.

Accordingly, the intraoperative application of AR is a natural evolution of the technology’s role in surgery. However, many challenges remain in bringing AR to the operating room. AR headsets are still unwieldy to the operator, and direct AR overlay requires tracking and calibration tools that are not yet flexible enough for dynamic changes during an operation. 14 Our hybrid AR interface bypasses some of these limitations with the Merge Cube, but in doing so also forgoes AR’s potential for real-time overlay on a patient. As such, our model serves as an accessible transition technology while AR and medical imaging continue to advance.

In conclusion, the results of this study demonstrate that AR can serve as an improved replacement for current 3D viewing systems and reveal the specific clinical contexts in which AR can be most impactful for craniofacial management based on clinician experience.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Jesse Courtier is a Co-Founder and shareholder in Sira Medical, an augmented reality startup. Benjamin Laguna is the Chief Medical Officer and a shareholder in Sira Medical, an augmented reality startup.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Plastic Surgery Foundation (PSF) and the American Society of Maxillofacial Surgeons (ASMS) Combined Pilot Research Grant [#837419].

Ethics approval

This study received ethical approval from the UCSF IRB (#20-32917) on 7/20/2021.

Informed Consent

All participants provided written informed consent prior to participating.

Supplemental Material

Supplemental material for this article is available online.

References
