Abstract

This review aims to identify the role of augmented, virtual or mixed reality (AR, VR or MR) technologies in the setting of spinal surgery. The authors address the challenges surrounding the implementation of this technology in the operating room. From a technical standpoint, the efficacy of these imaging modalities is assessed on the basis of the current literature in the field. Ultimately, these technologies must be cost-effective to ensure widespread adoption. This may be achieved through reduced surgical times and a decreased incidence of post-operative complications and revisions while maintaining an equivalent safety profile to alternative surgical approaches. While current studies focus mainly on the successful placement of pedicle screws via AR-guided instrumentation, a wider scope of procedures may be assisted using AR, VR or MR technology once efficacy and safety have been validated. These emerging technologies offer a significant advantage in the guidance of complex procedures that require high precision and accuracy using minimally invasive interventions.

The number of ambulatory procedures for spine disorders has dramatically increased over the past two decades. 1 An upward trend in the preference for minimally invasive spine surgery (MISS) has driven the development of new approaches and technologies to improve patient outcomes. Surgical navigation has become a pivotal tool in MISS because the smaller exposure site offers fewer visual cues, increasing the surgeon’s dependence on their knowledge of superficial anatomical landmarks as well as on computed tomography (CT) images. The worldwide market for navigation systems in spinal surgery, valued at $600 million in 2019, is forecast to grow at a 4.4% compound annual growth rate in terms of revenue to $780 million by 2024. 2 Augmented reality (AR) technologies have progressed considerably and are now implemented in MISS. AR is a computer-generated virtual image projected onto the user’s real surroundings. In surgery, the user has a direct view of the surgical field with a specially constructed device superimposing additional anatomical information as graphical/visual elements that blend with the real environment as an overlay guiding the surgeon.
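The market figures quoted above can be checked against the standard compound annual growth rate relation. The following is a minimal sketch, assuming a five-year horizon (2019 to 2024) and treating the quoted values as exact; the function names are illustrative and do not come from the cited market report.

```python
def project_value(start_value: float, cagr: float, years: int) -> float:
    """Value reached by compounding start_value at a constant annual rate."""
    return start_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Constant annual growth rate that takes start_value to end_value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures quoted in the text (USD millions); the 5-year span is an assumption.
projected_2024 = project_value(600.0, 0.044, 5)
rate_needed = implied_cagr(600.0, 780.0, 5)

print(f"Projected 2024 value at 4.4% CAGR: ${projected_2024:.0f} million")
print(f"CAGR implied by $600M -> $780M over 5 years: {rate_needed:.1%}")
```

Under these assumptions the two quoted figures do not coincide exactly, which may reflect rounding or a different base year in the underlying report.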

This differs from virtual reality (VR) technologies, which artificially integrate virtual images overlaid with real-time locational data of surgical instruments. While AR technology allows for a minimally invasive approach to many surgical procedures by offering image-guided navigation to the surgeon in real time, VR provides reproduced anatomy and a surgical setting in the virtual space for simulation and education purposes. 3 Early adoption of these technologies is perceived to carry a high financial burden and to lack substantial data on efficacy and efficiency. 4

A new technology, mixed reality (MR), has come to the fore, overcoming two limitations: the inability of AR to interact with three-dimensional (3D) data packets and the exclusion of the real-world environment in VR. MR is a hybrid of AR and VR, in which real and virtual images are intertwined, allowing for interaction with and manipulation of both the real and virtual environment. 5,6 Using MR, a surgeon can intraoperatively access anatomical information of the patient and overlay virtual holographic elements on the actual superficial anatomy of the patient while on the surgical table, in real time. MR not only does this hands-free but also enables bidirectional communication between the surgeon and assisting devices, other surgeons, medical staff or anyone who can access the system.

AR, VR or MR combined with robotic-assisted surgery has the potential to alleviate surgeon fatigue and increase the precision of spinal realignment and stabilization. Increasing precision and consistency has become a primary objective of this technology in this context, with the aim of reducing surgical time, alleviating the physical burden on the surgeon and supporting fine motor control throughout the procedure. This review will give an overview of the state of the art and offer insight into the hurdles that must be overcome to advance this technology towards widespread adoption.

A brief history of innovators in the field

The use of AR in spine surgery was first described in 1997, when Peuchot et al. developed a system, ‘Vertebral Vision with Virtual Reality’, to superimpose a fluoroscopy-generated 3D transparent view of the vertebra onto the surgeon’s operative field. This allows the surgeon to watch vertebral displacements as they occur without the distraction of referring to a monitor for feedback. 7 While this was an innovative technique at the time, intraoperative fluoroscopy in spine surgery exposes patients and surgeons to considerably higher levels of ionizing radiation compared to other subspecialties. 8

AR navigation has the potential to lower occupational exposure to ionizing radiation. In one report, AR surgical navigation demonstrated significantly lower occupational doses of radiation compared with values reported in the literature. The average staff-to-reference radiation exposure was decreased to less than 0.01% after optimization of the protocol, and patient exposure was reduced to 32% of the exposure dose reported for other imaging techniques. 9

Salah et al. describe the first navigated spine surgery utilizing AR visualization on a mannequin by integrating magnetic resonance imaging (MRI) with a marker-based optical tracking server. 10 This combined approach can provide a surgeon with high-resolution images faithfully displaying patient anatomy while limiting or completely eliminating radiation exposure.

Initial AR innovations in spine surgery trialled CT, 11 MRI 12–14 and X-ray. 15 CT has become the conventional imaging modality of choice for intraoperative acquisition owing to fast, high-quality image capture with minimal radiation exposure to staff compared with conventional fluoroscopy. While MRI eliminates radiation exposure in intraoperative imaging, it requires specialized metal-free operating suites, non-magnetizing instrumentation and longer anaesthesia and operating room (OR) time, making it impractical. MRI also requires permanent installation and has a larger physical footprint in the OR. Multi-slice (32 and 64 slice) CT scanners (Airo – Brainlab, Munich, Germany; Somatom – Siemens, Munich, Germany; O-arm – Medtronic, Memphis, Tennessee) have steadily been replacing earlier cone-beam CT scanners owing to less scatter radiation and better image quality. An increase in slice specification also reduces scan times, with the capability of covering a larger area of the patient in each pass. 16–18

Google Glass, a see-through head-mounted display (HMD), is reported to be the most used HMD in AR-assisted surgeries in the current literature. 19 In spinal surgery, the Microsoft HoloLens appears to be the HMD of choice. 20–23 HoloLens currently offers features such as gaze and eye tracking capability, position and head movement tracking and gesture control. These features make the HoloLens and similar technologies more suitable for AR surgical application, with the potential for a built-in IR NDI (Infra-Red Network Device Interface) camera tracking system, such as that in the Xvision (Augmedics, Arlington Heights, Illinois), to allow for uninterrupted equipment feedback. 24

The technicalities of image processing and 3D rendering are beyond the scope of this review, and much of this information is proprietary. Nonetheless, progress in AR visualization will focus on the accuracy and precision of the 3D representation overlaid on the surgical field, with optimal depth perception and translucency, instantaneous tactile feedback, versatility and accessibility. Many image processing and 3D rendering technologies are competing in this field, with multiple hardware companies such as Novarad, Medtronic and Philips seeking to integrate their equipment into surgical theatres. The 3D rendering software used in early studies relied on open-source algorithms and image viewers, while, in recent years, there has been a commercial overhaul, with image processing and rendering becoming proprietary information. This is unsurprising given the current market valuations and forecast growth in the field.

Nguyen et al. highlighted the limitations of AR-guided systems by analysing the dimensional accuracy and positioning of the mixed media. A margin of error of 10.81% and 2.67% was recorded in the x and y dimension, respectively, and up to 16.66% was reported in the z dimension for the indirect measurement of virtual objects through the HMD. This highlights the current drawback of AR accuracy, attributable to camera resectioning, image noise and filtering and image parallax. 25 Nonetheless, the authors deem this technology suitable for high-level tasks such as visualization and incision planning rather than fine movement actions such as screw placement.

The accuracy of AR visualization is dependent on many factors, including CT imaging resolution, virtual 3D modelling, marker placement for superposition and anatomical displacement and distortion post-imaging. Wu et al. utilized an AR system that superimposes 3D anatomical models onto the patient using localization by superficial skin markers. 26,27 While this approach to localization is non-invasive, the margin of error in superimposition would be higher than with markers attached to bony anatomy, such as a spinous process clamp or iliac crest pins. Obese patients are likely to be less suitable for this imaging system. The choice of markers for virtual image integration onto the field of view will be crucial in future technologies to increase precision. One of the main factors influencing accuracy and precision is the distance from the tracking camera to the reference frame on the patient. This group achieved a maximum error of 1.3 and 1.5 mm in the x and y directions, respectively, with a depth error of 1.8 mm. This system utilized a projected image, which became occluded by the surgeon, highlighting the need for an HMD for uninterrupted projection onto the visual field.
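As a rough worked illustration, per-axis errors such as those reported above can be combined into a single 3D figure by taking the Euclidean norm. The sketch below assumes that the three maxima occur simultaneously, which the source does not state, so the result is best read as a worst-case bound rather than a reported measurement. The function name is hypothetical.

```python
import math


def combined_error_mm(dx: float, dy: float, dz: float) -> float:
    """Euclidean magnitude of an error vector given its per-axis components (mm)."""
    return math.sqrt(dx * dx + dy * dy + dz * dz)


# Per-axis maxima quoted in the text: x = 1.3 mm, y = 1.5 mm, depth = 1.8 mm.
# Assumption: all three maxima coincide in a single measurement.
worst_case = combined_error_mm(1.3, 1.5, 1.8)
print(f"Worst-case combined 3D error: {worst_case:.2f} mm")
```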

The integration of an HMD allowed a virtual image to project onto the surgeon’s visual field, allowing the surgeon to maintain focus on the surgical field rather than reverting to a monitor. In a clinical evaluation of a virtual protractor with augmented reality, five patients with osteoporotic vertebral fractures underwent percutaneous vertebroplasty. 17 Post-operative CT showed that the error of the insertion angle in 10 needle insertions was 2.09° ± 1.3° in the axial plane and 1.98° ± 1.8° in the sagittal plane. Elmi-Terander et al. became the first group to undertake a prospective cohort study of pedicle screw placement in patients. 16 This study achieved 94.1% overall accuracy of pedicle screw placement as measured by post-operative CT. Screw placement was considered successful at either Gertzbein grade A or B; 15 screws had 2–4 mm breach, Gertzbein grade C (all in scoliosis patients).
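The grading logic behind such accuracy figures can be sketched as follows. Grades A (no breach), B (under 2 mm) and C (2–4 mm) follow the usage in the text; the cut-offs for grades D and E, and the handling of exact boundary values, follow the commonly cited Gertzbein-Robbins scale and are an assumption here. The function names and the sample breach values are invented for illustration and are not study data.

```python
from typing import Iterable, List


def gertzbein_grade(breach_mm: float) -> str:
    """Map a cortical breach distance (mm) to a grade A-E."""
    if breach_mm < 0:
        raise ValueError("breach distance cannot be negative")
    if breach_mm == 0:
        return "A"  # screw fully contained in the pedicle
    if breach_mm < 2:
        return "B"
    if breach_mm < 4:
        return "C"  # 2-4 mm breach, as described in the text
    if breach_mm < 6:
        return "D"  # assumed cut-off, not stated in the text
    return "E"      # assumed cut-off, not stated in the text


def placement_accuracy(breaches_mm: Iterable[float]) -> float:
    """Fraction of screws graded A or B, the success criterion used above."""
    grades: List[str] = [gertzbein_grade(b) for b in breaches_mm]
    if not grades:
        raise ValueError("at least one screw is required")
    successful = sum(1 for g in grades if g in ("A", "B"))
    return successful / len(grades)


# Hypothetical breach measurements from post-operative CT (mm).
sample = [0.0, 0.0, 1.2, 0.5, 2.6, 0.0, 0.0, 1.9]
print([gertzbein_grade(b) for b in sample])
print(f"Accuracy (grade A or B): {placement_accuracy(sample):.1%}")
```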

AR support has been applied in surgical planning and navigation of extra- and intradural tumour resection. 28 This approach reduced average radiation exposure by 70% and navigated with a mean registration error of 1 mm. Recent reports in neurosurgical procedures involving intracranial tumour resections have reported a lower mean registration error and standard deviation than reports in spinal surgery. 29–31 It is unclear why these studies report higher accuracy and precision; however, it is likely due to the use of multiple imaging modalities (MRI, CT-angiogram) and the rigidity offered by the skull in the registration of fiducial markers.

Philips first announced an AR operating suite or ‘hybrid OR’ with the aim of improving on existing surgical navigation by creating a 3D reconstruction of patient anatomy overlaid on the surgeon’s visual field. 18,32 A similar system funded by the AO Foundation, Xvision-Spine AR surgical navigation, is still under development. The authors of this review are currently using Epson Smart Glass (Moverio BT35F, Epson, Tokyo, Japan) for visualization of real-time navigation images acquired by the Medtronic O-arm2 and Stealth Navigation system (Medtronic), as shown in Figure 1.

Example of real-time CT navigated spinal surgery with AR assistance. (a) The image on the navigation monitor is simultaneously displayed in the surgeon’s AR HMD, enabling the surgeon to access the image overlaid on their view of the operative field. The surgeon does not need to move their head to see the navigation monitor. (b) In-display renderings of 2D image overlay on preoperative CT scan with real-time instrument feedback. The image seen on the monitor is a virtual image. When this virtual image is overlaid on the patient, the user is experiencing AR. AR and mixed reality are visually identical; however, in mixed reality, virtual overlays can be manipulated by the physical environment (positional sensors on the patient). Extended reality does not change the surgeon’s visual perception; however, it includes tactile instrument responses and non-visual inputs and outputs to a broader network of systems. AR: augmented reality; CT: computed tomography; 2D: two dimensional; HMD: head-mounted display.

Trends in advanced navigation systems

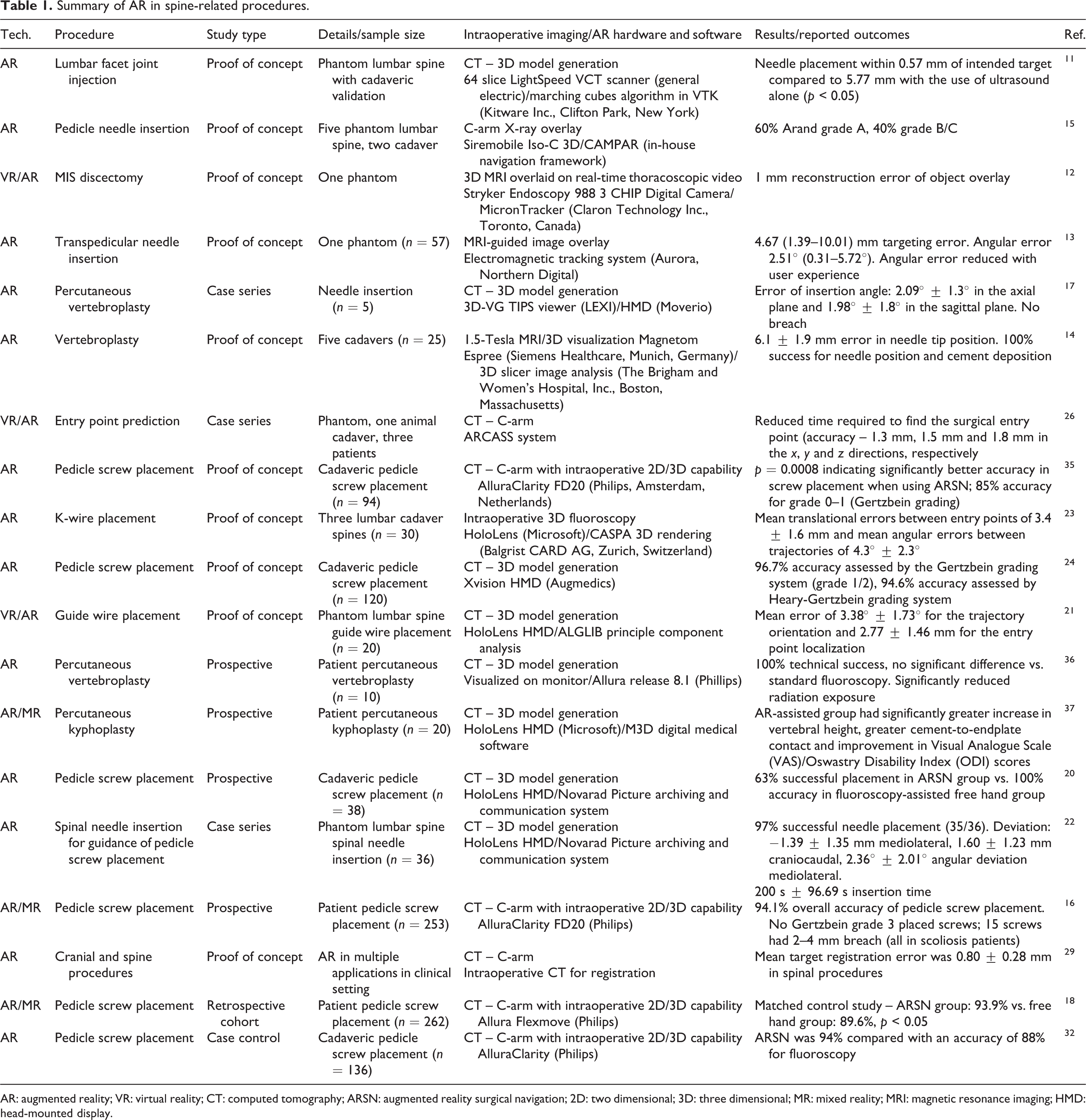

In total, 19 studies investigating the precision and accuracy of AR systems in spinal procedures have been performed to date (Table 1). Twelve studies focused on pedicle screw placement (or guide wire/needle insertion) and four studies used AR to guide vertebroplasty. MIS discectomy, target registration in cranial and spinal procedures and facet joint injection were each investigated in individual studies. Seven studies used phantom models, eight used cadaveric spines and six involved patients undergoing spinal procedures. While early studies integrated MRI, most studies opted for CT-based imaging systems (14 of 19). X-ray and fluoroscopy have also been integrated in published reports. The Microsoft HoloLens appears to be the HMD of choice, being the most widely used in studies that integrated an HMD (4 of 7). Output parameters vary widely, but common measurements can be summarized: 9 of 19 studies recorded registration error; 6 of 19 recorded the angular deviation of the instrument path; 4 of 7 studies that measured pedicle screw placement used a placement grading system (Gertzbein grade); and 7 of 19 compared measured parameters against a conventional technique. Other measured parameters include registration time, pedicle screw placement time, successful insertion (yes/no output) and procedure-specific parameters (kyphoplasty: vertebral height measurement). These findings highlight the variability in reporting standards for this emerging technology.

Summary of AR in spine-related procedures.

AR: augmented reality; VR: virtual reality; CT: computed tomography; ARSN: augmented reality surgical navigation; 2D: two dimensional; 3D: three dimensional; MR: mixed reality; MRI: magnetic resonance imaging; HMD: head-mounted display.

Commercialization standards for navigation systems

Precision and accuracy are the key parameters investigated to validate a navigation system. The aim of the studies presented is not to successfully place a pedicle screw, since skilled surgeons already have expertise in pedicle screw placement. Instead, the main aim of these studies is to validate these systems for precision, accuracy, competency, consistency and accountability for the consideration of widespread adoption by less-skilled operators. The FDA has laid out parameters that these systems must meet for approval for procedures such as pedicle screw placement in accordance with the Standard Practice for Measurement of Positional Accuracy of Computer Assisted Surgical Systems (<3 mm screw tip deviation, <3° angular deviation). 33

It is evident from the current literature investigating the use of AR systems in spine procedures that there is a need for standardization of clinically relevant outcomes. Studies thus far have heterogeneously reported accuracy and precision across x, y and z dimensions; imaging integration accuracy; successful screw insertion; Gertzbein grade of screw insertion; correctly identified targets; error in angle of trajectory; time of screw placement and many other parameters. It will be important to standardize these outputs and identify key clinical parameters that further assess the need, benefit and economic validity of this technology to attract capital investment and encourage widespread adoption. At a minimum, all studies must include data to comply with Standard Practice for Measurement of Positional Accuracy of Computer Assisted Surgical Systems and achieve <3 mm screw tip deviation and <3° angular deviation in pedicle screw placement. Early studies did not always meet these thresholds; however, recently, many systems have achieved screw placement within these parameters consistently. Navigation systems have not been fully validated because it is difficult to measure position accuracy and error, and crucially, not all studies report tip and angle deviation, making validation and cross-system comparison very difficult.
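The two quantities named in this threshold, screw tip deviation and angular deviation, can be computed from a planned and an achieved trajectory. The following is a minimal sketch, assuming each trajectory is described by an entry point and a tip point in a shared coordinate frame in millimetres; the helper names, the coordinate convention and the sample coordinates are hypothetical, and only the 3 mm and 3° limits come from the text.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]

TIP_LIMIT_MM = 3.0     # threshold quoted in the text
ANGLE_LIMIT_DEG = 3.0  # threshold quoted in the text


def _sub(a: Point, b: Point) -> Point:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _norm(v: Point) -> float:
    return math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)


def _cross(u: Point, v: Point) -> Point:
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def tip_deviation_mm(planned_tip: Point, actual_tip: Point) -> float:
    """Distance between the planned and achieved screw tip positions."""
    return _norm(_sub(actual_tip, planned_tip))


def angular_deviation_deg(planned_entry: Point, planned_tip: Point,
                          actual_entry: Point, actual_tip: Point) -> float:
    """Angle between the planned and achieved screw axes, in degrees."""
    u = _sub(planned_tip, planned_entry)
    v = _sub(actual_tip, actual_entry)
    if _norm(u) == 0 or _norm(v) == 0:
        raise ValueError("entry and tip must be distinct points")
    dot = u[0] * v[0] + u[1] * v[1] + u[2] * v[2]
    # atan2 of |u x v| and u.v stays well conditioned for small angles.
    return math.degrees(math.atan2(_norm(_cross(u, v)), dot))


def within_thresholds(tip_mm: float, angle_deg: float) -> bool:
    """True when both deviations are strictly below the quoted limits."""
    return tip_mm < TIP_LIMIT_MM and angle_deg < ANGLE_LIMIT_DEG


# Hypothetical planned and achieved trajectories (mm).
plan_entry, plan_tip = (0.0, 0.0, 0.0), (0.0, 0.0, 40.0)
real_entry, real_tip = (0.5, 0.0, 0.0), (1.5, 0.5, 39.5)

tip = tip_deviation_mm(plan_tip, real_tip)
angle = angular_deviation_deg(plan_entry, plan_tip, real_entry, real_tip)
print(f"tip deviation {tip:.2f} mm, angular deviation {angle:.2f} deg, "
      f"within thresholds: {within_thresholds(tip, angle)}")
```

Reporting both values for every screw, rather than a grade alone, is what would allow the cross-system comparison that the text identifies as currently difficult.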

These technologies also have great potential for expansion into education and surgical training. Tangible organs may be printed from MRI-acquired images to provide a better anatomical reference tool for tailor-made simulation and navigation. 34 This contributes to medical safety and accuracy, less invasive surgery and improved medical education for students and trainees.

Barriers to widespread adoption

It is clear from the advances in this field that there is a trend towards AR, VR or MR in an HMD system integrated with sensitive superficial or deep markers for image localization (Table 2). CT imaging maintains dominance over other imaging modalities owing to speed and ease of access, with relatively low exposure to staff compared with other approaches such as fluoroscopy. There does not appear to be consensus on the accuracy and precision of these technologies; however, commercial prototypes tend to outperform academically developed systems and open-source algorithms. The potential advantages of AR implementation are that it provides clear visualization of subcutaneous anatomy, serves as a valuable training tool and reduces the learning curve of challenging procedures, allowing for more MIS procedures. Since the organ model in AR visualization uses a polygon-based, 3D-printable format (STL (Standard Triangulated Language) or OBJ (Object)), it can be 3D printed to produce a physical construct identical to the virtual construct and used for surgery planning and simulation. 34
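To make the polygon format concrete, the sketch below writes a single tetrahedron as an ASCII STL string using only the Python standard library. The mesh, the solid name and the helper names are hypothetical; a real organ model would be derived from segmented CT or MRI data and would contain far more facets.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]
Triangle = Tuple[Vec, Vec, Vec]


def facet_normal(a: Vec, b: Vec, c: Vec) -> Vec:
    """Unit normal of triangle (a, b, c) by the right-hand rule."""
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0:
        return (0.0, 0.0, 0.0)  # degenerate triangle
    return (nx / length, ny / length, nz / length)


def to_ascii_stl(name: str, triangles: Sequence[Triangle]) -> str:
    """Serialize triangles as an ASCII STL solid."""
    lines: List[str] = [f"solid {name}"]
    for tri in triangles:
        nx, ny, nz = facet_normal(*tri)
        lines.append(f"  facet normal {nx:e} {ny:e} {nz:e}")
        lines.append("    outer loop")
        for x, y, z in tri:
            lines.append(f"      vertex {x:e} {y:e} {z:e}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


# Hypothetical 10 mm tetrahedron; vertex order gives outward-facing normals.
p0, p1 = (0.0, 0.0, 0.0), (10.0, 0.0, 0.0)
p2, p3 = (0.0, 10.0, 0.0), (0.0, 0.0, 10.0)
tetra: List[Triangle] = [(p0, p2, p1), (p0, p1, p3), (p0, p3, p2), (p1, p2, p3)]

stl_text = to_ascii_stl("demo", tetra)
print(stl_text)
```

Because the same triangle list drives both the on-screen rendering and the printed part, the physical construct matches the virtual one up to the resolution of the printer.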

Differences between VR, AR, MR and XR specific to spinal procedures.

CT: computed tomography; MRI: magnetic resonance imaging; HMD: head-mounted display; XR: extended reality; AR: augmented reality; VR: virtual reality; MR: mixed reality.

Current limitations of these technologies include variable inaccuracy, visual fatigue, the need for re-registration and recalibration and, most importantly, the cost of installation, adaptation of OR workflow and training of staff. The biggest source of error is registration error or movement of the registration device during surgery, which renders the system inaccurate. Technology to continuously detect this, or to re-register and calibrate the image guidance software during the procedure, would be a worthwhile development. While increased cost may be a prohibitive factor for adoption, the potential increase in accuracy, reduced number of revisions and reduced radiation exposure can all offset the high upfront cost of these systems.

The risk of attention shift towards a remote display and dissociation from the actual patient creates pitfalls in the placement of instrumentation; however, this is largely being addressed in newer generations with HMD visualization. Line-of-sight interruption caused by occlusion of the detector by the surgeon is a current major drawback limiting accessibility and versatility; however, this is being addressed in newer-generation detector systems with multiple cameras and head-mounted detectors for remote tracking of the reference frame on the patient and of the instrumentation. Other issues have been noted, such as image latency, poor image resolution and low brightness and contrast, which are being addressed with improved image processing and projection feedback relays. Image latency or delay may arise with bulky, processing-intensive renderings; however, an organ model is generally 50 MB or less and is rendered without difficulty. AR accuracy depends on the projection resolution. The first-generation HoloLens offers a resolution equivalent to HD (High Definition), and the newer HoloLens 2 has 2K resolution per eye and 47 pixels per degree of viewing angle. The processor of the HoloLens 2 has been changed from an Atom-based chip to Qualcomm’s Snapdragon 850, and processing performance and power consumption have also been improved. Magic Leap 1, the HMD used by the authors, has a per-eye resolution of 1280 × 960, a wide viewing angle of 50°, 1.3 MP resolution, 16.8 million display colours and a refresh rate of 20 Hz.

While brightness and contrast limitations can make it difficult to see digital models in bright outdoor settings, HMD capabilities make models highly visible in the operating theatre setting. The surgical theatre may be darkened, or the model may be placed in a low-light area of the theatre, to improve image discrimination if necessary.

Commercially available HMDs have variable battery lives, ranging from 2–3 h for HoloLens to 1–5 h for Google Glass in a gaming environment. Depending on the specifications of each device, complex model renderings used in gaming and entertainment generally place a higher burden on battery life. In contrast, when HMDs are used for surgery or education at the medical site, polygonized patient organ models are compressed, which reduces processing demands and increases HMD battery life to beyond 5 h. Currently, we are using Oculus Quest, HoloLens 2, Magic Leap 1 and cordless devices. Magic Leap 1 is made of an HMD component attached to a processing unit, connected by a 1-m cord, eliminating movement restriction. Many HMDs can be used while charging with a mobile battery.

There is a steep learning curve in integrating this technology for new adopters, especially for those with limited immersion in AR, VR or MR environments. This carries a potentially high cost in training personnel, with a few technologies on the market holding an oligopoly. There is also a risk that low-experience users who learn only this type of technology will not be capable of free-hand instrumentation should the system fail in surgery.

The lack of standardization of technology, image acquisition, processing and rendering software, as well as of outcome reporting in accuracy and precision, makes it difficult to discern where the state of the art and clinically relevant superiority lie. It is likely that systems reporting sub-millimetre mean registration error and angular error in decimal degree ranges will be sufficient to undertake the most challenging applications, such as tumour resections and complex deformities in MIS procedures.

Ongoing evolution in the field with extended reality (XR)

With the rapidly growing pace of advancement in AR, VR and MR technologies, the term XR has gained interest and has also been taken up in the medical field to promote broader integration of these technologies. XR refers to all real-and-virtual combined environments between human and computer-generated input processed to create an interactive environment. The ‘X’ represents an assigned variable for any current or future spatial computing technologies that fulfil a function to adapt a user environment to include VR, AR and MR. XR may be differentiated from the previously mentioned technologies by its increasing integration of further real and virtual components to heighten the user experience. This includes physical inputs in surgery, such as instrument sensors, patient vitals and intraoperative imaging synchronization, but also a wider network of integrated systems, such as cloud-based information transfer. These inputs are processed to create a seamless mixed environment, including intraoperative benefits such as instrument tactile feedback, real-time dynamic patient anatomy during manipulation and deeper visualization to include interactive surgical planning and navigation. Other outputs include later surgical simulation based on real surgical procedures, as a teaching tool for students. The HoloEyesXR cloud system can transform 3D image data from a patient’s CT, MRI or ultrasound data into 3D VR data viewable on a VR or MR HMD. Similar systems exist in the field of surgery, and similar technologies are offered by Surgical Theater (https://www.surgicaltheater.net) and MediView XR (https://mediview.com).

Imaging platforms and health system integration

The value of XR is in the degree of systems integration throughout the healthcare system to create a unified reality and produce heightened clinician awareness for improved patient outcomes. Cloud services are vital to advance the field of XR technology in medicine. Such cloud systems can transform 3D image data from a patient’s CT, MRI or ultrasound data into 3D VR data viewable on a VR or MR HMD (Figure 2). This service enables many regular, low-experience surgeons who are not familiar with spatial computing to access the technology without the inconvenience of converting medical images to 3D VR data using complex computer languages. The images acquired from the imaging department are uploaded to the cloud, automatically converted and mounted through the internal hospital network to corresponding HMD systems. The system is highly compatible with VR and MR HMDs. With such a system, VR, AR or MR technologies can assist surgeons during operations in simulation and navigation through efficient instrument guidance, but also outside of the surgical theatre to enhance education of trainees and students, provide a more interactive demonstration for informed consent and offer remote consultation with surgeons worldwide using VR conferencing (Figure 3). The authors have utilized the HoloEyesXR system (medical device certified, class II), a leading example of a cloud service, with the Magic Leap 1 HMD during thoracoscopic spine surgery to identify the most efficient entry points for inserting ports for cameras and instruments in accessing the spine and for pedicle screw placement (Figure 4). An integrated, user-friendly cloud service is an ideal platform to incrementally expand input and output modalities to enhance XR as the technology progresses to create a truly integrated health service.

Workflow of Holoeyes image requisition, data analysis and conformational modelling. A wide scope of compatibility allows for easy integration of various HMDs in the hands of low experience users. Modern cloud services allow for easy acquisition and processing of image data for 3D modelling and use in AR in theatre. Cross-compatibility is crucial to ensure complete integration of systems. In future, these technology platforms will expand the network to include further inputs from instruments and robotics to improve surgical planning, technical precision and patient outcomes. 3D: three dimensional; HMD: head-mounted display; AR: augmented reality.

Overview of applications for XR in the medical field through MR integration. Combined facets of VR and AR technologies illuminate surgical fields, enhance navigation, enrich interactive learning environments, offer patients new insights and understanding of their ailments and allow for mass dissemination of surgical techniques and approaches worldwide. AR: augmented reality; XR: extended reality; VR: virtual reality; MR: mixed reality.

(a) The surgeon utilizes HoloeyesXR system with Magic Leap 1 HMD during pedicle screw placement in spine surgery. (b) 3D rendering of patient axial skeleton in situ superimposed on the patient by the MR device (Magic Leap 1). The access pathways to the spine via transcostal approach (green arrows) for thoracoscopic discectomy are highlighted. HMD: head-mounted display; MR: mixed reality; 3D: three dimensional.

Future directions

This technology must be easily integrated into existing ORs, with a low financial burden and proven clinical benefit. A surgical navigation workstation integrated into the HMD may be the natural progression of this technology. Improved haptics to provide tactile feedback for the surgeon should be implemented. Advanced high-resolution infrared tracking cameras positioned directly over the patient will perform active matching registration to the patient, with live surface tracking to account for active movements and deformity corrections and return objective data to the user. Artificial intelligence (AI) and machine learning will utilize complex feedback processing for improved instrument guidance.

The value of this technology lies in deskilling very technically challenging procedures. 35,36 XR offers a significant advantage in the guidance of high precision osteotomy cuts for en bloc tumour resections where normal anatomy may be compromised and largely patient-specific. This technology has the potential to inform and assist surgeons in complex procedures that require high precision and accuracy such as spinal cord decompression, arthrodesis, anterior cervical discectomy and fusion/posterior thoracic decompression and fusion and complex deformity surgery using MIS interventions. This system also has the potential to highlight anatomical variation in vascular and neurological structures in real time should they arise in a given patient.

Next-generation systems must be intuitive, with a low learning curve, and should aim to fulfil a role in specialist surgeries where AR is of significant advantage. This evolution in navigation technologies is one of many examples of systems integration to further the ‘internet of things’. XR technology platforms can offer more immersive training scenarios for surgeons with a broader range of sensory feedback, including high-fidelity haptic systems. Ultimately, these technologies must be cost-effective to ensure widespread adoption. This may be achieved through reduced surgical times and a decreased incidence of post-operative complications and revisions while maintaining an equivalent safety profile to alternative surgical approaches. Future progression will ensure that surgical planning, decision-making and responsibility lie in the hands of the surgeon, augmented by XR, robotics and AI technologies.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.