Abstract

Drawing on data from the Life in Australia™ panel (ANUpoll Wave 31; n = 1,911), this study investigates the factors that shape individuals’ perceived increase of cybercrime victimisation risk and how these perceptions influence their online disclosure behaviour. Using ordered probability and partial proportional odds models, we examine the role of personal attributes, individual safety concerns, perceived capability to avoid cybercrime, and perceived capability of institutional guardianship. The study provides a unique contribution by employing recent nationally representative data from all Australian states and territories to analyse multiple dimensions of cyber-related concerns. We also use a detailed categorisation of specific cybercrime types to predict both the perceived increase of risk and preventative behavioural adaptations. Guided by Ferraro’s risk interpretation model and Beck’s risk society paradigm, we find that personal attributes and concern factors differentially shape individuals’ perceptions of increasing cybercrime risk. Concerns about identity crime and misuse, online goods fraud, banking fraud, and malicious software significantly heighten perceived increases in risk. These perceptions are further influenced by trust in online security systems and public data guardianship, consistent with the broader concept of institutional guardianship. Overall, the findings show that diminished confidence in digital and institutional safeguards predicts stronger perceptions of increasing cybercrime risk and greater caution in personal information disclosure.

Keywords

Introduction

The rising prevalence and perceived threat of cybercrime have become a hallmark of life in today's digital society. Both the actual and perceived risk of cybercrime victimisation and perceptions of vulnerability online have increased in recent years (Rughiniș et al., 2024; Virtanen, 2017). This may be attributed not only to the increase in the percentage of the world's population using the Internet (approximately 66%) (Pelchen & Allen, 2024) but also to users’ reliance on technology and the Internet for communication, information dissemination, commerce, education, and social interactions, as well as to highly publicised cases of online fraud and deception (Ho & Luong, 2022; Reep-van den Bergh & Junger, 2018).

This phenomenon fits within what Beck (2009) conceptualised as the emergence of a risk society – an era in which societal focus shifts from the distribution of wealth to the distribution and management of man-made risks, especially those created through modernisation and technological advancement. In the context of cybercrime, these risks are often invisible, incalculable, and global in nature, making them particularly difficult to manage using traditional institutional structures. Trust in public and private guardianship of online safety, whether government agencies, digital infrastructure providers, or security systems, has become increasingly fragile, especially when these institutions fail to communicate transparently or respond effectively to risk events (Ngwenyama et al., 2023). Such failures can lead to an erosion of reflexive trust (Luhmann, 1979), undermining individuals’ confidence in their ability to navigate the digital world safely.

The association between individuals’ perceived risk of victimisation, vulnerability, and fear has been established (Ferraro, 1995). These interconnected constructs collectively contribute to one's perception of personal control over one's life (Shippee, 2012). The perception of risk has been a consistent predictor of fear (Ferraro, 1995; Rader et al., 2012; Wyant, 2008). Individual perceived risk also provides implications regarding the actual safety of one's surroundings, likelihood of victimisation, and subsequent disempowerment (Adams & Serpe, 2000). Drawing upon Ferraro's (1995) risk interpretation model, Virtanen (2017) extended this framework to the cyber domain using a representative European sample. Virtanen demonstrated that gender, social status, confidence in navigating online environments, and prior victimisation experiences have both direct and indirect effects on fear of cybercrime through perceived risk. This adaptation was one of the first to connect traditional criminological theories of risk perception with digital contexts, showing how vulnerability and capability interact to shape emotional responses to online threats.

Earlier research on fear of cybercrime, by contrast, relied on small samples of U.S. college students, often lacked a clear theoretical framework for interpreting findings, and tended to overlook the cumulative and differentiated nature of such fears. Specifically, most research focused on isolated cybercrime types, failing to account for how fear might manifest differently across various forms of online victimisation. While typologies such as cyber-dependent crimes, which specifically target or require the use of computers or digital technologies, and cyber-enabled crimes, which involve but do not rely on digital technologies (McGuire & Dowling, 2013), as well as distinctions based on victim targeting (e.g., interpersonal vs. property-related crimes) (Cross et al., 2021), have helped systematise the field, the emotional and psychological responses associated with these categories remain underexplored and insufficiently disaggregated. There are emerging exceptions that take a broader or integrative approach. For instance, Guedes et al. (2025) examined both interpersonal (cyberbullying and cyberstalking) and property-related cybercrimes (fraud, identity theft, malware) in a comparative framework, demonstrating that the predictors of fear differ substantially between these categories and underscoring the value of a multidimensional approach.

Contemporary scholarship increasingly emphasises the importance of a crime-specific lens when examining fear, noting that individuals may fear cyber-harassment without fearing identity theft or malware. This differentiation is supported by evidence that gender, age, victimisation history, and Socio-Economic Status (SES) variably predict fear across cybercrime types. For instance, women and younger individuals tend to report higher levels of fear for interpersonal cybercrimes like cyberbullying and stalking, while economic insecurity is a stronger predictor of fear related to property-based cybercrimes such as fraud and malware (Brands & van Wilsem, 2019; Cook et al., 2023; Henson et al., 2013). These findings underscore the importance of a multidimensional approach and complement Virtanen's insights into the layered nature of cybercrime fear.

Building on this body of research, the current study applies the concepts of victimisation, vulnerability, and perceived risk of cybercrime to a representative Australian sample, examining how factors such as gender, age, Indigenous status, SES, English proficiency, and confidence in using the Internet, as well as past victimisation and online safety concerns, shape perceptions of victimisation risk and cautious behaviour when sharing personal information online. Importantly, this study contributes to a growing literature on the digital risk society by investigating how Australians experience, interpret, and respond to cybercrime threats in a context where digitalisation of government services and commerce is near universal and where expectations of institutional guardianship are high. Through this lens, we explore how individuals may be disproportionately affected by cyber insecurity, not just due to technological vulnerabilities but because of specific cybercrime concerns (e.g., concerns relating to identity crime and misuse, online abuse and harassment, malware, and fraud and scams) and concerns about website security and public authority security that shape their risk perception and risk-averse behaviour.

Although the relationship between fear of crime and the adoption of self-defence tactics against cybercrime has been established, previous studies tend to focus on individuals’ overall fear of cybercrime and not on specific concerns as we do in this study. Empirical evidence consistently shows that a heightened fear of cybercrime is associated with increased engagement in protective behaviours online. For example, Drew (2020) examined a representative Australian sample and found that individuals who perceived higher prevalence and harm of cybercrime were significantly more likely to employ password, software, and privacy protection strategies, even after controlling for prior victimisation. This study highlights the importance of cognitive–emotional drivers such as perceived risk, harm, and fear in motivating online self-protective behaviour. Tsai et al. (2016) found that perceived threat severity and coping appraisal factors such as response efficacy, personal responsibility, and habit strength significantly predicted intentions to adopt online security behaviours among Internet users. Akdemir (2020) also found that individuals with a higher fear of cybercrime are more cautious when disclosing personal information online. Fear of cybercrime does not appear to interrupt the online shopping habits of young Internet users. Some individuals, however, are more cautious in their approach to buying online. They tend to employ security measures such as “…purchasing items only from secure websites, checking the signs that indicate a website is secure and only purchasing from well-known or trusted websites” (Akdemir, 2020, p. 615). However, others are more at risk because of personal vulnerabilities (e.g., experience, lack of security awareness, and vulnerability to social engineering) or loopholes in security systems (Phillips et al., 2022).

Previous research has shown that Internet users, particularly younger users, who report higher degrees of fear or perceived risk of cybercrime tend to engage in more cautious online behaviours. For instance, Zhou and Liu (2023) found that among Chinese teenagers, higher perceived privacy risk increased online privacy-protection behaviours, such as using privacy settings and refusing data requests, both directly and indirectly via privacy concerns. Similarly, Youn (2005) demonstrated that among teenagers, higher perceived risk of information disclosure reduced willingness to provide personal information online and increased risk-lowering behaviours such as falsifying or withholding data and avoiding websites that request sensitive information. Consistent with these findings, Dinev and Hart (2006) demonstrated that risk–benefit appraisals strongly predict individuals’ willingness to disclose information online: Higher perceived risk lowers disclosure intentions and increases protective actions. Collectively, these studies support the argument that perceived risk and fear can motivate proactive privacy-protective behaviours, especially among younger Internet users.

Using a sample of 1,911 Australians, this study explores the relationship between respondents’ personal safety concerns in cyberspace, their perceived risk of victimisation, and their behavioural adaptations towards precautionary protection of personal information online. This unique dataset is derived from the 31st wave of the Life in Australia™ survey. We use these data as they currently represent the only data in Australia to measure the perceived risk of cybercrime victimisation and multiple concern factors across all Australian states and territories. Concern factors refer to the weaknesses and vulnerabilities that respondents perceive in the system in relation to four specific cybercrime problems, as categorised in a recent Australian cybercrime victimisation study (Voce & Morgan, 2023). These include identity crime and misuse, online abuse and harassment, malware, and fraud and scams. Previous studies have not used such detailed categorisations to predict the risk of victimisation and preventative behavioural adaptations. Other predictors include respondents’ personal attributes (e.g., age, gender, highest education level, Indigeneity, SES level) and their perceived sense of capability to protect themselves from cybercrime victimisation.

By examining these predictors, we can identify patterns and disparities in how different groups perceive the risk of becoming victims of cybercrime and are likely to adopt behavioural changes across the four categories of cybercrime threats. As such, we address the following Research Questions (RQ) in an Australian context:

RQ 1. Is there a relationship between an individual's sense of capability to avoid cybercrime, personal safety concerns over the four categories of cybercrime, and an individual's perceived increase of cybercrime victimisation risk?

RQ 2. Is there a relationship between an individual's sense of capability to avoid cybercrime, personal safety concerns over the four categories of cybercrime, and an individual's protection and disclosure of personal information online?

By answering these RQs, this study makes a significant contribution to the existing literature by examining the disclosure of personal information online and its implications for data breaches affecting Australian and global users. It explores how individuals may continue to engage in unsafe online behaviours despite the known risks of cybercrime and assesses perceived victimisation risk and concern factors. Overall, the study explores the factors that are associated with both the perceived risk of cybercrime victimisation and the disclosure of Personal Identifying Information (PII) among Australian computer users.

Theoretical background

Ferraro's risk interpretation model

Ferraro's (1995) risk interpretation model suggests that fear of crime is influenced by the perceived risk of victimisation, distinguishing the emotional experience of fear from perceived risk. For instance, fear of crime reflects an emotional reaction, such as anxiety about PII being misused, while perceived risk refers to one's assessment of the likelihood of victimisation after disclosing PII. The distinction between these constructs is well established (Ferraro & LaGrange, 1987; LaGrange et al., 1992; Rountree & Land, 1996).

A core argument of the risk interpretation model is that individuals continuously gather and interpret information about potential victimisation through social interactions. Personal characteristics shape how they assess their own situations, meaning cognition is influenced by social factors such as their societal position. The model highlights social and physical vulnerabilities, suggesting that individuals lacking financial resources to cope with the aftermath of victimisation or those less capable of handling its physical or emotional effects perceive a higher risk of victimisation (Dubow et al., 1981). Consequently, minorities, individuals with low SES, women, and older adults tend to report greater perceived risk and fear of crime (Clemente & Kleiman, 1976; Li et al., 2022; Sampson et al., 1997; Schafer et al., 2006), though some argue that gender differences result from socialisation rather than inherent vulnerability (Sutton & Farrall, 2005).

Ferraro's (1995) model outlines two pathways linking personal characteristics to fear of crime: a direct connection between factors such as gender and fear levels, and an indirect pathway where personal characteristics influence risk perceptions, which in turn affect fear levels. For example, belonging to a minority group may heighten perceived risk, thereby increasing fear of victimisation. It is also important to note that the association between these constructs is complex (Shippee, 2012). The association between the emotional and cognitive aspects of fear of crime may be conceptualised as reciprocal, with simultaneity or reverse causality. The emotional fear of crime or concerns about victimisation may affect one's judgement of the perceived risk of crime, just as perceived risk may influence one's emotional concerns. For example, Soto et al. (2022), using a model inspired by Ferraro's, found that fear of crime at different locations predicts perceived risk of victimisation in the context of public transportation.

Beck's risk society paradigm

Ulrich Beck's (1992) risk society theory offers a powerful lens for understanding the emergence and intensification of cybercrime victimisation in the digital era. Beck suggested that contemporary societies have transitioned from concerns over the distribution of wealth and class struggle to managing the distribution of risks, many of which are global, invisible, and incalculable by nature. In this paradigm, cybercrime represents a quintessential risk of “second modernity” which is man-made, technologically induced, and socially amplified.

Central to Beck's argument is the idea that modernisation has produced side effects (so-called “bads”) such as climate change, nuclear accidents, or now, data breaches and digital surveillance. As societies become more reliant on digital infrastructures, new vulnerabilities emerge, particularly in the form of cyberthreats to individuals, institutions, and national security. Importantly, Beck (1992) identified these threats as “non-quantitative uncertainties”, which are invisible and not easily managed through traditional institutional means.

Ngwenyama et al. (2023) expanded on this by articulating the rise of the digital risk society, where digitalisation itself becomes a source of threat. Their study of Denmark's NemID system shows that citizens face increasing vulnerability to risks such as data breaches, identity theft, and service outages, which are exacerbated by the complex interplay between state-managed digital infrastructures and private corporate control. These developments intensify a key issue raised by Beck: the erosion of public trust in institutional guardianship, particularly when governments appear ill-equipped to protect citizens from the unintended consequences of digital modernisation.

Trust becomes critical in this risk society framework. As Beck (1992, 2009) argued, a defining feature of second modernity is the collapse of confidence in institutional authority and expert systems. When public officials fail to adequately manage cyber risk events or when they rely on opaque or “systematically distorted” communication strategies (Ngwenyama et al., 2023), public anxiety and distrust are exacerbated. Citizens may then feel increasingly alienated or helpless, forced to accept digital infrastructures (e.g., eID systems or online banking credentials) even while doubting their safety.

In this context, guardianship takes on a new meaning. Traditional notions of guardianship (e.g., policing or surveillance) must now be reconceived to address diffuse, technological threats that transcend physical space. According to Beck's (2009) risk society theory, effective guardianship in the digital era cannot be delegated solely to the state. Instead, it requires cooperative governance, transparency, and empowerment of citizens through digital literacy and inclusive risk communication. However, as Ngwenyama et al. (2023) showed, governments frequently lack the institutional agility and communication capacity to navigate digital risk events, leading to reactive policies and eroding legitimacy.

Furthermore, when digital infrastructure is privately owned or outsourced to multinational firms, as was the case in Denmark's sale of NemID to foreign investors, citizens’ concerns over data sovereignty and the risk of foreign surveillance are amplified. These fears are not merely speculative: They reflect what Beck (2009) described as the boomerang effect of modernisation, whereby unintended consequences “return” to destabilise social institutions and citizen confidence.

Hypotheses

Hypotheses for RQ1

Here, we test several hypotheses.

H1a. Individuals with a higher sense of capability to avoid cybercrime will report a lower agreement that cybercrime risk is increasing.

H1b. Individuals with higher personal safety concerns about specific cyber threats will be more likely to agree that the risk of cybercrime victimisation is increasing.

H1c. Individuals with a greater concern about website security and public authority security will be more likely to agree that the risk of cybercrime victimisation is increasing.

Hypotheses for RQ2

Also underpinned by the above theory, the same set of factors is likely to influence the adoption of defensive actions to deal with perceived concerns in the system. We test several hypotheses.

H2a. Individuals with a higher sense of capability to avoid cybercrime will be more cautious about disclosing personal information online.

H2b. Higher personal safety concerns will lead individuals to adopt more rigorous precautionary actions regarding the disclosure and protection of personal information online.

H2c. Individuals with less concern about website security and public authority security are more likely to disclose information online.

Materials and methods

Data and participants

This study is based on the final, weighted, and coded data produced for the ANUpoll project, run as the 31st wave of Life in Australia™. The data for Wave 31 of the Life in Australia™ panel were collected using a mixed-mode methodology combining online and telephone surveys, conducted between 7 and 21 October 2019. Participants were drawn from a nationally representative, probability-based panel of Australian adults aged 18 and over, recruited through dual-frame random digit dialling of landlines and mobile phones across all eight states/territories of Australia. Of the 2,589 active panel members invited, 1,910 completed the survey, yielding a completion rate of 73.8%. Online respondents received email and SMS invitations followed by up to five reminders via multiple channels, while offline respondents were contacted via SMS and an extended telephone call cycle. Surveys were completed primarily online (via email or SMS links), with a smaller proportion conducted by phone, especially among offline participants. All respondents received a $10 incentive, and additional efforts such as a support hotline and interviewer training were employed to maximise participation.

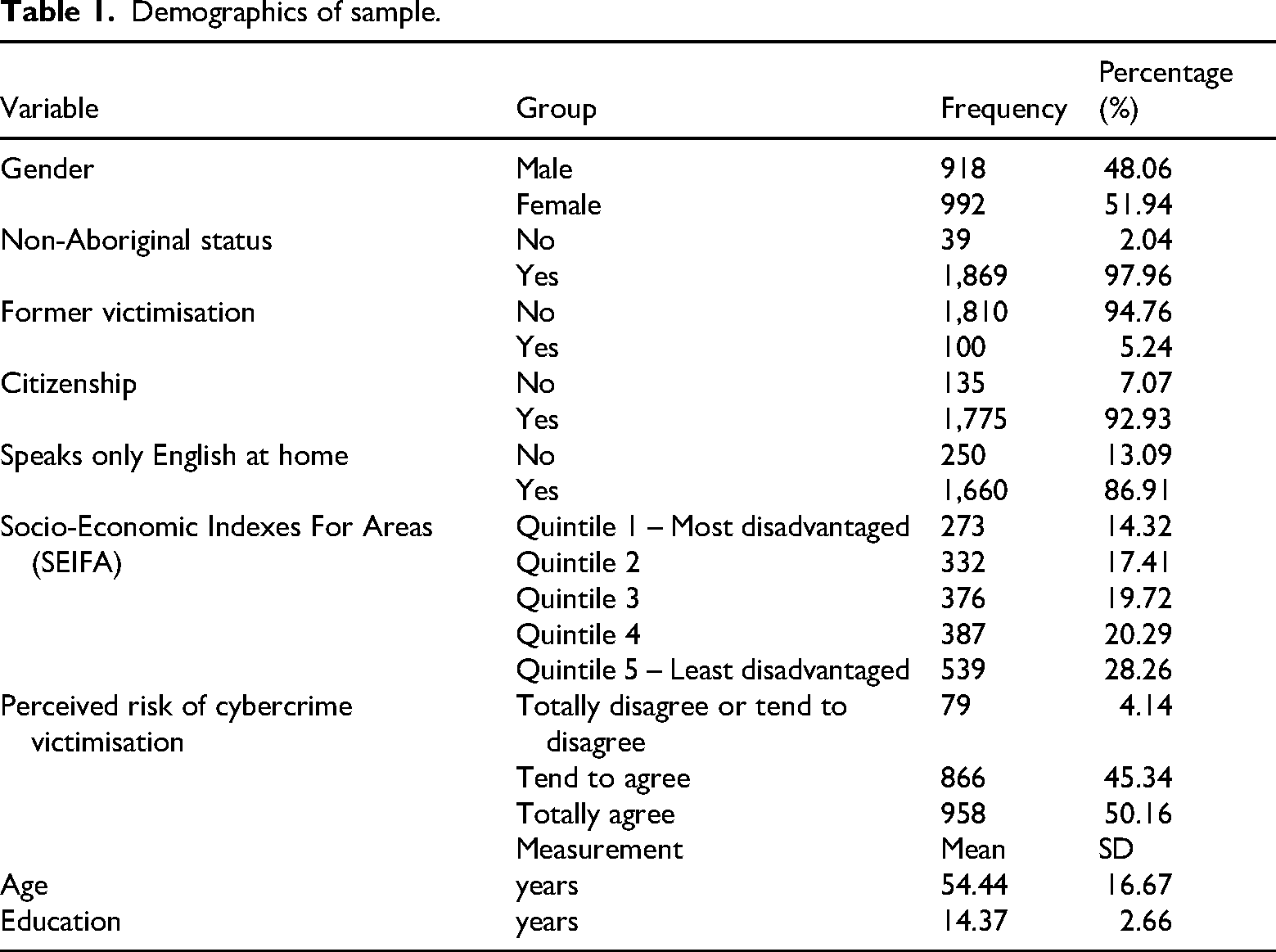

The Life in Australia™ panel implements rigorous data-quality and non-response adjustment procedures. Each wave includes systematic validation of approximately 15% of telephone interviews through remote monitoring, interviewer debriefings, and automated timestamp and consistency checks. To correct for potential non-response bias, the Social Research Centre applies response-propensity weighting based on demographic and behavioural predictors (e.g., age, gender, education, household type, employment status and digital affinity), followed by generalised regression calibration to align with Australian Bureau of Statistics population benchmarks. The cumulative response rate for Wave 31 was 8.0%, indicating robust coverage and representative weighting (Social Research Centre, 2019). Data are weighted to have a similar distribution to the Australian population across key demographic and geographic variables (Table 1).

Table 1. Demographics of sample.

Dependent variables and independent variables

Dependent variables

For RQ1, we identified the Dependent Variable (DV) as individuals’ perceived increase of cybercrime victimisation risk. This ordinal variable ranges from totally disagree to totally agree (four outcomes) that risk of cybercrime victimisation is increasing (i.e., “You believe the risk of becoming a victim of cybercrime is increasing”). Because of the small number of respondents in the two “disagree” categories (4.1% combined), these were merged into a single category, resulting in a three-level ordinal variable: disagree, tend to agree, and totally agree. For RQ2, we identified the DV as the extent to which individuals report avoiding the disclosure of personal information online (i.e., “You avoid disclosing personal information online”). This is an ordinal variable with four response categories ranging from totally disagree to totally agree.
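The recoding described above can be sketched in a few lines of Python. This is only an illustration: the label "tend to disagree" is an assumed wording for the second "disagree" option, the example responses are invented, and the function name is hypothetical rather than taken from the study's code.

```python
from collections import Counter

# Assumed labels for the original four-level item; the wording of the
# second "disagree" option ("tend to disagree") is an assumption.
COLLAPSE_MAP = {
    "totally disagree": "disagree",
    "tend to disagree": "disagree",
    "tend to agree": "tend to agree",
    "totally agree": "totally agree",
}

# Ordered levels of the collapsed three-level outcome (lowest to highest).
ORDERED_LEVELS = ["disagree", "tend to agree", "totally agree"]


def collapse_response(label: str) -> int:
    """Map a four-level response label to a 0-based ordinal code (0, 1 or 2)."""
    return ORDERED_LEVELS.index(COLLAPSE_MAP[label])


# Invented example responses, not survey data.
responses = ["totally agree", "tend to disagree", "tend to agree", "totally disagree"]
codes = [collapse_response(r) for r in responses]
counts = Counter(codes)

print(codes)
print(counts)
```

Both "disagree" options map to code 0, so the sparse categories are pooled while the ordering of the remaining levels is preserved.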

Independent variables

For both RQ1 and RQ2, we examined a set of theoretically informed independent variables derived from the questionnaire. These include the following:

- Self-reported cybercrime protection ability: This variable asked respondents to rate their agreement with the statement “You are able to protect yourself sufficiently against cybercrime, e.g., by using antivirus software”. Responses were coded on a 4-point ordinal scale from totally disagree to totally agree. This variable reflects perceived self-efficacy in avoiding cybercrime.
- Prior victimisation: Respondents were asked the question, “Have you had contact with the police or the criminal justice system in the last 12 months as a victim of crime?” Responses were dichotomised (yes/no) and used as an indicator of general prior victimisation experience, not limited to cybercrime-related incidents.
- Perceived guardianship capability: These variables assessed concern that personal information is “not kept secure by websites” and “not kept secure by public authorities” using a scale from 1 (totally agree) to 4 (totally disagree). These items were intended to capture the respondent's perception of the capability of external actors (private and public) to act as guardians in cyberspace. A higher agreement indicated lower perceived guardianship. Consistent with Beck's (1992, 2009) risk society paradigm, this construct reflects trust in systemic, institutional forms of risk management rather than situational guardianship as defined in routine activity theory.
- Cybercrime-related personal safety concerns (10 separate items): Respondents were asked to report how concerned they were about a range of specific cyber threats, including identity crime and misuse, online abuse and harassment, malware, and fraud and scams. All 10 items were measured on a 4-point scale ranging from not at all concerned to very concerned. These items were analysed separately and not combined into a composite index to allow for the examination of category-specific effects on perceived risk and information disclosure behaviour.

Control variables included standard demographics: gender, age, Indigenous status, highest education level, citizenship, whether English is spoken at home, being locally born, and area-level SES (SEIFA).

Analytic strategy

We applied ordered logit and Partial Proportional Odds (PPO) models to address RQ1 and RQ2. An assumption of ordered probability models is that the slope coefficients remain the same across the different outcomes of the ordinal DV, so that only the cut-off points differ (termed the parallel-line assumption). In many cases, this assumption is violated. To address this issue, a generalised ordered logit model can be employed, which relaxes the parallel-line assumption for all variables (Williams, 2006). The key distinction between this model and the standard ordered logit model is that the coefficients are not fixed across equations.

In cases where the parallel-line assumption is violated by only one or a few variables, a PPO model can be specified. In this model, some coefficients differ across equations, while others remain the same for all equations. Peterson and Harrell (1990) introduced a gamma parameterisation of the PPO model with a logit function. In this model, each explanatory variable has one primary (beta) coefficient and k − 2 additional gamma coefficients (where k is the number of alternatives), and k − 1 alpha coefficients represent the cut-off points between alternatives. So, for example, if there are four alternatives, there will be three cut-off points. The gamma coefficients indicate deviations from proportionality. The gamma parameterisation thus retains the features of the traditional ordered model while accommodating non-proportionality in variables that violate the parallel-line assumption.

If all the gamma values are set to 0, the model effectively becomes a proportional odds model. PPO models can be estimated by employing a user-written program “gologit2” in Stata (Williams, 2006). It is, however, important to exercise caution when interpreting the coefficients of intermediate categories, since the sign of the primary coefficient does not always determine the direction of the effect on the intermediate outcomes (Washington et al., 2003; Wooldridge, 2002).
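To make the gamma parameterisation concrete, the following minimal Python sketch computes category probabilities for a single covariate. It assumes the convention used by gologit2, P(Y > j) = logistic(alpha_j + x(beta + gamma_j)), with gamma fixed at 0 in the first equation. All numeric values and function names are hypothetical and are not estimates from this study.

```python
import math


def logistic(z: float) -> float:
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-z))


def ppo_category_probs(x, alphas, beta, gammas):
    """Category probabilities under a gamma-parameterised PPO model.

    Assumed convention: P(Y > j) = logistic(alpha_j + x * (beta + gamma_j)),
    where gamma is 0 for the first equation.
    alphas: k - 1 cut-off terms; gammas: k - 2 deviations (equations 2..k-1).
    """
    all_gammas = [0.0] + list(gammas)
    exceed = [logistic(a + x * (beta + g)) for a, g in zip(alphas, all_gammas)]
    # P(Y = 1) = 1 - P(Y > 1); P(Y = j) = P(Y > j-1) - P(Y > j); P(Y = k) = P(Y > k-1)
    bounds = [1.0] + exceed + [0.0]
    return [bounds[i] - bounds[i + 1] for i in range(len(bounds) - 1)]


# Hypothetical three-category example (k = 3): two cut-offs, one gamma.
probs = ppo_category_probs(x=1.0, alphas=[1.0, -1.0], beta=0.5, gammas=[-0.2])
print([round(p, 4) for p in probs])

# Setting gamma to 0 gives the same slope in every equation,
# which is the proportional odds model.
po_probs = ppo_category_probs(x=1.0, alphas=[1.0, -1.0], beta=0.5, gammas=[0.0])
print([round(p, 4) for p in po_probs])
```

Because the slopes differ across equations, the implied exceedance probabilities are not guaranteed to be ordered for every covariate value, so fitted probabilities from such models are worth checking for negative values.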

Ordered probability models and PPO models with different functions (logit or probit) are not nested. To assess model performance, pseudo-R², given by R² = 1 − (ln L_model / ln L_0), and Akaike's Information Criterion, AIC = −2 ln L_model + 2p, are applied. Here, ln L_model and ln L_0 represent the log-likelihoods of the fitted and intercept-only models, and p is the number of estimated parameters. A smaller AIC value indicates a better-fitting model. The pseudo-R² provides a relative measure of model fit compared with the null model; however, it does not represent explained variance in the same way as R² in linear regression.
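The two fit measures defined above are straightforward to compute from log-likelihoods. The short Python sketch below does so; the log-likelihood values and parameter count are hypothetical round numbers chosen for illustration, not the values behind the reported results.

```python
def pseudo_r2(ll_model: float, ll_null: float) -> float:
    """Pseudo-R2 as defined above: 1 - (ln L_model / ln L_0)."""
    return 1.0 - ll_model / ll_null


def aic(ll_model: float, n_params: int) -> float:
    """Akaike's Information Criterion: -2 ln L + 2p."""
    return -2.0 * ll_model + 2.0 * n_params


# Hypothetical values for illustration only.
ll_null = -1500.0   # intercept-only model
ll_fit = -1350.0    # fitted model
n_params = 30       # number of estimated parameters

fit_r2 = pseudo_r2(ll_fit, ll_null)
fit_aic = aic(ll_fit, n_params)
print(f"pseudo-R2 = {fit_r2:.4f}, AIC = {fit_aic:.2f}")
```

When comparing two candidate models fitted to the same data, the one with the smaller AIC is preferred, and the 2p term penalises each additional parameter, such as the extra gamma coefficients in a PPO model.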

In assessing the statistical significance of model estimates, we used a conventional threshold of p < .05 to identify statistically significant effects. In addition, we reported estimates with p-values between .05 and .1 as marginally significant, reflecting the exploratory nature of this study and the real-world relevance of identifying potentially meaningful patterns in behavioural responses to cybercrime risk. This approach is commonly used in applied social research to avoid overlooking effects that may be theoretically important but fall just short of conventional cut-offs, particularly in models involving complex social behaviours and attitudes. All findings are interpreted with appropriate caution, and marginal effects are clearly distinguished from stronger, statistically significant results in the results sections.

Results

Factors influencing respondents' perceived increase of risk of victimisation

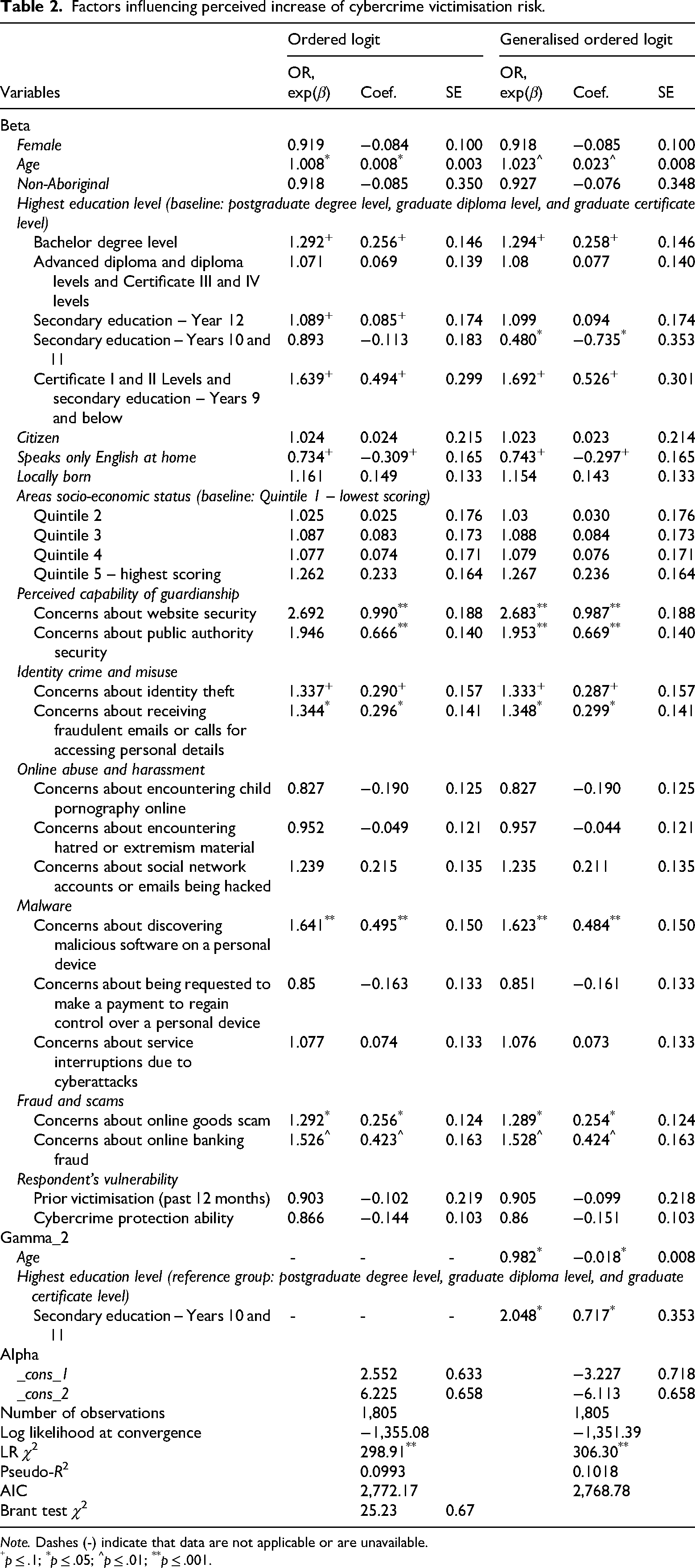

To assess the factors influencing individuals’ perceptions of increasing cybercrime victimisation risk, we estimated both an ordered logit model and a PPO model. While the Brant test indicated no overall violation of the parallel-line assumption (χ² = 25.23, p = .67), we identified two variables, age and education level (Secondary Education Years 10 and 11), which individually violated this assumption. To account for these, we estimated a PPO model, which allows the effects of these variables to vary across the thresholds of the ordinal outcome. The PPO model performed better than the ordered logit model (AIC = 2,768.78 vs. 2,772.17; pseudo-R² = .1018 vs. .0993). The estimations for the ordered logit model and the PPO model are presented in Table 2.

Table 2. Factors influencing perceived increase of cybercrime victimisation risk.

Note. Dashes (-) indicate that data are not applicable or are unavailable.

p ≤ .1; *p ≤ .05; ^p ≤ .01; **p ≤ .001.

The PPO model estimated one beta coefficient for each variable, gamma coefficients for variables violating the proportional odds assumption, and alpha coefficients for the cut-off points between categories. For age, the beta coefficient was 0.023 (p = .003), and the gamma coefficient (γ₂) was −0.018 (p < .05), indicating that the effect of age diminishes at higher thresholds of perceived increase of cybercrime risk. Specifically, the adjusted coefficient in the second equation becomes 0.005, suggesting a strong influence at lower levels of perceived concern, which then tapers off. In contrast, the education category Secondary Education Years 10 and 11 had a base coefficient of −0.735 and a gamma of 0.717 (p < .05), resulting in a smaller negative effect in the higher outcome category (adjusted coefficient = −0.018). This indicates that the influence of lower secondary education weakens as perceived increase of risk rises.
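The adjusted coefficients quoted above follow from adding each gamma to the corresponding beta. The sketch below reproduces that arithmetic in Python using the coefficients reported in this paragraph. The function name is hypothetical, and the equation numbering assumes two equations for the three-level outcome, with gamma equal to 0 in the first.

```python
import math


def threshold_coefficients(beta: float, gammas: list) -> list:
    """Return the coefficient for each equation: beta, then beta + gamma_j."""
    return [beta] + [beta + g for g in gammas]


# Coefficients as reported in the text (beta, then gamma for equation 2).
age_coefs = threshold_coefficients(0.023, [-0.018])
edu_coefs = threshold_coefficients(-0.735, [0.717])

# Corresponding odds ratios, exp(coefficient), per equation.
age_ors = [math.exp(c) for c in age_coefs]
edu_ors = [math.exp(c) for c in edu_coefs]

for name, coefs, ors in [("Age", age_coefs, age_ors),
                         ("Secondary Education Years 10 and 11", edu_coefs, edu_ors)]:
    for j, (c, o) in enumerate(zip(coefs, ors), start=1):
        print(f"{name}, equation {j}: coefficient = {c:.3f}, odds ratio = {o:.3f}")
```

For both variables the second-equation coefficient is close to zero, which is the tapering effect described in the text.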

Overall, the findings show that age, language spoken at home, and certain levels of education were associated with individuals’ perceived increase of cybercrime risk. A 1-year increase in age was associated with a 2.3% increase in the odds of perceiving a higher increase of risk (odds ratio [OR] = 1.023, p = .003). At marginal significance, respondents with a bachelor’s degree had 29.4% higher odds of perceiving an increased risk compared to those with postgraduate qualifications (OR = 1.294, p = .077). Similarly, those with Certificates I and II or secondary education (Year 9 and below) had 69.2% higher odds of reporting a greater perceived increase of risk (OR = 1.692, p = .08). In contrast, respondents with Secondary Education Years 10 and 11 had significantly lower odds of perceiving increased risk (OR = 0.480, p = .04), although this effect varied across thresholds due to violation of the proportional odds assumption. At marginal significance, respondents who spoke only English at home had 26% lower odds of perceiving an increased risk (OR = 0.743, p = .07). No significant associations were found for past victimisation, gender, Indigenous status, citizenship, area-level SES, or other education levels.
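The percentage figures in this paragraph are the usual reading of an odds ratio as a percentage change in odds, (OR − 1) × 100. The following is an illustrative sketch only; the helper name and dictionary labels are hypothetical, and the odds ratios are those quoted above.

```python
def percent_change_in_odds(odds_ratio: float) -> float:
    """Convert an odds ratio into a percentage change in odds."""
    return (odds_ratio - 1.0) * 100.0


# Odds ratios as reported in the text (perceived-risk model).
reported = {
    "Age (per year)": 1.023,
    "Bachelor's degree": 1.294,
    "Certificates I and II or Year 9 and below": 1.692,
    "Secondary Education Years 10 and 11": 0.480,
    "Only English spoken at home": 0.743,
}

for label, odds_ratio in reported.items():
    print(f"{label}: {percent_change_in_odds(odds_ratio):+.1f}% change in odds")
```

Note that these are changes in odds rather than in probabilities, so an OR of 0.743 corresponds to odds that are about 26% lower, not to a 26-percentage-point drop in likelihood.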

In terms of psychological and behavioural predictors, the results do not support Hypothesis 1a. Respondents with a greater self-reported ability to protect against cybercrime were not significantly less likely to perceive an increased risk (OR = 0.860, p > .1). In contrast, the findings support Hypothesis 1b. Several personal safety concerns were associated with increased perceived risk:

- Identity crime and misuse. At marginal significance, respondents concerned about identity theft had 33.3% higher odds of perceiving increased risk (OR = 1.333, p = .067), while those concerned about fraudulent emails or calls had 34.8% higher odds of reporting higher perceived risk (OR = 1.348, p = .034).
- Malware attacks. Concerns about discovering malicious software on one's device were associated with 62.3% higher odds of perceiving greater risk (OR = 1.623, p = .001).
- Fraud and scams. Concerns about online goods scams were associated with a 28.9% increase in the odds of perceiving a higher increase of cybercrime risk (OR = 1.289, p = .04), while concerns about online banking fraud were associated with a 52.8% increase in odds (OR = 1.528, p = .009).

By contrast, concerns about online abuse and harassment, including exposure to child pornography, hate/extremist content, and hacking of email or social media accounts, were not significantly associated with perceived increase of cybercrime risk.

Finally, the analysis provides strong support for Hypothesis 1c, which proposed that concerns about guardianship would be positively associated with risk perceptions. Respondents who were concerned about website security were almost 2.7 times more likely to perceive a higher increase of cybercrime risk (OR = 2.683, p < .001). Similarly, those concerned about public authority security were nearly twice as likely to perceive a greater increase of risk (OR = 1.953, p < .001).

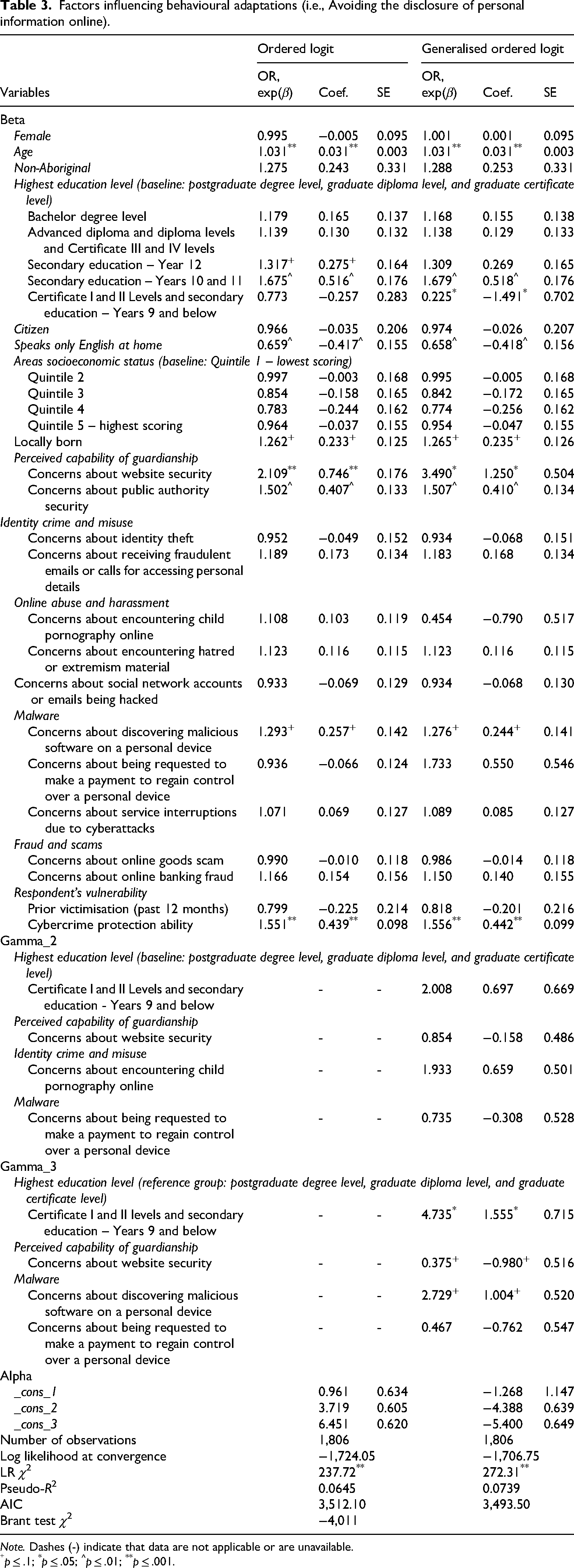

Factors influencing respondents' disclosure of personal information online

Results of the Brant test indicate that the overall model does not violate the parallel-line assumption (see Table 3). The PPO was fitted with four variables whose effects were allowed to vary across equations: the highest level of education achieved (baseline vs. ‘Certificate I and II level and secondary education (Years 9 and below)’) and concerns about website security, child pornography online, and payment for device control. The PPO outperformed the ordered logit model (AIC = 3,493.50 vs. 3,512.10; pseudo-R2 = .0739 vs. .0645). The estimations for the ordered logit model and the PPO are presented in Table 3.

Table 3. Factors influencing behavioural adaptations (i.e., avoiding the disclosure of personal information online).

Note. Dashes (-) indicate that data are not applicable or are unavailable.

p ≤ .1; *p ≤ .05; ^p ≤ .01; **p ≤ .001.

The estimated PPO model included one beta coefficient for each variable, two gamma coefficients for the four variables identified as violating the parallel-line assumption, and three alpha coefficients representing the thresholds between ordinal categories. While Gamma 2 coefficients were not statistically significant, several Gamma 3 coefficients were. Notably, significant deviations from proportionality were observed for (1) respondents with Certificate I and II levels or secondary education to Years 9 and below, (2) those with concerns about website security, and (3) those with concerns about encountering child pornography online.

For respondents in the lowest education category, the base OR indicated substantially lower odds of avoiding disclosure compared to postgraduates (OR = 0.225, p = .034). However, a significant positive gamma adjustment (γ3 = 1.555) suggests this effect reversed at higher thresholds, yielding an adjusted OR of approximately 1.066 in the highest category of avoidance. Similarly, respondents with concern about child pornography showed no significant effect at lower levels, but at the upper threshold, the odds of avoidance increased (adjusted OR = 1.238). Conversely, the effect of concerns about website security decreased at higher levels: While the base OR was 3.491 (p = .013), the adjusted OR in the third threshold dropped to approximately 1.308 due to a negative gamma effect (γ3 = –0.980).
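The adjusted odds ratios above can be approximated by moving to the log-odds scale, adding the gamma term, and exponentiating. The text does not spell out this formula, so the sketch below assumes the standard relationship adjusted OR = exp(ln(OR) + γ); the function name is hypothetical, and the inputs are the rounded values quoted in the text.

```python
import math


def adjusted_odds_ratio(base_or: float, gamma: float) -> float:
    """Odds ratio at a higher threshold, assuming exp(ln(base OR) + gamma)."""
    return math.exp(math.log(base_or) + gamma)


# Rounded inputs as reported in the text for the disclosure model.
lowest_education = adjusted_odds_ratio(base_or=0.225, gamma=1.555)
website_security = adjusted_odds_ratio(base_or=3.491, gamma=-0.980)

print(f"Lowest education category, third threshold: {lowest_education:.3f}")
print(f"Website security concern, third threshold: {website_security:.3f}")
```

Because the published inputs are rounded to three decimals, the results agree with the reported adjusted ORs (approximately 1.066 and 1.308) only to about two decimal places.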

The results support the view that individuals’ perceived capability to protect themselves online, as well as their concerns about cybersecurity, influence privacy-protective behaviours. Our results strongly support H2a, which predicted that individuals who feel more capable of protecting themselves against cybercrime would be more likely to take defensive action, in this case, by avoiding the disclosure of personal information. Specifically, those with higher self-reported cybercrime protection capability were 55.6% more likely to avoid disclosing information (OR = 1.556, p < .001).

The results partially support H2b. Among the four broad categories of cybercrime concerns, only concerns about malicious software were associated with higher odds of avoiding disclosure, and only at a marginal level of significance (OR = 1.276, p = .084). The base effect of concerns about child pornography was not significant, but the Gamma 3 adjustment was, indicating that respondents with this concern had increased odds of avoidance at higher levels of the dependent variable (adjusted OR = 1.238). However, other concerns such as identity theft, fraud, social hacking, or extremist content did not significantly predict avoidance.

H2c hypothesised that individuals with lower concerns about website and public authority security would be more likely to disclose personal information (i.e., less likely to avoid disclosure). The findings strongly support H2c in the inverse form: those with higher concerns about guardianship were significantly more likely to engage in defensive behaviour. Concerns about website security were associated with a more than threefold increase in the odds of avoiding disclosure (OR = 3.491, p = .013). However, this effect decreased at higher thresholds (adjusted OR = 1.308) due to a negative Gamma 3 term, suggesting that the strength of concerns may taper off among those already highly cautious. Concerns about public authority security also significantly predicted avoidance (OR = 1.507, p = .002), with no indication of non-proportionality.

Among demographic variables, several were significantly associated with the avoidance of disclosing personal information online. Each additional year of age increased the odds of avoidance by 3.2% (OR = 1.032, p < .001). Respondents with Secondary Education Years 10 and 11 had 67.9% higher odds of avoidance compared to those with postgraduate education (OR = 1.679, p = .003). By contrast, those with Certificate I and II levels or lower education were 77.5% less likely to avoid disclosure at the baseline level (OR = 0.225, p = .034), though this effect diminished at higher thresholds as noted. Speaking only English at home was associated with 34.2% lower odds of avoidance (OR = 0.658, p = .007). Respondents who were locally born had 26.5% higher odds of avoidance (OR = 1.265, p = .062), although this effect was marginally significant.

Discussion

Using a representative survey comprising 1,911 Australians, we adopted ordered probability models and PPO models to examine whether personal attributes and individual safety concerns influence an individual's perceived increase of cybercrime victimisation risk. We also examined factors associated with an individual's protection and disclosure of personal information. This study makes a unique contribution to the extant literature by:

- focusing on the disclosure of personal information online, which has important implications for understanding the potential effect and prevention of data breaches that have affected Australian and international computer users in recent years;
- understanding why, despite the well-known prevalence and risk of various forms of cybercrime, computer users continue to forego important online safety strategies and engage in unsafe online behaviours;
- measuring the perceived risk of cybercrime victimisation and multiple concern factors across all Australian states and territories;
- predicting the perceived risk of victimisation and preventative behavioural adaptations of Australian computer users.

Below, we interpret the findings through the lens of Ferraro's (1995) risk interpretation model and Beck's (1992, 2009) risk society paradigm, beginning with personal attributes before turning to perceptions of capability to avoid cybercrime (H1a and H2a), personal safety concerns (H1b and H2b), and perceptions of institutional guardianship (H1c and H2c).

Personal attributes and risk perception in the digital risk society

Consistent with Ferraro's (1995) model, our findings confirm that personal characteristics, particularly age, language spoken at home, and education, are associated with both perceived increase in risk of cybercrime victimisation and behavioural adaptations in cyberspace. Ferraro's model highlights the way individual attributes (e.g., age and social status) shape how risk information is processed and interpreted. In the context of cybercrime, this interpretive process is further complicated by the abstract, often invisible nature of online threats, as described in Beck's (2009) risk society thesis.

Our findings show that older individuals are more likely to perceive a heightened risk of cybercrime victimisation, consistent with previous studies (Kanan & Pruitt, 2002; McGarrell et al., 1997; Reisig & Parks, 2004; Weitzer & Kubrin, 2004). This may reflect their reduced digital literacy or greater uncertainty in navigating emerging technologies. However, the alignment between perceived and actual risk remains complex. Older people may not necessarily be the most victimised group (Burton et al., 2022), but their vulnerability is amplified through situational characteristics such as social isolation, limited cybersecurity knowledge, and exposure to targeted scams. Ferraro's emphasis on social and physical vulnerability helps explain why age remains a significant predictor of perceived risk and why behavioural adaptations such as avoiding personal information disclosure increase with age.

Similarly, individuals who speak only English at home reported lower perceived increase of cybercrime risk and were more likely to disclose personal information online. This aligns with risk interpretation theory in suggesting that perceived control, rooted in linguistic proficiency and digital confidence, reduces fear and fosters perceived safety. However, as Beck (2009) argued, this confidence may be misplaced in a digital risk society where system-level threats and opaque data practices erode the effectiveness of individual judgement. English speakers may feel more in control but are not necessarily safer.

Educational background also influenced perceived risk and information disclosure, although in a non-linear pattern. Individuals with a bachelor's degree and those with the lowest education levels reported heightened perceived risk, whereas those with mid-level education (Years 10 and 11) reported the lowest risk. This echoes inconsistent findings in the literature (e.g., Akdemir, 2020; Brands & van Wilsem, 2019) and underscores Ferraro's point that individual interpretation of risk is shaped not just by cognitive ability but by social context, including access to technical knowledge and self-perceived vulnerability. Higher education may foster greater awareness of cyber risks, leading to cautious behaviour, while the lowest-education groups may experience increased fear due to limited understanding of risk mitigation strategies.

It is also worth noting that while prior victimisation is frequently cited as a predictor of fear or perceived risk (Virtanen, 2017), the variable was not significantly associated with perceived risk of cybercrime victimisation in this study. This could be attributed to (1) the nature of the general, non-cybercrime-specific, victimisation variable or (2) how perceived cyber risk may be shaped more strongly by concern factors (e.g., website security and malware) and sociodemographic attributes than by past experience.

Perceived capability to avoid cybercrime (H1a and H2a)

Drawing on the concept of reflexive trust (Luhmann, 1979) and the privacy paradox (Barnes, 2006), we tested whether individuals’ self-perceived ability to protect themselves influenced both their perceived increase of risk (H1a) and their protective behaviours (H2a). The findings partially support this hypothesis set.

We found no significant relationship between perceived capability and perceived increase of victimisation risk (H1a not supported). This suggests that individuals’ sense of control or technical competence does not meaningfully influence their judgement of cybercrime likelihood. This aligns with Ferraro's argument that risk perception is more strongly shaped by external social cues and vulnerabilities than internal assessments of preparedness. Furthermore, Beck's (2009) risk society thesis suggests that system-level conditions such as opaque governance and structural vulnerabilities may overpower individual confidence.

Conversely, we found strong support for H2a: Individuals with greater perceived capability to protect themselves were significantly more likely to avoid disclosing personal information online. This finding reinforces the idea that self-efficacy is critical to enacting defensive behaviours even if it does not shape emotional risk perception. In other words, individuals who feel capable act cautiously, even if they do not necessarily feel afraid.

This apparent disconnect between cognitive capability and emotional concern echoes Ferraro's distinction between perceived risk and fear and supports Beck's (2009) notion that in a digital risk society, behaviour is often shaped by habituation to invisible risks rather than active emotional fear.

Although this study modelled perceived capability linearly, future research could explore potential non-linear effects. It is plausible that individuals with moderate levels of perceived protective ability may report greater perceived increases in cybercrime risk than those who feel either highly capable or entirely incapable, reflecting an inverted U-shaped relationship between capability and risk perception.

Personal safety concerns (H1b and H2b)

We also examined whether specific cybercrime concerns influenced perceived increase of victimisation risk and disclosure behaviour. H1b was supported: Several personal safety concerns, especially those related to identity theft, fraudulent communications, malware, and banking fraud, were significantly associated with higher perceived increase in cybercrime risk. These concerns likely stem from widespread media coverage and direct exposure to threats that feel both plausible and personally consequential. In Ferraro's terms, they serve as cognitive signals that increase perceived likelihood of victimisation.

Interestingly, H2b received only partial support. Only concerns about malicious software and, to a lesser extent, child pornography (at the upper thresholds of caution) were associated with increased avoidance of personal information disclosure. This limited effect highlights the crime-specific nature of cyber-related fear. While users may feel exposed to scams or fraud, they may not perceive these threats as requiring behavioural change unless the risk is viewed as highly technical, targeted, or emotionally charged.

This aligns with the emerging literature (Akdemir, 2020; Virtanen, 2017) calling for disaggregated analysis of fear responses across cybercrime types. In the digital risk society, not all cyber risks are emotionally equal: malware and exploitation crimes appear to elicit stronger behavioural reactions than generic fraud or identity misuse.

Scams and frauds are often viewed as human-driven threats which rely on manipulation and social engineering, such as phishing, impersonation, or deception designed to make someone willingly hand over information or money (Australian Signals Directorate, 2025). Because these threats often involve the victim taking some action, such as clicking a link, transferring funds, or trusting a false claim, they are identified as being within an individual's control and sometimes defined as non-technical threats. This framing leads to an emphasis on personal responsibility, education, and vigilance. By contrast, malware and Child Sexual Abuse Material (CSAM) are typically understood as external or systemic threats. Malware often exploits technical vulnerabilities (Cletus et al., 2024), and CSAM is treated as a criminal issue, with less emphasis on individual user action. These threats are perceived as more inevitable and less controllable by ordinary people, which shifts the response towards legal enforcement, infrastructure-level defences, and systemic protections rather than personal vigilance alone. This difference in perceived locus of control, personal responsibility for scams versus systemic inevitability for malware and CSAM, therefore shapes how society, institutions, and policy frameworks respond to each type of threat. However, as noted by Cletus et al. (2024), “…the over-reliance on only technical controls at the expense of non-technical techniques (users, organisational) constitutes limitations that are exploited by the malware adversarial groups to compromise systems” (p. 668).

Perceived capability of guardianship (H1c and H2c)

Finally, we examined the role of perceived institutional guardianship in shaping both perceived increase of victimisation risk and disclosure behaviour. In both models, H1c and H2c were strongly supported. Respondents who were concerned about website security and public authority data handling were significantly more likely to perceive high cybercrime risk and more likely to avoid disclosing personal information online.

This is consistent with Beck's (2009) risk society paradigm, which emphasises declining trust in institutional actors. As digital infrastructures become more complex and opaque, individuals respond to perceived governance failures not only with fear, but with behavioural withdrawal, refusing to share data, avoiding engagement with certain platforms, or developing distrust in e-government services (Ngwenyama et al., 2023). In Ferraro's model, these concerns function as indirect social cues that elevate perceived risk and trigger fear-based behavioural adaptations.

Notably, concerns about website security had the strongest behavioural impact: individuals with this concern were over three times more likely to avoid disclosing information, though this effect weakened at higher levels of concern, suggesting a saturation point in the behavioural response. This may reflect what Beck (2009) termed the boomerang effect of modernisation: As institutional risks escalate and distrust deepens, protective behaviours intensify but may eventually plateau due to perceived futility or habituation.

Limitations

We note some limitations. First, the survey, although defined as representative of the Australian population (Centre for Social Research & Methods, 2018), uses a disproportionate stratified sampling methodology from all states and territories, which may not capture a fine-grained heterogeneous distribution of the Australian population. Here, we point to two well-known disadvantages of stratified sampling: (1) difficulty in selecting the appropriate strata for a sample and (2) difficulty in arranging and evaluating the results. Hence, while the sample is nationally representative due to probability-based recruitment and weighting adjustments, it may not be representative at the individual state or territory level due to disproportionate stratification across jurisdictions. This reflects budget constraints; we relied on the best available data at the time of publication and did not attempt to discuss findings specific to any individual state or territory. A second limitation is the limited availability of fine-grained information on individual attributes. A third limitation is the reliance on a cross-sectional survey design, which constrains our ability to infer causal direction or temporal ordering between the variables of interest. While we identify significant associations between personal attributes, perceived capability, concern factors, and behavioural responses, we cannot determine whether, for example, a higher sense of cybercrime protection leads to more cautious behaviour or whether those who are already cautious develop a greater sense of capability. Longitudinal or experimental designs would be better suited to establish the causal pathways and potential feedback loops between perceived risk, fear, and behavioural adaptation in cyberspace. With such data, we could further examine Ferraro's (1995) risk interpretation model and develop better prediction models on behavioural adaptations.

Implications for cybercrime prevention policy and public engagement

Our examination of the associations between personal safety concerns, perceived risk of victimisation, and online behaviour offers practical insights into how cybercrime prevention strategies can be designed, communicated, and targeted. The findings demonstrate that individuals’ behavioural adaptations such as avoiding the disclosure of personal information are shaped not only by internal factors like perceived capability to avoid cybercrime but also by external concerns about specific cyber threats and the perceived inadequacy of institutional guardianship.

In the context of Australia's National Cyber Security Strategy and ongoing public education campaigns such as Scamwatch, Be Connected, and the Stay Smart Online initiative, our study provides evidence to support the importance of tailoring these efforts to reflect the differentiated concerns of users. For example, we found that malware-related fears and concerns about website security are among the strongest drivers of defensive behaviours, suggesting that cyber safety messaging that emphasises these threats and how to detect and mitigate them may be especially effective in promoting protective action. Similarly, concern about public authority security is strongly linked to both perceived risk and reduced disclosure, reinforcing the need for transparent, proactive communication from government agencies that collect and manage personal data. This includes not only ensuring data are protected but also publicly demonstrating and communicating those protections in accessible ways.

Our results also highlight gaps in behavioural responses. Many individuals report concerns about identity crime and fraud but do not appear to take consistent protective action. This perception–action gap should inform future public awareness efforts. Education strategies could focus on helping individuals translate abstract fears into practical behaviours (e.g., password hygiene, secure browsing, and cautious data-sharing practices), particularly for less technical threats like phishing or identity misuse. These approaches should also be demographically tailored. For example, older individuals, who show high perceived risk but not necessarily high technical capability, may benefit from simplified, action-oriented guidance, while younger, more tech-savvy users may require more nuanced behavioural nudges.

In relation to regulatory compliance, the more immediate policy implication of our findings is that building public trust in cyber governance structures is essential for participation. Citizens who perceive government and industry as ineffective digital guardians are more likely to withdraw from online engagement or to underreport cybercrime incidents, both of which hinder the effectiveness of national cybercrime prevention. Efforts to rebuild reflexive trust (Luhmann, 1979) in online systems must go beyond technical fixes to include community-level engagement, digital literacy campaigns, and visible enforcement of data protection regulations.

Finally, our study underscores the importance of cybercrime prevention as a shared responsibility. Consistent with the Australian Cyber Security Centre's messaging, improving cyber safety is not solely the task of governments or platforms but must also be supported by informed, capable, and cautious digital citizens. Policy frameworks that empower individuals to understand and manage cyber risks, especially those with lower education or digital skills, will be essential in enhancing national resilience to online threats. Internationally, these insights may apply in contexts where Internet penetration is high, institutional trust is strained, and users must make sense of complex, evolving cyber risks on their own.

Footnotes

Ethical Considerations

ANUpoll is conducted via the Internet using the Social Research Centre's (SRC) ‘Life in Australia’ panel. Approximately 3,000 Australians are sampled to undertake each ANUpoll survey. Those Australians have previously agreed to be contacted by the SRC as part of the company's ‘Life in Australia’ panel of survey respondents. The survey was undertaken in accordance with the following:

- the Privacy Act (1988) and the Australian Privacy Principles contained therein;
- the Privacy (Market and Social Research) Code 2014;
- the Australian Market and Social Research Society's Code of Professional Practice; and
- ISO 20252 standards.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.