Abstract

This study provides a novel analysis of the roles played by trusting relationships and technology in enabling online investment fraud victimisation. Two hundred self-report victim testimonies collected from online forums were analysed using inductive thematic analysis. The themes that emerged described personal factors that may have increased victimisation risk, how victims perceived their relationship with the scammer, and the nature of the scam. The findings suggested that several existing theories of trust building and technology use can help explain online investment fraud victimisation. Creating a trusting relationship appears important for building trust in both longer-form and shorter-form scams, and scammers use rich media both to facilitate hyperpersonal relationships and to enhance the perceived legitimacy of both forms of investment fraud. Victims attempted to use technology to protect themselves from scammers, but these strategies fell short owing to a lack of digital literacy or inadequate technical safeguards. Future studies may further analyse the persuasive messaging used to advertise online investment fraud to understand how victims first become aware of a scam. The findings relating to victim self-protection also raised questions about the nature of “victimisation” in the context of online investment fraud, suggesting that future research should further explore the role played by online guardianship in this type of fraud.

Introduction

Online fraud is a complex cybercrime that takes many forms. Investment fraud accounts for a large and growing percentage of online fraud losses (ACCC, 2018). The term investment fraud refers to the solicitation of money for non-existent or misrepresented investment opportunities (Button et al., 2009; Lacey et al., 2020). Online investment fraud refers to investment fraud advertised using Internet-based services such as social media platforms or Internet messaging (Lacey et al., 2020), and it represents a worldwide financial threat (Deliema et al., 2019). Cryptocurrency “pump-and-dump” schemes (Nizzoli et al., 2020) and “pig-butchering scams” (McCready, 2022) are just two examples of the many forms of online investment fraud commonly encountered today. In Australia, investment fraud has become the most prevalent form of fraud, with more than AUD 1.5 billion lost between 2022 and 2023 (ACCC, 2023). In the United States of America, the Federal Bureau of Investigation (FBI) reported losses in excess of USD 2.7 billion to investment scams amongst the United States public in 2022 (FBI, 2023). The growth of the Internet and the advent of new investment opportunities have given fraudsters access to a new pool of potential victims 1 (Reurink, 2019).

Research into online fraud suggests that the ubiquity of technology means that individuals must rely heavily on their digital literacy skills to protect themselves from fraud and online disinformation (Graham & Triplett, 2017). Digital literacy encompasses individual awareness and understanding of technology (Graham & Triplett, 2017), and the importance of these skills suggests that technology plays a central role in victimisation (Third et al., 2014). Research examining the strategies employed by online offenders also indicates that trust is an important factor that perpetrators aim to exploit (Titus & Gover, 2001; Whitty, 2013b). The most relevant studies, such as investigations into online romance scams (Whitty, 2013b) and investment fraud in general (Lacey et al., 2020), also suggest that trust is essential in enabling these scams. However, the process of creating and maintaining trusting relationships in the context of investment fraud remains underexamined. Exploring the roles played by trust and technology in enabling online investment fraud will deepen academic understanding of the phenomenon and help to inform safeguards for protecting the general public from victimisation.

Literature review

Defining online investment fraud

“Fraud” is a general term used to describe a crime whereby an individual makes a gain, usually financial, through an act of deception against another (Reurink, 2019). Meanwhile, “scam” refers to the mechanism through which fraudsters interact with victims (Modic & Lea, 2013). The rapid rise of the Internet and information technologies has expanded the scale and scope of fraud, with fraudsters able to reach many more victims than was previously possible offline (Modic & Anderson, 2015). The key elements of online investment fraud are a misrepresented or non-existent investment opportunity (ACIC, 2017) that involves significant online elements, such as advertising, online payments and online communication, and that promises high returns with little or no risk (ACCC, 2021). Recent examples of online investment fraud exhibit many different structures for generating money and advertising to potential victims. One example is the Finiko Ponzi scheme, in which a Russia-based cryptocurrency service offered investors a 30% return on investment; the collapse of the scheme is estimated to have caused losses of approximately USD 9 billion (Grauer & Updegrave, 2022). The 2018–2023 “Hyperverse” scheme, estimated to have cost Australian investors over USD 1.3 billion, seems to have operated both as a Ponzi scheme offering unrealistic returns on initial investment and as a pyramid scheme advertised through word of mouth, with additional opportunities for victimisation via a complex membership scheme (Martin, 2024). Although online investment fraud occurs in a wide variety of forms, existing literature suggests that all forms of online fraud are enabled by technology and seek to create a sense of trust with their intended victims.

The influence of trust and technology on fraud victimisation

Trust is a multidimensional concept, definable as a personal trait or a feature of an interaction between two parties in which a degree of uncertainty is acknowledged and overcome (Mayer et al., 1995; Whitty & Joinson, 2008). Trust is a factor in activities ranging from online shopping to communicating with others in virtual communities, and it can exist both between people and between people and technologies (Whitty & Joinson, 2008). When shopping online (Al-Debei et al., 2015) or engaging in legitimate investing (Carlos Roca et al., 2009), a consumer requires a certain degree of trust before making a financial decision. Factors such as the perceived quality of a website and positive testimonials from other customers can affect the degree of trust a potential customer has in the legitimacy of an online trading platform and, in turn, affect their investing intentions (Carlos Roca et al., 2009). In the context of scams, creating trust is vital to successful victimisation, because without it an individual will not view a scam as legitimate (Lea et al., 2009; Modic & Lea, 2013). Scammers often position themselves as authoritative figures to create a sense of trust in their victims (Lea et al., 2009). Alternatively, Whitty (2013b) observed that trust was established through the creation of a romantic relationship in online romance scams. According to the Scammers Persuasive Techniques model created by Whitty (2013b), rather than attempting to appear legitimate and authoritative, romance scammers use relational intimacy to create the trust necessary to execute their scam. The research reviewed here highlights the importance of trust in a variety of both legitimate and deceptive online activities.

Relevant studies that have specifically examined investment fraud have reinforced the importance of trust in the scam commission process. Lacey et al. (2020) conducted a study to explore the methods employed by investment fraudsters and factors that influenced victimisation to construct a model of the fraud commission process. Through a series of interviews with investment fraud victims, a variety of risk factors and scam methods were elicited, including several elements relating to trust (Lacey et al., 2020). The authors observed scammers using appeals to authority, establishing themselves as trusted sources of information, and trying to build interpersonal rapport with their victims. Though research such as Lacey et al. (2020) highlights that trust is a key factor in investment fraud victimisation, scope still exists for a deeper examination of how that trust forms and is maintained over technological communication mediums. The specific mechanism through which trust is created in the context of online investment fraud remains underexamined in the relevant literature.

Though trusting relationship creation is not deeply examined by studies such as Lacey et al. (2020), it has been examined in great detail in similar contexts by other researchers (Whitty, 2013a, 2015). For example, the creation of a trusting relationship between a scammer and their victim is a key feature of online romance scams (Walther & Whitty, 2021). A similar cybercrime that depends on a victim's willingness to trust is “phishing”, which refers to a variety of social engineering attacks that aim to trick a victim into disclosing information about themselves (Jansson & von Solms, 2013). For example, phishing can consist of emails containing links to malicious content (Bullee & Junger, 2020), while more targeted “spear phishing” relies on an offender knowing information about a victim and using it to craft a more believable message (Caputo et al., 2013). In phishing, offenders rely on various tricks to convince victims to trust a message. Strategies include the impersonation of legitimate entities such as governments or businesses (Chen et al., 2018), the inclusion of real information alongside false information (Caputo et al., 2013) and technological trickery, such as making an email appear to come from a legitimate address (Jansson & von Solms, 2013); some of these tactics can also appear at the outset of online investment scams. Phishing attacks seek to establish trust with their victims quickly by exploiting victims' susceptibility to urgency and biased decision making (Luo et al., 2013), whereas longer-form fraud such as online romance scams relies on rapport building and a significant time investment on the part of the scammer to gain the victim's trust (Whitty, 2013b). Ultimately, the strategies used in phishing are only partially relevant to understanding how technology is used to build trust with victims of online investment fraud.

Previous research on online romance fraud (Walther & Whitty, 2020) has indicated that exploring online scams using theoretical models of trust building, such as the hyperpersonal model of computer-mediated communication (Walther, 1996), can provide an analysis that considers both the participants and the technology involved. The process of romance scam commission reveals that fraudsters seek to create trusting relationships with their targets in order to manipulate and later financially victimise them (Whitty, 2013b). The mechanisms through which trusting relationships form remain underexplored in the literature relating to online investment fraud. Addressing this gap requires considering theories that examine how trust develops over technological media and how these theories could be applied to the study of online investment fraud.

Current study

The prevalence and scale of online investment fraud continue to grow as communication and investment technologies become ubiquitous in society (Reurink, 2019). Because academic literature addressing online investment fraud specifically is scarce, an approach is needed that draws on research in related areas of online fraud, such as romance scams. Existing literature has examined both the risk factors for victimisation and the specific strategies scammers adopt to target these vulnerabilities. Scope exists for a qualitative exploration of trusting relationships and the role played by technology in online investment fraud victimisation.

The overall objectives of this research are:

To gain an understanding of the role played by technology in facilitating online investment fraud.

To gain an understanding of the role played by trust in the process of online investment fraud victimisation.

Method

Research design

Extant literature relating to online investment fraud typically involves quantitative data analysis. Examples include data-driven analyses of how investment scams spread over social media platforms (Nizzoli et al., 2020) and the creation of experimental algorithms for detecting cryptocurrency tokens associated with fraud (Mazorra et al., 2022). However, despite the valuable technical insights they offer, these quantitative explorations of online investment fraud provide only limited insight into the victimisation process. For example, the studies cited above focus on a single feature of an online investment scam, such as the purported investment vehicle (Mazorra et al., 2022) or the method of advertising the scam (Nizzoli et al., 2020). Unless the researchers engage with, and by extension experience, online investment fraud victimisation themselves, such approaches cannot provide insight into the later stages of the fraud commission process. A qualitative approach sidesteps the difficulty of collecting a representative sample of data from victims, who are often reluctant to report their victimisation (Modic & Lea, 2013), by instead allowing for an in-depth analysis of a smaller number of cases. As such, a clear need exists for qualitative analysis of online investment fraud cases to complement quantitative analyses of their technical aspects.

Data analysis

The analysis method chosen for this study was inductive thematic analysis. Thematic analysis is a process of analysing patterns in a given dataset and extracting “themes” (Braun & Clarke, 2006). The rapidly changing nature of online investing and online fraud suggested to the authors that an inductive approach would allow for a more flexible consideration of possible theories after the analysis was complete. Thematic analysis is a flexible analytic method that is compatible with various schools of epistemological thought, including the critical realist approach taken in this study (Braun & Clarke, 2006).

Sampling strategy and data collection

The lead author decided upon a purposive sampling strategy. Purposive sampling involves the collection of data in order to answer specific research questions (Elo et al., 2014). The lead author aimed to collect data that would provide insights into the online investment fraud commission process. Publicly available victim self-report testimony was chosen as large quantities were available and accessible. The first website identified was the blog of the U.S. Federal Trade Commission (FTC), and the second was the Reddit forum r/Scams, 2 both of which are international websites dedicated to the discussion of online safety and scams. Both websites were chosen as they each provided the project with easy access to a large amount of suitable and relevant data. Data was collected from both websites between July and September of 2021.

Data was collected from the FTC site using a modified version of the open-source software GNU Wget. Wget downloads HTML directly from a website but does not filter the downloaded content. The cases were downloaded as raw HTML and then converted into the .xls file format for examination as an Excel spreadsheet. The FTC website was also searched manually during the analysis process to check for relevant cases posted after the initial collection. Data from Reddit was collected through manual searching.

The primary condition for a case to be included was the presence of an investment scam that matched the above definition. Secondly, the case had to include an identifiable victim. Finally, the case had to contain sufficient detail to be useful for answering the research questions. An initial keyword-search strategy for the FTC dataset was deemed unsuitable because frequent misspellings in the raw data led to relevant cases being excluded. For example, some cases spelt “investment” as “invest emend”, “buy” as “by” and “profit” as “pforit”. Instead, the raw data was arranged in descending order of word count and manually searched for cases describing online investment fraud victimisation.
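To illustrate why exact keyword screening was abandoned and what ordering by word count involves, the following is a minimal Python sketch. It assumes each FTC comment has already been extracted as a plain string; the sample comments, the keyword list and the function names are hypothetical, and in the study itself the ordering was done in a spreadsheet and the screening was performed manually.

```python
from typing import List, Sequence

# Hypothetical comments standing in for FTC blog testimonies (not study data).
comments: List[str] = [
    "I lost money after an invest emend in a trading app that promised a big pforit",
    "Thanks for the tip",
    "My investment in a crypto app was a scam and I lost everything",
]

# Hypothetical keyword list for an exact-match screen.
KEYWORDS = ("investment", "profit")


def keyword_match(text: str) -> bool:
    """Exact substring screen; misspellings such as 'invest emend' slip through."""
    lowered = text.lower()
    return any(keyword in lowered for keyword in KEYWORDS)


def order_by_word_count(texts: Sequence[str]) -> List[str]:
    """Return texts longest-first so the most detailed testimonies are read first."""
    return sorted(texts, key=lambda t: len(t.split()), reverse=True)


if __name__ == "__main__":
    for text in order_by_word_count(comments):
        print(len(text.split()), keyword_match(text), text)
```

Run as a script, the misspelled testimony is listed first because it is the longest (16 words), yet `keyword_match` returns `False` for it. This is the kind of exclusion that makes reading the longest cases manually a safer screening strategy for noisy, user-written text.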

Initial searching identified 70 suitable cases for inclusion in the analysis. Keyword searching of data downloaded from the subreddit r/Scams provided additional cases; after 130 Reddit cases had been collected, no sufficiently new descriptions of online investment fraud were emerging. Human research ethics approval was sought and obtained for the collection of both data sets. 3 Capacity to collect more data was retained throughout the analysis process; however, the lead author determined that thematic saturation had been achieved. The software package NVivo was used to perform the inductive thematic analysis on the 200 total cases.

Sample overview—FTC blog

The FTC is an agency of the government of the United States of America, and the Consumer Information Blog allows comments on posts relating to scam awareness.

Sample overview—r/Scams

r/Scams is a highly active forum, or “subreddit”, with hundreds of anonymous posts per day, on which users post warnings about suspicious activity, recommendations for protecting themselves and others online, and accounts of being victimised by scams. Like the comments on the FTC blog, these self-report testimonies are anonymous.

Data analysis and reliability

In keeping with the coding reliability approach adopted for this study, a codebook was created to enable rigorous intercoder agreement (Braun & Clarke, 2006). The creation of a codebook followed the thematic coding process performed by the chief investigator. Each code was described and justified with supporting references in a separate Excel spreadsheet to allow the three supporting coders to understand the logic behind each coding decision. The supporting coders analysed a random sample consisting of ten percent of the total data. The document used in this initial process can be found in Appendix A.

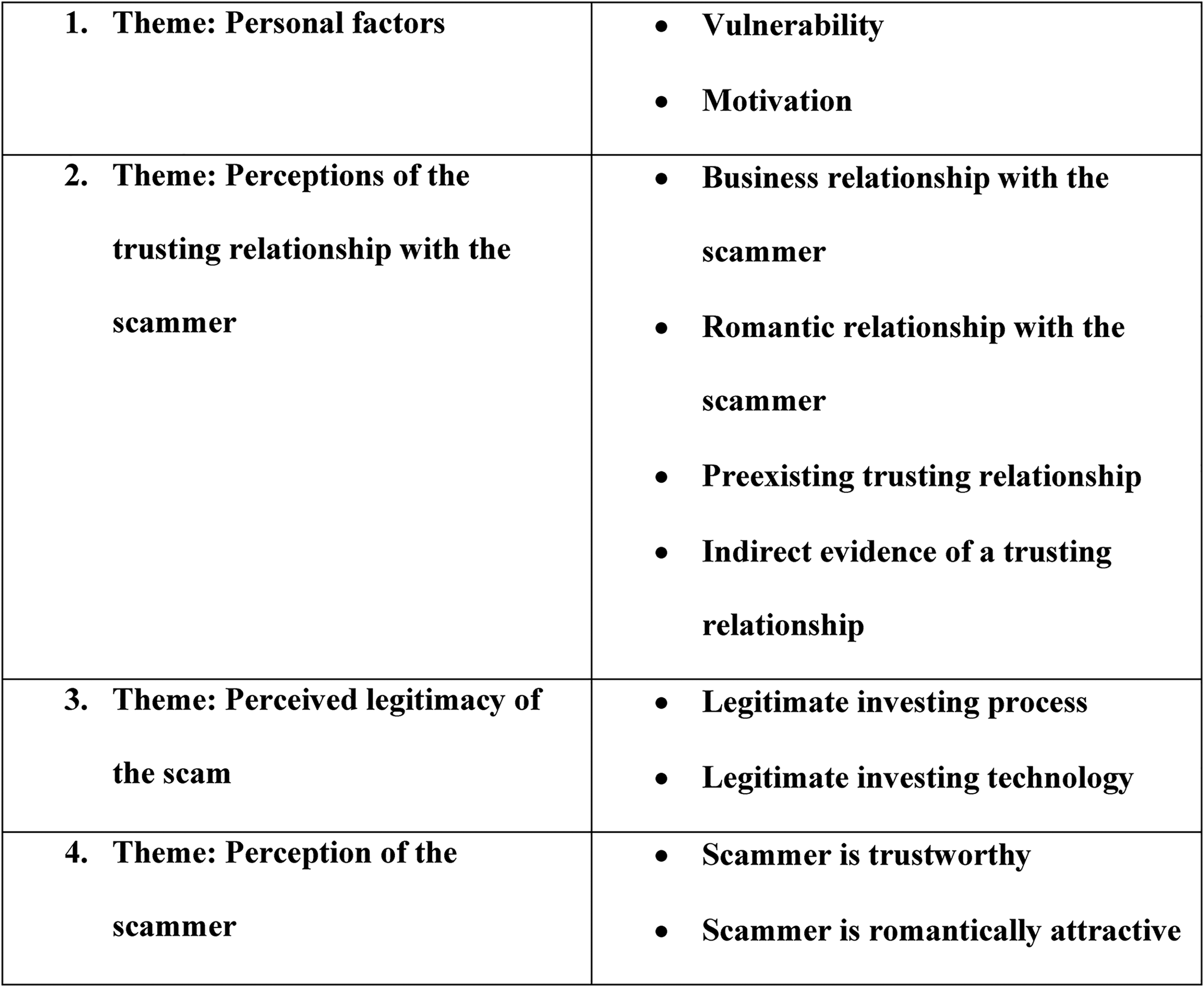

The reliability of the coding was assessed using Fleiss’ kappa, a measure of the degree of agreement between two or more coders (Gwet, 2014). The initial rating process yielded a kappa of 0.618, indicating a substantial level of agreement between the raters (Landis & Koch, 1977). Because subthemes relating to the role of technology had been the greatest source of disagreement between the raters, these were redistributed amongst the remaining themes. With the elimination of the theme “Technology that facilitates the scam”, the redistribution of its subthemes amongst the other themes, and the consolidation of the “Vulnerability” and “Motivation” themes into “Personal factors”, the overall number of themes was reduced from six to four. Fleiss’ kappa was recalculated after this consolidation, yielding a slightly improved score of 0.621, which also falls within the range for substantial agreement (Landis & Koch, 1977), and a percentage agreement of 74.19%.
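Because the section reports kappa values without showing how they are computed, a minimal Python sketch of the standard Fleiss' kappa calculation may help readers interpret them. It assumes a matrix in which each row is one coded excerpt, each column is one candidate theme, and each cell counts how many coders assigned that excerpt to that theme, with every excerpt rated by the same number of coders. The function names and toy counts are hypothetical and unrelated to the study's data. The helper also returns the mean observed agreement; this is one common way of expressing percentage agreement among several raters, but the section does not state how its 74.19% figure was derived.

```python
from typing import Sequence, Tuple


def fleiss_components(counts: Sequence[Sequence[int]]) -> Tuple[float, float]:
    """Return (mean observed agreement P_bar, chance agreement P_e).

    counts[i][j] is the number of coders who assigned excerpt i to theme j.
    Every row must sum to the same number of coders n, with n >= 2.
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in counts):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    n_categories = len(counts[0])
    total = n_items * n_raters

    # Share of all assignments that fell into each theme.
    p_j = [sum(row[j] for row in counts) / total for j in range(n_categories)]
    # Per-item agreement: proportion of coder pairs that agree on that item.
    p_i = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_i) / n_items
    p_e = sum(p * p for p in p_j)
    return p_bar, p_e


def fleiss_kappa(counts: Sequence[Sequence[int]]) -> float:
    """Agreement beyond chance, scaled so 1 is perfect and 0 is chance level."""
    p_bar, p_e = fleiss_components(counts)
    if p_e == 1:
        raise ValueError("kappa is undefined when all ratings use one category")
    return (p_bar - p_e) / (1 - p_e)


def landis_koch(kappa: float) -> str:
    """Label a kappa value using the Landis & Koch (1977) benchmark bands."""
    if kappa < 0:
        return "poor"
    bands = ((0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial"))
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "almost perfect"


if __name__ == "__main__":
    # Hypothetical toy data: 3 excerpts, 4 coders, 2 candidate themes.
    toy = [[4, 0], [2, 2], [0, 4]]
    kappa = fleiss_kappa(toy)
    print(round(kappa, 3), landis_koch(kappa))
```

With the toy counts the script prints `0.556 moderate`, while the study's reported values of 0.618 and 0.621 both fall in the "substantial" band, which is why consolidating themes changed the score only marginally without moving it across a benchmark boundary.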

Results

Introduction

Cases from the sample are referred to throughout in the order of their collection (Case #) and their website of origin (either the FTC blog or r/Scams). Figure 1 provides a concise overview of the themes and subthemes emerging in the data. These themes are explained and elaborated upon with quotes in the remainder of the Results section.

Table of themes and subthemes.

Theme: Personal factors

Motivation for engaging with the scam.

My first real foray into crypto! Sounded like everybody was making money, why shouldn't I! (r/Scams, Case 89)

Victims described being motivated to engage with online investment opportunities out of a desire to learn investing strategies, gain financial independence or repay debts. Others were looking for a relationship or to make new personal connections. Fear of missing out on profits from popular cryptocurrencies was cited as a motivating factor in scams featuring cryptocurrency, a factor that has been identified in previous research into decision making about cryptocurrency investment (Gerrans et al., 2023).

Victim perception of vulnerability.

I've realised that I'm an incredibly naive and overly trusting individual. (r/Scams, Case 112)

Throughout the cases, victims displayed varying degrees of introspection about factors they believed may have increased their vulnerability to being scammed. In some cases, victims attributed their vulnerability to personal circumstances, including depression, loneliness or intoxication. Others blamed their own willingness to trust others and a general naivety in using platforms such as online dating. The attractiveness of the scammer's profile picture was sometimes enough to convince the victim to engage. This idealisation based on physical appearance has been previously observed in romance scams (Whitty, 2013b).

Immediately alarm bells began ringing, but after receiving a voice message and not finding any results after reverse imaging her photos, I began to trust her. (r/Scams, Case 122)

This crypto space is so hard to navigate even for the people who develop tech and software as a living. It hurts. (r/Scams, Case 99)

Victims also displayed varying levels of online technical competency, and some suggested that greater technical/scam knowledge or experience may have helped them avoid victimisation.

I only double-checked, googling for crypto scams and stuff after I already transferred thousands of my hard-earned money. (r/Scams, Case 100)

Other victims used technology to verify the legitimacy of the scam but were still victimised, either because their searches did not turn up sufficient evidence of a scam or because they investigated online only after suffering substantial financial losses, despite having been suspicious earlier.

Overall, the theme of vulnerability was supported by victim statements that echoed the findings of previous research into risk factors for online fraud victimisation. A willingness to engage in risky online behaviours (Leukfeldt, 2015), fear of missing out (Gerrans et al., 2023) and circumstantial vulnerabilities such as depression or loneliness appear to have contributed to victimisation in the sample. With regard to trust and technology, victims identified both a willingness to trust and unfamiliarity with technology as possible risk factors for victimisation.

Theme: Perceived legitimacy of the scam

Legitimate investing technology

The tawk.to app for messaging customer support pops up quickly with a tech appearing to be 1 of 3 employees, Alicia, Jerry, Or Arnold. They are all super quick to message and answer any questions. (r/Scams, Case 117)

Everything seems legit the chat rep is always quick to respond and helpful but they aren’t forthright about some things. (r/Scams, Case 77)

Victims often described interactive websites that allowed them to view their investment balance as a feature that helped them trust the legitimacy of the scam. As observed in previous literature, interactive elements can significantly affect a victim's decision to trust online material and can predict behavioural intention (Lu et al., 2014). Interactivity in the data took two forms. The first was customer-service features, such as support chats, that gave victims the impression that their concerns and questions could be answered quickly.

I deposited my crypto, and we began ‘trading’. I turned my initial 200 dollar investment into 600 and things were ‘good’. (r/Scams, Case 98)

Sure enough I deposited a few hundred, made a small profit and withdrew the money. Tried again with a larger amount of money, made a slightly larger profit and withdrew the money again. At this point I believed the website was safe to use.

It was real! I was going to be rich. (r/Scams, Case 121)

The second form of interactivity was the victims' perception that they were actually earning money from their initial investment. Victims reported feeling reassured when they were presented with evidence that their investment had been successful. Some scams even allowed the victim to withdraw small amounts initially, a feature that arguably also contributed to the perceived legitimacy of the overall investing process.

I have transferred a considerable amount of AUD to a fake crypto exchange called Bitslead, they have an elaborate white paper … and a stack of staged reviews that are top tiered on a google search. (r/Scams, Case 96)

Victims in the data set reported investment scams that contained significant amounts of detail, including customer testimonials, detailed white papers and processes that mimicked legitimate investing. Scammers also appeared able to influence search results so that their scams, or staged reviews of them, were prominently seen by victims.

Legitimate investing process

Online investment fraudsters from both data sources engaged in a variety of strategies to convince their intended victims that the information they were seeing, hearing and reading was trustworthy and legitimate. In their self-report testimonies, victims described interacting with what they believed were real people, real companies and legitimate investment vehicles.

My buddy put his life savings in a Spanish company call ‘trust investing’. (r/Scams, Case 162)

…believing he's an expert ifx forex trader! (FTC, Case 38)

…a technique of investing in shares and cryptocurrency. (FTC, Case 50)

…started investing in crypto and me being curious, I tag along too. (r/Scams, Case 189)

Scammers in the data set generally claimed to use investment strategies that may be familiar to experienced investors. By providing victims with large amounts of information and rich content that matched the expectations of potential investors, scammers were able to induce a sense of trust in the scheme.

Overall, victims were provided with large amounts of rich media and media with interactive elements to convince them of the legitimacy of the investment opportunity and the identity of the scammers. Interactive elements appeared to be particularly important, providing victims with a sense that their investment was a success and that the scam was a legitimate investing opportunity, thus encouraging them to invest further in the scheme.

Theme: Perceptions of the trusting relationship with the scammer

Personal relationship with the scammer

We have talked to each other every day for about 3 months. Long and deep conversations, selfies, pictures. Everything was sooo smooth. (r/Scams, Case 89)

Out of the 200 cases collected for this study, 84 began with an online relationship that later turned into an investment scam. Across these cases, the thematic analysis revealed evidence that scammers attempted to create online relationships with their victims before introducing the investment scam. Scammers in these cases conversed with the victims for extended periods before mentioning or steering the conversation towards investing. This process is akin to the grooming process in romance scams, whereby the scammer creates a relationship with the victim before attempting to extract money (Whitty, 2023). Once the romantic relationship had been established, the scammer introduced the topic of investing, and despite warnings from others, victims were then unable to recognise the scam.

I lost 45 k due to the same scam. Met on a dating app then transitioned to WhatsApp. Causal conversation and he didn’t mention making money via trading for at least a month and it was me who asked him. (r/Scams, Case 82)

She ultimately suggested (after a long trust-formation period) I invest in ultra-fast cryptocurrency trading. (r/Scams, Case 161)

Business relationship with the scammer

I started investing with a woman known as Christy Jay, someone like that and I started off with $500 and she said I made a profit, while she actually put her $500 in as well, next thing, I needed to pay 2500 to withdraw which I thought that's too big but unfortunately, I did it anyways, so next thing? (FTC, Case 16)

Rather than offering an investment opportunity to the victim, the scammer posed as a fellow investor in seven cases. The closest parallel to this strategy in previous literature may be the practice of “liking” in online romance scams, whereby a scammer crafts a character that matches the interests of the victim to forge a common bond (Fischer et al., 2013; Whitty, 2023). The novelty of this observation lies in the scammer's persona appearing to suffer occasional losses alongside the victim, strengthening the relationship through shared successes and failures.

So I received a message from a beautiful woman who had carelessly entered my number on WhatsApp instead of the real estate agent she meant to contact. Wow, I thought. What are the chances of this? So being such a nice guy I kindly informed her she had the wrong number. Well, she was very happy that I was such a gentleman and had taken the time to reply. (r/Scams, Case 76)

Within the cases that began with an online relationship, five began with the scammer reaching out in an apparent case of mistaken identity. This strategy aligns with the observation of Cukier et al. (2007) that politeness can influence scam compliance, and with the principle of “reciprocity” (Fischer et al., 2013). Not wishing to appear rude, the victim responds to be helpful rather than ignoring the contact, and the scam proceeds from there.

Indirect evidence of a trusting relationship

I lost all my savings and, especially, my dignity. The second one will be tough to get back … I mean, we got really good friends! This sounds so ridiculous that i’ve [sic] been crying for the last three days. (r/Scams, Case 88)

This ‘guy’ made me feel in a way I haven't felt in a very long time since my first love (15 + years ago). I've been guarding my heart since my first love and I ended things. I thought I would never feel that way again but this scammer managed to get into my mind. (r/Scams, Case 130)

The extent to which the relationships in the data became trusting was reflected in the strong feelings the victims reported having towards the fraudsters. Indeed, in cases where the nature of the relationship was vague, it was still possible to deduce that a trusting relationship had at some point existed between the scammer and the victim based upon the emotions described in the testimonial.

Pre-existing trusting relationship

By impersonating a friend of the victim on social media, scammers were able to bypass the trust-building process:

Apparently, someone drained my financial savings and hacked her Instagram. A friend of hers made a video about some investment opportunity and would easily make money of it. She signed up. They drained her savings of $2500 …. (r/Scams, Case 119)

Theme: Perception of the scammer

This theme contains codes in which the victim describes how they perceived the scammer over the course of the scam. Examples include descriptions of the scammer's backstory and of the scammer as a “nice” or knowledgeable person.

Scammer as an attractive person

We started chatting casually for 3 weeks, all day long. We had good chemistry and we spoke about many topics: she sent me all her travel photos, daily cooking, when she was with her daughters, when she was in meetings … She went for a meeting in Shanghai, and she sent me photos of her in front of the iconic Shanghai building. And this all day long. (FTC, Case 65)

In the course of entering into a trusting relationship with a scammer, victims described the person they believed they were talking to and what they found believable or attractive about them. Victims received images of luxury lifestyles that reinforced the notion that the scammer was successful, a strategy that Lacey et al. (2020) identified as an appeal to social influence. Romance scammers are also known to create profiles designed to encourage potential victims to idealise them as perfect romantic partners (Whitty, 2013b).

Scammer as an expert

I was messaged a few weeks ago by someone claiming to be a crypto expert. They claimed with their advice, I could make a lot of money investing in crypto. Like a fool, I bought it. (r/Scams, Case 200)

Rich media also helped scammers establish themselves as investment experts worthy of their victims' trust. Screenshots and false testimonials from supposed clients were used to reinforce the scammer's authority.

Additional findings

This is an exchange part of a Pig Butchering Scam involving a beautiful Chinese girl who invites people to send their crypto to this exchange and then guides them on trades. After the victim deposits a significant amount, they can no longer withdraw their funds. (r/Scams, Case 75)

I’ve done some reading and learnt this is called a “Pig Butchering” scam. (r/Scams, Case 76)

Many victims in the Reddit dataset, drawing on prior knowledge or on information obtained through conversations with other r/Scams users, referred to their scam by a specific name. “Pig-butchering” scams seem to share several elements identified by Reddit victims: the scammer posing as an attractive Asian man or woman, initial contact being made through a dating app, and a long period between initial contact and the scam itself.

Pig-butchering scams have received little, if any, academic attention, although the term has become commonplace in non-academic sources such as news articles. One example is the Vice News report by McCready (2022) on the creation of call centres in Southeast Asia that use forced labour to carry out pig-butchering scams. The use of this term in testimonies may therefore indicate that victims possess some awareness of scams gleaned from unofficial sources.

Discussion

The findings from this inductive exploration of online investment fraud suggest that both trust and technology play an important role in the victimisation process. Victims were convinced by realistic-looking websites, apps and scammer identities, and in some forms of online investment fraud, scammers fostered romantic relationships with victims to build a trusting bond. The themes that emerged from the data suggest a number of novel applications of existing theoretical frameworks while also echoing the findings of previous examinations of online fraud victimisation. The following section relates these themes to existing theoretical models and discusses the extent of their applicability.

Personal factors and the cyber lifestyle—routine activity theory

The “Personal factors” theme described in the Findings section offers insight into the factors that victims believed may have contributed to their victimisation. Routine Activity Theory (RAT) (Cohen & Felson, 1979) posits that crimes of opportunity occur when a suitable target comes into contact with a motivated offender in an environment that lacks capable guardianship. The cyber-lifestyle RAT is a more recent adaptation of the theory that replaces the physical context of crime with factors that are not as spatially or temporally constrained (Reyns et al., 2011). Factors that may increase the risk of online victimisation include proximity to motivated offenders, target attractiveness, a lack of online guardianship and peer deviance (Reyns et al., 2011).

In previous research, engaging in risky online behaviour has been linked to an increased risk of victimisation from online threats ranging from malware (Leukfeldt, 2015) and viruses (Reyns, 2015) to forms of online fraud such as romance scams (Whitty, 2019) and advance fee fraud (Kigerl, 2021). Spending more time on the Internet prolongs potential contact with sources of risk, while activities such as online shopping and posting personal details on social media could increase target attractiveness (Reyns, 2015). Online dating platforms were the source of almost half (86 of 200) of the cases of online investment fraud in this study, consistent with previous research that has identified dating sites as sources of online risk (Whitty, 2019).

Recent research suggests that examining risky routine behaviours in isolation does not provide a clear picture of the personal factors that influence victimisation (Whitty, 2020). Demographic factors such as age, financial literacy and education can affect individual risk when engaging in potentially risky online behaviour, producing counterintuitive results (Whitty, 2020). For example, Reyns (2015) observed that individuals who protected themselves with antivirus software appeared to be at greater risk of malware infection, suggesting that overconfidence in their ability to stay safe online was also a key factor in their victimisation. Evidence from the present study suggests that victims lacked the digital literacy to investigate scammers, even when they suspected a scam. The cyber-lifestyle RAT therefore provides a partial theoretical framework for understanding how personal factors increase the target attractiveness of victims of online investment fraud.

Media richness theory

The theme of “perceived legitimacy” demonstrated the importance of communicating detailed information to the victim in order to assuage their doubts and reinforce the legitimacy of the scheme. Media richness theory (Daft & Lengel, 1986) is a prominent theory in research on interpersonal communication and relationship development. The richness of a particular form of “media”, meaning a form of communication such as text messaging or face-to-face conversation, can be assessed by its ability to communicate information effectively within a defined interval of time (Daft & Lengel, 1986). To the best of our knowledge, media richness theory has not yet been used in published research to examine the development of trust in the context of online investment fraud.

Research has suggested that the richness of the content found on a website can affect the decision-making of Internet users (Lu et al., 2014). Websites with rich content, particularly interactive elements, can significantly affect users' behavioural intentions, influencing both their choice of website and their intention to follow the information presented to them (Lu et al., 2014). Lu et al. (2014) explained this effect by linking rich media to the MAIN model, which describes heuristics, or mental shortcuts in decision-making, that are triggered when using technological interfaces by four affordances: modality (M), agency (A), interactivity (I) and navigability (N) (Sundar, 2008). Subsequent studies on the presentation of online content have reinforced the link described by Lu et al. (2014) between richer media and greater consumer trust (Chen & Chang, 2018). The scams described in the dataset frequently featured interactive elements, such as live investment-tracking websites and provisions allowing victims to withdraw money to test the legitimacy of the scheme. The presence of these elements in the data strongly suggests that online investment fraudsters are aware of the trust-building power of interactive technology.

Online investment fraud is perpetrated through media channels such as social media and dating websites (Lacey et al., 2020); however, it is unknown whether scammers deliberately select these channels to build trust with their victims. Lacey et al. (2020) observed investment scammers presenting themselves as figures of authority and positioning themselves as reliable sources of information, linking this approach to social influence theory. Media richness theory offers a complementary way to examine how scammers present themselves as authoritative sources of information, with additional scope to interrogate the role technology plays in these interactions. Media richness theory enables researchers to examine how technologies such as websites and messaging services affect behavioural intentions (Lu et al., 2014), and it is also suitable for analysing technology's contribution to the development of trusting relationships online (Whitty & Joinson, 2008). Given the literature on trust-building through mediated communication, media richness theory (Daft & Lengel, 1986) appears to have merit for examining how online investment fraudsters convince their victims. The application of this theory to online investment fraud research is novel.

Further research suggests that individuals differ in their preferences for selecting and consuming rich media. Individual differences such as gender (Dennis et al., 1999) and personality traits such as extraversion and low neuroticism (Karemaker, 2005) can influence media selection. However, how individual differences and social factors affect victims' perceptions of rich media in the context of online investment fraud was outside the scope of this study.

The hyperpersonal model of computer-mediated communication

The process of trusting romantic relationship formation described in the “Perception of the trusting relationship with the scammer” and “Perception of the scammer” themes accords with previous research (Whitty, 2013b) on how romance scammers create trusting relationships with their victims. The hyperpersonal model of computer-mediated communication (CMC) holds that CMC users exploit technology in various ways to create and manage relationships through communication (Walther, 1996). These relationships can then become “hyperpersonal”, that is, they can come to feel more real to the victim than offline personal relationships (Walther & Whitty, 2020).

Across the cases involving a romantic relationship, the thematic analysis process revealed evidence that scammers attempted to create hyperpersonal online relationships with their victims to ensure the later success of the investment scam. Scammers in these cases conversed with the victims for extended periods before mentioning or steering the conversation towards investing. This process is akin to the grooming process in romance scams whereby the scammer creates a relationship with the victim before attempting to extract money (Whitty, 2023).

According to Walther (1996), online interactions take place between a sender and a receiver. Senders can use various communication technologies and techniques to craft their messages, and it is up to the receiver to use the cues these messages contain to form an impression of the sender (Walther, 1996). Because CMC conveys fewer cues than face-to-face interaction, receivers tend to compensate for the missing information by forming a positive impression of the sender (Walther & Whitty, 2020). This interaction was observed in the data, with victims forming positive impressions of scammers after brief exchanges. In turn, the scammers complimented the victims to reinforce their online self-presentation. Multiple communication channels, such as text messaging, email, social media platforms and voice calls, created a sense of asynchronous or near-constant communication between the two parties. These features of CMC allowed the scammers to tailor their messages to appeal to the victims. The application of the hyperpersonal model of CMC to online investment fraud victimisation is novel.

Previous studies have also sought to identify circumstances that can bias individuals towards hyperpersonal relationship formation. Engaging in selective self-presentation online has been found to increase feelings of self-esteem in social media users (Gonzales & Hancock, 2011) and to reinforce an idealised self-image (Walther & Whitty, 2020). In the context of online investment fraud, a victim's willingness to share personal information could enable a scammer to craft more targeted messaging to heighten relational intimacy.

Proponents of the hyperpersonal model of CMC have addressed popular conceptions of scam victims as possessing traits that increase their likelihood of victimisation, arguing that the model is not weakened by disregarding such traits (Walther & Whitty, 2020). In the context of online romance scams, social factors such as loneliness (Buchanan & Whitty, 2014) or a lack of relevant education (Whitty, 2018) appear not to affect victimisation risk. Although some victims in the present data referred to their mental state, or reflected that their desire for a relationship may have made them more susceptible to a scammer's manipulation, such reflections were rare across the cases and insufficient to support firm conclusions. Therefore, based on relevant online romance fraud research, social factors seem unlikely to have played a significant role in the formation of hyperpersonal relationships.

Limitations

The data from both websites cannot be considered fully representative of online investment fraud as a whole. The data lacked descriptions of more complex types of online investment fraud, such as Ponzi schemes. This absence may reflect the rarity of complex schemes relative to simpler scams, or may indicate that victims of these schemes do not report their victimisation on websites such as Reddit. As the data was collected in 2021, the sample may also not reflect the current attitudes of potential online investors, the latest forms of online investment fraud or the use of newer technologies such as recently emerged AI tools.

Implications for future research

As observed in the data, individuals used technology in various ways to try to protect themselves from online investment fraud victimisation. Viewed through the lens of the cyber-lifestyle RAT, this use of technology could be interpreted as a belief amongst victims that technology provided guardianship in the online investing space. The nature of capable guardianship online remains a matter of debate in cybercriminology (Reyns, 2010). Unlike in the context of traditional crime, providers of guardianship such as police may be willing to respond to cybercrimes but be undertrained and under-resourced (Wilson et al., 2022). In the absence of traditional providers of guardianship, capable guardianship online may have to be sourced collectively by the community, much as informal guardianship can deter terrestrial crime (Bossler & Holt, 2009). Just as locks, bars on windows and house sitters may deter offenders offline (Bossler & Holt, 2009), informal online guardians could include online reporting services or forum moderators (Reyns, 2010). Victims' inability to seek help from effective online guardians may also reflect a lack of digital literacy, which has previously been identified as a risk factor for fraud victimisation (Graham & Triplett, 2017). A future study applying the cyber-lifestyle RAT to online investment fraud could aim to determine whether capable online guardianship exists and to what extent it is effective in moderating interactions between motivated offenders and vulnerable victims. The perspective of the motivated offender was also entirely absent from the data in this study, so a future study applying the cyber-lifestyle RAT might also interview online investment fraudsters.

This study also offered novel evidence of the importance of rich media in convincing victims that they were taking part in a legitimate investment scheme. However, the data set contained little evidence regarding the content used to draw victims into the scam in the first place. A common assumption across technical analyses of online investment fraud, such as Nizzoli et al. (2020), was that the spread of links to investment scams on social media led to victimisation, without interrogating the persuasive power of those links. A future study may seek to apply media richness theory (Daft & Lengel, 1986) to analyse the persuasive messaging found in advertising for online investment fraud. An experimental design in which participants are shown hypothetical advertisements for online investment fraud that use different persuasive strategies could reveal more about the beginning of the victimisation process, evidence of which was lacking in the present data set.

Implications for fraud prevention

Rather than forewarning potential victims about specific scams, educating them about the techniques commonly used by fraudsters across different scams could provide more effective protection (Burke et al., 2022). The findings of the present study may help inform warning measures of this kind by outlining the broad phases of commonly encountered online investment scams. Given that cybercrime is becoming increasingly organised (Whelan et al., 2024), fraudsters are likely to share common tactics and offer scam creation services to other criminals online, as already occurs in other cybercrime contexts such as ransomware. Faced with the threat posed by mass fraud campaigns online, strategies to educate the broader public about the identifiable phases of online investment scams seem warranted and prudent.

While education is a useful form of fraud prevention, responsibility for providing it is unclear. Government bodies (such as the FTC), communities dedicated to scam prevention (such as r/Scams) and financial institutions can all educate potential victims about scams. Previous studies have observed that digital literacy training is fragmented and privatised (Graham & Triplett, 2017) and that such education should be offered more broadly at educational institutions to raise awareness of online threats. In the case of pig-butchering scams that begin on online dating platforms, warnings or interventions by the platform could disrupt the formation of a trusting relationship, an important first step in the scam process. Suspicious language, such as a suggestion to move a conversation to a less secure platform or a discussion of cryptocurrency or investing platforms, could trigger a warning. The threat posed by fraud on online dating platforms has been acknowledged but requires further research to inform safety-by-design practices (Phan et al., 2021). Governments could push for safety-by-design principles to be adopted industry-wide, rather than leaving individual technology companies to adopt differing levels of protection. An example of a government safety framework in a related context is the Australian eSafety Commissioner’s “Safety by Design” policy framework, which seeks to consult with industry to enhance the overall safety of Internet users (eSafety, 2021).

Conclusion

The findings of this study are consistent with previous examinations of online investment fraud while suggesting new avenues of exploration. Earlier findings, such as scammers' use of “persistent engagement” to establish a “friendship” (Lacey et al., 2020), mirror the results of this analysis, in which scammers maintained hyperpersonal relationships by manipulating the channels of communication. This study offers a novel link to established theories of media use and relationship formation, contextualising both these findings and earlier observations by studies such as Lacey et al. (2020). The findings relating to risky routine activities suggest that such activities may play a role in online fraud victimisation, although the lack of demographic data prevented further exploration of the demographic factors highlighted by Whitty (2020). The data analysed in this study lends support to the applicability of media richness theory (Daft & Lengel, 1986) and the hyperpersonal model of computer-mediated communication (Walther, 1996) for understanding the role of trust and technology in facilitating online investment fraud. In addition, this study raises questions about the nature and effectiveness of capable online guardianship in online investing spaces. Possible preventative measures have been discussed, although these would need to be evidence-based and regularly evaluated to keep pace with the changing landscape of online investment fraud.


Acknowledgements

The authors wish to acknowledge Professor Monica Whitty for her guidance and feedback during the original conception of the project.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.