Abstract

Background

Implementation climate is an organizational construct theorized to facilitate the adoption and delivery of evidence-based practices. Within schools, teachers often are tasked with implementing universal prevention programs. Therefore, they are ideal informants when assessing school implementation climate for initial and continuous implementation improvement efforts. The purpose of this study was to examine the construct validity (i.e., factor structure and convergent/divergent validity) of a school-adapted measure of strategic implementation climate called the School Implementation Climate Scale (SICS).

Methods

Confirmatory factor analyses of SICS data, collected from 441 teachers in 52 schools, were used to compare uncorrelated and correlated first-order factor models and a second-order hierarchical model. Correlations with other school measures were examined to assess SICS convergent and divergent validities.

Results

Results demonstrated acceptable internal consistency for each SICS subscale (

Conclusions

Results from this study provide psychometric evidence that supports the use of the SICS to inform implementation research and practice in schools.

Plain Language Summary

Schools are busy trying to implement various universal programs and systems to help support kids in their growth. Beginning and sustaining these efforts is quite challenging, and there is a need for tools and ideas to help those implementation efforts. One concept is implementation climate, which is broadly the school staff’s perception of the implementation support for a given practice. However, no measure currently exists to help schools assess their implementation climate. The goal of our study was to adapt a measure of implementation climate used in other settings to the school environment. We used feedback from educational experts to make changes and used various analyses to determine if the newly adapted measure was psychometrically sound. Findings suggest the new measure is usable to guide implementation efforts in schools.

The adoption, high-quality delivery, and sustainment of evidence-based practices (EBPs) are an important priority for schools to prevent and address a wide range of social, emotional, behavioral, and academic needs (Lyon & Bruns, 2019). EBP implementation is subject to a constellation of inner and outer organizational factors (Aarons et al., 2011). Outer context factors include the environment external to the organization that exerts a distal influence on implementation efforts. Inner context factors are those within the organizational context where implementation ultimately occurs, like organizational climate and culture, and are more proximal to EBP implementation (Moullin et al., 2019). Considering this, researchers have developed and validated tools that measure key aspects of the inner context that can be used for data-informed decisions, as identified in frameworks such as Exploration, Preparation, Implementation and Sustainment (EPIS; Moullin et al., 2019).

General Organizational Climate Versus Strategic Implementation Climate

There are two main categories of organizational climate research: general and strategic. Broadly defined,

In contrast to general climate,

To date, researchers have demonstrated that the strategic implementation climate is a key determinant of successful EBP implementation (Williams et al., 2018). Considering its importance, Ehrhart et al. (2014) developed and validated the ICS in the context of EBP implementation in community mental health settings. The ICS included six dimensions measuring staff perceptions of the implementation climate: Focus on EBPs, representing how much implementing EBPs is an organizational priority; Educational Support for EBPs, representing how much training and materials are provided to support EBP implementation; Recognition for EBPs, representing how much the organization values and recognizes providers for EBP implementation; Rewards for EBPs, representing how the organization provides financial compensation or benefits for EBP implementation; Selection for EBPs, representing how the organization considers prior EBP experience when recruiting, selecting, and hiring employees; and Selection for Openness, representing how the organization considers applicant openness to new practices, adaptability, and flexibility when recruiting, selecting, and hiring employees. According to this research, organizations with low levels of strategic implementation climate do not demonstrate that EBP implementation is valued (Ehrhart et al., 2014). In these cases, there is limited focus on EBPs, support provided, and/or forms of recognition and acknowledgment for staff who invest in and improve their EBP implementation. Considering that schools are an ideal setting for the delivery of universal social, emotional, behavioral, and academic prevention programs, strategic implementation climate may be a critical construct to examine in schools (Locke et al., 2016).

Prior Research on the Implementation Climate Scale in Schools

Although there are well-established measures of organizational climate (Patterson et al., 2005) and school climate (You et al., 2014), there is not one that assesses strategic implementation climate. Recently, researchers evaluated the original ICS with minor wording changes for the school context using informants representing a single behavioral health consultant per school (Lyon et al., 2018). The ICS measured the degree to which school-based behavioral health providers perceived the school leaders and systems expected, supported, and recognized staff for EBP implementation. Initial findings demonstrated a five-factor solution with a general second-order factor. The Rewards scale of the original ICS resulted in poor factor loadings and low internal consistency. An item review conducted by the researchers suggested that the items for the Rewards scale of the original ICS did not fit the school setting because they were less relevant there (e.g., financial incentives are not provided in schools) and would need to be redesigned with stakeholder feedback. Overall, this first attempt at applying the ICS to the school setting demonstrated strong psychometric properties with evidence supporting acceptable internal consistency of five scales (α > 0.7) and strong correlations with other measures of the inner school implementation context including implementation leadership (

Although this research provided support for the use of the ICS in school settings, the items reflected the original ICS wording and subscales as developed in a community mental health context, without prior analysis to determine if the dimensions and items were appropriate for schools. Schools are unique systems involving multiple levels of administration, varied resource pools and allocations, multiple systems of support for students, and often overlapping role responsibilities between staff. As has been the case in past research adapting the ICS to other contexts (Ehrhart et al., 2016), the ICS may need adaptation to capture the idea of a school-specific implementation climate. As part of a federally funded project, researchers (Locke et al., 2019) held a series of focus groups with three different educational informant groups representing different systemic levels of school implementation efforts—district administrators, principals, and teachers—to adapt the ICS and additional organizational instruments for use in educational research and practice.

Purpose of this Research

The above qualitative adaptation study with educational stakeholders informed the current measurement validation study in elementary schools that were implementing one of two evidence-based universal prevention programs: School-Wide Positive Behavior Intervention and Supports (SW-PBIS; Horner et al., 2010) and Promoting Alternative THinking Strategies (PATHS; Greenberg et al., 1995). The study had three primary aims related to validating the School Implementation Climate Scale (SICS). The first aim was to adapt, refine, and remove items to better capture the current or new ICS dimensions. The second aim was to validate the hypothesized scales by conducting confirmatory analyses of the SICS. Within this aim, we recognized the need to examine whether structural validity differed if the new SICS referred to a general EBP within the item stems (e.g., “implementing a universal EBP”) or a specific referent (e.g., “implementing PATHS”). Both approaches to item development and measurement exist across studies and disciplines and, to the best of our knowledge, have not been directly investigated within schools. Considering schools are often integrating various efforts and systems (McIntosh & Goodman, 2016), it would be relevant to know if the SICS could apply to all implementation efforts or would need to be tailored to each effort. We hypothesized that a seven-factor model capturing four of the original ICS factors plus three additional factors could be identified with acceptable reliability and construct validity. We also hypothesized that the structural validity of measures would change based on the EBP referent. The third aim was to examine convergent and divergent validity with appropriate measures. Within this aim, we hypothesized that the SICS would evidence moderate associations with a measure of general organizational climate.
We also hypothesized that the SICS would demonstrate moderate convergent validity with staff attitudes towards teaching, as both constructs are perceptual, measured using similar methods, and are associated with the environmental condition of the school. Finally, we hypothesized the SICS would evidence divergent associations with demographic characteristics as the demographic makeup of a school should have some, but negligible, effect on a school's ability to support an implementation effort.

Method

Setting and Participants

Teacher demographics for the SICS samples by type of program.

Procedures

This study took place as part of a large-scale, federally funded measurement adaptation and development project creating school-based tools for organizational constructs. Prior to conducting validation studies, the measures underwent a series of revisions to adapt them for use in schools by increasing the relevance, fit, and acceptability of each measure (Locke et al., 2019). The measures and constructs were adapted first via an expert summit and then mixed-methods focus group sessions with key educator stakeholder groups. This was an iterative process drawing upon the knowledge of these content experts, adjusting the ICS, and then re-examining the measure with the experts until consensus was reached on what items and scales to test. Based on information from these focus groups, four adaptations were made. First, although the original ICS had a Reward factor, stakeholders indicated that items assessing financial incentives were rare and inappropriate for the school context, as in other service settings (Steele et al., 2009); thus, new items appropriate to the school context were needed. Second, the two implementation climate dimensions related to selection (Selection for EBPs and Selection for Openness) were viewed as assessing information that was typically inaccessible to teachers. Third, stakeholders recommended changes to item wording throughout to increase comprehensibility and appropriateness for use with educators (Hambleton, 1996). Fourth, based on stakeholder input and a review of relevant literature, three additional scales for a school-adapted version of the ICS were identified: Existing Supports to Deliver EBPs, Use of Data to Support EBPs, and Integration of EBPs. Existing Supports to Deliver EBPs addresses the extent to which staff perceived that the school provides support such as professional development, coaching, and structured meetings to help them learn about and apply an EBP.
This differed from the original scale on educational support because, in schools, educational support is often structural and ongoing rather than the time-limited trainings, conferences, and materials referenced in the original Educational Support scale (Glisson et al., 2008). Use of Data to Support EBPs captures shifts in schools to incorporate data-based decision-making processes and tools as a way of creating accountability and encouraging school staff to implement an EBP. Integration of EBPs indicates the extent to which an EBP is integrated with other school systems and processes, including improvement plans, performance evaluations, and other ongoing work.

Institutional Review Board approval was obtained from the University of Washington Human Subjects Division and partnering school districts’ research and evaluation departments, when applicable. Recruitment of specific schools involved working with central administrators and communicating with site-based administrators regarding the project's benefits and data collection procedures. School administrators or an appointed liaison from partnering schools then recruited 4–12 teachers to participate in data collection. Contact information was obtained from teachers for research staff to contact them and send them a link to the survey.

To facilitate data collection, a web-based survey was constructed using Qualtrics Software (Qualtrics; Provo, UT). Data were collected during the 2017 fall academic semester. Each teacher was provided with a 1-month window to complete the survey from the time they were sent the initial email. Across all partnering schools, an average of 88% of respondents who were sent emails completed the online surveys, resulting in a total sample size of 441.

Measures

Organizational Health Inventory for Elementary Schools (OHI-E). The OHI-E (Hoy & Tarter, 1997) was administered as a molar climate measure that captures school staff perceptions of the health and climate of a school. Two scales from the OHI-E were used in this study: Teacher Affiliation (e.g.,

Data Analytic Approach

The data analytic procedure for this study involved first assessing the construct validity of the new SICS subscales using item response theory. We reviewed item information coverage of the underlying trait in question for SICS scales with the goal of limiting each scale to the three most informative items (De Ayala, 2013) for consistency with the original ICS and to keep the overall measure as short and practical as possible. Then, a series of confirmatory factor analyses (CFA) using weighted least squares mean- and variance-adjusted (WLSMV) estimation with delta parameterization for the ordered-categorical scale items was conducted. The fit of each model was determined across several indices including the

Three CFA models were fit to the data: (a) a first-order factor model with correlations among the seven first-order factors constrained to zero, (b) a first-order factor model that allowed the correlations among the factors to be freely estimated, and (c) a hierarchical second-order factor model that assumed a general SICS factor in place of the first-order interfactor correlations. We compared the first two models using a chi-square difference test appropriate for WLSMV estimation of the categorical items (Brown, 2006), and compared the second two models using Marsh’s (1991) Target Coefficient 2 (

Next, a multi-group structural equation model was performed to determine whether the best underlying factor structure of the SICS was invariant across versions of the scale. We employed the same fit indices as the CFAs to determine best model fit.
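As a reading aid, the three CFA models compared above can be written in conventional factor-analytic notation. The symbols below are assumptions introduced here for illustration and are not taken from the original analysis; the sketch only shows how the models differ in the structure placed on the covariance matrix of the seven first-order factors.

```latex
% Illustrative notation (assumed, not from the article):
%   x^{*} : 21 x 1 vector of latent responses underlying the ordinal items
%   \xi   : 7 x 1 vector of first-order SICS factors
%   \eta  : single second-order (general) SICS factor
\begin{align*}
  x^{*} &= \Lambda \xi + \delta, \qquad \operatorname{Cov}(\xi) = \Phi \\[4pt]
  \text{(a) uncorrelated:} \quad & \Phi = \operatorname{diag}(\phi_{11}, \dots, \phi_{77}) \\
  \text{(b) correlated:}   \quad & \Phi \ \text{symmetric with all 21 off-diagonal elements free} \\
  \text{(c) second-order:} \quad & \xi = \gamma \eta + \zeta, \qquad
      \Phi = \operatorname{Var}(\eta)\, \gamma \gamma^{\top} + \Psi, \quad \Psi \ \text{diagonal}
\end{align*}
```

Under standard identification constraints, model (a) is nested in model (b), and model (c) is nested in model (b) because it reproduces the 21 interfactor covariances from only seven second-order loadings.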

Convergent validity was assessed via correlations between the SICS scales and the OHI-E and PSTQ measures. These correlations were expected to be small to moderate. Divergent validity was assessed via correlations between the SICS scales and school-level demographic variables.
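The correlational checks described above can be illustrated with a minimal Python sketch. It assumes Pearson correlations computed on scale scores; the variable names and all values are hypothetical and are not study data, and the sketch ignores the nesting of teachers within schools.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences of scores."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length (n >= 2)")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


# Hypothetical scale scores for six respondents (illustration only).
scores = {
    "sics_focus": [2.0, 3.3, 1.7, 3.0, 2.3, 3.7],
    "ohi_teacher_affiliation": [2.5, 3.0, 2.0, 3.5, 2.0, 3.5],
    "school_demographic": [40.0, 55.0, 62.0, 35.0, 48.0, 51.0],
}

# Convergent check: SICS scale vs. a climate scale.
# Divergent check: SICS scale vs. a school-level demographic variable.
for other in ("ohi_teacher_affiliation", "school_demographic"):
    r = pearson_r(scores["sics_focus"], scores[other])
    print(f"sics_focus vs {other}: r = {r:.2f}")
```

In this framing, convergent evidence corresponds to moderate correlations with conceptually related measures, and divergent evidence to near-zero correlations with demographic variables.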

Results

Table 2 presents summary statistics for the SICS items and scales (i.e., means and standard deviations by item, and coefficient alpha for scales). Across all scales, coefficient alphas were in the large range (

SICS dimension items and summary statistics.
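Coefficient alpha for a scale can be computed from item-level responses as k/(k - 1) multiplied by one minus the ratio of summed item variances to the variance of the total score, where k is the number of items. The following is a minimal Python sketch under stated assumptions: the function name, the response coding, and the data are hypothetical and serve only to show the calculation for a three-item scale.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Coefficient alpha for one scale.

    item_scores: list of respondents, each a list of k item responses.
    """
    k = len(item_scores[0])
    if k < 2 or len(item_scores) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[j] for row in item_scores]) for j in range(k)]
    # Sample variance of the summed scale score.
    total_var = variance([sum(row) for row in item_scores])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical responses from five teachers to one three-item scale
# (illustrative coding; not study data).
responses = [
    [0, 1, 1],
    [2, 2, 3],
    [3, 3, 4],
    [1, 2, 2],
    [4, 4, 4],
]

alpha = cronbach_alpha(responses)
print(f"coefficient alpha = {alpha:.3f}")
```

Because alpha depends on the number of items, short three-item scales reach acceptable values only when the items are strongly intercorrelated.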

Confirmatory Factor Analyses

Examination of item information curves from first-order correlated factor and second-order SICS CFA models indicated that two items within each of the scales were redundant and deleted for not contributing sufficient unique information to their respective scales, resulting in 21 items (seven dimension measures by three items each). The comparison between the uncorrelated first-order factor model,

Factor loadings and fit statistics of the final first-order factor only measurement model.

Correlations among SICS scales and with OHI-E and PSTQ measures.

*Correlation is significant at the

**Correlation is significant at the

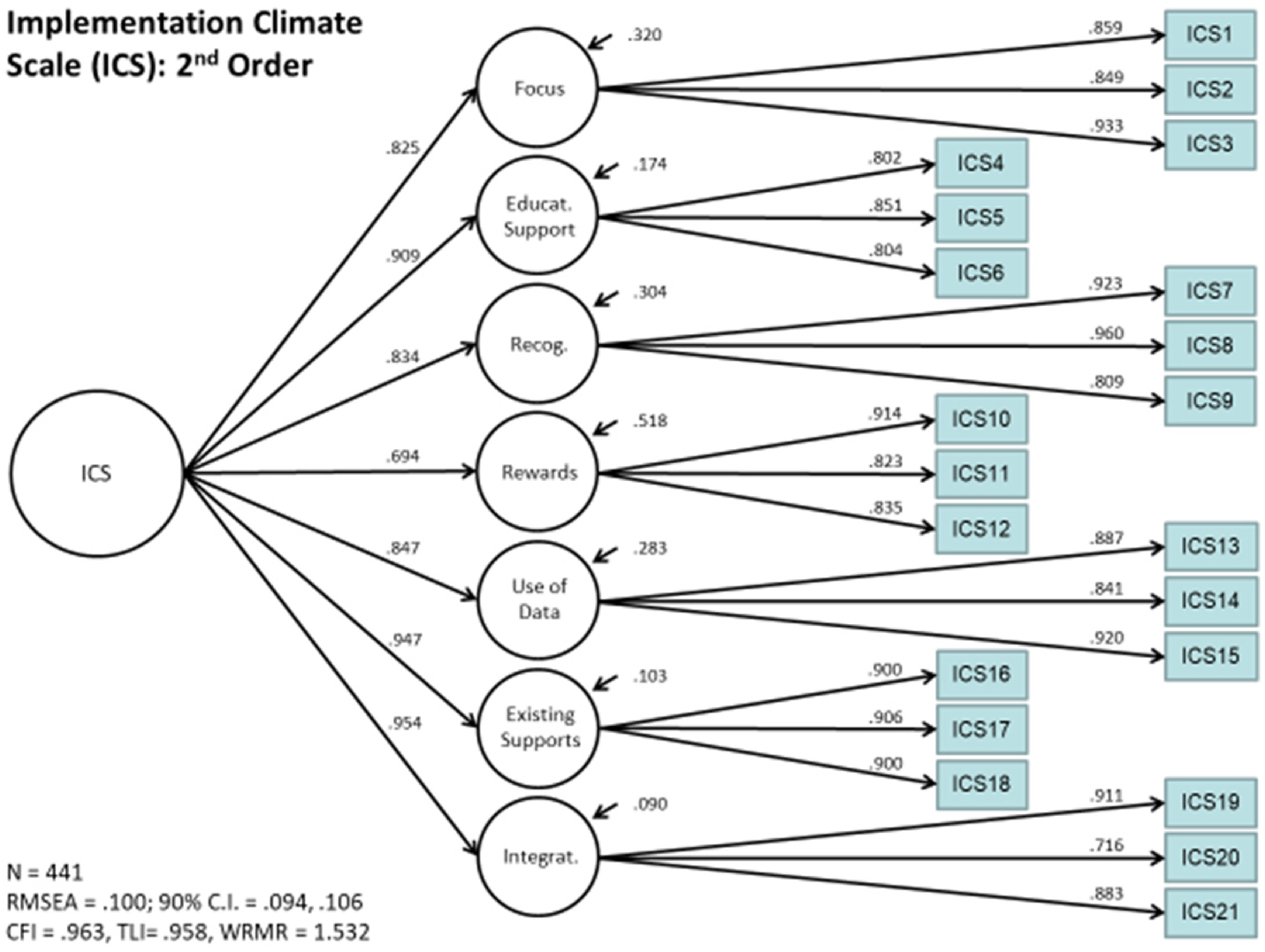

The hierarchical second-order SICS model is shown in Figure 2. This model also indicated an acceptable fit to the data,

Factor loadings and fit statistics of the final second-order composite factor measurement model.

Convergent and Divergent Validity

Table 3 depicts correlations between the SICS scales and OHI-E, PSTQ, and school demographic measures as indicators of convergent and divergent validity. Correlations between SICS scales and OHI-E and PSTQ measures in Table 3 were generally small to moderate (.300 ≤

Discussion

Organizational climate reflects staff perceptions of their work environment based on their shared experiences, which influences how they feel and function within a given setting (Schneider, 1990). When applied to EBP implementation, strategic implementation climate refers to staff perceptions that the use of an EBP is expected, supported, prioritized, and recognized/rewarded within their school. Assessing the influence of implementation strategies on implementation climate represents a promising practice for translating EBPs into routine practice (Anonymized for peer review). However, the ability to do so hinges upon the existence of psychometrically sound, contextually appropriate, and relevant measures of implementation climate.

Structural, Convergent, and Divergent Validity of the SICS

The aim of this study was to confirm the underlying factor structure of the school-adapted version of the ICS with the additional three scales deemed relevant for school-based EBP implementation by research and practice experts: Existing Supports to Deliver EBPs, Use of Data to Support EBPs, and Integration of EBPs. Confirmatory factor analyses of the seven SICS scales with a second-order global implementation climate factor provided a good-fitting model for the data. Internal consistency estimates for all the 3-item scales were acceptable. Further, convergent and divergent relationships with other measures were consistent with prior research and our hypotheses, providing evidence for the construct validity of the SICS.

Rewarding EBP Implementation in Schools

Despite attempts to adapt the Reward construct to better align with the realities of the school context, inter-factor correlations with this construct were low to moderate (

SICS as a Pragmatic Measure

From a real-world implementation standpoint, Glasgow and Riley (2013) argued that implementation-oriented measures must be pragmatic; that is, measures should be actionable, sensitive to change, and important, yet not burdensome, to stakeholders. Findings from this study and previous studies of the ICS suggest that SICS meets or approaches each of the criteria of a pragmatic measure (Lewis et al., 2018). First, per focus group participants, the SICS includes important constructs for school-based implementation (Locke et al., 2019). Furthermore, the multigroup SEM analysis demonstrated that the SICS measurement properties are invariant across general and specific EBP references. This gives the SICS flexibility to meet the needs of each school's unique implementation context. Second, with lack of time being cited consistently as one of the biggest barriers to implementation (McGoey et al., 2014), the SICS includes only three items per subscale, reducing the time burden. Third, research from industrial/organizational psychology indicates that the constructs assessed in the SICS represent actionable targets for implementation strategies aimed at facilitating EBP uptake and use (e.g., Ehrhart et al., 2013). Last, prior intervention research demonstrated that the SICS's predecessor—the ICS—was sensitive to detecting changes in implementation climate, suggesting that the SICS may be sensitive to changes in school-based implementation climate (Aarons, 2017). This is an area that warrants additional research. Collectively, the above suggests that the SICS may be a low-burden, actionable, change-sensitive, and pragmatic measure. However, researchers should continue to explore the actual use of the SICS in real-world implementation conditions to gather evidence that demonstrates its pragmatic qualities.

Limitations and Future Directions

There are several limitations to consider when interpreting these findings. The first limitation is the sample, which was bound geographically to certain regions of the United States. Implementation climate is likely to vary across states and counties with different cultural and legislative emphases on EBP implementation, as well as funding available to support EBP materials, training, and coaching. A follow-up study including a sample of schools from diverse geographical, political, and cultural communities to determine the stability and generalizability of the factor structure is warranted. Similarly, we were only able to access public sources for student demographic data, which were quite limited for our participant sites. We were unable to capture anything related to the number, or percent, of students who identified with particular ethnic and racial communities, received special education services or similar wraparound supports, or experienced inequitable opportunity access based on their communities. These qualities of student communities within a school may influence the dynamics between teacher and student(s) when implementing a universal program and may also influence teachers’ perceptions of climate. It is unclear exactly what those influences and outcomes might be, and a future study involving implementation climate would benefit from the direct collection of student data on a variety of population characteristics to determine how implementation differs in response to differences in student population.

Another limitation is that the change sensitivity of the SICS was not assessed. This psychometric quality was beyond the current scope and purpose, which was to establish the general factor structure of the SICS. This allows schools to assess implementation climate at one time, ideally while the school is preparing large-scale implementation efforts. For schools undergoing change efforts to improve implementation climate, dynamic data on EBP implementation using the SICS could inform decision makers as to whether change efforts are working. Future research should use the SICS as a progress monitoring instrument and link changes in implementation climate to changes in implementation and student outcomes.

The validity evidence in this study is confined to the measures used to establish evidence of convergent and divergent validity. Although the observed relationships were consistent with hypothesized associations within and between implementation constructs and external measures, this study did not include measures of actual program implementation (e.g., dosage) or student outcomes (e.g., student behavior), as these were beyond the scope of this study. However, previous studies have demonstrated the relationship between the ICS (the measure upon which the SICS was developed) and implementation outcomes (e.g., Williams et al., 2018). Future research should aim to establish the magnitude of the relationship between

Finally, the focus groups for revising the initial ICS to fit the school context lacked certain stakeholders with potentially relevant insights including community partners and related service providers. This was done intentionally, as these individuals are not directly involved in the implementation of universal EBPs but may have valuable insight into the implementation climate.

Implications for School-Based Implementation Research and Practice

As schools face increasing demands from legislation (e.g., Act, 2015), professional organizations (e.g., NASP, 2010), and communities to use EBPs to address students’ social, emotional, behavioral, and academic needs, research is clear that schools need to prepare deliberately for implementation (Lyon & Bruns, 2019). The exploration and preparation phases of the EPIS framework are important phases of the implementation process, involving a comprehensive evaluation of the implementation context to establish organizational readiness for change. Organizational readiness creates the context for individuals within the system to exert greater effort and display more responsiveness to training and consultative support aimed at supporting effective implementation (Weiner, 2009). Without deliberate attention and action during this preparation phase, implementation efforts are likely to fail (Kotter, 2012). The SICS provides critical information that can be used during the preparation phase to create organizational readiness for change by identifying malleable organizational intervention targets. Empirical research on school organizational readiness for change is lacking, including understanding the role of general and implementation-specific organizational climate, as well as the effects of strategies on improving aspects of the implementation climate prior to initiating active implementation efforts.

Moreover, the SICS has utility during subsequent phases of the implementation process: implementation and sustainment (Aarons et al., 2011). The SICS provides a straightforward way of assessing a school's implementation climate while implementation is happening to examine factors that may obstruct or enable successful, sustainable delivery of an EBP. Once these factors are identified, data can inform the tailoring of site-specific action plans to address aspects of the school implementation climate by increasing staff perceptions that EBPs are expected, rewarded, recognized, and supported in their setting (Powell et al., 2019). Thus, the SICS can be used as part of a continuous quality improvement process during the active implementation phase.

Conclusion

Factors associated with the immediate context in which implementation happens influence the successful translation of EBPs into routine practice. Implementation climate has relevance at various phases of the implementation process, ranging from preparation to sustainment. The goal of this research was to provide a psychometrically sound and pragmatic instrument that can be used to inform implementation research and practice in schools. Although more research is needed to identify strategies to effect change in implementation climate, the SICS has potential as a useful tool to monitor the impact of those efforts and to assess how changes in implementation climate exert a cascade of effects on both implementation and student outcomes.

Supplemental Material

Supplemental material, sj-pdf-2-irp-10.1177_26334895221116065 for Construct validity of the school-implementation climate scale by Andrew J. Thayer, Clayton R. Cook, Chayna Davis, Eric C. Brown, Jill Locke, Mark G. Ehrhart, Gregory A. Aarons, Elissa Picozzi, and Aaron R. Lyon in Implementation Research and Practice

Footnotes

Acknowledgments

The authors wish to thank the teachers, administrators, and school staff who participated in this study.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr. Aaron Lyon is an Associate Editor of Implementation Research and Practice, and thus Dr. Lyon was not involved in any aspect of the peer review process for this manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project and publication were supported by Institute of Education Sciences (grants R305A160114 and R305A200023). Gregory Aarons was supported by National Institute of Mental Health (grant R03MH117493) and National Institute on Drug Abuse (grant R01DA049891).

Ethical Approval

Institutional Review Board approval was obtained from the University of Washington Human Subjects Division and partnering school districts’ research and evaluation departments, when applicable.

Supplemental Material

Supplemental material for this article is available online.
