Abstract

Background

Organizational readiness for change (ORC), referring to psychological and behavioral preparedness of organizational members for implementation, is often cited in healthcare implementation research. However, evidence about whether and under which conditions ORC is relevant for positive implementation results remains ambiguous, with past studies building on various theories and assessing ORC with different measures. To strengthen the ORC knowledge base, we therefore identified factors investigated in the empirical literature alongside ORC, or as mediators and/or moderators of ORC and implementation.

Method

We conducted a systematic review of experimental, observational, and hybrid studies in physical, mental, and public health care that included a quantitative assessment of ORC and at least one other factor (e.g., ORC correlate, predictor, moderator, or mediator). Studies were identified by searching five online databases and the bibliographies of included studies, employing dual abstract and full-text screening. The study synthesis was guided by the Consolidated Framework for Implementation Research integrated with the Theory of ORC. Study quality was appraised using the Mixed Methods Appraisal Tool.

Results

Of 2,907 identified studies, 47 met inclusion criteria, investigating a broad range of factors alongside ORC, particularly contextual factors related to individuals and the innovation. Various ORC measures, both home-grown and theory-informed, were used, confirming a lack of conceptual clarity surrounding ORC. In most studies, ORC was measured only once.

Conclusions

This systematic review highlights the broad range of factors investigated in relation to ORC, suggesting that such investigation may enhance interpretation of implementation results. However, the observed diversity in ORC conceptualization and measurement supports previous calls for clearer conceptual definitions of ORC. Future efforts should integrate team-level perspectives, recognizing ORC as both an individual and team attribute. Prioritizing the use of rigorous, repeated ORC measures in longitudinal implementation research is essential for advancing the collective ORC knowledge base.

Introduction

Organizational readiness for change (ORC) is a concept frequently considered in implementation science, often described as a linchpin between intentions, actual engagement, and observed outcomes in the implementation of new practices. When explaining the results of an implementation, be they positive, negative, or null findings, implementation scholars often point to ORC as one potential reason for any of these outcomes (Armenakis et al., 1993; Weiner, 2020). As a multidimensional concept, ORC is defined as “organizational members’ psychological and behavioral preparedness to implement change” (Weiner, 2020, p. 217). To explain why only half of all evidence-supported interventions (ESIs) make it into routine healthcare practice, scholars have pointed to ORC but also to contextual factors (Bauer & Kirchner, 2020), such as teamwork (Taylor et al., 2011), leadership (Aarons et al., 2016; Reichenpfader et al., 2015; Taylor et al., 2011), perceptions of trust (Vakola, 2013), implementation climate (Williams et al., 2020), resource availability (Helfrich et al., 2007), structural organizational characteristics (Taylor et al., 2011), or organizational climate (Kelly et al., 2018). A deeper examination of these factors can help to accelerate and enhance ESI implementation in health care. This applies particularly to ORC, as claims about its importance often have not been based on the use of nuanced theories or empirical prospective designs clearly linking ORC to implementation outcomes (Scaccia et al., 2015; Weiner, 2020).

Current evidence on how ORC influences ESI implementation in health care is ambiguous (Weiner, 2020). For example, Noe et al. (2014) used the Organizational Readiness to Change Assessment (ORCA; Helfrich et al., 2009) to assess ORC and capacity to provide culturally competent services for American Indian and Alaska Native veterans in Veterans Affairs facilities. The authors found that no ORCA subscale predicted implementation of native-specific services. In contrast, Becan et al. (2012) reported a positive association between subscales of the ORC Scale (Lehman et al., 2002) and ESI adoption in substance use treatment settings. There are manifold possible explanations for why these two studies reach different conclusions about the role of ORC.

First, the concept of ORC remains fuzzy despite many attempts to define its core and essence. In general, ORC conceptualizations entail psychological components (e.g., motivation), structural components (e.g., resources), or both. In Weiner's psychologically framed Theory of ORC (TORC), ORC consists of change commitment and change efficacy, with change commitment being organizational members’ shared resolve to show behaviors required by the change and change efficacy describing their shared sense of capability to pursue change behaviors (Weiner, 2009). According to the TORC, higher ORC scores result in higher change-related effort among organizational members, leading to positive implementation results (Weiner, 2009, 2020). However, this is only one of many definitions of ORC. Scaccia et al. (2015) define ORC as “the extent to which an organization is both willing and able to implement a particular innovation” (p. 485). Armenakis et al. (1993) define ORC as “organizational members’ beliefs, attitudes, and intentions regarding the extent to which changes are needed and the organization's capacity to successfully make those changes” (p. 681).

Second, based on different ORC conceptualizations, different ORC measurement tools have been developed. In a systematic review, Miake-Lye et al. (2020) identified 29 measures used for ORC assessments in health care. These measures range in scope and were developed for different settings. For instance, the 116-item ORC Scale was developed in an addiction treatment setting (Lehman et al., 2002), the 74-item ORCA (Helfrich et al., 2009) was developed within three quality improvement projects in the U.S. Veterans Health Administration, and the 41-item Readiness for Organizational Change Scale was developed in a government organization setting (Holt, Armenakis, Feild, & Harris, 2007). More recently, Shea et al. (2014) developed the pragmatic 12-item Organizational Readiness for Implementing Change (ORIC) scale across different settings.

Third, differing study needs and contexts have led to a substantial number of homegrown or adapted, often single-use ORC measures (Miake-Lye et al., 2020), contributing to a landscape of measures with limited validity and reliability (Gagnon et al., 2014; Holt, Armenakis, Harris, & Feild, 2007; Weiner et al., 2008). In a more recent review, Weiner et al. (2020) state that 72% of ORC measures were used once only.

Finally, scholars continue to measure ORC either retrospectively or at baseline only, without prospectively linking ORC to implementation outcomes. This phenomenon is likely perpetuated by misconceptions of “readiness” as a static, binary condition (i.e., present or absent) to be assessed prior to implementation as a precondition for pursuing efforts. This is problematic, as ORC fluctuates with the ever-changing circumstances of healthcare settings and therefore merits longitudinal measurement (Scaccia et al., 2015). Retrospective ORC measurements may therefore misrepresent the reality at the time of change initiation. Baseline ORC measurements come with a similar limitation, as baseline circumstances may fail to reflect the conditions present during implementation activities or when implementation outcomes are measured. These shortcomings hamper the interpretation of studies about the role of ORC for implementation (Weiner et al., 2020). Consequently, the importance of ORC for implementation in health care remains unclear.

These central challenges become apparent in the two ORC studies introduced above. Noe et al. (2014) and Becan et al. (2012) were based on different theories, which led to the use of different ORC measures. As summarized in Table 1, Noe et al. (2014) used the ORCA (Helfrich et al., 2009), developed based on the Promoting Action on Research in Health Services model (Kitson et al., 1998; Rycroft-Malone, 2004), which is reflected in the ORCA scales: evidence, context, and facilitation. Becan et al. (2012) measured ORC with the ORC scale (Lehman et al., 2002). Its underlying model is the Program Change Model (Simpson, 2002), which contains four domains: motivation for change, adequacy of resources, staff attributes, and organizational climate.

Table 1. Illustration of central challenges with two exemplary studies using different measures for organizational readiness for change

Taken together, the evidence surrounding ORC is ambiguous, offering limited knowledge about whether, how, and under which conditions ORC influences implementation. Moreover, other factors may influence implementation or moderate or mediate the role of ORC for implementation in health care. Furthermore, the importance of ORC can vary based on the degree to which implementation requires behavior change, implementer or end-user familiarity with these behaviors, or the use of implementation strategies.

Against this background, the overarching aim of this systematic literature review (SLR) is to identify studies reporting factors that—in relation to ORC—are potentially important for implementing change in healthcare settings. This SLR is part of a larger project aimed at identifying whether and under which conditions ORC influences change-related effort in implementing infection prevention and control practices in acute care. Its results will be used for a Coincidence Analysis, a case-based method rooted in Boolean algebra, designed to find factors that are necessary and sufficient for a given outcome (Whitaker et al., 2020). The SLR is therefore focused on identifying the breadth of factors—in relation to ORC—potentially influencing change-related effort as defined in the TORC (Weiner, 2020), further described below.
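To make the terms "necessary" and "sufficient" concrete, the following minimal sketch checks both properties on a handful of crisp-set cases. It only illustrates the Boolean definitions; it is not the Coincidence Analysis algorithm, which searches for minimal configurations of factors. All factor names and case values are hypothetical and are not data from this review.

```python
# Hypothetical crisp-set cases: each row is one implementation site.
# Factor names and values are illustrative only, not data from this review.
cases = [
    {"high_orc": True,  "leadership_support": True,  "high_effort": True},
    {"high_orc": True,  "leadership_support": False, "high_effort": False},
    {"high_orc": False, "leadership_support": True,  "high_effort": False},
    {"high_orc": True,  "leadership_support": True,  "high_effort": True},
]


def is_sufficient(condition, outcome, rows):
    """Sufficient: every case that shows the condition also shows the outcome."""
    return all(row[outcome] for row in rows if condition(row))


def is_necessary(condition, outcome, rows):
    """Necessary: every case that shows the outcome also shows the condition."""
    return all(condition(row) for row in rows if row[outcome])


def orc_alone(row):
    return row["high_orc"]


def orc_and_leadership(row):
    return row["high_orc"] and row["leadership_support"]


# In these made-up cases, high ORC is necessary but not sufficient on its own.
print(is_necessary(orc_alone, "high_effort", cases))
print(is_sufficient(orc_alone, "high_effort", cases))
# The conjunction of high ORC and leadership support is sufficient here.
print(is_sufficient(orc_and_leadership, "high_effort", cases))
```

In this toy data set, high ORC appears in every case with high effort but also in one case without it, which is the kind of pattern that would motivate looking for factors that act in combination with ORC.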

The research questions (RQs) to be answered are:

What factors have been investigated in the empirical literature in relation to ORC?

What factors have been investigated in the empirical literature as possible mediators or moderators of the relationship between ORC and implementation?

Methodology

We conducted an SLR allowing for the inclusion of experimental, nonexperimental, and quasi-experimental studies. This SLR was preregistered on PROSPERO (CRD42023368072) and reported based on the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Page et al., 2021). The PRISMA checklist is included in Supplemental Material A.

Theoretical underpinnings

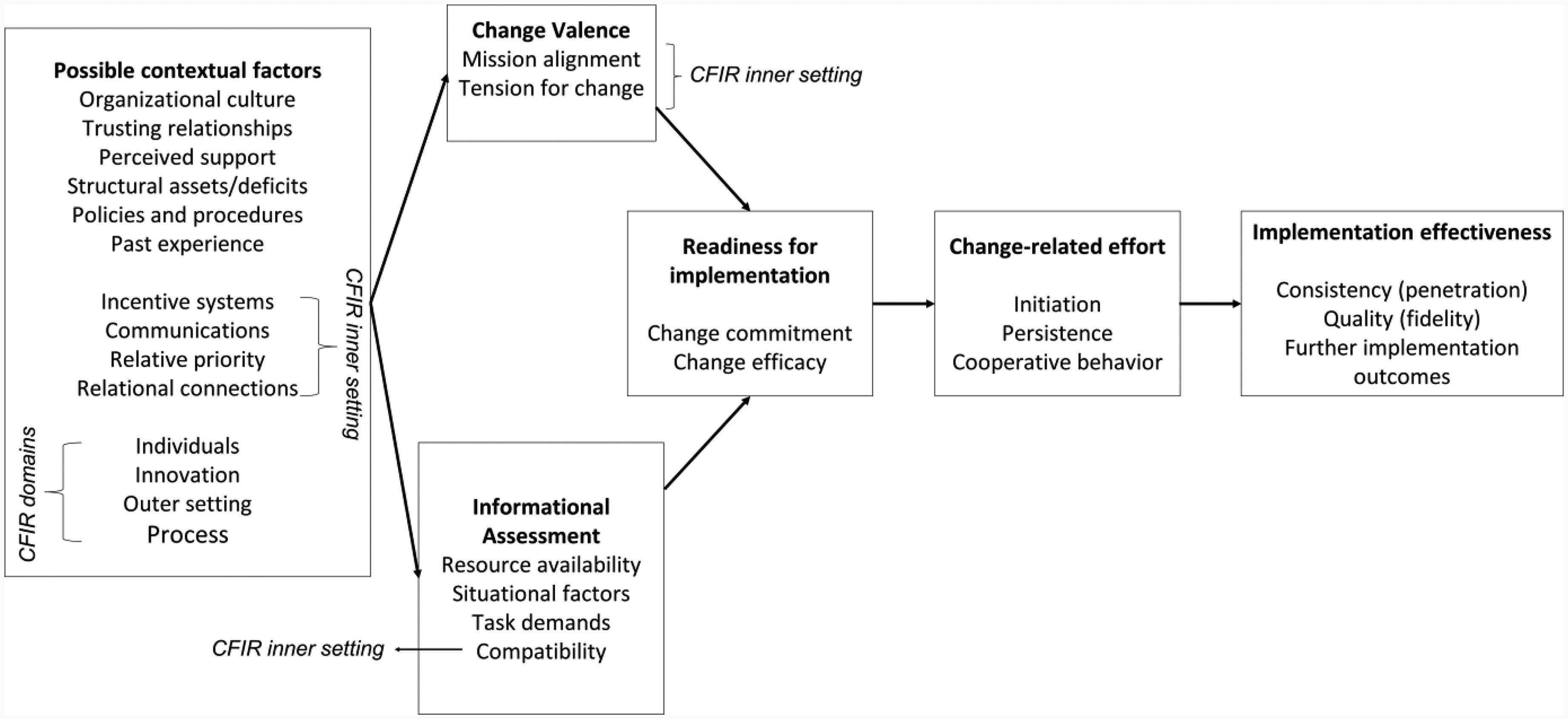

An integrated framework, including the TORC (Weiner, 2020) and the updated Consolidated Framework for Implementation Research (CFIR; Damschroder et al., 2022), guided the synthesis of included studies (Figure 1).

Figure 1. Guiding TORC-CFIR integrated framework used for this systematic review (adapted from Damschroder et al., 2022; Weiner, 2020). Further implementation effectiveness outcomes can be added to the TORC-CFIR framework as needed (e.g., acceptability, appropriateness, feasibility; Proctor et al., 2011).

The TORC describes change efficacy and change commitment as the two main ORC components. ORC is promoted by change valence and informational assessment, which are theorized as direct ORC determinants. Change valence describes the value that organizational members assign to the change, and informational assessment describes their cognitive combination of information about task demands, resource availability, and situational factors. Their interplay is assumed to be influenced by possible contextual factors that influence ORC indirectly through change valence and informational assessment. Possible contextual factors within the TORC include organizational culture and climate, policies and procedures, perceived organizational support, trusting relationships, and past experience. For example, depending on whether the change aligns with cultural values or not, organizational culture could influence change valence and affect ORC (Weiner, 2020).

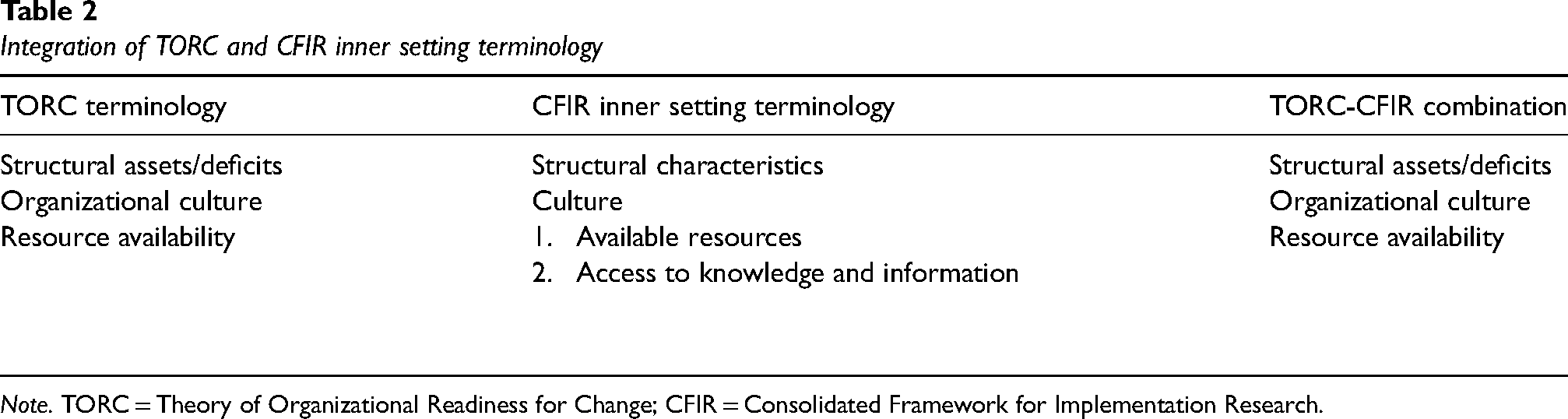

The usability of the TORC as guiding theory was enhanced by integrating CFIR domains. The CFIR is a determinant framework that outlines potential implementation barriers and facilitators. To combine the TORC and the CFIR, the CFIR domains individuals, innovation, outer setting, and implementation process were integrated into the possible contextual factors of the TORC. In anticipation of inner setting determinants playing a central role in ORC, we also integrated constructs of the CFIR inner setting domain into the TORC elements possible contextual factors, change valence, and informational assessment. Integrating CFIR domains in this way allowed for specifying and broadening the range of potential factors relevant to the RQs that guide this SLR. When TORC and CFIR terminologies overlapped, the term representing greater specificity regarding ORC and simultaneously minimizing ambiguity among factors was used (Table 2).

Table 2. Integration of TORC and CFIR inner setting terminology

Note. TORC = Theory of Organizational Readiness for Change; CFIR = Consolidated Framework for Implementation Research.

Eligibility criteria

Studies eligible for inclusion had to be conducted in health care settings. Furthermore, they had to include a quantitative ORC measurement and at least one additional reported factor (RF) studied quantitatively or qualitatively in relation to ORC. Regardless of the results that emerged in included studies, RFs could be investigated in relation to ORC in two ways: as direct or indirect predictors or correlates of ORC, or as possible mediators or moderators of the relationship between ORC and implementation. Table 3 details eligibility criteria.

Table 3. Eligibility criteria for inclusion of full-text articles

Note. RF = Reported Factors; PRISMA = Preferred Reporting Items for Systematic Reviews and Meta-Analyses.

Both criteria match the same reason for full-text exclusion in the PRISMA flowchart (Figure 2).

Information sources

A literature search was performed in August 2022. Five online databases were searched; these are listed in Supplemental Material B. Additionally, two reviewers checked the bibliographies of included studies for further relevant articles.

Search strategy

Key terms used in the search strategy were related to the following concepts and adapted for each database: healthcare, organization, readiness, change. Table 4 shows the search strategy used in MEDLINE. Supplemental Material B contains the search strategies used for each database.
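As an illustration of how concept blocks of this kind are typically combined, the sketch below joins synonyms within a concept with OR and joins the four concepts with AND. The four concept names come from the text; the individual terms are hypothetical placeholders and are not the terms used in this review, which are documented in Table 4 and Supplemental Material B.

```python
# The four concept blocks are named in the text; the terms inside each block
# are hypothetical placeholders, not the review's actual search terms.
concept_terms = {
    "healthcare": ["health care", "healthcare", "hospital*"],
    "organization": ["organization*", "organisation*"],
    "readiness": ["readiness", "prepared*"],
    "change": ["change", "implementation"],
}


def or_block(terms):
    """Join the synonyms of one concept with OR, quoting multi-word phrases."""
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(blocks):
    """Combine the concept blocks with AND so that every concept must match."""
    return " AND ".join(or_block(terms) for terms in blocks.values())


query = build_query(concept_terms)
print(query)
```

Database-specific syntax (field tags, subject headings, truncation symbols) would still need to be adapted for each database, as described above.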

Table 4. Search strategy for MEDLINE

Selection process

Two reviewers independently screened titles, abstracts, and full texts in duplicate against predefined eligibility criteria (Table 3) using Covidence (Covidence systematic review software, 2022). Reasons for full-text exclusion were recorded. Disagreements were resolved either by consensus discussion or by a third reviewer (B.A.).
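The logic of dual screening can be sketched as follows: decisions on which both reviewers agree are accepted, and records with conflicting decisions are flagged for consensus discussion or a third reviewer. This is a simplified illustration with hypothetical record identifiers and decisions; it does not reproduce Covidence's internal workflow or any Covidence interface.

```python
from typing import Dict, List, Tuple


def reconcile(
    reviewer_a: Dict[str, str], reviewer_b: Dict[str, str]
) -> Tuple[Dict[str, str], List[str]]:
    """Split dual-screening decisions into agreed decisions and conflicts."""
    agreed: Dict[str, str] = {}
    conflicts: List[str] = []
    # Only records screened by both reviewers can be compared.
    for record_id in sorted(reviewer_a.keys() & reviewer_b.keys()):
        if reviewer_a[record_id] == reviewer_b[record_id]:
            agreed[record_id] = reviewer_a[record_id]
        else:
            conflicts.append(record_id)
    return agreed, conflicts


# Hypothetical screening decisions, for illustration only.
reviewer_a = {"rec-001": "include", "rec-002": "exclude", "rec-003": "include"}
reviewer_b = {"rec-001": "include", "rec-002": "include", "rec-003": "include"}

agreed, conflicts = reconcile(reviewer_a, reviewer_b)
print("Agreed:", agreed)
print("To resolve by consensus or third reviewer:", conflicts)
```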

Data extraction

Pairs of independent reviewers extracted data from included studies using a predefined Microsoft Excel form. One reviewer (L.Ca.) extracted data from two additional studies identified through bibliography checks of included studies. Discrepancies were resolved by consensus discussion. Data were extracted for: publication author and year, study setting, study methodology, study design, data collection, change implemented, and ORC measure used. Furthermore, we extracted information from the methods and results sections of included studies on RFs investigated in relation to ORC (i.e., as predictors or correlates of ORC, or mediators or moderators of the relationship between ORC and implementation) and therefore relevant to our RQs.

Study risk of bias assessment

Due to the variety of study designs eligible for this SLR, reviewer pairs independently appraised study quality using the Mixed Methods Appraisal Tool (MMAT) version 2018 (Hong et al., 2018), developed for use in qualitative studies, randomized controlled trials, nonrandomized studies, quantitative descriptive studies, and mixed-methods studies. Reviewers discussed disagreements until consensus was reached. The MMAT provides a different set of five quality criteria for each study category, with each criterion rated “Yes” when it is met, “No” when it is not met, and “Can’t tell” when there is insufficient information to judge whether it is met. The authors of the MMAT discourage computing a numerical overall score of study ratings, as this would not provide information on specific low-quality aspects of the studies (Hong et al., 2018). Therefore, the MMAT was not used to inform inclusion, exclusion, or synthesis, but to provide indications of the overarching quality of included studies and their evidence, thereby helping to contextualize our findings in the broader landscape of ORC literature.
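One way to summarize such ratings without collapsing them into an overall score is to report, for each criterion, how many studies were rated "Yes", "No", or "Can't tell". The sketch below shows this under the assumption of five criteria per study; the study names and ratings are hypothetical and do not correspond to any appraisal in this review.

```python
from collections import Counter

RATINGS = ("Yes", "No", "Can't tell")
N_CRITERIA = 5  # the MMAT defines five criteria per study category

# Hypothetical appraisals for one study category, for illustration only.
appraisals = {
    "Study A": ["Yes", "Yes", "Yes", "Yes", "Yes"],
    "Study B": ["Yes", "Can't tell", "Yes", "No", "Yes"],
    "Study C": ["Yes", "Yes", "Can't tell", "Can't tell", "Yes"],
}


def per_criterion_profile(studies):
    """Count ratings per criterion instead of computing one overall score."""
    for name, ratings in studies.items():
        if len(ratings) != N_CRITERIA or any(r not in RATINGS for r in ratings):
            raise ValueError(f"Invalid ratings for {name}")
    profile = []
    for index in range(N_CRITERIA):
        counts = Counter(ratings[index] for ratings in studies.values())
        profile.append({rating: counts.get(rating, 0) for rating in RATINGS})
    return profile


def fully_met(studies):
    """List the studies rated 'Yes' on every criterion."""
    return [name for name, ratings in studies.items()
            if all(r == "Yes" for r in ratings)]


profile = per_criterion_profile(appraisals)
for number, counts in enumerate(profile, start=1):
    print(f"Criterion {number}: {counts}")
print("All criteria met:", fully_met(appraisals))
```

Keeping the counts per criterion preserves exactly the information an overall score would hide, namely which quality aspects are most often unmet or unreported.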

Synthesis of findings

Two reviewers (B.A. and L.Ca.) conducted the synthesis of RFs in two steps:

Clustering of similar RFs: RFs were listed by name as reported in studies, and then grouped according to topical similarity, using construct definitions provided in the CFIR (Damschroder et al., 2022) and the TORC (Weiner, 2020). Each group of factors was then labeled to represent their key commonality. These are the synthesized factors (SFs).

Allocation of SFs to framework constructs: SFs were allocated to TORC-CFIR constructs as described in Table 5. Each SF was assigned to one framework construct only.
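The two synthesis steps can be pictured as two mappings: reported factors are grouped under a synthesized factor, and each synthesized factor is allocated to a single framework component. The sketch below is a simplified illustration. The reported-factor names are hypothetical, and the framework is reduced to three broad components, whereas the actual allocation used the more detailed constructs described in Table 5.

```python
from typing import Dict, List

# Simplified assumption: three broad TORC-CFIR components.
FRAMEWORK_COMPONENTS = {
    "possible contextual factors",
    "informational assessment",
    "change valence",
}

# Step 1: reported factors (RFs) grouped under a synthesized factor (SF).
# RF names are hypothetical examples.
sf_members: Dict[str, List[str]] = {
    "individual demographics": ["age", "gender"],
    "resource availability": ["staff availability", "access to expertise"],
    "mission alignment": ["fit with organizational mission"],
}

# Step 2: each SF is allocated to exactly one framework component.
sf_to_component: Dict[str, str] = {
    "individual demographics": "possible contextual factors",
    "resource availability": "informational assessment",
    "mission alignment": "change valence",
}


def allocate(members, allocation):
    """Return component -> list of SFs, checking every SF has one valid home."""
    by_component: Dict[str, List[str]] = {}
    for sf in members:
        component = allocation.get(sf)
        if component not in FRAMEWORK_COMPONENTS:
            raise ValueError(f"SF '{sf}' has no valid framework component")
        by_component.setdefault(component, []).append(sf)
    return by_component


allocation_result = allocate(sf_members, sf_to_component)
for component, sfs in allocation_result.items():
    print(f"{component}: {sfs}")
```

Storing the allocation as a dictionary keyed by SF enforces the rule that an SF cannot belong to two constructs at once.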

Table 5. Allocation of SFs to framework constructs (synthesis step 2)

Note. TORC = Theory of Organizational Readiness for Change; CFIR = Consolidated Framework for Implementation Research; SFs = synthesized factors.

Results

Identified studies

After de-duplication and abstract screening, we assessed 346 full texts for eligibility. We identified 47 studies for inclusion through database searches (n = 45) and bibliography screening (n = 2). The PRISMA flowchart (Figure 2) displays the screening process, including reasons for full-text exclusion.

Figure 2. PRISMA flowchart. * Hierarchy for exclusion from top to bottom.

Study quality

Supplemental Material C presents quality ratings of quantitative studies, divided into quantitative descriptive studies (n = 27), nonrandomized studies (n = 1), and randomized controlled studies (n = 2), as well as quality ratings of mixed-methods studies (n = 17), all of which used quantitative descriptive designs.

Among the 30 quantitative studies, only five received “Yes” ratings across MMAT criteria. For most studies (n = 18), one or two MMAT criteria were assessed negatively, or could not be assessed at all. This particularly applied to the criteria about nonresponse bias (n = 19) and sample representativeness (n = 14).

Across the 17 mixed-methods studies, the qualitative elements were generally rated as satisfactory, with 12 of these being positively assessed across all MMAT criteria. One study (Chang et al., 2013) received no positive rating for its qualitative part but solely positive ratings for its quantitative part. The quality of the quantitative sections of these mixed-methods studies was generally assessed as moderate, with only five studies being rated as solely positive across quantitative MMAT criteria. The remaining studies had either three to four (n = 9) or one to two (n = 3) positive ratings, with information lacking for the same criteria that dominated the quantitative studies: nonresponse bias and sample representativeness. The mixed-methods section was generally rated more critically, with only two studies (Elango et al., 2018; Hearld et al., 2022) achieving a purely positive assessment across all mixed-methods criteria. A further seven studies received three to four positive ratings, and the remaining eight studies had one or two positive ratings. Information was lacking especially for two MMAT criteria: addressing divergences between qualitative and quantitative results (n = 13) and adherence to quality criteria established for the two methodological traditions (n = 15).

Study characteristics

Studies reported on the implementation of various changes across mental, behavioral, physical, and preventive health settings. Table 6 displays the characteristics of included studies, including study country and setting, change implemented, healthcare sector, ORC measures used, and reported ORC results.

Table 6. Main study characteristics

Note. + = in mixed methods studies, n1 = quantitative sample size, n2 = qualitative sample size; * = mixed methods study; ‡ = study investigating RQ2, that is, factors investigated as possible mediators or moderators of ORC and implementation.

ORIC = Organizational Readiness for Implementing Change Scale (Shea et al., 2014).

ROC = Readiness for Organization Change (Holt et al., 2007a).

ORC = Organizational Readiness for Change Scale, the ORC-SA was designed for social service agencies and the ORC-S was designed for substance abuse treatment agencies (Lehman et al., 2002).

MORC = The Medical Organizational Readiness for Change (Bohman et al., 2008).

ORCA = Organizational readiness to change assessment instrument (Helfrich et al., 2009).

OITIRS = Organizational Information Technology Innovation Readiness Scale (Snyder-Halpern, 2002), of which only organizational readiness scale is considered for this SLR.

TCU ORC = Texas Christian University Organizational Readiness for Change scales (Lehman et al., 2002).

Items not reported, Myers et al. (2017).

Scale items of the self-developed measure included “recognition of existing safety problems,” “knowledge of how to tackle safety problems,” “systems and infrastructure to support safety improvement” (Pinto et al., 2011).

Source of instrument not reported.

Allred et al. (2005). Lundgren et al. (2013) builds on the previously conducted study by Lundgren et al. (2012). Peracca et al. (2021) and Peracca et al. (2023) are both studies as part of a larger trial (Done et al., 2018).

Research questions

Out of 47 included studies, 44 reported on factors falling under RQ1, that is, factors investigated in relation to ORC (e.g., Adelson et al., 2021; Saleh et al., 2016). Two studies investigated factors related to RQ2, that is, factors investigated as possible mediators or moderators of ORC and implementation (i.e., Chang et al., 2013; Gallant et al., 2023). For example, Chang et al. (2013) investigated the relation between ORC and the implementation of depression care models and found that some ORC subscales were related to the adoption of these models. Gallant et al. (2023) explored mediators between ORC and behavioral intentions to adopt an automated pain management monitoring system and found that effort and performance expectancy partially mediated the relationship between ORC and the intention to adopt this system. One study reported RFs matching both RQs (Peracca et al., 2021). The RFs and SFs investigated in relation to the RQs are listed in Table 6. A detailed account of SFs is provided in Supplemental Material D.

Study design and methodology

Forty-one studies used a nonexperimental or observational design, four used an experimental design, and two a hybrid design. Thirty studies used quantitative methods, whereas 17 studies employed mixed methods.

Study settings

Studies were conducted in a broad range of in- and out-patient settings. Health care organizations included acute care hospitals (regional, tertiary, pediatric, and university hospitals) or specific units within hospitals, such as neonatal intensive care units or emergency departments. Furthermore, outpatient studies were conducted in specialized clinics, primary care practices and centers, community health centers, substance use treatment centers, long-term care facilities, nursing homes, and dental care practices, among other settings. Different types of Veterans Affairs facilities were used in studies conducted in the United States.

ORC measures used

Various quantitative ORC measures (or adaptations thereof) were administered in included studies. The most frequently used measure was the ORIC (18 studies), which Shea et al. (2014) developed based on the TORC. Five studies used the ORCA (Helfrich et al., 2009), and four each used the Readiness for Organization Change (ROC; Holt, Armenakis, Feild, & Harris, 2007), ORC (Lehman et al., 2002), and Texas Christian University ORC (TCU ORC; Lehman et al., 2002) scales. One study measured ORC with the Organizational Information Technology Innovation Readiness Scale (Snyder-Halpern, 2002). The remaining 11 studies used self-developed items, adaptations from existing surveys, or did not clearly report their ORC measures. Table 6 displays ORC measures used for each study.

Timing, frequency and level of ORC measurements

The timing and frequency with which ORC was measured are visualized in Table 7. Nineteen studies measured ORC before implementation only, eight studies reported having measured ORC during implementation only, and four studies measured ORC retrospectively, after implementation only. Three studies measured ORC before and during implementation, and three measured ORC before, during, and after implementation. The timing of ORC measurement was unclear in ten studies. The number of ORC measurements within a study ranged from one to 12 across 46 studies, with 40 reporting single ORC measurements. The frequency with which ORC was measured was unclear in one study.
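The counts in this paragraph can be cross-checked with simple arithmetic. The sketch below uses only the numbers reported above; the variable names are our own, and the final comparison is a consistency check rather than a result reported by the included studies.

```python
# Timing categories and counts as reported in the paragraph above.
timing_counts = {
    "before only": 19,
    "during only": 8,
    "after only (retrospective)": 4,
    "before and during": 3,
    "before, during, and after": 3,
    "unclear": 10,
}
total_included = 47

# The timing categories should account for every included study.
assert sum(timing_counts.values()) == total_included

# Frequency was clear in 46 studies and unclear in one.
frequency_clear = 46
frequency_unclear = 1
assert frequency_clear + frequency_unclear == total_included

# Of the 46 studies with a clear frequency, 40 measured ORC once.
single_measurement = 40
repeated_measurement = frequency_clear - single_measurement

# Studies that measured ORC in more than one implementation phase.
multi_phase = (timing_counts["before and during"]
               + timing_counts["before, during, and after"])

print("Studies with repeated ORC measurement:", repeated_measurement)
print("Studies measuring ORC in more than one phase:", multi_phase)
```

Both figures come to six, which is consistent with repeated measurement being confined to the studies that assessed ORC in more than one implementation phase.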

Table 7. Timing and frequency of ORC measurement per study

Note. 1ORC = Organizational Readiness for Change. Light gray cells indicate that the timing of ORC measurement varied across the study sites.

(a) Some sites were planning to implement the change, whereas others had already implemented it.

(b) Some sites were preparing for implementation, some had already begun implementing, and some had withdrawn by the time of the readiness assessment.

With ORC representing a collective rather than an individual construct, all studies measured ORC at the organizational level, as specified by the respective ORC measures used. Five studies additionally indicated team-level considerations in their ORC measurements. For example, Akande et al. (2019) worded items as referring to a group (e.g., “we know…” instead of “I know…”) to intentionally emphasize a team perspective in their ORC measurement. Further examples are shown in Supplemental Material E.

Synthesized factors

Figure 3 portrays a detailed landscape of SFs investigated in combination with ORC or investigated as potential mediators or moderators of the relationship between ORC and implementation, mapped to the TORC-CFIR framework. Overall, Figure 3 reflects that most SFs identified relate to the possible contextual factors component of the TORC-CFIR framework. Less prominently reported were SFs related to informational assessment, followed by those falling under change valence.

Figure 3. Synthesized factors (SFs) assigned to TORC-CFIR framework

Prominent among possible contextual factors are individual traits investigated in relation to ORC. These range from individual- (e.g., age, gender) and job-related demographics (e.g., seniority, years of experience) to psychological constructs (e.g., attitudes, self-efficacy). SFs related to the implemented innovation also had a focal role, with innovation acceptability, complexity, sustainability, and perceived effectiveness being central examples. Conversely, there was a notable absence of possible contextual factors for the TORC element policies and procedures and the CFIR domain outer setting, both of which are related. Furthermore, the CFIR inner setting constructs incentive systems, relative priority, and relational connections lack representation across included studies.

Within the TORC determinant informational assessment, resource availability has a striking presence among included studies, with most SFs belonging here, ranging from general availability of resources to more specific resources such as knowledge, team member availability, and access to interdisciplinary expertise.

The TORC change valence determinant includes SFs about change valence itself, and SFs about mission alignment. Contrary to informational assessment, change valence is underrepresented among the SFs investigated in combination with ORC in healthcare studies.

Discussion

With this SLR, we provide an overview of factors examined in relation to ORC and of potential relevance for explaining implementation results in healthcare studies, be these positive, negative, or null findings. We synthesized the empirical healthcare literature on factors investigated in relation to ORC, including possible mediators or moderators of the relationship between ORC and implementation. Forty-seven studies were included in this review. Factors were mostly investigated in relation to ORC rather than as possible mediators or moderators of the relationship between ORC and implementation. The limited number of studies linking ORC to implementation identified with this SLR confirms the previously critiqued shortage of studies examining ORC prospectively. In the context of the TORC-CFIR framework that guided this SLR (Figure 1), most factors reported were possible contextual factors, with the focus being on individual traits and the innovation itself. Less examined was the informational assessment determinant, with most factors representing aspects of resource availability. Least represented was the ORC determinant change valence.

Our results show that an extensive variety of factors has been researched in relation to ORC, with only a few factors investigated across multiple studies, and many factors having been the focus of a single study or a few studies only. These factors are similar, but because they rest on slightly different concepts, they were assigned to different TORC-CFIR framework elements. The diversity of ORC conceptualizations and theories, in combination with this somewhat diffuse landscape of factors synthesized from the empirical literature, highlights the need to enhance the conceptual clarity surrounding ORC (Holt, Armenakis, Harris, & Feild, 2007; Kelly et al., 2017).

Whether and how change valence influences ORC remains unclear. While the implicit assumption that ORC may be stronger if organizational members value an intended change may seem intuitive, ORC studies focusing on this aspect are scarce. One reason may be that some scholars view change valence as an ORC component (e.g., Armenakis et al., 1993), whereas change valence is conceptualized as an ORC determinant in the TORC guiding this SLR (e.g., Weiner, 2020). Viewing change valence as a determinant or a component of ORC is a substantial conceptual difference, which highlights the importance of transparent theory use and conceptualization when measuring ORC. To set the conceptual boundaries of the theory and measure used, a clear description of the underlying constructs measured as part of an ORC assessment is indispensable.

Simultaneously, these findings illustrate two major obstacles to advancing the field. First, as already highlighted, a lack of consensus about core ORC components hinders attempts to clearly define ORC and its conceptual boundaries. This may be due to the common use of “readiness” in everyday language (Weiner, 2020). Hence, scholars may mistakenly assume a preexisting shared understanding of “organizational readiness for change.” Validation studies of ORC measures are one attempt to create definitions of ORC. However, the plethora of available measures mirrors insufficient uniformity in ORC definition and conceptualization.

This lack of conceptual clarity exacerbates the second obstacle to advancing ORC research: the need for more nuanced theorizing about why certain implementation results occur, how ORC might contribute to such results, and which causal mechanisms link implementation determinants, strategies, and proximal and distal outcomes (Lewis et al., 2018, 2022). More nuanced, causal theories will help identify what might influence ORC, for example, in the form of national or regional culture, organizational structures, or team-level dynamics, and why these factors have such influences.

Most factors categorized in this SLR as possible contextual factors were defined at the individual level. This mirrors a tendency in ORC research to examine individual factors with the aim of understanding ORC, which contrasts with the notion of ORC as a collective construct (Scaccia et al., 2015; Weiner et al., 2020) rather than an individual trait. It also warrants caution, as social scientists have long discussed the problem of data aggregation to a higher unit of analysis when researching multidimensional constructs (Chan, 2019), such as ORC. In healthcare settings, change is frequently implemented at a collective level, demanding behavior change from various individuals to achieve anticipated benefits (Michie et al., 2018). Hence, different roles with interdependent responsibilities contribute to the implementation effort, which can be likened to a “team sport.” This implies that the sum of single individuals changing behaviors will not be sufficient for an organization to fully establish collective change (Weiner, 2020), suggesting that ORC cannot be sufficiently captured by aggregating individual-level measurements.

Furthermore, insights into how individual factors connect to ORC are only meaningful if they are of practical relevance, that is, if they can be leveraged by an organization when implementing change. Individual factors that are rarely modifiable have limited practical relevance (e.g., personality traits, attitudes, personal values), whereas others (e.g., staff seniority, job position) are within organizational control and may be investigated. Therefore, when implementing collective behavior change interventions, there may be value in examining higher-level dynamics and characteristics, at either the team level (e.g., team member composition, teamwork) or the organizational level (e.g., formal recognition of workflows, hierarchical structures), as implementation teams or the organization can influence these (Rafferty et al., 2013; Weiner, 2020). Team research offers various conceptual frameworks (i.e., composition models) specifically addressing issues related to measurement across multiple levels of analysis (Chan, 1998, 2019).
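To make the aggregation problem concrete, the following minimal sketch computes ICC(1), one commonly used index of how much variance in individual scores is attributable to group membership, from a one-way random-effects ANOVA. It is offered as an illustration only: the included studies and the cited composition models are not claimed to use this index, and the function name `icc1`, the team labels, and all scores are hypothetical.

```python
from statistics import mean


def icc1(groups):
    """ICC(1) from a one-way random-effects ANOVA.

    `groups` is a list of lists; each inner list holds the individual
    scores (e.g., hypothetical ORC survey means) of one team.
    """
    sizes = [len(g) for g in groups]
    n_total = sum(sizes)
    n_groups = len(groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Between-team and within-team sums of squares.
    ss_between = sum(n * (mean(g) - grand_mean) ** 2
                     for n, g in zip(sizes, groups))
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    ms_between = ss_between / (n_groups - 1)
    ms_within = ss_within / (n_total - n_groups)

    # Adjusted average team size, which handles unequal team sizes.
    k0 = (n_total - sum(n * n for n in sizes) / n_total) / (n_groups - 1)

    return (ms_between - ms_within) / (ms_between + (k0 - 1) * ms_within)


# Hypothetical individual-level scores for three teams (assumed data).
teams = {
    "ward_a": [4.0, 4.5, 4.2, 3.9],
    "ward_b": [2.1, 2.8, 2.5],
    "ward_c": [3.5, 3.0, 3.6, 3.2, 3.4],
}

print(round(icc1(list(teams.values())), 3))
```

A value near 1 indicates that most variance lies between teams, which would lend some support to treating the team mean as a team-level score; a value near or below 0 suggests that aggregation would mask individual differences. Any threshold for "sufficient" agreement is a judgment call that should follow from the chosen composition model.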

The authors of a recent review mapping items of ORC assessments to the CFIR (Miake-Lye et al., 2020) suggested capturing the team level in the conceptualization of ORC as a further unit of analysis. Similarly, Weiner et al. (2020) argue that ORC is important to consider at the individual, group, and organizational level, while Vakola (2013) reports that groups influence organizational members’ behaviors. Our SLR adds to this debate by highlighting that such an intermediate team level is rarely the focus of studies examining ORC and related factors. In our sample, little attention was paid to the role of teams in (a) ORC measurements and (b) the factors investigated in relation to ORC. As the unit responsible for or affected by an implementation, the team is represented, and only to a limited extent, in just five studies’ ORC measurements (e.g., Akande et al., 2019; Scales et al., 2017). Further, only one study (Rodriguez et al., 2016) examined, as part of the factors reported alongside ORC, the relations between teamwork, team member availability, and ORC in an effort to improve diabetes care in a community health center. However, if, as suggested previously, implementation is understood as a team sport involving different team members with distinct responsibilities, examining team characteristics, dynamics, and functioning in implementation would contribute to the knowledge base surrounding ORC and provide an opportunity to enhance implementation science (Chan, 2019).

Finally, this SLR confirms ORC measurement challenges highlighted in previous publications (Gagnon et al., 2014; Weiner et al., 2008). While there is increasing consensus that ORC can fluctuate throughout implementation (Scaccia et al., 2015; Weiner et al., 2020), its measurement continues to be primarily based on single (baseline and/or retrospective) timepoints. These flaws (retrospective and single-time assessments) are inconsistent with how ORC is inherently understood, both in common sense and in the scientific literature (Scaccia et al., 2015; Weiner, 2020).

In everyday language, the term “readiness” suggests future orientation, making retrospective inquiry about “being ready” nonsensical. Retrospective assessment therefore prevents meaningful conclusions about change implementation. Additionally, many ORC measures are self-report measures and hence are subject to social desirability and/or recall bias; they may even be affected by respondents’ situational mood (Martinez et al., 2014; Podsakoff et al., 2003). This emphasizes a need to integrate and triangulate multiple data types, for example, observational and administrative data, when measuring ORC (Martinez et al., 2014).

Furthermore, implementation is a nonlinear process (Rapport et al., 2018), during which change is implemented sequentially, to reach extended uptake beyond the “logical endpoint of implementable interventions” (Rapport et al., 2018, p. 119; Figure 1). Accordingly, change is likely implemented in multiple stages, necessitating repeated ORC measurements at different timepoints, since ORC may differ as organizations and their members move through different stages of a change.

In summary, the synthesis of studies included in this SLR depicts the current state of ORC research, calling for improved conceptual clarity around ORC, more nuanced theorizing, and consideration of the team level. This need for more rigorous ORC research is further mirrored in the generally moderate quality ratings of the included studies, which point to limitations especially in quantitative studies and in the quantitative and mixed-methods components of mixed-methods studies.

Implications

This SLR contributes to our understanding of ORC by identifying a collection of factors that have been investigated in relation to ORC in health care. As many constructs overlap with ORC (Bouckenooghe, 2010; Weiner, 2020), measuring ORC in isolation from other potentially relevant factors runs the risk of overlooking otherwise important influences on change implementation and thus of developing flawed explanations for implementation results. Although we found suboptimal study quality in line with prior critiques in the field, the factors emerging from this work provide a starting point for their selection and subsequent investigation in combination with ORC to provide richer interpretation and contribute to a deeper understanding of implementation outcomes.

In doing so, implementation scientists should build future ORC studies on transparent and coherent definitions of ORC, preferably using ORC theories to enhance the scientific knowledge base. Furthermore, empirical tests of ORC theories prospectively linking ORC to implementation outcomes are urgently needed to advance current best knowledge on whether and under which conditions ORC matters (Weiner, 2020). Ideally, this work would also focus more strongly on how to understand and measure ORC at the team level and make room for longitudinal research that allows ORC to be studied prospectively, over the course of multiple implementation stages, using multiple measurement points.

While waiting for a more consolidated evidence base to develop, implementation (support) practitioners are well advised to use the scarce but existing evidence to inform their work in considering, assessing, and developing ORC prior to and during implementation processes. This implies using the existing ORC models, theories, and measures of greatest relevance to a given setting. In monitoring ORC and using ORC data to inform practice decisions, the use of these measures can be complemented with information gathered through previous experience with implementing change in an organization, observations, or other data collection. This will also allow for internal and external stakeholder engagement at different stages of change implementation, which is generally recommended (Gopichandran et al., 2016) and relevant to ensure continuity of implementation (Pellecchia et al., 2022). Stakeholder engagement may be of particular value when ORC remains fragile and needs to be reassessed, for example, when change implementation is disrupted due to unexpected local circumstances or failed implementation strategies.

Limitations

This study has limitations that should be considered when using the presented findings. First, we may have missed factors investigated in relation to ORC or as possible moderators or mediators between ORC and implementation, as we (a) included studies from the healthcare sector only, and (b) excluded studies that measured ORC qualitatively. Second, we excluded studies that measured ORC only, without linking ORC to potential correlates or predictors, or without exploring potential mediators or moderators between ORC and implementation. However, as shown in Miake-Lye et al. (2020), some ORC assessments capture a broader range of constructs than others. ORC studies that were excluded because they did not investigate further factors may have used broader ORC assessments that include potentially relevant factors, which we may have missed. This leads to a third limitation related to our framework use. We mapped identified SFs to TORC-CFIR framework components. However, considering the items of ORC assessment instruments used in included studies was beyond the scope of this SLR. Investigating these in greater detail would have helped to uncover potential overlap between ORC assessment items and SFs, thereby also identifying factors that are not part of any ORC assessment but may be relevant to investigate alongside ORC, further detailing the overview provided through this SLR. Pursuing these leads in future ORC-focused SLR work would be a meaningful contribution.

Conclusions

This systematic literature review of studies examining factors in relation to ORC highlights the still somewhat fuzzy boundaries that characterize ORC as it is reported and discussed in implementation science. Despite existing ORC theories, measures, and studies, subtle conceptual differences impact how ORC and related factors can be categorized, understood, and utilized to unify current best ORC knowledge. While we provide an overview of factors potentially relevant alongside ORC to interpreting implementation results, the precise role of ORC in implementation remains unclear. Our findings suggest that the assumption that ORC influences implementation needs reevaluation, calling for enhanced collaboration between implementation science and practice to enable ORC implementation research of greater conceptual clarity, rigor, and relevance.

Supplemental Material

Supplemental material (sj-pdf-1-irp-10.1177_26334895251334536 through sj-pdf-5-irp-10.1177_26334895251334536) for Organizational readiness for change: A systematic review of the healthcare literature by Laura Caci, Emanuela Nyantakyi, Kathrin Blum, Ashlesha Sonpar, Marie-Therese Schultes, Bianca Albers and Lauren Clack in Implementation Research and Practice

Footnotes

Acknowledgements

We thank Dr Martina Gosteli, the liaison librarian of the University of Zurich, for her contributions to this systematic literature review.

Consent for publication

All authors gave their written consent for the publication of this manuscript.

Data availability statement

Data may be shared upon reasonable request to the corresponding author.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the European Union's Horizon 2020 Research and Innovation Program (Grant No. 965265, REVERSE).

Supplemental material

Supplemental material for this article is available online.
