Abstract

Background:

Organizational culture, organizational climate, and implementation climate are key organizational constructs that influence the implementation of evidence-based practices. However, there has been little systematic investigation of the availability of psychometrically strong measures that can be used to assess these constructs in behavioral health. This systematic review identified and assessed the psychometric properties of measures of organizational culture, organizational climate, implementation climate, and related subconstructs as defined by the Consolidated Framework for Implementation Research (CFIR) and Ehrhart and colleagues.

Methods:

Data collection involved search string generation, title and abstract screening, full-text review, construct assignment, and citation searches for all known empirical uses. Data relevant to nine psychometric criteria from the Psychometric and Pragmatic Evidence Rating Scale (PAPERS) were extracted: internal consistency, convergent validity, discriminant validity, known-groups validity, predictive validity, concurrent validity, structural validity, responsiveness, and norms. Extracted data for each criterion were rated on a scale from −1 (“poor”) to 4 (“excellent”), and each measure was assigned a total score (highest possible score = 36) that formed the basis for head-to-head comparisons of measures for each focal construct.

Results:

We identified full measures or relevant subscales of broader measures for organizational culture (n = 21), organizational climate (n = 36), implementation climate (n = 2), tension for change (n = 2), compatibility (n = 6), relative priority (n = 2), organizational incentives and rewards (n = 3), goals and feedback (n = 3), and learning climate (n = 2). Psychometric evidence was most frequently available for internal consistency and norms. Information about other psychometric properties was less available. Median ratings for psychometric properties across categories of measures ranged from “poor” to “good.” There was limited evidence of responsiveness or predictive validity.

Conclusion:

While several promising measures were identified, the overall state of measurement related to these constructs is poor. To enhance understanding of how these constructs influence implementation research and practice, measures that are sensitive to change and predictive of key implementation and clinical outcomes are required. There is a need for further testing of the most promising measures, and ample opportunity to develop additional psychometrically strong measures of these important constructs.

Plain Language Summary

Organizational culture, organizational climate, and implementation climate can play a critical role in facilitating or impeding the successful implementation and sustainment of evidence-based practices. Advancing our understanding of how these contextual factors independently or collectively influence implementation and clinical outcomes requires measures that are reliable and valid. Previous systematic reviews identified measures of organizational factors that influence implementation, but none focused explicitly on behavioral health; focused solely on organizational culture, organizational climate, and implementation climate; or assessed the evidence base of all known uses of a measure within a given area, such as behavioral health–focused implementation efforts. The purpose of this study was to identify and assess the psychometric properties of measures of organizational culture, organizational climate, implementation climate, and related subconstructs that have been used in behavioral health-focused implementation research. We identified 21 measures of organizational culture, 36 measures of organizational climate, 2 measures of implementation climate, 2 measures of tension for change, 6 measures of compatibility, 2 measures of relative priority, 3 measures of organizational incentives and rewards, 3 measures of goals and feedback, and 2 measures of learning climate. Some promising measures were identified; however, the overall state of measurement across these constructs is poor. This review highlights specific areas for improvement and suggests the need to rigorously evaluate existing measures and develop new measures.

Introduction

Because most behavioral health services are delivered within or through organizations (Aarons et al., 2018), organizational context plays a critical role in determining successful implementation of evidence-based practices (EBPs; Aarons et al., 2014, 2018; Glisson & Williams, 2015). Consequently, organizational context is included in ~95% of implementation frameworks (Tabak et al., 2012). The purpose of this systematic review was to identify and assess the psychometric properties of measures of organizational culture, organizational climate, and implementation climate used in behavioral health-related implementation studies. We drew upon conceptualizations of these constructs and related subconstructs from the Consolidated Framework for Implementation Research (CFIR; Damschroder et al., 2009) and from the book by Ehrhart, Schneider, et al. (2014) (see Table 1).

Table 1. Construct definitions.

Note. Definitions for all constructs aside from organizational climate are drawn from Additional File 3 of Damschroder et al. (2009). The definition of organizational climate is taken from Ehrhart, Schneider, et al. (2014).

Organizational culture

Organizational culture is defined as “. . . the shared values and basic assumptions that explain why organizations do what they do and focus on what they focus on” (Schneider et al., 2017, p. 468). There are debates regarding its definition and measurement (Aarons et al., 2018; Ehrhart, Schneider, et al., 2014; Kimberly & Cook, 2008; Schein, 2017; Verbeke et al., 1998). Verbeke and colleagues (1998) identified 54 definitions of organizational culture, and Aarons and colleagues (2018) described six different measures that use 3–10 different dimensions each to measure culture (e.g., involvement, adaptability, mission, attention to detail, aggressiveness, innovation, supportiveness, leadership, planning, communication, hierarchy, proficiency, and apathy), with very little overlap. In behavioral health, organizational culture has been empirically linked to attitudes toward EBPs, sustainment, access to services, service quality, staff turnover, and mental health outcomes (Glisson & Williams, 2015).

Organizational climate

Organizational climate is defined as “the shared meaning organizational members attach to the events, policies, practices, and procedures they experience and the behaviors they see being rewarded, supported, and expected” (Ehrhart, Schneider, et al., 2014, p. 69). Scholars differentiate molar organizational climate from focused climates. Molar conceptualizations refer to the extent to which leadership provides positive experiences for employees (Aarons et al., 2018) and can include dimensions such as engagement, functionality, and stress (Glisson et al., 2008). In behavioral health, molar organizational climate has been empirically linked to service quality, treatment planning decisions, attitudes toward EBPs, staff turnover, and mental health outcomes (Glisson & Williams, 2015). Others have conceptualized and measured focused aspects of climate, such as an outcome (e.g., climate for safety) or organizational process (e.g., ethics, fairness) (Aarons et al., 2018).

Implementation climate

Implementation climate is a type of focused organizational climate defined as a summary of employees’ shared perceptions of the extent to which their use of an innovation is rewarded, supported, and expected (Klein & Sorra, 1996). Strong implementation climates encourage use of EBPs by (1) ensuring employees are skilled in their use, (2) incentivizing the use of EBP and eliminating disincentives, and (3) removing barriers to EBP use (Klein & Sorra, 1996). Implementation climate differs from molar organizational climate in that it is innovation-specific and focuses on organizational members who are expected to use or directly support an innovation (Weiner et al., 2011). Implementation climate may be critical to improving EBP implementation (Williams et al., 2018, 2020). Williams and colleagues’ (2020) 5-year panel analysis showed that organizations that improved from low to high levels of implementation climate had significantly greater increases in their clinicians’ average EBP use.

Previous reviews

Reviews of organizational culture and organizational climate measures vary along several dimensions. First, some have systematically reviewed the literature (e.g., Allen et al., 2017; Chaudoir et al., 2013; Clinton-McHarg et al., 2016), whereas others have selectively reviewed measures at the discretion of the authors (e.g., Glisson & Williams, 2015; Kimberly & Cook, 2008; Schneider et al., 2013). Second, they range from narrow (e.g., organizational culture only; Jung et al., 2009; King & Byers, 2007; Scott et al., 2003) to broad assessments (e.g., a list of organizational characteristics; Allen et al., 2017; Brennan et al., 2012; Chaudoir et al., 2013). Third, they may or may not report psychometric properties, and those that do report them with varying degrees of granularity (e.g., Schneider et al., 2013, and Kimberly & Cook, 2008, do not report psychometric properties; Chaudoir et al., 2013, focused on criterion validity). Finally, they may (e.g., Clinton-McHarg et al., 2016) or may not (e.g., Gershon et al., 2004; Jung et al., 2009) be informed by a conceptual framework.

Two published systematic reviews examined psychometric properties of measures for constructs within the “inner setting” domain of the CFIR (Damschroder et al., 2009). Clinton-McHarg and colleagues (2016) examined quantitative measures developed for public health and community settings and located 51 measures. Most did not report on psychometric properties, and those that did typically fell below accepted standards (Clinton-McHarg et al., 2016). Allen et al. (2017) identified 83 measures of the inner setting; the two constructs with the most measures were readiness for implementation and organizational climate. However, only 46% of studies (n = 35) included information about psychometric properties, and of those, 94% (33/35) described reliability and 71% (25/35) reported validity.

Aims and contribution of the current study

The current study sought to identify and assess the psychometric properties of measures of organizational culture, organizational climate, and implementation climate used in behavioral health-related implementation studies. This review contributes to the implementation and behavioral health literatures by (1) focusing explicitly on the assessment of these constructs within behavioral health; (2) identifying measures for key constructs and subconstructs of the widely used CFIR (Damschroder et al., 2009); and (3) rigorously assessing evidence of measures’ psychometric strength by using the Psychometric and Pragmatic Evidence Rating Scale (PAPERS; Lewis, Mettert, et al., 2018; Stanick et al., 2021). Our intent is to inform researchers’, EBP purveyors’ (Proctor et al., 2019), implementation support practitioners’ (Albers et al., 2020), and other stakeholders’ selection of high-quality measures, and to highlight areas in which further development and testing of measures is necessary.

Methods

Design overview

Data for this systematic review come from a project funded by the U.S. National Institute of Mental Health, which included multiple systematic reviews that identified implementation determinant (Damschroder et al., 2009) and outcome (Proctor et al., 2011) measures that were used within implementation studies in behavioral health (Lewis et al., 2018). The protocol for that study has been published elsewhere (Lewis et al., 2018). This systematic review was conducted in three phases. Phase I, data collection, included five steps: (1) search string generation, (2) title and abstract screening, (3) full text review, (4) construct assignment, and (5) measure-forward searches. Phase II, data extraction, consisted of coding relevant psychometric data, and Phase III involved data analysis.

Phase I: data collection

Literature searches were conducted in PubMed and Embase using search strings curated in consultation with PubMed support specialists and a library scientist. PubMed and Embase are commonly recommended for systematic reviews in health (Bramer et al., 2017; Higgins et al., 2021). Other databases such as PsycINFO were considered, but pilot testing revealed that search yields were low and did not identify a substantial number of studies for the constructs of interest. Search terms focused on: (1) implementation; (2) measurement; (3) EBP; (4) behavioral health; and (5) organizational culture and implementation climate. Our conceptualization of organizational culture and implementation climate was guided by the CFIR (Damschroder et al., 2009), and included search terms for organizational culture, implementation climate, and related subconstructs: tension for change, compatibility, relative priority, organizational incentives and rewards, goals and feedback, and learning climate (see Table 1). Table 2 includes a complete listing of search terms for PubMed and Embase. Articles published in English from 1985 onwards were included in the search. Searches were completed from 3 April 2017 to 25 May 2017.

Table 2. Database search terms.

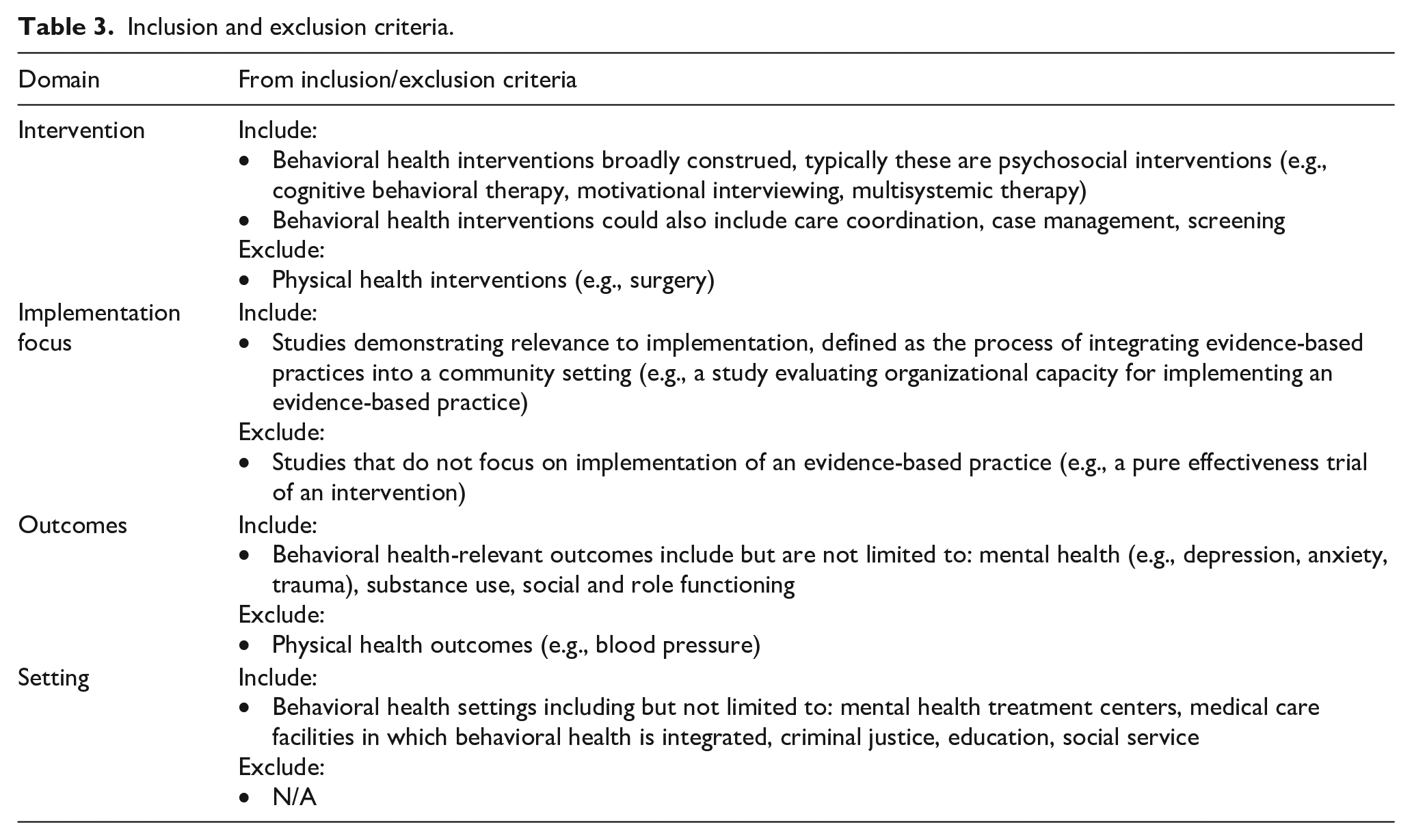

Identified titles and abstracts were screened, followed by full-text review to confirm relevance to study parameters. We included empirical studies that contained one or more quantitative measures of the target constructs if they were used in an evaluation of an implementation effort in a behavioral health context. See Table 3 for inclusion/exclusion criteria, and Appendix 1 for PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flowcharts (Figures 10 to 17).

Table 3. Inclusion and exclusion criteria.

The next step involved construct assignment, in which trained research specialists mapped measures and/or their subscales to the target constructs based on the authors’ conceptualization of the measure and content expert coding. Inherent to our search approach, measures of the target constructs could be identified through systematic reviews of related constructs (e.g., organizational readiness for change) conducted in the parent study (Lewis et al., 2018). For example, a subscale from Organizational Readiness for Change Assessment (“Staff Culture”) by Helfrich et al. (2009) was identified in a review of measures of organizational readiness for change (Weiner et al., 2020). The CFIR does not include molar organizational climate; thus, we did not conduct a search specifically for that construct. However, our searches for organizational culture and implementation climate identified a number of measures of molar organizational climate. We attempted to maintain a conceptual distinction between measures of molar organizational climate or focused organizational climates (e.g., risk taking climate; Cook et al., 2012) and the more intervention-specific implementation climate construct as described by Weiner et al. (2011). Thus, we ultimately categorized measures into nine different constructs: organizational culture, organizational climate, implementation climate, tension for change, compatibility, relative priority, organizational incentives and rewards, goals and feedback, and learning climate (Table 1).

Finally, “measure-forward” searches were conducted in May 2019 for each measure to identify empirical articles that used the measure in behavioral health implementation research. These searches were conducted using the “cited-by” feature in PubMed and Embase and by searching for measures’ formal names as available.

Phase II: data extraction

Next, articles were compiled into “measure packets,” including the measure itself (as available), the measure development article (or article with the first empirical use in a behavioral health context), and all identified empirical uses of the measure in behavioral health-related implementation efforts. Trained research specialists reviewed each article and electronically extracted information relevant to nine psychometric rating criteria from the PAPERS (Lewis, Mettert, et al., 2018; Stanick et al., 2021): (1) internal consistency, (2) convergent validity, (3) discriminant validity, (4) known-groups validity, (5) predictive validity, (6) concurrent validity, (7) structural validity, (8) responsiveness, and (9) norms (Table 4). Data were collected on both full measure and subscale levels. If a full measure was relevant to a target construct, we reported psychometric evidence for the full measure. However, if only subscales of a broader measure were relevant, we reported psychometric evidence at the subscale level. We use the term “measures” throughout this article to refer to both full measures and subscales; however, the distinction between the two is maintained by using formal names of measures and subscales in relevant tables and figures.

Table 4. Definitions of psychometric properties.

Note. See Additional File 2 of Lewis, Mettert, et al. (2018) for the complete rating scale for each psychometric criterion.

After PAPERS-relevant data were extracted (Lewis, Mettert, et al., 2018; Stanick et al., 2021), each criterion was rated using the following scale, for which nuanced anchors were established: “poor” (−1), “none” (0), “minimal/emerging” (1), “adequate” (2), “good” (3), or “excellent” (4).

In addition to assessing psychometric properties, we extracted: (1) whether the measure was used more than once, (2) country of origin, (3) setting (e.g., inpatient psychiatry, outpatient), (4) level of analysis (e.g., consumer, organization, provider), (5) population (e.g., general mental health, anxiety, depression), and (6) stage of implementation as defined by the exploration, adoption/preparation, implementation, sustainment model (Aarons et al., 2011).

Phase III: data analysis

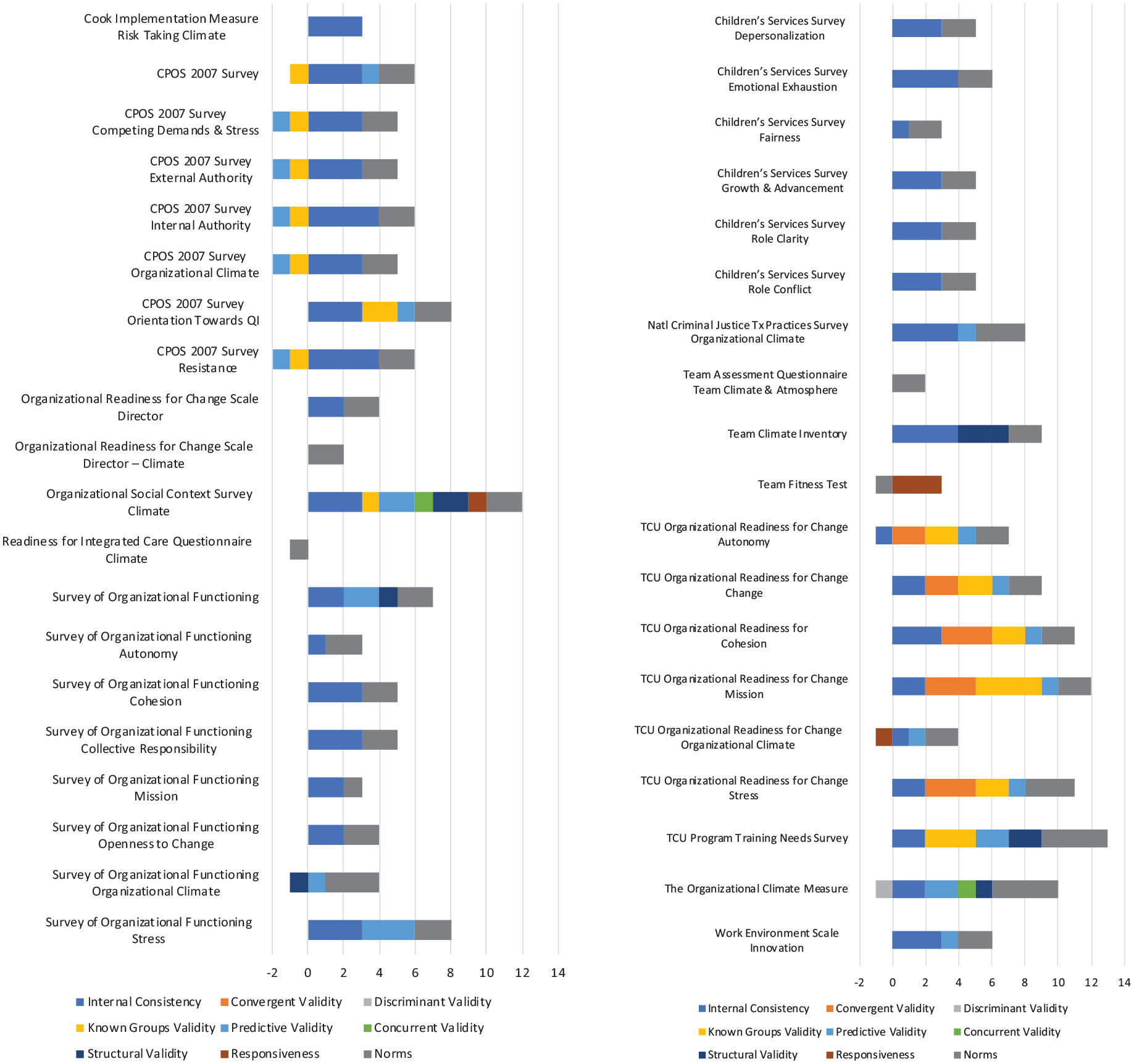

Simple statistics (frequencies, medians, ranges) were calculated to report on the presence and quality of psychometric data. Each measure was assigned a total score based upon the nine PAPERS criteria (highest possible score = 36). Bar charts were generated to display head-to-head comparisons across all measures within a given construct.
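The total-score calculation described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' analysis code: the identifiers (`PAPERS_CRITERIA`, `RATING_LABELS`, `total_score`) are hypothetical, and treating a criterion with no extracted evidence as a rating of 0 (“none”) is an assumption based on the rating scale. The example ratings are those reported in the Results for the Organizational Social Context–Culture Scale.

```python
# Illustrative sketch only; the names below are hypothetical, not part of PAPERS.
PAPERS_CRITERIA = (
    "internal_consistency", "convergent_validity", "discriminant_validity",
    "known_groups_validity", "predictive_validity", "concurrent_validity",
    "structural_validity", "responsiveness", "norms",
)

# Rating scale anchors as listed in the Methods.
RATING_LABELS = {
    -1: "poor", 0: "none", 1: "minimal/emerging",
    2: "adequate", 3: "good", 4: "excellent",
}

# Nine criteria, each rated at most 4, give the highest possible score of 36.
MAX_TOTAL = 4 * len(PAPERS_CRITERIA)


def total_score(ratings):
    """Sum a measure's ratings across the nine criteria.

    Criteria absent from `ratings` are assumed to have no evidence (rating 0).
    """
    unknown = set(ratings) - set(PAPERS_CRITERIA)
    if unknown:
        raise ValueError(f"Unknown criteria: {sorted(unknown)}")
    for criterion, value in ratings.items():
        if value not in RATING_LABELS:
            raise ValueError(f"Invalid rating {value!r} for {criterion}")
    return sum(ratings.get(criterion, 0) for criterion in PAPERS_CRITERIA)


# Ratings reported in the Results for the Organizational Social Context-Culture Scale.
osc_culture = {
    "internal_consistency": 3,
    "convergent_validity": 1,
    "known_groups_validity": -1,
    "predictive_validity": 1,
    "concurrent_validity": 2,
    "structural_validity": 2,
    "responsiveness": 2,
    "norms": 1,
}

print(total_score(osc_culture), "of", MAX_TOTAL)  # prints: 11 of 36
```

Because a “poor” rating contributes −1, a measure with weak evidence on a criterion can score lower than a measure with no evidence on that criterion at all, which is worth keeping in mind when reading the head-to-head comparisons.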

Results

Overview

Table 5 provides descriptive information. Table 6 shows availability of psychometric evidence. Table 7 includes the median and range of ratings of psychometric properties for measures with psychometric information available (i.e., those with non-zero ratings on the PAPERS criteria; Lewis, Mettert, et al., 2018; Stanick et al., 2021). Individual ratings for all measures are detailed in Table 8 and in head-to-head bar graphs in Figures 1 to 9.

Table 5. Description of measures and subscales.

Some measures were used in multiple settings, levels, and phases of implementation.

Table 6. Psychometric information availability.

Table 7. Summary statistics for instrument ratings.

Note. Scores for individual measures ranged from −1 (“poor”) to 4 (“excellent”). Medians exclude zeros, which indicate that psychometric information was not available. When the median of two scores would equal “0” (e.g., a score of −1 and 1), the lower score was taken.
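The median rule in this note can be expressed as a short Python sketch. It is an illustration under stated assumptions, not the authors' procedure: the function name `median_rating` is hypothetical, and applying the "take the lower score" rule to the two middle values of any even-length list (not only to lists of exactly two scores) is an assumption.

```python
from statistics import median


def median_rating(ratings):
    """Median of a criterion's ratings across measures, excluding zeros.

    Zeros mean no psychometric information was available, so they are dropped.
    Returns None when no measure has a non-zero rating.
    """
    rated = sorted(r for r in ratings if r != 0)
    if not rated:
        return None
    n = len(rated)
    if n % 2 == 0:
        lower, upper = rated[n // 2 - 1], rated[n // 2]
        # A median of 0 would read as "none", so take the lower score
        # (assumed generalization of the two-score rule in the note).
        if lower + upper == 0:
            return lower
    return median(rated)


print(median_rating([-1, 1]))           # prints: -1
print(median_rating([0, 2, 0, 3, 3]))   # prints: 3
```

Excluding zeros means the reported medians summarize the quality of the evidence that exists rather than its availability, which is reported separately.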

Table 8. Psychometric ratings for each measure by focal construct.

Figure 1. Head-to-head comparison of measures of organizational culture.

Figure 2. Head-to-head comparison of measures of organizational climate.

Figure 3. Head-to-head comparison of measures of implementation climate.

Figure 4. Head-to-head comparison of measures of tension for change.

Figure 5. Head-to-head comparison of measures of compatibility.

Figure 6. Head-to-head comparison of measures of relative priority.

Figure 7. Head-to-head comparison of measures of organizational incentives and rewards.

Figure 8. Head-to-head comparison of measures of goals and feedback.

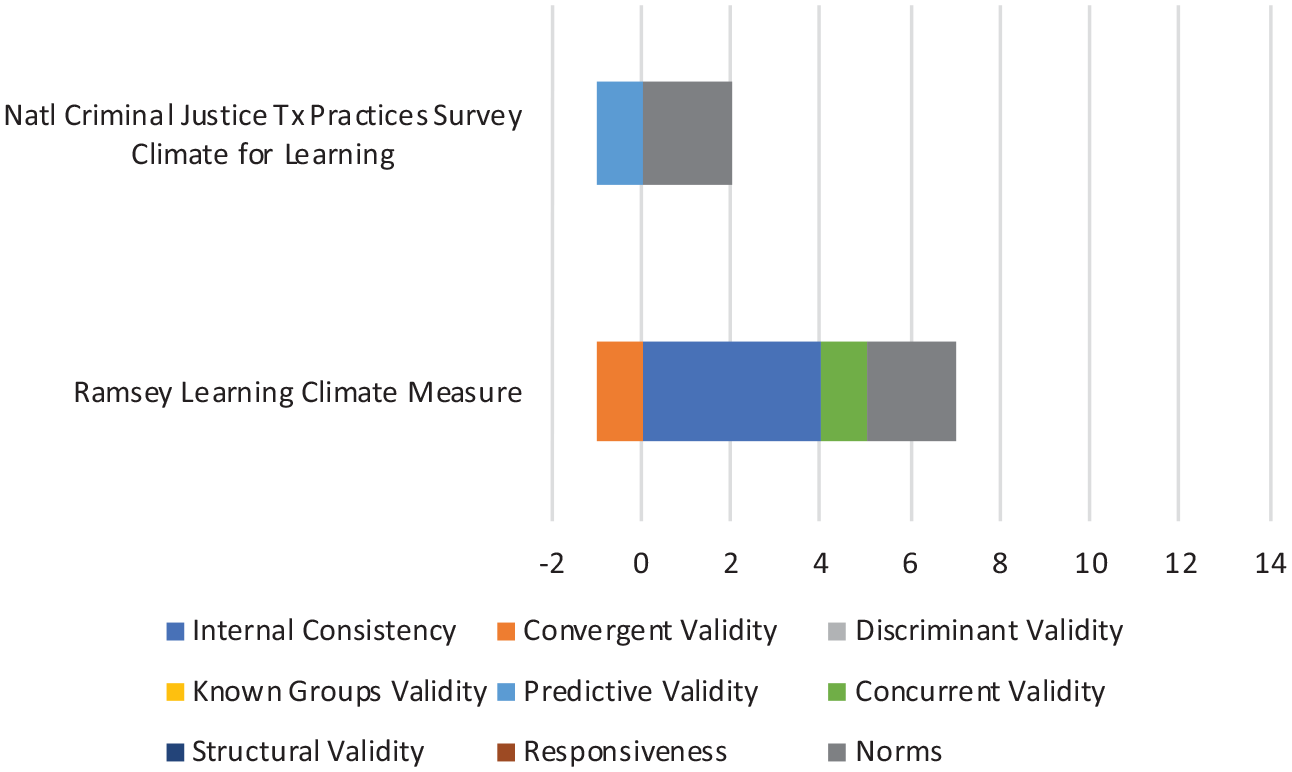

Figure 9. Head-to-head comparison of measures of learning climate.

Organizational culture

We identified 21 measures of organizational culture, 18 of which are subscales of broader measures (e.g., Organizational Readiness for Change Assessment–Staff Culture Scale; Helfrich et al., 2009). Measures were primarily developed in the United States (95%); used more than once (76%); used most frequently in outpatient community mental health (86%) and residential care settings (71%); administered most frequently at the provider (100%) or supervisor (52%) levels; used within general mental health (86%), alcohol use (57%), or substance use disorder (67%) services; and were used most frequently at the exploration (71%) and implementation (43%) phases.

Evidence of internal consistency was available for 18 measures, convergent validity for two measures, known-groups validity for two measures, predictive validity for 12 measures, concurrent validity for two measures, structural validity for one measure, responsiveness for one measure, and norms for 20 measures. No psychometric evidence was available for discriminant validity.

The median rating for internal consistency was “2—adequate,” for convergent validity “2—adequate,” for known-groups validity “−1—poor,” for predictive validity “1—minimal,” for concurrent validity “−1—poor,” for structural validity “2—adequate,” and for norms “2—adequate.” The median rating of “2—adequate” for structural validity was based on the rating of just one measure: the Organizational Social Context–Culture Scale (Glisson et al., 2008).

The most frequently used and highest rated measure of organizational culture in behavioral health (with 46 uses of culture and/or climate scales) was the Organizational Social Context–Culture Scale (Glisson et al., 2008). It received a total score of 11 (maximum possible score = 36) and had evidence of internal consistency (“3—good”), convergent validity (“1—minimal”), predictive validity (“1—minimal”), concurrent validity (“2—adequate”), structural validity (“2—adequate”), responsiveness (“2—adequate”), and norms (“1—minimal”), along with a “−1—poor” rating for known-groups validity. The next highest scoring measure of organizational culture was the Organizational Description Questionnaire (Parry & Proctor-Thomson, 2001), which was used eight times (total score = 9; maximum possible score = 36), with ratings of “2—adequate” for internal consistency, “3—good” for convergent validity, and “4—excellent” for norms.

Organizational climate

We identified 36 measures of organizational climate, 32 of which are subscales of broader measures (e.g., Survey of Organizational Functioning–Organizational Climate Domain; Broome et al., 2007). Measures were primarily developed in the United States (94%); used more than once (75%); used most frequently in outpatient community mental health (92%) and residential care settings (47%); administered at the provider (78%), director (67%), supervisor (64%), and clinic/site levels (61%); used within substance use disorder (72%) and general mental health services (50%); and were used most often at the implementation (64%) and exploration phases (53%).

Evidence for internal consistency was available for 31 measures, convergent validity for five measures, discriminant validity for one measure, known-groups validity for 13 measures, predictive validity for 19 measures, concurrent validity for two measures, structural validity for five measures, responsiveness for three measures, and norms for 35 measures.

The median rating for internal consistency was “3—good,” for convergent validity “3—good,” for discriminant validity “−1—poor,” for known-groups validity “2—adequate,” for predictive validity “1—minimal,” for concurrent validity “1—minimal,” for structural validity “2—adequate,” for responsiveness “1—minimal,” and for norms “2—adequate.” The median rating of “−1—poor” for discriminant validity was based upon one measure: the Organizational Climate Measure (Patterson et al., 2005).

The measure that scored the highest (total score = 13; maximum possible score = 36) among the organizational climate measures was the Texas Christian University Program Training Needs Survey (Simpson, 2002), which was used five times and showed evidence of internal consistency (“2—adequate”), known-groups validity (“3—good”), predictive validity (“2—adequate”), structural validity (“2—adequate”), and norms (“4—excellent”). The Organizational Social Context–Climate (Glisson et al., 2008), the most frequently used measure in behavioral health, received a total score of 12 (maximum possible score = 36). This included evidence of internal consistency (“3—good”), known-groups validity (“1—minimal”), predictive validity (“2—adequate”), concurrent validity (“1—minimal”), structural validity (“2—adequate”), responsiveness (“1—minimal”), and norms (“2—adequate”). Finally, few measures used in behavioral health focus solely on organizational climate. One exception is the Organizational Climate Measure (Patterson et al., 2005). It had been used twice in behavioral health, had a total score of 9 (maximum possible score = 36), and had evidence of internal consistency (“2—adequate”), discriminant validity (“−1—poor”), predictive validity (“2—adequate”), concurrent validity (“1—minimal”), structural validity (“1—minimal”), and norms (“4—excellent”).

Implementation climate

For implementation climate, we included measures directly addressing implementation climate or measures of any of the six subconstructs that the CFIR includes as contributing to a positive implementation climate, including tension for change, compatibility, relative priority, organizational incentives and rewards, goals and feedback, and learning climate. We refer readers to Table 5 for descriptive information.

We identified two measures of implementation climate, one of which is a subscale of a broader measure (Readiness for Integrated Care Questionnaire–Implementation Climate Scale; Scott et al., 2017).

Of the two measures of implementation climate, evidence of norms was available for both, and evidence of internal consistency, convergent validity, discriminant validity, predictive validity, and structural validity was available for one measure. Neither measure had evidence for known-groups validity, concurrent validity, or responsiveness.

The Implementation Climate Scale (Ehrhart, Aarons, et al., 2014) had the highest overall rating (total score = 11; maximum possible score = 36) from five uses in behavioral health, demonstrating evidence of internal consistency (“3—good”), convergent validity (“2—adequate”), discriminant validity (“1—minimal”), predictive validity (“1—minimal”), structural validity (“2—adequate”), and norms (“2—adequate”).

Tension for change

We identified two measures of tension for change, both of which were subscales of broader measures (e.g., Texas Christian University Organizational Readiness for Change–Pressures for Change Scale; Lehman et al., 2002). Evidence of internal consistency and norms was available for both measures, and evidence of convergent validity, known-groups validity, and predictive validity was available for one measure. There was no evidence of discriminant validity, concurrent validity, structural validity, or responsiveness. Both measures were rated the same (total score = 3; maximum possible score = 36). The Texas Christian University Organizational Readiness for Change–Pressures for Change Subscale (Lehman et al., 2002) demonstrated evidence of internal consistency (“2—adequate”), convergent validity (“1—minimal”), and norms (“2—adequate”); however, both known-groups validity and predictive validity were rated “−1—poor,” even though the measure had been used 37 times in behavioral health. The Survey of Organizational Functioning–Pressures for Change Subscale (Broome et al., 2007) was used 12 times and exhibited evidence of internal consistency (“1—minimal”) and norms (“2—adequate”).

Compatibility

We identified six measures of compatibility, all of which were subscales of broader measures (e.g., Perceived Characteristics of Intervention Scale–Compatibility Scale; Cook et al., 2015). Evidence of internal consistency was available for three measures, evidence of predictive validity was available for one measure, and evidence of norms was available for two measures. There was no evidence for convergent validity, discriminant validity, known-groups validity, concurrent validity, structural validity, or responsiveness. The highest rated measure was the Perceived Characteristics of Intervention Scale–Compatibility Subscale (Cook et al., 2015), which had been used twice, received a total score of five (maximum possible score = 36), and demonstrated evidence of internal consistency (“3—good”) and norms (“2—adequate”). The next highest rated measure was the Cook Implementation Measure–Compatibility Scale (Cook et al., 2012), which had been used four times and showed evidence of internal consistency (“3—good”) and predictive validity (“1—minimal”).

Relative priority

We identified two measures of relative priority, both of which are subscales of broader measures (e.g., Cook Implementation Measure–Goals and Priorities; Cook et al., 2012). Evidence of internal consistency was available for one measure and evidence of norms was available for one measure. There was no information available on any of the remaining psychometric criteria. The highest rated measure was the Cook Implementation Measure–Goals and Priorities (Cook et al., 2012), which had been used four times and received a total score of three (maximum possible score = 36) based upon evidence of internal consistency (“3—good”).

Organizational incentives and rewards

We identified three measures of organizational incentives and rewards, all of which were subscales of broader measures (e.g., Implementation Climate Scale–Rewards Scale; Ehrhart, Aarons, et al., 2014). Evidence of internal consistency was available for all three measures, and evidence of predictive validity and norms was available for one measure. No further information about psychometric properties was available. The Implementation Climate Scale–Rewards Subscale (Ehrhart, Aarons, et al., 2014) was used five times and received the highest overall rating (total score = 5; maximum possible score = 36), demonstrating evidence of internal consistency (“2—adequate”), predictive validity (“1—minimal”), and norms (“2—adequate”).

Goals and feedback

We identified three measures of goals and feedback, all of which were subscales of broader measures (e.g., Chou Measure of Guideline Information–Feedback Scale; Chou et al., 2011). Evidence for internal consistency was available for two measures, and evidence of convergent validity, predictive validity, and norms was available for one measure. No other information on psychometric properties was available. The Organizational Readiness for Change Assessment–Project Progress Tracking Subscale (Helfrich et al., 2009; four uses in behavioral health) was rated the highest (total score = 4; maximum possible score = 36), with evidence of internal consistency (“3—good”) and norms (“1—minimal”). The Cook Implementation Measure–Goals and Priorities Subscale (Cook et al., 2012) received a total score of three, with evidence of internal consistency (“3—good”).

Learning climate

We identified two measures of learning climate, one of which was a subscale from a broader measure (The National Criminal Justice Treatment Practices Survey–Climate for Learning Scale; Taxman et al., 2007). Evidence of norms was available for both measures, and evidence for internal consistency, convergent validity, predictive validity, and concurrent validity was available for one measure. There was no evidence of discriminant validity, known-groups validity, structural validity, or responsiveness. The Ramsey Learning Climate Measure (Ramsey et al., 2015) was rated the highest (total score = 6; maximum possible score = 36), with evidence of internal consistency (“4—excellent”), convergent validity (“−1—poor”), concurrent validity (“1—minimal”), and norms (“2—adequate”).

Discussion

Summary of findings

This systematic review of measures of organizational culture, organizational climate, implementation climate, and related constructs in behavioral health identified some promising measures; however, consistent with other reviews of organizational constructs (Allen et al., 2017; Clinton-McHarg et al., 2016; Weiner et al., 2020), the overall state of measurement across these constructs is poor. While 21 measures of organizational culture and 36 measures of organizational climate were identified, the vast majority were subscales within broader measures. Far fewer measures of implementation climate and related constructs were identified. Previous work has documented the problem of “home-grown” measures that are used only once (Lewis et al., 2015; Martinez et al., 2014). Encouragingly, more than 75% of measures of organizational culture and organizational climate identified in this review were used more than once, which may reflect the long tradition of these constructs in the broader literature (Ehrhart, Schneider, et al., 2014). In contrast, nearly half of the measures of implementation climate and related subconstructs were used only once, perhaps reflecting the more recent emergence of implementation climate in the field (Klein & Sorra, 1996; Weiner et al., 2011).

Limited psychometric evidence was available for the identified measures of organizational culture, organizational climate, implementation climate, and its subconstructs. This is consistent with findings from previous reviews of a broader set of implementation constructs (Chaudoir et al., 2013; Clinton-McHarg et al., 2016), as well as findings from a recent review of organizational readiness for change (Weiner et al., 2020). For organizational culture and organizational climate, evidence of internal consistency and norms was available for most measures. Evidence of predictive validity was available for over half of identified measures, though nine of them received a rating of “poor,” suggesting that the evidence did not support study hypotheses. Evidence for other psychometric properties, such as known-groups validity, concurrent validity, convergent validity, discriminant validity, structural validity, and responsiveness, was sparse. Generally, psychometric evidence for implementation climate and its related subconstructs was less readily available. Only one measure of organizational culture (Glisson et al., 2008), five measures of organizational climate (Anderson & West, 1998; Broome et al., 2007; Glisson et al., 2008; Patterson et al., 2005; Simpson, 2002), and one measure of implementation climate (Ehrhart, Aarons, et al., 2014) were assessed for structural validity, which is concerning given that a measure’s dimensionality should be checked prior to checking its internal consistency (DeVellis, 2012). Also concerning is a striking lack of evidence for measure responsiveness (i.e., sensitivity to change), as only four measures among all focal constructs possessed evidence of responsiveness (Chodosh et al., 2015; Glisson et al., 2008; Lehman et al., 2002). This weakness will stymie efforts to identify organizational-level mechanisms that explain how and why implementation strategies can improve implementation and clinical outcomes (Lewis et al., 2020; Lewis, Klasnja, et al., 2018; Williams, 2016; Williams et al., 2017).

Overall measurement quality was found to be poor. With the exception of internal consistency, most median ratings ranged from “−1—poor” to “2—adequate.” Only seven measures received an overall score of 10 or higher (out of a possible score of 36) on the PAPERS psychometric rating criteria (Lewis, Mettert, et al., 2018; Stanick et al., 2021). The Organizational Social Context measures of culture and climate received scores of 11 and 12, respectively, and represent the most frequently studied measure in behavioral health-focused implementation research, with national norms established in mental health (Glisson et al., 2008) and child welfare (Glisson et al., 2012). An additional four measures were in the organizational climate domain, including the Texas Christian University Program Training Needs Survey (total score = 13; Simpson, 2002) and three subscales from the Texas Christian University Organizational Readiness for Change measure (“Mission,” “Cohesion,” and “Stress”; Lehman et al., 2002) that received total scores of 12, 11, and 11, respectively. The Texas Christian University Program Training Needs Survey (Simpson, 2002) has only been used five times in behavioral health, but with ratings of “2—adequate” to “4—excellent” on five different psychometric criteria, it may have promise for further use and evaluation. While the Texas Christian University Organizational Readiness for Change measure (Lehman et al., 2002) scored relatively high in comparison to other measures included in this review, there was no evidence of structural validity or responsiveness, and only “minimal” evidence of predictive validity despite 37 uses in behavioral health, suggesting that more uses may not offer more positive psychometric evidence. The last measure to receive a score of 10 or higher was the Implementation Climate Scale (total score = 11; Ehrhart, Aarons, et al., 2014), which has been used five times in behavioral health. Given its promising psychometric properties and desirable pragmatic properties (free, only 18 items), this scale demonstrates promise.

Future directions

There is a need to prioritize further psychometric evaluation of promising measures that have not yet been used frequently in behavioral health. There are also opportunities to rigorously develop new measures of sparsely populated constructs, particularly the subconstructs of implementation climate.

Though we did not explicitly consider the extent to which identified measures are pragmatic (Powell et al., 2017; Stanick et al., 2018, 2021), it will be critical to do so moving forward. Some measures identified in this review are brief and freely available, while others are quite long and proprietary. Measures’ pragmatic properties are likely to influence their use in both research and applied implementation efforts.

Organizational culture and organizational climate are broad constructs that have been conceptualized and measured in a wide range of ways (Aarons et al., 2018; Ehrhart, Schneider, et al., 2014; Kimberly & Cook, 2008; Schneider et al., 2013; Verbeke et al., 1998). It would be useful to pursue conceptual and measurement work to delineate ways in which organizational culture and organizational climate have been measured. This work could guide stakeholders wanting to measure specific aspects of organizational culture and organizational climate and illustrate the trade-offs in prioritizing one conceptualization versus another. An additional opportunity may be to develop more holistic profiles of organizational culture and climate using latent profile analysis (Glisson et al., 2014; Williams et al., 2019). For example, Williams et al. (2019) demonstrated that although neither the individual dimensions of culture and climate nor the linear combination of all six dimensions were predictive of fidelity to an EBP, a “comprehensive” profile (high proficiency culture, positive climate) was predictive of fidelity for two of three EBPs. This demonstrates that culture and climate may interact in complex ways, and that “the overall gestalt of the social context may be more important than the level of a single dimension” (Williams et al., 2019, p. 10).

Given calls for improved reporting in implementation research (Wilson et al., 2017), it may be useful to develop reporting guidelines for measurement in implementation studies. These may differ depending upon the type of study. For example, a measure development study may require different minimum criteria as compared to the use of a measure within a broader implementation study.

Limitations

This study has several limitations. First, as with all systematic reviews, it is possible that we failed to identify articles that could have detailed measures of the focal constructs or provided further data on their psychometric evidence. There are at least four potential reasons for this: (1) we did not search explicitly for molar organizational climate since that construct is not included in the CFIR, which was used to generate our search strategy for organizational culture, implementation climate, and related constructs; (2) we did not search the gray literature; (3) the original literature searches for this study were completed in 2017; and (4) we did not search all potentially relevant databases (e.g., PsycINFO, Google Scholar; Bramer et al., 2017). Additional measures of the focal constructs may have been published since the original search date; however, we captured more recent uses of the measures we identified in 2017 by conducting measure-forward “cited-by” searches in May of 2019. Nevertheless, there are also studies that provide additional evidence for included measures that have been published since our measure-forward search (e.g., Beidas et al., 2019; Williams et al., 2020). One measure of implementation climate developed by Jacobs et al. (2014) was not identified in this review (likely because initial development and testing were conducted in both non-behavioral health and behavioral health settings), but appears to have promising psychometric and pragmatic properties. Second, there are inevitably measures of the focal constructs that were developed outside of behavioral health, and some of the measures identified in this review may have evidence of further use outside of implementation efforts in behavioral health service settings. Thus, it is important that readers interpret these ratings within this context rather than as an indicator of the measures’ overall quality or psychometric strength. Third, it is possible that our assignment of measures and/or subscales to the nine focal constructs was imperfect, particularly given the substantial overlap between the conceptualization and measurement of organizational culture, organizational climate, and related constructs (Kimberly & Cook, 2008). Finally, it is possible that poor reporting practices limited the extent to which evidence was available for identified measures (i.e., it is possible that more thorough evaluations of psychometric properties were conducted but not reported).

Conclusion

This systematic review identifies measures of organizational culture, organizational climate, and implementation climate used in behavioral health-focused implementation studies. Several promising measures were identified and can inform researchers, EBP purveyors, implementation support practitioners, and others who wish to measure these constructs. However, to enhance understanding of how these constructs influence EBP implementation, there is a need for further testing of the most promising approaches, development of additional psychometrically and pragmatically strong measures, and approaches that elucidate the ways in which “dimensions of organizational culture and climate interact with, reinforce, and counteract one another in complex, non-linear ways as they relate to EBP implementation . . .” (Williams et al., 2019, p. 10).

Supplemental Material

Supplemental material, sj-pdf-1-irp-10.1177_26334895211018862 for Measures of organizational culture, organizational climate, and implementation climate in behavioral health: A systematic review by Byron J Powell, Kayne D Mettert, Caitlin N Dorsey, Bryan J Weiner, Cameo F Stanick, Rebecca Lengnick-Hall, Mark G Ehrhart, Gregory A Aarons, Melanie A Barwick, Laura J Damschroder and Cara C Lewis in Implementation Research and Practice

Footnotes

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Funding for this study came from the National Institute of Mental Health, awarded to Dr Cara C. Lewis as principal investigator. Dr Lewis is an author of this article and editor of the journal, Implementation Research and Practice. Due to this conflict, Dr Lewis was not involved in the editorial or review process for this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute of Mental Health (NIMH) through R01MH106510 (Lewis, PI). Byron Powell was also supported by the NIMH through K01MH113806 (Powell, PI).
